Abstract

Consumers and marketers use facial information to make important inferences about others in many business contexts. However, consumers and firms are increasingly concerned about privacy and discrimination. To address privacy–perception trade-offs, the authors propose a novel contour-as-face (CaF) framework that transforms face images into contour images incorporating both the nonoutline and outline features of facial parts. In three empirical studies, the authors (1) compare human perceptions of face and contour images along 15 dimensions commonly assessed in marketing contexts; (2) investigate the effectiveness of contour images for protecting anonymity related to identity, age, and gender; and (3) implement the CaF framework in a real-life online dating context. Results show that the CaF framework effectively resolves privacy–perception trade-off problems by preserving the information that is useful for humans to make inferences about many relevant perceptual dimensions in marketing while making it virtually impossible for humans to infer identity and very difficult to infer age and gender accurately—two critical discrimination factors. Results from the field implementation demonstrate the feasibility and value of using the CaF framework for real-life decision making.

The face plays an important role in decision-making processes in various contexts, including politics (e.g., Todorov et al. 2005), law and justice (e.g., Zebrowitz and McDonald 1991), leadership (e.g., Rule and Ambady 2008), the labor market (e.g., Bóo, Rossi, and Urzúa 2013; Ruffle and Shtudiner 2014), and marketing (e.g., DeShields, Kara, and Kaynak 1996; Kahle and Homer 1985; Keh et al. 2013; Valentine et al. 2014; Xiao and Ding 2014). In the social networking era, the amount of facial information available online has increased dramatically. In 2013, Facebook revealed that its users had uploaded 250 billion photos and were uploading 350 million new photos every day (Smith 2019).

This spread of facial images creates a “face dilemma” for consumers. On the one hand, the online presence of facial information helps people make decisions when using social networking sites such as Facebook, Match.com, and LinkedIn; on the other hand, it may increase the risk of misuse, exploitation, and other improper disclosures of identity information (Cobb and Kohno 2017; Senior and Pankanti 2011) as well as face-based discrimination (e.g., Ruffle and Shtudiner 2014). The European Union’s General Data Protection Regulation reflects the public’s heightened awareness of the need to protect personal information, including facial data.

The face dilemma also affects marketing decisions in various contexts, including service employee selection/allocation, professional service evaluation, and customer relationship management (e.g., Gomulya et al. 2017; Keh et al. 2013). Customers use facial information to assess an employee’s emotional state, attractiveness, and trustworthiness. These assessments in turn affect customer satisfaction, loyalty, and service quality evaluations, especially in the online environment (e.g., Rezlescu et al. 2012). Therefore, it is important for firms to consider facial information when allocating employees to interact with different customers in different contexts. HireVue, a hiring intelligence firm, uses face perception modeling to determine the fitness of potential employees (often those who will be interacting with customers) for companies such as IBM, Unilever, and Hilton. Yet firms must avoid discrimination based on demographics that can be inferred from an employee’s face, such as gender and age (Altonji and Blank 1999). In many places, laws have been passed to prohibit recruitment and wage discrimination based on facial appearance (Ellis and Watson 2012).

Resolving the face dilemma thus requires protecting different kinds of private facial information in two types of applications: in Type I applications (mostly consumer-facing, such as online dating), facial identities must be protected to reduce the risk of violating individuals’ privacy, and in Type II applications (e.g., employee allocation, professional service evaluation), demographic traits such as gender and age must be protected to prevent face-based discrimination against individuals from certain groups. In both types of applications, it is important to preserve the facial cues that help people make useful inferences when making decisions. We propose a novel solution to the face dilemma: the contour-as-face (CaF) framework. This framework asks a human to make judgments and decisions using face contours as a proxy for faces and then directly models those judgments and decisions as a function of the face contours using Fourier transformation. We show that the CaF framework can anonymize face images for Type I and Type II applications without substantially compromising the utility of facial information in decision making.

In the sections that follow, we first review the existing literature and describe the motivations for our work by discussing five marketing domains related to the face dilemma. We then introduce the CaF framework as a way to resolve the face dilemmas in Type I and Type II applications. We present three empirical studies in which we investigated the effectiveness of the CaF framework in addressing the face dilemma and CaF’s usefulness in real-life business applications. We conclude with a general discussion of the use of the CaF framework for face-related business contexts, as well as future research directions.

Literature Review and Motivation

When seeing a face, the human brain instantly processes facial information and makes inferences about the person along various dimensions such as identity, demographics, physical state, emotional state, and social traits (Todorov 2017). Table 1 summarizes the types of face-based perceptions and application contexts commonly studied in the literature.

Table 1. Relevant Literature on Face-Based Perception.

Notes: The complete references of Table 1 can be found in Web Appendix D. Y = the corresponding aspect has been studied in the representative references; N = the corresponding aspect has not been studied in the representative references.

The literature on face-based perceptions is too vast to be covered in this article, so we focus our discussion on the widespread usage of facial data in marketing and business contexts. We first provide a brief review of previous research on the use of face perceptions in business-related contexts; we subsequently discuss privacy issues associated with using facial data. We then describe the motivation for our work: to resolve the two types of privacy–perception trade-offs associated with the use of facial data in various marketing domains. Finally, we discuss the challenges and limitations of existing solutions in addressing the trade-off problems.

Face-Based Perceptions

Face-based perceptions have been widely used in business-related contexts. First, studies have consistently shown that face-based perceptions and preferences can affect individuals’ evaluations of others in various marketing contexts, including advertising, personal selling, service encounters, and networking/dating (e.g., Small and Verrochi 2009; Valentine et al. 2014; Xiao and Ding 2014). Customers demonstrate higher purchase intentions or satisfaction levels when spokespersons, salespeople, and service providers are perceived to be more attractive than their counterparts or demonstrate positive emotional states (e.g., DeShields, Kara, and Kaynak 1996; Hennig-Thurau et al. 2006; Kahle and Homer 1985; Wang et al. 2017). As a result, social inferences from job candidates’ facial information are widely used in the employee selection process (e.g., Caers and Castelyns 2011). For example, face-based perceptions such as maturity, dominance, and competence have been shown to influence the selection of employees (e.g., Gorn, Jiang, and Johar 2008; Graham, Harvey, and Puri 2016; Keh et al. 2013). Second, perceptions from customer facial data can be used to improve targeting and segmentation, firms’ understanding of customer preferences, and marketing effectiveness (Xiao, Kim, and Ding 2013). For example, Lu, Xiao, and Ding (2016) analyzed customers’ facial expressions in videos to infer the customers’ product preferences. Affectiva, an emotion analytics company, helps brands measure customer responses to digital content through facial frames of customers captured while they are viewing digital content online. Lapetus Solutions, an artificial intelligence company, infers various types of information related to customers’ health conditions from customers’ facial images, which has been used by insurance companies for segmentation and targeting.

Facial Privacy

The use of facial data in business contexts is a double-edged sword. As the main source of personal biometric information, the face can be used to identify a person’s demographics or identity. The prevalence of publicly available facial information gives rise to risks and concerns about facial privacy, including face-based discrimination and identity privacy violations.

Face-based discrimination

In business contexts, face-based perceptions of demographic information can bias human judgments and decisions (for a review, see Olivola, Funk, and Todorov [2014]). Studies have shown that job allocation decisions are affected by perceptions of employees’ gender and sexual dimorphism (e.g., Altonji and Blank 1999; Landau 1995). For example, Acquisti and Fong (2020) found that access to information about candidates’ sexual dimorphism and ethnicity via online social network profiles could bias employers’ callback decisions. The use of face information in automated hiring software faces widespread public backlash amid fears that it may lead to more biased hiring decisions (Martin 2018). Despite a large body of literature on the subject, to the best of our knowledge, scholars have yet to propose an effective method to protect disadvantaged groups from face-based discrimination, especially in online recruitment and job allocation contexts.

Identity privacy

As the use of visual data in business contexts has increased, so too have customers’ concerns about identity privacy when sharing images and videos (Cobb and Kohno 2017; Gross and Acquisti 2005). In a recent survey, more than 75% of the respondents reported that they did not feel comfortable with firms’ use of facial data in either a commercial or human resource context (Ada Lovelace Institute 2019). Many face images circulate daily on social media platforms (e.g., Facebook, LinkedIn), photo-sharing apps (e.g., Instagram), and online dating websites. A person viewing a photo on an “anonymous” website may therefore recognize the pictured individual from memory. Likewise, algorithms can match a photo on an anonymous website, such as a dating site, to a photo on a nonanonymous website such as LinkedIn or Facebook, thus revealing the identity of the person on the anonymous website (Cobb and Kohno 2017).

From a firm’s perspective, the level of protection of customers’ personal data affects a firm’s marketing and financial performance. Privacy has been found to be a key driver of online trust (Martin, Borah, and Palmatier 2017). Higher levels of data security and privacy protection, as perceived by customers, can lead to higher levels of trust and increased purchase intentions, effective purchase behavior, and loyalty to a firm/brand (Martin, Borah, and Palmatier 2017). As a result, protecting customer privacy while gaining insights from customer data has been recognized as the top challenge for chief marketing officers of large firms such as HSBC, Yahoo, and Target, all of which have experienced data breaches in recent years (Benes 2018).

Motivation: Addressing Privacy–Perception Trade-Offs

These conflicting objectives must be addressed to provide value for stakeholders while reducing (or even eliminating) privacy infringement and discrimination. We formally define two major types of privacy–perception trade-offs when using facial data. A Type I identity protection–perception trade-off occurs when an optimizer (e.g., firm) attempts to preserve facial cues that are useful for human (e.g., customer) judgments or decisions while reducing facial cues that contain personally identifiable information, thus protecting facial identities. A Type II discrimination prevention–perception trade-off occurs when an optimizer attempts to preserve facial cues that aid decision making while reducing facial cues that may facilitate appearance-based discrimination.

Based on the literature, we identified five marketing domains (i.e., dating, networking, professional service evaluation, employee selection/allocation, and customer relationship management) that have pressing needs for effective tools to solve Type I (identity protection–perception) and/or Type II (discrimination prevention–perception) trade-off problems. As Table 2 shows, faces are used to achieve different objectives in each of these contexts.

Table 2. Application Domains for Privacy–Perception Trade-Off.

Notes: Y = perception of this dimension is desired in the corresponding application context; N = perception of this dimension is not desired in the corresponding application context; — = perception of this dimension is irrelevant or its relation to the application context is not clear.

Examples of Type I contexts may include dating and customer relationship management, where firms need to represent the target faces (the faces that are being observed) in a way that can serve as a basis for forming a “first impression” but prevent identification by perceivers (customers or persons who observe the face information). For example, in the online dating context, a face is typically linked to a user’s dating profile. Users may want to conceal their facial identities while retaining the ability to rely on face perceptions (including emotional and physical states and social traits) when screening potential dates. In the context of customer relationship management, a face is typically linked with a target customer. Employees may want to provide customized customer service based on information inferred from the customer’s face (e.g., approachability) without invading the customer’s identity privacy.

Examples of Type II contexts may include employee selection/allocation and professional service evaluation, where firms need to represent the target faces (e.g., service providers’ faces) in a way that can serve as a basis for matching the perceivers’ (e.g., customers’) preferences without inducing face-based discrimination. For example, in the employee selection/allocation context, a face is typically linked with a (potential) employee. Firms may want to improve the target customer’s experience, satisfaction, and purchase intention by selecting/allocating employees whose facial features match the target customer’s preferences on certain perceptual dimensions (e.g., pleasantness, trustworthiness) without discriminating against employees in terms of demographics (e.g., gender, age). In the professional service evaluation context, a face is typically linked with a professional service provider (e.g., accountant, banker) who may wish to reveal their facial information on dimensions that would help customers evaluate their service quality (e.g., approachability, confidence, trustworthiness, intelligence) while preventing demographics-based discrimination in customers’ judgments or decisions.

Both types of trade-off problems are potentially relevant to users in the social networking context (e.g., Facebook, LinkedIn), where a face is usually linked to a user’s personal profile. In addition to protecting their own identity privacy, users may want to avoid attracting interest or experiencing discrimination from others based on their gender or age.

Challenges in Resolving the Privacy–Perception Trade-Offs

Despite the large body of literature on face perceptions and facial privacy, to the best of our knowledge, scholars have not studied how to effectively address the privacy–perception trade-offs. The most relevant research domains are face anonymization, which focuses on anonymizing face identity information, and face perception modeling, which focuses on understanding the face information used in various face perceptions. We reviewed the existing methods in both domains based on their abilities to represent and preserve face information for human perception and to mask identity and demographic information. Table 3 provides a comparison of these methods.

Table 3. A Comparison of Existing Methods for Addressing Privacy–Perception Trade-Off.

Notes: High = the performance of the method on a given dimension is high; Medium = the performance of the method on a given dimension is medium; Low = the performance of the method on a given dimension is low; — = does not apply.

Face anonymization

To reduce the risk of identification in the course of facial data processing, considerable research in computer science has been directed toward the anonymization of faces—that is, the representation of original faces with modified or concealed face information. One way to conceal facial identity is by reducing the amount of identifiable information contained in a face image. Naive methods such as masking, pixelization, and blurring are commonly used, all of which involve removing critical information about important facial parts (e.g., eyes, nose) from the face (Newton, Sweeney, and Malin 2005). By doing so, however, these naive methods can quickly reduce the reliability (i.e., the extent of agreement with perceptions based on the original face image) of human perception on privacy-insensitive dimensions of the face, including emotional states and social traits. Another way to anonymize facial identity information is by replacing the original face image with a surrogate face that has been constructed from a cluster of selected faces (Newton, Sweeney, and Malin 2005). This face replacement method can be modified to preserve the original face’s demographic information (e.g., gender, age) by selecting surrogate faces with the same demographic characteristics (Du et al. 2014). However, it is unable to preserve facial information used for face perceptions on other dimensions, such as emotional states and social traits.
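The information loss caused by the naive methods is easy to see in code. The following is a minimal NumPy sketch, assuming a grayscale face image stored as a 2-D array; the function names, the rectangular mask, and the block size are illustrative choices, not the exact procedures studied by Newton, Sweeney, and Malin (2005).

```python
import numpy as np


def mask_region(img: np.ndarray, top: int, left: int, height: int, width: int,
                value: float = 0.0) -> np.ndarray:
    """Masking: overwrite a rectangular region (e.g., the eyes) with a constant."""
    out = img.astype(float).copy()
    out[top:top + height, left:left + width] = value
    return out


def pixelate(img: np.ndarray, block: int) -> np.ndarray:
    """Pixelization: replace every block x block tile with its mean intensity."""
    out = img.astype(float).copy()
    h, w = out.shape
    for r in range(0, h, block):
        for c in range(0, w, block):
            tile = out[r:r + block, c:c + block]  # a view into `out`
            tile[...] = tile.mean()
    return out


img = np.arange(16, dtype=float).reshape(4, 4)
pixelated = pixelate(img, 2)
masked = mask_region(img, 0, 0, 2, 2)
```

Every pixel inside a tile becomes indistinguishable from its neighbors, so the fine structure of the eyes, nose, and mouth is removed together with the identity cues; larger block sizes remove more of it.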

Face perception modeling

Unlike face anonymization research, face perception modeling focuses on identifying key information in an original face image that influences human perception. Previous studies have proposed two main approaches in this domain.

One is the physiognomic (PS) approach, whereby the target face is represented by PS features derived from the coordinates of facial landmarks on key facial parts (i.e., eyebrows, eyes, nose, mouth, and face). The PS approach has been a popular method of investigating the drivers of various face perceptions (Todorov et al. 2008; Vernon et al. 2014). However, it suffers from several inherent problems, including arbitrary facial landmark placement, arbitrary facial feature selection, and a lack of information for the regions between landmarks (Xiao and Ding 2014). Moreover, typical PS measures fail to accurately capture the subtle variations among faces (e.g., shape of face; Xiao and Ding 2014). Because the face image is reduced to a set of points in the PS approach (see Web Appendix C for an example), it is difficult for a human being to make meaningful inferences from it.
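To illustrate how the PS approach reduces a face to a handful of landmark-based measures, here is a small sketch. The landmark names, coordinates, and the three ratios are hypothetical choices made for illustration; deciding which landmarks and ratios to use is exactly the kind of analyst judgment that makes landmark placement and feature selection arbitrary.

```python
import math

Point = tuple[float, float]


def dist(p: Point, q: Point) -> float:
    """Euclidean distance between two landmarks."""
    return math.hypot(p[0] - q[0], p[1] - q[1])


def ps_features(lm: dict[str, Point]) -> dict[str, float]:
    """Hypothetical PS features: landmark distances divided by face width."""
    face_width = dist(lm["face_left"], lm["face_right"])
    return {
        "eye_separation": dist(lm["left_eye_inner"], lm["right_eye_inner"]) / face_width,
        "mouth_width": dist(lm["mouth_left"], lm["mouth_right"]) / face_width,
        "nose_to_mouth": dist(lm["nose_tip"], lm["mouth_center"]) / face_width,
    }


landmarks = {
    "face_left": (0.0, 0.0), "face_right": (100.0, 0.0),
    "left_eye_inner": (40.0, 30.0), "right_eye_inner": (60.0, 30.0),
    "mouth_left": (35.0, 70.0), "mouth_right": (65.0, 70.0),
    "nose_tip": (50.0, 50.0), "mouth_center": (50.0, 70.0),
}
print(ps_features(landmarks))
```

Everything between the landmarks (for example, the curvature of the jawline) is discarded, which is the information gap noted by Xiao and Ding (2014).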

The other approach is the eigenface (EF) approach, whereby the target face is represented by a linear function of eigenvectors (referred to as “eigenfaces”) derived from the covariance matrix of a set of face images (for details, see Turk and Pentland [1991]). It has been widely used for automatically detecting and matching faces in images and has also been used as a modeling method to understand customers’ preferences for faces (for details, see Xiao and Ding [2014]). The EF approach has several limitations. Its effectiveness relies heavily on the texture information contained in the target face image and the training images, making it sensitive to changes in image quality, illumination, scale, and facial expression (Chellappa, Wilson, and Sirohey 1995), which are prevalent in natural face images. Calculating the eigenvectors is computationally time-consuming and must be repeated when new faces are added to the training database. More importantly, it is not feasible for humans to make any type of inference about the target face from a weighted combination of eigenfaces.
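For readers unfamiliar with eigenfaces, the core computation fits in a few lines of NumPy. This sketch assumes the faces are already aligned, equally sized grayscale images flattened into rows; it uses the singular value decomposition of the mean-centered data, whose right singular vectors are the eigenvectors of the pixel covariance matrix. It is an illustration, not the implementation used by Turk and Pentland (1991) or Xiao and Ding (2014).

```python
import numpy as np


def eigenfaces(faces: np.ndarray, k: int) -> tuple[np.ndarray, np.ndarray]:
    """faces: (n_images, n_pixels). Returns the mean face and the top-k eigenfaces (k, n_pixels)."""
    mean_face = faces.mean(axis=0)
    centered = faces - mean_face
    # Rows of vt are orthonormal eigenvectors of centered.T @ centered
    # (the covariance matrix up to a constant factor).
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean_face, vt[:k]


def project(face: np.ndarray, mean_face: np.ndarray, components: np.ndarray) -> np.ndarray:
    """Weights of one face on the eigenfaces."""
    return components @ (face - mean_face)


def reconstruct(weights: np.ndarray, mean_face: np.ndarray, components: np.ndarray) -> np.ndarray:
    """Approximate a face as the mean face plus a weighted sum of eigenfaces."""
    return mean_face + weights @ components
```

The face is summarized by the weight vector returned by `project`, a list of numbers with no visual meaning to a human viewer, and adding faces to the training set requires recomputing the decomposition.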

From our review, neither the face anonymization nor face perception modeling methods can effectively represent a face in a way that masks privacy information (e.g., identity, age, gender) while allowing a human to make reasonably reliable perceptions. In the next section, we propose a CaF framework to address this gap in the literature and practice.

The CaF Framework

To address the two types of trade-off problems in the five marketing domains, it is important that firms represent face information in a way that can effectively (1) conceal the facial cues that lead to undesired perceptions (e.g., identity, age, gender), thus reducing the privacy risk and encouraging the sharing of face information; (2) preserve facial cues that lead to desired perceptions (e.g., emotional states, social traits), thus aiding human judgment and decision making; (3) allow for direct modeling of relations between the face information and the perceiver’s judgments/decisions, thus improving business practices related to faces; and (4) encompass diverse real-life business contexts.

In this section, we begin by examining the visual cues driving different types of face perceptions to identify a solution that can satisfy these four criteria. We then propose a CaF framework that is grounded in social psychology, neuropsychology, and computer vision research as a solution. The CaF framework has two main components: the representation component presents facial contours instead of face images to the perceivers, and the modeling component quantifies the relationship between facial features and perceptions using techniques from the face perception and computer vision literature streams.

Visual Cues Driving Face Perceptions

Humans use two main types of visual cues in face perception: texture cues and structural cues (Meinhardt-Injac, Persike, and Meinhardt 2013). Texture cues reflect variations in intensity and color on the facial surface. Evidence shows that texture cues provide critical information regarding a face’s identity, gender, and age and are thus essential in human face recognition, gender classification, and age perception (e.g., Bruce and Langton 1994; Johnston, Hill, and Carman 1992; Porcheron, Mauger, and Russell 2013). For example, a human’s face recognition performance drops by as much as 20%–30% when pigmentation and shading information are eliminated (Johnston, Hill, and Carman 1992). Bruce and Langton (1994) found that skin texture information plays an important role in human gender classification. Porcheron, Mauger, and Russell (2013) found that skin texture and facial contrast are essential for individuals to estimate age with reasonable accuracy. These findings indicate that removing texture cues may effectively mask the facial information that enables identification or induces gender/age-based discrimination.

Structural cues consist of the shapes of facial parts and their spatial relationships. The shapes of facial parts are largely determined by their contours (i.e., boundary outlines), which contain much of the biological information that is fundamental in face perception (Lestrel 2008). Considerable converging evidence shows that the contours (used interchangeably with “shape” in prior studies) of facial parts as well as their spatial configurations can effectively explain human face perception (Tanaka and Farah 1993) along various dimensions, including attractiveness, approachability, confidence, trustworthiness, dominance, baby-facedness, and emotional state (e.g., Todorov et al. 2008; Torrance et al. 2014; Vernon et al. 2014). Although evidence shows that structural cues also contribute to perceptions of identity, age, and gender when combined with texture cues (e.g., Bruce and Langton 1994; George and Hole 2000), the independent role of structural cues in face recognition or age/gender classification remains unclear.

These findings suggest that the trade-off problems may be resolved by removing texture cues from the face while preserving structural cues as captured in facial contours. Drawing on these findings, we propose a radical approach that uses facial contours as a solution satisfying the four criteria delineated at the beginning of this section. Next, we describe the two components of the CaF framework.

CaF Representation

In previous studies, face representation involved presenting a face image to a viewer. The CaF representation component instead presents a facial contour image as the visual stimulus. To the best of our knowledge, this study is the first to adopt this representation technique in academia or practice. In line with the literature and practice, the CaF representation component preserves complete contour information about five key facial parts (the eyebrows, eyes, nose, mouth, and overall face) as well as their spatial relations. Figure 1 provides an example of the CaF representation. Additional facial parts (e.g., ears) and three-dimensional contour information may also be used when appropriate.

Figure 1. An example of the CaF representation.

Compared with the existing face representation approaches used in face anonymization (see Table 3), the CaF representation preserves more information related to privacy-insensitive face perceptions. It can thus be used to elicit human perceptions for decision making in many business contexts. This is impossible using modified or concealed face representations and is not feasible using PS or EF representations.

CaF Modeling

Contour-as-face modeling involves two key steps: extracting facial features from the CaF representation and quantifying the effect of the facial features on face perceptions, usually through statistical models or machine learning approaches. The CaF modeling component extracts two types of information from the CaF representation: outline features (i.e., features capturing the holistic contour information of the five key facial parts) and nonoutline features (i.e., features capturing the configural information of facial parts, including the sizes and angles of the five facial parts and their spatial arrangements).

Outline feature extraction

To extract the outline features, the CaF modeling component applies the centroid distance–Fourier descriptor (CD-FD) method, which uses discrete Fourier transformation to convert the contours to mathematically precise Fourier coefficients. These Fourier coefficients are then converted into input variables (referred to as “Fourier descriptors”) for statistical or machine learning models. The CD-FD method is a popular technique for accurately representing contour information with features that remain stable under transformations such as translation and scaling. The following steps briefly describe the method (for a detailed description, see Web Appendix A).

Step 1: Acquire complete contour boundary outline information from the target object image (e.g., key facial parts such as the eyebrows, eyes, nose, mouth, and overall face). The contour boundary information can be extracted either manually or by applying contour/edge detection to the target image. After aligning the starting point and the nominal orientation of the contours, the complete contour boundary outline can be represented by a set of coordinates of contour boundary points, given by

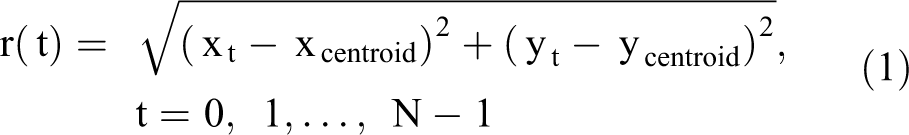
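Step 1 leaves open how the boundary points are sampled. One common choice, assumed in the sketch below, is to resample a manually traced closed polygon at N points equally spaced along its perimeter, starting from the first traced vertex; the function name and the default N = 128 are illustrative.

```python
import numpy as np


def resample_contour(vertices: np.ndarray, n_points: int = 128) -> np.ndarray:
    """Resample a closed polygon given as (m, 2) vertices to n_points equally spaced
    along its perimeter. The first vertex is treated as the aligned starting point;
    consecutive duplicate vertices are assumed to be absent."""
    closed = np.vstack([vertices, vertices[:1]])            # close the loop
    seg_len = np.linalg.norm(np.diff(closed, axis=0), axis=1)
    arc = np.concatenate([[0.0], np.cumsum(seg_len)])       # arc length at each vertex
    targets = np.linspace(0.0, arc[-1], n_points, endpoint=False)
    x = np.interp(targets, arc, closed[:, 0])
    y = np.interp(targets, arc, closed[:, 1])
    return np.column_stack([x, y])                          # rows are (x(t), y(t))


square = np.array([[0, 0], [1, 0], [1, 1], [0, 1]], dtype=float)
points = resample_contour(square, 8)
```

Equal arc-length spacing means that t indexes positions along the outline uniformly, so a longer or more curved segment of the contour is not over- or under-represented in the later Fourier analysis.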

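Step 2: Derive the centroid distance function from the coordinates of the contour boundary points. The centroid distance function, r(t), is given by the distance of the boundary points (x(t), y(t)) from the centroid of the contour, denoted as $(x_{\text{centroid}}, y_{\text{centroid}})$, and is represented by

$$r(t) = \sqrt{\left(x(t) - x_{\text{centroid}}\right)^2 + \left(y(t) - y_{\text{centroid}}\right)^2}, \quad t = 0, 1, \ldots, N-1,$$

where

$$x_{\text{centroid}} = \frac{1}{N}\sum_{t=0}^{N-1} x(t), \qquad y_{\text{centroid}} = \frac{1}{N}\sum_{t=0}^{N-1} y(t).$$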
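Step 2 translates directly into array operations. The sketch below assumes the boundary points are stored as an (N, 2) NumPy array whose rows are (x(t), y(t)) and takes the centroid to be the mean of the boundary points; the function name is illustrative.

```python
import numpy as np


def centroid_distance(points: np.ndarray) -> np.ndarray:
    """Centroid distance function r(t) for boundary points of shape (N, 2)."""
    centroid = points.mean(axis=0)                    # (x_centroid, y_centroid)
    return np.linalg.norm(points - centroid, axis=1)  # one distance per boundary point


t = np.arange(64) * 2 * np.pi / 64
circle = np.column_stack([5 + 3 * np.cos(t), -2 + 3 * np.sin(t)])
r = centroid_distance(circle)
```

Because every distance is measured from the contour's own centroid, shifting the whole contour leaves r(t) unchanged, which is the source of the translation invariance of the resulting descriptors.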

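Step 3: Obtain Fourier coefficients by applying discrete Fourier transformation to the centroid distance function r(t). The Fourier coefficients are given by

$$a_n = \frac{1}{N}\sum_{t=0}^{N-1} r(t)\,\exp\!\left(-\frac{i\,2\pi n t}{N}\right), \quad n = 0, 1, \ldots, N-1,$$

where i is the imaginary unit. Each Fourier coefficient $a_n$ is a complex number with magnitude $\lvert a_n \rvert$ and phase angle $\phi_n$, so that $a_n = \lvert a_n \rvert e^{i\phi_n}$.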
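In NumPy, Step 3 is a single FFT call. `np.fft.fft` computes the sum over t of r(t) exp(-i 2πnt/N) without a normalizing factor, so this sketch divides by N; that 1/N convention is an assumption and only rescales all coefficients by the same constant. Magnitudes and phase angles then come from `np.abs` and `np.angle`. The toy signal is illustrative.

```python
import numpy as np


def fourier_coefficients(r: np.ndarray) -> np.ndarray:
    """a_n = (1/N) * sum_t r(t) * exp(-i 2 pi n t / N) for n = 0, ..., N-1."""
    return np.fft.fft(r) / len(r)


n_points = 64
t = np.arange(n_points)
r = 2.0 + np.cos(2 * np.pi * 3 * t / n_points)  # toy centroid distance with one harmonic
a = fourier_coefficients(r)
magnitudes, phases = np.abs(a), np.angle(a)
```

For this toy signal, a_0 equals the mean distance 2, and the only other nonzero coefficients are a_3 and its mirror a_61, each with magnitude 0.5. Because r(t) is real, a_{N-n} is always the complex conjugate of a_n.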

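Step 4: Obtain the scale-invariant Fourier coefficients by normalizing the magnitudes of the Fourier coefficients by the zero-frequency component, or

$$b_n = \frac{\lvert a_n \rvert}{\lvert a_0 \rvert}, \quad n = 1, 2, \ldots, N/2,$$

where $\lvert a_0 \rvert$, the magnitude of the zero-frequency component, is proportional to the mean centroid distance and therefore scales with the size of the contour; dividing by it removes the effect of scale. Because r(t) is real valued, $a_{N-n}$ is the complex conjugate of $a_n$, so the coefficients with $n > N/2$ carry no additional information.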
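The effect of the normalization in Step 4 can be checked numerically. This sketch assumes NumPy, a 1/N normalization of the coefficients, and retention of harmonics 1 through N/2; it computes the normalized magnitudes for an ellipse and for an enlarged, shifted copy of it. The function name and test shape are illustrative.

```python
import numpy as np


def scale_invariant_magnitudes(points: np.ndarray) -> np.ndarray:
    """|a_n| / |a_0| for n = 1, ..., N/2, computed from (N, 2) boundary points."""
    r = np.linalg.norm(points - points.mean(axis=0), axis=1)  # centroid distance r(t)
    a = np.fft.fft(r) / len(r)                                # Fourier coefficients a_n
    return np.abs(a[1:len(r) // 2 + 1]) / np.abs(a[0])        # divide by zero-frequency term


t = np.linspace(0, 2 * np.pi, 128, endpoint=False)
ellipse = np.column_stack([2 * np.cos(t), np.sin(t)])
bigger = 2.5 * ellipse + np.array([40.0, -15.0])  # rescaled and translated copy
same = np.allclose(scale_invariant_magnitudes(ellipse), scale_invariant_magnitudes(bigger))
```

Scaling multiplies every a_n, including a_0, by the same factor, so the ratio cancels it. Magnitudes are also unaffected by a circular shift of the starting point, which changes only the phase angles.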

Step 5: Keep the first K scale-invariant Fourier coefficients that are needed to recover the original contour information with high accuracy. Although discrete Fourier transformation often results in many Fourier coefficients, only a small set (e.g., 10–15) is generally needed to capture the majority of variation among the contours and describe the original contour information with high accuracy (Zhang and Lu 2002). These Fourier coefficients are kept for further analysis.
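The claim that a small number of coefficients suffices can be checked by rebuilding r(t) from the zero-frequency term and the first K harmonics only. In this sketch (NumPy assumed; the ellipse, N = 128, and K = 10 are illustrative), each kept harmonic is paired with its conjugate mirror so that the reconstruction stays real.

```python
import numpy as np


def reconstruct_from_k(r: np.ndarray, k: int) -> np.ndarray:
    """Rebuild r(t) from a_0 and harmonics 1..k (requires k < N/2)."""
    n = len(r)
    a = np.fft.fft(r)
    kept = np.zeros_like(a)
    kept[0] = a[0]
    if k > 0:
        kept[1:k + 1] = a[1:k + 1]   # low-frequency harmonics
        kept[n - k:] = a[n - k:]     # their conjugate mirrors a_{N-n}
    return np.fft.ifft(kept).real


t = np.linspace(0, 2 * np.pi, 128, endpoint=False)
ellipse = np.column_stack([2 * np.cos(t), np.sin(t)])
r = np.linalg.norm(ellipse - ellipse.mean(axis=0), axis=1)
max_error = np.max(np.abs(reconstruct_from_k(r, 10) - r))
```

With K = 0 only the mean distance (a circle) survives; each additional harmonic adds finer detail to the outline.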

Step 6: Construct the Fourier descriptors of the contour. Each Fourier descriptor is a vector derived from the corresponding scale-invariant Fourier coefficient. For the purpose of statistical modeling, each Fourier descriptor consists of the normalized magnitude
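The sketch below makes an explicit assumption about the descriptor's content: each facial part contributes only its K normalized magnitudes |a_n|/|a_0|, n = 1, ..., K, and other possible components, such as phase angles, are omitted. It then concatenates the parts into one row of model inputs. The part names, their order, one closed contour per part, and K = 10 are hypothetical choices.

```python
import numpy as np

# Hypothetical part names and order; each maps to one closed contour of shape (N, 2).
PARTS = ("eyebrows", "eyes", "nose", "mouth", "face")


def part_descriptors(points: np.ndarray, k: int) -> np.ndarray:
    """First k normalized magnitudes |a_n| / |a_0| of one contour."""
    r = np.linalg.norm(points - points.mean(axis=0), axis=1)
    a = np.fft.fft(r) / len(r)
    return np.abs(a[1:k + 1]) / np.abs(a[0])


def face_descriptor_row(contours: dict[str, np.ndarray], k: int = 10) -> np.ndarray:
    """Concatenate the descriptors of all parts into one row of length len(PARTS) * k."""
    return np.concatenate([part_descriptors(contours[p], k) for p in PARTS])
```

Stacking one such row per face yields an outline-feature matrix that can enter a statistical or machine learning model alongside the nonoutline features.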

Nonoutline feature extraction

The nonoutline features are extracted following the face perception literature (e.g., Vernon et al. 2014). First, align the contour image so that the centroids of the left and right eyes are level. Second, calculate five size variables (i.e., the sizes of the eyebrows, eyes, nose, mouth, and overall face)¹; four angle variables (i.e., the angles of the eyebrows, eyes, mouth, and overall face); and six spatial placement variables (i.e., eyebrow separation, eye separation, and the vertical placements of the eyebrows, eyes, nose, and mouth) from the aligned contour image. Finally, normalize the extracted features by face size (where applicable) to obtain scale-invariant features, resulting in 14 variables that are then used to represent the nonoutline features of the CaF representation, denoted by X.
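The alignment step and a few of the normalized measures can be sketched as follows. The part keys, the use of contour extents as size measures, and the three features computed are hypothetical simplifications for illustration; the sketch does not reproduce the full variable set or the exact definitions in Vernon et al. (2014).

```python
import numpy as np


def extent(v: np.ndarray) -> float:
    """Range (max - min) of a 1-D coordinate array."""
    return float(v.max() - v.min())


def nonoutline_subset(parts: dict[str, np.ndarray]) -> dict[str, float]:
    """parts maps 'left_eye', 'right_eye', 'mouth', and 'face' to (N, 2) contours."""
    left = parts["left_eye"].mean(axis=0)
    right = parts["right_eye"].mean(axis=0)
    dx, dy = right - left
    theta = -np.arctan2(dy, dx)                      # rotation that levels the eye centroids
    rot = np.array([[np.cos(theta), -np.sin(theta)],
                    [np.sin(theta), np.cos(theta)]])
    pivot = (left + right) / 2
    aligned = {name: (pts - pivot) @ rot.T for name, pts in parts.items()}

    face_w = extent(aligned["face"][:, 0])
    face_h = extent(aligned["face"][:, 1])
    eye_y = aligned["left_eye"][:, 1].mean()         # equals the right-eye level after alignment
    mouth = aligned["mouth"]
    eye_sep = aligned["right_eye"][:, 0].mean() - aligned["left_eye"][:, 0].mean()
    return {
        "eye_separation": eye_sep / face_w,          # normalized by face width
        "mouth_width": extent(mouth[:, 0]) / face_w,
        "mouth_vertical": (mouth[:, 1].mean() - eye_y) / face_h,
    }
```

Because each measure is a difference or extent divided by a face dimension, rotating, enlarging, or shifting the whole contour image leaves the values unchanged.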

The CaF model specification

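The CaF model quantifying the effect of facial contours on face perceptions can thus be written as

$$Y = f(\mathrm{FD}, X) + \varepsilon,$$

where Y represents the perception of a facial contour image, FD denotes the Fourier descriptors (outline features) of the five key facial parts, X represents the nonoutline features of the contour image, f(·) is a statistical or machine learning model, and ε is an error term.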
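As one concrete instance of the CaF model, the sketch below assumes a linear specification estimated by ordinary least squares with NumPy, in which the perception rating is regressed on each face's Fourier descriptors and nonoutline features. The linear form and the function names are illustrative assumptions; with many descriptors relative to the number of faces, a penalized regression or a machine learning model may be the better choice.

```python
import numpy as np


def fit_linear_caf(fourier_desc: np.ndarray, nonoutline: np.ndarray, y: np.ndarray):
    """OLS fit of y = b0 + fourier_desc @ beta + nonoutline @ gamma + error.

    fourier_desc: (n_faces, n_descriptors); nonoutline: (n_faces, n_features); y: (n_faces,).
    Returns (b0, beta, gamma).
    """
    design = np.column_stack([np.ones(len(y)), fourier_desc, nonoutline])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    k = fourier_desc.shape[1]
    return coef[0], coef[1:1 + k], coef[1 + k:]


def predict_linear_caf(b0, beta, gamma, fourier_desc, nonoutline):
    """Predicted perception ratings for new contour images."""
    return b0 + fourier_desc @ beta + nonoutline @ gamma
```

Keeping the outline and nonoutline coefficients separate makes it possible to ask which type of contour information drives a given perceptual dimension.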

We note that our proposed CaF modeling method is a general tool that can be applied to face perception modeling regardless of whether the perception is based on face images or facial contour images (as used in the CaF representation). It can also be used for a wide variety of face perception or face preference modeling applications (e.g., face-related recommendation systems).

Compared with the existing methods used in face perception modeling (see Table 3), the CaF modeling method has several advantages. First, unlike the PS approach, which only captures a small portion of the contour outline information of facial parts, the Fourier descriptors serve as a holistic measure that captures the original facial contour with little loss of information. Second, the CaF method is an objective and robust measure of facial features. Unlike the PS approach, the CaF method does not make a priori assumptions about the importance of specific facial information (e.g., facial landmarks). Unlike the texture features used in the EF approach, the contour information used in the CaF approach is largely invariant to changes in image quality and background. Moreover, the Fourier descriptors are invariant to translation and scale change (Zhang and Lu 2002). Third, the CaF method is easy to implement and computationally simple, which is especially helpful when working with large face data sets (e.g., images or videos on social media). Finally, because of the CaF method’s ability to explicitly describe the contour at different levels of detail (using an additive, orthogonal Fourier series), the researcher can control the contour information entering the model through the number of Fourier coefficients, thus enabling understanding of the effects of different types of facial contour information on face perceptions.

In the following sections, we present analyses from three empirical studies that examine the effectiveness of the proposed CaF framework in addressing the face dilemma. Study 1 investigates how effectively the CaF framework (CaF representation and CaF modeling) captures and preserves the face information people use in perceptions on commonly assessed dimensions, as well as the underlying mechanisms. Study 2 examines how effectively the CaF representation masks private information (e.g., identity, age, gender) in real life. Study 3 implements the CaF framework in a real-life online dating context to investigate the feasibility of using it for decision making in actual business contexts.

Study 1: The Effectiveness of CaF for Preserving Perception

We aimed to answer the following research questions in Study 1: (1) Do contours of facial parts contain important information that people use in perceptions of faces? (2) If the facial contour information does affect face perceptions, can people make reliable inferences from the CaF representation on the dimensions that are commonly assessed in marketing contexts? (3) What aspects of the facial contour image drive the contour-based perceptions across different perceptual dimensions? To answer the three research questions, we first characterized the relationship between facial contour information and human perceptions of faces on various dimensions using the CaF modeling method and compared the model performance to that of two benchmarks that model face perception. Then, we compared human perceptions on various dimensions after exposure to two types of face representations (facial contour images vs. face images). Finally, we analyzed which aspects of facial contour images influence humans’ perceptual inferences across different perceptual dimensions using variations of the CaF model.

Study Design

Stimulus faces and contour images

To elicit real-life face perception experiences, we used images of real faces from publicly available sources, including university faculty webpages, company employee webpages, and job search sites. We selected 216 stimulus faces (107 men, 109 women) that satisfied the following criteria: (1) not a celebrity (and no resemblance to a celebrity), (2) reasonable resolution (photo size larger than 100 KB), (3) frontal face view, and (4) contours of all facial parts (eyebrows, eyes, nose, mouth, overall face) visible and clear. This ensured that inferences were not affected by poor image quality or by nonvisual factors irrelevant to face or contour information (e.g., recognizing a well-known person). To generate the face image stimuli, we cropped the face photos to include only the face and neck area, thereby reducing the effects of visual factors other than the face. To generate the contour image stimuli, we manually extracted the contours of five key facial parts for each face image: the eyebrows, eyes, nose, mouth, and overall face shape (for an example, see Figure 1). The 216 face images and corresponding facial contour images were used as visual stimuli for the study.

Data collection

Following the face perception literature (e.g., Todorov et al. 2008; Vernon et al. 2014), we used 15 perceptual dimensions that have proven critical in forming first impressions. These dimensions fall into four categories: demographics (age, sexual dimorphism); emotional state (smile); apparent physical state (health, fatness); and social traits (aggressiveness, approachability, arousal, attractiveness, baby-facedness, confidence, dominance, intelligence, pleasantness, trustworthiness). We used a between-subjects design to eliminate any potential confusion (and interaction) caused by asking the same person to provide both face-based and contour-based perceptions.

Three hundred fifty-four participants (98 men, 256 women) were in the face image group. Each participant was presented with randomly selected (male or female) face images and asked to rate each image on the 15 dimensions using a seven-point scale. Participants were asked to provide ratings only if they did not know the individuals depicted in the images, and they could decide how many images to rate. To eliminate potential own-gender perception bias (Hills et al. 2018), we used only the ratings of opposite-gender face images (about half of the total ratings collected) in the subsequent data analysis; our conclusions were not qualitatively different when all ratings were included. On average, each participant rated 9.3 face images of the opposite gender.

Five hundred seventy participants (158 men, 412 women) were in the contour image group. We used the same procedure as for the face image group but substituted contour images for face images. Because this was the first time that contours were used as stimuli for face perceptions, we wanted to avoid introducing artificial effects by asking participants to rate dimensions that could be difficult to evaluate from contours. Therefore, we asked participants to rate any of the 15 dimensions they found interesting and were able to rate using facial contours.² To avoid own-gender perception bias (Hills et al. 2018), participants in the contour group were shown only contour images of the opposite gender. On average, each participant rated 25.9 contour images of the opposite gender.

Measures

For each face/contour image in the database, we obtained three sets of information: (1) nonoutline features, (2) outline features, and (3) the participants' average ratings of the face image and the corresponding contour image on the 15 dimensions. We derived 14 nonoutline features for each face/contour image following the procedure described in the CaF modeling subsection. To facilitate statistical analysis, we standardized the 14 nonoutline features to a mean of 0 and a variance of 1, yielding 14 nonoutline variables. The outline features were obtained using the CD-FD method described in the CaF modeling subsection. We retained the Fourier coefficients needed to reconstruct the contour with a root mean square error (RMSE) ratio below 5%.³ The numbers of Fourier coefficients retained for the left eyebrow, left eye, nose, mouth, and overall face were 5, 5, 12, 9, and 9, respectively. More coefficients are needed to capture features with subtle but important differences, such as the nostril area of the nose contour, whose details vary substantially and convey different perceptual information. In total, 40 Fourier descriptors (i.e., 80 outline features) were retained for the five facial parts. We standardized the Fourier descriptors to a mean of 0 and a variance of 1, resulting in 80 outline variables. Overall, we collected 48,013 valid face-based ratings and 101,630 valid contour-based ratings on the 15 dimensions. On average, each face image received 15 valid ratings on each perceptual dimension, and each contour image received 31.
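The 5% retention rule and the standardization step can be sketched as follows, assuming each facial part's contour has already been turned into a periodic one-dimensional signature (for example, a centroid-distance signature). The exact definition of the RMSE ratio is in note 3, which is not reproduced here; this sketch assumes RMSE of the truncated reconstruction divided by the signature's standard deviation. All function names are hypothetical, and the sketch will not reproduce the paper's per-part counts (5, 5, 12, 9, 9), which come from its own contours.

```python
import numpy as np


def truncated_reconstruction(signal, k):
    """Rebuild a real, periodic contour signature from its DC term and first k harmonics."""
    spectrum = np.fft.fft(signal)
    n = len(signal)
    kept = np.zeros_like(spectrum)
    kept[0] = spectrum[0]
    for h in range(1, k + 1):
        kept[h] = spectrum[h]
        kept[n - h] = spectrum[n - h]  # conjugate partner, so the result stays real
    return np.fft.ifft(kept).real


def coefficients_needed(signal, max_ratio=0.05):
    """Smallest k whose reconstruction RMSE ratio is below max_ratio.

    ASSUMPTION: RMSE ratio = RMSE(reconstruction) / std(signal).
    """
    signal = np.asarray(signal, dtype=float)
    scale = signal.std()
    if scale == 0:  # a constant signature (a circle) needs no harmonics
        return 0
    for k in range(len(signal) // 2 + 1):
        residual = signal - truncated_reconstruction(signal, k)
        if np.sqrt(np.mean(residual ** 2)) / scale < max_ratio:
            return k
    return len(signal) // 2


def standardize(features):
    """Column-wise z-scores: mean 0 and variance 1 for every variable."""
    features = np.asarray(features, dtype=float)
    return (features - features.mean(axis=0)) / features.std(axis=0)


# Usage: a signature with a strong 2nd harmonic and a faint 7th harmonic.
t = np.linspace(0, 2 * np.pi, 64, endpoint=False)
r = 3 + 0.5 * np.cos(2 * t) + 0.05 * np.cos(7 * t)
print(coefficients_needed(r))  # 7
```

With a looser threshold of 20%, the faint 7th harmonic is no longer needed and two harmonics suffice, which mirrors how parts with subtle but important detail (such as the nose) end up requiring more coefficients.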

Analysis and Results

The role of contour information in perceptions of faces

We examine the role of facial contour information in the perceptions of faces by comparing the model fit and prediction performance of the CaF method with that of two existing face perception models; namely, the PS model (Vernon et al. 2014) and the EF model (Xiao and Ding 2014). We begin the analysis by describing the specifications of the three models.

As discussed previously, the PS model extracts PS measures from the coordinates of salient landmarks on human faces. Following the state-of-the-art work of Vernon et al. (2014), we manually labeled 179 facial landmarks on the 216 face images and derived 47 PS measures from the landmark placements: 37 related to the five key facial parts in the CaF representation, and the remaining 10 related to other facial regions such as the ears, cheekbones, and cheeks. We compared the CaF model with a PS model using the 37 PS measures as independent variables (see Web Appendix C for a list of the variables used in the PS model). We also compared the CaF model with a PS model using all 47 PS measures; the conclusions were not qualitatively different (see Web Appendix C).

The EF model extracts facial texture features as loadings on eigenvectors derived from the covariance matrix of training face images (for details, see Turk and Pentland [1991]). Xiao and Ding (2014) applied this method in a marketing context to model preferences for faces in print ads. Following their procedure, we first normalized the 216 face images to remove variation related to the angle and size of the face. We then obtained the eigenvectors and eigenvalues using principal component analysis and retained the 57 eigenvectors that together explained 95% of the variance among the face images. Finally, we calculated the 57 × 1 loading vector for each face; these EF loadings were used as independent variables in the EF model.
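For readers unfamiliar with the EF pipeline, the following is a generic eigenface sketch in the spirit of Turk and Pentland (1991), not Xiao and Ding's code. It assumes the faces are already aligned, normalized, and flattened to equal-length pixel vectors; it keeps the smallest number of components whose cumulative explained variance reaches 95% and returns each face's loading vector. The function name and the synthetic data in the usage lines are illustrative.

```python
import numpy as np


def eigenface_loadings(images, var_target=0.95):
    """Project aligned faces onto the eigenfaces that explain var_target of the variance.

    images: (n_faces, n_pixels) array; each row is one normalized face image.
    Returns (loadings, components, mean_face); loadings has shape (n_faces, k).
    """
    X = np.asarray(images, dtype=float)
    mean_face = X.mean(axis=0)
    centered = X - mean_face
    # The right singular vectors of the centered data are the eigenvectors of the
    # pixel covariance matrix, so the large covariance matrix is never formed.
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)
    # Smallest k whose cumulative explained variance reaches var_target.
    k = min(int(np.searchsorted(np.cumsum(explained), var_target)) + 1, len(s))
    components = vt[:k]
    loadings = centered @ components.T
    return loadings, components, mean_face


# Usage: 50 synthetic "faces" of 200 pixels driven by three latent patterns.
rng = np.random.default_rng(0)
patterns = rng.normal(size=(3, 200))
scores = rng.normal(size=(50, 3)) * np.array([10.0, 8.0, 6.0])
faces = scores @ patterns + 0.01 * rng.normal(size=(50, 200))
loadings, components, mean_face = eigenface_loadings(faces)
print(loadings.shape)  # (50, 3)
```

In the study's setting the analogous call on the 216 normalized face images would return a 216 × 57 loading matrix, one 57 × 1 vector per face.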

For all three models, the mean perception ratings of each face image on the 15 dimensions were used as dependent variables, denoted as

We compared the three models on both goodness of fit and leave-one-out prediction performance.⁴ The results appear in Table 4. Overall, the CaF model performs best both in explaining the variance in face-based perception ratings (average R² = .74; average adjusted R² = .54) and in prediction (average mean square error [MSE] = .36), compared with the PS model (average R² = .56; average adjusted R² = .47; average MSE = .40) and the EF model (average R² = .56; average adjusted R² = .40; average MSE = .48). On most dimensions, the CaF model performs as well as or better than the other two models, both in predicting face-based perception ratings and in explaining their variance. These results suggest that, relative to the PS and EF features, contour features can improve the explanatory and predictive power of face perception models across various dimensions.
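Two quantities in Table 4 can be made concrete with a small sketch: adjusted R² and leave-one-out MSE for an ordinary least squares model. The sketch assumes OLS with an intercept (the text does not spell out the estimator for these face-based models), and the function names are hypothetical. As a consistency check, plugging the reported average R² values into the adjusted-R² formula with n = 216 images and 94 (14 nonoutline + 80 outline), 37, and 57 predictors recovers the reported averages of .54, .47, and .40; because adjusted R² is linear in R² for fixed n and p, averaging before or after the adjustment gives the same result.

```python
import numpy as np


def adjusted_r2(r2, n, p):
    """Adjusted R^2 for n observations and p predictors plus an intercept."""
    return 1 - (1 - r2) * (n - 1) / (n - p - 1)


def ols_r2_and_loo_mse(X, y):
    """Fit OLS with an intercept; return (in-sample R^2, leave-one-out MSE).

    Uses the hat-matrix identity: the leave-one-out residual of case i equals
    e_i / (1 - h_ii), so the model does not have to be refit n times.
    """
    y = np.asarray(y, dtype=float)
    X = np.column_stack([np.ones(len(y)), np.asarray(X, dtype=float)])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    r2 = 1 - resid @ resid / np.sum((y - y.mean()) ** 2)
    leverage = np.einsum("ij,ji->i", X, np.linalg.pinv(X))  # diagonal of the hat matrix
    loo_resid = resid / (1 - leverage)
    return r2, float(np.mean(loo_resid ** 2))


# Reported average R^2 values, n = 216 images, and 94 / 37 / 57 predictors.
for name, r2, p in [("CaF", 0.74, 94), ("PS", 0.56, 37), ("EF", 0.56, 57)]:
    print(name, round(adjusted_r2(r2, 216, p), 2))
# prints, one per line: CaF 0.54, PS 0.47, EF 0.4
```

The hat-matrix shortcut matters with 94 predictors and 216 images: it gives exact leave-one-out residuals for OLS from a single fit.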

Model Comparison: CaF, PS, and EF.

Notes: The best models are highlighted in bold.

Compared with the PS model, the CaF model increases the proportion of explained variance (after adjusting for the number of independent variables) on most dimensions, including baby-facedness (by 22%), intelligence (by 16%), age (by 13%), dominance (by 11%), aggressiveness (by 11%), arousal (by 8%), and confidence (by 8%). This suggests that the contours of the regions between landmarks contain important information people need to make useful inferences on these dimensions. The PS and CaF models perform similarly on the smile, trustworthiness, pleasantness, attractiveness, and approachability dimensions, all of which are correlated with the perception of valence (Oosterhof and Todorov 2008). This suggests that contour features and PS measures are consistent in capturing the facial information that matters for valence perceptions along these dimensions. The EF model appears to perform worst on most dimensions except health, which is largely affected by skin texture information (Henderson et al. 2016). This provides evidence that the structural cues captured by contour features play a more important role in most face perceptions than the texture cues captured by eigenvectors.

These results demonstrate that (1) the complete contours of facial parts contain important information people need for face perceptions, and (2) compared with the two state-of-the-art benchmark models, the CaF model is generally more effective in capturing the key facial information driving face perceptions on most dimensions. These findings provide strong evidence for the use of contour information in modeling face perceptions and justify the use of the CaF framework in this context.

Comparing perceptions of contour images versus face images

To examine the effectiveness of the CaF representation in preserving information for commonly assessed face perceptions, we compared human perceptions on the 15 dimensions after exposure to two types of face representations (facial contour images vs. face images). The perceptions based on face images were used as benchmarks to evaluate the reliability of contour-based perceptions. We evaluated the effectiveness of the CaF representation from two aspects: (1) whether and to what extent contour-based perceptions relate to perceptions based on original face images and (2) how contour-based perceptions differ from face-based perceptions across the different perceptual dimensions.

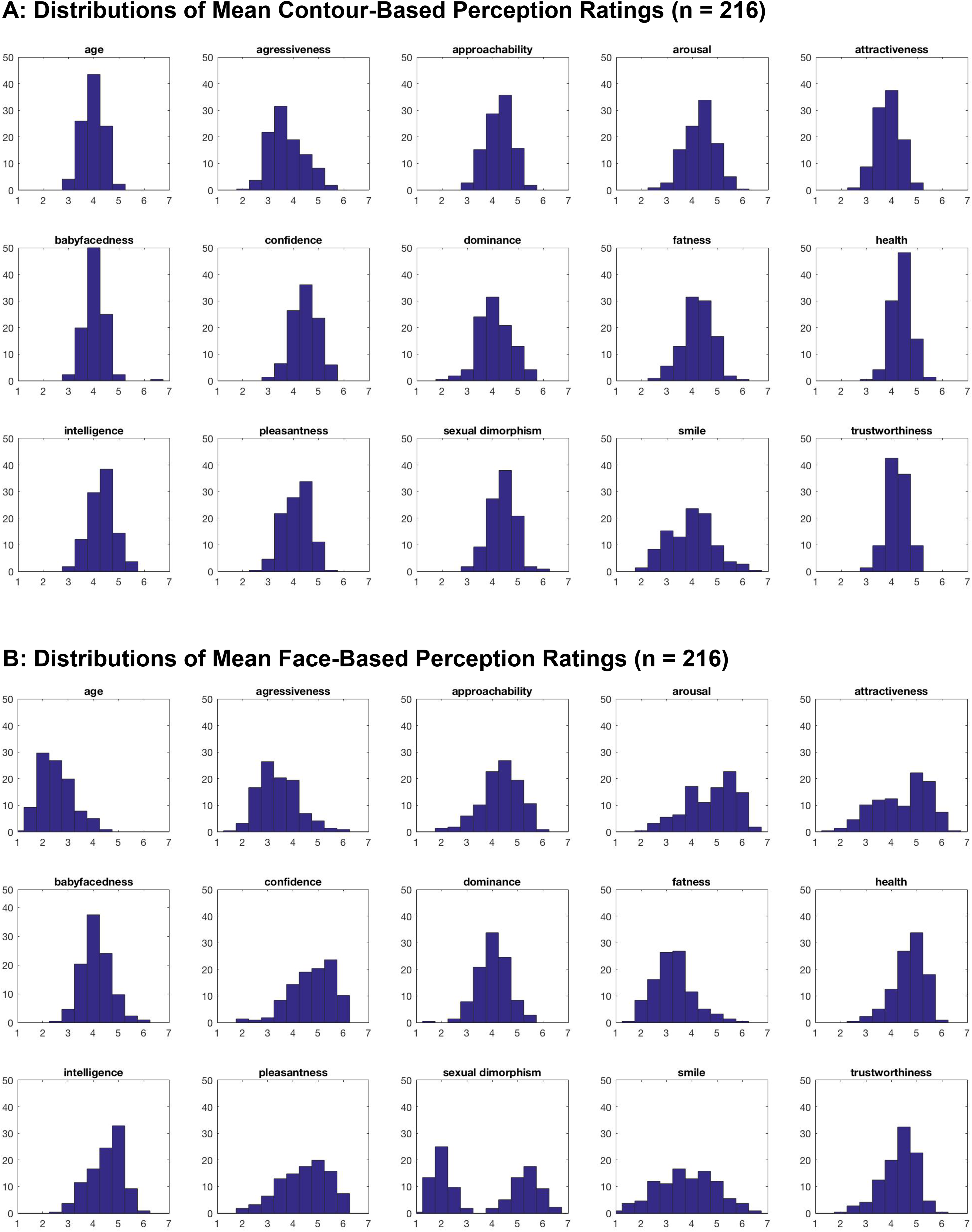

For each face image and its corresponding contour image, we calculated the mean perception rating on each dimension. Figure 2 displays the distributions of the mean perception ratings for the 216 contour images and 216 face images on all 15 dimensions. To evaluate the relationship between contour-based and face-based perceptions and the reliability of contour-based perceptions, we calculated the Pearson correlation and the intraclass correlation (ICC, a measure widely used in reliability analysis; for details of ICC criteria, see Hallgren [2012]) between the contour-based and face-based mean perception ratings for each of the 15 dimensions. Averaged across the 15 dimensions, the correlation was .41 and the ICC was .51, indicating that people can make reasonably reliable inferences from contour images.

Distributions of mean contour-based versus face-based perception ratings, by dimension.
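The correlation and ICC computations described above can be sketched as follows. The text does not state which ICC form was used; this sketch assumes ICC(3,k) (two-way mixed effects, consistency, average measures), one of the forms Hallgren (2012) describes, with the two rating sources as the k = 2 columns. Treat the choice of form, the function names, and the synthetic data as assumptions.

```python
import numpy as np


def pearson_r(x, y):
    """Pearson correlation between two rating vectors."""
    return float(np.corrcoef(x, y)[0, 1])


def icc_3k(ratings):
    """ICC(3,k): two-way mixed effects, consistency, average of k measures.

    ratings: (n_targets, k) array; here column 0 could hold the mean contour-based
    rating and column 1 the mean face-based rating of each image (k = 2).
    """
    r = np.asarray(ratings, dtype=float)
    n, k = r.shape
    grand = r.mean()
    row_means = r.mean(axis=1, keepdims=True)
    col_means = r.mean(axis=0, keepdims=True)
    ms_rows = k * np.sum((row_means - grand) ** 2) / (n - 1)
    # Residual after removing target (row) and source (column) effects.
    resid = r - row_means - col_means + grand
    ms_error = np.sum(resid ** 2) / ((n - 1) * (k - 1))
    return float((ms_rows - ms_error) / ms_rows)


# Usage with synthetic mean ratings for 216 images on one dimension.
rng = np.random.default_rng(42)
contour = rng.normal(4, 0.5, size=216)
face = 0.6 * contour + rng.normal(0, 0.5, size=216) - 1.0
print(pearson_r(contour, face), icc_3k(np.column_stack([contour, face])))
```

A useful property of this form: when the two columns have equal variances, it equals 2r/(1 + r) (the Spearman-Brown formula), and adding a constant to one column leaves it unchanged, so a consistency ICC does not penalize systematic mean shifts between contour-based and face-based ratings.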

A closer examination reveals that the reliability of contour-based inferences varies across perceptual dimensions: it is much easier for humans to make reliable inferences from face contours about some dimensions (e.g., smile, approachability) than others (e.g., gender, age).

First, people can infer a positive emotional state and correlated dimensions such as pleasantness, attractiveness, approachability, arousal, and confidence (referred to as “valence” dimensions) with high reliability from contours. Specifically, contour-based perceptual inferences were quite reliable for six dimensions: smile (r = .81, p < .01; ICC = .88), pleasantness (r = .62, p < .01; ICC = .70), attractiveness (r = .62, p < .01; ICC = .63), approachability (r = .51, p < .01; ICC = .64), arousal (r = .55, p < .01; ICC = .66), and confidence (r = .49, p < .01; ICC = .60). These findings are consistent with prior research indicating that valence perceptions are highly correlated with each other and are driven by expression signals such as a smile (Oosterhof and Todorov 2008; Todorov et al. 2008; Vernon et al. 2014). A further analysis of the discrepancies between mean contour-based and face-based perceptions on the valence dimensions suggests that valence is perceived as slightly lower in contour images (contour vs. face images: attractiveness M = 3.87 vs. 4.43, p < .01; pleasantness M = 4.14 vs. 4.46, p < .01; arousal M = 4.31 vs. 4.76, p < .01; confidence M = 4.46 vs. 4.76, p < .01). Interestingly, faces appear more homogeneous on these dimensions in contour images than in face images: in our data set, contour-based perceptions of smile, approachability, attractiveness, pleasantness, arousal, and confidence vary less than face-based perceptions (smile SD = .89 vs. 1.14; approachability SD = .53 vs. .77; attractiveness SD = .47 vs. 1.06; pleasantness SD = .53 vs. .94; arousal SD = .59 vs. .98; confidence SD = .51 vs. .86; all p < .01). This indicates that people can make more differentiated judgments on these dimensions when given more facial information than contours alone.

Measured against face-based perceptions, contour-based perceptions on the fatness dimension were fairly reliable (r = .48, p < .01; ICC = .64), which is consistent with research suggesting that facial contour information is predictive of fatness (Mayer et al. 2017). In general, the faces in our database tended to be perceived as fatter in contour images than in face images (M = 4.21 vs. 3.27, p < .01; SD = .59 vs. .77, p < .01).

Second, it appears to be much harder, or even impossible, for humans to make reliable inferences from contours on the two demographic dimensions of gender and age and on their correlated dimensions. For example, Figure 2, Panels A and B, show that participants can perceive gender reasonably well from face images (Figure 2, Panel B, sexual_dimorphism) but fail to identify gender-specific facial features in contours (Figure 2, Panel A, sexual_dimorphism). This indicates that masculine and feminine facial cues are less distinguishable in contour images (r = .26, p < .01; ICC = .24). This finding is consistent with prior studies showing that texture cues such as skin color and contrast are important for gender perception (Bruce and Langton 1994). Contour-based perceptions of the second demographic dimension, age, were also unreliable (r = .31, p < .01; ICC = .44). A comparison of Panels A and B on the age dimension shows that age perceptions based on contour images deviate significantly from those based on the original face images (M = 3.98 vs. 2.52, p < .01; SD = .42 vs. .67, p < .01).⁵ Moreover, contour-based and face-based perceptions of baby-facedness, another age-related dimension, are not significantly correlated (r = .05, p > .10; ICC = .09), indicating that participants cannot effectively infer baby-facedness from contour images. Our analyses seem to support prior findings that perceptions of age and age-related dimensions are largely driven by texture cues such as skin contrast and skin pigmentation (Porcheron, Mauger, and Russell 2013), which are absent from contour images.

Contour-based inferences on three dimensions correlated with gender and age (dominance, aggressiveness, and health; Henderson et al. 2016; Oosterhof and Todorov 2008) were also not reliable (dominance: r = .14, p < .05, ICC = .25; aggressiveness: r = .25, p < .01, ICC = .40; health: r = .35, p < .01, ICC = .47). This supports prior findings that perceptions of dominance, aggressiveness, and health are sensitive to signals of physical strength related to sexual dimorphism (gender) and maturity (age) (Henderson et al. 2016; Oosterhof and Todorov 2008) and provides additional evidence that physical strength signals are contained primarily in texture information rather than in contour information. Contour-based perceptions on two dimensions known to relate to both the valence dimensions and gender/age (intelligence and trustworthiness; Oosterhof and Todorov 2008) are also less reliable (intelligence: r = .35, p < .01, ICC = .50; trustworthiness: r = .34, p < .01, ICC = .45). This indicates that both facial contour and texture information are needed to make reliable perceptions on these two dimensions.

Overall, the evidence suggests that humans can make effective inferences from facial contours on perceptual dimensions other than sexual dimorphism (gender), age, and their correlated dimensions. This points to the great potential of the CaF representation for masking age- and gender-related facial cues to protect privacy and reduce discrimination while preserving the information people need to make relevant inferences on other dimensions such as smile, pleasantness, attractiveness, approachability, arousal, and confidence.

Heterogeneity among faces and the reliability of contour-based perception

Figure 2 suggests that heterogeneity among faces may also account for variation in the reliability of contour-based perception. We explored this possibility through a cluster analysis of the relative differences between the mean contour-based and face-based perception ratings on all 15 dimensions. We ran K-means clustering for K = 1 through 6; the gap statistic (Tibshirani, Walther, and Hastie 2001) favored the four-cluster solution. The four clusters contain 69, 36, 77, and 34 images, respectively.
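The cluster-selection step can be sketched with plain NumPy. This is a generic implementation of K-means (k-means++ seeding, several restarts) and of the gap statistic of Tibshirani, Walther, and Hastie (2001) with a uniform reference distribution over the data's bounding box; it is not the authors' code. In this application each row of X would be one image's vector of 15 relative differences, assumed here to be (contour mean - face mean) / face mean, since the text does not give the exact formula. Function names and the synthetic blobs are illustrative.

```python
import numpy as np


def kmeans_wss(X, k, rng, n_init=10, n_iter=100):
    """Lloyd's k-means with k-means++ seeding; returns the best within-cluster SS."""
    best = np.inf
    for _ in range(n_init):
        # k-means++ seeding: each new center is drawn with probability
        # proportional to its squared distance from the nearest existing center.
        centers = X[[rng.integers(len(X))]]
        while len(centers) < k:
            d2 = ((X[:, None, :] - centers[None]) ** 2).sum(-1).min(axis=1)
            probs = d2 / d2.sum() if d2.sum() > 0 else None
            centers = np.vstack([centers, X[rng.choice(len(X), p=probs)]])
        for _ in range(n_iter):
            labels = ((X[:, None, :] - centers[None]) ** 2).sum(-1).argmin(axis=1)
            new = np.array([X[labels == j].mean(axis=0) if np.any(labels == j)
                            else centers[j] for j in range(k)])
            if np.allclose(new, centers):
                break
            centers = new
        best = min(best, float(((X - centers[labels]) ** 2).sum()))
    return best


def gap_statistic(X, k_values, n_refs=10, seed=0):
    """Gap(k) and s_k, using a uniform reference over the bounding box of X."""
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    lo, hi = X.min(axis=0), X.max(axis=0)
    gaps, s = [], []
    for k in k_values:
        ref = np.log([kmeans_wss(rng.uniform(lo, hi, size=X.shape), k, rng)
                      for _ in range(n_refs)])
        gaps.append(ref.mean() - np.log(kmeans_wss(X, k, rng)))
        s.append(ref.std() * np.sqrt(1 + 1 / n_refs))
    return np.array(gaps), np.array(s)


def choose_k(k_values, gaps, s):
    """Smallest k such that Gap(k) >= Gap(k+1) - s_(k+1)."""
    for i in range(len(k_values) - 1):
        if gaps[i] >= gaps[i + 1] - s[i + 1]:
            return k_values[i]
    return k_values[-1]


# Usage: three well-separated synthetic blobs, testing K = 1 to 6 as in the text.
rng = np.random.default_rng(7)
centers = np.array([[0.0, 0.0], [3.0, 0.0], [0.0, 3.0]])
X = np.vstack([c + 0.1 * rng.normal(size=(40, 2)) for c in centers])
ks = [1, 2, 3, 4, 5, 6]
gaps, s = gap_statistic(X, ks)
print(choose_k(ks, gaps, s))  # 3
```

The within-cluster sum of squares is exactly the W_k of Tibshirani et al., and the selection rule picks the smallest K whose gap is within one simulation standard error of the next, which favors parsimonious solutions.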

Figure 3 shows comparisons among the four clusters on the reliability of contour-based perceptions, along with the average face-based ratings on all 15 dimensions for each cluster. The results show that there are substantial differences in the reliability of contour-based perceptions among different face groups. In Clusters 1 and 2, estimates of (masculine) sexual dimorphism are more pronounced, but most social trait perceptions (e.g., arousal, attractiveness) tend to be underestimated. By contrast, in Cluster 4, most social trait perceptions (e.g., arousal, attractiveness) tend to be overestimated, but (masculine) sexual dimorphism tends to be less pronounced. Because most faces in Clusters 1 and 2 are female, whereas most faces in Clusters 3 and 4 are male, the overestimation of masculinity for female faces and the underestimation of masculinity for male faces may be attributable to the fact that facial features related to sexual dimorphism are masked in contour images. It is worth noting that the impact of facial contours on perceptions varies significantly even within the same gender. For example, faces in Cluster 4 tend to be overestimated on approachability, whereas contour-based ratings for Cluster 3 are quite close to face-based ratings on the same dimension.

Mean relative difference in perception ratings across contour clusters.

Drivers of contour-based perceptions

To investigate which information in contour images drives contour-based perceptions, we quantified the relationships between contour features and contour-based perception ratings. Following prior studies of face perception (Todorov 2017), we analyzed the contributions of the two types of contour features (nonoutline and outline) to contour-based face perceptions.

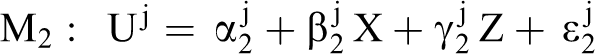

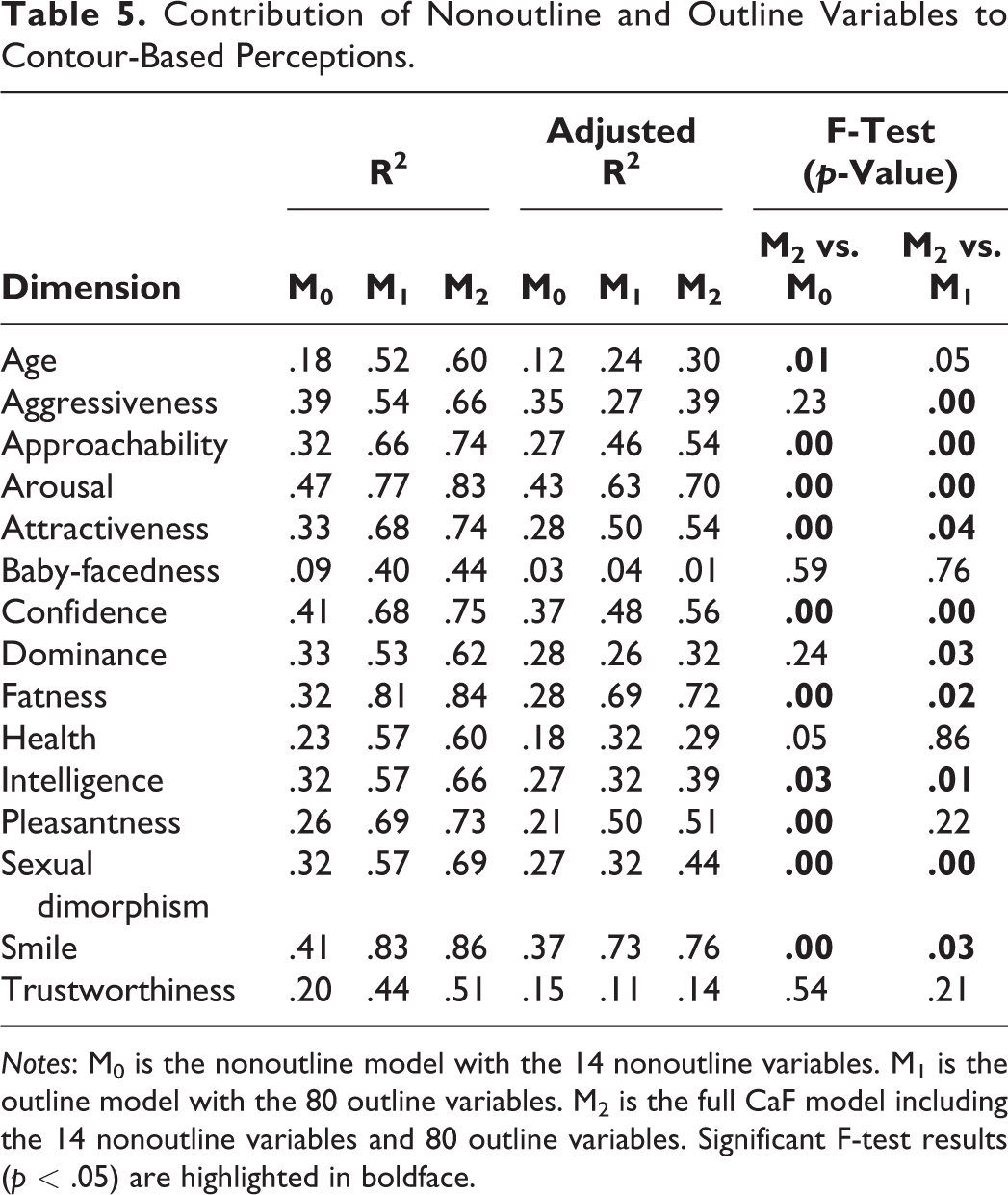

To discern the effect of the two types of contour features, we compared the CaF model (M2) with two variations: a nonoutline model (M0) with 14 nonoutline variables as independent variables and an outline model (M1) with 80 outline variables as independent variables. For all three models, the mean perception ratings of each contour image on the 15 dimensions were used as dependent variables, denoted as

We performed standard linear regression analysis. The results appear in Table 5. The results confirmed our intuition that both outline and nonoutline variables contribute to contour-based face perceptions. Overall, the full model (

Contribution of Nonoutline and Outline Variables to Contour-Based Perceptions.

Notes: M0 is the nonoutline model with the 14 nonoutline variables. M1 is the outline model with the 80 outline variables. M2 is the full CaF model including the 14 nonoutline variables and 80 outline variables. Significant F-test results (p < .05) are highlighted in boldface.
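The notes to Table 5 refer to F-tests without specifying them. One natural reading, sketched here as an assumption, is a nested-model comparison: does adding the 80 outline variables to the 14 nonoutline variables (M0 versus M2) reduce the residual sum of squares by more than chance would? The partial F statistic below assumes OLS with an intercept; with 216 images it has 80 and 121 degrees of freedom, and a p-value can be obtained from the F distribution (e.g., `scipy.stats.f.sf(f_stat, df1, df2)`). Function names and the synthetic data are hypothetical.

```python
import numpy as np


def rss_with_intercept(X, y):
    """Residual sum of squares and parameter count for OLS with an intercept."""
    A = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ beta
    return float(resid @ resid), A.shape[1]


def nested_f_test(X_reduced, X_full, y):
    """Partial F statistic for the extra columns in X_full (which must contain X_reduced)."""
    rss_r, k_r = rss_with_intercept(X_reduced, y)
    rss_f, k_f = rss_with_intercept(X_full, y)
    df1, df2 = k_f - k_r, len(y) - k_f
    f_stat = ((rss_r - rss_f) / df1) / (rss_f / df2)
    return f_stat, df1, df2


# Usage: synthetic stand-ins for one perceptual dimension of 216 contour images.
rng = np.random.default_rng(3)
nonoutline = rng.normal(size=(216, 14))   # stand-in for the 14 nonoutline variables
outline = rng.normal(size=(216, 80))      # stand-in for the 80 outline variables
y = nonoutline[:, 0] + 2.0 * outline[:, 0] + rng.normal(size=216)
f_stat, df1, df2 = nested_f_test(nonoutline, np.hstack([nonoutline, outline]), y)
print(df1, df2)  # 80 121
```

The same function compares M1 with M2 by passing the outline block as the reduced model.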

As we expected, the effects of nonoutline and outline variables vary greatly across different perceptual dimensions. First, for the dimensions on which it is easy for humans to make reliable inferences from the contour images—such as smile, pleasantness, approachability, attractiveness, arousal, confidence, and fatness—human perceptions seem to be driven mainly by the outline variables. The full CaF model is able to capture substantial proportions of the variances on these dimensions. Second, for the dimensions on which it is difficult for humans to make reliable inferences from the contour images, the main drivers of people’s perceptions vary. For example, the results indicate that outline information plays a dominant role in people’s contour-based perceptions of age, sexual dimorphism, and health, while nonoutline information plays a dominant role in people’s perceptions of dominance and aggressiveness. Neither outline nor nonoutline variables could effectively explain perceptions of baby-facedness, which is consistent with our findings that there is no significant correlation between contour-based and face-based perceptions on the baby-facedness dimension.

Here, we make two additional notes regarding the results. First, ideally, latent class analysis (or other methods that account for heterogeneity) would be used to further examine the heterogeneity among faces. Such an analysis is not meaningful with our limited face-contour data and large number of variables; however, it should be explored in the future when more data become available. Second, we assessed the out-of-sample prediction performance of our models and found that M2 was only marginally better than M0 in a few cases and equivalent in others. Given the generally superior fit of M2, we hypothesize that this could be due to overfitting and substantial facial heterogeneity. Creating a model that accounts for heterogeneity when a much larger set of face contours and ratings becomes available might help solve this problem and enable exploration of the heterogeneity in contour-based perceptions of different faces.

To further explore the contributions of the outline variables associated with individual facial parts, we compared five variations of the CaF model, each using the outline features of one facial part in addition to the 14 nonoutline features as independent variables (see Table 6). The results show that the contributions of outline variables to perceptions of different dimensions vary greatly across facial parts.⁶ In general, the outlines of the eyebrows and eyes do not significantly affect most perceptions. The outline of the mouth, however, explains as much as 79% (adjusted R² = .75) of the variance in contour-based perceptions of smile, indicating that the mouth outline drives perceptions of smiles in contour images. Similarly, the mouth outline drives perceptions of pleasantness, confidence, arousal, and approachability, which supports prior findings that these perceptions tend to load on the same dimension and are sensitive to emotional cues (Todorov et al. 2008). Including face outline information explains as much as 78% (adjusted R² = .74) of the variance in contour-based perceptions of fatness, indicating that the face outline drives perceptions of fatness in contour images. Both the face and mouth outlines drive perceptions of attractiveness. Compared with the outlines of the mouth and/or face, the nose outline plays a relatively minor role on most dimensions.

Effects of Outline Variables of Different Facial Parts on Contour-Based Perceptions.

Notes: Significant F-test results (p < .05) are highlighted in bold.

Self-revealed decision rules in contour-based perceptions

To understand the decision rules people use to make inferences from contour images, we asked another group of participants to explicitly describe the facial cues they used when judging contour images. We collected responses from 76 participants (19 men, 57 women), each of whom was asked to rate at least five randomly assigned contours on five dimensions of their choice from the 15 dimensions. Two research assistants independently coded the responses and categorized the self-revealed decision rules into types of contour features. Tables 7 and 8 present the results and sample decision rules. In line with our previous analysis, we organized the self-revealed decision rules by type of contour feature and by key facial part.

Self-Revealed Decision Rules for Contour-Based Perceptions.

Notes: The percentages shown in the table are calculated as (number of participants who mentioned the feature for the selected dimension)/(number of participants who selected the dimension). Two research assistants were hired to code the participants’ responses independently; Cohen’s kappa between the two coders exceeded .90. The facial part mentioned most often for each perceptual dimension is highlighted in bold.
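The intercoder agreement statistic in the note can be computed as follows. The note does not say how the coding categories were defined, so this sketch assumes each coder assigns exactly one category label per item (for example, a hypothetical label such as "mouth outline"); responses coded with several features at once would need a per-feature or multi-label variant. The function name is illustrative.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each assign one category per item."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty list of items")
    n = len(coder_a)
    # Observed agreement: share of items given the same category by both coders.
    p_obs = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement from each coder's marginal category frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_exp = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    if p_exp == 1:
        return float("nan")  # kappa is undefined when chance agreement is 1
    return (p_obs - p_exp) / (1 - p_exp)


# Usage: 50 items; the coders agree on 35 (observed .70, chance .50).
a = ["yes"] * 25 + ["no"] * 25
b = ["yes"] * 20 + ["no"] * 5 + ["yes"] * 10 + ["no"] * 15
print(round(cohens_kappa(a, b), 3))  # 0.4
```

Kappa corrects raw agreement for the agreement expected from the coders' category frequencies alone, which is why 70% raw agreement yields only .40 here and why a value above .90 indicates near-complete agreement.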

Examples of Self-Revealed Decision Rules for Contour-Based Perceptions.

Overall, the results in Table 7 are highly consistent with the model-based findings. The self-revealed decision rules show that people rely on both outline and nonoutline information in their judgments of facial contours and that they generally use outline features more than nonoutline features: averaged across the 15 dimensions, 85% of participants mentioned using outline features in their judgments, whereas 54% mentioned using nonoutline features.

Consistent with our model-based findings, the outline information of the mouth (51%)⁷ and the face (42%) is generally used more often in people's perceptions than the outline information of other facial parts such as the eyebrows (16%), eyes (15%), and nose (7%). At the dimension level (see Tables 7 and 8), the outline information of the mouth is used most often among all facial parts in perceptions of smile (100%), pleasantness (90%), confidence (68%), approachability (71%), and arousal (55%), which is consistent with the model-based findings. The outline information of the face is used most often in perceptions of fatness (90%), health (64%), and age (54%), which supports our finding that face outline variables have significant effects on contour-based perceptions of these dimensions. These findings provide strong evidence that the proposed CaF model is effective in capturing the information people use in contour-based perceptions.

In contrast, the nonoutline information of the mouth (3%) and face (5%) is used less often in people's judgments than the nonoutline information of other facial parts, such as the eyebrows (34%), eyes (27%), and nose (15%). For example, people used nonoutline information about the eyebrows and eyes, including their size, angle, and spatial placement, in their judgments of arousal (59% and 62%, respectively), approachability (55% and 31%), aggressiveness (50% and 33%), and dominance (46% and 31%). The nonoutline information of the eyebrows (68%) and nose (55%) seems to be used most often in contour-based perceptions of sexual dimorphism.

In summary, the results of Study 1 demonstrate that the CaF framework is effective as both a stimulus representation and a modeling method, and that humans can make meaningful inferences from contour images that are quite consistent with inferences made from the corresponding face images. In addition, our findings show that contours can significantly reduce the accuracy of people's perceptions of age and gender, the two perceptual dimensions that contours are intended to mask to help prevent discrimination.

Study 2: The Effectiveness of CaF for Protecting Privacy

A face’s level of anonymity typically equates to the extent to which face recognition is prevented (Newton, Sweeney, and Malin 2005). In Study 2, we aimed to assess the CaF representation’s ability to mask private information (e.g., identity, age, gender) from human recognition. We focused on human recognition for two reasons. First, the marketing contexts in which the CaF framework is most likely to be used (Table 2) typically require privacy protection from other humans, not from sophisticated algorithms. Second, it is difficult for algorithms to unmask identities using face contours derived from different photos of the same person, because the contours may differ greatly with face angle, head orientation, emotional state (e.g., smiling changes the contours of the mouth and face), and apparent physical state (e.g., the eyes and mouth may be open to different degrees).

Study Design

A typical face recognition task requires participants to identify a target face that matches a stimulus face from a designated database. To test how well contour images are able to anonymize one’s identity and demographics to prevent human recognition/perception, we asked participants to view contour images and identify the corresponding faces from a database. To mimic the use of contour images in real-life applications, we first constructed a database of 260 faces (184 men, 76 women) from the faculty pages at a university. We randomly chose 10 individuals from the database as the target people and searched publicly available sources for different face photos of the target people. We then manually generated the facial contours using these face photos. If a different face photo was not publicly available, we randomly chose another individual from the database as a target individual.
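The target-selection rule described above (draw targets at random and draw again whenever no different public photo of the candidate exists) can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors’ code: `choose_targets` and the `has_alternate_photo` predicate are hypothetical names, and walking a shuffled copy of the database is used as an equivalent of repeated random draws without replacement.

```python
import random


def choose_targets(database, has_alternate_photo, k=10, seed=None):
    """Randomly pick k target faces, skipping any face without a second photo.

    database: list of face ids (260 faculty faces in Study 2)
    has_alternate_photo: hypothetical predicate, True if a different public
        photo of that person could be found
    """
    rng = random.Random(seed)
    pool = list(database)
    rng.shuffle(pool)  # a shuffled walk = successive random draws without replacement
    targets = []
    for face in pool:
        if has_alternate_photo(face):
            targets.append(face)
            if len(targets) == k:
                return targets
        # otherwise: no alternate photo, so move on to another random individual
    raise ValueError("not enough faces with an alternate photo")
```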

A total of 138 students (47 men, 91 women) from a different university participated in the study and received a fixed payment. The participants were shown the 10 contour images along with the 260 faces in the database and were asked to identify the face corresponding to each contour image. Each participant could make up to three guesses per contour image, listed in decreasing order of confidence, and rated their confidence in each guess (e.g., “How likely do you think the guessed face and the person in the contour image are the same person?”).

For each contour image, we offered a cash prize (about US$75) to be split evenly among all participants who correctly identified the target face in the contour image as their top choice. If no one correctly identified the target face in their top choice, the prize would be split evenly among all participants who correctly identified the target face in their second choice, and so on. If no one correctly identified the target face in any of their three guesses, the cash prize would be split evenly among all participants.
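The cascading payout rule can be stated compactly in code. The sketch below is an illustration of the rule as described, not the study’s actual payment procedure; the function name `split_prize` and the data layout (each participant’s guesses listed best first) are assumptions.

```python
def split_prize(prize, guesses, target):
    """Split a contour image's prize according to the cascading rule.

    prize: total cash prize for one contour image (about US$75 in Study 2)
    guesses: dict participant -> list of up to three face ids, top choice first
    target: the face id that actually matches the contour image
    """
    for rank in range(3):  # top choice, then second choice, then third choice
        winners = [p for p, g in guesses.items() if len(g) > rank and g[rank] == target]
        if winners:
            share = prize / len(winners)
            return {p: share for p in winners}
    # Nobody found the target in any of their guesses: everyone shares equally.
    share = prize / len(guesses)
    return {p: share for p in guesses}
```

Because the ranks are checked in order, anyone who named the target as a top choice is paid before second-choice matches are considered, which mirrors the “and so on” in the rule.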

Analysis and Results

The participants submitted a total of 4,089 guesses about the identities of the people represented by the contour images, of which 1,380 were top choices. We present our analysis of the participants’ top choices here; conclusions from the analyses of the second and third choices were not qualitatively different. The results appear in Table 9.

Table 9: Results of Human Identification Using Contour Images

a The p-value of the one-sided hypothesis test H0: p ≤ p0 versus H1: p > p0 using the exact binomial test, where p is the correct identification rate and p0 = 1/(total number of unique faces guessed by all participants).

b Target face matches the contour image.

Effect of CaF on anonymizing identity

Overall, the results show that it is very difficult for humans to discern a person’s identity based on contour images. As shown in Table 9, 37 out of the 1,380 guesses were correct, making the overall recognition rate 2.68%.

A further analysis of the face identification results reveals four key findings. First, the ease of recognition seems to vary across faces, which is consistent with the findings from Study 1. The recognition rates of the 10 contour images ranged from 0% to 10.87%; the overwhelming majority of contour images were not easily identified, with the exception of Contour Image 7, whose target face was also the most often guessed face (recognition rate of 10.87%). However, this rate is not significantly larger than the guess rate of the second-most-often-guessed face (5.80%).⁸ Verbal feedback collected from participants suggests that the high recognition rate was due to the distinctive mouth and face contours of the target face. Second, a single contour image can generally be matched with multiple faces. Among the 260 faces in the database, the number of unique faces guessed for a given contour image ranged from 58 to 77. In fact, of the nine target faces that were correctly identified at least once, seven were not among the five most-often-guessed faces for the corresponding contours. This indicates that contour images effectively conceal identifiable information. Third, statistical tests show that for the majority of faces, the correct recognition rates were not statistically different from the random-guess rate among the unique faces guessed by all participants. Although the correct identification rate for Contour Image 5 (5.07%) was higher than the random-guess rate among the unique faces guessed by all participants (p < .01), it is not significantly different from the guess rates of the other four most-often-guessed faces; in addition, the correct face was only the third most-often-guessed face. Similarly, for Contour Image 7, although the correct identification rate was higher than the random-guess rate among the unique faces guessed by all participants (p < .01), there were no significant differences among the guess rates of the three most-often-guessed faces. This indicates that although people may use some information from the contour image to narrow down the choice set, it is very difficult for them to identify the correct face at a rate above chance within that choice set. Fourth, even when people correctly identified the faces from the contour images, they were not confident about their judgments. The average confidence score for all 37 correct guesses was 48.6% (SD = 13.6%), which is not significantly larger than the average confidence score for the incorrectly identified faces within individuals⁹ (M = 44.6%, SD = 6.6%) or across individuals¹⁰ (M = 44.5%, SD = 8.5%).
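The comparison against random guessing among the unique faces uses the one-sided exact binomial test described in the Table 9 note. A minimal sketch of that p-value follows, assuming n is the number of top choices for a contour (138, one per participant) and k the number of correct top choices. The function name is hypothetical, and the count of 60 unique guessed faces in the usage line is an illustrative value within the reported range of 58 to 77, not the figure for any specific contour.

```python
from math import comb


def exact_binomial_p_value(k, n, p0):
    """One-sided exact binomial test of H0: p <= p0 vs. H1: p > p0.

    Returns P(X >= k) for X ~ Binomial(n, p0), i.e., the probability of at
    least k correct identifications if every guess were a random pick among
    the unique faces guessed by all participants (p0 = 1 / unique faces).
    """
    return sum(comb(n, i) * p0**i * (1 - p0) ** (n - i) for i in range(k, n + 1))


# Illustration: 15 of 138 top choices correct (10.87%, as for Contour Image 7),
# with a hypothetical 60 unique faces guessed, so p0 = 1/60.
p_value = exact_binomial_p_value(15, 138, 1 / 60)
```

Computing the upper tail by direct summation keeps the sketch dependency-free; a statistics library’s exact binomial test with a “greater” alternative would give the same one-sided p-value.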

Effect of CaF on anonymizing age

To assess whether the CaF representations effectively anonymize age, we hired three research assistants to estimate the ages of all faces in the database and used the average perceived age for each face as its estimated age. For each guess, we calculated the absolute difference in estimated age between the face the participant guessed and the actual target face corresponding to the contour, and then averaged these differences across all participants’ guesses.

The results suggest that age can be almost completely anonymized using contour images. The mean absolute difference in estimated age between the target face and a guessed face ranged from 4.5 to 9.0 years across the ten contour images (M = 6.23, SD = 1.47), which is not statistically different from that of a random guess (M = 6.93, SD = 1.23).¹¹ To quantify the effectiveness of contour images in age anonymization, we defined an age ratio using the estimated ages of the target faces as ground truth: the mean absolute error of participants’ age guesses divided by the mean absolute error of a random guess (i.e., if participants randomly picked a person from the database). Higher age ratios indicate better anonymization performance, with a ratio of 1 corresponding to a random guess. Table 9 shows that, in general, it is very difficult to infer age from contour images.
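The age ratio can be computed as in the sketch below. This is an illustration under assumptions, not the authors’ code: the function names are hypothetical, and the random-guess baseline is taken as a uniform pick over every face in the database, including the target itself.

```python
from statistics import mean


def estimated_ages(rater_estimates):
    """Average several raters' perceived ages for each face.

    rater_estimates: dict face_id -> list of age estimates (one per rater,
        e.g., the three research assistants in Study 2)
    """
    return {face: mean(ests) for face, ests in rater_estimates.items()}


def age_ratio(est_ages, target, guesses):
    """Mean absolute age error of the guesses divided by that of a random pick.

    A value near 1 means guesses are no closer in age than a random face,
    i.e., better anonymization. Raises ZeroDivisionError if all faces share
    the target's estimated age.
    """
    t = est_ages[target]
    mae_guess = mean(abs(est_ages[g] - t) for g in guesses)
    # Random baseline: expected error if a face were drawn uniformly from the database.
    mae_random = mean(abs(a - t) for a in est_ages.values())
    return mae_guess / mae_random
```

For example, with hypothetical estimated ages A = 30, B = 40, C = 50, and D = 60, target A, and guesses B, B, and C, the guesses are off by 13.3 years on average versus 15 years for a random pick, giving an age ratio of about 0.89.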

Effect of CaF on anonymizing gender

To assess whether contours can effectively anonymize gender, we calculated the percentage of guesses whose gender differed from that of the target face, which ranged from 2.9% to 68.4% across the ten contour images (M = 23.5%, SD = 23.3%). Statistical tests show that these incorrect-gender rates are significantly lower than those expected under random guessing (M = 45.8%, SD = 21.5%),¹² indicating that people can infer gender information from a contour image to some extent. However, an analysis of the confidence scores of the guesses within individuals revealed that the average confidence level of correct gender guesses (M = 44.5%, SD = 7.8%) was not significantly different from that of incorrect gender guesses (M = 45.1%, SD = 9.2%). Thus, people are not confident about their gender guesses based on contour images even when they are correct.

To quantify the effectiveness of contour images in gender anonymization, we calculated a gender ratio analogous to the age ratio, dividing the mean absolute error of participants’ gender guesses (i.e., the incorrect-gender rate) by that of a random guess. Higher gender ratios indicate better anonymization performance, with a ratio of 1 corresponding to a random guess. Table 9 shows that the ease of gender recognition varies greatly across faces. Note that this is not a direct inference of sexual dimorphism from face contours, as assessed in Study 1. Because participants could compare the contour images with face images, the relatively higher accuracy may reflect a correlation between facial contour similarity and gender rather than a direct inference of gender from the contour.
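With gender coded as a binary label, the absolute error of a guess is 1 when its gender differs from the target’s and 0 otherwise, so the mean absolute error reduces to an incorrect-gender rate. The sketch below assumes, as for the age ratio, that the random baseline is a uniform pick over the whole database; the function names are hypothetical.

```python
def incorrect_gender_rate(genders, target, faces):
    """Share of the given faces whose gender differs from the target's."""
    t = genders[target]
    faces = list(faces)
    return sum(1 for f in faces if genders[f] != t) / len(faces)


def gender_ratio(genders, target, guesses):
    """Incorrect-gender rate of the guesses divided by that of a random pick.

    genders: dict face_id -> gender label for every face in the database
    A value near 1 means guesses carry no more gender information than chance.
    """
    err_guess = incorrect_gender_rate(genders, target, guesses)
    err_random = incorrect_gender_rate(genders, target, genders.keys())
    return err_guess / err_random
```

Under this baseline, the Study 2 database (184 men, 76 women) gives a random incorrect-gender rate of 76/260 ≈ 29.2% for a male target and 184/260 ≈ 70.8% for a female target, so the same observed error rate yields different ratios depending on the target’s gender.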

Overall, our analysis provides convincing evidence that it is not easy for a human to guess the identity, age, or gender associated with a contour image even if the corresponding database of face images includes just 260 photos. This suggests that contour images can anonymize one’s identity, as well as age and gender—two critical factors associated with potential discrimination in many real-life contexts—to prevent human recognition/perception.

Study 3: A Field Implementation of CaF in an Online Dating Context

In Study 3, we aimed to investigate the feasibility of applying the CaF framework to real-life decision making that is based on or affected by face perceptions while protecting the privacy of individuals. We chose to implement the CaF framework in the online dating context for three reasons. First, faces and face perceptions play an important role in the decision-making process in an online dating context. In a field experiment run by OkCupid, profile pictures accounted for over 90% of users’ profile ratings, while text information accounted for less than 10% (Hern 2014). Second, from the customers’ perspective, revealing one’s facial identity on an online dating website or app poses inherent privacy risks, as an online dating profile usually contains more sensitive information about the user and is usually accessible to a wider audience. As a result, users often experience negative feelings because of the exposure of their faces (Cobb and Kohno 2017). Third, online dating has grown into a billion-dollar industry with tremendous commercial, personal, and social value. In the United States, statistics show that there are over 2,100 dating apps or websites and that the online dating service industry is worth an estimated $3 billion (IBISWorld 2019). A proper solution to the face dilemma in the online dating context can substantially improve customer experience and recommendation systems, thus increasing customer trust and retention, frequency of use, and communication (the presentation of solutions to customers), among other aspects, which are also present in other industries. Next, we describe the field study design and then present an analysis of the customers’ responses.

The Field Study Design

We constructed an online dating site (denoted as “MKQ”), similar to Tinder (https://tinder.com), which relies on facial information and minimal demographics. Unlike Tinder users, MKQ users do not search for potential partners on the site; instead, MKQ matches users automatically based on their facial contour preferences. After registering, users are first asked to provide a facial contour image (or submit a face photo to be converted to facial contour) and basic information such as gender, age, height, and university. To enable automatic matching, users are asked to complete a calibration task by rating randomly assigned facial contours taken from people of the opposite gender using a seven-point scale (everything else being equal, 1 represented a low likelihood of befriending a person with that contour and 7 represented a high likelihood of befriending a person with that contour). Users decide how many contours they would like to rate, knowing that the more they rate, the more accurate the matching will be. Once a user receives a notification about a match made by the site, they can go to the website to view details about the matched partner. The user may click “yes” to accept the match and initiate contact or click “no” to reject the match and wait for the next round of matching. If both parties click “yes,” the two matched users can start to communicate in a private chatroom. We provide details about the matching procedure in Web Appendix B.
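The accept/reject step of a proposed match can be summarized as a small state model. The sketch below covers only the mutual-acceptance logic described above; it is written in Python for illustration and is not MKQ’s PHP/MySQL implementation. The class and method names are hypothetical, and the matching procedure itself (Web Appendix B) is not modeled.

```python
from enum import Enum


class Response(Enum):
    PENDING = "pending"
    YES = "yes"
    NO = "no"


class Match:
    """A proposed pairing of two users (hypothetical data model)."""

    def __init__(self, user_a, user_b):
        self.responses = {user_a: Response.PENDING, user_b: Response.PENDING}

    def respond(self, user, accept):
        """Record a user's click on "yes" (accept=True) or "no" (accept=False)."""
        if user not in self.responses:
            raise KeyError(f"{user} is not part of this match")
        self.responses[user] = Response.YES if accept else Response.NO

    def chat_open(self):
        # The private chatroom opens only when both parties clicked "yes".
        return all(r is Response.YES for r in self.responses.values())

    def rejected(self):
        # A single "no" ends the match; both users wait for the next round.
        return any(r is Response.NO for r in self.responses.values())
```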

MKQ is implemented using PHP and MySQL on a cloud server rented from 1&1 IONOS, a cloud and hosting firm (https://www.ionos.com/). Due to limited resources, we did not promote MKQ commercially. All MKQ users needed a university-based email address to be eligible.

Analysis and Results

By the end of the first month after the launch of MKQ, 385 users (106 men, 217 women, 62 undisclosed) had registered using their university email accounts. The users understood that MKQ is a real service that matches individuals with potentially desirable partners of the opposite gender. There is no fee to join, and members do not receive any compensation. By the end of the first month, 198 of the 385 members (70 men, 128 women) had completed 13,311 contour evaluations, with ratings covering the entire range of the scale (M = 3.54, SD = 1.47). The rating distribution (1 = 1,339; 2 = 1,977; 3 = 3,102; 4 = 3,522; 5 = 2,136; 6 = 957; 7 = 278) shows that a substantial number of ratings fell at the ends of the scale, suggesting that individuals can make strong negative and positive inferences from contour images in an online dating context. The MKQ members appear to have had no problem making discriminant inferences based on contour images to facilitate dating decisions.
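The reported summary statistics can be checked directly from the rating counts. The sketch below recomputes the total, mean, and standard deviation from the grouped counts; using the sample (n − 1) standard deviation is an assumption, though with 13,311 ratings the population formula gives the same value to two decimals.

```python
from math import sqrt

# Number of contour ratings at each point of the seven-point scale (first month of MKQ).
counts = {1: 1339, 2: 1977, 3: 3102, 4: 3522, 5: 2136, 6: 957, 7: 278}

n = sum(counts.values())
mean = sum(r * c for r, c in counts.items()) / n
# Sample standard deviation computed from the grouped counts.
sd = sqrt(sum(c * (r - mean) ** 2 for r, c in counts.items()) / (n - 1))
# Share of ratings at the two extreme points of the scale (1 or 7).
extreme_share = (counts[1] + counts[7]) / n

print(n, round(mean, 2), round(sd, 2), round(extreme_share, 3))
```

The counts sum to the reported 13,311 evaluations and reproduce M = 3.54 and SD = 1.47; about 12% of all ratings are at the extreme points 1 or 7.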

Users’ dating-related inferences from CaF representations