Abstract

This article introduces Digital In-Context Experiments (DICE), an experimental paradigm that enables researchers to study entire social media feeds while tracking users’ granular behavioral data at the post level. Current research paradigms (vignette-based experiments, online platform studies, and observational studies) predominantly focus on isolated social media posts without examining how users consume content within the broader context of a feed. This isolation overlooks the competing attention between content (e.g., sponsored posts or ads) within a feed and contextual spillovers that occur when users scroll through continuous streams of content. DICE complements existing paradigms by presenting posts within scrollable feeds, more closely resembling how users experience content on social media. This allows researchers to systematically manipulate entire feed compositions while unobtrusively tracking participants’ scrolling behavior. To demonstrate the potential of DICE, the article presents two illustrative case studies that examine contextual spillovers and predict ad recall in environments where content competes for attention. The authors conclude with directions for future research and managerial perspectives derived from expert interviews with marketing professionals. An accompanying open-source app, available at https://dice-app.org, enables researchers to conveniently integrate the experimental paradigm into their preferred workflow.

Keywords

Social media platforms have evolved from traditional social networks to sophisticated algorithmic ecosystems that monetize attention and shape the consumption patterns of over five billion users around the world (Chayka 2025; Statista 2025). This is reflected in a continuously evolving and growing body of research examining consumer and firm behavior on these platforms (for recent reviews, see Anderson and Wood 2021; Aridor et al. 2024; Bleier, Fossen, and Shapira 2024; Shankar et al. 2022). However, despite social media's continuous evolution as well as its commercial and societal significance, the way marketing scholars have studied people's behavior on social media has remained relatively unchanged over the last decade.

In the domain of social media, researchers typically choose between vignette-based experiments and field studies, where the former remains the most widely used research paradigm. A core benefit of vignette experiments is that they offer high experimental control. Yet, their reliance on relatively stylized stimuli (e.g., presenting a screenshot of a single post vs. a scrollable feed with multiple posts) and limited focus on actual behaviors have led to calls for greater ecological validity (e.g., Morales, Amir, and Lee 2017; Pham 2013; Van Heerde et al. 2021). Accordingly, researchers have increasingly leveraged field data in observational studies, capturing naturally occurring behavior on social media platforms. Typically, these data have been collected via web scraping or APIs (application programming interfaces; Boegershausen et al. 2022). More recently, researchers have started using the A/B testing tools provided by social media platforms to conduct online platform studies (Braun et al. 2024). What is unique about social media platforms is that both types of field studies are impacted by frequently changing content delivery algorithms, which are currently impossible to observe (see Gordon, Moakler, and Zettelmeyer 2023), raising concerns about the internal and construct validity of observational social media and online platform studies (Xu, Zhang, and Zhou 2020).

Against this backdrop, this article introduces Digital In-Context Experiments (DICE) as a new experimental paradigm. With DICE, researchers can create stimuli that mimic social media feeds. These feeds require study participants to engage in browsing behavior, progressively discovering new content as they scroll through it. This shift from single posts to feeds might seem trivial, but it facilitates three contributions. First, it increases ecological validity as study participants engage in a task with stimuli that resemble user interfaces of existing social media platforms. Second, the focus on feeds enables researchers to test the influence of contextual factors and spillover effects resulting from the composition and sequencing of content within that feed. Third, researchers can track this browsing behavior in terms of both user reactions (e.g., likes, comments) and post-level dwell times (i.e., how long each post was visible to the user). Post-level dwell times can be used to approximate attention-related mechanisms. While this measure is used internally by platforms like Facebook and X (formerly known as Twitter) to optimize user experiences and targeting algorithms (see Berger, Moe, and Schweidel 2023; Yi et al. 2014), it is generally unavailable to academic researchers in field studies and vignette experiments focusing on single posts.

As a technological contribution and to facilitate the adoption of DICE, this article provides an open-source app that implements the proposed experimental paradigm, ensuring that DICE is accessible to researchers with varying technical expertise through a web configurator with a no-code interface (available at https://dice-app.org). This configurator enables researchers to create studies efficiently, reducing time investment, learning requirements, and financial costs. All source code is built on widely used frameworks, such as oTree (Chen, Schonger, and Wickens 2016), and is openly available, allowing researchers to further customize the experimental paradigm to their specific needs.

In what follows, we first synthesize the three dominant research paradigms in social media research and their implications for validity and study design. We then introduce DICE, including its features, its integration with other tools (e.g., survey platforms such as Qualtrics), and its measurement capabilities. Two case studies illustrate the experimental paradigm in action for marketing-relevant phenomena. The final section presents a discussion of limitations as well as extensions and how DICE can address new research questions or provide new (or more nuanced) answers to existing questions. This section also highlights potential applications based on expert interviews with marketing professionals.

Research Paradigms in Social Media Research

Vignette-based experiments, online platform studies (e.g., A/B tests on Facebook), and observational studies (e.g., data scraped from X) have been the backbone of extant social media research in marketing and related disciplines. We briefly review how researchers typically use these different paradigms while referring to a recently published Journal of Marketing article (i.e., Zhou, Du, and Cutright 2022) as an illustrative example, as it conveniently features all three types of social media studies within a single article.

Vignette-Based Experiments

In the domain of social media, vignette experiments typically feature a single social media post varying the focal construct(s) of interest between different experimental conditions. For example, participants in Study 2 of Zhou, Du, and Cutright (2022; see their Web Appendix F for the stimuli) were randomly shown one of two screenshots displaying a single tweet from the brand KitKat either praising a competitor or featuring a control message. Subsequently, study participants reported their attitudes toward KitKat and the competitor brand (i.e., Twix).

Among the three main paradigms of social media studies, vignette experiments can offer the highest level of internal validity as researchers have full control over the treatment (e.g., experimental manipulations) and the treatment assignment (e.g., randomization and compliance). By carefully designing their stimuli and measures, researchers can also achieve high levels of construct validity (i.e., close alignment between the specific operationalization and the higher-order theoretical construct; Shadish, Cook, and Campbell 2002). These studies are generally easy to design, execute, and analyze.

However, vignette experiments have been criticized for their relatively low levels of ecological validity (Morales, Amir, and Lee 2017) and have been described as “research-by-convenience” (Pham 2013, pp. 419–20), failing to sufficiently mimic an environment that would be informative of consumers’ actual behaviors observable in a real consumption setting. Vignette experiments also tend to magnify the salience of the focal aspects of the treatment, potentially leading to an overestimation of the size of the observed effect compared with what might be found in a noisier field setting (e.g., Dubois et al. 2021).

These concerns are particularly pronounced in social media research, where the prototypical vignettes often aim to resemble the user interface (i.e., how content is presented visually) but not the user experience on social media platforms (i.e., how users interact with the content). More specifically, study participants typically encounter a screenshot or image of a single post, even though they are used to browsing feeds in which many posts compete for attention. Thus, these studies may invite more focused attention to single stimuli that may diverge from how participants would approach the same post in a real social media feed (see Pham 2013). Additionally, without the competitive context of feeds, researchers cannot examine which specific posts “cut through the clutter” to be noticed and potentially recalled by consumers (e.g., Pieters, Warlop, and Wedel 2002; Villarroel Ordenes et al. 2019). To offset the limitations of vignette-based experiments, researchers have increasingly turned to two types of field studies (Blanchard et al. 2022): online platform studies and observational studies.

Online Platform Studies

Since 2021, more than 30 articles in leading marketing journals have utilized the so-called A/B testing functionalities provided by social media platforms to conduct online platform studies (Boegershausen et al. 2025). Specifically, researchers use these tools to disguise their stimuli as sponsored posts (i.e., ads) and compare their effectiveness in a naturalistic social media environment (Braun et al. 2024). For example, in Study 1 of Zhou, Du, and Cutright (2022), 13,719 Facebook users saw one of three ads promoting a fictional car wash provider. For each of these ads, Zhou, Du, and Cutright (2022) retrieved aggregate summary statistics (i.e., clicks/impressions) from Meta's Ads Manager to compute and compare the click-through rates of these three different ads.

These tools are primarily intended for (commercial) advertisers, are fairly easy to use, and provide an opportunity to study people's behavior in the wild by tracking conversion outcomes across the purchase funnel. Another benefit of online platform studies is that online ad platforms (e.g., Meta's Ads Manager) enable researchers to target actual social media users based on demographics or so-called user interests (Boegershausen et al. 2025). Thus, these ad platforms also provide low-cost access to large and diverse samples. In sum, online platform studies offer high levels of realism, as they are conducted directly on social media platforms and measure actual user behavior, unbeknownst to the users themselves.

In light of their “A/B testing” label, these functionalities might appear to be randomized controlled trials (RCTs) run in a naturalistic environment. If they were indeed RCTs, online platform studies would be the gold standard in social media research. Unfortunately, by using these tools, researchers relinquish control over critical elements of the study design to these platforms. Most critically, after randomization, social media platforms employ targeting algorithms that distort the initially clean random assignment of participants to different treatments due to so-called “divergent delivery” (Johnson 2023, p. 473). This term describes the process wherein variations of ads are served to different compositions of users based on expected audience reactions, user classifications, and bidding algorithms (e.g., Braun and Schwartz 2025). The platforms’ classifications and algorithms are unobservable to researchers, who receive only limited summary statistics about users (e.g., gender, age cohort, device type) at the aggregate level. Even with privileged access to much larger datasets and more advanced methods than those typically available for individual research projects, it remains difficult, if not impossible, to “adequately control for the selection effects induced by the advertising platform” (Gordon, Moakler, and Zettelmeyer 2023, p. 770). Additionally, at any given time, social media platforms run numerous internal A/B tests to optimize their platform, which are also unobservable to researchers and may commingle with their study.

In sum, the inherent confoundedness of the effect of ad characteristics and the algorithms that deliver them limits the construct validity and internal validity of online platform studies (Boegershausen et al. 2025). Unfortunately, most social media platforms do not communicate these mechanisms and their implications transparently. Thus, despite their increasing popularity in marketing research, some (e.g., Braun et al. 2024) have started to question whether online platform studies should be used in academic research, given their inability to isolate constructs of interest.

Observational Studies

The third and final paradigm, observational studies in the social media domain, is also based on field data. In contrast to the previous two paradigms, it is nonexperimental and retrieves existing social media data. Platforms like X and Facebook are among the most frequently scraped sources in articles published in leading marketing journals (Boegershausen et al. 2022). An example of such an observational social media study is the pilot study of Zhou, Du, and Cutright (2022), featuring 8,393 tweets from three gaming console manufacturers collected from X (previously Twitter). The authors coded the tweets for their focal construct of interest (i.e., whether they mentioned a competitor) and compared aggregate user reactions to the tweets (i.e., number of likes, number of retweets).

A critical advantage of such observational data is that it features consequential dependent variables (e.g., likes, comments) of practical relevance (Inman et al. 2018). Observational studies are noninterventional because researchers typically retrieve data retrospectively without interfering in the data-generating process or actively manipulating key variables. Thus, observational studies are unlikely to be affected by demand effects and help identify ecologically valid effects “in the real world.” Such studies also enable researchers to collect large sample sizes across different geographies at relatively low costs.

Observational studies also face inherent methodological challenges, such as endogeneity, that can be addressed, at least in part, through statistical techniques (Goldfarb, Tucker, and Wang 2022). Yet, observational social media studies face another major idiosyncratic challenge that is more difficult to resolve through methodological sophistication alone: algorithmic interference. This describes how both information display and retrieval are affected by the optimization and personalization algorithms on social media platforms (Xu, Zhang, and Zhou 2020). As with online platform studies, observed outcomes (e.g., the number of likes) are shaped by opaque platform algorithms and their interaction with properties of the focal content. Algorithms might interfere not only during the data generation stage (e.g., which users are exposed to which posts), but also during the data retrieval stage (e.g., which posts researchers can extract). This raises concerns about construct and internal validity in observational social media studies, which are difficult to address (e.g., Davidson et al. 2023; Xu, Zhang, and Zhou 2020). Finally, it is challenging to obtain individual-level user data in observational studies, constraining the testing of underlying processes that produce the observed aggregated user reactions to the content.

The Case for Digital In-Context Experiments

Prior research has often achieved both a high level of experimental control and high study realism across the entirety of an empirical package, but not necessarily within a single study. While triangulation across studies is often a powerful strategy, a particular challenge in social media research is the concern about whether individual studies, on their own, possess sufficient levels of construct and internal validity (Blanchard et al. 2022). Importantly, black-box algorithms in field studies impede the isolation of the same theoretical construct as manipulated in vignette-based experiments. However, effective triangulation requires testing the effect of the same construct across paradigms (see also Lynch 1999, p. 370). To this end, we propose a novel experimental paradigm, DICE, which offers new opportunities for triangulation in social media research by combining the experimental control and individual-level data of vignette-based experiments with more of the realism of field studies. This approach enables researchers to increase a study's ecological validity without compromising its internal and construct validity.

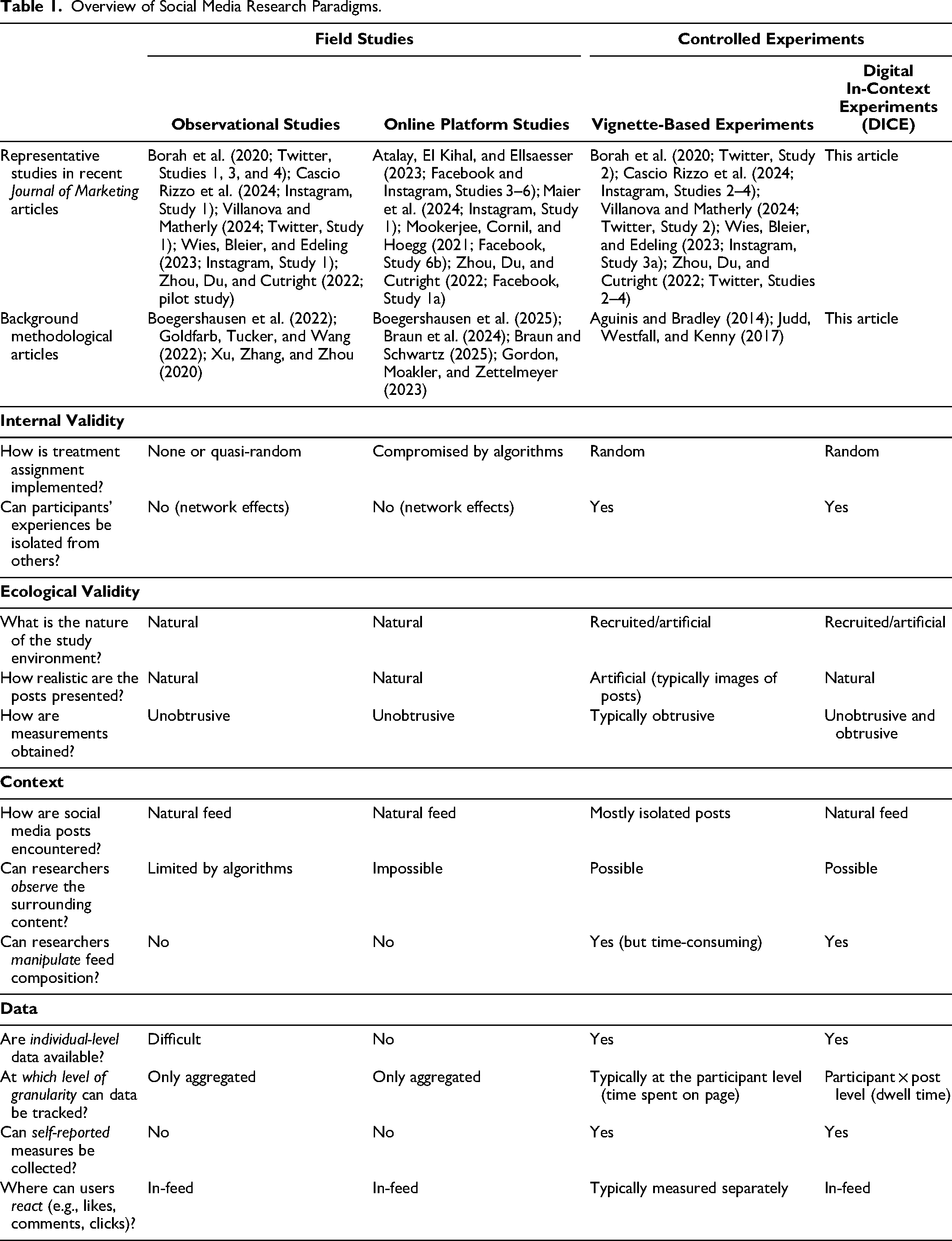

Table 1 summarizes the comparison of the three core research paradigms in social media research, arranged from naturalistic field settings (i.e., observational studies and online platform studies) to vignette-based experiments, and the opportunities provided by DICE. DICE enables researchers to move beyond studying focal posts in isolation to study marketing phenomena that are inherently contextual (e.g., Evangelidis et al. 2024; Stremersch et al. 2023). A prominent example is the context of brand safety concerns, where exposure to harmful content (e.g., hate speech, violent imagery) affects perceptions of and engagement with focal posts (a phenomenon we demonstrate in Case Study 1). The ability to manipulate entire feeds also enables researchers to examine phenomena that are sequential by nature, such as content ranking and presentation order (e.g., Khambatta et al. 2023). The feed structure not only allows study participants to browse the content, but also allows researchers to observe how users engage with it. To this end, DICE offers researchers access to dwell time data at the participant × post level, which measures the time each study participant spends on each post of the feed. While most existing research tools (e.g., Qualtrics, oTree) offer easy access to “time spent on page” measures, this level of granularity is not readily available. Yet, these post-level dwell times provide an unobtrusive window into attention-related processes that shape engagement when different posts compete for users’ limited attention (Loewenstein and Wojtowicz 2025). We refer to measures as “unobtrusive” when a participant's behavior is recorded without requiring participant awareness, whereas “obtrusive” measures capture an overt action or survey response. DICE facilitates the collection of both unobtrusive and obtrusive measures (e.g., attitude ratings as part of a follow-up survey), giving researchers the option to combine both behavioral and attitudinal data.

Table 1. Overview of Social Media Research Paradigms.

Importantly, DICE seeks to complement rather than replace existing research paradigms, which all have their unique advantages and disadvantages (see Table 1). Depending on research objectives, all paradigms can provide valuable insights for marketing theory and practice. For instance, vignette experiments are still preferable when researchers want to test early-stage theories, where contextual richness complicates the detection of focal effects. Online platform studies remain valuable when ecological validity in actual platform environments is important, such as when testing interventions intended for real-world applications or when algorithmic delivery is itself part of the phenomenon under study. Observational studies remain essential to identify naturally occurring patterns, study long-term effects, or examine constructs that cannot be ethically or practically manipulated. DICE is most applicable when the social media context itself is relevant to the phenomenon and when researchers seek to collect more granular data at the post × participant level. Given its feed-based structure, DICE is well suited to examine which content cuts through the clutter (e.g., the effect of a particular ad feature on brand recall).

DICE also has specific limitations worth acknowledging. While creating more realistic social media feeds, it does not fully recreate the social dynamics of actual platforms where users interact with one another. The current implementation focuses primarily on browsing behavior rather than direct social interactions between users. Additionally, and in contrast to field studies, DICE studies rely on recruited respondents who are fully aware that they are participating in a study. Hence, while DICE offers greater ecological validity than vignette experiments, it obviously cannot match the naturalistic setting of field studies. These limitations reflect intentional design choices that prioritize experimental control while enhancing study realism. Our “General Discussion” section offers further reflections on limitations and potential remedies.

Finally, other research methodologies, such as eye-tracking, can provide richer behavioral data to describe attention processes. Although technical advances have made these methods more accessible, their adoption in social media research remains limited, often due to resource requirements and potentially also due to the challenge of creating ecologically valid stimuli. DICE may facilitate eye-tracking studies in the social media domain via the creation of feeds that more closely resemble those on existing social media platforms.

To facilitate the adoption of DICE, we offer an open-source app that allows researchers to configure and collect data using this experimental paradigm. The next section details the user experience from both the researcher's and participant's perspectives.

DICE App Implementation

An open-source app to implement DICE studies is available at https://dice-app.org/. DICE integrates a combination of technologies: We employ core features of oTree (Chen, Schonger, and Wickens 2016) to randomize participants, collect basic user information, and connect automatically to recruitment platforms like Prolific. We use the front-end framework Bootstrap, originally developed at Twitter (now X; Matsinopoulos 2020), to display realistic feeds with all standard social media interface elements and responsive layouts across device types. Finally, we developed custom JavaScript tracking to capture behavioral data, including post-level dwell times, scrolling patterns, and user interactions (e.g., capturing likes and comments). Such functionalities are not readily available in oTree, but are essential for tracking behavior at the participant × post level. Our reliance on these established open-source frameworks is intended to make it easier for other researchers to extend and contribute to the DICE app (see the “General Discussion” section for potential extensions).

In what follows, we describe how to implement studies, the front-end view for study participants, the workflow for researchers, and the behavioral data generated by the app.

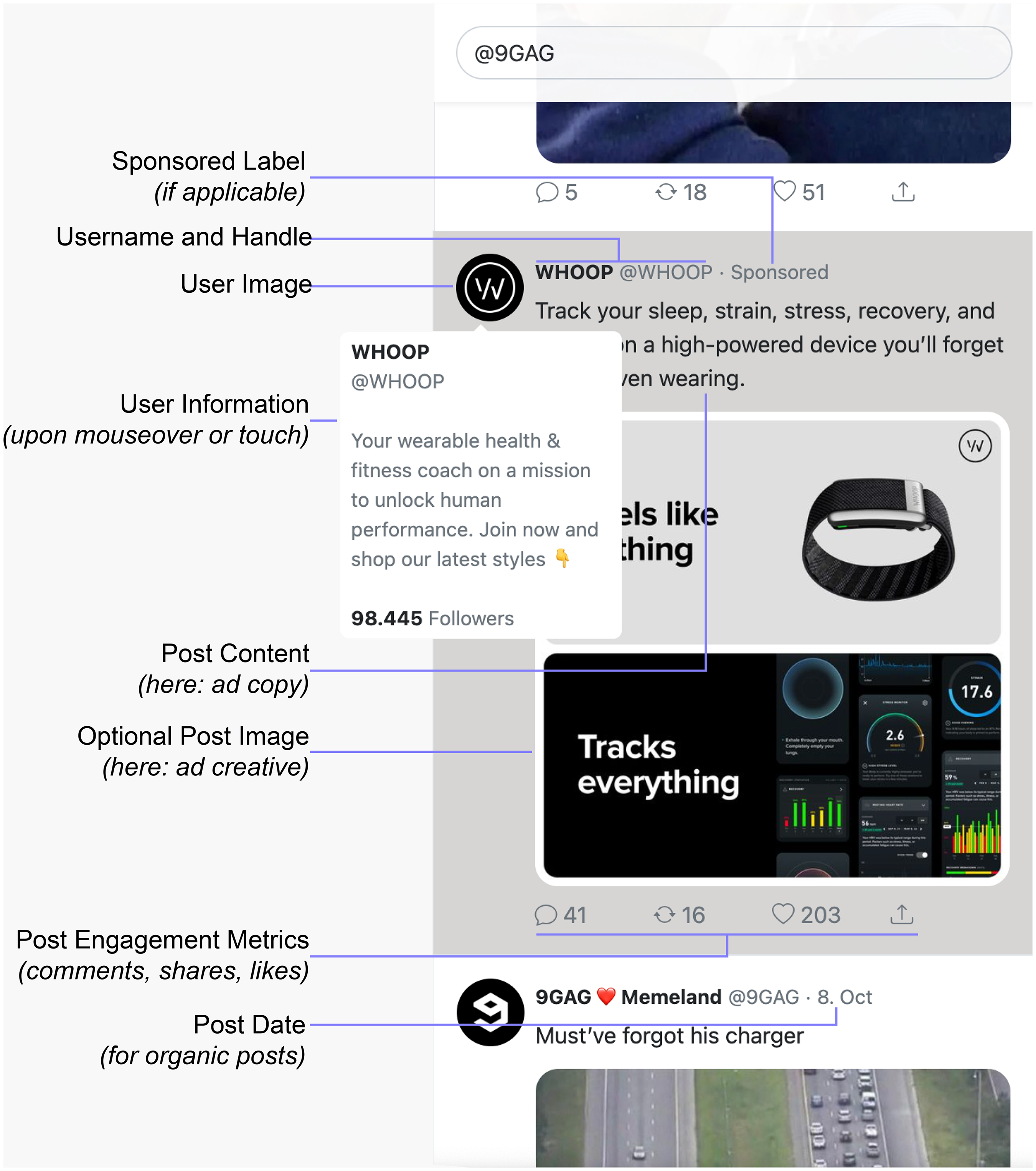

DICE from the Perspective of Study Participants

The DICE app creates responsive stimuli: It is compatible with multiple device types, including desktop, mobile, and tablets, as all content is automatically resized and rendered for different devices. As illustrated in Figure 1, DICE creates a social media feed that mimics the key elements of major microblogging platforms, such as user identification (profile picture and handle), post type indicators (e.g., “sponsored” labels for branded content, as shown for the company Whoop), the main content (combining the copy and creative for a sponsored or organic post), and engagement metrics (likes, shares, comments). The interface also provides interactive elements. For example, study participants receive additional user information when they hover over profile pictures or usernames, mimicking typical social media functions. Each post combines these elements in a familiar layout, and participants can interact with each element of the feed.

Figure 1. Browsing a DICE Feed (Study Participant Perspective).

After browsing the feed, participants are redirected to the researcher's preferred survey platform (e.g., Qualtrics), where they may respond to survey questions. Finally, participants are automatically redirected to a recruitment platform of the researcher's choice, where a dedicated completion code verifies a respondent's completed participation.

DICE from the Perspective of Researchers

This article's companion website (https://dice-app.org/) offers a web configurator to set the parameters for a study in DICE. A critical step in designing a study via the app is creating a CSV (comma-separated values) file that specifies all posts (i.e., both organic and sponsored content), their sequence, and any experimental conditions. This CSV template enables researchers to systematically organize content in feeds without the need to manually edit images while maintaining experimental control over exactly when and where posts are presented (e.g., either specifying a fixed sequence or fully randomizing some or all posts, assigning posts to specific experimental conditions). Web Appendix A displays the four main steps in setting up and running a study in DICE. Additionally, the companion website offers a video walk-through for the detailed setup of the CSV stimuli file.

Behavioral Data

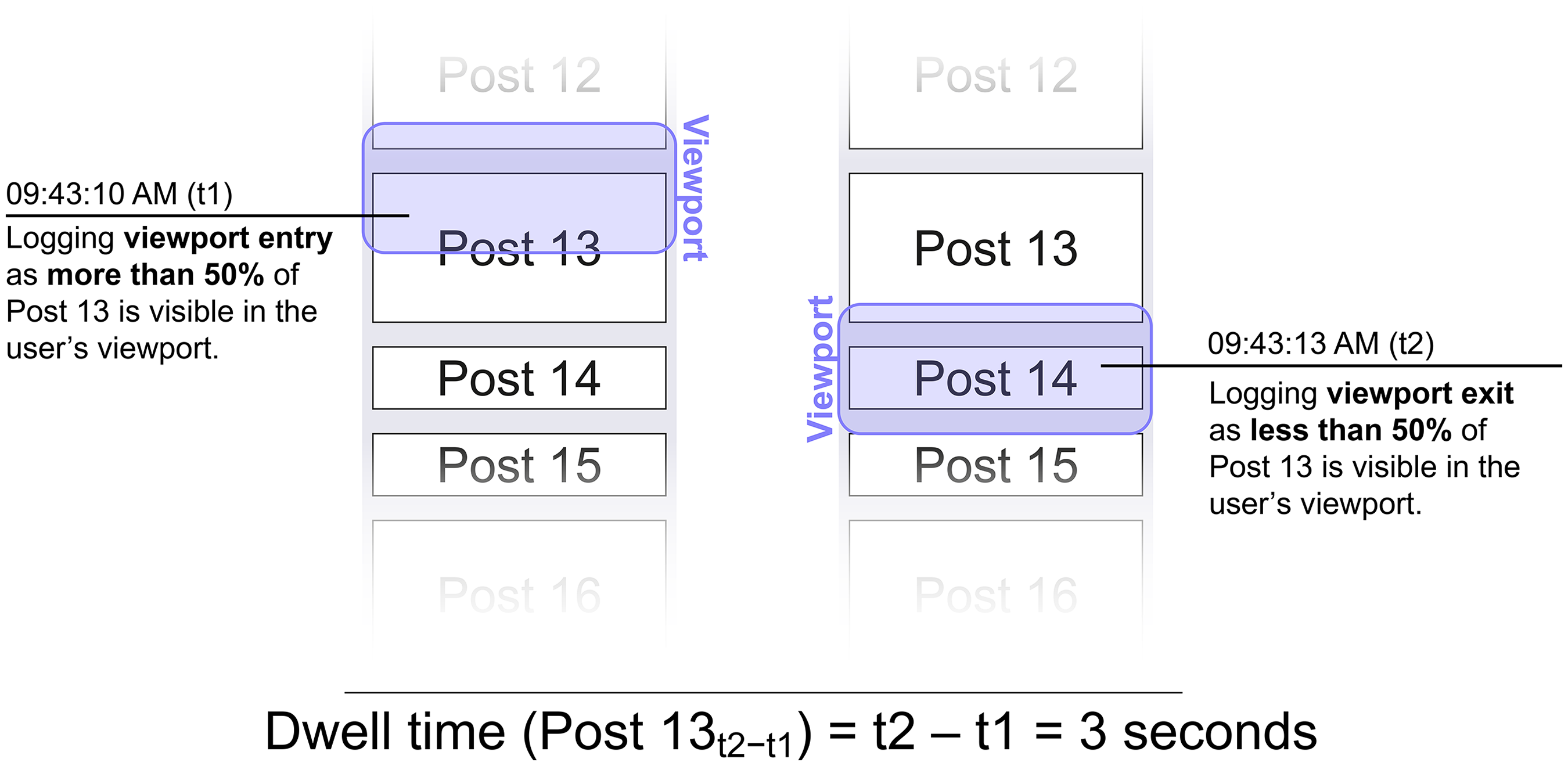

The DICE app unobtrusively measures behavioral data by tracking so-called viewport events and user interactions (Yi et al. 2014). The viewport represents the visible portion of a web page that a user sees on their screen at any given moment. As users scroll through their feed, posts enter and exit this viewport. As shown in Figure 2, the DICE app records the precise time stamps of each of these entry and exit events. By calculating the difference between these two events for every post, we compute the dwell time for each post. Thus, this measure captures how long each post remained visible when participants browsed through their feed. All other things equal, the faster (slower) a participant scrolls through the feed, the shorter (longer) the dwell time of a post.
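The entry and exit logic described above can be sketched in a few lines of Python. This is a minimal illustration only, not the DICE app's tracking code (which the article describes as custom JavaScript); the event format and the names `ViewportEvent` and `dwell_times` are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class ViewportEvent:
    post_id: str      # hypothetical post identifier
    kind: str         # "enter" or "exit"
    timestamp: float  # seconds since the feed was loaded


def dwell_times(events):
    """Return a list of (post_id, dwell_seconds), one per completed visit."""
    open_entries = {}  # post_id -> timestamp of the most recent unmatched entry
    records = []
    for event in sorted(events, key=lambda e: e.timestamp):
        if event.kind == "enter":
            open_entries[event.post_id] = event.timestamp
        elif event.kind == "exit" and event.post_id in open_entries:
            entered = open_entries.pop(event.post_id)
            records.append((event.post_id, event.timestamp - entered))
    return records


events = [
    ViewportEvent("post_1", "enter", 0.0),
    ViewportEvent("post_2", "enter", 1.5),
    ViewportEvent("post_1", "exit", 2.0),
    ViewportEvent("post_2", "exit", 4.5),
]
print(dwell_times(events))  # [('post_1', 2.0), ('post_2', 3.0)]
```

Because several posts can be visible at once, the sketch keeps one open entry per post rather than assuming that posts are viewed strictly one after another.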

Figure 2. Post-Level Dwell Time Measurement.

To account for differences between posts with different sizes (e.g., when some posts contain images while others are text-only), the app tracks the specific height of each post measured in pixels. This allows researchers to normalize the dwell time data by dividing a post's dwell time by its size (i.e., height). In Figure 2, a threshold of 50% specifies that an entry event is triggered as soon as at least 50% of a post becomes visible within the viewport. Depending on theoretical and practical considerations, researchers can also set custom thresholds that define when entry and exit events are triggered.
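The visibility threshold and the height normalization can be illustrated with a short Python sketch. The function names, the pixel coordinates, and the geometry (positions measured from the top of the page) are assumptions for this example and may differ from how the DICE app implements them.

```python
def visible_fraction(post_top, post_height, viewport_top, viewport_height):
    """Share of a post's height that lies inside the viewport (0.0 to 1.0)."""
    post_bottom = post_top + post_height
    viewport_bottom = viewport_top + viewport_height
    overlap = min(post_bottom, viewport_bottom) - max(post_top, viewport_top)
    return max(0.0, overlap) / post_height


def is_in_view(post_top, post_height, viewport_top, viewport_height, threshold=0.5):
    """True once at least `threshold` of the post is visible."""
    return visible_fraction(post_top, post_height, viewport_top, viewport_height) >= threshold


def normalized_dwell(dwell_seconds, post_height_px):
    """Dwell time per pixel of post height."""
    return dwell_seconds / post_height_px


# A 400 px tall post starting at y = 900, viewed through an 800 px viewport.
print(is_in_view(900, 400, viewport_top=200, viewport_height=800))  # 100 px visible -> False
print(is_in_view(900, 400, viewport_top=300, viewport_height=800))  # 200 px visible -> True
print(normalized_dwell(3.0, 400))  # 0.0075 seconds per pixel
```

Raising the threshold makes the measure more conservative (a post counts as viewed only when most of it is on screen), whereas lowering it also captures posts that are only partially visible.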

The app computes dwell time measurements at each exit event, meaning posts that reenter the viewport generate multiple dwell time records. For instance, scrolling down past a post, returning to it, and scrolling past it again creates two separate dwell time measurements. As a consequence, this tracking also enables researchers to analyze detailed browsing or scrolling trajectories of participants. Such viewport-based measures have been used successfully as proxies for attention (e.g., Simonov, Valletti, and Veiga 2025).
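When a single value per post is needed, the separate records created by revisits can be aggregated. The following Python sketch sums dwell times and counts visits per post for one participant; the record format and the values are made up for illustration.

```python
from collections import defaultdict

# (post_id, dwell_seconds) records for one participant; values are made up.
records = [("post_1", 2.0), ("post_2", 3.0), ("post_1", 1.5)]

summary = defaultdict(lambda: {"visits": 0, "total_dwell": 0.0})
for post_id, dwell in records:
    summary[post_id]["visits"] += 1
    summary[post_id]["total_dwell"] += dwell

print(dict(summary))
# {'post_1': {'visits': 2, 'total_dwell': 3.5}, 'post_2': {'visits': 1, 'total_dwell': 3.0}}
```

Whether to analyze total dwell time, the first visit only, or the number of revisits is a modeling decision that depends on the research question.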

Participants’ interactions with the feed are recorded in nested data structures where interactions are logged at the participant × post level. Each observation contains identifiers for the participant and the specific post, dwell times, and user reactions (e.g., likes, comments). This data structure lends itself to exploring diverse research questions with various analytical approaches. For example, researchers can examine engagement patterns with specific posts between participants or leverage the nested structure for increased statistical power in multilevel models within participants (McNeish 2023; Wooldridge 2010, pp. 281–387). Our case studies demonstrate these different approaches to analyzing such data, and we provide further information about the data structure in the documentation on our companion website.
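As a rough illustration of this nested structure, the Python sketch below stores one record per participant × post and groups posts within participants. The field names are hypothetical (the actual export format is documented on the companion website), and the within-participant centering is only one example of how the nesting can be used.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Observation:
    participant_id: str
    post_id: str
    dwell_seconds: float
    liked: bool


rows = [
    Observation("p1", "post_1", 2.0, False),
    Observation("p1", "post_2", 4.0, True),
    Observation("p2", "post_1", 1.0, False),
    Observation("p2", "post_2", 1.0, False),
]

# Group posts within participants (the nesting described above).
by_participant = defaultdict(list)
for row in rows:
    by_participant[row.participant_id].append(row)

# Within-participant centering: dwell time relative to a participant's own mean.
centered = {
    (row.participant_id, row.post_id): row.dwell_seconds - mean(r.dwell_seconds for r in group)
    for group in by_participant.values()
    for row in group
}
print(centered[("p1", "post_2")])  # 1.0
```

Centering of this kind separates how long a participant lingers on a given post from how slowly that participant scrolls overall.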

If researchers decide to append a survey to the social media browsing task (e.g., using Qualtrics), the DICE app provides URL parameters to forward a participant's unique identifier when redirecting from the DICE app to Qualtrics. This enables the researcher to match a participant's behavior on the feed with subsequent survey responses.
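The matching step can be sketched as follows. The parameter name `participant_id`, the survey address, and the data values are placeholders chosen for this example; the parameter names that DICE and a given survey platform actually use should be taken from their documentation.

```python
from urllib.parse import parse_qs, urlencode, urlparse


def build_survey_url(base_url, participant_id):
    """Append the participant identifier as a query parameter."""
    return f"{base_url}?{urlencode({'participant_id': participant_id})}"


# Placeholder survey address, not a real study link.
url = build_survey_url("https://example.com/survey", "abc123")
print(url)  # https://example.com/survey?participant_id=abc123

# On the analysis side, the identifier is the join key between both datasets.
feed_data = {"abc123": {"ad_dwell_seconds": 3.2}}
survey_id = parse_qs(urlparse(url).query)["participant_id"][0]
survey_data = {survey_id: {"brand_attitude": 5.0}}
merged = {pid: {**feed_data[pid], **survey_data[pid]} for pid in feed_data if pid in survey_data}
print(merged)  # {'abc123': {'ad_dwell_seconds': 3.2, 'brand_attitude': 5.0}}
```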

Empirical Demonstrations

Two case studies illustrate the application of DICE. These studies are designed to replicate and expand classic context effects within a more ecologically valid social media feed environment using DICE. The focus of the case studies is to illustrate the usage and resulting data streams of DICE rather than to advance theory. This section aims to achieve two objectives. First, the studies demonstrate the ability to manipulate entire feed compositions and sequences, rather than just individual social media posts. Second, they demonstrate how the post-level dwell time measurement can be used to approximate attention, complementing insights gained from traditional self-report measures. Substantively, Case Study 1 examines how content surrounding a sponsored post affects brand perceptions, while Case Study 2 explores how the specific position of a post within a feed influences attention and brand recall. Technical implementation details for both case studies, including configuration steps and technical setup, are available in Web Appendix B and on the companion website.

Case Study 1: Feed Composition and Context Effects

Case Study 1 demonstrates DICE's capability to study context effects with high experimental control and study realism. This study illustrates how researchers can systematically manipulate the broader context (i.e., the composition of the feed) in which users encounter a specific post (in this case, a sponsored post from a brand). Substantively, Case Study 1 examines the issue of brand safety in social media advertising. Brand safety refers to the idea that advertising should not appear in contexts that could harm a brand's reputation (Fournier and Srinivasan 2023). This concern is particularly relevant for social media advertising, where platforms use automated systems to place ads in dynamic, user-generated content environments. These systems often lack the nuanced understanding needed to identify potentially problematic contexts that could harm a brand. While industry reports suggest that up to 75% of brands have experienced such unsafe brand exposures (Ahmad et al. 2024; GumGum 2017), examining these effects in the field risks apparent brand damage.

Experimental Design

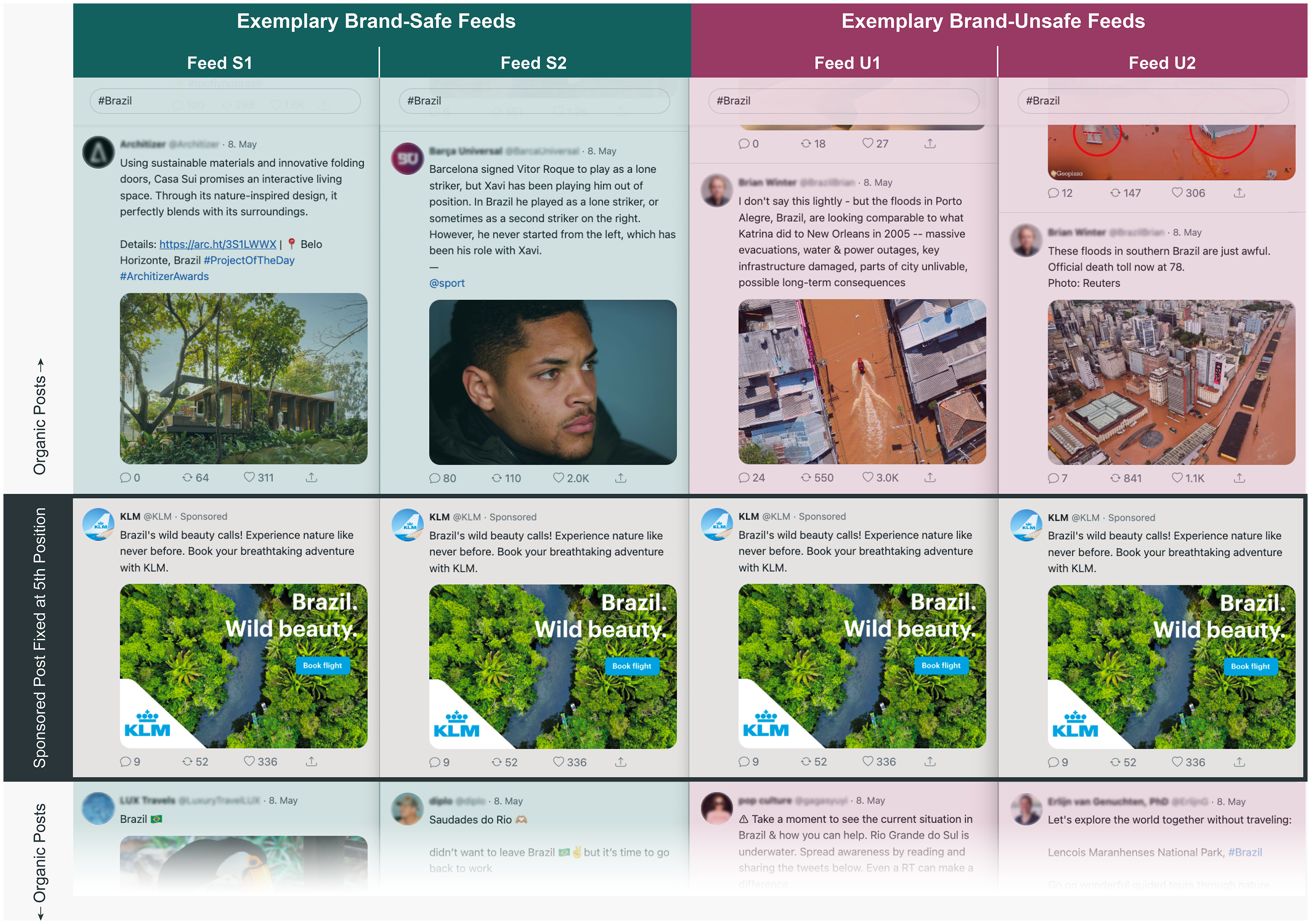

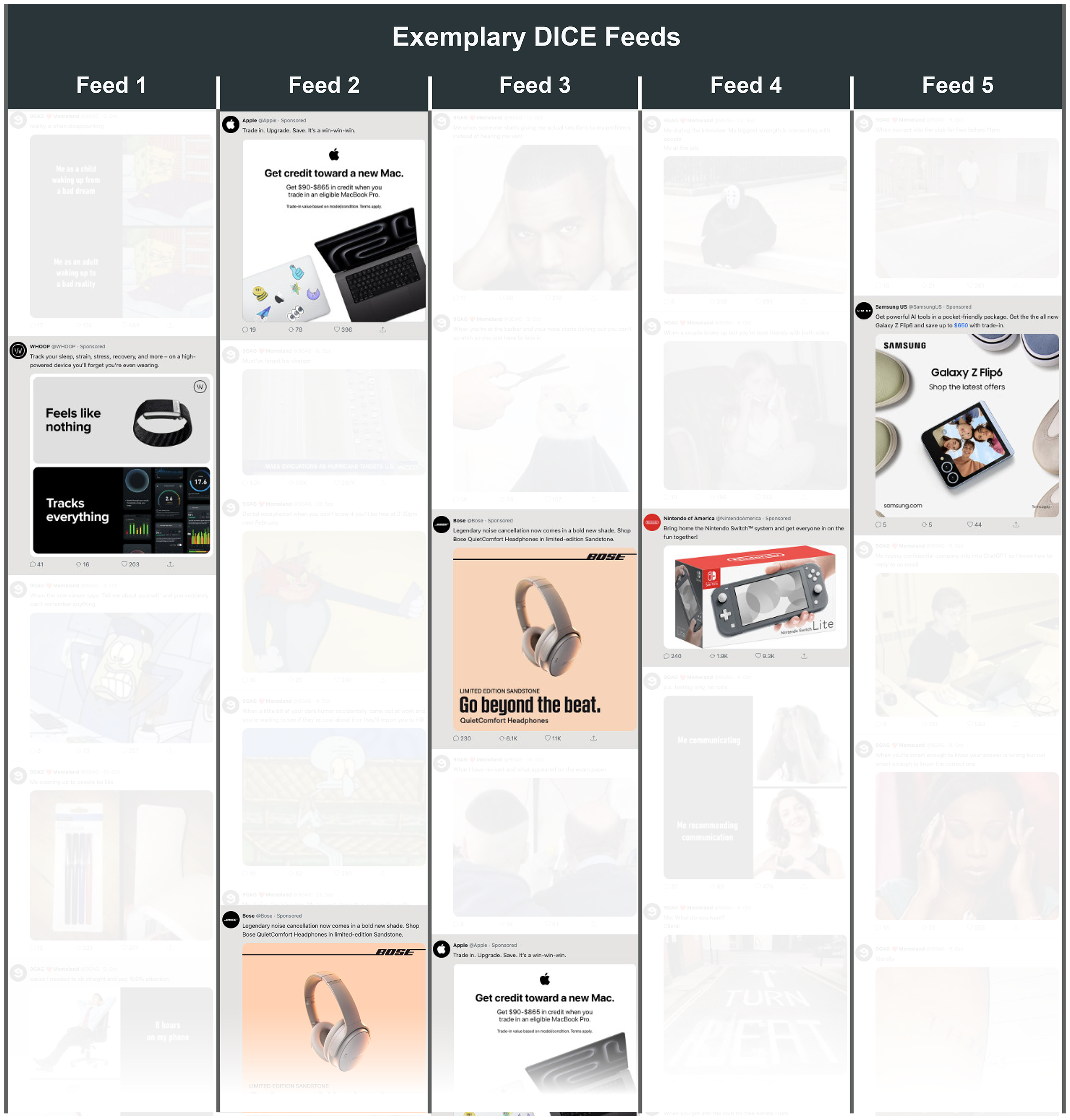

To test how brand-(un)safe contexts affect brand perceptions, we created two social media feeds that were identical in structure but varied in their content surrounding a sponsored post (see Figure 3 for exemplary screenshots of the brand feeds). The sponsored post in both conditions was a fictitious ad by the airline KLM promoting flights to Brazil.

Figure 3. Exemplary DICE Feeds from Case Study 1.

In the brand-safe condition, the sponsored post was surrounded by actual organic posts covering Brazil scraped from the web. In the brand-unsafe condition, however, the sponsored post was surrounded by another set of scraped organic posts about the severe flooding that occurred during the time of the study. Such a situation is precisely the type of contextual mismatch that automated systems can create and managers fear due to the adverse consequences for brands (Ahmad et al. 2024; GumGum 2017). In both conditions, the sponsored post was always fixed in the fifth position, whereas the order of the organic posts varied randomly.
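The sequencing rule (sponsored post fixed in the fifth position, organic posts in random order) can be expressed compactly. The Python sketch below is an illustration under assumed names; in the DICE app itself, such sequences are specified through the CSV stimuli file rather than in code, and the number of organic posts used here is a placeholder.

```python
import random


def build_feed(organic_posts, sponsored_post, sponsored_position=5, seed=None):
    """Shuffle organic posts and insert the sponsored post at a fixed 1-based position."""
    rng = random.Random(seed)
    feed = list(organic_posts)
    rng.shuffle(feed)
    feed.insert(sponsored_position - 1, sponsored_post)
    return feed


organic = [f"organic_{i}" for i in range(1, 20)]  # 19 placeholder organic posts
feed = build_feed(organic, "sponsored_ad", seed=42)
print(feed.index("sponsored_ad") + 1)  # 5
print(len(feed))  # 20
```

Holding the focal post's position constant while randomizing its surroundings keeps position effects from being confounded with the context manipulation.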

Procedure

We recruited 982 U.S. American participants on Prolific (Mage = 39 years; 56% female, 41% male, 2% nonbinary or third gender, .4% preferred not to disclose, .4% preferred to self-describe) to participate in the study. Participants browsed the feed on their own devices (75% desktop, 21% mobile, and 4% tablet). After scrolling through the feed, participants were redirected to a Qualtrics survey, where they first provided demographic information as a filler task. Next, participants reported their brand attitude toward KLM using three seven-point scales (1 = “Negative,” and 7 = “Positive”; 1 = “Unfavorable,” and 7 = “Favorable”; 1 = “Dislike,” and 7 = “Like”; α = .96). Finally, we assessed participants’ awareness of the flooding in Brazil. All stimuli, materials, data, and analysis code for both case studies are available on OSF (https://osf.io/2xs5c/).

Data

The dataset comprises 982 participants and 19,640 observations at the participant × post level. We restrict our analyses to user engagement with the sponsored post (i.e., the KLM ad) and log-transform the raw post-level dwell times to reduce skewness. Therefore, the final sample contains 955 observations at the participant × sponsored post level.

Results and Discussion

Brand attitudes toward KLM were less positive in the brand-unsafe feed condition (Mu = 4.310, SDu = 1.366) compared with the brand-safe feed condition (Ms = 4.821, SDs = 1.161, b = −.510, SE = .082, t(953) = −6.217, p < .001, d = .403).
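
As a quick plausibility check, the reported effect size can be approximated from the summary statistics alone. The sketch below assumes equal group sizes, which the text does not state, and uses the simple pooled standard deviation. The helper name `cohens_d` is ours.

```python
import math

def cohens_d(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d with the equal-n pooled SD: sqrt((sd_a^2 + sd_b^2) / 2)."""
    pooled_sd = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd

# Brand-safe vs. brand-unsafe means and SDs as reported above.
d = cohens_d(4.821, 1.161, 4.310, 1.366)
print(round(d, 3))
```

Under this assumption, the result is approximately .40, in line with the reported d.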

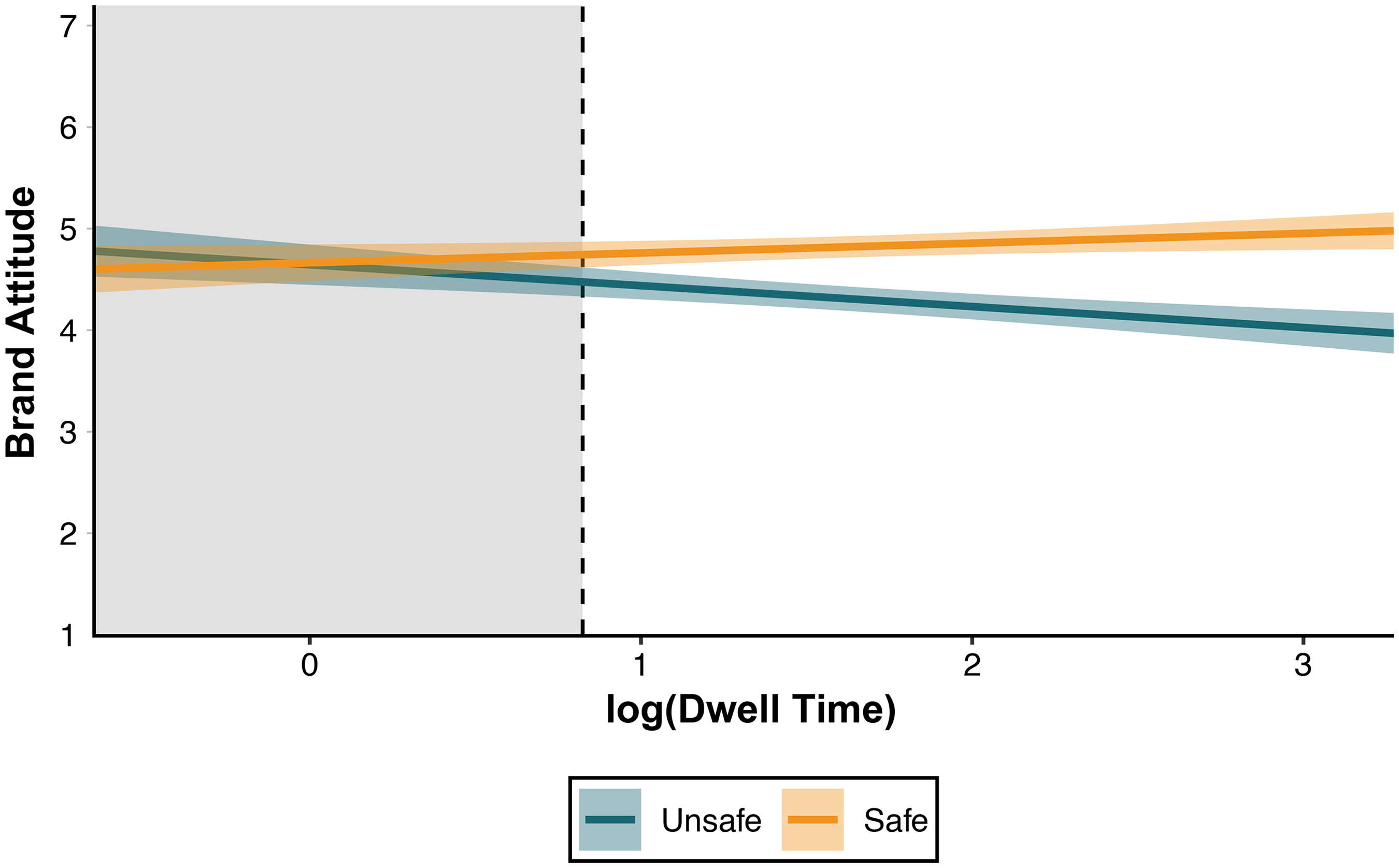

To further explore the interplay between the KLM ad's context and brand attitudes, we examined whether the dwell time of the ad moderated the previously reported main effect of context. An ordinary least squares regression revealed an interaction between the brand safety context manipulation and dwell time (b = −.302, SE = .068, t(951) = −4.455, p < .001), such that the negative effect of an unsafe context on brand attitude was more pronounced when participants spent more time viewing the sponsored post (see Figure 4). In contrast, the effect was much smaller for participants who did not spend much time on the sponsored post. We did not find main effects of brand safety (b = −.020, SE = .137, t(951) = −.143, p = .886) or dwell time (b = .096, SE = .050, t(951) = 1.929, p = .054) on brand attitudes. The ad's dwell time did not vary across brand safety conditions (b = −.002, SE = .078, t(953) = .022, p = .982). Finally, this moderation is robust to alternative model specifications (see Web Appendix C), where we repeated the same analysis while controlling for the dwell time allocated to all organic posts (b = −.316, SE = .068, t(950) = −4.662, p < .001).

Figure 4. Moderation of the Effect of Context on Brand Attitudes by Dwell Time.
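
The moderation analysis amounts to an ordinary least squares regression of brand attitude on the context manipulation, log dwell time, and their product. The sketch below shows the structure of such a model on simulated data. The data-generating values, variable names, and sample size are hypothetical and are not the study's data, and we make no assumption about whether the original analysis centered dwell time.

```python
import numpy as np

def fit_moderation(attitude, unsafe, log_dwell):
    """OLS of attitude on unsafe, log_dwell, and their interaction."""
    X = np.column_stack([
        np.ones_like(log_dwell),  # intercept
        unsafe,                   # 1 = brand-unsafe feed, 0 = brand-safe feed
        log_dwell,                # log-transformed dwell time on the ad
        unsafe * log_dwell,       # interaction term (the moderation effect)
    ])
    beta, *_ = np.linalg.lstsq(X, attitude, rcond=None)
    names = ["intercept", "unsafe", "log_dwell", "unsafe_x_log_dwell"]
    return dict(zip(names, beta))

# Simulated data for illustration only.
rng = np.random.default_rng(0)
n = 1000
unsafe = rng.integers(0, 2, size=n).astype(float)
log_dwell = rng.normal(1.0, 0.8, size=n)
noise = rng.normal(0.0, 1.0, size=n)
attitude = 4.8 + 0.1 * log_dwell - 0.3 * unsafe * log_dwell + noise

coefs = fit_moderation(attitude, unsafe, log_dwell)
```

A negative coefficient on `unsafe_x_log_dwell` corresponds to the pattern reported above: the penalty of an unsafe context grows with the time spent on the sponsored post.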

From a substantive perspective, the findings provide experimental support for brand safety concerns and reveal an intuitive nuance: Contextual misplacements primarily harm brand perceptions when consumers pay sufficient attention to the content. When attention is minimal (indicated by minimal dwell times), the negative impact of unsafe contexts is neutralized. From a methodological perspective, this study illustrates how researchers can manipulate entire feed compositions rather than just single social media posts and how DICE's post-level dwell time data can be used as a behavioral measure (or proxy) of attention.

Case Study 2: Position Effects and Competing Attention Between Brands

Rather than focusing on a single post, Case Study 2 examines how multiple brands compete for user attention within the same feed—a common scenario in social media advertising, where multiple advertisers target the same user and try to cut through the clutter of both organic content and competing ads (Villarroel Ordenes et al. 2019). Case Study 2 also illustrates how to approximate attention patterns across an entire feed containing multiple sponsored posts from different advertisers in the same product category. By studying how users engage with multiple sponsored posts in a single feed, we can better understand the dynamics of attention allocation in social media environments where brands compete for attention, building on related memory and context effects studied in traditional advertising environments (Pieters, Warlop, and Wedel 2002). Specifically, we examine how the position of sponsored posts affects brand recall when multiple brands are presented within the same feed, and how the dwell times captured through DICE help explain these effects.

Experimental Design

To investigate the relationship between ad placement and brand recall, we simulated a social media feed containing both organic and sponsored posts. Whereas the set of organic and sponsored posts was the same for all participants, we randomized the sequence in which participants were exposed to these posts between subjects.

The feed featured 35 organic posts and five consumer electronics ads (sponsored posts; see Figure 5 for example feeds). We selected ads from established consumer electronics brands (i.e., Apple, Bose, Nintendo, Samsung, Whoop). The sponsored posts were actual ads retrieved from Facebook's Ad Library, a publicly accessible database that archives advertisements run by advertisers across Meta's platforms. The selected sponsored posts shared similar basic characteristics, including the presence of a product image, brand logo, and brief text as part of the advertisement. The organic content featured a collection of memes taken from the platform 9gag, a popular internet meme collection with over 16 million followers on X. We intentionally chose memes as organic content to offer a conservative test of how branded content competes for attention on social media, particularly among younger users (Malodia et al. 2022).

Figure 5. Exemplary Feed Compositions (Case Study 2).

Procedure

We recruited 300 younger (filtered for age < 36 years) U.S. American participants from Prolific (Mage = 29.42 years; 53% female, 45% male, 1% nonbinary or third gender, .7% preferred not to disclose, .3% preferred to self-describe). Participants browsed the simulated feed on their own devices (72% desktop, 25% mobile, and 3% tablet). After scrolling through the feed, we redirected participants to a Qualtrics survey, where they first provided demographic information as a filler task. Next, we measured whether participants recalled seeing the ads by the five brands in the feed. Specifically, we measured both free and cued brand recall. To measure cued brand recall, we showed participants a list of 20 brands from different categories and asked them to indicate whether they recalled seeing any of them (Campbell and Keller 2003; Simonov, Valletti, and Veiga 2025), including a no-recall option. The results for both brand recall measures were highly consistent. For parsimony, we report the cued recall results in the article and the results for unaided recall in Web Appendix D.

Data

Our dataset comprises 300 participants and 10,377 observations at the participant × post level. In the subsequent analyses, we focus on sponsored posts. We exclude the first and final two posts from our analysis due to participants familiarizing themselves with DICE when loading the feed (i.e., for the first posts) and deciding whether to proceed to the follow-up survey at the bottom of the feed (i.e., for the last posts). Thus, our final sample size for Case Study 2 is 1,283 observations on the participant × sponsored post level.

Position in feed (subsequently referred to only as “position”) is our main independent variable. We measured post-level dwell times as the number of seconds during which at least 50% of a post's pixels were visible on screen. We log-transformed the raw dwell times to reduce skewness. Unlike Case Study 1, where we focused on a single post (i.e., the KLM ad), the focal posts of interest (i.e., ads) in Case Study 2 vary in their post height. Thus, as described in the “Behavioral Data” subsection of the “DICE App Implementation” section, we normalized our dwell time measure by dividing it by post height to control for differently sized posts (i.e., the height in pixels of a sponsored post on a participant's screen).
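
The dwell time measure described above can be illustrated as follows. This is a sketch under stated assumptions rather than the app's tracking code: the sampled `(timestamp, visible_fraction)` input format is hypothetical, `log1p` is our choice to handle zero dwell times, and applying the log before dividing by post height is one reading of "log(dwell time) per vertical pixel."

```python
import math

def dwell_seconds(samples, threshold=0.5):
    """Sum the time during which a post's visible fraction is >= threshold.

    `samples` is a time-ordered list of (timestamp_in_seconds, visible_fraction);
    each sample's visibility is assumed to hold until the next sample.
    """
    total = 0.0
    for (t0, frac), (t1, _) in zip(samples, samples[1:]):
        if frac >= threshold:
            total += t1 - t0
    return total

def normalized_log_dwell(seconds, post_height_px):
    """log(1 + seconds) divided by the post's rendered height in pixels."""
    return math.log1p(seconds) / post_height_px

# Hypothetical visibility trace for one post on one participant's screen.
samples = [(0.0, 0.2), (1.0, 0.6), (3.5, 1.0), (5.0, 0.4), (6.0, 0.0)]
seconds = dwell_seconds(samples)
score = normalized_log_dwell(seconds, post_height_px=400)
```

In this trace, the post counts as visible only between seconds 1.0 and 5.0, so the intervals in which less than half of the post is on screen contribute nothing to the dwell time.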

Results and Discussion

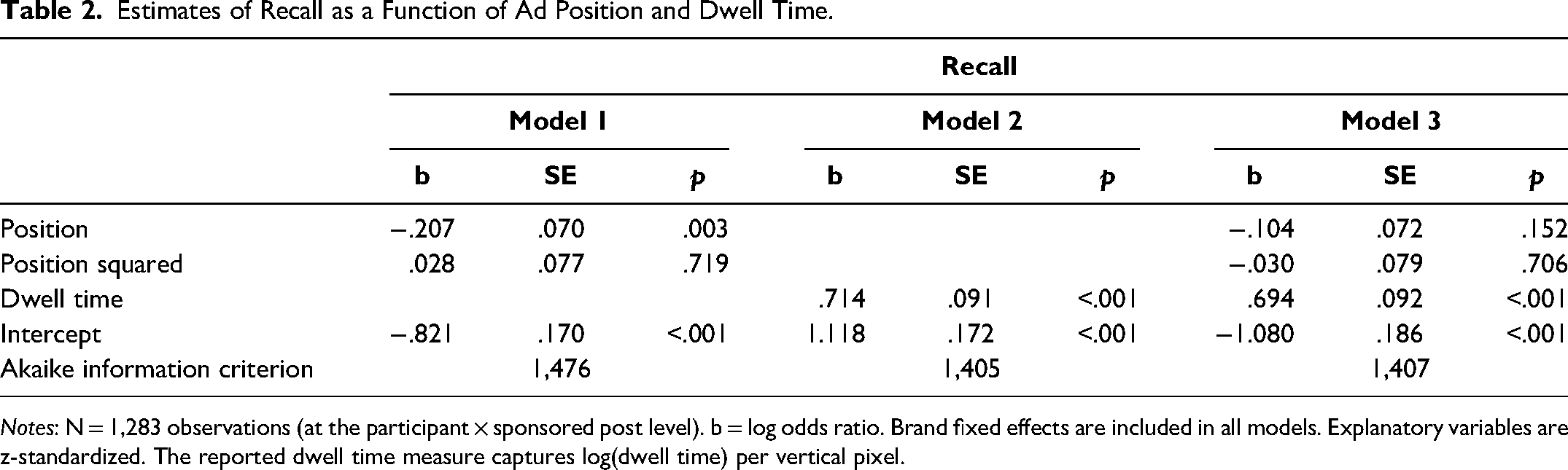

To test the impact of ad position on brand recall, we used a mixed-effects model with a logistic link function to account for multiple observations nested within participants and ads. We estimated the effect of ad position on recall and captured between-participant heterogeneity through random intercepts while controlling for brand fixed effects (for more details, see Web Appendix E).
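
One way to write down such a model, based on our reading of the description above (the exact specification is reported in Web Appendix E), is a random-intercept logistic regression. The notation is ours: $i$ indexes participants, $j$ indexes sponsored posts, $\gamma_j$ is the brand fixed effect, and $u_i$ is the participant-level random intercept.

```latex
\operatorname{logit}\,\Pr(\text{recall}_{ij} = 1)
  = \beta_0 + \beta_1\,\text{position}_{ij} + \gamma_j + u_i,
\qquad u_i \sim \mathcal{N}(0, \sigma_u^2)
```

In this formulation, a negative $\beta_1$ indicates that recall becomes less likely the farther down the feed an ad appears.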

The effect of position on recall was negative (b = −.207, SE = .070, p < .003; see Model 1 in Table 2), suggesting a primacy effect such that the farther up (down) an ad is displayed in a feed, the more (less) participants recall seeing the ad. We also examined potential nonlinear effects of position (i.e., to assess whether especially the beginning and end of a feed promote ad recall) by adding a quadratic term, but found no evidence for such effects. These results are robust to alternative model specifications, such as using a dummy variable to contrast the first ad position against all subsequent positions (see Web Appendix D).

Table 2. Estimates of Recall as a Function of Ad Position and Dwell Time.

Notes: N = 1,283 observations (at the participant × sponsored post level). b = log odds ratio. Brand fixed effects are included in all models. Explanatory variables are z-standardized. The reported dwell time measure captures log(dwell time) per vertical pixel.

As shown in Model 2 in Table 2, the post-level dwell time of a user predicts ad recall (b = .714, SE = .091, p < .001). More importantly, we also found that the dwell time allocated to an ad was a stronger predictor of recall than the position of the ad in the feed. Specifically, Model 3, including both position and dwell time, shows that the effect of position becomes indistinguishable from zero (b = −.104, SE = .072, p = .152), while the dwell time coefficient remains almost unchanged (b = .694, SE = .092, p < .001). The minimal change in the Akaike information criterion when adding the position coefficients between Model 2 and Model 3 suggests that including ad position does not improve the model's fit to the data. A subsequent 1-1-1 hierarchical mediation analysis indeed supports that post-level dwell time mediates the relationship between ad position and recall (a × b = −.019, 95% CI = [−.027, −.013], proportion mediated = .539, 95% CI = [.282, 1.369], p = .002). Web Appendix F reports additional analyses that suggest limited heterogeneity in these patterns across different sponsored posts. While brands may differ in their baseline dwell times due to potential familiarity differences, dwell time consistently predicts recall across brands.
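
Because the coefficients are log odds ratios for z-standardized predictors, they can be exponentiated to obtain the multiplicative change in the odds of recall per standard deviation. The sketch below performs this conversion and adds an approximate two-sided Wald p-value from the rounded estimates. The helper names are ours, and p-values computed from rounded inputs can deviate slightly from those reported.

```python
import math

def odds_ratio(b):
    """Convert a log odds ratio into an odds ratio."""
    return math.exp(b)

def wald_p(b, se):
    """Two-sided p-value for z = b / se under a standard normal reference."""
    z = b / se
    return math.erfc(abs(z) / math.sqrt(2))

# Rounded coefficients as reported for Models 1 to 3.
estimates = {
    "model1_position": (-0.207, 0.070),
    "model2_dwell": (0.714, 0.091),
    "model3_position": (-0.104, 0.072),
    "model3_dwell": (0.694, 0.092),
}
summary = {
    name: {"odds_ratio": odds_ratio(b), "p": wald_p(b, se)}
    for name, (b, se) in estimates.items()
}
```

For example, the Model 2 dwell time coefficient of .714 corresponds to roughly a doubling of the odds of recall for each standard deviation increase in the normalized dwell time measure.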

From a substantive perspective, the findings demonstrate how memory formation operates when brands compete for limited attention in a social media environment, consistent with prior work on attention and memory under competitive exposure (Pieters, Warlop, and Wedel 2002). From a more methodological perspective, Case Study 2 demonstrates how to combine the behavioral data (i.e., post-level dwell time as a proxy of attention) with self-reports from conventional survey measures (e.g., recall of branded content).

Finally, in both case studies, we also tracked other behavioral engagement metrics, such as likes and comments on individual posts. However, we observed low incidence rates in both studies, which is not surprising as participants were not incentivized in any form to engage (e.g., like or comment) with the feed (e.g., only 144 [30] participants liked [replied to] any post in the feed in Case Study 2, and these numbers were even lower for sponsored posts, with 32 [4] participants liking [replying to] at least one sponsored post). Given these low engagement rates, we did not focus our analyses on these metrics, though the complete behavioral data for all studies are fully available in our OSF repository for researchers interested in exploring these patterns further.

General Discussion

A central methodological challenge in social media research is the disconnect between how users browse and consume dynamic content that competes for attention and how researchers typically study such content in isolation. This disconnect exists because recreating, controlling, and manipulating realistic feed environments is practically challenging: Vignette experiments require researchers to manually create feed layouts using image editing software, making the process both labor-intensive and prone to errors. In field settings, it is typically impossible to even observe, let alone manipulate, the individual feeds of different users. DICE seeks to complement these existing research paradigms by offering a middle ground that combines the control of vignette-based experiments with scrollable feeds that mimic the environment of field studies. This focus on feeds offers three opportunities. First, while maintaining experimental control as in vignette experiments, it increases a study's ecological validity by presenting stimuli and browsing tasks that more closely resemble how users typically experience content on social media. Second, it enables researchers to systematically control and manipulate entire feed compositions, allowing researchers to examine sequence, context, and spillover effects between posts. Third, it provides an environment in which post-level dwell time measurements can be used as behavioral proxies for attention allocation. An open-source app and no-code web configurator enable researchers to implement such studies with low time investment, limited learning requirements, and no additional financial costs.

This approach reflects a broader movement in marketing toward developing more sophisticated experimental tools that balance ecological validity with experimental control. Similar advancements include custom online marketplaces that authentically mirror real shopping experiences (Morozov and Tuchman 2024), browser extensions that track and manipulate participants’ actual browsing behaviors (Farronato, Fradkin, and Karr 2024), or platforms such as the Open Science Online Grocery (Howe, Ubel, and Fitzsimons 2022) that enable naturalistic consumer behavior studies online. Beyond increased study realism, such tech-forward developments also facilitate more sophisticated methodological approaches, such as adaptive experimental designs (Offer-Westort, Coppock, and Green 2021) and stimulus sampling techniques (Simonsohn, Montealegre, and Evangelidis 2025).

Future Research Directions

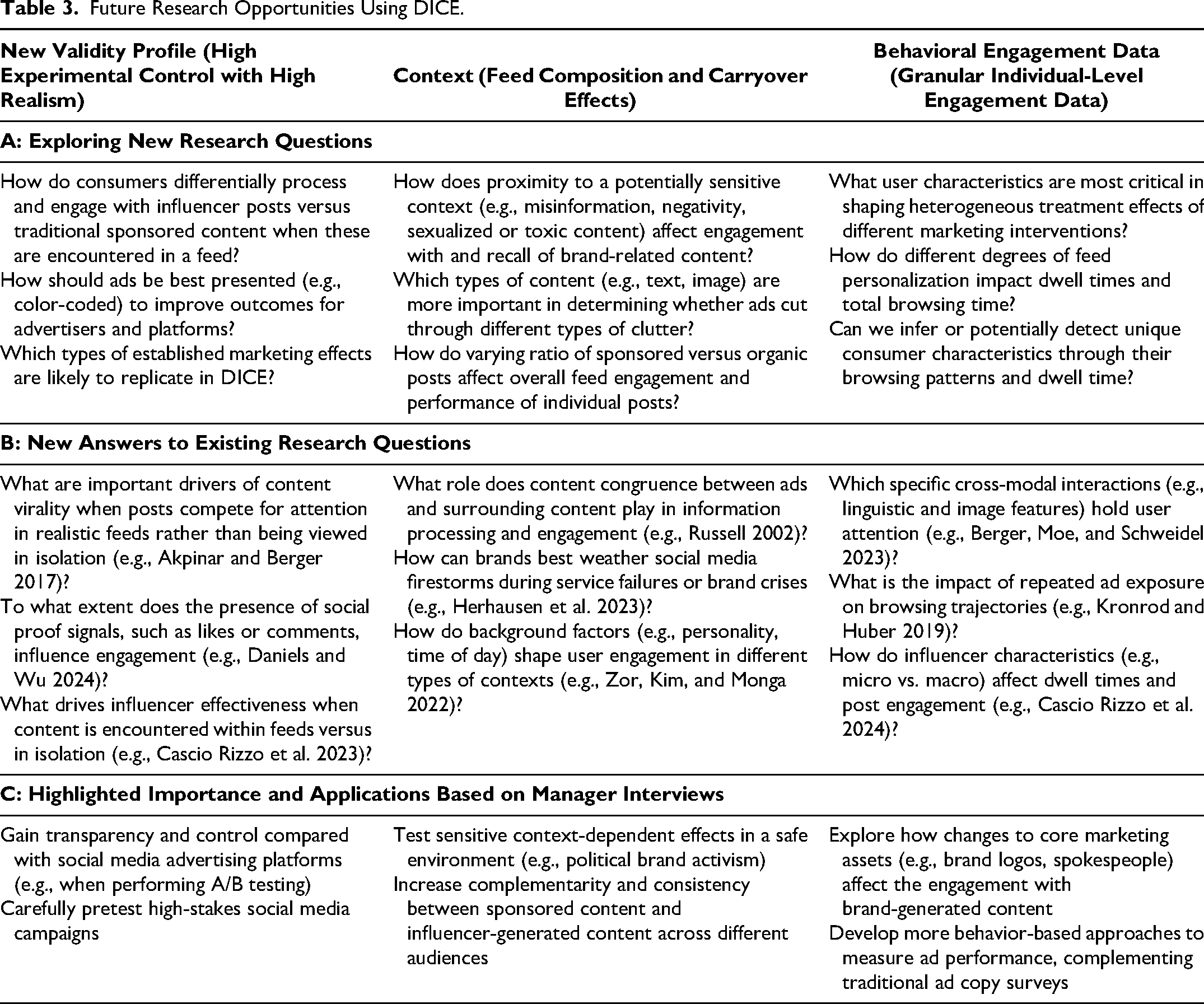

DICE offers several avenues for future work. Table 3 organizes these opportunities along three dimensions: (1) DICE's validity profile combining high experimental control with enhanced realism, (2) the ability to study context effects through feed composition manipulation, and (3) access to granular behavioral data. First, DICE's enhanced realism while maintaining experimental control enables researchers to test, for example, how influencer-generated content competes with traditional sponsored posts when both appear within the same feed, or to investigate how different ad presentation formats (e.g., variations in visual prominence) affect user engagement when content competes for attention. Second, the ability to systematically manipulate entire feed compositions opens new research directions around contextual spillovers. Future work could examine how exposure to potentially sensitive content (e.g., misinformation, polarizing political content) affects engagement with brand messages, or how varying ratios of commercial versus organic content might impact users’ overall feed engagement and the performance of individual posts. Third, DICE's granular tracking capabilities enable novel approaches to study attention and processing mechanisms. For example, future research might examine how repeated ad exposure affects browsing trajectories or which content modalities (e.g., textual vs. visual) most effectively capture and maintain attention in competitive social media environments for different types of consumers. Future research could also integrate DICE with complementary methodologies. For instance, combining DICE with eye-tracking technology could provide even more precise measures of attention.

Table 3. Future Research Opportunities Using DICE.

DICE also offers a new methodological lens for reexamining and extending prior research findings. For example, it might help researchers better understand conditions that contribute to social media content going viral (e.g., Berger, Moe, and Schweidel 2023), how brands best navigate social media firestorms (e.g., Herhausen et al. 2023), and under which conditions congruence with surrounding content helps or hurts user engagement (e.g., Russell 2002).

Finally, DICE is also well-suited to implement more complex designs. Consider, for example, a study on how the emotional composition of entire feeds shapes engagement patterns. While past research suggests that negativity at the post level drives engagement (Robertson et al. 2023), it is unclear whether this finding extends to the feed level. Specifically, researchers could design a more complex study in DICE to investigate how feed-level negativity affects social media engagement. Such a study could use a stimulus sampling approach (Simonsohn, Montealegre, and Evangelidis 2025) to create 50 pairs of social media posts. Each pair would consist of a neutral baseline and a negatively framed counterpart. Study participants would be exposed to feeds containing 25 randomly selected posts, with each post randomly assigned to either the neutral or the negative version. Thus, this design would feature a more continuous manipulation (e.g., 0 to 25 negative posts), with exact proportions varying randomly across participants while preserving experimental control. Such variation would also allow researchers to probe potential nonlinear relationships. For example, while moderate feed negativity might maximize engagement through emotional arousal, extreme negativity might become aversive. The findings of such a study may reveal potential tensions in platform design between engagement maximization and revenue generation, as feed negativity may drive user engagement while raising brand safety concerns.
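
The assignment logic of this hypothetical design can be sketched as follows: draw 25 of the 50 post pairs without replacement, then independently assign each drawn pair to its neutral baseline or its negatively framed counterpart. The function name, the 50/50 assignment probability, and the pair identifiers are illustrative assumptions.

```python
import random

def sample_feed(n_pairs=50, feed_length=25, p_negative=0.5, rng=None):
    """Return a list of (pair_id, version) tuples for one participant."""
    rng = rng or random.Random()
    pair_ids = rng.sample(range(n_pairs), feed_length)  # no pair appears twice
    return [
        (pair_id, "negative" if rng.random() < p_negative else "neutral")
        for pair_id in pair_ids
    ]

feed = sample_feed(rng=random.Random(7))
n_negative = sum(version == "negative" for _, version in feed)
```

The count `n_negative` is the participant-level treatment intensity. Across participants, it varies between 0 and 25, which is what makes it possible to probe nonlinear relationships between feed negativity and engagement.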

Managerial Perspectives

Besides highlighting avenues for future research, Table 3 also outlines potential applications of DICE, derived from interviews with nine marketing practitioners and executives. Our interviewees spanned corporations from various industries (e.g., fast-moving consumer goods, apparel, food delivery) and firm sizes, with more than half of the interviewees working for international corporations with more than $100 million in revenue in 2023 (for more details about our interviewees, see Web Appendix G). Across these interviews, three core themes emerged regarding the potential role of DICE: the generation of more transparent user insights, a sandbox environment for safe pretesting, and systematic campaign evaluation. In terms of transparency, managers saw strong value in regaining control over both the data-generating process and user characteristics. Some of the interviewees were acutely aware that A/B tests on major social media platforms often lack transparency about why certain content performs better for which kind of users. For instance, a manager at a large fast-moving consumer goods company indicated that these platforms “always keep some information to themselves, allowing for less transparent insights.” Because it is simple to design and append a survey to the browsing task, user characteristics and preferences can easily be elicited with DICE, providing deeper insights about how treatment effects vary across customer segments.

A second theme that managers valued is DICE's safe sandbox environment for risk-free experimentation. Multiple interviewees emphasized the value of testing content before committing to expensive campaigns on major platforms. For example, a manager at a social media agency perceived DICE as a useful “tool to run tests for our clients in a ‘safe environment’ before launching a major campaign.” This sandbox approach addresses several practical concerns for practitioners: It eliminates the risk of damaging brand reputation through poorly performing content, allows for iterative testing without budget constraints, and provides a controlled environment to understand user reactions before public exposure. Taken together, these features collectively enable managers to optimize a brand's marketing content before launching campaigns on major social media platforms that could reach millions of users and require more substantial resources.

As a third theme, several of the interviewees highlighted the possibility of using DICE to fine-tune their own marketing material (e.g., sponsored posts) to complement (or test against) well-performing influencer-generated content. This is well expressed by a manager at a different fast-moving consumer goods company, who viewed DICE as promising to explore “which specific content pieces work well and how our internal content pieces can be adapted to be as strong as user-generated content.” Additionally, DICE can also support postcampaign evaluation to identify the key driving features that contribute to the success of a campaign. Such efforts may produce more generalizable insights that can inform marketing strategies beyond the specific platform on which the campaign was run.

Across all themes, practitioners also emphasized that the behavioral data complement more traditional ad copy surveys and that the app was “user-friendly” and could be integrated into existing workflows. While these insights are by no means representative, they highlight that marketing practitioners valued DICE's combination of experimental control and behavioral tracking capabilities, enabling them not just to optimize content performance, but to better understand the mechanisms driving that performance before committing resources to large-scale, costly campaigns.

Limitations and Extensions

DICE is no silver bullet and comes with limitations. Participants need to be recruited and interact with researcher-curated content rather than their personal feeds, potentially altering how they would naturally engage with the content. Additionally, DICE lacks the algorithmic, personalized content selection and social interaction capabilities of social media platforms. These constraints, however, represent intentional design choices prioritizing experimental control. The controlled environment, though more realistic than single-post vignette experiments, still represents a more artificial browsing context where session durations might differ from the duration of browsing one's own feed.

The increased realism that DICE provides comes with inherent methodological trade-offs. Researchers may encounter greater heterogeneity in participant responses and browsing trajectories, which can increase error variance and require larger sample sizes for adequate statistical power and more careful attention to data validation procedures. In general, researchers (and reviewers) should expect smaller effect sizes compared with those of vignette experiments using a single post, as content competes for attention and participants are not prompted to engage with specific posts in the interest of greater study realism. Furthermore, the complexity of feed-based stimuli may also require more sophisticated analytical approaches, such as multilevel modeling techniques that account for sequential engagement (as in Case Study 2). Finally, dwell times should be seen as behavioral proxies rather than direct measures of attention allocation. While minimal dwell times reliably indicate inattention, longer dwell times do not necessarily indicate greater attention allocation (see also Loewenstein and Wojtowicz 2025).

Due to DICE's modular, open-source architecture, researchers can address specific limitations through future app extensions and customization. The app's oTree foundation supports multiplayer functionality for researchers seeking to study social interactions. For example, participants could react to a shared feed (e.g., through comments or likes), which then informs the social context shown to others. This would enable researchers to study phenomena such as opinion formation or polarization in social media environments. Those interested in algorithmic effects could implement basic recommendation systems based on collected behavioral data in a two-stage design (i.e., collect dwell times from one sample to curate a feed for a subsequent group).
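
The two-stage idea can be illustrated with a deliberately simple rule: rank posts by their mean dwell time in a first sample and show the top-ranked posts to a second sample. This is a hypothetical sketch of one possible curation rule, not a feature of the current app. The record format and the function name `curate_feed` are assumptions.

```python
from collections import defaultdict

def curate_feed(stage_one_records, top_k):
    """Rank posts by mean stage-one dwell time and return the top_k post IDs.

    `stage_one_records` is an iterable of (post_id, dwell_seconds) pairs,
    one per participant x post observation from the first sample.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for post_id, dwell in stage_one_records:
        totals[post_id] += dwell
        counts[post_id] += 1
    means = {post_id: totals[post_id] / counts[post_id] for post_id in totals}
    # Sort by descending mean dwell time; ties are broken by post ID.
    ranked = sorted(means, key=lambda post_id: (-means[post_id], post_id))
    return ranked[:top_k]

# Hypothetical stage-one observations.
records = [("a", 2.0), ("b", 5.0), ("a", 4.0), ("c", 1.0), ("b", 3.0)]
stage_two_feed = curate_feed(records, top_k=2)
```

More elaborate rules (e.g., conditioning on participant characteristics) would follow the same pattern of estimating a score from the first sample and applying it to the second.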

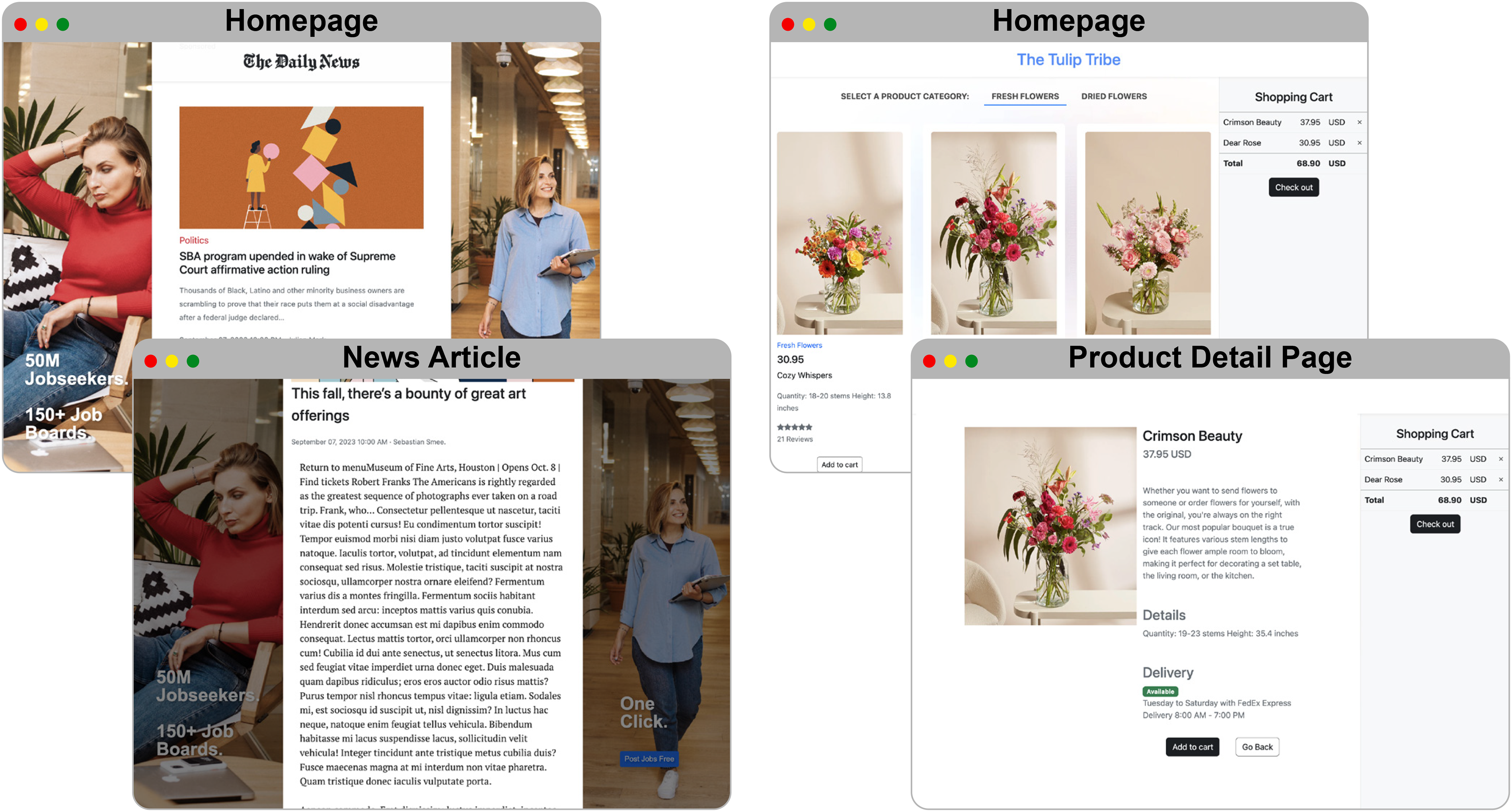

To demonstrate the flexibility to extend DICE, we have developed variations of the app using three different social media layouts (X-like, LinkedIn-like, and generic feeds) that are included in the core implementation. Furthermore, as shown in Figure 6, the DICE app could be adapted to any scrollable, feed-like structure. Specifically, preliminary versions of a news and e-commerce interface (see also Howe, Ubel, and Fitzsimons 2022) serve as a proof of concept and illustrate how to apply DICE to other digital environments using a scrollable feed. These extensions underscore that the technical functionalities and applications of DICE extend beyond social media research.

Figure 6. Potential Extensions of DICE to News and Shopping Feeds.

In summary, the current research presents an experimental paradigm, along with an open-source research app, a no-code web configurator, and granular behavioral tracking capabilities that seek to complement existing paradigms in social media research. DICE combines the advantages of high experimental control found in vignette-based experiments with more realistic contexts that mimic the environments in which observational and platform studies are conducted. Our hope is that DICE, and its companion app, will enable researchers to address important future marketing questions and phenomena across social media and other digital environments with greater precision and ecological validity.

Supplemental Material

Supplemental material, sj-pdf-1-jmx-10.1177_00222429251371702 for DICE: Advancing Social Media Research Through Digital In-Context Experiments by Hauke Roggenkamp, Johannes Boegershausen and Christian Hildebrand in Journal of Marketing

Footnotes

Acknowledgments

The authors are grateful to the JM review team for their helpful and constructive comments. The authors also thank Jonas Goergen, Jochen Hartmann, Uri Barnea, Ioannis Evangelidis, Ana Martinovici, Gilian Ponte, David Kusterer, Emanuel de Bellis, Clemens Stachl, Bram van den Bergh, Anne-Kathrin Klesse, Ale Smidts, Cátia Alves, Hanna Smith, and seminar participants at the Rotterdam School of Management, University of St. Gallen, Technical University of Munich, Bocconi University, Vienna University of Economics and Business, and Penn State for their helpful comments on this project.

Author Note

This article is based on the dissertation of the first author.

Coeditor

Rik Pieters

Associate Editor

Vamsi K. Kanuri

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was financially supported by the Swiss National Science Foundation (100018_215544) and the Erasmus Research Institute of Management (ERIM).