Abstract

Existing language and literacy assessments have been widely validated and applied with monolingual students to identify those at risk for reading difficulties, yet for emergent bilingual students (EBs), the effectiveness of current assessments in identifying potential reading difficulties remains unknown. The present systematic review examined the criterion validity of assessments administered to EBs for predicting reading achievement in their second language (L2), as well as the current state of research methods (i.e., participant and assessment characteristics). A literature search yielded 23 studies that targeted EBs from preschool to fifth grade. Results suggest that decoding, reading fluency, and phonological awareness assessments showed close to satisfactory evidence of criterion validity, whereas assessments of other skills, such as reading comprehension, rapid automatized naming, letter knowledge, and verbal memory, showed weaker validity. Participants in the included studies had largely homogeneous profiles, indicating a lack of evidence for EBs from diverse language backgrounds. Existing assessments spanned several domains, including literacy, code-related skills, oral language, and domain-general cognitive skills. These assessments also varied in standardization and language of administration. Limitations and suggestions for future research are discussed.

Over half of the world’s population uses more than one language (Grosjean, 2021), and some people learn to read in a second language (L2) that serves as the societal language, or the language of communication and classroom instruction (e.g., Spanish-speaking immigrant students learning English in the United States; Dixon et al., 2012; Ellis, 2008). When L2 is the language of literacy instruction, emergent bilinguals represent a vulnerable population in general and special education settings because they are still developing proficiency in both languages (Goldenberg, 2020) and often show an achievement gap in reading and writing relative to their monolingual peers (National Assessment of Educational Progress, 2022). In this article, we use the term emergent bilinguals (EBs) to refer to students who learn to read in an L2 that differs from their first language (L1), or home language, while they are still developing their L2 proficiency.¹ These students may become bilingual as they develop L1 and L2 proficiency at home and at school. By using the term “emergent,” we recognize their ongoing process of language learning, which builds on their existing linguistic resources in their L1 (García et al., 2008; Piñón et al., 2022).

Teachers and schools often evaluate students’ language and literacy skills as part of response to intervention models, and the assessments used play a crucial role in identifying both students who need intervention and those who may need special education services (Samson & Lesaux, 2009). In the identification of learning disabilities for special education services, EBs are often underrepresented in kindergarten and first grade, when educators may hesitate to refer EBs for eligibility decisions, citing developing language proficiency as a confounding factor (Burr et al., 2015; Goodrich et al., 2022; Samson & Lesaux, 2009). In the upper grades, by contrast, EBs are overrepresented in special education services, as educators become more likely to attribute their reading and writing difficulties to underlying learning disabilities (Goodrich et al., 2022; Samson & Lesaux, 2009).

These trends in the over- and under-identification of EBs with reading difficulties point to the need for unbiased, accurate assessments that help teachers understand EB students’ literacy progress. Assessments that are highly predictive of future reading achievement are needed for accurate early identification and intervention (Jenkins et al., 2007; Jenkins & Johnson, n.d.). Although much research has examined and synthesized the accuracy of literacy assessments for monolingual English speakers (e.g., January & Klingbeil, 2020), less research has focused on the accuracy of these assessments with EBs (Landry et al., 2022). In addition, assessments conducted in EBs’ developing L2 may not fully capture the early cognitive and linguistic skills that are foundational to reading (Goodrich et al., 2022). Given the increasing number of EBs in schools (National Center for Education Statistics, 2024) and the need for accurate early assessment of literacy skills, this study examined the current validity evidence for cognitive, language, and literacy assessments used with EBs. The review targeted EBs worldwide, spanning various L1 backgrounds and the full range of grade levels from preschool to late elementary school. By synthesizing the validity of existing assessments for EB students, whose unique linguistic experiences challenge traditional assessment systems (De Houwer, 2023; Solano-Flores, 2008), this systematic review provides timely evidence to guide practitioners’ selection of assessments for identifying at-risk readers and offers theoretical implications for assessment validation efforts with EB students.

Identifying Students at Risk for Reading Difficulties: Current Practices

The early identification of students at risk for reading difficulties is a common practice worldwide (e.g., Singapore, the United States, India, Malaysia, Japan, the Netherlands, and Denmark; Mather et al., 2020). Early identification allows timely, intensive, and targeted intervention instead of waiting for students to fail (Jenkins & Johnson, n.d.). Furthermore, students’ performance on reading-related skills provides information on their strengths and weaknesses, which helps educators plan individualized instructional goals. For results to be interpreted accurately, assessments should achieve satisfactory reliability and validity (American Educational Research Association [AERA] et al., 2014). Reliability refers to the consistency of results across testing occasions, and validity refers to the accuracy of the assessment in measuring the intended constructs. The current review focused exclusively on validity.

To identify reading difficulties, assessments should measure reading-related constructs that strongly indicate reading success or failure. Accordingly, Jenkins et al. (2007) identified criterion validity and classification accuracy as important psychometric indicators of validity for reading-related assessments. Criterion validity reflects the strength of the correlation between students’ performance on a screening measure and on an established reading measure. It includes concurrent validity, which examines the relationship between the screening measure and a criterion measure administered at about the same time, and predictive validity, which examines the relationship between the screening measure and reading outcomes assessed later. According to the National Center on Intensive Intervention (2020), a correlation coefficient exceeding .60 is desirable. Importantly, validity evidence may vary across the situations in which an assessment is administered, including the type of criterion measure and the characteristics of test takers (AERA et al., 2014). Therefore, when synthesizing the evidence of criterion validity, we also synthesized the conditions under which that evidence was obtained, including the characteristics of participants and criterion measures.

According to the Simple View of Reading (Hoover & Gough, 1990), skilled reading is the product of skilled decoding and listening comprehension. Examining both components can indicate the type of reading difficulty a student may have (Hoover, 2024). For example, poor readers may struggle with both decoding and listening comprehension or with only one of the two. Building on the Simple View of Reading, the Cognitive Foundations Framework (Tunmer & Hoover, 2019) suggests that foundational skills (e.g., letter knowledge, semantic knowledge, phonological awareness [PA], and syntactic knowledge) can further pinpoint the sources of reading difficulties, offering clearer direction for intensive instruction. Therefore, an assessment used to identify students at risk for reading difficulties may focus on different linguistic and cognitive constructs depending on the targeted reading outcomes and grade level (Jenkins & Johnson, n.d.). In a recent meta-analysis on the criterion validity of curriculum-based assessments for kindergarteners to second graders, January and Klingbeil (2020) examined assessments of PA (onset sounds and phoneme segmenting), letter knowledge (letter names and letter sounds), and word-level reading (word identification and nonsense word reading). They found that word-level reading assessments were examined more often than PA and letter knowledge assessments. Word reading assessments showed stronger concurrent and predictive validity for phonics, oral text reading, and broad reading outcomes (concurrent: r = .60–.80; predictive: r = .52–.83) than PA assessments, which showed moderate correlations (concurrent: r = .34–.51; predictive: r = .35–.42). Letter knowledge showed moderate to strong criterion validity for various outcomes (concurrent: r = .55–.57; predictive: r = .52–.64). It is important to note, however, that in addition to linguistic assessments such as PA, letter knowledge, and word reading, assessments should also consider skills that might indicate reading disabilities, such as vocabulary, working memory, attention, and other oral language skills (Jenkins et al., 2007).
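The multiplicative claim of the Simple View of Reading can be written as a product. In the sketch below, R denotes reading comprehension, D decoding, and L listening (linguistic) comprehension, each scaled from 0 to 1; these symbols and the 0 to 1 scaling are illustrative conventions, not notation taken from the studies reviewed here.

```latex
\begin{align*}
R &= D \times L, \qquad 0 \le D,\, L \le 1 \\
D = 0.9,\; L = 0.5 \;&\Rightarrow\; R = 0.9 \times 0.5 = 0.45 \\
D = 0.5,\; L = 0.9 \;&\Rightarrow\; R = 0.5 \times 0.9 = 0.45 \\
D = 0 \;&\Rightarrow\; R = 0 \text{ for any } L
\end{align*}
```

Under this formulation, a student with strong decoding but weaker listening comprehension and a student with the reverse profile can reach the same low R, which is why assessing each component separately helps identify the type of difficulty.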

Identifying EBs at Risk for Reading Difficulties

One current gap in literacy education for the EB population is the lack of standardized, validated assessment tools that can appropriately screen EBs for risk of L2 reading difficulties or monitor their progress in L2 literacy (Miciak & Fletcher, 2020). Simply applying the same assessments, the same standards for interpreting performance, and the same cutoff levels developed for monolinguals to infer risk among EBs may introduce monolingual bias by portraying EBs as disadvantaged in literacy (De Houwer, 2023). Most existing assessments available in L2 were normed without further investigation of whether they are also valid for EBs. For example, among the 161 reading screening tools for preschool to fifth grade listed by the National Center on Intensive Intervention (2021), only 99 provided a bias analysis, and only 33 examined potential bias for EBs. Thus, for around 80% of these screening tools (128 of 161), it is unknown whether the assessments are biased against EBs. When assessments are not appropriate for EBs with developing L2 proficiency, educators may find them frustrating to implement (Komesidou et al., 2022) and may struggle to interpret EBs’ risk for reading disabilities (Geva et al., 2019; Goodrich et al., 2022).
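The "around 80%" figure follows directly from the counts reported for the National Center on Intensive Intervention (2021) list. The short check below recomputes it; the variable names are ours, and only the counts (161, 99, and 33) come from the text.

```python
TOTAL_TOOLS = 161            # screening tools listed (preschool to grade 5)
WITH_ANY_BIAS_ANALYSIS = 99  # tools reporting any bias analysis
WITH_EB_BIAS_ANALYSIS = 33   # tools examining bias for EBs specifically

no_eb_analysis = TOTAL_TOOLS - WITH_EB_BIAS_ANALYSIS
share_no_eb = no_eb_analysis / TOTAL_TOOLS
share_no_bias_at_all = (TOTAL_TOOLS - WITH_ANY_BIAS_ANALYSIS) / TOTAL_TOOLS

print(f"{no_eb_analysis} of {TOTAL_TOOLS} tools ({share_no_eb:.1%}) lack EB bias evidence")
print(f"{share_no_bias_at_all:.1%} report no bias analysis at all")
```

The first line prints 128 of 161 tools (79.5%), which rounds to the 80% reported above.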

Another challenge of assessing EBs is a limited understanding of this population, as Solano-Flores (2008) pointed out in asking, “Who is given tests in what language by whom, when, and where?” (p. 189). EBs are highly heterogeneous in their language proficiency and dominance, and this heterogeneity is rarely captured in the existing assessment system. In addition, poor performance in L2 reading may result from developing language proficiency, from reading disabilities such as dyslexia or reading comprehension difficulties, or from both (Geva et al., 2019). A recent special issue on EBs’ reading and writing difficulties highlighted the importance of the constellations of oral language skills (e.g., PA, vocabulary knowledge, and morphosyntactic ability) in both L1 and L2 and of cognitive skills (e.g., executive function, rapid automatized naming [RAN]) in the early identification of reading difficulties in bilingual learners (Zhang & Wang, 2023, p. 4).

Thus, the current systematic review aimed to evaluate language, literacy, and cognitive assessments and to identify for whom the validity evidence for these assessments is available.

Compared with assessing monolinguals, assessing EBs is more challenging because assessors need to capture the cognitive and linguistic skills most relevant to L2 reading while accounting for EBs’ developing language proficiency. The underlying common cognitive processes hypothesis (Geva & Ryan, 1993) suggests that cognitive skills, such as working memory, RAN, and executive functioning, are common processes that underlie performance in both languages. In addition, metalinguistic awareness (i.e., PA, morphological awareness [MA], and orthographic awareness) may be language-general and transfer from one language to the other (transfer facilitation hypothesis; Koda, 2008). For example, Cantonese-English EBs’ rhyme awareness and RAN measured in Chinese (L1) were moderately or strongly correlated with English (L2) cognitive measures (Shum et al., 2016). Students with reading difficulties in L1 also displayed deficits in phoneme processing in both L1 and L2. Thus, when assessing cognitive skills among EBs to identify potential reading difficulties in L2, L1 and L2 cognitive skills need not be treated as two separate constructs; they may reflect one common underlying construct that could be measured in L1 when an L2 assessment is unavailable or cannot be administered.

Yet, existing meta-analyses found that metalinguistic awareness, decoding, reading fluency, and oral language measured in L2 correlated more strongly with L2 reading outcomes than the same skills measured in L1 (e.g., Jeon & Yamashita, 2014; Ke et al., 2023; Melby-Lervåg & Lervåg, 2011). However, when skills are measured in L2, EBs’ developing L2 proficiency may constrain their comprehension of the task or their production of answers, leading to erroneous responses (Geva et al., 2019). For example, Farnia and Geva (2013) assessed EBs and monolinguals in Canada on verbal memory with digit recall and nonword repetition tasks and found that EBs in first grade performed lower than their monolingual peers. Therefore, when EBs are assessed for potential L2 reading difficulties, lower scores on cognitive and linguistic tasks in L2 might be confounded by developing language proficiency.

Instead of treating L1 and L2 as two separate constructs in assessment, other approaches have emerged that capture EBs’ knowledge and skills within their holistic linguistic system rather than in either L1 or L2 alone. For example, Goodrich and Lonigan (2018) found that conceptual scoring, which gives credit for each vocabulary word an EB knows in either language, could be a useful scoring method for children with developing L2 proficiency. In addition, translanguaging has been used to assess the content knowledge of EBs with limited L2 proficiency, allowing EBs to see or hear items in their L1 and/or L2 and to respond in their L1 and/or L2 (e.g., Lopez et al., 2017).
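Conceptual scoring, as described above, can be sketched as a set union: an item counts once if the child answers it correctly in either language. The item labels and responses below are hypothetical, and real instruments define their own item lists and scoring rules; this illustrates the idea rather than reproducing Goodrich and Lonigan's (2018) procedure.

```python
def conceptual_score(correct_l1: set[str], correct_l2: set[str]) -> int:
    """Credit each concept once if it is known in either language."""
    return len(correct_l1 | correct_l2)


# Hypothetical picture-vocabulary items answered correctly by one child.
spanish_correct = {"dog", "apple", "house", "spoon"}
english_correct = {"dog", "car", "book"}

print(len(spanish_correct), len(english_correct))          # single-language scores: 4 3
print(conceptual_score(spanish_correct, english_correct))  # 6 ("dog" is counted once)
```

A single-language score would credit this child with at most 4 items, whereas the conceptual score of 6 reflects everything the child knows across both languages without double-counting shared concepts.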

Previous Reviews on the Assessment Among EBs for Reading Difficulties

Previous reviews (Dixon et al., 2022; Newell et al., 2020; Oh et al., 2023) have addressed important questions about assessments with EBs that can inform screening and progress-monitoring efforts to identify reading difficulties. Newell et al. (2020) examined 31 studies on the effectiveness of oral reading fluency assessments in predicting the reading performance of EBs from kindergarten to eighth grade. They found moderate to strong correlations between oral reading fluency and reading outcomes (r = .36–.82). The correlations varied by grade level, language of assessment (i.e., within-language vs. cross-language), and L2 proficiency, although L2 proficiency also increased with grade level. Another systematic review by Dixon et al. (2022) focused on the use of dynamic assessment to identify EBs with reading disorders. Unlike static assessment, which examines the current status of L2 reading skills, dynamic assessment measures a student’s learning potential in response to instruction to predict future performance. When applied among Spanish-English EBs, dynamic assessments of word decoding had higher classification accuracy than static measures in identifying reading disorders in English (L2). In summary, existing reviews showed that assessments are partially effective in identifying bilingual students who may be at risk for reading difficulties and that assessment validity varied with several factors, including the assessment approach (dynamic vs. static), language proficiency, and language of assessment.

The Current Study

To identify students at risk of reading difficulties and potential learning disabilities, educators need assessments that accurately reflect students’ reading-related skills. Previous reviews (Dixon et al., 2022; Newell et al., 2020) provided preliminary evidence on the potential of some literacy assessments (e.g., oral reading fluency, dynamic assessment of word reading, reading comprehension) to identify EBs at risk for reading difficulties, yet assessments of other skills relevant to early identification (e.g., PA, word decoding, letter knowledge, and RAN) remain understudied. In addition, little is known about assessments for EB populations worldwide, so a comprehensive review of existing assessments for this population is needed.

The current study aimed to evaluate the available criterion validity evidence for assessment tools used to screen EBs from different language backgrounds, or to monitor their progress, in reading achievement. It is also necessary to understand the situations (i.e., test takers and criterion measures) in which validity evidence is available. Therefore, following previous systematic reviews that summarized participant characteristics (Newell et al., 2020; Oh et al., 2023), we synthesized the grade levels and linguistic backgrounds of participants in the included studies. Although the current review does not specifically target students with learning disabilities, identifying assessments with satisfactory or unsatisfactory validity can inform the identification of learning disabilities and thus minimize the risk of misinterpreting assessment results with EBs. We addressed the following questions:

For which participants (i.e., ages/grade levels and languages spoken) are measures and evidence of validity currently available?

What languages and constructs are examined in studies focusing on the criterion validity for L2 reading outcomes?

What is the criterion validity (i.e., concurrent and predictive validity) of existing assessments for reading achievement in L2 among EBs?

Does the criterion validity of existing assessments vary with the language of assessment?

Method

Literature Search and Screening

The literature search and screening process followed a three-part procedure designed to capture studies that could report on the criterion validity of screening tools for EBs. First, we searched electronic databases for published and unpublished literature: EBSCO (APA PsycInfo, ERIC, British Education Index, and Education Source), Web of Science, and ProQuest (Dissertations & Theses Global and Linguistics and Language Behavior Abstracts). We searched titles and abstracts for keywords related to EBs, reading difficulties, and assessment, combining the three keyword clusters with the Boolean operator AND. In databases that use subject terms (i.e., ERIC, APA PsycInfo, and Education Source), we also searched for studies with subject terms related to the keywords (see Table S1 in the Supplemental Material for a complete list of search terms and results). The search was not limited by publication format (i.e., peer-reviewed articles, book chapters, reports, dissertations, and theses) or date and was conducted in February 2023. An updated search was conducted in June 2024.

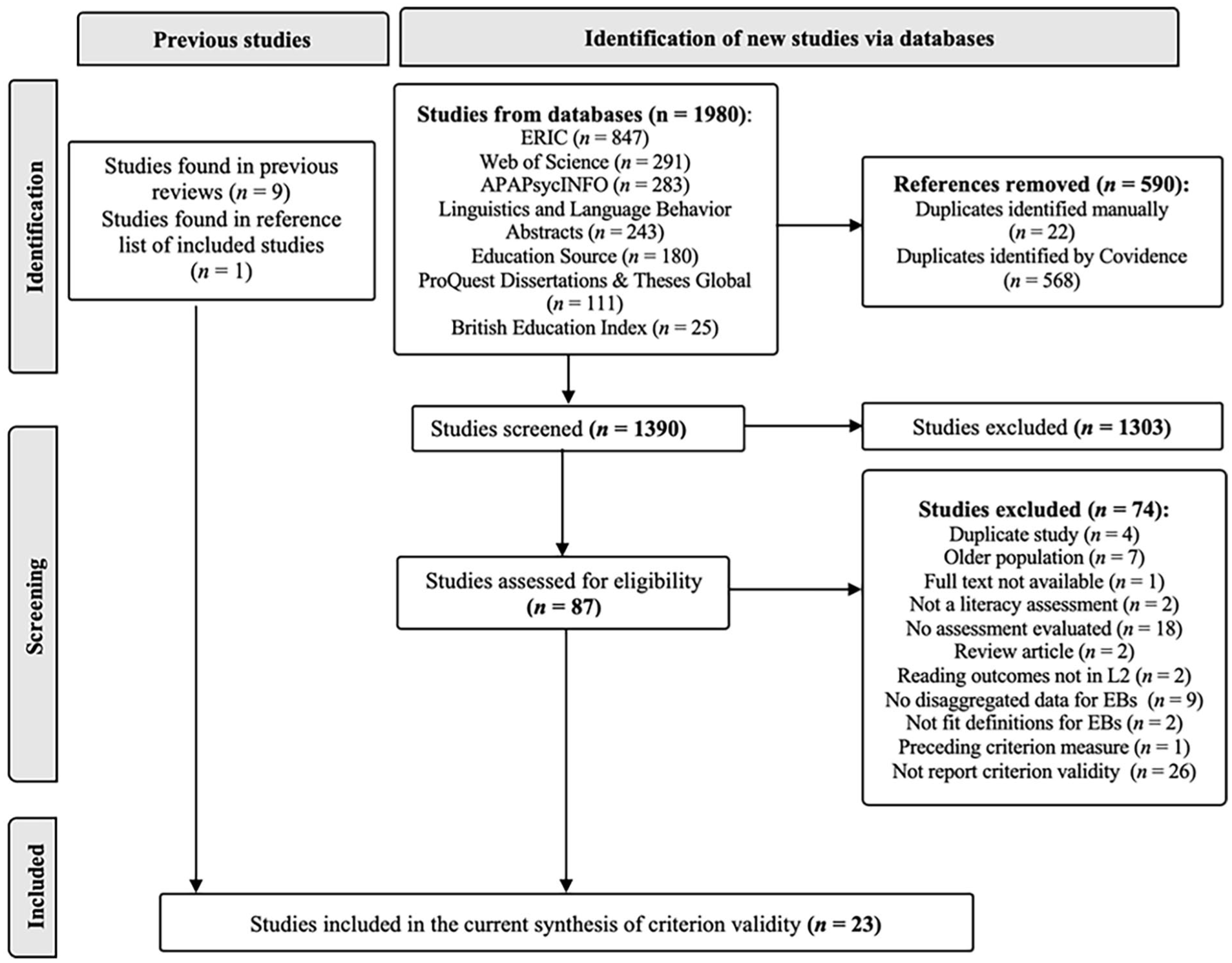

The electronic database search yielded 1,980 publications. After 590 duplicates were removed, 1,390 publications remained for screening. The first author screened titles and abstracts against the inclusion criteria in Covidence (2020). A trained undergraduate research assistant independently screened a randomly selected third of the studies. The agreement rate was 92.13%, and disagreements were resolved through discussion between the research assistant and the first author by reviewing the inclusion criteria. The initial screening of the electronic database search resulted in 87 publications eligible for full-text screening. Second, we conducted an ancestral search by hand-searching the reference lists of all included publications, which identified one additional publication (Klein & Jimerson, 2005). Third, we reviewed the studies included in previous systematic reviews (Dixon et al., 2022; Newell et al., 2020) and identified nine additional studies (Baker, 2007; Baker & Good, 1995; Betts et al., 2006; Ganan et al., 2015; Han et al., 2015; Kim et al., 2016; Sáez et al., 2010; Vanderwood et al., 2014; Wiley & Deno, 2005).
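The article does not state how the 92.13% agreement rate was computed; assuming it is simple percent agreement on include/exclude decisions, it would be calculated as in the sketch below. The function name and decision lists are hypothetical.

```python
def percent_agreement(coder_a, coder_b):
    """Percentage of records on which two screeners made the same decision."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("decision lists must be non-empty and the same length")
    agreements = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100 * agreements / len(coder_a)


# Hypothetical title/abstract decisions for eight records.
first_author = ["include", "exclude", "exclude", "include", "exclude", "exclude", "exclude", "include"]
assistant = ["include", "exclude", "include", "include", "exclude", "exclude", "exclude", "include"]
print(f"{percent_agreement(first_author, assistant):.2f}%")  # 7 of 8 decisions agree
```

Percent agreement does not adjust for agreement expected by chance; chance-corrected indices such as Cohen's kappa are a common complement when most records are excluded.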

During full-text screening of the 87 studies, 74 were excluded based on the inclusion criteria. For dissertations and theses later published in a peer-reviewed journal, we included the published journal article (e.g., Han et al., 2015) rather than the original dissertation (e.g., Nam, 2012). In total, 23 studies met all inclusion criteria and were included for coding: 13 from the database search, one from the ancestral search, and nine from previous reviews. Figure 1 illustrates the literature search process.

Figure 1. PRISMA Flow Chart of the Process Involved in Literature Search and Inclusion.
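The counts reported for the search and screening steps can be reconciled with a simple tally. The sketch below uses only numbers stated in the text; the variable names are illustrative.

```python
records_identified = 1980   # electronic database search
duplicates_removed = 590
records_screened = records_identified - duplicates_removed

full_text_assessed = 87     # eligible after title/abstract screening
full_text_excluded = 74
from_databases = full_text_assessed - full_text_excluded

from_ancestral_search = 1   # Klein & Jimerson (2005)
from_previous_reviews = 9   # identified via Dixon et al. (2022) and Newell et al. (2020)

studies_included = from_databases + from_ancestral_search + from_previous_reviews
print(records_screened, from_databases, studies_included)  # 1390 13 23
```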

Inclusion and Exclusion Criteria

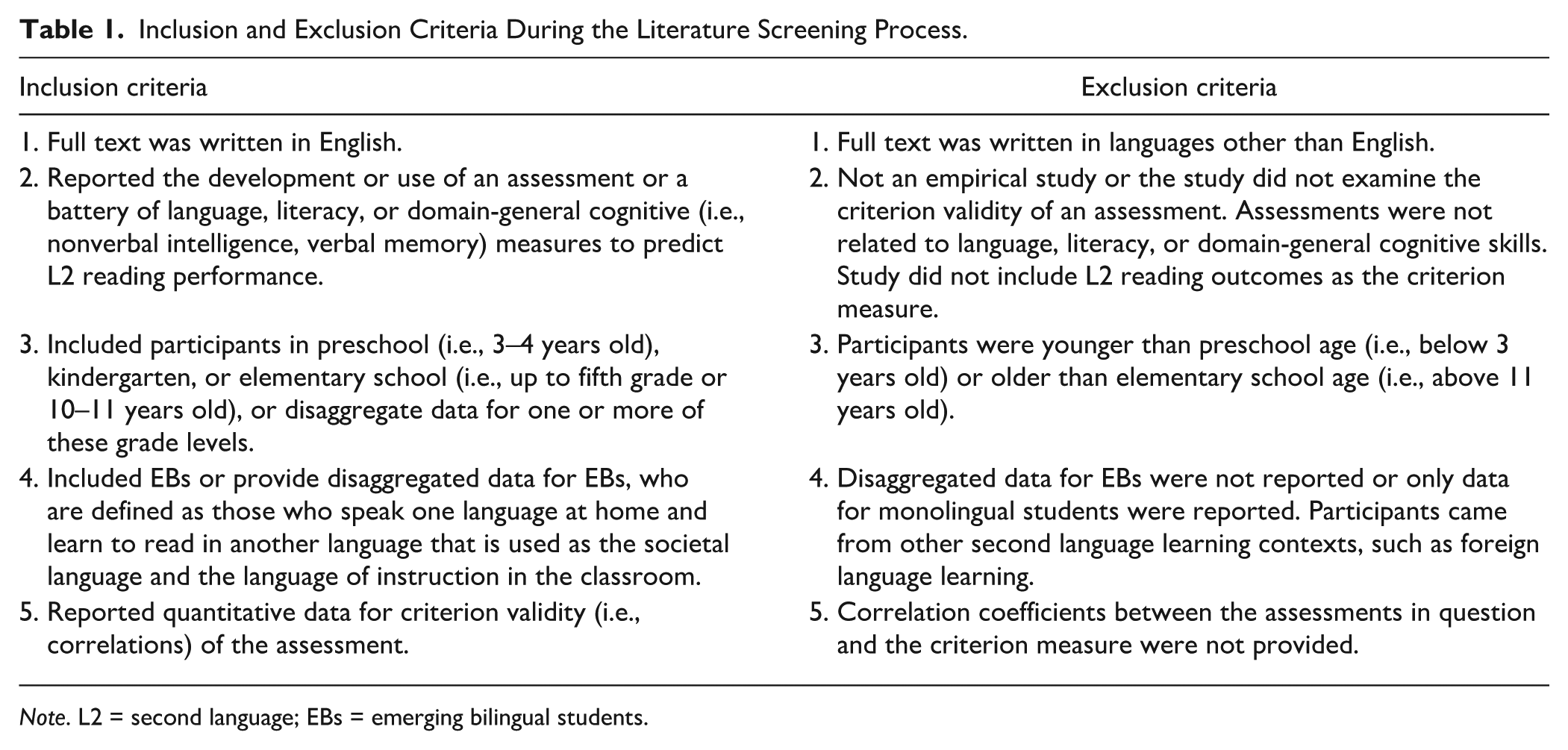

To be eligible, studies had to meet the inclusion criteria outlined in Table 1. Studies meeting the exclusion criteria were excluded during title and abstract screening or full-text screening.

Table 1. Inclusion and Exclusion Criteria During the Literature Screening Process.

Note. L2 = second language; EBs = emergent bilingual students.

Study Coding

We used a structured coding schema to extract criterion validity evidence from the included studies. To establish coding reliability, two authors with expertise in reading instruction and systematic review methodology independently coded two practice studies. Subsequently, the first author coded all included studies, and the second or third author independently coded a randomly selected 30% of the studies. Discrepancies between coders were resolved through discussion and consultation of the original article. A structured coding template in Covidence captured the following details from each study:

(1) Participant Information: We coded sample size, grade or age level, L1 and L2, L1 and L2 proficiency, socioeconomic status (SES), and language(s) of instruction. Grade levels were coded as reported in each study to acknowledge variation in school systems worldwide. A sample was coded as lower SES when more than half of the participants were identified as lower SES or when more than half of the students in the participants’ school or district qualified as low SES.

(2) Details of Assessments: For both the target assessments and the criterion measures, we coded assessment names, languages of assessment, domains and constructs, and standardization. To categorize constructs, we adapted schemas from prior literature that distinguish code-related (e.g., RAN, letter knowledge), oral language (e.g., oral vocabulary), and domain-general cognitive (e.g., verbal memory) assessments (e.g., Bhalloo & Molnar, 2023; Hjetland et al., 2017), and we added a literacy category (e.g., decoding, reading fluency). For criterion measures, we included only those administered in L2, except when the criterion was based on reading performance in both L1 and L2 (e.g., Eikerling et al., 2022), in which case we coded both languages.

(3) Research Design: We coded whether each study used a longitudinal or concurrent design. A design was coded as longitudinal when the time lag between administration of the assessment and the criterion measure exceeded 1 month (January & Klingbeil, 2020).

(4) Quantitative Evidence: Validity evidence was extracted as pairwise correlation coefficients (e.g., Pearson’s r) between each assessment and the criterion measure.
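Two of the coding rules above (the lower-SES designation and the 1-month threshold for longitudinal designs) can be expressed as a small record type. This is a hypothetical sketch, not the authors' Covidence template: the field names, the lag recorded in months, and the reading of the school/district rule as the share of low-SES students are our assumptions.

```python
from dataclasses import dataclass
from typing import Optional


def is_lower_ses(sample_share: Optional[float] = None,
                 school_share: Optional[float] = None) -> bool:
    """Lower SES if more than half of the sample, or (assumed reading) more than half
    of the students in the participants' school/district, are identified as low SES.
    Either share may be None when a study does not report it."""
    shares = [s for s in (sample_share, school_share) if s is not None]
    return any(s > 0.5 for s in shares)


@dataclass
class ValidityRecord:
    study: str
    assessment: str
    criterion: str
    lag_months: float  # time between the assessment and the criterion measure
    r: float           # pairwise correlation, e.g., Pearson's r

    @property
    def design(self) -> str:
        # Longitudinal only when the lag exceeds 1 month; otherwise concurrent.
        return "longitudinal" if self.lag_months > 1 else "concurrent"


# Hypothetical coded coefficient.
rec = ValidityRecord("Study A", "oral reading fluency", "state reading test", lag_months=6, r=0.62)
print(rec.design, is_lower_ses(sample_share=0.72))  # longitudinal True
```

Keeping the lag as a stored field and deriving the design label from it means the 1-month rule is applied identically to every coefficient.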

Results

The 23 studies that met our inclusion criteria were published between 1989 and 2022 and represent research conducted in five countries: the United States, Italy, Australia, South Africa, and India. The studies included approximately 9,038 participants across educational levels from preschool through the elementary grades. Among studies providing age information, participants ranged from 4.22 to 10.58 years old. The studies comprised journal articles (n = 17, 74%), dissertations (n = 4, 17%), a conference paper (n = 1, 4%), and a technical report (n = 1, 4%).

Research Question 1: Participant Characteristics

Fourteen studies (61%) included a single grade level, ranging from preschool to third grade; the remaining nine included participants from more than one grade level. Of these, four studies (17%) reported results separately by grade (Farmer, 2013; Klein & Jimerson, 2005; Sáez et al., 2010; Wiley & Deno, 2005), three (13%) reported results for samples aggregated across grade levels (Eikerling et al., 2022; Fletcher et al., 2015; Rao et al., 2021), and two (9%) reported criterion validity across grade levels with the same sample (e.g., students were screened in kindergarten and assessed on the criterion measure in first grade; Fien et al., 2008; Millett, 2011). Seventeen studies (74%) involved participants primarily from lower SES backgrounds. Only 14 studies (61%) provided information about the language instruction program in which participants were enrolled: seven (30%) included participants receiving L2-only instruction, four (17%) included participants receiving dual language instruction, and three (13%) included a mix of participants receiving either L2-only or dual language instruction. Within the same study, participants sometimes varied in the amount of dual language instruction they received. For example, in Baker (2007), three of the four schools provided 90 min of Spanish (L1) and 45 min of English (L2) reading instruction, whereas one school provided 45 min of Spanish and 90 min of English reading instruction. Other studies (e.g., Ganan et al., 2015; Rao et al., 2021; Wilsenach, 2016) did not clearly report the proportion of L1 and L2 instructional time.

All but one study involved EBs with English as L2; the exception focused on Italian as L2 (Eikerling et al., 2022). Twenty studies (87%) reported EBs’ L1s, including Spanish, Korean, English, Somali, Hmong, Hindi, Northern Sotho, Anindilyakwa (an Indigenous language of Australia), and Marathi. Spanish-English EBs in the United States were the most studied. About a third of the studies (n = 8) did not clarify EBs’ L1 and/or L2 proficiency. The other 15 studies (65%) provided information on EBs’ language profiles in L1 and/or L2 but varied in how they gauged language use and proficiency. Some studies (e.g., Farmer, 2013; Ganan et al., 2015; Han et al., 2015) indicated proficiency through students’ language status as designated by the school, some (e.g., Farver et al., 2007; Petersen & Gillam, 2013) reported proficiency via family-reported language use, and others (e.g., Kim et al., 2016; Millett, 2011) used language proficiency measures and standards reported by researchers or school systems. In addition, only a few studies (Baker & Good, 1995; Farver et al., 2007; Petersen & Gillam, 2013) provided a comprehensive report of language proficiency and use in both L1 and L2. This variation in approaches made it challenging to synthesize L1-L2 proficiency across studies. Generally, some studies included participants with developing L2 proficiency (e.g., Baker & Good, 1995; Farmer, 2013; Fien et al., 2008; Fletcher et al., 2015; Ganan et al., 2015; Millett, 2011), whereas others included participants with diverse levels of L2 proficiency, from developing to full proficiency. The participant characteristics of each study are detailed in Table S2 in the Supplemental Materials.

Research Question 2: Languages and Constructs of Assessments

Language of the Assessments

Eight studies (35%) reported assessments in L1, with alphabetic or morphosyllabic writing systems, and 19 studies (83%) reported assessments in L2, all with alphabetic writing systems. Three studies (13%; Baker, 2007; Farver et al., 2007; Petersen & Gillam, 2013) compared the validity of parallel measures in L1 and L2. A summary of the assessments used across studies can be found in Table S3 in the Supplemental Materials.

Constructs of the Assessments

Literacy Assessments

Seventeen studies examined 27 literacy assessments (i.e., spelling, decoding, text reading fluency, and reading comprehension). Most of these assessments were administered in L2 (89%) and were standardized (78%). They were administered across a wide range of grade levels, from kindergarten to fifth grade. Reading fluency (52%) and decoding (33%) assessments were the most frequently examined, generally among first to third graders. Reading comprehension assessments were less common (11%) and were used mostly with third to fifth graders. Spelling was examined only once, among kindergarteners (Dubeck, 2008).

Code-Related Assessments

Five studies examined 17 code-related assessments (i.e., PA, letter knowledge, print concepts, orthographic awareness, and RAN), which were administered more often in L1 (59%; Eikerling et al., 2022; Fletcher et al., 2015; Petersen & Gillam, 2013) than in L2. PA (35%) and RAN (24%) were the most frequently examined. In addition, Petersen and Gillam (2013) used the best language score (BLS), the higher of a child’s scores on an assessment administered in L1 and in L2, to represent the child’s conceptual score across their language system. More assessments were researcher-developed (76%; Eikerling et al., 2022; Fletcher et al., 2015; Petersen & Gillam, 2013) than standardized. Code-related assessments were administered from preschool (Fletcher et al., 2015) through fifth grade, the latter within a sample aggregated across grades (Eikerling et al., 2022).
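The best language score described above amounts to taking the maximum of a child's two scores on parallel tasks. The sketch assumes both scores are on the same scale (here, hypothetical percent-correct values); how Petersen and Gillam (2013) put scores from the two languages on a common scale is not described in this section.

```python
def best_language_score(l1_score: float, l2_score: float) -> float:
    """Best language score (BLS): the higher of a child's L1 and L2 scores.
    Assumes both scores are on the same scale, e.g., percent correct."""
    return max(l1_score, l2_score)


# Hypothetical percent-correct scores on parallel PA tasks: (L1, L2).
children = {"child_1": (62.0, 48.0), "child_2": (35.0, 70.0), "child_3": (55.0, 55.0)}
for child, (l1, l2) in children.items():
    print(child, best_language_score(l1, l2))
```

Unlike the item-level conceptual scoring discussed in the introduction, the BLS works on total scores, so a child who succeeds on different items in each language receives credit only for the stronger language.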

Oral Language Assessments

Five oral language assessments were examined in three studies: two of grammar (Eikerling et al., 2022), two of oral language ability in semantics and syntax (Petersen & Gillam, 2013), and one of oral vocabulary (Sáez et al., 2010). More of these assessments were administered in L2 (80%; Eikerling et al., 2022; Petersen & Gillam, 2013; Sáez et al., 2010) than in L1 or scored as BLS (Petersen & Gillam, 2013). More standardized assessments were used (80%; Petersen & Gillam, 2013; Sáez et al., 2010) than researcher-developed assessments (Eikerling et al., 2022). Oral language was assessed from kindergarten (Petersen & Gillam, 2013) to fifth grade (Sáez et al., 2010).

Domain-General Cognitive Assessments

Two studies examined three domain-general cognitive skill assessments (i.e., verbal memory, assessed with nonword and sentence repetition). All assessments were researcher-developed and were available in L1, L2, and BLS. L1 was more frequently used as the language of assessment (L1: Petersen & Gillam, 2013; Wilsenach, 2016; L2 & BLS: Petersen & Gillam, 2013). Petersen and Gillam (2013) examined verbal memory assessment among kindergarteners, whereas Wilsenach (2016) focused on third graders.

Composite Assessments

Three studies each examined the validity of a single assessment battery covering multiple skills. Get Ready to Read! (Farver et al., 2007) assessed code-related and early writing skills. The 5-Screening Index (Jansky et al., 1989) included code-related, oral language, domain-general, and visual motor skills. The Dyslexia Assessment for Languages of India–Dyslexia Assessment Battery (Rao et al., 2021) included measures of code-related skills (i.e., letter knowledge, PA, and RAN), oral language (i.e., semantics and verbal fluency), and literacy (i.e., decoding, spelling, and reading comprehension).

Criterion Measures

The synthesis focused on criterion measures of reading or early reading outcomes. It excluded non-reading measures (e.g., spelling, vocabulary, or verbal memory) and measures administered in L1. The 23 studies used a total of 36 criterion assessments, most of which measured a single reading or early reading skill (e.g., letter knowledge, PA, decoding, text reading fluency, or reading comprehension), including decoding risk derived from word reading in L1 and L2 (Eikerling et al., 2022). Reading comprehension, decoding, and reading fluency were the most common criterion measures (53%). Fourteen assessments (39%) measured broad reading, derived from a composite of two or more skills. Most criterion measures (92%) were formal, standardized assessments, such as the Stanford Achievement Test, California Achievement Test, and Woodcock-Johnson Test of Achievement. However, the studies often did not report whether these standardized criterion measures had been normed and tested with EB populations.

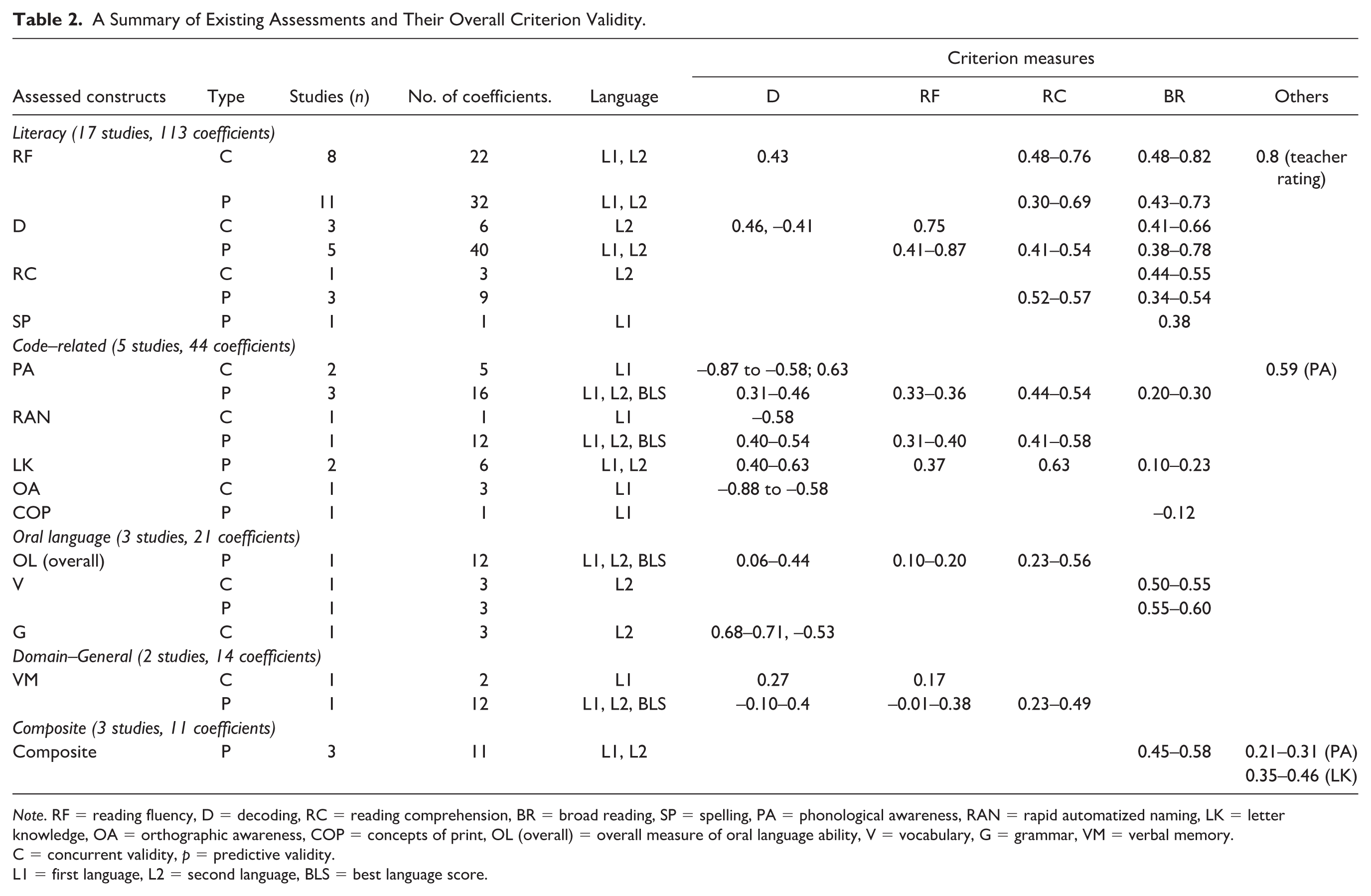

Research Question 3: Concurrent and Predictive Criterion Validity

Synthesis of the 23 studies generated 47 concurrent validity correlations from nine studies and 155 predictive validity correlations from 19 studies. Table 2 summarizes the range of criterion validity by domain of assessed construct (i.e., literacy, code-related, oral language, and domain-general cognitive skills), type of validity, amount of evidence, and language of assessment. Table S2 in the Supplemental Materials shows the criterion validity reported for each assessment in all included studies. We considered correlation coefficients with an absolute value of .60 and above as strong evidence, .30–.59 as moderate evidence, and below .30 as weak evidence for criterion validity (Cohen, 1988).
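The cutoffs above can be applied mechanically. The following sketch is a hypothetical helper, not part of the review's method; it assumes the magnitude of r is what matters (so negative coefficients against risk scores, such as r = –.87, count as strong) and treats any value at or above .30 but below .60, including values between the stated .59 and .60 bands, as moderate.

```python
def classify_validity(r: float) -> str:
    """Label a correlation coefficient with the review's strength bands.

    Hypothetical helper: classification uses |r|, so a coefficient of
    -0.87 against a risk score is labeled the same as +0.87.
    """
    if not -1.0 <= r <= 1.0:
        raise ValueError("a correlation coefficient must lie in [-1, 1]")
    magnitude = abs(r)
    if magnitude >= 0.60:   # .60 and above: strong
        return "strong"
    if magnitude >= 0.30:   # .30 up to (but not including) .60: moderate
        return "moderate"
    return "weak"           # below .30: weak


# Coefficients quoted in the results reported in this section.
for r in (0.75, 0.41, 0.30, -0.12, -0.87):
    print(f"r = {r:+.2f}: {classify_validity(r)}")
```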

Table 2. A Summary of Existing Assessments and Their Overall Criterion Validity.

Note. RF = reading fluency, D = decoding, RC = reading comprehension, BR = broad reading, SP = spelling, PA = phonological awareness, RAN = rapid automatized naming, LK = letter knowledge, OA = orthographic awareness, COP = concepts of print, OL (overall) = overall measure of oral language ability, V = vocabulary, G = grammar, VM = verbal memory.

C = concurrent validity, p = predictive validity.

L1 = first language, L2 = second language, BLS = best language score.

Literacy Assessments

Reading Fluency

Both concurrent and predictive validity were reported for reading fluency assessments. Eight studies of first to fifth graders examined the concurrent validity of reading fluency assessed in L1 and L2, yielding 22 correlation coefficients. Concurrent validity was generally satisfactory: 68% of these coefficients, all for assessments in L2, exceeded .60 against reading comprehension (r = .63–.76), broad reading measures (r = .61–.82), and teacher ratings of reading skills (r = .80). The remaining coefficients were mostly moderate, for reading fluency assessed in L1 and L2 among first to third graders against broad reading (r = .48–.53) and reading comprehension (r = .48–.55), and among second to fifth graders against decoding risk (r = .43).

Eleven studies provided 32 correlation coefficients for the predictive validity of reading fluency assessments administered in L1 or L2 to first to fifth graders. All evidence was moderate to strong. Nine studies reported 17 coefficients above .60 for broad reading and reading comprehension, and nine studies reported 14 coefficients between .40 and .59 for broad reading or reading comprehension. Only one study (Millett, 2011) reported a coefficient as low as r = .30, with reading comprehension as the criterion measure.

Decoding

Six studies with 46 correlation coefficients explored the criterion validity of decoding assessments. Of these, three studies reported six concurrent validity correlations for decoding assessments in L2. Only Fien et al. (2008) reported strong correlations, with reading fluency (r = .75) and broad reading (r = .62–.66) among kindergarteners and first graders. Moderate correlations were found with decoding risk scores (r = –.41–.46; Eikerling et al., 2022) and broad reading outcomes (r = .41; Sáez et al., 2010).

For predictive validity, five studies reported 40 correlation coefficients from standardized assessments of kindergarteners to second graders, primarily administered in L2; Baker (2007) was the only study that evaluated decoding assessments in L1. Four studies reported strong predictive validity of decoding assessments for broad reading (r = .62–.78) and reading fluency (r = .65–.87) outcomes. All five studies reported moderate correlations between decoding assessments in L1 or L2 and broad reading (r = .38–.58), reading comprehension (r = .41–.54), and reading fluency (r = .41–.58).

Reading Comprehension

Three studies reported 12 correlation coefficients: three for concurrent validity and nine for predictive validity. Only Sáez et al. (2010) reported concurrent validity, showing moderate correlations (r = .44–.55) with broad reading among third to fifth graders. Predictive validity was reported by all three studies, with criterion measures of reading comprehension (r = .52–.57; Wiley & Deno, 2005) or broad reading (r = .34–.54; Kim et al., 2016; Sáez et al., 2010) administered less than a year after the predictor assessment. Most predictive validity coefficients (78%) were moderate (r = .40–.57; Sáez et al., 2010; Wiley & Deno, 2005).

Spelling

Only one study (Dubeck, 2008) reported the predictive validity of a spelling assessment, with broad reading as the criterion measure. Dubeck assessed spelling in L1 in the spring of kindergarten and found a moderate correlation (r = .38) between L1 spelling and L2 broad reading skills.

Code-Related Assessments

Phonological Awareness

Five studies reported the criterion validity of PA assessments. Two studies reported five concurrent validity correlations. PA assessments in both studies were administered in L1 and showed satisfactory concurrent validity (|r| > .60) with criterion measures of decoding and PA (Eikerling et al., 2022; Fletcher et al., 2015). Eikerling et al. measured PA response time and used dyslexia risk as the criterion measure, operationalized as a compound score of word reading risk in L1 (English or Mandarin) and L2 (Italian); higher scores indicated greater risk of decoding difficulties. Unexpectedly, they found that longer response times on PA tasks were associated with lower decoding risk (r = –.87 to –.58).

Three studies reported 16 correlation coefficients for the predictive validity of PA assessments in L1, L2, and BLS, with weak to moderate correlations with reading outcomes. Predictive validity was more satisfactory for decoding (r = .31–.46) and reading comprehension (r = .44–.54) than for broad reading (r = .20–.30) and reading fluency (r = .33–.36).

RAN

Two studies reported 13 correlation coefficients for RAN criterion validity. For concurrent validity, Eikerling et al. (2022) reported a nearly strong correlation (r = –.58) between Mandarin (L1) RAN tasks and compound word reading risk scores. For predictive validity, Petersen and Gillam (2013) reported moderate correlations overall among kindergarteners (r = .31–.58), especially for RAN assessed in L2 and BLS, with decoding (r = .41–.54), reading fluency (r = .31–.40), and reading comprehension (r = .41–.58) as criterion measures.

Letter Knowledge

Two studies reported six correlational coefficients for predictive validity of letter knowledge in L1 (Dubeck, 2008) and L2 (Petersen & Gillam, 2013) among kindergarteners. The correlation coefficients ranged from .10 to .63, indicating stronger criterion validity with decoding (r = .40–.63) and reading comprehension (r = .63), compared with broad reading skills (r = .10–.23) and reading fluency (r = .37).

Other Code-Related Skills

Two other code-related skills, print concepts (Dubeck, 2008) and orthographic awareness (Eikerling et al., 2022), were examined in L1. Orthographic awareness assessments showed promising concurrent validity against the overall decoding risk score (Eikerling et al., 2022): Spearman's rho between orthographic awareness accuracy and decoding risk was –.88, indicating that higher accuracy was strongly associated with lower risk. Unexpectedly, longer response times on orthographic awareness tasks were also associated with lower decoding risk (r = –.58). Print concepts measured in L1 during the spring of kindergarten showed a weak correlation with broad reading skills (r = –.12; Dubeck, 2008).
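Spearman's rho, reported above for orthographic awareness accuracy, is the Pearson correlation computed on ranks rather than raw scores, so it captures any monotonic association. The sketch below illustrates that computation with average ranks for ties; it is a generic implementation rather than the analysis code of Eikerling et al. (2022), and the accuracy and risk values are hypothetical, chosen only to show how higher accuracy paired with lower risk yields a negative rho.

```python
from statistics import mean


def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the value at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson product-moment correlation of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return cov / (var_x * var_y) ** 0.5


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical data: five children's task accuracy and decoding risk scores.
accuracy = [0.55, 0.62, 0.70, 0.81, 0.93]
risk = [3.1, 2.4, 2.6, 1.2, 0.8]
print(round(spearman_rho(accuracy, risk), 2))  # prints -0.9
```

Working on ranks makes rho insensitive to the exact spacing of scores, which is one reason it is often preferred for bounded or skewed measures such as accuracy rates.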

Oral Language Assessments

Three studies reported 21 correlation coefficients. Six coefficients addressed the concurrent validity of oral vocabulary and grammar assessments, both administered in L2. Vocabulary assessment showed moderate concurrent validity (r = .50–.55) with broad reading skills (Sáez et al., 2010). Grammar judgment assessed among second to fifth graders generally showed moderate to strong correlations: the concurrent validity of grammar judgment accuracy with decoding ranged from .68 to .71, and the correlation between grammar judgment response time and a compound word reading risk score was –.53 (Eikerling et al., 2022).

Predictive validity was reported for vocabulary and overall oral language ability assessments in L1, L2, and BLS (Petersen & Gillam, 2013; Sáez et al., 2010). Overall oral language ability showed mostly weak correlations with decoding and reading fluency (r = .06–.31), except when measured in BLS (r = .44). Oral language assessed in L2 and BLS showed moderate correlations with reading comprehension (r = .53–.56; Petersen & Gillam, 2013). Similar results were found for oral vocabulary assessed among third to fifth graders, whose correlations with broad reading skills ranged from .55 to .60 (Sáez et al., 2010).

Domain-General Cognitive Assessments

Two studies reported 14 correlation coefficients for verbal memory assessed in L1, L2, and BLS. Among third graders in South Africa, verbal memory assessed in L1 showed weak concurrent correlations with L2 decoding and reading fluency (r = .17–.27; Wilsenach, 2016). Similar findings were reported for predictive validity among kindergarteners, with decoding and reading fluency as the criterion measures (L1: r = .18–.36; L2: r = –.01 to .19; Petersen & Gillam, 2013). However, verbal memory assessments showed stronger predictive validity for reading comprehension (r = .23–.49), especially in L2 and BLS.

Composite Assessments

Three studies reported the criterion validity of composite assessments covering multiple skills. Farver et al. (2007) examined a screener covering letter knowledge, print concepts, PA, and early writing among Spanish-English preschoolers. Administered in both L1 and L2, the screener showed moderate correlations with letter knowledge (r = .35–.46) and weak correlations with PA (r = .21–.31). The other two studies (i.e., Jansky et al., 1989; Rao et al., 2021) evaluated batteries with a broader scope of skills and showed better criterion validity. Jansky et al. (1989) evaluated a screener that assessed letter knowledge, vocabulary, visual motor skills, and verbal memory at the beginning of first grade among predominantly Spanish-English EBs; it showed moderate correlations (r = .45–.53) with broad reading assessed from the end of second grade through fifth grade. Rao et al. (2021) examined an assessment battery among EBs with Hindi or Marathi as L1 and English as L2, which demonstrated nearly satisfactory criterion validity (r = .58) with broad reading outcomes. However, the timing of these assessments was not specified, so it is unclear whether this evidence reflects concurrent or predictive validity.

Research Question 4: Variation of Criterion Validity With Language of Assessment

Only three studies directly compared the criterion validity of assessments conducted in L1, L2, and BLS, spanning various assessment domains. A composite of early literacy skills (Farver et al., 2007) showed better overall predictive validity in L2 than in L1, especially with letter knowledge as the criterion measure (L1: r = .35; L2: r = .46). Among literacy assessments, reading fluency demonstrated higher criterion validity in L2 (r = .67–.80) than in L1 (r = .52–.61) with reading comprehension and broad reading outcomes among second-grade Spanish-English EBs (Baker, 2007). In contrast, decoding assessments showed slightly higher predictive validity in L1 (r = .44–.49) than in L2 (r = .41–.47) for reading comprehension and broad reading outcomes (Baker, 2007).

Petersen and Gillam (2013) compared code-related, oral language, and domain-general cognitive assessments across L1, L2, and BLS, revealing a complex pattern. PA assessed in L1 and BLS showed better predictive validity for decoding (L1 & BLS: r = .32–.46; L2: r = .31–.37) and reading comprehension (L1 & BLS: r = .51–.54; L2: r = .44), whereas PA assessed in L2 (r = .36) showed slightly better predictive validity for reading fluency than in L1 or BLS (r = .33–.34). For RAN, predictive validity favored L2 and BLS over L1 for decoding (L2 & BLS: r = .41–.54; L1: r = .40–.43) and reading comprehension (L2 & BLS: r = .58; L1: r = .41), whereas RAN assessed in L1 showed a higher correlation with reading fluency (r = .40) than in BLS or L2 (r = .31). Oral language assessment showed better predictive validity for reading comprehension when assessed in BLS or L2 (r = .53–.56), and better predictive validity for decoding when assessed in BLS (r = .44) than in L1 or L2 (r = .29–.31). Verbal memory showed better overall predictive validity for decoding, reading fluency, and reading comprehension when assessed in BLS (r = .36–.49).

Discussion

The present review aimed to assess the criterion validity of existing assessments in predicting L2 reading achievement among EBs. We synthesized (a) participant characteristics, (b) assessment features, (c) overall criterion validity, and (d) the potential impact of the language of assessment on criterion validity. A total of 23 studies met the inclusion criteria for the review.

For Whom Assessments Are Available

Overall, the reviewed studies examined assessment criterion validity among EBs in the United States, Italy, Australia, South Africa, and India, representing a wide range of cultural and linguistic contexts. The studies included approximately 9,038 participants from preschool through the elementary grades. Although grade levels varied across studies, most studies (78%) were conducted in the early grades, from preschool to third grade, reflecting the importance of early identification of at-risk readers through foundational language and literacy skills (Jenkins et al., 2007; Jenkins & Johnson, n.d.; Tunmer & Hoover, 2019). However, it is equally important for future research to evaluate whether assessments at the upper grade levels show satisfactory evidence of validity. We also found that most studies examined a single grade level or reported validity results separately by grade. Such disaggregated results are expected to provide a more locally appropriate approximation of criterion validity than results from samples aggregated across grade levels, because EBs' L2 proficiency changes over time and may affect the strength of criterion validity (Kim et al., 2016).

Despite the global representation of EBs, most assessments were examined for English as L2, mainly among Spanish-English EBs in the United States. In the current review, the studies that included students from lower SES backgrounds were likewise conducted with EBs in the United States. This distribution of evidence contrasts with the global diversity of EBs. For example, Joshi and McBride (2019) emphasized the need for an assessment system for the two billion people using abugidic writing systems in countries such as India, Ethiopia, and Eritrea, where most learners are multilingual and biliterate or multiliterate. For learners whose L1 uses a non-alphabetic writing system (e.g., Chinese) and who are learning to read in an L2 (e.g., English or Italian), it remains unknown whether assessments validated with EBs from alphabetic L1 backgrounds (e.g., Spanish) can be applied. Indeed, the contributions of MA and PA to reading development differ between alphabetic and non-alphabetic writing systems (e.g., Ruan et al., 2018). Chinese-speaking EBs with learning disabilities in Chinese as L1 struggled with PA and MA, whereas those with reading difficulties in English as L2 struggled with PA alone; those poor in both orthographies struggled with PA, MA, and RAN (McBride-Chang et al., 2012). Thus, the current review highlighted a need to extend evidence of assessment validity to a wider population of EBs, especially those from non-alphabetic backgrounds. Existing findings on the cognitive and linguistic profiles of EBs with reading difficulties in L1 and L2 (e.g., McBride-Chang et al., 2012; Swanson et al., 2020) could further inform the construction of assessments that best capture the language-specific and language-general skills that predict L2 reading difficulties.

The current review synthesized information on language instruction programs and EBs' L1-L2 language profiles, both of which affect assessment validity and interpretation (Geva et al., 2019; Goodrich et al., 2022; Newell et al., 2020). Findings revealed a diversity of language instruction programs (e.g., Baker, 2007; Dubeck, 2008) and a lack of detailed reports on EBs' language environments, echoing findings by Newell et al. (2020) on the application of oral reading fluency assessments with EBs. Furthermore, the review identified inconsistencies in how EBs' language use and proficiency were reported, ranging from indirect school reports to family surveys. This underscores the complexity of defining EBs, who are heterogeneous in their language proficiency and usage across contexts because of factors such as age of L2 acquisition and learning environment (De Houwer, 2023; Grosjean, 2021). Such heterogeneity challenges traditional school labeling systems and raises questions about their reliability across schools (Newell et al., 2020). The findings point to the need for assessments that account for EBs' overall oral language proficiency in L1 and L2; for example, Kim et al. (2016) demonstrated that the predictive validity of oral reading fluency assessments varied with English language proficiency among Spanish-English EBs.

Languages and Constructs Assessed

The reviewed assessments targeted several domains, including oral language, code-related, literacy, and domain-general cognitive skills, and were administered in EBs' L1 or L2. Assessments varied in language of administration, targeted age groups, and standardization. Few studies examined oral language, domain-general cognitive, or composite assessments, making it difficult to identify a clear pattern. Standardized assessments predominantly focused on literacy, oral language, and composite skills and were examined primarily in L2. In contrast, code-related and domain-general cognitive assessments were more often administered in L1 and designed by researchers. Although each domain spanned a wide range of grade levels, code-related tasks were mainly given to younger children (preschool to second grade), decoding and reading fluency assessments to early elementary students (first to third grade), and reading comprehension assessments to upper elementary students (third to fifth grade).

We identified the use of BLS to capture a child's conceptual knowledge in PA, RAN, verbal memory, and oral language, an approach that recognizes the child's languages as one holistic linguistic system (Petersen & Gillam, 2013). Despite emerging doubts about the fairness of static, standardized language and literacy assessments for identifying reading disabilities (e.g., Dixon et al., 2022), most included studies examined EBs' cognitive and linguistic skills in either L1 or L2 only. This reflects a more traditional view of L1 and L2 as separate linguistic systems (De Houwer, 2023), rather than treating one's cognitive and linguistic repertoire across two or more languages as a whole. A lack of holistic consideration of EBs' linguistic and cognitive systems may readily introduce a deficit-oriented mindset when EBs are compared with their monolingual peers (Luk, 2023). When educators make decisions about EBs' risk for learning disabilities, such as language disorder and dyslexia, assessments that do not account for EBs' existing knowledge and skills across all their languages create frustration among educators (Komesidou et al., 2022) and may lead to misidentification of learning disabilities among EBs.

Overall Evidence of Criterion Validity

Literacy assessments, especially of decoding and reading fluency, showed strong criterion validity. Four of the six decoding studies demonstrated strong criterion validity (r ≥ .60) with broad reading (r = .62–.78) and reading fluency (r = .65–.87) outcomes, and all studies indicated at least moderate correlations across various reading measures. These findings align with January and Klingbeil (2020), who reported that the average criterion validity of decoding assessments, indexed by correlation coefficients, ranged from r = .52 with broad reading to r = .83 with oral reading outcomes. Reading fluency assessments in the current review also showed strong overall criterion validity across criterion measures (reading comprehension: r = .48–.76; broad reading: r = .48–.82; teacher rating of reading: r = .80), despite variability potentially linked to students' grade level and L2 proficiency (Newell et al., 2020). By contrast, reading comprehension assessments correlated only moderately with reading comprehension (r = .52–.57) and broad reading (r = .34–.54) outcomes.

Code-related assessments of PA, letter knowledge, and RAN showed weak to moderate evidence of criterion validity across studies, varying with the criterion measure. For example, PA assessments showed stronger criterion validity with PA (r = .59), decoding risk (r = –.87 to –.58), decoding (r = .31–.46), and reading comprehension (r = .44–.54) outcomes but weaker validity with broad reading and reading fluency (r = .20–.36). Similarly, letter knowledge showed stronger criterion validity with reading comprehension and decoding (r = .40–.63) than with broad reading skills and reading fluency (r = .10–.37). The lower correlations for code-related assessments than for literacy assessments align with findings by January and Klingbeil (2020). As with PA assessments, RAN assessments showed moderate to strong criterion validity for decoding and reading comprehension outcomes.

The review indicated a need for more evidence regarding assessments of oral language, verbal memory, orthographic awareness, print concepts, and spelling. Oral language assessments in L2 or BLS showed moderate criterion validity with reading comprehension and broad reading (r = .50–.60), although results varied for decoding and reading fluency. Verbal memory correlations with reading outcomes were mostly weak in L1 or L2 (r = –.10 to .36) but stronger when verbal memory was assessed in BLS and correlated with reading comprehension (r = .36–.49). Orthographic awareness showed moderate to strong validity, whereas print concepts and spelling had weaker evidence; however, these findings are not yet generalizable because of the limited evidence. Multiple-measure assessments (e.g., of oral language, code-related, and domain-general skills) did not outperform single-measure assessments when correlated with single code-related skills (r = .21–.46) or broad reading outcomes (r = .45–.58).

The current review explored the language of assessment as a potential moderator, although only four of the 23 studies provided direct evidence. A synthesis of these four studies showed that the language of assessment affects criterion validity, with the effect depending on the specific skill measured. For example, PA assessed in L1 and BLS showed better predictive validity for decoding and reading comprehension than PA assessed in L2, whereas PA assessed in L2 showed better predictive validity for reading fluency. In addition, assessments of composite skills, reading fluency, RAN (for decoding and reading comprehension), and oral language showed better criterion validity in L2, whereas assessments of RAN (for reading fluency), decoding, and PA (for decoding and reading comprehension) yielded better criterion validity in L1. BLS produced better results for some skills; for example, verbal memory assessed in BLS showed better predictive validity for decoding, reading fluency, and reading comprehension.

Limitations

This review examined assessments across multiple domains and identified areas needing further research; nonetheless, it has several limitations. First, although the literature search did not restrict country, publication type, or year of publication, it did not explicitly include technical reports on commercial, standardized assessments. Future work could employ a broader search strategy. Second, although the current review described grade levels and language proficiency as participant characteristics, it did not evaluate whether these are potential moderators of assessment validity (January & Klingbeil, 2020; Newell et al., 2020). Finally, the current review did not examine the validity of the criterion measures for EBs, instead assuming that the criterion measures chosen by the researchers validly and reliably captured EBs' reading performance.

Practical and Theoretical Implications

Although EBs should be screened for risk of reading difficulties, schools likely do not have access to assessments that have been evaluated and normed for the EB population. The current review suggests that while some literacy assessments showed satisfactory validity with external, standardized measures of reading achievement, assessments of non-literacy constructs, such as oral language, letter knowledge, or verbal memory, did not necessarily show good validity with criterion measures. Furthermore, because validity evidence varies with the criterion reading outcome and the language(s) of administration, educators are advised to review the validity evidence in the assessment manual to confirm whether the EB population was included in the validation process (International Dyslexia Association, 2023) and which specific criterion measures were used for validation. In addition, practitioners should look beyond percentile ranks or standard scores when interpreting assessment results. Other factors should be considered when determining EBs' risk of reading difficulties, such as their response to quality literacy instruction, their performance relative to EB peers with similar linguistic backgrounds, their language backgrounds and experiences at home, and the reasons for erroneous responses on assessments (Goodrich et al., 2022; International Dyslexia Association, 2023).

To better understand how EBs' language, literacy, and cognitive skills can be assessed to identify those at risk for reading difficulties, future research should include a more diverse range of EBs, particularly those from non-alphabetic language backgrounds, such as Chinese-English or Arabic-Dutch EBs, who are developing language and literacy skills in both non-alphabetic and alphabetic languages. Students' language instruction programs and language use and proficiency should also be examined and reported, as these factors may affect the interpretation of validity evidence. For example, EBs who receive L2-only instruction at school should be considered separately from those in dual-language education because of differences in the quantity of L1 and L2 exposure and, thus, in L1 and L2 language and literacy proficiency. Furthermore, the optimal language(s) in which to administer assessments remains an open question that existing studies have not fully addressed. Finally, further research on assessments beyond literacy, such as of oral language and cognitive skills, is essential.

Conclusion

The current systematic review evaluated the criterion validity of assessments in multiple domains (oral language, code-related, literacy, and domain-general cognitive skills) for predicting L2 reading concurrently and longitudinally. Across 23 studies, the strongest evidence of criterion validity was found for decoding, reading fluency, and PA, whereas constructs such as reading comprehension, RAN, letter knowledge, and domain-general skills showed weaker validity. The review also highlighted variability in validity depending on the criterion measure and the language of assessment. Future research should include a broader range of the EB population and consider EBs' linguistic backgrounds as a potential factor in assessment outcomes.

Supplemental Material

sj-docx-1-ldx-10.1177_00222194251331533 – Supplemental material for Identifying Emergent Bilinguals at Risk for Reading Difficulties: A Systematic Review of Criterion-Validity of Existing Assessments

Supplemental material, sj-docx-1-ldx-10.1177_00222194251331533 for Identifying Emergent Bilinguals at Risk for Reading Difficulties: A Systematic Review of Criterion-Validity of Existing Assessments by Jialin Lai, Marianne Rice, Juan F. Quinonez-Beltran, Ramona T. Pittman and R. Malatesha Joshi in Journal of Learning Disabilities

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Notes

References
