Abstract

We examined whether a Phonics + Set for Variability (SfV) reading intervention would lead to better irregular word reading compared to Phonics + Morphology within a cluster randomized control trial (RCT) design with a follow-up measurement. The participants were 273 Grades 2 and 3 students with reading difficulties (139 in the Phonics + SfV and 134 in the Phonics + Morphology) who received intervention in small groups (2–4 children), 4 times a week, 30 minutes each time, for 15 weeks. All participants were from schools in Alberta, Canada. Results of hierarchical linear modeling showed that there was a significant effect of intervention on all reading outcomes (e.g., from pre- to posttest the effect sizes for Phonics + SfV ranged from g = 0.74 to 1.54 and for Phonics + Morphology from g = 0.75 to 1.49). Unexpectedly, there were no differences between the intervention conditions in any of the outcome variables, including irregular word reading and morphological awareness that the interventions partly focused on.

In the last two decades, both classroom reading instruction and “pull-out” interventions in English have been heavily influenced by research on phonics, which emphasizes the systematic teaching of connections between graphemes and phonemes. Because English spellings are based on an alphabetic system in which the primary purpose of letters is to represent sounds, it makes sense to teach children the grapheme-phoneme correspondences (GPCs) to decode both familiar and unfamiliar words. In line with this argument, several intervention studies have shown that phonics instruction produces better results than no phonics instruction or nonliteracy instruction (e.g., Ehri et al., 2001; Galuschka et al., 2014; McArthur et al., 2018; Slavin et al., 2011, for evidence from meta-analyses). For example, in a systematic review of 14 randomized control trial (RCT) reading interventions that compared phonics training to no phonics, or nonliteracy training (e.g., math), McArthur et al. (2018) found that phonics had moderate to large effects on improving regular word reading, nonword reading accuracy, and irregular word reading accuracy in English-speaking poor readers.

However, English is also known to have an opaque orthography, meaning that not all words can be read accurately by applying the GPC rules (Seymour et al., 2003). The words that cannot be read accurately by applying the GPC rules are called irregular words (Coltheart, 2006) (see Note 1). Whereas phonics can be used to accurately read regular words, phonics alone may not lead to accurate reading of irregular words (we acknowledge though that parts of the irregular words might be regular and can be read correctly by applying the GPC rules).

To address the issue of irregular word reading, Tunmer and Chapman (2012) proposed a “2-step model of word decoding” that posits the existence of a second step in decoding: the linking of a spelling pronunciation to a word representation, undertaken after children have created phoneme strings. This second step requires children to have a flexible mental “Set for Variability” (SfV) to match pronunciations derived from GPCs to entries in their mental lexicon (see Elbro et al., 2012; Steacy et al., 2019, for additional studies). The term “Set for Variability” is rooted in the work reported by Gibson (1965) and Venezky (1999), who suggested that in order for English-speaking children to be successful in using phonics they should also develop a “set for variability.” More specifically, Venezky (1999) stated that “if what is first produced does not sound like something already known from listening, a child has to change one or more of the sound associations (most probably a vowel) and try again” (p. 232). SfV is most often measured using an oral task in which children are asked to correct the pronunciation of a regularized irregular word (e.g., “wasp” pronounced as “waesp”).

Studies have shown that SfV can be trained in children (see Colenbrander et al., 2022; Dyson et al., 2017; Savage et al., 2018; Zipke, 2016). Savage et al. (2018) conducted an intervention study that involved two sites in Canada to examine the added benefits of teaching SfV. They followed a group of Grade 1 children with reading difficulties from a first pre-test (September) to a second pre-test (December), to a post-test (May), and to a delayed post-test administered in the fall of Grade 2. In the Phonics + SfV condition, children received intensive instruction in GPCs as well as in variable pronunciations (e.g., for s, c, g, th) that systematically taught them to blend these phonemes to pronounce words. Savage et al. (2018) showed that there were statistically significant advantages at post-test for the Phonics + SfV program over the control group (they had phonics and sight words both taught in the traditional way that promoted rote memorization) on measures of word reading and spelling. Advantages favoring the SfV group remained for word reading and sentence comprehension at delayed post-test at the beginning of Grade 2, 5 months after the intervention had finished. Positive effects of SfV have also been reported in a recent study by Colenbrander et al. (2022) that compared different methods of instruction for teaching irregular words in typically developing children. In their study, 85 kindergarten children were randomly assigned to the Look and Say (LSay), Look and Spell (LSpell), Mispronunciation Correction (MPC; that is, SfV), or a wait-list control condition. Children were taught 12 irregular words over three sessions controlling for instructional time and the number of exposures to written and spoken words across conditions. Children showed evidence of superior learning of trained words in the MPC and LSpell conditions, compared to LSay and controls. Differences between the MPC and LSpell conditions were not significant.

An alternative way of getting to the pronunciation of irregular words may be through morphology. There is existing evidence that morphology interventions can have a small-to-moderate effect on word reading (see P. N. Bowers et al., 2010; Goodwin & Ahn, 2010, 2013, for meta-analyses), although none of the existing studies have specifically focused on irregular words. The English spelling system is designed to represent both the sounds (phonology) and the meaning (morphology) of words, and this is why English is described as a morphophonemic language (Venezky, 1967). Because the spelling-meaning connection in English is more reliable than the orthography-phonology connection (e.g., the “w” in two, twice, twin, and twenty signals the meaning connection among these words, whereas the spoken forms of “too,” “to,” and “two” are identical, so that without a sentence context we do not know which /to/ someone is referring to), teaching children the connection between the spelling of words and their meaning should lead to effects at least as large in irregular word reading as in regular word reading. In other words, the type of words should not matter if our focus is the teaching of the spelling-meaning connections. A program that trains the spelling-meaning connections is called Structured Word Inquiry (P. N. Bowers & Kirby, 2010). In Structured Word Inquiry (SWI), children learn about the morphology and etymology of words and how to generate and test hypotheses about the spelling–meaning connection of words when there is a conflict (e.g., Why is there a w in two but not in too?). Research on SWI is still in its infancy (e.g., P. N. Bowers & Kirby, 2010; Colenbrander et al., 2021; Georgiou et al., 2021). For example, Georgiou et al. (2021) examined the effects of SWI on reading in a group of persistently poor Grade 3 readers. After 10 weeks of one-on-one intervention, they found no significant effects of intervention on WRAT Word Reading, but they could not test if the effects varied as a function of word type.

The Present Study

Working with a group of Grades 2 and 3 children with reading difficulties, the aim of the present study was to examine whether a Phonics + SfV intervention would lead to a larger improvement in children’s reading performance (particularly in irregular word reading) than a Phonics + Morphology intervention. Given that SfV was designed to help struggling readers try alternate pronunciations of different graphemes that may be the cause of irregularity in words, we expected that it would lead to significantly better results than the Morphology intervention that does not focus on specific graphemes (Hypothesis 1). Because the two interventions differ in the SfV and morphology content, we also assessed SfV (i.e., the ability to generate alternate pronunciations of certain graphemes) and morphological awareness (i.e., the ability to identify and manipulate the smallest units of meaning) to explore any differential effects of instruction on these processes at post-tests. We expected that the Phonics + SfV intervention would lead to better performance in the SfV task and the Phonics + Morphology intervention would lead to better performance in the morphological awareness task (Hypothesis 2).

Method

Research Design

The study used a cluster Randomized Controlled Trial (RCT) design with a follow-up measurement. Prior to recruiting our participants, we performed a power analysis to determine the number of classes (Level 2) required for the study. The analysis revealed that with a conventional alpha of .05, approximately n = 80 classes would be sufficient to detect an effect size of .25 with the conventional power of .80.

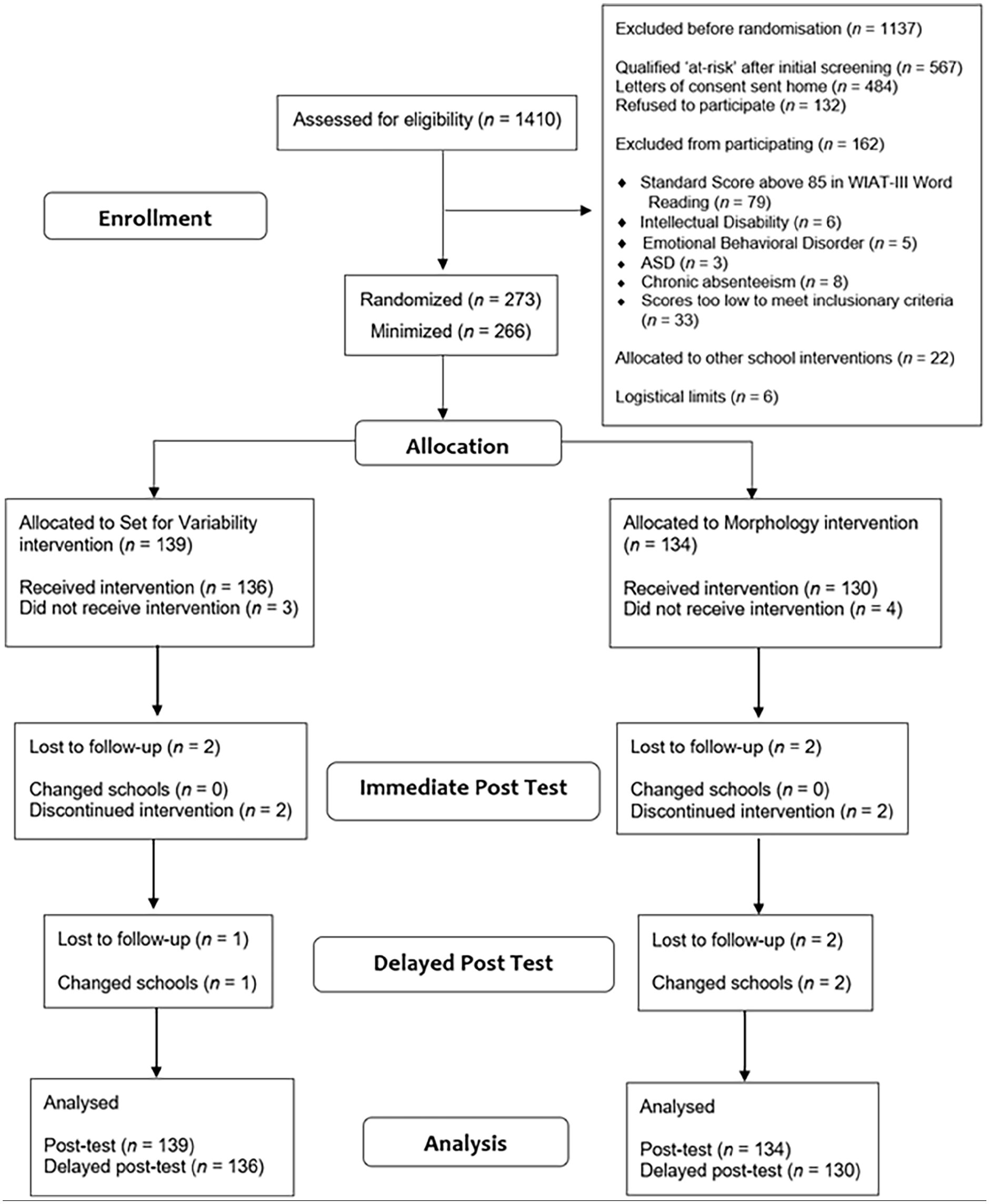

A true random number generator (www.random.org) was used to allocate the classes to conditions by first creating a 1 or 2 number code to represent the two intervention conditions. Two hundred seventy-three Grades 2 and 3 children from 80 classrooms nested within 24 schools across four school divisions in Alberta (Canada) were recruited to participate in the study. A CONSORT flow diagram of all participants in the study is reported in Figure 1.
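As an illustration only, class-level allocation of this kind can be sketched in a few lines of Python. The sketch uses Python's pseudo-random generator rather than the true random number service named above, and the class identifiers, the seed, and the balanced split are assumptions made for the example, not details taken from the study procedure.

```python
import random

def allocate_classes(class_ids, seed=None):
    """Randomly assign whole classes to condition 1 or 2 in equal numbers."""
    rng = random.Random(seed)          # pseudo-random stand-in (assumption)
    shuffled = list(class_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    # Every child in a class inherits the condition code of that class.
    return {cid: (1 if i < half else 2) for i, cid in enumerate(shuffled)}

classes = [f"class_{n:02d}" for n in range(1, 81)]   # hypothetical class IDs
allocation = allocate_classes(classes, seed=2021)    # arbitrary seed
counts = {c: sum(1 for v in allocation.values() if v == c) for c in (1, 2)}
print(counts)
```

Because the unit of allocation is the class, the dictionary is keyed by class rather than by child; a child's condition is looked up through their class.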

Figure 1. ITT Analysis CONSORT Flow Diagram of Participants.

Participants

The participants were selected in a stepwise fashion. First, during the second week of September 2021, the teachers of 86 Grades 2 and 3 classes in the four participating school divisions assessed 1,410 children in three reading screeners (Sight Word Efficiency and Phonemic Decoding Efficiency from the Test of Word Reading Efficiency [TOWRE-2; Torgesen et al., 2012], and the Test of Silent Word Reading Fluency-2 [TOSWRF-2; Mather et al., 2014]). This initial screening identified 567 children with reading difficulties who performed below a standard score of 90 in at least two reading screeners. The next step involved checking if any of these children were experiencing any additional difficulties that would prevent them from participating in the intervention. Eighty-three children were further excluded because (a) they were identified as having intellectual, sensory, or behavioral difficulties (e.g., Code 42; Special Education Coding Criteria, Alberta Education, 2021); and/or (b) they had a history of chronic absenteeism at school (i.e., history of missing one or more weeks per month of school).

Next, we sought parental consent for the remaining 484 children to participate in further testing and in our intervention. Three hundred fifty-two received parental consent and were subsequently assessed by our trained researchers on WIAT-III Word Reading (Wechsler, 2009) to exclude any children who might have been dysfluent (as shown by their performance in the three reading screeners) but accurate. This was necessary because our intervention aimed to improve children’s reading accuracy and these children did not experience difficulties in reading accuracy. Seventy-nine children with a standard score above 85 in WIAT-III Word Reading (Wechsler, 2009) were subsequently excluded, thus leaving our sample with 273 children (127 females; 146 males; Mage = 7.68 years at pre-test, SD = .69) from 80 classes. Six classes from the initial pool of 86 classes in the participating schools no longer had qualifying children to participate in our intervention.
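The stepwise selection rules can be summarized in a short sketch. The function name, argument names, and dictionary keys are hypothetical; the cutoffs and participant counts are those reported in the text.

```python
SCREENER_CUTOFF = 90   # standard score; a screener below this counts as low
WIAT_CUTOFF = 85       # children scoring above this were excluded as accurate readers

def is_eligible(screener_scores, wiat_word_reading, excluded=False, consent=True):
    """Apply the selection steps to one child (hypothetical helper)."""
    low = sum(1 for s in screener_scores.values() if s < SCREENER_CUTOFF)
    if low < 2:                      # needs at least two low screeners
        return False
    if excluded or not consent:      # additional difficulties or no parental consent
        return False
    return wiat_word_reading <= WIAT_CUTOFF

# Participant flow, using the counts reported in the text.
flagged = 567                        # below 90 on at least two screeners
after_exclusions = flagged - 83      # children invited for consent
consented = 352
final_sample = consented - 79        # removes children above 85 on WIAT-III
print(after_exclusions, final_sample)  # 484 273
```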

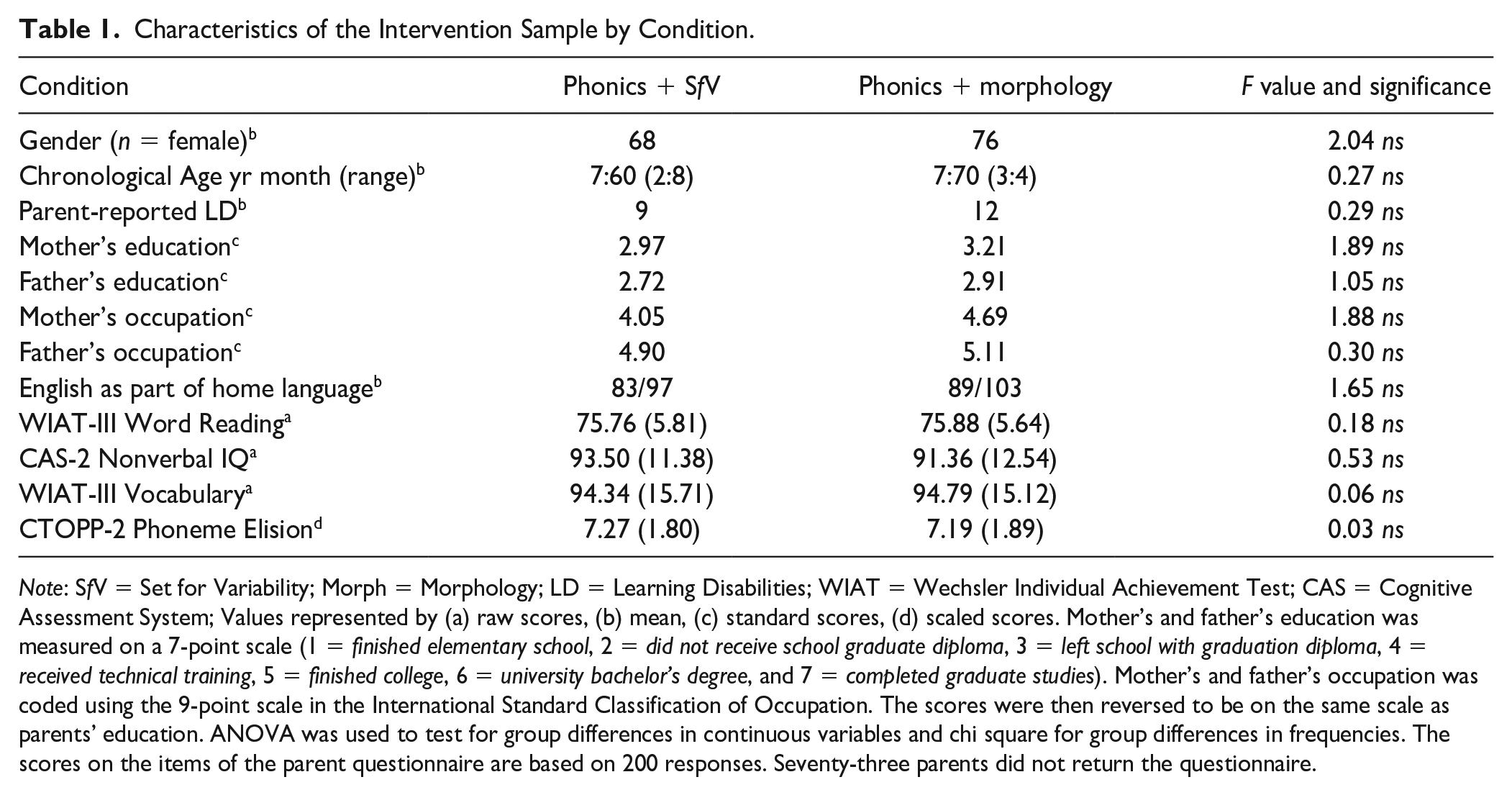

Of these 273 children, 139 from 40 classes were assigned to Condition 1 and 134 from 40 classes were assigned to Condition 2 (see Table 1, for sample characteristics). Randomization to the two intervention conditions happened at the class level. This means that all students from the same class were assigned to the same intervention condition. Seven students (2.6% of the total sample: 1.1% from Condition 1 and 1.5% from Condition 2) did not complete the intervention. Four students withdrew from the study before immediate post-test due to poor attendance, and three students moved during the intervention to a different school division. At immediate post-test, 1.4% of the total sample (0.7% from Condition 1 and 0.7% from Condition 2) was not available to be tested. In addition, 1.1% of the total sample (0.4% from Condition 1 and 0.7% from Condition 2) was not available to be tested at delayed post-test.

Table 1. Characteristics of the Intervention Sample by Condition.

Note: SfV = Set for Variability; Morph = Morphology; LD = Learning Disabilities; WIAT = Wechsler Individual Achievement Test; CAS = Cognitive Assessment System. Values are represented by (a) raw scores, (b) mean, (c) standard scores, (d) scaled scores. Mother’s and father’s education was measured on a 7-point scale (1 = finished elementary school, 2 = did not receive school graduate diploma, 3 = left school with graduation diploma, 4 = received technical training, 5 = finished college, 6 = university bachelor’s degree, and 7 = completed graduate studies). Mother’s and father’s occupation was coded using the 9-point scale in the International Standard Classification of Occupation. The scores were then reversed to be on the same scale as parents’ education. ANOVA was used to test for group differences in continuous variables and chi-square tests for group differences in frequencies. The scores on the items of the parent questionnaire are based on 200 responses. Seventy-three parents did not return the questionnaire.

Materials

Children were assessed four times: at the screening phase (second week of September 2021), at pre-test (last 2 weeks of September 2021), at post-test (last 2 weeks of February 2022), and at delayed post-test (last 2 weeks of May 2022). The screening was carried out by the classroom teachers and the remaining assessments by trained research assistants (RAs).

Parent Questionnaire

At the beginning of the study, parents filled out a questionnaire that collected background information on the participating children. The questionnaire included the following seven questions: (a) whether their child had normal or corrected-to-normal hearing and vision; (b) whether their child had a diagnosis of learning disabilities (yes, no and if yes, then what kind of learning disability); (c) what was the mother’s highest achieved educational level; (d) what was the father’s highest achieved educational level; (e) what was the mother’s occupation; (f) what was the father’s occupation; and (g) whether English was spoken at home.

Screening Measures

Sight Word Efficiency (SWE)

For the SWE subtest (Torgesen et al., 2012), children were asked to read as fast as possible a list of 108 real words, divided into four columns of 27. The score was the total number of words read correctly in 45 seconds.

Phonemic Decoding Efficiency (PDE)

For the PDE subtest (Torgesen et al., 2012), children were asked to read as fast as possible a list of 66 pseudowords (e.g., ip, ko, pim), divided into three columns of 22. The score was the total number of pseudowords read correctly in 45 seconds.

Test of Silent Word Reading Fluency (TOSWRF)

In TOSWRF-2 (Mather et al., 2014), children were presented with 32 rows of words with no spaces between them (e.g., dimhowfigblue). Children were given 3 minutes to identify and draw a line between the boundaries of as many words as possible (e.g., dim/how/fig/blue). The score was the number of words correctly identified within the 3-minute time limit.

Pre-test, Post-test, and Delayed Post-test Measures

Nonverbal IQ

Simultaneous Matrices from the Cognitive Assessment System (CAS2-Brief; Naglieri et al., 2014) was used to assess nonverbal IQ at pre-test. Children were shown an array of shapes and geometric designs that were interrelated within a visual matrix and had a missing piece. Children were then asked to select from six possible choices one picture that would accurately complete the visual matrix. The task was discontinued after three consecutive errors and a participant’s score was the total number correct (max = 44). In this task, the raw score was converted into a scaled score following the instructions in the manual. Cronbach alpha reliability in our sample was .94.

Vocabulary

The Listening Comprehension subtest of the Wechsler Individual Achievement Test-3 (WIAT-III; Wechsler, 2009) was used to assess children’s vocabulary knowledge at pre-test. Children were told a word by the tester and asked to preview a set of four pictures, then point to the one picture that correctly represented the word. The task was discontinued after four consecutive errors and a participant’s score was the total number correct (max = 19). In this task, the raw score was converted into a standard score following the instructions in the manual. Cronbach alpha reliability in our sample was .90.

Phonological Awareness

The Phoneme Elision subtest of the Comprehensive Test of Phonological Processing 2 (CTOPP-2; Wagner et al., 2013) was used to assess phonological awareness at pre-test. Children were first asked to repeat a word that was provided orally by the tester and then say what was left in the word after smaller parts were taken away. The task was discontinued after three consecutive errors and a participant’s score was the total number correct (max = 33). In this task, the raw score was converted into a scaled score following the instructions in the manual. Cronbach alpha reliability in our sample was .94.

Morphological Awareness

The Inflection and Derivational Word Analogies task from Kirby et al. (2012) was used to assess morphological awareness at all measurement points. The task consisted of two subtests, one with 10 inflectional and one with 10 derivational items, given in a fixed order. Children were asked to say aloud the missing word based on a pattern from a set of words (e.g., run: ran, walk: walked). The tester would say, “I am going to ask you to figure out some missing words. If I say push and then I say pushed; when I say jump, then I should say . . . ?” If children did not complete the analogy correctly by responding jumped, the tester would provide feedback by explaining how the two word pairs were alike. The same procedure was followed for the other two practice items. No feedback was given during the test items. Each of the inflectional and derivational subtests was discontinued after the participant made four consecutive errors. A participant’s score was the total number of inflected and derived items correct (max = 10 each). Cronbach alpha reliability in our sample ranged from .82 to .86.

SfV

The SfV task (Tunmer & Chapman, 2012) was administered at all measurement points to assess children’s ability to determine the correct pronunciation of irregular words that were “mispronounced” based on a regularized decoding (e.g., /brikfast/ for /brɛkfast/). The task does not involve written words; it is an oral pronunciation correction task. The tester would orally provide the regularized word and then ask children to tell them back the actual word. Before attempting the test items, children completed three practice items to ensure they understood the instructions. Feedback was provided only during the practice items. Children were asked to try all 18 words and a child’s score was the total number of words pronounced correctly (max = 18). Cronbach alpha reliability in our sample ranged from .84 to .86.

Word Reading Tasks

We administered three measures of word reading at all measurement points: the Word Reading task from WIAT-III (Wechsler, 2009), the Castles and Coltheart-3 (CC3) reading task (Castles, 2022) that has three conditions (regular words, irregular words, and nonwords), and an experimenter-designed word reading task with words trained during the intervention.

Word Reading

For the Word Reading subtest (Wechsler, 2009), children were asked to read words from a list of 75 words arranged in increasing difficulty. The task was discontinued after five consecutive errors and a participant’s score was the total number correct. The raw score was converted into a standard score following the instructions in the manual. Cronbach alpha reliability in our sample ranged from .93 to .96.

Castles and Coltheart 3 (CC3)

The Castles and Coltheart 3 (Castles, 2022) reading task was used to assess children’s ability to read regular words, irregular words, and nonwords. Each of the three sets contained four cards with 10 words on each card, and the cards in each set increased in difficulty. Children began with Regular Word Card 1 and were asked to read all 10 words on the card. If children read six or more words correctly, they were asked to attempt Regular Word Card 2, then Card 3 and then Card 4, respectively. The procedure continued until five or more errors on a regular word card were made. Children would then move on to attempting the Irregular Words set followed by the Nonwords set with the same discontinuation rule applied. A participant’s score was the total number of words read correctly in each set (max = 40). Cronbach alpha reliability in our sample ranged from .92 to .95.
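The card-by-card discontinuation rule for one CC3 set can be expressed as a short scoring sketch. The function name and the data layout (one list of correct/incorrect responses per card) are hypothetical, and the sketch assumes that words read correctly on the last attempted card still count toward the set score, which the task description implies but does not state explicitly.

```python
def score_cc3_set(cards):
    """Score one CC3 set (regular, irregular, or nonword).

    cards: up to four lists of 10 booleans (True = word read correctly),
    ordered from Card 1 to Card 4. Cards after the discontinuation point
    are ignored even if responses are supplied for them.
    """
    total = 0
    for responses in cards:
        correct = sum(responses)
        total += correct                      # assumption: final card still counts
        errors = len(responses) - correct
        if errors >= 5:                       # five or more errors: stop this set
            break
    return total

example = [
    [True] * 10,                  # Card 1: 10 correct, continue
    [True] * 7 + [False] * 3,     # Card 2: 7 correct, continue
    [True] * 4 + [False] * 6,     # Card 3: 6 errors, discontinue
    [True] * 10,                  # Card 4: never administered
]
print(score_cc3_set(example))     # 21
```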

Experimenter-Designed Word Reading

The experimenter-designed word reading tasks were used to assess students’ ability to read regular and irregular words that were taught in the intervention, and nonwords that were derived from real words taught in the intervention. Each task contained 12 words and children were asked to attempt all the words in the task. A participant’s score was the total number of words read correctly in each task (max = 12). Cronbach alpha reliability in our sample ranged from .88 to .92.

Procedure

Children were individually assessed in a quiet room at their school by trained RAs. The training of the RAs was conducted by the first author, lasted about 3 hours, and included a mock administration of the tasks and scoring. Two senior RAs also cross-checked all scoring of the data, the data entry, and the calculation of standard/scaled scores. Inter-rater reliability for scoring was .99. Ethics permission for this study was obtained from the research ethics board of the University of Alberta (Pro00111077).

Interventionists

The interventions were carried out by 60 classroom teachers and education assistants (EAs) assigned to the study. The interventionists held a range of degrees in education (B.Ed., M.Ed., and Ed.D.), with many having additional training in teaching reading and experience with delivering reading interventions. The interventionists in Condition 1 (Phonics + SfV) were trained by the second author while the first author trained the Condition 2 (Phonics + Morphology) group. A group training session was held for each condition in different locations and lasted approximately 2 hours. In these sessions, each trainer gave an overview of the intervention goals, reviewed the scope and sequence of the lessons, and demonstrated one lesson plan in detail, acting out different scenarios that could arise. Interventionists had the opportunity to review their intervention materials with other interventionists in their group and ask questions to clarify their understanding of the content. During the intervention, the interventionists were encouraged to contact the first or second author to ask any questions.

Delivery of Interventions

The small group interventions began in the Fall term immediately after the pre-test. Interventions were run with groups of two to four children outside of the classroom in a dedicated quiet space for 30-minute sessions, 4 times a week (3 lessons + 1 review lesson) over 15 weeks. Children received an average of 26 hours of intervention (some students had missed some intervention lessons and this is why the average number of hours is less than 30).

Intervention Lessons

Each intervention condition covered 45 GPCs and 49 irregular words that frequently occur in children’s books. The irregular words used in the intervention lessons were selected from Fry’s 300-word list (Fry, 1980). Each intervention condition also included 15 review lessons that gave children the opportunity to practice their learning at the end of each week with game-like activities.

In each 30-minute lesson, children were given instruction in phonological awareness and phonics focusing intensively on the explicit teaching of GPCs through the direct mapping of text that progresses from simple to more complex letter patterns. Children were taught alternative strategies for decoding irregular words in the two conditions (see below) and engaged with real books through shared book reading instruction (e.g., Savage et al., 2018).

Phonics + SfV

In Condition 1, children were taught how to use MPC, an SfV strategy, to decode irregular words (see Appendix, for lesson details). During instruction, children were given an irregular word to work through using a series of reflections to determine if the word made sense and, if not, they were guided to recognize an “irregular grapheme-phoneme correspondence” that might need to be flipped or replaced with another GPC. If the children did not know another GPC that fit, or if the word pronounced did not make sense, they were prompted to think about the pronunciation of the irregular word in the context of a sentence.

Phonics + Morphology

In Condition 2, children were taught morphology to decode irregular words (see Appendix, for lesson details) using an adaptation of SWI by P. N. Bowers (2009). The SWI instructional framework was used to guide the children through investigating the spelling–meaning–pronunciation connection in different irregular words. Children were asked to define the meaning of the irregular word (correct use in an oral sentence), determine how the word was built (identify any bases and/or affixes), think of other words that may be morphological relatives (share a common base), and discuss any shifts in the pronunciation of words when there was a suffixing change.

Treatment Integrity

To assess Treatment Integrity (TI), interventionists in each condition were observed at least twice by trained observers. The observers included the first and second authors and literacy consultants who received 1 hour of training on the role and structure of TI in the interventions and also viewed a 30-minute video recording that demonstrated the “proficient” delivery of a sample lesson. An experimenter-designed TI rubric (adapted from Savage et al., 2018) was used to evaluate five main areas of lesson delivery: (a) Content Completion, (b) Order of Content Delivery, (c) Time Management, (d) Quality of Instruction, and (e) Student Behavior. Under the Quality of Instruction category, interventionists were observed and scored on understanding of lesson content, communication of the content, and skill in addressing individual student needs. Each component was scored on a 3-point scale (0 = “insufficient,” 1 = “limited,” 2 = “proficient”). Seven interventionists who obtained a score of 1 or 0 were given additional training (approximately 3 hours long) and guidance by the first author or literacy consultant assigned to that condition and were observed again.

Two intervention sessions were independently observed to calculate inter-rater reliability. Analyses of all these scores showed 98% agreement in Phonics + SfV and 97% in Phonics + Morphology interventions. Mean scores for each observer were calculated for all observed sessions separately for each of the five TI components. Mean rankings were uniformly high (e.g., 1.73 to 1.89 on a maximum possible score of 2). Mann–Whitney U tests for each TI component by condition (Phonics + SfV vs. Phonics + Morphology) adjusting for multiple contrasts were nonsignificant (all ps > .10). Mean fidelity ratings for the interventionists were identical across each condition (M = 1.81), confirming that both interventions were implemented equally well.
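Percentage agreement between two observers is simple to compute. The sketch below is illustrative only: the function name and the example ratings are hypothetical, and it treats agreement as exact matches on the 0 to 2 rubric scale, which is one plausible reading of the agreement figures reported above.

```python
def percent_agreement(rater_a, rater_b):
    """Percentage of rubric items on which two observers gave the same score."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    matches = sum(1 for a, b in zip(rater_a, rater_b) if a == b)
    return 100.0 * matches / len(rater_a)

# Hypothetical ratings on five rubric components (0, 1, or 2).
observer_1 = [2, 2, 1, 2, 2]
observer_2 = [2, 2, 1, 2, 1]
agreement = percent_agreement(observer_1, observer_2)
print(agreement)   # 80.0
```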

Data Analysis

Data analysis involved three steps. First, we conducted a preliminary analysis on all data to check for any deviations from normality (i.e., performance ±3 SDs from the group’s mean) and for any group differences on extraneous variables (e.g., general cognitive ability, age and gender distribution). Second, we performed hierarchical linear modeling (HLM) to determine if there was a significant main effect of the intervention condition on the outcome measures at posttest and delayed posttest. Hierarchical linear modeling is recommended for dealing with nested data because it can distinguish individual and group effects on the multiple continuous and discrete outcome variables and control for Type-1 error (Raudenbush & Bryk, 2002). Finally, we calculated effect sizes (Hedges’ g).
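For readers unfamiliar with Hedges’ g, a minimal sketch of the common independent-groups form is given below. The text does not state which variant was used (e.g., for the pre- to post-test contrasts), so the formula, the function name, and the example values are assumptions for illustration rather than a reproduction of the reported analysis.

```python
import math

def hedges_g(m1, sd1, n1, m2, sd2, n2):
    """Standardized mean difference with the small-sample correction."""
    df = n1 + n2 - 2
    pooled_sd = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    d = (m1 - m2) / pooled_sd            # Cohen's d
    correction = 1 - 3 / (4 * df - 1)    # approximate bias correction (J)
    return correction * d

# Hypothetical values: means 10 vs. 8, both SDs 2, 10 children per group.
g = hedges_g(10, 2, 10, 8, 2, 10)
print(round(g, 3))   # 0.958
```

The correction factor shrinks d slightly and matters mainly in small samples; with group sizes above 100 it is very close to 1.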

Results

Preliminary Data Analyses

There was only a modest loss of data in the main attainment database: one child was lost prior to randomization, one child was removed by their school after randomization but before testing, and four children were assigned to conditions, but no data were collected from them at any point. There were 11 children with missing data at one or other post-test (6 at post-test and 11 at delayed post-test). The total missing data made up less than 2% (missing = 1.48%) of the total test data with no variables missing more than 2.5% data. Missingness was completely at random: Little’s Missing Completely at Random (MCAR) test, χ² = 119.17, df = 8, ns (exact sig = 0.013 assessed against conservative significance of p < .001), so multi-level modeling could safely be run on data as is without introducing confounds.

Comparability of the Two Intervention Conditions at Pre-test

To ensure that the process of randomization to treatment condition had been successful in eliminating pretest imbalance in main outcomes and to control for extraneous variables (e.g., socioeconomic status indexed by parents’ education and occupation), comparisons were first undertaken on relevant pre-test measures. Inspection of Table 1 shows that the two intervention conditions in our sample were not significantly different on pre-test reading and on a range of extraneous variables (i.e., chronological age, gender, parents’ education, and occupation). Formal statistical testing assumed a conservative alpha (p < .01) to control for multiple tests.

Hierarchical Growth Curve Model Building

The hierarchical or multi-level (MLM) models require stepwise construction and verification prior to running. In each case, the nesting (clustering) of scores violates the assumption of statistical independence of each data point in standard ordinary least squares (OLS) models. Preliminary “unconditional models” of raw score attainment data using classroom-level indices of clustering were thus first run to assess nestedness. These analyses showed that there was a high level of shared classroom-level variance (i.e., substantial nestedness of scores in classrooms), such that multi-level modeling was appropriate (Hayes, 2006; Hox, 2010). The percentages of shared classroom-level variance were: 54.61% in WIAT-III Word Reading; 11.99% in WIAT-III Vocabulary; 12.50% in MA inflected analogies; 26.55% in MA derivational analogies; 41.42% in Intervention regular words; 41.64% in Intervention irregular words; 18.71% in Intervention nonwords; 44.88% in SfV; 36.14% in CC3 regular words; 41.11% in CC3 irregular words; and 29.94% in CC3 nonwords. Similar results were obtained using INTERVENTIONISTID (the unit of interventionist as opposed to regular classroom) as a second measure of nested variance instead of classroom. It is worth noting here that similar levels of shared classroom variance were reported by Savage et al. (2018), who used an analogous sample (i.e., intraclass correlation coefficients ranged between .09 and .45). These levels may reflect the fact that our sample consisted of struggling readers and that there is often variation in the use of evidence-based curricula for their specific needs in Canadian schools.
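The shared classroom-level variance reported here is, in effect, an intraclass correlation expressed as a percentage. The sketch below shows the idea with the classical one-way ANOVA estimator for equally sized clusters. This is a simplified stand-in, not the unconditional multi-level models used in the study (which handle unequal class sizes), and the function name and example scores are hypothetical.

```python
def icc_oneway(clusters):
    """ANOVA estimate of the intraclass correlation for balanced clusters.

    clusters: list of lists of scores, one inner list per classroom,
    all of the same length k >= 2.
    """
    k = len(clusters[0])
    if len(clusters) < 2 or k < 2 or any(len(c) != k for c in clusters):
        raise ValueError("need at least two clusters of equal size >= 2")
    n_clusters = len(clusters)
    grand_mean = sum(sum(c) for c in clusters) / (n_clusters * k)
    means = [sum(c) / k for c in clusters]
    # Between-cluster and within-cluster mean squares.
    msb = k * sum((m - grand_mean) ** 2 for m in means) / (n_clusters - 1)
    msw = sum((x - m) ** 2 for c, m in zip(clusters, means) for x in c)
    msw /= n_clusters * (k - 1)
    return (msb - msw) / (msb + (k - 1) * msw)

# Hypothetical scores for three classrooms of two children each.
scores = [[1, 2], [3, 4], [5, 6]]
icc = icc_oneway(scores)
print(round(100 * icc, 2))   # percentage of variance shared within classrooms
```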

To assess repeated measures of time of assessment (pre-test, post-test, and delayed post-test), we created a “stacked” longitudinal data set using the procedures and syntax described by Peugh and Enders (2005). Such repeated measures models (a form of longitudinal growth curve model) accurately code the statistical variance associated with repeated assessment of each child’s attainment over time in designs with unequal treatment sample sizes and assessment intervals, while also controlling for known data nestedness (Shek & Ma, 2011). In their purest form, repeated measures models assume equal temporal distances between each child’s assessments at the formal pre-test, post-test, and delayed post-test phases of data collection. As this very high degree of temporal precision is unlikely to be achievable in very large multivariate intervention designs such as ours, we formally assessed this assumption of temporal structure. Following procedures described by Singer and Willett (2003), we first evaluated two candidate structure-constrained models, one Wave-defined (pre-test vs. post-test vs. delayed post-test) and one Time-defined (precise elapsed time from pre-test), against unstructured (purely chronological age-defined) longitudinal growth models. Inspection of the resultant variance models and model-fit statistics (Deviance −2 log-likelihood [2LL], Akaike’s Information Criterion [AIC], Hurvich and Tsai’s Criterion [AICC], and Schwarz’s Bayesian Criterion [BIC]) all showed better model fit with Time than with Wave or Chronological Age modeling of temporal data features. We thus used a structured (Time-based) model for all analyses and centered all Level 1 attainment scores at Times 1 to 3 (pre-test, post-test, and delayed post-test) to the corresponding mean of the pre-test scores.
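To illustrate what a “stacked” data set and pre-test-mean centering look like in practice, the sketch below reshapes one-row-per-child records into one-row-per-assessment records. It is an illustration only, not the Peugh and Enders (2005) syntax: the column names (`s1`–`s3` for scores, `t1`–`t3` for elapsed time) and the two example children are hypothetical.

```python
from statistics import mean


def stack_long(wide_rows, score_cols, time_cols):
    """Reshape one row per child into one row per child per assessment."""
    long_rows = []
    for row in wide_rows:
        for wave, (score_col, time_col) in enumerate(zip(score_cols, time_cols), start=1):
            long_rows.append({
                "child_id": row["child_id"],
                "classroom": row["classroom"],
                "wave": wave,             # 1 = pre-test, 2 = post-test, 3 = delayed post-test
                "time": row[time_col],    # elapsed time since pre-test
                "score": row[score_col],
            })
    return long_rows


def center_on_pretest_mean(long_rows):
    """Subtract the sample mean of the pre-test scores from every score."""
    pre_mean = mean(r["score"] for r in long_rows if r["wave"] == 1)
    return [{**r, "score_c": r["score"] - pre_mean} for r in long_rows]


# Hypothetical records for two children (not study data).
wide = [
    {"child_id": 1, "classroom": "A", "s1": 10, "s2": 18, "s3": 20, "t1": 0.0, "t2": 4.1, "t3": 9.8},
    {"child_id": 2, "classroom": "A", "s1": 14, "s2": 19, "s3": 23, "t1": 0.0, "t2": 3.9, "t3": 10.2},
]
long_rows = center_on_pretest_mean(
    stack_long(wide, ["s1", "s2", "s3"], ["t1", "t2", "t3"])
)
for r in long_rows:
    print(r)
```

Keeping both `wave` and `time` in the stacked file makes it possible to fit Wave-defined and Time-defined models to the same data and compare their fit.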

We then assessed the adequacy of linear and nonlinear (quadratic) growth using the procedures and syntax described by Shek and Ma (2011). This revealed that a positive linear model provided the best fit for inflectional and derivational morphology and CC3 irregular words, with nonsignificant quadratic terms evident when subsequently entered after the linear term. A negative quadratic model (positive growth but diminishing in trajectory over post- to delayed-post-tests) best fitted WIAT-III Word Reading, CC3 regular word and CC3 nonword reading, experimental regular, irregular and nonword reading, and SfV over Time. Following Shek and Ma (2011), the respective best fitting models for Time for each variable were retained and used for the main analyses.
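Comparisons between candidate growth models of this kind typically rest on information criteria derived from the deviance. The sketch below uses the textbook definitions of AIC, AICC, and BIC. The deviance values, parameter counts, and sample size are hypothetical, and statistical packages may define the sample size used in AICC and BIC differently (for example, under restricted maximum likelihood), so the numbers need not match any particular software output.

```python
import math


def fit_criteria(deviance, n_params, n_obs):
    """Textbook information criteria from a model's deviance (-2 log-likelihood)."""
    aic = deviance + 2 * n_params
    # Small-sample correction of AIC (Hurvich and Tsai).
    aicc = aic + (2 * n_params * (n_params + 1)) / (n_obs - n_params - 1)
    bic = deviance + n_params * math.log(n_obs)
    return {"-2LL": deviance, "AIC": aic, "AICC": aicc, "BIC": bic}


# Hypothetical fits: the quadratic model adds one parameter for a small deviance gain.
linear = fit_criteria(deviance=1000.0, n_params=5, n_obs=100)
quadratic = fit_criteria(deviance=998.5, n_params=6, n_obs=100)

# Smaller values indicate better fit after penalizing model complexity.
better = "linear" if linear["BIC"] < quadratic["BIC"] else "quadratic"
print(linear, quadratic, better, sep="\n")
```

In this illustration the extra quadratic parameter does not reduce the deviance enough to offset its penalty, so the linear model is preferred on BIC.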

Results of Multilevel Growth Curve Analyses

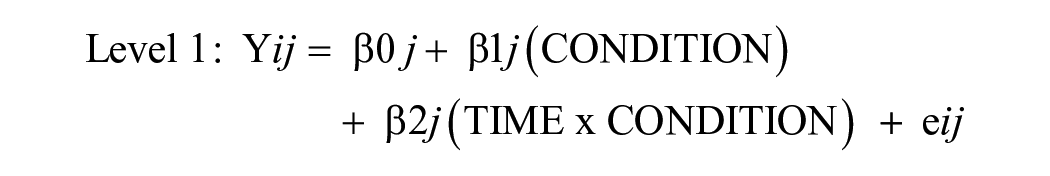

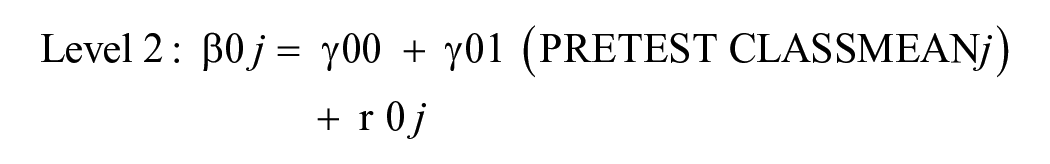

We undertook longitudinal MLM using the raw attainment test scores. We modeled fixed effects of intervention Condition (Phonics + SfV vs. Phonics + Morphology) over time, with repeated measures on Time (at pre-test, post-test, and delayed post-test), as well as the Condition × Time interaction effect. Corresponding pre-test nested attainment (e.g., grand-mean centered classroom-average WIAT-III Word Reading measures for WIAT-III Word Reading Wave outcome measures) was entered to assess and control for data nestedness in all analyses. We subsequently entered intervention dosage (the number of lessons each child received) as a Level 1 (student-level) variable and examined its effect in subsequent models. Our base statistical model is formally described by the following pair of statistical equations:

Equations at Level 1 (individual student) and Level 2 (nested classrooms) describe this analytic model at the student and classroom levels for student i in classroom j, respectively. A quadratic term (TIME²) replaced TIME only when indicated by preliminary analyses described above. Results showed that the nested pre-test control variable for each analysis was always a significant predictor of attainment. MLM run as here within IBM analysis frameworks zero-codes the second intervention (here morphology) as a baseline and then zero-references the first intervention (here, SfV) to assess the potential differential effects of the two conditions.
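For orientation, a generic two-level growth model consistent with this verbal description might take the following form. This is a sketch under assumptions and should not be read as the authors' exact specification: the symbol names, the placement of Condition at Level 1, and the use of a random intercept only are all illustrative choices.

$$
\begin{aligned}
\text{Level 1:}\quad Y_{ij} &= \beta_{0j} + \beta_{1}\,\mathrm{TIME}_{ij} + \beta_{2}\,\mathrm{CONDITION}_{ij} + \beta_{3}\,(\mathrm{CONDITION}_{ij} \times \mathrm{TIME}_{ij}) + r_{ij} \\
\text{Level 2:}\quad \beta_{0j} &= \gamma_{00} + \gamma_{01}\,\overline{\mathrm{PRETEST}}_{j} + u_{0j}
\end{aligned}
$$

Here $Y_{ij}$ is the attainment score of student $i$ in classroom $j$, $\overline{\mathrm{PRETEST}}_{j}$ is the grand-mean centered classroom-average pre-test score, $r_{ij}$ is the student-level residual, and $u_{0j}$ is the classroom-level random intercept. Where a quadratic trend is indicated, $\mathrm{TIME}_{ij}$ would be replaced by $\mathrm{TIME}_{ij}^{2}$, and a dosage term would be added at Level 1 in the subsequent models.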

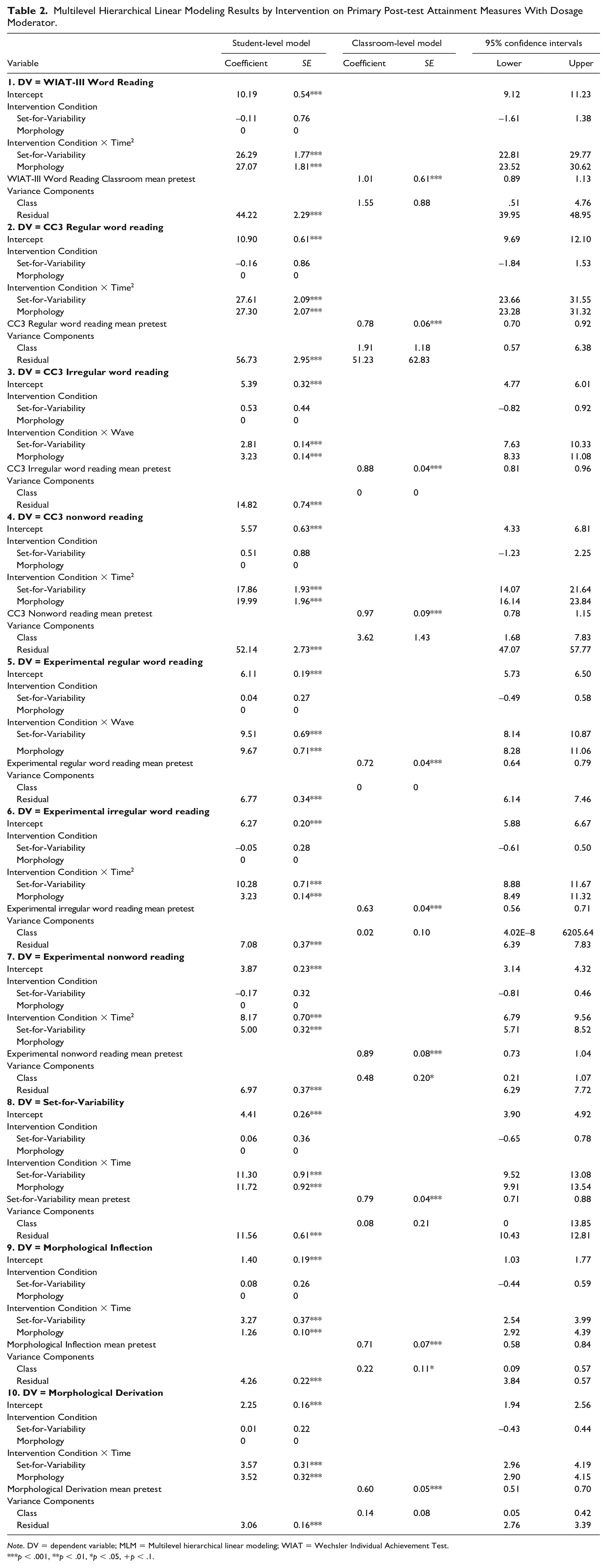

Results of MLM depicted in Table 2 also showed that there was no main effect of intervention condition on any outcome measures. There was, however, a significant interaction effect of Time × Intervention Condition for both intervention conditions for all 10 outcome measures reported, showing that there was a significant change in attainment between pre- and post-tests in both interventions. The upper and lower bounds of the 95% confidence intervals around these estimates of change over time were both well above zero for both interventions in all analyses, so we can have high confidence that the positive findings reported here for intervention were not due to chance.

Multilevel Hierarchical Linear Modeling Results by Intervention on Primary Post-test Attainment Measures With Dosage Moderator.

Note. DV = dependent variable; MLM = Multilevel hierarchical linear modeling; WIAT = Wechsler Individual Achievement Test.

***p < .001, **p < .01, *p < .05, +p < .1.

Subsequent analyses adding variables further improved all indices of overall model fit in all analyses reported (2LL, AIC, AICC, and BIC). Results shown in Table 2 indicate that dosage was a near-significant predictor of growth in WIAT-III Word Reading across both interventions (p = .054) and a significant predictor of intervention regular word reading (p = .023). Dosage also predicted the growth of SfV in the SfV-taught condition only (p = .04, here with both bounds of the 95% CI above zero). Dosage was a nonsignificant predictor of all other effects in all other outcome measures. There was no significant difference between Intervention Conditions overall on the number of lessons received (F = 2.35, p = .13). Finally, we reran all analyses nested by Interventionist Group rather than by Classroom and the reported effects remained the same.

Discussion

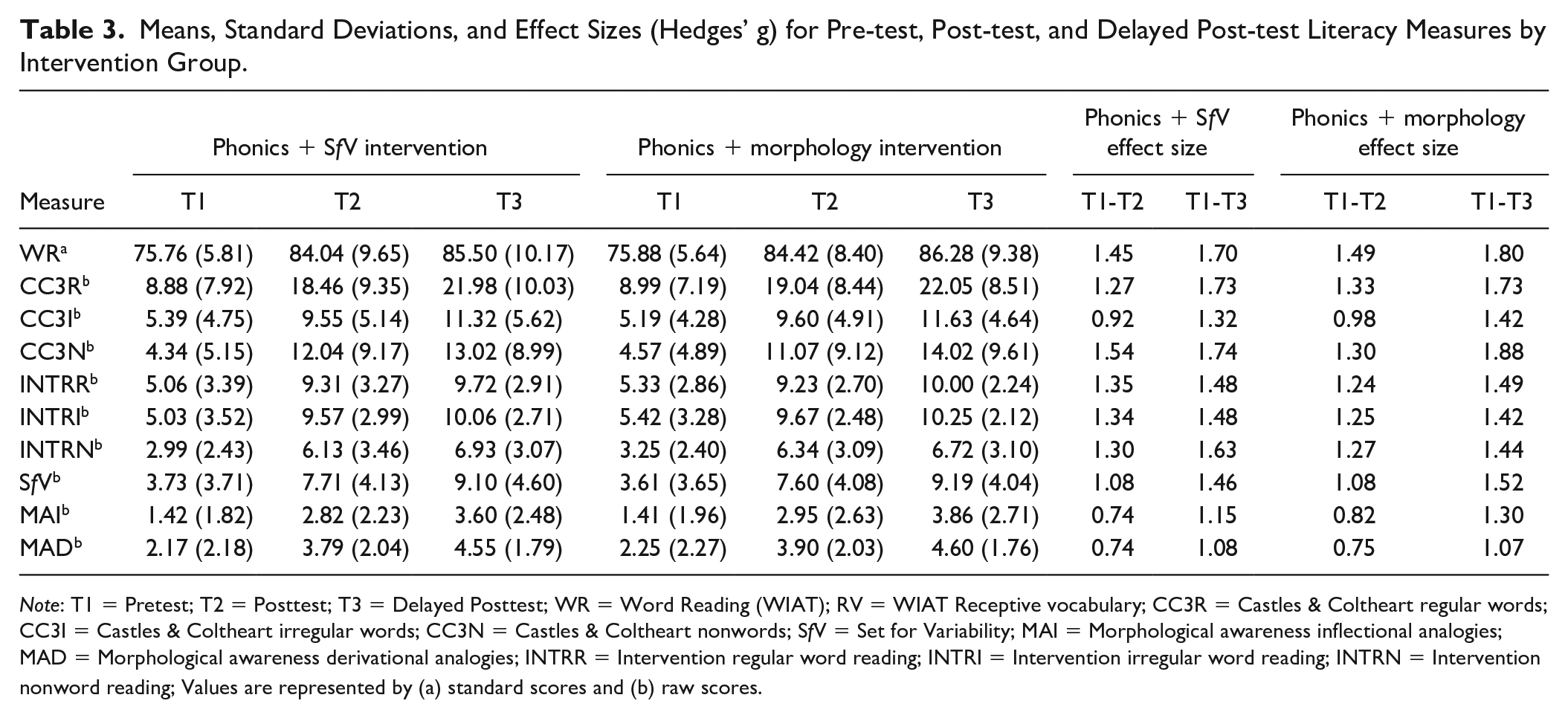

The purpose of this study was to examine whether Phonics + SfV intervention would produce better reading results (particularly in irregular word reading) than Phonics + Morphology intervention for a group of Grades 2 and 3 children with reading difficulties. Our results showed first a significant improvement on all measures in both reading interventions from pre-test to post-test and delayed post-test and the effect sizes were large (see Table 3). Similar to Savage et al. (2018), we found strong positive effects of the Tier 2 intervention in this group of struggling readers.

Means, Standard Deviations, and Effect Sizes (Hedges’ g) for Pre-test, Post-test, and Delayed Post-test Literacy Measures by Intervention Group.

Note: T1 = Pretest; T2 = Posttest; T3 = Delayed Posttest; WR = Word Reading (WIAT); RV = WIAT Receptive vocabulary; CC3R = Castles & Coltheart regular words; CC3I = Castles & Coltheart irregular words; CC3N = Castles & Coltheart nonwords; SfV = Set for Variability; MAI = Morphological awareness inflectional analogies; MAD = Morphological awareness derivational analogies; INTRR = Intervention regular word reading; INTRI = Intervention irregular word reading; INTRN = Intervention nonword reading; Values are represented by (a) standard scores and (b) raw scores.

The large effects of both intervention programs in our study may be due to the fact that both intervention conditions combined explicit instruction in the foundational skills of word recognition (i.e., phonological awareness and phonics) as opposed to interventions targeting either phonological awareness or phonics. Our choice of including instruction in phonological awareness was intentional because previous studies have shown that children with better phonological awareness are better prepared to receive phonics instruction (Savage et al., 2020). The average standard scores in WIAT-III Word Reading following the intervention show that the scores did not reach average for the whole sample (i.e., the average standard score at post-test was 84.04 and 84.42 for the SfV and morphology condition, respectively), but suggest strong improvements had been made over the time period covered. Notably, these positive effects were sustained at delayed post-test, and if anything, there was evidence of modest growth (not “fade”) in many effects from post-test to delayed post-test.

Growth curve comparisons revealed that a positive linear model provided the best fit for inflectional and derivational morphology and for CC3 irregular word reading. This suggests that growth after the intervention was equivalent to growth between pre- and post-test. A negative quadratic model best fitted WIAT-III Word Reading, CC3 regular and nonword reading, experimental regular, irregular, and nonword reading, and SfV over time. This means that although significant growth was still made between post- and delayed post-test, the size of this growth was less than between pre- and post-test. This latter growth pattern might suggest the need for either more sustained initial small group interventions or for subsequent ongoing intervention to maintain progress over the sample as a whole, especially in domains related to decoding. The evidence of dosage effects related to regular word reading also supports this interpretation. The linear growth evidenced in morphology and irregular word reading is encouraging, and suggestive of much independent (post-intervention) learning, presumably via some form of generalization of learning or post-intervention self-teaching in reading.

In contrast to our first hypothesis, SfV and morphology produced similar results on irregular word reading. There might be two explanations for this finding. First, it may be due to the fact that both required some level of access to semantics (SfV by asking children to think if this is a word they know and by putting the word in a sentence, and Morphology by asking children to identify the base, create word sums, and find relatives of that word), which then led to similar results. Unfortunately, no previous studies have directly compared the effects of teaching irregular words through SfV or Morphology, and therefore we cannot compare our findings to those. However, Dyson et al. (2017) did use the MPC strategy (i.e., SfV) in their intervention and also included CC2 irregular word reading as an outcome measure (CC3 uses the same irregular words as CC2). We calculated the effect size (Hedges’ g) in Dyson et al.’s study from pre- to post-test (they did not have a delayed post-test) and it was g = 0.51 for CC2 and g = 0.92 for mispronunciation correction untaught words. Both are substantially lower than the effect sizes we obtained in our SfV intervention condition. A possible reason for this difference is that Dyson et al.’s intervention was conducted in small groups of up to eight children (the meta-analysis by M. S. Hall & Burns, 2018, showed that groups larger than five produce smaller effects) in two 20-minute sessions per week for 4 weeks (our intervention was four times longer). Second, it is possible that the time allotted to teaching SfV or Morphology was not long enough to produce meaningful differences in irregular word reading.
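For readers who wish to reproduce effect sizes of this kind from reported means and standard deviations, the sketch below computes Hedges’ g using the common pooled-standard-deviation formula with the small-sample correction. It assumes this general formula; the exact variant applied to pre- to post-test change in the present study is not specified here, and the input values are hypothetical.

```python
import math


def hedges_g(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Standardized mean difference (b minus a) with small-sample correction."""
    df = n_a + n_b - 2
    pooled_sd = math.sqrt(((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / df)
    d = (mean_b - mean_a) / pooled_sd   # Cohen's d
    correction = 1 - 3 / (4 * df - 1)   # approximate Hedges correction factor J
    return d * correction


# Hypothetical pre-test (a) and post-test (b) summary statistics, not study data.
g = hedges_g(mean_a=10.0, sd_a=2.0, n_a=20, mean_b=12.0, sd_b=2.0, n_b=20)
print(f"Hedges' g = {g:.2f}")
```

The correction factor shrinks Cohen’s d slightly, and the shrinkage becomes negligible as sample size grows.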

We also found no differential effects of intervention on SfV and morphology outcome measures (in contrast to our second hypothesis), while nested analyses and inspection of effect sizes here show that both measures showed robust improvement at both post-tests. It may be that greater instructional time is needed to produce differential effects on morphology and SfV outcomes. Such work is already afoot in our team. It may however also be that consideration of the overall effect sizes alone does not illuminate possible distinct cause and effect relationships that may exist here. Such patterns might usefully be further explored using other research designs in future work.

Finally, the number of lessons received by each child (i.e., dosage) was a near-significant predictor of growth in WIAT-III Word Reading across both interventions, and a significant predictor of intervention regular word reading and of growth of SfV in the SfV condition only, but was a nonsignificant predictor in all other outcome measures. There was no evidence of differential mediation of effects across the two intervention conditions by the number of intervention sessions received in any analysis. The average number of lessons received per child was 52.06 (SD = 5.46) out of a maximum of 60, so most schools delivered a high “dosage” of intervention, potentially reducing the effects of dosage. These findings are in line with previous RCT studies implemented with students with reading difficulties (e.g., Gunn et al., 2005; Lovett et al., 2017) that have found medium to large effect sizes on word reading when dosage is greater than 50 lessons.

Implications for Practice

We delivered intervention to a large group of struggling readers across multiple schools via certified staff trained by university partners. Such training and delivery via university-school board partnership shows promise in improving the reading ability of Grades 2 and 3 children with reading difficulties. Although we did not have a “business-as-usual” group in our study, we make this argument on the basis of using a norm-referenced assessment (WIAT-III Word Reading) in our study. Results on the norm-referenced WIAT-III Word Reading task further suggest that it is important to deliver at least 50 intervention lessons to achieve the full effect in either intervention, so schools should be encouraged to do this in the future. In studies with students who had moderate to severe word-level reading difficulties, C. Hall et al. (2023) found that higher dosage interventions, on average, yielded slightly larger effect sizes than lower dosage interventions. Finally, a very small minority of interventionists in our study delivered lower quality teaching interventions. These interventionists might usefully be the focus of additional PD support for maximal impact. The fact that the vast majority of the lessons were well implemented, however, suggests that the professional training model was appropriate for the study’s purpose.

Limitations

Some limitations of this study should be reported. First, the participating school divisions did not allow us to also include a “Business-As-Usual” (BAU) group that would not receive any intervention. Even though we did not have a BAU group, the fact that we included two intervention groups that were matched on several extraneous variables and that we also used some norm-referenced assessments (i.e., WIAT-III Word Reading) gives us some confidence that the observed effects of the intervention are “true” effects and not simply the result of maturation. Certainly, a future study should replicate our findings using a BAU group. Second, even though our reading tasks included multimorphemic words (see WIAT Word Reading), we did not specifically test children in multimorphemic word reading, and this may not have given the children in the Phonics + Morphology condition the opportunity to fully display their knowledge in reading these words. Third, children in the SfV condition would not be able to give us the correct pronunciation of a word if they did not already have that word in their oral vocabulary. Unfortunately, we did not check how many of the words they practiced in the intervention they already knew. Fourth, 13% of our sample spoke a language other than English at home. This may have impacted performance in SfV, a task that is potentially related to knowledge and ability to use standard English pronunciation. Finally, some caution should be attached to the interpretation of quadratic growth curves measured over only three time points. Future work could usefully explore growth patterns over more time points.

Conclusion

Our findings add to the body of early literacy intervention studies (e.g., Savage & Cloutier, 2017; Wanzek et al., 2018) by showing that explicit, systematic, and intensive instruction can significantly improve the reading performance of struggling readers. The effect sizes were large, which suggests that when theory-driven interventions are delivered with fidelity and school divisions invest in training their teacher interventionists, there is a very good chance of reducing reading difficulties.

Acknowledgements

We would like to thank Greater St. Albert School Division, Fort Vermilion School Division, Lakeland School Division, and Black Gold School Division for their participation in the intervention study. Most importantly, we would like to thank each one of our interventionists and literacy consultants who devoted their time and efforts to support all the children in our project.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This study was funded with a grant from Alberta Education (RPP-2021-0010) to the second author.