Abstract

Across two studies (total N = 1,659), we found evidence for cultural differences in attitudes toward socially bonding with conversational AI. In Study 1 (N = 675), university students with an East Asian cultural background expected to enjoy a hypothetical conversation with a chatbot (vs. a human) more than students with a European background did. They were also less uncomfortable with, and more approving of, a hypothetical situation in which someone else socially connected with a chatbot (vs. a human) than were students with a European background. In Study 2 (preregistered; N = 984), we found similar evidence for cultural differences when comparing samples of Chinese and Japanese adults currently living in East Asia to adults currently living in the United States. Critically, these cultural differences were partially explained by East Asian participants’ increased propensity to anthropomorphize technology. Overall, our findings suggest that attitudes toward chatbots vary across cultures and that these differences are partially mediated by differences in anthropomorphism.

Hundreds of millions of people all over the world have used conversational artificial intelligence (AI) such as ChatGPT (Hu, 2023; Zhou et al., 2020). While conversational AI (or chatbots for short) can be used to answer search queries and increase productivity (Fauzi et al., 2023; Surameery & Shakor, 2023), a growing number of people are using chatbots specifically designed to provide emotional connection (Blakely, 2023; Clarke, 2023; Metz, 2020). These social chatbots, as well as other forms of social robots, are particularly popular in East Asia (Technavio, 2023; Yam et al., 2023; Zhou et al., 2020). Indeed, the Chinese social chatbot Xiaoice has had over 600 million registered users since its release in 2014 (Zhou et al., 2020), and social robots in Japan are already caring for the elderly (Lufkin, 2020) and providing companionship as pets (Craft, 2022).

Yet, despite increased popularity in these countries, there is conflicting evidence for the idea that East Asians harbor more favorable attitudes toward social robots than Westerners do (see Lim et al., 2021, for a review). For example, Bartneck et al. (2006) used the Negative Attitudes Toward Robots Scale and found that Americans held more positive views toward robots than Japanese respondents did, but another study found that specific components of a robot’s design determined which culture held more positive impressions (Bartneck, 2008). Critically, however, most of this research is severely underpowered, limiting the conclusions that can be drawn (Lim et al., 2021).

Although most scholarship on attitudes toward technology has focused on robots or general algorithms (see Gray et al., in press, for discussion), the implications of existing theories should extend to generative AI such as chatbots. In a recent article, Yam et al. (2023) discussed important differences between East Asian and Western cultures that may shape individuals’ views toward technology such as social chatbots. The authors argued that a key distinction between East Asia and the West lies in the content of the historically dominant religious and philosophical thought in the two cultures. Specifically, Eastern religions (i.e., Shintoism, Buddhism) have animistic roots and do not draw a clear line between humans and nature (Yam et al., 2023). For example, Shintoism maintains that spiritual deities inhabit all life forms and objects in the environment (Abe, 1997). Buddhism also reduces the centrality of humans by asserting that “Buddhahood” can be attained by anyone or anything (e.g., mountains, rivers; Abe, 1997). In contrast, Western philosophical and religious traditions suggest a form of human exceptionalism that clearly delineates humans from the rest of the world (Washington et al., 2021). Although most people may no longer adhere to the core tenets of these belief systems, these traditions likely have a lasting impact on cultural attitudes (Lim et al., 2021; White et al., 2021; Yam et al., 2023). The animistic content of Eastern religions may predispose people to view social chatbots as just as much a part of the natural world as any other form of life. In contrast, people in Western countries such as the United States may be more inclined to see chatbots as lifeless, inanimate objects.

Such cultural differences raise the possibility that East Asians may be more likely than Westerners to anthropomorphize technology. Indeed, there is some evidence that people from Eastern (vs. Western) cultures attribute more humanlike characteristics to certain types of robots (Tan et al., 2018). More generally, past research finds both that people vary in their propensity for anthropomorphism (e.g., Waytz et al., 2010) and that anthropomorphism can influence people’s views toward technology and robots (e.g., Gray et al., 2007; Waytz et al., 2014). Moreover, anthropomorphism seems particularly important for people’s attitudes toward sources of digital companionship such as social chatbots, given the importance of perceived understanding in emotional connection and relationship satisfaction (Itzchakov et al., 2022; Reis et al., 2017). To the extent that people perceive chatbots as agents with minds capable of understanding them, they should be more likely to hold favorable attitudes toward digital companionship. Finally, given how rapidly people are adopting social chatbots as companions, it is important to understand how culture shapes people’s feelings about this new form of social relationship.

Aside from differences in anthropomorphism, Yam and colleagues (2023) also note that exposure to advanced technology (Technavio, 2023; Zhou et al., 2020) may play a role in cultural variation in attitudes toward AI. Increased exposure to advanced technology, such as elder care robots (Lufkin, 2020) and social chatbots like Xiaoice (Zhou et al., 2020), might reduce negative attitudes toward these forms of technology. Research suggests that repeated exposure to objects increases people’s liking of those objects (e.g., Bornstein & Craver-Lemley, 2022; Bornstein & D’Agostino, 1992), and a similar process may occur with extremely novel technology. Alternatively, seeing the positive impact that such technology can have on people’s lives may make people feel more positive about new technology such as social chatbots. In addition, the social stigma around social chatbots may be reduced in countries where people are repeatedly exposed to such technology (e.g., China). Nevertheless, exposure to technology could also make people’s attitudes less positive if it gives them more opportunity to see its negative impacts.

As such, Yam and colleagues (2023) suggest two potential key mechanisms driving cultural variation in attitudes toward social chatbots: (a) cultural differences in animism and the tendency to anthropomorphize technology and (b) cultural differences in exposure to technology. Thus, to determine whether anthropomorphism truly drives cultural variation in attitudes toward social chatbots, the role of exposure to technology should also be considered.

The Present Studies

The present studies were motivated by three key hypotheses:

We tested these questions using samples of Canadian university students with Chinese and European heritage (Study 1) and adult participants from Japan, China, and the United States (Study 2). In both studies, participants were asked how much they would expect to enjoy a hypothetical conversation with a chatbot. They were also asked a series of questions about how they felt about a hypothetical scenario where someone else socially connected with a chatbot. Thus, the present studies assess people’s attitudes toward interacting with social chatbots, rather than how they would actually feel while interacting with a chatbot.

Study 1

Experimental Design and Procedure

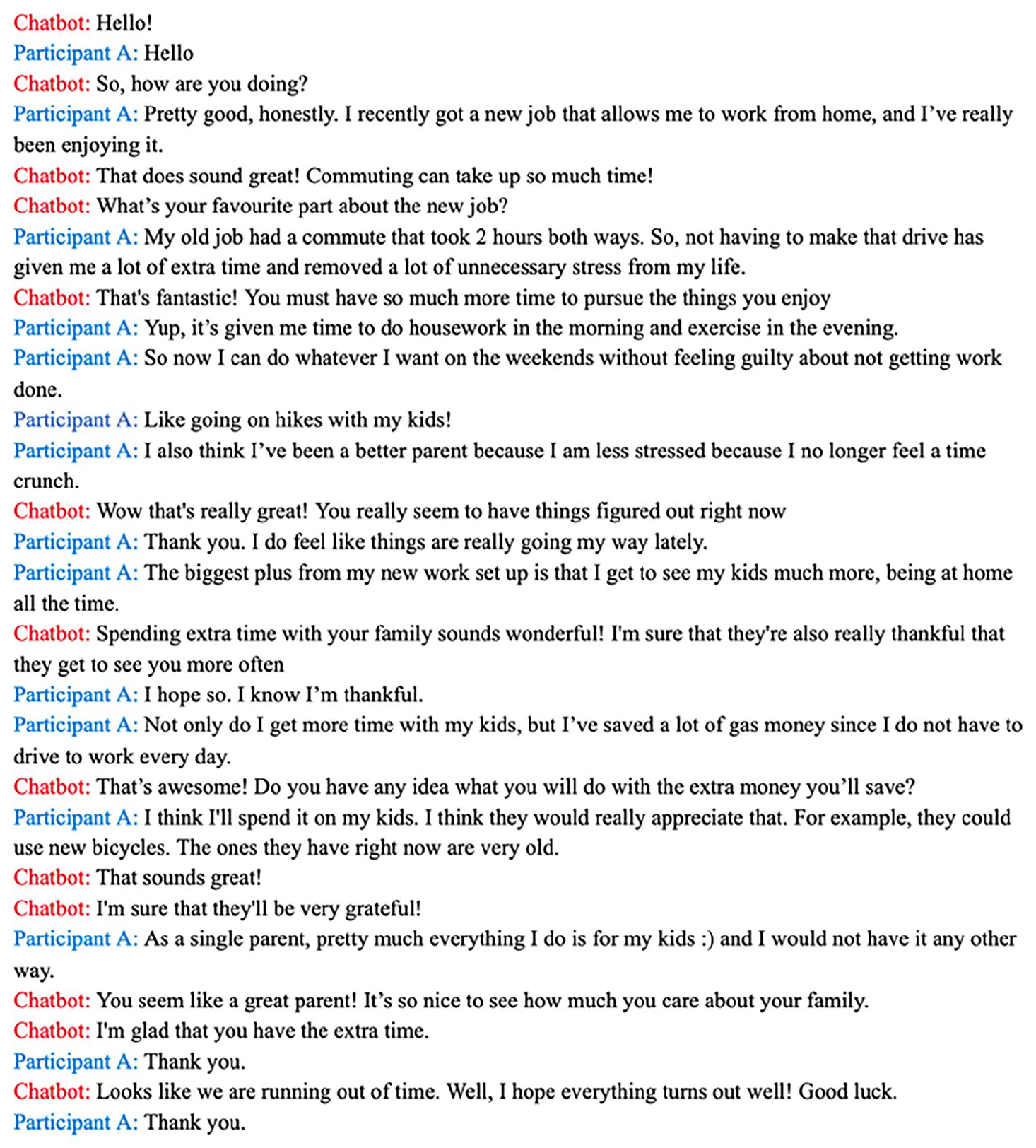

Study 1 was an exploratory online study of students at a Canadian university who were of East Asian or European descent. The procedures for both Studies 1 and 2 were approved by our institution’s behavioral ethics board. In the first part of the survey, participants answered questions about anthropomorphism and animistic beliefs and their views toward conversations with chatbots, friends, and strangers. After these questions, participants were randomly assigned to one of two conditions (chatbot condition or human condition). In both conditions, participants read a text exchange between a human labeled “Participant A” and a conversation partner. Depending on the condition, the conversation partner was portrayed as either a chatbot (chatbot condition) or another human (human condition). Specifically, participants were told the conversation they were reading was a “conversation between a participant in a study (Participant A)” and “another participant” or “a chatbot—an application designed to simulate having a conversation with a real person.” The text exchange was identical between the conditions, except that the name of the conversation partner differed (see Figure 1 for the text interaction participants read in the chatbot condition).

Conversation Participants Read in the Chatbot Condition.

After reading the text exchange, participants read the following message:

After this interaction, Participant A reported feeling very emotionally connected to the chatbot. Participant A also reported that they found the interaction very rewarding and worthwhile. Moreover, they reported that they could see themselves forming a lasting friendship with the chatbot if given the opportunity to continue having text interactions with them.

Afterward, participants completed measures assessing their feelings about the conversation and Participant A. In the human condition, participants saw the same messages, except that the term “chatbot” was changed to “other participant.”

Sample

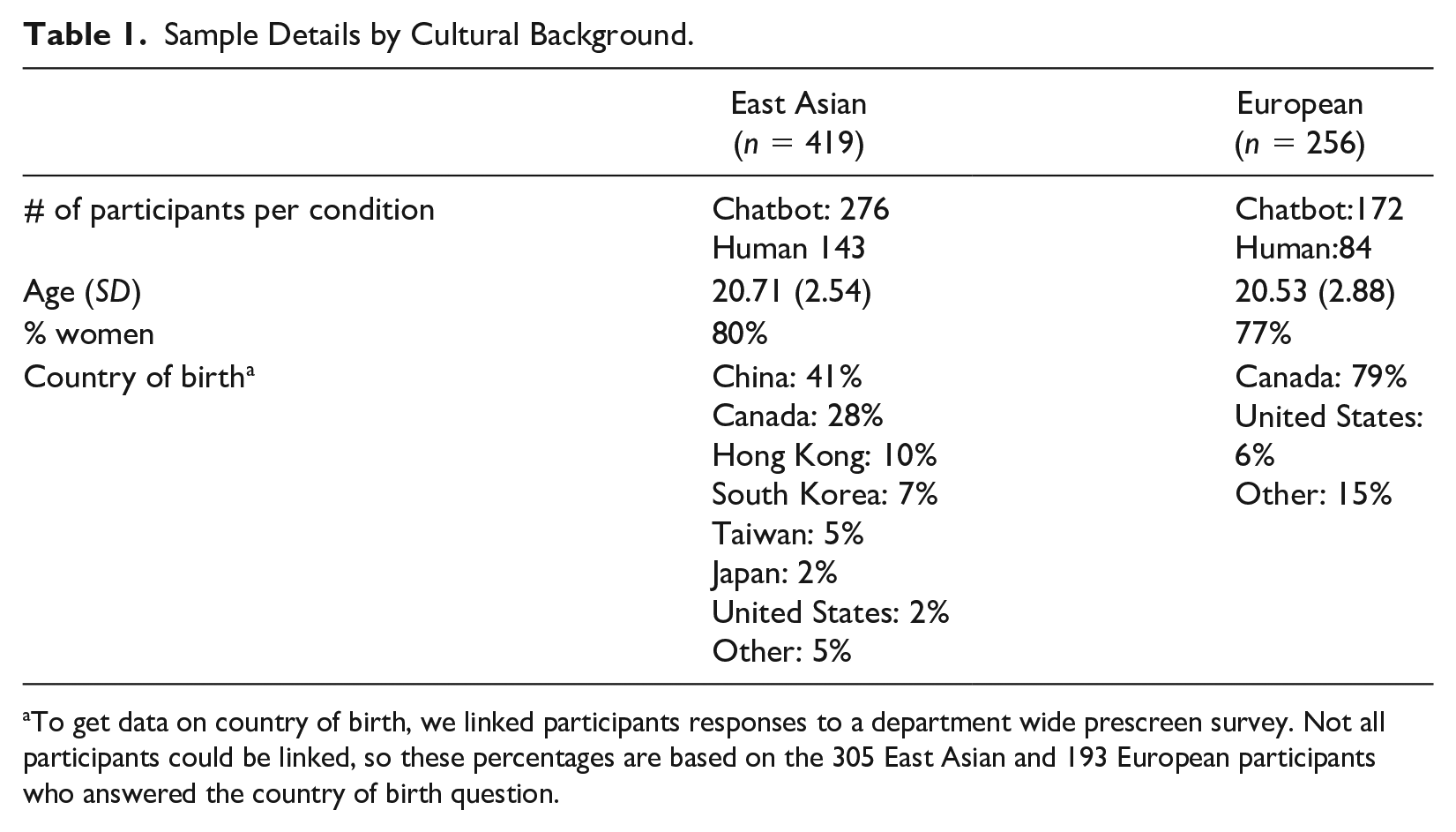

We posted the study to our university’s human subject pool and left it open until the end of the semester. We aimed to recruit as many students as possible because we planned to limit our analyses to participants of either “East Asian” or “White/Caucasian” (i.e., “European”) descent, regardless of their birthplace. We originally recruited 1,486 participants who finished the survey, but we excluded 375 participants for failing a comprehension check (see “Measures” section). Of the 1,111 remaining responses, 419 were from participants of East Asian descent, 256 of European descent, and 436 of a variety of other cultural backgrounds. As such, our final sample of interest consisted of 675 students (M age = 20.64 years, SD = 2.67; 79% women) of East Asian (62%) or European descent (38%; see Table 1 for a full breakdown of the sample by cultural descent). The greater number of East Asian (vs. European) respondents reflects the demographic characteristics of our university’s subject pool.
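The participant flow above can be checked with a few lines of arithmetic. The Python sketch below uses only the counts reported in this section; the variable names are illustrative.

```python
# Study 1 participant flow, using only counts reported in the text
recruited = 1486      # participants who finished the survey
failed_check = 375    # excluded for failing the comprehension check
remaining = recruited - failed_check

east_asian, european, other = 419, 256, 436
assert east_asian + european + other == remaining  # all 1,111 remaining responses

final_sample = east_asian + european                # analyses limited to these two groups
pct_east_asian = 100 * east_asian / final_sample
pct_european = 100 * european / final_sample

print(remaining, final_sample, round(pct_east_asian), round(pct_european))
```

The rounded percentages (62% and 38%) match those reported for the final sample of 675.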

Sample Details by Cultural Background.

To obtain data on country of birth, we linked participants’ responses to a department-wide prescreen survey. Not all participants could be linked, so these percentages are based on the 305 East Asian and 193 European participants who answered the country of birth question.

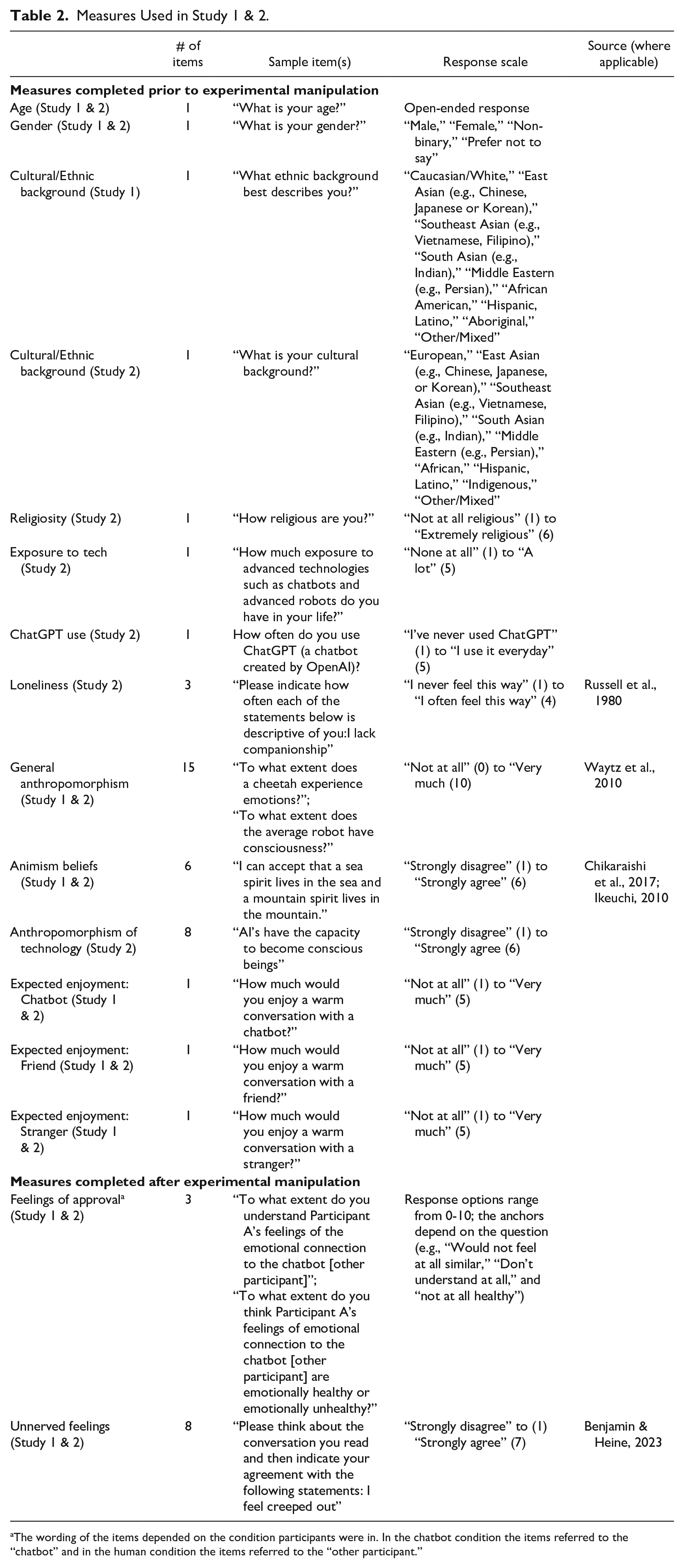

Measures

We discuss only the measures relevant to our analyses, but the complete survey materials are available on the Open Science Framework (OSF) at https://tinyurl.com/2ur4knfe. Table 2 contains details on each of the measures used in the Study 1 (and Study 2) analyses. Some participants answered the question “Where were you born?” as part of a department-wide prescreen, but this measure was not included in our Study 1 survey itself. The alpha coefficients for each measure are available in Table S1 in the SOM.

Measures Used in Study 1 & 2.

The wording of the items depended on participants’ condition. In the chatbot condition, the items referred to the “chatbot,” and in the human condition, the items referred to the “other participant.”

As a comprehension check, at the end of the survey participants were asked “Who was Participant A talking to? This is not a trick question” with the response options “A chatbot” or “A fellow participant.” Participants whose answer did not match their assigned condition were excluded. The smaller final sample in the human (vs. chatbot) condition reflects the fact that more participants failed the comprehension check in the human condition. This suggests that participants were more likely to assume the partner in the text interaction was a chatbot rather than a human, perhaps because we had drawn their attention to the possibility of exchanges with chatbots. We dealt with this imbalance in two ways. First, we removed participants who answered this question incorrectly, because an incorrect answer suggested they had not properly read the instructions. Second, we conducted intent-to-treat analyses that included all participants, including those who failed the comprehension check. For the most part, these two approaches yielded comparable results (see Intent-to-Treat Analyses section below).

Results

The correlations between the variables of interest are available in Table S1 in the SOM.

For each of the ANOVAs below, we also conducted robust analyses (e.g., analyses robust to violations of homogeneity of variance), which were consistent with our main analyses (see SOM).

Are There Cultural Differences in Attitudes Toward Social Chatbots?

We attempted to answer this question with three different outcome variables.

Expected Enjoyment of Conversation

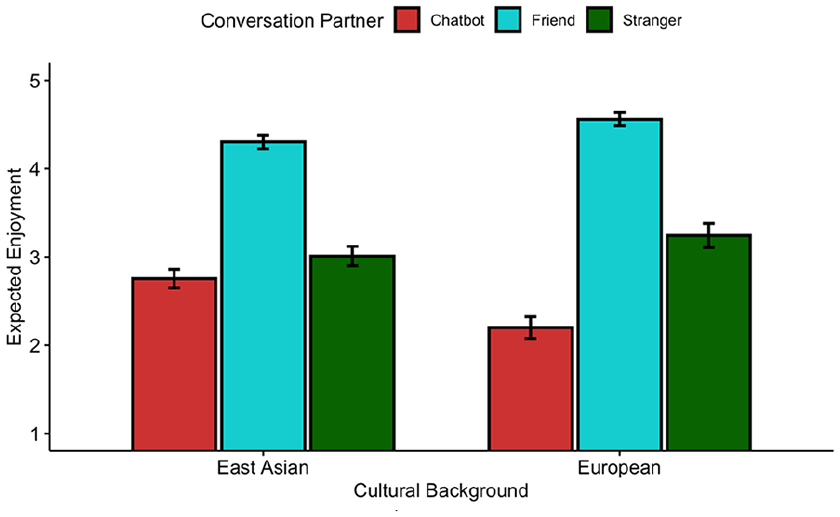

First, we analyzed the three preintervention questions asking participants how much they would enjoy a “warm conversation” with a chatbot, a friend, or a stranger by conducting a 3 (Conversation Partner [within]: Chatbot vs. Friend vs. Stranger) × 2 (Cultural Background [between]: East Asian vs. European) mixed-design ANOVA. Conversation Partner was a within-subjects factor because participants answered all three questions about how much they would enjoy a conversation with a chatbot, friend, or stranger.

There was a significant main effect of conversation partner, F(1.81, 1,215.27) = 776.73, p < .001, but a nonsignificant main effect of culture, F(1, 654) = 0.18, p = .674. Importantly, there was a significant Conversation Partner × Cultural Background interaction, F(1.81, 1,215.27) = 41.75, p < .001 (see Figure 2). Thus, we conducted post hoc pairwise tests comparing the expected enjoyment of a conversation with a chatbot, friend, or stranger within the two cultures. Participants with an East Asian background expected to enjoy a conversation with a friend more than with a chatbot (p < .001; d = 1.60) or a stranger (p < .001; d = 1.31), and they also expected to enjoy an interaction with a stranger more than with a chatbot (p < .001; d = 0.23). Participants with a European background showed a similar pattern, except that the gap between how much they expected to enjoy a conversation with a chatbot and with a friend (p < .001; d = 2.85) or a stranger (p < .001; d = 0.99) was much larger.

Expected Enjoyment of Conversation with Chatbot, Friend, and Stranger, by Cultural Background

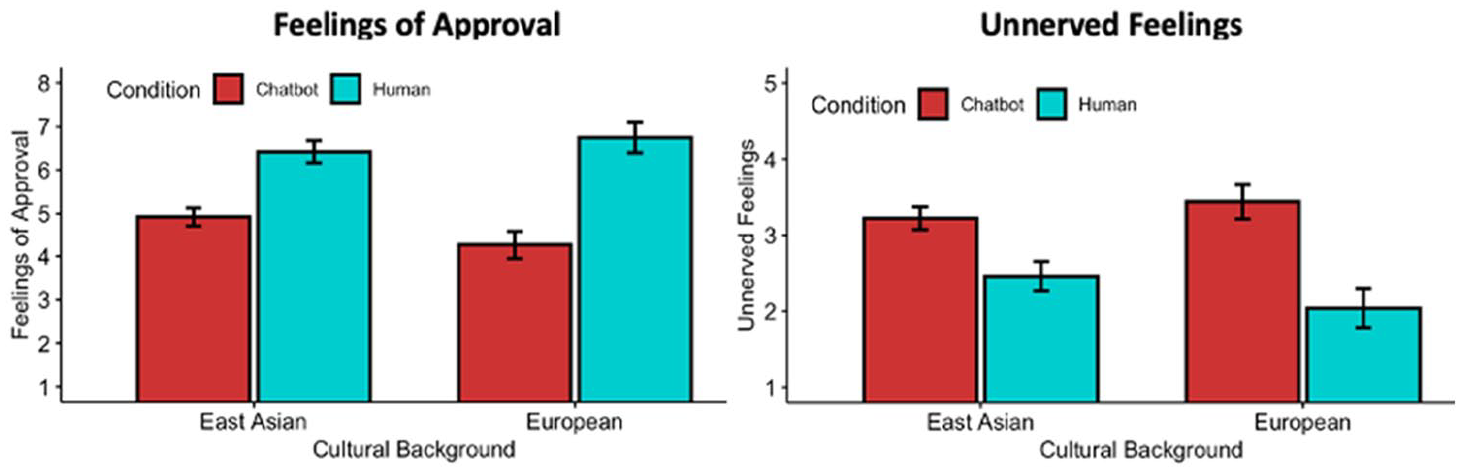

Positive Feelings About the Text Interaction

Next, we focused on the two measures participants completed after the experimental manipulation. To assess participants’ reactions to the text interaction, we conducted separate 2 (Condition: Chatbot vs. Human) × 2 (Cultural Background: East Asian vs. European) between-subjects ANOVAs with (a) approval of the interaction and (b) unnerved feelings about the interaction as the dependent measures. First, for approval of the interaction, we observed significant main effects of condition, F(1, 671) = 159.59, p < .001, and cultural background, F(1, 671) = 5.16, p = .023. These main effects were qualified by a significant Cultural Background × Condition interaction, F(1, 671) = 10.30, p = .001 (see Figure 3). Post hoc tests revealed that East Asian participants approved of the human–human interaction significantly more than the human–chatbot interaction (p < .001; d = 0.88), but this gap was larger among participants with European heritage (p < .001; d = 1.26).

Feelings of Approval and Unnerved Feelings, Broken Down by Culture and Condition.

Next, we examined how unnerved participants reported feeling after reading the interaction. The 2 × 2 ANOVA revealed a significant main effect of condition, F(1, 671) = 89.82, p < .001, but no significant main effect of cultural background, F(1, 671) = 0.00, p = .947. Notably, there was a significant Cultural Background × Condition interaction, F(1, 671) = 8.31, p = .004 (see Figure 3). Post hoc comparisons revealed that East Asian participants were more unnerved in the chatbot condition than in the human condition (p < .001; d = 0.62), but European heritage participants were unnerved to an even greater extent in the chatbot (vs. human) condition (p < .001; d = 0.99).

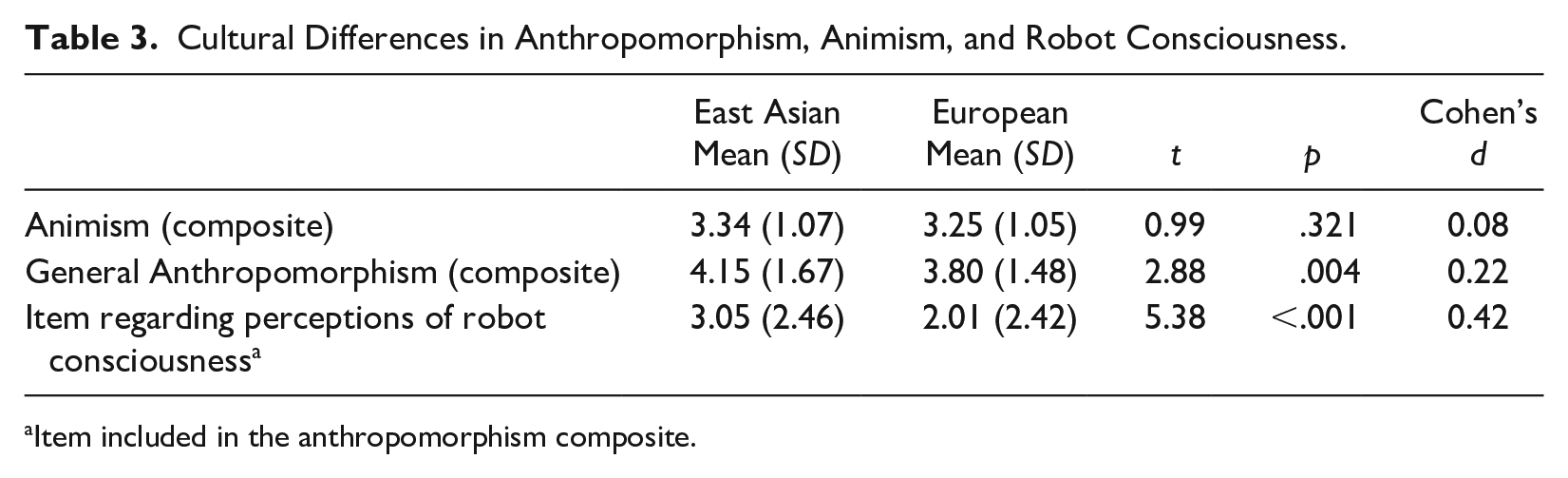

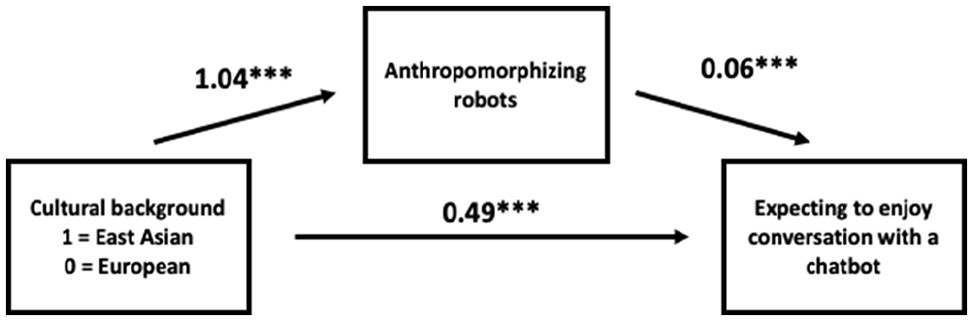

Does Anthropomorphism Mediate the Cultural Differences in Attitudes Toward Socially Interacting With a Chatbot?

We next explored whether differences in anthropomorphism or animism could explain these cultural differences. There were no significant differences between East Asian and European participants in animism (see Table 3), but the two groups differed in general anthropomorphism. However, general anthropomorphism did not significantly mediate the difference between East Asian and European participants in how much they expected to enjoy talking with a chatbot (p = .130). We then tested, post hoc, whether one particularly relevant item from the anthropomorphism measure (“To what extent does the average robot have consciousness”) could explain the cultural difference in expected enjoyment of a conversation with a chatbot. This post hoc analysis suggested that East Asians’ more positive expectations of a conversation with a chatbot were partially mediated by their greater perceptions of consciousness in robots (B = 0.06; 95% CI = [0.03, 0.11]; see Figure 4). The direct effect of cultural background also remained significant (B = 0.49; 95% CI = [0.33, 0.66]).
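The text does not state how the indirect effect and its confidence interval were estimated. A common approach, assumed here, is to estimate the a path (mediator regressed on group) and the b path (outcome regressed on the mediator, controlling for group) by ordinary least squares and then form a percentile bootstrap CI for the product a × b. The sketch below illustrates that logic on simulated data; the function names, sample size, and true path values are hypothetical and are not the Study 1 data.

```python
import random
import statistics

def _cross(u, v):
    """Sum of centered cross-products (shared scale factor cancels in the slopes)."""
    mu, mv = statistics.fmean(u), statistics.fmean(v)
    return sum((ui - mu) * (vi - mv) for ui, vi in zip(u, v))

def mediation_paths(x, m, y):
    """OLS paths for a simple X -> M -> Y model (intercepts absorbed by centering)."""
    sxx, smm, sxm = _cross(x, x), _cross(m, m), _cross(x, m)
    sxy, smy = _cross(x, y), _cross(m, y)
    a = sxm / sxx                              # slope of M on X
    det = sxx * smm - sxm ** 2
    b = (sxx * smy - sxm * sxy) / det          # slope of Y on M, controlling for X
    c_prime = (smm * sxy - sxm * smy) / det    # direct effect of X on Y
    return a, b, c_prime

def bootstrap_indirect(x, m, y, n_boot=1000, seed=1):
    """Point estimate of a * b, a percentile bootstrap 95% CI, and the direct effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]   # resample cases with replacement
        a, b, _ = mediation_paths([x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx])
        estimates.append(a * b)
    estimates.sort()
    ci = (estimates[int(0.025 * n_boot)], estimates[int(0.975 * n_boot) - 1])
    a, b, c_prime = mediation_paths(x, m, y)
    return a * b, ci, c_prime

# Simulated data: x is a 0/1 group code; true a = 0.5, b = 0.3, direct effect = 0.4
sim = random.Random(0)
x = [i % 2 for i in range(400)]
m = [0.5 * xi + sim.gauss(0, 1) for xi in x]
y = [0.4 * xi + 0.3 * mi + sim.gauss(0, 1) for xi, mi in zip(x, m)]

indirect, ci, direct = bootstrap_indirect(x, m, y)
print(round(indirect, 3), [round(v, 3) for v in ci], round(direct, 3))
```

A CI for a × b that excludes zero, alongside a nonzero direct effect, is the pattern usually described as partial mediation.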

Cultural Differences in Anthropomorphism, Animism, and Robot Consciousness.

Item included in the anthropomorphism composite.

Mediation Model Using Single Item Regarding Perceptions of Robot Consciousness (Study 1).

Do East Asians Born in Canada Expect to Enjoy a Conversation With a Chatbot Less Than East Asians Not Born in Canada?

As a final exploratory analysis of the effects of culture, we compared East Asian participants who reported that they were not born in Canada (i.e., who answered “No” to the question “Were you born in Canada?”) with East Asian participants who reported that they were born in Canada. This is not a perfect comparison, because some participants who were not born in Canada may have been born in other Western countries such as the United States. Nevertheless, it is likely that a large portion of the students not born in Canada were born in Asia. Interestingly, East Asian participants who were not born in Canada expected to enjoy a conversation with a chatbot more (M = 2.83, SD = 1.13) than East Asian students born in Canada (M = 2.56, SD = 1.02), t(232.71) = 2.40, p = .017, Cohen’s d = 0.25. This analysis is consistent with the idea that Western cultural attitudes are less favorable toward social chatbots, given that East Asian students with greater exposure to Canadian culture (i.e., those born in Canada) expected to enjoy a conversation with a chatbot less.
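The reported effect size can be reproduced from the group means and SDs if d is standardized by the square root of the average of the two variances; whether this is the variant the authors used is an assumption, since the text does not say. The fractional df in t(232.71) suggest a Welch test, whose Welch–Satterthwaite df formula is also sketched. Group sizes are not reported here, so the second example checks the formula only on a hypothetical equal-n, equal-SD case.

```python
import math

def cohens_d_avg_sd(m1, sd1, m2, sd2):
    """Cohen's d standardized by the root mean of the two group variances."""
    return (m1 - m2) / math.sqrt((sd1 ** 2 + sd2 ** 2) / 2)

def welch_t(m1, sd1, n1, m2, sd2, n2):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    v1, v2 = sd1 ** 2 / n1, sd2 ** 2 / n2      # squared standard errors of each mean
    t = (m1 - m2) / math.sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df

# Means and SDs reported above (not born in Canada vs. born in Canada)
d = cohens_d_avg_sd(2.83, 1.13, 2.56, 1.02)
print(round(d, 2))  # 0.25

# Hypothetical check: with equal n and equal SDs, Welch df reduce to n1 + n2 - 2
t_hyp, df_hyp = welch_t(1.0, 1.0, 10, 0.0, 1.0, 10)
print(round(df_hyp, 6))  # 18.0
```

When variances or group sizes differ, the Welch df fall below n1 + n2 − 2, which is why they are typically fractional.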

Intent-to-Treat Analyses

We reran all of our key analyses on a dataset of 919 participants that included all 675 participants in our final sample plus 244 participants of East Asian or European descent who were excluded from our primary analyses for failing the comprehension check. Details of these analyses are available in the SOM. We replicated all of the key Culture × Conversation Partner and Culture × Condition interaction effects, except for the Culture × Condition interaction involving participants’ approval of the text interaction, which was no longer significant (p = .19).

Study 1 Discussion

In Study 1, we found evidence that East Asian university students had more positive attitudes toward socially connecting with AI than students of European heritage did. Moreover, East Asian students who were not born in Canada expected to enjoy a conversation with a chatbot more than East Asian students who were born in Canada. We also found tentative evidence that these cultural differences are partially mediated by East Asians’ increased propensity to anthropomorphize robots. It is worth noting, however, that there was substantial differential attrition across the chatbot and human conditions, with more participants failing the comprehension check in the human condition than in the chatbot condition. This suggests that participants were generally biased toward assuming that the conversation partner was a chatbot. If anything, such a bias may have led participants to downplay the benefits of the interaction in the human condition. As such, the fact that participants still held more favorable attitudes toward the human–human interaction suggests that the true differences in attitudes toward human–human and human–chatbot interactions may be rather large.

Of course, Study 1 had several key limitations. First, it was an exploratory study, and our mediation analyses relied on a single item, selected post hoc, from a broader anthropomorphism scale. Second, our entire sample consisted of students living in Canada, limiting the generalizability of our findings. Third, we did not include a measure of exposure to advanced technology, so we could not assess whether such exposure contributed to the cultural variation in attitudes toward technology. In a preregistered follow-up study (Study 2), we addressed these shortcomings by including a new measure of AI anthropomorphism that we developed (see SOM for details on validation) and by recruiting samples of Chinese and Japanese adults, along with a sample of American adults, each completing the survey in their native language. We also included an exploratory measure of exposure to advanced technology to compare the influence of anthropomorphism with that of mere exposure to technology.

Study 2

Experimental Design

The design of Study 2 was nearly identical to that of Study 1, except that the contents of the conversation between “Participant A” and a “chatbot” or “other participant” were slightly altered. The general tone of the conversation was the same as in Study 1, but the text was created by the first author interacting with ChatGPT (see Figure S3 in the SOM). Japanese and Chinese participants completed the study in their native language. The translated surveys can be found at https://tinyurl.com/2ur4knfe, and the preregistration is available at https://tinyurl.com/3xkzbjax.

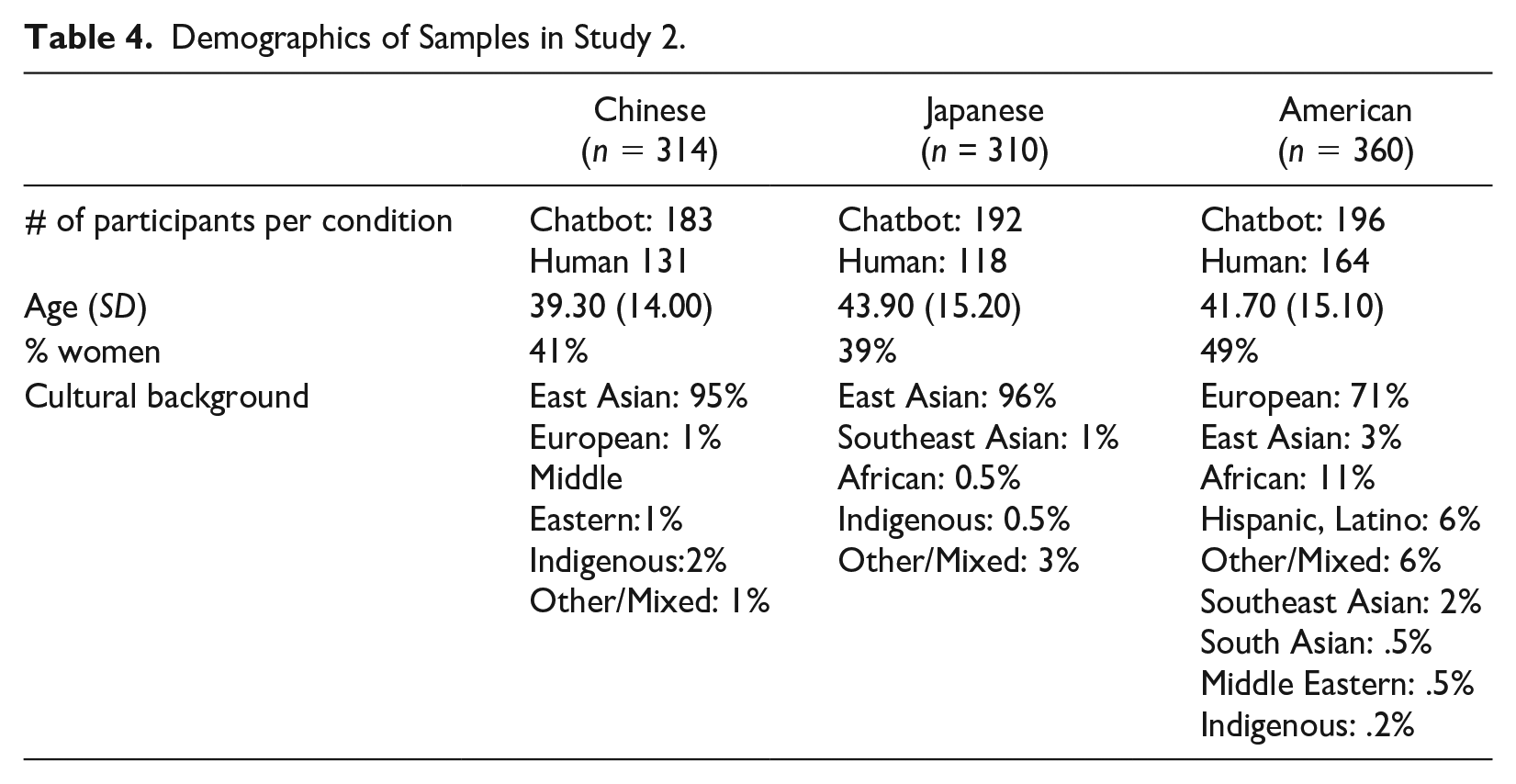

Sample

We recruited three groups of participants. First, we recruited a sample of participants from the United States via Prolific Academic (final n = 360). We collected this sample first because we used it as part of the validation procedure for our Anthropomorphism of Technology Scale (see SOM for the scale validation results and the full measure). Importantly, these participants also underwent the experimental manipulation and completed all of our measures of interest. In addition, using Qualtrics Panels, we recruited Japanese adults from Japan (final n = 310) and Chinese adults from China (final n = 314). Power analyses using the Superpower package in R (Lakens & Caldwell, 2021) indicated that, with groups of this size, we had over 90% power to detect interaction effects of the same size as those found in Study 1. The demographics of the final samples for each group are presented in Table 4.
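Superpower is an R package, so the following is not its API. It is a minimal Python sketch of the general idea behind simulation-based power analysis for a 2 × 2 interaction: simulate many datasets under assumed cell means, test the interaction in each, and count how often it is significant. The cell means, SD, and cell size are hypothetical placeholders rather than the Study 1 estimates, and the critical value uses a normal approximation to the t distribution, which is reasonable only when the error df are large.

```python
import math
import random
import statistics

def interaction_power(cell_means, n_per_cell, sd=1.0, n_sims=500, alpha=0.05, seed=2):
    """Simulated power for the interaction in a balanced 2 x 2 between-subjects design.

    cell_means = ((a1b1, a1b2), (a2b1, a2b2)). In a balanced 2 x 2 design, the
    interaction F(1, df) equals the square of the t for the contrast
    a1b1 - a1b2 - a2b1 + a2b2 using the pooled within-cell variance.
    """
    rng = random.Random(seed)
    z_crit = statistics.NormalDist().inv_cdf(1 - alpha / 2)  # normal approximation
    weights = ((1, -1), (-1, 1))                             # interaction contrast
    hits = 0
    for _ in range(n_sims):
        contrast, ss_within = 0.0, 0.0
        for i in range(2):
            for j in range(2):
                cell = [rng.gauss(cell_means[i][j], sd) for _ in range(n_per_cell)]
                mean = statistics.fmean(cell)
                contrast += weights[i][j] * mean
                ss_within += sum((v - mean) ** 2 for v in cell)
        mse = ss_within / (4 * n_per_cell - 4)    # pooled within-cell variance
        se = math.sqrt(mse * 4 / n_per_cell)      # sum of squared weights / n = 4 / n
        if abs(contrast / se) > z_crit:
            hits += 1
    return hits / n_sims

# Hypothetical standardized means: ((group 1 human, group 1 chatbot), (group 2 human, group 2 chatbot))
power_alt = interaction_power(((0.0, -0.9), (0.0, -1.4)), n_per_cell=165)
power_null = interaction_power(((0.0, -0.9), (0.0, -0.9)), n_per_cell=165)
print(power_alt, power_null)
```

With no true interaction, the rejection rate should sit near alpha, which is a quick sanity check on any simulation of this kind.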

Demographics of Samples in Study 2.

Exclusions

We reached our final sample of 360 American participants by recruiting 401 participants and excluding 41 for failing our comprehension check (as preregistered). Likewise, we reached the final Japanese sample of 310 after screening out 129 participants who failed the comprehension check. Our final Chinese sample (n = 314) similarly excluded 161 participants who failed the comprehension check. As the somewhat unequal condition sizes in Table 4 show, the majority of failed comprehension checks occurred in the human condition (as in Study 1). Thus, in this study too, participants were more likely to assume that the conversation partner was a chatbot rather than a human. We conducted intent-to-treat analyses mirroring our preregistered analyses that included all of the participants who failed the comprehension check (see Intent-to-Treat Analyses section below). We also asked Qualtrics to ensure that the age distributions of the Chinese and Japanese samples were similar to that of the American sample we had already collected; as such, Qualtrics screened out additional participants based on age-related quotas.
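The Study 2 exclusion counts can be tallied the same way. The Python sketch below uses only numbers reported in the text; it assumes that each country’s pre-check total equals final n plus comprehension-check failures, which ignores the separate, unreported age-quota screen-outs. The final totals also reproduce the combined N of 1,659 reported in the Abstract.

```python
# Counts reported in the text; "failed_check" = excluded for the comprehension check
samples = {
    "United States": {"final": 360, "failed_check": 41},
    "Japan": {"final": 310, "failed_check": 129},
    "China": {"final": 314, "failed_check": 161},
}

for country, s in samples.items():
    # Assumption: participants reaching the check = final n + check failures
    s["before_check"] = s["final"] + s["failed_check"]
    s["pct_failed"] = 100 * s["failed_check"] / s["before_check"]
    print(country, s["before_check"], round(s["pct_failed"], 1))

study2_n = sum(s["final"] for s in samples.values())
combined_n = 675 + study2_n  # Study 1 final sample + Study 2 final samples
print(study2_n, combined_n)
```

Under this assumption, roughly 10%, 29%, and 34% of the American, Japanese, and Chinese participants, respectively, failed the check.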

Measures

Study 2 differed slightly from Study 1 in the precise number and type of measures included (see https://tinyurl.com/2ur4knfe for the full survey). However, each of the key measures used in the Study 1 analyses was included in Study 2 (see Table 2; alphas for each measure are presented in Tables S2–S4 in the SOM). Study 2 also included the eight-item Anthropomorphism of Technology Scale that we developed and validated (see SOM for the full measure).

Preregistered Analysis Plan

We preregistered analyses that mirrored our Study 1 ANOVAs, except that we compared the American sample to the Chinese and Japanese samples separately. Specifically, we preregistered two separate 2 (Country of Residence) × 3 (Conversation Partner) mixed-design ANOVAs to assess whether our samples differed in how much they expected to enjoy interacting with a chatbot compared with a friend or stranger. For each of these ANOVAs, we hypothesized a significant Country × Conversation Partner interaction.

In addition, we preregistered 2 (Country of Residence) × 2 (Condition) ANOVAs testing whether our samples differed in how much they approved of, and felt unnerved by, the conversation they read in the chatbot condition (vs. the human condition). Each ANOVA was conducted twice, comparing the American sample to the Chinese and Japanese samples separately. We again hypothesized a significant Country × Condition interaction for each of these analyses.

Finally, we preregistered two sets of mediation analyses assessing whether any differences in positive attitudes toward chatbots between the American sample and the Japanese and Chinese samples were mediated by Chinese and Japanese participants’ increased propensity to anthropomorphize technology.

Exploratory Analysis Plan

We also conducted exploratory ANOVAs mirroring those above but comparing Chinese and Japanese participants; for brevity, we present only the interaction effects.

Results

The correlations between our variables of interest in each of the three samples are presented in Tables S2, S3, and S4 in the SOM. We also present the results of robust nonparametric ANOVAs in the SOM; notably, each significant interaction below remains significant in these robust analyses.

Expected Enjoyment of Interaction with Chatbot Relative to Friend and Stranger

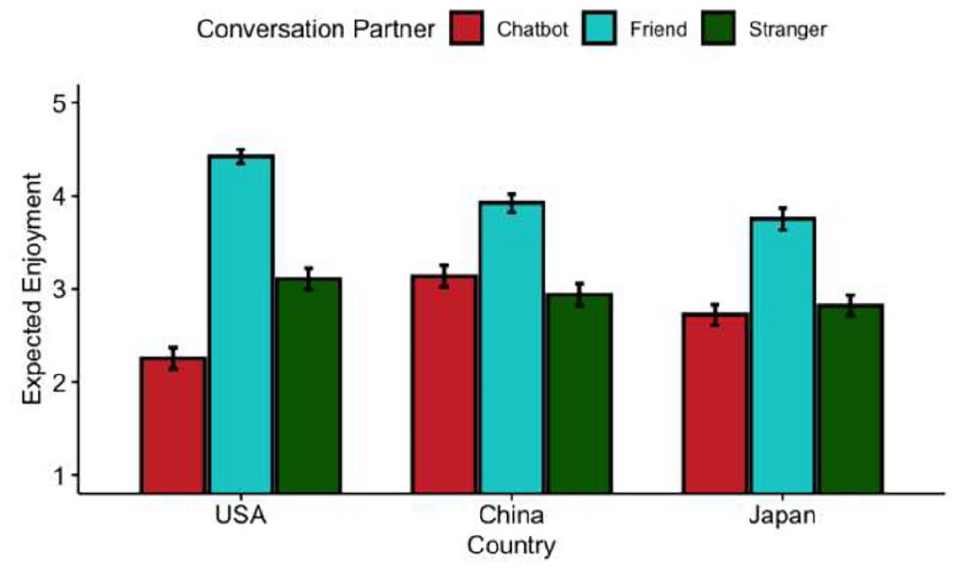

Although we conducted two separate analyses comparing American participants to Chinese and Japanese participants, we present the visual representation of the differences between countries together in Figure 5.

Expected Enjoyment of Conversation With Chatbot, Friend, and Stranger, Comparing American Participants to Chinese and Japanese Participants.

American versus Chinese Participants

Our 2 (Country: United States vs. China) × 3 (Conversation Partner: Chatbot vs. Stranger vs. Friend) ANOVA revealed a significant main effect of conversation partner, F(1.96, 1,310.76) = 609.14, p < .001, but not of country, F(1, 669) = 1.68, p = .196. In line with our preregistered hypothesis (and Study 1), there was a significant Country × Conversation Partner interaction, F(1.96, 1,310.76) = 129.99, p < .001 (see Figure 5). The results of post hoc comparisons are presented in Table 5. Chinese participants expected to enjoy a conversation with a friend more than one with a chatbot, but they expected to enjoy a conversation with a chatbot more than one with a stranger. American participants, in contrast, expected to enjoy a conversation with a friend or a stranger significantly more than one with a chatbot.

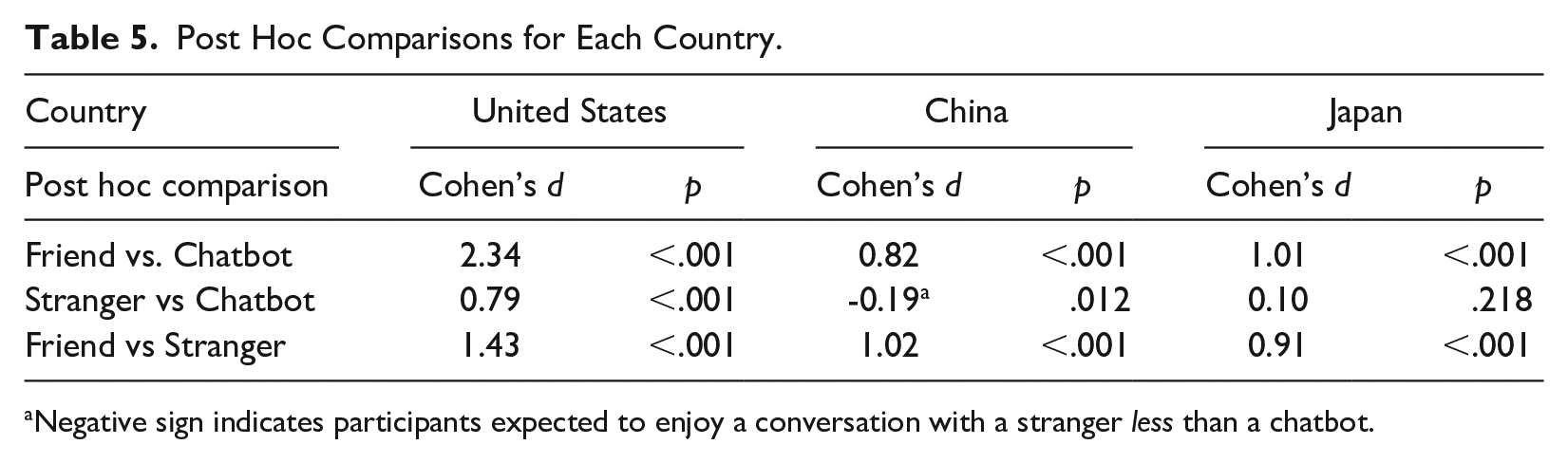

Post Hoc Comparisons for Each Country.

Negative sign indicates participants expected to enjoy a conversation with a stranger less than a chatbot.

American versus Japanese Participants

There were significant main effects of conversation partner, F(1.91, 1,276.07) = 674.12, p < .001, and country, F(1, 668) = 8.17, p = .004. However, these effects, too, were qualified by a significant interaction, F(1.91, 1,276.07) = 83.85, p < .001 (see Figure 5). Post hoc comparisons revealed that Japanese participants expected to enjoy a conversation with a friend more than a conversation with a chatbot (see Table 5). Notably, there was no significant difference in how much they expected to enjoy a conversation with a stranger compared with a friend.
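The fractional degrees of freedom on the within-subjects effects are consistent with a sphericity correction such as Greenhouse–Geisser, although the text does not name the correction. Under that assumption, both uncorrected df, (k − 1) and (k − 1)(N − g), are multiplied by an estimate ε, so ε can be backed out from the reported denominator df and the between-subjects df (N − g) of the same ANOVA. The Python sketch below, with hypothetical helper names, checks that the implied ε reproduces the reported numerator df for the two preregistered comparisons; it assumes the same participants contributed to the within- and between-subjects tests.

```python
def implied_epsilon(df2, k_levels, n_minus_groups):
    """Back out a sphericity-correction epsilon from a corrected denominator df.

    For a within-subjects factor with k levels in a mixed design, the uncorrected
    df are (k - 1) and (k - 1) * (N - g); a correction such as Greenhouse-Geisser
    multiplies both by epsilon, where 1 / (k - 1) <= epsilon <= 1.
    """
    return df2 / ((k_levels - 1) * n_minus_groups)

def corrected_df1(epsilon, k_levels):
    """Corrected numerator df for the within-subjects effect."""
    return epsilon * (k_levels - 1)

# (reported corrected df2, reported between-subjects df = N - g) from the text
reported = {
    "US vs. China": (1310.76, 669),
    "US vs. Japan": (1276.07, 668),
}

implied = {}
for label, (df2, n_minus_g) in reported.items():
    eps = implied_epsilon(df2, k_levels=3, n_minus_groups=n_minus_g)
    implied[label] = (eps, corrected_df1(eps, k_levels=3))
    print(label, round(eps, 3), round(implied[label][1], 2))
```

Both implied numerator df (about 1.96 and 1.91) match the values reported for these ANOVAs to two decimals.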

Chinese versus Japanese Participants (Exploratory)

Table 5 suggests that Chinese participants held more favorable views about a conversation with a chatbot (relative to a friend or stranger) compared with Japanese participants. In line with this, the Conversation Partner × Culture interaction effect for the 3 × 2 ANOVA comparing Chinese and Japanese participants was significant, F(1.96, 1,211.71) = 6.25, p = .002.

Approval of Interaction with Chatbot

American versus Chinese Participants

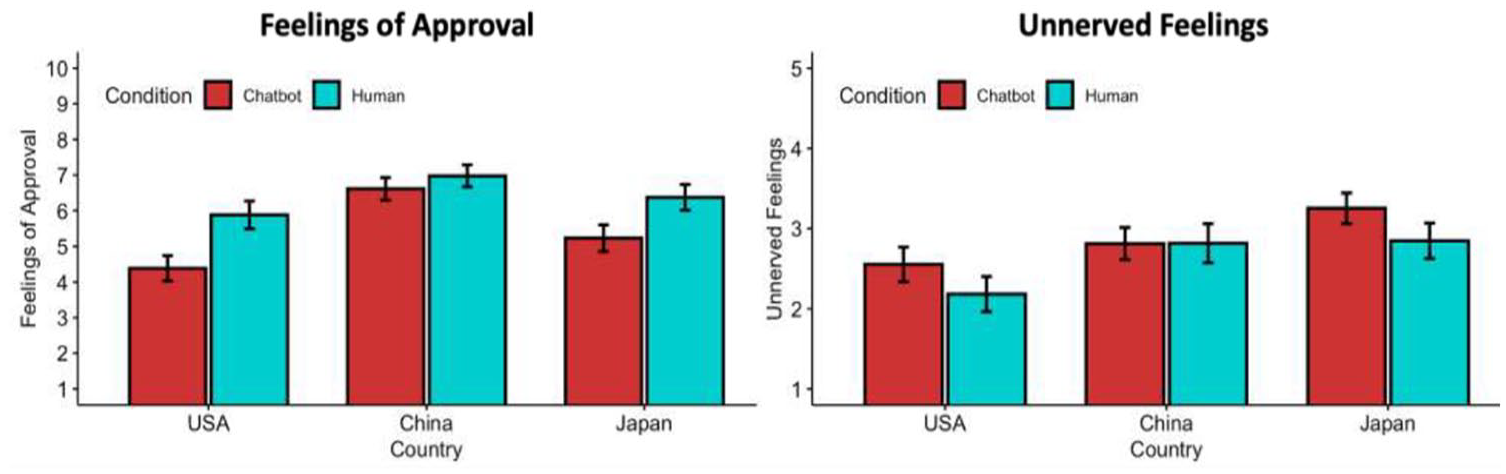

The 2 (Country: China vs. United States) × 2 (Condition: Chatbot vs. Human) ANOVA revealed significant main effects of country, F(1, 670) = 95.58, p < .001, and condition, F(1, 670) = 29.71, p < .001. Importantly, there was also a significant Country × Condition interaction, F(1, 670) = 9.99, p = .002. Post hoc comparisons revealed that for Chinese participants, there was no significant difference in approval between the chatbot and human conditions (p = .168; d = 0.18). However, there was a significant difference between the two conditions within the American sample (p < .001; d = 0.60¹; see Figure 6).

Comparison Between American, Chinese, and Japanese Participants in Feelings of Approval (Left) and Unnerved Feelings (Right).

American versus Japanese Participants

There were significant main effects of country, F(1, 665) = 13.29, p < .001, and condition, F(1, 665) = 47.65, p < .001. Regardless of condition, Japanese participants expressed more approval overall than American participants. In addition, regardless of culture, participants in the chatbot condition expressed less approval than those in the human condition. There was no significant Country × Condition interaction, F(1, 665) = 0.82, p = .367 (see Figure 6).

Chinese versus Japanese Participants (Exploratory)

The 2 (Country: China vs. Japan) × 2 (Condition: Chatbot vs. Human) ANOVA revealed a significant Country × Condition interaction effect, F(1, 619) = 4.58, p = .033.

Unnerved Feelings About the Interaction

American versus Chinese Participants

The 2 (Country: China vs. United States) × 2 (Condition: Chatbot vs. Human) ANOVA resulted in a significant main effect of Country, F(1, 668) = 14.36, p < .001, but a nonsignificant main effect of Condition, F(1, 668) = 3.13, p = .077. Contrary to our preregistered hypothesis, the interaction effect was nonsignificant, F(1, 668) = 2.79, p = .096, although it was in the expected direction (see Figure 6).

American versus Japanese Participants

There were main effects of Country, F(1, 665) = 39.75, p < .001, and Condition, F(1, 665) = 12.32, p < .001. Japanese participants felt more unnerved overall than American participants, and participants in the chatbot condition were more unnerved than those in the human condition. However, there was no evidence of an interaction, F(1, 665) = 0.02, p = .880 (see Figure 6).

Chinese versus Japanese Participants (Exploratory)

There was no significant Country × Condition interaction effect, F(1, 617) = 3.46, p = .063.

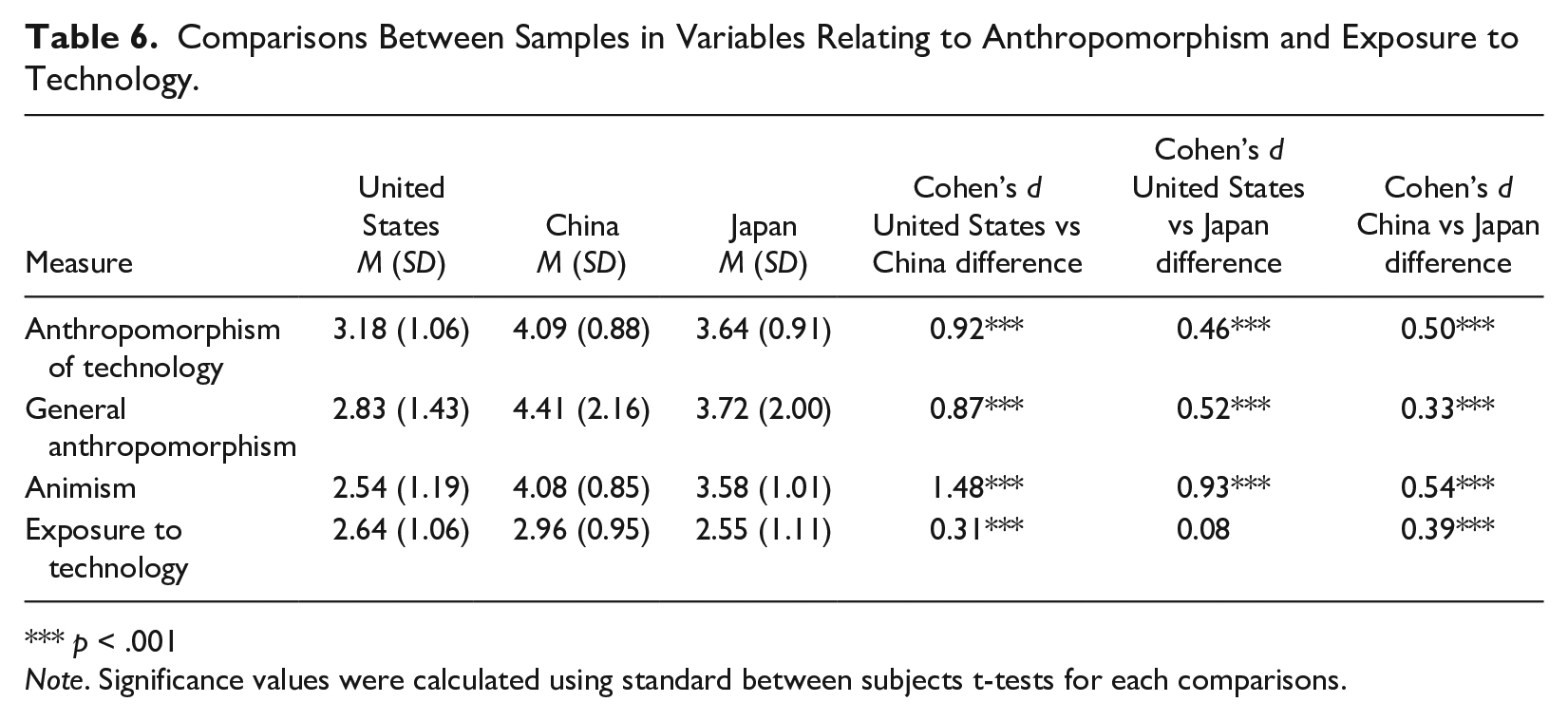

Mediation Analyses

In line with our preregistered predictions, both Chinese and Japanese participants reported significantly greater levels of tech anthropomorphism than Americans (see Table 6). This difference was also found with the animism and general anthropomorphism measures. We also examined whether the three samples differed on our single-item measure of exposure to advanced technology. Chinese participants reported significantly more exposure to advanced technology than Japanese and American participants, but there was no significant difference between American and Japanese participants.

Table 6. Comparisons Between Samples in Variables Relating to Anthropomorphism and Exposure to Technology.

*** p < .001

Note. Significance values were calculated using standard between-subjects t-tests for each comparison.

Preregistered Mediation Analyses

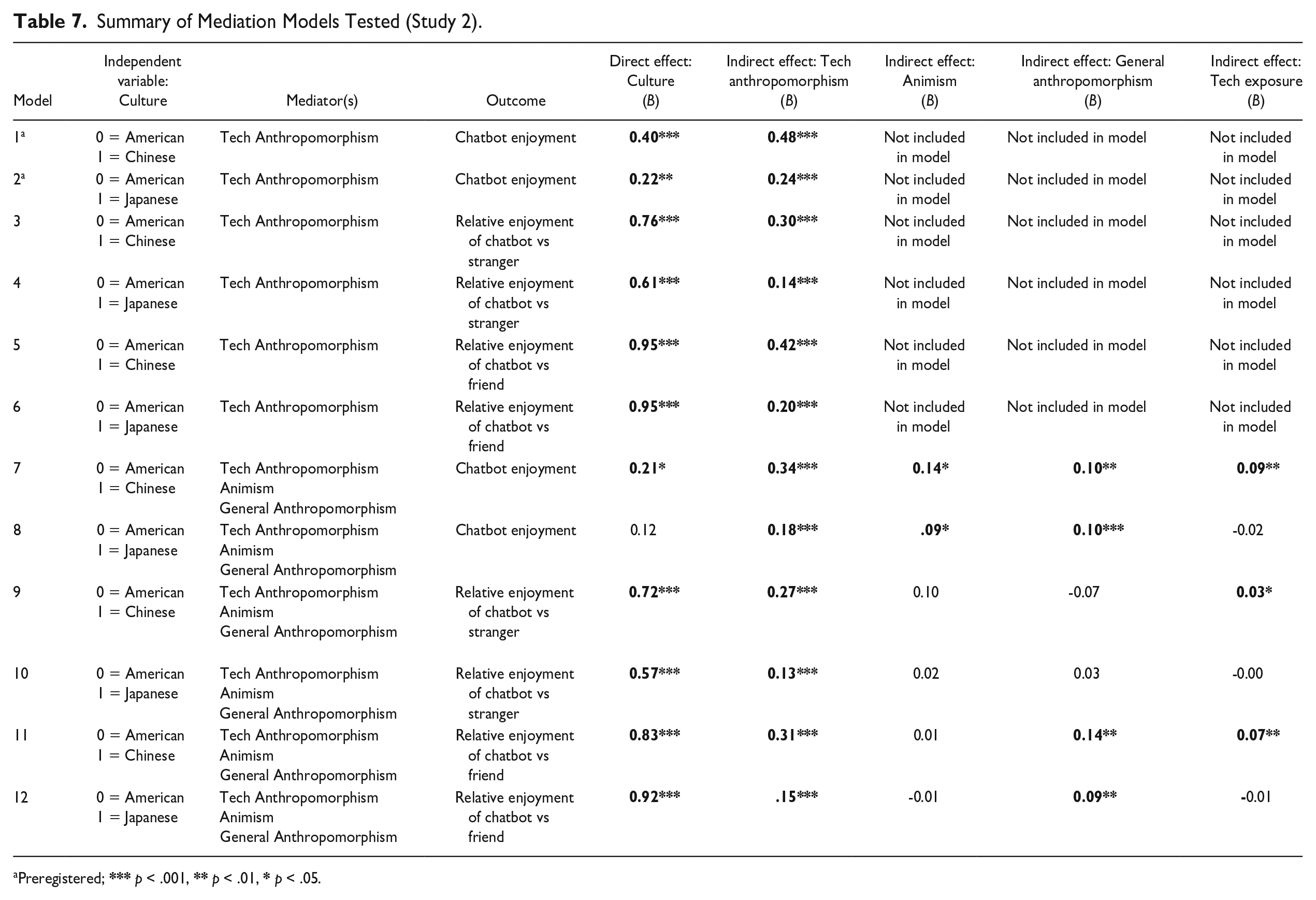

As predicted, cultural differences in anthropomorphizing technology partially mediated the differences between Chinese and American participants (p < .001) and between Japanese and American participants (p < .001) in expected enjoyment of interacting with a chatbot (see Models 1 and 2, Table 7).

Table 7. Summary of Mediation Models Tested (Study 2).

Preregistered; *** p < .001, ** p < .01, * p < .05.

Exploratory Mediation Analyses

We also tested mediation using difference scores capturing how much participants expected to enjoy interacting with a chatbot relative to a friend or a stranger. Specifically, we repeated each of the mediation analyses with two outcome variables: the expected enjoyment of interacting with a chatbot relative to a stranger (expected enjoyment: chatbot − expected enjoyment: stranger) and the expected enjoyment of interacting with a chatbot relative to a friend (expected enjoyment: chatbot − expected enjoyment: friend). For each difference score, positive values indicate that participants expected to enjoy talking to a chatbot more than to a friend/stranger, whereas more negative values indicate a stronger preference for a friend/stranger over a chatbot. Anthropomorphism of technology remained a significant mediator in these models (see Models 3–6, Table 7).
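The two difference scores described above amount to simple per-participant subtractions. A minimal sketch, with hypothetical field names and ratings (the rating scale is not given in this section):

```python
from dataclasses import dataclass


@dataclass
class ExpectedEnjoyment:
    """One participant's expected-enjoyment ratings (hypothetical field names)."""
    chatbot: float
    stranger: float
    friend: float


def relative_enjoyment(r: ExpectedEnjoyment) -> dict[str, float]:
    """Difference scores: positive values favor the chatbot, negative favor the human partner."""
    return {
        "chatbot_minus_stranger": r.chatbot - r.stranger,
        "chatbot_minus_friend": r.chatbot - r.friend,
    }


# Hypothetical participant who prefers both human partners over the chatbot
print(relative_enjoyment(ExpectedEnjoyment(chatbot=3.0, stranger=4.0, friend=6.0)))
# {'chatbot_minus_stranger': -1.0, 'chatbot_minus_friend': -3.0}
```

Each score can then serve as the outcome variable in a mediation model in place of raw expected enjoyment of the chatbot conversation.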

We also repeated mediation Models 1–6 (Table 7), but with animism, general anthropomorphism, and exposure to technology included as additional mediators simultaneously along with tech anthropomorphism. In each model, tech anthropomorphism remained a significant mediator, while animism, general anthropomorphism, and exposure to technology were often but not always significant (see Models 7–12 in Table 7).

Exploratory Intent-to-Treat Analyses

We conducted intent-to-treat analyses of our key preregistered analyses using a dataset of N = 1,315 participants: the 984 participants in our final dataset plus the 331 participants who were excluded from our primary analyses for failing the comprehension check. Additional details of these analyses are available in the SOM; notably, all of the significant Culture × Condition and Culture × Conversation Partner interaction effects in our main analyses remained significant in the intent-to-treat analyses.

Discussion

Across two studies, we found evidence for cultural differences in attitudes toward socially bonding with conversational AI. In Study 1, university students of East Asian heritage expected to enjoy a hypothetical conversation with a chatbot (vs. a conversation with a human) more than students with a European background. Moreover, East Asian students were less unnerved by and more approving of a hypothetical interaction between a human and a chatbot (vs. an interaction between two humans) than those with a European background. Likewise, in Study 2, Chinese and Japanese adults displayed more positive attitudes toward human–chatbot conversations (vs. human–human conversations) than their American counterparts. Both studies showed that these cultural differences primarily stemmed from East Asians’ higher tendency to anthropomorphize technology. This research is the first to highlight such cultural differences in attitudes toward emotionally bonding with chatbots, supporting recent theorizing on East Asian and Western perceptions of AI (Yam et al., 2023).

In Study 2, Chinese participants displayed distinct attitudes toward chatbots compared with Americans, mirroring the results of Study 1. Although Japanese participants expected to enjoy a chatbot interaction (vs. a human interaction) more than Americans did in Study 2, the two cultures did not differ significantly in their feelings about someone else bonding with a chatbot (vs. a human). Overall, the analyses suggest that Chinese culture may be especially favorable toward social chatbot use. Aside from any differences in anthropomorphism, this finding could reflect the fact that social chatbots appear to be more integrated into Chinese society than into that of any other country, including Japan. Indeed, the Chinese chatbot Xiaoice mentioned earlier is the most popular social chatbot in the world (Zhou et al., 2020). Thus, even though social robots in general are more prominent in Japan, the Chinese–Japanese difference uncovered here may arise because social chatbots specifically are more prominent in China. These cultural differences are important to consider, given that Chinese and Japanese participants are often grouped together as a single “East Asian” sample in cross-cultural research.

In Study 2, Chinese participants exhibited the strongest inclination to anthropomorphize, scoring higher than both American and Japanese participants on measures of anthropomorphism of technology, general anthropomorphism, and animism (see Table 6). Japanese participants, too, exhibited significantly higher levels of anthropomorphism than Americans. Such differences are consistent with the idea that people in cultures rooted in historically animistic Eastern religions are more likely to humanize robots in the present day (Yam et al., 2023). Furthermore, our results suggest that an increased tendency to anthropomorphize helps to explain cross-cultural differences in attitudes toward technology. Past research has suggested that East Asian cultures may favor anthropomorphically designed technology over less anthropomorphically designed technology (Baskentli et al., 2023). Our findings extend this work by indicating that cultural differences in general anthropomorphic beliefs about AI help explain cultural variation in attitudes toward social chatbots.

Of course, anthropomorphism is not the only potential explanation for the cultural variance in attitudes toward technology. Yam and colleagues (2023) posited that increased exposure to robots and technology may contribute to more favorable attitudes toward technology in East Asia. We included only a single item assessing how much “exposure to advanced technology” participants had in their daily life (response options ranging from “None at all” to “A lot”). Interestingly, even with this measure included in the mediation models (see Table 7), anthropomorphism of technology remained a significant mediator of the cultural differences between American and East Asian participants. Thus, our results suggest that anthropomorphism is a more important driver of cultural variation in attitudes toward social chatbots than differences in exposure to technology. Why might anthropomorphism be more influential than exposure for positive attitudes toward social chatbots? One possibility is that exposure to technology does not necessarily increase positive attitudes toward social chatbot use. For example, people may hear about or know people who use social chatbots in ways they believe are unhealthy (e.g., as romantic partners; Lucas, 2024). This kind of exposure may actually decrease people’s favorability toward social chatbots. Thus, exposure may be more of a “mixed bag” with respect to its effect on people’s attitudes toward conversational AI.

Anthropomorphism, in contrast, may have a more reliably positive impact on people’s feelings about relationships with chatbots. Given that feeling understood is a core component of emotional connection (Itzchakov et al., 2022; Reis et al., 2017), and that anthropomorphizing a chatbot may make it seem capable of understanding, it should not be surprising that “seeing human” was associated with East Asians’ increased favorability toward bonding with otherwise mindless chatbots. Indeed, the effect of anthropomorphism on positive attitudes toward technology may be amplified for social chatbots specifically. As a result, our focus on social chatbots may explain why we found such strong cultural differences between East Asians and Americans. Past research on cultural differences in attitudes toward technology has tended to focus on physical forms of technology such as robots (Bartneck, 2008; Destephe et al., 2015; Ikari et al., 2023; Li et al., 2010; Lim et al., 2021), where such differences in anthropomorphism may not be as consequential. Thus, although the cultural differences uncovered here likely extend to other forms of AI, they may be largest for “social” AI, such as social chatbots.

It is important to underscore that the cross-cultural differences we uncovered in the present study are not simply due to East Asian participants having more positive attitudes toward social interaction of any kind, relative to their Western counterparts. By including hypothetical scenarios involving interactions with friends or strangers, we were able to show that the East Asian participants were uniquely predisposed to enjoying conversations with chatbots, rather than just being predisposed to enjoying any interactions more than Westerners. In general, the East Asian participants expected to enjoy interactions with a friend and a stranger less than their Western counterparts, despite expecting to enjoy an interaction with a chatbot more.

A key limitation of the present research is that participants did not actually interact with a chatbot. Instead, we measured participants’ expectations of a hypothetical conversation with a chatbot or their attitudes about someone else’s hypothetical interaction with a chatbot. We chose to use hypothetical scenarios because we had logistical concerns about having participants from multiple countries speak with a chatbot in their own native language. For example, ChatGPT is unavailable in China, meaning that we would have needed to use a different chatbot for participants in China than for participants in the United States and Canada. We also had concerns about variability in chatbots’ ability to speak different languages fluently. For example, if a chatbot were less effective at speaking Japanese than English, then any differences in how much Japanese (vs. American) people enjoyed an interaction with it may have been due to the chatbot’s lower fluency in languages other than English. Nonetheless, future research in which participants interact with actual chatbots would be highly informative.

The cultural differences in people’s responses to the hypothetical scenarios suggest that there is substantial cultural variability in people’s attitudes toward social chatbots. Of course, the cultural difference in the favorability of these attitudes could be due to cultural differences in forecasting errors. That is, it is possible that East Asian individuals would enjoy an interaction with a chatbot as much as Americans do, but simply expect to enjoy it more. However, it is unclear whether tendencies toward affective forecasting errors differ across cultures. As chatbots continue to improve in their ability to speak multiple languages, research utilizing actual interactions with chatbots will be needed to address this possibility. That said, even if the differences uncovered here reflect forecasting errors on the part of the East Asian participants, such forecasting errors can still have important implications. For example, they may help explain why the Chinese chatbot Xiaoice has so many registered users compared with Western chatbots (Zhou et al., 2020).

An additional limitation is that our mediation analyses were cross-sectional. Future research that experimentally manipulates anthropomorphism and then assesses attitudes toward technology would provide useful causal evidence for the role of anthropomorphism in attitudes toward social chatbots. Such research could also test for a possible positive feedback loop, in which anthropomorphism leads to increased use, which in turn further increases anthropomorphism.

Overall, our findings point to cultural differences in attitudes toward emotionally connecting with social chatbots and suggest that these differences are mediated by cultural differences in anthropomorphism. These differences in anthropomorphism may help explain why other forms of technology have been adopted more quickly in East Asian countries like Japan and China than in the United States. Of course, more research is needed to understand why East Asian cultures appear to be more open to AI and other forms of technology than their Western counterparts, and our results do not speak to people from other cultures. The present research, however, provides strong evidence that anthropomorphism of technology plays a role in explaining cultural differences in the acceptance of an important new form of relationship: digital companionship with a social chatbot.

Supplemental Material

sj-pdf-1-jcc-10.1177_00220221251317950 – Supplemental material for Cultural Variation in Attitudes Toward Social Chatbots

Supplemental material, sj-pdf-1-jcc-10.1177_00220221251317950 for Cultural Variation in Attitudes Toward Social Chatbots by Dunigan P. Folk, Chenxi Wu and Steven J. Heine in Journal of Cross-Cultural Psychology

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Social Sciences and Humanities Research Council of Canada (grant #435-2019-0480).

Supplemental Material

Supplemental material for this article is available online.

Notes

References
