Abstract

This explorative study investigated (a) whether social attraction, self-disclosure, interaction quality, intimacy, empathy and communicative competence play a role in getting-acquainted interactions between humans and a chatbot, and (b) whether humans can build a relationship with a chatbot. Although human-machine communication research suggests that humans can develop feelings for computers, this does not automatically imply that humans experience feelings of friendship with a chatbot. In this longitudinal study, 118 participants had seven interactions with chatbot Mitsuku over a 3-week period. After each interaction participants filled out a questionnaire. The results showed that the social processes decreased after each interaction and feelings of friendship were low. In line with the ABCDE model of relationship development, the social processes that aid relationship continuation decrease, leading to deterioration of the relationship. Furthermore, a novelty effect was at play after the first interaction, after which the chatbot became predictable and the interactions less enjoyable.

The use of chatbots, dialogue-based programs designed to show humanlike behavior (Vassallo et al., 2010), is increasing exponentially in various settings. Chatbots carry out simple, online, text-based conversations, frequently replacing human interlocutors for tasks such as basic customer service interactions. One exciting development is social chatbots, used for social, relational, and therapeutic purposes (Mujeeb et al., 2017). Social bots present themselves as humanlike, with personality and appropriate emotions, which increases interactants’ satisfaction (Demeure et al., 2011). The primary goal of a social chatbot is to be a virtual companion to its users, to establish an emotional connection and/or provide social support, for instance to people with mental health problems (Shum et al., 2018). This sort of affective communication can stimulate attraction and create a bond between the user and the chatbot. As more chatbots are created with the goal of socializing and making friends (e.g., Mitsuku, Replika), it is important to better understand the possibility of relationship formation between humans and chatbots.

In contrast to computer-mediated communication (CMC), where people communicate through digital technologies, human-machine communication (HMC) encompasses the ongoing sense-making process between humans and machines (Guzman, 2016). Communication with chatbots is a form of HMC, which suggests people are not talking through a machine, but with a machine. Research shows that people adjust their use of language when they communicate with a computer (Mou & Xu, 2017). For example, messages to chatbots contain fewer words per message, more profanity, and more negative emotion words, compared to human-human interactions (Mou & Xu, 2017).

Although HMC is different from CMC, research has shown that it is possible for humans to form relationships with a machine or a computer (e.g., Bickmore & Picard, 2005). Early on, Reeves and Nass (1996) demonstrated that people apply the same social rules to interactions with computers as they do in interactions with humans. According to the computers as social actors (CASA) paradigm, people treat their computers as social beings (Nass & Moon, 2000). Research shows that chatbots can make people feel flattered and evoke politeness; as a result, people tend to treat them as if they were a colleague, team member, or friend (Bickmore & Picard, 2005). People have a fundamental need to form social relationships, even if that relationship is with a chatbot.

Although research suggests that humans can develop feelings for inanimate objects like computers (Nass & Moon, 2000; Reeves & Nass, 1996), this does not automatically result in feelings of friendship. According to Levinger’s (1980) ABCDE model, the process of friendship formation develops from initial attraction to either continuation or deterioration, the latter meaning that the relationship ends. Whether a relationship continues depends on people’s emotional investment, the frequency of interaction, the level of intimacy, and affect intensity, which are fundamental human needs (Shum et al., 2018; Weigel & Murray, 2000). Other disciplines, such as philosophy, even claim that it is impossible for humans to form a friendship with a chatbot. One of the prerequisites of friendship is that friends truly care about each other (e.g., Aristotle, Nicomachean Ethics; Cocking & Kennett, 1998). Although social bots can act as if they care about the person they are interacting with, they simply are not capable of genuinely caring in this way. Does the chatbot’s inability to genuinely care for the human hinder the development of a human’s feelings of friendship toward the chatbot?

Though the viewpoints from different disciplines are not incompatible, they do not result in one clear expectation regarding whether people can build a relationship with a social chatbot. Most studies comparing human-human and human-chatbot getting-acquainted interactions are based on a single interaction (e.g., Edwards et al., 2014; Mou & Xu, 2017), although relationships take time to develop (Hays, 1984). Therefore, the aim of this longitudinal study is to explore (a) what social processes may play a role in getting-acquainted interactions between humans and a chatbot, and (b) whether it is possible for humans to build a relationship with a social chatbot.

The evolution of chatbots

One of the first chatbots was Joseph Weizenbaum’s Eliza (1966), a simple, female chatbot, which mimicked a Rogerian psychotherapist. Since then, different chatbots have been developed, such as Jabberwacky and Alice. Contemporary chatbots are deployable in many different situations: in education, as a source of information, for customer service, and as a tool for e-commerce. Furthermore, chatbots are increasingly used for social and therapeutic purposes. There are chatbots helping people to stop smoking (e.g., StopSmoking), helping people suffering from depression and stress (e.g., Woebot; Mujeeb et al., 2017), and for meditation (e.g., MeditateBot). Finally, there are social chatbots acting like companions with whom people can socially interact (e.g., Mitsuku; Shum et al., 2018).

Although chatbots are becoming increasingly human-like, the question is whether chatbots are capable of engaging in the social interactions necessary for relationship formation. An important obstacle for computers is that they must be able to not only understand the meanings of words, but also comprehend the endless variability of expression in how those words are used in a language to communicate meaning (Hill et al., 2015). Chatbots perform quite well within specific domains and have specific areas of knowledge (Chang et al., 2008; Shum et al., 2018). However, even the most advanced chatbots are limited regarding the depth of information they have concerning a topic (e.g., Hill et al., 2015; Mou & Xu, 2017). When two humans interact, the conversation is goal-driven and there is usually an element of common history or shared experience over time (Mou & Xu, 2017). Specifically, communication between people has a unity and generally builds on what has been said before (Beran, 2018). Although chatbots learn from the interactions they have, and can improve based on those, they are unable to specifically learn from one individual conversation and target their responses accordingly. Consequently, the chatbot is unable to remember personal details from one interaction and is thus unable to reference those (Hill et al., 2015). Finally, human language is motivated by emotions and feelings, something a chatbot does not have. This suggests that there are important layers missing from chatbot communication.

Research indeed shows that people react differently to a chatbot, compared to a human interlocutor (Mou & Xu, 2017). In a study comparing human-human interactions to human-chatbot interactions, Mou and Xu (2017) found that human users could tell that the chatbot’s responses were less natural compared to a human interlocutor. When humans interacted with the chatbot, they were less open, less agreeable, and less extroverted, compared to interactions with a human. Another study by Ho et al. (2018) revealed that people used clearer and simpler language when talking to a (perceived) chatbot, compared to a human interlocutor. Similarly, Shechtman and Horowitz (2003) found that individuals in human-human interactions used more words and spent more time overall in the conversation. Research even shows that the anonymous nature of HMC results in greater use of profanity when interacting with chatbots (Hill et al., 2015).

Thus, research shows that people converse differently with chatbots compared to humans. How does that impact friendship formation? From CMC research we know that it is possible for humans to become socially attracted to other humans via reduced-cues technologies (e.g., Antheunis et al., 2012). For example, in text-based CMC, visual anonymity ensures that people feel safer to disclose (intimate) personal information about themselves, which stimulates social attraction and friendship formation (Antheunis et al., 2012; Joinson, 2001). The affordances offered in text-based CMC resemble chatbot communication, as both modes are anonymous, text-based technologies with limited cues (Hill et al., 2015). In order to understand the possibility of relationship formation between humans and chatbots, as more chatbots are created with the goal of socializing and making friends, this study examines whether people can build a relationship with a social chatbot.

Social processes relevant for relationship formation

To investigate whether humans can build a relationship with a chatbot, we first go back to the process of relationship formation, which, according to Levinger (1980), occurs in stages. Levinger’s (1980) model of relationship development assumes an ABCDE sequence of relationship development where A stands for initial attraction, B for build-up, C for continuation of the relationship, D for deterioration or decline, and E for ending. In between the phases, commitment plays an important role and is crucial for relationship continuation. A relationship is believed to deteriorate because of a decline in rewarding outcomes and an increase in negative outcomes (Winstead et al., 1988). This so-called stage model describes how individuals go through distinct stages as they initiate and build relationships with other people and has previously been applied to conceptualize relationship development as a linear sequence of stages (Macapagal et al., 2015). Research has, for instance, applied this model to describe the process, defined as a phase shift, through which previously stable relationships become unstable (e.g., Baron et al., 1994). Levinger (1983) stated that the model should be viewed as an organizational device to comprehend relationship development. In the current study, we do not aim to test this model, but rather to use the model to capture some of the key aspects of relationship building.

Relationship formation occurs in stages, and whether a relationship continues depends on the state of continuation. Specifically, placid static continuation and unstable continuation will lead to relationship deterioration, while satisfying continuation means the relationship is likely to grow (Weigel & Murray, 2000). Based on decades of research on human-human interactions and relationship formation, we can distinguish several social processes that may facilitate satisfying continuation and, thereby, friendship formation. A (social) relationship is a co-operative, supportive, and caring bond between two or more people (Foster, 2005). A “friend” is defined as “somebody to talk to, to depend on and rely on for help, support, and caring, and to have fun and enjoy doing things with” (Rawlins, 1992, p. 271). Friendships are formed through similar interests and emotional affection and include elements of trust, honesty, safety, loyalty, support, understanding, and acceptance (Tillmann-Healy, 2003). For a friendship to develop, it is important that people like one another. Social attraction, or the positive feeling of liking toward another person (McCroskey et al., 2006), is a first social process that may aid relationship development. People generally want to continue communicating and build relationships with someone they like. Thus, social attraction is crucial for relationships to progress from phase A (attraction) to phase B (build-up) in Levinger’s (1980) model. When people lack the desire to form a friendly relationship with someone, a friendship is unlikely to build.

Next, self-disclosure, intimacy, and the quality of interactions may determine whether continuation of a relationship will take place (phase C). Self-disclosure is defined as the act of revealing personal information to others (Archer & Burleson, 1980). As relationships develop, self-disclosure is expected to increase (Ruppel, 2015). When getting to know someone, the act of disclosing personal information involves risk and vulnerability on the part of the discloser, which increases the likelihood of relationship development (Valkenburg & Peter, 2009). When one person discloses personal information to another, it is likely that this disclosure is reciprocated which means mutual trust and understanding is enhanced. Self-disclosure is seen as a positive reward, in that people may believe that they have been singled out to receive intimate information, which enhances social attraction (Collins & Miller, 1994). Thus, as people disclose more intimate information, they develop stronger relationships (Valkenburg & Peter, 2009). Self-disclosure is one of the cornerstones of the formation and development of relationships (e.g., Joinson, 2001).

What is also important in initiating relationships and determines whether relationships will continue, is how interactants rate their initial interactions in terms of intimacy and quality. Intimacy is described as the underlying affective reaction toward another person in an interaction (Edinger & Patterson, 1983). Interpersonal intimacy is characterized by openness, receptivity, and harmony (Edinger & Patterson, 1983). The more intimate interactions are, the less interpersonal uncertainty exists and the more likely a relationship can develop (Berger & Calabrese, 1975). As uncertainty reduces, affinity will develop. Burgoon and Hale (1984) suggest that as intimacy develops, communicators become more involved, more trusting, and communicate more attitude likeness to one another, which means the relationship becomes more personal.

The quality of interactions is also important for relationship continuation. Interactions that are rated as higher in quality include more involved communicators who are more skilled at interaction management and show more fluent and coordinated speech (Burgoon & Le Poire, 1999). Furthermore, these interactions involve communicators who come across as friendly and pleasant (Coker & Burgoon, 1987). Studies show that, like intimacy, communication quality is strongly related to interpersonal trust and source credibility (Edwards et al., 2014). A qualitatively better interaction is more comfortable, creating a sense of common ground between people, which, in turn, promotes higher levels of credibility and trust, leading to increasing likeability of the interactant (Houser et al., 2008). This suggests that the higher the perceived quality of interactions, the more likely it is that a relationship will develop.

HMC and the process of relationship formation

Communication technologies are increasingly used in relationships, so it is not surprising that many studies have focused on analyzing the role of these technologies in how relationships are formed and maintained (e.g., Antheunis et al., 2012; Ruppel, 2015). These studies show that social attraction, self-disclosure, intimacy, and quality in CMC interactions enhance relationship development (e.g., Antheunis et al., 2012; Joinson, 2001). According to social penetration theory (SPT), self-disclosure in particular plays an important role in stimulating relationship development (Altman & Taylor, 1973). As relationships develop, elements like self-disclosure, intimacy, and social attraction are expected to increase (Ruppel, 2015). These processes are employed to compensate for the lack of nonverbal cues and the textual nature of the medium, which is similar to chatbot communication. Some studies even view chatbot communication as a form of textual CMC (Hill et al., 2015).

Furthermore, Walther’s (1992) social information processing (SIP) theory suggests that, if given enough time, people show the same social processes and relational communication in CMC as they do face-to-face (FTF), as people are naturally motivated to form relationships. Moreover, positive affect is believed to increase with social interaction, which facilitates relationship formation (Vittengl & Holt, 2000). However, as outlined above, communicating through CMC with a human or a chatbot are different experiences (e.g., Hill et al., 2015; Mou & Xu, 2017), so we do not yet know whether the social processes associated with relationship formation in interactions between humans will indeed develop over time in interactions between a human and a chatbot. Therefore, we have formulated the following research questions:

Two other factors may be important when analyzing initial interactions between humans and a social chatbot: empathy and communication competence. Specifically, a lack of empathy and poor communication competence may contribute to a decline (phase D) and subsequent ending (phase E) of a relationship (Levinger, 1980). Research highlights the importance of empathy and social skills on the part of social chatbots for the bot to be a valuable companion to a human and for a human to establish an emotional connection with the chatbot (e.g., Shum et al., 2018). Empathy is especially important, as a chatbot needs to be able to identify the user’s emotions from an interaction, see how these emotions develop over time and understand the individual’s emotional needs (Shum et al., 2018). One of the reasons people prefer emotional support from a human is because they respond positively to empathy and they prefer personalized support (Smith et al., 2016). Research shows that computers can provide acceptable emotional support, but good quality emotional support comes from someone who empathizes with the user, which is something a chatbot must be capable of (Smith et al., 2016).

Another factor that is important in social interactions is communication competence: the social skill someone has in carrying on a social conversation, which is related to someone’s intelligence, skill, creativity, and motivation to communicate (Coker & Burgoon, 1987; Demeure et al., 2011). Communication competence also suggests that interlocutors can understand one another in a conversation (Jones et al., 1999). This is reflected in the types of words used, the topics discussed, and the adherence to the social rules of an interaction. In developing relationships, communication competence encompasses positive social behaviors that reflect one’s ability to integrate thinking, feeling and behavior into a social interaction (Jackson et al., 1995). Allegedly, people are more attracted to people who are competent at communicating and are, in turn, more likely to bond with these people.

Although studies suggest the importance of empathy and communication competence in social interactions, few studies have analyzed whether chatbots encompass such qualities. While research highlights the importance for a social chatbot to show empathy and have the ability to detect a human’s emotions (Shum et al., 2018), it remains unclear whether today’s chatbots do indeed come across as empathic and communicatively competent to their users. Therefore, we formulate the following research question:

The aforementioned social processes are derived from research on relationship formation between two humans and may be present in human-chatbot interactions as well. Furthermore, these social processes facilitate bonding and relationship formation between humans, but we do not yet know whether it is possible for humans to experience feelings of friendship toward a chatbot. If these processes indeed progress in human-chatbot interactions, feelings of friendship formation are expected. A recent study by Ho et al. (2018) revealed that people experienced as many emotional, relational, and psychological benefits when disclosing to a chatbot as to a human interaction partner. These findings may suggest that it is possible for humans to experience positive relational outcomes when talking to a chatbot. Hence, our final research question is as follows:

Method

Participants and procedure

The sample in this study consisted of 118 participants (47 men; 71 women) whose age ranged from 18 to 58 years old (M = 23.51; SD = 6.56). The majority indicated they had a diploma obtained at university (54.2%) or at an upper secondary education institution (44.9%). When asked how frequently participants interacted with chatbots, only four participants (3.4%) indicated they did so daily. Most participants were not experienced in interacting with chatbots as they indicated they only interacted with chatbots several times a year (50%), followed by never (36.4%). The most frequently mentioned motives for interacting with a chatbot were customer service (54.2%), fun/entertainment (19.5%), and online shopping (16.9%).

This is an explorative study with a longitudinal research design, led by six experimenters, who recruited participants through a participant pool at a university and through convenience sampling. Participants participated voluntarily or were given course credit for their participation. In order to participate in the study, they had to be able to speak and write in English, as the chatbot used in this study only spoke English, and they had to have Facebook Messenger or Telegram installed on their phone or computer. The chatbot used in this study is called Mitsuku (https://www.pandorabots.com/mitsuku/) and is easily accessible on these applications. Mitsuku presents herself as an 18-year-old female chatbot from the city of Leeds. She was developed in 2005 by Steve Worswick and has since won the Loebner prize five times (in 2013, 2016, 2017, 2018, and 2019), which is awarded to the most human-like system at an international competition between developers of computer applications.

Participants first joined an information session on campus, where groups of 25 participants filled out a consent form, to signify their participation in the study, and their consent for the use of the data obtained. Next, they were informed about the study and asked to fill out a pretest questionnaire with demographic questions and questions about their experience with chatbots. Only 1 participant indicated that he/she had already interacted with Mitsuku before the start of the study and this participant was thus excluded from further analyses. After filling out the questionnaire, participants were asked to install Mitsuku on their phone or computer and told that their first interaction with Mitsuku would be held a few days later.

All interactions had to last at least 5 minutes for participants to receive credit for their participation. This was checked by looking at the date and time stamps of all interactions. The interactions were held every 3 days over a 3-week period, as research shows relationships take 3–9 weeks to develop (Hays, 1984). Between each interaction, there was a 2-day interval where no interaction took place. During the interactions, participants were simply asked to talk to Mitsuku and get acquainted with her. On the day of the interaction, participants received a reminder that they had to interact with Mitsuku. After each interaction, participants filled out a short questionnaire. A day after the seventh and final interaction, a post-test questionnaire was filled out with questions about the chatbot and questions measuring participants’ feelings of friendship toward the chatbot.

Self-report measures

The variables social attraction, self-disclosure, intimacy, quality, empathy, and communication competence were measured seven times, after each interaction with Mitsuku, while feelings of friendship was measured once, in the post-test questionnaire.

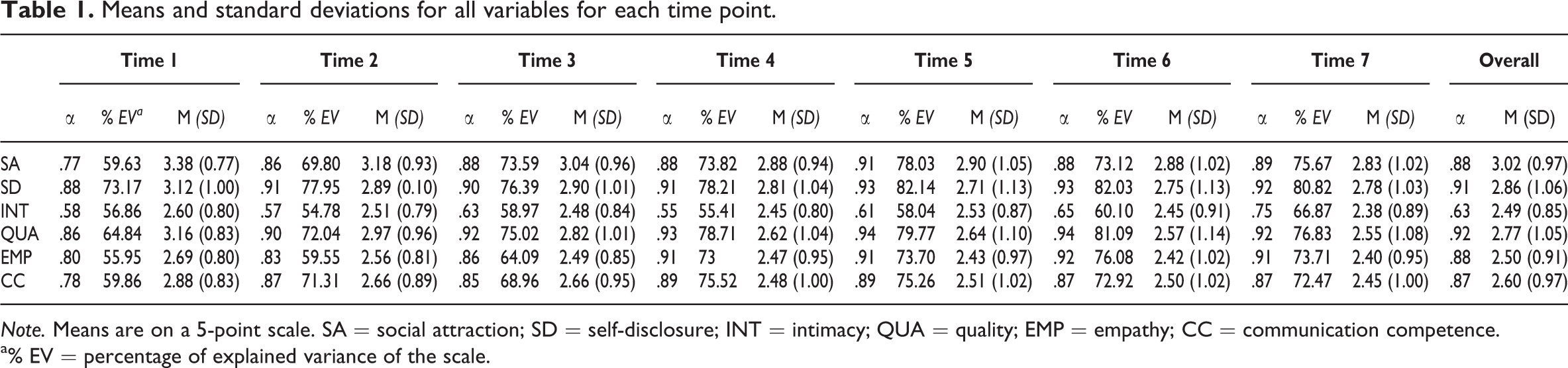

Social attraction

The social attraction of participants toward Mitsuku was based on the social attraction scale by McCroskey et al. (2006). Participants were asked to what extent they agreed with the following statements: “Mitsuku is pleasant to be with,” “Mitsuku is sociable with me,” “Mitsuku is easy to get along with,” and “Mitsuku is not very friendly” (reverse-coded). The response categories ranged from 1 (completely disagree) to 5 (completely agree). For the means, standard deviations and Cronbach’s alpha for each time point for all variables, see Table 1.

Table 1. Means and standard deviations for all variables for each time point.

Note. Means are on a 5-point scale. SA = social attraction; SD = self-disclosure; INT = intimacy; QUA = quality; EMP = empathy; CC = communication competence.

a. % EV = percentage of explained variance of the scale.

Self-disclosure

The self-disclosure of participants during the interactions was measured based on Ledbetter (2009). Participants were asked to what extent they agreed with the following statements: “I felt like I could be personal during the interaction,” “I felt comfortable disclosing personal information during the interaction,” “It was easy to disclose personal information in the interaction,” and “I felt like I could be open during the interaction.” The response categories ranged from 1 (completely disagree) to 5 (completely agree).

Intimacy

Interaction intimacy was measured with three statements based on Nowak and Biocca (2003). Participants were asked to indicate to what extent they agreed with those statements. The response categories ranged from 1 (completely disagree) to 5 (completely agree). The items were “I experienced the interaction as intimate,” “I felt involved in the interaction,” and “I experienced the interaction as superficial” (reverse-coded).
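The handling of reverse-coded items in the scales above can be illustrated with a minimal sketch. It assumes that a reverse-coded item on the 1 to 5 response scale is flipped as 6 minus the raw score and that a scale score is the mean of its items; the text reports means on a 5-point scale but does not spell out the scoring procedure, so both steps are assumptions. The item labels and responses are hypothetical.

```python
from statistics import mean

SCALE_MIN, SCALE_MAX = 1, 5  # response range reported for all scales


def reverse_code(score: int) -> int:
    """Flip a response on the 1-5 scale (1 <-> 5, 2 <-> 4, 3 stays 3)."""
    if not SCALE_MIN <= score <= SCALE_MAX:
        raise ValueError(f"score {score} is outside {SCALE_MIN}-{SCALE_MAX}")
    return SCALE_MIN + SCALE_MAX - score


def scale_score(responses: dict[str, int], reversed_items: set[str]) -> float:
    """Average the items of one scale after flipping the reverse-coded ones.

    Averaging is an assumption; the source does not state how items were combined.
    """
    adjusted = [
        reverse_code(value) if item in reversed_items else value
        for item, value in responses.items()
    ]
    return mean(adjusted)


# Hypothetical responses of one participant to the three intimacy items.
example_responses = {"intimate": 2, "involved": 3, "superficial": 4}
intimacy_score = scale_score(example_responses, reversed_items={"superficial"})
print(f"intimacy score: {intimacy_score:.2f}")
```

Here agreeing with "superficial" (raw score 4) counts as low intimacy (recoded 2), so the three items point in the same direction before they are combined.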

Interaction quality

Interaction quality was based on Berry and Hansen (2000). Participants were asked to what extent they agreed with the statements, on a 5-point scale, ranging from 1 (completely disagree) to 5 (completely agree). The items were “I enjoyed the interaction with Mitsuku,” “I considered the interaction with Mitsuku to be smooth, natural, and relaxed,” “I would like to interact with Mitsuku again,” “I feel the interaction with Mitsuku was satisfying,” and “I consider the interaction with Mitsuku to be pleasant.”

Empathy

Empathy was measured based on research by Stiff et al. (1988) and asked participants to what extent they agreed with these statements: “Mitsuku said the right thing to make me feel better,” “Mitsuku responded appropriately to my feelings and emotions,” “Mitsuku came across as empathic,” “Mitsuku said the right thing at the right time,” and “Mitsuku was a good listener.” The response categories ranged from 1 (completely disagree) to 5 (completely agree).

Communication competence

This scale was based on Demeure et al. (2011) and the items were: “Mitsuku communicated properly,” “Mitsuku communicated correctly,” “Mitsuku came across as competent,” and “Mitsuku came across as believable.” The response categories ranged from 1 (completely disagree) to 5 (completely agree).

Feelings of friendship

Whether people experienced feelings of friendship toward Mitsuku was measured once, after the final interaction, with the social attraction scale from the measurement of interpersonal attraction (McCroskey et al., 2006). Some items were designed specifically for this study. The final measurement included the following items: “I would be upset if I could not interact with Mitsuku again,” “I feel like Mitsuku is my friend,” “I will continue to interact with Mitsuku in the future,” “I think Mitsuku could be a friend of mine,” “Mitsuku and I could never establish a personal friendship with each other” (reverse-coded), and “I could become close friends with Mitsuku.” The response categories ranged from 1 (completely disagree) to 5 (completely agree) and the items formed a one-dimensional scale with a Cronbach’s alpha of .86 (M = 1.66; SD = 0.72).
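For readers unfamiliar with the reliability coefficient reported above, the following sketch shows how Cronbach's alpha is commonly computed from item-level responses, using the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of the sum score). The response matrix is hypothetical (the study's raw data are not given here), so the printed value does not reproduce the reported .86.

```python
from statistics import variance


def cronbach_alpha(rows: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents x items matrix (sample variances)."""
    n_items = len(rows[0])
    if n_items < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    item_variances = [variance(column) for column in zip(*rows)]
    total_variance = variance([sum(row) for row in rows])
    return n_items / (n_items - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical data: five respondents, six friendship items on a 1-5 scale,
# with the reverse-coded item assumed to be flipped already.
hypothetical_responses = [
    [1, 2, 1, 2, 1, 1],
    [2, 2, 3, 2, 2, 3],
    [1, 1, 1, 1, 2, 1],
    [3, 4, 3, 3, 4, 3],
    [2, 1, 2, 2, 1, 2],
]
alpha = cronbach_alpha(hypothetical_responses)
print(f"Cronbach's alpha (hypothetical data): {alpha:.2f}")
```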

Results

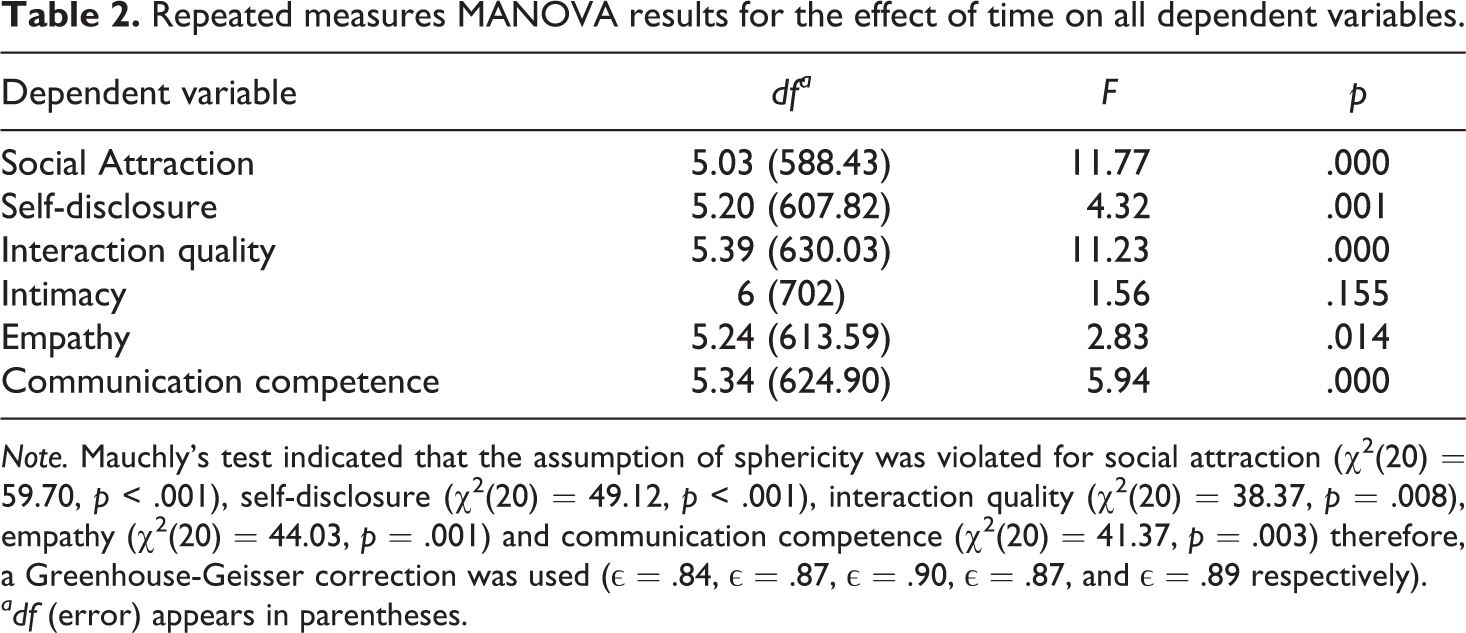

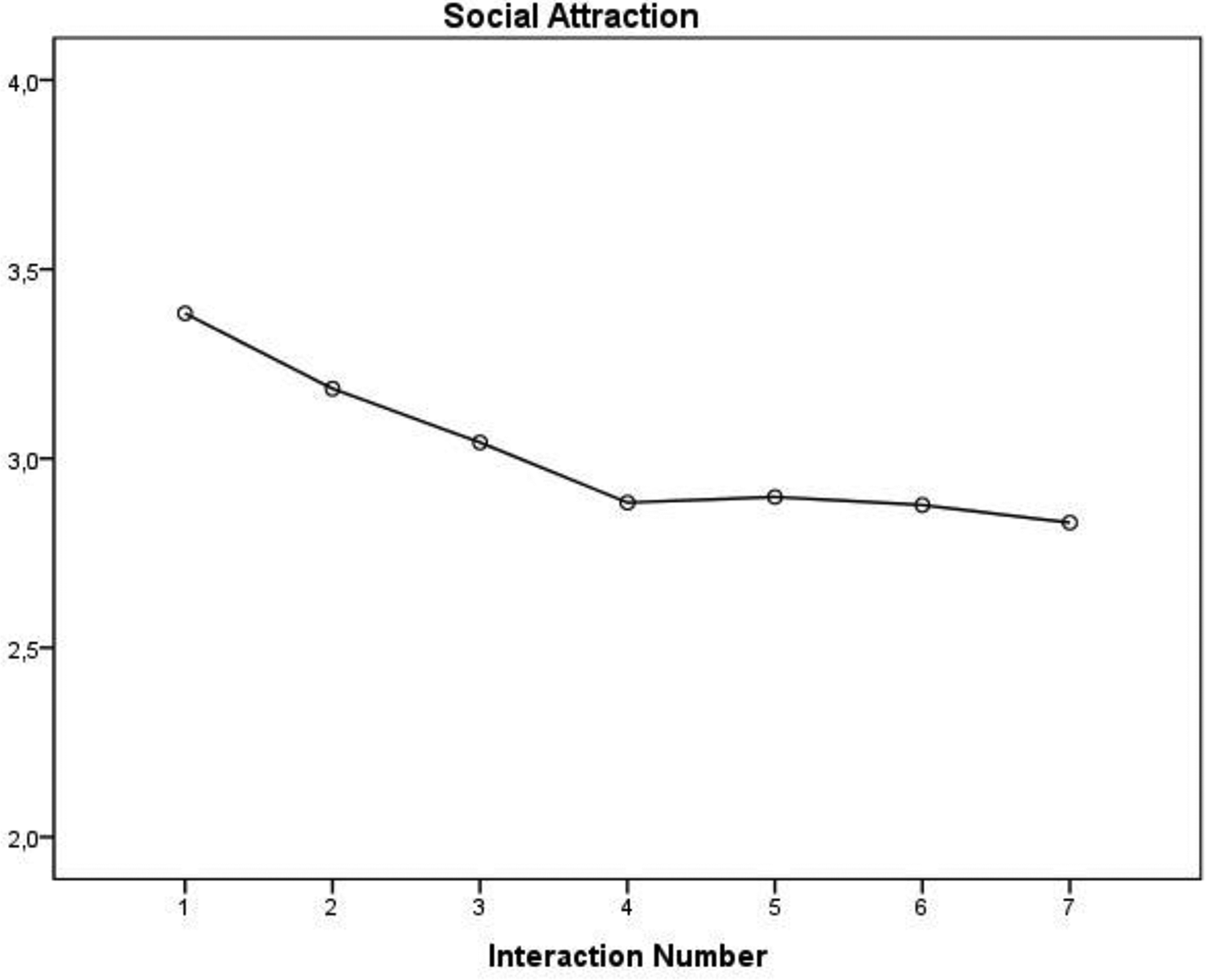

To test RQ1–RQ4 we conducted a repeated measures MANOVA with time (seven time points) as a within-subject factor and social attraction, self-disclosure, intimacy, quality, empathy, and communicative competence as the dependent variables. Additionally, we ran simple contrasts comparing each earlier time point to the last time point (7), which served as the reference category. Since we ran six contrasts, we applied the Bonferroni correction, which set the appropriate alpha level to .008 (.05/6). The analysis revealed that there was a significant effect of time on social attraction, self-disclosure, interaction quality, empathy, and communication competence (see Table 2 for the statistics). More specifically, simple contrasts revealed that social attraction at time point 1 (M = 3.38; SD = 0.77) and time point 2 (M = 3.18; SD = 0.93) differed significantly from time point 7 (M = 2.83; SD = 1.02). These results show that social attraction was significantly higher at time point 1 (F(1, 117) = 39.65, p < .001) and time point 2 (F(1, 117) = 15.14, p < .001) than at the seventh time point, indicating a decrease over time. The results are visualized in Figure 1.
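The Bonferroni adjustment described above amounts to dividing the familywise alpha by the number of contrasts. The sketch below reproduces that arithmetic and applies the adjusted threshold to a few p-values reported in the Results text; the final p-value of .03 is hypothetical and included only to show a contrast that would not pass. Function and variable names are illustrative.

```python
FAMILYWISE_ALPHA = 0.05
N_CONTRASTS = 6  # time points 1-6, each compared with time point 7


def bonferroni_alpha(alpha: float, n_tests: int) -> float:
    """Per-test alpha level under the Bonferroni correction."""
    if n_tests < 1:
        raise ValueError("n_tests must be at least 1")
    return alpha / n_tests


adjusted_alpha = bonferroni_alpha(FAMILYWISE_ALPHA, N_CONTRASTS)

# p-values as reported in the text for contrasts against time point 7.
reported_p_values = {
    "self-disclosure, T1 vs T7": 0.002,
    "interaction quality, T3 vs T7": 0.006,
    "communication competence, T3 vs T7": 0.006,
}
# Hypothetical p-value (not from the study), shown for contrast only.
hypothetical_p_value = 0.03

significant = {
    label: p < adjusted_alpha for label, p in reported_p_values.items()
}

print(f"adjusted alpha = {adjusted_alpha:.4f}")
for label, is_significant in significant.items():
    print(f"{label}: significant = {is_significant}")
print(f"hypothetical p = {hypothetical_p_value}: "
      f"significant = {hypothetical_p_value < adjusted_alpha}")
```

A p-value of .03 would be significant at the uncorrected .05 level but not at the corrected level, which is why contrasts are only reported as significant when p falls below roughly .008.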

Table 2. Repeated measures MANOVA results for the effect of time on all dependent variables.

Note. Mauchly’s test indicated that the assumption of sphericity was violated for social attraction (χ²(20) = 59.70, p < .001), self-disclosure (χ²(20) = 49.12, p < .001), interaction quality (χ²(20) = 38.37, p = .008), empathy (χ²(20) = 44.03, p = .001), and communication competence (χ²(20) = 41.37, p = .003); therefore, a Greenhouse-Geisser correction was used (ε = .84, ε = .87, ε = .90, ε = .87, and ε = .89, respectively).

a. df (error) appears in parentheses.
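As an illustration of what the Greenhouse-Geisser correction in the note does, the sketch below multiplies the uncorrected degrees of freedom of the within-subject time effect by ε. It assumes k = 7 time points and n = 118 participants with complete data (consistent with the contrast df of 117 in the text). Because Table 2 is not reproduced here and the ε values are rounded, the resulting df are illustrative and may differ slightly from the reported ones. The same sketch checks the df of Mauchly's test, k(k − 1)/2 − 1.

```python
N_TIME_POINTS = 7     # within-subject levels
N_PARTICIPANTS = 118  # assumed complete cases, matching F(1, 117) in the text

# Greenhouse-Geisser epsilon values as listed in the table note.
EPSILONS = {
    "social attraction": 0.84,
    "self-disclosure": 0.87,
    "interaction quality": 0.90,
    "empathy": 0.87,
    "communication competence": 0.89,
}


def mauchly_df(k: int) -> int:
    """Degrees of freedom of Mauchly's sphericity test for k levels."""
    return k * (k - 1) // 2 - 1


def corrected_df(epsilon: float, k: int, n: int) -> tuple[float, float]:
    """Epsilon-adjusted (effect, error) df for a one-way within-subject effect."""
    df_effect = epsilon * (k - 1)
    df_error = epsilon * (k - 1) * (n - 1)
    return df_effect, df_error


print(f"Mauchly df = {mauchly_df(N_TIME_POINTS)}")
for variable, epsilon in EPSILONS.items():
    df_effect, df_error = corrected_df(epsilon, N_TIME_POINTS, N_PARTICIPANTS)
    print(f"{variable}: df = {df_effect:.2f}, df (error) = {df_error:.2f}")
```

The correction leaves the F value itself unchanged; it only makes the reference distribution more conservative by shrinking both df.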

Figure 1. Social attraction.

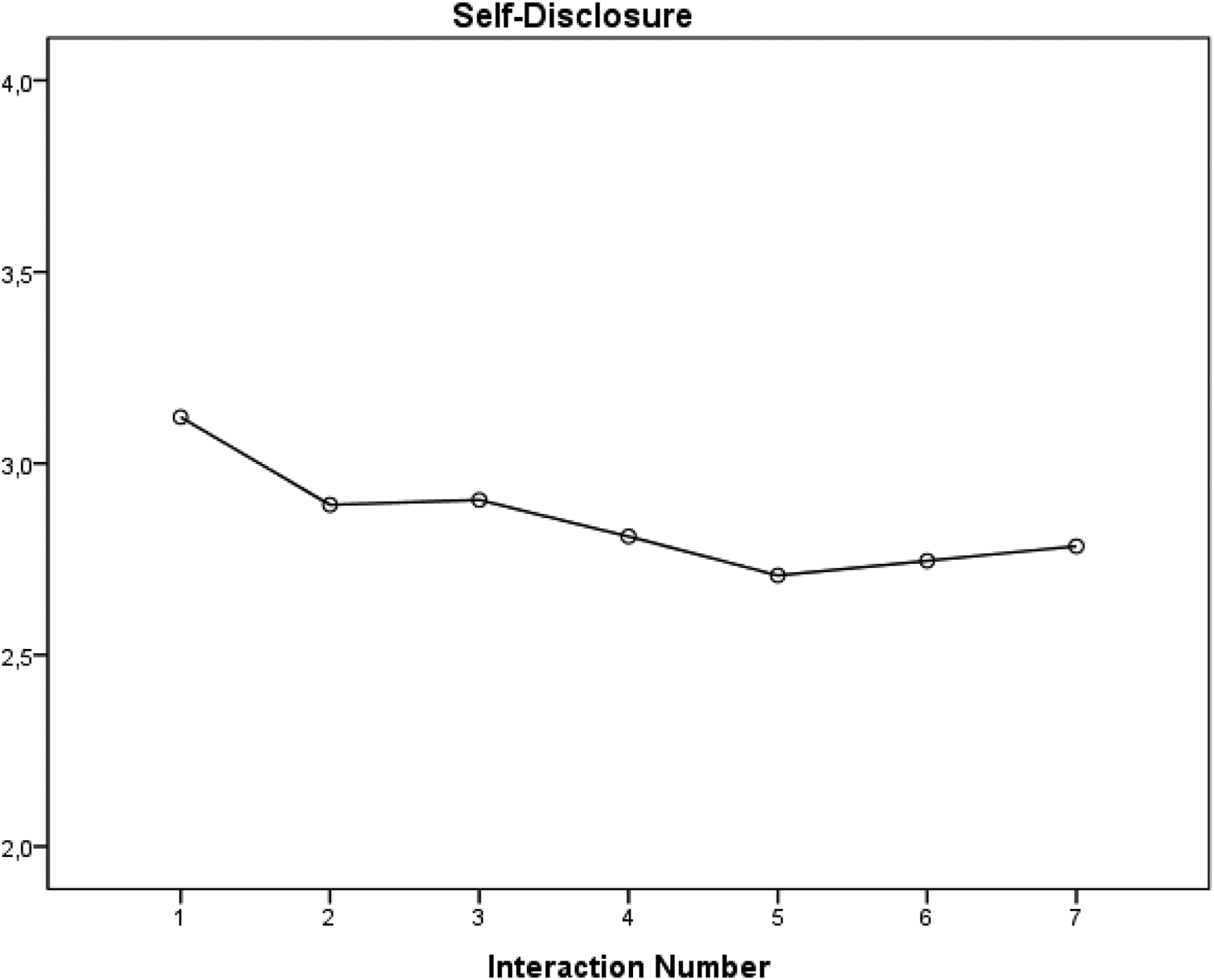

For self-disclosure, simple contrasts revealed that there was a significant difference only between time point 1 (M = 3.12; SD = 1.00) and time point 7 (M = 2.78; SD = 1.03) regarding the level of self-disclosure, F(1, 117) = 9.71, p = .002. There were no significant differences between the other time points. The results reveal that participants’ level of self-disclosure significantly decreased when comparing time point 1 to time point 7 (see Figure 2).

Figure 2. Self-disclosure.

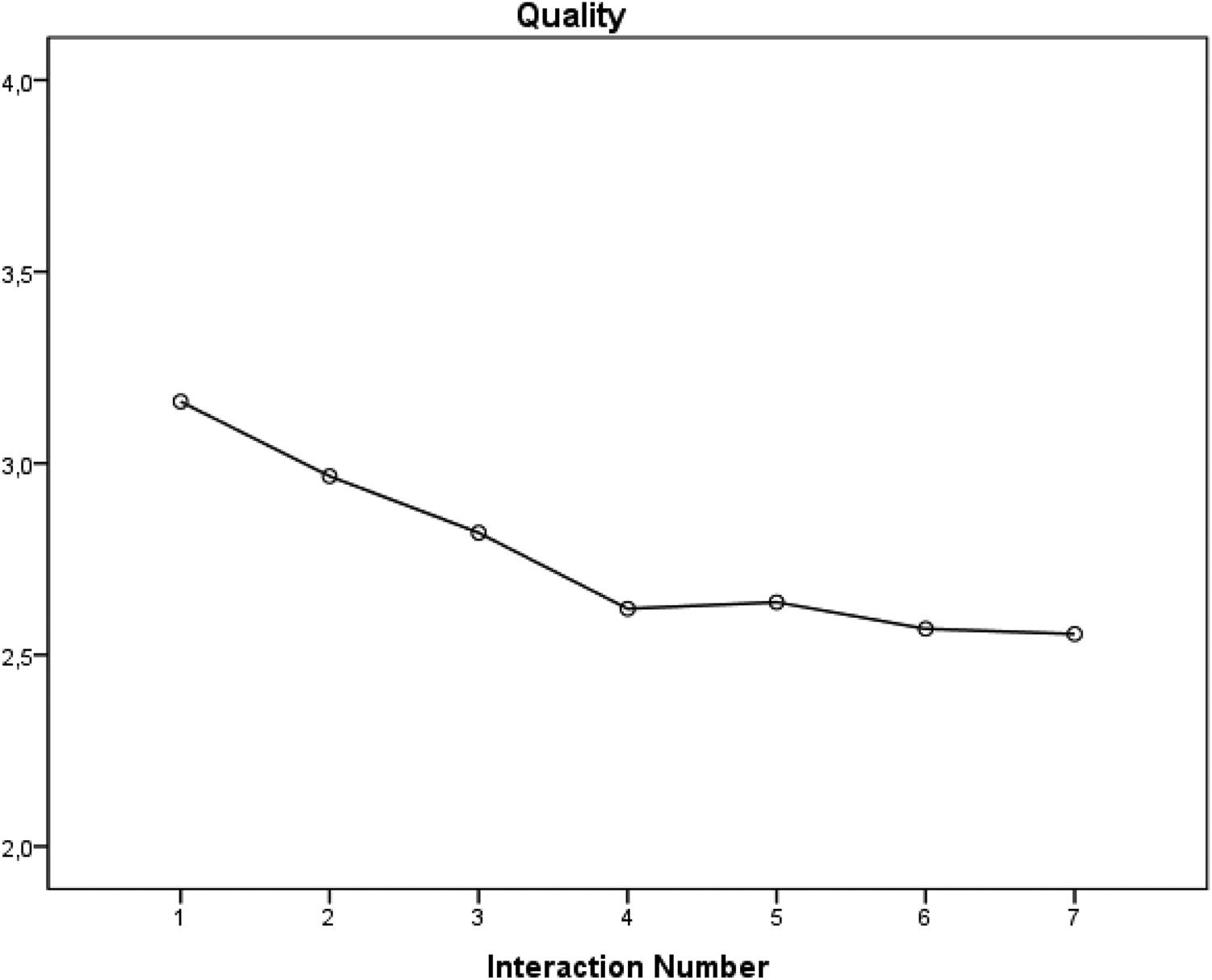

For interaction quality, simple contrasts revealed that there was a significant difference between time point 1 (M = 3.16; SD = 0.83), time point 2 (M = 2.97; SD = 0.96), and time point 3 (M = 2.82; SD = 1.01), as compared to time point 7 (M = 2.55; SD = 1.08). The results show that there was a significant decrease in the perceived quality of the interactions over time point 1 (F(1, 117) = 38.49, p < .001), time point 2 (F(1, 117) = 16.34, p < .001) and time point 3 (F(1, 117) = 7.85, p = .006) compared to the seventh time point (see Figure 3).

Figure 3. Interaction quality.

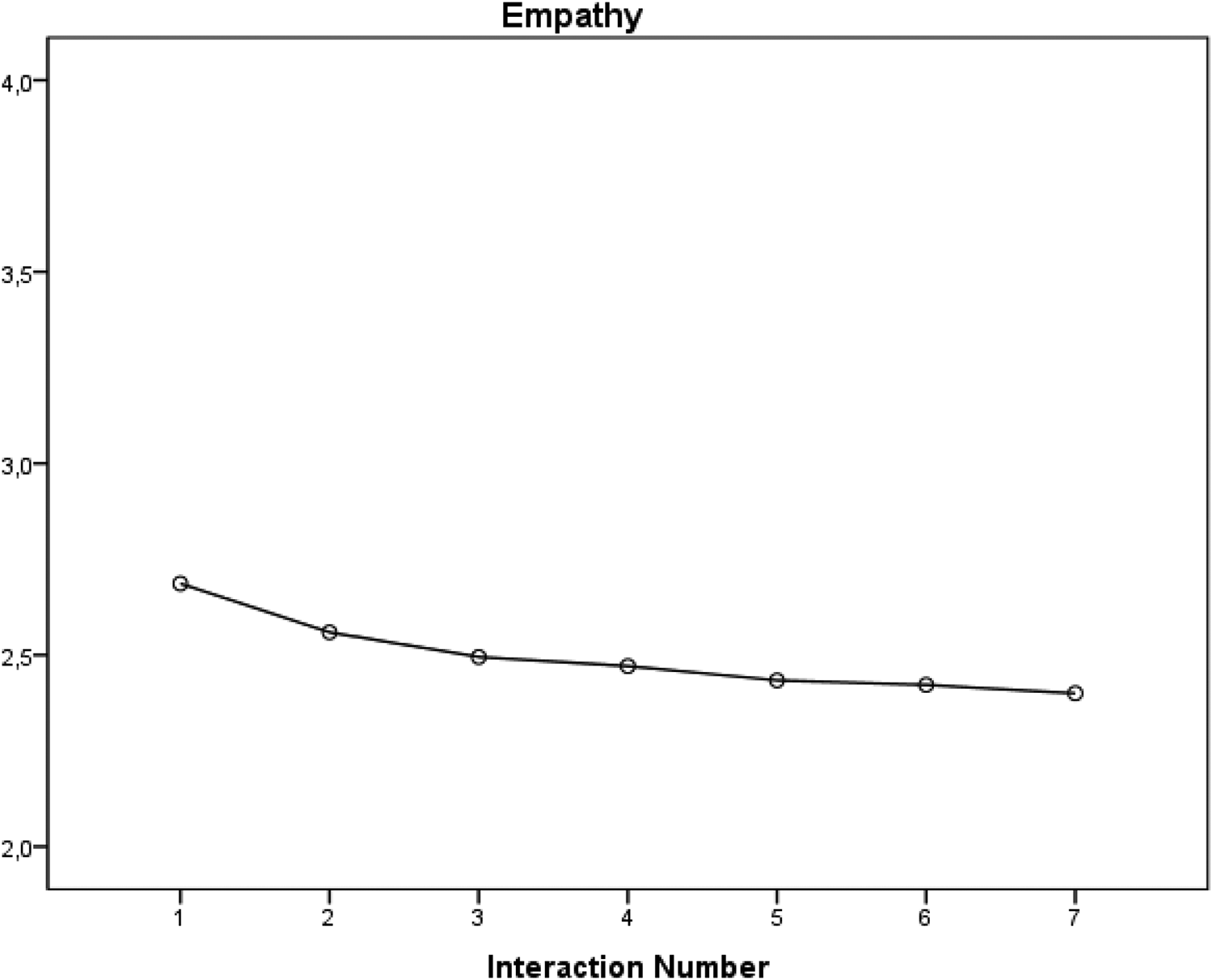

For the analyses of time on perceived empathy, contrasts revealed that there was a significant difference between time point 1 (M = 2.69; SD = 0.80) and time point 2 (M = 2.56; SD = 0.81), compared to time point 7 (M = 2.40; SD = 0.95). This suggests that people’s perceptions of empathy of the chatbot significantly decreased when comparing time point 1 (F(1, 117) = 9.99, p = .002) to the seventh time point (see Figure 4).

Empathy.

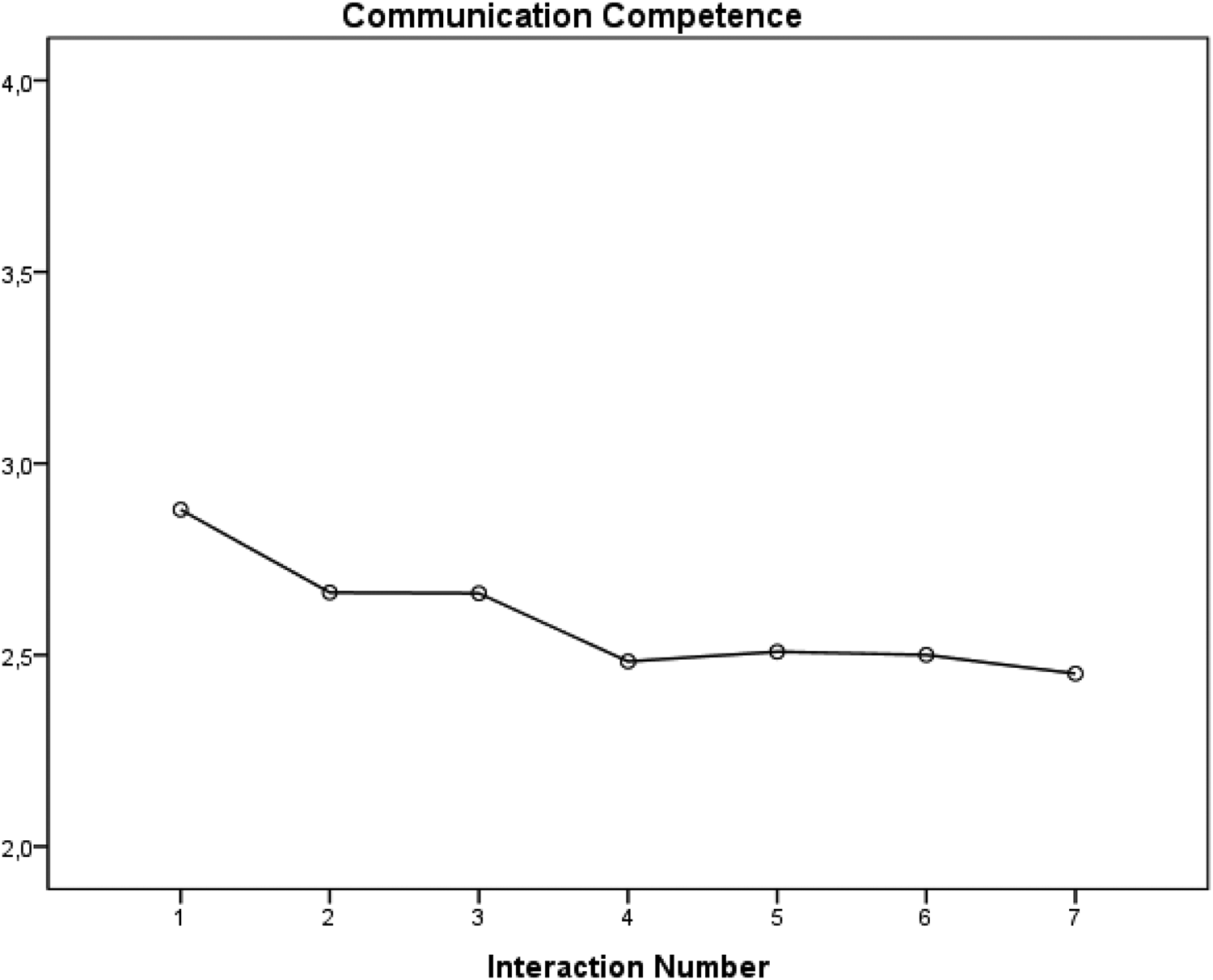

Finally, for communication competence, simple contrasts revealed that there was a significant difference between time point 1 (M = 2.88; SD = 0.83) and time point 3 (M = 2.66; SD = 0.95), compared to time point 7 (M = 2.45; SD = 1.00). This suggests that the level of perceived communication competence of the chatbot significantly decreased when comparing time point 1 (F(1, 117) = 20.39, p < .001) and time point 3 (F(1, 117) = 7.88, p = .006) to time point 7 (see Figure 5).

Communication competence.
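The simple contrasts reported above each compare one earlier time point with time point 7. A minimal sketch of such a contrast follows, assuming each contrast uses its own error term, as the reported F(1, 117) values suggest; in that case the F statistic equals the squared paired t statistic on the per-participant differences. The function name and the ratings in the usage example are hypothetical and are not the study's data.

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def simple_contrast_f(
    earlier: Sequence[float], reference: Sequence[float]
) -> Tuple[float, int, int]:
    """Contrast one time point against a reference time point.

    Returns (F, df1, df2), where F is the squared paired t statistic on the
    per-participant differences, df1 = 1, and df2 = n - 1.
    """
    if len(earlier) != len(reference):
        raise ValueError("both time points need one score per participant")
    diffs = [e - r for e, r in zip(earlier, reference)]
    n = len(diffs)
    if n < 2:
        raise ValueError("at least two participants are required")
    sd = stdev(diffs)  # sample standard deviation of the differences
    if sd == 0:
        raise ValueError("differences have zero variance; F is undefined")
    t = mean(diffs) / (sd / sqrt(n))
    return t * t, 1, n - 1


if __name__ == "__main__":
    # Hypothetical ratings for five participants (illustration only).
    time_1 = [3, 4, 2, 5, 3]
    time_7 = [2, 4, 1, 3, 3]
    f_value, df1, df2 = simple_contrast_f(time_1, time_7)
    print(f"F({df1}, {df2}) = {f_value:.2f}")
```

With 118 participants providing complete data, the error degrees of freedom are 117, which is consistent with the values reported in the text.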

The final research question (RQ5) asked to what extent people experience feelings of friendship toward Mitsuku. The descriptive statistics reveal that the overall score of feelings of friendship after the final interaction was very low, M = 1.66; SD = 0.72 (on a scale of 1 to 5). Thus, it seems that, after having had multiple interactions with a social chatbot, humans did not experience feelings of friendship toward the chatbot.

Discussion

The aim of this explorative study was to investigate (a) what social processes may play a role in getting-acquainted interactions between humans and a chatbot, and (b) whether it is possible for humans to build a relationship with a chatbot.

First, our results reveal that, apart from intimacy, all social processes associated with relationship formation decreased over time: people were less socially attracted toward the chatbot, self-disclosed less, regarded the interactions as lower in quality, and found the chatbot less empathic and less communicatively competent. The level of perceived intimacy was not found to differ between interactions, which means that participants perceived all chatbot interactions as equally intimate (with scores ranging from 2.38 to 2.60 on a 5-point scale). It may be that participants were not prompted by the chatbot to engage in intimate self-disclosure. Specifically, the chatbot’s questions may have been of a superficial nature. In studies where participants were found to disclose intimately to a virtual agent, they were often prompted to do so, for instance by the agent asking intimate questions (e.g., Lucas et al., 2014). People match the intimacy level of initial disclosures, which enhances social attraction (Collins & Miller, 1994). Thus, if a chatbot does not ask questions that prompt intimate disclosures, or disclose personal information about herself, the topic of the interaction remains superficial.

Second, our results show that, after multiple interactions with a social chatbot, the participants did not perceive the chatbot as their friend. Hence, we conclude that people are not yet capable of developing feelings of friendship toward a social chatbot. This can be explained by the SPT (Altman & Taylor, 1973), which states that for a friendship to develop, intimate interpersonal communication is needed, which primarily occurs through intimate self-disclosure. However, in the interactions in our study, intimacy did not increase and the participants disclosed less over the seven interactions. According to Beran (2018), complex relationships like friendships exist because of interpersonal exchanges between people with faces, souls, and personalities. Additionally, these exchanges are often led by emotions and may contain jokes or implicit language, layers often lacking in chatbot communication. Thus, although a social chatbot can be programmed to use natural language and to mimic human communication (Edwards et al., 2014), according to our study, this is not enough for feelings of friendship to occur.

Thus, our findings show that the social processes shown to aid relationship formation decrease after multiple interactions with a social chatbot. In line with Levinger’s (1980) ABCDE model, the social processes that aid the satisfying continuation of a relationship decrease, which ultimately leads to deterioration of the relationship (phase D in the model). The ratings of the social processes were all relatively high after the first interaction, suggesting that the expectations people had of the chatbot after the first interaction were high; apparently, these expectations decreased after multiple interactions. This is in contrast with HMC research, which suggests that, given enough time, people are expected to come to similar levels of interpersonal outcomes when talking to both robots and people (Spence et al., 2014). However, when two humans get acquainted, each subsequent interaction contributes to a shared experience and a common history between two people. Intimate details and experiences are shared, memories are made and in doing so, people get more acquainted and become closer (Clark & Brennan, 1991). In chatbot communication, there is no element of shared experience and chatbots are unable to reference earlier conversations (Hill et al., 2015). Moreover, participants commented that they “expected a bit too much from Mitsuku” and that “if she had more of a story to her, some history, she’d have more to talk about,” suggesting that this element was missing from the interactions. Additionally, participants commented that interactions felt “very robotic and standardized” and that the chatbot “did not recognize sarcasm and a lot of other aspects in language comprehension.” Thus, it seems that the conversations with the chatbot lacked depth and humanness, which suggests that the chatbot was not advanced enough for interactants to become socially attracted toward her.

In sum, the findings show that in human-chatbot interactions important social processes like self-disclosure and interaction quality decrease over time, which may explain why people in this study did not develop feelings of friendship toward a social chatbot. If we assume that the chatbot’s interaction processes remained constant, it may be that the participants’ expectations of the chatbot after each interaction decreased. Thus, the participants expected less and less from the chatbot after each interaction, which could have affected how they communicated with and reacted to the chatbot. This, in turn, explains the low score in feelings of friendship after the final interaction with the bot.

Theoretical and practical implications

The main implication of the current study is that this particular chatbot, Mitsuku, is not humanlike enough for humans to develop feelings of friendship toward the bot. Based on the results of this study and feedback from the participants, we can suggest some reasons, namely that the chatbot has no memory, lacks humor and empathy, and is too superficial. These aspects hinder the process of relationship formation between humans and a social chatbot. Thus, according to the social exchange theory (Emerson, 1976), the costs outweigh the rewards of this relationship as the efforts of the participants are not reciprocated by the chatbot. Furthermore, a chatbot needs to not only understand the meanings of words and sentences but also grasp the variability with which words are used in human language to communicate meaning (Hill et al., 2015). For instance, the chatbot is unable to understand sarcasm and lacks the ability to detect certain human emotional needs (Shum et al., 2018). These needs may also differ depending on the person the chatbot is talking to, which suggests that there is a need to develop a social chatbot that matches an individual’s personality (Chang et al., 2008).

Second, it seems a novelty effect is at play when it comes to interactions between humans and social chatbots. An important characteristic of any new technology is novelty (i.e., the newness of the technology in the eyes of the adopter; Wells et al., 2010). After the curiosity of the new technology (i.e., the chatbot) vanishes, and humans are confronted with the reality of the ability of the technology (i.e., how the chatbot actually communicates), they perceive lower usefulness of that technology. The expectancies people initially had about that technology (i.e., the chatbot) are violated. This is in line with expectancy violations theory, suggesting that when someone acts differently in an interaction than you initially expected, this violates your expectations of that person (Burgoon & Hale, 1988). Specifically, these negative violations lead to more unfavorable outcomes, compared to when behavior matches an individual’s expectations. This is also in line with Levinger’s (1980) ABCDE model, which explains that relationships end in the final stage (E) because of a decline in rewarding outcomes. Our findings show that participants’ ratings of most social processes examined here were above average after the first interaction, suggesting that participants enjoyed communicating with the chatbot and had high expectations for future interactions. However, these perceptions decreased over time, which indicates a negative violation valence. After multiple interactions individuals become aware of the chatbot’s capabilities as well as its limitations. As a result, people become disillusioned; they start off with high expectations of what the chatbot is capable of, but this deteriorates as the chatbot becomes more predictable and less empathic, making the interactions less enjoyable.

Furthermore, our findings have implications for Nass and Moon’s (2000) CASA paradigm, which states that people react in a social manner to computers and other technologies as if they were real people. According to this paradigm, people apply the same social rules when interacting with a computer as they would when interacting with another person. Our findings show that, after an initial interaction with a chatbot, people’s expectations are indeed high and individuals rate their interactions with the chatbot as high in quality and intimacy, and view the chatbot as empathic, communicatively competent, and likeable. However, after multiple interactions the chatbot becomes predictable, robotic, and superficial. Although this may seem to contradict the CASA paradigm, if people were having the same predictable, superficial interactions with another human they would likely respond similarly. Hence, it seems the same social rules indeed apply in human-chatbot interactions as in human-human interactions.

Finally, our findings have practical implications for chatbot developers. Technical issues, such as repetitive and predictable dialogue, inappropriate responses, and misunderstandings, inhibit the process of human-chatbot friendship development. These issues need to be solved if social chatbots are to take on the role of a long-term companion or a friend. Specifically, social chatbots need to be designed so that they can tailor their language to an individual, by getting to know individual users and building a shared experience on what has been said in earlier conversations. Furthermore, by including elements like reciprocal self-disclosure and enabling chatbots to distinguish between individual users and target their responses accordingly, the social processes analyzed in this study will be able to develop in a similar way as in human-human interactions. As a result, the interactions will become more personal and feelings of friendship might be able to grow.

Limitations and suggestions for future research

This study is one of the first to employ a longitudinal research design and examine multiple interactions between humans and a social chatbot, to determine whether it is possible for people to build a relationship with a chatbot. This study is, however, not without limitations. First, this research is an initial attempt to explore important social processes, derived from CMC and interpersonal relationship formation research, in human-chatbot interactions. Although our findings have important implications for human-chatbot communication, as multiple interactions reveal the wearing off of a novelty effect, it would be interesting to compare these interactions to human-human interactions. Future research could employ a longitudinal experiment, where humans have multiple interactions with chatbots and humans, to see whether the social processes examined here differ between the two types of interactions. Furthermore, these studies could code the interactions for behaviors such as self-disclosure. By comparing self-reported and actual data, we would gain a more coherent picture of the social processes at play in human-chatbot interactions.

Second, the low score in friendship formation may also be due to various user-, message-, and/or context-related factors. Due to the voluntary and changing nature of friendship, the development of a friendship does not necessarily follow a linear path. Particular “turning points” in people’s lives can cause relationships to move forward, or shift backward (Weigel & Murray, 2000). Furthermore, the degree and frequency of contact between two people building a friendship can vary and the process of friendship development occurs over multiple interactions (Finchum & Weber, 2000). Levinger’s (1980) ABCDE model of relationship development states that the continuation of relationships depends on, among other things, the frequency of interaction (Weigel & Murray, 2000). In this study, participants were asked to interact with the chatbot on specific days for at least 5 minutes and this may have influenced our results. People engaging with a chatbot in real life out of free will may exhibit different reactions to the chatbot than those found here. Future research could explore people’s motivations for freely interacting with social chatbots and their feelings of friendship toward the bot, considering different interaction frequencies and durations. Furthermore, these studies can compare multiple chatbots or even employ a Wizard of Oz paradigm (e.g., Ho et al., 2018), where people are made to believe they are talking to a chatbot (while they are talking to another human). Because of the current chatbot limitations, as shown in the results of the present study, a Wizard of Oz paradigm may give more insight into whether people are actually open to building a friendship with a chatbot.

Third, the decrease over time in the social processes examined in our study may also be due to an initial elevation bias (Shrout et al., 2018). This bias concerns high initial self-reported ratings regarding people’s thoughts and/or feelings, which then decline over time. Essentially this happens when people, at the first time point, exaggerate their current emotional states because they include only information they perceive to be relevant, which may involve information from the past or future. As a result, at later time points, they provide information they perceive to be new, thereby ignoring their current emotional state. This initial elevation bias may explain why the social processes we examined declined over time. However, this bias has been shown to be larger for behaviors and negative mental states, compared to positive states, which were examined in the current study. Furthermore, researchers suggest dropping the initial observation in repeated measures designs to adjust for the initial elevation bias, while our results also show a significant decline at later time points (after the first observation).

Finally, the finding that humans do not develop feelings of friendship toward a chatbot does not mean that chatbots cannot be suitable companions for, for instance, lonely people or people suffering from depression (e.g., Woebot). Analyzing lonely or socially anxious people in future studies may give more insight into which types of people do benefit from chatbot communication and whether some personality characteristics influence whether people do in fact develop some type of bond with a social chatbot.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Open research statement

As part of IARR’s encouragement of open research practices, the authors have provided the following information: This research was not pre-registered. The data used in the research are available. The data can be obtained by emailing