Abstract

For qualitative scholars, achieving interpretive depth when analyzing large global datasets represents a particular challenge. It is, however, a challenge worth navigating given the power of such datasets to provide a depth of insight into large-scale societal issues. Building on existing insights about qualitative analysis and examples from my own experience of large global research projects, here I seek to offer some advice in this regard. I provide three general heuristics that scholars can keep in mind as characterizing this endeavor: the hermeneutic circle, distributed cognition; and embracing doubt. I then show how these heuristics unfold practically, illustrating a four-step process that I hope is helpful for qualitative researchers seeking to balance depth and breadth to understand macro phenomena.

“I feel thin, sort of stretched, like butter scraped over too much bread.”—J.R.R. Tolkien, The Fellowship of the Ring.

Engaging large-scope phenomena requires innovative and specific qualitative methods (Hoon & Baluch, 2024; Marcus, 1995). In particular, gaining interpretive depth of insight into macro phenomena via large global-dispersed qualitative datasets represents unique challenges (Prasad & Shadnam, 2023). It can be important to seek

The transition from my PhD research to participating in a global ethnography illustrates this challenge. During my doctoral study, I meticulously conducted and transcribed each interview and read each transcript multiple times, allowing for deep immersion in all “parts” of the data. During the analytical process, I could readily recall every detail from across my interviews, which facilitated interpretive leaps and connections across data points. This contrasted with my subsequent role as a team member collecting, managing, and analyzing a significantly larger global dataset. Grappling with the vastness of the new dataset was daunting. Achieving the same level of intimacy with every aspect of the dataset that I have been used to in my PhD project was impossible. This led to increased doubt for me at an individual level as an interpreter of that data (Locke et al., 2008), especially given I was immersed in some, rather than all, the specific local sites making up the global dataset (Jarzabkowski et al., 2015a). However, with time, I developed a deep understanding of the globally dispersed practices we were studying, albeit through a different process than that of my PhD research. Here I seek to reflect on what that process entailed, in the hope that it helps others similarly confronted with analyzing such large and dispersed datasets.

This endeavor is important because, as organizational and management scholars, we should seek an understanding of the most important issues facing humanity (Harley & Fleming, 2021). Such complex grand challenges like inequality, public health, polarization, and the climate crises (Amis & Janz, 2020; Ferraro et al., 2015; George et al., 2016) are large-scope phenomena that evolve across different geographical and institutional boundaries and involve multiple interconnected stakeholders and organizations (Jarzabkowski et al., 2019; Waddock et al., 2015). Thus, the study of global challenges, including overtime, can benefit from larger globally dispersed datasets (Hoon & Baluch, 2024). Yet, despite this need for breadth and scale in certain research projects, it is also important that we do not forgo the interpretive depth of insight into these challenges. Localization remains central to the power of qualitative methods (Gephart Jr, 2004; Van Maanen, 2011a) to develop insights via immersion “in the symbolic/cultural world that is under investigation and unearth the underlying webs of identities, relations, and practices” (Prasad & Shadnam, 2023, p. 841).

Balancing depth and breadth is central, even if only implicitly, to many discussions about qualitative analysis (Howard-Grenville et al., 2021). Below I therefore weave together existing insights within organizational research methods to offer some non-prescriptive general reflections for navigating the specific demands in this regard of global datasets. Which represents an extreme case of the depth versus breadth quandary. In this sense, mine is a bricolage approach, involving combining various analytical insights and itself should be viewed as a general roadmap to be reworked as required to suit the demands of a specific project (Pratt et al., 2022). In this regard, I will first introduce what I mean by large global datasets via the example of two research projects I have been involved in and upon which this reflection is based. Second, I will propose three heuristics for researchers interpreting large global qualitative datasets of various forms to be guided by. Third, I will illustrate four practical steps that represent one approach to analyzing large global datasets. Finally, I will bring these together via a framework and some concluding remarks.

Large-Scale Qualitative Research Projects: An Example

I have been a team-member on two research projects involving large global qualitative datasets, and these serve as examples of the type of research projects I am discussing here. “Project 1” was a global team ethnography into reinsurance trading practices (Jarzabkowski et al., 2015b). This consisted of 935 formal observation fieldnotes (plus another 146 additional field interactions with associated fieldnotes) and 431 interviews. Fieldwork was conducted by five of us and spanned 23 countries (Jarzabkowski et al., 2015b). “Project 2” is a research project into insurance “Protection Gap Entities” (PGEs) in the context of escalating risk globally. These are not-for-profit entities set up, usually by governments, to provide insurance protection that would otherwise be unavailable within a purely private sector context. This global project involved four of us collecting interviews (460 interviews), observation (148 separate events) and documentary data (956 documents) across 49 countries and 17 “protection gap entities” (Jarzabkowski et al., 2023).

These projects will be further referenced below as illustrative examples. The foundation for interpreting this data was a deep immersive understanding of micro-practices, critical incidents, and local sites. Yet at the same time, our interpretative understanding was based on an inductive analysis of the entire dataset to access the “globality” and global interconnections of the focal phenomenon (e.g., Jarzabkowski & Bednarek, 2018; Jarzabkowski et al., 2015b, 2023). Below I bring together some lessons learnt regarding the general analytical process common to both projects. A process through which—among a milieu of sites, data points, and team members—researchers can gain interpretive depth of insight into global phenomenon.

Some General Heuristics for Approaching the Interpretation of Large Global Datasets

I suggest three general heuristics to guide scholars analyzing large globally dispersed datasets, while seeking interpretive depth of insight into macro phenomena. These are: the hermeneutic circle (Gadamer, 1989), distributed cognition (Hutchins, 1995), and the link between doubt and discovery (Locke et al., 2008).

First, the “hermeneutic circle,” which depicts interpretation as an iterative movement between a “whole” and its “parts,” is a useful framework for thinking about analyzing large global datasets. Interpretation is seen as an attempt to understand “the whole through comprehending the meaning of the parts divining the whole” (Crotty, 1998, p. 92; Gadamer, 1988). Each iteration between part (e.g., such as interviewee or organizational site) and whole (e.g., the focal macro phenomenon and global dataset) enhances clarity regarding both the individual parts and the whole by virtue of their interconnection (Prasad, 2002).

Such analytical maneuvers that iterate between “zooming out” on the whole and “zooming in” via detailed coding of its parts are important when approaching large-scale datasets (Nicolini, 2009). Given the expansive nature of the dataset, attempting to comprehend each “part” before relating them to the “whole” may yield fragmented insights. And ultimately decenter the focal (global) phenomenon into which interpretive depth of insight is being sought. However, not grounding the understanding of “the whole” within the in-depth interpretive analysis of specific parts prevents the nuanced localized insight essential for qualitatively understanding that whole (Zilber & Meyer, 2022). For example, without this iterative examination of the “part” and the “whole” in our Project 1, the common thread between an underwriter in London engaging in casual banter with a familiar broker and an underwriter in Zurich taking a client on a stroll up Uetliberg would have been overlooked. And we would have missed (or not followed) the overarching pattern of such “contextualizing” micro-practices in the global trading of risks (See Chapter 3, Jarzabkowski et al., 2015b). In this way, researchers iterating between part and whole facilitates their interpretive understanding of the focal global phenomenon as it manifests in local contexts and interconnects across them.

Second, researchers need to expect and embrace the

Third, researchers need to expect and embrace

Practical Steps for Gaining Interpretive Depth into Large Global Datasets

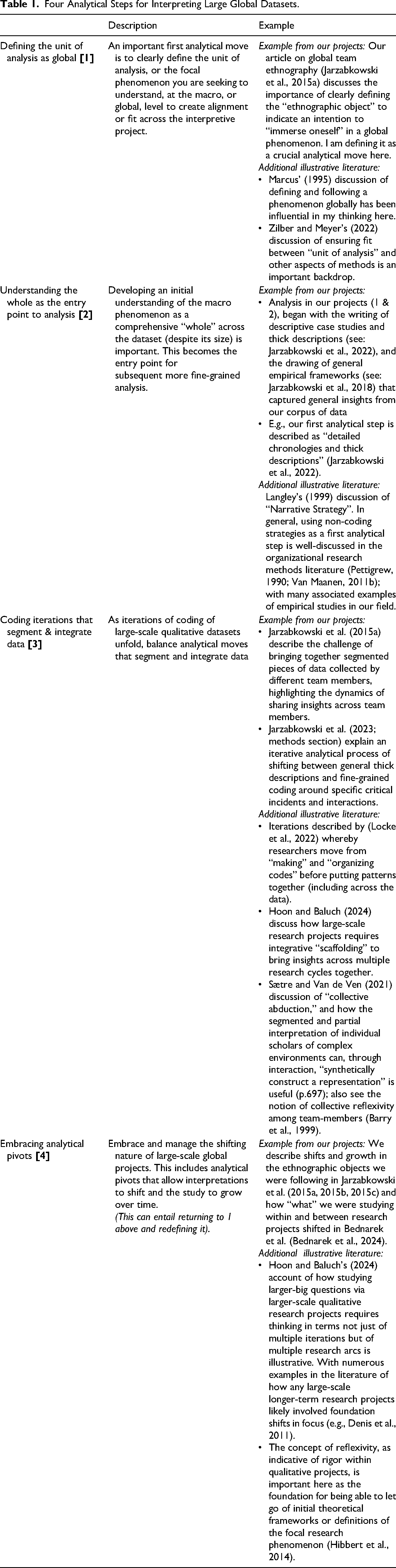

The practical guidelines I provide below put the above heuristics into action. These practical guidelines are general enough to be combined with various specific analytical or coding techniques and tools depending on the demands of a particular research project. While they are not distinct sequential steps, I have attempted to order these four actionable principles (see Table 1) in terms of

Four Analytical Steps for Interpreting Large Global Datasets.

Defining the Unit of Analysis as Global: Ensuring fit and Focus [1]

In interpretive qualitative work, the focal research phenomenon is actively defined by the researcher as part of the research process (Howard-Grenville et al., 2021; Prasad, 2002). An important first analytical move is thus to clearly define a study's unit of analysis at the macro, or global, level. This analytical move creates alignment or fit (Howard-Grenville et al., 2021; Zilber & Meyer, 2022), between what a researcher is seeking to gain depth of immersive understanding into and the dataset's global breadth. Via clarifying the global nature of the research phenomenon, the interpretive objective is defined as seeking immersive depth

Conducting a global ethnography of reinsurance trading (Project 1) provides an example. This involved an explicit initial analytical shift in emphasis from the organizational to the global. Defining this clearly and becoming cognitive of it was a central analytical move that arose inductively while the research team was in the field. Instead of the foundational question guiding interpretation being “Am I immersed in the practices of [Organization X ]?” this meant the emphasis became “Am I immersed in the global practices of reinsurance underwriting?” (Jarzabkowski et al., 2015a). Thus, my exploration of this practice across various contexts was not a dispersion of focus, but a deliberate and analytically informed “following” of the phenomenon I sought to understand (Marcus, 1995). For example, I didn't reach an analytical dead-end with only three instances of underwriters utilizing geographical maps to contextualize risk within, say, the “Eastern Europe Team” at “Reinsurance Organization X.” Our analysis instead surfaced how those specific idiosyncratic instances in a specific organizational team were part of a broader pattern of interconnected but localized “contextualizing practices” (Jarzabkowski et al., 2015b). It clarified that it was immersion not within the “Eastern Europe Team,” but in the global practices of reinsurance trading that underpinned our interpretations. Defining the unit of analysis carefully is thus a crucial analytical move in focusing interpretive efforts and ensuring that the inherent breadth of a dispersed dataset contributes to a deeper immersive understanding of a focal global phenomenon.

Understanding the Whole as the Entry Point to Analysis [2]

Once the focus on a macro phenomenon has been clarified, it is beneficial to focus on comprehending that phenomenon as a “whole.” Namely, analytical moves that focus on accessing the entirety of the dispersed dataset are a useful inductive first step. This often is what is occurring in the field through the noting of general sensitizing concepts (Blumer, 1954) in analytical memos. However, a way it can formalized analytically is via Langley's (1999) “narrative strategy.” This strategy is used in different ways by many researchers, including when detailed thick descriptions are constructed from raw data (Geertz, 1973) as an entry point into analysis. An indicative example is Pratt et al.'s (2022) discussion of how scholars can eschew first-order coding as a first analytical step (e.g., Gioia et al., 2013), to instead construct composite narratives to represent the general pattern in the data (Smets et al., 2015; Sonenshein, 2010). Such, analytical techniques are particularly important for navigating large global datasets. This may seem counter-intuitive as a natural inclination when approaching the large corpus of globally dispersed data can be to carve off a bite-sized manageable piece. However, developing insight into the whole first, helps ensure the clarity and direction of subsequent coding endeavors; for example, via inductively surfacing a more specific research question and general themes of interest. This provides some guardrails for the coding process, which is helpful given the dataset's size in preventing things like excessive proliferation of codes (Gioia et al., 2013). It is also a way of centering the macro phenomenon driving the investigation from the start in determining “what's interesting” within and between specific local sites.

My own experience again provides a foundation for this advice. Many of my academic outputs related to large-scale datasets, often detail that our first analytical step was the writing of thick descriptions (Jarzabkowski et al., 2022). Such thick descriptions can be seen in the subsequent presentation of our data, for example via opening vignettes in our book chapters (Jarzabkowski et al., 2015b, 2023). I believe that we grew in confidence in terms of actioning this analytical step throughout our two research projects. In Project 2, we spent over a year discussing, writing memo notes, and capturing our impressions of emerging data themes via various analytical artifacts like empirical frameworks trying to work out “what was happening” at a macro level before coding. In this way, via our immersion in the field our first analytical moves focused on building an initial interpretation of the overall phenomenon rather than slicing and dicing that data.

Coding Iterations That Segment and Integrate Data [3]

As researchers engage in iterative cycles of inductive coding of large global qualitative datasets (Locke et al., 2022), thinking about ways to segment and integrate data is helpful. Large, dispersed datasets need to be segmented into meaningful “parts,” usually via delineations within CADAS software. This facilitates “deep dives,” via focused in-vivo coding, into specific parts—including via specific iterations between subsets of data and specific explanatory theories (3a). It is also important to balance this with analytical moves integrating these segmented parts (3b). That is, integrative “scaffolding” (Hoon & Baluch, 2024) that reconnects both the researcher, as the interpretive instrument, and those parts to the entire dataset and to an emerging overarching conceptual lens. For example, in Project 1, as part of coding, we zoomed into the subset of data on competition as an explanatory theme, iterating between that subset of the wider dataset and the specific theoretical lens of competitive dynamics (3a). However, this “deep dive” into competing remained embedded in our overall focus on global “market making,” and our overarching social practice framework (Jarzabkowski & Bednarek, 2018; Chapter 5, Jarzabkowski et al., 2015b) (3b). I will now discuss examples of these analytical moves grounded respectively in segmentation and integration.

Deciding how to focus iterations of coding efforts when dealing with large-scale qualitative datasets is a crucial analytical task. Here, I'll outline three (nonexclusive) approaches for segmenting data to facilitate targeted analysis for illustrative purposes. First, researchers often segment data by type to reflect the unique characteristics of each data source. For example, in Project 1, distinct coding methodologies were employed for observation fieldnotes, observation videos, and interviews, leading to the development of separate coding structures and NVivo files. This segmentation allowed for nuanced analysis tailored to the specific nature of each data type, as illustrated by a specific iteration between video data and multimodal analysis and theory (Jarzabkowski et al., 2015c). Secondly, researchers can “zoom in” by constructing specific cases within the global dataset (such as a team, organization, country, critical incident, or indicative routine) to illuminate specific explanatory facets of the global phenomenon. After researchers have an overall understanding of “what is going on here” in terms of the entirety of the dataset (see Step 2), they can use that knowledge to decide which “parts” might be analytically fruitful to zoom in on to illuminate a particualr aspect of the global phenomenon. For example, in our projects, we analytically focused on events like the “Thai Floods” (Project 1) or geographically bounded cases like “Switzerland” (Project 2) as iterations in our coding to gain insight into practices of market cycle construction (Jarzabkowski et al., 2015b; Chapter 2) and resilience (Jarzabkowski et al., 2023; Chapter 5), respectively. Lastly, as part of iterations in their coding, researchers can delve deeper into certain general themes via more fine-grained coding when they “emerge” as central to understanding the focal macro phenomena. For example, in Project 1 we engaged in detailed coding of themes like “competing” and “modelling,” with each theme now associated with multiple first-order codes and carefully mapped relationships in our NVivo files. Meanwhile, other broad themes, such as “cursing,” have remained coded for at a general level but largely uninterrogated (at least so far!). In summary, segmenting data in large qualitative datasets can be achieved through differentiation by data type, focusing on specific cases, and iterations of focused inductive fine-grain coding around certain illuminating general themes.

It is also important to incorporate analytical moves that integrate these parts, and I outline four such analytical “scaffolding” examples (Hoon & Baluch, 2024). Firstly, meticulous record-keeping of evolving coding structures is crucial in maintaining coherence in the coding of various parts of a large-scale qualitative dataset. For example, in Project 1, we recorded changes in coding structures for observation and interview data and compared these weekly, facilitating the integration of insights across parts of the dataset as required. Secondly, leveraging the collective expertise of a research team through regular interactions can reorient analysis toward “the whole.” Team interactions can be used as analytical moves that integrate interpretations about specific “parts” of the macro phenomena (Barry et al., 1999). For instance, in our projects, we dedicated regular team meetings and away days to knowledge-sharing and collaborative development of frameworks, theorizing, analytical artifacts, and coding structures to build insights grounded in the wider dataset (Jarzabkowski et al., 2015a). Thirdly, it is beneficial to distribute coding tasks in ways that enhance individual understanding of “the whole.” It is beneficial for an individual researcher to code multiple parts of the dataset, sometimes simultaneously, to enable them to access more of the breadth of the dataset as a foundation for their interpretations. For example, when in the thick of coding for Project 1 I simultaneously analyzed different types of data (observations and interviews) across different geographical contexts, enabling pattern identification across different data segments. Finally, a general theoretical orientation to approach the entirety of the dataset, and access the macro phenomenon, can be settled on as an integrative scaffold. For instance, in Project 1, practice theory, largely drawing from the work of Ted Schatzki, was crucial. Even when specific lenses were required, such as competitive dynamics to refine our insights into competing, the practice approach served as a guiding framework that facilitated integration and coherence across different parts of the analysis (Jarzabkowski & Bednarek, 2018; Jarzabkowski et al., 2015b). Paradox theory ultimately emerged as integrative analytical scaffolding for the parts in Project 2 (Jarzabkowski et al., 2022, 2023). In summary, such analytical moves that build integrative scaffolding help ensure a comprehensive understanding of the dataset and of macro-phenomena.

Embracing Analytical Pivots: Growth and Change Over Time [4]

The timescales for engaging with large datasets focused on global phenomena are long and “multi-arc” (Hoon & Baluch, 2024). Given this, analysis of such datasets is often a multi-year process, entailing different arcs of analytically informed “returns to the field” to follow the globality of the focal phenomenon. As part of this “long march” (Van Maanen et al., 2007), researchers should consciously seek out interpretive pivots that shift and grow the foundational elements of their project. Consequently, how the unit of analysis is defined and the whole “understood” are not static but shift and become increasingly global as part of the interpretive process. That is Steps 1 and 2 above should be returned to and reworked via this step.

Given the long timescales required to collect and analyze large global qualitative datasets, researchers should anticipate and actively cultivate explicit interpretive pivot points. Such significant shifts in analytical focus over a long-term project's lifespan are indicative of analytical rigor. In particular, this is because they represent the cultivation of reflexivity (Alvesson & Skoldberg, 2000). Reflexivity is important for all interpretivist research and can enable researchers to rework their initial interpretation and definitions as part of ongoing discovery. Such shifts may result in the focal empirical phenomena being redefined (defined via Step 1 above). As Hibbert et al. (2014) state, reflexivity can entail re-evaluating “our relational connectedness to the phenomenon we seek to research.” For example, in Project 1, the research team transitioned from examining “reinsurance underwriting practices in London and Bermuda” to analyzing “global reinsurance practices.” These pivotal junctures can also involve a shift in the overall conceptual approach or theory through which to interpret the data (Hoon & Baluch, 2024). This can include re-evaluating the theoretical assumptions underlying the project and a consequent (re)interpretation of the entire dataset. For example, in Project 2, our initial inclination was to engage with our data via a social practice theory lens, in alignment with Project 1. However, the overarching driving conceptual framework shifted after we engaged in our initial research arc and “paradox” became the central conceptual scaffolding (Bednarek et al., 2024; Jarzabkowski et al., 2019, 2022). In both cases, holding on to enough doubt was central to accessing the reflexivity to interrogate some of our underlying fundamental assumptions and create these necessary shifts in focus that meant out research process was multiarc (Hoon & Baluch, 2024).

The general interpretative trajectory inherent in these shifts in focus should be one of

A final point is that these interpretive pivots discussed here can also represent decision points for researchers around the ending and beginning of different research projects. Does a particular pivot represent a change in the foundations of an existing project or the end of one project and the beginning of another? In our case, at one point we “left behind” Project 1 (reinsurance trading practices) and began Project 2 (efforts to address insurance protection gaps). Our engagement in Project 1 enabled the construction of that new, more expanded, research phenomenon (Bednarek et al., 2024); they are not completely disconnected. Yet, thus far we have not treated them as distinct arcs of the same project via integrating them. This process of constructing beginnings and ends to projects when dealing with these analytical pivots over time is itself an interpretive task.

Discussion: Framework Analyzing Large Global Qualitative Datasets

I have shared reflections on analyzing large global qualitative datasets where there is a need or desire to balance breadth and depth (Prasad & Shadnam, 2023). I bring the above discussion together to depict (Figure 1) the interpretive process unfolding as an iteration between whole-part (Figure 1a) (Gadamer, 1988). In particular, with those parts being distributed (Hutchins, 1995) across multiple researchers, CAQDAS files, and local sites (B). As the heuristics I introduced suggest, these iterations grow the understanding of “the whole” via doubt-laden quests for discovery (C) (Locke et al., 2008).

A framework for interpreting large global qualitative datasets.

I have attempted to show how this process (underpinned by these heuristics) can be practised via four analytical steps [Figure 1, 1–4]. Firstly, it is crucial to clarify the phenomenon, or unit of analysis, in a manner that aligns with the distributed nature of the dataset. This involves defining “the whole” into which immersive interpretive insight is sort as “global.” This focuses analytical efforts (Jarzabkowski et al., 2015a) and creates “fit” (Zilber & Meyer, 2022) that enables depth to be possible

Concluding Remarks

Inductive qualitative research can offer nuanced and surprising insights regarding large-scale global phenomena, including the most pressing grand challenges facing us as societies (Eisenhardt et al., 2016; Hoon & Baluch, 2024; Jarzabkowski et al., 2019). More specifically, meaningful insights and practical impact (Bednarek et al., 2024) can arise via qualitative studies that balance depth of immersion and global breadth. It is, however, the interpretive efforts of the researchers involved that ensure that this is a meaningful enterprise, rather than simply an empiricist drive for more data for its own sake (Alvesson & Skoldberg, 2000). Much of the power of qualitative research resides in its ability to, via its interpretive lens and immersion in contexts, “provide a localized understanding of the cultural processes—meaning making—as it occurs from a few vantage points” (Van Maanen, 2011a, 2011b, p.221). The question this piece has grappled with is how we retain these unique properties of interpretive qualitative research when following phenomena interconnected across multiple different organizations and actors, including globally (Jarzabkowski et al., 2015a; Marcus, 1995).

This paper is ultimately a non-exhaustive reflection. Different large-scale projects will need different tools based on the idiosyncrasies of their aims and design (Pratt et al., 2022; Zilber & Meyer, 2022). As such, methodological dexterity and conceptual nimbleness are, as always, critical in how the broad lessons offered in this piece might be applied effectively (Cloutier, 2024). Analyzing qualitative data usually involves a sense of “drowning” (Langley, 1999) and “getting lost” (Gioia et al., 2013) if you are doing right, with doubt not something a researcher can avoid as part of the analytical process (Locke et al., 2008). Yet, a general roadmap can help keep things generally on track, especially when the journey is a long and global one. At least that was my motivation in writing this piece and sharing some lessons learnt.

Footnotes

Acknowledgments

Thank you to all those I have analyzed large qualitative datasets alongside, including Adrianna Allocato, Gary Burke, Laure Cabantous, Eugenia Cacciatori, Konstantinos Chalkias, Rhianna Gallagher, Mustafa Kavas, Elisabeth Krull, Michael Smets, and Paul Spee. And especially to Paula Jarzabkowski from whom I have learnt so much and who commented on a draft of this paper.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.