Abstract

In carrying out research, qualitative scholars routinely struggle with having to navigate between planned and emergent research design strategies. Pressure from funders and gatekeepers to plan research can be high, but too much planning can interfere with the ethos of discovery that characterizes inductive qualitative research. On the other hand, study designs that are overly emergent present their own array of risks. In this essay, I argue for the integration of planned and emergent approaches to qualitative research design. I outline strategies for making planned research designs more reflexive and emergent, and strategies for making emergent research designs more directive and planned. I present two competencies—conceptual nimbleness and methodological reflexivity—that can be helpful for designing studies in this way and discuss how these deliberately emergent designs should be reported, with a view to enhancing the transparency and trustworthiness of qualitative research methods more generally.

How much advance planning and deliberate design does inductive qualitative research require? Opinions among qualitative researchers and methodologists vary considerably. Those abiding by the core tenets of grounded theory (Corbin & Strauss, 2008; Glaser & Strauss, 1967), ethnography (Van Maanen, 2011; Watson, 2011), or naturalistic inquiry (Lincoln & Guba, 1985) privilege what they refer to as “emergent” designs. One enters a field site with minimal conceptual baggage and a priori assumptions, leaving the field and the scholar as instruments to drive the process of determining what is interesting, what data to collect, and what ultimately can be theorized about. For these authors, qualitative research is emergent by definition since “what will be learned at a site is always dependent on the interaction between investigator and context (…) which cannot be fully predictable” (Lincoln & Guba, 1985, p. 208). In this sense, qualitative research design is something that is “‘played by ear’: it must unfold, cascade, roll, emerge” (ibid, p. 209). Proponents believe that, because they encourage openness and learning, emergent research designs are more likely to produce interesting data that are generative of insight (Wiedner & Ansari, 2018).

Emergent designs can be risky, however. Gatekeepers and busy executives are likely to be skeptical or view emergent designs as unserious, which can hamper access. Researchers likewise risk going down various rabbit holes and blind alleys, amassing large amounts of unusable data (Langley, 2018). Or they may end up “finding” something that is either trivial or already well known (Pratt, 2016), resulting in many hours of data collection and analysis work that does not reveal anything interesting or novel enough to be worth publishing. As such, highly inductive, loosely designed studies may turn out to be “a waste of time” (Miles & Huberman, 1994, p. 17) because lack of sufficient planning may result in research projects being effectively “ruined” (Berg, 2004, p. 32). For these authors, tighter designs represent the “wiser course” (Miles et al., 2020, p. 14).

Entering a field with a preconceived idea of what one is interested in or looking for, however, can lead to a different array of disappointments. Glaser and Strauss (1967) warn that “data collected according to a pre-planned routine are more likely to force the analyst into irrelevant directions and harmful pitfalls” (p. 48). It may turn out that the assumptions underpinning one's design choices (what to observe, who to speak to, etc.) are wrong. One wishes to study decision-making around an important organizational issue, only to realize later that important decisions related to the issue are taking place elsewhere, in unexpected places, or are being made by organizational actors that researchers had not thought about or did not know existed. Such occurrences are by no means infrequent, in part because gatekeepers and informants themselves may be unaware (or at least not fully aware) of the full spectrum of organizational processes, and in part because they may deliberately hide information from researchers, for various reasons (Karjalainen et al., 2015). Likewise, too clear a focus, based on the literature, on what one is interested in researching may lead to bias and force-fitting of data into predetermined categories that have been abstracted from prior research, undermining the qualitative research enterprise altogether (Gioia et al., 2013; Pratt, 2009).

Planned versus emergent qualitative research design strategies need not, however, be viewed as opposites, where favoring one approach implicitly undermines the perceived quality and trustworthiness of the other (Wiedner & Ansari, 2018) or supposes the necessity of making “design trade-offs” (Patton, 2015, p. 258). Indeed, a large part of conducting qualitative research involves letting a research site speak for itself while at the same time taking steps that facilitate the emergence of a unique take on “what is going on here”. As Maxwell (2013) notes, terms such as “tight” vs “loose” (Miles & Huberman, 1994), or “fixed” vs “flexible” (Robson, 2011) for qualifying research designs are not helpful, since they can lead researchers to “overlook or ignore the numerous ways in which studies can vary, not just in the amount of prestructuring, but also in how prestructuring is used” (p. 89, italics in original). And while researchers who support planning recognize that “no matter how much thought was put into designing a research strategy before entering the field, qualitative researchers have to adapt decisions on the fly and off-script once in the field” (Zilber & Meyer, 2022, p. 378), they typically offer little in the form of advice on how to do this.

In this essay, I offer some ideas for how researchers might better integrate emergent design approaches into their planned designs while providing direction and structure to this emergence, which I view as essential for conducting high-quality and impactful qualitative research in a (hopefully) efficacious way. I argue further that conceiving “deliberately emergent” qualitative research designs, as I propose here, is facilitated by the development of two core competencies: conceptual nimbleness and methodological dexterity. In the following paragraphs, I outline some of the reasons why I think a better and more systematic integration of deliberate and emergent research designs is necessary; propose a series of deliberately emergent design strategies; discuss the core competencies required to effectively design studies in this way; and offer some suggestions for how to write qualitative research methodology sections that are truer to how inductive research is actually done, and by so doing, enhance both methods transparency and the trustworthiness of qualitative research findings (Jarzabkowski et al., 2021; Pratt et al., 2020).

The Limits of Planned Approaches to Qualitative Research Design

Planned approaches to research design are attractive and reassuring, as much to researchers themselves as to the consumers of research outputs (other researchers, the media, the public) and external stakeholders (informants, funders, research ethics boards, etc.). Planned designs convey a sense that researchers have a focus, that they know what they are doing, and what they are looking for. Informants know what to expect, and funders can demonstrate that they have done their due diligence in ensuring that the monies they have granted are not wasted. Indeed, funders are likely to be lambasted by their own stakeholders for funding research that has no clearly articulated aims, data collection plan, and/or timeline for completion. Informants, for their part, are typically loath to answer questions or grant access to researchers without some idea of what a study is about, what its aims are, and what participation entails. Finally, researchers themselves, especially novice ones, often find planned research reassuring: the idea of entering a field with no idea about where to start or how to proceed can be quite stressful (Watson, 2011).

Despite their necessity and many advantages, there are also important limitations to planned research designs. These include lack of methodological imagination, the risk of collecting canned and uninteresting data (e.g., finding what one is looking for) and possible derailment because of the vagaries of access, events, and changing circumstances.

Lack of Methodological Imagination. The everyday “doing” of research—designing studies and writing research proposals for funding agencies, completing IRB (Institutional Review Board) requests, or simply managing multiple projects, to name only a few—frequently leads to the routinization of research practice. When designing a new study, researchers tend to fall back on familiar practices and gravitate toward research approaches that are popular or have worked for them in the past (Harley & Cornelissen, 2022; Locke et al., 2022; Zilber, 2020). Technical proficiency and experience will often guide their design decisions aimed at ensuring the collection of “good” data: scholars will ensure theory-method fit (Gehman et al., 2018), be sure to diversify and triangulate their sources, obtain the perspectives of multiple informants, ask open questions, etc. (Jarzabkowski et al., 2021; Pratt et al., 2020). All of which qualify as exemplary research practices. Once committed to a particular research design with informants or funders, changing it might prove difficult. And yet, imagining novel ways of collecting or analyzing qualitative data often comes in the “doing”: when confronted with specific challenges or encountering an unanticipated development. The pages of qualitative research methods journals are filled with retrospective accounts of how researchers navigated such situations, seeking to turn them into methodological contributions that others may take inspiration from (see for example Jarzabkowski et al., 2019; Smith, 2002). Conducting research in a routinized fashion or “by the book”, however, often prevents methodological innovation. It also, unfortunately—and more concerningly—does not guarantee insight.

Collecting Uninteresting Data. Overly planned approaches and a lack of methodological imagination increase the risk of collecting data that come across as “canned” (researchers found what they were looking for), and thus devoid of meaningful insight. Occasionally, one comes across (and some of us may very well have made this mistake ourselves, essentially learning the hard way how not to conduct qualitative research!) the novice researcher who has diligently conducted a series of interviews or observation sessions, and the most they can draw from their data are descriptive categories of informants’ opinions, without generating any insight into why these people said what they said, how they came to form these views, or why they did what they did. This is because they did not dare stray from their standardized interview or observation protocols. In such cases, the risk is high that researchers will gather a lot of data about “what” questions, which tend to be descriptive, at the expense of data about “how” and “why” questions, which are more elusive and often require improvised and skilled in-the-moment prodding, but which are also more likely to generate insight.

Vagaries of Access. Finally, planned approaches are limited by the reality that despite one's best efforts, no amount of planning can account for the vagaries of access, events, and changing circumstances, all of which are likely to impact a study's design—if not derail it entirely—as data collection progresses (Bruni, 2006; Cunliffe & Alcadipani, 2016). And this notwithstanding the parallel reality that gaining access is often merely a trigger for yet more access “work” that needs to be done. Indeed, even if a gatekeeper grants formal access, individual informants may not cooperate (Alcadipani & Cunliffe, 2023). A gatekeeper's understanding of what information, documents, or meetings a researcher has access to may also differ substantially from a researcher's understanding of the same. Karjalainen et al. (2015) discuss the multiple layers of access that must be negotiated in organizational contexts, as well as the many roadblocks, both subtle and less subtle, that researchers often encounter in doing so, even when researching topics that are non-controversial or that organizations have a vested interest in.

Likewise, as relationships build and trust grows, so does the breadth and depth of access (Feldman, 2003; Feldman et al., 2003; Peticca-Harris et al., 2016). Trust in fieldwork can be fickle, however, and it is hard to predict when, how and for how long it will be granted. Unexpected events and even mishaps can nurture it—Steve Barley talks about how an opportunity to help a particular informant during his fieldwork in a hospital went a long way in building trust among a whole category of informants who were important for his research but who until then had remained aloof in his presence (Barley, 1990). Similarly, an inadvertent faux pas can instantly damage trust that may have taken months to build.

Finally, as time passes, circumstances and the actors involved change, and with them, the modalities of access. A once-open door may close or need to be renegotiated. Events may change a setting's openness to external observers. Likewise, determinations of “what is going on here” and “what's interesting” may shift. Changing circumstances or events may short-circuit what was initially perceived as a fruitful line of inquiry, calling for an expansion or shift in the terms of access already allowed, which may or may not be granted. Sometimes, too much access is granted, and a researcher runs into the issue of needing to decide, given limited resources and time, what to focus their attention on. Other times, it is only retrospectively that one realizes that something has been missed, requiring a researcher to renegotiate access to investigate an insight further or to obtain additional data in support of a claim or construct, which also may or may not be granted.

Thus, despite a researcher's best efforts, even the best and most thoughtful of planned approaches can go awry. Rigid planning prevents improvisation (Barrett, 2012) and reflexivity (Mason, 2002) and may get in the way of “serendipity” (Fine & Deegan, 1996; Wiedner & Ansari, 2018), leading to missed opportunities. Hence the benefits of more emergent approaches, but these too have their limits, to which we turn now.

The Limits of Emergent Approaches to Qualitative Research Design

In response to the limits posed by planned approaches to qualitative research design, certain researchers have praised the virtues of more emergent approaches (Wiedner & Ansari, 2018). They remind qualitative researchers of the core tenets of discovery (Locke, 2011) that underpin grounded theory, which involves following intuitions about what might be interesting about a research setting, right from the start. One interview, one document, or one observation—even the very first one—opens up research design possibilities, which are then explored and followed up on (Corbin & Strauss, 2008). Despite their appeal and track record for generating novel insight, however, there are also important limitations to emergent research designs. These include loss of time and resources, lack of direction and focus resulting in the collection of overly eclectic, ad-hoc, and/or incomplete data, and heightened difficulties related to securing and maintaining access to a field site.

Loss of Time and Resources. It is not unusual to hear qualitative researchers claim to have collected “massive” amounts of data. The qualifiers used may differ, but all reflect a magnitude of some sort: in qualitative research, one has invariably collected a “tremendous”, “incredible” or “unbelievable” amount of data. It is almost as if claiming to have engaged in qualitative research must go hand-in-hand with having to also claim, de facto, that one has collected “a ton” of data. And while the general mantra is that researchers should collect as much data as they can (Langley, 2018), doing so requires a tremendous amount of resources and time. Moreover, quantity does not necessarily mean quality (Howard-Grenville et al., 2021). It is possible to collect a “ton” of data that are largely useless because they are too disparate or incomplete to afford any basis for identifying patterns or comparing units of analysis systematically.

This is unfortunate, as there is much to gain and a lot of heartache to avoid from taking a more deliberate and planned approach to designing a qualitative study. Unqualified calls to collect massive amounts of data also invite a ramping up of expectations as regards the amount of data researchers are expected to collect for any given qualitative study. At one time, 25–30 interviews (along with other data sources) might have been sufficient to publish in top journals: Dutton and Dukerich's (1991) iconic study on image and identity in organizational adaptation was based on 25 interviews (along with internal documentation), as was Greenwood et al.'s (2002) study on field transformation. Lately, it is not unusual for studies to include 100+ interviews. For example, Raffaelli, DeJordy and McDonald's study on the modernization of the Swiss watch industry featured 147 semi-structured interviews, 119 archival interviews and considerable documentation (Raffaelli et al., 2022); Julia DiBenigno's study of mental health services provision in the U.S. Army included a full year of ethnographic observation prior to her conducting 183 interviews for the specific study at hand (DiBenigno, 2020); Colbert, Bloom and Nielsen's study on calling in the caregiving professions featured 236 narrative interviews; and Kate Kellogg's study on micro-level institutional change in professional organizations was based on 566 individual 1–2 hour shadowing sessions with managers, doctors, nurses and secretaries (Kellogg, 2019). While these studies may be outliers, what qualifies as “saturation” in terms of data collection has certainly gone up over the years and shows no signs of abating. Given the time, energy, and resources that collecting qualitative data requires, collecting a lot of data indiscriminately seems counterproductive at best.

Lack of Direction and Focus. Even more concerning is to have spent considerable time and resources collecting “massive” amounts of data, overly large chunks of which turn out to be unusable. There is always some degree of “loss” in qualitative data collection. It happens that informants are cagey and don’t share all that much information. Or they lie, and one must collect a lot of data before figuring out that such is the case (Van Maanen, 1979). We collect reams of documents unrelated to our topic or spend hours and hours observing activities that in the end are not relevant for our topic. This is normal and to be expected. Indiscriminate data collection can become problematic, however, when, “playing by ear,” one ends up collecting massive amounts of eclectic, incomplete, and, most concerningly, non-comparable data. Observations of events, such as meetings, on an ad-hoc basis are rarely useful, for example. It is almost always better to observe a series: multiple occurrences of the same meeting over time. It is also preferable to collect documents in sets: the minutes for every meeting of a certain type (budget meeting, for example) over a period of time (like a year), instead of just the occasional one or two that an informant happens to give you. Doing this effectively usually requires at least some degree of planning.

Barriers to Entry. Finally, and as previously mentioned, applying for research funding and obtaining IRB approval are simply impossible without specifying a study's objectives, its positioning within a known conversation in the literature, an overview of the type and quantity of data that are to be collected, and a timeline. Although not impossible, securing access to field sites, especially in business, organization, or management settings, can be tricky without a detailed research proposal on hand. Gatekeepers inevitably want to know what a study is going to be about and what is expected of the organization and targeted informants, should they agree to participate. Negotiating entry and full access for a study based on a mainly emergent research design is tricky, and often only possible for researchers with prior, well-established contacts in a given organization, preferably with a track record. Without this, barriers to entry are likely to be high and the quality of access limited. Limited access, in turn, considerably limits qualitative researchers’ capacity to generate novel and meaningful insight.

In light of these frequent and parallel qualitative research design problems, how do researchers reap the benefits of design, while remaining open to evolving insights? How do they maintain rigor while adapting their research designs to changing circumstances? In other words, how do they balance planned vs emergent research design concerns, both in advance and in situ? In the following paragraphs, I propose a series of strategies and tactics that help make planned research designs more reflexive (and hence more emergent) and emergent research designs more directive (and hence more planned). In this, I am inspired by conversations with highly accomplished qualitative researchers sharing their personal experiences of designing and conducting innovative and impactful qualitative research. I follow this with a discussion of the skills that I think can be helpful for conceiving deliberately emergent research designs and suggestions for how to communicate integrated approaches in the methods sections of published work.

Strategies for Integrating Emergence into Planned Research Designs

It is possible to design qualitative research in a manner that is thoughtful rather than routine and in ways that do not undermine improvisation or blind researchers to emergent insights. Strategies for designing research more thoughtfully include taking the time to undertake one or more exploratory studies prior to commencing a research project formally and putting extra effort into thinking in advance about the different ways one might “get at”, approach, or operationalize a research problem empirically, so as to effectively capture a phenomenon of interest, possibly from more than one angle or perspective. Such advance thinking also puts researchers in a better position to negotiate the terms of their access to a given field site.

Conducting Exploratory Studies. Conducting exploratory studies prior to commencing formal data collection for a project is an undervalued and underused strategy that can be helpful to researchers seeking to construct more thoughtful and flexible research designs. Exploratory studies can take many forms. In some cases, they can take the form of a planned intervention which acts as a “getting to know you” exercise, where researchers enter a field in order to conduct a case study, a survey, or a workshop of some kind (design thinking, scenario planning, stakeholder mapping, organizational culture or identity assessments, etc.) as a way of gaining initial access to a field site. Such interventions allow informants to get to know the researchers, feel reassured about their intentions, and gain confidence about the “value-add” their presence might provide. If a pro-bono intervention goes well, and informants are pleased with the deliverables, then our natural tendency as humans to reciprocate favors rendered (Gouldner, 1960) may enable access that had been denied previously.

In other cases, exploratory studies can take the form of a job search strategy, where researchers approach informants to seek their opinions on how to approach a given phenomenon. Such inquiries may not only provide new and fresh ideas for how to do so; they may also open up new doors, previously closed to the researcher (Barley, 1990). Exploratory studies can likewise take the form of a handful of relatively informal interviews and observations done mainly to ascertain the suitability of a field site and its receptivity to participating in a study, or to help home in on a specific phenomenon of interest which would then become the focus of a planned study. Such interviews are a means for researchers to learn more about a potential research site (a context, event, field, industry, organization, or collection of organizations) and to understand its boundaries, key players, local culture, and lingo, all of which are necessary for understanding “what is going on”. For example, in their study on elastic coordination (2014), Spencer Harrison and Bess Rouse conducted pilot interviews with dancers and choreographers in advance of carrying out their full study, which allowed them to design it specifically around themes that arose from their analysis of those interviews. Had they engaged in their field site without such prior exploring, the data they collected might not have lent themselves to studying those themes, which their prior engagement with the literature had suggested were puzzling and, as such, presented a potential opportunity to contribute (Golden-Biddle & Locke, 2007). In their analysis of the descriptions of how authors obtained access to their field sites in more than 500 qualitative articles published in top journals between 1986 and 2013, Alexander and Smith (2019) noted a correlation between researchers’ activities prior to entering a field site and the degree of access they were ultimately able to secure, suggesting that building rapport and legitimacy with key actors in advance facilitates access, something that conducting exploratory studies can help achieve.

Conducting Thought Experiments. Conducting thought experiments on alternative design choices and their methodological implications is another useful deliberately emergent research design strategy. Typical design decisions concern things like deciding on a research approach and data collection strategy (types and quantity of data to be collected), choosing suitable cases (if conducting a multiple case study), making choices regarding appropriate levels and units of analysis (Zilber & Meyer, 2022) and ensuring data triangulation (Patton, 2003, p. 556). Routine design decisions can be made more thoughtful and generative in various ways. This might involve, for example, considering and including less conventional forms of data, including multi-modal data (visual, textual, verbal, bodily, and material) in a study (Hindmarsh & Pilnick, 2007; Höllerer et al., 2018; LeBaron et al., 2018; Wenzel & Koch, 2018). Even with more conventional forms of data (such as interviews), it might involve thinking a bit more carefully about both the types of questions to ask informants and when exactly to ask which questions (in terms of the order of questions, but also temporally within a given time frame and over time) (Langley & Meziani, 2020). It means potentially visualizing a space prior to engaging in observation or participant observation to imagine the sorts of things, related to the research question posed, that one should be paying attention to, including the physical and symbolic aspects of a given space or location where interactions between informants occur (Cartel et al., 2022). This also means being much more attentive to the inherent limitations of various design choices and thinking of ways to avoid or compensate for them in advance (Zilber, 2020). And it may involve creative thinking around outcome variables (ibid), and potential units of analysis, including possible interactions between units and levels of analysis, each with methodological design implications of their own. This list is not exhaustive. The overall aim of this ensemble of thought experiments is to ensure that one's research design, from the outset, incorporates greater conceptual flexibility than might have been the case had researchers approached the field using standard designs and preset assumptions.

Layering or Phasing Data Collection. A third useful deliberate design strategy is to layer the data collection process. A first layer can be the “pre-study”, where initial inquiry deliberately casts a wide net. Certain studies, such as Emily Heaphy's (2013) study on institutional maintenance or Julia DiBenigno's (2020) study on rapid relationality, included a first phase of data collection that focused mainly on gaining a deep understanding of their chosen field site. More in-depth than the exploratory studies and/or pilot interviews described above, these pre-studies allow researchers to map the overall terrain and gain the knowledge necessary to reach beyond surface-level issues and problems and dig deep into dynamics that informants themselves are often unaware of, paving the way for richer and more insightful contributions to the literature. A researcher's deep knowledge of the context often grants them credibility with informants, who may consequently be more open to discussing specifics with them, rather than repeating or explaining banalities. Later, during more focused data collection, interview time, which must be viewed as limited and therefore precious, is not wasted asking questions about things that are widely known within the context or can easily be obtained another way. Subsequent “layers” might involve the parsing out of possible research threads (i.e., different lines of inquiry) that can potentially lead to individual studies that are part of a larger research project, with researchers making additional design decisions accordingly.

If conducting an in-depth pre-study is not possible, deliberately integrating planned pauses in a research design can be another useful focusing device. Some researchers will start analysis only after data collection is done. Their initial focus is on collecting the richest data possible and saving it for future analysis. But there is always a risk in doing this. It is not unusual, even for experienced researchers, for an insight or hunch to emerge during data analysis that cannot be pursued for lack of sufficient or appropriate data. But such a hunch could have been pursued had they picked up on it sooner, when data were being collected. Researchers can thus benefit from integrating a planned pause in their data collection process, one (or more) that might fall “naturally” within the stream of activity of a given research site (a holiday period, the lull between projects, etc.), for example. This time can be used to conduct a more in-depth analysis of the data collected up until that point, with a view to adjusting and/or supplementing data collection methods in subsequent phases. For example, in his account of the fieldwork he undertook “to explain how technically induced changes in an interaction order might lead to organizational and occupational change” (p. 221), which led to the publication of his now iconic study on CT scanners (Barley, 1986), Barley (1990) discusses how he withdrew from his field site for seven weeks, time he used to analyze the data he had collected thus far. He notes how this led him to notice that the radiologists he had been observing “seemed less authoritarian when making requests of technologists who operated newer technologies” (p. 234). This prompted him to start tape-recording examinations when he later re-entered the field and to later analyze radiologists’ and technologists’ speech acts (ibid), which he might not have done had he not taken such a pause.

Finally, and more generally, exploratory studies and pre-design reflexivity can be helpful when it comes to negotiating more open terms of access to a given field site. While such steps cannot circumvent the politics involved in gaining and maintaining access to a field (Cunliffe & Alcadipani, 2016), they can minimize easily avoidable mishaps. For example, if a researcher judges that sustained observation or a multi-level inquiry (talking to managers and line persons, for example) is necessary for studying a given phenomenon of interest, then ensuring early in the research process that access to these types of data will be allowed becomes important. While trust can be built, and additional access secured over time (indeed, ethnographers often pride themselves on how they manage to integrate into settings that are usually closed to outsiders (Anteby, 2024)), there is never any guarantee that this will happen (we typically only hear of the success stories, never the failures). Researchers can very well end up with a partial and incomplete dataset because the hoped-for access never fully materializes. A certain amount of planning and negotiating ahead of time can consequently save researchers from some of the heartache that results from the vagaries of access. Having conducted an exploratory study with the organization or field site beforehand, perhaps one that included a small-scale deliverable that the organization may have found useful, helps establish at least an early base for trust, which often comes with more room to maneuver and openness when negotiating further access.

Undertaking pre-design work deliberately can thus potentially save researchers considerable time and effort and reduce the risks associated with trying to figure things out “on the fly”. Even a cursory exploratory study can help establish a clearer distinction between data that are collected mainly for informational purposes—to learn the local culture and jargon, gain a general sense of the “lay of the land” of the given setting (who is who, who does what, when, etc.)—and data that are collected with a view to elucidating a specific empirical phenomenon (or several). This in turn can help reduce the quantity of data that qualitative researchers need to analyze, at least to start (what Locke, Feldman, and Golden-Biddle (2022) refer to as “organizing to code”). It also helps researchers think ahead about how a research project might be parsed out into smaller research projects, each a study in and of itself, so as to integrate these into the overall design of a bigger, umbrella study (in terms of data collection strategies, e.g., types and forms of data to collect). It also makes it possible to compartmentalize data as it is being collected (thus ensuring that data collection is systematic, that nothing is missed, and that it is “amenable to orderly analysis” (Barley, 1990, p. 234)), something that is easier and less time consuming to do prospectively, rather than retrospectively.

Strategies for Integrating Planning into Emergent Research Designs

Even the best and most thoughtfully planned research design will not shield a researcher from surprises and mishaps, opportunities, circumstances, and/or events that either open doors or close them, occasionally upending a research endeavor completely and at other times offering unique opportunities that researchers would be (and should be) loath to miss. Given as well that inductive researchers in principle tend to enter a research site guided by a logic of discovery with at most only loosely defined ideas about what they are looking for, strategies for harnessing insights as they arise become centrally important to any qualitative research endeavor. A number of strategies can help researchers manage the inevitable pitfalls that occur during data collection while taking advantage of the opportunities embedded within them.

Starting Analysis Early. The most important strategy (and practice) for engaging in effective emergent research design is to follow a core precept of grounded theory analysis (Charmaz, 2006; Corbin & Strauss, 2008; Locke, 2001), and that is to start analyzing data very early in the research process. While this advice has practically become a truism among qualitative scholars, few researchers do it as routinely and systematically as they should, mainly because it is extremely difficult to do diligently. Beyond the multiple pressures, distractions, and demands made on scholars on a day-to-day basis (even on those who can claim to be free of teaching and administrative duties while they conduct research), there is the reality of the “double work” that collecting and analyzing data simultaneously involves. And while circumstances may dictate what, when, and how data may be collected, researchers often have more control over these than they may otherwise recognize or acknowledge. Timeliness may be of the essence in some cases, where intensive data collection is necessary (during a conference, when conducting multiple interviews in quick sequence shortly after a meeting to facilitate recall, etc.), but not always. When it is not, researchers would be wise to deliberately carve out times in their daily agendas not only for ample notetaking and data transcription but also for analyzing data while collecting it—in the same way that they are encouraged to carve out and schedule times to write (Silvia, 2007). It is too tempting and easy to postpone analysis “until later” when one has more time and more data, at which point it may be too late or too difficult to adjust one's research design in a way that takes advantage of opportunities as they present themselves. It is not always possible or convenient to go back to a research setting or to re-interview a large number of informants about some dimensions we suddenly realize may be an important part of our emerging theory. While discipline in this regard is a personal thing that varies among researchers, working in pairs or in teams is often an effective way of disciplining and routinizing the process of at least talking about emerging insights and how to harness them effectively.

Performing “On-the-Fly” Loose Analysis. Undertaking “on-the-fly” data analysis (which is different from the more systematic analysis described above) is facilitated by the adoption of a practiced reflexivity (Alvesson & Kärreman, 2007; Cunliffe, 2003) where researchers continuously and repeatedly ask themselves “what is going on here?” and “what is this a case of?”, adjusting their research designs as needed. Indeed, their ongoing and evolving answers to these questions will help them identify promising concepts and, in a second step, validate their relevance and potential richness, which they can do by zooming in and out of their phenomenon of interest (Nicolini, 2009) and asking: “How might I ‘show’ this concept?”; “What other forms of evidence might exist for it?”; and “How might such evidence be collected?” On this basis, they can think through the design implications of collecting the necessary evidence. Such reflexivity guides the data collection process in situ—where trade-offs between collecting more, less, and/or different types of data at different levels of analysis are evaluated reflexively, systematically, and in an ongoing manner, to ensure that one does not later end up with promising bits and pieces of data that are too few or too disparate to allow for meaningful comparison and analysis (Langley, 2018), and as such are unusable as evidence for what might otherwise have been a promising line of inquiry.

More specifically, practiced reflexivity invites researchers to continuously think about what they should be focusing their attention on, what they need to be tracking, and how to track it in a systematic fashion. While anecdotes, incidents, and one-offs might generate hunches about what is going on in a given setting, they are not, in and of themselves, explanatory, nor do they provide sufficient evidence to back an emerging theoretical claim. Rather, hunches signal to researchers what it is that they should perhaps be paying more attention to. As such, they can be viewed as useful guides for directing (or redirecting) ongoing data collection efforts.

When engaging in such reflexivity, the point is not to go “looking” for something, but rather to identify similar and recurring instances of something that might be tracked systematically, and then later can be contrasted and compared. Such instances might take various forms, such as recurring happenings (events, meetings, celebrations, etc.), occurrences (situations of conflict, decisions, interactions, “social dramas” (Pettigrew, 1990), etc.), or scripts. A researcher's focus then turns toward collecting data on a series of these in a systematic way. What does an instance consist of? What types of data best represent it? Such reflections might invite a new focus for future observations, the collection of different types of documentary or archival evidence, or the addition of new questions to an interview protocol. The key is to collect data associated with each instance systematically, so that they can become, if relevant, a potential unit of analysis and a basis for comparison in subsequent phases of data analysis.

Hunches can also point to the dimensions of a construct (Corbin & Strauss, 2008). During data collection, it might occur to a researcher that gender, race, experience, status, time of day, season, or some other factor appears to matter in terms of explaining a given phenomenon. On this basis, researchers should take note and begin collecting data on these dimensions systematically, so that subsequent classifications of data can be made on this basis, and emerging constructs contrasted and compared accordingly. Playing with data in this way can be key to generating insight but is only possible if one has first taken care to systematically collect the data points one needs to play with.

Taking Momentary Pauses During Fieldwork. Conducting on-the-fly analysis requires taking frequent, momentary pauses, ideally documented in memos (Saldana, 2016), to reflect on whether the ongoing data collection process continues to be fit for purpose. The sorts of questions that one might ask during such short, reflective pauses include whether one is talking to the right people, focusing on the most appropriate happenings, or ensuring the ongoing trustworthiness (Pratt et al., 2020) of the data collected. If, for example, an informant makes an interesting but surprising claim, how might additional evidence be gathered to corroborate that claim? If an informant suggests that the researcher talk to a specific individual (snowball sampling), what might be some plausible reasons for including this informant in one's sample and how is this informant related to other categories of informants who have already taken part in the study? Is this person simply an additional informant within an existing category or do they represent a new category of informants altogether? If the latter—in the spirit of seeking to minimize “stragglers” within a data set—is there a plausible reason to “open up” the study to this new category of informants altogether (and hence interview several, rather than just one representative of that category to ensure representativity of that particular viewpoint)? If so, to what extent? If an informant mentions a particular document that might be of interest, how relevant is it to track down that document? More importantly, is this a one-off document or one that is part of a series (an annual report, a bi-weekly survey, a monthly publication, etc.), and if so, is it relevant then to collect all the instances of that type of document (again, because an ad-hoc document is less likely to offer credible evidence of something)? Are non-subjectively determined outcome data available and accessible? Can these be helpful in some way, and if so, how? These questions and their answers represent ongoing—in vivo and in situ—data triangulation efforts which are important for ensuring trustworthiness in the data collection process (Jarzabkowski et al., 2021; Pratt et al., 2020). When in doubt in this regard, the usual mantra given to qualitative researchers is to be “data greedy” (Langley, 2018; Rouse & Harrison, 2022, Author's Voice) and to collect everything that they possibly can. This, however, can very quickly become unwieldy and unmanageable given available time and resource constraints. Reflexive decisions, which momentary pauses facilitate, can be helpful in this regard.

On-the-fly analysis is also facilitated when researchers are proactive and systematic about writing analytical memos (Emerson et al., 2011; Saldana, 2016), going back to the literature, drawing tentative models (Langley & Ravasi, 2019), building working tables (Cloutier & Ravasi, 2021) and writing tentative abstracts in an ongoing way as they are collecting data. As with analysis, memo writing is something we often postpone or do cursorily for lack of time, but such tendencies should be resisted. This again is something that is made easier when working in teams (Jarzabkowski et al., 2015), especially when deadlines for the routine sharing of such memos with team members are set, which helps instill discipline in the process. Some researchers advocate presenting their initial ideas and interpretations about an ongoing research effort often and very early in the process—to peers during brown bag lunches, at local workshops and mini-conferences, etc.—on the basis that this not only helps clarify their thinking, it also allows them to gauge how much their ideas are likely to resonate with their intended audiences (Suddaby, 2015), and on this basis, pivot if required.

Pivoting. A final useful strategy for integrating emergence into a planned research design is for researchers to develop the ability and wherewithal to pivot during data collection, when and if needed. Despite one's best efforts and careful planning, data collection efforts encounter roadblocks: open doors may close, researchers may be asked to leave a research site before data collection is completed, or new circumstances or events (a merger, a bankruptcy, a key informant is fired or changes jobs, etc.) may derail promising lines of inquiry. In other instances, circumstances might present new developments that offer new theorizing opportunities. In such situations, it might become relevant to reframe an ongoing study such that the data already collected can be used and/or that new data that are accessible might be added to address a new research question, resulting from a different positioning in the same or a different literature. Being able to pivot in this way can save a faltering data collection effort from being relegated to the dustbin of incomplete research projects. Doing so can mean having to situate one's project in an entirely different conversation in the literature than the one initially proposed, based on the sense that one's data are more likely to make a novel contribution to the literature. Indeed, a big part of qualitative data analysis involves identifying tight data/theory couplings (Langley, 2018), where the evidence found is novel or counterintuitive and helps to advance theorizing in a particular domain of scholarly inquiry. Finding such tight couplings is sometimes a case of trying different theories on for size (Smith, 2002). We do not always get it right the first time, hence the need to pivot.

Designing research as an overall research “project” rather than as a specific research “study” facilitates pivoting, as at the outset, different angles of inquiry and levels of analysis can be embedded into the overall project design, making it easier to reorient a particular study within it, if need be. A deep knowledge of the literature, both the literature directly related to the phenomenon of interest and adjacent literatures at multiple levels of analysis, also facilitates pivoting. If a micro approach is no longer feasible, perhaps there are sufficient data (or easily accessible additional data) to allow for a meso or macro view of the same phenomenon. If data collection on a longitudinal study is cut short, perhaps there are enough data to zoom in and theorize about phenomena occurring within a shorter timeframe. New openings may offer similar opportunities. Pivoting facilitates researchers’ ability to take advantage of them.

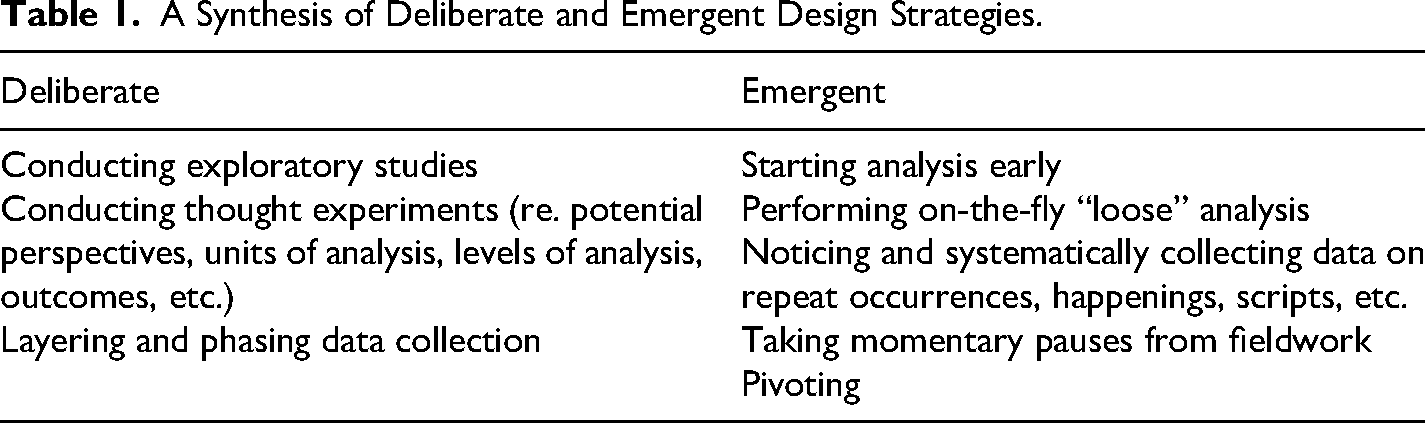

The list of deliberate and emergent strategies proposed here appears in Table 1. This list does not pretend to be exhaustive, especially given that researchers are constantly devising new and creative ways of navigating the unexpected twists and turns that inevitably arise when conducting qualitative research. It does however provide a useful starting point for dealing with such twists and turns and sets the foundations for developing conceptual nimbleness and methodological dexterity in the conduct of qualitative research.

Table 1. A Synthesis of Deliberate and Emergent Design Strategies.

Competencies Worth Developing: Conceptual Nimbleness and Methodological Dexterity

Proposing a list of ways researchers can design and conduct qualitative research that is well-planned and yet adaptive is of course easier said than done. In my view, doing this well requires that researchers develop two core competencies, which I call: “conceptual nimbleness” and “methodological dexterity”. Both are required, in my view, to gain the necessary wherewithal to be able to identify potential empirical “opportunities to contribute” as they arise (Golden-Biddle & Locke, 2007) while making methodological choices that make it possible for researchers to pursue those opportunities in ways that allow them to build a robust and convincing story around them.

Conceptual nimbleness represents a researcher's ability to abstract from empirical data in various and creative ways while anchoring such abstractions in known conversations in the literature. This may be the literature initially envisaged as relevant for studying the phenomenon of interest, or an entirely different literature, to which the data at hand offer the opportunity to make a more significant contribution. The same data may lead to multiple interpretations, and thus contribute to different potential conversations in the literature. Finding the best theory/data coupling or “match” is a central part of the qualitative research analytical process. Developing this ability is thus important for producing impactful research.

How does one develop conceptual nimbleness? First, by reading extensively on various topics, both related and unrelated to one's specific field of interest. Denny Gioia, an accomplished qualitative researcher, discusses how he views himself as a “Renaissance Man”, someone who is interested in and develops knowledge on a great many topics (Gioia, 2013). Many qualitative researchers in business and management read widely and take inspiration for their ideas from texts in philosophy, sociology, anthropology, political science, and others (Barley, 2004; Tsoukas, 2018) in addition to texts—both journal articles and books—within their own field. They are not hyper-specialists. Agreeing to do a lot of reviewing for journals on a wide range of topics can also be helpful for discovering new literatures that we may not be familiar with. This of course also means reading some of the references cited by the authors you are reviewing to gain a sense of the conversation and the key debates within it. Second, researchers can develop conceptual nimbleness by routinely thinking of empirical phenomena as examples of theories. Somewhat like musicians who play the same song in different styles, researchers can generate conjectures (Weick, 1989) about their data by asking themselves repeatedly: “What is going on here?” and “What is this a case of?” (or “What could this be a case of?”) to explore what different theories might apply, and when the shoe seems to fit, to investigate the related literature for gaps and puzzles that one's data and potential findings may help address or illuminate. Conceptual nimbleness is essential for iterating between data and theory (Locke et al., 2022) in ways that generate the conceptual leaps (Klag & Langley, 2013) that enrich understanding and allow us to progress on the road to generating insightful and convincing theory.

Methodological dexterity, on the other hand, represents the ability to make effective use of both planned and emergent research design strategies, both prior to starting a new qualitative research study, and during its conduct, alternating between both in an ongoing, proactive, and integrated way. It also means expanding one's methodological toolbox and cultivating methodological imagination so as to always be open and ready to collect new types of data and/or experiment with new ways of collecting and analyzing data beyond the usual templates (Harley & Cornelissen, 2022; Locke et al., 2022). Developing this ability can help researchers better navigate the “back-and-forth character in which concepts, conjectures, and data are in continuous interplay” (Van Maanen et al., 2007, p. 1146).

How does one develop methodological dexterity? In a similar way to being curious about and accumulating knowledge about research domains and theories, one can be curious and accumulate knowledge about qualitative methods. For a start, this involves making it a habit to examine the methods section of qualitative papers that are published in recognized journals, paying close attention to those that notably make use of and/or combine novel approaches. “Behind-the-scenes” workshops with qualitative researchers can be helpful for obtaining the analytical backstory behind published work. And finally, routinely reading methods papers and edited books in which different or new approaches are outlined and discussed (see for example Boréus & Bergström, 2017; Cassell & Symon, 2004; Elsbach & Kramer, 2016; Feldman, 1995; Langley, 1999) can also be helpful for building one's own repository of data collection and analysis techniques to call upon as needed and especially when there is an opportunity or a necessity to “pivot” while conducting a study.

In 2007, Van Maanen and colleagues argued, “It is an irony of scholarly practice in organizational research that the process of abduction, which likely goes on in most if not all promising research projects, is largely hidden from view” (p. 1149). Fifteen years on, those wishing to learn this craft are left as much in the dark as they were when these authors wrote their article. Together, conceptual nimbleness and methodological dexterity help lift the veil—at least partly—from the processes that lead to the generation of insight from qualitative inductive and abductive analysis (Klag & Langley, 2013; Locke et al., 2008; Tavory & Timmermans, 2014).

Implications for Writing Up Deliberately Emergent Research Designs

By and large, the methods sections of journal papers tend to follow “the narrative structure of an idealized hypothetically-derived experimental report” (Locke, 2011, p. 639), in which research design, data collection, and analysis are presented as distinct and sequential activities, with findings emerging directly from these. Hidden from view is the integrated, iterative, and very “live” process that leads to insight and characterizes qualitative research that adopts a discovery epistemology (Locke, 2011; Locke et al., 2016). Such forms of methodological reporting present a distortion (Wiedner & Ansari, 2018) which we collectively maintain under the pretense—or the misguided belief—that designing a study and collecting data first and analyzing it later is somehow better or more rigorous. While a detailed account of every in situ iterative decision made by researchers while carrying out their research is neither realistic nor necessary, a more faithful account of the reflexivity that goes into the designing of qualitative research studies should be encouraged, on the basis that it provides a more accurate rendition of what researchers actually did to bring “into relation data, ideas and conceptual resources to develop their insights” (Locke, 2011, p. 640). Reporting reflectively on key methodological decisions made in situ and detailing specific moments that led to insight as they arose provides a clearer and more credible roadmap that helps show how researchers came to the conclusions they are now presenting to their peers. These accounts might be done narratively, or figuratively (see for example Harrison & Rouse, 2014) and need not be exceedingly detailed nor overly long. Rather than methods as traditionally understood, such accounts would reflect methodological journeys that editors, reviewers, and readers can more easily assess and/or learn from, enhancing qualitative methods’ transparency, credibility, and overall trustworthiness (Lincoln & Guba, 1985; Pratt et al., 2020).

Conclusion

Pettigrew discusses how qualitative research, and in particular longitudinal research, is a process of “planned opportunism” that requires a “judicious mixture of forethought and intention, chance, opportunism, and environmental preparedness” (Pettigrew, 1990, p. 274), a process that well-published qualitative scholars have tended to navigate with the use of a bundle and mix of planned and emergent research design strategies, which they choose judiciously and opportunistically, on the basis of their intuition and experience, in combination with a fair dose of serendipity and luck (Fine & Deegan, 1996; Wiedner & Ansari, 2018). The surprises that lead to insight can occur any time: at the beginning, middle, or end of a research process (Van Maanen et al., 2007). This explains in part why scholars often view qualitative research as a craft (Prasad, 2017) that is difficult to teach and can only be properly learned by doing, notably (and preferably) via apprenticeship (Locke, 2011). This essay is an attempt to outline how this work might be done more reflexively and systematically, hopefully with fewer errors, “untold stories and blind alleys” along the way (Langley, 2018, p. 465).

Although more generative than wholly planned approaches and more focused than wholly emergent ones, deliberately emergent approaches to qualitative research design are not a panacea for overcoming all of the challenges one might encounter when conducting qualitative research. Conceptual nimbleness and methodological dexterity enhance one's ability to carry out such approaches well, but on occasion, despite researchers' skill and best efforts, there are data that they simply cannot get. In such cases, they need to make a call: Can they continue to tell their story without these data? Or must they try to tell a different story? What data are needed to tell this story? In any given setting, there are always multiple stories going on and multiple ways in which those stories can be told (Anteby, 2024). Ultimately, the “doing” of qualitative research is about finding a compelling story to tell with the data that you have.

Acknowledgments

Special thanks to Daisy Chung, Mark de Rond, Julia DiBenigno, Anne-Laure Fayard, Arvind Karunakaran, Bess Rouse, and Tammar Zilber for sharing their personal experiences of designing and conducting innovative and impactful qualitative research. Many of the points raised and advice given here were derived from their very thoughtful insights. The author also wishes to thank Sam Van Elk and Martha Feldman for their thoughtful feedback on prior versions of this manuscript.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.