Abstract

Surveys among bureaucrats are a widely used tool of data collection in public administration research. However, survey researchers in public administration are facing various challenges, such as declining response rates among bureaucrat respondents and limited respondent pools. Moreover, researchers seeking to establish robust empirical evidence on the drivers of bureaucrats’ behaviour and attitudes encounter difficulties in collecting longitudinal individual-level data. This article argues that the research community should collectively address these challenges and demonstrates one way to achieve this: by establishing a researcher-driven survey infrastructure. We present such a novel survey infrastructure that may serve as a prototype for implementing this research agenda worldwide. We illustrate the innovative potential of combining longitudinal and paired research designs that involve parallel surveys of relevant stakeholders, including politicians and citizens. Finally, the article discusses dilemmas and trade-offs in the operation of survey infrastructures for public administration research.

Points for practitioners

Bureaucrat surveys are facing declining response rates and limited access to existing data. Survey infrastructures with bureaucrat respondents ensure targeted, cost-efficient and less burdensome data collection. To provide robust scientific and policy-relevant knowledge on the effects of intended changes and unpredictable events, survey data must be collected at different points in time. Government ministries and agencies should support survey infrastructures and offer access to bureaucrat respondents to improve their knowledge base and obtain a stronger foundation for policy decisions.

Introduction

Surveys are an established and valuable methodological tool in contemporary public administration (PA) research (Khurshid and Schuster, 2023; Verhoest et al., 2018; Yackee and Yackee, 2021). Yet there are important challenges in the implementation, analysis and reporting of survey research (G Lee et al., 2012). To conduct survey research, scholars crucially depend on direct access to bureaucrats as respondents, which often involves overcoming practical hurdles (e.g. obtaining consent from the organizational leadership) and technical hurdles (e.g. email firewalls). Survey researchers in PA also face a common pool resource problem, with multiple research teams, as well as governments themselves, targeting the same, limited pool of survey respondents. This is especially problematic if they are interested in a distinct group of bureaucrats, such as central government officials, who are typically fewer in number than, for example, local employees and who are of great interest to researchers due to their prominent role in policymaking (Kroken et al., 2025). Indeed, survey response rates among bureaucrats have been declining over time (see, for example, Yackee and Yackee, 2021: 560). Another problem is that survey data are often not made publicly available to the research community, creating incentives to field even more surveys. These challenges are significant for scholars interested in the behaviours and attitudes of bureaucrats, who rely on access to this hard-to-reach population (Jilke and Van Ryzin, 2017; G Lee et al., 2012).

We also observe a mismatch between some of the most fundamental questions in PA and the data collection tools available and typically used by PA researchers. In particular, few researchers are using longitudinal research designs such as panel analyses to establish cause–effect relationships (Murdoch et al., 2023), especially at the level of individual bureaucrats (for some exceptions, see Brænder and Andersen, 2013; Geys et al., 2020; Verlinden et al., 2025). This is unfortunate because many theories of administrative behaviour include assumptions about phenomena unfolding over time. For instance, researchers are often interested in causal effects of distinct events on the behaviour and attitudes of individual bureaucrats, including management reforms, organizational restructuring or changes in government, as well as dynamic processes such as workplace socialization (Geys et al., 2024a; Kjeldsen and Jacobsen, 2012). Whereas time-series data are sometimes available, there are few surveys collecting individual-level panel data (Fernandez et al., 2015; Stritch, 2017). 1 Taken together, PA researchers seeking to use surveys to collect individual-level data face considerable challenges, especially if they are interested in phenomena that require studying actors over time.

Finally, surveys of bureaucrats themselves are far from the only methodological approach in survey research in PA. Bureaucrats do not operate in a vacuum, and the relationship between bureaucrats and other stakeholders is an essential topic in PA research. This includes literature on the roles and relationships of politicians and bureaucrats (Grøn et al., 2024) as well as citizens’ views on and interactions with government (Lee and Van Ryzin, 2020; Vogel et al., 2025). Whereas a growing number of studies compares citizens and elites, that literature is primarily interested in comparing attitudes and preferences of citizens and politicians (Dellmuth et al., 2022; Kertzer, 2022; Walgrave et al., 2023). Future research needs to incorporate bureaucrats as crucial societal actors, because of their prominent role in policy formation and the increasing importance of citizen–state interactions (e.g. Jakobsen et al., 2016). We argue that there is a great potential for innovation in PA research through the combination of surveys of bureaucrats and other relevant populations, especially citizens and politicians, and we show in this paper how a survey research infrastructure that comprises different respondent populations can facilitate such research.

This article argues that establishing a permanent research infrastructure for surveys of bureaucrats is a potentially fruitful way to address the above-mentioned challenges. Whereas research infrastructures are often associated with large scientific equipment, they can be broadly defined as facilities that enable researchers to explore various types of research questions and which are open to external users. 2 We first present our experiences with the establishment and piloting of the Norwegian Panel of Public Administrators (Norsk forvaltningspanel, NFP), which may serve as a prototype to the research community for addressing several of the issues outlined above.

After that, we outline the innovative potential of this type of survey infrastructure, both for implementing longitudinal research designs and for combining surveys of bureaucrats with surveys of other relevant stakeholders using paired survey designs which use identical survey items across different respondent populations (cf. Kertzer and Renshon, 2022). More specifically, we explore the potential of cross-sectional and over-time comparisons between bureaucrats and other respondent groups. For this purpose, we outline four data collection strategies that involve comparisons along two dimensions, namely over time and across respondent groups.

Finally, we discuss dilemmas and challenges that may arise from collecting longitudinal data on bureaucrats and collecting paired data with other respondent groups. This includes the choice of repeated questions, panel attrition and potential challenges of fielding public opinion survey questions that are unrelated to bureaucrats’ workplace or tasks. Based on experiences in operating the NFP infrastructure, we discuss these trade-offs and provide possible solutions with the aim of stimulating methodological reflections in the field of PA research.

Taken together, we propose a novel way of collecting high-quality survey data on bureaucrats as a response to pertinent problems of data collection, and we share experiences and challenges in the practical development of a research infrastructure with the broader PA research community. The article will therefore not present empirical data from the panel(s) but outline ways in which longitudinal and paired survey data can be employed for the advancement of PA research. To showcase the innovative potential of the infrastructure, we present examples of scholarly work based on NFP data.

The importance of research infrastructures for surveys of bureaucrats

As a response to the challenges of respondent access, survey overload and data sharing, NFP was created in 2020 as a permanent research laboratory consisting of employees in the Norwegian central administration, including ministries and agencies (Bach et al., 2022). NFP routinely fields surveys to respondents twice a year and recruits new panel members at regular intervals. The panel allows for repeated observations of individuals over time, including the possibility for repeated measurements of identical questions. Through an identification key, individual respondents are identifiable across multiple waves of data collection.
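As a minimal sketch of what such an identification key makes possible, the following Python snippet links two survey waves by a respondent key and computes within-person change on a repeated item. The field names (`respondent_id`, `trust`) and all values are hypothetical illustrations, not NFP variables or results.

```python
# Hypothetical wave data: one record per respondent per wave.
wave_1 = [
    {"respondent_id": "a01", "trust": 4},
    {"respondent_id": "a02", "trust": 3},
    {"respondent_id": "a03", "trust": 5},
]
wave_2 = [
    {"respondent_id": "a01", "trust": 5},
    {"respondent_id": "a03", "trust": 4},
    {"respondent_id": "a04", "trust": 2},  # newly recruited panel member
]


def within_person_change(earlier, later, key="respondent_id", item="trust"):
    """Return {key: later value - earlier value} for respondents in both waves."""
    earlier_by_key = {row[key]: row[item] for row in earlier}
    return {
        row[key]: row[item] - earlier_by_key[row[key]]
        for row in later
        if row[key] in earlier_by_key
    }


changes = within_person_change(wave_1, wave_2)
print(changes)  # {'a01': 1, 'a03': -1}
```

Respondents who appear in only one wave (here `a02` and `a04`) are excluded from the within-person comparison, which is why attrition and recruitment matter for panel analyses.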

NFP is designed as a researcher-driven infrastructure that invites researcher groups to submit proposals for survey items through open calls. NFP uses a time-sharing model, which means that surveys include questions from multiple research groups (‘omnibus surveys’) who share an allotted amount of time in a survey (Mutz, 2011: 5–7). Proposals are subsequently reviewed by a scientific committee and undergo intensive piloting before fielding. By including items submitted by different research groups who can jointly use background variables (Mutz, 2011), the time-sharing model significantly reduces the problem of survey overload. To access respondents, NFP relies on a combination of publicly available contact information and negotiated access with the leadership of surveyed organizations. Finally, de-identified data are made available in a public repository after a quarantine period.

The overall design of NFP is expected to save resources for both respondents and researchers, reduce the overall number of surveys being fielded and provide high-quality data on bureaucrats. The infrastructure lowers the practical hurdles for researchers in accessing respondents, which is among the most time- and resource-intensive aspects of fielding surveys. Moreover, the restricted space in each survey offered by the time-sharing model encourages strict prioritization by the research groups who submit survey items.

NFP is part of a larger research infrastructure, Coordinated Online Panels for Research on Democracy and Governance in Norway (KODEM), which has been granted the status of national research infrastructure from 2025 onwards. 3 KODEM also hosts a citizen panel and a panel of elected representatives which allow for the collection of paired survey data – that is, using identical survey items across these different panels (cf. Kertzer and Renshon, 2022). This unique feature allows researchers to address novel research questions about the relationship between PA and other key actors in the government system. The infrastructure facilitates direct comparisons between citizens and different elites (Kertzer, 2022) as well as over-time comparisons between different groups of respondents. We elaborate below on the value of contrasting data of bureaucrats with significant other actor groups in their environment, namely citizens and politicians.

Paired and longitudinal data collection on bureaucrats, politicians and citizens: four approaches

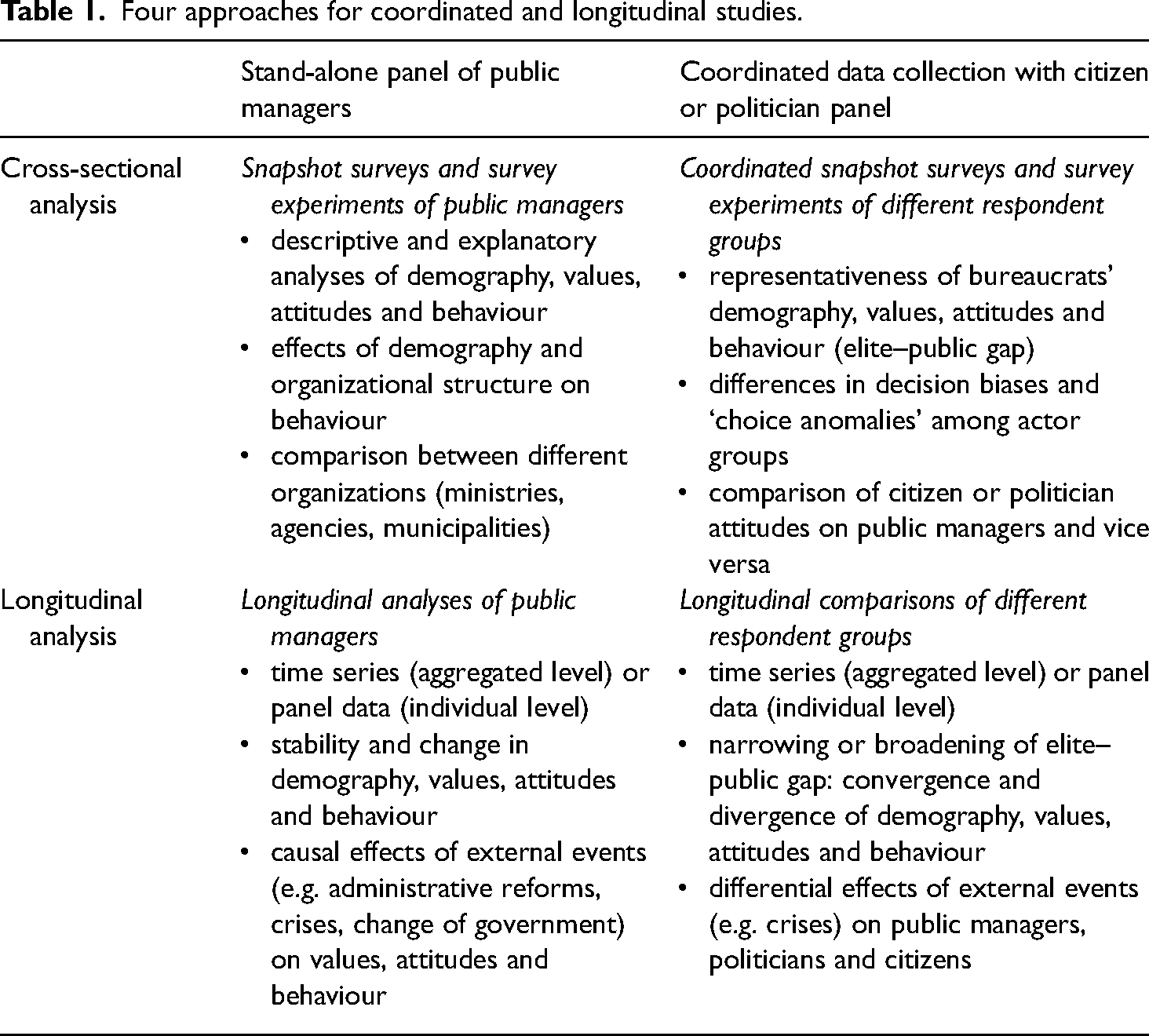

This section provides an overview of widespread survey research designs within PA using bureaucrat respondents, namely cross-sectional and longitudinal studies. After that, we outline the innovative potential of coordinated cross-sectional and longitudinal data collection among bureaucrats, citizens and politicians. We condense this into four approaches for survey research on bureaucrats, politicians and citizens using different research designs (Table 1).

Table 1. Four approaches for coordinated and longitudinal studies.

Although writing this with our experiences with the Norwegian infrastructure in mind, our aim is to stimulate new approaches to theoretically driven, empirical research in PA more broadly.

Snapshot surveys of bureaucrats

Most surveys of bureaucrats are cross-sectional (a ‘snapshot’) of a given population. In their simplest form, these are ‘one-off’ surveys in the context of a single research project. Snapshot surveys allow for descriptive and explanatory analyses of various phenomena, such as bureaucrats’ role perceptions, decision-making behaviour (as expressed through survey responses) and their perceptions of reform effects (Egeberg and Trondal, 2018; Wynen et al., 2019). Such studies typically consider individual (micro-level), organizational (meso-level) and country-level (macro-level) explanations. One limitation of cross-sectional studies is endogeneity or the fact that cause-and-effect relationships are hard to disentangle. Thus, unless they include experimental manipulation (Druckman, 2022; Mutz, 2011), cross-sectional studies provide a weak basis for causal inferences.

A growing number of surveys in PA research are comparative and designed to measure actor-level attributes across countries (Verhoest et al., 2018). These include project-based surveys, such as the Coordinating for Cohesion in the Public Sector of the Future (COCOPS) survey of senior managers (Hammerschmid et al., 2016) and surveys of agency chief executives (Schillemans et al., 2021; Verhoest et al., 2012). Another prominent example is the Global Survey of Public Servants, which conducts similar surveys in different world regions in order ‘to harmonize large-scale comparable micro-survey data from public servants around the world’ (Schuster et al., 2023: 983). Although the primary goal of these surveys is comparative in nature, they can also be considered a parallel variant of cross-sectional surveys, as they allow for the analysis of within-country variation, which is often substantial.

There are two important differences between one-off and panel-based cross-sectional surveys. First, to conduct one-off surveys, researchers must spend scarce time and resources accessing respondents. In a panel of bureaucrats, researchers are relieved of the burden of negotiating and organizing access to respondents. Second, whereas one-off surveys must collect information about dependent, independent and control variables simultaneously, a panel infrastructure offers researchers access to previously collected data. This may include demographic information such as age, gender and education which could be used as explanatory or control variables (Bach et al., 2022). In addition, by pooling information collected during different survey waves, a panel structure allows researchers to include routinely collected batteries of core questions (for example, on turnover intention) as well as previously collected data.

Longitudinal surveys of bureaucrats

A second research design is longitudinal survey data collected among bureaucrats at two or more points in time, yet not coordinated with other groups (panels). Public organizations are constantly subject to reform and change (Carroll et al., 2022). Longitudinal data are required to do systematic studies of continuity and change over time in the attitudes and behaviour of bureaucrats. More specifically, to strengthen inferences about causal relations, researchers need to collect identical data at different points in time.

Longitudinal studies have gained a more prominent place within PA research in recent years, yet they have not exhausted their potential (Murdoch et al., 2023). Longitudinal data are useful because they allow researchers to establish that causes precede effects. Because of their sequential structure, they reduce the problem of endogeneity and are thus better suited to address some of the problems of causality that have traditionally afflicted cross-sectional studies (Stritch, 2017; Zhu, 2013). Yet, to draw causal inferences, the data must meet the independence requirement according to which some units are randomly assigned to one or several treatment conditions whereas others are a control group representing the counterfactual – that is, what happens if nothing happens (Druckman, 2022: 29–30).

In longitudinal studies of bureaucrats, causal designs will often involve an ‘as if’ randomization, where researchers must demonstrate that observed units are similar except for their allocation to a treatment or control group (Druckman, 2022: 37–39). Although major crises such as COVID-19 will often affect all employees equally, reorganizations are often restricted to certain administrative units, or they are implemented sequentially. Likewise, changes of ministers may occur outside a wholesale shift of government (Askim et al., 2024). Major events that do not affect the entire administration – such as a terror attack affecting some office locations more than others (Geys et al., 2023) – constitute opportunities for causal analysis. In addition, the problem of common-source bias is reduced with longitudinal data compared to cross-sectional data (Kim and Daniel, 2020).
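One common way to exploit such partial exposure, offered here as an illustration rather than as a method prescribed in this article, is a difference-in-differences comparison: the before-to-after change among exposed units minus the change among unexposed units. The sketch below uses hypothetical scores and assumes that both groups would have followed parallel trends in the absence of the event; the record layout and function names are assumptions made for illustration only.

```python
from statistics import mean

# Hypothetical panel records: (exposed to reorganization?, wave, outcome score).
records = [
    (True, "before", 3.0), (True, "before", 4.0),
    (True, "after", 2.0), (True, "after", 3.0),
    (False, "before", 3.0), (False, "before", 5.0),
    (False, "after", 3.5), (False, "after", 4.5),
]


def group_mean(exposed, wave):
    """Mean outcome for one exposure group in one wave."""
    return mean(score for e, w, score in records if e == exposed and w == wave)


def difference_in_differences():
    """Change among exposed units minus change among unexposed units."""
    change_exposed = group_mean(True, "after") - group_mean(True, "before")
    change_unexposed = group_mean(False, "after") - group_mean(False, "before")
    return change_exposed - change_unexposed


estimate = difference_in_differences()
print(estimate)  # -1.0
```

The estimate is only as credible as the 'as if' randomization behind it: if exposed and unexposed units differed in their trends before the event, the difference cannot be attributed to the event.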

Longitudinal data have been used to study a wide range of topics in PA. In the US, the American State Administrators Project (ASAP) has collected survey data on state agency leaders since the 1960s which allows for assessing continuity and change over time in the American administration – such as autonomy and leadership (Yackee and Yackee, 2021). For instance, research using ASAP shows that agency leaders shift their partisan identification in response to political turnover (Geys et al., 2024b). In addition, a growing number of countries regularly conduct government-wide employee surveys (Khurshid and Schuster, 2023). For example, the US Office of Personnel Management annually conducts the Federal Employee Viewpoint Survey. Although this survey has been used in many academic publications, it is restricted by the lack of individual identifiers which would transform it into a panel study (Fernandez et al., 2015).

Surprisingly, only a few studies within the burgeoning field of public service motivation (PSM) have used longitudinal research designs. In a systematic review, Ritz et al. (2016: 418) observe that no more than 7.4% of the reviewed articles used longitudinal data. For instance, Ward (2014) used panel data to examine how PSM changes over time, whereas Taylor and Westover (2011) used time-series data to examine determinants of job satisfaction. Brænder and Andersen (2013) studied effects on PSM among soldiers who served in Afghanistan. Van Loon et al. (2018) assessed the relationship between PSM and organizational performance in a longitudinal study, using aggregated survey data. Although longitudinal surveys of bureaucrats are not ideal for understanding attraction to the public sector in the first place (for a study including pre job-entry data, see Kjeldsen and Jacobsen, 2012), they are well suited for addressing antecedents and consequences of PSM, as well as socialization effects in different career stages, and have the potential to advance theorizing and provide actionable insights for practice.

In a review of longitudinal data in PA research, Stritch (2017) observes that most longitudinal studies use organizational (meso-level) data. Thus, there is a need for more longitudinal survey data to understand the (micro-level) attitudes, experiences and behaviours of bureaucrats over time. Although citizen surveys that are repeated at regular intervals using similar questions are well established in general social science research, the authors are aware of only a few efforts at continuous data collection in PA research. To systematically engage in such an effort requires a permanent research infrastructure and agreement among scholars on relevant survey items. We return to these issues below.

Coordinated snapshot surveys of bureaucrats, politicians and citizens

Another research design which becomes possible through survey infrastructures such as KODEM involves a coordinated, simultaneous data collection among multiple respondent populations, such as bureaucrats, politicians and citizens (and potentially other actor groups). This approach allows for the assessment of elite–public and elite–elite gaps (e.g. between politicians and bureaucrats), which may take the form of compositional differences (demographic backgrounds), attitudes and decision-making behaviour (Dellmuth et al., 2022; Kertzer, 2022). Moreover, a growing body of literature studies those gaps using survey experiments, investigating differential treatment effects across different populations. For instance, scholars have compared decision-making biases of politicians and general populations, showing that politicians exhibit similar or even higher levels of bias than citizens (Sheffer et al., 2018). However, systematic comparisons between bureaucrats, citizens or politicians are less widespread and would benefit from coordinated survey infrastructures as outlined in this study.

A fundamental issue pertains to the representativeness of public bureaucracies compared to the general population. The representative bureaucracy literature (see Meier, 2019 for an overview) explores both passive representation (the degree to which demographic attributes of bureaucrats reflect those of the general population) and active representation (the degree to which these attributes shape decision-making targeting the societal groups they represent). The prevalence of passive and active representation may be studied through surveys and survey experiments (Jilke and Tummers, 2018). The question of the bureaucracy's representativeness has also been expanded to cover policy preferences. For instance, Murdoch et al. (2018) find a high degree of preference convergence among citizens and European Commission bureaucrats regarding preferred levels of European Union (EU) integration.

Another comparative dimension is related to the (political) attitudes of bureaucrats, citizens and politicians. Comparisons between bureaucrats and other respondent groups can improve our understanding of the distinct preferences of bureaucrats. This is illustrated in a recent study using the KODEM infrastructure, which used a paired survey experiment to examine how citizens, politicians and bureaucrats perceive the competence and political responsiveness of agency heads in Norway (Askim et al., 2025). The study shows that agency heads who deviate from the ideal of a career civil servant with subject expertise are considered less competent and more politically responsive. Moreover, the study finds differential treatment effects, showing that citizens are largely indifferent to hiring agency heads with a political background, whereas bureaucrats are clearly negative. This study illustrates how a research infrastructure consisting of multiple panels provides new insights by comparing attitudes between citizen and elite respondents on issues of meritocracy and politicization.

An important question in PA research concerns whether differences in political attitudes between bureaucrats and citizens are the result of compositional differences, or other factors, such as self-selection and socialization dynamics. In a meta-analysis, Kertzer (2022) finds that elite–public gaps are often overstated, and can largely be attributed to compositional differences between those groups. A recent study using the NFP infrastructure finds important differences in support of legal harmonization in the EU between citizens, politicians and bureaucrats, which are at least partially explained by compositional differences (Moland, 2024).
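To make the logic of compositional explanations concrete, the following sketch applies direct standardization: it recomputes the bureaucrats' average as if they had the citizens' educational composition and compares the raw gap with the adjusted gap. This is a simplified illustration, not the method used in the studies cited above; the groups, education categories and all numbers are hypothetical.

```python
# Hypothetical data: education level -> (mean support for an item, share of group).
citizens = {"higher": (0.6, 0.4), "other": (0.4, 0.6)}
bureaucrats = {"higher": (0.7, 0.9), "other": (0.5, 0.1)}


def overall_mean(group):
    """Group mean, weighting each stratum by the group's own composition."""
    return sum(stratum_mean * share for stratum_mean, share in group.values())


def standardized_mean(group, reference):
    """Group mean, weighting each stratum by the reference group's composition."""
    return sum(group[level][0] * reference[level][1] for level in reference)


raw_gap = overall_mean(bureaucrats) - overall_mean(citizens)
adjusted_gap = standardized_mean(bureaucrats, citizens) - overall_mean(citizens)

print(round(raw_gap, 2), round(adjusted_gap, 2))
```

In this constructed example, roughly half of the raw gap disappears once composition is held constant; the remainder would have to be explained by other factors, such as self-selection or socialization.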

Finally, coordinated panels of bureaucrats, citizens and politicians allow for comparing the reciprocal attitudes and expectations that these groups may hold. We return to this aspect in the subsequent section.

Coordinated longitudinal surveys of bureaucrats, politicians and citizens

Combining time- and cross-panel designs is the most ambitious and therefore the least used research strategy in PA research. This section sketches the innovation potential of this research design. The combination of paired and panel designs allows novel research questions to be asked about the role of bureaucrats within a wider societal context. A combination of comparisons between groups and panel (i.e. longitudinal) data enables researchers to compare patterns across groups over time. This design can address the fundamental question of whether attitudes and behaviours converge or diverge over time. For instance, one may study attitudes to organizational reforms and unplanned events over time and across different groups. Some changes are unexpected (crises) whereas others are planned (reforms) (Wynen et al., 2019). For both types of change, data collection through panels allows for measuring actors’ attitudes through repeated questions (e.g. institutional trust) before, during and after a change. In the case of crises, panels also allow for ad hoc surveys on context-specific questions. To a limited extent, citizen surveys have been utilized to measure changes in citizen–state interactions over time (see Trautendorfer et al., 2023), but without systematic comparisons of citizen and bureaucrat samples.

A further set of research questions that can be addressed by coordinated longitudinal data concerns opinion leadership and mutual expectations. Research on opinion leadership has often been centred around the role of media but also shows that media are ‘movable’ in response to political leaders’ positions (Habel, 2012). However, far less is known about the influence of administrative elites and the relationship between elected and non-elected government officials. Moreover, when governments shift or engage in democratic backsliding, to what extent are politicians’ expectations towards bureaucrats’ responsiveness reciprocated by the bureaucrats themselves? How resilient are fundamental bureaucratic values such as legality and truthfulness in changing societal contexts (Yesilkagit et al., 2024)? These are fundamental questions for studying PA in a democratic context, and both sets of questions may be addressed using longitudinal survey data from different panels, representing different groups.

To sum up the discussion on the four avenues for PA survey research, we see a preponderance of cross-sectional designs. We argue that collecting longitudinal data (panel data in particular) at short intervals provides better opportunities for studying processes of change. In addition, coordinated collection of data from different actor groups allows for analyses of PA within a wider societal context, offering more comprehensive knowledge on the role of PA under varying and shifting governing conditions. Although it is a promising way forward, we also need to be mindful of possible dilemmas and trade-offs in the panel-based strategy.

Dilemmas and trade-offs of coordinated and longitudinal panels

As much as this article advocates for the value of individual-level panel data in PA research, we also acknowledge challenges to such data collection strategies. This section discusses some key challenges: representativeness or external validity of samples, access to respondents, attrition, mixed questions and perceptions of irrelevance, and finally conditioning.

Representativeness

A first concern relates to the representativeness or external validity of the sample relative to the population (G Lee et al., 2012). In the quest for representative samples, the data quality of surveys relies profoundly on respondents’ willingness to take part. Arguably, probability samples are the gold standard for recruiting participants in general population surveys. The analogous procedure for recruiting participants to bureaucrat surveys is to draw a random sample from a known population of employees. Alternatively, some surveys use a census, inviting all employees fulfilling certain criteria to participate (Christensen et al., 2018; Khurshid and Schuster, 2023). Probability samples minimize the burden imposed on participants and leave possibilities for recruiting new participants. That said, to assess sampling bias, probability sampling requires information about the population regarding relevant personal and job-related characteristics. In the case of NFP, some demographic data are available, allowing for the estimation of representativeness at different administrative levels. 4

Accessing participants

A second recurrent challenge is accessing participants, which in practical terms means accessing names and personal addresses (email or, depending on the context, mail address). One promising approach towards developing new strategies for data collection is to collaborate with governments and negotiate for survey space for standardized questions. This strategy has the potential to push the boundaries of comparative research if similar questions are used across different contexts (Schuster et al., 2023). However, close collaboration with governments can present a dilemma by potentially restricting researchers’ autonomy in developing survey questions, as researchers may be compelled by public authorities to make compromises on survey content as a condition of cooperation (for a related discussion, see Jensen et al., 2024).

Alternative ways of recruiting participants include reaching out to professional associations (or labour unions) or relying on publicly available contact information, but these approaches come at the risk of biased sampling. Similarly, our experience indicates that ministries and agencies with many individual users often withhold employee contact details on their websites, which creates biases in the sampling procedure. At present, NFP combines the strategy of contacting the leadership of relevant ministries and agencies and collecting publicly available contact information. In addition, involved researchers disseminate their findings to practitioners and thereby establish close collaboration with government organizations.

Attrition

Third, the literature on panel designs highlights attrition as a key challenge concerning sample selection and bias. Attrition refers to non-response and dropout due to fatigue or panel members’ negative reactions towards specific survey questions. First, when respondents drop out of the panel, there will be a corresponding decrease in the number of observations, which negatively affects statistical power. Second, and perhaps most importantly, low retention rates risk causing bias when dropouts are not random, which means that sample representativeness suffers over time.

Arguably, compared to general population samples, attrition plays out differently, although not necessarily less significantly, in a sample of bureaucrats. In a citizen panel, new participants can be recruited from a near-boundless respondent pool. By contrast, bureaucrats constitute a restricted base from which to recruit new participants. Consequently, attrition will drain the respondent pool sooner in a bureaucrat sample. Another challenge related to attrition in PA panels concerns job turnover and staff mobility (Lynn, 2018). Whereas attrition in the conventional sense implies that respondents tire of participating, turnover implies that they are no longer part of the population of interest (such as ministry-level bureaucrats). Alternatively, they might move to a different position and drop out of the panel for practical reasons – for example, because they have a new email address. For now, we have observed in NFP that attrition among bureaucrats follows similar patterns to those among citizens, with approximately 50% of the respondents dropping out after responding to one survey (Skjervheim et al., 2023). A close monitoring of attrition in NFP will provide more knowledge in the future concerning retention bias for this specific population. To minimize the risk of losing access to respondents in NFP, they are asked for different types of contact information upon registering for the panel.
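Monitoring attrition of this kind can be sketched in a few lines of Python: compute the overall share of first-wave respondents who return in the next wave, and break retention down by a background characteristic to check whether dropout looks non-random. The identifiers, the `ministry`/`agency` grouping and all values below are hypothetical and do not reproduce NFP data.

```python
from collections import defaultdict

# Hypothetical wave-1 respondents with one background characteristic.
wave_1 = {
    "a01": "ministry", "a02": "ministry", "a03": "agency",
    "a04": "agency", "a05": "agency", "a06": "ministry",
}
# Hypothetical identifiers of respondents who answered wave 2.
wave_2_ids = {"a01", "a03", "a04", "a07"}


def retention_by_group(first_wave, next_wave_ids):
    """Share of first-wave respondents in each group who responded again."""
    counts = defaultdict(lambda: [0, 0])  # group -> [retained, total]
    for respondent_id, group in first_wave.items():
        counts[group][1] += 1
        if respondent_id in next_wave_ids:
            counts[group][0] += 1
    return {group: retained / total for group, (retained, total) in counts.items()}


overall_retention = len(wave_1.keys() & wave_2_ids) / len(wave_1)
by_group = retention_by_group(wave_1, wave_2_ids)

print(overall_retention)  # 0.5
print({group: round(rate, 2) for group, rate in by_group.items()})
```

Markedly different retention rates across groups, as in this constructed example, would signal that dropout is not random and that sample representativeness may erode over successive waves.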

Mixed questions and perceptions of irrelevance

Fourth, when data collection is coordinated with multiple panels, surveys of bureaucrats consist of a mixed set of questions, some related to bureaucrats’ workplace and others on topics of more general interest. Cross-panel comparisons often imply that some questions are more pertinent to a particular group than others. Bureaucrats may drop out if they receive too many questions that they perceive as irrelevant to their professional role or context. A specific version of this challenge arises when questions are perceived as sensitive in a work-related setting, especially in central administrations. For instance, questions about respondents’ political opinions (such as party preferences) have been found to result in higher levels of item non-response (Bach et al., 2022).

To counteract perceptions of irrelevance or sensitivity among respondents, questions that are posed to different panels must be thoroughly assessed in advance, especially during the peer-review process of survey proposals. In addition, it is crucial to pilot surveys fielded to bureaucrats, which is an integral part of NFP. Finally, in NFP, respondents are informed in advance about the importance of collecting data across different societal groups, and we have no evidence of significant differences in item non-response between bureaucrats and other respondents on survey items such as climate change, integration or political trust (Bach et al., 2022).

Conditioning

A final and pertinent challenge for panel surveys discussed here is conditioning, whereby respondents could be affected by their repeated exposure to survey questions and over time grow less representative of the population (Sturgis et al., 2007). Conditioning effects may be immediate (from awareness of being observed) or long term (a gradual change stemming from continued exposure to certain topics). We argue that a panel of bureaucrats in the central government is not entirely comparable to population samples. Bureaucrats are normally well versed in topics covered in workplace-related surveys, whereas citizens arguably spend less time reflecting on the survey questions they are being asked. Accordingly, we should expect a higher degree of stability in the responses of long-time members in a panel of bureaucrats than in a citizen panel. As a result, observed changes between waves of data collection are more likely caused by actual changes than by conditioning effects. Also, we expect bureaucrats to be more genuinely interested in the contents of surveys. We should thus expect the problem of ‘professional respondents’ (Matthijsse et al., 2015), who are mainly interested in financial incentives for taking part in surveys, to be less acute in panels of government officials than in population samples. In NFP, participants thus receive no financial compensation. The remedies for problems of conditioning are not always straightforward. Yet the NFP represents a unique opportunity to gain methodological insights into the questions arising from coordinated and longitudinal data collection.

Conclusion

Survey data at the individual level – particularly longitudinal data and data that are paired with other respondent groups – remain scarce in PA research, despite their critical importance for understanding topics such as change processes, role perceptions and relationships with service users. This article has presented a novel survey infrastructure that enables both longitudinal and coordinated data collection across bureaucrats, politicians and citizens. We argue that survey infrastructures are instrumental for advancing empirical and theoretical insights in PA, and the Norwegian experiences with NFP offer a prototype for similar efforts globally.

Our article yields four key contributions. First, we highlight the need for more targeted and efficient data collection. Research infrastructures like NFP reduce the burden on both researchers and respondents through a time-sharing model. This ensures more sustainable and cost-effective data collection compared to stand-alone research projects.

Second, research infrastructures are instrumental in attaining an improved understanding of changes related to public administration. Longitudinal data allow for the study of reforms, crises and other dynamic developments, enhancing our understanding of change processes and their effects.

Third, we highlight the importance and innovative potential of collecting data that facilitate cross-group comparisons. Paired surveys enable systematic comparisons between bureaucrats, citizens and politicians. This helps identify gaps in expectations, values and perceptions – insights that are crucial for improving trust, responsiveness and government performance.

Fourth, innovations in data collection support evidence-based policymaking. By facilitating research based on high-quality, policy-relevant data, survey infrastructures empower political and bureaucratic decision-makers to base decisions on sound empirical evidence rather than intuition or ad hoc information. By facilitating more robust causal inferences, panel infrastructures can provide more convincing justifications for policy decisions.

As with other forms of data collection, survey infrastructures present some challenges. We have discussed dilemmas and trade-offs in the design and operation of such infrastructures, including issues of representativeness, attrition and panel conditioning. We have illustrated potential solutions to these challenges, using the case of NFP. Principally, we have underlined the need for a thorough review procedure in such infrastructures, not only to improve survey item quality, but also to build confidence among (would-be) respondents. This is particularly important for bureaucrat panels due to the restricted pool of respondents.

Ultimately, this article calls for a broader methodological conversation in PA research. The article has exposed pressing issues related to surveying bureaucrats as a distinct target group. At the least, we cannot take for granted that all issues concerning panel designs can effectively be dealt with on a generic basis. In other words, there is a need for a dedicated debate on PA survey methodology (see also Khurshid and Schuster, 2023). This article aims to stimulate such a conversation on the possibilities and challenges of establishing a survey infrastructure for research on government bureaucrats.

Footnotes

Acknowledgements

We thank the journal’s anonymous referees for their constructive comments. We also thank the participants at the workshop ‘Longitudinal Directions in Studies of Public Administration’, held on 26–27 February 2022 in Bergen (Norway), for their comments on an earlier version of this manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This work was supported by the Research Council of Norway (grant number 350235).