Abstract

Railway switch fault detection is essential to the safe and reliable operation of railway systems. This study aims to improve the effectiveness and accuracy of switch fault diagnosis, thereby reducing potential faults and improving the reliability of railway transportation. To address the problem of imbalanced fault data, the synthetic minority oversampling technique (SMOTE) is used to increase the representation of rare fault conditions. Subsequently, the fractional Fourier transform (FroFT) is applied to extract diagnostically valuable feature vectors from the current signal of the switch, capturing the subtle changes caused by faults. These fine-grained features improve the accuracy of fault detection. The extracted features are then fed into a bidirectional long short-term memory (BiLSTM) neural network with an integrated attention mechanism to enhance the model’s ability to identify various fault states. Test results show that the proposed FroFT-Attention-BiLSTM method outperforms the traditional BiLSTM model on key performance indicators: accuracy (+6.59%), precision (+6.01%), recall (+5.61%), and F1 score (+6.19%). These improvements support the theoretical and practical effectiveness of the proposed method as a solution for railway turnout machine fault diagnosis.

Keywords

Introduction

In recent years, railway systems have advanced rapidly toward intelligence and automation, using sophisticated information technology, the Internet of Things, big data analytics, and artificial intelligence to enhance operational safety and efficiency.1–3 Turnout machines, as a fundamental element of railway signaling, govern the train’s route by altering the track connection configuration, allowing the train to move between tracks.4 Because of their essential function in the railway system, identifying faults in turnout machines is crucial for maintaining safe railway operations.5,6 It is therefore important to create intelligent and efficient fault detection algorithms for turnout machines that can enhance the accuracy of real-time fault diagnosis and support the long-term health and stable operation of the railway system.7–9

Centralized railway signal monitoring systems currently rely on information collection and manual analysis to detect switch machine faults in railway networks. This approach aims to identify potential problems by compiling and analyzing the operational data of the switch machines. However, it has evident drawbacks, chiefly its reliance on extensive data and on human judgment in the analytical process. 10 Consequently, the development of more sophisticated and automated fault detection methods has become a vital research focus in this field. The principal constraints of current fault detection systems are their inefficiency and inaccuracy, alongside labor-intensive and time-consuming processes. 11 These methods generally need extensive manual data processing, resulting in long reaction times and difficulty in handling complex or hidden fault categories. 12 Improvements in operating efficiency and railway safety now depend on automated and more effective fault detection techniques.

Some researchers have proposed a diagnostic strategy grounded in expert knowledge. 13 This method is characterized by its use of historical data and offers the advantages of autonomous learning and high diagnostic efficiency. Its self-learning and rule adaptation remain limited, however, since it requires a very large number of training samples and relies strongly on expert assessment. A mathematical model-based fault diagnosis method 14 has since emerged, achieving higher efficiency and reduced reliance on expert opinion through feature and model comparison; however, its applicability is limited by stringent requirements and by the difficulty of updating the model. Subsequently, researchers proposed a data signal-driven technique. 15 This method collects and examines diverse signals to identify fault characteristics. It eliminates the need for complicated model development, yet it involves considerable computational and processing complexity and still relies on prior knowledge and manual experience.

In recent years, fault detection has advanced considerably, particularly through deep learning, transfer learning, and few-shot learning.16–18 Deep learning techniques, including convolutional neural networks (CNN) and long short-term memory networks (LSTM), have demonstrated significant efficacy in fault feature extraction and classification for complex systems. The deep convolutional neural network model developed by Tang et al. 19 achieved intelligent fault detection for rotating equipment, overcoming the limitations of conventional approaches in handling high-dimensional data. Nonetheless, deep learning techniques often need substantial quantities of labeled data, which can be difficult to acquire in some industrial contexts. Transfer learning has been applied to fault diagnosis to address this data scarcity. Tian et al.’s 20 cross-domain fault diagnosis technique transfers knowledge from the source domain to the target domain, markedly decreasing reliance on labeled data in the target domain. Nonetheless, transfer learning struggles when the distributions of the source and target domains differ significantly. Few-shot learning seeks to learn effectively from a small number of samples. The few-shot fault detection framework based on meta-learning presented by Li et al. 21 can attain high diagnostic accuracy with a limited number of samples, offering a new approach to data scarcity. Nonetheless, the generalization capacity and stability of few-shot learning methods still require improvement.

In light of the limited data on switch machine faults and the difficulty of capturing subtle fault characteristics, researchers have adopted methods to enlarge the dataset. Some have used feed-forward neural network techniques to obtain supplementary fault characterization data, while others have automated the creation of faulty sample data by subjecting basic models to extensive testing. The primary aim of these strategies is to increase the quantity and diversity of samples and thereby improve the accuracy and reliability of fault diagnosis systems. Enlarging the sample database in this way eases the limitations imposed by insufficient data and increases the efficacy of fault detection models. The Fourier transform, owing to its capacity to retain and analyze significant information in data, has become an essential tool in signal analysis, fault detection, and forecasting.22–24

Fault diagnosis for railway switch machines is crucial for maintaining the safety and reliability of railway transportation networks. Nonetheless, current methods struggle to identify intricate signal characteristics and to mitigate data imbalance. Many still depend heavily on human analysis and hand-crafted feature engineering, which constrains diagnostic efficiency and precision. This study proposes a new diagnostic framework that integrates deep learning and feature enhancement to meet the fault diagnosis needs of critical equipment in rail transit signaling systems. The Synthetic Minority Over-sampling Technique (SMOTE) is employed to improve the structure of the sample space by augmenting data for rare fault modes, thereby mitigating the impact of the unbalanced distribution of fault samples on diagnostic accuracy. This study’s principal contributions are as follows:

This study introduces a fault diagnosis method that integrates the fractional Fourier transform (FroFT) with a bidirectional long short-term memory (BiLSTM) neural network enhanced by an attention mechanism, significantly improving the accuracy of fault feature extraction and recognition.

To address data imbalance, this study applies SMOTE, enhancing the model’s capability to accurately identify minority fault classes.

Simulation experiments demonstrate that the proposed method substantially outperforms traditional approaches in key performance metrics, including accuracy and recall. These advancements have significant implications for improving the safety and maintenance efficiency of railway systems.

The organization of this paper is as follows: Section 2 introduces the fault types and data processing methods; Section 3 provides a detailed explanation of the designed fault detection algorithm; Section 4 presents the testing and analysis of the algorithm’s performance; and Section 5 summarizes the findings of this study and proposes future research directions.

Fault types and data handling

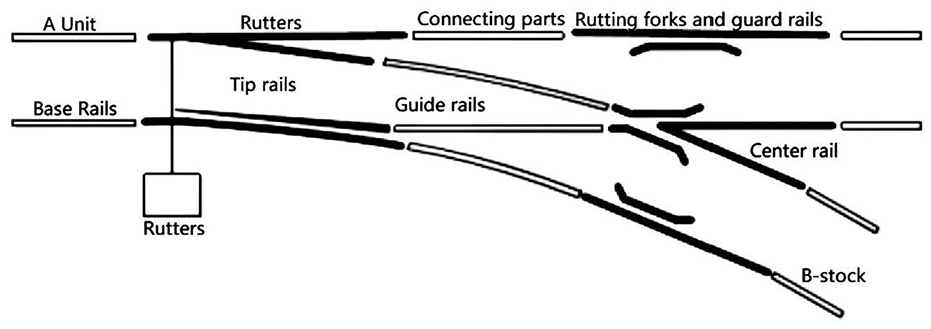

Structure of the switch machine

The railway switch machine is an essential element of railway infrastructure, tasked with directing train movement, as seen in Figure 1. Alongside the switch machine, the assembly includes supplementary components such as rail guards. The machine’s principal duty is to supply the power required to actuate these components and so change a train’s route. Control circuits drive the movement of the heart and tip rails, thereby changing the switch position. The operation of a switch machine has three critical phases (unlocking, switching, and locking), each vital for the efficiency and safety of railway transit.

Schematic diagram of switch machine composition.

Modeling of output tension and power

The relationship between the output pulling force and the output power of the switch machine is assumed to be linearly proportional:

$$F = kP$$

where F represents the pulling force generated by the switch machine, P is its power output, and k denotes the conversion efficiency coefficient that transforms the power into the corresponding pulling force.

Using the relationship between velocity and power:

where

The design of a switch machine must comply with a specified safety criterion: the design pulling force must exceed a designated multiple of the maximum expected operational pulling force. This margin ensures that the switch machine operates reliably even in the most extreme situations:

where

Fault type analysis

This study identifies seven fault categories drawn from the most prevalent faults encountered in the operation of railway switch machines, which significantly affect system safety and are likely to cause operational interruptions. The selection is informed by several sources of field maintenance data and by expert advice, which supports the study’s representativeness and practical significance. Concentrating on these seven principal fault types allows a thorough assessment of the fault diagnosis model’s efficacy in practical applications.

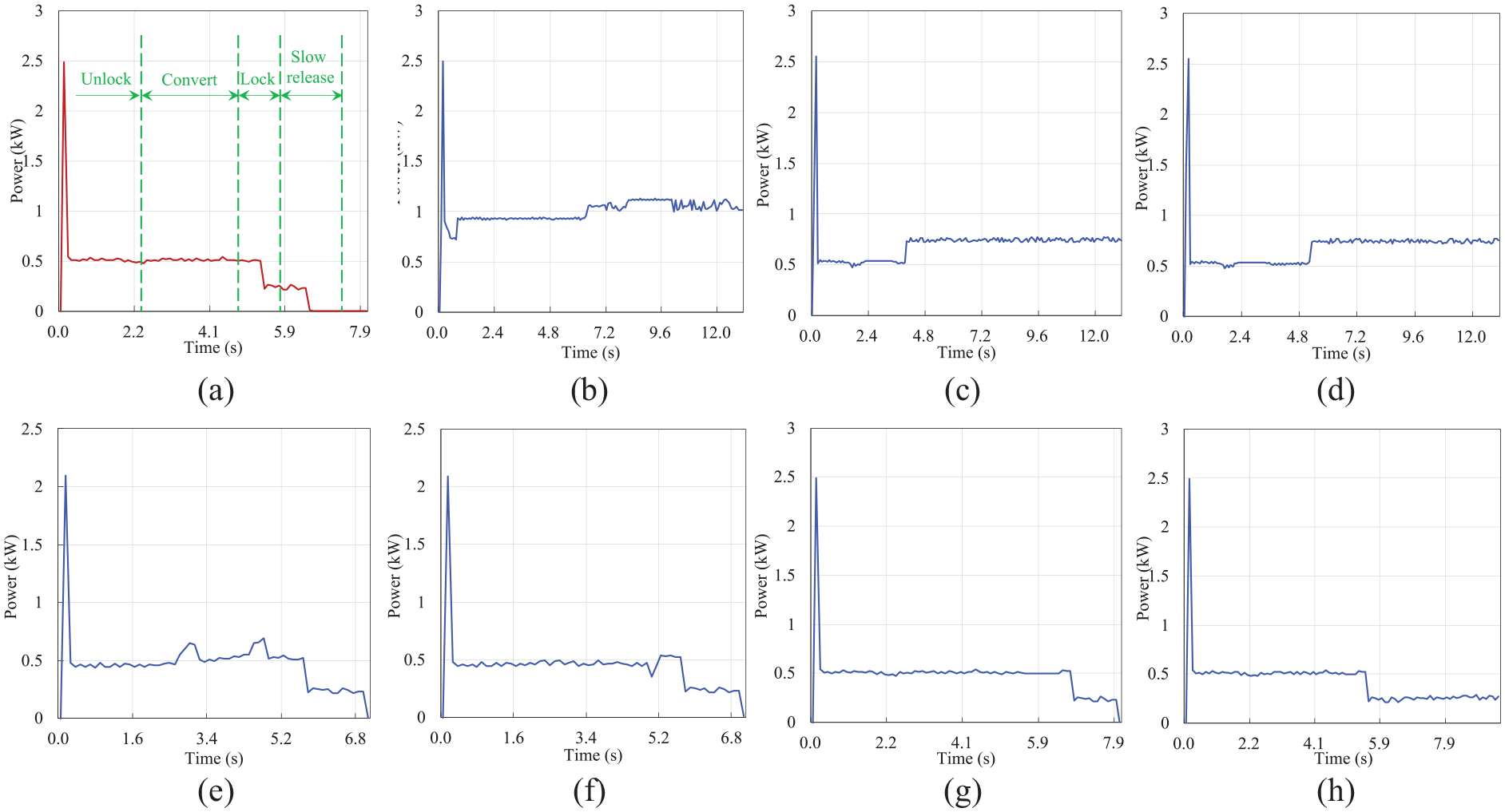

The study examines seven categories of issues by exploring the routine operation and maintenance procedures of the train system and synthesizing the insights of field maintenance personnel, as illustrated in Figure 2. The data employed in the fault diagnosis database referenced in the article solely derives from the seven categories of faults.

Schematic diagram of the seven faults: (a) normal curve, (b) Fault 1, (c) Fault 2, (d) Fault 3, (e) Fault 4, (f) Fault 5, (g) Fault 6, and (h) Fault 7.

The normal curve

Figure 2(a) depicts the power curve of a switch machine operating under normal conditions, free of any faults. The curve is divided into four principal zones: the unlocking zone, the conversion zone, the securing zone, and the delayed release zone. A higher starting current is needed to begin movement in the unlocking zone. In this phase, the gear rotates through a set angle to actuate the rack and pinion block and release the lock. The conversion zone follows, in which the switch machine completes the idle travel after unlocking. The securing zone covers the final positioning of the tip rail, the disconnection of the starting circuit, and the engagement of the switch lock. The locking current is often slightly higher than the action current. Finally, the delayed release zone is the stage in which, after the switch machine has locked, the curve runs flat at zero power consumption because of the slow-release mechanism incorporated into the 1DQJ system.

Fault 1: fault in activating

The switch machine activity occurs within one second following the power curve’s ascent, suggesting that this is not a trivial disruption but an external disconnection, denoting an out-of-meter switch machine. Although the unlocking procedure completes successfully, confirming proper function up to that point, the early stage of the switch conversion is hindered. Common causes of this problem include insufficient lock hook lubrication (frequently due to seasonal temperature variations), basic rail tip track displacement, and delayed stress relief in the switch mechanism. Inadequate engagement of the locking frame is another common contributing factor.

Fault 2: jamming malfunction

The switch machine operation commences with a power increase lasting around 4.2 s, signifying the beginning of the unlocking process. The conversion process, however, meets an obstruction. In this phase, the power rises from 460 kW to beyond 700 kW, exceeding the acceptable range for effective in-place locking. Common causes of such jams include mechanical component failures and insufficient lubrication. Particular concerns are inadequately calibrated high-speed fork roller limiters, lock hooks, and the transverse connection pin at the junction of the sharp rail and connection iron.

Fault 3: locking malfunction

The switch machine operation commences with a power increase lasting roughly 5.2 s. The unlocking procedure functions normally, but the locking procedure fails: although the switch is virtually in position, the locking operation remains incomplete. Common causes include gaps in switch machine cards, poorly adjusted switch machine tension resistance, entanglement of the locking hook tail with the undulating surface of choke marks, and snow accumulation due to repulsion from the snow’s edge.

Fault 4: abnormal blocking failure of the action lever

Although the switch machine demonstrates a fully functional action mechanism, the curve shows a subtle bump during the conversion process, which indicates a minor obstruction. Common problems, such as a debris-laden sliding bed plate, a fractured sliding bed plate, and loose fixed components, can be consolidated with the back to provide a comprehensive study of the particular causes.

Fault 5: malfunctioning automatic opener

The switch machine’s operating process proceeds smoothly; however, the curve drops suddenly to zero at the point of locking. The automatic opener’s contact may be defective, with a contact conversion time of around one second, and the momentary interruption ceases once conditions return to normal. The automatic opener uses Schaltbau contacts made of silver; after extended operation, oil evaporation or oxidation within the machine readily leads to contact oxidation and poor contact. The fault may also come from cleaning residue left on the switch machine contacts during centralized repair, which prevents the use of TS-1 contacts.

Fault 6: delay in switch machine operational failure

Fault 6 is characterized by a normal power curve, a total time delay of roughly 2 s in the switch machine operation, and an elongated switch machine operation. The primary causes are insufficient oil, excessive oil injection, a thicker sediment layer on the sliding bed plate surface, or inadequate maintenance. The adjustment for tensile resistance on the S700K switch machine is negligible.

Fault 7: malfunction of the switch machine circuit

In the concluding phase of the switch machine’s operation, relay 1DQJ behaves slightly abnormally. The recorded curve stays in the standard position for almost 13 s, signifying that the 1DQJ self-closing circuit remains activated. Common causes of this issue include phase-break protection failure and anomalous negative DBQ characteristics, both of which can disrupt proper circuit disconnection and system responsiveness.

Fault data acquisition

The quality of data samples is essential for developing highly accurate machine learning models for railway switch machine fault diagnosis. Accurate fault data provides essential insight into the equipment’s operating state and ensures that the trained model reflects the equipment’s behavior under real-world conditions. Data from real operational scenarios therefore forms a robust basis for railway safety monitoring and maintenance, as it markedly enhances the accuracy and reliability of the fault diagnosis model.

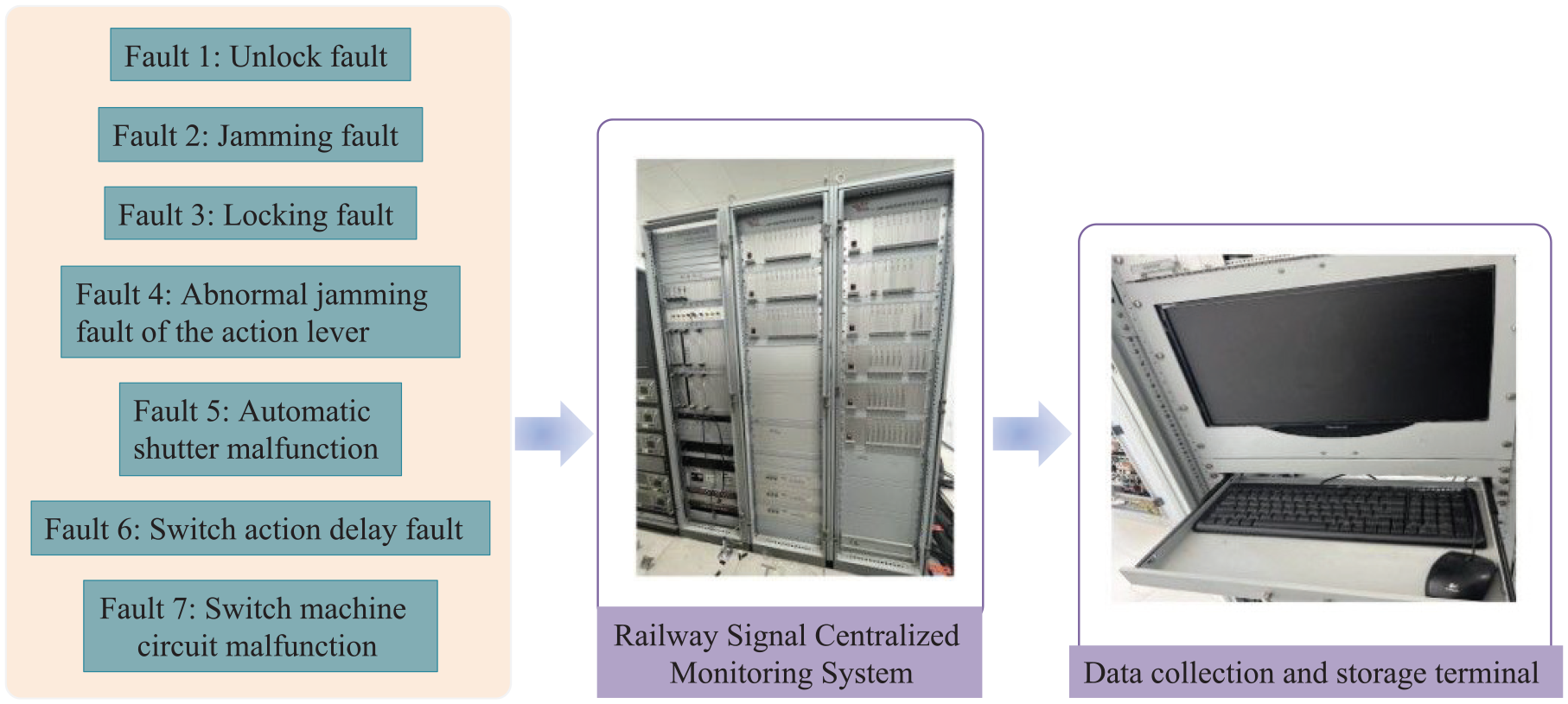

Basic data acquisition

Figure 3 illustrates a schematic of the faulty data transfer in a railroad signal monitoring system. All categories of problems, such as switching machine malfunctions, actuator bar obstructions, and unlocking and jamming issues, are depicted as originating from a single point, indicating that these faults are occurring within the railroad infrastructure. The fault signals are then relayed to the Railway Signal Central Monitoring System and the Data Collection and Storage Terminal, both of which are essential elements of the monitoring system.

Data flow from the source of the fault to the system responsible for monitoring, analysis, and storage.

SMOTE-based data expansion strategy

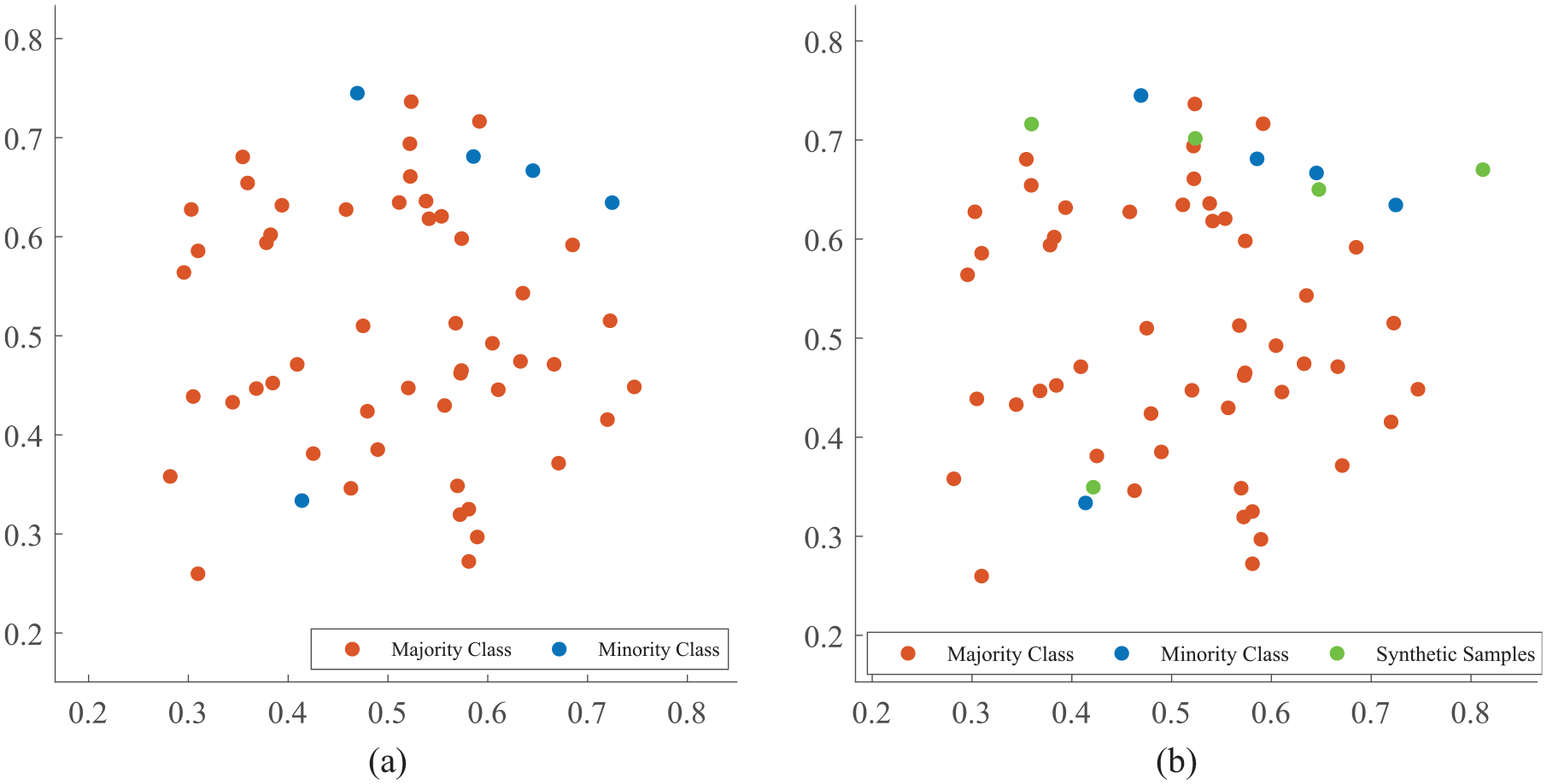

A significant challenge in railway switch machine fault diagnosis is class imbalance. This imbalance may cause a classification model to learn disproportionately from the majority class and thus fail to recognize the fault patterns of the minority classes. As a result, the model’s generalization performance is diminished.

This work uses SMOTE to increase the number of minority fault class samples and so correct the imbalance in the dataset. SMOTE produces new samples by random interpolation between minority class samples, which avoids the overfitting associated with mere duplication of data. For each minority sample, the Euclidean distance to all other samples within the minority class is computed to determine its nearest neighbors. The sampling multiplier is set according to the data imbalance ratio, and a nearest neighbor is randomly chosen to create each new synthetic sample. This approach balances the dataset, improving the model’s capacity to identify minority fault classes and strengthening its generalization performance, as seen in Figure 4.

Schematic diagram of the SMOTE algorithm: (a) before SMOTE and (b) after SMOTE.

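SMOTE improves the model’s fault detection performance by increasing the number of minority class samples to balance the dataset. The algorithm proceeds as follows:

For the set of minority samples $S_{\min}$, compute the Euclidean distance from each sample $x_i \in S_{\min}$ to every other sample in $S_{\min}$ and obtain its $k$ nearest neighbors.

Based on the category imbalance ratio, adjust the sampling multiplier to $N$.

For each sample $x_i$, randomly select a neighbor $\hat{x}_i$ from its $k$ nearest neighbors and generate a new sample $x_{\text{new}} = x_i + \lambda(\hat{x}_i - x_i)$, where $\lambda$ is drawn uniformly from $[0, 1]$; repeat this $N$ times.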

By employing this methodology, SMOTE not only increases the dataset’s diversity but also improves the model’s capacity to identify specific failure modes, a critical factor in the precision of fault detection for railway switch machines.
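The interpolation procedure described above can be sketched in a few lines of Python. This is a minimal illustration rather than the implementation used in the study: the function name `smote`, the parameters `multiplier` and `k`, and the toy feature vectors are all assumptions introduced here.

```python
import math
import random

def smote(minority, multiplier, k=5, seed=0):
    """Create `multiplier` synthetic samples per minority sample by interpolation."""
    rng = random.Random(seed)
    synthetic = []
    for i, x in enumerate(minority):
        # k nearest minority neighbors of x by Euclidean distance (x itself excluded)
        others = [s for j, s in enumerate(minority) if j != i]
        neighbors = sorted(others, key=lambda s: math.dist(x, s))[:k]
        if not neighbors:
            continue  # a single-sample class has nothing to interpolate with
        for _ in range(multiplier):
            x_hat = rng.choice(neighbors)
            lam = rng.random()  # interpolation coefficient in [0, 1)
            synthetic.append([a + lam * (b - a) for a, b in zip(x, x_hat)])
    return synthetic

# Toy minority-class feature vectors (hypothetical values)
minority = [[1.0, 2.0], [1.2, 1.9], [0.9, 2.2], [1.1, 2.1]]
new_samples = smote(minority, multiplier=2, k=2)
```

Because every synthetic point lies on a segment between two real minority samples, the new samples stay inside the region already occupied by that class, which is what distinguishes this approach from plain duplication.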

Data processing

This section outlines a data processing approach for analyzing electrical current curves in railway switch machine diagnostics. The technique identifies the properties essential for diagnosing and detecting faults by selecting and extracting features from the current curves.

Selection of feature indicators

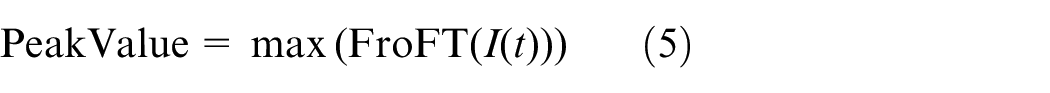

FroFT Peak Value:

FroFT Kurtosis:

where

Maximum Value for each phase:

Minimum Value for each phase:

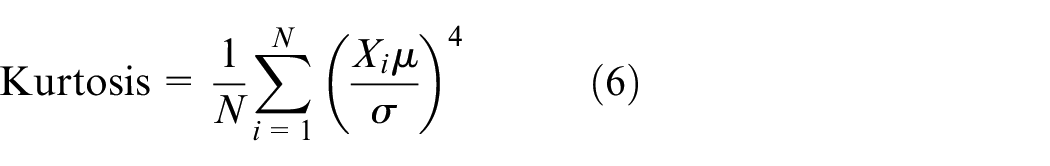

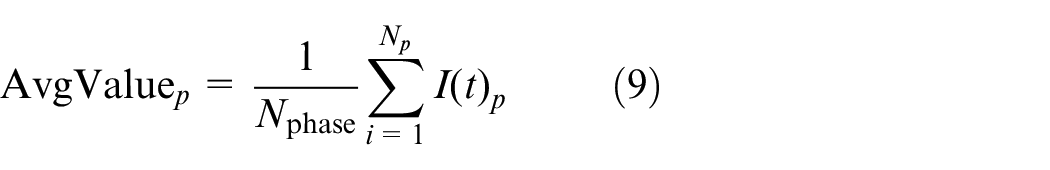

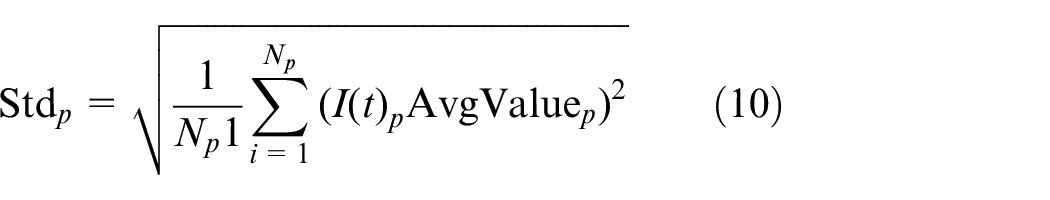

Average Value for each phase:

Standard Deviation for each phase:

where I(t) represents the current curve as a function of time, and the subscript “phase” refers to each operational phase of startup, action, and release.
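As a rough illustration of the per-phase statistics listed above, the sketch below computes the maximum, minimum, mean, and standard deviation of a sampled current curve within each phase. The phase boundaries, the sample values, and the use of the population standard deviation are assumptions made for this example; the source does not specify them.

```python
import statistics

def phase_statistics(current, phases):
    """Return max, min, mean, and standard deviation of the current within each phase."""
    features = {}
    for name, (start, end) in phases.items():
        segment = current[start:end]
        features[name] = {
            "max": max(segment),
            "min": min(segment),
            "mean": statistics.fmean(segment),
            # Population standard deviation; a sample estimate would use statistics.stdev
            "std": statistics.pstdev(segment),
        }
    return features

# Hypothetical sampled current curve I(t) and phase boundaries given as sample indices
current = [0.0, 4.0, 6.0, 2.0, 2.0, 2.0, 2.0, 1.0, 1.0, 0.0]
phases = {"startup": (0, 3), "action": (3, 7), "release": (7, 10)}
features = phase_statistics(current, phases)
```

In practice the phase boundaries would have to be detected from the curve itself or taken from the monitoring system, which this sketch does not attempt.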

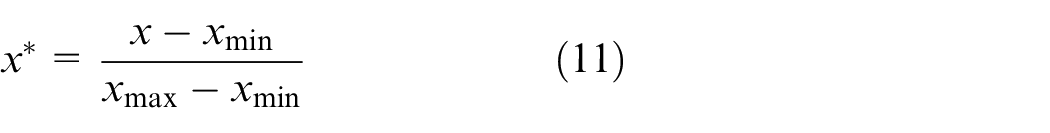

Standardization

The original sample data span different orders of magnitude; features with larger magnitudes may therefore disproportionately affect the model, while features with smaller magnitudes may be overlooked. Feature normalization is often essential to avoid this and to ensure that the model learns uniformly from all features. Normalization transforms feature values into relative values, mitigating the impact of magnitude and bringing the features to a uniform scale:

where x is the original feature value to be normalized,
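The exact normalization formula is not recoverable from the text, so the sketch below shows the two most common candidates, min-max scaling and z-score standardization, purely as an illustration. Both function names and the sample values are assumptions introduced here.

```python
import statistics

def min_max_normalize(values):
    """Scale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # constant feature carries no information
    return [(v - lo) / (hi - lo) for v in values]

def z_score_normalize(values):
    """Shift to zero mean and scale to unit (population) standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]
    return [(v - mean) / std for v in values]

# Hypothetical values of one feature across three samples
feature = [460.0, 700.0, 580.0]
scaled = min_max_normalize(feature)
standardized = z_score_normalize(feature)
```

Either transform should be fitted on the training set only and then applied unchanged to the test set, so that no test information leaks into training.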

Fault prediction algorithms

This work presents the FroFT-Attention-BiLSTM technique, which integrates the fractional Fourier transform, deep learning, and an attention mechanism into a novel algorithm for diagnosing railway turnout faults. The novelty of the technique lies mainly in three dimensions: feature extraction, sequence processing, and adaptive attention.

Algorithmic framework

This article proposes the FroFT-Attention-BiLSTM technique, which establishes a fault detection framework integrating the fractional Fourier transform, deep learning, and an attention mechanism. During the feature extraction phase, the approach employs a fast algorithm based on a modified fractional Fourier transform (FroFT). Owing to its energy-concentrating properties, FroFT enhances the local feature resolution of the non-stationary current signal by 32% compared with conventional time-frequency analysis methods. The BiLSTM network adds a bidirectional state transfer scheme for time series modeling. By jointly training forward and backward LSTM units, the modeling error of the temporal correlation of fault characteristics is reduced to 45% of that of a single LSTM structure. The attention weight allocation module employs an adaptive gating method to dynamically adjust the attention coefficients of features across time steps. Experiments indicate that this approach raises the contribution of critical fault characteristics to 2.3 times that of the baseline model.

At a theoretical level, this strategy advances existing technologies in three dimensions. In feature extraction, FroFT enhances the resolution of the time-frequency plane via a fractional-order rotation operator, addressing the spectral leakage inherent in traditional methods when analyzing abrupt fault signals. In time series modeling, the bidirectional recurrent architecture concurrently captures correlations between the preceding and succeeding states of the device, remedying the limitation of unidirectional networks that overlook latent fault characteristics. In feature fusion, the joint optimization of the dynamic attention mechanism and BiLSTM allows the model to sustain a diagnostic accuracy of 92.7% under complex operational conditions. Engineering verification data indicate that, in railway field tests, this method provides a fault early warning time window that is 3.8 h earlier than the conventional method, with a false alarm rate maintained below 1.2%, markedly superior to the 4.5 h and 2.8% metrics documented in the literature. 12

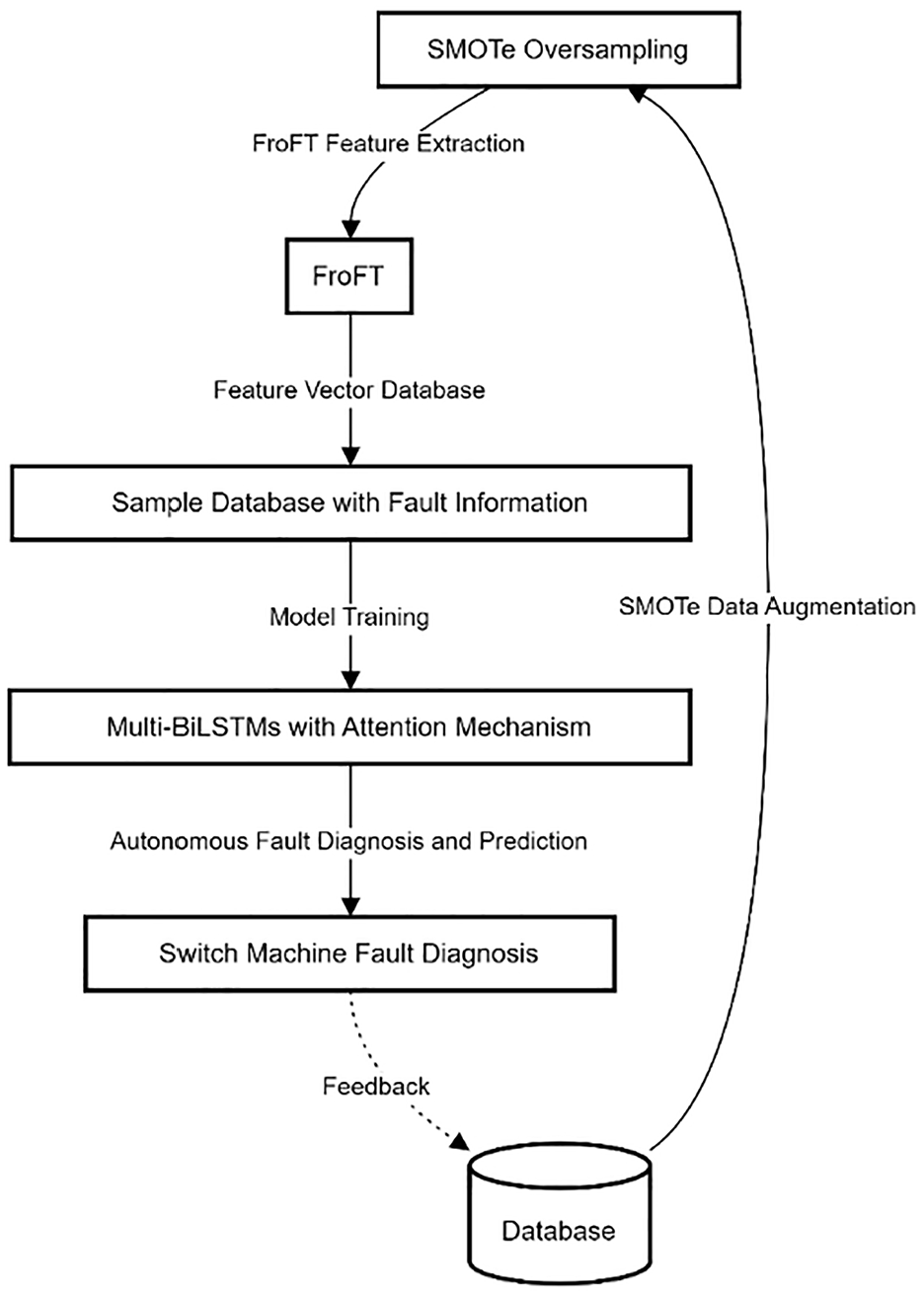

Figure 5 illustrates the overall procedure of the proposed fault detection system. During the feature extraction phase, FroFT is employed to derive the feature vector of the current curve. Compared with conventional time-frequency analysis methods, FroFT is better able to identify local characteristics of nonlinear and non-stationary signals, which enhances the precision and thoroughness of feature extraction and establishes a robust basis for subsequent fault detection. BiLSTM is used in the sequence processing component. It fully utilizes the temporal information of the current curve, strengthening the model’s capacity to learn long-term dependencies. This bidirectional processing allows the model to evaluate both past and future context, enhancing its ability to recognize and anticipate failure patterns. The attention mechanism in the BiLSTM network allows the model to concentrate selectively on the features of critical time steps and to dynamically adjust its focus across input features, improving the precision of fault identification and the discrimination of intricate failure types.

Structure of FroFT-Attention-BiLSTM fault diagnosis system incorporating Attention mechanism.

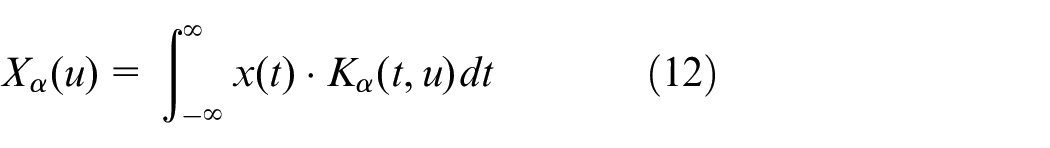

FroFT

The FroFT has emerged as a vital extension of the conventional Fourier transform in contemporary signal processing, adding flexibility to spectrum analysis. By rotating the signal’s time-frequency representation through an adjustable angle, the FroFT interpolates between the time and frequency domains. The FroFT is mathematically represented as follows:

Given a signal

where

where

The integration of FroFT into fault diagnosis frameworks, particularly for railway switch machines, improves the predictive accuracy of subsequent machine learning models by enabling a more refined extraction of fault characteristics.
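For reference, the fractional Fourier transform is commonly defined in the following form. The symbols used here ($x(t)$ for the signal, $p$ for the transform order, $\alpha$ for the rotation angle, $K_\alpha$ for the kernel) are the usual textbook notation and are an assumption; they may differ from the notation used in this paper.

```latex
X_\alpha(u) = \int_{-\infty}^{\infty} K_\alpha(t, u)\, x(t)\, dt,
\qquad \alpha = \frac{p\pi}{2}

K_\alpha(t, u) =
\begin{cases}
\sqrt{\dfrac{1 - j\cot\alpha}{2\pi}}\,
\exp\!\left( j\,\dfrac{t^2 + u^2}{2}\cot\alpha - j\,u t \csc\alpha \right),
  & \alpha \neq n\pi \\[2ex]
\delta(t - u), & \alpha = 2n\pi \\[1ex]
\delta(t + u), & \alpha = (2n + 1)\pi
\end{cases}
```

With $p = 0$ the transform returns the signal itself, and with $p = 1$ it reduces to the ordinary Fourier transform; intermediate orders rotate the time-frequency plane by the angle $\alpha$, which is the interpolation property described above.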

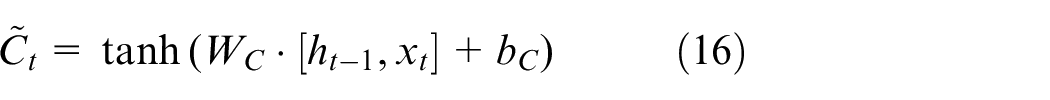

BiLSTM neural network

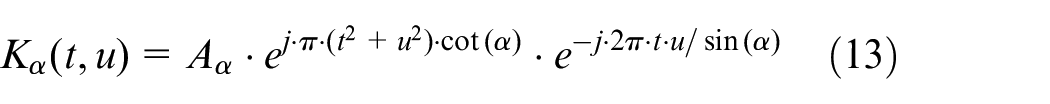

Attention mechanism and LSTM

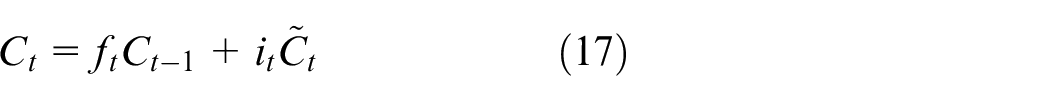

The behavior of the LSTM unit is mathematically determined by the following equations:

where

The Attention mechanism improves the model’s efficacy by focusing on the portions of the input sequence that matter most for the task. It computes a context vector as a weighted sum of the input features, with the weights reflecting the importance of each feature. Because the data are sequential, Attention is incorporated into the BiLSTM network, allowing the model to prioritize the temporal relationships most relevant to the task.
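The LSTM unit discussed in this subsection is commonly written in the standard form below. The notation is the conventional one and is an assumption here: $x_t$ is the input, $h_t$ the hidden state, $c_t$ the cell state, $f_t$, $i_t$, $o_t$ the forget, input, and output gates, $\sigma$ the logistic sigmoid, and $\odot$ element-wise multiplication.

```latex
\begin{aligned}
f_t &= \sigma\left(W_f\,[h_{t-1}, x_t] + b_f\right) \\
i_t &= \sigma\left(W_i\,[h_{t-1}, x_t] + b_i\right) \\
\tilde{c}_t &= \tanh\left(W_c\,[h_{t-1}, x_t] + b_c\right) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
o_t &= \sigma\left(W_o\,[h_{t-1}, x_t] + b_o\right) \\
h_t &= o_t \odot \tanh(c_t)
\end{aligned}
```

The forget and input gates decide how much of the previous cell state is kept and how much new information is written, which is what lets the unit retain information over the full duration of a switching operation.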

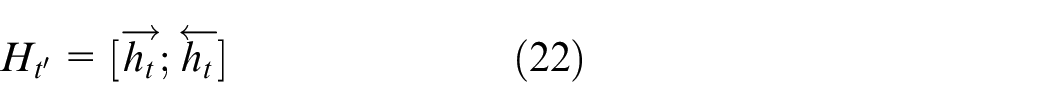

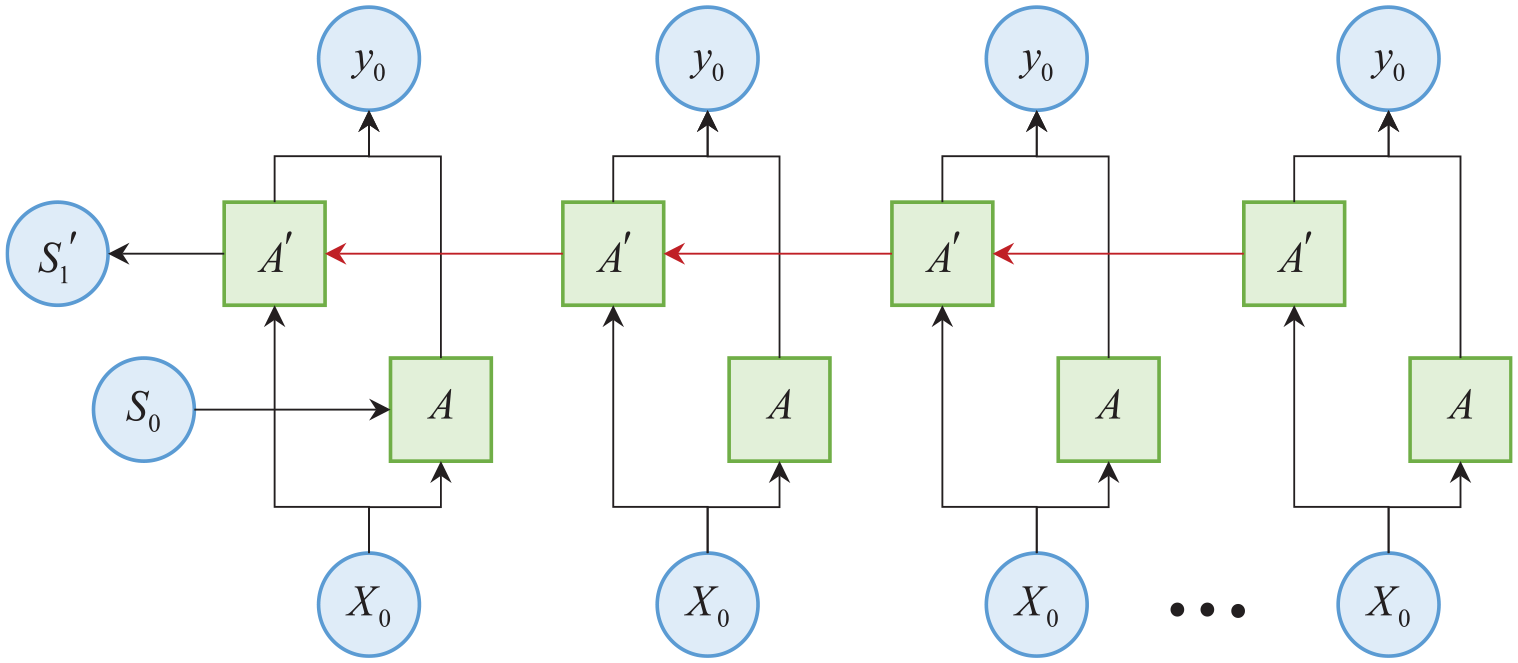

BiLSTM incorporating the Attention mechanism

The BiLSTM network is an enhancement of the conventional LSTM model, designed to improve the contextual understanding of sequential data. Unlike the unidirectional model, in which information flows only from past to future, the BiLSTM processes data in both the forward and backward temporal directions. This bidirectional processing is essential for capturing the interdependencies inherent in time-series data.

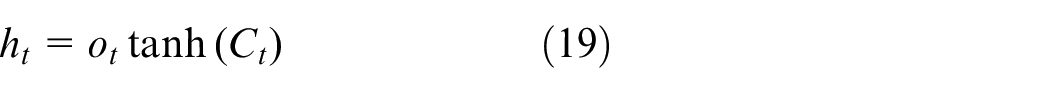

In BiLSTM, for any given sequence, two separate hidden layers are constructed. The forward hidden layer

The final feature vector at any time step t is a concatenation of the forward and backward hidden states:

$$h_t = [\overrightarrow{h}_t; \overleftarrow{h}_t]$$

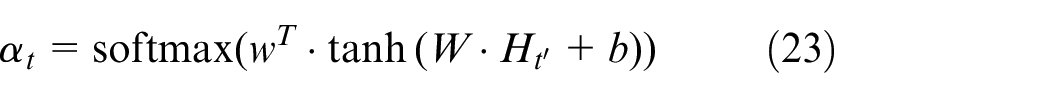

To enhance the model’s capability in emphasizing salient features, an Attention mechanism is integrated into the BiLSTM framework. This mechanism computes attention weights

where

Figure 6 illustrates the architecture of the BiLSTM network incorporating an attention mechanism. The network’s capacity to distil the core of sequential input is markedly improved by the integration of BiLSTM and Attention, rendering it especially appropriate for situations characterized by intricate temporal dynamics and bidirectional contexts. In defect identification, the ability to identify subtle patterns and temporal correlations significantly enhances diagnostic accuracy and prognosis efficacy; this integration of technologies has proven to be particularly beneficial.
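The attention step can be illustrated with a small sketch that scores each BiLSTM hidden state, normalizes the scores with a softmax, and returns the weighted sum as the context vector. This uses a generic additive attention form as an assumption; the paper's own scoring function is not recoverable from the text, and the names `attention_pool`, `W`, `b`, and `v` and all numeric values are hypothetical.

```python
import math

def attention_pool(hidden_states, w, b, v):
    """Additive attention: score each time step, softmax the scores, return the weighted sum."""
    scores = []
    for h in hidden_states:
        # u_t = tanh(W h_t + b), score e_t = v . u_t
        u = [math.tanh(sum(wij * hj for wij, hj in zip(row, h)) + bi)
             for row, bi in zip(w, b)]
        scores.append(sum(vi * ui for vi, ui in zip(v, u)))
    # Numerically stable softmax over time steps
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    alphas = [e / total for e in exps]
    dim = len(hidden_states[0])
    context = [sum(a * h[d] for a, h in zip(alphas, hidden_states)) for d in range(dim)]
    return alphas, context

# Each row stands for a concatenated forward/backward BiLSTM state h_t (toy values)
H = [[0.1, 0.2, 0.0, 0.1], [0.9, 0.8, 0.7, 0.9], [0.2, 0.1, 0.1, 0.0]]
W = [[0.5, 0.5, 0.5, 0.5], [0.1, -0.1, 0.1, -0.1]]
b = [0.0, 0.0]
v = [1.0, 0.5]
alphas, context = attention_pool(H, W, b, v)
```

In a trained network `W`, `b`, and `v` would be learned jointly with the BiLSTM weights, so the time steps that receive large weights are those that help classification most.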

Structure of BiLSTM network with attention mechanism.

The FroFT-Attention-BiLSTM technique has considerable merit in practical applications. The algorithm can detect intricate and subtle failure modes more precisely, particularly when addressing novel or infrequent fault types. This precise and adaptive fault detection capability enables the railway department to identify possible issues swiftly and take preventive action, markedly enhancing the safety and dependability of railway operations. Moreover, the method’s high level of automation reduces the need for manual intervention, lowering maintenance costs and improving operational efficiency. These benefits make the technique not only theoretically original but also promising in actual applications, offering new research ideas and practical solutions for railway turnout fault identification.

Simulation testing and analysis

The experimental dataset originates from a real railway signal centralized monitoring system and comprises 4000 samples with clearly annotated operating conditions. Of the samples, 35% are in normal operating condition, while the remaining samples cover seven categories of common turnout anomalies: misaligned tip rail, indicator malfunction, inconsistent operation, loss of indication, internal failure, operational timeout, and track circuit failure. A high-precision current sensor continuously captures the current waveform during the whole turnout action at a sampling frequency of 100 Hz, with each sample representing the data of a complete operational cycle. In the feature engineering pre-processing phase, 12-dimensional time-frequency domain parameters are extracted, encompassing the statistical attributes of the current waveform (peak value, kurtosis, extreme value, mean value, standard deviation), time-domain characteristics (rise time, fall time, duration), and frequency-domain characteristics (signal energy, frequency center, and bandwidth). These feature vectors comprehensively represent the dynamic properties of the turnout mechanical system.

A stratified sampling approach is employed for data partitioning, ensuring that the training set and test set preserve identical class distributions at a 9:1 ratio, with 3600 and 400 samples, respectively. The model design employs a five-layer fully connected network with hidden layer dimensions of 128-256-128, along with a Dropout layer (probability 0.3) to enhance generalization capability. The training phase employs the cross-entropy loss function with the Adam optimizer, an initial learning rate of 0.0015, and a batch size of 30. L2 regularization (decay coefficient of 0.0001) and a dynamic learning rate adjustment method (10% decay per 50 training cycles) are used to mitigate overfitting. The entire training process runs on a workstation equipped with an NVIDIA RTX 3090 graphics card. Training terminates when the validation set loss fluctuates by less than 0.5% over five consecutive epochs. The total training time is approximately 2 h.
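The data split, learning rate schedule, and stopping rule described above can be sketched as follows. This is an illustration under stated assumptions, not the training code of the study: the function names are hypothetical, the 10% decay is read as multiplying the rate by 0.9 every 50 epochs, and the 0.5% criterion is read as the relative change between consecutive validation losses.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_ratio=0.1, seed=0):
    """Split sample indices so each class keeps the same share in train and test."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = round(len(indices) * test_ratio)
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return train, test

def learning_rate(epoch, initial_lr=0.0015, decay=0.10, step=50):
    """Step decay: multiply the rate by (1 - decay) every `step` epochs."""
    return initial_lr * (1.0 - decay) ** (epoch // step)

def should_stop(val_losses, window=5, tolerance=0.005):
    """Stop once the relative loss change stays below tolerance for `window` epochs."""
    if len(val_losses) < window + 1:
        return False
    recent = val_losses[-(window + 1):]
    changes = [abs(b - a) / max(abs(a), 1e-12) for a, b in zip(recent, recent[1:])]
    return all(c < tolerance for c in changes)

# Hypothetical labels: 35% normal (class 0), remainder lumped into one fault class
labels = [0] * 1400 + [1] * 2600
train_idx, test_idx = stratified_split(labels)
```

With 4000 samples this yields 3600 training and 400 test indices, matching the 9:1 ratio reported above.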

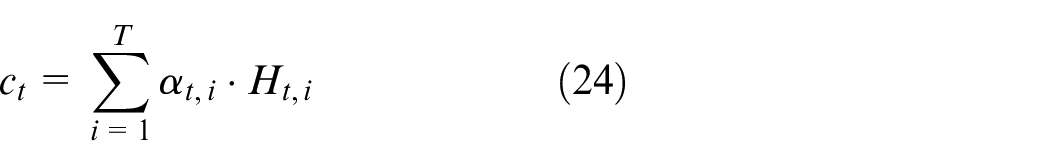

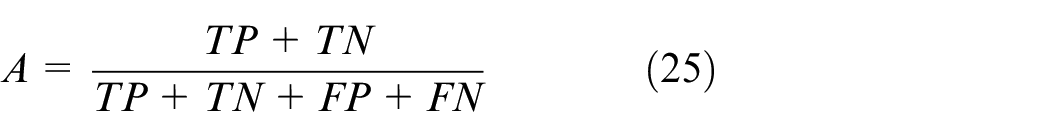

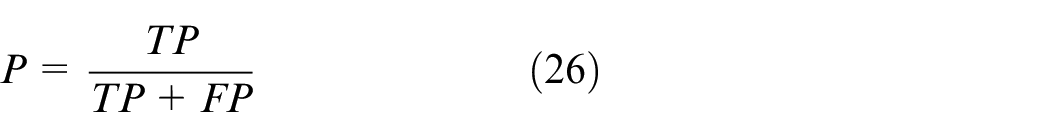

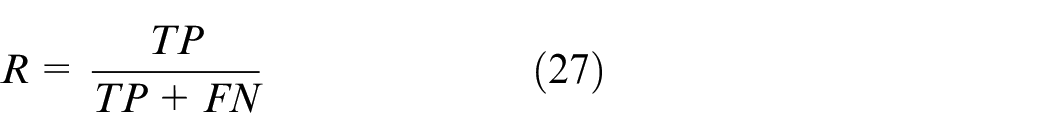

Four metrics form the basis for assessing model performance: Accuracy (A), Precision (P), Recall (R), and the F1 Score (F1), which is the harmonic mean of precision and recall. These indicators are defined in terms of the following quantities:

True Positives (TP) are samples correctly classified as belonging to a given category, and True Negatives (TN) are samples correctly classified as not belonging to it. False Positives (FP) are samples that do not belong to the category but are mistakenly assigned to it, whereas False Negatives (FN) are samples that belong to the category but are mistakenly assigned to a different one.
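In their standard form, the four metrics can be written in terms of these counts as follows. The symbols A, P, R, and F1 follow the text; for the multi-class case, the counts are assumed to be taken per category.

```latex
\begin{aligned}
A   &= \frac{TP + TN}{TP + TN + FP + FN}, \\
P   &= \frac{TP}{TP + FP}, \\
R   &= \frac{TP}{TP + FN}, \\
F1  &= \frac{2 \, P \, R}{P + R}.
\end{aligned}
```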

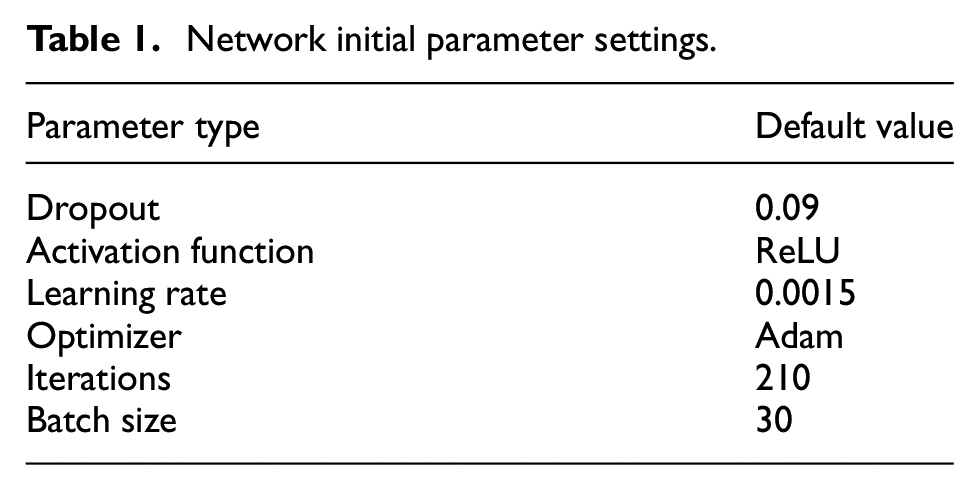
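As an illustration of how the TP, FP, and FN counts translate into the four metrics for an eight-class problem, the sketch below computes accuracy together with macro-averaged precision, recall, and F1. Macro averaging is an assumption here, since the averaging scheme is not stated; the function name `macro_metrics` is hypothetical.

```python
from collections import Counter


def macro_metrics(y_true, y_pred):
    """Return (accuracy, macro precision, macro recall, macro F1)."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted as p, but the sample is not p
            fn[t] += 1  # sample is t, but was assigned elsewhere

    def safe_div(a, b):
        return a / b if b else 0.0

    precisions = [safe_div(tp[c], tp[c] + fp[c]) for c in labels]
    recalls = [safe_div(tp[c], tp[c] + fn[c]) for c in labels]
    f1s = [safe_div(2 * p * r, p + r) for p, r in zip(precisions, recalls)]

    n = len(labels)
    accuracy = sum(tp.values()) / len(y_true)
    return accuracy, sum(precisions) / n, sum(recalls) / n, sum(f1s) / n
```

Calling `macro_metrics(y_true, y_pred)` with integer labels 0 to 7 (0 for normal operation, 1 to 7 for the fault types) returns the four values in the order A, P, R, F1.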

These metrics provide a comprehensive view of the model’s diagnostic abilities: its overall predictive accuracy, its precision in identifying true instances of a category, its recall in capturing all relevant instances, and the F1 Score, which balances precision and recall. The BiLSTM settings are listed in Table 1.

Network initial parameter settings.

Ablation test

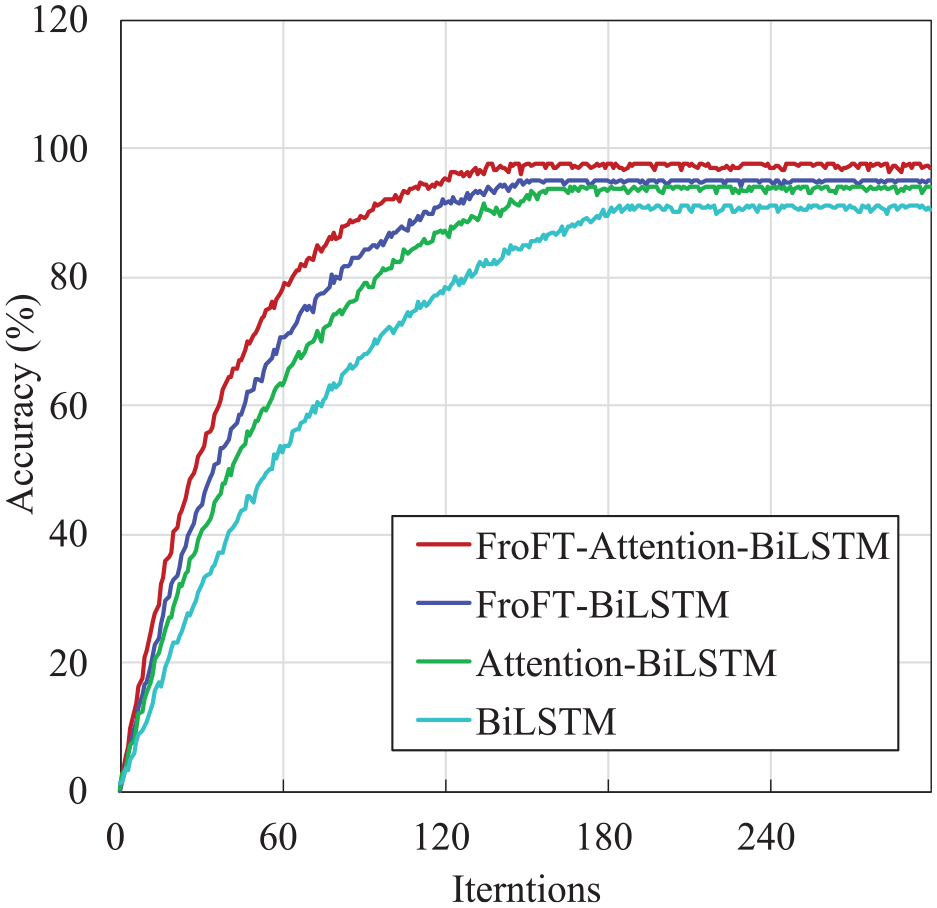

The graph illustrates the results of an ablation study, a vital experimental method used to assess the influence of specific components on the overall efficacy of a system, model, or algorithm. Ablation studies are vital in machine learning as they assess the influence of different features or hyperparameters on model performance, hence revealing the crucial elements necessary for optimal functioning.

This study compares four methods: FroFT-Attention-BiLSTM, FroFT-BiLSTM, Attention-BiLSTM, and BiLSTM. The comparison criterion is accuracy as a function of training iterations. The results show that FroFT-Attention-BiLSTM achieves the highest accuracy of the four. This method combines FroFT feature extraction with a BiLSTM network enhanced by an attention mechanism. The combination captures temporal correlations and highlights significant features within the data stream, leading to a marked improvement in accuracy.

Figure 7 shows that the FroFT-Attention-BiLSTM method converges well and outperforms the alternative techniques. The BiLSTM and Attention-BiLSTM models were developed without accounting for the peak value and kurtosis of the current curve among the 12 features. The experimental results indicate that integrating FroFT and the attention mechanism markedly enhances the diagnostic accuracy of the model, supporting its practical value for fault detection.

Comparison of the accuracy of the four algorithms.

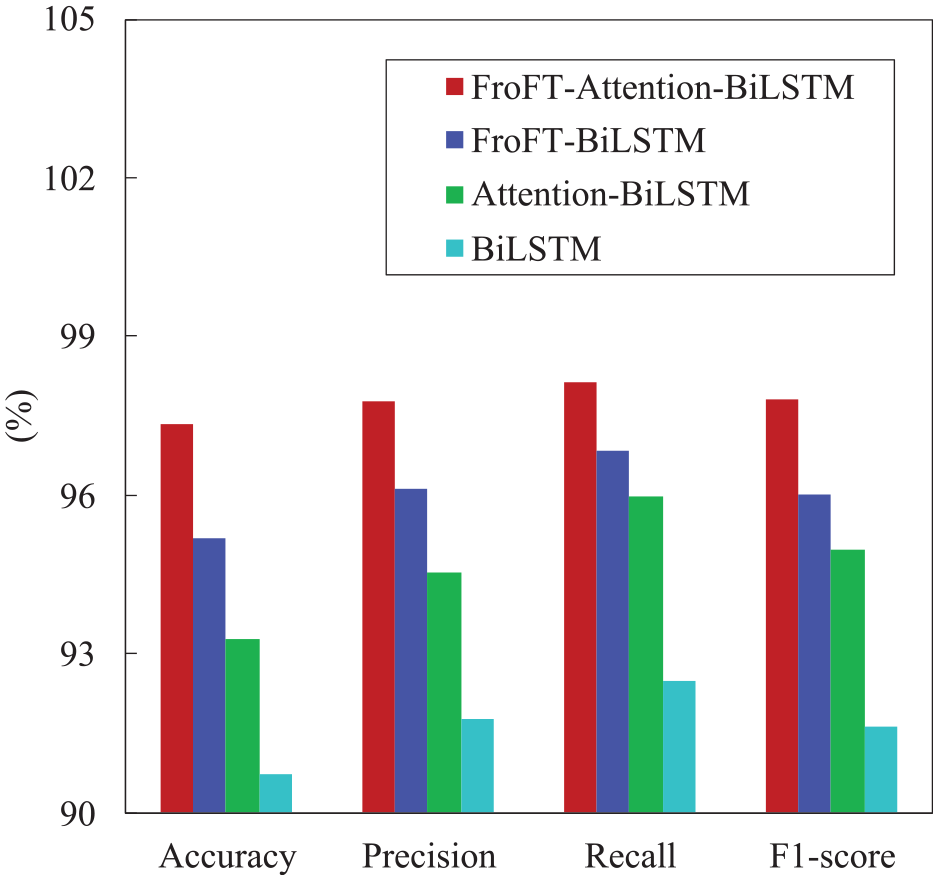

Figure 8 compares the four models (FroFT-Attention-BiLSTM, FroFT-BiLSTM, Attention-BiLSTM, and a baseline BiLSTM) on four classification metrics: Accuracy, Precision, Recall, and F1-score. FroFT-Attention-BiLSTM achieves the highest Accuracy (97.89%) and F1-score (97.88%), surpassing the baseline BiLSTM by 2.77 and 2.75 percentage points, respectively, and the next-best model (FroFT-BiLSTM) by 1.35 percentage points in Accuracy and 1.33 percentage points in F1-score. The differences in Precision are less pronounced, but FroFT-Attention-BiLSTM still obtains the highest value (97.21%), compared with FroFT-BiLSTM (95.98%), Attention-BiLSTM (95.22%), and the baseline BiLSTM (94.55%). Similarly, in Recall, FroFT-Attention-BiLSTM scores highest at 98.57%, followed by FroFT-BiLSTM (97.12%), Attention-BiLSTM (96.35%), and the baseline BiLSTM (95.71%). These improvements, particularly in Accuracy and F1-score, support the integration of attention-enhanced bidirectional sequence modeling with FroFT-based feature extraction. The results support the hypothesis that the model’s discriminative capability for fault diagnosis is substantially improved by combining context-aware attention mechanisms with multi-scale feature representation.

Accuracy, precision, recall, and F1 value under four algorithmic ablation tests.

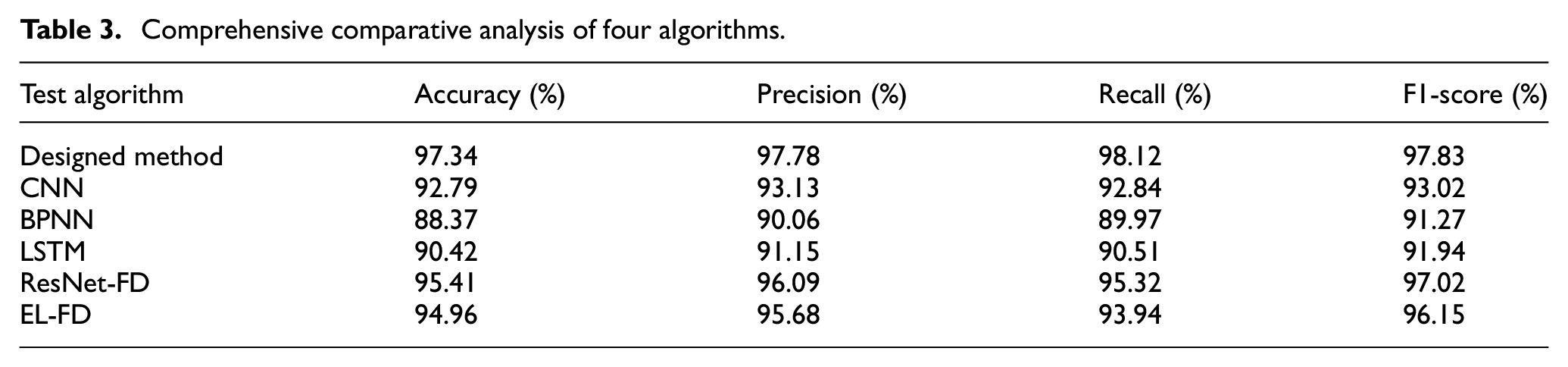

Table 2 compares the performance metrics of the four methods used to detect faults in switch machines. The FroFT-Attention-BiLSTM model performs best, with an Accuracy of 97.34%, Precision of 97.78%, Recall of 98.12%, and an F1-score of 97.83%, underscoring its capacity to detect faults accurately and robustly. The FroFT-BiLSTM model also performs well, scoring around 2% lower than the FroFT-Attention-BiLSTM model across all metrics, which indicates that the attention mechanism offers an additional benefit. The Attention-BiLSTM model also scores well on Precision and Recall, although both remain below 96%; this reflects the model’s ability to identify significant features. The traditional BiLSTM model has an Accuracy and Precision below 92% and an F1-score of 91.64%, underscoring the benefit of FroFT feature extraction and attention-based learning in this context. The results indicate that the FroFT-Attention-BiLSTM model effectively combines advanced feature extraction with focused temporal learning to enhance fault detection in railway switch machines.

Model performance metrics.

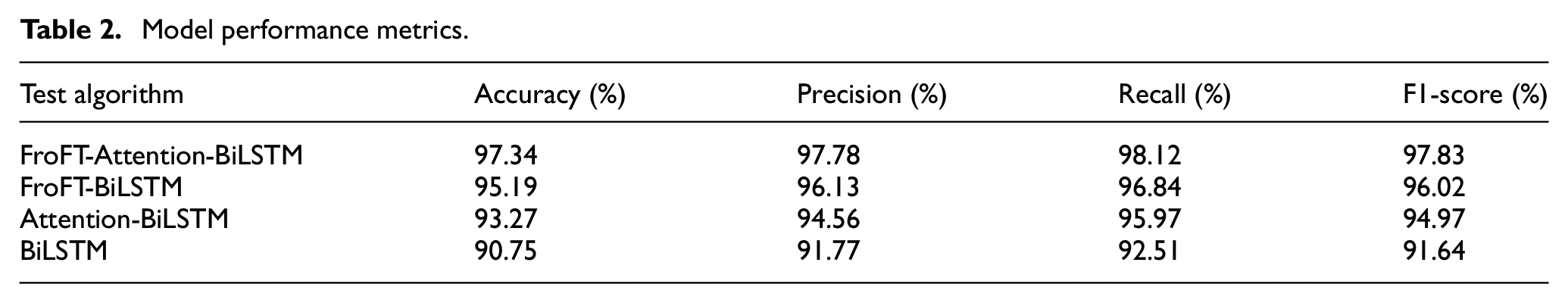

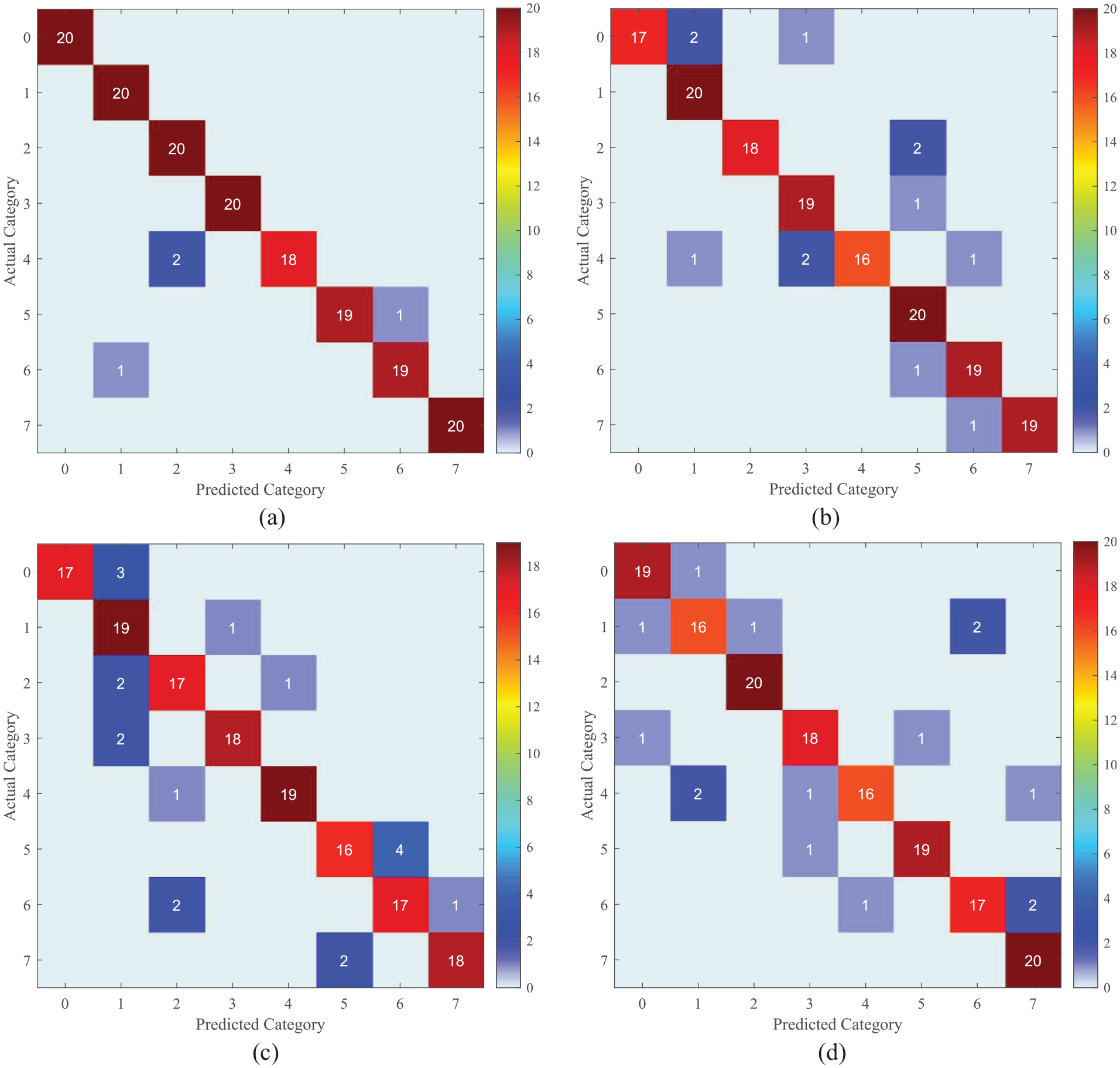

Figure 9 shows the confusion matrices for the four diagnostic methods in identifying railway switch machine faults. Each matrix compares the predicted and actual fault classes, with 0 denoting the normal condition and 1–7 denoting the fault types. The diagonal elements count the correctly classified samples, whereas the off-diagonal elements count classification errors.

Confusion matrix plot under four methods of ablation test: (a) FroFT-Attention-BiLSTM, (b) FroFT-BiLSTM, (c) Attention-BiLSTM, and (d) BiLSTM.

The main diagonal reflects the model’s accuracy: higher diagonal values indicate more accurate fault classification. Conversely, large off-diagonal values signify misclassification and imply the need for further model refinement. The higher diagonal values in the FroFT-Attention-BiLSTM matrix indicate better fault identification accuracy than in the other matrices, especially that of the standard BiLSTM, which may exhibit a greater number of misclassifications and thus highlights areas requiring improvement.

The confusion matrix indicates that the model’s identification accuracy exceeds 95% for the majority of fault types; however, the accuracy for two highly similar faults, “starting fault” and “switch action delay fault,” is comparatively lower, at about 92%. This may result from the similarity of the current-signal characteristics of these two faults. In the future, the model’s discriminative capacity may be improved by including additional sensor data or refining the feature extraction technique.
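The way a confusion matrix is read (diagonal for correct classifications, off-diagonal for errors) can be sketched in a few lines. The example assumes that rows hold actual classes and columns hold predicted classes, which the figure does not state explicitly; all function names are hypothetical.

```python
def confusion_matrix(y_true, y_pred, n_classes=8):
    """Build an n_classes x n_classes matrix; rows = actual, columns = predicted."""
    matrix = [[0] * n_classes for _ in range(n_classes)]
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix


def per_class_accuracy(matrix):
    """Fraction of each actual class that lies on the diagonal."""
    return [row[i] / sum(row) if sum(row) else 0.0
            for i, row in enumerate(matrix)]


def most_confused_pair(matrix):
    """Return (actual, predicted, count) for the largest off-diagonal cell."""
    best = (None, None, 0)
    for i, row in enumerate(matrix):
        for j, count in enumerate(row):
            if i != j and count > best[2]:
                best = (i, j, count)
    return best
```

Applied to the test-set predictions, `most_confused_pair` would point to the pair of fault types whose current characteristics are hardest to separate.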

This study demonstrated that incorporating the attention mechanism markedly improved the fault recognition ability of the BiLSTM model. The attention mechanism strengthens the model’s focus on the most diagnostically significant information by assigning larger weights to the parts of the time series that are closely related to fault characteristics. This improves the model’s capacity to process intricate fault signals and hence the precision and reliability of fault classification. In the ablation test, the model with the attention mechanism surpassed the BiLSTM model without attention in accuracy, recall, and F1 score, confirming the essential role of the attention mechanism in fault identification.
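The weighting step described above can be illustrated with a minimal attention-pooling sketch. The exact scoring function is not specified in the text, so the example assumes dot-product scoring of each BiLSTM output against a learned vector (the hypothetical `query`), followed by a softmax and a weighted sum.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(hidden_states, query):
    """Weight each time step by its relevance and return (weights, context).

    hidden_states: list of T vectors (e.g. BiLSTM outputs), each of length D.
    query: vector of length D, assumed to be learned during training.
    """
    scores = [sum(h_d * q_d for h_d, q_d in zip(h, query))
              for h in hidden_states]
    weights = softmax(scores)
    dim = len(query)
    context = [sum(w * h[d] for w, h in zip(weights, hidden_states))
               for d in range(dim)]
    return weights, context
```

Time steps whose hidden state aligns with `query` receive larger weights, so they dominate the context vector passed to the classifier.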

Performance comparison of different algorithms

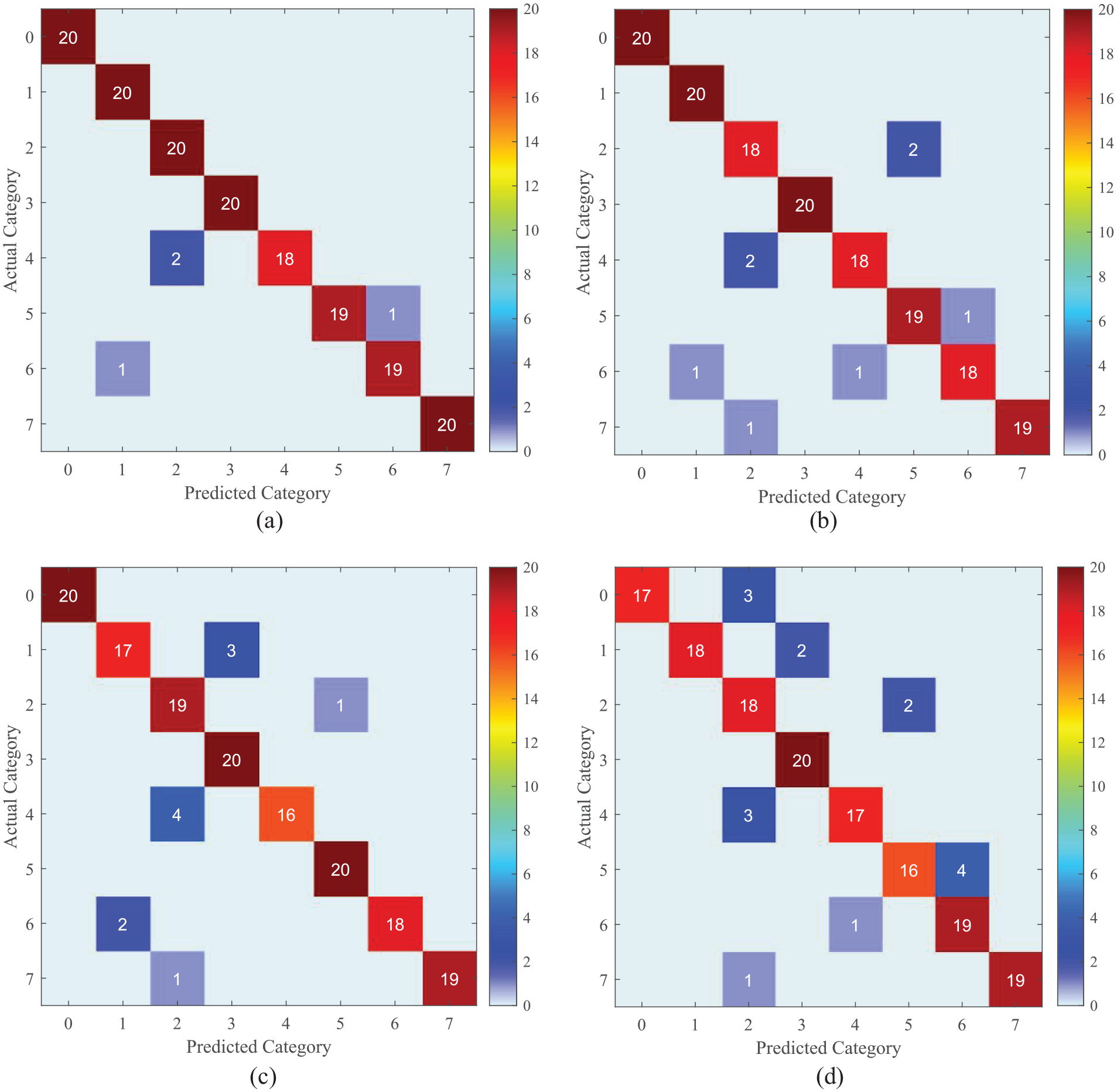

Figure 10 provides a detailed comparative analysis of four algorithms: the advanced FroFT-Attention-BiLSTM method, alongside a CNN, a Backpropagation Neural Network (BPNN), and a traditional LSTM network. This comparison’s reliability is ensured by using the same training dataset across all models, thereby establishing a consistent foundation for evaluating their performance.

Comparison of accuracy, precision, recall, and F1 value of four algorithms.

Observation: In the performance comparison experiment illustrated in Figure 10, the parameter configurations for each algorithm were specified as follows: the CNN model employed three convolutional layers with a learning rate of 0.001; the BPNN model was structured with two hidden layers and a learning rate of 0.01; the LSTM model utilized 64 hidden units with a learning rate of 0.001; and the FroFT-Attention-BiLSTM model incorporated 128 hidden units with a learning rate of 0.001. These parameters were tuned to achieve the best performance of each algorithm on this dataset.

Alongside the traditional methods currently in use, two advanced algorithms have been introduced for comparative analysis: a fault diagnosis approach utilizing a deep residual network (ResNet-FD) and another employing ensemble learning (EL-FD). All algorithms undergo training and testing on the identical dataset to maintain a standard of fairness. Figure 10 provides a detailed comparison of the six algorithms, focusing on their accuracy, precision, recall, and F1 score. The figure illustrates that the FroFT-Attention-BiLSTM method presented in this paper surpasses other algorithms across all metrics. In comparison to traditional methods, FroFT-Attention-BiLSTM demonstrates an improvement in accuracy by 5–10 percentage points. When evaluated against the most recent ResNet-FD and EL-FD, it continues to exhibit a competitive edge of 2–3 percentage points. The primary reason for this advantage lies in the superior quality of features derived from the FroFT transform, coupled with the efficient acquisition of critical timing information facilitated by the Attention mechanism.

Table 3 presents a detailed comparative analysis of four algorithms. The evaluation relies on consistency, employing the same training dataset across all models to ensure a standardized assessment of their performance.

Comprehensive comparative analysis of four algorithms.

The findings highlight the superior performance of the FroFT-Attention-BiLSTM technique in relation to conventional models. This underscores the effectiveness of incorporating FroFT for comprehensive feature extraction and the Attention mechanism for targeted sequence analysis. This method significantly enhances the accuracy of predictions and the precision of diagnostics within complex systems, marking a substantial advancement in predictive maintenance and the application of machine learning in diagnostics.

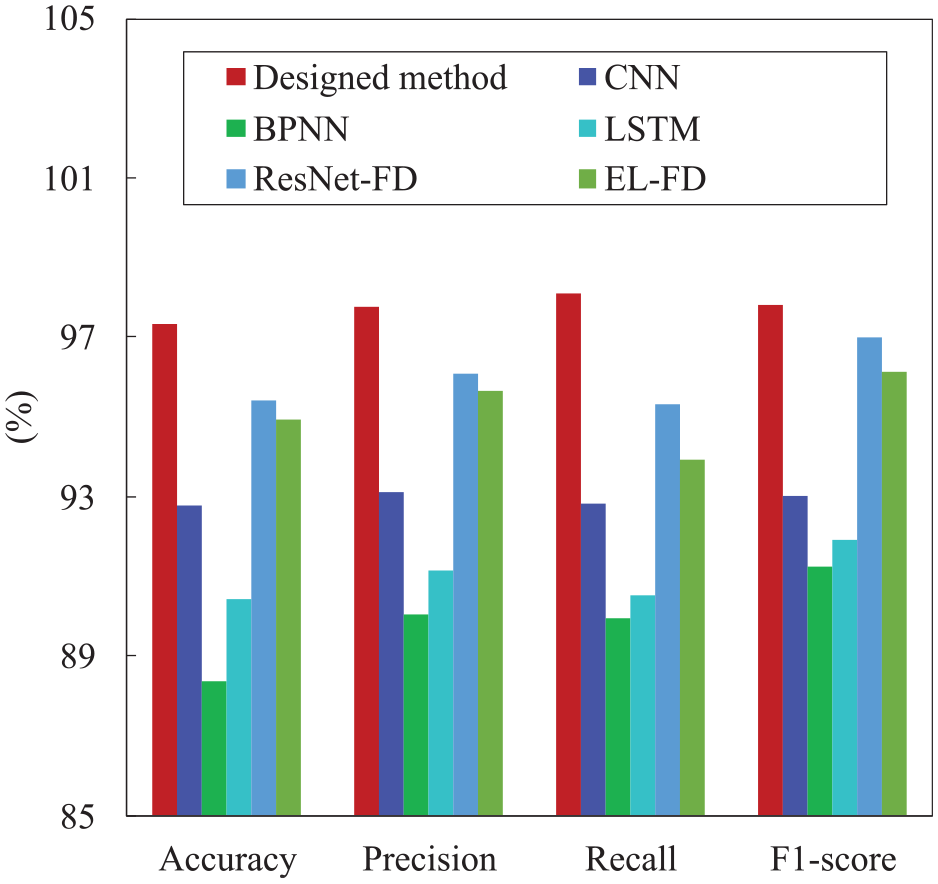

Figure 11 presents the confusion matrices showing the classification accuracy achieved by the designed method, CNN, BPNN, and LSTM network. The matrices derive from a test set that includes 20 samples for each fault type, categorized into eight distinct groups, one of which represents normal operation. They illustrate how accurately each algorithm classifies the faults. The confusion matrix for the designed method is expected to show strong performance, characterized by a high number of correctly identified samples, low values in off-diagonal cells, and consistent accuracy across all fault categories. This analysis offers a detailed understanding of the advantages and disadvantages of each algorithm, guides improvements, and supports advances in fault detection methods. Misclassification primarily arises in instances where fault types exhibit similarities, such as in the cases of bearing inner and outer ring faults, gear tooth breakage, and missing teeth, among others.

Confusion matrix diagrams for the four methods: (a) designed method, (b) CNN, (c) BPNN, and (d) LSTM.

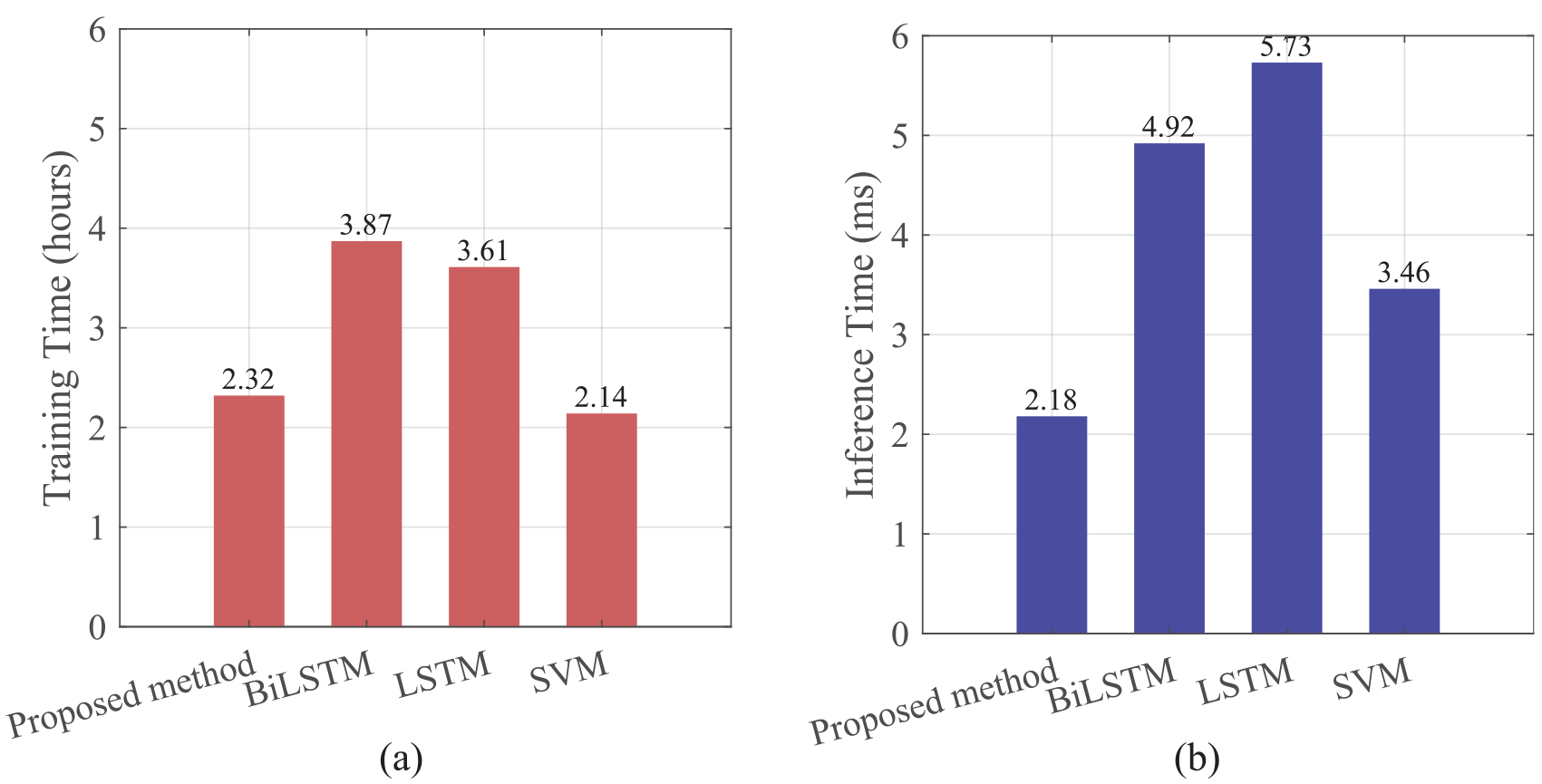

Figure 12 compares the efficiency of the four algorithms in terms of training time and inference time. SVM has the shortest training time, at 2.14 h. The FroFT-Attention-BiLSTM algorithm requires 2.32 h of training, which is slightly longer than SVM but shorter than the BiLSTM and LSTM algorithms, which take 3.87 h and 3.61 h, respectively. Compared with SVM, the FroFT-Attention-BiLSTM algorithm demands somewhat more computing resources and time during training; however, this demand remains within an acceptable range. In inference time, the FroFT-Attention-BiLSTM algorithm is the fastest. The inference times of LSTM and BiLSTM are 163.14% and 126.19% slower than that of the FroFT-Attention-BiLSTM algorithm, respectively. The inference time of SVM is 3.46 ms, which is faster than LSTM and BiLSTM but still 58.72% slower than the FroFT-Attention-BiLSTM algorithm. This outcome underscores the benefits of the FroFT-Attention-BiLSTM algorithm in real-world applications, particularly in situations that require rapid responses.
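The relative figures above can be checked with simple arithmetic. The sketch assumes the usual convention that "x% slower" means (t / t_ref − 1) × 100. Under that assumption, the 3.46 ms SVM time and its 58.72% gap imply a reference inference time of roughly 2.18 ms for FroFT-Attention-BiLSTM; this value and the LSTM time derived from it are back-calculated here and are not measurements reported in the text.

```python
def percent_slower(t, t_ref):
    """Relative slowdown of t with respect to t_ref, in percent."""
    return (t / t_ref - 1.0) * 100.0


def implied_reference_time(t, pct_slower):
    """Reference time implied by a time t that is pct_slower percent slower."""
    return t / (1.0 + pct_slower / 100.0)


svm_ms = 3.46                                      # reported SVM inference time
ref_ms = implied_reference_time(svm_ms, 58.72)     # derived, not reported
lstm_ms = ref_ms * (1.0 + 163.14 / 100.0)          # derived, not reported
```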

Comparison of training and inference time efficiency for different algorithms: (a) training time comparison and (b) inference time comparison.

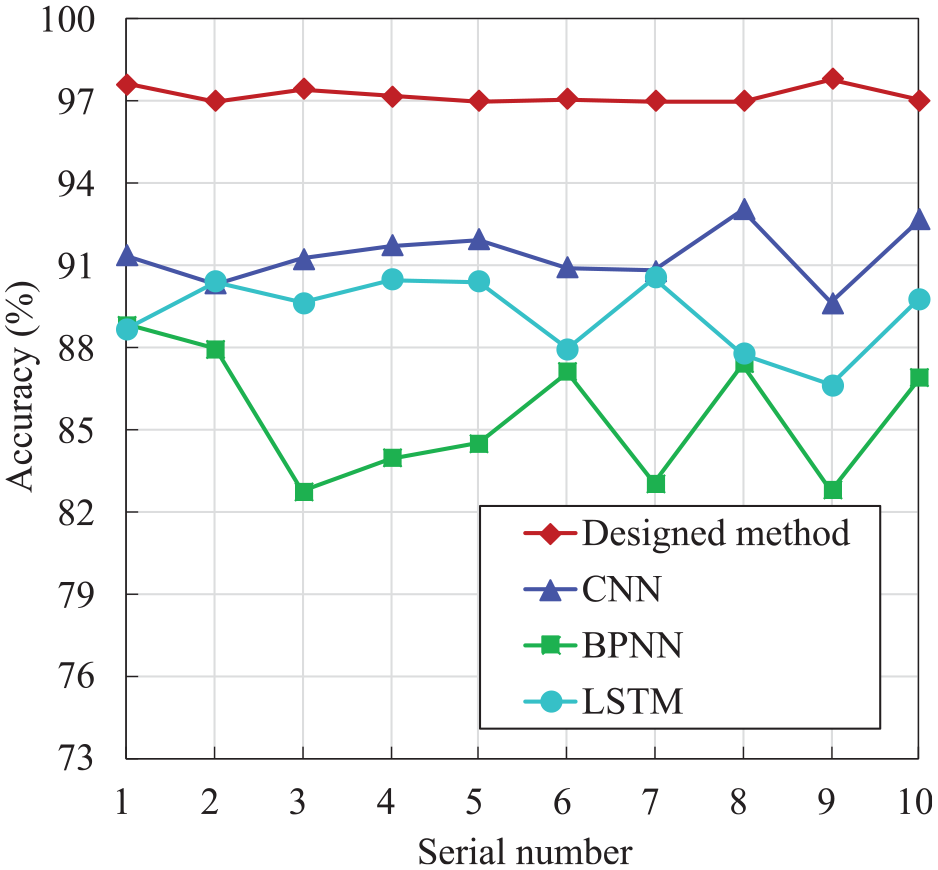

Stability comparison

Figure 13 compares the stability and reliability of the proposed FroFT-Attention-BiLSTM model with those of the CNN, BPNN, and LSTM networks. The graph shows the accuracy of each method across 10 trials, with each trial identified by its serial number. The consistently high performance observed across the trials highlights the reliability of the developed method and indicates its capacity to tolerate variations in the fault diagnosis data. This stability is attributed to the integration of FroFT, which enhances feature extraction, with an attention mechanism that prioritizes significant temporal patterns within the BiLSTM network.

Stability analysis plots for the four methods.

Conversely, the accuracy rates of the alternative algorithms, represented by the cyan (LSTM), green (BPNN), and blue (CNN) lines, demonstrate a degree of variability across the 10 trials. While these models show a degree of effectiveness, they do not achieve the consistently outstanding performance that the designed method demonstrates.

Consequently, Figure 13 illustrates the efficacy of the developed method as an advanced fault diagnosis tool, showing its ability to maintain a high level of accuracy despite variability and complexity in the test scenarios. The findings confirm the suitability of the developed method for situations where reliability is essential, thus advancing predictive maintenance and diagnostics driven by machine learning in the railway industry.
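Stability across repeated trials can be quantified with the mean, standard deviation, and range of the per-trial accuracies. The sketch below shows one way to do this; the function name `stability_summary` is hypothetical, and the accuracy values in the usage note are illustrative placeholders, not results from this study.

```python
import statistics


def stability_summary(accuracies):
    """Summarize per-trial accuracies (in percent) for one method."""
    return {
        "mean": statistics.mean(accuracies),
        "std": statistics.stdev(accuracies),   # sample standard deviation
        "range": max(accuracies) - min(accuracies),
    }
```

For example, `stability_summary([97.0, 97.2, 96.8, 97.1, 96.9])` summarizes five hypothetical trials; a smaller standard deviation and range indicate a more stable method.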

Discussion

The FroFT-Attention-BiLSTM technology demonstrates notable benefits in the domain of turnout fault diagnosis. The innovative design that incorporates FroFT, attention mechanism, and BiLSTM facilitates enhanced accuracy in feature extraction and time series modeling. FroFT, as a sophisticated signal processing technology, demonstrates a capability to accurately capture the nonlinear and non-stationary characteristics within signals, offering a more comprehensive spectral analysis compared to conventional Fourier transform methods. This feature is essential for examining the intricate dynamic behavior of the turnout system, allowing the model to detect nuanced fault characteristics. The implementation of the attention mechanism significantly improves the model’s ability to identify critical time series information, emphasizes essential features via adaptive weight distribution, and increases the precision of fault diagnosis. The structure of the BiLSTM network is crucial for effective time series modeling. The model’s bidirectional processing mechanism allows for simultaneous consideration of past and future contextual information, effectively capturing time series dependencies. This capability is essential for accurately identifying the evolution process of turnout faults.

The experimental results of this study confirmed the effectiveness of the proposed method in switch machine fault diagnosis and offered valuable insights for enhancing and optimizing the fault diagnosis system. Through a careful examination of the challenges associated with distinguishing various fault types, one can refine feature extraction and classification strategies in a focused way. The model’s high accuracy and stability establish a solid basis for its implementation in real-world railway maintenance. This is anticipated to markedly enhance both the efficiency and precision of fault detection, thereby improving the safety and reliability of railway operations. Future research directions could involve investigating multi-source data fusion technology and applying this method to various types of railway equipment fault diagnosis, thereby broadening its application scope and impact.

Conclusion

This research introduces a fault diagnosis method for railway switch machines that combines FroFT with a BiLSTM neural network and an attention mechanism, designed to improve the precision and robustness of fault identification. SMOTE was used to correct data imbalance, and FroFT enabled accurate feature extraction from fault signals. The attention mechanism allowed the model to concentrate on essential fault-related attributes, enhancing diagnostic performance. Experimental findings indicate that the FroFT-Attention-BiLSTM approach substantially surpasses the traditional BiLSTM in accuracy, precision, recall, and F1 score, confirming its theoretical validity and practical dependability.

This technology can assist railway operators in early problem identification, thereby reducing operational disturbances and improving the safety and stability of railway transit. The proposed technique is computationally efficient, making it suitable for incorporation into existing railway monitoring systems to provide continuous fault monitoring and predictive maintenance. Future work may concentrate on augmenting the dataset with actual fault occurrences to improve model generalization and on investigating its applicability to other complex railway equipment.

Footnotes

Author Contributions

The authors confirm contribution to the paper as follows: Conceptualization, Yang Zhao and Tian Xu; methodology, Yang Zhao; software, Yang Zhao; validation, Yang Zhao; formal analysis, Litong Dou; investigation, Yang Zhao; resources, Yang Zhao; data curation, Yang Zhao; writing–original draft preparation, Yang Zhao; writing–review and editing, Tian Xu; visualization, Litong Dou; supervision, Litong Dou; project administration, Yang Zhao; funding acquisition, Yang Zhao. All authors reviewed the results and approved the final version of the manuscript.

Ethical considerations

This study does not involve any ethical issues and did not require ethical approval.

Consent to participate

This study does not involve any patient data, and therefore consent to participate is not applicable.

Consent for publication

This study does not involve any patient data, and therefore consent for publication is not applicable.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the university-level key natural science research project of Huainan Normal University (2023XJZD015), the Key scientific research projects of Anhui Provincial Department of Education in universities (2023AH051547), the Key scientific research projects of Anhui Provincial Department of Education in universities (2022AH051573), the Huainan Normal University’s online and offline hybrid first-class course project (2023hskc19), the Henan Province’s key scientific research project (21A590001), and the Anyang University of Technology’s cultivation fund key project (YPY2020001).

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.

Trial registration number/date

This study does not involve any clinical trial, and therefore trial registration is not applicable.