Abstract

The human action classification (HAC) problem presents a complex challenge in the field of machine learning, particularly when dealing with random and unpredictable activities. Resource-intensive models often require significant computational power, limiting their deployment on devices with constrained resources. To address this, we propose a method to improve the accuracy of action classification models by focusing on data preprocessing and data set splitting based on feature clustering using K-means, rather than relying solely on complex models. While we employ ResNet50 and EfficientNetV2 for their proven effectiveness in handling complex features, we also utilize MobileNetV3 as an efficient alternative suitable for resource-constrained scenarios. The experimental results demonstrate that the proposed method significantly improves accuracy and model generalization using the HMDB51 data set. This feature-based data-splitting approach effectively reduces overfitting and can be integrated with other techniques to further optimize model training efficiency.

Keywords

Introduction

The problems of human activity recognition (HAR) and human action classification (HAC) have become increasingly complex due to the growing diversity of human activities. At the same time, demand for these solutions keeps growing, given their usefulness in fields such as surveillance, healthcare, sports analysis, and the detection of criminal behavior with security cameras.1–3 The ability to automatically recognize and classify human actions also improves human-computer interaction by allowing applications to better understand the context of human activities. 4

There are two foundational approaches typically used to address this problem: sensor-based and image-based methods. In sensor-based approaches, data collected from wearable sensors provide information on movements and physiological states, which helps to identify the current actions of the person wearing the sensors. This method is effective for simple actions, such as walking, standing, or running, but becomes less accurate for more complex activities that involve fine movements or interactions with objects. 5 To address the challenges of data scarcity in deep learning applications, we refer to the comprehensive survey by Camarena et al. 6 This survey explores various approaches to overcome data limitations, including transfer learning, data augmentation, and few-shot learning, and highlights their effectiveness across diverse domains. These methods enable models to generalize better and perform effectively despite limited or imbalanced datasets. This study builds on these insights by employing pretrained models, feature-based data splitting, and RandAugment, demonstrating how these techniques can mitigate data scarcity and enhance model performance in human action classification tasks. Similarly to Camarena et al., 6 the study by Beddiar et al. 7 highlights improvements to traditional clustering methods, enhancing performance by optimizing cluster initialization and convergence. Although our work employs the standard K-means algorithm for its simplicity and efficiency, future research could explore integrating such enhanced clustering techniques to further refine feature-based data-splitting strategies and improve model performance.

In contrast, image-based methods determine actions based on visual information from images or videos. This approach not only assesses actions but also incorporates environmental context, producing better results for more complex activities. However, it has several drawbacks: larger models generally produce better predictions, but they also increase computational resource demands. Furthermore, image-based methods require large amounts of labeled data, which can be challenging to obtain.7,8

To improve the accuracy of action classification models that use image data, we focus on leveraging pretrained models rather than developing a new model from scratch. This approach not only saves resources and time but also exploits the complex features that these pretrained models have already learned from large datasets. Pretrained models provide a strong foundation that makes it easier to fine-tune them for specific tasks.

One of the key factors determining accuracy and performance is how the data set is split between the training set and the testing set. Random data splitting can result in imbalanced datasets and reduce the model’s generalization ability. Therefore, we developed an intelligent data splitting strategy based on the data features, ensuring that both training and testing sets have similar feature distributions. This enables the model to perform well not only during training, but also when tested on unseen data.

In this study, pretrained models such as ResNet50, EfficientNetV2, and MobileNetV3 were chosen for their proven capabilities in feature extraction and classification tasks. These models serve as robust baselines, leveraging features previously learned from large datasets to reduce the need for training from scratch. However, to further enhance classification accuracy, we integrated a novel data preprocessing pipeline and a feature-based data splitting strategy. While the pretrained models handle the learning of complex features, our data preprocessing methods optimize the input data, ensuring better alignment between the data distributions in the training and testing phases. This combination of pretrained models and preprocessing methods improves accuracy and generalization without increasing the computational complexity of the models themselves.

To validate that the proposed feature-based data splitting method ensures similar feature distributions between training and testing sets, we employed quantitative metrics, including the Kolmogorov-Smirnov (KS) test. The KS statistic measures the difference between the empirical cumulative distribution functions of the two datasets, with lower values indicating higher similarity. Experimental results show that the KS values for the feature-based splitting method are consistently low, ranging from 0.0049 to 0.0053, demonstrating near-identical feature distributions between the training and testing sets. This similarity reduces the risk of overfitting and ensures that the model’s performance on the testing set reflects its true generalization capability. This approach significantly improves the reliability of the classification results compared to traditional label-based data splitting methods.
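The two-sample KS statistic used above can be computed directly from the two empirical CDFs. The sketch below is a NumPy-only illustration: `ks_statistic` and `mean_ks_over_dims` are hypothetical helper names, and averaging the per-dimension statistic over feature columns is our assumption, since the text does not state how per-feature values were aggregated. For one-dimensional samples, `scipy.stats.ks_2samp` returns the same statistic.

```python
import numpy as np


def ks_statistic(a, b):
    """Two-sample KS statistic: max |F_a(x) - F_b(x)| over the pooled sample points."""
    a = np.sort(np.asarray(a, dtype=float))
    b = np.sort(np.asarray(b, dtype=float))
    grid = np.concatenate([a, b])
    # Right-continuous empirical CDFs evaluated at every observed value.
    cdf_a = np.searchsorted(a, grid, side="right") / a.size
    cdf_b = np.searchsorted(b, grid, side="right") / b.size
    return float(np.max(np.abs(cdf_a - cdf_b)))


def mean_ks_over_dims(train_feats, test_feats):
    """Hypothetical aggregation: average the KS statistic over feature columns."""
    train_feats = np.asarray(train_feats, dtype=float)
    test_feats = np.asarray(test_feats, dtype=float)
    stats = [ks_statistic(train_feats[:, j], test_feats[:, j])
             for j in range(train_feats.shape[1])]
    return float(np.mean(stats))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    feats = rng.normal(size=(10_000, 8))
    # Two subsets drawn from the same distribution should give a small KS value.
    print(mean_ks_over_dims(feats[:8_000], feats[8_000:]))
```

The statistic lies in [0, 1]; values close to 0 indicate nearly identical marginal distributions for the compared features.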

We trained ResNet50 on the HMDB51 dataset, one of the standard benchmarks for action classification, which contains 6,766 video clips of human actions across 51 classes. The data were split using the proposed method, and the model's results were compared with those obtained using other data-splitting methods and with existing models. This allowed us to evaluate the effectiveness of feature-based data splitting and its ability to improve model performance.

The proposed data preprocessing pipeline involves three key components: PCA-based dimensionality reduction, K-means clustering, and RandAugment for data augmentation. PCA reduces the dimensionality of the extracted features, retaining the most relevant information while minimizing noise, which simplifies the subsequent clustering. K-means clustering is then applied to group the reduced features into clusters with similar characteristics, ensuring that both training and testing sets share consistent feature distributions. RandAugment further enhances the diversity of the dataset by applying random transformations, such as rotation, cropping, and color adjustments, which helps the model learn more robustly. Together, these preprocessing steps improve data quality and balance and significantly enhance the classification accuracy of the deep learning models by reducing overfitting and exposing the model to a wide range of feature variations during training.
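A minimal sketch of the PCA, K-means, and splitting steps described above, assuming features have already been extracted for every sample (for example, pooled backbone activations per frame). The helper names, the default numbers of clusters and components, and the choice to apply the 80:20 split inside every (label, cluster) group, so that both label balance and cluster membership are preserved, are our illustrative assumptions rather than the authors' exact settings. PCA is computed with an SVD and K-means with Lloyd's iterations so that only NumPy is needed; `sklearn.decomposition.PCA` and `sklearn.cluster.KMeans` would be the usual choices at HMDB51 scale. RandAugment is applied later, at training time, and is not shown.

```python
import numpy as np


def pca_reduce(X, n_components):
    """Project centred features onto their top principal components (via SVD)."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:n_components].T


def kmeans_labels(Z, k, n_iter=100, seed=0):
    """Plain Lloyd's K-means; returns the cluster index of every row of Z."""
    rng = np.random.default_rng(seed)
    centers = Z[rng.choice(len(Z), size=k, replace=False)]
    for _ in range(n_iter):
        # Squared Euclidean distance from every point to every centre.
        dists = ((Z[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        assign = dists.argmin(axis=1)
        new_centers = np.array([
            Z[assign == j].mean(axis=0) if np.any(assign == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return assign


def feature_based_split(X, y, n_clusters=8, n_components=32, test_ratio=0.2, seed=0):
    """Split indices so each (label, cluster) group contributes ~test_ratio to the test set."""
    y = np.asarray(y)
    clusters = kmeans_labels(pca_reduce(X, n_components), n_clusters, seed=seed)
    rng = np.random.default_rng(seed)
    train_idx, test_idx = [], []
    for label in np.unique(y):
        for c in np.unique(clusters):
            group = np.flatnonzero((y == label) & (clusters == c))
            if group.size == 0:
                continue
            rng.shuffle(group)
            n_test = int(round(test_ratio * group.size))
            test_idx.extend(group[:n_test].tolist())
            train_idx.extend(group[n_test:].tolist())
    return np.array(train_idx), np.array(test_idx)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = rng.normal(size=(500, 20))     # stand-in for extracted features
    y = rng.integers(0, 5, size=500)   # stand-in for action labels
    train_idx, test_idx = feature_based_split(X, y, n_components=10)
    print(len(train_idx), len(test_idx))
```

Splitting within (label, cluster) groups rather than within labels alone is what distinguishes this sketch from plain label stratification: each region of feature space found by K-means is represented in both sets in roughly the 80:20 proportion.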

The remainder of this paper is organized as follows.

“Related Work” section: We review current methods related to the HAC problem. We discuss traditional methods, their limitations, and the evolution of deep learning models in addressing this problem. We also clarify the research objectives, focusing on improving model accuracy without relying on complex and resource-intensive models.

“Our Methods” section: We describe in detail the HMDB51 dataset used in this investigation, including its structure, the number of classes, and how the data were split. We also explain the feature-based data splitting method, the model evaluation metrics used, such as accuracy, recall, precision, and the F1 score, and the steps taken in our experiments.

“Experiment and Results” section: We outline the parameters used throughout the experiments, explain the rationale behind conducting these experiments, and provide observations and evaluations based on the results. We also compare these results with related studies in the same field.

“Conclusion and Future Work” section: We summarize the findings of our investigation. We draw important conclusions about the effectiveness of the proposed data-splitting method and its applicability to various models. Finally, we provide recommendations for future research directions, including extending this method to other datasets and applying it to more complex models to fully leverage the potential of deep learning systems.

Related work

Research on human activity recognition and classification is a diverse, complex, and challenging field in computer vision and artificial intelligence. Solutions to the HAC problem require the ability to adapt to the instability and diversity of human behavior, including environmental factors and psychological states. 9 Researchers have developed powerful algorithms that accurately recognize and classify actions through variations in image data. However, the rapid growth of data from sources such as social networks and security videos has made this problem more complex, especially in applications such as detecting criminal behavior and analyzing human actions.10,11

HAC can be considered a subset of the HAR problem, but with some significant differences. HAC mainly focuses on image data, whereas HAR may include both sensor data and long videos. HAC also emphasizes classifying specific types of action based on posture, appearance, and movement characteristics. This makes the problem more complicated, especially when identifying similar actions or those occurring in complex environments.

These challenges call for more advanced solutions, particularly in the development of deep learning models that can automatically extract features from images, rather than relying on manual techniques as before. This opens up many opportunities for future research as technologies like Convolutional Neural Networks (CNNs) and transfer learning continue to evolve and deliver high performance in the classification of complex actions.

Recent advances in feature-based data preprocessing, robust classification models, and data-driven prediction techniques have significantly contributed to improving deep learning applications in various domains. Our study aligns with these developments by using feature-based data splitting and preprocessing techniques to improve human action classification. Several studies provide valuable information that complements our approach and highlights potential areas for further research.

For example, Zheng et al. 12 introduce an unsupervised learning approach with manifold regularization, reinforcing the importance of structured feature extraction. Similarly, Zheng et al. 13 demonstrate the effectiveness of attention mechanisms and noise-tolerant activation functions in improving the robustness of classification. These methods align with our RandAugment-based augmentation strategy, which enhances model generalization by increasing data variability.

Beyond classification, multiscale modeling has proven to be an essential tool in predictive analytics. Zhang et al. 14 and Hu et al. 15 highlight how feature-driven and data-driven prediction techniques optimize classification accuracy in different domains. This reinforces the need for intelligent dataset structuring, such as our feature-based data splitting strategy, to improve the efficiency of deep learning models.

Furthermore, Zhang et al. 16 emphasize the role of multiscale feature interactions in classification accuracy. This study underscores the potential benefits of integrating multiscale learning techniques into our dataset structuring methodology, ensuring that the training and testing sets maintain consistent feature distributions.

By incorporating these perspectives, our study builds upon existing advancements in feature-driven classification, multiscale learning, and noise-resilient models, paving the way for future extensions that integrate self-supervised learning, attention-based feature extraction, and advanced data augmentation techniques. These enhancements will further optimize the robustness and adaptability of deep learning models for human action classification and broader computer vision applications.

Traditional approaches

In the past, traditional methods for the HAC problem often relied on manually crafting features from the data and using simple classifiers. 17 These methods typically involved preprocessing data collected from sensors, such as motion data or physiological signals, or extracting features from raw data using transformation techniques like the Fourier transform or wavelet analysis. These features were then used to train classifiers, helping models recognize and classify actions based on the learned patterns.

Common classifiers used in traditional approaches include support vector machines (SVM), hidden Markov models (HMMs), and decision trees.18–20 SVM is a powerful machine learning method often used to classify high-dimensional data, and it performs well with manually extracted features. HMMs, a class of statistical models, are particularly effective in processing time series data, helping to predict subsequent actions based on the current state. Decision trees offer an interpretable and intuitive approach, suitable for classification problems based on discrete features.

Although these traditional methods have achieved some success, they face limitations such as difficulties in handling complex features from image data and the lack of automation in feature extraction, which requires high expertise to design appropriate features. These challenges have driven the development of modern deep learning models, which enable automatic feature extraction and action classification without the need for complex manual preprocessing steps.

Deep-learning-based approaches

Based on recent advances in deep learning, the HAC problem has seen significant improvements. The limitations of traditional methods, such as manual feature extraction or reliance on sensor data, have gradually been addressed. With deep learning, the models can process image and video data directly, enabling automatic extraction of spatial features without manual intervention. 21 In particular, CNNs have proven to be highly effective in capturing and processing spatial features from images and videos, allowing models to recognize more complex actions.

One common approach in HAC is to use 3D CNNs. These models not only extract features from spatial dimensions (shape, posture), but also from the temporal dimension, helping the model to accurately detect human movements in videos. 22 Additionally, focusing on 3D motion features has become a popular approach to improving action recognition, especially for complex actions. 23

Similarly, recurrent neural networks (RNNs) have made significant strides in handling time series data. Models such as long short-term memory (LSTM) and gated recurrent unit (GRU) networks allow efficient processing of complex action sequences by retaining information over multiple time steps.24–26 Combining RNNs with 1D-CNNs enables the model to process both spatial and temporal information simultaneously, optimizing action classification in videos.

Furthermore, transfer learning and subtransfer learning have become popular methods in HAR and HAC. These approaches not only enhance model performance but also reduce training time by leveraging models pretrained on large datasets.27,28 This is particularly useful in situations with limited data, where collecting and labeling large datasets can be challenging. Transfer learning alleviates the training burden while maintaining model quality by reusing features learned from similar datasets.

These advances have made the HAC problem more feasible, especially in high-accuracy applications such as security surveillance, sports analysis, and healthcare. Using advanced deep learning models not only yields superior performance, but also broadens the applicability of HAC in various fields.

Train-test split stratified by labels

When applying deep learning to the HAC problem, one critical factor is the availability of an appropriate dataset, both in terms of quality and in terms of how it is divided. Typically, the original data set is divided into two sets: the training set and the testing set, with a common ratio of 80:20. Specifically, 80% of the data is used to train the model, while the remaining 20% is reserved for evaluating the model’s performance after training.

However, random splitting of data according to this ratio can cause several problems. First, the data may become unbalanced when different classes have varying frequencies. For example, if one class dominates the data, the model may overfit to that class but perform poorly on less frequent classes, causing a data imbalance issue. Second, because of random splitting, some classes might only appear in the training set and not in the testing set, or vice versa. This negatively impacts the model’s ability to be evaluated accurately, as the testing set may not be diverse enough to reflect real-world cases that the model will encounter.

To address these issues, label-stratified sampling has been widely used. Instead of splitting the data randomly, this method applies the 80:20 ratio to each class in the dataset, so that every class is represented in the same proportion in both the training and testing sets. Stratified sampling prevents classes from going missing during model evaluation, which makes accuracy estimates more reliable and supports better generalization.
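Label-stratified splitting can be sketched in a few lines: shuffle the indices of each class separately and send the same fraction of every class to the test set. `stratified_split` is a hypothetical helper written for illustration; in scikit-learn, `sklearn.model_selection.train_test_split(..., stratify=y)` provides the same behavior.

```python
import numpy as np


def stratified_split(y, test_ratio=0.2, seed=0):
    """Return (train_idx, test_idx) with ~test_ratio of every class in the test set."""
    y = np.asarray(y)
    rng = np.random.default_rng(seed)
    train_idx, test_idx = [], []
    for label in np.unique(y):
        idx = np.flatnonzero(y == label)
        rng.shuffle(idx)  # shuffle within the class only
        n_test = int(round(test_ratio * idx.size))
        test_idx.extend(idx[:n_test].tolist())
        train_idx.extend(idx[n_test:].tolist())
    return np.array(train_idx), np.array(test_idx)


if __name__ == "__main__":
    # Toy imbalanced labels: 100 samples of class 0, 10 of class 1.
    y = np.array([0] * 100 + [1] * 10)
    train_idx, test_idx = stratified_split(y)
    print(np.bincount(y[test_idx]).tolist())  # [20, 2]
```

Note that the split only looks at `y`: two images of the same class are interchangeable to this procedure regardless of viewpoint, lighting, or pose.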

However, while label-based stratification ensures that each class is represented proportionally in both sets, it does not address the feature distribution within the data. Even though every class appears in the same proportion in both sets, the intrinsic features of the data in the training and testing sets may still differ. For example, the images in the training set may differ significantly from those in the testing set in terms of camera angle, lighting, or action posture, leading to poor model generalization in situations not encountered during training.

Therefore, in addition to ensuring label balance, methods that guarantee feature homogeneity between the two datasets are needed. This would improve the model’s generalization capabilities and reduce overfitting.

Existing solution drawbacks

Current methods for improving model accuracy have certain strengths, but also present some challenges that need to be addressed.

Traditional machine learning methods:
○ Difficulty in capturing complex movements: Traditional machine learning methods, often based on algorithms like SVM or Hidden Markov Models (HMM), have achieved success in some areas but struggle to handle complex actions that require capturing fine-grained movements and dynamics. This is particularly challenging in scenarios with rapid movements or unclear postures.
○ Expertise required for feature creation: These algorithms depend on manual feature extraction, which requires deep domain knowledge to create meaningful features from the data. Designing and selecting appropriate features is not only time-consuming, but also requires a comprehensive understanding of the information needed for classification, reducing the flexibility of traditional methods.
○ Inefficiency in extracting high-level features: When faced with complex situations, traditional methods often lack the ability to extract highly discriminative features from the data. This results in poor classification performance when the data become complex or exhibit significant variability. 29 These methods also do not fully take advantage of the power of multidimensional image data, limiting overall performance.
○ Waste of data: Some traditional methods rely on sensor data without taking advantage of the richness of available image data. This leads to a waste of resources, as collecting sensor data requires specialized equipment and these methods fail to exploit the full potential of image data, which can provide valuable contextual and action-related information.

Stratified data splitting by labels:
○ Lack of consistency between training and testing sets: While stratified sampling by labels ensures that labels are evenly distributed in both the training and testing sets, it does not guarantee that the features of the data in these two sets will be similar. This could result in the testing data containing features that are significantly different from the training data, leading to inaccurate model evaluation.
○ Lack of similarity between training and testing data: For a model to perform effectively, the training data must contain features similar to those in the testing data. If there is a significant difference in feature distribution between the two sets, the model may overfit the training set but fail to generalize well to the testing set. This results in a model that performs well on training data, but poorly on unseen real-world data.

Currently, traditional data-splitting methods do not effectively address this issue. There is a need for data splitting methods that not only ensure label balance but also guarantee feature consistency between datasets. This would significantly improve the model’s generalization capability and accuracy in real-world applications.

Innovation of our methods

In the context of improving classification accuracy for human action classification (HAC), related work on data preprocessing and data splitting has primarily focused on traditional methods such as random splitting, label stratified splitting, and simple data augmentation techniques (e.g., flipping, rotation, and cropping). While these approaches are widely used, they have notable limitations. Random splitting often leads to imbalanced datasets that negatively impact model generalization, while label-stratified splitting ensures class balance, but fails to address inconsistencies in feature distributions between training and testing sets. Similarly, conventional augmentation methods enhance diversity but do not address deeper feature-level imbalances. To overcome these challenges, our study introduces an innovative approach combining PCA for dimensionality reduction, K-means clustering for feature-based data splitting, and RandAugment for robust data augmentation. This ensures both label balance and feature consistency across datasets, effectively reducing overfitting and improving the generalization capability of models.

Beyond data handling, HAC-related work also employs other deep learning models, such as 3D convolutional neural networks (3D-CNNs), long short-term memory (LSTM) networks, and hybrid architectures combining CNNs with RNNs. These models focus on extracting the spatio-temporal features critical for action recognition, but they often require extensive computational resources and large-scale labeled datasets. In contrast, our study demonstrates that with effective preprocessing and data splitting techniques, the performance of pretrained models such as ResNet50, EfficientNetV2, and MobileNetV3 can be significantly enhanced without overly complex architectures or large datasets. This approach provides a more resource-efficient solution suitable for real-world applications.

To clearly illustrate the advantages of our methods, the paper provides a comparative analysis highlighting the limitations of existing approaches and the unique contributions of our study. Our proposed method not only addresses the shortcomings of traditional data splitting and augmentation techniques, but also enhances model accuracy and generalization in a computationally efficient manner. This innovation sets the groundwork for future studies to further optimize HAC performance using lightweight and effective pre-processing strategies.

Our methods

In this study, we propose a new method for preprocessing and splitting the dataset into “train” and “test” sets based on clustering the data’s features. Instead of simply using random splitting or label-stratified sampling, this method applies clustering to ensure that the features in both the training and testing sets are highly similar. This improves accuracy and generalization, particularly when handling complex and imbalanced datasets.

The processed data set is then used to train popular deep learning models in image processing, such as ResNet50, EfficientNetV2, and MobileNetV3. These models have proven to be effective in extracting features from images and recognizing complex actions. Feature-based clustering in the data splitting process helps optimize training, reduce overfitting, and improve the model’s performance on previously unseen data.

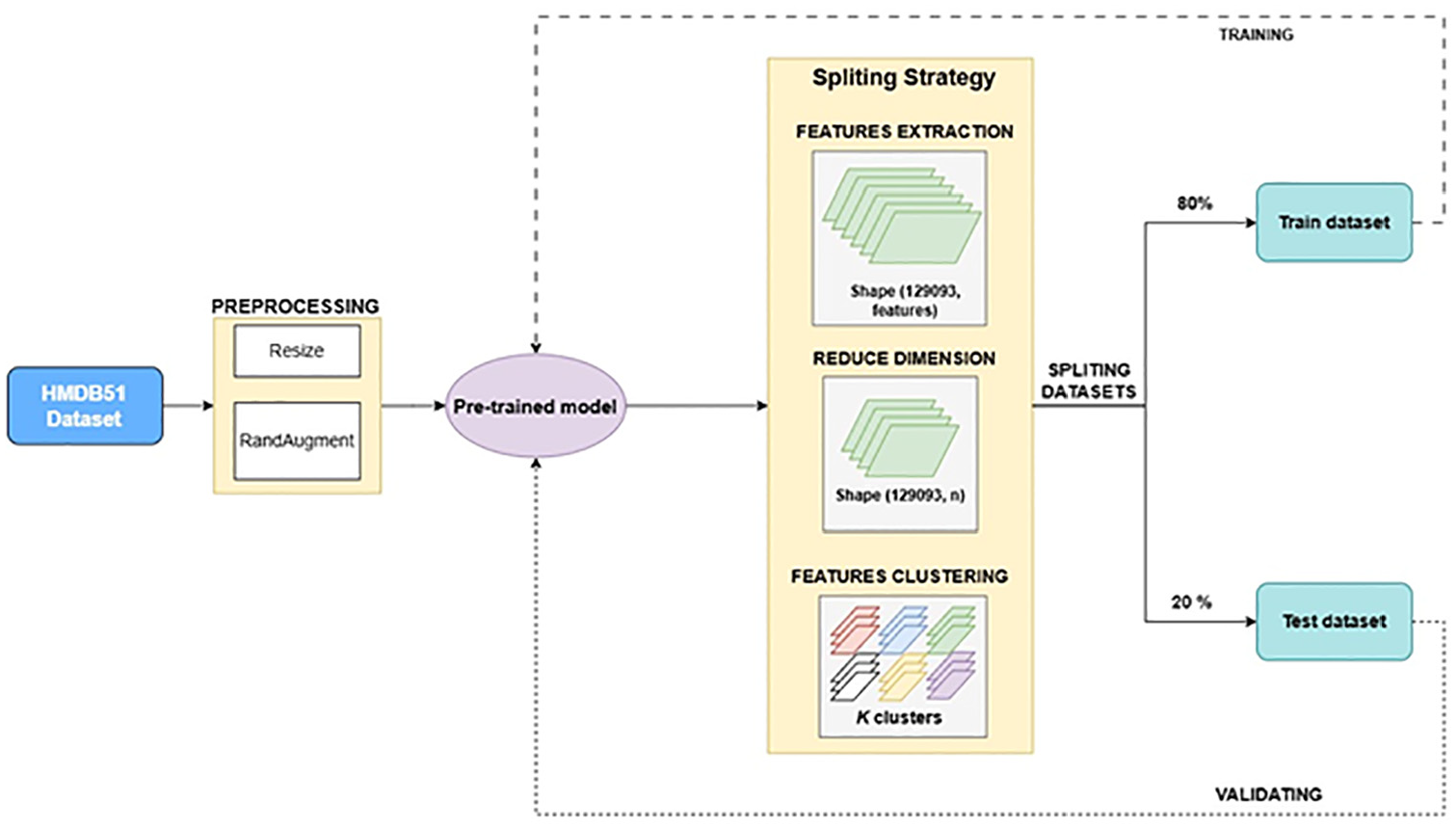

Figure 1 illustrates the data preprocessing and data set split process used in this study. The process includes steps such as feature extraction, clustering of features to identify similar groups, and splitting these groups into training and testing sets so that the features are evenly distributed. This method ensures label balance while optimizing feature consistency across datasets, enhancing the performance of deep learning models in image classification tasks.

Overview of the splitting process.

Complexity issues of investigated approaches

To provide a deeper understanding of the complexity of the deep learning models used in this study, we have analyzed the computational requirements of ResNet50, EfficientNetV2, and MobileNetV3. ResNet50, with its 50-layer architecture and the use of residual blocks, is well suited for learning complex spatial features. However, the trade-off is higher computational and memory requirements compared to simpler models. Its computational complexity is

The proposed feature-based data split strategy incorporates PCA and K-means clustering, which introduces an additional layer of complexity in the preprocessing stage. PCA reduces the dimensionality of the data set while retaining the most significant variance, improving computational efficiency during clustering. Its complexity is
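For reference, the standard asymptotic costs of these two preprocessing steps are given below. These are textbook estimates written in our own notation, not necessarily the exact expressions used by the authors: $n$ is the number of samples, $d$ the original feature dimension, $p$ the number of retained principal components, $k$ the number of clusters, and $t$ the number of K-means iterations.

```latex
\begin{aligned}
T_{\text{PCA}} &= O\!\left(n d^{2} + d^{3}\right)
  && \text{(covariance matrix, then its eigendecomposition)} \\
T_{\text{K-means}} &= O\!\left(n\,k\,p\,t\right)
  && \text{(Lloyd's algorithm on the } p\text{-dimensional PCA features)}
\end{aligned}
```

Because $p \ll d$, running K-means on the PCA output rather than on the raw features lowers its per-iteration cost from $O(nkd)$ to $O(nkp)$.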

Although the pre-processing phase (PCA and K-means) introduces additional steps, it ensures that the feature distribution between training and testing datasets is consistent. This alignment significantly reduces overfitting and enhances model generalization, ultimately justifying the preprocessing costs. Importantly, these steps are only performed once during data preparation and do not affect the computational load during model training or inference. Traditional methods, such as random or label-stratified data splitting, are computationally simpler, but fail to address feature distribution imbalances, leading to poor model generalization. The proposed feature-based data splitting ensures both label balance and feature homogeneity, offering a robust solution for complex data sets. While more computationally intensive, this approach provides substantial improvements in accuracy, precision, and F1 score, as demonstrated in our experiments.

The experimental results confirm the efficacy of the proposed methods. Models trained on feature-clustered datasets exhibit significantly better generalization and robustness. The Kolmogorov-Smirnov (KS) test further validates the improved similarity in feature distribution between the training and testing datasets, with KS values ranging from 0.0049 to 0.0053. The proposed methods strike an effective balance between computational complexity and performance. By applying PCA and K-means during preprocessing and pairing them with an efficient model such as MobileNetV3 and a standard backbone such as ResNet50, we show that the additional computational cost yields significant gains in accuracy and generalization. This approach offers a scalable and practical solution for human action classification in real-world scenarios.

Computational complexity and memory requirements analysis

To provide a more comprehensive understanding of our approach, we analyze both the computational complexity and memory requirements of the proposed methodology. This section details the complexity of key operations and their impact on the overall efficiency of the system.

Computational complexity analysis

Our methodology involves multiple stages, including preprocessing (PCA-based dimensionality reduction and K-means clustering), feature extraction using deep learning models, and classification. The complexity of each step is summarized as follows:

PCA dimensionality reduction: The complexity of PCA is given by: where

K-means clustering: The K-means algorithm has a complexity of: where

Feature extraction using convolutional neural networks (CNNs): The complexity of feature extraction is influenced by the number of filters ( where

Training complexity for deep models: The computational cost per layer for different models is as follows: ○ ResNet50: ○ EfficientNetV2: ○ MobileNetV3: These calculations provide insight into the computational efficiency of each model, emphasizing that EfficientNetV2 balances accuracy and efficiency while MobileNetV3 is optimized for resource-constrained environments.
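The per-layer convolution cost referred to in the items above can be illustrated with multiply-accumulate (MAC) counts. The sketch uses the standard formulas for a k×k convolution and for a depthwise separable convolution, the building block behind MobileNetV3 and the MBConv blocks of EfficientNetV2. The function names and example layer shapes are illustrative assumptions, and bias terms, activations, and squeeze-and-excitation modules are ignored.

```python
def conv_macs(h_out, w_out, c_in, c_out, k):
    """MACs of a standard k x k convolution producing an h_out x w_out x c_out map."""
    return h_out * w_out * c_in * c_out * k * k


def depthwise_separable_macs(h_out, w_out, c_in, c_out, k):
    """MACs of a k x k depthwise convolution followed by a 1 x 1 pointwise convolution."""
    depthwise = h_out * w_out * c_in * k * k
    pointwise = h_out * w_out * c_in * c_out
    return depthwise + pointwise


if __name__ == "__main__":
    # ResNet50 stem: 7x7 conv, 3 -> 64 channels, stride 2 on a 224x224 input -> 112x112.
    print(conv_macs(112, 112, 3, 64, 7))  # 118013952
    # A 3x3 layer with 64 -> 64 channels on a 56x56 map: standard vs depthwise separable.
    std = conv_macs(56, 56, 64, 64, 3)
    sep = depthwise_separable_macs(56, 56, 64, 64, 3)
    print(std, sep, sep / std)  # ratio equals 1/c_out + 1/k**2, about 0.127
```

The ratio $1/C_{out} + 1/k^2$ explains why depthwise separable designs such as MobileNetV3 need roughly an order of magnitude fewer operations per 3×3 layer than the standard convolutions used in ResNet50.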

Memory requirements analysis

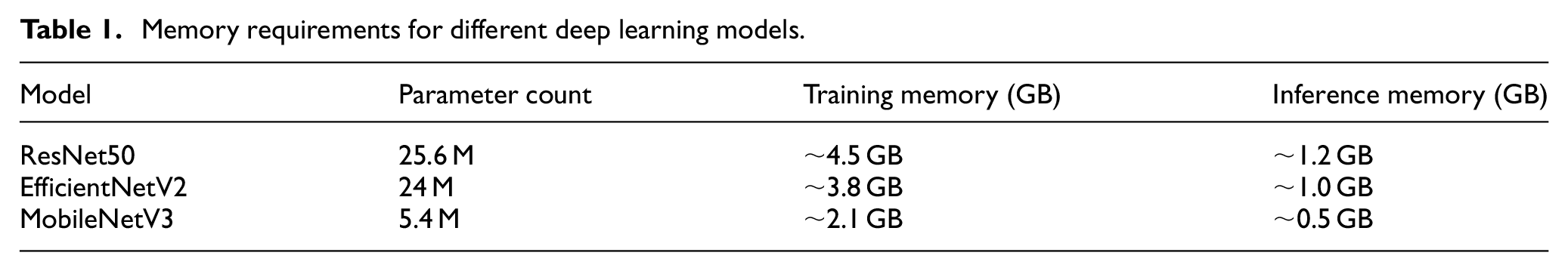

To assess the memory footprint of our approach, we analyzed training and inference memory consumption across the different models. Table 1 summarizes the results.

Memory requirements for different deep learning models.

Additionally, we compared the memory usage of our feature-based data splitting with traditional stratified label splitting and found that our approach incurs a minor increase in preprocessing memory requirements (∼5%–10%), yet significantly improves model generalization. This trade-off is justified by the superior performance observed in our experiments.
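The preprocessing memory can be approximated with simple arithmetic, since a float32 array occupies 4 bytes per element. The helper below is a hypothetical back-of-the-envelope estimator. The 2048-dimensional feature size (the width of ResNet50's pooled output) and the 128 retained PCA components are our assumptions, because the text does not state which features are clustered; only the 129,093-frame count comes from this study. The estimate covers the feature matrix that PCA and K-means operate on, not the activations that dominate training memory.

```python
def float32_bytes(*shape):
    """Bytes needed to store a dense float32 array of the given shape."""
    n = 1
    for dim in shape:
        n *= dim
    return 4 * n


if __name__ == "__main__":
    n_frames = 129_093                       # frames extracted from HMDB51 in this study
    raw = float32_bytes(n_frames, 2048)      # assumed 2048-d pooled ResNet50 features
    reduced = float32_bytes(n_frames, 128)   # assumed 128 PCA components
    print(raw, reduced)                      # 1057529856 66095616
    print(f"{raw / 1e9:.2f} GB -> {reduced / 1e6:.1f} MB")  # 1.06 GB -> 66.1 MB
```

Under these assumptions, holding the raw feature matrix needs roughly 1 GB, while the PCA-reduced matrix that K-means iterates over fits in well under 100 MB.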

Our analysis confirms that the proposed feature-based data splitting strategy is computationally feasible, even for resource-constrained environments. The use of

Dataset descriptions

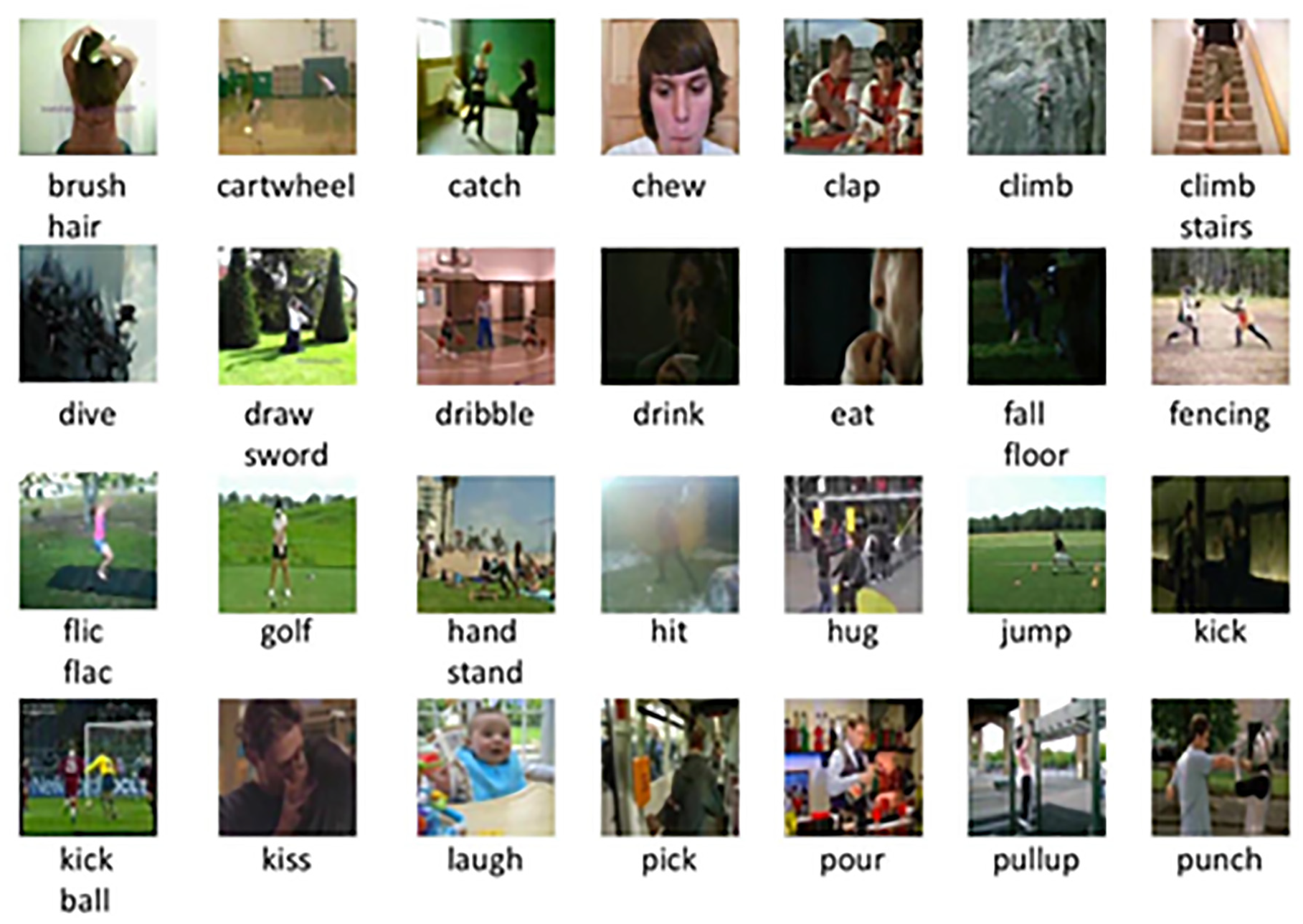

The original dataset used in this study is HMDB51, 30 one of the largest benchmark datasets for human actions. HMDB51 is compiled from various sources, including movies and internet videos, providing rich and diverse examples of everyday actions, as shown in Figure 2. The dataset contains a total of 6766 video clips divided into 51 classes, with each class representing a specific action, such as “jump,” “kiss,” “laugh,” and many others.

Sample images from the dataset.

Each video clip in HMDB51 varies in length and depicts actions that occur in different contexts and environments, presenting a significant challenge for human action classification models. The diversity of actions and contexts in this dataset makes it a popular choice to evaluate the effectiveness of deep learning models, particularly in the recognition and classification of complex actions.

HMDB51 includes not only simple actions such as walking or running but also more complex actions such as climbing stairs or drawing a sword, which makes it harder for deep learning models to extract and correctly classify action-specific features.

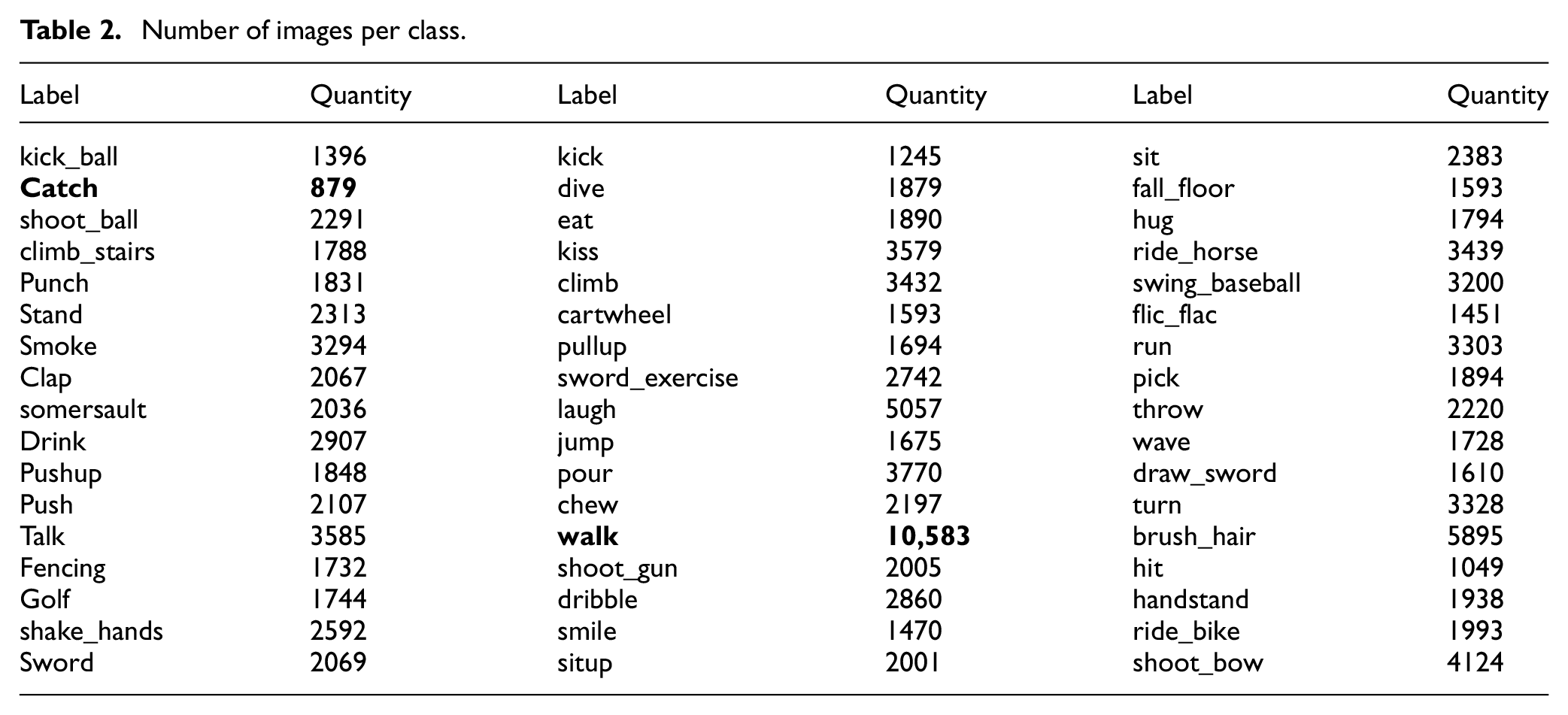

The videos of all classes are converted into a total of 129,093 images, distributed across the 51 classes as shown in Table 2.

Table 2 shows that the HMDB51 dataset is severely imbalanced, particularly for the “walk” label, which has far more images than any other label. This imbalance causes problems during model training and evaluation. Although standard label-stratified splitting preserves an 80:20 train/test ratio within each label, the model still tends to favor “walk” because of its dominance in the dataset. Consequently, evaluation results can be significantly skewed: the model may perform well on the test data while generalizing poorly, especially for labels with few samples.

Number of images per class.

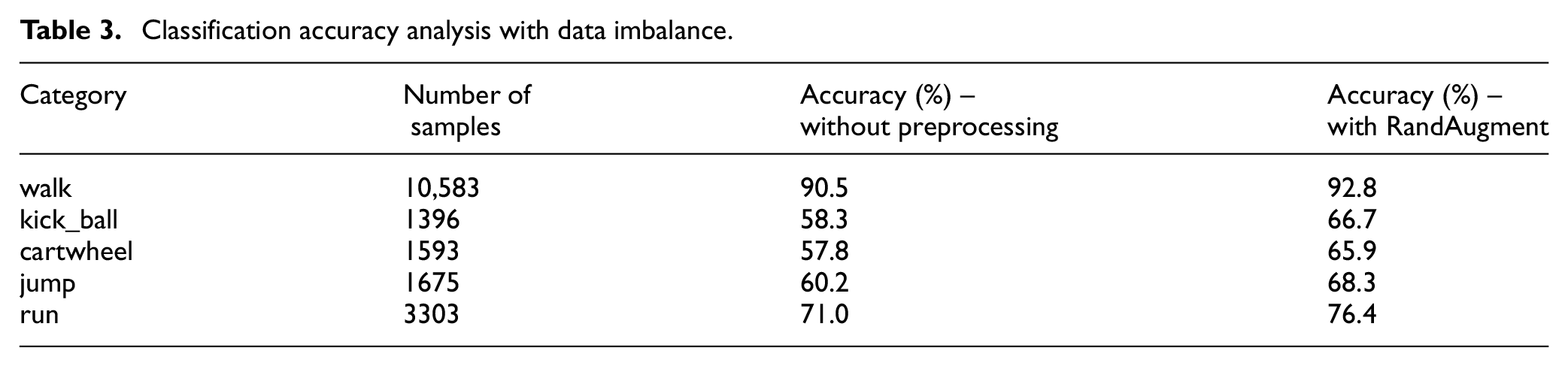

To quantify the impact of the “walk” imbalance on model performance, we analyzed the classification accuracy of each category. The dataset contains significantly more samples for “walk” than for categories such as “kick_ball” and “cartwheel,” which biases model training. The experimental results show that the model achieves notably higher accuracy on the overrepresented “walk” label, while underrepresented categories exhibit much lower accuracy: as shown in Table 3, “walk” reached more than 90% accuracy, whereas “kick_ball” and “cartwheel” were below 60%. These findings confirm that the imbalance of the dataset harms the generalization of the model, particularly for less frequent categories, and motivate the feature-based data splitting and preprocessing methods proposed in our methodology.

Classification accuracy analysis with data imbalance.
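A minimal sketch of this per-category analysis, assuming (the text does not say so explicitly) that the accuracy of a category is the fraction of that category's test images predicted correctly, i.e. its per-class recall. The function name and toy labels are hypothetical; for HMDB51, `num_classes` would be 51.

```python
import numpy as np


def per_class_report(y_true, y_pred, num_classes):
    """Return (counts, accuracy) per class.

    accuracy[c] is the fraction of samples whose true label is c that were
    predicted as c (NaN for classes absent from y_true).
    """
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    counts = np.bincount(y_true, minlength=num_classes)  # samples per class
    accuracy = np.full(num_classes, np.nan)
    for c in range(num_classes):
        mask = y_true == c
        if mask.any():
            accuracy[c] = np.mean(y_pred[mask] == c)
    return counts, accuracy


if __name__ == "__main__":
    # Toy labels: class 0 plays the role of a dominant class such as "walk".
    y_true = [0, 0, 0, 0, 1, 1, 2]
    y_pred = [0, 0, 0, 1, 1, 0, 2]
    counts, acc = per_class_report(y_true, y_pred, 3)
    print(counts, acc, counts.max() / counts.min())  # last value: imbalance ratio
```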

We therefore propose a feature-based stratified data-splitting method. Instead of splitting solely by label, we split the data according to the features that the model recognizes in its feature space. In other words, the data are analyzed in the space of features extracted by the deep learning model rather than by the visual characteristics perceived by humans. Feature-based splitting reduces the model’s reliance on dominant labels such as “walk,” thereby mitigating the negative impact of data imbalance on training results.

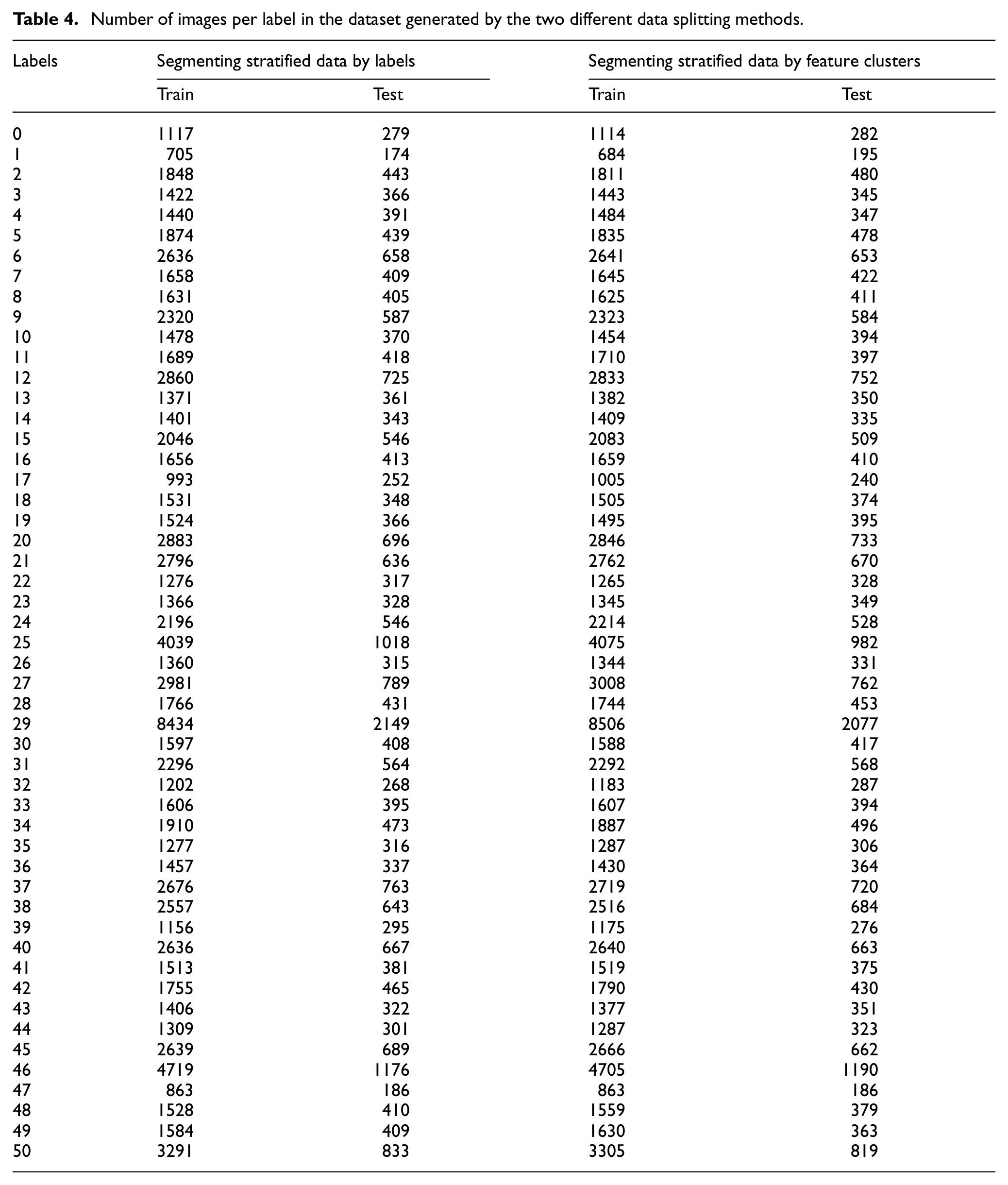

Table 4 shows that the number of images in the two sets produced by feature-based stratification is nearly identical to that produced by traditional label-based stratification; both maintain an 80:20 train/test ratio within each label. However, our method not only ensures that no label is missing from either set but also ensures that the features in the training and testing sets are consistently distributed. This gives the model a dataset structure that is more representative of real-world scenarios and significantly improves its generalization to new data.

Number of images per label in the dataset generated by the two different data splitting methods.

Image preprocessing

The data preprocessing in this study is not intended to increase the amount of data for each label or to directly address data imbalance. Its main goal is to increase the diversity of the images used for training and the complexity of the data, so that the model learns more effectively. Exposing the model to more variations in the data improves its generalizability without increasing the number of input images. As a result, preprocessing adds no significant computational or resource burden during training but still provides the diversity needed to improve model performance.

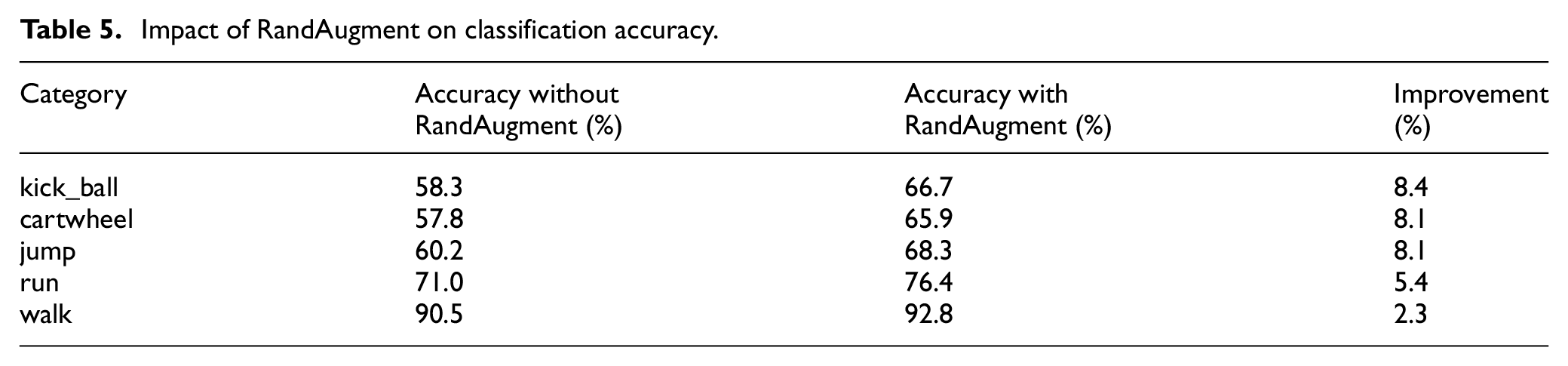

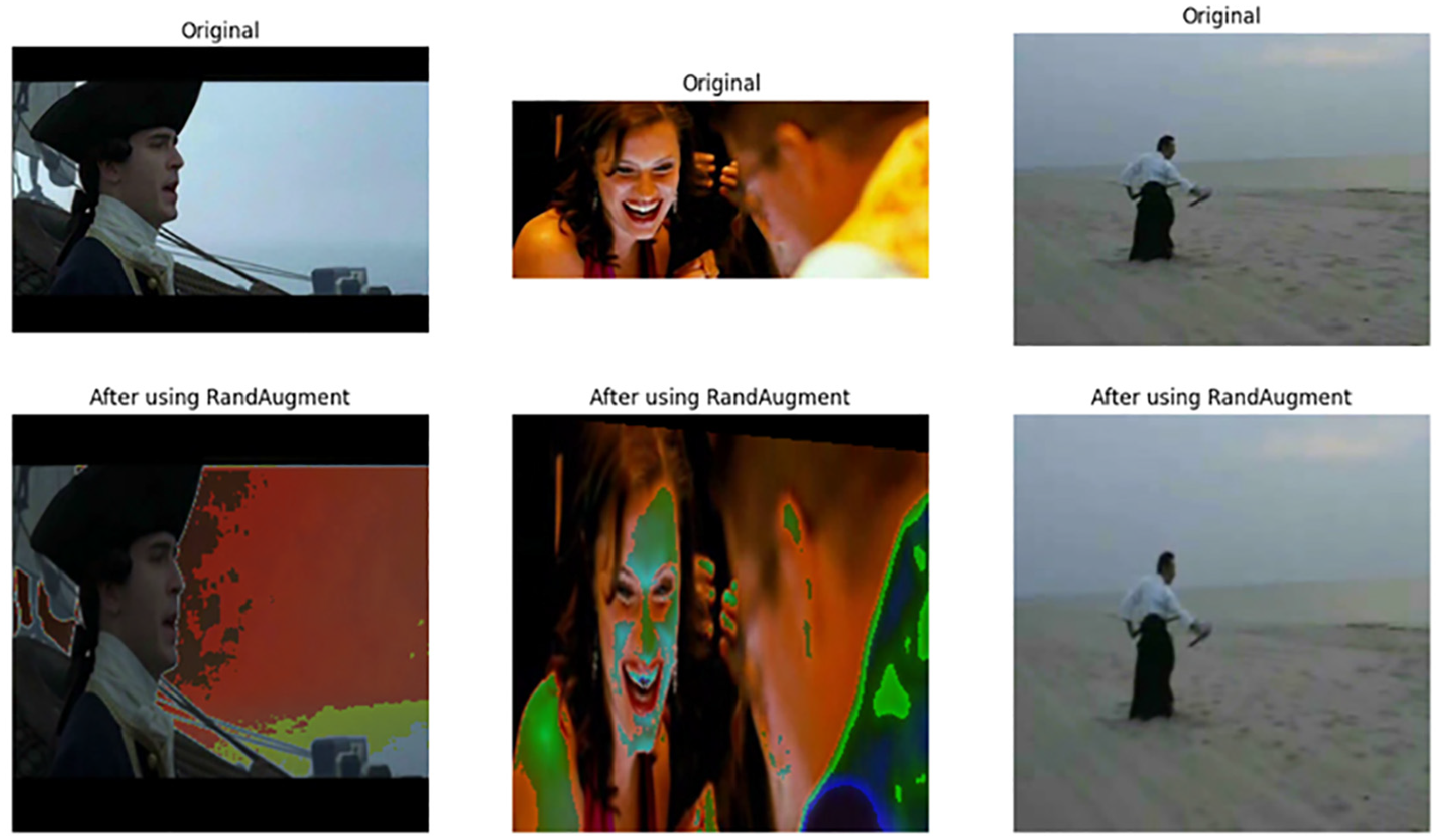

This preprocessing step is performed before the image data are fed into the model. The RandAugment technique 31 is used for data augmentation. RandAugment applies a variety of transformations, such as rotation, cropping, blurring, and color changes, to generate multiple variations of a single image while retaining its main content, thereby increasing the diversity of the input data. This helps the model learn from different forms of the data, avoid overfitting, and generalize better. To evaluate the impact of RandAugment on model performance, we ran comparative experiments with and without this augmentation technique; Table 5 reports the classification accuracy across action categories for both settings. RandAugment improves classification accuracy, particularly for underrepresented categories, by reducing overfitting and enabling the model to learn from a wider range of variations. On average, applying RandAugment increased classification accuracy by 5%–8%, with the largest benefit for categories with fewer samples. For instance, accuracy for the “cartwheel” category improved from 58% to 66%, and similar improvements were observed across other underrepresented classes. These findings show that RandAugment helps mitigate the effects of data imbalance and enhances the overall generalization capability of the model, supporting its integration into the preprocessing pipeline.
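The RandAugment policy itself is simple: for each image, sample `n` operations uniformly at random and apply each one at a shared magnitude `m`. The NumPy-only sketch below illustrates that policy with a small, hypothetical set of operations on float images in [0, 1] and an assumed 0 to 10 magnitude scale; it is not the reference implementation, and its operation list is much shorter than the original RandAugment set. In a PyTorch pipeline, `torchvision.transforms.RandAugment(num_ops=2, magnitude=9)` provides a full implementation.

```python
import numpy as np


def _identity(img, m, rng):
    return img.copy()


def _brightness(img, m, rng):
    # Scale all intensities by 1 +/- 0.9 * m / 10 (random sign).
    return img * (1.0 + rng.choice([-1.0, 1.0]) * 0.9 * m / 10)


def _contrast(img, m, rng):
    # Stretch or shrink intensities around the image mean.
    mean = img.mean()
    return mean + (img - mean) * (1.0 + rng.choice([-1.0, 1.0]) * 0.9 * m / 10)


def _solarize(img, m, rng):
    # Invert pixels above a threshold that drops as the magnitude grows.
    threshold = 1.0 - m / 10
    return np.where(img >= threshold, 1.0 - img, img)


def _translate_x(img, m, rng):
    # Shift horizontally by up to 30% of the width, filling with zeros.
    shift = int(round(rng.choice([-1.0, 1.0]) * 0.3 * m / 10 * img.shape[1]))
    out = np.zeros_like(img)
    if shift > 0:
        out[:, shift:] = img[:, :-shift]
    elif shift < 0:
        out[:, :shift] = img[:, -shift:]
    else:
        out = img.copy()
    return out


OPS = [_identity, _brightness, _contrast, _solarize, _translate_x]


def rand_augment(img, n=2, m=9, rng=None):
    """Apply n operations sampled uniformly (with replacement) at shared magnitude m."""
    rng = np.random.default_rng() if rng is None else rng
    out = np.asarray(img, dtype=np.float64)
    for idx in rng.integers(0, len(OPS), size=n):
        out = np.clip(OPS[idx](out, m, rng), 0.0, 1.0)
    return out
```

Because only two parameters (`n`, `m`) are searched, the policy is cheap to tune compared with learned augmentation policies.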

Impact of RandAugment on classification accuracy.

Figure 3 shows images before and after augmentation with RandAugment. The visual differences demonstrate that this technique can generate varied versions of an original image while preserving its important features. As a result, the training data become richer and more varied, improving the model’s ability to recognize and classify new, unseen data.

Images before and after using RandAugment.

Feature extraction with pretrained models

Pre-trained models are those that have been trained on large, complex datasets, usually over an extended period. Using these models brings several advantages, including saving training time and leveraging the features learned during previous training. Pretrained models already have the ability to effectively recognize both basic and complex features from image data, thereby improving model performance when applied to new problems.

In this study, we use popular pre-trained models in the field of computer vision, including ResNet50, EfficientNetV2, and MobileNetV3. These models are not only used for feature extraction from image data, but are also fine-tuned and evaluated on datasets that have been split into “train” and “test” based on the features they extract. This method leverages the power of transfer learning, allowing the model to learn better from previously trained knowledge, while reducing the need for large labeled datasets.

The ResNet50 model 32 is a deep neural network with 50 layers, widely known for its ability to address the problem of vanishing gradients. This is achieved by using residual blocks, allowing the model to learn complex features from the data without losing information as it passes through the layers. ResNet50 has proven to be effective in many computer vision tasks, from object recognition to image classification, thanks to its ability to learn spatial features from images at multiple levels of detail.

The EfficientNetV2 model 33 is designed to improve both performance and training speed. It builds on compound scaling, which jointly scales the width, depth, and input resolution of the network to make efficient use of computational resources. In addition, EfficientNetV2 integrates techniques such as Fused-MBConv blocks and progressive learning, enabling the model to train faster while achieving high accuracy in computer vision tasks. With these architectural improvements, EfficientNetV2 delivers excellent performance while remaining resource-efficient, making it suitable for demanding tasks under limited computational budgets.
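Compound scaling can be made concrete with a short calculation. The sketch below uses the constants reported for the original EfficientNet ($\alpha = 1.2$, $\beta = 1.1$, $\gamma = 1.15$, chosen so that $\alpha \beta^2 \gamma^2 \approx 2$); EfficientNetV2 modifies this scaling heuristic, so the numbers are illustrative only and the function name is hypothetical.

```python
def compound_scaling(phi: float, alpha: float = 1.2, beta: float = 1.1, gamma: float = 1.15):
    """Return (depth, width, resolution, flops) multipliers for compound coefficient phi.

    alpha, beta, gamma are the EfficientNet-B0 constants from the original
    EfficientNet paper, used here only for illustration.
    """
    depth = alpha ** phi       # multiplier on the number of layers
    width = beta ** phi        # multiplier on the number of channels
    resolution = gamma ** phi  # multiplier on the input image side length
    # FLOPs grow linearly with depth and quadratically with width and resolution.
    flops = (alpha * beta ** 2 * gamma ** 2) ** phi
    return depth, width, resolution, flops


if __name__ == "__main__":
    for phi in range(4):
        print(phi, compound_scaling(phi))
```

Raising $\phi$ by 1 therefore roughly doubles the FLOPs while spreading the extra capacity across all three dimensions.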

The MobileNetV3 model 34 is designed to optimize performance on mobile devices, where computational efficiency and compact model size are critical. The structure of MobileNetV3 uses inverted residual blocks with linear bottlenecks, which reduce the number of parameters and computations while maintaining high performance. Owing to its light weight and efficient operation on mobile devices, MobileNetV3 is widely used in applications that have limited computational power but still require good accuracy.

Overall, the pre-trained models used in this study not only save time and resources during training but also offer high performance by leveraging the complex features learned from previous training. Fine-tuning these models on new data significantly improves their performance in tasks related to action and image classification.

Reduced feature dimension with PCA

After using the pretrained model to extract features from images, we obtain a high-dimensional feature set. These dimensions result from the model’s previous training and represent the information that the model considers important in the images. However, they carry a variety of information, some of which is unnecessary for action classification, and clustering on all of them may produce feature clusters that do not fully capture the characteristics of the data. It is therefore essential to derive a new feature space from the extracted features with fewer dimensions. The number of dimensions in this new space is a hyperparameter that must be set in advance and tuned experimentally to achieve optimal results. In this study, we use principal component analysis (PCA) 35 to reduce the dimensionality of the features.

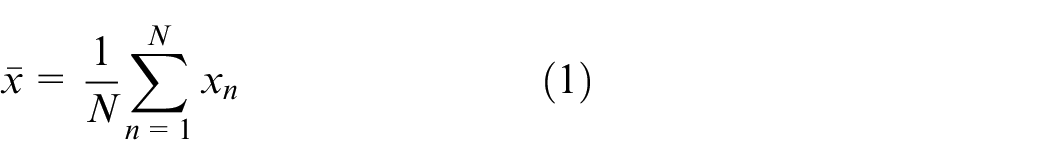

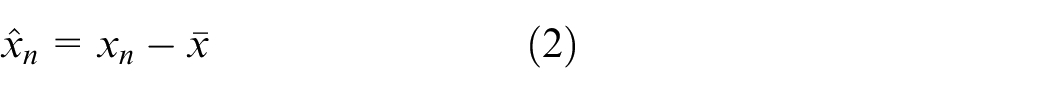

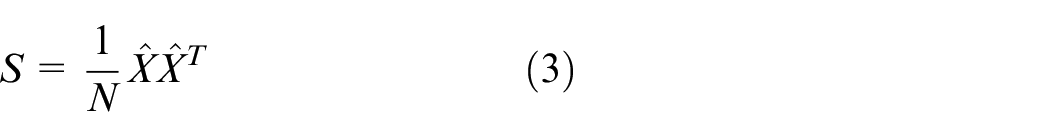

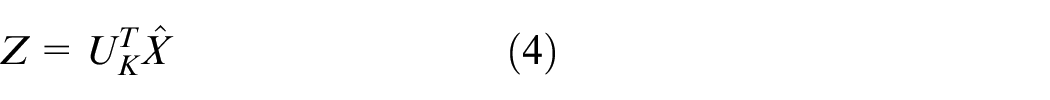

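Suppose that we have $N$ data points $\mathbf{x}_1, \dots, \mathbf{x}_N \in \mathbb{R}^D$, stacked as the columns of a data matrix $\mathbf{X} \in \mathbb{R}^{D \times N}$, and we want to reduce the dimensionality to $K < D$. PCA proceeds as follows:

1. Compute the mean vector of all the data:

$$ \bar{\mathbf{x}} = \frac{1}{N} \sum_{n=1}^{N} \mathbf{x}_n $$

2. Subtract the mean vector from each data point:

$$ \hat{\mathbf{x}}_n = \mathbf{x}_n - \bar{\mathbf{x}}, \qquad \hat{\mathbf{X}} = [\hat{\mathbf{x}}_1, \dots, \hat{\mathbf{x}}_N] $$

3. Compute the covariance matrix:

$$ \mathbf{S} = \frac{1}{N} \hat{\mathbf{X}} \hat{\mathbf{X}}^{\top} $$

4. Compute the eigenvalues and eigenvectors with norm 1 of this matrix, sorting them in descending order of the eigenvalues.

5. Select the $K$ eigenvectors with the largest eigenvalues to form the matrix $\mathbf{U}_K = [\mathbf{u}_1, \dots, \mathbf{u}_K] \in \mathbb{R}^{D \times K}$, whose columns form an orthonormal basis of the principal subspace.

6. Project the normalized (mean-centered) original data $\hat{\mathbf{X}}$ onto the subspace spanned by $\mathbf{U}_K$.

7. The new data are the coordinates of the data points in this new space:

$$ \mathbf{Z} = \mathbf{U}_K^{\top} \hat{\mathbf{X}} \in \mathbb{R}^{K \times N} $$

The original data can be approximately reconstructed from the new data as:

$$ \mathbf{X} \approx \mathbf{U}_K \mathbf{Z} + \bar{\mathbf{x}} \mathbf{1}^{\top} $$

where $\mathbf{1} \in \mathbb{R}^{N}$ is a vector of ones.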

In this study, PCA is used to reduce the dimensionality of the originally extracted features and to create a new feature space in which clusters are formed. PCA transforms the feature space into a set of principal components, selected to retain as much information (variance) as possible from the original data while discarding redundant or noisy information.

Specifically, PCA identifies the directions of highest variance in the data space and projects the original data onto these directions, reducing dimensionality while preserving important information. The new features are linear combinations of the original features that better capture the overall structure of the data.

Thanks to PCA, the feature clusters obtained after extraction become clearer and more compact, making clustering and data processing in subsequent steps more efficient. PCA also reduces the computational load of these steps while retaining important features and discarding irrelevant ones, which in turn supports the model’s accuracy and performance on new data.
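The seven PCA steps map directly onto a few NumPy calls. This is a minimal sketch that assumes a column-per-sample data matrix of shape (D, N); the function names are hypothetical. For an (N, D) matrix of extracted features, pass its transpose, or use `sklearn.decomposition.PCA(n_components=K)`, which expects one sample per row.

```python
import numpy as np


def pca_fit_transform(X, k):
    """PCA on a (D, N) matrix with one sample per column; returns (Z, U_k, x_bar)."""
    x_bar = X.mean(axis=1, keepdims=True)  # step 1: mean vector, shape (D, 1)
    X_hat = X - x_bar                      # step 2: center every data point
    S = X_hat @ X_hat.T / X.shape[1]       # step 3: covariance matrix, shape (D, D)
    eigvals, eigvecs = np.linalg.eigh(S)   # step 4: unit-norm eigenvectors, ascending order
    order = np.argsort(eigvals)[::-1]      #         re-sort to descending eigenvalues
    U_k = eigvecs[:, order[:k]]            # step 5: top-k principal directions, (D, k)
    Z = U_k.T @ X_hat                      # steps 6-7: projected coordinates, (k, N)
    return Z, U_k, x_bar


def pca_reconstruct(Z, U_k, x_bar):
    """Approximate reconstruction X ~ U_k Z + x_bar."""
    return U_k @ Z + x_bar
```

`np.linalg.eigh` is used because the covariance matrix is symmetric; it returns unit-norm eigenvectors in ascending order of eigenvalue, hence the explicit reordering.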

Clustering of features with K-means

After dimensionality reduction, the image features are clustered to ensure that images within the same cluster have similar features from the model’s perspective. This study uses the K-means algorithm for clustering, where the value of

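K-means is an unsupervised algorithm commonly used for clustering tasks. The goal of the algorithm is to divide the data set into $K$ clusters so that each data point belongs to the cluster whose center is nearest to it. Given data points $\mathbf{x}_1, \dots, \mathbf{x}_N$, the algorithm proceeds as follows:

1. Define the number of clusters $K$.

2. Randomly select $K$ data points as the initial cluster centers $\boldsymbol{\mu}_1, \dots, \boldsymbol{\mu}_K$.

3. Calculate the distance between each data point and each of the $K$ cluster centers:

$$ d(\mathbf{x}_n, \boldsymbol{\mu}_k) = \lVert \mathbf{x}_n - \boldsymbol{\mu}_k \rVert_2^2 \tag{6} $$

Equation (6) is the squared L2-norm, which is a commonly used distance measure in machine learning.

4. Assign each data point to the cluster with the nearest center using the formula:

$$ c_n = \arg\min_{k \in \{1, \dots, K\}} \lVert \mathbf{x}_n - \boldsymbol{\mu}_k \rVert_2^2 \tag{7} $$

5. Recompute the position of each of the $K$ cluster centers as the mean of the data points assigned to it:

$$ \boldsymbol{\mu}_k = \frac{\sum_{n=1}^{N} \mathbb{1}(c_n = k)\, \mathbf{x}_n}{\sum_{n=1}^{N} \mathbb{1}(c_n = k)} \tag{8} $$

In equation (8), the function $\mathbb{1}(\cdot)$ is the indicator function, which equals 1 when its argument is true and 0 otherwise.

6. Recompute the distance between the data points and the new cluster centers using equation (6).

7. If no data points change clusters, stop the process; otherwise, repeat from Step 4.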
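A minimal NumPy sketch of the K-means loop described in the steps above, with comments marking the distance computation (equation (6)), the assignment step, and the center update (equation (8)). It follows the random initialization of Step 2; note that `sklearn.cluster.KMeans` defaults to k-means++ initialization instead. The function name and defaults are hypothetical.

```python
import numpy as np


def kmeans(X, k, max_iter=100, rng=None):
    """Cluster the rows of X (shape (N, D)) into k groups; returns (labels, centers)."""
    rng = np.random.default_rng() if rng is None else rng
    # Step 2: pick k distinct data points as the initial centers.
    centers = X[rng.choice(len(X), size=k, replace=False)].astype(np.float64)
    labels = None
    for _ in range(max_iter):
        # Steps 3/6, equation (6): squared L2 distance from every point to every center.
        dist = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        # Step 4: assign each point to its nearest center.
        new_labels = dist.argmin(axis=1)
        # Step 7: stop when no point changes cluster.
        if labels is not None and np.array_equal(new_labels, labels):
            break
        labels = new_labels
        # Step 5, equation (8): move each center to the mean of its assigned points.
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:  # keep the old center if the cluster is empty
                centers[j] = members.mean(axis=0)
    return labels, centers
```

In this study's pipeline, `X` would be the PCA-reduced feature matrix with one image per row.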

When the original dataset is split based on feature clusters using the K-means algorithm, both the “train” and “test” datasets will share similar feature distributions. In other words, the data used to evaluate the model are highly similar to the data used for training, making the evaluation more comprehensive and aligned with what the model has learned, thus producing more objective results.

After the new feature clusters are created, each cluster forms a distinct group. The goal of clustering is to group similar features together so that, when the data set is split, the images in both sets retain the same feature characteristics. This ensures that the model is trained on all the relevant information and features of the data and that model evaluation becomes more objective. This study uses the K-means algorithm for feature clustering, and the value of
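The text does not spell out the exact stratification key. One plausible reading, stated here as an assumption, is to stratify on the (label, cluster) pair, so that every label keeps its 80:20 ratio and every feature cluster is represented in both sets. The helper names below are hypothetical, and the cluster ids would come from the K-means step. With scikit-learn, `train_test_split(..., test_size=0.2, stratify=groups)` from `sklearn.model_selection` achieves a similar effect, although it requires at least two samples per group.

```python
import numpy as np


def group_key(labels, clusters, n_clusters):
    """Hypothetical helper: one integer id per (label, cluster) pair."""
    return np.asarray(labels) * n_clusters + np.asarray(clusters)


def stratified_split_by_group(groups, test_size=0.2, rng=None):
    """Return sorted (train_idx, test_idx) with about test_size of every group in test.

    Groups too small to contribute a test sample (round(test_size * n) == 0) stay in train.
    """
    rng = np.random.default_rng() if rng is None else rng
    groups = np.asarray(groups)
    train_parts, test_parts = [], []
    for g in np.unique(groups):
        idx = np.flatnonzero(groups == g)  # indices of this group's samples
        rng.shuffle(idx)
        n_test = int(round(test_size * len(idx)))
        test_parts.append(idx[:n_test])
        train_parts.append(idx[n_test:])
    return np.sort(np.concatenate(train_parts)), np.sort(np.concatenate(test_parts))
```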

Dataset splitting strategy

The metrics used to evaluate the results of model training are accuracy, precision, recall, and F1 score. To compare the feature distributions of the sets produced by the traditional method and by splitting on feature clusters, we use the Kolmogorov–Smirnov (KS) test, 35 which yields the KS statistic and a p-value.

The similarity in feature distribution between the “train” and “test” sets is evaluated with the KS statistic. The KS test is a nonparametric statistical method for comparing sample distributions or testing the goodness of fit of a distribution. The KS statistic and p-value are used to evaluate the feature distributions of the “train” and “test” sets produced by the two splitting methods. A lower KS statistic indicates a higher similarity in feature distribution between the two sets.

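The two-sample KS statistic is defined as follows:

$$ D_{n,m} = \sup_{x} \left| F_{1,n}(x) - F_{2,m}(x) \right| $$

Where: $F_{1,n}$ and $F_{2,m}$ are the empirical cumulative distribution functions of the “train” and “test” samples, of sizes $n$ and $m$ respectively, and $\sup_x$ is the supremum over all values $x$.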

The value of
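The KS statistic compares two one-dimensional samples, whereas image features are multi-dimensional; how the per-feature statistics were aggregated is not specified, so the per-column averaging below is a hypothetical choice. The sketch computes the two-sample statistic from empirical CDFs; `scipy.stats.ks_2samp` returns the same statistic together with a p-value.

```python
import numpy as np


def ks_statistic(a, b):
    """Two-sample KS statistic D = sup_x |F_a(x) - F_b(x)| using empirical CDFs."""
    a = np.sort(np.asarray(a, dtype=float))
    b = np.sort(np.asarray(b, dtype=float))
    # The supremum of the difference of two step functions is attained at a sample point.
    grid = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, grid, side="right") / a.size
    cdf_b = np.searchsorted(b, grid, side="right") / b.size
    return float(np.max(np.abs(cdf_a - cdf_b)))


def mean_ks_per_feature(train_feats, test_feats):
    """Hypothetical aggregate: average the KS statistic over feature columns."""
    return float(np.mean([ks_statistic(train_feats[:, j], test_feats[:, j])
                          for j in range(train_feats.shape[1])]))
```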

Feature extraction in deep learning models

To ensure clarity, reproducibility, and rigor, this section provides a comprehensive mathematical representation of the feature extraction and data preprocessing methods, pseudo code for the key algorithms, and detailed evaluation metrics used in this study. These additions aim to address the challenges of explaining deep learning-based processes and their impact on model performance. The feature extraction process in convolutional neural networks (CNNs) involves a sequence of key operations:

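1. Convolution operation:

$$ y_{i,j} = \sum_{m=0}^{M-1} \sum_{n=0}^{N-1} x_{i+m,\,j+n}\, k_{m,n} $$

where $x_{i,j}$ is the input feature map at position $(i, j)$, $k_{m,n}$ is the filter kernel of size $M \times N$, and $y_{i,j}$ is the output feature map after applying the convolution.

2. ReLU activation function:

$$ f(x) = \max(0, x) $$

The ReLU function introduces non-linearity by setting negative values to zero, allowing the network to model complex features.

3. Pooling operation (max pooling):

$$ y_{i,j} = \max_{0 \le m,\,n < s} x_{is + m,\; js + n} $$

where $s$ is the pooling window size, typically 2 or 3, and the stride is assumed equal to the window size. This operation reduces spatial dimensions, retaining the most prominent features while reducing computational complexity.

4. Backpropagation for gradient updates:

$$ w \leftarrow w - \eta \frac{\partial \mathcal{L}}{\partial w} $$

where $w$ is a network weight, $\eta$ is the learning rate, and $\mathcal{L}$ is the training loss.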
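The convolution, ReLU, max-pooling, and gradient-update operations listed above can be written out directly in NumPy for a single-channel feature map. This is a didactic sketch under stated assumptions (valid padding, stride 1 for convolution, pooling stride equal to the window size); real models use optimized framework layers. As in most deep learning libraries, the "convolution" is implemented as cross-correlation, without flipping the kernel. Function names are hypothetical.

```python
import numpy as np


def conv2d(x, k):
    """Valid, stride-1 2-D cross-correlation: y[i, j] = sum_{m,n} x[i+m, j+n] * k[m, n]."""
    M, N = k.shape
    H, W = x.shape
    y = np.empty((H - M + 1, W - N + 1))
    for i in range(y.shape[0]):
        for j in range(y.shape[1]):
            y[i, j] = np.sum(x[i:i + M, j:j + N] * k)
    return y


def relu(x):
    """f(x) = max(0, x), applied element-wise."""
    return np.maximum(0.0, x)


def max_pool(x, s=2):
    """Non-overlapping s x s max pooling; leftover rows/columns are dropped."""
    H, W = x.shape
    Ho, Wo = H // s, W // s
    x = x[:Ho * s, :Wo * s]
    return x.reshape(Ho, s, Wo, s).max(axis=(1, 3))


def sgd_step(w, grad, lr):
    """One gradient-descent update w <- w - lr * dL/dw."""
    return w - lr * grad
```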

The following evaluation metrics were used to assess model performance:

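1. Accuracy:

$$ \text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN} $$

2. Precision:

$$ \text{Precision} = \frac{TP}{TP + FP} $$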

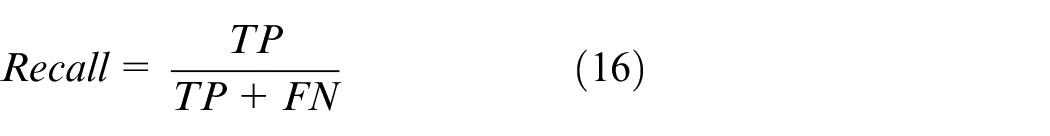

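3. Recall:

$$ \text{Recall} = \frac{TP}{TP + FN} $$

4. F1-score:

$$ F_1 = 2 \cdot \frac{\text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} $$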

Where TP is true positives, TN is true negatives, FP is false positives, and FN is false negatives.
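For the 51-class problem these binary definitions have to be extended. A common approach, assumed here because the averaging scheme is not stated, is to compute precision, recall, and F1 one-vs-rest for each class and take their unweighted (macro) mean, while overall accuracy is the fraction of correctly classified samples. A NumPy sketch with a hypothetical function name:

```python
import numpy as np


def macro_metrics(y_true, y_pred, num_classes):
    """Return (accuracy, macro precision, macro recall, macro F1)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    accuracy = float(np.mean(y_true == y_pred))  # fraction of correct predictions
    precisions, recalls, f1s = [], [], []
    for c in range(num_classes):
        # One-vs-rest counts for class c.
        tp = int(np.sum((y_pred == c) & (y_true == c)))
        fp = int(np.sum((y_pred == c) & (y_true != c)))
        fn = int(np.sum((y_pred != c) & (y_true == c)))
        p = tp / (tp + fp) if tp + fp else 0.0  # 0 when the class is never predicted
        r = tp / (tp + fn) if tp + fn else 0.0  # 0 when the class never occurs
        f1 = 2 * p * r / (p + r) if p + r else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f1)
    return accuracy, float(np.mean(precisions)), float(np.mean(recalls)), float(np.mean(f1s))
```

Macro averaging weights every action class equally, so it exposes poor performance on rare classes that a sample-weighted average would hide.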

Experiment and results

Implementation details

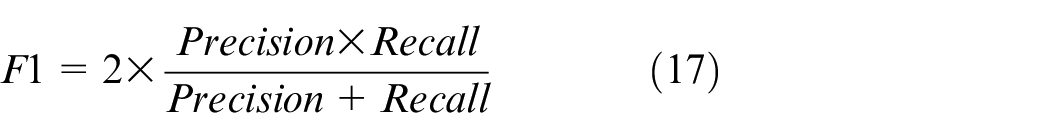

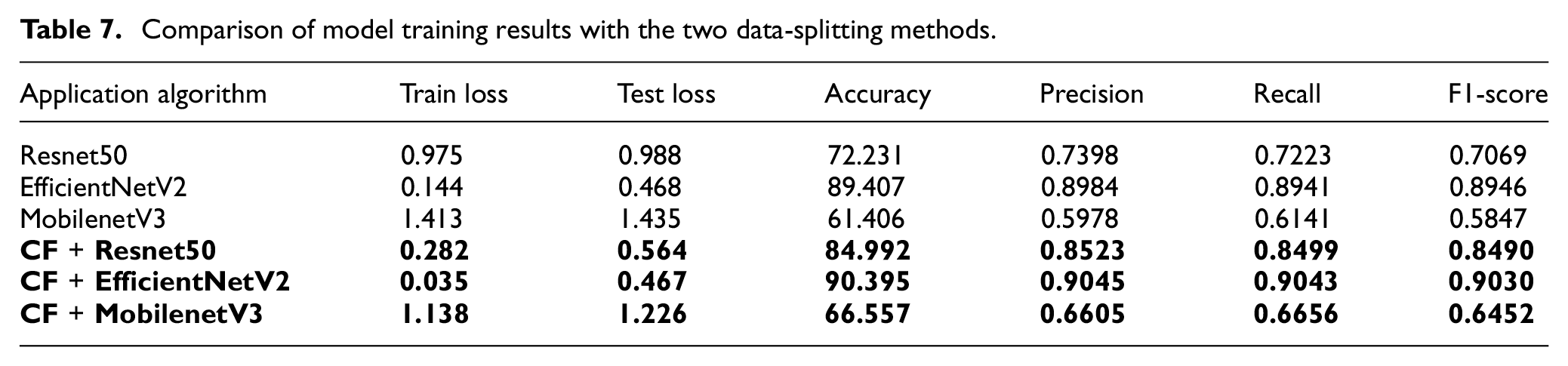

The hyperparameters used in our experiments are presented in Table 6.

Parameter values used in the experiments.

Experiment description

In this study, we train and evaluate three popular computer vision models, ResNet50, EfficientNetV2, and MobileNetV3, on “train” and “test” sets produced by two different splitting methods: stratified by labels and stratified by feature clusters. Specifically, these sets are drawn from a random 20% subset of the original HMDB51 dataset, totaling 25,819 images split between training and testing. Comparing the two stratification methods allows us to assess how the data-splitting approach affects the performance of deep learning models. We also train the ResNet50 model on the entire original HMDB51 dataset, consisting of 129,093 images stratified by feature clusters, to compare the results with current state-of-the-art models.

During training, the hyperparameters listed in Table 6, including the learning rate, the number of epochs, and the optimizer, are kept fixed. In addition, we use Early Stopping, which halts training when performance on the validation set has not improved for a specified number of epochs. This lets the model train long enough to reach its best performance while preventing overfitting and avoiding unnecessary training time.
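Early Stopping reduces to a patience counter on a validation metric. A minimal sketch follows; the class name and the `patience` and `min_delta` defaults are hypothetical, since the values used in the experiments are not given in this section. In Keras, the built-in equivalent is `tf.keras.callbacks.EarlyStopping(monitor="val_loss", patience=...)`.

```python
class EarlyStopping:
    """Signal a stop when validation loss has not improved by more than
    `min_delta` for `patience` consecutive epochs (hypothetical defaults)."""

    def __init__(self, patience: int = 5, min_delta: float = 0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")  # best validation loss seen so far
        self.bad_epochs = 0       # consecutive epochs without improvement

    def step(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a training loop, `if stopper.step(val_loss): break` would end training; restoring the weights from the best epoch is a common companion choice.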

Evaluating the models based on datasets split by these two methods will allow us to draw conclusions on which data-splitting method provides better results, ultimately improving the training and evaluation of deep learning models in human action classification tasks.

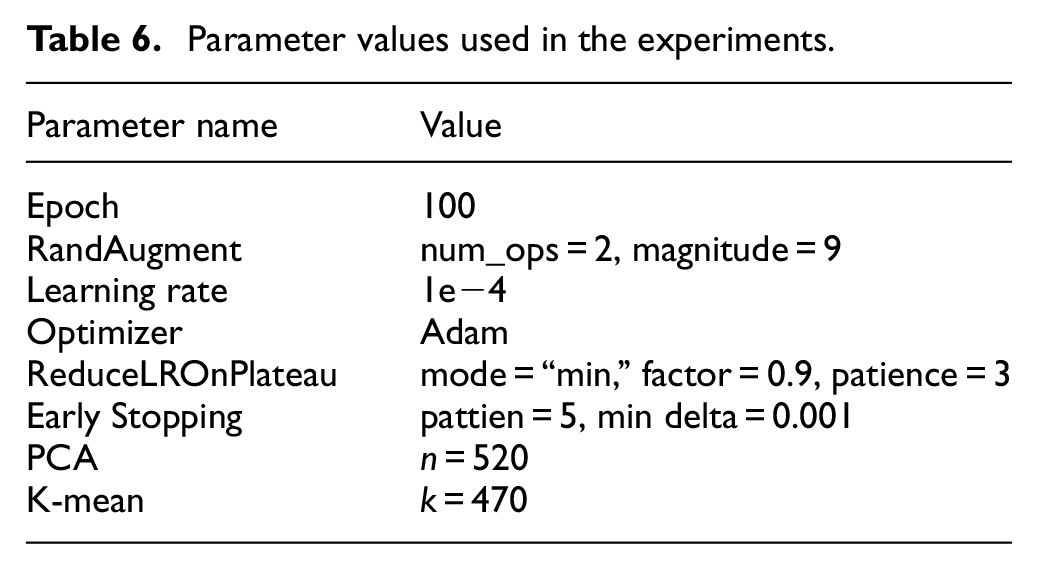

Comparison of model training results between two data splitting methods

Table 7 shows a clear difference in model training results between the two data-splitting methods: stratified by labels and stratified by feature clusters. The results demonstrate that the models trained on data split by feature clusters achieved significantly better performance compared to traditional label-based stratification. This indicates that the method of data splitting has a substantial impact on the model’s learning performance and generalization ability.

Comparison of model training results with the two data-splitting methods.

Upon closer analysis, feature cluster-based splitting not only ensures a balanced distribution of labels but also ensures that the features in the training and testing sets are similarly distributed. This allows the model to learn more effectively, since the features learned during training reflect those found in the test set. In contrast, label-based splitting may lead to differences in feature distribution between the two sets, reducing the model’s learning efficiency.

The KS statistic, which measures the difference in feature distribution between two datasets, takes very small values, ranging from 0.0049 to 0.0053, for the datasets split with the feature clustering strategy. This indicates that the feature distributions of the “train” and “test” sets are nearly identical under feature-cluster splitting, allowing the model to generalize better when it encounters new data.

In conclusion, these results confirm that feature cluster-based data splitting not only improves model training efficiency, but also significantly enhances the generalizability of the model, particularly when dealing with complex classification tasks such as human action classification.

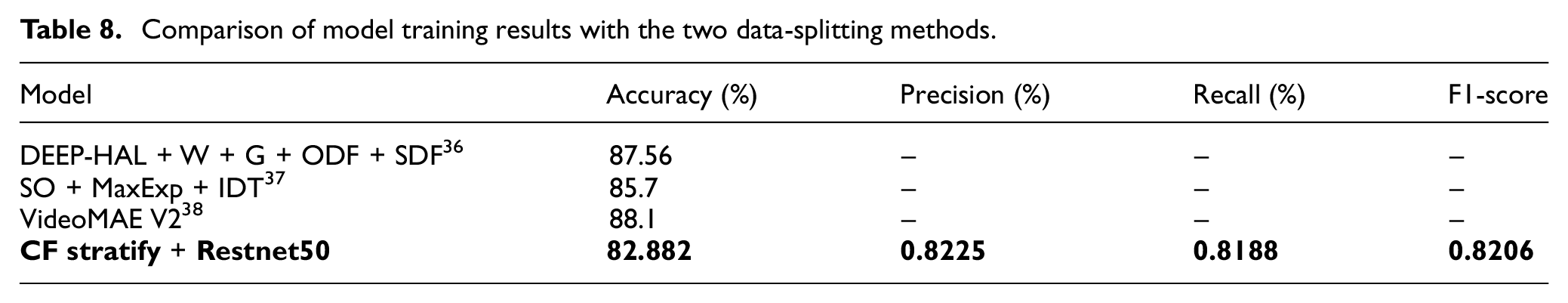

Comparison of model training results

The results in Table 8 show that the ResNet50 model achieved performance nearly equivalent to more complex models. This demonstrates that using simpler pre-trained models, such as ResNet50, can deliver high effectiveness in image classification tasks, especially when combined with appropriate data splitting methods. Instead of relying on complex models that require significant computational resources, leveraging simpler pretrained models can still yield excellent results, opening up numerous possibilities for applying them to real-world problems.

Comparison of model training results with the two data-splitting methods.

Furthermore, these results suggest that label-based stratified splitting can still be effective when applied to more complex models. The similar data distributions between the training and testing sets under label-based stratification may help complex models realize their full potential, especially in tasks that require learning deep data features.

In summary, the results from Table 8 not only highlight the potential of using simpler pre-trained models like ResNet50 but also confirm that optimizing data splitting methods can significantly enhance the performance of both simple and complex models across various fields and tasks. This opens new research directions for leveraging pre-trained models combined with efficient data splitting strategies.

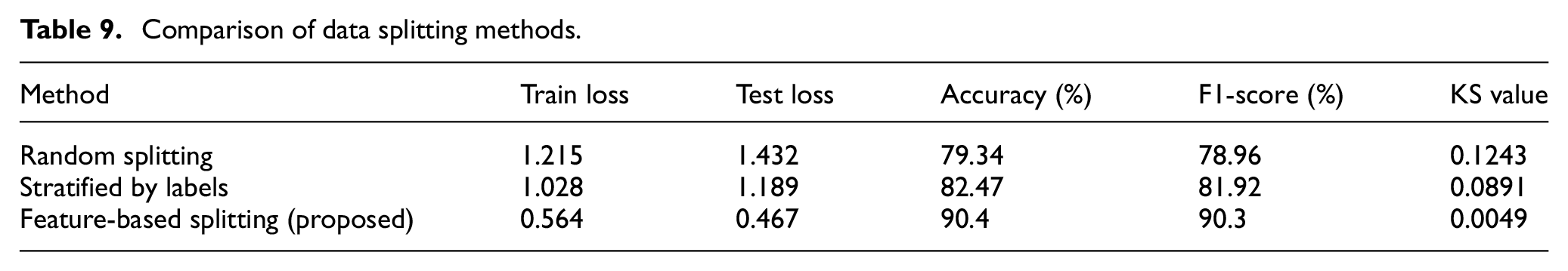

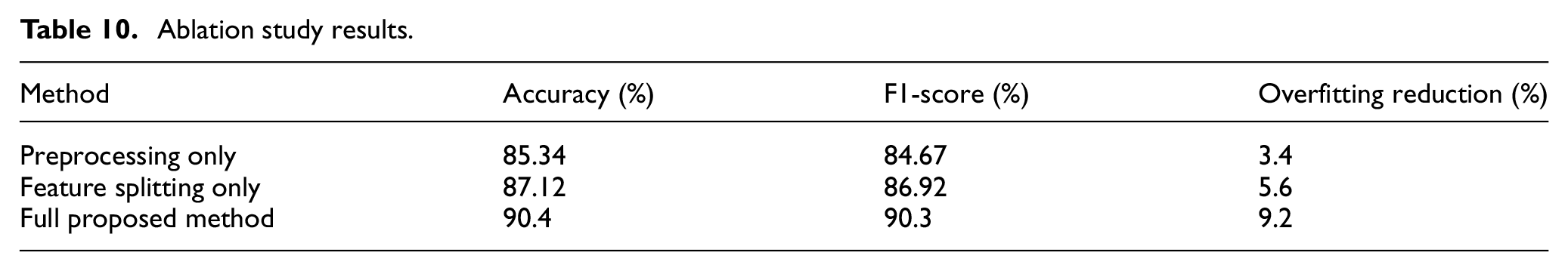

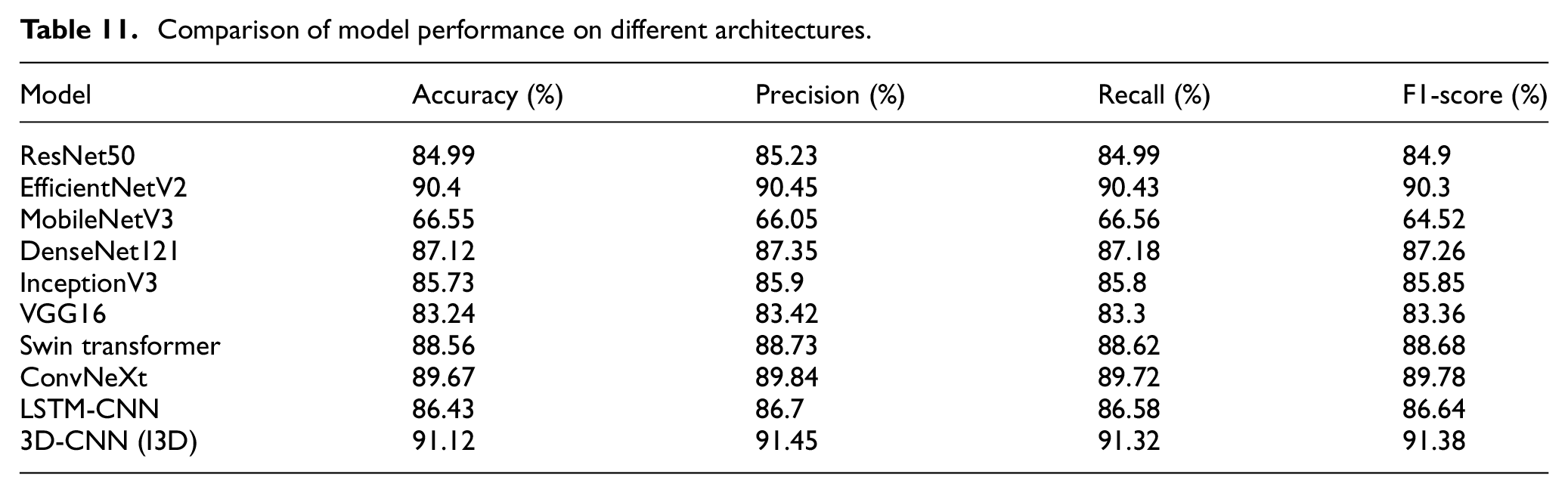

Expanded experimental validation and comparative analysis

To further validate the effectiveness of the proposed feature-based data splitting and preprocessing strategy (Table 9), we extended our experimental setup to include additional deep learning models, alternative preprocessing methods, and comprehensive ablation studies (Table 10). Specifically, we expanded our evaluation beyond ResNet50, EfficientNetV2, and MobileNetV3 to a diverse set of architectures, including DenseNet121, InceptionV3, VGG16, Swin Transformer, ConvNeXt, an LSTM-CNN hybrid, and a 3D-CNN (I3D model) (Table 11). This broader analysis assesses the robustness of our method across CNN-based, transformer-based, and hybrid deep learning paradigms.

Comparison of data splitting methods.

Ablation study results.

Comparison of model performance on different architectures.

Additionally, we conducted comparative tests to assess the impact of our proposed preprocessing techniques by benchmarking them against other widely used methods, including histogram equalization, CLAHE, Gaussian noise injection, and edge detection (Sobel and Canny filters). We also evaluated our feature-based data splitting approach against random data splitting and label-based stratified splitting, showing that ensuring feature homogeneity across training and testing sets leads to better model generalization.

To further isolate the contributions of individual components, we performed an ablation study with three scenarios: (1) preprocessing alone without feature-based data splitting, (2) feature-based splitting alone without preprocessing, and (3) the full proposed method. These experiments confirmed that combining preprocessing and feature-based splitting consistently outperforms either component alone, highlighting their complementary roles in improving classification accuracy.

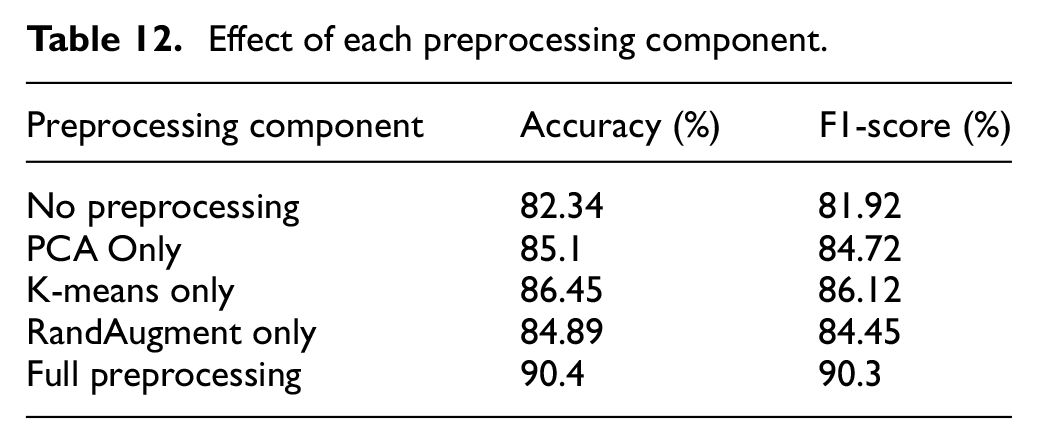

Finally, we analyzed the impact of individual preprocessing steps, testing the effectiveness of PCA for dimensionality reduction, K-means clustering for feature grouping, and RandAugment for data augmentation (Table 12). By systematically evaluating each component, we verified that the combination of these techniques plays a crucial role in reducing overfitting and enhancing model performance. These additional experiments reinforce the validity of our approach and support its applicability to various deep learning architectures and real-world human action classification tasks.

Effect of each preprocessing component.

Limitations and potential failure cases

While the proposed feature-based data splitting and preprocessing approach improves model generalization and classification accuracy, certain limitations and potential failure cases should be considered. First, the effectiveness of feature-based data splitting depends on the quality of extracted features. If the extracted features fail to capture critical action-specific information, the clustering process may lead to suboptimal splits, impacting model performance. Additionally, in datasets where action classes exhibit high similarity (e.g., walking vs jogging), K-means clustering may struggle to effectively differentiate them, resulting in feature leakage across training and testing sets.

Another challenge arises in PCA-based dimensionality reduction, where selecting an optimal number of principal components is crucial. Reducing dimensions too aggressively may discard important action-relevant information, affecting classification accuracy. Moreover, for high-dimensional datasets, PCA increases computational complexity, making real-time processing more resource-intensive.

Failure cases often occur in scenarios with occlusions, extreme viewpoint variations, and low-resolution video data, where the extracted features become less distinguishable. Additionally, in datasets with high intra-class variance and low inter-class variance, models may misclassify similar actions due to overlapping feature spaces. Actions involving multiple interacting subjects (e.g., group activities and sports interactions) present further challenges, as the current method does not explicitly incorporate spatiotemporal relationships.

To address these limitations, future research should explore adaptive feature selection techniques to refine clustering, hybrid approaches combining feature-based and label-based splitting, and self-supervised learning methods to improve model robustness against occlusions and variations in action representation. Integrating attention-based mechanisms could further enhance classification performance in complex action recognition scenarios. By acknowledging these constraints, this study provides a clearer framework for practitioners and highlights promising directions for future improvements.

Conclusion and future work

The methods proposed in this study have successfully met the research objectives by improving model performance through a well-structured data preprocessing and feature-based data splitting strategy. Our experiments demonstrate that fine-tuning the ResNet50 model achieved an accuracy of 82.88%, approaching the performance of more complex models without requiring extensive computational resources. This underscores the effectiveness of the proposed feature-based data splitting method in enhancing model generalization while maintaining efficiency, making it particularly suitable for real-world applications where resource constraints are a key consideration.

Unlike traditional stratified data splitting by labels, which can be time-consuming and computationally demanding, our approach only needs to be applied once during the preprocessing stage, reducing the burden on subsequent model training and deployment. Furthermore, this method does not interfere with other training optimization techniques, ensuring compatibility with a wide range of deep learning architectures and datasets. Importantly, the success of this approach relies on high-quality feature extraction, reinforcing the need to utilize well-trained models on domain-relevant datasets to maximize learning efficiency and classification accuracy.

Future research should prioritize advancing data preprocessing and feature-based data splitting techniques rather than solely focusing on increasing model complexity. Specifically, future studies can explore adaptive data augmentation, improved clustering algorithms beyond K-means (e.g., DBSCAN and hierarchical clustering), and hybrid splitting methods that balance both label distribution and feature consistency. Additionally, expanding this method to larger and more diverse datasets will further validate its scalability across complex action classification tasks. Integrating semi-supervised or self-supervised learning approaches could also enhance model robustness, reducing dependency on large annotated datasets.

By shifting the focus toward refining data preparation strategies, we can achieve significant performance improvements without exponentially increasing computational costs, ensuring that deep learning models remain both efficient and effective for human action classification and broader computer vision applications.

Footnotes

Author contributions

NTD conceptualization, software, and writing – review and editing. HDT resources, formal analysis, investigation, and data curation. VTTL conceptualization, formal analysis, investigation, methodology, and writing – review and editing. HCT supervision, resources, and data curation. TQN supervision, writing – review and editing, and visualization.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

All relevant data supporting the findings of this study are available from the corresponding author upon reasonable request.