Abstract

We offer a critical inquiry into the faltering entry of an anthropomorphised AI (ro)bot, an algorithm without physical or visual form, into the workplace in a media consultancy company. While living a digital life in the virtual world, the (ro)bot was given a human name. We highlight the unexpected consequences the humanisation of an early form of artificial intelligence (AI) has on the affects circulating between people and the new technology and between members of different organisational groups. We argue that anthropomorphising technologies such as AI influences the affective life of organisations and amplifies existing discontent between organisational members, complicating the introduction of the technology. Focusing on human–AI interaction, our analysis reveals a rift between managers who are excited and hopeful about the future capabilities of AI and employees who are frustrated and angry about its present shortcomings. We conclude that collective affects play a central role in contemporary technology-driven organisations in which the role people play in relation to the avalanche of AI technologies is often neglected.

Introduction

This article explores the fortunes and misfortunes of a (ro)bot that entered the workplace of the media consultancy company Wizz (a pseudonym). The technology initially presented to us by company managers as an ‘early-stage artificial intelligence-driven robot’ was given a male human name – we call him Max – by the Chief Technology Officer (CTO), his ‘father’. Other organisational members adopted this name, and Max was referred to with the pronouns ‘he’ and ‘him’ (referring to a human) and the noun ‘robot’ (referring to a thing) – a mixed approach we, too, espoused in our study. The act of anthropomorphising or humanising artificial intelligence (AI) (Bartneck et al., 2007a; Epley et al., 2007; Mori, 1970; Yam et al., 2022) became a captivating empirical phenomenon to follow, especially when we learned that Max had neither physical nor graphical form. We found that the humanised (ro)bot Max prompted affects (Ashforth and Humphrey, 2022) such as excitement, frustration and anger that became shared and collective as they circulated among organisational members (Barsade and Gibson, 2007; Manning, 2009). Max seemed to become an inanimate amplifier of existing discontent between managers and employees, influencing the affective organisational life at Wizz.

The anthropomorphised (ro)bot Max is a timely and relevant subject of inquiry. Science fiction has taught us that AI embedded in supercomputers can be given human-sounding names such as VIKI (Virtual Interactive Kinetic Intelligence) in the 2004 film I, Robot, or HAL (Heuristically programmed Algorithmic computer) in the 1968 film 2001: A Space Odyssey. We are also familiar with faceless virtual assistants, such as Amazon’s Alexa or Apple’s Siri, that mimic human voices when answering our questions. However, we observed something different – nothing quite as high-profile or threatening as futuristic, dystopian Hollywood versions of AI or as practical and service-oriented as Alexa or Siri. Max became a new type of organisational member at Wizz, introduced with great enthusiasm as a ‘junior colleague’ by the management but received with scepticism and resentment by the employees assigned to work with him.

Our research is not the first to suggest that advanced digital technologies have an impact on affective organisational life. Wilson (2011) noted that in the field of computer sciences, relationships between artificiality and affectivity have been studied for some time, and affective constraints are incorporated into the study of artificial systems. Affects such as fear and disgust have been found to be triggered among humans when interacting with robots appearing ‘too’ human (Mori, 1970). In organisation and management studies, recent research has explored how affective intensification triggered by digitalisation is instating itself not only in the digital spaces of organisational life but also in society at large (Just, 2019), how affects have a profound role in the circulation of technologies and technologies in the circulation of affects (Sage et al., 2020) and how transdigital innovation spaces where participants act with and through technologies can form affective circuits that stoke and direct affect (Endrissat and Islam, 2022). In our study, we take a profoundly human approach. We focus on how affects are triggered by AI technology taking the form of the (ro)bot Max, how organisational members project affects onto the AI technology and how human-to-human affects are influenced by it.

Our study has wider significance because the (ro)bot Max can be seen as a prime example of a contemporary strategic technology project undertaken in organisations. Like other digital technologies, it was launched with high expectations, but organisational life turned out to be more complicated than technology enthusiasts had anticipated. Our study exemplifies a persistent rift between management and employees as a carefully crafted strategic plan (detailed in a PowerPoint presentation) clashes with mundane operational challenges in the organisation. What makes the story of Max the (ro)bot revealing is the peculiar humanising of a technological entity without a physical form and the collective affects this humanising generated, fuelling dissatisfaction and conflict.

With these thoughts, we join the theoretical discussion on human–AI interaction in organisation and management studies. We rely on interpretivist epistemology (Alvesson and Sköldberg, 2017; Einola and Alvesson, 2019; Stake, 2010) to study the local effects of a strategic AI automation project in a technology-savvy company in an industry that has adjusted to the challenges of digitalisation for decades. Through observation of organisational life at Wizz and interviews with managers and employees, we explore through an affect lens how people experienced the introduction of Max. The following research questions guided our inquiry: (1) how does the entry of an early-stage AI technology change affective organisational life? and (2) why do these changes occur?

Our study contributes to research on human–AI interaction by elucidating how people can extend their collective affects to include advanced technology when engaging with anthropomorphised virtual AI in the workplace. Expectations of human (or human-like) behaviour trigger different collective affects that influence both human–AI and human–human interaction and, ultimately, the success and cost of these resource-absorbing technology projects.

The remainder of the article is structured as follows. We first present our subject of inquiry (anthropomorphising technology) and theoretical lens (affect). We then introduce our empirical material and analysis, offer our key findings, discuss our contributions and suggest ideas for future research.

Theoretical framework

Cutting-edge AI technologies tend to be legitimised as objects of desire that contain a radical promise of disruption (Vesa and Tienari, 2022). They challenge and change organisational practices (Bailey et al., 2022) and influence work design and jobs (Wang et al., 2020). Existing research conceptualises emerging technologies as ‘a set of evolving relations’ (Wang et al., 2020) and points to the ‘co-constitutive relation’ between technology and organising (Faraj and Pachidi, 2021), thus suggesting a relational approach to studying them (Bailey et al., 2022). There is a nascent literature in organisation and management studies focusing on what happens when AI technologies are introduced and coordinated with the work humans do (Sergeeva et al., 2020) and when humans are required to acquire new skills in working with them (Barrett et al., 2012). New digital technologies challenge professional roles, considerations of status and forms of collaboration in organisations (Sergeeva et al., 2020). However, how AI applications co-exist with people is still a relatively poorly understood emerging phenomenon (Einola and Khoreva, 2023). We suggest that studying how digital technologies are anthropomorphised offers one way forward in understanding human–AI interaction.

Anthropomorphism and human–AI interaction

Originating from the Greek anthropos (meaning ‘human’) and morphe (‘shape’ or ‘form’), anthropomorphism is more than attributing life to the non-living (Epley et al., 2007) or giving superficial characteristics such as a human-like face or body to a non-human agent (Waytz et al., 2014). For Latour (1992, p. 235), anthropos and morphos together mean either that ‘which has human shape’ or that ‘which gives shape to humans’. The notion of reciprocity implies that AI is not only made by people but also shapes what being human is or can be (Harari, 2016; Latour, 1992). What human–AI interaction becomes is a process that has only begun but in which human agency and organisations have a key role to play.

Anthropomorphism is understood as a process of inductive inference whereby people attribute to non-humans distinctively human characteristics such as the capacity for rational thought and conscious feeling (Gray et al., 2007). It implies imbuing the real or imagined behaviour of non-human agents with human-like properties, characteristics, motivations, intentions and emotions (Bartneck et al., 2007a; Epley et al., 2007; Mori, 1970). Matters related to a mind and its capability to perceive, such as conscious experience, metacognition and intentionality (Gray et al., 2007), as well as human-like emotional states and behavioural characteristics (Epley et al., 2007) are central to understanding the phenomenon.

The tendency to anthropomorphise technologies makes it easier to convince us humans of their intelligence (Proudfoot, 2011). Watson (2019) suggests that the notion of artificial intelligence ‘dares’ us to compare our human modes of reasoning with the behaviour of algorithms. However, there is an often forgotten social and political side to the tendency to anthropomorphise technologies. We speak of AI that thinks, learns, takes our jobs, is smart and so on – a vocabulary that humanises technology and implicitly assumes that technology has a will of its own. For instance, Yam et al. (2022), in their study based on psychological experiments, found that an anthropomorphised robot supervisor delivering negative feedback was more likely than a non-anthropomorphised robot to be perceived as possessing agency. In another study also based on experiments, people were found to evaluate service robots more positively when they were anthropomorphised and seemed more human-like, that is, considered capable of both agency (the ability to think) and experience (the ability to feel) (Yam et al., 2021). However, robots, bots and algorithms have no volition (at least for now) and are not ‘motivated’ to take humans’ jobs (Fleming, 2019). They are only as smart or stupid as people design them to be and depend on what the people who co-exist with them decide to use them for. Assigning human qualities such as motivation to digital technologies with or without physical form seems misguided, and the organisational impact of this peculiar humanisation is still very much an unexplored territory.

Research has often focused on human perceptions based on pictures of robots (Bartneck et al., 2007a) or real-life moving robots (Bartneck et al., 2007b). Chun and Knight (2020) found that employees’ mental models of industrial robots they worked with, and their ways of anthropomorphising these robots, demonstrated a form of ‘human–machine sociability’. According to Proudfoot (2011), while situations and contexts differ, robots can only exercise their artificial agency in a socially constructed context where people deliberately outsource specific tasks to them. It is always people who choose whether to abdicate this authority and empower a piece of technology to intervene on our behalf (see also Vesa and Tienari, 2022).

Few studies in organisation and management studies account for anthropomorphising forms of technology without a physical presence, like virtual assistants, bots or algorithms with learning modules that are human-like in their behaviour but do not have any material presence or graphic representation (Sheehan et al., 2020). Yet, these forms of new technology are increasingly common in knowledge-intensive businesses such as media consultancy where they are moulded by organisational relations and interaction (Fleming, 2019). Here, we enter a practical and symbolic space that is not only about people using technologies as tools but also people relating to and interacting with technologies and projecting their imaginings on them. This creates a new type of co-existence where the relations and boundaries between people and technologies may become blurred as both influence each other (Bailey et al., 2022; Einola and Khoreva, 2023; Faraj and Pachidi, 2021).

Collective affects and technology

Building on the above discussion, we adopt an affect perspective to understand what happens when anthropomorphised AI technologies enter the workplace. We turn our attention to affect to explore how people collectively feel about technology and how relations between people and technologies unfold. Affect is widely discussed in organisation and management studies (e.g. Ashforth and Humphrey, 2022; Barsade and Gibson, 2007; Endrissat and Islam, 2022; Fotaki et al., 2017; Sage et al., 2020; Thompson and Willmott, 2016) as well as in, for example, critical media studies (e.g. Dean, 2015; Just, 2019) and gender studies (e.g. Ahmed, 2010; Pullen et al., 2017). Affect is an umbrella term encompassing a range of feelings that individuals experience and share, including feeling states (i.e. emotions and moods) and feeling traits (i.e. dispositional affects) (Barsade and Gibson, 2007).

Affect can be understood as the outcome of encounters between entities and how entities are affected by these encounters (Deleuze, 1988). It is the capacity to affect and be affected (Thrift, 2004) and the name we give to vital forces ‘beyond only emotion – that can serve to drive us toward movement’ (Seigworth and Gregg, 2010: 1). When we are affected by entities or objects such as technologies, we evaluate them, and our bodies express these evaluations (Ahmed, 2010). It is commonly argued that affect is pre- and trans-personal, or relational (Manning, 2009), moving between bodies and configuring shared and collective experiences (Endrissat and Islam, 2022; Thrift, 2008). While affect is elusive and difficult to study (Ashforth and Humphrey, 2022; Endrissat and Islam, 2022; Fotaki et al., 2017; Keevers and Sykes, 2016), it is highlighted as a mobilising power and key driver of collective action (Endrissat and Islam, 2022; Karppi et al., 2016; Resch et al., 2021). Affects do not rest within individuals but circulate, enabling ‘collective’ affects (Barsade and Gibson, 2007; Manning, 2009). Emotional contagion is a primary mechanism through which affects are shared and become social and collective. These encompass human and non-human agents such as technologies (Ash, 2015; Endrissat and Islam, 2022). Affects help bring ‘intensities and forces’ of organisational life (Beyes and Steyaert, 2012) in the ‘late capitalist system’ (Massumi, 2002) into being. As such, affects in relations and interactions between people and technologies can help transform organisations (e.g. Beyes and De Cock, 2017; Fotaki et al., 2017).

The observation that affects have a strong relational component becomes particularly interesting when we extend this relationality to include not only anthropomorphised physical objects such as robots but also virtual technologies such as algorithms and bots. Because of the ongoing rapid expansion of advanced digital technologies in and across workplaces, we suggest it is important to explore the changes they trigger in the affective lives of organisations – a phenomenon that is thus far not well understood in organisation and management studies. Following examples from studies on technologies and organising (e.g. Bailey et al., 2022; Faraj and Pachidi, 2021) and affect (e.g. Manning, 2009), we seek to deepen understanding of how anthropomorphised early-stage forms of AI may influence collective affects in a company that relies on digital technologies to survive and succeed in conditions of intensifying competition.

According to Leyer and Schneider (2021), AI-enabled software is a technological entity with decision competencies that represents a new form of agent in organisations. However, their view is a managerial one, because the agency they propose is reserved for managerial work in that ‘when managers have the option to delegate a decision to AI-enabled software, the software acts as an agent on the managers’ behalf’ (2021: 713). To get closer to organisational life as its members live it, we focused on managers, employees and the humanised (ro)bot Max. We studied people with and without managerial and technology development duties to better understand how advanced AI technologies are received and what type of shared and collective affects they trigger among those whose cooperation is needed to make their implementation successful in the organisation.

Research design

Study context

Our study was conducted in a media consultancy we call Wizz (a pseudonym), a subsidiary of a multinational corporation (MNC) located in a Nordic country in Europe. A media consultancy is an intermediary between consumers of products and services and the companies that sell these. Media consultancies design, execute and measure the efficiency of media campaigns in both traditional and digital media and work with other companies, such as advertising agencies, that provide the content for these campaigns. As a subsidiary of an MNC, Wizz enjoys relative autonomy in conducting its business and is acknowledged for pioneering digital services. At the time of our empirical work in 2019–2020, it had some 170 employees and 150 corporate customers. Work at Wizz is team-based and driven by customer projects. Each employee in a customer-facing role is typically assigned to between two and six projects at any given time. Like its competitors, Wizz balances between working with traditional media (television, radio, print and billboards) and digital media (internet-based applications such as Google, Facebook, Instagram and TikTok), stretching its resources and capabilities because of the constant evolution of the media scene and advances in technologies in both media types.

Through our personal contacts, we were given the opportunity to get inside Wizz and to study its people and digital tools. The Chief Executive Officer (CEO) is a high-profile business executive who is well known in the media consultancy business, and his technology partnerships and visions for the future of AI are frequently portrayed in business media. Because of our interest in advanced digital technology implementations in organisations, we had followed Wizz (and its CEO) for some time before targeting the company for our research project. We learned in our first meeting with the CEO that business challenges leading to a shrinking bottom line had pushed Wizz management to turn to AI technologies to seek alternative business models. A CTO had been hired for this purpose. In time, he became one of our key informants. At the time of our research, one of his biggest challenges was what he considered a slow pace of adoption of a new AI application by employees in the traditional media department, who, in the words of both the CTO and CEO, were resisting change.

We carried out an ethnographically inspired empirical study guided by an interpretivist epistemology, seeking to understand ‘how things work’ at Wizz (Alvesson and Sköldberg, 2017; Einola and Alvesson, 2019; Stake, 2010). Our study was conducted through observation on the company premises and semi-structured interviews with managers and employees. Our research unfolded as an iterative process where we moved back and forth between empirical work and theory development. We adjusted our research design during the process to better capture the emerging insights. As our understanding of the company, its people, the industry dynamics and the business grew, we moved from an initial focus on ‘employee resistance to new technology’ to ‘employee dislike of AI’ and then to ‘organisational affects’.

While managers often talked about employee resistance to technologies and reluctance to change to explain the slow progress of AI implementation at Wizz, after talking with employees and studying the history of the media consultancy business and its journey of digitalisation, we became sceptical of this explanation. Many employees in the traditional media department where Max the (ro)bot was first introduced had long employment histories in the industry. They told us about the various digital tools they used and the many disrupted technology projects they had experienced at Wizz. When we confronted them with questions that subtly explored their reluctance or difficulties in learning to use new technologies, we received frustrated and angry responses. ‘I know that’s what the boss probably thinks, but really, someone who cannot use technology tools and who cannot cope with change would never have survived in this industry for this long’ was a typical response.

Our observations pointed to the importance of affects as part of the puzzle of why AI implementation at Wizz was slow. They enabled us to see the managerial version of the ‘truth’ in a different light. Employees seemed to have no specific likes or dislikes about any technologies. They longed for more stability and clarity about how to use their changing technology tools. They also wished for more resources to help detect and report problems with new technology solutions such as Max, and expressed a need for a single point of contact who was knowledgeable about AI and their work. At the time of our study, this role did not exist.

Two members of our research team, including a person with experience in managing corporate technology projects, spent time at the company premises to become more familiar with its people and observe how they behaved and felt. Working like this enabled us to interact with each other and key informants and cross-check observations across multiple people. We were able to acquire additional information, sharpen our interpretations and ensure the trustworthiness of our emerging understandings (Lincoln and Guba, 1985; Schaefer and Alvesson, 2020). An example of an important topic we returned to multiple times was the complex technology the company used to build its AI applications (including ‘Max’) and its role in the business of Wizz.

The technology roadmap and Max the (ro)bot

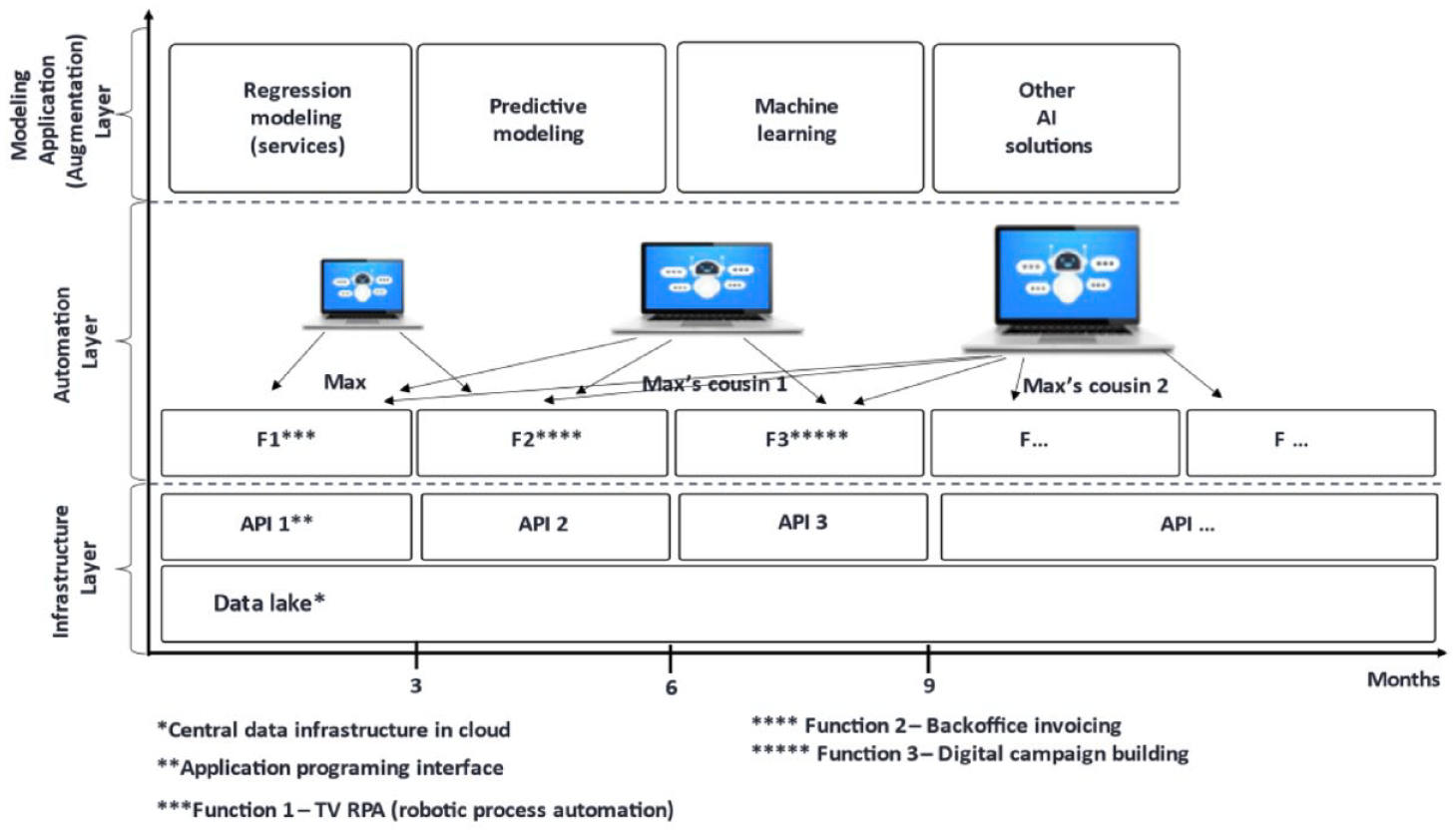

We learned early on that a detailed, long-term technology roadmap was central to the intended strategic transformation of Wizz. The CEO and CTO referred to it repeatedly and frequently framed their discussion about the company’s future around it (see Figure 1 for our simplified version). We understood from this roadmap that among other new technologies and tools that were introduced, the company’s first AI-driven rule-based software (ro)bot Max was to be in a key position.

Figure 1. Max as part of the technology roadmap (our adaptation of the Wizz roadmap).

Like all other organisational members, the (ro)bot was given a human name (we changed it to Max for confidentiality reasons), although he lived his digital life in the virtual world with no physical appearance – alone for now. Max obeyed a code written by people and based on rules called algorithms to execute pre-defined tasks and reach pre-set goals from which there was no deviation. Max was designed to emulate the work of people when operating in the Windows desktop environment. His role was not to be as smart as or smarter than his human colleagues but to take over one task people did: booking advertisement space. In expert language, Max’s job was mainly in the domain of basic RPA (robotic process automation). He was programmed to take care of booking processes for advertising space on television channels and to send an email to a (human) media planner to confirm when this task was completed. If a problem occurred, an error code was sent. When we asked employees to familiarise us with how they worked, we observed early on that Max made errors, causing frustration among employees over many similar incidents during the workday. At the time of our fieldwork, the code Max obeyed was not perfect, and he was repeatedly unable to complete his work despite incremental improvements by coders.

Max was also assigned other tasks. Other versions of him worked with back-office processes related to invoicing and time reporting. These tasks were performed after office hours. Unlike in the booking process, no interaction or collaboration with people was required for these tasks. Overall, the tasks and goals of Max were rather rudimentary. We gradually realised that he was not an advanced form of AI (yet) but under constant development.

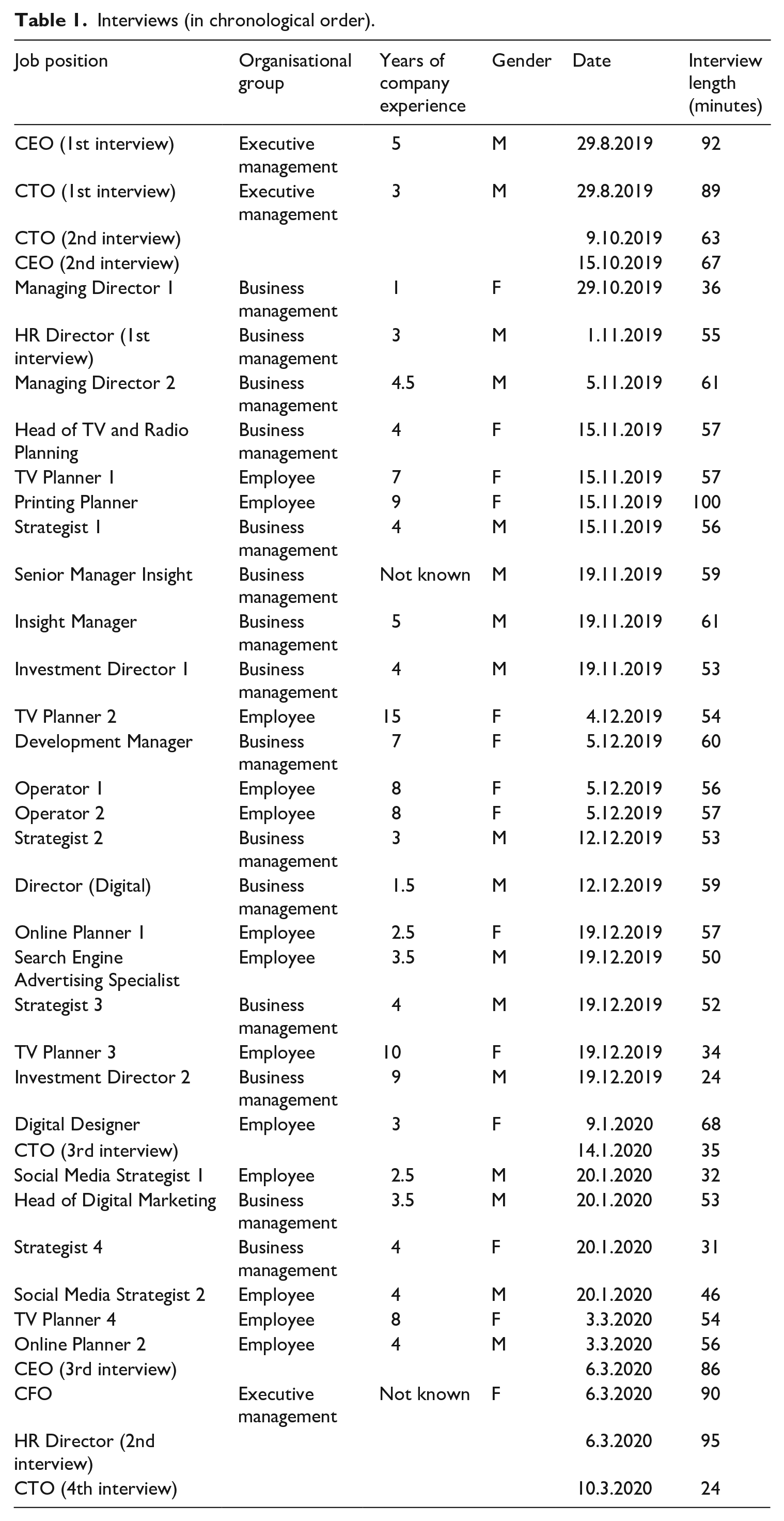

Observation and interviews

Our research was conducted through observation on the Wizz premises and 37 semi-structured interviews recorded and transcribed verbatim (see Table 1). Numerous field observations were made that guided our research process towards affects. Informal encounters and conversations with different organisational members were frequent. The interviews were conducted face to face and lasted between 24 and 100 minutes, amounting to approximately 36 hours in total. We also conducted off-site data collection, mainly targeting the management, both before and after the fieldwork, by email, chat and telephone, and we followed Wizz in the national and international media. Our research team spent a total of approximately 150 hours at Wizz over a period of 14 months (including six months of more intense fieldwork). Two experienced researchers worked in tandem. In this way, we could take notes of what we heard and saw, including emotional responses and body language, and contrast each other’s interpretations in real time to give meaning to what we observed.

Table 1. Interviews (in chronological order).

Our onsite fieldwork began by interviewing the CEO and the CTO. During these interviews, we discovered that Max was about to enter the workplace. The ‘implementation’ began shortly afterwards. After these initial interviews, we conducted 35 more with managers and employees, focusing mainly on new technologies (including Max) and the changes these new technologies brought to the managers’ and employees’ work. We began our interviews with those organisational members who we noticed were most willing to formulate their thoughts and interested in talking to us. We had many meetings and conversations with some informants, but with others, only a short and more superficial or focused encounter was possible. We were satisfied when we began to detect patterns and repetition in our interviewees’ talk and realised that we had understood a topic that seemed important.

We adapted our questions and our interviewing style to each interviewee to get close to them and to show that we were interested in how they made sense of the entry of Max into the workplace. We asked questions (often repeatedly and sometimes on many occasions to ensure that our understanding was in line with what the interviewees meant) about the nature, need, scope, purpose, benefits, drawbacks and doubts regarding the newly implemented AI technology and Max in particular. Our interviews were accompanied by numerous informal meetings in the company cafeteria and other spaces, such as the company courtyard, during which the same topics were discussed in a relaxed and friendly atmosphere. Gradually, how Max was anthropomorphised and how this technology generated sometimes heated comments and discussions at Wizz became a focal point in our study.

Constantly asking ‘why?’ and ‘why not?’, questioning what we saw and heard, and trying to get beyond simplistic understandings of interview accounts and observations helped us develop a deeper understanding of how human–AI interaction worked at Wizz (Alvesson and Sköldberg, 2017; Stake, 2010). The two members of our research team took notes during and after each interview and discussed them, often on the company premises, where further observations and contacts with interviewees were made. With each visit to Wizz, our approach became more fine-tuned as we started to understand the role that the anthropomorphised (ro)bot played as a new kind of organisational member and the collective affects it evoked.

In time, we started to consider Max not only as an algorithm and a piece of (malfunctioning) software that managers and others anthropomorphised and that triggered different affects in different organisational groups but also as an amplifier of pre-existing collective (managerial and employee) affects that it mediated and further shaped through its artificial agency. We began to understand how collective affects played an important role in human–(ro)bot interaction at Wizz and how this slowed down the introduction of AI technology. We first found it strange that a (ro)bot with no body was referred to by a human name, presented as a ‘colleague’ (by the CTO) and ‘team member’ (by the Insight Manager), and described as ‘stupid’ (by TV Planner 2), ‘a beginner’ (by TV Planner 3), and ‘in need of supervision’ (by Operator 1). All these are essentially human qualities. Associating a non-human object or entity with human qualities created expectations and aroused different affects, depending on the personal experiences and role this technology played in people’s organisational lives. Affect thus became a theoretical lens through which we could analyse the anthropomorphised Max’s fortunes and misfortunes at Wizz.

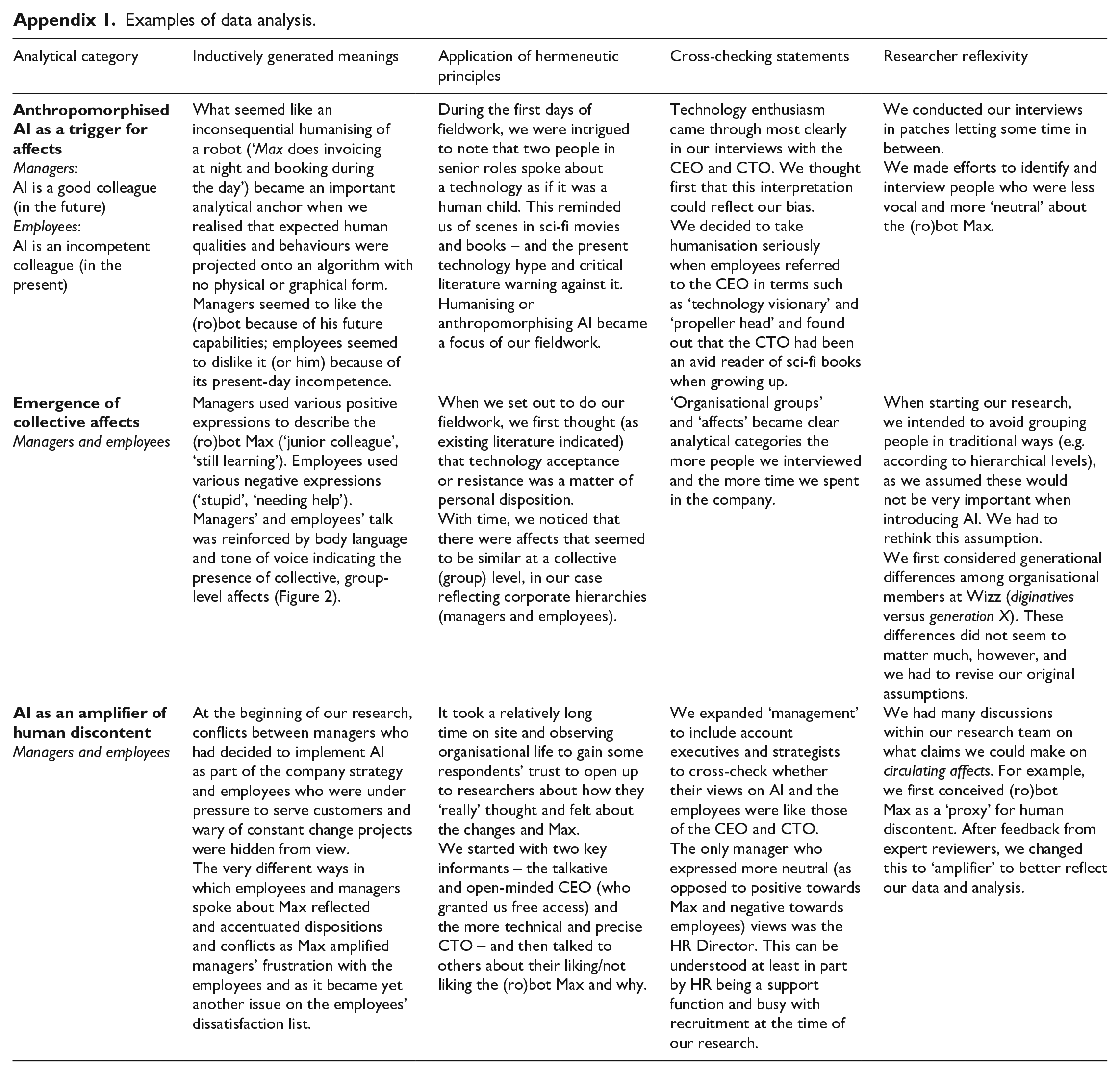

Analysis

Our study approach followed the interpretive tradition of case studies that seek a deep understanding of human experience (Stake, 2010). This offers an alternative to the increasing tendency for qualitative researchers in organisation and management studies to ‘systematically’ code data. Coding as a procedure is not appropriate for all types of qualitative empirical materials or for all traditions of qualitative inquiry; it can be seen as a static, reductionist and mechanistic process that detaches pieces of data from broader contextual understandings (Alvesson and Sköldberg, 2017; Bansal and Corley, 2011). In line with our interpretivist epistemology, we explored how organisational members (managers and employees) behaved and felt and how they ascribed meaning to their behaviour and feelings and those of others, including Max. We relied on reasoning and reflection (Alvesson and Sköldberg, 2017; Einola and Alvesson, 2019) grounded in our close engagement with Wizz.

Two broad criteria for assessing the quality of our research apply: the coherence of our argumentation and the transparency of the process through which we arrived at the understandings we present as our key findings (Harley and Cornelissen, 2020). We do not ‘hide’ (Kump, 2022) behind a rigid and templated approach that limits the potential for revealing surprises and unexpected findings. Our analysis of the empirical materials relies on: (1) inductively generated meanings, (2) understandings of these meanings through the use of hermeneutic principles, (3) cross-checking the reliability of interview statements through further interviews and observations and (4) reflecting on our initial understandings and observations and interviewees’ statements (Einola and Alvesson, 2019; Gjerde and Alvesson, 2020).

Notes on our thoughts and observations during and after company visits and interviews were a starting point for our discussions immediately after each interview and visit. The purposes of these discussions were to share our fresh impressions of what we had seen and heard and identify areas about which we needed to obtain more information in subsequent visits. One such area was how stable the observed anthropomorphising was over time and how common the negative collective affects were among the employees, in contrast to the positive affects so clearly expressed by the CEO, CTO and other managers. We relied on the CTO as an anchoring point – a key informant with whom we talked multiple times to gauge his views and understand the technology roadmap for which he was responsible (see Figure 1).

We engaged in close hermeneutical reading and re-reading of our research material as it accumulated, gradually developing a feeling for the parts (i.e. interviewee statements as people made sense of their own and others’ experiences) and the whole (i.e. the combination of individual statements into ‘organisational groups’ such as managers and employees in traditional media, which emerged as a meaningful analytical procedure). We tried constantly to make sense of specific passages in the data that seemed to represent potentially important parts of the whole while conveying important clues about something specific, deeper and less obvious (see Appendix 1 for examples of how our data analysis was conducted).

To understand what kind of work Max was supposed to do, we asked employees in traditional media to describe their work process and what knowledge was needed when buying media slots. The employees repeatedly described the task as not straightforward or suitable for simple automation, in contrast to what the managers implied. The need for tacit knowledge, personal contacts and human interaction with the media companies selling advertising slots was more the rule than the exception. Many employees expressed a desire to educate their management on how to deal with the change better. The employees’ main frustrations were the lack of time available to get used to Max, lack of managerial consideration of the time employees needed to solve and report Max’s errors so that his code could be improved and employees’ voices and competence being downplayed – not a dislike of Max or technology in general. We learned that while employees at Wizz were accustomed to adopting new technologies in their work, previous technology projects had not proceeded as planned, and many employees seemed to have become suspicious about the introduction of such projects to the workplace.

To capture affects at Wizz, we followed the example of Maitlis and Ozcelik (2004). Two of our research team members first made notes separately each time interviewees referred to how they felt, how they responded emotionally to the questions and how body language complemented their verbal reactions. These notes were compared and discussed, and recurring and collective affects were identified. While observing the behaviours of organisational members, research team members made notes of emotional reactions and how these were shared between people. We began to see an affect-driven rift between organisational groups and a growing discontent amplified by a mix of positive (managers) and negative (employees) collective affects towards Max and towards each other. We detected a variety of verbal and visual signs of circulating affects. We compiled a list of collective affects of managers and employees and re-checked our empirical materials for these.

Managers expressed excitement and hope, individually and together, in interviews (talk and body language) and in encounters on the company premises. They spoke in an upbeat way and laughed heartily when talking about Max. Over time, we came to understand better the managers’ engaged tone and their excitement when they talked about Max. The significant amount of time and dedication the busy CEO, CTO and other senior managers gave to us reflected not only their ambition to make the company thrive and their enthusiasm for the new technology Max represented but also their positive affects (e.g. hope and excitement) towards the (ro)bot Max. However, we also heard and saw managers express frustration (with nervous bodily movements and sighing) with employees whom they considered to be technology averse and unable or unwilling to welcome their new AI colleague into the workplace.

Employees, in contrast, communicated by their tones of voice and manners of expression negative collective affects (e.g. frustration and anger) towards Max and other technology change projects (past and future), as well as feelings of resentment towards management for constantly changing tools and creating messy processes that they felt made their routines more cumbersome. While talking about their engagement with Max in emotional ways, employees engaged in bodily movements such as tapping their fingers on a table, speaking rapidly, rolling their eyes and sighing. We considered these to be expressions of their shared frustration. Some employees lost their temper, expressing anger and desperation, individually and together, in interviews and encounters we observed.

Finally, because of our focus on anthropomorphising and affect, terminological ambiguity at Wizz became an integral part of our study. There are different views on what a robot, bot, virtual assistant, AI and other related technical terms such as RPA mean and what their boundaries are (on the difference between RPA and AI, for example, see Ribeiro et al., 2021). Our study uses the term ‘(ro)bot’ to follow the talk of organisational members at Wizz, none of whom were computer or robotics scientists and who simply used the term ‘robot’ for the bot, which existed only in virtual space. Max’s very lack of materiality was a key aspect guiding our inquiry. The accuracy of the definitions did not seem to matter much to organisational members and seemed irrelevant to our efforts to understand affects that circulated in the organisation and were influenced by the introduction of Max.

Findings

In this section, we elucidate how and why collective affects formed at Wizz by focusing on relations between managers, employees in traditional media and the (ro)bot Max. This is done below through four themes: (1) anthropomorphised (ro)bot Max as a trigger for affects, (2) managers’ collective affects, (3) employees’ collective affects and (4) (ro)bot Max as an amplifier for human discontent.

Anthropomorphised (ro)bot Max as a trigger for affects

Introducing Max as a new ‘colleague’ (instead of a new tool), with expectations of human-like behaviour, created a new dimension for organisational affects at Wizz. Both managers and employees indicated that the (ro)bot Max was different from other technological artefacts commonly used at modern workplaces, such as mobile phones, ordinary software and collaborative online platforms. This difference became evident in our first interviews, when the CEO and CTO attributed human qualities to Max. They presented the technology as a ‘colleague’, ‘employee’ and ‘team member’. When asked about naming the (ro)bot, a senior manager looked at us quizzically and explained: ‘Well, Max needs a name; he is one of our employees.’ Anthropomorphising, an apparently simple and inconsequential twist in organisational life, became a fertile ground for affects to emerge and circulate. Managers underlined the junior status of Max: Max is a young employee; he is learning. [. . .] He does only what he is programmed to do, so he can’t do anything else. (CTO, 2nd interview) Max is our junior colleague. He does these [booking] tasks faster. And our employees can do something else in the meantime. (Managing Director 1)

While Max was presented as ‘young’ and ‘junior’, he was clearly appreciated by managers as a new ‘team member’: Max is a team member who helps us to increase client satisfaction; he helps us to gain more clients. (Investment Director 2) We do things here as a team. [. . .] Max is definitely part of the team. (Insight Manager)

While talking with managers, we noticed that they often spoke about future prototypes of Max rather than the current version of the (ro)bot. This became clear in our communication with the CTO after our fieldwork: Max is not really an AI yet, because it is still a rule-based software robot. In the future, he can be integrated with machine learning modules, which will make him slightly more intelligent, so he might become a specific type of AI-RPA. He and other process robots are only the visible tip of an iceberg that is the cloud-based infrastructure and modelling environment that will eventually help people to utilise data and AI to make themselves much more productive and valuable for the company and the clients. (CTO, excerpt from email correspondence, 31 March 2021)

In talking about Max, managers embraced the future. The junior colleague Max (both an ‘it’ and ‘he’ in the email quoted above) was closely related to his more advanced ‘cousins’ who were supposed to enter Wizz and who would be manifestations of a ‘real’ AI, available in the future. Using somewhat derogatory language about the present-day Max (suddenly calling him ‘it’), the CTO expressed a belief in a better future waiting for Max, together with his ‘cousins’. Max was the ‘tip of the iceberg’, resting on the cloud-based architecture that was the real source of disruption (see Figure 1). He was a necessary part of a technological evolution (rather than a quick transformation, as envisioned earlier) – an embryonic version of a genuine AI solution of the future and a tangible manifestation of the powerful capabilities ‘hidden’ in the underlying technology infrastructure.

The managers’ collective affects were evident not only in their hopefulness and excitement, and the warm and passionate tone they used to talk about Max, especially the future Max, but also in their body language (showing excitement, drawing on a board enthusiastically, gesturing with their hands, bursting into laughter), the large amount of time they gave to us to talk and educate us about technologies at Wizz, and the many ways in which they attributed human qualities to this (ro)bot in their talk. This was clearly the future.

A different picture of Max emerged when we observed and talked to employees working with traditional media at Wizz. They, too, anthropomorphised Max and called him a ‘colleague’. However, they referred to the then-present version of Max as ‘stupid’, a ‘beginner’ and ‘underdeveloped’. He was described as an ‘unreliable friend’ needing help (Online Planner 1) and a ‘colleague one needs to constantly keep an eye on’ (TV Planner 4). Disappointment, frustration and annoyance were shared: Max does not understand everything [. . .] it is not so simple to integrate him into our work. Max is quite stupid, and he is just a beginner. So, if we use Max for our campaign, it will take us more time, [as] he can obtain the number of viewers but not the correct audience for the campaign. (TV Planner 2)

It became clear to us that rather than embracing the future, employees were frustrated and annoyed about Max and his shortcomings in the here and now: ‘Max helps us with booking our campaigns, but unfortunately, he does not understand everything; he is a beginner. For example, he doesn’t know our customer’s target group. [. . .] Maybe when I retire, he’ll get smarter’ (TV Planner 3). While managers talked about Max and the future in abstract ways, employees pointed to its inadequacy and concrete flaws in the present: Max is not scary, but he is not yet developed enough so that he can do everything we need him to do [long pause]. We need to supervise him. (Social Media Strategist 1) I don’t see myself worrying about Max taking over my job [laughing]. His capacities are quite limited at the moment. (Social Media Strategist 2)

Future orientation – grasping what the management saw on the horizon populated by more and better Maxes – was irrelevant or missing altogether in the employees’ talk. The employees anthropomorphising Max, calling him ‘stupid’ (TV Planner 2), could be seen as a projection of their general discontent with the management and of their own rather limited view of what their jobs could be in the future and of changes they would need to embrace to learn to work with this new ‘colleague’.

Managers’ collective affects

The shared collective affects of managers towards Max included excitement, hope, sympathy and appreciation. They acknowledged Max’s shortcomings and collectively reacted positively towards his mistakes as something understandable. Instead of present shortcomings, they focused on his better future: While Max should give them [employees] more time to think and help them to do their work better, I think some still don’t like Max. During this year, hopefully, people will see that Max and the work he does are valuable and that his work impacts everybody’s life in some way or another. (CTO, 4th interview)

The ‘junior’ status of Max was routinely highlighted as a natural explanation for all his shortcomings: Max is a junior colleague, he makes mistakes, but we see a lot of potential in him. Max cannot work alone; he needs people to boss him around. [. . .] I would say that [of] a specialist’s job, Max is able to do 30–40% of [the] work tasks, but the rest needs to be done by the specialist. (Head of Digital Marketing)

However, while the future Max with his great ‘potential’ was embraced, managers’ collective affects towards employees included frustration: They [employees] still book everything in their old way; they fill in an Excel sheet that calculates numbers, which they put in manually. [. . .] The new way requires them to use a new Excel sheet that produces an algorithm for the robot. [. . .] It’s like moving from an old car with a [manual] gear[box] to an automatic one. I’m amazed that people just won’t do this small thing and we must go through all this, time and again. (CTO, 2nd interview)

Here, the CTO offers an analogy to cars and points out that one needs to adapt to the demands and possibilities of the technology at hand. For him, to move from the old way of working to the new was akin to switching from a car with manual gears to an automatic. The user has everything to gain and nothing to lose. In this analogy, and in a curious move away from anthropomorphism, the change is to new ways of working with AI as a rational and mechanical choice. There is little or no place for sentimentalism or emotions. We observed how the CTO regularly called on individual employees to persuade them to use the new (ro)bot and disrupt their old Excel-based manual booking routines. As the CEO said: We [management] created steps, and when we try to bring the processes in, we get complaints that management is just trying to enforce new rules. But I think that we should somehow encourage employees themselves to create these processes. It’s very difficult. People tend to stick to their ways of doing things. (CEO, 2nd interview)

Here, the positive collective affects of excitement and appreciation managers expressed towards the future capabilities of Max were contrasted with negative present-day collective affects such as frustration towards what managers perceived as passive employees who were incapable of or reluctant to change and who feared taking responsibility.

Employees’ collective affects

Unlike managers, employees in traditional media had to work with the present underdeveloped version of Max. Their collective affects encompassed frustration, anger, indignation, irony and annoyance: Max could have been a great colleague, but often he can’t perform his tasks [taking a deep breath], and it is me who has to fix all his mistakes while I am supposed to perform more exciting and creative tasks. (Operator 1)

For employees, despite them calling the algorithm ‘Max’, this was yet another not-so-successful technology project that failed to deliver on its promises: The intention was that a lot of manual work is removed from what we do so that we can concentrate on designing and strategy. But it has not yet come to life in any way; the appearance of Max has not reduced the amount of manual work for us. He just keeps on sending error messages to us. (Operator 2)

Promises of smarter ways of working were not kept, and frustration mounted among employees. Affects circulated, and the rift between employees and managers widened: We [employees] are trying to train him [Max], but at the moment, we can’t trust him, we can’t rely on him, he is too immature, [and] we constantly need to supervise him, while our management believes that he is our lifesaver and we are just stubborn and afraid that he is going to take our jobs. (Printing Planner)

We noticed how some employees’ respect for, and trust in, management was being eroded: The robot is supposed to make our work easier and smoother, but he is not there yet; he simply does not have enough skills [raising eyebrows] [long pause]. And I truly don’t understand why [names of managers] keep on asking us to work with this robot. (TV Planner 2)

Our discussions with employees working with traditional media showed that their tasks followed a different pattern than the car transmissions in the CTO’s analogy. In the example below, a TV Planner picked up the anthropomorphising language used by the management to emphasise the gap between what the ‘strategic level’ in the company had promised Max would deliver and what Max could do in practice, leaving employees like herself to figure out how to find time to ‘help’ Max: Our bosses have said that now you are going to do this [start working with Max]. But for us, it is more of a surprise, and we are thinking: ‘How are we going to make this happen? Who is going to make it happen?’ [. . .] All these things must be thought through [speaking firmly] [. . .] and at the end of the day, it’s us who do all that work. (TV Planner 1)

Frustrated and sometimes angry employees felt hopeless as the total amount of work increased. According to their logic, customer service had to come first, and they needed to work billable hours to keep their jobs. Helping Max did not ‘count’ towards how they were assessed and rewarded. They witnessed how Max the (ro)bot produced more extra work than utility and was not helpful as they worked on customer projects with tight deadlines. The task to be automated (the booking process) involved frequent exceptions to the rule and unexpected process outcomes that required communication between people exchanging their tacit knowledge. The rudimentary algorithm that was Max’s ‘brain’ did not capture all this.

Negative collective affects that employees experienced towards the present-day capabilities of Max were accompanied by their scepticism towards management initiatives and a feeling of a lack of appreciation for their hard work. These reflected how employees felt about their increasing workload, what for them were unfulfilled promises made by the management, and fatigue with many previous and rarely successful technology projects. Employees felt they needed more time and appreciation: ‘You can’t rebuild the ship in one night. [. . .] We need time and a little bit of gratitude to fix all these Maxes and other shiny tools’ (Printing Planner). Employees also felt they could not see where they (and the company) were going and were frustrated by not being able to do high-quality work: It is not that we are against Max; rather, I feel that we need to be given some time and space to get used to all this [long pause]. [. . .] We also need to see where we are going. [. . .] We are overloaded [with Max entering the workplace], and the quality of our work is affected by this. (Operator 1)

Employees felt that the management pushed the unsatisfactory current version of Max too hard and too quickly. For them, problems with Max reflected what they felt was a lack of appreciation for their work by managers: ‘I think it would be wise to listen to our wishes and how we would like to work with all these digital tools. I think that would help us to stay in this building [i.e. at Wizz]’ (Operator 2).

The sense of transformative momentum and urgency the management initially communicated in launching Max was not shared by the employees, for whom the rhetoric was a matter of top–down information flow with little novelty value or relevance for their work. Employees coped with yet another change initiative without great excitement. Their concern was with delivering customer projects and registering as many billable hours as possible. However, many of them had to spend long extra hours testing Max’s functions for the coders to gradually improve his performance. For employees, working with Max required time-consuming personal efforts to improve the (ro)bot in practice, with no end in sight and little recognition for their efforts.

(Ro)bot Max as an amplifier for human discontent

At the time of our research, Max became an amplifier for both managers’ negative affects towards the employees and employees’ negative affects towards the management. These affects included resentment, frustration, desperation and hopelessness, expressed in both words and gestures. In the managers’ talk, negative collective affects of employees in traditional media towards Max were not connected with his present limitations but were seen as a reflection of employees’ reluctance and inability to change. Anthropomorphising the (ro)bot, then, was a projection of management’s general discontent with the employees and of their own idealised relation to AI, unconditional technology enthusiasm and a gaze that was preoccupied with the future horizon. This distracted managers from appreciating the daily work that needed to be done by the employees in the here and now: ‘It is so difficult for our people [employees] to get out of the feeling of being too busy to learn how to handle Max [raising eyebrows] [sigh and long pause]’ (Director, Digital). Managers attributed the slow adoption of Max in the workplace to employees not understanding what is best for them: The things the robot does are at a very basic level. These are administrative things. Max does things that people are able to do very easily, but having a robot doing these things just makes more sense. In that way, people can concentrate on other things. But somehow our people always find excuses not to work with the robot [spreads his arms]. (Investment Director 2)

With all the attention given to the AI implementation and the apparently clumsy entry of Max into the workplace, it was perhaps understandable that management blamed employees working with traditional media for the (ro)bot’s mishaps. This reflects a sense of superiority and technology enthusiasm that were evident in the way managers talked about employees, although they never criticised employees directly or had an open conflict with them. A business manager unpacked this thought bluntly in one short sentence: ‘They [employees] don’t know how to use the tech, how to work with Max, and they don’t see what the advantages of using him are.’ This viewpoint was contested by employees, among whom collective affects also rose towards Max, albeit in very different ways.

The affective term ‘junior’ used by managers did not mitigate the frustration felt by employees in traditional media whose collective affects about Max were generally negative. This highlighted the tensions that we learned were present in the organisation before the introduction of AI. Humanising the (ro)bot became problematic when employees’ active participation and collaboration were needed, while their skills, expertise and needs were disregarded in the effort to get the company’s AI transformation off the ground: Now, with the introduction of all these Maxes, our voices need to be heard. [. . .] We are knowledgeable and can recommend how to work with robots, how not to work with robots, and what needs to happen [emphasising each word separately]. (TV Planner 2)

Employees’ talk about Max reflected an apparent lack of managerial focus on people whose services were needed to work on customer projects: ‘I would say that even if we have 10 Maxes here, we must not forget that people are most important. Our management needs to remember to invest in us, our development, and our well-being’ (TV Planner 3).

The tasks of employees working with traditional media at Wizz were portrayed by managers as mechanical, low-skill and low-key. The number of employees working with traditional media had been reduced as the media and advertising industries shifted towards social media and the internet and was expected to be further reduced. However, many of these employees were still there – and many customers still intended to advertise on radio, television and billboards. Employees working with these types of media typically had considerable work experience. They had vast contact networks and personal connections that they needed to perform their jobs. Many of them also engaged in consultancy-type tasks with customers. Each employee seemed to have a slightly different job profile reflecting their personal skills, career development, and interests. They were far from being mere performers of routine tasks: I think it is great to have Max around and glorify him, but [names of managers] should remember that it is us [employees] who make all the important choices and decisions; we are the ones translating the work of Max into a human language and dealing with the customers. (TV Planner 4)

Whereas managers experienced positive collective affects about the future possibilities of the AI technology, projected this future vision onto the more modest present version of this technology and blamed employees for not embracing Max, employees forced to work with the present version experienced negative affects towards Max and the management. We witnessed how this mismatch amplified negative affects circulating between managers and employees, slowed the adoption of the anthropomorphised (ro)bot and resulted in employees’ general unwillingness to implement AI solutions in the workplace.

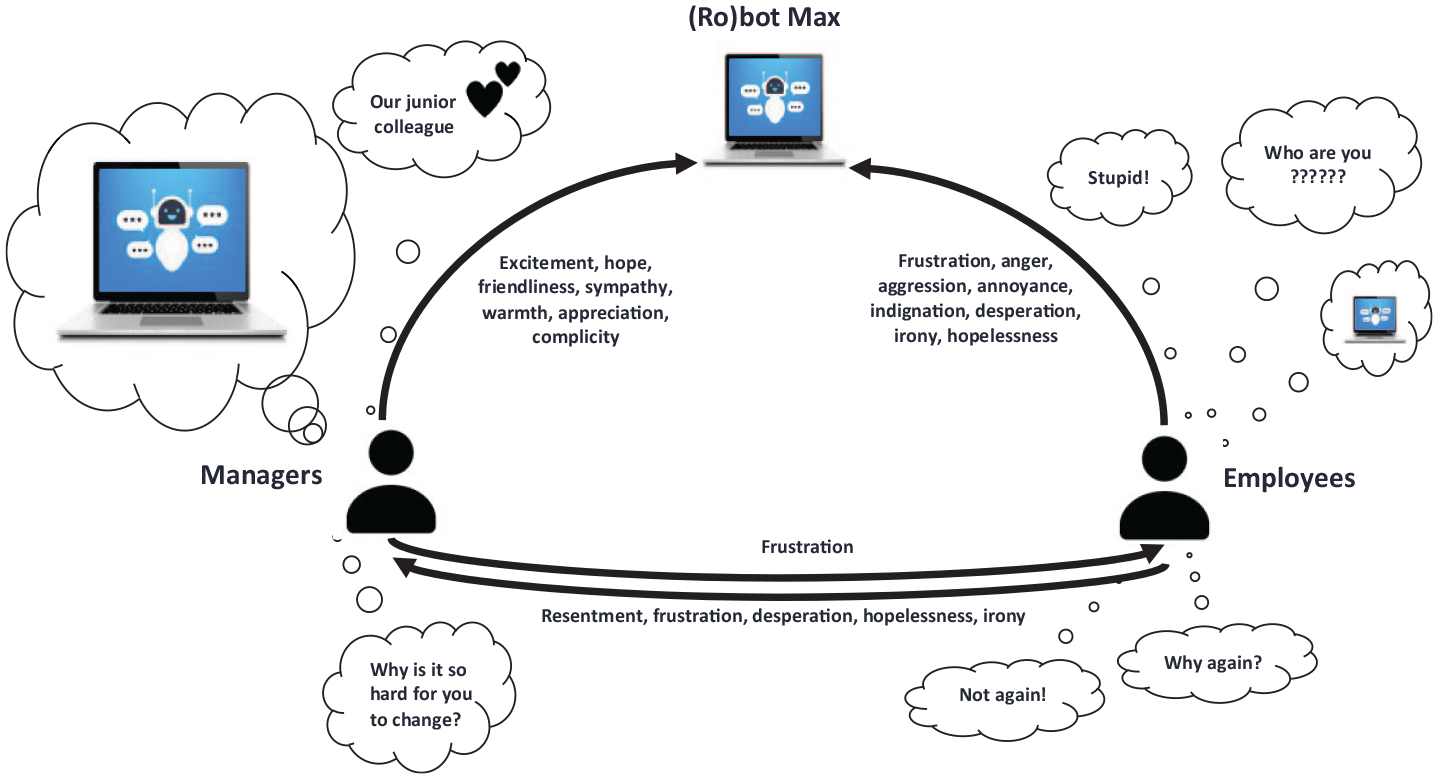

Discussion

This article offers a critical inquiry into the faltering entry of an anthropomorphised (ro)bot, an algorithm without physical or visual form, into a workplace. We argue that a circle of mixed collective affects was formed in the organisation where human affects were extended to include an artificially intelligent ‘colleague’ (see Figure 2). Our findings portray the complex relationship between affectively charged members of organisational groups and affectively inert technology (Latour, 1992) that emerges when what AI technologies do confronts the labour of humans (Sergeeva et al., 2020), highlighting how encounters between people and AI can amplify collective affects circulating in the organisation. As such, our study enhances understanding of the entry of AI in organisations where people interact with these technologies and where cooperation and agency of different organisational groups is needed (Barrett et al., 2012; Einola and Khoreva, 2023; Faraj and Pachidi, 2021; Sergeeva et al., 2020; Shestakofsky, 2017). Specifically, we offer three contributions to organisation and management research on human–AI interaction.

Figure 2. Anthropomorphised (ro)bot Max and a circle of mixed collective affects.

First, our study has detailed how anthropomorphising technological entities such as AI leads to expectations about human behaviour, thus influencing the affective life of the organisation. Although the (ro)bot we studied had neither physical form, like factory robots, nor a digitalised graphical representation, like virtual bots (Chun and Knight, 2020; Mori, 1970; Sheehan et al., 2020), ‘he’ was expected to behave like a human because he was designed to conduct tasks previously done by humans and he was talked about like a human (Glikson and Woolley, 2020; Haenlein and Kaplan, 2019; Raisch and Krakowski, 2021). This technology was an algorithm, however, only ‘living’ in virtual reality and in the imagination of organisational members. While the (ro)bot did not appear or sound human to be considered eerie or likeable (Bartneck et al., 2007a, 2007b; Mori, 1970), he was treated as a new type of organisational member. The (ro)bot influenced the affective life of the organisation by ‘his’ very presence, by influencing how organisational members behaved and felt, by intervening in existing relations between humans and by forging a new type of evolving relationality between this new technology and organisational members (Faraj and Pachidi, 2021).

While AI is not active or passive per se, it can shape what being human is or can be (Harari, 2016; Latour, 1992). Relations and interaction between managers and employees in the organisation are crucial here. How the new AI technology is framed by members of different organisational groups (in terms of its relations with people and organising) influences existing relations and amplifies collective affects. Our study has shown how AI solutions are not introduced in a vacuum. Their implementation is conditioned by affects circulating in the organisation.

Second, we have elucidated the ambiguity and messiness in human–AI interaction and considered the consequences this can have for the organisation. When the affective life of an organisation is disturbed by the clumsy entry of an anthropomorphised technological entity, this can lead to heightened mutual distrust and blame games. The ‘fit’ between humans and technologies in organisations and organising is conditioned by human relations, and it is thus a complex and evolving question (Wang et al., 2020). We found that different time horizons (unspecified future versus the here and now) influenced collective affects of organisational groups. There was a notable gap between what the management envisioned as a future (ro)bot and what the employees experienced as its present version. While the employees were slowly getting used to interacting with the (ro)bot, the management moved on to talking about his future ‘cousins’, who were only pictures among others in the technology roadmap most organisational members struggled to understand but who apparently were waiting to transform the company’s operations. Also, while managers talked in abstract terms about how the (ro)bot was supposed to help employees to organise their tasks, employees judged its applicability by the concrete changes it brought into their daily work in the here and now. This rift between the abstractness of transformative strategy, endorsed by management, and the concreteness of operations emanating from different work tasks and responsibilities, highlighted by employees, was an important cause of negative affects and discontent in the organisation, which the humanisation of AI fuelled.

At the same time, terminological ambiguity and messiness (i.e. whether the AI solution is a robot, bot or something else) were an integral part of its clumsy entry into the workplace. In an organisation whose members are not computer or robotics scientists or management scholars keen on understanding human–AI interaction (Raisch and Krakowski, 2021; Ribeiro et al., 2021), vague terms and expressions used for new technologies suggest specific meanings (and not others), and this ambiguity influences how the technology is received and what collective affects it sets into motion and amplifies. Management first talked about a ‘robot’, building on an imaginary future, and this led to frustration among employees who were concerned about the functionality of their technology tools and increasing workloads in the present. Overall, we found that different time horizons, levels of abstraction and concreteness, and terminological ambiguity influenced the collective affects of different organisational groups and hindered the implementation of AI solutions. If we assume that technologies ‘come into being and have meaning in the world’ through relations with people and organisations (Bailey et al., 2022, p. 5), our findings suggest that these relations are infused with affects and complicated by different meanings that organisational actors give to human–AI interaction.

Third, we have shown how an anthropomorphised (ro)bot entering a workplace brought to the surface frustrations that had been forming for some time. Managers and employees projected their frustration with each other (and with earlier experiences of technology projects) via and onto the (ro)bot, thus amplifying negative collective affects circulating in the organisation. These affects constituted an ambiguous yet powerful force for complicating the ongoing organisational transformation (Beyes and De Cock, 2017; Fotaki et al., 2017). The affective lens thus offers a way to capture ‘intensities and forces’ (Beyes and Steyaert, 2012) that can remain hidden when technology projects are implemented and studied. We noticed that the (ro)bot as an imaginary technological entity became a node in relations and interaction between humans. An immaterial virtual ‘colleague’ residing in the imagination of humans can be likened to a hologram produced by a projection of expected human behaviour onto a non-human technical object (Latour, 1992).

We argue that evolving sociability and affects between people and technologies and between people who are affected by these technologies are crucial for understanding human–AI interaction and co-existence (Einola and Khoreva, 2023) and contemporary technologised organisational life in general. Following the idea that AI can only exercise its (artificial) agency as a result of a socially constructed context where some tasks are deliberately outsourced to technologies (Watson, 2019), our study confirms that it is people who choose whether to abdicate their agency and authority to empower a piece of technology to intervene on their behalf (see, for example, Proudfoot, 2011; Vesa and Tienari, 2022). While organisation and management scholars widely agree that new technologies have the potential to fundamentally shape all aspects of organising, entwining humans in more intimate and complex relations (Bailey et al., 2022), relational studies tend to emphasise the technological aspects of these relations. We complement existing research by highlighting the human, and human affect, in the ‘constitutive relations’ between technologies and organising (Faraj and Pachidi, 2021).

Our study opens avenues for future research. First, further exploration is needed to see how our findings on human–AI interaction and affects play out in companies in comparable industries and at more advanced stages of AI implementation. This is needed to deepen understanding of how people relate to each other when AI and other advanced technological innovations enter their workplaces and influence the affective life of organisations. Second, while we have refrained from taking a moral or ethical stance on AI and its implications (Watson, 2019), there is mounting evidence of potential dangers associated with the naive implementation of new technologies (Davenport, 2018; Lindebaum et al., 2020). The ‘dark side’ of technologies and digitalisation, a previously neglected topic in organisation and management studies, is attracting research attention (Lanier, 2014; Townsend, 2017). We agree with Saifer and Dacin (2022), who propose that we dig deeper into the aesthetic, emotional and discursive aspects of technologies to be better equipped to explore the complexities and dangers they pose to organisational life, and we suggest that this be complemented with a focus on collective affects. Finally, our findings suggest that human–AI interaction is a messy, ambiguous, confusing, contested and affectual phenomenon that must be studied through different lenses and using multiple methods. We propose that future studies focus on enhancing understanding of different forms of co-existence between people and constantly changing anthropomorphised AI applications in different evolving workplace contexts.

Our study offers important reflections for managers in charge of implementing advanced technology projects, such as AI, in organisations. What may seem a playful or innocent humanisation of technology can have real-life consequences with far-reaching ripple effects. In this study, we have shown how anthropomorphising AI may lead to changes in affective organisational life, amplifying existing discontent between members of different organisational groups. When organisational life is increasingly technologised, management tends to focus on the future and to attribute slower-than-expected progress to employee resistance. However, employees’ frustrations are likely to be grounded in a lack of appreciation of their daily work and skills as well as in their negative experiences of earlier technology projects. In the era of artificial intelligence, managing people and their relations with technologies with care is as timely as it ever was, and working with AI must be recognised, assessed and rewarded fairly. It is a profoundly human project that requires the collaboration of all parties whose engagement is needed for these implementations to succeed.

Conclusion

In this article, we have contributed to research on human–AI interaction in organisation and management studies by exploring how a disruptive technology can change affective organisational life and by considering why these affective changes occur. In our study, organisational members anthropomorphised an early-stage AI application, a (ro)bot, with no physical or graphical form. This humanisation generated different shared affects towards the (ro)bot that amplified existing tensions between managers and employees. We conclude that collective affects play a central role in contemporary technology-driven organisations where the role people play in relation to the present avalanche of AI technologies is often neglected. The unrealistically high expectations of what a given AI solution can deliver are at least in part a result of extensive technology hype in our society and of exaggerated managerial technology enthusiasm, paired with an inadequate understanding of organisational life and a downplaying of the skills and knowledge that only people can possess to get work done.

Appendix

Examples of data analysis.

| Analytical category | Inductively generated meanings | Application of hermeneutic principles | Cross-checking statements | Researcher reflexivity |
|---|---|---|---|---|
| Managers: AI is a good colleague (in the future); Employees: AI is an incompetent colleague (in the present) | What seemed like an inconsequential humanising of a robot (‘Max does invoicing at night and booking during the day’) became an important analytical anchor when we realised that expected human qualities and behaviours were projected onto an algorithm with no physical or graphical form. Managers seemed to like the (ro)bot because of his future capabilities; employees seemed to dislike it (or him) because of its present-day incompetence. | During the first days of fieldwork, we were intrigued to note that two people in senior roles spoke about a technology as if it was a human child. This reminded us of scenes in sci-fi movies and books – and the present technology hype and critical literature warning against it. Humanising or anthropomorphising AI became a focus of our fieldwork. | Technology enthusiasm came through most clearly in our interviews with the CEO and CTO. We thought first that this interpretation could reflect our bias. We decided to take humanisation seriously when employees referred to the CEO in terms such as ‘technology visionary’ and ‘propeller head’ and found out that the CTO had been an avid reader of sci-fi books when growing up. | We conducted our interviews in patches letting some time in between. We made efforts to identify and interview people who were less vocal and more ‘neutral’ about the (ro)bot Max. |
| Managers and employees | Managers used various positive expressions to describe the (ro)bot Max (‘junior colleague’, ‘still learning’). Employees used various negative expressions (‘stupid’, ‘needing help’). Managers’ and employees’ talk was reinforced by body language and tone of voice indicating the presence of collective, group-level affects (Figure 2). | When we set out to do our fieldwork, we first thought (as existing literature indicated) that technology acceptance or resistance was a matter of personal disposition. With time, we noticed that there were affects that seemed to be similar at a collective (group) level, in our case reflecting corporate hierarchies (managers and employees). | ‘Organisational groups’ and ‘affects’ became clear analytical categories the more people we interviewed and the more time we spent in the company. | When starting our research, we intended to avoid grouping people in traditional ways (e.g. according to hierarchical levels), as we assumed these would not be very important when introducing AI. We had to rethink this assumption. We first considered generational differences among organisational members at Wizz (diginatives versus generation X). These differences did not seem to matter much, however, and we had to revise our original assumptions. |
| Managers and employees | At the beginning of our research, conflicts between managers who had decided to implement AI as part of the company strategy and employees who were under pressure to serve customers and wary of constant change projects were hidden from view. The very different ways in which employees and managers spoke about Max reflected and accentuated dispositions and conflicts as Max amplified managers’ frustration with the employees and as it became yet another issue on the employees’ dissatisfaction list. | It took a relatively long time on site and observing organisational life to gain some respondents’ trust to open up to researchers about how they ‘really’ thought and felt about the changes and Max. We started with two key informants – the talkative and open-minded CEO (who granted us free access) and the more technical and precise CTO – and then talked to others about their liking/not liking the (ro)bot Max and why. | We expanded ‘management’ to include account executives and strategists to cross-check whether their views on AI and the employees were like those of the CEO and CTO. The only manager who expressed more neutral (as opposed to positive towards Max and negative towards employees) views was the HR Director. This can be understood at least in part by HR being a support function and busy with recruitment at the time of our research. | We had many discussions within our research team on what claims we could make on circulating affects. For example, we first conceived (ro)bot Max as a ‘proxy’ for human discontent. After feedback from expert reviewers, we changed this to ‘amplifier’ to better reflect our data and analysis. |

Acknowledgements

The authors wish to thank the anonymous reviewers for engaging with our research and helping us make it better, and Professor Frank den Hond for his inspiring comments on an early version of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship and/or publication of this article: this work was supported by the Foundation for Economic Education Liikesivistysrahasto [220165, 2022] and Dr. h.c. Marcus Wallenberg’s Foundation for Research in Business Administration [2022].