Abstract

Objective

This study investigated whether individual differences in multi-tasking ability (MTa) modulate the benefit and cost of supervising imperfect automation on performance, workload, situation awareness, stress, and trust in simulated air traffic control (ATC).

Background

Automation is rarely perfectly reliable, and automation failures can have significant detrimental effects. Prior work established that MTa can modulate the benefit of perfectly reliable automation. However, it is unknown whether MTa influences the cost of supervising imperfect automation.

Methods

MTa was indexed using a latent factor from three cognitive tasks completed by 113 undergraduate students. Participants completed two ATC blocks: one without automation (manual) and one with automated assistance for accepting incoming aircraft, handing-off outgoing aircraft, and conflict detection (violations of minimum separation). Conflict detection automation was perfectly reliable. Automation highlighting aircraft needing acceptance and hand-off was imperfect, missing 30% of events (the “unreliable” trials).

Results

Lower-MTa participants obtained greater performance benefits to aircraft hand-off from reliable automation, but suffered greater costs from unreliable automation, compared to manual hand-off, relative to higher-MTa participants. Automation improved situation awareness and reduced workload, although MTa did not modulate these effects. The reduction in stress with automation, relative to manual control, was greater for lower-MTa than for higher-MTa participants. Higher-MTa participants calibrated their trust more effectively to the differing reliability of automation across ATC tasks.

Conclusion

MTa can lead to differentiated effects of imperfect automation on aircraft hand-off, perceived stress and trust.

Application

MTa may warrant consideration in personnel hiring and role selection for work contexts where automation reliability is volatile.

Introduction

Many modern workplaces require workers to strategically direct and divide their attention across multiple tasks when interacting with technology, which is cognitively demanding (Gutzwiller et al., 2019). When task demands overwhelm workers’ cognitive capacity, this can result in reduced task performance (Matthews et al., 1996) and increased accident risk in safety-critical settings (Clarke, 2012). Automation can support worker decision making by performing some or all of their tasks. Reliable automation (i.e., performing accurately and as expected) can release cognitive capacity and reduce workload relative to unaided manual performance (Kaber & Endsley, 2004; Onnasch, Wickens, et al., 2014). Automation is therefore essential for managing increasing demands in workplaces such as air traffic control (ATC), where growing traffic volumes may overwhelm human ability to maintain safety without automated assistance (Trapsilawati et al., 2017).

Reliable automation typically reduces task demands on operators, resulting in improved performance, reduced stress, and reduced mental workload (Onnasch, Wickens, et al., 2014). Perfectly reliable automation is not guaranteed due to inherent limitations of complex technology and the unpredictable nature of modern work environments. Automation can be “brittle” when operating in circumstances for which it was not designed, resulting in incorrect automated actions/advice (Smith, 2017). Automation failures can be elementary, involving missing or lost functionality, or more systemic (Jamieson & Skraaning, 2024). Alternatively, automation may work as intended by the designer but not as the operator expects (Sebok & Wickens, 2017). Regardless of the cause, imperfect automation can result in poorer performance than the operator performing the same task unaided (Strickland et al., 2023; Van Acker et al., 2018). It is therefore essential for operators to be selected, trained, and supported by the system to respond appropriately to imperfect automation. The current study examines how individual differences in cognitive ability, specifically multi-tasking, affect performance when working with imperfect automation.

Multi-Tasking Ability and Automation Supervision

In general, when task demands increase, individuals with more cognitive capacity can better maintain manual task performance (Schumacher et al., 2001). Studies have also sought to identify which facets of cognitive capacity impact operator performance when working with automation. For example, greater working memory capacity has been associated with calibrated trust and reliance on automation (Rovira et al., 2017). Further, it has been suggested that individuals who can more effectively switch attention perform better in multi-tasking environments (Chen & Barnes, 2012; Chen & Terrence, 2009; Wright & Chen, 2018) and may work more effectively with imperfect automation.

Individual multi-tasking ability (MTa) varies widely (Redick et al., 2016) and is commonly defined as “the strategic direction of attention” between multiple tasks (Gutzwiller et al., 2019, p. 197). Specifically, MTa can be defined in terms of three elements: (1) concurrently performing multiple tasks, (2) consciously shifting between tasks, and (3) performing component tasks in a short duration (Oswald et al., 2007). Concurrent task performance is challenging when tasks compete for the same limited pool of cognitive/attentional resources (e.g., Multiple Resource Theory, Wickens & Boles, 1983; Cognitive Bottleneck Theory, Pashler, 1984). Performing short duration consecutive tasks and shifting between tasks can incur costs arising from the need to reconfigure perceptual and cognitive resources (Monsell, 2003; Visser et al., 1999).

Monitoring automation typically requires operators to multi-task as they switch between attending to tasks needing manual completion and monitoring automation performance. As such, supervising automation may cognitively strain lower-MTa individuals (Balfe et al., 2015). Greenwell-Barnden et al. (2025) investigated how MTa interacted with simulated ATC performance. Perfectly reliable automation used highlighting and flashing to assist participants in identifying which incoming aircraft required acceptance and which outgoing aircraft required hand-off. While higher-MTa resulted in better performance (acceptance/hand-off speed and accuracy), participants with lower-MTa received greater benefits from the provision of automation. However, as this automation was perfectly reliable, it is unknown how these findings extend to the supervision of imperfect automation.

There are several reasons why MTa may modulate the cost of supervising imperfect automation. First, MTa may impact when (or if) operators detect automation errors (Balfe et al., 2015; Strand et al., 2014). Higher-MTa individuals may rely less on automation, and/or be less complacent in following its advice, leading to better detection of automation failures (Cak et al., 2020; Cullen et al., 2014). Performing multiple tasks concurrently may bring lower-MTa individuals closer to the limit of their capacity to monitor automation, potentially leading to slower/less accurate failure detection (McGarry et al., 2003; Rovira et al., 2002). In addition, having detected the failure, MTa may impact the speed of intervening to correct the failure (Bowden et al., 2024). Therefore, lower-MTa individuals may take longer to (a) detect errors and/or (b) take back manual control (Körber et al., 2015; Wickens et al., 2005).

In addition to impacting performance, automation affects operator experience. Psychological constructs, including situation awareness (SA), workload, stress, and trust, are frequently examined in relation to automation (Durso & Alexander, 2010; Loft et al., 2023; Onnasch, Wickens, et al., 2014) and may interact with MTa, a possibility which, to the best of our knowledge, has not been examined.

SA can be defined as the perception and comprehension of the task environment and projection of its near-future states (Endsley, 1988; Vu & Chiappe, 2015). Both reliable and imperfect automation can impair operator SA, relative to manual performance (Manzey et al., 2012; Strybel et al., 2016). While Greenwell-Barnden et al. (2025) found no effect of perfectly reliable automation on SA, and no modulating effect of MTa, imperfect automation may lead to a different result. For example, lower-MTa individuals may have poorer SA with imperfect automation if they are overwhelmed by task demands and rely more on automation, compared to higher-MTa individuals.

Workload describes the relationship between task demands and available operator mental capacity, with higher workload occurring as task demands approach human cognitive capacity (Young et al., 2015). Higher workload can lead to poorer SA (Durso & Alexander, 2010; Loft et al., 2023), although the relationship is complex, and workload and SA often dissociate (Endsley et al., 2024). Lower-MTa individuals could experience higher workload (or less workload reduction) with imperfect automation compared to manual due to greater residual demands on their capacity (Kahneman, 1973; Saqer & Parasuraman, 2014). For example, automation misses could increase workload if operators are required to increase their vigilance to compensate for the system’s failure (Wickens et al., 2015; Young et al., 2015). Thus, while reliable automation may have workload benefits for lower-MTa individuals (Greenwell-Barnden et al., 2025), the workload cost of imperfect automation may be greater. This conjecture has not been previously examined.

While lower workload may reduce stress with reliable automation, imperfect automation may increase stress (if workload is increased; Matthews & Desmond, 2002). Stress may also increase with imperfect automation requiring the performance of multiple tasks at once (Sauer et al., 2012). Increased stress may therefore contribute to poorer human performance outcomes when using imperfect automation (Parasuraman et al., 2008). This could be particularly detrimental for lower-MTa individuals who need to expend more cognitive effort when performing multiple tasks due to limited cognitive resource availability (Salvucci & Taatgen, 2011; Tombu & Jolicœur, 2003).

Trust in automation varies as a function of (a) automation reliability, such that higher trust should be placed in perfectly reliable automation relative to imperfect automation (Bliss & Dunn, 2000; Chiou & Lee, 2023), (b) individuals’ perceived ability to supervise automation, and (c) individuals’ level of manual task ability (Lee & See, 2004; Moray et al., 2000). Lower-MTa individuals may trust automation more due to lower perceived manual task ability, regardless of the reliability of automation. In contrast, higher-MTa individuals may trust automation less, even when it is reliable, because they have higher confidence in their own abilities and therefore perceive less need for assistance (Pop et al., 2015).

Examining SA, workload, stress, and trust provides insight into the mechanisms underlying MTa-related performance differences with imperfect automation. These operator state measures may serve as diagnostic indicators of whether performance effects stem from capacity limitations (workload), psychological burden (stress), or inappropriate automation reliance/monitoring patterns (trust, SA), potentially informing both theoretical understanding and practical interventions to enhance task performance.

Current Study

We investigated the extent to which imperfect automation interacted with MTa to predict task performance and other outcomes in simulated ATC. Participants completed two ATC blocks: one manual, and one with automated assistance. In the manual block, participants accepted incoming aircraft, handed-off departing aircraft, and intervened to prevent loss of separation between aircraft (conflicts). In the automation block, participants were provided with aircraft color highlighting to draw their attention to aircraft requiring acceptance or handing-off (Greenwell-Barnden et al., 2025). This can be considered a ‘lower degree’ of automation (Wickens et al., 2010) as it augments information acquisition and processing, with the participant still needing to action automated advice. Acceptance and hand-off automation were imperfect (30% miss-rate). This reliability was selected based on a quantitative literature review which suggested 70% accuracy is a “tipping point” beyond which unreliable automation becomes detrimental and performance outcomes are typically poorer than unaided (i.e., manual) performance (Wickens & Dixon, 2007). For the conflict detection task, perfectly reliable automation correctly highlighted all conflicting aircraft.

MTa was indexed via three cognitive tasks: Psychological Refractory Period (PRP; Van Selst et al., 1999), Dual Response Selection task (Dual task; Dux et al., 2009), and Attentional Blink task (AB; Chun & Potter, 1995). The Dual task and the AB task capture the central processing limits associated with multi-tasking (Carrier & Pashler, 1995; Chun & Potter, 2001) and are linked to brain regions common to multi-tasking processes, including task-switching (Dux et al., 2006). These tasks have been used to represent MTa components previously (Klapp et al., 2019; Tombu & Jolicœur, 2003) and, in one study, were found to load onto a response selection latent factor, indicating commonalities in underlying processes (Bender et al., 2018).

In the automation block, we predicted that the performance benefits to aircraft acceptance and hand-off (i.e., faster/more accurate) on reliable-automation trials relative to manual trials would be greater for individuals with lower-MTa (replicating Greenwell-Barnden et al., 2025). We also predicted that the performance cost to aircraft acceptance and hand-off (i.e., slower/less accurate) on unreliable-automation trials relative to manual trials (Metzger & Parasuraman, 2005; Rovira & Parasuraman, 2010), would be greater for lower-MTa participants. For the perfectly-reliable conflict detection automation, we predicted a main effect of better automation-assisted conflict detection performance than manual performance (Bowden et al., 2025, and replicating Greenwell-Barnden et al., 2025). However, given MTa did not affect these performance gains with reliable conflict detection automation in Greenwell-Barnden et al. (2025), we predicted no interaction with MTa.

As per Greenwell-Barnden et al. (2025), higher-MTa participants were expected to have better SA (MTa main effect). Whether MTa would interact with unreliable automation to predict SA was less clear. As outlined previously, participants may have poorer SA with imperfect automation (relative to their SA during manual task completion) due to the higher resource allocation required to manage task demands (Jipp & Ackerman, 2016; Kaber et al., 2000). Therefore, we tentatively predicted better SA in the manual block, compared to imperfect automation.

Although acceptance and hand-off automation had a 30% miss rate, automation should overall reduce perceived workload for acceptance, hand-off, and conflict detection (automation use main effect) (Bowden et al., 2025; Onnasch, Ruff, & Manzey, 2014; and replicating Greenwell-Barnden et al., 2025). Further, we predicted that lower-MTa participants would show a greater reduction in perceived workload compared to manual, even with imperfect automation, than higher-MTa participants. Higher-MTa participants may experience less stress than lower-MTa participants (MTa main effect; Sauer et al., 2012). Higher-MTa participants may trust automation more appropriately relative to its reliability (i.e., an interaction such that trust is higher for reliable conflict detection automation than for imperfect acceptance and hand-off automation). In contrast, lower-MTa participants may not calibrate their trust based on the differential reliability of automation across the three tasks.

Methods

Participants

University undergraduate students (N = 121) participated and received AUD$10 and partial course credit. Eight participants’ data were excluded: four had missing data on at least one task, and four performed below chance accuracy on the Dual task. The final sample was N = 113 (36 males, 75 females, two identified as “other,” M age = 21.23, SD = 6.38, range = 18–47 years). The sample size and number of stimuli resulted in ∼2000–8000 observations, which exceeds recommended observations for repeated-measures designs (e.g., 40 participants with 40 stimuli each; Brysbaert & Stevens, 2018).

Eight participants had completed a previous ATC study. To estimate whether this resulted in performance differences relative to naïve participants, we conducted preliminary analyses including prior experience as a factor. Prior experience did not significantly predict performance and so we elected to include these eight participants in the analyses below. This research complied with the tenets of the Declaration of Helsinki and was approved by the Human Research Ethics Committee at The University of Western Australia. Informed consent was obtained from each participant.

Procedure

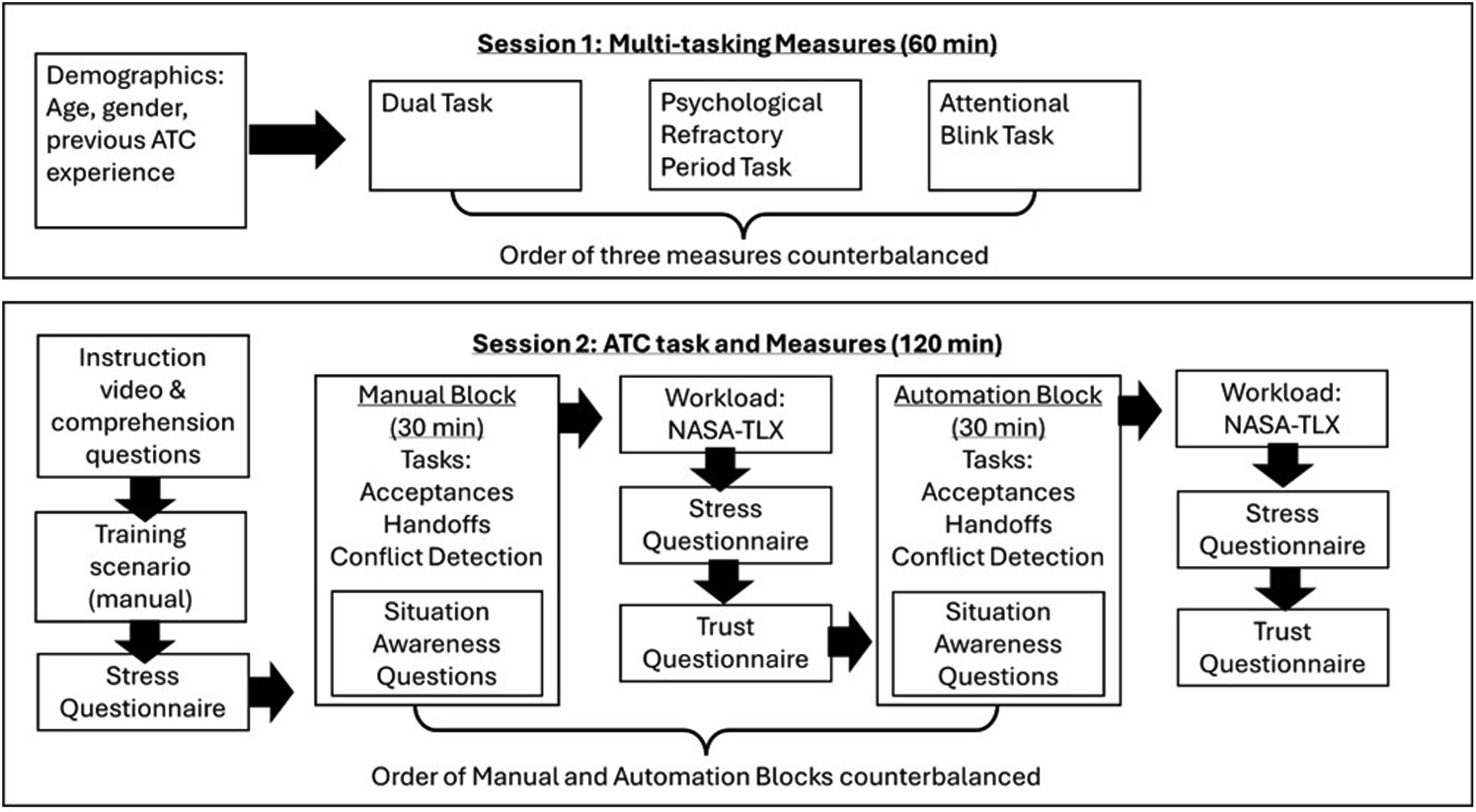

The study was completed across two sessions, with an intervening 10–15 min break to reduce fatigue.

Session 1 (60 min): Participants were informed of the procedure and provided demographics. Participants then completed three MTa tasks in counterbalanced order.

Session 2 (120 min): Participants completed a 20-min audio-visual training presentation in PowerPoint which explained the ATC task, the difference between manual and automation conditions, and the nature of the SA queries. Participants were told the automation “should be reliable, but may not be perfect” and to continue to accept/hand-off aircraft even if they noticed automation did not highlight an aircraft needing acceptance/hand-off. Participants were instructed to prioritize conflict detection among the tasks. Training concluded with 10 multi-choice questions to ensure comprehension. Participants then completed a 20-min manual practice session and were provided with task performance feedback. Participants then completed two 30-min blocks (manual, automation) in counterbalanced order. Reaction time (RT) and accuracy for acceptance, hand-off, and conflict detection tasks, and for SA questions, were collected during the blocks. Workload, stress, and trust measures were collected post-block (stress was also assessed before the ATC task commenced; see Figure 1).

Figure 1. Study procedure and timeline.

Measures

For the multitasking battery measures, we employed the methodologies described in Greenwell-Barnden et al. (2025), with no modifications. In addition, readers can consult the relevant citations below for full stimulus parameters for each task.

Multi-tasking—The Dual Response Selection task (Dual task; Dux et al., 2009). On each trial participants saw a single shape (hexagon/triangle), heard a complex tone, or were presented with both simultaneously. Symbols and tones were mapped to keys on the right or left hand (one hand per task, counterbalanced between participants), and participants identified them without pressing both keys simultaneously. Participants learned keyboard mapping corresponding to the tones and symbols during 24 counterbalanced practice trials. Experimental trials comprised 4 blocks with 36 trials each (144 total). Faster RT indicated lower dual-task processing cost.

Multi-tasking—Psychological Refractory Period (PRP; Van Selst et al., 1999). On each trial, participants heard a complex tone from a set of four for 200 ms and saw a symbol (#, &, @, or %) for 200–600 ms, with a short (200 ms) or long (1000 ms) blank interval between stimuli presentation. Each of these two types of tasks was mapped to keys on the right or left hand (counterbalanced between participants). Participants learned keyboard mapping corresponding to the four complex tones and symbols during 128 practice trials. Experimental trials comprised 5 blocks with 32 trials each (160 total). Faster RTs reflected more efficient task-switching.

Multi-tasking—Attentional Blink task (AB; Chun & Potter, 1995). On each trial, participants identified two target letters (excluding I, L, O, Q, U, V, and X) among 18 distractor items (digits 2–9 and symbols) presented for 100 ms. Targets (T1 and T2) were separated by zero, two, or seven distractors. The task contained 120 trials, split equally across distractor conditions. The AB was calculated as the difference in accuracy between T1 and T2. A smaller AB indicates better multi-tasking ability via better working memory and distractor filtering.

Performance—Air Traffic Control Task (Fothergill et al., 2009). Participants supervised a sector of airspace through which aircraft traveled along intersecting flight paths and at varying cruising altitudes and speeds. Participants accepted aircraft by pressing the “A” key and clicking on the aircraft within 20s of it crossing the boundary into the controlled sector. Participants handed-off aircraft by pressing the “H” key and clicking on the aircraft within 20s of it crossing the boundary to exit the sector. Participants prevented aircraft breaching minimum safe separation (1,000 ft vertical, five nautical miles lateral) by clicking on aircraft, then selecting the other aircraft in the conflict pair. A correct intervention resulted in one of the aircraft increasing altitude by 1,000 ft to avoid the conflict.

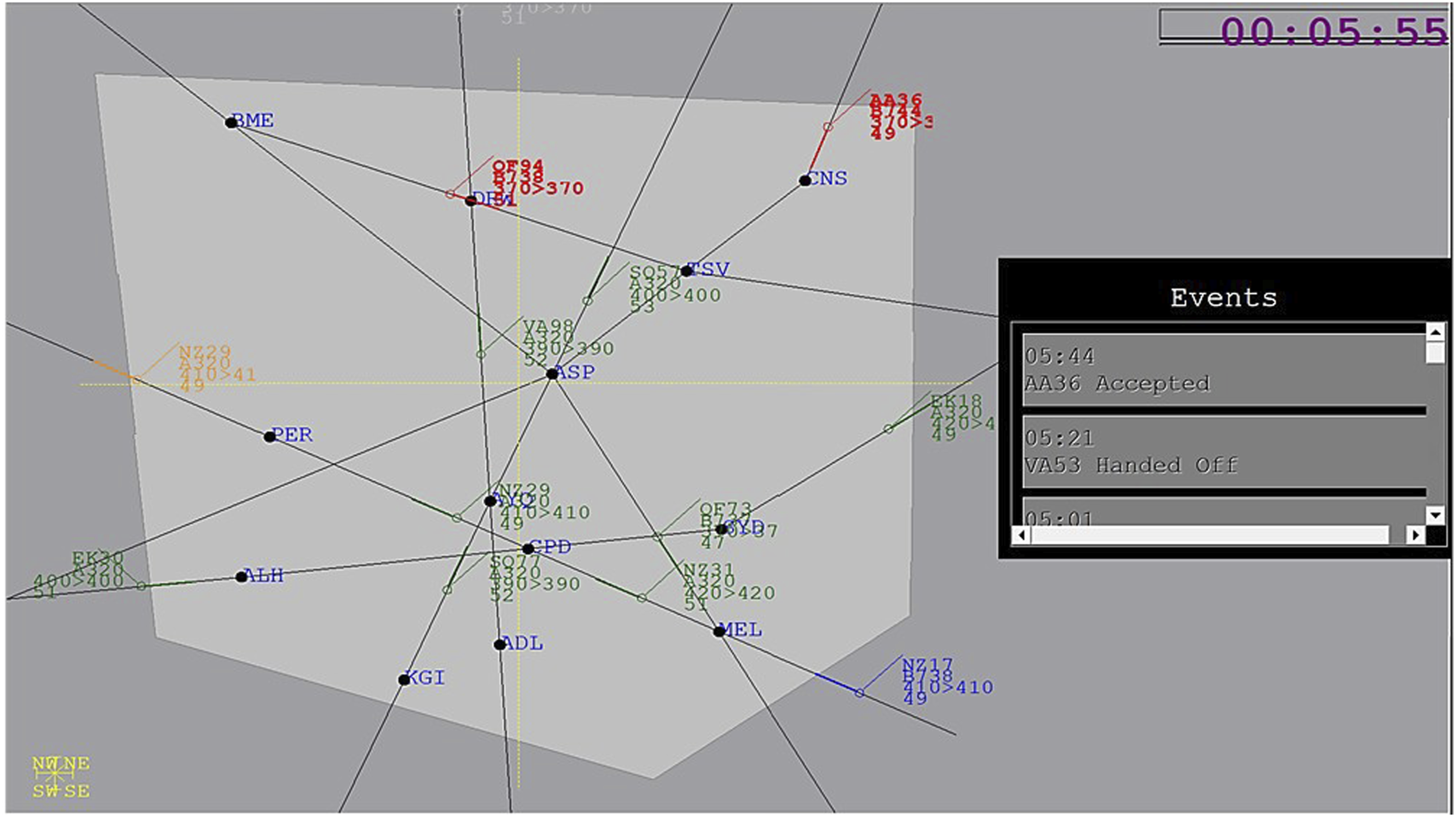

Participants completed two blocks: one manual and one with automation (order counterbalanced). In the manual block, acceptances, hand-offs, and conflict detection were performed by the participant without automation. In the automation block (Figure 2), acceptance and hand-off automation, when reliable, used highlighting and flashing to draw participant attention towards aircraft in need of acceptance (blue) or hand-off (orange). Automation was 100% reliable for the first 5 min. Afterward, approximately every third aircraft was missed by automation. Overall, acceptance/hand-off automation was 70% reliable. Conflict detection automation highlighted all pairs of aircraft that would potentially conflict or pass close (near-misses). Conflict detection automation was 100% reliable. Adjusted RTs (mean RT divided by accuracy) were calculated separately for reliable and unreliable acceptance, hand-off, and conflict detection trials to account for potential speed-accuracy trade-offs (Liesefeld & Janczyk, 2019; Visser et al., 2015).

Figure 2. ATC sector display. When automation was reliable, aircraft flashed blue when they approached the participant’s sector (light grey polygon) indicating they required acceptance (NZ17) or orange indicating they required hand-off (NZ29). Pairs of aircraft that might potentially conflict were red and bolded (AA36 and QF94). Acceptance and hand-off automation was 70% reliable, and the conflict detection automation was 100% reliable. In the manual block, all aircraft remained green throughout. Actions performed by the participant were logged in the “Events” box on the right of the screen.

Situation awareness: A modified Situation Present Awareness Method (SPAM; Durso & Dattel, 2004) measured SA. Participants were instructed to click on a “Ready for Question?” prompt within 10s. The task was then paused, but the display was not blanked. One SA query was then presented along with four responses. Participants were instructed to click a response option as quickly and accurately as possible. Queries appeared every 2–3 min (18 total). The full list of SA queries is presented in Supplemental Materials. Adjusted RT was calculated as for performance measures (as noted above).

Workload: The NASA-TLX (Hart & Staveland, 1988) measured subjective workload. Participants completed the NASA-TLX twice—once after each block. A global workload score was calculated as the mean of the six weighted subscale scores, ranging from 0 (low) to 100 (high).

Stress: The Short Stress-State Questionnaire (SSSQ; Helton & Näswall, 2015) was completed before the first block (pre-task baseline) and then after each of the two blocks. This measure comprises 24 items with responses on a 5-point Likert scale (“not at all” to “extremely”). Higher total scores indicate greater perceived stress.

Trust: Following each block, participants completed six questions with responses on a 5-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree) about their “trust” in the acceptance/hand-off automation and in the conflict detection automation (Merritt et al., 2013). Higher total scores indicate greater trust in automation.

Analyses were conducted in R (R Core Team, 2015). Linear mixed models were fitted using the lme4 package (Bates et al., 2015).

Results

Data Cleaning

The following data cleaning was conducted before calculating a multi-tasking factor. For the PRP and Dual tasks, mean target RTs were calculated for correct trials only. Trials with RTs <150 ms or >3 SD above each participant’s mean RT were excluded. For the PRP task, responses less than 50 ms apart were also removed, as these do not reflect sequential decision making (Ulrich & Miller, 2008). This resulted in the removal of 0.90% of Dual task and 13.2% of PRP data. Additionally, all of a participant’s data for a task were omitted if their overall mean accuracy on that task was below 50%. This removed four participants’ Dual task data (none were removed from the AB or PRP tasks).
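The RT trimming rule described above can be sketched as follows. This is an illustrative Python sketch, not the authors' R code. The function name `trim_rts` and the input layout are hypothetical, and it assumes the 3 SD cutoff is computed from each participant's correct-trial RTs before any exclusion, which the text does not specify.

```python
from statistics import mean, stdev

def trim_rts(trials, floor_ms=150.0, sd_cutoff=3.0):
    """Return the RTs (ms) of one participant's correct trials that survive trimming.

    trials: list of (rt_ms, correct) tuples for a single participant and task.
    """
    # Keep correct trials only, as described in the text.
    correct_rts = [rt for rt, correct in trials if correct]
    # Assumption: the upper cutoff uses the untrimmed correct-trial mean and SD.
    if len(correct_rts) >= 2:
        upper = mean(correct_rts) + sd_cutoff * stdev(correct_rts)
    else:
        upper = float("inf")  # too few trials to estimate an SD
    # Drop anticipations (< floor_ms) and slow outliers (> mean + 3 SD).
    return [rt for rt in correct_rts if floor_ms <= rt <= upper]

# Hypothetical data: 20 typical trials, one anticipation, one very slow
# response, and one error trial.
example = [(500.0, True)] * 20 + [(100.0, True), (5000.0, True), (600.0, False)]
kept = trim_rts(example)
```

The additional PRP rule (removing responses less than 50 ms apart) is not shown, because it depends on the paired response timestamps within each trial.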

For the ATC task, acceptance and hand-off data in the automation block were separated into “trial type” (reliable, unreliable). RT outliers were removed for the manual block and each trial type independently, using the outlier criteria described above. Adjusted RTs (mean RT divided by accuracy) were then calculated. See Supplemental Materials for task descriptive statistics.
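As a worked illustration of the adjusted RT measure (mean RT divided by accuracy), the Python sketch below computes it for one participant and one trial type. The function name and arguments are hypothetical, and whether the mean RT is taken over correct trials only is an assumption here, since the text does not say.

```python
from statistics import mean

def adjusted_rt(rts_s, n_correct, n_trials):
    """Adjusted RT: mean RT (seconds) divided by proportion correct.

    rts_s: RTs in seconds entering the mean (assumed: correct trials only).
    n_correct, n_trials: counts used to compute accuracy.
    """
    if n_trials <= 0 or n_correct <= 0:
        raise ValueError("accuracy must be greater than zero")
    accuracy = n_correct / n_trials
    return mean(rts_s) / accuracy

# A mean RT of 5.0 s at 80% accuracy is penalized to 6.25 s.
score = adjusted_rt([4.0, 6.0], n_correct=8, n_trials=10)
```

Dividing by accuracy inflates the score of fast but error-prone responding, which is how the measure guards against speed-accuracy trade-offs.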

MTa Factor Score

Three variables were used to formulate the latent MTa factor (following Greenwell-Barnden et al., 2025; Redick, 2016). These included: mean RT at shortest inter-target interval in the PRP task, mean RT in the dual stimulus presentation condition in the Dual task, and AB Combined T1s for all lags. Task descriptive statistics are presented in Supplemental Materials. These variables represent the most difficult condition within each task, thus better reflecting MTa. Variables were standardized (z-transformed) before factor analysis. A participant’s MTa factor score was created by saving the Bartlett scores from the factor analysis, which isolate shared variance on a factor across the tasks included (DiStefano et al., 2009). Scores were transformed (multiplied by −1) to ensure higher scores represented better MTa (Bartholomew et al., 2009). There was an adequate range of MTa factor scores (SD = 0.78, min = −1.73, max = 2.22), comparable to our previous study (Greenwell-Barnden et al., 2025).
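The standardization and sign-reversal steps can be illustrated with a short Python sketch. This is not the authors' procedure: Bartlett factor scores require a fitted factor model, so the sketch substitutes a unit-weighted mean of z-scores as a simple stand-in. The function names are hypothetical, and all three inputs are assumed to be scored so that higher raw values mean poorer multi-tasking.

```python
from statistics import mean, stdev

def zscore(values):
    """Standardize a list of scores to mean 0 and SD 1 (sample SD)."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

def composite_mta(prp_rt, dual_rt, ab_score):
    """Unit-weighted stand-in for an MTa score (NOT a Bartlett factor score).

    Each argument is a list with one value per participant, in the same order.
    Assumption: higher raw values indicate poorer multi-tasking on every task.
    """
    z_columns = [zscore(prp_rt), zscore(dual_rt), zscore(ab_score)]
    # Average the three z-scores per participant, then multiply by -1 so
    # that higher composite scores represent better multi-tasking.
    return [-mean(trio) for trio in zip(*z_columns)]

# Hypothetical data for three participants.
scores = composite_mta(
    prp_rt=[900.0, 700.0, 500.0],
    dual_rt=[1200.0, 1000.0, 800.0],
    ab_score=[0.4, 0.3, 0.2],
)
```

In this toy example the third participant is fastest on both RT tasks and has the smallest AB score, so they receive the highest composite.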

Linear Mixed Models on ATC Performance and Situation Awareness

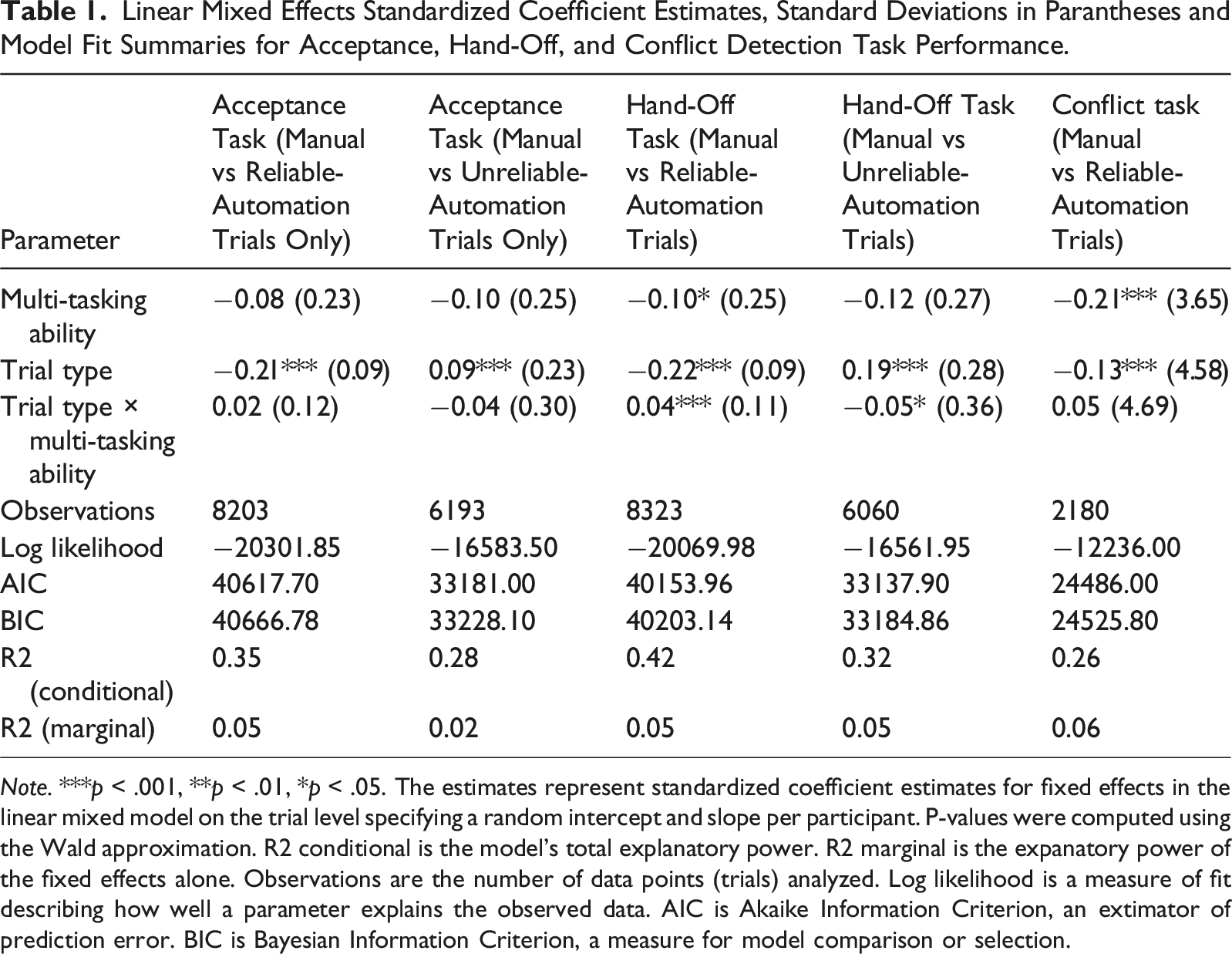

Linear Mixed Effects Standardized Coefficient Estimates, Standard Deviations in Parentheses and Model Fit Summaries for Acceptance, Hand-Off, and Conflict Detection Task Performance.

Note. ***p < .001, **p < .01, *p < .05. The estimates represent standardized coefficient estimates for fixed effects in the linear mixed model on the trial level specifying a random intercept and slope per participant. P-values were computed using the Wald approximation. R2 conditional is the model’s total explanatory power. R2 marginal is the explanatory power of the fixed effects alone. Observations are the number of data points (trials) analyzed. Log likelihood is a measure of fit describing how well a parameter explains the observed data. AIC is Akaike Information Criterion, an estimator of prediction error. BIC is Bayesian Information Criterion, a measure for model comparison or selection.

ATC Performance: Separate LMM analyses were conducted for the acceptance and hand-off tasks, first comparing manual and reliable-automation trials, and then comparing manual and unreliable-automation trials.
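One way to write the model structure implied by this description is given below. It is a sketch under stated assumptions rather than the authors' exact specification: the table notes mention a random intercept and slope per participant, and the slope is assumed here to be for trial type. The symbols are introduced only for illustration.

$$
y_{ij} = \beta_0 + \beta_1\,\mathrm{Trial}_{ij} + \beta_2\,\mathrm{MTa}_j + \beta_3\,(\mathrm{Trial}_{ij} \times \mathrm{MTa}_j) + u_{0j} + u_{1j}\,\mathrm{Trial}_{ij} + \varepsilon_{ij}
$$

Here $y_{ij}$ is the performance score for observation $i$ of participant $j$, $\mathrm{Trial}_{ij}$ codes trial type (manual versus reliable-automation, or manual versus unreliable-automation), $\mathrm{MTa}_j$ is the participant's factor score, $u_{0j}$ and $u_{1j}$ are the participant-level random intercept and slope, and $\varepsilon_{ij}$ is the residual. The coefficient $\beta_3$ carries the MTa by trial type interaction that the hypotheses concern.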

Aircraft acceptance was better on reliable-automation trials than manual trials (i.e., negative beta indicating a decrease in adjusted RT), and poorer on unreliable-automation trials than manual trials (i.e., positive beta indicating an increase in adjusted RT). MTa was not a significant predictor, and the interaction between MTa and trial type was not significant.

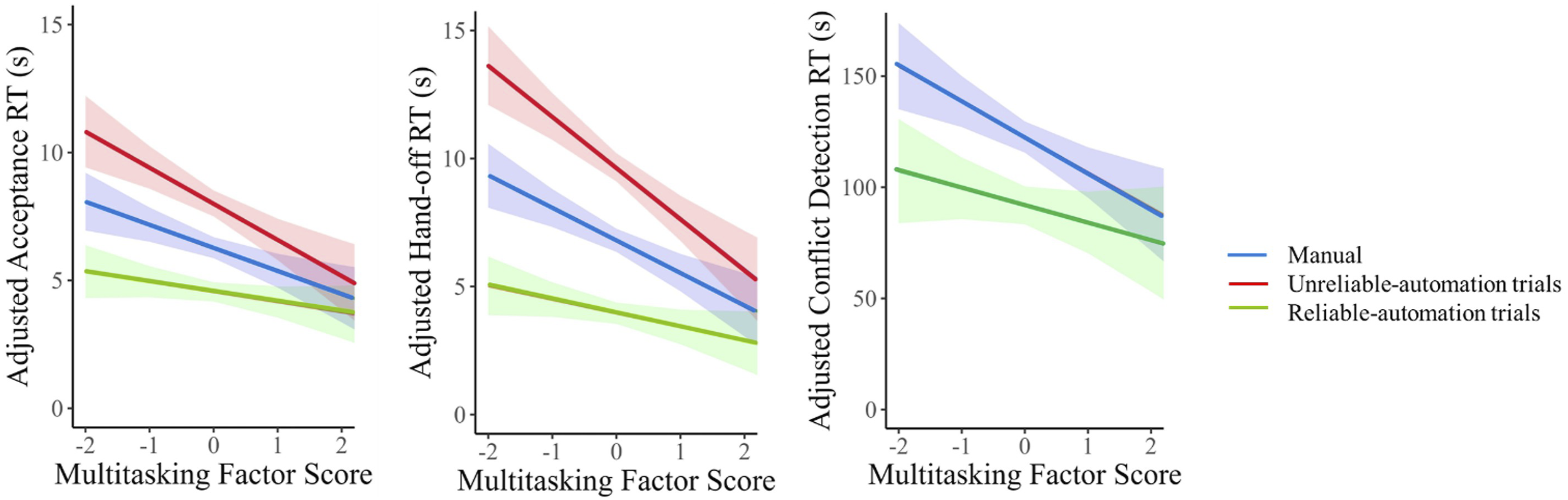

Hand-off performance was better on reliable-automation trials than manual trials and poorer on unreliable-automation trials than manual trials. Higher-MTa participants performed better than lower-MTa participants. The interaction between MTa and trial type was significant for both reliable-automation and unreliable-automation trials (compared to manual). Figure 3 shows the performance of higher-MTa participants was less affected by automation compared to lower-MTa participants, regardless of automation reliability. Lower-MTa participants received a performance benefit (compared to manual) on reliable-automation trials, but also a greater performance cost on unreliable-automation trials.

Figure 3. Adjusted RT (mean RT divided by accuracy in seconds) for acceptance, hand-off, and conflict detection tasks by trial type (manual, reliable-automation, and unreliable-automation) against multi-tasking (MTa) score (normed). LMM slope and intercept are provided. Lower adjusted RT reflects better performance. The blue line (dashes) represents manual trials, the red line (solid) represents unreliable-automation trials, and the green line (dots) represents reliable-automation trials.

For the conflict detection task, automation was perfectly reliable. Therefore, we compared reliable-automation and manual trials (Figure 3). Trial type was a significant predictor, such that conflict detection was better on automation trials relative to manual trials. MTa was also a significant predictor, such that higher-MTa participants had better conflict detection than lower-MTa participants. The interaction between MTa and trial type was not significant.

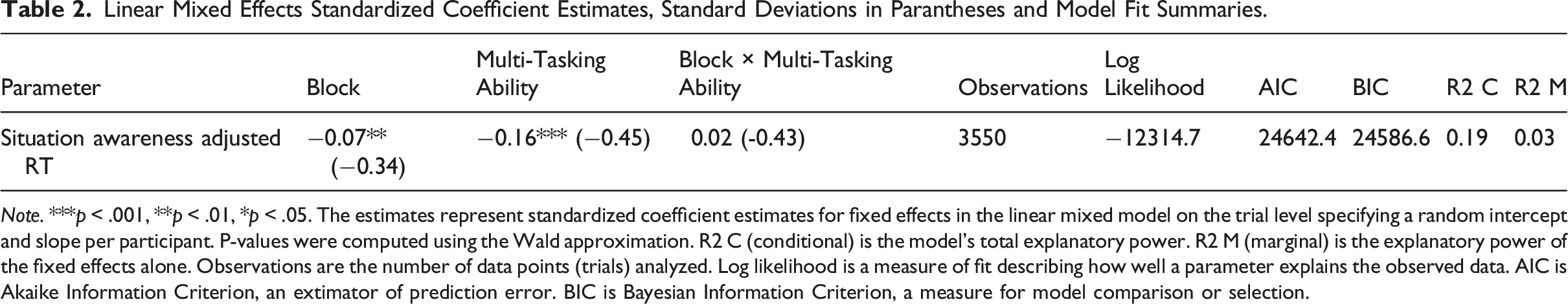

Linear Mixed Effects Standardized Coefficient Estimates, Standard Deviations in Parentheses and Model Fit Summaries.

Note. ***p < .001, **p < .01, *p < .05. The estimates represent standardized coefficient estimates for fixed effects in the linear mixed model on the trial level specifying a random intercept and slope per participant. P-values were computed using the Wald approximation. R2 C (conditional) is the model’s total explanatory power. R2 M (marginal) is the explanatory power of the fixed effects alone. Observations are the number of data points (trials) analyzed. Log likelihood is a measure of fit describing how well a parameter explains the observed data. AIC is Akaike Information Criterion, an estimator of prediction error. BIC is Bayesian Information Criterion, a measure for model comparison or selection.

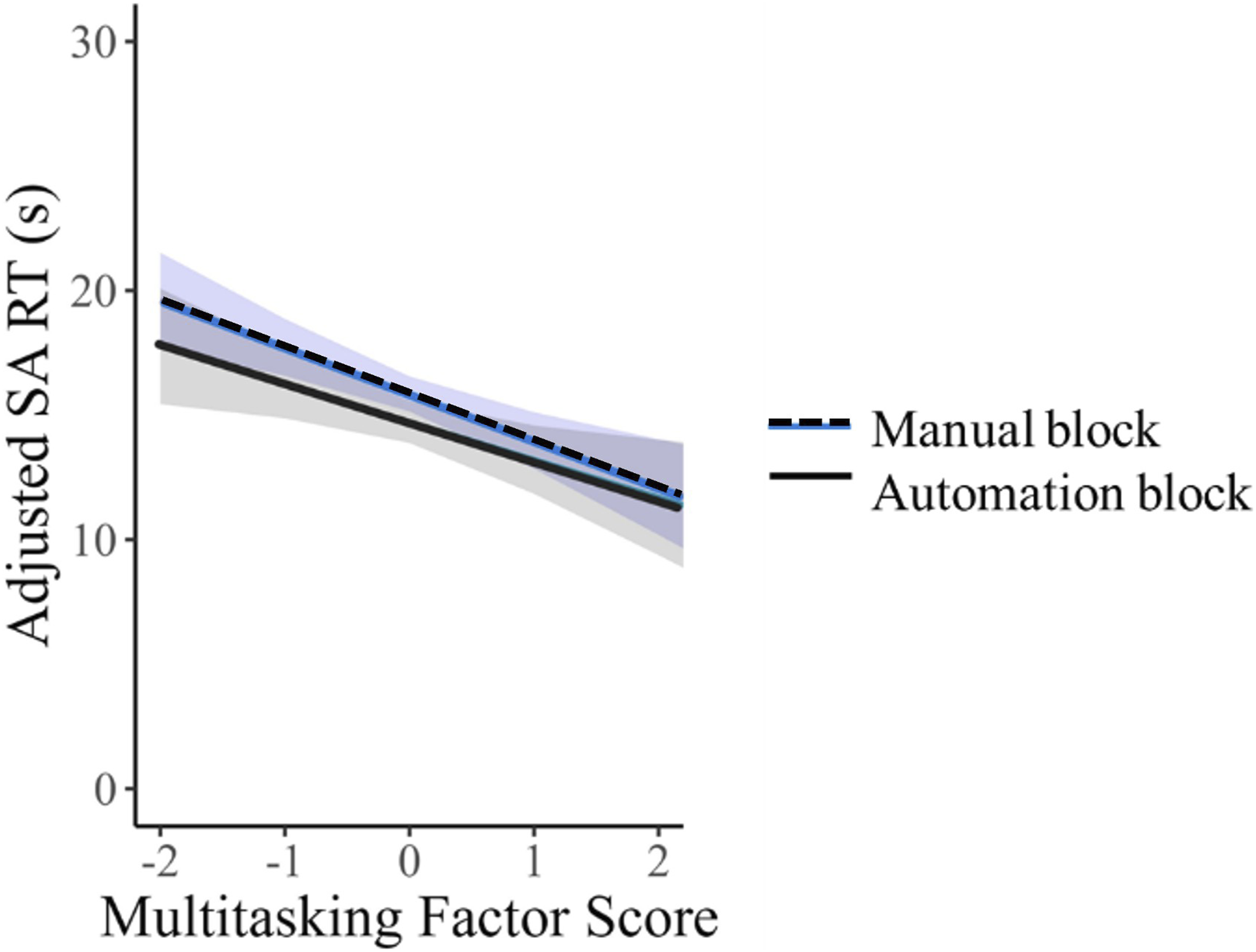

Adjusted RT (mean RT divided by accuracy in seconds) for situation awareness by block (manual and automation) against multi-tasking score (MTa, normed). LMM slope and intercept are provided. Lower adjusted RT reflects better situation awareness. The blue line (dashes) represents the manual block, and the black line (solid) represents the automation block.

ANOVAs on Workload, Stress, and Trust

LMM was not suitable for workload, stress, and trust measures as there were only two data points per participant (i.e., one workload score for the manual block and one for the automation block). Therefore, mixed ANOVAs were conducted. Block (manual and automated) was a within-subjects factor with two levels. Participants were separated into three approximately equal MTa groups based on relative MTa score (lower = bottom 33%, medium = middle 33%, higher = top 33%). MTa group was a between-subjects factor with three levels. A one-way ANOVA conducted to validate these groups showed a significant difference between MTa group means (Mlower = −0.85, Mmedium = −0.04, Mhigher = 0.91), F (2,68) = 231.04, p < .001. Simple comparisons showed all groups significantly differed, all p < .001.
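The three-way grouping can be sketched in Python as a rank-based tertile split. The function name is hypothetical, and the handling of ties and of sample sizes not divisible by three is an assumption, so group sizes may differ slightly from those in the study.

```python
def tertile_groups(scores):
    """Label each participant 'lower', 'medium', or 'higher' by MTa rank.

    scores: list of MTa scores, one per participant.
    Returns a list of labels in the same order as the input.
    """
    n = len(scores)
    # Participant indices ordered from lowest to highest score
    # (ties keep their original order; this is an assumption).
    order = sorted(range(n), key=lambda i: scores[i])
    labels = [None] * n
    for rank, idx in enumerate(order):
        if rank < n / 3:
            labels[idx] = "lower"
        elif rank < 2 * n / 3:
            labels[idx] = "medium"
        else:
            labels[idx] = "higher"
    return labels

# Hypothetical scores for six participants.
groups = tertile_groups([0.5, -1.0, 2.0, -0.2, 1.1, 0.0])
```

Splitting a continuous score into groups discards within-group variation, which is the trade-off for being able to use a mixed ANOVA with only two observations per participant.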

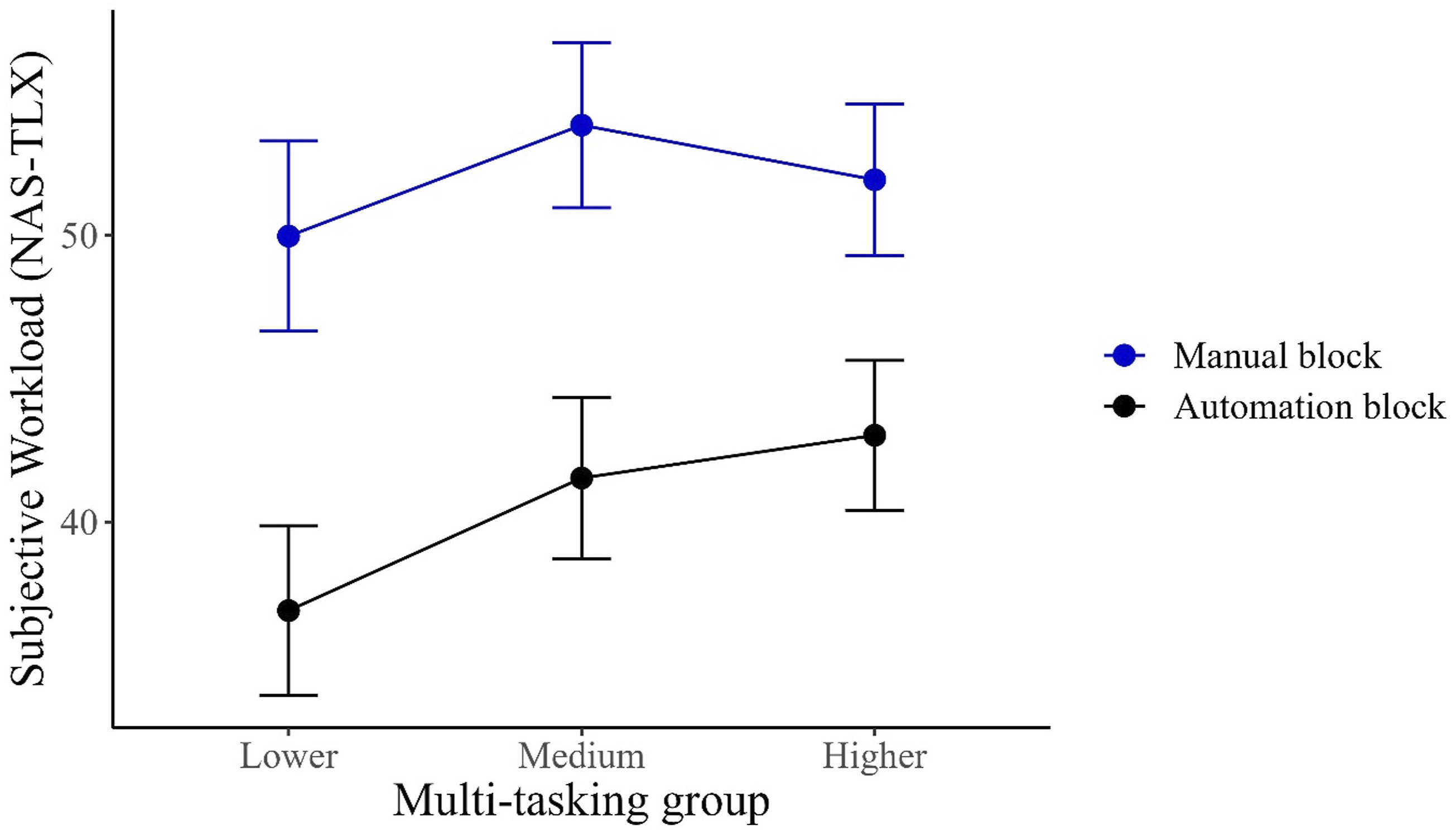

Workload: There was an effect of block, F (1,110) = 46.31, p < .001, η

2

= 0.10, where workload was lower in the automated compared to manual block (Figure 5). There was no effect of MTa group, and no interaction (both F < 1). Subjective workload measure (NASA-TLX) across three groups of multi-tasking ability (MTa) and two blocks of the ATC. Error bars represent 95% confidence intervals around the estimate of marginal means (between subjects).

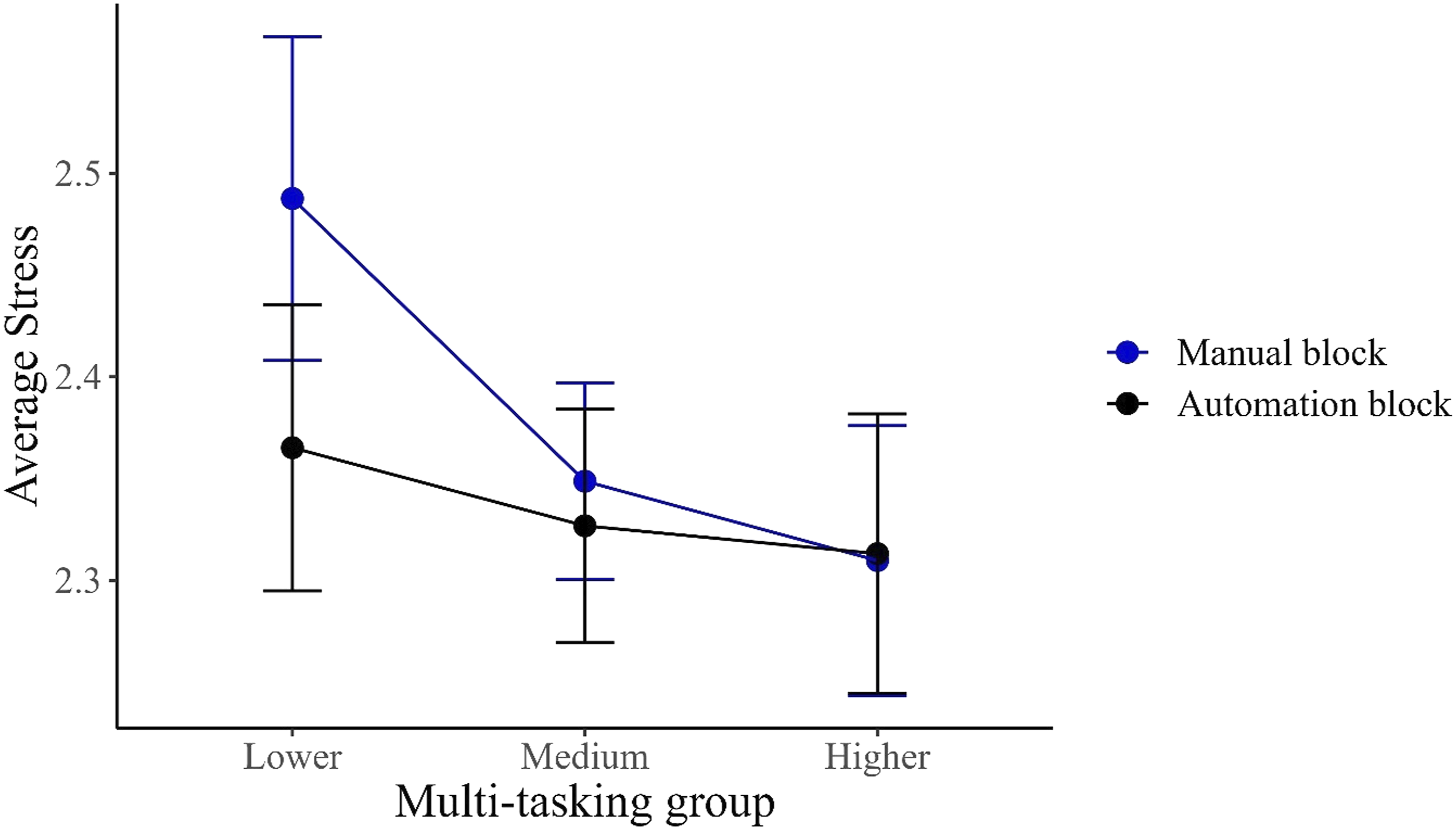

Stress: Pre-task stress was included as a covariate. There were no main effects of MTa or block (both F < 1). There was an interaction between MTa group and block, F (2,108) = 3.18, p < .05, η

2

= 0.003. As illustrated in Figure 6, the difference in reduction of stress between manual and automated blocks was greatest for the lower-MTa group. A follow-up comparison confirmed that stress was lower in the automated block (M = 2.37, SD = 0.43) compared to manual block (M = 2.49, SD = 0.48) for the lower-MTa group, t (36) = 3.55, p < .001, η

2

= .58. In contrast, there was no difference in stress between automated and manual blocks for medium and higher-MTa groups, t < 1. Post-block stress ratings across three groups of multi-tasking ability (MTa) and two blocks of the ATC. Error bars represent 95% confidence intervals around the estimate of marginal means (between subjects).

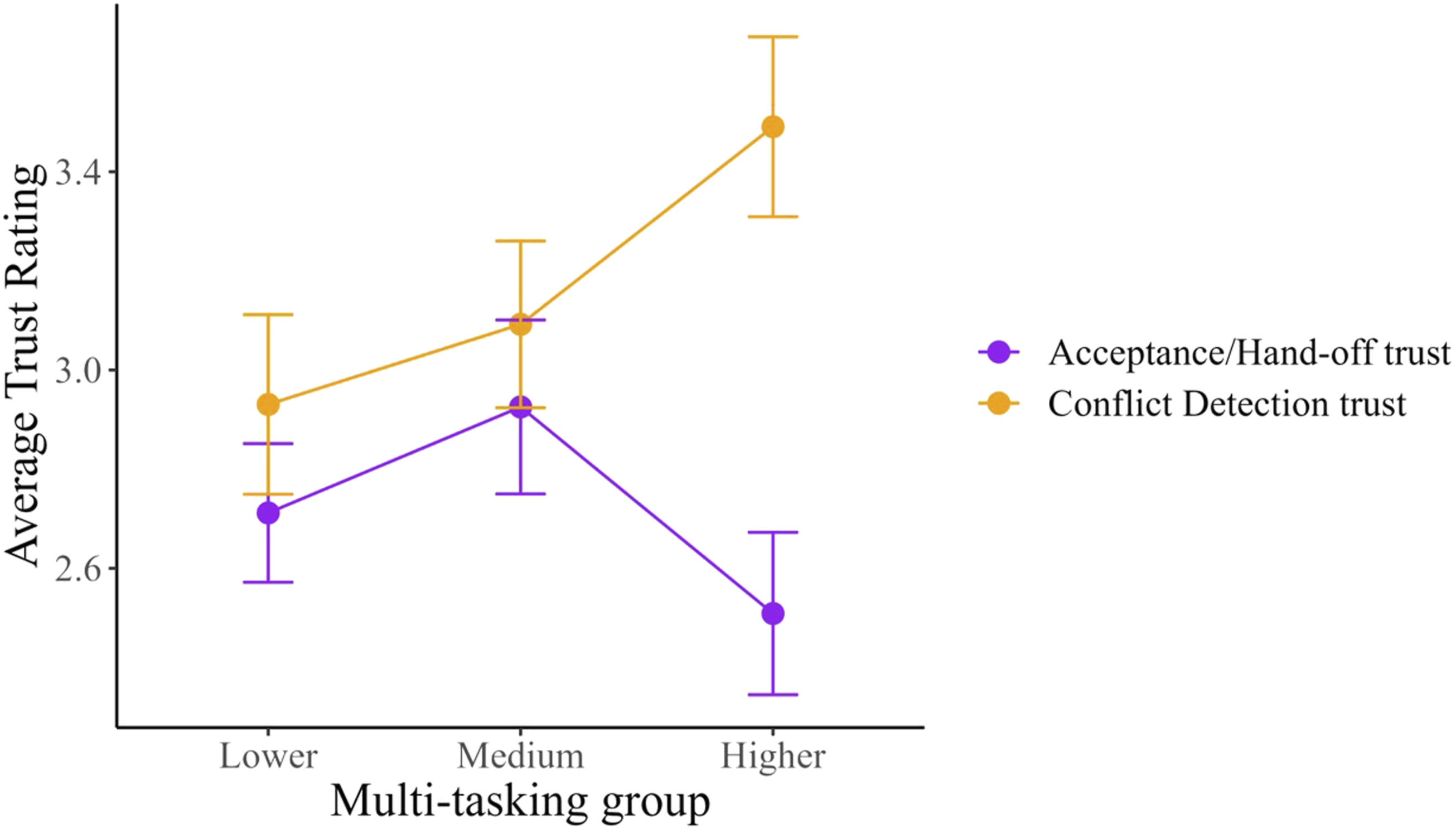

Trust. There was an effect of task, F (1,108) = 15.34, p < .001, η

2

= 0.048, where the reliable conflict detection automation was trusted more (M = 3.19, SD = 1.09) than imperfect acceptance and hand-off automation (M = 2.70, SD = 0.98). There was no effect of MTa group, F < 1. There was an interaction between MTa group and task, F (1,108) = 5.83, p < .05, η

2

= 0.037 (Figure 7). Trust was lower for acceptance/hand-offs (M = 2.47, SD = 0.99) compared to the conflict detection task (M = 3.50, SD = 1.08) for the higher-MTa group, t (108) = 5.05, p < .001, η

2

= .80. In contrast, there were no differences between trust for acceptance/hand-offs and conflict detection automation for the lower and medium groups, t < 1. Post-automated block average trust ratings across multi-tasking ability (MTa) groups and two levels of reliability for the tasks in the ATC. Acceptance and Handoff tasks were 70% reliable, and the Conflict Detection task was 100% reliable. Error bars represent 95% confidence intervals around the estimate of marginal means (between subjects).

Discussion

This study examined the effect of imperfectly reliable automation and how it differentially affected performance as a function of an individual’s MTa. In Greenwell-Barnden et al. (2025), we established that MTa can modulate the benefit of perfectly reliable automation on performance, in that those with lower MTa benefited more from reliable automation. We aimed to extend these findings to imperfect automation by examining whether imperfect automation interacts with MTa to predict costs to task performance, workload, SA, stress, and trust in simulated ATC. We predicted that, relative to higher-MTa individuals, lower-MTa individuals would experience poorer outcomes with imperfect automation compared to manual trials (i.e., poorer performance, higher workload and stress, and miscalibrated trust in automation).

We found partial evidence supporting this prediction, as the cost of imperfect automation to aircraft hand-off, but not aircraft acceptance, was greater for participants with lower MTa. We also found greater benefits of reliable automation to aircraft hand-off, but not aircraft acceptance, compared to manual trials for lower- compared to higher-MTa participants. This inconsistency between the acceptance and hand-off tasks was unexpected, and the lack of differential benefit from reliable automation for aircraft acceptance between those with lower and higher MTa does not replicate Greenwell-Barnden et al. (2025). The predicted main effects of MTa were observed, with lower-MTa participants performing worse than higher-MTa participants on the hand-off and conflict detection tasks (there was no significant effect for acceptances). Predicted automation use effects were also observed: compared to manual, performance was better in reliable-automation trials and poorer in unreliable-automation trials, consistent with previous findings in the human-automation teaming literature (Bowden et al., 2024; Körber et al., 2015; Strand et al., 2014; Strickland et al., 2023).

These findings suggest lower-MTa participants took longer to detect aircraft hand-off automation errors and to take action to compensate for automation failure. Conversely, higher-MTa participants possibly realized sooner that hand-off automation was imperfect and compensated, potentially because they had greater task-switching and attentional capacity, as indicated by their better trust calibration (they showed lower trust in the imperfect acceptance/hand-off automation than in the reliable conflict detection automation). Alternatively, higher-MTa participants may have relied on aircraft hand-off automation less (Cak et al., 2020). This is supported by their higher workload in the automated block relative to the other MTa groups, as they possibly realized sooner that hand-off automation was imperfect and compensated for it, which increased their workload slightly.

There was an automation benefit to SA with imperfectly reliable automation compared to manual. This is contrary to previous studies that found poorer SA under automation than manual conditions (e.g., Onnasch, Wickens, et al., 2014; Strybel et al., 2016), and to our previous work, where SA was not affected by automation (Greenwell-Barnden et al., 2025). In our previous study, automation was reliable, and visual-search requirements were therefore reduced. Here, the imperfect acceptance/hand-off automation may have required participants to pay more attention to their task environment, thus increasing their relative speed of SA query response, even though, as discussed below, automation provision reduced workload.

Contrary to prediction, automation did not interact with MTa for workload. However, automation reduced workload relative to manual, consistent with the benefit reported for perfectly reliable automation by Greenwell-Barnden et al. (2025; also see meta-analysis by Onnasch, Wickens, et al., 2014), despite the imperfect reliability of the acceptance/hand-off automation in the current study. The presence of reliable conflict detection automation may explain the overall reduced workload, as conflict detection is a more challenging task than the acceptance/hand-off task. This is similar to Di Nocera et al. (2006), who found that subjective workload was not affected by automation errors in ATC.

As predicted, MTa interacted with automation such that lower-MTa participants reported a reduction in stress when provided imperfect automation compared to manual. In contrast, there were no differences with imperfect automation compared to manual for other MTa groups. This suggests that for lower-MTa participants, perfectly reliable conflict detection automation reduced stress, which outweighed the potential stress induced by imperfect acceptance/hand-off automation. This contrasts with previous research which has suggested automation can increase stress in tasks as long in duration as the current task (i.e., >30 min) by reducing motivation, concentration, and energetic arousal resulting in fatigue compared to manual task performance (McGarry et al., 2003). More broadly, it should also be noted that the current task duration (2 × 30 min blocks) certainly does not reflect full work shifts in operational environments and MTa could fluctuate over time, potentially as a function of task-fatigue, attentional lapses, or change in motivation (Rann & Almor, 2025). As such, future research should investigate the relationship between MTa and automation supervision in longer duration tasks.

Also as predicted, MTa interacted with automation such that higher-MTa participants had better trust calibration, in that they trusted the perfectly reliable conflict detection automation more than the imperfect acceptance/hand-off automation. For the lower and medium MTa groups, trust did not differ across perfectly and imperfectly reliable automation. This finding could potentially account for higher-MTa participants’ better conflict detection task performance, as better trust calibration may free up cognitive capacity to monitor the reliability of the hand-off automation.

Limitations, Future Directions, and Practical Implications

These findings are based on one type of automation error (misses: failing to flag a relevant event) and thus may not generalize to other failure situations. Other types of failure such as false alarms (inappropriate or unnecessary alerts) and how they interact with individual cognitive capabilities should be examined. Previous literature has suggested automation false alarms may differentially impact performance compared to misses, in part due to misses being more salient (Chen & Terrence, 2009; Wickens et al., 2010). False alarm failures may therefore have a commensurately larger impact on operators with lower MTa.

The current study showed some evidence that the benefit and cost of imperfect automation vary with MTa in novice undergraduate students. Expert operators, however, undoubtedly differ from novices in their experience, motivation, and skill (Balfe et al., 2015; Jamieson & Skraaning, 2018). As such, experts may have better recovery performance when automation fails (Roth et al., 2019), have different perceptions of workload (Matthews & Desmond, 2002), and may be more trusting of automation (Niu et al., 2018). Thus, the present pattern of findings could differ for experts, who may experience different benefits and costs of automation failure. MTa effects may not apply to experts who have significant practice, which may overcome differences in cognitive ability. Alternatively, the MTa interaction may exist in experts, but to a smaller extent. Expert operators may also have different modality-based multi-tasking requirements in their task environments (auditory as well as visual). Multi-tasking modality could be investigated in future studies for its impact on performance with imperfect automation.

The key current findings are that automation may not assist everyone equally, and that automation failures also have differential effects on performance, stress, and trust determined, at least in part, by an individual’s MTa. To avoid greater costs of automation error being borne by those who are least capable of compensating for such system failures, it may be necessary to selectively hire operators for their MTa and put those with the greatest ability in roles where automation is anticipated to be particularly vulnerable to errors. This may include placing higher-MTa individuals in roles where the consequence of failure of automation is greater (e.g., critical safety monitoring), creating mixed-ability teams where higher-MTa individuals can monitor automation and provide support to lower-MTa operators, and/or providing more structured training and support for lower-MTa operators such as decision trees for dealing with automation failure. Hence, an individually tailored approach to automation may provide the best outcomes when automation reliability cannot be guaranteed.

Conclusions

Multi-tasking ability varied the impact of imperfect automation in a simulated air traffic control task. Multi-tasking ability may lead to differentiated effects of imperfect automation on performance, situation awareness, stress, and trust. Taken together with the prior findings of Greenwell-Barnden et al. (2025), it may be beneficial to consider individual cognitive ability when determining what automation should be deployed in the workplace, as those who gain a significant benefit from reliable automation may also suffer greater costs when automation is unreliable.

Key points

- Participants with lower-MTa had greater performance benefits from reliable aircraft hand-off automation (compared to manual), but greater costs with imperfect hand-off automation (compared to manual), relative to those with higher-MTa.
- Situation awareness was unexpectedly higher with imperfect automation (compared to manual), potentially due to increased attention required to monitor imperfect automation.
- Imperfect automation reduced stress for participants with lower-MTa (compared to manual) but did not for participants with higher-MTa.
- Participants with higher-MTa calibrated trust across the different reliability of ATC tasks more effectively.

Supplemental Material

Supplemental Material for How Multi-Tasking Ability Impacts Performance, Workload, Situation Awareness, Stress and Trust with Simulated Imperfect Automation by Jayden N. Greenwell-Barnden, Troy A. W. Visser, Shayne Loft, Susannah J. Whitney, Vanessa K. Bowden in Human Factors.

Footnotes

Ethical Considerations

This research complied with the tenets of the Declaration of Helsinki and was approved by the Human Research Ethics Committee at The University of Western Australia.

Consent to Participate

Informed consent was obtained from each participant.

Consent for Publication

Participants were informed in the Participant Information Form that deidentified data may be included in published research before consenting to participate.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported through an Australian Government Research Training Program Scholarship and the Australian Army and Defense Science Partnerships agreement of the Defense Science and Technology Group (ID 7120), as part of the Human Performance Research network (HPRnet).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data for this study is not made publicly available.

Supplemental Material

Supplemental material for this article is available online.
