Abstract

Objective

To review and synthesise research on technological debiasing strategies across domains, present a novel distributed cognition-based classification system, and discuss theoretical implications for the field.

Background

Distributed cognition theory is valuable for understanding and mitigating cognitive biases in high-stakes settings where sensemaking and problem-solving are contingent upon information representations and flows in the decision environment. Shifting the focus of debiasing from individuals to systems, technological debiasing strategies involve designing system components to minimise the negative impacts of cognitive bias on performance. To integrate these strategies into real-world practices effectively, it is imperative to clarify the current state of evidence and types of strategies utilised.

Methods

We conducted systematic searches across six databases. Following screening and data charting, identified strategies were classified, based on distributed cognition principles, into (i) group composition and structure, (ii) information design and (iii) procedural debiasing, and the cognitive biases addressed were classified into eight categories.

Results

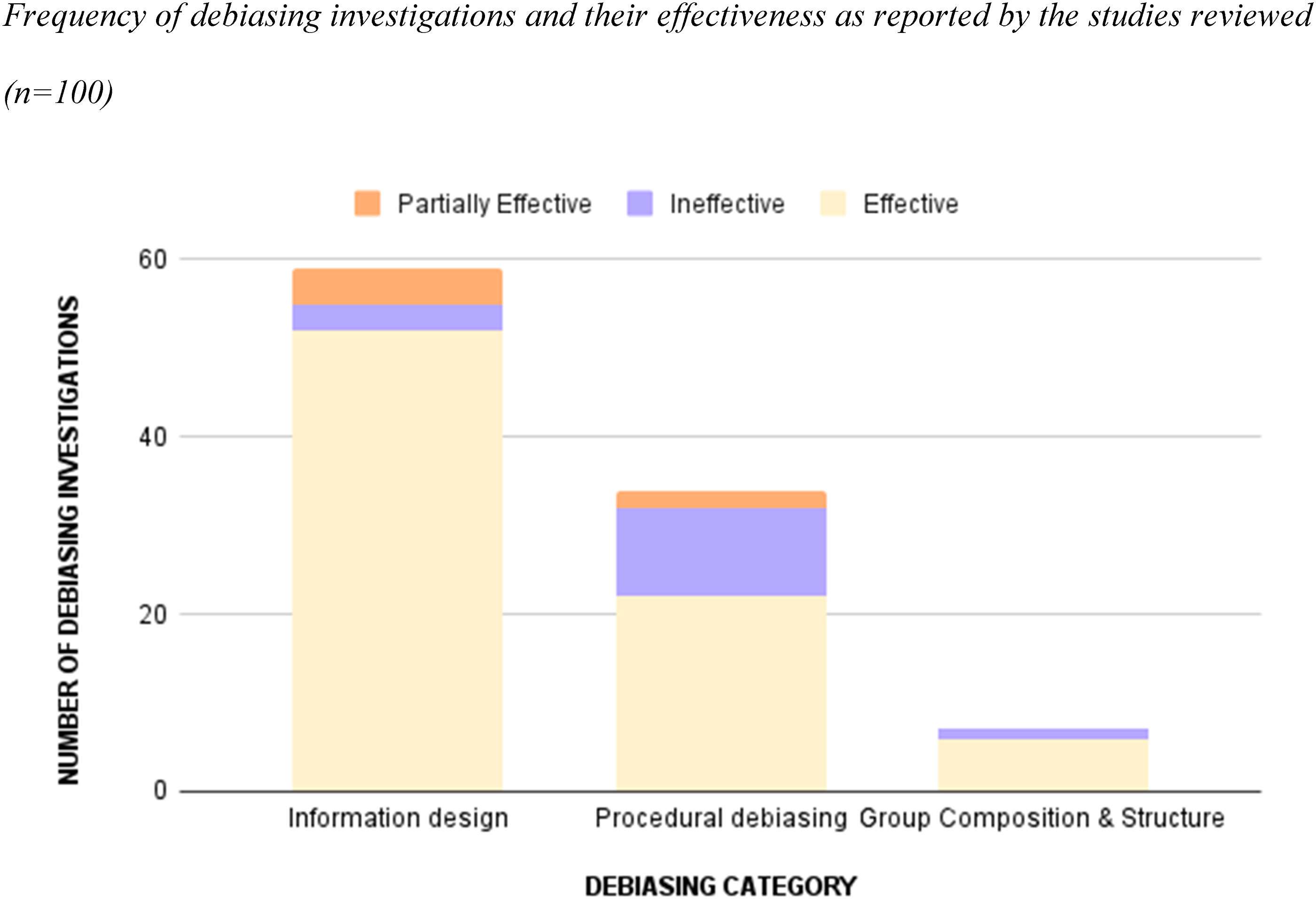

Eighty articles met the inclusion criteria, addressing 100 debiasing investigations and 91 cognitive biases. Most (80%) of the identified debiasing strategies were reported to be effective, whereas fourteen were ineffective and six were partially effective. Information design strategies were studied most, followed by procedural debiasing and then group composition and structure. Gaps and directions for future work are discussed.

Conclusion

Through the lens of distributed cognition theory, technological debiasing represents a reconceptualisation of cognitive bias mitigation, showing promise for real-world application.

Application

The study results and debiasing classification presented can inform the design of high-stakes work systems to support cognition and minimise judgement errors.

Introduction

Expertise and training are only part of what sustains optimal performance in many real-world settings. The high-stakes decision-making literature has long demonstrated the impact of the spatial, temporal, social and information context on outcomes. In essence, cognitive processes are distributed across the environment within which they occur (Hutchins, 1995). These processes can be degraded by otherwise adaptive cognitive biases when information is inadequately represented across different components of the system. Cognition has traditionally been conceptualised in terms of information processing within the mind, placing the burden of errors solely on the individual. Fischhoff (1982) first classified debiasing efforts based on whether the cognitive bias originates from “the judge, the task, or some mismatch between the two” (p. 424). Later works, including Larrick (2004) and Soll et al. (2015), have discussed the importance of broadening the scope of debiasing to the environment.

In this paper, we expand on reorienting cognition from the individual to the system and its relevance to understanding and mitigating biases in reasoning (i.e., debiasing) in practice. To achieve this, we draw on distributed cognition theory, defining and reviewing technological debiasing strategies from the literature, classifying them along the principles of the distributed cognition framework, and discussing implications for enhancing human factors and performance in real-world settings.

Cognitive biases represent cognitive processes that influence how specific reasoning rules are applied to information when forming judgements or making decisions. First introduced by Tversky and Kahneman (1974), cognitive biases are defined as systematic patterns of deviation from a predetermined rational ideal of reasoning. While ecologically adaptive (Haselton et al., 2015), they can lead to erroneous judgements and to decisions with negative consequences. Cognitive biases are well documented in a variety of critical performance contexts, including surgery (Armstrong et al., 2023), crisis management (Comes, 2016) and aviation (Murata et al., 2015). These situations are often characterised by high cognitive load, uncertainty, task saturation and environmental disruptors, which may exacerbate the prevalence and impact of cognitive biases.

Debiasing for Human Factors

Lilienfeld et al. (2009) described debiasing as encompassing “not only techniques that eliminate biases but also those that diminish their intensity or frequency” (p. 391). Although not yet formally defined in the context of human factors, debiasing is understood as efforts or techniques to eliminate bias, or the undesirable consequences of bias, in judgement and decision-making tasks. The instrumental view of rationality is the most applicable to human factors: the goal is to improve human and system capacity to maximise utility, accuracy and goal achievement, acknowledging that biases may be adaptive in achieving these ends. Experts may use simplifying heuristics to guide their judgement, but heuristic use alone does not define expert performance. Optimal outcomes are achieved when there is coherence between the heuristics applied and the conditions of performance (Kahneman & Klein, 2009). The goal of debiasing should therefore be to reduce errors and inefficiencies and to promote optimal outcomes given the opportunities and constraints of the decision environment and human capabilities. This means that the decision-making environment should be aligned to fit adaptive human reasoning, rather than attempting to debias the human judge so as to eliminate all biased tendencies.

Cognitive and motivational strategies (Arkes, 1991; Larrick, 2004), which are widely studied and aim to “modify the decision-maker” (Soll et al., 2015, p. 926), keep the individual at the core of biased decision-making. Cognitive strategies attempt to modify individuals’ reasoning through instruction (e.g., Fahsing et al., 2023) or training (e.g., Dunbar et al., 2014), whereas motivational strategies use incentives and accountability (e.g., Finkelstein et al., 2022). However, from a human factors perspective, such approaches have limited real-world applicability.

Cognitive and motivational strategies assume that humans have unlimited cognitive resources at their disposal to support analytic and rational reasoning. They further require that humans can recognise when they are at risk of being biased. Both cognitive and motivational strategies largely rely on humans’ conscious will and ability. The efficacy of these strategies is further limited by the “bias blind spot,” our tendency to see and exaggerate the impact of biases in others, while denying the existence of our own (Pronin, 2009).

Optimal debiasing strategies should instead be context-specific and minimise reliance on individuals’ cognitive resources in order to attenuate the trade-off between cognitive effort and accuracy (Larrick, 2004). In many critical contexts, it is unlikely that individuals can always allocate the motivation and cognitive resources to engage in accurate and elaborate reasoning to reach optimal decisions. In order to model performance environments where human sensemaking and environmental factors function cohesively as a unified system, further consideration of the broader contextual factors that influence judgement in critical situations is necessary. Distributed cognition provides a framework to reorient debiasing applications to high-stakes contexts, taking into consideration environmental constraints and opportunities.

Distributed Cognition and Technological Debiasing

The distributed cognition approach views cognition not as confined within an individual, but as an emergent property of the system within which the decisional task is performed. This contrasts with the classical approach to cognition that places the emphasis solely on the individual’s own capacity. Hutchins (1995) proposed the view of cognitive activity as organised across (i) different members of teams (i.e., social environment), (ii) the tools and artefacts (i.e., material environment) and (iii) time (i.e., flow and transformation of information over time). In this view, human sensemaking is one part of the whole system.

In the distributed cognition approach, positive outcomes are attributed to the smooth and effective coordination between human and nonhuman contributors to the system, and not merely to human skill. In this vein, biased decision processes and outcomes can be considered a product of ineffective information representation, transformation and assimilation through different components of the system. As such, debiasing strategies in high-performance contexts must address all these system-level factors, beyond individual human cognition.

Soll et al. (2015) referred to these as “modify the environment” strategies, and Larrick (2004) conceptualised them as “technological debiasing strategies.” Building on existing work and incorporating distributed cognition theory, we define technological debiasing strategies as the use or modification of systems, processes, artefacts and agents, both human and nonhuman, that are external to the individual decision-maker(s), for the purpose of minimising biased reasoning. In this context, technology is defined broadly as any process, artefact, tool or component of the decision environment (including humans) that is external to the individual mind. It includes, but is not limited to, digital technology.

Further extending this notion, technological debiasing strategies can be broadly classified into three categories: (i) Information design, (ii) Procedural debiasing and (iii) Group composition and structure, based on the three processes of distributed cognition introduced by Hutchins (2000) and further elaborated on by Hollan et al. (2000).

Information design

Information design strategies pertain to how we make sense of information structures and stimuli from the decision environment. Cognitive processes “involve coordination between internal and external (material or environmental) structures” (Hollan et al., 2000, p. 175). Externally presented information interacts with humans’ internal cognitive structures, resulting in sensemaking. However, biases may occur when information stimuli do not align with internal representations. Addressing the flow or interpretation of information from information sources in the environment into human cognitive processing, information design debiasing strategies modify the organisation, structure and nature of the information available or selectively present specific types of information (e.g., Cook & Smallman, 2008) to ensure accurate mental representations of information used in decision-making.

Procedural debiasing

The term procedural debiasing was first introduced by Lopes (1987), whose work aimed to modify the cognitive procedures within the judge (or individual decision-maker) to debias judgements. Here, however, procedural debiasing refers to strategies that modify tasks to fit human cognition and so support optimal judgement or decision outcomes. According to distributed cognition theory, cognitive processes are “distributed through time such that the outcomes of earlier events can alter later events” (Hollan et al., 2000, p. 175). Biases can therefore occur when the sequence of information gathering or decision subtasks is organised in ways that promote inaccurate weighting or processing of information, depending on when in the decision workflow the information is presented or processed. Such biases may also accumulate across the system’s cognitive workflow. Addressing the temporally interdependent nature of decision tasks, procedural debiasing strategies include modification of task components, tools and sequences of subtasks (e.g., Bhandari et al., 2008).

Group composition and structure

Group composition and structure strategies use groups to debias judgements. These strategies include both replacing individuals with groups (e.g., O’Leary, 2011) and modifying group structures to debias group decisions (e.g., Meissner & Wulf, 2017). This category corresponds to the idea that cognitive processes are “distributed across members of a social group” (Hollan et al., 2000, p. 175). Biases can occur at the group level because of interactional properties of social cognition that may facilitate or hinder different types of reasoning. By modifying the social antecedents of cognitive biases in collective decision-making, these strategies target the exchange of information between team members, which is shaped by various collective and individual attributes.

The objective of this scoping review is to characterise evidence on technological debiasing strategies across domains according to the three distributed cognition principles (Hollan et al., 2000; Hutchins, 1995) for human factors and identify considerations for determining their applicability to real-world settings.

Methods

The scoping review method suits this inquiry because the literature is broad, fragmented across disciplines and uses varied terminology and conceptualisations of bias and debiasing. We synthesised the literature and identified research gaps following Arksey and O’Malley’s (2005) recommendations for scoping reviews, as modified by Levac et al. (2010), which comprise the following five stages:

Identifying the Research Question

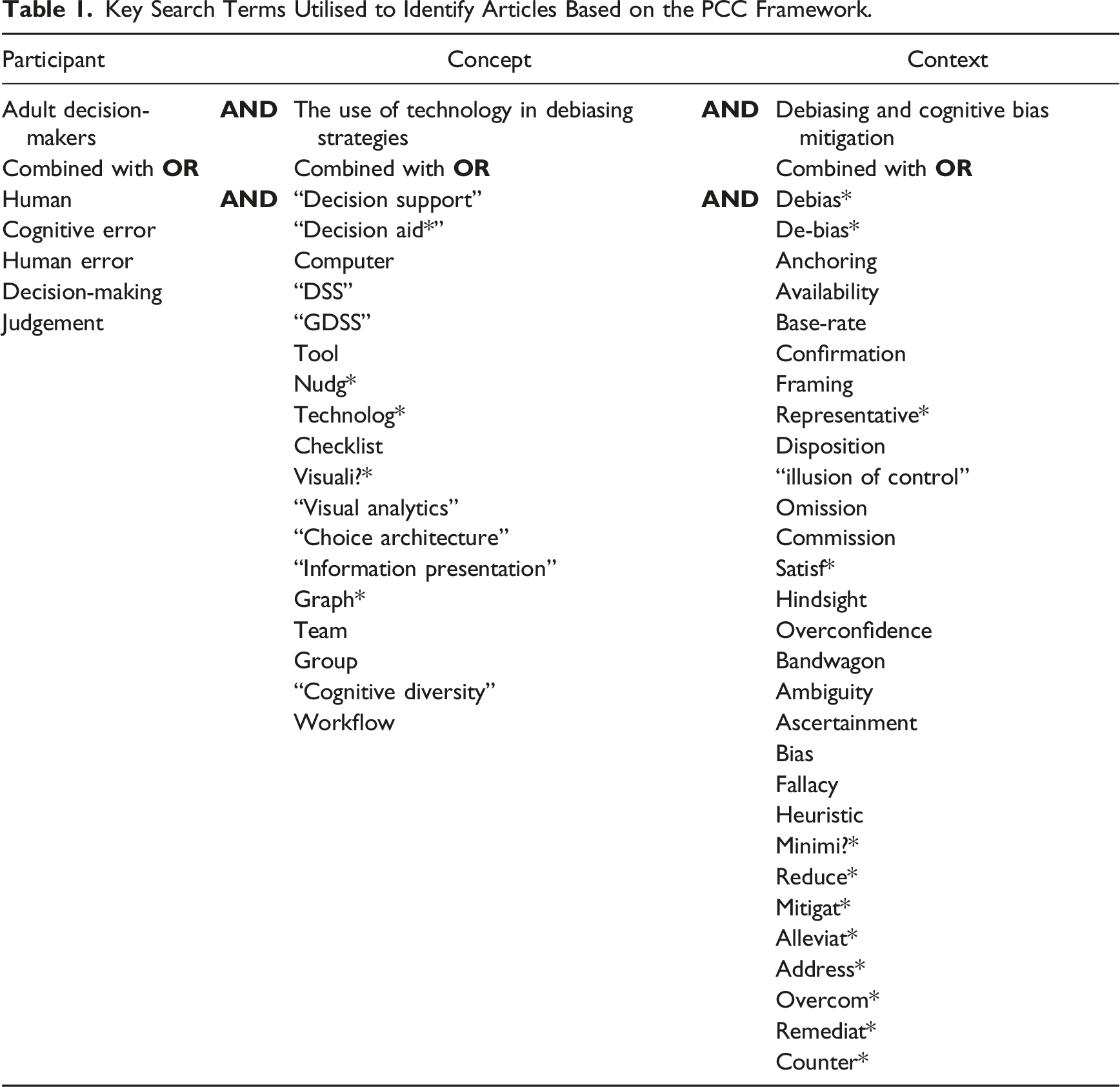

Following the PCC (Participant, Concept, Context) framework recommended by the Joanna Briggs Institute (JBI; Pollock et al., 2023), the primary research question guiding the scoping review is “What types of technological debiasing strategies have been employed to debias human judgement and decision-making across domains?”

Identifying Relevant Studies

Key Search Terms Utilised to Identify Articles Based on the PCC Framework.

Study Selection

Studies were selected if they met the following inclusion criteria: peer-reviewed experimental studies published in English that explicitly aimed to minimise cognitive bias in human decision-making or judgements using any technological strategy and addressed at least one type of cognitive bias. Studies from all industry contexts and disciplines, and involving healthy adult populations, were eligible. Studies were excluded if they (i) addressed debiasing for behaviour change or learning, (ii) employed cognitive or motivational strategies, (iii) involved nonadult populations, (iv) involved populations with mental health conditions, (v) were not peer-reviewed publications or (vi) were not available in full text. There were no limits on year of publication; all relevant studies published before September 2023 were included.

Charting the Data

Information extracted from the identified articles included authorship, year of publication, sample size and type, experimental design, level of analysis (individual vs. group), industry domain, biases as named in the studies, cognitive bias category, debiasing strategies tested, debiasing category, exposure variables, outcome variables and effectiveness of the debiasing strategy (effective, ineffective or partially effective) as reported by the studies.

Collating and Summarising Data

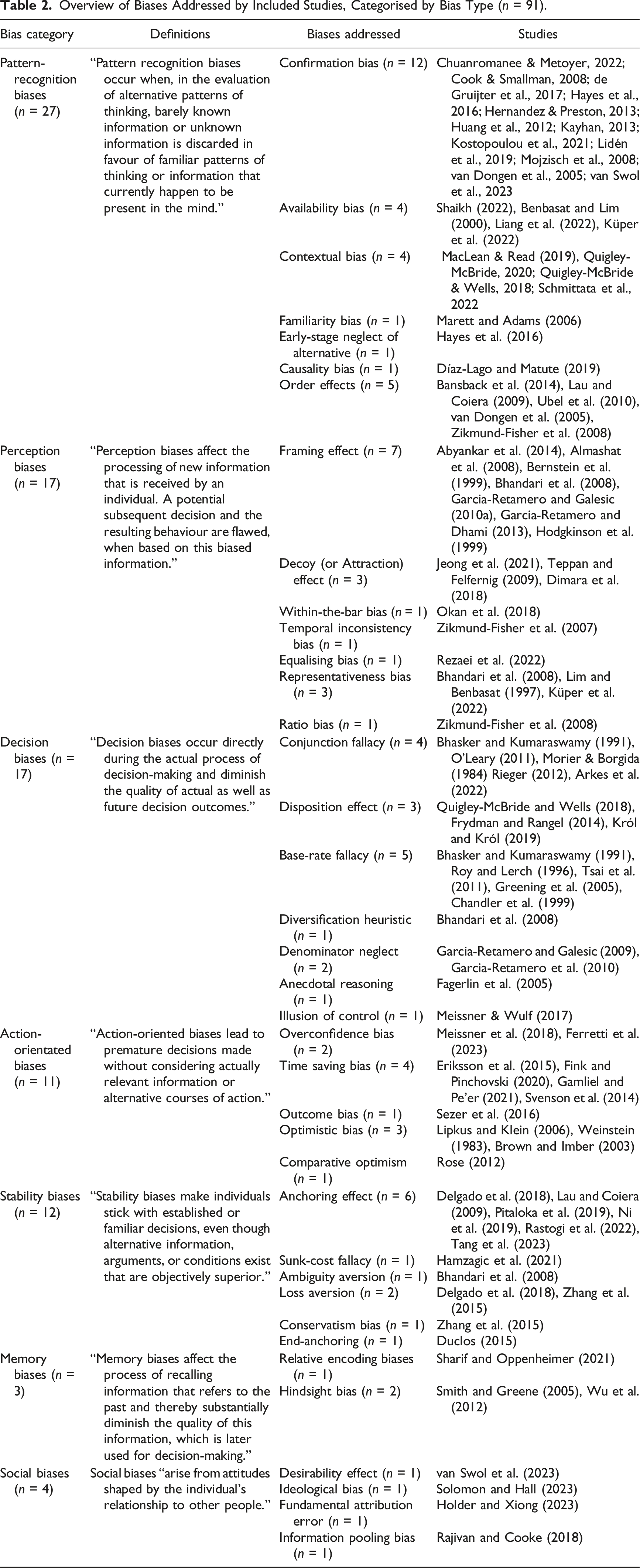

To categorise the cognitive biases studied, we used Fleischmann et al.’s (2014) taxonomy of eight categories of cognitive bias in the information systems literature, because it was derived from analysis of literature in an applied performance domain. The taxonomy comprises (i) perception biases, (ii) pattern-recognition biases, (iii) memory biases, (iv) decision biases, (v) action-orientated biases, (vi) stability biases, (vii) social biases and (viii) interest biases. The debiasing strategies described in the included studies were classified, based on distributed cognition principles, into the three aforementioned categories: (i) information design, (ii) procedural debiasing and (iii) group composition and structure. Descriptive statistics were used to summarise the data, which are presented in tabular and graphical formats as appropriate to aid synthesis.

Results

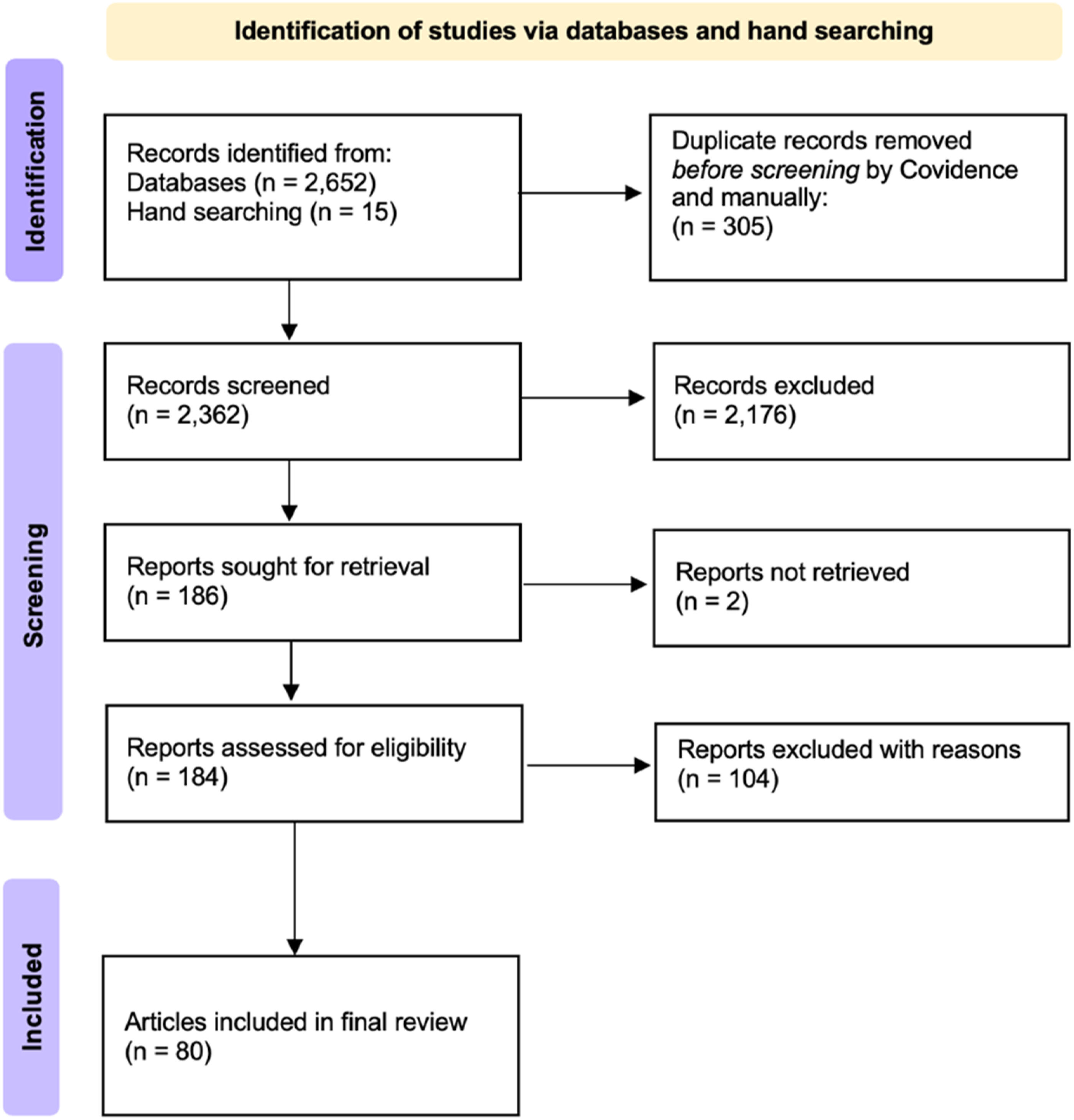

Keyword and hand searches yielded a total of 2667 records. After the removal of 305 duplicates, two independent reviewers screened 2362 abstracts. Of the 184 full texts screened, 80 articles met the inclusion criteria and were included for data extraction. See Figure 1 for a flow chart of the screening process. The included studies reported 100 debiasing strategies addressing 91 cognitive biases. Of these studies, 71 (88.8%) investigated one bias, eight (10.0%) investigated two biases and one (1.3%) addressed three biases within one debiasing intervention. Fifty-eight studies (72.5%) used a single debiasing strategy and 20 (25%) employed more than one strategy to debias one or more biases.

Figure 1. Methods flow chart based on Preferred Reporting Items for Systematic reviews and Meta-Analyses extension for Scoping Reviews (PRISMA-ScR; Tricco et al., 2018).
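The proportions reported above follow directly from the counts. The following Python is a minimal sketch that recomputes the screening flow and the study-level shares. It assumes that percentages are taken over the 80 included articles and rounded half-up to one decimal place; that rounding convention is our assumption. All variable and function names are illustrative.

```python
from decimal import Decimal, ROUND_HALF_UP

# Counts as reported in the Results; variable names are illustrative.
RECORDS_IDENTIFIED = 2667
DUPLICATES_REMOVED = 305
FULL_TEXTS_SCREENED = 184
INCLUDED_ARTICLES = 80

ABSTRACTS_SCREENED = RECORDS_IDENTIFIED - DUPLICATES_REMOVED
EXCLUDED_AT_FULL_TEXT = FULL_TEXTS_SCREENED - INCLUDED_ARTICLES

# Included studies keyed by how many biases one intervention addressed.
STUDIES_BY_BIAS_COUNT = {1: 71, 2: 8, 3: 1}


def pct(n: int, total: int = INCLUDED_ARTICLES) -> Decimal:
    """Share of `total` in percent, rounded half-up to one decimal place (assumed convention)."""
    share = Decimal(100 * n) / Decimal(total)
    return share.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    # The three bias-count groups should account for every included study.
    assert sum(STUDIES_BY_BIAS_COUNT.values()) == INCLUDED_ARTICLES
    print(f"Abstracts screened: {ABSTRACTS_SCREENED}")
    print(f"Excluded at full-text stage: {EXCLUDED_AT_FULL_TEXT}")
    for n_biases, n_studies in STUDIES_BY_BIAS_COUNT.items():
        print(f"{n_studies} studies addressed {n_biases} bias(es): {pct(n_studies)}%")
    print(f"Single strategy: {pct(58)}%; more than one strategy: {pct(20)}%")
```

Under these assumptions, the two-bias group corresponds to 8/80 = 10.0% of included studies. The counts also imply that 104 full texts were excluded at the full-text stage.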

Domains of Included Studies

The most represented domains were healthcare (n = 26, 32.5%), management (n = 13, 16.3%) and legal or forensic decision-making (n = 9, 11.3%). Three studies (3.8%) examined transport-related judgements. Other domains studied included finance, construction, software development, intelligence analysis, and political and sport-related judgements. Seventeen studies (21.3%) did not study debiasing within a specialised industry or domain, instead recruiting lay participants for lab-based decision-making tasks and experimental paradigms with no specific domain focus.

Study Sample Characteristics

Sample sizes varied widely, ranging from 27 to 4101 participants. Thirteen studies recruited participants from the relevant judgement context, that is, samples representative of those making the decisions in real-world settings, such as navy intelligence analysts (Cook & Smallman, 2008), physicians (Arkes et al., 2022) and crime scene investigators (de Gruijter et al., 2017). Thirty-three studies included student samples. Of these, 28 used student-only samples. Three involved students in educational training relevant to the industry or context of application, such as law students (Lidén et al., 2019; Schmittata et al., 2022) and medical students (Almashat et al., 2008). Four studies included a mixture of student and professional samples, namely judges and law students (Lidén et al., 2019) and managers and management students (Hodgkinson et al., 1999; Ni et al., 2019; Pitaloka et al., 2019). Twenty studies involved unspecified samples drawn from the general population.

Biases Addressed

Overview of Biases Addressed by Included Studies, Categorised by Bias Type (n = 91).

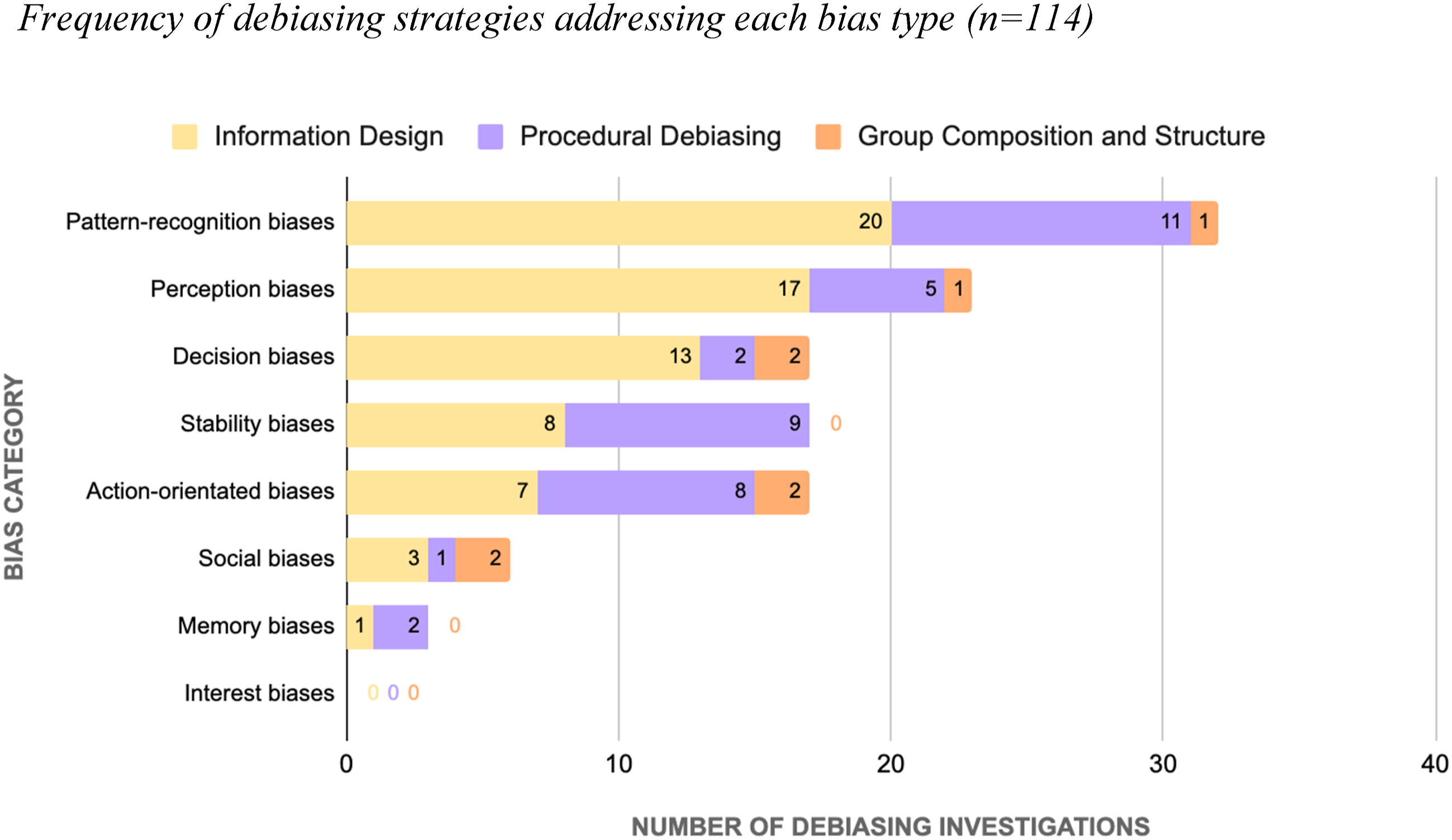

Debiasing Strategies Investigated

Of the 100 debiasing strategies, 59 used information design (i.e., presentation, restructuring or selective presentation of information), 34 used procedural debiasing and 7 used group composition and structure. Several studies used a single debiasing strategy to address multiple biases, and some used multiple strategies to address a single bias, yielding 114 individual debiasing-bias investigation pairs (Figure 2). See Appendix 1 for an overview of included papers classified by debiasing strategy and cognitive bias type.

Figure 2. Frequency of debiasing strategies addressing each bias type (n = 114).
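Because one strategy can target several biases, the number of strategy-bias pairs can exceed the number of strategies. The Python sketch below illustrates this expansion and the per-cell tally behind a frequency figure. The record layout, the function names and the three toy records are hypothetical and are not data from the review.

```python
from collections import Counter
from typing import NamedTuple


class Investigation(NamedTuple):
    """One debiasing strategy as tested in one study (hypothetical record layout)."""
    strategy_id: str
    category: str                # "information design", "procedural" or "group"
    bias_types: tuple[str, ...]  # bias categories the strategy targeted


def expand_pairs(investigations: list[Investigation]) -> list[tuple[str, str, str]]:
    """Emit one (strategy, category, bias type) pair per bias a strategy targeted."""
    return [
        (inv.strategy_id, inv.category, bias)
        for inv in investigations
        for bias in inv.bias_types
    ]


def tally_by_category_and_bias(pairs: list[tuple[str, str, str]]) -> Counter:
    """Count pairs per (debiasing category, bias type) cell."""
    return Counter((category, bias) for _, category, bias in pairs)


# Toy records for illustration only; these are not data from the review.
EXAMPLE = [
    Investigation("S1", "information design", ("decision",)),
    Investigation("S2", "information design", ("decision", "memory")),
    Investigation("S3", "procedural", ("pattern-recognition",)),
]

if __name__ == "__main__":
    pairs = expand_pairs(EXAMPLE)
    print(f"{len(EXAMPLE)} strategies -> {len(pairs)} strategy-bias pairs")
    for (category, bias), n in sorted(tally_by_category_and_bias(pairs).items()):
        print(f"{category:<20} {bias:<20} {n}")
```

In the toy data, three strategies yield four pairs, because strategy S2 targets two bias categories.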

Reported Effectiveness of Technological Debiasing Strategies

Of the 100 debiasing strategies, study authors reported 80 to be effective in minimising bias. A strategy was considered effective based on a single criterion: a statistically significant difference between experimental groups, wherein one group’s responses were significantly less prone to cognitive bias than the comparator’s (Figure 3). Fourteen strategies were reported as ineffective, either because no significant difference between groups was found (n = 12/14) or because biased reasoning increased significantly (n = 2/14). Six strategies were partially effective. These were either effective under specific conditions (Abhyankar et al., 2014; Ni et al., 2019; Rieger, 2012; Sezer et al., 2016; Shaikh, 2022; van Dogen et al., 2005) or effective in mitigating one of the biases studied but not another (Bhasker & Kumaraswamy, 1991).

Figure 3. Frequency of debiasing investigations and their effectiveness as reported by the studies reviewed (n = 100).
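The effectiveness rule described above can be expressed as a small decision procedure. This is a hedged sketch rather than the review's coding protocol, because the review relied on study authors' own reports.

The function below makes three assumptions:
- each strategy comes with per-condition (or per-bias) p-values;
- each p-value carries a flag saying whether bias was lower in the debiased group;
- significance is judged against a conventional threshold of 0.05.

The names `Effectiveness`, `significant_reduction` and `classify` are hypothetical.

```python
from enum import Enum


class Effectiveness(Enum):
    EFFECTIVE = "effective"
    PARTIALLY_EFFECTIVE = "partially effective"
    INEFFECTIVE = "ineffective"


def significant_reduction(p_value: float, bias_lower: bool, alpha: float = 0.05) -> bool:
    """True when the debiased group is significantly *less* biased than the comparator."""
    return p_value < alpha and bias_lower


def classify(results: list[tuple[float, bool]], alpha: float = 0.05) -> Effectiveness:
    """Classify one strategy from its per-condition (or per-bias) comparisons.

    Each result is (p_value, bias_lower), where bias_lower records whether the
    debiased group showed less bias than the comparator group.
    """
    if not results:
        raise ValueError("at least one comparison is required")
    hits = [significant_reduction(p, lower, alpha) for p, lower in results]
    if all(hits):
        return Effectiveness.EFFECTIVE
    if any(hits):
        # Effective only under some conditions, or for one bias but not another.
        return Effectiveness.PARTIALLY_EFFECTIVE
    # No significant difference, or a significant difference in the wrong direction.
    return Effectiveness.INEFFECTIVE


if __name__ == "__main__":
    print(classify([(0.01, True)]).value)                # significant reduction
    print(classify([(0.01, True), (0.40, True)]).value)  # effective in one condition only
    print(classify([(0.02, False)]).value)               # significant increase in bias
```

The sketch treats a significant reduction in some, but not all, comparisons as partial effectiveness. This mirrors the two partial cases named in the text.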

Levels of Analysis: Individual Versus Group

Nine studies (11.3%) examined cognitive biases in group judgements. However, none addressed biases that occur uniquely in groups, such as groupthink (groups’ tendency to maintain unanimity in their beliefs or ideas even when these are faulty; Janis, 1971) or the bandwagon effect (individuals’ tendency to think and behave as others do and “join the crowd”; Leibenstein, 1950, p. 184). The biases addressed at the group level were ideological bias, illusion of control bias, overconfidence bias, conjunction fallacy, availability bias, confirmation bias, representativeness bias, hindsight bias and information pooling bias. One study (O’Leary, 2011) compared individuals’ and groups’ proneness to the conjunction fallacy, concluding that groups are less prone to the bias than individuals.

Outcome Measures

A range of outcome measures were used in the identified studies to operationalise biased and unbiased judgement and decision-making. Most studies measured one of the following:
- probability or likelihood estimates (e.g., Chandler et al., 1999; Küper et al., 2022; Okan et al., 2018);
- judgement or decision accuracy (e.g., Fink & Pinchovski, 2020; MacLean & Read, 2019; Tang et al., 2023);
- absolute and relative risk estimates (e.g., Garcia-Retamero & Galesic, 2010b; Lipkus & Klein, 2006; Ubel et al., 2010);
- choice or preference judgements (e.g., Abhyankar et al., 2014; Bernstein et al., 1999; Jeong et al., 2021);
- information behaviour (e.g., Kostopoulou et al., 2021; Mojzisch et al., 2008).

Others reported confidence in judgements or decisions, and domain-specific measures such as guilt assessments (Lidén et al., 2019; Schmittata et al., 2022) and jury verdicts (Smith & Greene, 2005).

Discussion

Through systematically scoping the literature, we identified 100 technological debiasing strategies, tested across domains in 80 studies. We organised them into three novel, theoretically derived categories based on the distributed cognition framework. The idea of extending debiasing to the decision environment is well established. Building on it, we found considerable scope to advance empirical work; avenues for this work are discussed below, alongside considerations for practical application.

Hinsz (2015) proposed that teams are a type of technology. Defining technology as “the specific methods, materials and devices used to solve practical problems,” he suggested that individual decision-makers use team members as “cognitive technology” (p. 219) to solve problems, because team members extend the capacity of an individual’s cognition. Viewing teams as technology allows the strengths of team cognition to be systematically leveraged and its weaknesses minimised to enhance task performance. This “teams-as-technology” perspective aligns with a fundamental principle of the distributed cognition framework, that cognition occurs in a system of interdependent individuals. By integrating evidence on individual and team cognition, team composition can be systematically modified to fit the task. In practice, this may involve assessing individual and group attributes to form teams that can minimise bias-driven judgement errors.

Gaps and Future Research

The present review covered debiasing investigations across multiple domains, including healthcare, legal, organisational and financial decision-making. All but two studies (Kostopoulou et al., 2021; Solomon & Hall, 2023) were conducted in laboratory or otherwise controlled environments, and many involved student samples. This limits the conclusions that can be drawn about applicability in naturalistic performance settings. Establishing the efficacy of debiasing strategies in human factors practice will require more work on ecological validity. This could take the form of rigorous quasi-experimental investigations or of synthetic task environments that approximate real-world conditions of performance. Specific needs for the field include (i) more representative samples in future studies, (ii) inquiry into experts’ openness to technological debiasing strategies, (iii) measurement of the efficacy of the strategies and (iv) evaluation of the impact of strategies on cognitive load or time to complete tasks.

Further, most of the studies identified in the present review addressed biases at the individual level. Seven investigations aimed to debias decision-making at the group level, and no studies examined biases that occur only at the group level. Given that many real-world high-stakes decisions are made by teams, testing debiasing strategies at the group level is critical for advancing the application of debiasing in human factors.

The digital and computational nature of many contemporary real-world tasks means that debiasing strategies must evolve rapidly to keep pace. At the intersection of information design and procedural debiasing lie avenues for novel research on real-time visual feedback (Shaikh, 2022), including customisable and interactive visualisations (Tsai et al., 2011). The feasibility of computationally aided bias detection has already been demonstrated (Crowley et al., 2013; Wall et al., 2017), using objective behavioural interaction metrics from visual analytic platforms and tools. In addition to assessing the probability of bias in real time, such systems could enable feedback and customisable digital interfaces that support human cognition.

An important distinction is between mitigating cognitive bias and mitigating the negative effects of cognitive bias. Not all biased decision-making is undesirable or inaccurate; in certain types of decision-making, specific biases can lead to ideal outcomes while saving time and cognitive resources. Debiasing can therefore sometimes take the form of “rebiasing,” that is, inducing one bias to offset the effects of another (Larrick, 2004), or leveraging an existing adaptive heuristic to guide decision-making towards the optimal outcome. For example, information can be ordered so that decision-makers assign greater weight to the initial chunk of information, leveraging anchoring bias. This may help offset confirmation bias when the initial information either contains evidence that disconfirms prior beliefs or is objectively the most important factor in the decision situation. Rebiasing involves trading off the risks associated with each bias and prioritising the bias with the greater upside or smaller downside. It therefore requires robust experimental testing before implementation in human factors practice for consequential real-world decisions.

Practical Implications

To improve judgement and decision-making (JDM) performance, one may (i) leverage biased tendencies, (ii) minimise the impact of bias on outcomes or (iii) eliminate the impact of bias on outcomes. For application outside experimental settings, we draw on the extant literature and on Soll et al.’s (2015) recommendations for choosing debiasing strategies. From these, we propose a set of criteria for assessing the applicability of a technological debiasing strategy to a given decision situation. These include (i) risks associated with bias, (ii) nature and sources of bias, (iii) judgement or decision task properties, (iv) properties of the decision environment, (v) team properties (for team-based tasks) and (vi) individual psychological and physiological factors, all described below.

Risks Associated with Bias

Assessing the risks associated with bias involves establishing two things:
- the potential for harm (What is the potential for harm if a cognitive bias is left unchecked?);
- the margin of error (What margin of error defines the threshold for unacceptable risk?).

Nature and Sources of Bias

To specify the target of a debiasing strategy and assess the source or nature of the cognitive bias, researchers can draw on several classification systems proposed in the literature, including that of Fleischmann et al. (2014). Another notable classification is Arkes’ (1991), which categorises biases into three types:
- association-based (due to reliance on the accessibility of information in memory);
- strategy-based (due to use of suboptimal reasoning strategies or misapplication of decision rules);
- psychophysically based (due to improper translation of, or access to, information stimuli in the environment).

The source of a bias can determine which features of the decision environment can be modified to minimise its undesired effects.

Judgement and Decision-Making (JDM) Task Properties

Task properties relevant to debiasing include the following:
- task type (What type of decision task is being performed? e.g., probability or likelihood assessment, hypothesis evaluation, choice and risk estimation);
- task frequency (How frequently is this task performed? Is it performed nonroutinely or regularly and repetitively?);
- task complexity (How complex is the task in terms of the number of distinct information items and acts involved, the degree of integration among items required to perform it and the variability of task components and their relationships?) (Wood, 1986);
- value criteria (What is considered an optimal outcome? How is the success or failure of a decision evaluated?);
- time (Under what time constraints is the task performed? Is the time allocated to a task fixed or flexible?).

Properties of the Decision Environment

The properties of the decision environment include the following:
- role (Who is involved in performing the JDM task?);
- information needs (What information is required and used to perform the task?);
- cognitive artefacts (What artefacts are currently used to perform the task?);
- information flow (How does information currently flow, and how should it flow, from one element of the system to another? e.g., information flow direction, transformation, facilitators and barriers).

Team Properties (For Team-Based Tasks)

For team-based tasks, the team properties to consider include the following:
- role distribution (What are the established roles and responsibilities in the team? How rigid or fluid are they?);
- cognitive diversity and compatibility (Across what characteristics should teams be homogeneous or heterogeneous to ensure an adequate information pool and comprehensive information elaboration and synthesis?) (Mello & Rentsch, 2015; Wang et al., 2024);
- team dynamics (What team dynamics facilitate or hinder effective problem-solving? e.g., cohesiveness, hierarchical rigidity and team familiarity);
- integration of any nonhuman teammates (How can autonomous or nonautonomous agents, such as artificial intelligence, overcome the limitations of human cognition, or help experts overcome them, to enhance performance?) (Vold, 2024).

Psychological and Physiological Factors

Psychological and physiological considerations for debiasing include the following:
- cognitive load (How much intrinsic and extraneous cognitive load are individuals under? Would the debiasing strategy reduce or add to the cognitive load required to perform tasks?);
- fatigue (What level of fatigue are individuals experiencing? For instance, minimising bias-related errors may warrant priority where fatigue is likely, such as decisions made during an end-of-shift handover in a hospital.);
- individual differences (How do the individuals responsible for the task fare on individual difference attributes such as personality, need for cognition and working memory capacity?).

Limitations of the Review

Whilst the results of this scoping review are encouraging and point to a growing body of literature on debiasing using distributed cognition, our findings should be interpreted in light of several limitations. First, given the fragmented nature of research in the area and the use of a wide range of terms to describe the same phenomena, our review may have missed some relevant studies. Inconsistent terminology, definitions and conceptualisations of bias and debiasing also limit comparability across studies. Some ambiguities remain: certain debiasing strategies can fall into more than one category, since distributed cognition is a complex process comprising interwoven subprocesses. For instance, debiasing techniques involving “choice architecture” can be considered either procedural debiasing or information design. While the categorisation presented here is not absolute, this scoping review lays groundwork on which future studies of debiasing processes and interventions may build. Further, because perspectives on the nature and sources of biases differ, assigning cognitive biases neatly to categories has been an ongoing challenge in the literature. Fleischmann et al.’s (2014) taxonomy offers a categorisation relevant to human factors; however, its bias categories are not definitive and should be regarded as a starting point for developing debiasing hypotheses in context.

Second, most of the strategies examined in the reviewed studies were reported to be effective, whether by eliminating the impact of bias on the decision outcome, reducing that impact, or reducing the undesirable effects of bias on the judgement or decision outcome. However, effectiveness was reported relative to the conditions specified in a heterogeneous set of experimental studies, which limits comparison of the effectiveness of the three technological debiasing strategy types. Given this heterogeneity in study domains, experimental manipulations and outcomes, a meta-analysis was outside the scope of this scoping review (Lipsey & Wilson, 2001).

Third, to keep the review manageable, our inclusion criteria were limited to studies that explicitly aimed to debias decision-making and judgement. However, experimental studies of any cognitive bias in which one condition displayed effects of bias and another did not may also provide evidence of debiasing. Despite this limitation, our work demonstrates how practitioners and researchers can apply a framework of distributed cognition principles to assess current evidence on debiasing (whether explicit or not) and to guide future work.

Finally, and ironically, even the research literature on bias is susceptible to publication bias. This is well documented in the academic, and particularly the psychological, literature (e.g., Gaillard & Devine, 2022; Ioannidis et al., 2014; Maier et al., 2022; Siegel et al., 2022), wherein evidence supporting the effectiveness of a strategy may be more likely to be published than evidence to the contrary (i.e., the file drawer effect). Given the breadth of work in this area, it was not possible to access unpublished data from all research groups studying this phenomenon.

Conclusion

Cognitive processes are not confined to the minds of individuals. In scoping the literature on technological debiasing, this review highlights the potential of a distributed cognition approach to debiasing judgements through technological strategies. Our review established a link between distributed cognition approaches in human factors and cognitive bias mitigation, and considered how these principles apply to real-world practice. The distributed cognition-based classification can help human factors practitioners and researchers identify debiasing strategies relevant to their domains of interest and provides a structured basis to guide practice and future research. Any domain that requires simultaneous, constant and collaborative processing of information, particularly under conditions of uncertainty, information overload or time pressure, can benefit from integrating debiasing considerations into the design of cognitive systems. Technological debiasing may be particularly impactful for teams who must collaborate to make consequential decisions in uncertain contexts ranging from spaceflight to surgery.

Key Points

• Negatively consequential cognitive biases in high-stakes decision-making can be considered a product of inappropriate flow and representation of information in the decision environment.
• We propose that distributed cognition theory offers a new perspective on understanding and implementing bias mitigation strategies in context, which we call technological debiasing strategies.
• The 80 papers analysed here provide evidence supporting the applicability of technological debiasing strategies across domains, while revealing significant gaps and opportunities.
• Real-world application of these strategies requires holistic human factors consideration of decision environments, as well as further conceptual and empirical work.

Supplemental Material

Supplemental Material for Debiasing Judgements Using a Distributed Cognition Approach: A Scoping Review of Technological Strategies by Harini Dharanikota, Emma Howie, Lorraine Hope, Stephen J. Wigmore, Richard J. E. Skipworth and Steven Yule in Human Factors.

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Dr Yule reports research grants from the National Institutes of Health, Canadian Department of National Defence, National Aeronautics and Space Administration, Melville Trust for Care and Cure of Cancer and Royal College of Surgeons of Edinburgh, outside the submitted work.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by The Melville Trust for Care and Cure of Cancer (R47363).

References