Abstract

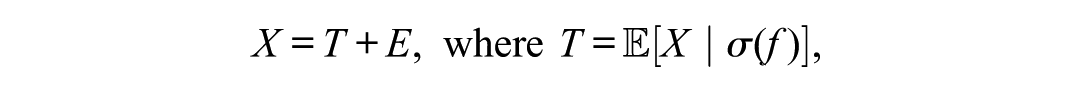

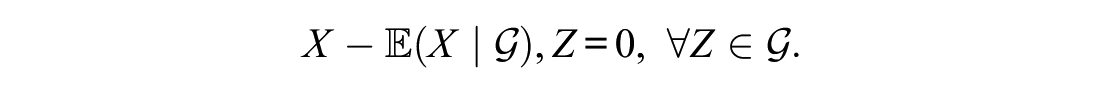

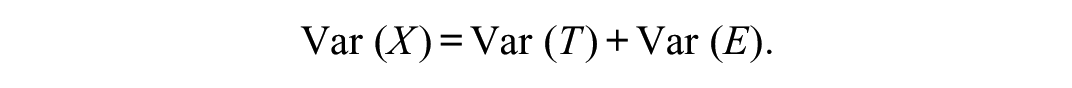

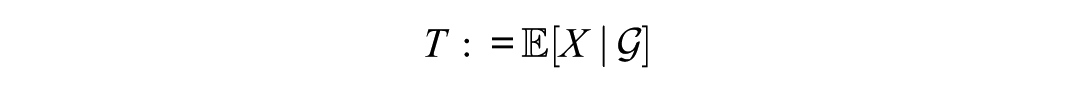

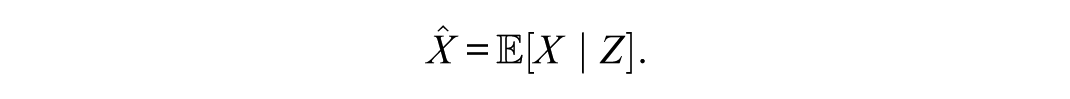

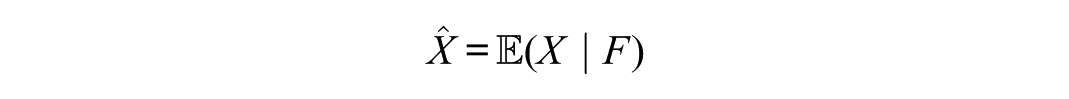

This article reconceptualizes reliability as a theorem derived from the projection geometry of Hilbert space rather than an assumption of classical test theory. Within this framework, the true score is defined as the conditional expectation

Keywords

In practice, researchers estimate reliability using test–retest, alternate-forms, or rater-agreement designs, although single-administration designs remain the most common. Although reliability is reported almost universally, the definition of the true score is rarely made explicit, which obscures both the differences among competing formulations of test theory and the fact that multiple formulations exist. Confusion persists in textbooks and empirical studies regarding true scores, error scores, and the reliability of measurement data. Several authors have addressed these ambiguities (e.g., Kroc & Zumbo, 2020; Raykov & Marcoulides, 2011; Sijtsma, 2009; Sijtsma & Pfadt, 2021; Zumbo & Kroc, 2019; Zumbo & Rupp, 2004), yet the ambiguities continue to hinder both the interpretation of reliability and the selection of appropriate indices. Among book-length treatments, Raykov and Marcoulides (2011, Chapter 5) provide one of the clearest discussions of these misconceptions.

Reliability is a foundational concept in psychometric theory, yet its formal definition remains fragmented across different frameworks. Classical test theory (CTT) defines reliability as the ratio of true-score variance to observed-score variance. Despite its ubiquity, this definition often lacks a rigorous mathematical basis and is treated as an assumption rather than a theorem.

Recent developments in operator-theoretic test theory provide a modern foundation for reliability grounded in Hilbert space geometry. Within this framework, the true score is the orthogonal projection of the observed score onto the subspace of random variables measurable with respect to the latent construct. Reliability then emerges as the squared cosine between the observed and true scores, or equivalently, the Rayleigh quotient associated with the projection operator.

This article extends Zimmerman and Zumbo’s (2001) operator-theoretic approach by establishing reliability as a corollary of the projection theorem in

Beyond its mathematical reformulation, this framework clarifies the interpretation of reliability in both theoretical and applied contexts. It underscores that reliability is not merely a property of test scores but an intrinsic feature of the projection geometry linking observed and true scores.

Purpose and Structure of the Paper

It has been nearly 60 years since Zimmerman initiated this line of inquiry into test theory, which resulted in the contemporary operator-theoretic test theory (e.g., Williams & Zimmerman, 1977; Zimmerman, 1969a, 1969b, 1969c, 1970, 1972, 1975, 1976; Zimmerman et al., 1968), much of it published in this journal. In light of this history, the purpose of the present article is to revisit, extend, and refine foundational psychometric concepts of reliability for contemporary use in an operator-theoretic test theory formalization. These concepts will recur throughout the exposition, their meaning enriched as methodological and theoretical components are introduced. The repetition of key terms and concepts reflects their evolving role within the developing framework.

The purpose of this article is to formalize reliability as a theorem within an operator-theoretic framework, establishing conditional expectation as the orthogonal projection in

The article proceeds as follows. The section Preliminary Remarks: Definitions and Levels of Abstraction in Mental Test Theory sets the stage by providing key definitions and tracing the development of test theory through progressively more abstract formalisms. The section Hilbert Space Framework and Conditional Expectation introduces the Hilbert space foundation and demonstrates that conditional expectation functions as an orthogonal projection operator. The section Variance Decomposition and Reliability as Projection develops the variance decomposition central to CTT and shows how reliability emerges as the squared cosine between true and observed scores. The section Extensions to Regression, Factor Models, and Time Series generalizes this result across models that share the projection structure. The section Numerical Illustrations provides examples that clarify the computational and interpretive aspects of the theory. The section Implications for Estimation and Measurement discusses the conceptual consequences for psychometric practice. The final section, Conclusion and Future Directions, highlights potential extensions of operator-theoretic methods in measurement theory.

Technical details appear in two appendices. Appendix A presents a worked numerical example, and Appendix B provides a measure-theoretic construction of conditional expectation using the Radon–Nikodým theorem.

Preliminary Remarks: Definitions and Levels of Abstraction in Mental Test Theory

The development of mental test theory reflects a progression from concrete score formulations to increasingly abstract mathematical representations. Early models emphasized observable scores and empirical reliability coefficients, whereas later frameworks expressed reliability and validity in algebraic and probabilistic terms. Contemporary formulations extend these ideas into geometric and operator-theoretic spaces, where random variables are treated as elements of

Early contributions by Spearman (1904) and Yule established the foundation, later synthesized by Gulliksen (1950). However, these early approaches lacked formal rigor. A major advance came with the formalization of CTT by Guttman (1945), Cronbach et al. (1963), Novick (1966), Rozeboom (1966), and Lord and Novick (1968). These authors explicitly defined observed, true, and error scores as random variables with well-specified properties expressed through variances, covariances, and correlations. As Raykov and Marcoulides (2011) noted, CTT dominated test development across the educational, behavioral, and social sciences for most of the 20th century, though not without controversy.

Gulliksen (1950), Novick (1966), Novick and Lewis (1967), and Lord and Novick (1968) codified reliability within this axiomatic framework. By contrast, Zimmerman (1975), Steyer (1988, 1989), and Zimmerman and Zumbo (2001) introduced measure-theoretic and operator-theoretic alternatives that treat reliability as a provable consequence of conditional expectation and orthogonal projection in Hilbert space. Conditional expectation is central in both probability and statistics (e.g., Athreya & Lahiri, 2006; Billingsley, 1995; Durrett, 2010; Steyer & Nagel, 2017) and plays an equally central role in psychometric theory (Steyer, 1988, 1989; Zimmerman, 1975; Zimmerman & Zumbo, 2001). As Kroc and Zumbo (2020, p. 6) observed, conditional expectation determines the measurement error structure that specifies which sample units are exchangeable across models. Zimmerman (1975) advanced a measure-theoretic definition of the true score as a conditional expectation, extending earlier treatments by Gulliksen (1950) and Novick (1966). Zimmerman and Zumbo (2001) subsequently formalized this approach in an operator-theoretic framework that extends beyond CTT.

Definitions of Reliability

Although Zimmerman (1975), Steyer (1988, 1989), and Zimmerman and Zumbo (2001) provide mathematically rigorous formalizations of test theory, they do not invalidate earlier formulations such as those of Novick (1966) or Lord and Novick (1968). Rather, they extend and complement them, showing how distinct mathematical frameworks—axiomatic, measure-theoretic, and operator-theoretic—yield different but coherent perspectives on test theory and reliability. Across these perspectives, reliability has been conceptualized as replication, as axioms, as variance decomposition, or as projection. Coefficients such as Cronbach’s (1951)

Definition of CTT

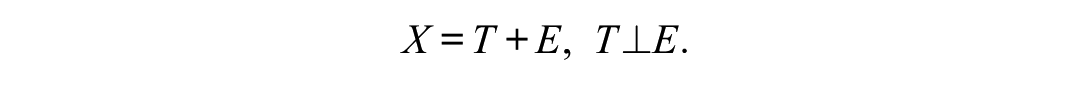

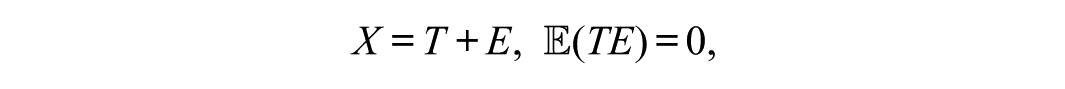

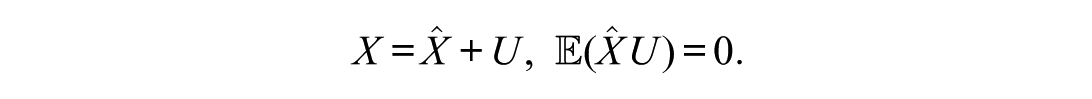

Following Zimmerman and Zumbo (2001), Zumbo and Kroc (2019), and Kroc and Zumbo (2020), CTT is not an empirical model but a formal structure defined by

where X denotes the observed score,

Mathematical Consequences (Not Assumptions)

From this definition, two properties follow immediately:

CTT is thus definitional rather than assumptive. What the classical literature sometimes describes as “axioms” (mean-zero error, uncorrelated true and error scores) are, in fact, theorems derived from the formal definition above.
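Both consequences can be checked in a few lines. The sketch below assumes that the true score is written T and defined as the conditional expectation of X given a sub-σ-algebra, and that the error score is written E = X − T. The symbols T, E, and 𝒢 are illustrative assumptions and may differ from the notation of the formal definition; X is assumed to have finite variance.

```latex
% Assumed notation: T = \mathbb{E}[X \mid \mathcal{G}], \qquad E = X - T.
\begin{align*}
\mathbb{E}[E]
  &= \mathbb{E}[X] - \mathbb{E}\bigl[\mathbb{E}[X \mid \mathcal{G}]\bigr]
   = \mathbb{E}[X] - \mathbb{E}[X] = 0
   && \text{(tower property)} \\
\mathbb{E}[E \mid \mathcal{G}]
  &= \mathbb{E}[X \mid \mathcal{G}] - T = 0
   && \text{($T$ is $\mathcal{G}$-measurable)} \\
\operatorname{Cov}(T, E)
  &= \mathbb{E}[T E] - \mathbb{E}[T]\,\mathbb{E}[E]
   = \mathbb{E}\bigl[T\,\mathbb{E}[E \mid \mathcal{G}]\bigr] - 0 = 0
\end{align*}
```

Neither step uses anything beyond the definition of the true score as a conditional expectation, which is why both properties are consequences of the definition and not separate assumptions.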

Definitions of Reliability in CTT

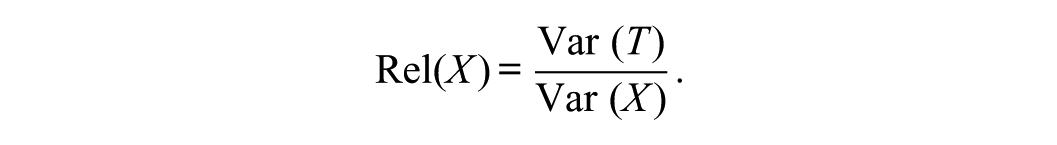

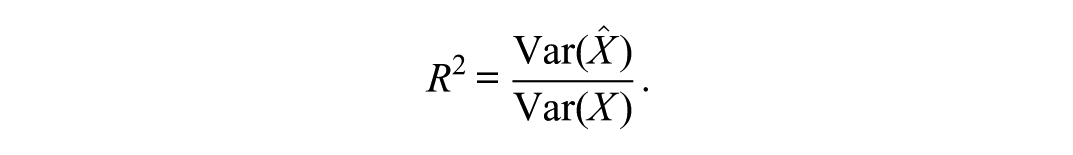

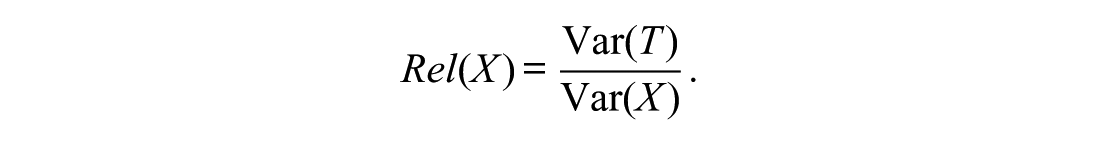

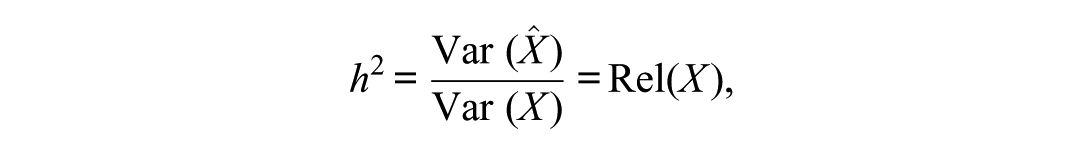

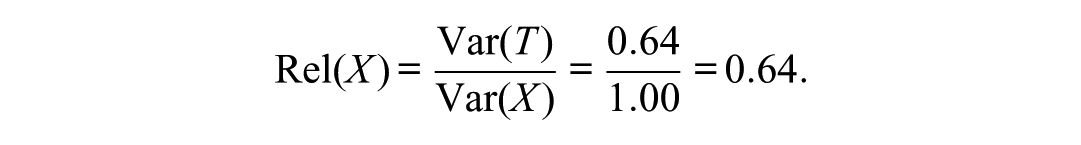

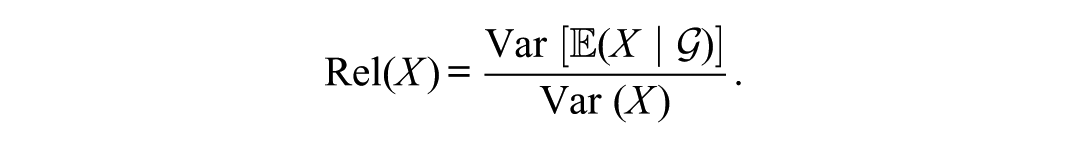

In CTT, reliability is defined as the ratio of true-score variance to total variance:

This canonical formulation expresses the efficiency of measurement as the ratio of signal (true variance) to total variance. It emphasizes that reliability is a population-level property, not an individual one, and depends on how true and error scores are formally defined within a given measurement framework.
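As a rough numerical illustration of the ratio, the following sketch simulates a hypothetical population in which the true-score variance is 4 and the error variance is 1, so the population reliability is 4 / (4 + 1) = 0.8. The variable names, the normal distributions, and the sample size are all assumptions made for this example; they are not taken from the article.

```python
import random

random.seed(12345)  # fixed seed so the sketch is reproducible

n = 20000
# Hypothetical population: true scores with variance 4 and errors with
# variance 1, generated independently, so reliability is 4 / (4 + 1) = 0.8.
true_scores = [random.gauss(0.0, 2.0) for _ in range(n)]
errors = [random.gauss(0.0, 1.0) for _ in range(n)]
observed = [t + e for t, e in zip(true_scores, errors)]


def mean(values):
    return sum(values) / len(values)


def cov(u, v):
    """Covariance with divisor n; cov(u, u) is the variance of u."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / len(u)


# Ratio of true-score variance to observed-score variance.
variance_ratio = cov(true_scores, true_scores) / cov(observed, observed)

# Squared correlation between observed and true scores.
squared_corr = cov(observed, true_scores) ** 2 / (
    cov(observed, observed) * cov(true_scores, true_scores)
)

print(f"variance ratio      : {variance_ratio:.3f}")
print(f"squared correlation : {squared_corr:.3f}")
```

The two sample quantities agree only approximately, because the sample covariance between simulated true and error scores is not exactly zero; in the population they coincide.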

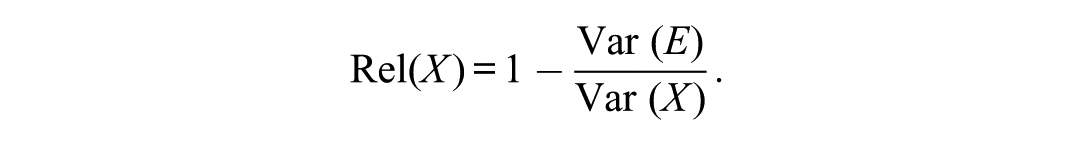

Equivalently,

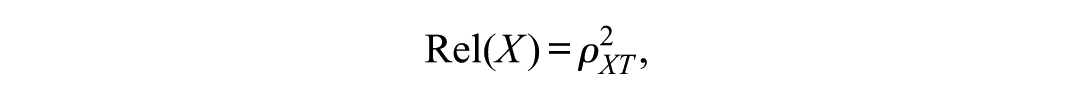

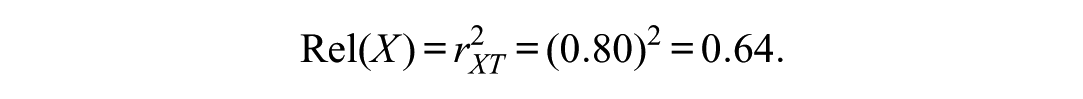

This definition corresponds to the squared correlation between observed and true scores:

where

Equating reliability with internal consistency or a single coefficient obscures its theoretical basis. Measurement precision is real, but frameworks such as CTT (e.g., Raykov & Marcoulides, 2011), generalizability theory (Brennan, 2001; Webb et al., 2006), operator-theoretic models (Zimmerman & Zumbo, 2001), and latent state–trait approaches (Steyer et al., 2015; Steyer & Schmitt, 1990) conceptualize it differently. No single formula captures reliability in full.

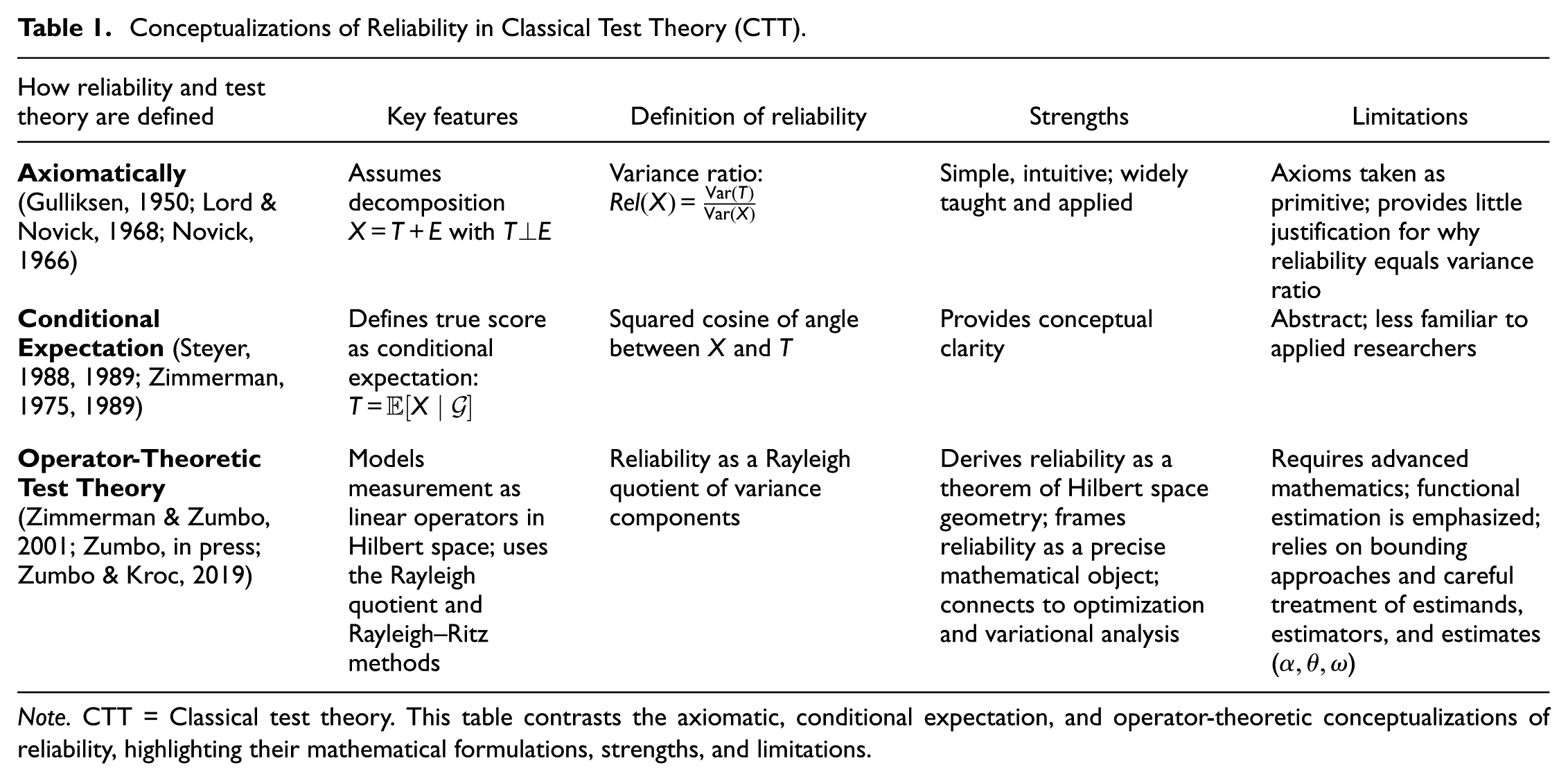

To clarify these distinctions, Table 1 contrasts three major conceptualizations of reliability in psychometrics—axiomatic, conditional expectation, and operator-theoretic. Although measure theory provides a rigorous probabilistic foundation, it remains unfamiliar to many researchers outside mathematics. This likely explains why Novick (1966) and Lord and Novick (1968) incorporated measure-theoretic ideas implicitly, preserving mathematical integrity while maintaining accessibility. Zimmerman (1975) made these foundations explicit, defining true scores as conditional expectations and extending psychometric constructs into the language of measure-theoretic probability. Steyer (1988, 1989) further developed this approach by defining

Table 1. Conceptualizations of Reliability in Classical Test Theory (CTT)

Note. CTT = classical test theory. This table contrasts the axiomatic, conditional expectation, and operator-theoretic conceptualizations of reliability, highlighting their mathematical formulations, strengths, and limitations.

Building on Zimmerman’s (1975) foundation, Zimmerman and Zumbo (2001) introduced an operator-theoretic formalization that situates test theory within Hilbert space. In this framework, observed scores are vectors in a Hilbert space, and measurement processes are represented as linear operators acting on those vectors. True and error scores correspond to orthogonal projections onto complementary subspaces, ensuring that the decomposition

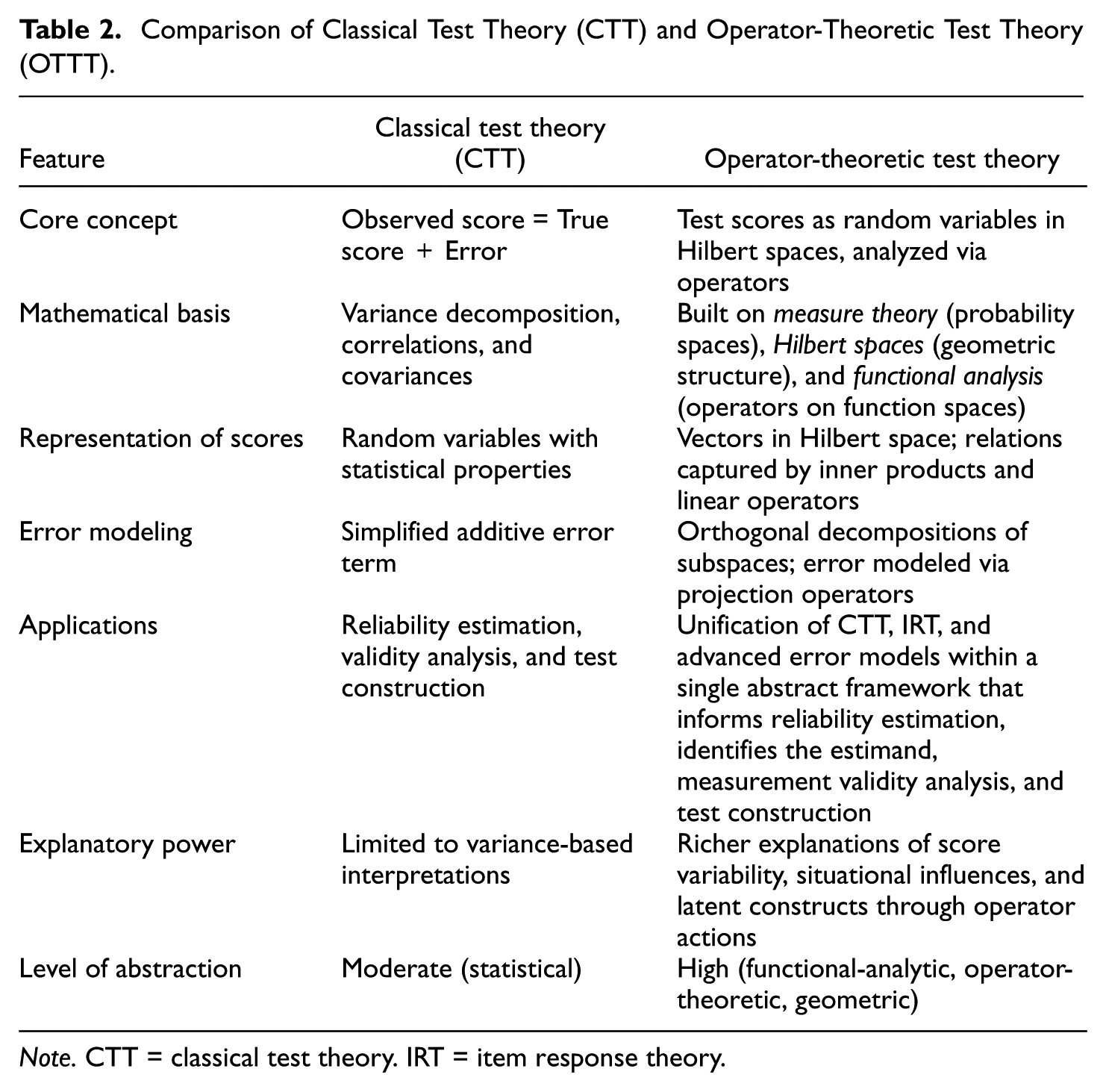

Table 2 summarizes the main contrasts between CTT and operator-theoretic test theory. While CTT is rooted in variance decomposition and correlations, the operator-theoretic framework reframes reliability, error, and latent structure in terms of orthogonal projections and linear operators. This unifying framework connects CTT with modern approaches such as item response theory and generalizability theory, offering greater explanatory power for understanding variability in test performance. Operator-theoretic test theory is therefore best viewed as a distinct theoretical framework rather than an extension of CTT.

Table 2. Comparison of Classical Test Theory (CTT) and Operator-Theoretic Test Theory (OTTT)

Note. CTT = classical test theory; OTTT = operator-theoretic test theory; IRT = item response theory.

Operator-theoretic test theory rests on measure-theoretic foundations. Measure theory provides the probabilistic framework for defining expectations, variances, and covariances, while Hilbert space supplies the geometric setting in which operator theory represents and analyzes reliability. Within this geometry, random variables are represented as vectors, and projections formalize the decomposition of test scores into true and error components. The need for this formalism has been recognized by Zimmerman (1975) and Zimmerman and Zumbo (2001), and further extended by Steyer and Schmitt (1990) and Kroc and Zumbo (2020) in their discussions of measurement error and exchangeability.

The next section formalizes this structure by introducing the Hilbert space framework and defining conditional expectation as the orthogonal projection that underlies reliability.

Hilbert Space Framework and Conditional Expectation

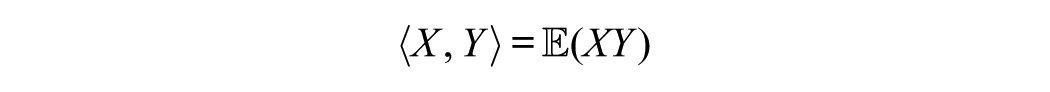

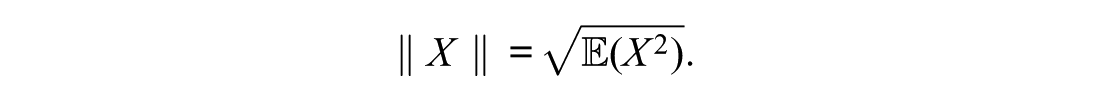

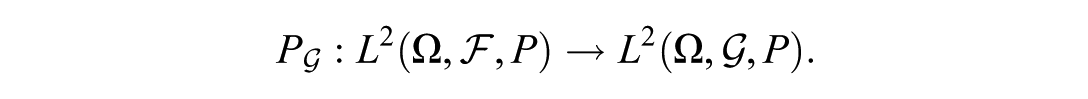

This section introduces the mathematical foundation of the operator-theoretic approach. Let

and norm

In this space, random variables correspond to vectors, and the expectation of a random variable corresponds to its projection onto the one-dimensional subspace of constant random variables.

A closed subspace

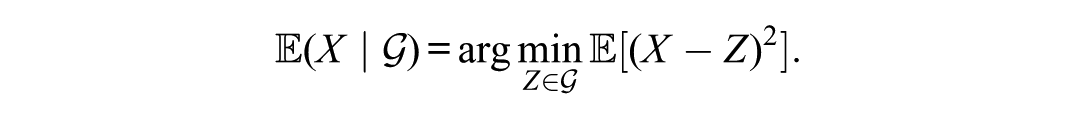

Thus, conditional expectation functions as the orthogonal projection of

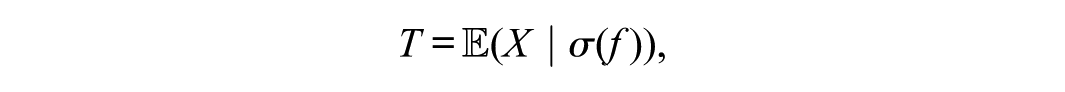

In the context of test theory, let

The Hilbert space formalism thus provides a rigorous foundation for the test-score decomposition: the true score is the projection of the observed score onto the closed subspace of random variables that are measurable with respect to the σ-algebra representing the latent variable, and the error score is the residual, which lies in the orthogonal complement of that subspace. This formulation replaces the traditional assumption that true and error scores are uncorrelated with a theorem arising from projection geometry.
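The projection property can be verified exactly on a small finite probability space. The sketch below is a toy construction, not an example from the article: six equally likely outcomes, hypothetical observed scores, and a three-block partition that stands in for the σ-algebra generated by the latent variable. On such a space the conditional expectation is the block mean.

```python
from fractions import Fraction

# Hypothetical finite probability space: six equally likely outcomes.
x = [Fraction(v) for v in (2, 4, 5, 7, 9, 9)]  # observed scores X(omega)
block = [0, 0, 1, 1, 2, 2]                      # partition generating the sub-sigma-algebra
p = Fraction(1, len(x))                         # uniform probability of each outcome


def expect(values):
    """Expectation under the uniform probability measure."""
    return sum(p * v for v in values)


def var(values):
    m = expect(values)
    return expect([(v - m) ** 2 for v in values])


def cond_expect(values, labels):
    """E[X | G] on a finite space: replace each value by its block mean."""
    means = {}
    for lab in set(labels):
        members = [v for v, lab_i in zip(values, labels) if lab_i == lab]
        means[lab] = sum(members) / len(members)
    return [means[lab_i] for lab_i in labels]


t = cond_expect(x, block)               # true score T = E[X | G]
e = [xi - ti for xi, ti in zip(x, t)]   # error score E = X - T

mean_e = expect(e)
cov_te = expect([ti * ei for ti, ei in zip(t, e)]) - expect(t) * expect(e)
reliability = var(t) / var(x)

print("E[E]        =", mean_e)
print("Cov(T, E)   =", cov_te)
print("Var(X)      =", var(x), "= Var(T) + Var(E) =", var(t) + var(e))
print("reliability =", reliability)
```

Exact rational arithmetic is used so that the zero mean of the error, the zero covariance, and the Pythagorean variance identity hold exactly and are not blurred by floating-point rounding.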

A measure-theoretic construction of conditional expectation is provided in Appendix B.

Variance Decomposition and Reliability as Projection

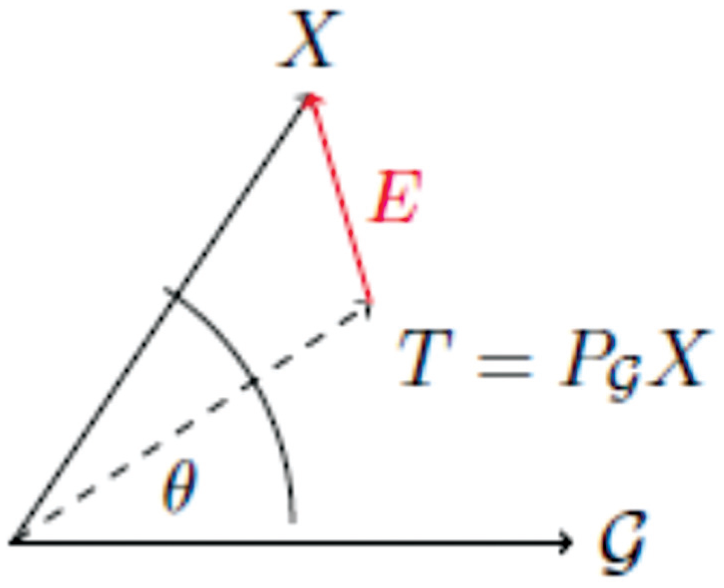

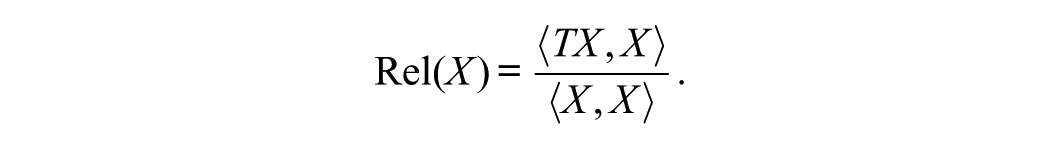

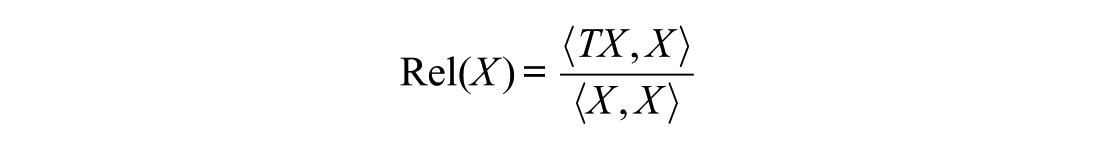

The operator-theoretic framework allows the reliability of observed scores to be derived directly from Hilbert space geometry. Let

the total variance of

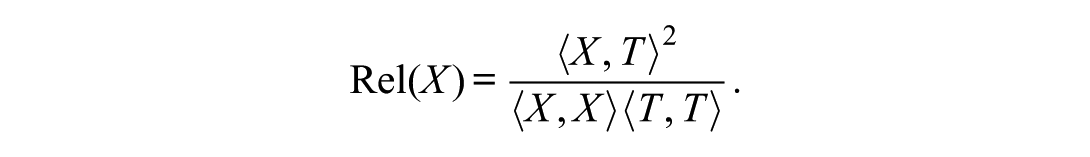

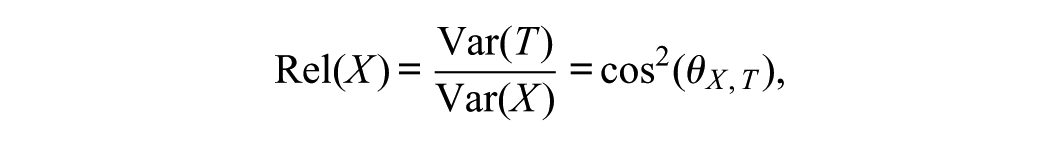

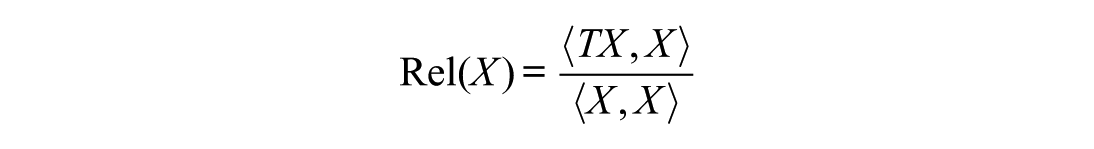

The reliability of

Substituting this expression yields

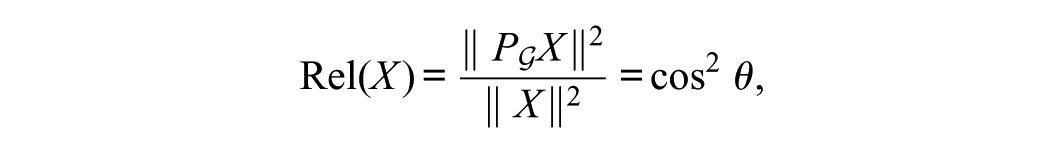

This form expresses reliability as the squared cosine of the angle between

Thus, reliability arises naturally from projection geometry. It is not an assumed property of test scores but a theorem describing how an observed variable relates to its conditional expectation.
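The chain from variance decomposition to squared cosine can be written out explicitly. The derivation below is a sketch under assumed notation: T denotes the true score (the conditional expectation of X), E = X − T the error score, all variables are centered, the inner product is ⟨U, V⟩ = 𝔼[UV], and θ is the angle between X and T. It also assumes that the true-score variance is nonzero, so that the cosine is defined.

```latex
% Assumed notation: centered variables, \langle U, V \rangle = \mathbb{E}[UV],
% \|U\|^2 = \langle U, U \rangle = \operatorname{Var}(U).
\begin{align*}
\|X\|^2 &= \|T\|^2 + \|E\|^2
  && \text{(Pythagoras, since } \langle T, E \rangle = 0\text{)} \\
\langle X, T \rangle &= \langle T + E,\, T \rangle = \|T\|^2 \\
\cos^2\theta
  &= \frac{\langle X, T \rangle^2}{\|X\|^2\,\|T\|^2}
   = \frac{\|T\|^4}{\|X\|^2\,\|T\|^2}
   = \frac{\|T\|^2}{\|X\|^2}
   = \frac{\operatorname{Var}(T)}{\operatorname{Var}(X)}
\end{align*}
```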

Mathematical Structure of Operator-Theoretic Test Theory

This section of the article develops the mathematical structure of operator-theoretic test theory rather than estimation or inference. Recall that operator-theoretic formulations of test theory build on Hilbert space methods, which themselves rest on measure-theoretic foundations. That is, measure theory formalizes the probabilistic quantities of expectation, variance, and covariance; Hilbert-space geometry, through operator theory, provides the analytic framework for representing and studying reliability.

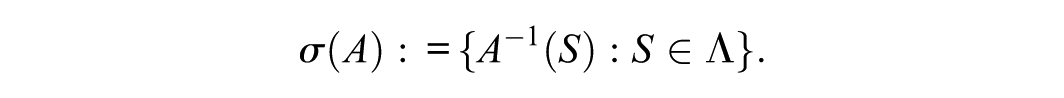

Let

In psychometric terms, this framework formalizes how individuals in a population are linked to their possible observed scores through the probability structure.

Definition of Operator-Theoretic Test Theory

Definition (True Score)

Let

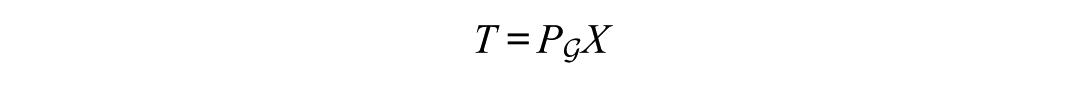

which coincides with the orthogonal projection

where

The conditional expectation is an operator that maps

Thus,

Thus, in Zimmerman and Zumbo’s framework, a sub-

Within Zimmerman and Zumbo’s operator formalization,

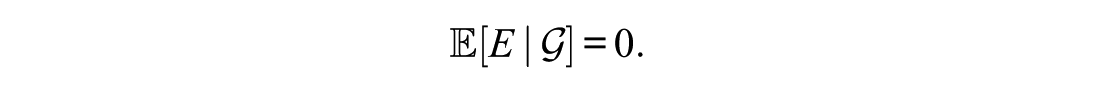

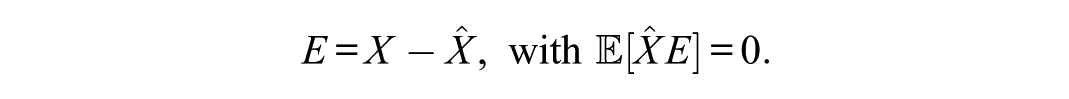

Definition (Error Score)

The error score is the residual

From a psychometric perspective,

Across frameworks, the choice of

Reliability as Projection Norm

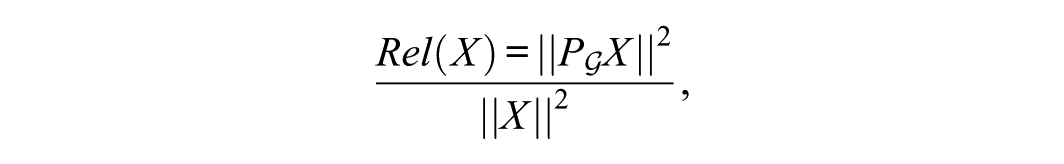

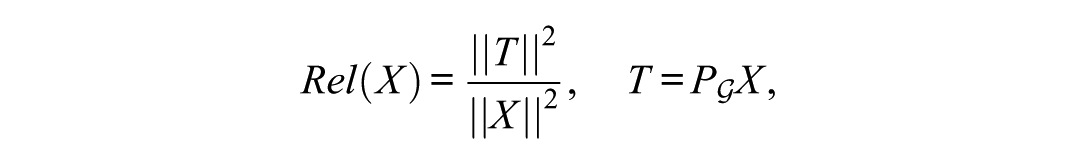

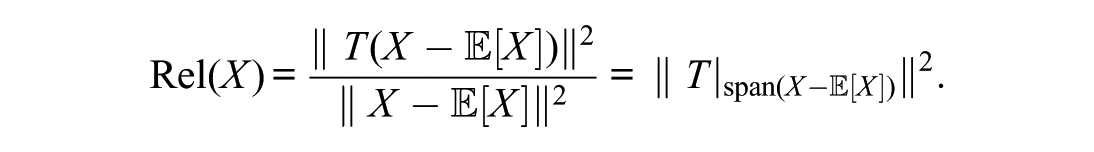

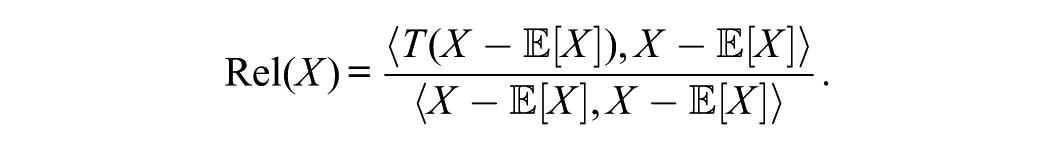

Under the operator-theoretic view, the reliability of

where

Equivalently, reliability is the proportion of observed variance explained by the projection onto the true-score subspace:

In Hilbert space terms, this definition is a Rayleigh quotient.
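The Rayleigh-quotient form can be illustrated with a small matrix version of the projection. The sketch below is a toy example under stated assumptions: four equally likely outcomes, a centered hypothetical score vector, and a matrix P (an assumed name) that averages within the blocks {0, 1} and {2, 3}, which is the conditional expectation for that partition.

```python
from fractions import Fraction

half = Fraction(1, 2)
# P averages within the blocks {0, 1} and {2, 3}: the conditional
# expectation operator for that partition under uniform probabilities.
P = [
    [half, half, 0, 0],
    [half, half, 0, 0],
    [0, 0, half, half],
    [0, 0, half, half],
]
x = [Fraction(v) for v in (-3, -1, 1, 3)]  # centered hypothetical scores


def matvec(A, v):
    return [sum(a * b for a, b in zip(row, v)) for row in A]


def matmul(A, B):
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def inner(u, v):
    """Inner product E[UV] under the uniform probability measure."""
    return sum(a * b for a, b in zip(u, v)) / len(u)


Px = matvec(P, x)                                   # projected (true-score) vector
is_idempotent = matmul(P, P) == P                   # P applied twice equals P
is_symmetric = [list(col) for col in zip(*P)] == P  # self-adjoint for uniform weights

rayleigh = inner(Px, x) / inner(x, x)     # <Px, x> / <x, x>
norm_ratio = inner(Px, Px) / inner(x, x)  # ||Px||^2 / ||x||^2

print("Px         =", [str(v) for v in Px])
print("Rayleigh   =", rayleigh)
print("norm ratio =", norm_ratio)
```

Because P is idempotent and self-adjoint, ⟨Px, x⟩ equals ‖Px‖², so the Rayleigh quotient and the squared-norm ratio give the same value.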

Reliability as a Squared Cosine

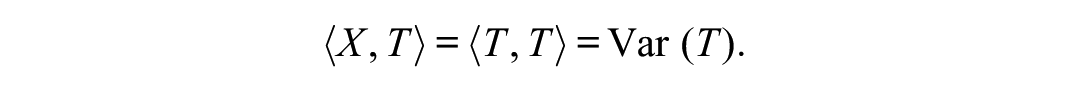

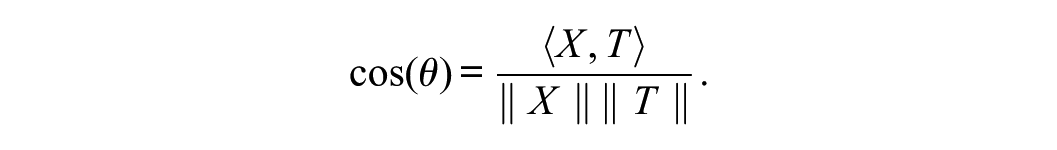

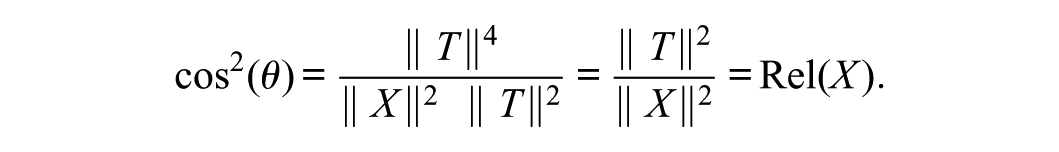

Reliability can be expressed geometrically using inner products, angles, and Pythagoras’s theorem in Hilbert space. In Hilbert space, the cosine of the angle between two vectors is

Because

Therefore, geometrically, reliability is the squared cosine of the angle between

where

In plain language, reliability reflects how close the observed score vector lies to the true score subspace. A small angle (

Although reliability has been defined in various ways within test theory—and for most purposes it matters little whether a given algebraic expression is treated as a definition or as a theorem—an important advantage of defining it as the ratio of true-score variance to observed-score variance is that this formulation applies to all observed scores with nonzero variance. In contrast, the squared correlation is undefined when the true-score variance equals zero. Drawing connections among the definitions, the reliability coefficient is defined as the proportion of variance in the observed score

Orthogonal Projection of Observed Score

Reliability as Projection

The projection theorem tells us that any random variable

The key insight is that reliability is about the quality of this projection. If most of the length of

Reliability Follows as a Corollary of Projection Geometry in L²

The metric interpretation of reliability follows directly from the geometric fact that conditional expectation is an orthogonal projection in

Conditional expectation is not only a statistical concept but also admits a functional-analytic interpretation. The projection theorem in Hilbert spaces states that every element

When you take

Reliability as a Rayleigh Quotient

Integrating the operator-norm interpretation with the Rayleigh quotient perspective, more generally, reliability can be interpreted as the operator norm of the projection

As Zimmerman and Zumbo (2001) note, this follows from the fact that

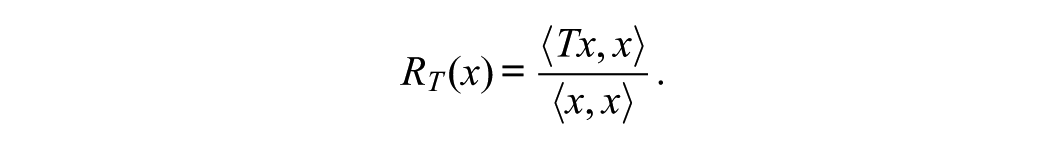

Equivalently, reliability can be expressed as a Rayleigh quotient of the operator

This form highlights reliability as an intrinsic spectral property of the projection operator. In functional analysis, the Rayleigh quotient measures how much of a vector’s energy lies in the direction preserved by a self-adjoint operator. When applied to test theory, it expresses the proportion of observed-score variance that remains under the action of the true-score projection. Reliability equals one if and only if

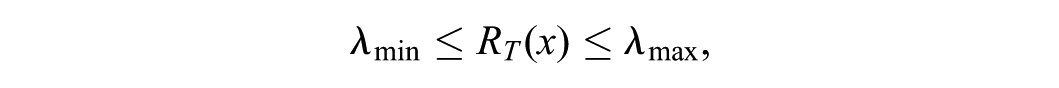

In functional analysis, the Rayleigh quotient plays a central role in connecting operator theory, geometry, and optimization. For a self-adjoint (Hermitian) linear operator

This expression measures how the operator

For compact self-adjoint operators, the Rayleigh quotient satisfies,

where

Thus, the Rayleigh quotient defines an optimization problem—the Rayleigh–Ritz variational principle—whose solutions identify the principal directions in which the operator acts as a pure scaling transformation. This variational characterization carries directly into test theory. Let

Then, the reliability of

Because

showing that reliability is the squared cosine of the angle between the observed and true scores.

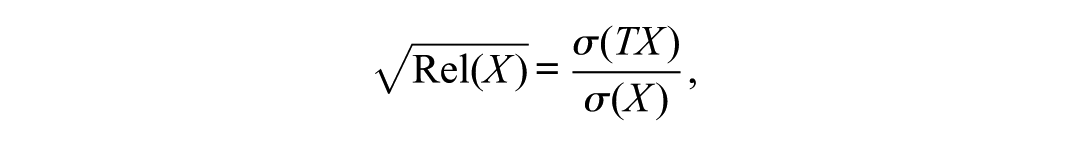

Equivalently, the square root of reliability,

is the ratio of the length of the projection of

From this perspective, a reliable score is “close” to the subspace of true scores—its projection retains nearly the same length as the original vector. If the projection is much shorter, the observed and true-score vectors are nearly orthogonal, and reliability approaches zero.

Thus, reliability can be viewed as a Rayleigh quotient quantifying the efficiency with which an observed score preserves the energy (variance) of its projection onto the true-score subspace.

This interpretation situates reliability within the same mathematical framework that governs principal components, eigenvalue problems, and variational formulations in physics and engineering. Reliability, in this view, is not merely a descriptive coefficient but a theorem of Hilbert space geometry—a scalar functional summarizing the action of the true-score operator on the space of observed scores.

Implications for Applied Psychometrics

In applied psychometrics, reliability coefficients such as Cronbach’s (1951)

thus, it represents an ideal, model-free quantity, while the classical coefficients provide realizable, data-dependent approximations that are contingent on test design and measurement model. The distinction underscores that applied reliability indices are not reliability itself but estimators of the Rayleigh quotient within specific psychometric frameworks.

Reliability as a Spectral Property of the True-Score Operator

From the standpoint of functional analysis, the Rayleigh quotient

defines the proportion of the squared length of

Geometrically, if

This spectral view links reliability to fundamental concepts in operator theory and variational analysis. In the same way that the Rayleigh–Ritz method characterizes eigenvalues as extrema of Rayleigh quotients, reliability represents an extremal property of test scores under projection. The coefficient, therefore, has a dual interpretation: algebraically, as a variance ratio, and geometrically, as the squared cosine between

Connections to Estimation and Measurement Error

The spectral view of reliability clarifies why common estimators, such as coefficient

In this framework, each estimator corresponds to a different projection operator that captures only part of the true-score variance. For example, coefficient

From the perspective of operator theory, estimation error arises because the empirical covariance operator

In sum, reliability coefficients computed from data quantify the empirical projection efficiency of the measurement process. Their accuracy depends on how closely the assumed model approximates the true-score operator that governs the underlying Hilbert-space geometry of the measurement system.

Geometric and Statistical Unification

As we see in Zimmerman and Zumbo (2001) and Zumbo (2023), the projection interpretation reveals that reliability, regression

Formally, all three quantities share the structure

where

This unification highlights the deep mathematical continuity across measurement models: reliability,

Summary, Axioms, and Theorems in Operator-Theoretic Test Theory

Thus, reliability can be understood simultaneously as (a) a geometric measure of alignment between

As Zumbo (in press) shows, this formalization yields four insights.

First, reliability quantifies the proportion of observed variance attributable to systematic variance, situating it as a measure of alignment between

Second, the geometric interpretation clarifies that reliability equals one when the observed score lies entirely in the true-score subspace (no error) and approaches zero as it becomes orthogonal (all error), paralleling signal-to-noise ratios in principal component analysis (PCA) and signal processing (Zimmerman & Zumbo, 2001).

Third, the conditional expectation

Fourth, the classical theory of reliability, rooted in CTT, traditionally depends on assumptions such as parallel forms, tau-equivalence, and equal weighting of items. These assumptions, while convenient for analytic tractability, impose strict limitations on the generalizability and interpretability of reliability coefficients such as Cronbach’s

By contrast, the Hilbert space and operator-theoretic formulation of reliability—grounded in the tools of projection geometry and conditional expectation—transcends these limitations. This Rayleigh quotient form defines reliability geometrically as the squared cosine of the angle between

Terminological Clarification

At several points, we clarified that coefficients such as

Having established reliability as a projection coefficient, we now generalize the same geometric structure to broader statistical models that also embody projection operators.

Extensions to Regression, Factor Models, and Time Series

The geometric structure of reliability extends naturally to regression, factor-analytic models, and time series. In each case, prediction or estimation can be interpreted as an orthogonal projection in the Hilbert space

Regression: Reliability as R²

As noted by Zumbo (2007) and Zimmerman and Zumbo (2001), the geometric approach also shows why reliability is analogous to the familiar

Consider a regression model in which we predict an observed score

This is exactly the conditional expectation, and thus exactly the projection of

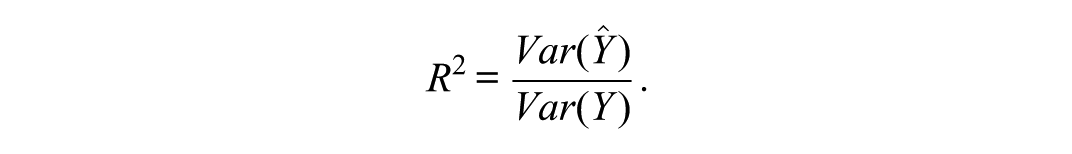

The regression coefficient of determination is

However, this is the same form as reliability:

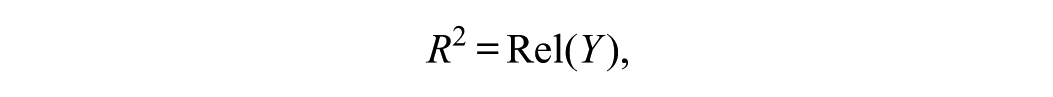

Thus, as Zumbo (2007) states, reliability is simply the

Comparing this with the definition of reliability shows that

when

Factor-Analytic Communality as Projection

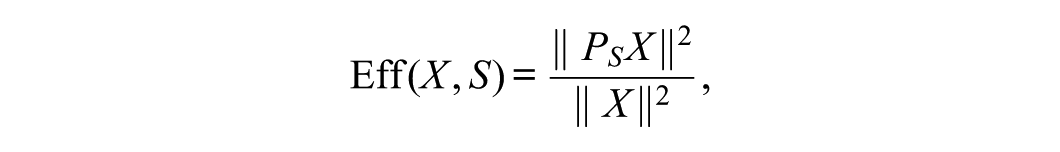

The same principle applies to factor analysis. Let

denote its projection onto the subspace spanned by the common factors

The communality of

showing that communality is also a measure of projection efficiency. When factors perfectly reproduce

Across regression, factor analysis, and test theory, reliability shares a unified geometric interpretation: it quantifies how well an observed variable aligns with its projection onto a subspace representing systematic variation. This projection-based view links psychometric reliability to a broad family of statistical concepts grounded in Hilbert space geometry.
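The shared structure can be seen in the simplest regression case. The sketch below uses made-up data and a single predictor, and it treats the least-squares fit as a linear projection; this coincides with the conditional expectation only when the regression function is in fact linear. All names and values are illustrative assumptions.

```python
from fractions import Fraction

# Hypothetical data: one predictor z and one observed score x.
z = [Fraction(v) for v in (1, 2, 3, 4, 5)]
x = [Fraction(v) for v in (2, 1, 4, 3, 5)]


def center(values):
    m = sum(values) / len(values)
    return [v - m for v in values]


def inner(u, v):
    """Empirical inner product: mean of the elementwise products."""
    return sum(a * b for a, b in zip(u, v)) / len(u)


zc, xc = center(z), center(x)

# Least-squares slope: projection of centered x onto the span of centered z.
slope = inner(xc, zc) / inner(zc, zc)
fitted = [slope * v for v in zc]
residual = [a - b for a, b in zip(xc, fitted)]

# Coefficient of determination as a ratio of squared lengths.
r_squared = inner(fitted, fitted) / inner(xc, xc)

# Squared cosine of the angle between the observed and fitted vectors.
cos_squared = inner(xc, fitted) ** 2 / (inner(xc, xc) * inner(fitted, fitted))

# The residual is orthogonal to the fitted vector.
orthogonality = inner(fitted, residual)

print("R^2            =", float(r_squared))
print("cos^2(theta)   =", float(cos_squared))
print("<fit, resid>   =", orthogonality)
```

The same three checks (ratio of squared lengths, squared cosine, orthogonal residual) apply unchanged when the subspace is spanned by common factors or defined by a conditioning σ-algebra.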

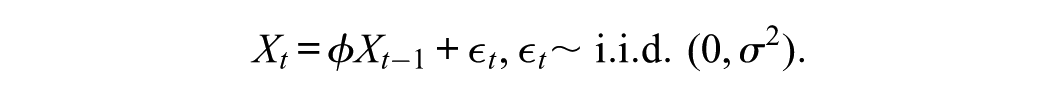

Time Series: Reliability as Predictability

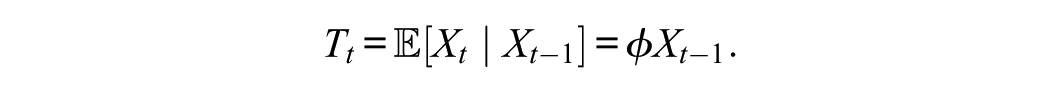

A third connection arises in time-series analysis. Consider an autoregressive model of order 1 (AR[1]):

The one-step predictor of

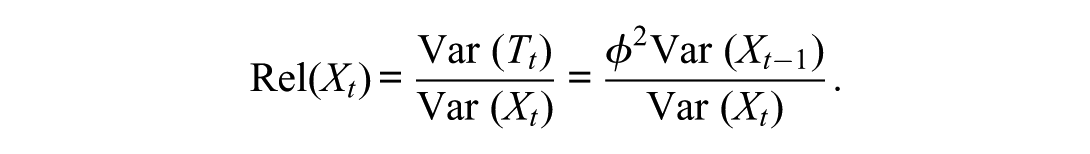

The reliability of

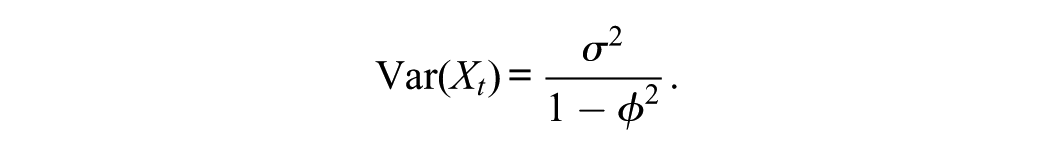

In the stationary AR(1) case,

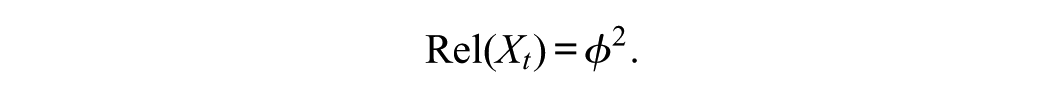

So reliability simplifies to

Thus, the autoregressive coefficient
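The AR(1) argument can be written out as a short derivation. The sketch below uses assumed notation: φ for the autoregressive coefficient with |φ| < 1, ε_t for a mean-zero innovation with variance σ_ε² whose conditional mean given the past is zero, and γ₀ for the stationary variance. These symbols are assumptions for illustration and may not match the article's own notation.

```latex
% Assumed model: X_t = \varphi X_{t-1} + \varepsilon_t, \quad |\varphi| < 1,
% \mathbb{E}[\varepsilon_t \mid X_{t-1}, X_{t-2}, \dots] = 0, \quad
% \operatorname{Var}(\varepsilon_t) = \sigma_\varepsilon^2.
\begin{align*}
\hat{X}_t &= \mathbb{E}[X_t \mid X_{t-1}, X_{t-2}, \dots] = \varphi X_{t-1} \\
\gamma_0 &= \operatorname{Var}(X_t) = \varphi^2 \gamma_0 + \sigma_\varepsilon^2
  \;\Longrightarrow\; \gamma_0 = \frac{\sigma_\varepsilon^2}{1 - \varphi^2} \\
\frac{\operatorname{Var}(\hat{X}_t)}{\operatorname{Var}(X_t)}
  &= \frac{\varphi^2 \gamma_0}{\gamma_0} = \varphi^2
   = 1 - \frac{\sigma_\varepsilon^2}{\gamma_0}
\end{align*}
```

Under these assumptions the variance ratio for the one-step predictor depends only on φ: values of |φ| near one correspond to a highly predictable series, and values near zero to a series dominated by innovation variance.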

Practical Implications

Recasting CTT in operator-theoretic terms has direct consequences for applied test development and interpretation. By treating the true score as a projection rather than an unobservable latent variable, practitioners can frame reliability and error not as assumptions but as intrinsic mathematical consequences of the model. This shift clarifies long-standing misconceptions about the nature of measurement error. It emphasizes that reliability is a geometric property of score alignment rather than a fixed attribute of a test. In practice, this perspective provides a more rigorous foundation for evaluating test quality, comparing measurement models, and integrating CTT with modern psychometric frameworks such as item response theory. It also enhances interpretability by showing how test scores can be decomposed into systematic and error components through projections in Hilbert space, offering both conceptual clarity and methodological precision in real-world testing scenarios.

Integrative Implications

Taken together, the operator-theoretic reformulation of CTT unifies its conceptual foundations with practical applications, offering a coherent framework that bridges theory and practice.

Numerical Illustrations

This section provides numerical examples to illustrate the projection-based definition of reliability. Each example demonstrates how

Example: Reliability in a Two-Variable System

Consider two standardized variables,

This value indicates that 64% of the variance in

The geometric representation clarifies that reliability depends solely on the alignment between

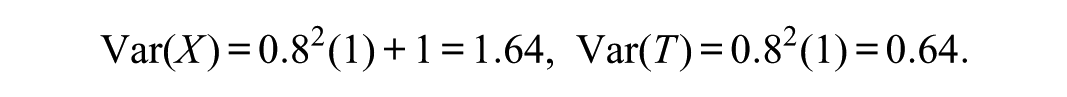

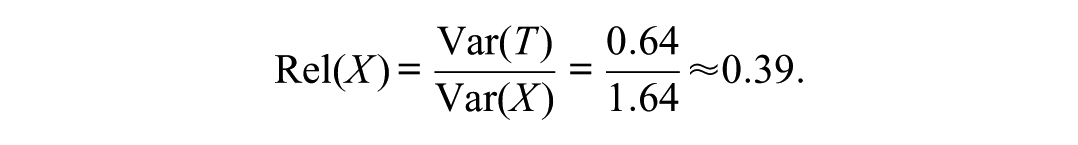

Operator-Theoretic Computation

Suppose the true score is generated by the conditional expectation

Therefore,

This result follows directly from the orthogonal projection theorem:

These examples show that reliability can be derived geometrically, statistically, or computationally from the same principle of orthogonal projection. The projection-based approach provides a unified interpretation of reliability across CTT, regression, and factor models. A worked example is presented in Appendix A.
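The two-variable case reduces to a few lines of arithmetic. The sketch below assumes a correlation of .80 between the two standardized variables, which is the value implied by the 64% figure if the correlation is positive; the variable names are illustrative.

```python
import math

r = 0.80  # assumed correlation between the two standardized variables

reliability = r ** 2                    # squared cosine of the angle
angle_deg = math.degrees(math.acos(r))  # angle between the two vectors
error_share = 1.0 - reliability         # squared sine: share of variance in the residual

print(f"reliability = {reliability:.2f}")
print(f"angle       = {angle_deg:.2f} degrees")
print(f"error share = {error_share:.2f}")
```

For standardized variables the correlation is the cosine of the angle between the vectors, so squaring it gives the proportion of variance retained by the projection and one minus that value gives the proportion left in the orthogonal residual.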

Implications for Estimation and Measurement

The projection-based definition of reliability reframes several aspects of psychometric estimation and interpretation. Because

Reliability Estimation and Sampling

Traditional reliability coefficients, such as Cronbach’s (1951) coefficient alpha, congeneric reliability, or hierarchical omega (McDonald, 1999), can be interpreted as sample-based estimates of

This equation emphasizes that reliability estimation involves approximating the conditional expectation operator using observed data. Sampling variability affects the empirical estimate of

Conceptual and Interpretive Implications

Viewing reliability as a projection theorem unifies several concepts that are often treated separately. Reliability, regression

The geometric formulation also clarifies the meaning of “measurement error.” Error is the orthogonal complement of the projection that defines the true score, and its variance quantifies the portion of

Finally, the projection perspective integrates classical reliability with modern statistical frameworks. In Bayesian estimation, for example, the posterior mean

Overall, the operator-theoretic view strengthens the conceptual foundation of reliability by embedding it in a general geometric theory of estimation. This perspective allows psychometricians to interpret reliability not as an assumption or coefficient but as a theorem describing the structure of expectation, variance, and projection in measurement.

Conclusion and Future Directions

This article has reframed reliability as a theorem rather than an assumption of CTT. By situating reliability within the geometry of Hilbert space, the analysis demonstrates that the true score is the orthogonal projection of the observed score onto the subspace defined by the latent variable. Reliability, expressed as

Conceptually, this reformulation clarifies that reliability is not an empirical artifact of a specific test or model but a mathematical property of expectation and variance in

Methodologically, this approach provides a foundation for unifying psychometric estimation with modern predictive modeling. The same projection principles that define

Future research can explore these extensions systematically. Potential directions include defining reliability for generalized linear and nonparametric models, developing operator-based reliability estimators, and examining how projection geometry interacts with measurement invariance and latent-variable identification.

By interpreting reliability as a corollary of the projection theorem, this article provides a rigorous and unifying mathematical basis for measurement theory. It connects classical psychometric constructs with modern statistical theory, reinforcing the view that reliability reflects not merely consistency in measurement but the geometry of expectation itself.

New Avenues for Psychometric Research

On Quantifiers and the Estimand When Selecting Reliability Coefficients

As Zumbo and Kroc (2019) emphasized, a central issue in test theory is whether an algebraic expression is taken as a definition or as a theorem. An advantage of defining reliability as the ratio of true-score variance to observed-score variance is that this definition applies to all observed scores with nonzero variance. In contrast, the squared correlation is undefined if the true-score variance is zero.

More generally, Zimmerman and Zumbo (2001) introduced an operator-theoretic formulation of CTT. In their approach, the measurement process is expressed as a collection of linear operators acting on a Hilbert space of true-score vectors. Within this framework, the true score and error score correspond naturally to projection operators on the Hilbert space. Once this identification is made, geometric concepts such as distance, length, angle, and orthogonality have direct implications for test theory. Zimmerman and Zumbo further showed that reliability itself can be understood as a mathematical object defined through projection, thereby situating it as an inherent feature of the operator-theoretic structure.

It is this mathematical object, the conventional CTT reliability, that Zumbo and his colleagues refer to as theoretical reliability. The qualifier “theoretical” is appropriate because this psychometric construct arises from the abstract structure of the Hilbert space and is not formally estimated in routine psychometric practice. Instead, commonly used reliability coefficients (e.g., Cronbach’s α) are best understood as bounding, from below, the theoretical reliability (see Zumbo, 1999).

As Zumbo and Kroc (2019) note, although the psychometric literature frequently uses the phrase “to estimate the reliability,” the term “estimate” is somewhat misleading. From a strictly formal perspective, coefficients such as Cronbach’s α may be regarded as biased estimators of theoretical reliability. However, this is not the sense in which practitioners typically use the term. It is therefore clearer to say that one may measure or quantify reliability through statistical procedures and measurement designs that yield bounds on the theoretical value. These procedures include repeated, structured data-collection strategies combined with measurement models such as parallel forms, tau-equivalence, or essential tau-equivalence. Approaches such as the empirical copula method (Bonanomi et al., 2015; Zumbo, in press) further illustrate how empirical strategies can be used to bound reliability.

Because theoretical reliability is formally defined as a ratio of variance components at the population level, a given numerical value of reliability may correspond to multiple possible combinations of true-score variance and error-score variance. To address this, psychometricians typically impose bounds on the error component of the variance ratio. In doing so, they define a quantifier of reliability through (a) the choice of how error is bounded (e.g., internal consistency, interrater variation, or test–retest variation), (b) the design of the measurement experiment, and (c) the choice of estimator. Different estimators naturally yield different properties of the resulting sample values, and which properties are most desirable depends on the analytic objectives.
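As a small worked illustration of this non-uniqueness, consider the following arithmetic. The symbols \rho, \sigma_T^2, and \sigma_E^2 are notation assumed here for theoretical reliability, true-score variance, and error-score variance, with true and error scores uncorrelated; the numerical values are hypothetical.

```latex
\rho = \frac{\sigma_T^2}{\sigma_T^2 + \sigma_E^2}, \qquad
(\sigma_T^2, \sigma_E^2) = (4, 1) \;\Rightarrow\; \rho = \frac{4}{5} = .80, \qquad
(\sigma_T^2, \sigma_E^2) = (8, 2) \;\Rightarrow\; \rho = \frac{8}{10} = .80 .
```

Both pairs yield the same ratio, so the value .80 alone does not identify the variance components.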

Finally, much of the confusion surrounding reliability arises from the failure to distinguish among estimators, estimates, and estimands. As emphasized in statistics, an estimator is a formula or function applied to data; an estimate is the numerical value obtained from applying the estimator to a sample; and the estimand is the underlying quantity the estimator is designed to capture. Clarifying these distinctions is crucial to resolving persistent ambiguities in psychometric discussions of reliability.
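The three terms can be made concrete with a minimal simulation sketch. It assumes a hypothetical population of four parallel items (each item equals a common true score plus independent error), a model chosen only for illustration and not taken from the article. The function name `cronbach_alpha` and all parameter values are likewise illustrative.

```python
import numpy as np


def cronbach_alpha(scores: np.ndarray) -> float:
    """Estimator: Cronbach's alpha for an (n_persons, k_items) score matrix."""
    k = scores.shape[1]
    item_variances = scores.var(axis=0, ddof=1)
    total_variance = scores.sum(axis=1).var(ddof=1)
    return (k / (k - 1)) * (1.0 - item_variances.sum() / total_variance)


# Hypothetical population: k parallel items, X_j = T + E_j.
k, var_true, var_error = 4, 1.0, 1.0

# Estimand: reliability of the sum score at the population level,
# Var(k * T) / Var(sum of items) = k^2 var_true / (k^2 var_true + k var_error).
estimand = (k**2 * var_true) / (k**2 * var_true + k * var_error)

# One sample drawn from that population.
rng = np.random.default_rng(0)
n = 5000
true_scores = rng.normal(0.0, np.sqrt(var_true), size=(n, 1))
errors = rng.normal(0.0, np.sqrt(var_error), size=(n, k))
scores = true_scores + errors

# Estimate: the number obtained by applying the estimator to this sample.
estimate = cronbach_alpha(scores)

print(f"estimand = {estimand:.3f}, estimate = {estimate:.3f}")
```

The estimand is fixed by the population model, the estimator is the function, and the estimate changes with every new sample.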

For example, reliability emerges as the Rayleigh quotient of a projection operator, which Zumbo (1999) and colleagues (e.g., Gadermann et al., 2012; Liu et al., 2009; Zumbo et al., 2007) term theoretical reliability, the estimand. Unlike other formulations, in which reliability remains conceptually vague, the Rayleigh quotient defines it as a precise mathematical object grounded in Hilbert space.
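One way to write this object down is sketched below. The notation is assumed here rather than taken from the original: X is a centered observed score with nonzero variance, P is the orthogonal projection onto the closed subspace of random variables measurable with respect to the latent variable, T = PX is the true score, and the inner product of centered variables is their covariance.

```latex
\rho_X
  = \frac{\langle X, P X \rangle}{\langle X, X \rangle}
  = \frac{\lVert P X \rVert^{2}}{\lVert X \rVert^{2}}
  = \frac{\operatorname{Var}(T)}{\operatorname{Var}(X)}
  = \cos^{2} \angle(X, T),
\qquad T = P X .
```

The second equality uses the fact that an orthogonal projection is self-adjoint and idempotent, so that \langle X, PX \rangle = \langle PX, PX \rangle. The final equality requires Var(T) > 0 for the angle to be defined.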

Bounded Interpretations and the Unification of Diverse Reliability Coefficients

Zimmerman and Zumbo’s operator-theoretic perspective, viewing test theory through the lens of Hilbert spaces and operator theory, helped to open the line of research that the present work extends. Their recognition that reliability possesses a fundamental Rayleigh quotient structure was a key mathematical insight, enabling current developments around bounded interpretations and the unification of diverse reliability coefficients. Notably, the operator-theoretic foundation laid in Zimmerman and Zumbo’s developments provides the rigorous mathematical infrastructure that underlies recent advances on the quantification–estimation distinction, the bounded nature of reliability measures, and the reinterpretation of coefficients such as Armor’s (1974) coefficient theta (θ).

Conceptual Foundations

Zumbo and Kroc’s (2019) and Zumbo’s (in press) description of the estimand/estimator/estimate framework for reliability emphasizes the need to separate what we want to know (the theoretical concept or psychometric construct) from how we calculate it (the procedure) and what we get (the numerical value). Ignoring these distinctions risks reducing reliability theory to numeric manipulation without grounding in meaning. The central point is that conceptual clarity in reliability theory requires respecting the hierarchy of estimand → estimator → estimate. For example, Zumbo (in press) builds on the reconceptualization of theoretical reliability as a Rayleigh quotient within geometric test theory to introduce polychoric and copula estimators for the composite reliability of ordered categorical scores. That work establishes Armor’s (1974) coefficient theta (θ) as a distinct psychometric index, independent of Cronbach’s (1951) coefficient alpha (α), and demonstrates its computation and interpretation with empirical data, positioning θ as a robust alternative for estimating composite reliability.

Quantification Versus Statistical Estimation

Quantification is about defining what reliability means as a psychometric construct and how it connects to the measurement operation. Statistical estimation is about using formulas to approximate this psychometric construct from data, with sampling variability. The confusion arises when applied researchers leap straight to estimation (e.g., “Cronbach’s α = .85”) without clarifying quantification (what psychometric construct this actually represents). What we learn is that quantification must precede estimation. Without it, reliability coefficients are interpreted as ends in themselves rather than bounded representations of theoretical reliability defined in operator-theoretic test theory.

Reliability as a Bounded Object

Reliability coefficients (α, ω, θ, GLB, etc.) are best understood as bounds on a more fundamental psychometric construct: theoretical reliability. For example, coefficient α is a lower bound that equals theoretical reliability under essential tau-equivalence with uncorrelated errors, whereas coefficient θ is not a “weighted α” but a distinct estimator of reliability-as-Rayleigh-quotient (Zumbo, in press). Other coefficients provide different bounding relationships depending on their assumptions. The insight provided by operator-theoretic test theory is that, instead of asking which coefficient is best, one should ask which bound is most meaningful for the theoretical psychometric construct and the measurement context.
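The bounding behavior of α can be checked at the population level with a short sketch. It assumes a one-factor (congeneric) model with uncorrelated errors and unit item variances, so that the reliability of the unit-weighted sum score can be computed directly from the loadings. The loadings and the function name `alpha_and_reliability` are hypothetical and chosen for illustration only.

```python
import numpy as np


def alpha_and_reliability(loadings: np.ndarray) -> tuple[float, float]:
    """Population alpha and sum-score reliability under a one-factor model.

    Assumes standardized items: error variance = 1 - loading^2, errors
    uncorrelated, factor variance fixed at 1.
    """
    k = loadings.size
    error_variances = 1.0 - loadings**2
    # Model-implied covariance matrix: common part plus diagonal error part.
    sigma = np.outer(loadings, loadings) + np.diag(error_variances)
    total_variance = sigma.sum()            # variance of the sum score
    true_variance = loadings.sum() ** 2     # variance of the summed true scores
    reliability = true_variance / total_variance
    alpha = (k / (k - 1)) * (1.0 - np.trace(sigma) / total_variance)
    return float(alpha), float(reliability)


# Unequal loadings (congeneric): alpha falls strictly below reliability.
alpha_cong, rel_cong = alpha_and_reliability(np.array([0.9, 0.7, 0.5, 0.3]))

# Equal loadings (tau-equivalent): alpha coincides with reliability.
alpha_tau, rel_tau = alpha_and_reliability(np.array([0.6, 0.6, 0.6, 0.6]))

print(f"congeneric:     alpha = {alpha_cong:.4f}, reliability = {rel_cong:.4f}")
print(f"tau-equivalent: alpha = {alpha_tau:.4f}, reliability = {rel_tau:.4f}")
```

Under these assumptions the gap between α and the theoretical value depends on how far the loadings depart from equality, which is why the choice of bound depends on the measurement model.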

Operator-Theoretic and Geometric Reframing

In Hilbert space, reliability is naturally expressed as a Rayleigh quotient:

where

Practical Implications

Reporting reliability should move from “reliability = .85” to statements like:

“Internal consistency (a lower bound for reliability under tau-equivalence) estimated at .85 [CI].”

This statement makes explicit (a) the quantifier (what aspect of reliability is bounded), (b) the estimator (the formula used), (c) the estimate (the numerical value and its uncertainty), and (d) the assumptions behind the bound. Operator-theoretic test theory provides the following insight: transparent reporting presents the coefficient as a bounded, assumption-dependent estimate of a deeper theoretical psychometric construct, with the Rayleigh quotient as the estimand.
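A reporting statement of this kind could be produced along the following lines. This is a minimal sketch, not a recommended procedure: the data are simulated, the percentile bootstrap is one of several possible interval methods and is an assumption made here, and the function names `cronbach_alpha` and `bootstrap_ci` are illustrative.

```python
import numpy as np


def cronbach_alpha(scores: np.ndarray) -> float:
    """Cronbach's alpha for an (n_persons, k_items) score matrix."""
    k = scores.shape[1]
    item_variances = scores.var(axis=0, ddof=1)
    total_variance = scores.sum(axis=1).var(ddof=1)
    return float((k / (k - 1)) * (1.0 - item_variances.sum() / total_variance))


def bootstrap_ci(scores, n_boot=500, level=0.95, seed=0):
    """Percentile bootstrap interval for alpha, resampling persons (rows)."""
    rng = np.random.default_rng(seed)
    n = scores.shape[0]
    replicates = np.empty(n_boot)
    for b in range(n_boot):
        rows = rng.integers(0, n, size=n)   # sample persons with replacement
        replicates[b] = cronbach_alpha(scores[rows])
    tail = (1.0 - level) / 2.0
    low, high = np.quantile(replicates, [tail, 1.0 - tail])
    return float(low), float(high)


# Simulated data: 300 respondents, 5 items sharing one common component.
rng = np.random.default_rng(1)
scores = rng.normal(size=(300, 1)) + rng.normal(size=(300, 5))

alpha_hat = cronbach_alpha(scores)
ci_low, ci_high = bootstrap_ci(scores)

report = (
    "Internal consistency (coefficient alpha, a lower bound for reliability "
    f"under the stated assumptions) estimated at {alpha_hat:.2f}, "
    f"95% percentile bootstrap CI [{ci_low:.2f}, {ci_high:.2f}]."
)
print(report)
```

The report string names the quantifier, the estimator, the estimate with its uncertainty, and the dependence on assumptions, which are the four elements listed above.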

Integrative Contribution

The resulting approach at once provides mathematical rigor by embedding reliability in operator theory and Rayleigh quotient geometry and practical guidance by reframing reliability coefficients as bound-selecting tools, not definitive measures. The result is a unified foundation that could reshape both knowledge mobilization and pedagogy (clarity on estimand/estimator/estimate) and practice (bound-sensitive reporting), while also opening paths for theoretical generalization (new operator-based reliability quantifiers).

Reinterpretation of True Scores in Operator-Theoretic Test Theory

The alternative interpretation of true scores builds on conditioning not only on the person but also on the situation in which measurement occurs. In this reinterpretation, the true score is defined as the conditional expectation,

where

In short, operator-theoretic test theory provides a framework for reinterpreting the familiar decomposition

By contrast, the conventional interpretation defines the true score as a property of the test-taker alone. This view is grounded in the classical formulations of Guttman (1945, 1953), Lord and Novick (1968), and Novick (1966), where the true score was defined as the expectation of an individual’s observed scores over infinite independent (memoryless) replications of a test. Lord and Novick’s notion of the “propensity distribution” formalized this as the hypothetical distribution of observed scores under repeated administrations, with the test-taker’s memory wiped clean between trials. In this view, variation in observed scores arises solely from measurement error, given the repeated-measures metaphor.

Without the measure-theoretic framework that is part of operator-theoretic test theory, one must rely on the metaphor of “wiping the test-taker’s memory clean between replications,” which explains why Lord and Novick and others described the true score in this way. This metaphor also underlies why many accounts of CTT frame it as a repeated-measures assessment experiment; it is embedded in the very definition of the true score. Moreover, this repeated-measures view helps explain why some writers portray CTT as imposing immutable outcome variables, why simple difference scores are often dismissed as poor indicators of change (Zumbo, 1999), and why this has been described as a metaphor run amok.

In contrast, the operator-theoretic reinterpretation defines the true score as conditioning on all possible outcomes of the measurement process

Thus, the operator-theoretic reinterpretation reframes the true score not as an abstract property of the individual under hypothetical replications, but as an ecologically grounded psychometric construct that reflects the individual’s performance across the full range of contexts in which measurement occurs (Zumbo, 2023).

True Score as Conditional Expectation Over All Possible Outcomes for a Respondent, Not Repeated Testing

The operator-theoretic reinterpretation avoids these limitations by conditioning directly on the test-taker or respondent in the measurement process, without needing to invoke the memoryless random variable concept. Instead of invoking hypothetical replications, the true score is understood as the conditional expectation over all possible outcomes of

Within the operator-theoretic formalization,

In summary, in CTT, the true score is a fixed property of the individual, defined by infinite replications. In the operator-theoretic reinterpretation, by contrast, the true score is a person-in-situation quantity (Anastasi, 1983; Steyer et al., 1992), defined by conditional expectation and consistent with ecological validity and explanation-focused measurement (Zumbo, 2023).

Conclusion and Implications

The geometric view of reliability as projection provides both theoretical clarity and practical insight. Instead of being treated as an axiom of CTT, reliability emerges as a theorem: a necessary consequence of orthogonal projection in Hilbert space. In this section, we expand on the conceptual, knowledge mobilization, practical, and methodological implications of this framework.

Conceptual Clarity

CTT often presents reliability in a somewhat ad hoc fashion, with formulas justified by tradition rather than derivation. By situating reliability within the projection theorem, we see that:

Reliability is not a psychometric convention but a mathematical necessity.

The decomposition

Reliability is precisely the squared cosine of the angle between

This reframing shifts reliability from being a peculiar artifact of test theory to being a manifestation of a general structure underlying regression, factor analysis, and time-series forecasting.
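The projection identities discussed here can be verified numerically in a small sketch. It assumes a hypothetical latent variable with five discrete levels, so that the conditional expectation of the observed score given the latent variable can be computed as a within-level mean. The empirical inner product (the mean of products of centered scores) stands in for the population covariance, and all names and parameter values are illustrative.

```python
import numpy as np


def inner(u: np.ndarray, v: np.ndarray) -> float:
    """Empirical inner product: mean of the elementwise product."""
    return float(np.mean(u * v))


rng = np.random.default_rng(0)
n, levels = 2000, 5

# Hypothetical discrete latent variable and an observed score built from it.
latent = rng.integers(0, levels, size=n)
observed = latent.astype(float) + rng.normal(0.0, 1.5, size=n)

# Center the observed score so that inner products act as covariances.
x = observed - observed.mean()

# True score: empirical conditional expectation of x given the latent level,
# which is the orthogonal projection of x onto functions of the latent variable.
level_means = np.array([x[latent == g].mean() for g in range(levels)])
t = level_means[latent]
e = x - t                                   # error score (residual)

rayleigh_quotient = inner(x, t) / inner(x, x)
variance_ratio = inner(t, t) / inner(x, x)
cosine_squared = inner(x, t) ** 2 / (inner(x, x) * inner(t, t))
orthogonality = inner(t, e)                 # zero up to rounding

print(rayleigh_quotient, variance_ratio, cosine_squared, orthogonality)
```

In this sketch the three expressions agree to floating-point precision because the within-level mean is an exact orthogonal projection with respect to the empirical inner product, so the true and error scores are orthogonal by construction.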

By grounding reliability in the projection theorem, we elevate it from a psychometric convention to a universal mathematical structure. This has several broader impacts:

It builds a bridge between psychometrics and statistics, situating test theory alongside regression and time series as part of the same projection framework.

It clarifies to practitioners that reliability is not arbitrary but necessary, derived from geometry.

It empowers educators to teach reliability visually and intuitively, enhancing accessibility for students.

Ultimately, this reframing strengthens the foundations of measurement theory, both conceptually and pedagogically.

Practical Implications for Test Design

The projection view clarifies several design principles:

Thus, test construction becomes the task of engineering vectors that project efficiently onto the latent subspace.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Canada Research Chairs program (AWD-016179 UBCEDUCA 2020) and the UBC Paragon Research Initiative to Bruno Zumbo (Award Number: AWD-024645 UBCEDUCA 2023), and the UBC Distinguished University Scholar program.