Abstract

This article develops a unified geometric framework linking expectation, regression, test theory, reliability, and item response theory through the concept of Bregman projection. Building on operator-theoretic and convex-analytic foundations, the framework extends the linear geometry of classical test theory (CTT) into nonlinear and information-geometric settings. Reliability and regression emerge as measures of projection efficiency—linear in Hilbert space and nonlinear under convex potentials. The exposition demonstrates that classical conditional expectation, least-squares regression, and information projections in exponential-family models share a common mathematical structure defined by Bregman divergence. By situating CTT within this broader geometric context, the article clarifies relationships between measurement, expectation, and statistical inference, providing a coherent foundation for nonlinear measurement and estimation in psychometrics.

Building on foundational contributions from Zimmerman (1975) and Zimmerman and Zumbo (2001), Zumbo (in press-a) recently described an operator-theoretic framework reconceptualizing reliability as a theorem derived from the projection geometry of Hilbert space rather than as an assumption of classical test theory (CTT). This paper extends the linear operator-theoretic framework developed in the companion work (Zumbo, in press-a) to nonlinear and information-geometric settings.

In that earlier formulation, projection and reliability were defined within the Euclidean geometry of

Motivation and Applied Implications

Psychometric practice increasingly involves data with nonlinear relationships arising from, for example, ordinal item responses, bounded scales, and skewed distributions (Raykov & Marcoulides, 2011). Existing extensions (e.g., item response theory [IRT], generalized linear latent models) handle these through specialized link functions but often lack a unifying theoretical foundation. The Bregman framework addresses this need by providing a single geometric principle, projection under a convex potential, that governs both linear and nonlinear models.

In applied test analysis, this generalization allows reliability, regression, and scoring procedures to be interpreted consistently across data types and model forms. For example, reliability defined via divergence rather than variance remains meaningful for ordinal, categorical, or information-based measures, preserving the projection interpretation of true score even outside Euclidean spaces. This perspective reframes model comparison, measurement precision, and estimation efficiency as geometric questions about curvature and projection alignment, thereby offering a unified theoretical and applied toolkit for modern psychometrics.

This geometric perspective transforms measurement from a question of linear decomposition to one of projection alignment within curved spaces, offering a principled foundation for both theoretical innovation and applied psychometric practice.

Aim and Overview

As noted by Zumbo (in press-a), nearly six decades have passed since Zimmerman and his collaborators (e.g., Williams & Zimmerman, 1977; Zimmerman, 1969a, 1969b, 1969c, 1970, 1972, 1975, 1976; Zimmerman et al., 1968) established a rigorous, probabilistic, and geometric foundation for CTT,

The remainder of this paper is organized as follows. The next section revisits projection and reliability within the Euclidean geometry of

Preliminaries: Projection and Reliability Within the Euclidean Geometry of L2

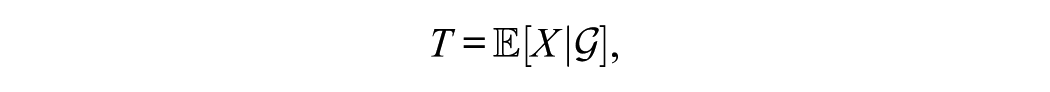

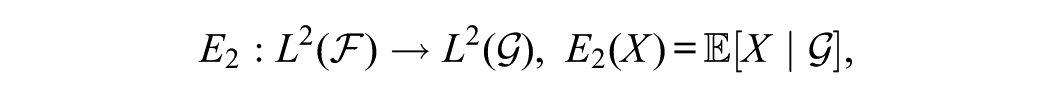

As described by Zumbo (in press-a), let

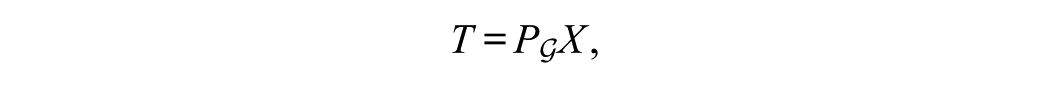

which coincides with the orthogonal projection

where

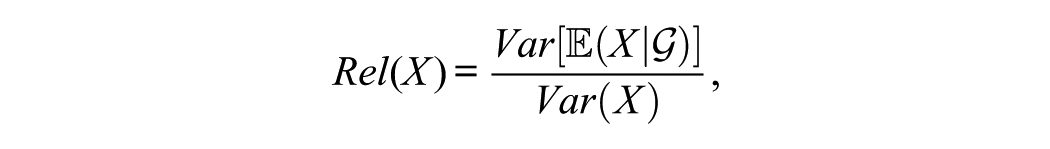

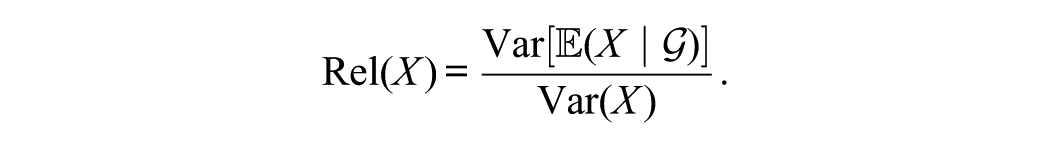

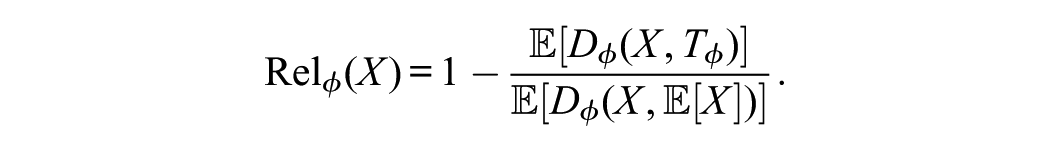

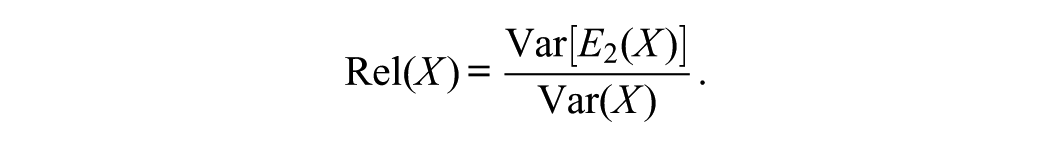

Reliability, defined as the variance ratio

quantifies projection efficiency—the squared cosine between observed and true-score projections. This operator-theoretic view unifies reliability, regression

Building on this foundation, Zumbo (in press-b) extended the theory using the Rayleigh quotient to define polychoric and copula-based estimators for composite reliability. These developments positioned theta reliability (Armor, 1974) as a robust, theoretically grounded alternative to Cronbach’s alpha (Cronbach, 1951) and motivated a broader generalization of reliability and regression through Bregman projections and non-Euclidean geometries.

The sections that follow not only formalize the Bregman projection but also contrast it with other generalizations of Euclidean test geometry, clarifying its distinct advantages for modern psychometric modeling.

Bregman Projections, Statistics, and Probability

Analytic and Convex Foundations

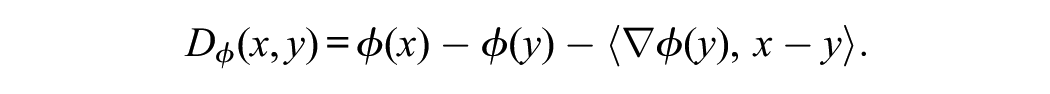

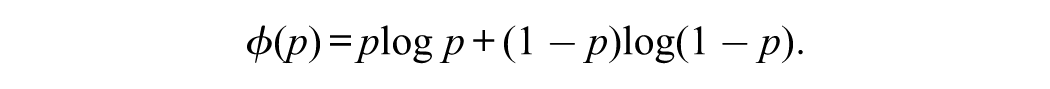

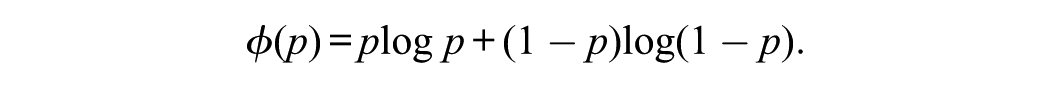

Functional analysis provides the linear framework in which expectation and reliability emerge as orthogonal projections in Hilbert space. Convex analysis extends this structure by replacing linearity with curvature, allowing projection and optimization to occur on non-Euclidean manifolds. The Bregman divergence arises from this shift, measuring discrepancy through the tangent geometry induced by a convex potential

Together, functional and convex analysis reveal that linear and nonlinear measurement models share a common geometric foundation: projection as optimal approximation under either squared distance or convex divergence.

Although no single monograph focuses exclusively on Bregman projections in statistics and probability, their connection to these fields is well established across research papers, conference proceedings, and specialized texts in convex analysis, optimization, and statistical inference. Bregman projections play key roles in statistical estimation, robust statistics, and optimal transport, where they are closely related to algorithms such as the iterative proportional fitting procedure. Readers seeking a detailed discussion of the underlying mathematics may consult the relevant literature (Amari, 2016; Amari et al., 2018; Banerjee et al., 2005a, 2005b; Bauschke & Combettes, 2017; Bregman, 1967; Calin & Udrişte, 2014; Correa et al., 2023; Dodds et al., 1990; Li, 2021; Nielsen, 2019; Nielsen et al., 2022; Nielsen & Nock, 2014; Stummer & Vajda, 2012). Collectively, these works provide some of the mathematical foundations for the geometric and operator-theoretic concepts discussed in this paper.

Nonlinearity of Bregman Projections

This subsection clarifies the nonlinearity of Bregman projections, explains its geometric origin, and situates the concept within the broader framework developed in this paper.

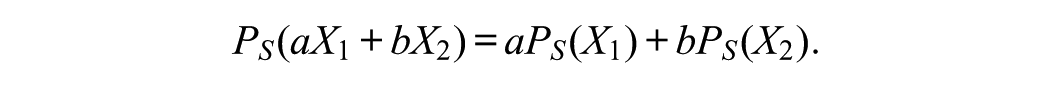

Although Bregman projections generalize the classical notion of orthogonal projection, they are generally nonlinear operators. In the Euclidean setting, the projection of a random variable or vector

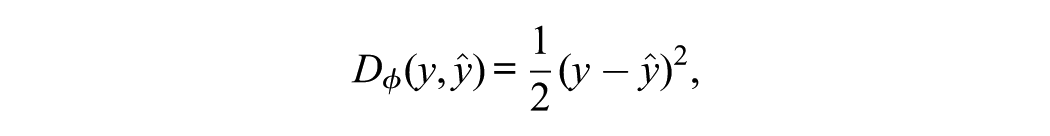

This linearity arises from the quadratic potential

When

depends on the local curvature of the potential. Because the Bregman divergence

Geometrically, the convex potential

where

When

This geometric distinction shows that Bregman projections extend—rather than merely generalize—Euclidean projections. They describe projection in curved spaces where expectation, regression, and reliability are governed by divergence rather than distance.
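The contrast between linear and nonlinear projection can be checked numerically in a small case. The following is a minimal sketch, not the article's own construction: it assumes the constraint set is the hyperplane of vectors summing to one, and it compares the Euclidean projection onto that set with the projection under the generalized Kullback–Leibler divergence (the Bregman divergence of the negative-entropy potential), which reduces to normalization. The function names and test vectors are illustrative.

```python
import numpy as np

def euclidean_projection(y):
    """Closest point to y in squared distance on the set {c : sum(c) = 1}."""
    return y - (y.sum() - 1.0) / y.size

def kl_projection(y):
    """Minimizer of the generalized KL divergence D(c, y) over {c > 0 : sum(c) = 1}."""
    return y / y.sum()

y = np.array([0.2, 0.5, 1.3])
z = np.array([3.0, 0.4, 0.6])
mid = 0.5 * (y + z)

# The Euclidean projection commutes with averaging (it is an affine map).
euclid_gap = np.abs(
    euclidean_projection(mid)
    - 0.5 * (euclidean_projection(y) + euclidean_projection(z))
).max()

# The KL projection does not: normalizing the average differs from
# averaging the normalized points.
kl_gap = np.abs(
    kl_projection(mid) - 0.5 * (kl_projection(y) + kl_projection(z))
).max()

print(f"Euclidean gap: {euclid_gap:.2e}, KL gap: {kl_gap:.3f}")
```

In this example the Euclidean gap is zero up to rounding, whereas the KL gap is clearly positive, which is the sense in which the curved geometry produces a nonlinear operator.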

What Is a Bregman Projection?

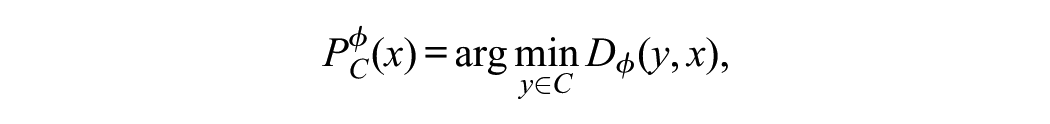

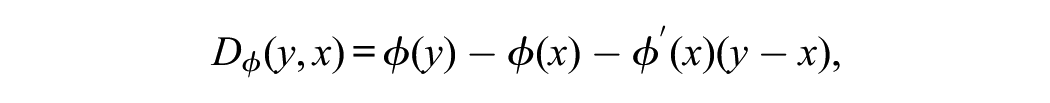

A Bregman projection extends the concept of orthogonal projection by replacing Euclidean distance with a Bregman divergence. Given a strictly convex and differentiable function

This divergence induces a geometric structure in which distances and projections behave differently from those in standard Euclidean space.
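For concreteness, the standard definition of the divergence generated by a strictly convex, differentiable potential φ is D_φ(x, y) = φ(x) − φ(y) − ⟨∇φ(y), x − y⟩ (Bregman, 1967). The sketch below implements that definition and evaluates it for two potentials chosen here for illustration: the squared norm, which returns squared Euclidean distance, and negative entropy, which returns the generalized Kullback–Leibler divergence. The function and variable names are assumptions of this sketch.

```python
import numpy as np

def bregman_divergence(phi, grad_phi, x, y):
    """D_phi(x, y) = phi(x) - phi(y) - <grad phi(y), x - y>."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    return phi(x) - phi(y) - np.dot(grad_phi(y), x - y)

# Quadratic potential phi(v) = ||v||^2 with gradient 2v.
quadratic = (lambda v: np.dot(v, v), lambda v: 2.0 * v)

# Negative entropy phi(v) = sum v log v with gradient log v + 1 (v > 0).
neg_entropy = (lambda v: np.sum(v * np.log(v)), lambda v: np.log(v) + 1.0)

x = np.array([0.2, 0.3, 0.5])
y = np.array([0.4, 0.4, 0.2])

d_quad = bregman_divergence(*quadratic, x, y)      # squared Euclidean distance
d_kl = bregman_divergence(*neg_entropy, x, y)      # KL(x || y) for probability vectors
d_kl_reversed = bregman_divergence(*neg_entropy, y, x)

print(d_quad, d_kl, d_kl_reversed)
```

The two KL values differ, which illustrates that a Bregman divergence is generally not symmetric and therefore is not a distance in the metric sense.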

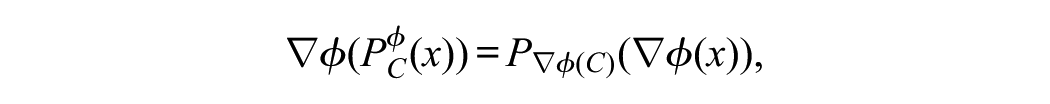

Dual Geometry and the Primal Space

The primal space refers to the original coordinate system in which data or variables naturally reside. The dual space, by contrast, is defined through the gradient map

Linearity in the Dual Space

In the dual space, the Bregman projection becomes a linear operation because the divergence behaves analogously to a squared Euclidean distance. Consequently, projection onto a convex set—such as a hyperplane or affine subspace—can be computed using standard linear algebraic tools. This property parallels the operation of the mirror descent algorithm, in which updates are linear in the dual space but become nonlinear when transformed back to the primal space.
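The mirror descent parallel can be made concrete with a single update step. The sketch below assumes the negative-entropy potential on the probability simplex, so that the dual coordinates are logarithms; the step size, gradient vector, and function name are hypothetical. The update is additive in the dual coordinates and becomes a multiplicative reweighting once mapped back.

```python
import numpy as np

def entropic_mirror_step(x, grad, step):
    """One mirror descent step under the negative-entropy potential."""
    theta = np.log(x)                  # dual coordinates (gradient map, up to a constant)
    theta_new = theta - step * grad    # additive, linear update in the dual space
    w = np.exp(theta_new - theta_new.max())  # inverse gradient map, shifted for stability
    return w / w.sum()                 # normalization back onto the simplex

x = np.array([0.5, 0.3, 0.2])
g = np.array([1.0, 0.0, -1.0])
x_new = entropic_mirror_step(x, g, step=0.5)

# Equivalent primal form: a multiplicative (nonlinear) update.
multiplicative = x * np.exp(-0.5 * g)
multiplicative = multiplicative / multiplicative.sum()

print(x_new, multiplicative)
```

Both computations return the same point, showing that a linear move in dual coordinates corresponds to a nonlinear move in the original coordinates.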

Nonlinearity in the Primal Space

When the projected point is mapped back to the original (primal) space using the inverse gradient

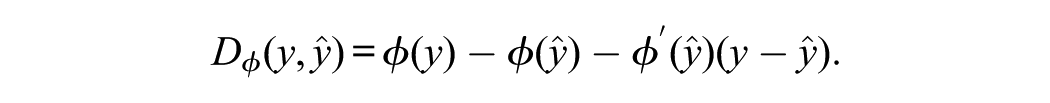

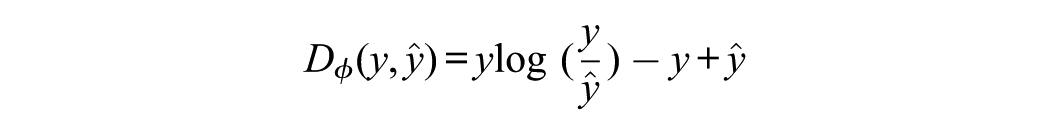

Bregman Divergence and Generalized Expectation

Let

This quantity measures the excess of

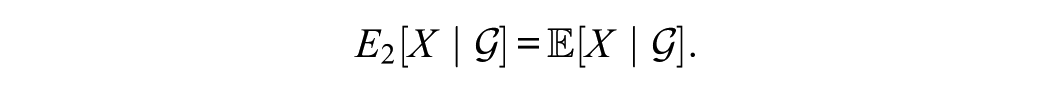

When

which underlies least-squares estimation and the Hilbert-space projection operator.

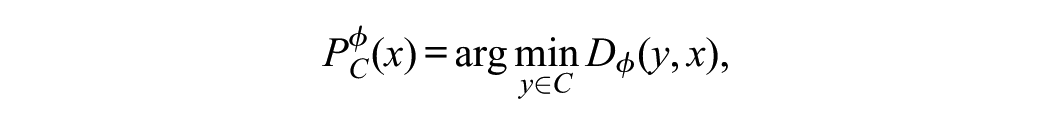

For any closed convex set

where the minimizer exists and is unique because

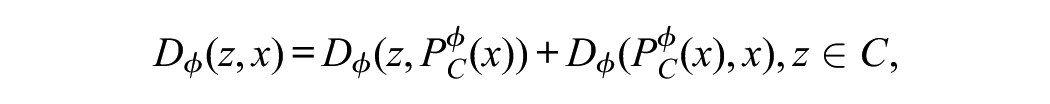

which extends the familiar Pythagorean theorem from Euclidean to Bregman geometry.
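A numerical check of the generalized Pythagorean relation is possible in a simple case. The sketch below is an illustration under stated assumptions rather than the article's derivation: the divergence is the generalized Kullback–Leibler divergence, the constraint set is the probability simplex, and the projection is taken as the minimizer of D(c, y) over the set, which here is the normalized vector. Because the simplex is defined by an affine constraint, the relation holds with equality; for a general closed convex set it holds as an inequality.

```python
import numpy as np

def gen_kl(a, b):
    """Generalized KL divergence: the Bregman divergence of phi(v) = sum v log v."""
    return np.sum(a * np.log(a / b) - a + b)

y = np.array([0.9, 2.1, 0.6])   # positive point to be projected (not normalized)
p = y / y.sum()                 # its KL projection onto the probability simplex
x = np.array([0.5, 0.2, 0.3])   # an arbitrary point in the simplex

lhs = gen_kl(x, y)
rhs = gen_kl(x, p) + gen_kl(p, y)

print(f"D(x, y) = {lhs:.6f}; D(x, p) + D(p, y) = {rhs:.6f}")
```

The two printed quantities agree, mirroring the Euclidean identity in which the squared distance to a point decomposes into the squared distance to its projection plus the squared residual.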

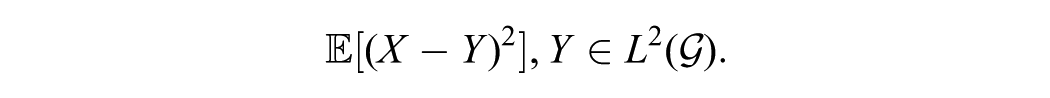

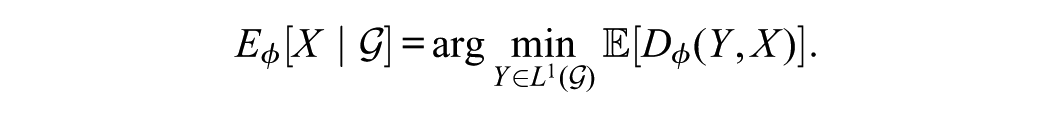

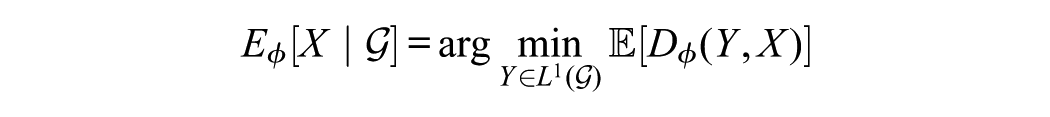

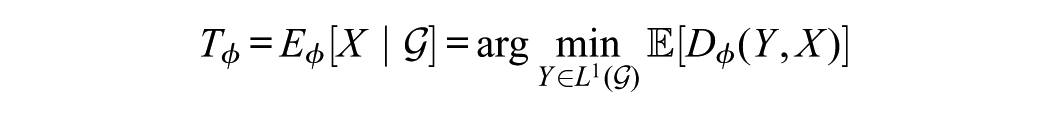

In probability and statistics, the conditional expectation

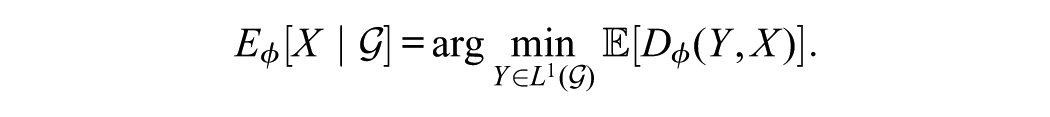

This characterization extends directly to Bregman geometry. For any convex potential

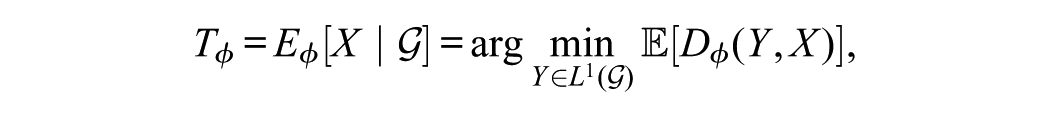

This operator represents the Bregman projection of

Thus,

Bregman Regression

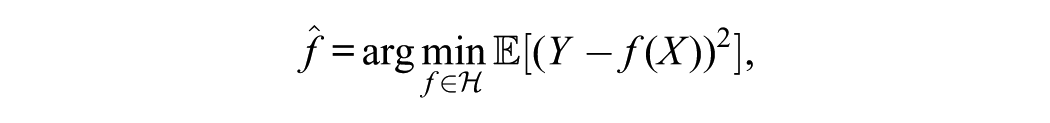

As shown by Zumbo (in press-a), reliability, regression

which represents the orthogonal projection of

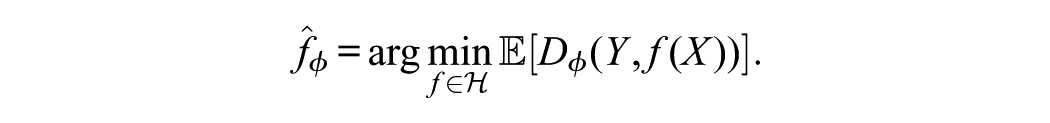

The Bregman generalization replaces the quadratic loss with a divergence determined by a convex potential

The resulting estimator is the Bregman projection of

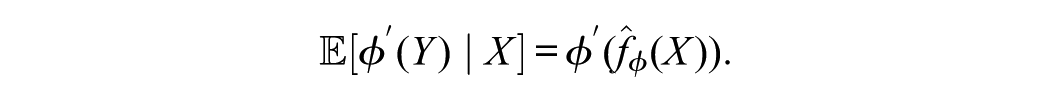

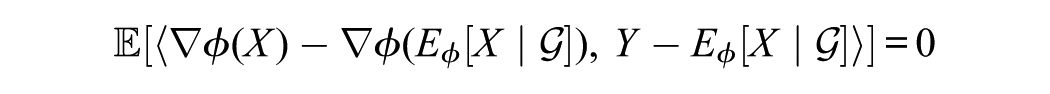

The first-order optimality condition for Bregman regression is

This condition generalizes the normal equations of least squares, showing that the derivative of the potential

it produces the cross-entropy condition for logistic regression.

From this perspective, many common estimation methods—including least-squares, Poisson, Gamma, and logistic regression—are special cases of Bregman regression, each arising from a specific potential function. The geometry of the potential defines both the loss function and the projection structure of the model.
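The way the first-order condition generalizes the normal equations can be illustrated with logistic regression. The sketch below uses simulated data with hypothetical coefficients and fits the model by Newton–Raphson iterations; at the solution, the residuals on the mean scale are orthogonal to the predictors, X'(y − μ) = 0, which has the same form as the least-squares condition X'(y − Xβ) = 0 with the fitted mean μ in place of Xβ.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500
X = np.column_stack([np.ones(n), rng.normal(size=n)])   # intercept and one predictor
beta_true = np.array([-0.3, 1.0])                        # hypothetical coefficients
y = rng.binomial(1, 1.0 / (1.0 + np.exp(-X @ beta_true)))

beta = np.zeros(2)
for _ in range(25):                                      # Newton-Raphson iterations
    mu = 1.0 / (1.0 + np.exp(-X @ beta))                 # fitted probabilities
    weights = mu * (1.0 - mu)                            # curvature of the potential
    hessian = X.T @ (weights[:, None] * X)
    beta = beta + np.linalg.solve(hessian, X.T @ (y - mu))

mu = 1.0 / (1.0 + np.exp(-X @ beta))
score = X.T @ (y - mu)                                   # generalized normal equations

print("estimate:", beta, "score:", score)
```

The score vector is zero up to numerical precision, so the fitted probabilities satisfy the same orthogonality condition as least-squares fitted values, only expressed on the probability scale.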

The geometric logic of Bregman regression extends naturally to psychometric test theory. In both contexts, estimation involves projecting observed data onto a structured subspace that represents systematic variation—predictors in regression or latent traits in measurement models. This shared structure allows reliability, traditionally defined in terms of variance, to be reformulated as a measure of projection efficiency under the same divergence geometry. Thus, test theory can be viewed not as a separate statistical domain but as an applied instance of Bregman projection, where the goal is to quantify how effectively observed responses align with their latent expectations.

Bregman Projection and Psychometric Test Theory

It bears restating that in test theory, the true score is defined as the projection

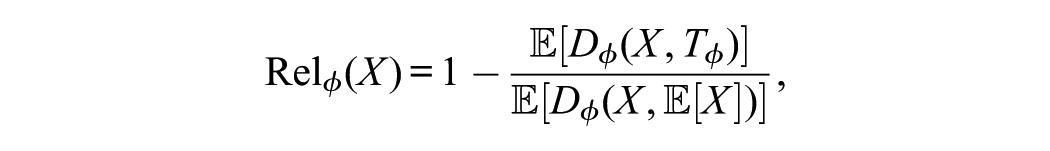

and reliability quantifies the proportion of variance explained by this projection:

Within Bregman geometry, the true score generalizes to

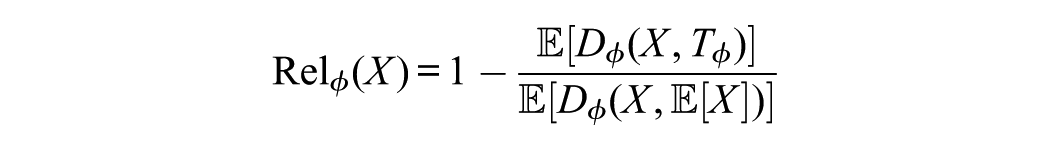

and reliability becomes

When

By reframing both regression and reliability as Bregman projections, psychometric theory gains a unified geometric foundation. The same principle that governs estimation in generalized linear models also defines reliability as projection efficiency. This synthesis links traditional psychometric constructs—true scores, regression, and reliability coefficients—to modern convex and information-geometric methods, providing a rigorous and flexible framework for theoretical and applied measurement.

In this view, reliability is not tied to a specific metric. Rather, it is a universal measure of projection efficiency: how effectively a given geometry (quadratic, power-law, or informational) captures systematic variation in observed data.
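For the quadratic special case, the equivalence between the variance ratio and the squared correlation (squared cosine) between observed and true scores can be verified by simulation. The sketch below assumes a simple additive model with normally distributed, independent true scores and errors; the variances and sample size are hypothetical, and the generalized divergence-based measure is not implemented here.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200_000
true_score = rng.normal(0.0, 1.0, size=n)            # Var(T) = 1
error = rng.normal(0.0, np.sqrt(0.5), size=n)        # Var(E) = 0.5, independent of T
observed = true_score + error                        # X = T + E

variance_ratio = true_score.var() / observed.var()   # Var(T) / Var(X)
squared_corr = np.corrcoef(observed, true_score)[0, 1] ** 2

print(f"variance ratio: {variance_ratio:.3f}, squared correlation: {squared_corr:.3f}")
```

Both quantities estimate the population value 1 / 1.5, so they agree up to sampling error.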

From an applied perspective, this geometric reformulation provides a unified lens for interpreting traditional reliability coefficients, item response models, and nonlinear estimation methods as instances of the same projection principle. By identifying the underlying geometry of each model, researchers can evaluate how measurement precision, model fit, and information efficiency emerge from a single mathematical operation: projection under a convex potential.

Bregman Projection and Operator-Theoretic Test Theory

An operator-theoretic test theory formalizes measurement and reliability as projection phenomena in Hilbert space (Zumbo, in press-a). The conditional expectation operator

projects an observed score

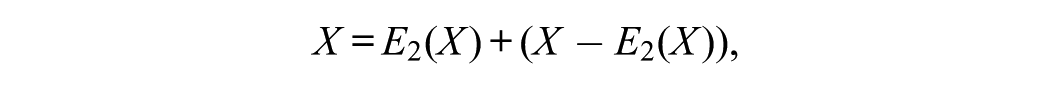

The corresponding orthogonal decomposition,

defines the true score and error components of CTT. The operator

The Bregman framework generalizes this structure to convex geometries. Instead of the quadratic potential defining Euclidean space, it employs a differentiable, strictly convex function

and defines the generalized conditional expectation as

When

Although

Mathematical Clarifications and Operator Properties

Although full proofs are beyond the scope of this article, the main operator-theoretic results can be summarized.

The generalized conditional expectation

inherits several core properties of the Hilbert-space projection

for all

These results are well established in convex-analytic and information-geometric literature (see Amari, 2016; Banerjee et al., 2005a; Nielsen & Nock, 2014). Readers interested in formal derivations may consult

Monotonicity Properties of Rel

A natural question concerns whether the generalized reliability measure

preserves the monotonicity and boundedness properties of the classical reliability coefficient.

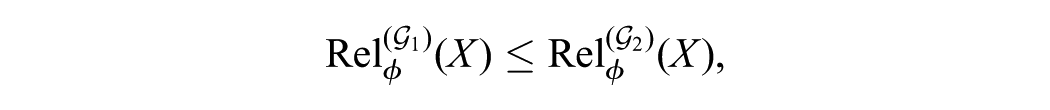

Under standard regularity conditions—strict convexity of

ensuring that finer latent representations cannot reduce reliability.

3.

These results draw on Banerjee and Basu’s (2017) description of generalized conditional expectations in Bregman geometry, on Nielsen and Boltz (2011), and on the information-geometric literature (see Amari & Nagaoka, 2000). The monotonicity proof follows directly from the Pythagorean property of Bregman divergences, which ensures that any additional conditioning information (i.e., expanding

Comparison With Other Geometric Generalizations

Several frameworks extend CTT beyond Euclidean geometry, including kernel-based Hilbert spaces and Bayesian geometric approaches. Kernel methods generalize linear models through implicit feature mappings, increasing representational flexibility while preserving an underlying Hilbertian (inner product) structure.

In contrast, Bregman geometry replaces the inner product with a convex potential, creating a family of non-Euclidean geometries that generalize both linear and exponential families. This substitution shifts the foundation of measurement from distance to divergence, enabling projection and estimation to be defined by minimizing expected convex loss rather than squared error.

Bayesian geometric frameworks, by comparison, represent uncertainty through probability manifolds and the Fisher–Rao metric, emphasizing curvature in posterior distributions. Although they capture probabilistic structure, they operate within fixed priors and parameterizations. The Bregman framework differs by generalizing the loss function itself, creating a direct link between estimation, reliability, and information-theoretic efficiency without requiring prior distributions or parametric assumptions.

Thus, Bregman geometry unifies loss-based optimization and geometric representation within a single convex-analytic framework. It encompasses Euclidean distance, cross-entropy, and Kullback–Leibler (KL) divergence as special cases, extending both kernel and Bayesian generalizations by redefining measurement geometry at the level of divergence rather than representation.

In this unified perspective, both theories articulate reliability as projection efficiency—the degree to which observed scores align with their latent projections. The operator-theoretic formulation situates this principle within the spectral structure of linear operators in

Thus, the operator-theoretic and Bregman approaches express the same principle: measurement as projection from an observed space onto a latent structure. The operator-theoretic model describes the linear Euclidean case, while the Bregman framework extends it to nonlinear, information-geometric spaces. The conditional expectation operator

Having situated psychometric reliability within this broader Bregman framework, the next step is to formalize the operator-theoretic properties that ensure the generalization remains mathematically coherent. The following section develops the underlying geometric foundations—idempotence, minimality, and generalized orthogonality—that parallel the properties of linear projection in

These results establish that the nonlinear Bregman operator preserves the essential structure of expectation and reliability, even when the measurement geometry departs from Euclidean form. They provide the rigorous bridge between the conceptual interpretation of reliability as projection efficiency and its formal realization in curved, information-geometric spaces.

Geometric Foundations of Psychometric Theory

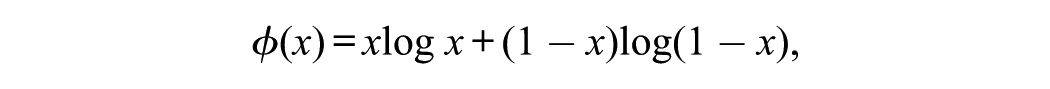

In test theory, the projection of observed scores onto their latent expectations occurs in a Euclidean geometry defined by the quadratic potential

Models such as IRT, generalized linear latent models, and ordinal reliability extend this structure to non-Euclidean geometries induced by other convex potentials.

In these settings, the mapping between latent and observed scores becomes nonlinear, and reliability reflects projection efficiency under a Bregman divergence rather than variance reduction alone.

This unified geometric framework situates both linear and nonlinear measurement models within a single theoretical continuum, linking regression, reliability, and estimation as forms of projection in curved statistical spaces.

Both regression and IRT can be viewed as Bregman projections. In linear regression, fitted values are the orthogonal projection of outcomes onto the predictor subspace, minimizing squared error—the divergence generated by the quadratic potential. In IRT, estimation occurs in a different geometry: the potential corresponds to the log-likelihood or cross-entropy, and projection takes place in the curved manifold defined by the model’s link function. Despite their apparent differences, both frameworks perform the same operation—identifying the element in a constrained space that minimizes expected divergence from observed data. Thus, regression, reliability, and IRT all express a single geometric principle of approximation under different convex potentials.
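The linear half of this comparison can be verified directly. The sketch below uses simulated data with hypothetical coefficients to build the least-squares projection matrix and confirm the properties that characterize an orthogonal projection: idempotence, symmetry, residuals orthogonal to the predictors, and the Pythagorean decomposition of the outcome's squared length.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 100
X = np.column_stack([np.ones(n), rng.normal(size=(n, 2))])    # predictor subspace
y = X @ np.array([1.0, 2.0, -1.0]) + rng.normal(size=n)        # simulated outcomes

H = X @ np.linalg.solve(X.T @ X, X.T)   # projection matrix onto the column space of X
fitted = H @ y                          # orthogonal projection of y
residual = y - fitted                   # component orthogonal to the subspace

idempotence_gap = np.abs(H @ H - H).max()
orthogonality_gap = np.abs(X.T @ residual).max()
pythagoras_gap = abs(y @ y - (fitted @ fitted + residual @ residual))

print(idempotence_gap, orthogonality_gap, pythagoras_gap)
```

All three gaps are zero up to rounding error, which is the Euclidean pattern that the divergence-based projections discussed in this article generalize.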

Nonlinearity in Psychometric Models

Understanding that reliability, regression, and IRT share this projection principle clarifies the role of nonlinearity in psychometric theory. In Euclidean geometry, projections are linear, producing additive and proportional relationships between observed and latent variables.

In the broader Bregman setting, the geometry is curved, and projections become nonlinear when expressed in the original measurement space. This curvature introduces the multiplicative and logistic relations that characterize modern psychometric models.

CTT assumes linear, additive relationships—a direct consequence of the quadratic potential.

However, when measurement processes follow nonlinear or multiplicative patterns, the Bregman framework provides a more general curved geometric description. For example, in logistic or Poisson models, projections occur along manifolds defined by the link function of

In Euclidean space, reliability quantifies the proportion of variance explained by a linear projection. Under non-Euclidean geometries, reliability quantifies the reduction in expected divergence between observed and latent scores. The generalized measure, denoted

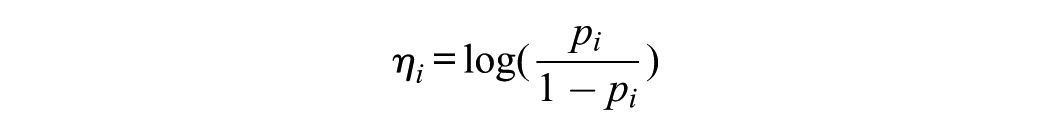

IRT as Nonlinear Projection

The Bregman framework provides a natural bridge between test theory and modern nonlinear latent variable models, including IRT. CTT treats observed scores as linear functions of latent variables plus additive error—consistent with Euclidean geometry and the quadratic potential

Bregman geometry generalizes this structure by allowing projection in a curved statistical space determined by a convex potential

is the KL divergence, which underlies exponential-family models such as logistic and Poisson IRT. Here, the projection

is generated by the potential

In these dual coordinates, the model is linear:

Mapping back to the probability scale reintroduces nonlinearity, illustrating that IRT models are linear in the dual space and nonlinear in the primal space—a defining feature of Bregman projections. This dual space structure explains why both maximum likelihood and expected a posteriori (EAP) estimation in IRT correspond to Bregman projections. The likelihood function measures fit as divergence between observed and model-implied probabilities, and its optimization minimizes expected divergence.
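The linear and nonlinear descriptions of the same item can be compared numerically. The sketch below assumes a two-parameter logistic item with hypothetical discrimination and difficulty values, and it takes the binary negative-entropy function as the potential on the probability scale, so that the gradient map is the logit and its inverse is the logistic function. These choices are assumptions made for illustration.

```python
import numpy as np

def neg_binary_entropy_grad(p):
    """Gradient of phi(p) = p log p + (1 - p) log(1 - p), i.e., the logit of p."""
    return np.log(p) - np.log1p(-p)

def inverse_grad(eta):
    """Inverse gradient map: the logistic function."""
    return 1.0 / (1.0 + np.exp(-eta))

a, b = 1.5, 0.2                         # hypothetical discrimination and difficulty
theta = np.linspace(-3.0, 3.0, 7)       # equally spaced latent trait values
eta = a * (theta - b)                   # logit (dual) coordinate: linear in theta
p = inverse_grad(eta)                   # probability (primal) coordinate

second_diff_eta = np.diff(eta, n=2)     # zero for a linear function of theta
second_diff_p = np.diff(p, n=2)         # nonzero: curvature on the probability scale
round_trip = neg_binary_entropy_grad(p) # gradient map recovers the linear predictor

print(second_diff_eta, second_diff_p)
```

The second differences vanish on the logit scale and not on the probability scale, which is a discrete way of seeing that the item response function is a straight line in one coordinate system and an S-shaped curve in the other.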

This same principle extends to multidimensional IRT, where the latent space may be curved but remains dually flat under information geometry. Viewed geometrically, IRT is a measurement model on a curved manifold induced by a convex potential, where estimation, prediction, and scoring correspond to projection operations that minimize expected divergence.

Each family of item response models corresponds to a distinct potential

Reliability as Projection Efficiency

Within this framework, reliability generalizes as projection efficiency under divergence geometry. In CTT, reliability represents the proportion of variance explained by the projection

leads to a reliability measure

which reduces to the variance ratio when

For other potentials,

In IRT, this interpretation connects reliability to the Fisher information function, which quantifies local curvature in the model’s information geometry. Greater curvature corresponds to higher sensitivity to changes in the latent coordinate

Both variance-based reliability in CTT and information-based reliability in IRT measure the same geometric quantity: alignment between observed and latent representations. In Euclidean geometry, this alignment is measured by squared deviation; in information geometry, by divergence. From this unified perspective, reliability is not a model-specific coefficient but a geometric property of measurement—the degree to which observed data lie near their theoretical projections in the geometry defined by the model’s convex potential. This view provides a coherent mathematical foundation linking classical and modern psychometric theory, situating both within a continuous family of linear and nonlinear measurement geometries.
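The connection between Fisher information and curvature noted above can be illustrated for a single two-parameter logistic item. The sketch below uses hypothetical item parameters and compares the usual item information function, a²p(1 − p), with a finite-difference second derivative of the Bernoulli log-partition function log(1 + exp(η)) evaluated along η = a(θ − b). The use of the log-partition function as the potential is an assumption of this sketch.

```python
import numpy as np

def item_information(theta, a, b):
    """Fisher information of a 2PL item at latent trait value theta."""
    p = 1.0 / (1.0 + np.exp(-a * (theta - b)))
    return a ** 2 * p * (1.0 - p)

def log_partition(eta):
    """Bernoulli log-partition function log(1 + exp(eta))."""
    return np.logaddexp(0.0, eta)

a, b = 1.5, 0.2                           # hypothetical item parameters
theta = np.linspace(-4.0, 4.0, 801)
info = item_information(theta, a, b)
theta_at_peak = theta[np.argmax(info)]

# Curvature of the potential with respect to theta, by central differences.
h = 1e-3
curvature = (
    log_partition(a * (theta + h - b))
    - 2.0 * log_partition(a * (theta - b))
    + log_partition(a * (theta - h - b))
) / h ** 2

print(f"peak at theta = {theta_at_peak:.2f}, maximum information = {info.max():.4f}")
```

The curvature and the information function coincide, and both peak at the item difficulty, where the item is most sensitive to changes in the latent coordinate.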

Ordinal Reliability and Underlying Variable Models in the Bregman Framework

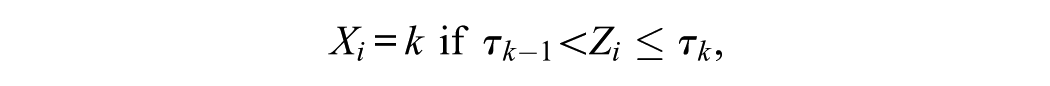

The concept of ordinal reliability, developed from underlying variable models and polychoric correlations (Zumbo et al., 2007), aligns naturally with the geometry of Bregman projections.

In these models, each observed ordinal score

where

In the Bregman framework, this latent variable formulation embeds the ordinal response space within a curved manifold induced by a convex potential

From this perspective, ordinal reliability reflects projection efficiency in the latent Euclidean space that best approximates the observed ordinal responses under the divergence induced by

The polychoric correlation matrix estimates this latent covariance structure by identifying the projection of the ordinal data that minimizes expected divergence from a multivariate normal model. Computing ordinal reliability through polychoric correlations is therefore equivalent to performing a Bregman projection from discrete ordinal data onto a continuous latent Gaussian manifold. The resulting coefficient quantifies how efficiently the observed ordinal indicators align with their latent projections—analogous to variance-based reliability in

This geometric interpretation also provides a foundation for Zumbo’s (in press-b) treatment of polychoric and copula-based estimators of composite reliability. These estimators reconstruct an underlying continuous geometry consistent with a particular potential—quadratic for Gaussian copulas or more general convex functions for other dependence structures. Each potential defines a specific notion of “distance” or divergence appropriate to the measurement scale and data distribution.

Hence, ordinal reliability is not merely an empirical correction for discretization but a geometric projection problem. The thresholds defining ordinal categories act as nonlinear coordinate boundaries, and polychoric correlations reconstruct the latent inner products that determine projection efficiency. The observed ordinal space is curved, but the latent space remains linear, and reliability quantifies how well projections from one to the other preserve alignment.
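The reconstruction of a latent inner product from discretized responses can be illustrated in the simplest case. The sketch below is not a general polychoric estimator: it assumes two standard bivariate normal latent variables with a hypothetical correlation of 0.6, each dichotomized at a known threshold of zero. In that special case the probability that both responses are positive equals 1/4 + arcsin(ρ)/(2π), which can be inverted to recover the latent correlation.

```python
import numpy as np

rng = np.random.default_rng(3)
rho = 0.6                                    # hypothetical latent correlation
n = 200_000
cov = np.array([[1.0, rho], [rho, 1.0]])
latent = rng.multivariate_normal(np.zeros(2), cov, size=n)
binary = (latent > 0.0).astype(float)        # dichotomize at the zero threshold

# Pearson correlation of the binary scores (attenuated by discretization).
phi_coefficient = np.corrcoef(binary[:, 0], binary[:, 1])[0, 1]

# Invert P(both positive) = 1/4 + arcsin(rho) / (2 pi) to recover rho.
p11 = np.mean(binary[:, 0] * binary[:, 1])
tetrachoric = np.sin(2.0 * np.pi * (p11 - 0.25))

print(f"phi: {phi_coefficient:.3f}, recovered latent correlation: {tetrachoric:.3f}")
```

The correlation computed on the observed binary scores is noticeably smaller than the latent value, whereas the threshold-based inversion recovers it up to sampling error, consistent with the view of thresholds as nonlinear coordinate boundaries over a linear latent space.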

This interpretation parallels the treatment of categorical responses in IRT. Both frameworks describe measurement as a projection from a discrete observed space onto a continuous latent manifold defined by a convex potential. In IRT, this projection occurs through logit or probit link functions, representing the gradient transformation

In the ordinal model, the thresholds partition the latent distribution analogously, producing observed categories that are nonlinear manifestations of an underlying linear geometry.

In both cases, estimation seeks the Bregman projection that minimizes expected divergence between observed responses and the latent model. Thus, ordinal reliability and IRT reliability express the same geometric principle.

Each quantifies how efficiently observed categorical responses align with their latent projections within the model’s information geometry. The polychoric approach reconstructs the latent covariance structure, whereas IRT estimation identifies the parameters defining the latent traits and response functions.

In both frameworks, reliability measures the degree to which nonlinear mappings from latent to observed space preserve geometric alignment—whether expressed as variance explained in

In summary, ordinal reliability, developed through underlying variable models and polychoric correlations, aligns with Bregman geometry. Ordinal scores map nonlinearly from latent continuous variables through threshold functions, analogous to transformations between primal and dual spaces. Polychoric correlations estimate the covariance structure of the latent space by identifying projections that minimize expected divergence. Thus, ordinal reliability represents projection efficiency in a latent convex geometry, linking seamlessly with the IRT formulation.

Concluding Remarks

I advance a unified geometric framework for psychometric theory. It connects CTT, operator-theoretic test theory, IRT, regression, and reliability through the mathematical construct of Bregman projections. The paper positions measurement as a projection problem within convex and information-geometric spaces, drawing on functional analysis, convex analysis, and information geometry to reinterpret long-standing psychometric concepts.

The main contribution lies not in new data or simulation results but in theoretical synthesis—clarifying that expectation, regression, and reliability are unified by a shared geometric principle. The work extends the author’s prior operator-theoretic research (Zumbo, in press-a) and aligns with ongoing theoretical modernization in measurement science.

The Bregman framework provides a geometric foundation for understanding expectation, regression, and reliability within a single theoretical structure. By replacing the quadratic potential that defines Euclidean geometry with a general convex potential, this approach encompasses a wide class of statistical and psychometric models. Conditional expectation, least-squares regression, and classical reliability emerge as special cases of Bregman projections under the quadratic potential

This unifying view clarifies that many methods in psychometrics—such as regression, factor analysis, item response theory, and composite reliability estimation—share a common mathematical foundation: projection under a divergence defined by a convex potential. Reliability, for example, quantifies the efficiency of this projection and generalizes naturally across linear and nonlinear models. Regression likewise represents the Bregman projection that minimizes expected divergence between observed and predicted values.

Situating CTT within the broader class of Bregman geometries shows that reliability is not a static parameter tied to linear models but a projection efficiency that depends on the chosen geometry of measurement. This view strengthens conceptual links between classical and modern measurement theories.

Bregman projections thus offer a unifying principle that connects the statistical and geometric foundations of measurement. They demonstrate that reliability, estimation, and model fit can each be understood as instances of a broader projection framework, governed by the choice of divergence and its associated geometry. This view situates psychometric theory within a coherent mathematical structure that generalizes beyond linear models.

It unifies the Hilbert-space representations of test theory with the information-geometric frameworks that underpin modern statistical learning. In this perspective, measurement error is understood as the residual divergence of a projection, and reliability expresses the efficiency with which observed data align with latent structures. Bregman geometry thereby provides a rigorous mathematical formalization of long-standing psychometric concepts while preserving their substantive interpretability.

By extending measurement theory into nonlinear and information-geometric domains, this approach suggests that psychometric quantities are not fixed properties of models but context-dependent expressions of geometric relationships. Such a reconceptualization opens a path toward integrating modern machine learning, information theory, and CTT under a common projection-based framework.

Appendices A and B summarize the mathematical details supporting the operator-theoretic and geometric results. Appendix A outlines the formal properties of the generalized conditional expectation operator

From a practical standpoint, the Bregman perspective offers new pathways for modeling data in contexts where nonlinear relationships arise naturally from ordinal response formats, bounded rating scales, or skewed score distributions. It supports the use of polychoric, copula-based, and entropy-based estimators as natural extensions of Euclidean reliability measures (Zumbo, in press-b). More broadly, this framework invites a reexamination of measurement models through the lens of geometry, revealing reliability, regression, and estimation as manifestations of the same underlying principle: projection in spaces shaped by convex potentials.

Appendix A

Appendix B

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by the Canada Research Chairs program (AWD-016179 UBCEDUCA 2020), the UBC Paragon Research Initiative to Bruno Zumbo (Award Number: AWD-024645 UBCEDUCA 2023), and the UBC Distinguished University Scholar program. The author is a member of the UBC Institute of Applied Mathematics.