Abstract

The speed–accuracy tradeoff (SAT), where increased response speed often leads to decreased accuracy, is well established in experimental psychology. However, its implications for psychological assessments, especially in high-stakes settings, remain less understood. This study presents an experimental approach to investigate the SAT within a high-stakes spatial ability assessment. By manipulating instructions in a within-subjects design to induce speed variations in a large sample (N = 1,305) of applicants for an air traffic controller training program, we demonstrate the feasibility of manipulating working speed. Our findings confirm the presence of the SAT for most participants, suggesting that traditional ability scores may not fully reflect performance in high-stakes assessments. Importantly, we observed individual differences in the SAT, challenging the assumption of uniform SAT functions across test takers. These results highlight the complexity of interpreting high-stakes assessment outcomes and the influence of test conditions on performance dynamics. This study offers a valuable addition to the methodological toolkit for assessing the intraindividual relationship between speed and accuracy in psychological testing (including SAT research), providing a controlled approach while acknowledging the need to address potential confounders. Future research may apply this method across various cognitive domains, populations, and testing contexts to deepen our understanding of the SAT’s broader implications for psychological measurement.

Keywords

Introduction

It is well known from experimental studies using simple perceptual tasks that participants’ response accuracy decreases when response speed increases (i.e., when test takers spend less time on a given task), a phenomenon widely recognized as the speed–accuracy tradeoff (SAT; for an overview, see Heitz, 2014). The SAT has also been reported in studies examining psychological assessments (Davison et al., 2012; Goldhammer et al., 2017; Goldhammer & Kroehne, 2014; Mutak et al., 2024; Semmes et al., 2011). Thus, actual performance in a test needs to be described by both the effective speed (i.e., the speed level a test taker chooses) and the effective ability (i.e., the ability level a test taker exhibits at the chosen level of speed; Dennis & Evans, 1996; Goldhammer & Klein Entink, 2011). Understanding the SAT in psychological assessments can deepen our understanding of individual differences in cognitive performance, thereby improving the validity of test results (e.g., Lohman, 1989; Pohl et al., 2021; van der Linden, 2009).

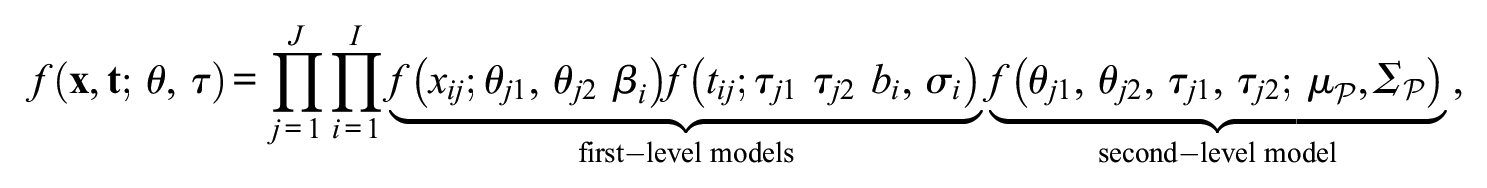

The SAT plays a vital role in psychological assessments. To illustrate this, consider the SAT of three fictional test takers (Figure 1). In the figure, we depict an SAT with the commonly assumed inverse monotonic intraindividual relationship between speed and accuracy (Goldhammer, 2015; Heitz, 2014; Thurstone, 1937). In the example in Figure 1, test takers differ with regard to their maximum level of ability (capability; that is, the ability given unlimited time to respond to an item, which is the level to which the curve converges as effective speed decreases) as well as the rate of trading ability for an increase in effective speed (i.e., the gradient at a given point of the SAT curve; Goldhammer, 2015; Pohl et al., 2021; Wickelgren, 1977). The curves represent every possible combination of ability and speed that the test taker could adopt. As can be seen in Figure 1, Test Taker 1 (blue) has a higher capability than Test Takers 2 (yellow) and 3 (pink). Also, at any given speed level, Test Taker 1 outperforms both other test takers in terms of ability. Comparing Test Takers 2 and 3 with each other, Test Taker 2 outperforms Test Taker 3 at lower effective speed, while at medium to high speed levels, Test Taker 3 outperforms Test Taker 2. Assessments usually capture only one point on a person’s SAT curve rather than the whole curve. Test takers also do not choose the same speed level but differ in their effective speed. In the depicted hypothetical scenario, the effective speed and ability level of each person is marked with a dot. Assessing only effective ability, we would conclude that Test Taker 2 is the most able one and Test Taker 1 has the lowest ability. This comparison, however, might not fully capture the nuances of cognitive performance, as Test Taker 1, despite performing worse than Test Takers 2 and 3 in terms of effective ability, works at a much faster pace. Even if Test Takers 2 and 3 chose the same speed level, a single observation would not reveal whether their ranking in ability depends on that speed level. A more comprehensive understanding of the differences in performance among these three test takers would require knowledge of their individual SAT curves.
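The scenario described above can be made concrete with a small numerical sketch. The quadratic curve form, the capability and tradeoff-rate values, and the chosen speed levels below are purely hypothetical; they are not taken from Figure 1 and are picked only so that the orderings described in the text hold.

```python
from dataclasses import dataclass


@dataclass
class Examinee:
    name: str
    capability: float    # ability approached as effective speed goes to zero
    rate: float          # hypothetical rate of trading ability for speed
    chosen_speed: float  # effective speed the examinee actually adopts

    def ability_at(self, speed: float) -> float:
        # Assumed (illustrative) SAT curve: ability falls monotonically with speed.
        return self.capability - self.rate * speed ** 2


examinees = [
    Examinee("Test Taker 1", capability=3.0, rate=0.5, chosen_speed=2.0),
    Examinee("Test Taker 2", capability=2.0, rate=1.0, chosen_speed=0.3),
    Examinee("Test Taker 3", capability=1.5, rate=0.3, chosen_speed=0.5),
]


def rank_by_ability(people, speed=None):
    """Rank from highest to lowest ability, at a common speed or at each chosen speed."""
    def key(person):
        return person.ability_at(person.chosen_speed if speed is None else speed)
    return [p.name for p in sorted(people, key=key, reverse=True)]


at_own_speed = rank_by_ability(examinees)        # what a single assessment observes
at_low_speed = rank_by_ability(examinees, 0.2)   # everyone works slowly
at_high_speed = rank_by_ability(examinees, 1.5)  # everyone works fast

print(at_own_speed)
print(at_low_speed)
print(at_high_speed)
```

Under these assumed values, the ranking observed at each person's own speed differs from the ranking at any common speed, which is the point the three hypothetical curves are meant to convey.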

Hypothetical SAT Curves for Three Test Takers With Varying Capabilities

In this study, we present an experimental approach for assessing the SAT in psychological assessments of cognitive abilities (here, spatial ability).

Approaches for Investigating Intraindividual Speed–Accuracy Relationships in Psychological Assessments

Previous research on the within-person relationship of speed and accuracy relied on one of three strategies: (a) nonstationarity of ability and speed within a test, (b) external measures, and (c) experimental manipulations of speed.

Nonstationarity

Ample evidence exists suggesting that test takers change their working speed during test administration (Dennis & Evans, 1996; Maris & van der Maas, 2012). Some research on intraindividual dependencies of ability and speed makes use of this to infer different values on the SAT curve of a person. Different approaches have been proposed that model how changes in response speed coincide with changes in accuracy (for an extensive overview, see De Boeck & Jeon, 2019). Most prominently, local dependency models have been proposed to account for the possibility that item responses and response times may depend on each other, even after controlling for the latent traits of speed and ability (under the assumption of stationarity). If test takers change their working speed during a test, this might lead to local dependencies between items, especially if the change in speed affects response accuracy. This can be used to infer the intraindividual relation of ability and speed (Bolsinova et al., 2017; De Boeck et al., 2017). Research employing local dependency models has shown that the relationship between response speed and accuracy varies with the difficulty of the cognitive tasks (Bolsinova et al., 2017; De Boeck et al., 2017; Goldhammer et al., 2014). Specifically, higher accuracy is associated with faster responses in easy tasks, while for more difficult tasks, slower responses correspond to higher accuracy.

Domingue et al. (2022) also used a residual-based approach. They reanalyzed 29 data sets from cognitive assessments and examined how response times can explain residual responses. They reported inconsistent results across the different data sets; positive as well as negative dependencies were found. The authors concluded that, in addition to motivational mechanisms, differences in task designs (e.g., the existence of time limits for the whole test) as well as the cognitive domain of interest (e.g., working memory) impacted the relationship. Interestingly, across all data sets, test takers who performed worse in cognitive tasks also showed higher variation in response speed.

Recently, Mutak et al. (2024) introduced a statistical approach that extends the hierarchical model by van der Linden (2007) and captures changes in speed and ability during test administration through a latent growth term. By applying the model to Programme for International Student Assessment (PISA) test data, the authors confirmed the expected negative intraindividual relationship between changes in speed and ability, indicative of the SAT. They also identified instances in which this relationship does not exist and discussed the influence of confounding variables such as concentration or motivation.

External Measures

For estimating intraindividual relationships of ability and speed, Ranger et al. (2021) relied on external measures of proficiency. They used the ELO-score of chess players as an external measure of capability and grouped test takers into groups with similar ELO-scores. Assuming that persons with a similar capability have a similar SAT curve, they analyzed the relationship of response time and accuracy from a chess test within each subgroup. They argued that approaches relying on nonstationarity may be confounded by other factors such as concentration, persistence, or guessing. For instance, higher levels of concentration could result in both higher effective ability and higher working speed, while persistence could have a beneficial effect on response accuracy and decrease working speed. Such confounding could explain the inconsistent results of previous studies. They proposed various forms of the estimated SAT curve, each adjusted for different confounding factors. All of their estimated SAT curves showed a consistent positive relationship between ability and speed within the subgroups of persons with similar capability: Spending more time responding to an item related to lower response accuracy. The authors concluded that other aspects, such as differences in concentration, may also be present and confound the interpretation of these curves as representations of intraindividual SAT curves.

Experimental Manipulations of Speed

Experimental manipulations of speed have mainly been performed in cognitive psychology on simple perceptual tasks (Heitz, 2014). Forcing participants to adapt their speed of responding to a task is commonly achieved by either introducing time limits on tasks (Goldhammer et al., 2024; Ratcliff & Rouder, 2000; Van Zandt et al., 2000) or by instructions that emphasize or even incentivize speed over accuracy or vice versa (Hale, 1969; Howell & Kreidler, 1964).

As pointed out by De Boeck et al. (2017), only very few studies exist that employ experimental manipulations of working speed in psychological assessments. Some studies have experimentally varied the speed of the test takers by introducing conditions with and without item time limits. These studies were either not directly concerned with investigating the SAT (Semmes et al., 2011) or investigated the SAT in speed tests with simple tasks, which are nearly always solved correctly if enough time is available (similar to perceptual tasks from experimental psychology; Goldhammer et al., 2017, 2024; Goldhammer & Kroehne, 2014). Also, while introducing time limits on the item level allows for a precise manipulation of time, it may result in other unwanted response processes such as item omission or guessing (Pohl & Von Davier, 2018).

Semmes et al. (2011) conducted a study that applied an experimental manipulation of speed to tests with complex cognitive tasks (i.e., power tests, in which responses take from several seconds up to minutes). Specifically, they administered items of a numerical reasoning test to participants in two conditions: one with a set time limit for each item (experimenter-paced) and another in which participants could take as long as they needed (self-paced). The primary objective of the study was not to investigate the SAT but to understand how introducing time limits might change the underlying factor structure of the test. Their analysis revealed that when time limits were imposed, performance did not reflect accuracy (numerical ability) alone. Instead, the best-fitting model included a random effect of administration condition representing a second dimension, a speed factor. In terms of the SAT, the authors concluded that individuals vary in the degree to which ability decreases as their speed increases. However, the authors ignored response times and modeled only response data in their analyses.

The data set by Semmes et al. (2011) was reanalyzed by Davison et al. (2012), who compared statistical models in which self-paced response times were also incorporated in a latent speed factor. Interestingly, they interpreted the speed dimension as a measure of how well someone maintains their self-paced numerical reasoning under time constraints. High speed scores indicated minimal performance drops under time limits, while low scores signaled a significant decline. On average, speed levels increased in the time-limit condition while, at the same time, ability levels decreased, aligning with the SAT. In addition, De Boeck et al. (2017) analyzed the data set to explore the relationship between speed and accuracy, examining speed’s main effects on accuracy and item interactions across conditions with and without time constraints, and between fast and slow responses. This approach, based on findings that time constraints might assess different traits, aimed to identify violations of measurement invariance and to correlate discrepancies in item difficulty, thereby assessing effects both within and across conditions. Although increased response speed was associated with higher response accuracy within conditions, a decrease in ability was found, on average, between conditions when test takers were prompted to answer faster. The latter finding aligns with research in simple perceptual tasks and supports the existence of an SAT in psychological assessments.

Previous experimental studies have primarily relied on conceptualizing response speed using observed variables, analyzing median (log) response times at both item and person levels and examining residuals from these averages. This method overlooks the potential insights gained from using latent variables for response times, which could clarify the effects of item properties (e.g., time intensities) and individual test taker characteristics (e.g., working speed). A latent variable approach allows for the adjustment of response times based on each item’s time requirements, crucial for power tests where item difficulty and time demands vary significantly (Marianti et al., 2014; Mutak et al., 2024; van der Linden, 2006, 2009; van der Linden & Guo, 2008).

Aim of the Study

The SAT is a well-studied phenomenon in simple perceptual decision tasks (e.g., lexical discrimination), which are designed so that nearly all responses would be correct given enough time. However, the nature of the SAT in more complex aptitude and competency tests, which demand greater cognitive effort and advanced problem-solving skills, is not yet well understood. Most previous studies investigating the intraindividual relationship of ability and speed in psychological assessments rely on nonstationarity. However, these approaches can only investigate the SAT within the scope of speed changes within a test. These speed changes are often confounded by other factors, such as concentration or motivation. Studies on the SAT relying on external measures operate on the strong assumption that individuals with similar capabilities have the same SAT curve. While experimental studies, which allow the SAT to be better isolated from other factors impacting the within-person relationship between speed and accuracy, are often used in cognitive psychology, they are hardly utilized in psychological assessments of complex tasks. We aim to contribute to the understanding of the SAT in psychological assessments by combining an experimental approach with a psychometric model.

Almost all existing research on the SAT in psychological assessment has focused on low-stakes assessments. Little is known about how the SAT manifests in high-stakes assessments. However, the response process may differ in high-stakes assessments, which may also affect the SAT or its investigation. In our study, we focus on investigating the SAT in a high-stakes assessment setting.

In this study, we investigated the following research questions:

Method

We leveraged unpublished data from a high-stakes assessment designed for selecting trainee air traffic controllers (ATCOs) in Austria, measuring spatial abilities, which are of paramount interest in the air traffic controlling profession (e.g., Rathje et al., 2004; Soldatov et al., 2018). 1

Participants

Between August 2011 and January 2015, a total of 1,305 participants took part in an annual process of selecting trainee ATCOs in Austria. Of these, 29.27% were female and 70.73% male; ages ranged from 17 to 55 years (M = 21.89, SD = 3.74). The age distribution was highly skewed, with 97.1% of the persons being between age 17 and 30, 2.6% between age 31 and 40, and 0.3% above 40. The highest level of education for 88.51% of individuals was high school completion, whereas 9.27% had already obtained a university degree.

Measures

Spatial ability was measured by two different computerized tests, the Endless Loops Test (ELT; Gittler & Arendasy, 2003) and the Three-Dimensional Cubes Test (3DC; Gittler, 1990). Each test consists of 20 Rasch-scaled items. For a short description, psychometric properties, and item examples, see the Supplementary Materials.

Design

We experimentally manipulated speed in a within-subject design using two instructions. In the first condition, the self-paced condition (SPC), the test takers were instructed to solve the items “as accurate as possible.” In the second condition, the time-pressured condition (TPC), the participants were instructed to solve the items “as fast and as accurate as possible.” No time limits were imposed in either condition.

First, the ELT was administered to all participants, first under the SPC and then under the TPC. Then, there was a short break in which participants answered cognitively undemanding questions of a job-related interest questionnaire. After the questionnaire, the 3DC was administered to the participants, first under the SPC and then under the TPC. Note that due to the high-stakes nature of the selection process for trainee ATCOs, the order of the tests and conditions was fixed rather than counterbalanced. This was to ensure that every candidate experienced the same sequence, maintaining fairness and consistency across the selection process.

For assigning items to each of the conditions, each cognitive ability test was split into two parts: Eight items were assigned to the SPC and 12 items to the TPC. Furthermore, the items within the two sets (SPC and TPC) were administered in a fixed order, with difficulty levels mixed throughout the sequence rather than arranged from easiest to hardest. This rationale is in line with the original test construction principles of the ELT and 3DC (Gittler, 1990; Gittler & Arendasy, 2003).

Analyses

Data Preparation

To allow for comparisons of latent variables between the two conditions, we obtained item parameters of all items from other studies, in which the two tests were administered under SPCs in a low-stakes setting (e.g., Arrer, 1992; Gittler, 2000). In the analyses of the data of this study, we did not estimate item parameters but fixed them to the values obtained in those earlier studies.

From the 20 administered items of the ELT, 17 items (7 in SPC, 10 in TPC) were used in the analyses, as (a) the first item in each condition needed to be treated as a hidden warmup item to ensure Rasch homogeneity as stated by the test author (Gittler, 1990, 2000) and (b) the final item of the TPC was excluded from scoring as its response time data were not available in the low-stakes assessment studies that we reanalyzed for item calibration. From the 20 administered items of the 3DC, the two hidden warmup items were excluded from scoring, resulting in 18 items (7 in SPC, 11 in TPC) that were used in the analyses.

To control for random guessing and aberrant responding, we excluded fast responses based on a visual inspection of multimodality in distributions of log-transformed response times on the item level (Kroehne et al., 2019; Wise, 2017; Wise & DeMars, 2006). Overall, 490 responses and response times out of 23,490 (2.09%) were excluded by this procedure. In the analyses, we treated these values as missing values.
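The exclusion step can be sketched as follows. The item-level cutoffs in the study came from visual inspection of the log response time distributions; the fixed 3-second cutoff, the function name, and the toy data below are hypothetical and only illustrate how flagged responses and response times are jointly set to missing.

```python
import math
from typing import List, Optional, Tuple


def mask_rapid_responses(
    responses: List[Optional[int]],
    times_sec: List[Optional[float]],
    log_cutoffs: List[float],
) -> Tuple[List[Optional[int]], List[Optional[float]]]:
    """Treat a response and its time as missing when log(time) is below the item cutoff."""
    clean_resp: List[Optional[int]] = []
    clean_time: List[Optional[float]] = []
    for resp, t, cut in zip(responses, times_sec, log_cutoffs):
        if resp is None or t is None or math.log(t) < cut:
            clean_resp.append(None)
            clean_time.append(None)
        else:
            clean_resp.append(resp)
            clean_time.append(t)
    return clean_resp, clean_time


# Hypothetical data: one test taker, three items, assumed cutoff of 3 seconds per item.
cutoffs = [math.log(3.0)] * 3
resp, rt = mask_rapid_responses([1, 0, 1], [12.0, 1.5, 30.0], cutoffs)
print(resp, rt)

# Share of excluded observations reported in the text.
excluded_percent = round(100 * 490 / 23_490, 2)
print(excluded_percent)
```

Masking the response together with its response time keeps the two data sources aligned, so a flagged observation contributes to neither the ability nor the speed estimate.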

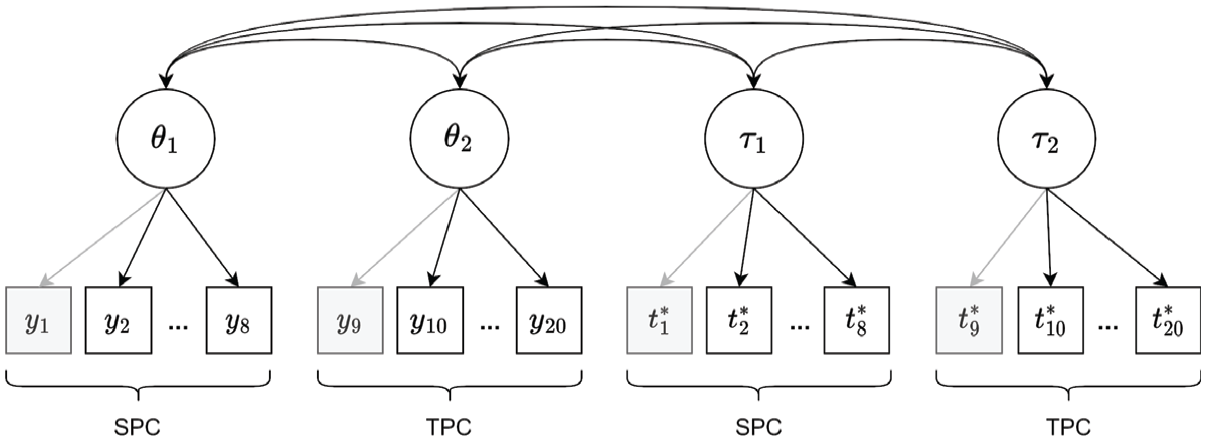

Model Specification

In our analyses, we made use of both responses and response times for each item. For analyzing the data, we combined the hierarchical modeling framework by van der Linden (2007) for speed and ability with a between-item multidimensional approach (Adams et al., 1997). For each condition, a latent speed and ability variable is modeled representing the test taker’s effective speed and ability levels across items, respectively. The model that was applied separately to each test is depicted in Figure 2. 2

Between-Item Multidimensional Extension of the Hierarchical Modeling Framework by van der Linden (2007)

The model consists of two levels: The first-level model describes the relationship of the model parameters with the observed data, while the second level describes the joint distribution of the model parameters.

First-Level Model

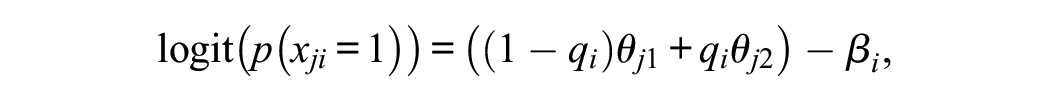

Regarding the modeling of item responses, we rely on the Rasch model (Rasch, 1960), which has been shown to describe the ELT and the 3DC items well (Gittler, 1990; Gittler & Arendasy, 2003). We specify a separate ability dimension for each condition:

where

Regarding the modeling of response times, we assume that logarithmized response times follow a normal distribution as suggested by van der Linden (2006). As for ability, we assume a separate speed dimension for each condition (Zhan et al., 2020, 2021):

where

To evaluate the impact of a change in speed on effective ability for each person, latent change scores in both ability and speed across the two conditions were derived by
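For orientation, the first-level model described in this section can be written in its standard form. This is a sketch in assumed notation: the symbols $\theta$, $\tau$, $b_i$, $\beta_i$, $\alpha_i$ and the condition index $c(i)$ are assumptions of this sketch and may differ from the original equations. It follows the Rasch model and the lognormal response time model of van der Linden (2006) named in the text.

```latex
% c(i) \in \{\mathrm{SPC}, \mathrm{TPC}\}: condition under which item i was administered (assumed notation)
\begin{align}
P\left(X_{pi} = 1 \mid \theta_{p,c(i)}\right)
  &= \frac{\exp\left(\theta_{p,c(i)} - b_i\right)}{1 + \exp\left(\theta_{p,c(i)} - b_i\right)}, \\
\ln T_{pi}
  &\sim \mathcal{N}\left(\beta_i - \tau_{p,c(i)},\; \alpha_i^{-2}\right), \\
\Delta\theta_p
  &= \theta_{p,\mathrm{TPC}} - \theta_{p,\mathrm{SPC}}, \qquad
\Delta\tau_p = \tau_{p,\mathrm{TPC}} - \tau_{p,\mathrm{SPC}}.
\end{align}
```

Here $X_{pi}$ and $T_{pi}$ are the response and response time of person $p$ on item $i$, $b_i$ is the item difficulty, $\beta_i$ the time intensity, and $\alpha_i$ the time discrimination. With the difference taken as TPC minus SPC, a positive $\Delta\tau_p$ indicates faster work under time pressure and a negative $\Delta\theta_p$ indicates lower effective ability.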

Second-Level Model

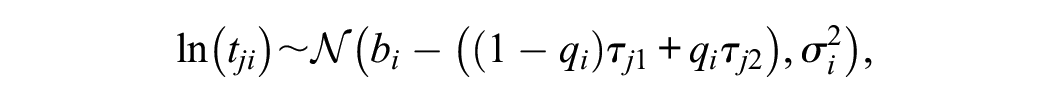

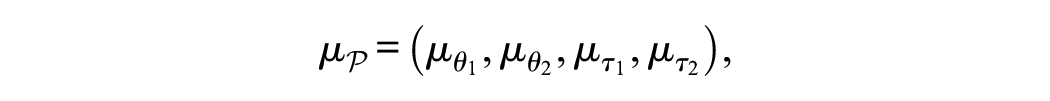

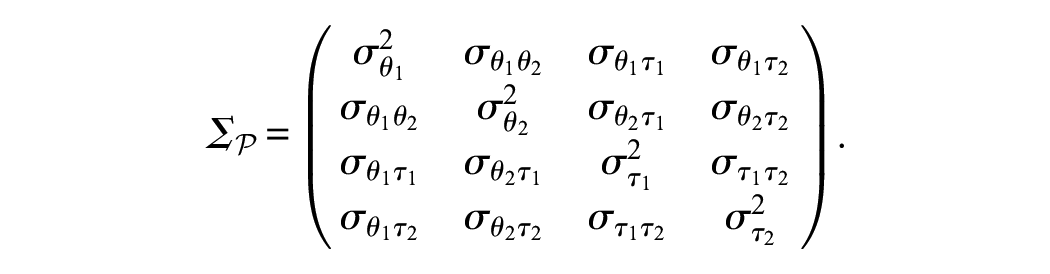

On the second level, we specify the joint distribution of the model parameters. Person parameters are assumed to follow a multivariate normal distribution with mean vector:

and variance-covariance matrix

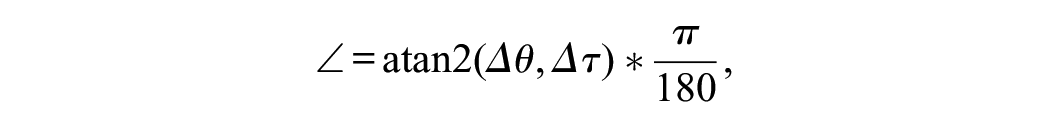

The sampling distribution of the model can be written as

where
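A compact way to read the second level is sketched below. The ordering of the four person parameters and the symbols $\boldsymbol{\mu}$ and $\boldsymbol{\Sigma}$ are assumptions of this sketch; any identification constraints used in the original model are not shown.

```latex
% Assumed ordering of person parameters: ability (SPC, TPC), then speed (SPC, TPC)
\begin{equation}
\left(\theta_{p,\mathrm{SPC}},\ \theta_{p,\mathrm{TPC}},\ \tau_{p,\mathrm{SPC}},\ \tau_{p,\mathrm{TPC}}\right)^{\top}
\sim \mathcal{N}_4\left(\boldsymbol{\mu},\ \boldsymbol{\Sigma}\right)
\end{equation}
```

In this reading, $\boldsymbol{\mu}$ collects the four condition-specific means and $\boldsymbol{\Sigma}$ is a symmetric $4 \times 4$ variance-covariance matrix whose off-diagonal elements carry the correlations between ability and speed within and across conditions. Because item parameters were fixed to precalibrated values, only the person-level distribution is estimated.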

Parameter Estimation

Data preparation, data analysis, and model evaluation were carried out using the programming language Julia version 1.9.3 (Bezanson et al., 2017). The model was fitted separately to each of the cognitive tests. Bayesian parameter estimation for the proposed model was conducted using Stan version 2.31 (Carpenter et al., 2017) and its command-line interface CmdStan. To sample from the posterior, Stan utilizes an adaptive form of the Hamiltonian Monte Carlo (HMC; Neal, 2011) algorithm, the No-U-Turn Sampler (Hoffman & Gelman, 2014). Priors used in the analysis are given in the Supplementary Materials. We ran four Markov chain Monte Carlo (MCMC) chains, each consisting of 12,000 iterations, of which the first 8,000 were discarded as burn-in. Model parameters were summarized using expected a posteriori (EAP) estimates, accompanied by 90% highest posterior density (HPD) intervals. The Stan code used for model specification can be found in the Supplementary Materials.
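To illustrate the summaries mentioned above, the following sketch computes an EAP estimate and a 90% HPD interval from a vector of posterior draws. It is not the code used in the study (which relied on Julia and Stan); the function names and the simulated draws are hypothetical, and the HPD is computed with the common shortest-interval rule for a unimodal posterior.

```python
import math
import random
import statistics


def eap(draws):
    """Expected a posteriori estimate: the mean of the posterior draws."""
    return statistics.fmean(draws)


def hpd_interval(draws, mass=0.90):
    """Shortest interval that contains a share `mass` of the draws."""
    s = sorted(draws)
    n = len(s)
    k = max(1, min(n, math.ceil(mass * n - 1e-9)))  # number of draws inside the interval
    lo, hi = s[0], s[k - 1]
    for i in range(1, n - k + 1):
        if s[i + k - 1] - s[i] < hi - lo:
            lo, hi = s[i], s[i + k - 1]
    return lo, hi


# Sampler settings from the text: 4 chains, 12,000 iterations, 8,000 discarded.
chains, iterations, burn_in = 4, 12_000, 8_000
retained = chains * (iterations - burn_in)

# Simulated stand-in for the posterior draws of one parameter (hypothetical values).
random.seed(1)
draws = [random.gauss(0.5, 0.2) for _ in range(retained)]
estimate = eap(draws)
lower, upper = hpd_interval(draws)
print(retained, round(estimate, 2), round(lower, 2), round(upper, 2))
```

With the settings reported in the text, each parameter is summarized from 16,000 retained draws pooled over the four chains.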

In addition, we wish to emphasize that the latent change scores

Convergence and Model Fit

To ensure the robustness of our model parameters, we assessed convergence using the

For model fit evaluation, we utilized both graphical and numerical posterior predictive model checks (PPMCs; Gelman et al., 2013), flagging posterior predictive p-values (PPP-values) outside the .025 to .975 range as indicative of potential model-data misfit (Sinharay et al., 2006). We also applied Bayesian residual analysis for the lognormal response time model, focusing on the uniform distribution of PPP-values and the alignment of item curves with the identity line as indicators of fit (van der Linden & Guo, 2008). Using Yen’s Q3 statistic, we assessed whether the assumptions of local independence and unidimensionality hold in the data. Detailed descriptions of these methods and additional analyses are available in the Supplementary Materials.
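Two of the checks named above can be sketched in a few lines. The sketch assumes point estimates of ability and fixed item difficulties; the function names and the toy data are hypothetical, and the exact procedures used in the study are documented in the Supplementary Materials rather than here.

```python
import math


def rasch_prob(theta, b):
    """Probability of a correct response under the Rasch model."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (c - my) for a, c in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((c - my) ** 2 for c in y)
    return sxy / math.sqrt(sxx * syy)


def q3(resp_i, resp_j, thetas, b_i, b_j):
    """Yen's Q3: correlation of Rasch residuals of two items across persons."""
    res_i = [x - rasch_prob(t, b_i) for x, t in zip(resp_i, thetas)]
    res_j = [x - rasch_prob(t, b_j) for x, t in zip(resp_j, thetas)]
    return pearson(res_i, res_j)


def flag_ppp(ppp, lower=0.025, upper=0.975):
    """Flag a posterior predictive p-value outside the .025 to .975 range."""
    return ppp < lower or ppp > upper


# Hypothetical ability estimates and responses of four persons on two items.
thetas = [-1.0, 0.0, 1.0, 2.0]
item_a = [0, 1, 1, 1]
item_b = [0, 0, 1, 1]
q3_ab = q3(item_a, item_b, thetas, b_i=0.0, b_j=0.5)
print(round(q3_ab, 3), flag_ppp(0.01), flag_ppp(0.50))
```

Large absolute Q3 values for an item pair suggest that the two items share variance beyond what the latent ability explains.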

Manipulation Check

We evaluated whether the instructions had the desired impact on test takers’ speed and ability, that is, whether speed increased and ability decreased from SPC to TPC, at both the group and the individual level. On the group level, we evaluated whether on average the difference in effective speed between TPC and SPC

For investigating the impact of the instruction on speed at the individual level, we calculated the proportion of participants who exhibited a significant increase in effective speed from SPC to TPC. An individual change score was considered significant if the EAP estimate of latent speed changes

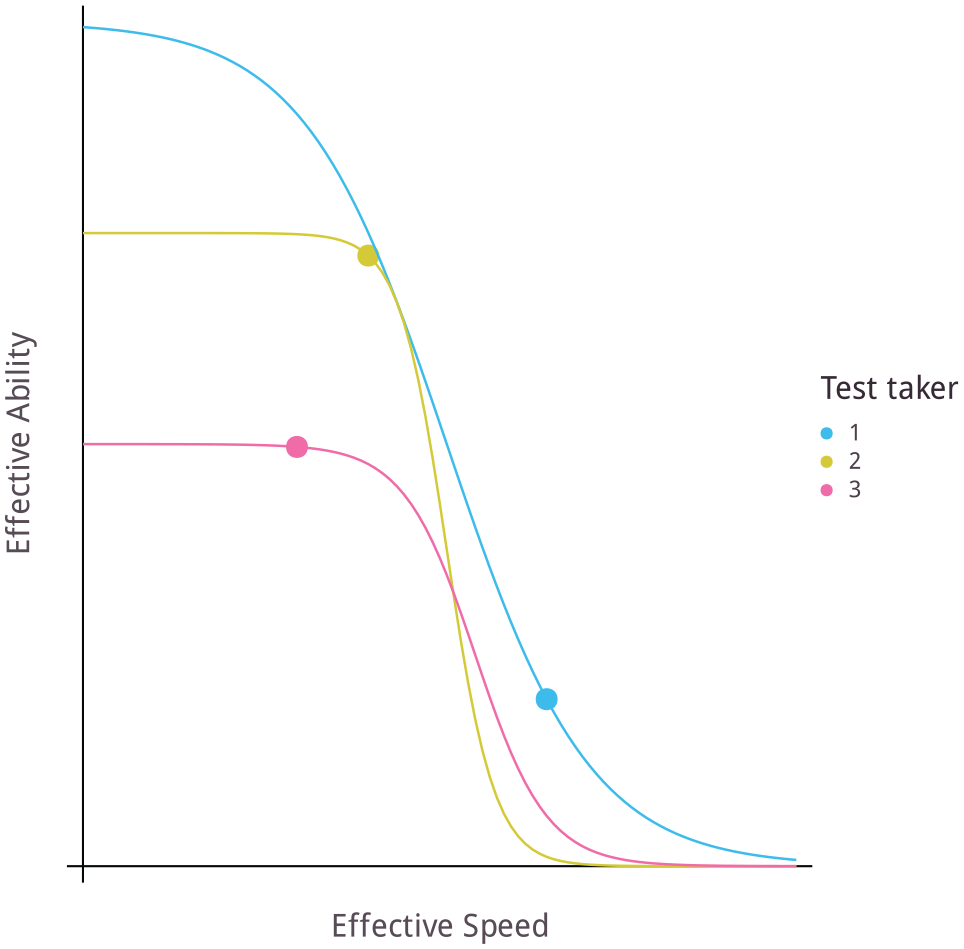

Investigating the SAT

To depict the different intraindividual relations of ability and speed, we reported the number of test takers within each of the four possible patterns of change in ability and speed across conditions: (i) increasing speed and decreasing ability; (ii) increasing both speed and ability; (iii) decreasing speed and increasing ability; and (iv) decreasing both speed and ability. Note that Patterns (i) and (ii) align with the manipulation, while Patterns (iii) and (iv) indicate that the manipulation did not work. Patterns (i) and (iii) are consistent with the SAT, while Patterns (ii) and (iv) are not. Increase or decrease in person parameters was determined based on the individual’s scores on the latent variables (not considering significance). 5 For each pattern, we reported the percentage of test takers, as well as summary statistics of estimated EAPs of latent changes (mean, standard deviation).
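The four patterns can be expressed as a simple classification of each person's change scores, with changes taken as TPC minus SPC. The sketch below uses hypothetical change scores and a hypothetical function name; it only illustrates the bookkeeping, not the estimation of the latent changes.

```python
from collections import Counter


def classify_change(d_speed: float, d_ability: float) -> str:
    """Assign a pair of change scores (TPC minus SPC) to Pattern i, ii, iii, or iv."""
    if d_speed > 0 and d_ability < 0:
        return "i"    # faster and less able: manipulation worked, SAT-consistent
    if d_speed > 0 and d_ability > 0:
        return "ii"   # faster and more able: manipulation worked, not SAT-consistent
    if d_speed < 0 and d_ability > 0:
        return "iii"  # slower and more able: manipulation failed, SAT-consistent
    if d_speed < 0 and d_ability < 0:
        return "iv"   # slower and less able: manipulation failed, not SAT-consistent
    return "undetermined"  # a change of exactly zero on either dimension


# Hypothetical (change in speed, change in ability) pairs for five test takers.
changes = [(0.8, -0.4), (1.1, -0.9), (0.6, 0.2), (-0.1, 0.3), (-0.2, -0.1)]
counts = Counter(classify_change(ds, da) for ds, da in changes)
percentages = {pattern: 100 * n / len(changes) for pattern, n in counts.items()}

sat_consistent = counts["i"] + counts["iii"]
manipulation_worked = counts["i"] + counts["ii"]
print(dict(counts), percentages, sat_consistent, manipulation_worked)
```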

We further investigated the SAT by describing the simultaneous change in ability and speed across conditions with two-dimensional change vectors, with ability depicted on the y-axis and speed on the x-axis. The vectors are constructed by connecting the ability and speed scores of both conditions for each test taker. To facilitate a more meaningful comparison between changes in ability and speed, we scaled the change in ability and the change in speed to unit variance. We focused on magnitude

where

On the group level, we evaluated the correlation between the change in ability and the change in speed. A negative correlation would support the existence of the SAT.
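The change-vector description and the group-level correlation can be sketched as follows. The sketch assumes that scaling to unit variance means dividing each change score by its standard deviation across test takers, and that the angle is measured counterclockwise from the speed axis; both are assumptions, as are the function names and the toy change scores.

```python
import math
import statistics


def change_vectors(d_speed, d_ability):
    """Return (magnitude, angle in degrees) per person after unit-variance scaling."""
    sd_speed = statistics.stdev(d_speed)
    sd_ability = statistics.stdev(d_ability)
    vectors = []
    for ds, da in zip(d_speed, d_ability):
        x, y = ds / sd_speed, da / sd_ability  # speed on the x-axis, ability on the y-axis
        vectors.append((math.hypot(x, y), math.degrees(math.atan2(y, x))))
    return vectors


def pearson(x, y):
    """Pearson correlation of two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (c - my) for a, c in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((c - my) ** 2 for c in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical change scores (TPC minus SPC) for four test takers.
d_speed = [0.5, 1.0, 1.5, 2.0]
d_ability = [-0.2, -0.6, -0.5, -1.1]

vectors = change_vectors(d_speed, d_ability)
r_change = pearson(d_speed, d_ability)
for magnitude, angle in vectors:
    print(round(magnitude, 2), round(angle, 1))
print(round(r_change, 2))
```

In this convention, an angle between -90 and 0 degrees corresponds to a gain in speed paired with a loss in ability, and a negative correlation between the two change scores points in the direction of the SAT at the group level.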

Results

In the following, we will present the results of the analyses, first for the ELT and then for the 3DC.

Endless Loops Test

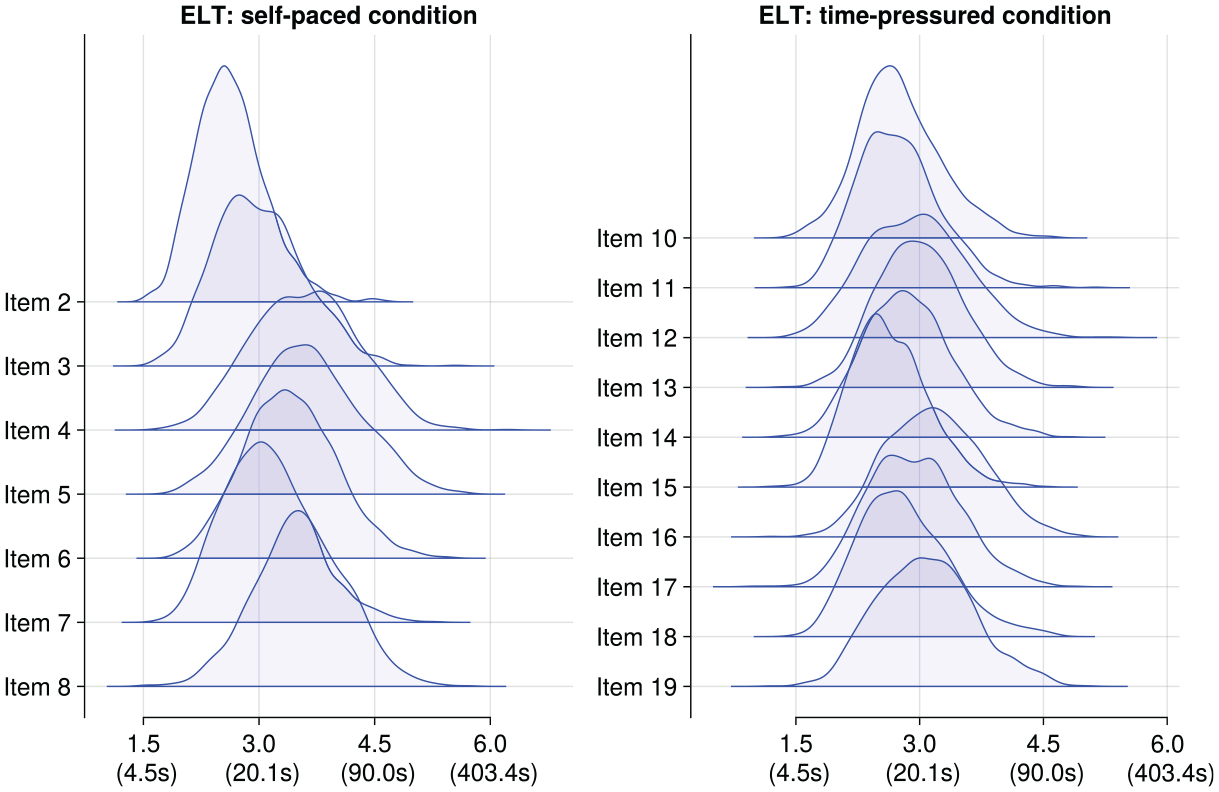

Figure 3 shows the frequency distributions of log-transformed response times for ELT items in both conditions. We observed no signs of multimodality regarding very fast responses, and as such, we did not exclude any fast responses.

Frequency Distributions of Log-Transformed Response Times on ELT Items (Split by Condition)

Convergence and Model Fit

The model estimation successfully converged (

Manipulation Check

On the group level, as expected, mean latent speed increased from SPC

An increase in speed occurred for almost all test takers. Most test takers were significantly influenced by the TPC in terms of latent speed, with

Investigating the Speed–Ability Tradeoff

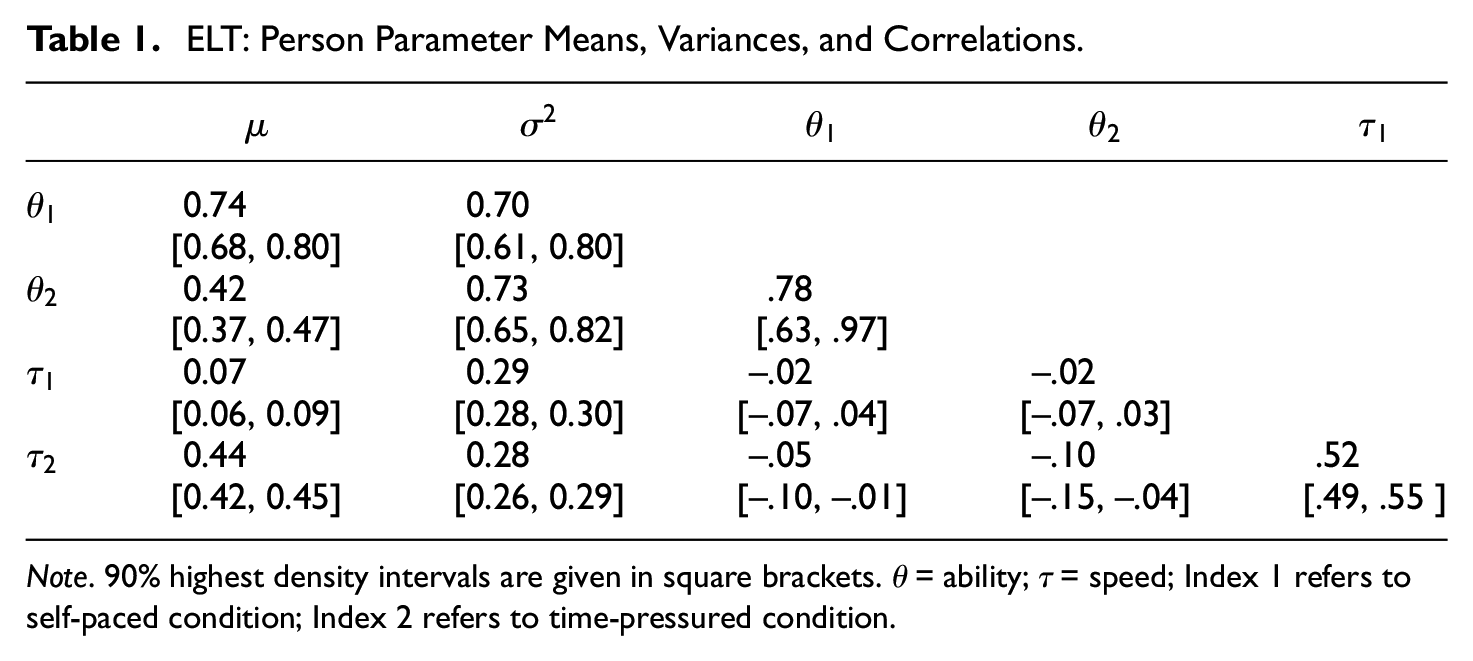

The posterior means of person parameter means, variances, and correlations are presented in Table 1. Notably, there are large correlations between ability levels,

ELT: Person Parameter Means, Variances, and Correlations


As test takers transitioned from SPC to TPC, as expected, their latent ability tended to decrease. Mean latent ability estimates decreased from SPC to TPC with

On the group level, there is a nonsignificant weak relation of intraindividual change in speed and change in ability (

On the individual level, all test takers exhibited a lower ability in the TPC as compared with the SPC. These results suggest that for all but one test taker, speed was higher in the TPC (see “Manipulation Check” section) and, at the same time, ability was lower. This aligns well with what we would expect in the presence of an SAT. Notably,

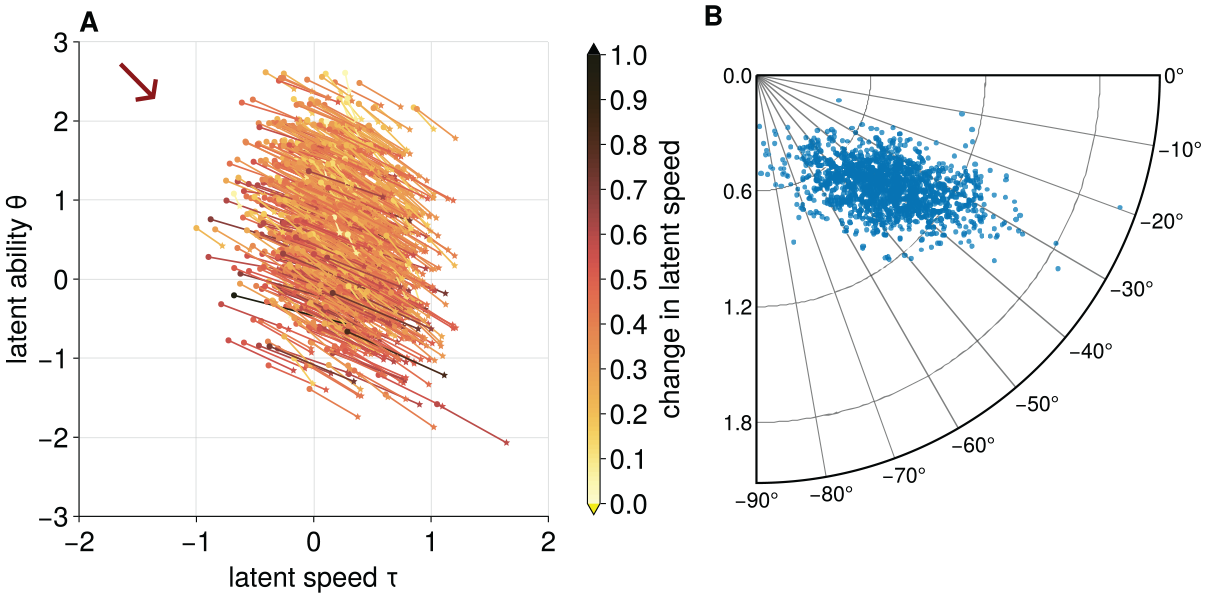

Figure 4 shows simultaneous changes in latent ability and latent speed for each test taker. In Figure 4A, the individual speed and ability levels under each of the two conditions are depicted by two-dimensional change vectors. Figure 4B illustrates the magnitude

ELT: Changes in Latent Ability and Latent Speed Across Conditions on the Individual Level. Panel A: Points Indicate Values in Self-Paced Condition, While Stars Indicate Values in Time-Pressured Condition. The Magnitude of Latent Speed Changes Is Color-Coded, Ranging From Very Small Changes in Yellow (Light) to Very Strong Changes in Black (Dark); Panel B: Polar Plot Showing the Magnitude and Angle of Individual Change Vectors With Unit-Variance Scaled Speed and Ability Changes

Three-Dimensional Cubes Test

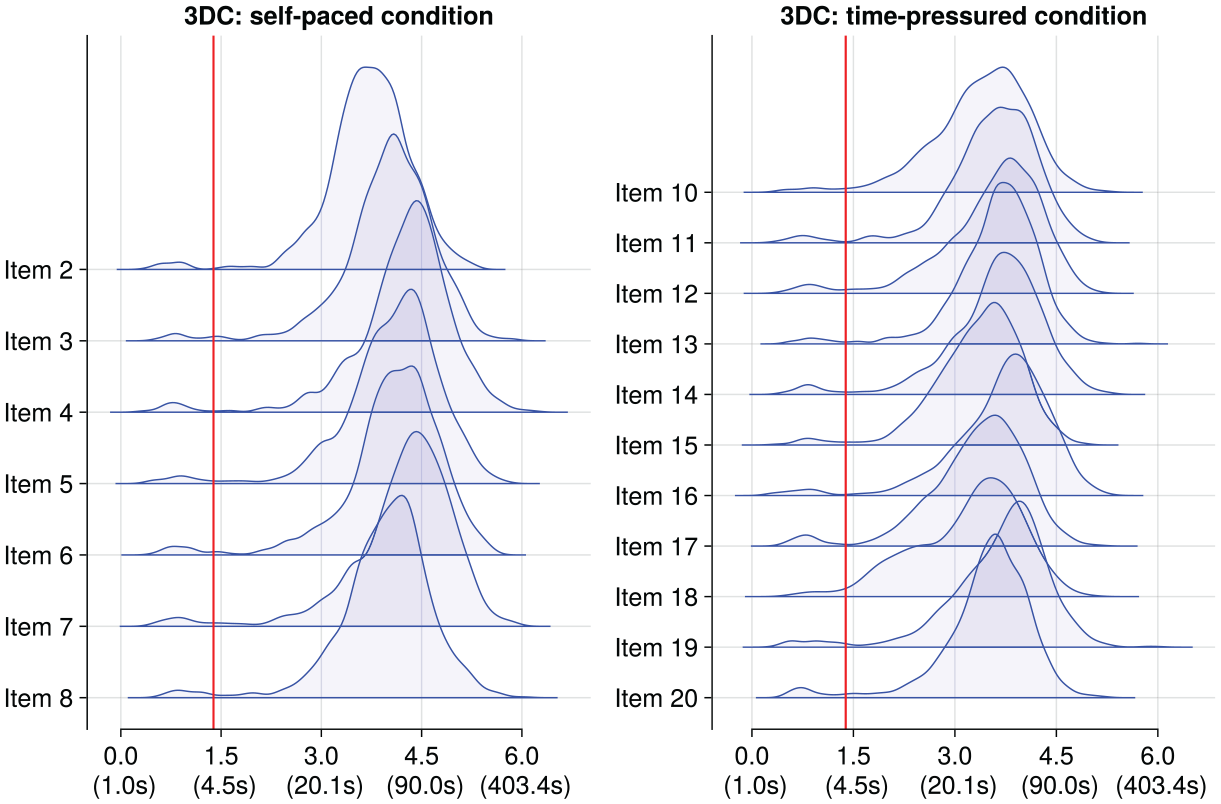

As shown in Figure 5, we identified multimodality in frequency distributions of log-transformed response times on 3DC items in both conditions. In total, this anomaly appeared in

Frequency Distributions of Log-Transformed Response Times on 3DC Items (Split by Condition)

Convergence and Model Fit

The model estimation converged successfully (

Manipulation Check

Mean latent speed estimates increased from SPC

The vast majority of test takers

Investigating the Speed–Ability Tradeoff

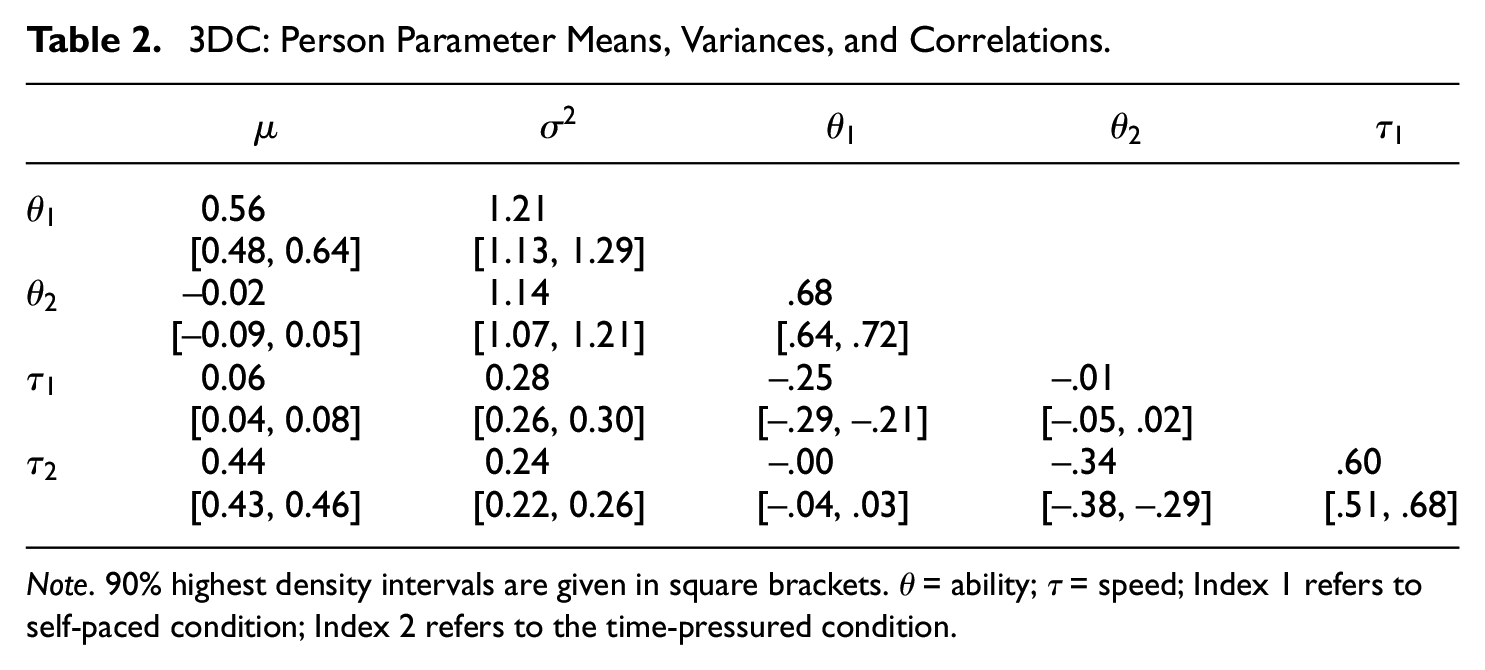

The posterior means of person parameter means, variances, and correlations are presented in Table 2. Large correlations were observed both for ability levels across conditions,

3DC: Person Parameter Means, Variances, and Correlations


Mean latent ability estimates significantly decreased from SPC to TPC by

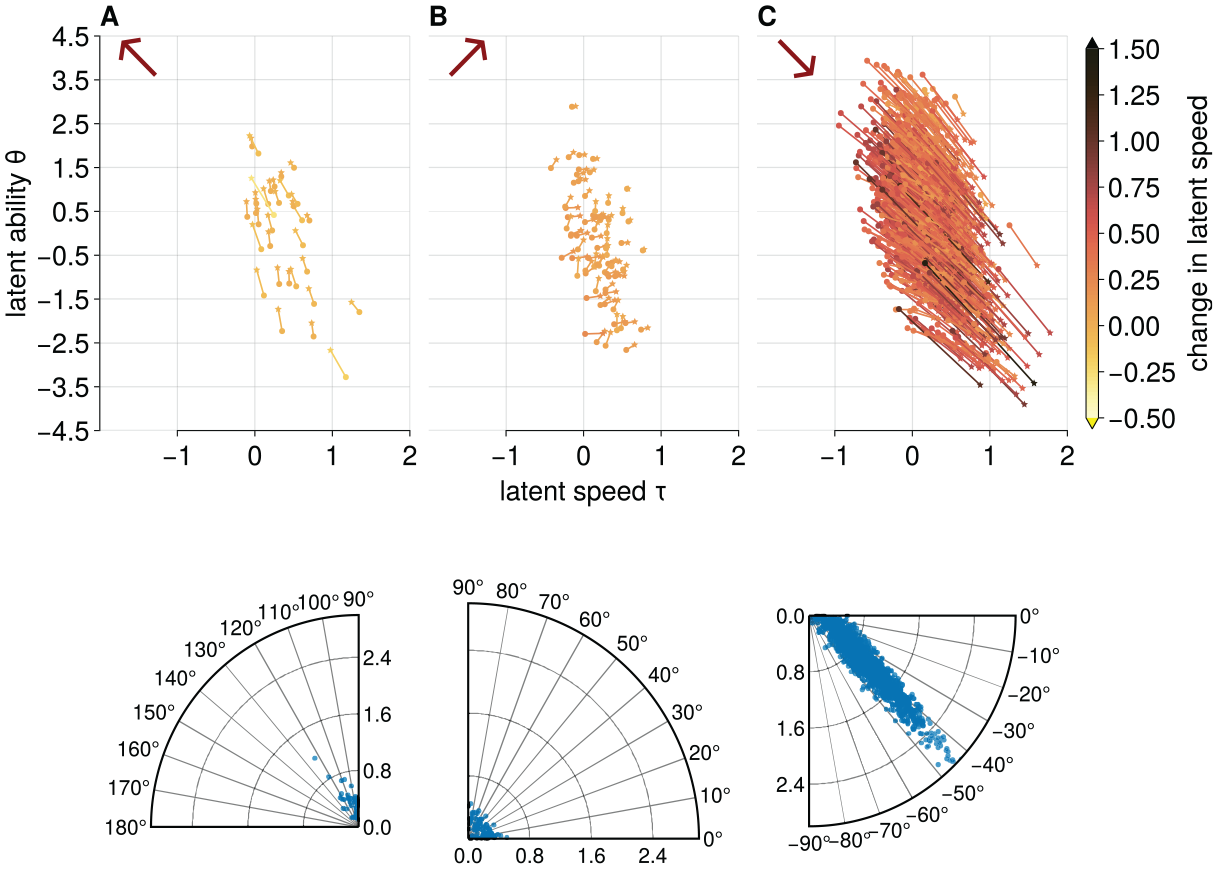

We found three groups of test takers: (a) test takers that behaved as expected, that is, they increased speed and decreased ability in TPC as compared with SPC (

3DC: Changes in Latent Ability and Latent Speed Across Conditions on the Individual Level. Panel A Depicts Those With Increased Ability and Decreased Speed, Panel B Shows Increased Ability and Speed, While Panel C Illustrates Increased Speed With Decreased Ability

According to Panel A in Figure 6,

The

The largest group of test takers (

The majority, that is,

Discussion

In this article, we presented an experimental approach to the investigation of the SAT in psychological assessments and investigated the occurrence of the SAT in two spatial ability tests within a high-stakes setting. The results showed that it is indeed possible to increase working speed by instructions. In the ELT and the 3DC, 99.92% and 98% of the test takers, respectively, increased their speed from the SPC to the TPC. Most of the test takers (99.92% in ELT and 94.48% in 3DC) showed patterns aligning with the existence of an SAT. While the amount of decrease of ability for a given increase in speed was rather homogeneous in the 3DC, it varied considerably in the ELT, indicating similar SAT curves in the 3DC across persons and a heterogeneous SAT in the ELT. While the SAT seemed to be present for most test takers, a small subgroup (5.52%) in the 3DC showed patterns contradicting the SAT; they gained in both speed and ability under time pressure. This outcome may suggest that time pressure has positive motivational effects for some test takers. Aligning with this notion, previous research posited that mild time constraints could enhance performance (i.e., reading comprehension; Walczyk et al., 1999), further indicating that the impact of time pressure on performance varies among test takers.

This research introduces an additional method to the toolbox of approaches for investigating the SAT. As compared with previous approaches, our approach has different strengths and limitations. Unlike approaches such as those by Domingue et al. (2022) or Mutak et al. (2024), which require nonstationarity within test conditions to investigate SAT, our method does not rely on this assumption. By deliberately manipulating speed levels within the test via small instructional changes, our approach ensures changes in speed, facilitating the investigation of the SAT. Our approach also does not depend on external measures of proficiency or on the assumption of uniformity in SAT functions across groups of test takers such as in Ranger et al. (2021). However, compared with previous methods, especially those relying on nonstationarity, our experimental approach is more time-intensive as it involves administering the same test under both speeded and unspeeded conditions. We also note that this approach assumes that items from the same test, whether presented under speeded or unspeeded conditions, are comparable and measured on the same scale. This requires that precalibrated item parameters remain valid across different testing conditions.

Our approach only captures two points on the SAT curve and, as such, limits our understanding of its functional form. Previous studies have modeled nonlinear relations between speed and ability, but these models often relied on specific assumptions, such as similar SAT curves across groups of persons (Ranger et al., 2021), the need for nonstationarity (Domingue et al., 2022), or opposite functional forms between conditions of low and high accuracy (Kang et al., 2022).
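Why two points constrain the functional form so little can be made explicit with a short worked expression. The notation is an assumption introduced here for illustration and is not taken from the article: for test taker p, τ denotes effective speed and θ denotes effective ability, with superscripts marking the self-paced condition and the TPC.

```latex
% Assumed notation: (\tau_p^{\mathrm{self}}, \theta_p^{\mathrm{self}}) and
% (\tau_p^{\mathrm{TPC}}, \theta_p^{\mathrm{TPC}}) are the two observed points
% for test taker p, with \tau_p^{\mathrm{TPC}} \neq \tau_p^{\mathrm{self}}.
% The only quantity the two points identify is the average slope between them:
\[
  s_p = \frac{\theta_p^{\mathrm{TPC}} - \theta_p^{\mathrm{self}}}
             {\tau_p^{\mathrm{TPC}} - \tau_p^{\mathrm{self}}}
\]
% A linear SAT function through both points is one admissible candidate:
\[
  f_p(\tau) = \theta_p^{\mathrm{self}} + s_p \,\bigl(\tau - \tau_p^{\mathrm{self}}\bigr)
\]
% Adding any term that vanishes at both observed speeds yields another
% candidate that fits the two points equally well, for every value of c:
\[
  g_p(\tau) = f_p(\tau)
            + c \,\bigl(\tau - \tau_p^{\mathrm{self}}\bigr)\bigl(\tau - \tau_p^{\mathrm{TPC}}\bigr)
\]
```

Under these assumptions, a negative slope is what an SAT-consistent pattern looks like, whereas the curvature term c cannot be estimated without at least a third speed condition per person.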

In our study, we implemented a within-person design and manipulated the instructions for the cognitive test to explore individual differences in the SAT. As with all previous approaches, the results on the intraindividual relationship may be affected by confounding variables, such as ordering effects (Hambleton & Traub, 1974), position effects (Debeer & Janssen, 2013; Kanopka & Domingue, 2022; Weirich et al., 2014), learning, concentration, or fatigue effects (Ranger et al., 2021). While the fixed sequence of tests and conditions was necessary to ensure fairness in this high-stakes selection setting, it limited our ability to control for potential confounders. For instance, variations in concentration or fatigue throughout the study, compounded by the order of the instructional conditions, could systematically affect both ability and speed, potentially biasing the results. Consequently, a decrease in ability might result from an increase in speed, but it could also stem from reduced concentration. However, it is important to note that the administration time for the two spatial ability tests within the high-stakes assessment of our study was relatively short: The 3DC took an average of 17 min, compared with a mean of 8.5 min for the ELT (excluding test instructions and practice items).

Some variables may pose fewer problems in our study compared with previous research. Given the high-stakes nature of our testing environment, it is reasonable to assume that all participants maintained high motivation throughout the assessment, thereby reducing the likelihood of motivation as a confounding factor. This may differ in low-stakes assessments. Furthermore, our experimental design required all test takers to increase their speed, thus partially controlling for person-level confounders. Nevertheless, the chosen speed within each condition could still be influenced by individual-level confounders.

In an ideal research setup, potential confounding variables could be more effectively controlled. This would allow for a clearer interpretation of how different instructions influence cognitive performance across various settings. Future research may aim to assess such possible confounders and control for them. In fact, a preregistered study is currently investigating potential confounding effects, such as motivation and concentration, on the intraindividual relationship between speed and ability (Much et al., 2023).

In our study, we focused on a specific application, that is, assessing spatial abilities in young, primarily male trainee ATCO applicants in Austria. While this provides detailed insights into this context and adds to the knowledge on the SAT in cognitive testing, it may not reflect the complexities in other cognitive areas or among different populations. Research has shown that age differences in speed, ability, and also their trade-offs exist for mental rotation tasks (Berg et al., 1982; Debelak et al., 2014; Hertzog et al., 1993; Linn & Petersen, 1985; Voyer et al., 1995; Zhao et al., 2019). Domingue et al. (2022) found both positive and negative intraindividual relationships between accuracy and response time in cognitive tasks and highlighted the importance of task design. As such, our study can be seen as adding another piece to the previous results.

While the majority of previous research investigated the SAT in low-stakes assessments, this study provides results from high-stakes assessments. In line with results from low-stakes settings, most participants displayed a decrease in ability with an increase in speed, aligning with traditional SAT expectations. Notably, the high-stakes nature of our tests may have influenced participants to adhere more strictly to the testing conditions, possibly due to the increased pressure and significant consequences associated with their performance outcomes. How well the proposed approach for investigating the SAT would work in low-stakes assessments also depends on how closely test takers adhere to the instructions. Previous research (Hertzog et al., 1993; Lerche & Voss, 2017; Nietfeld & Bosma, 2003) has shown positive results. As such, we are optimistic that this approach would also be applicable to low-stakes assessments. This, however, needs to be further investigated in future research.

In future studies, it would be beneficial to directly compare our method applied to a high-stakes environment with results obtained from psychological assessments conducted in low-stakes settings using the same procedural framework. This comparative analysis would help delineate how test stakes influence the manifestation of the SAT and could reveal important variations in cognitive performance dynamics under different settings. Such studies would not only validate the robustness of our method across different testing conditions but also enhance our understanding of the contextual factors that affect test outcomes in psychological assessments.

Another avenue for future research involves exploring which timing conditions are most appropriate for specific diagnostic questions. Findings from Goldhammer et al. (2024) indicate that timed conditions might provide more diagnostic value by revealing how well individuals perform under pressure. For instance, a student’s ability to recognize words rapidly under timed conditions could give more insight into their reading efficiency, particularly with unfamiliar words. This suggests that the choice of a speed level may heavily impact the interpretation of ability scores.

These broader findings indicate that our study, while shedding light on the SAT in spatial ability, is part of a larger, more complex picture. Applying our methodology across various psychological tests, methodological approaches, or populations may add to the generalizability of the results or identify boundary conditions for our claims. That said, our research underscores that the SAT exists in tests and emphasizes the need to account for the SAT in assessments. If we do not consider the SAT appropriately, we might misinterpret how people perform on cognitive tasks (Lohman, 1989; Pohl et al., 2021; van der Linden, 2009).

Supplemental Material

Supplemental material, sj-pdf-1-epm-10.1177_00131644241271309 for Assessing the Speed–Accuracy Tradeoff in Psychological Testing Using Experimental Manipulations by Tobias Alfers, Georg Gittler, Esther Ulitzsch and Steffi Pohl in Educational and Psychological Measurement

Footnotes

Acknowledgements

The authors would like to thank the High-Performance Computing Service of Freie Universität Berlin for computing time (Bennett 2020).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the German Research Foundation (Deutsche Forschungsgemeinschaft DFG) under Grant PO 1655/4-1. Open Access Funding provided by Freie Universität Berlin.

Supplemental Material

Supplemental material for this article is available online.
