Abstract

Algorithms are increasingly being adopted in healthcare settings, promising increased safety, productivity and efficiency. The growing sociological literature on algorithms in healthcare shares an assumption that algorithms are introduced to ‘support’ decisions within an interactive order that is predominantly human-oriented. This article presents a different argument, calling attention to the manner in which organisations can end up introducing a non-negotiable disjuncture between human-initiated care work and work that supports algorithms, which the authors call algorithmic work. Drawing on an ethnographic study, the authors describe how two hospitals in England implemented an Acute Kidney Injury (AKI) algorithm and analyse ‘interruptions’ to the algorithm’s expected performance. When the coordination of algorithmic work occludes care work, the study finds a ‘dismembered’ organisation that is algorithmically oriented rather than human-oriented. In the discussion, the authors examine the consequences of coordinating human and non-human work in each hospital and conclude by urging sociologists of organisation to attend to the importance of the formal in algorithmic work. As the use of algorithms becomes widespread, the analysis provides insight into how organisations outside of healthcare can also end up severing tasks from human experience when algorithmic automation is introduced.

Introduction

Civilization advances by extending the number of important operations which we can perform without thinking about them. (Alfred North Whitehead, 1911)

Algorithms are increasingly being used to improve organisational processes that would otherwise be carried out by humans (Eason, 2014). There has been widespread speculation about whether algorithms will entirely replace workers or whether humans will work alongside algorithms, with varying degrees of influence over the means and ends of algorithms and their output (Brynjolfsson and McAfee, 2014; Ford, 2015; Spencer, 2018). The introduction of algorithms into work has thus reinvigorated a long-standing sociological interest in the limits of human agency (e.g. Brummans, 2017; Orlikowski, 1992). This has drawn the attention of a wide range of social scientists, who conceptualise algorithms in different ways, depending significantly on their disciplinary assumptions about the relationship between humans and technology (e.g. Amoore and Piotukh, 2015; Seyfert and Roberge, 2016). In spite of these differences, there is consensus in this literature that algorithms are not humans; yet there remains a significant lack of consensus about how their introduction into organisations will affect the future of human work and employment.

Our focus in this article is upon the organisation of the digital, because organisation is key to understanding how the digital is remaking work and employment. We examine how the use of algorithms, specifically in healthcare organisations, is affecting the capacity of humans to make decisions about patient care. However, the problems we examine relating to the work and employment of humans alongside algorithms are not limited to our empirical field; they are being experienced much more widely, across many different sectors and states. Our empirical focus is upon the implementation of an algorithm for detecting Acute Kidney Injury (AKI) in two hospitals in England.

In 2015, a mandatory electronic alert was introduced in all acute hospitals in the National Health Service (NHS) in England, based upon an algorithm that automates the identification of AKI by detecting a change in the level of serum creatinine, a by-product of normal muscle metabolism. AKI made mainstream news in 2016 as the subject of a partnership between the Google-owned artificial intelligence firm DeepMind and the Royal Free NHS Trust in London. In 2017 the Royal Free was found to have breached UK data protection law in sharing the health records of around 1.6 million patients (Iacobucci, 2017). These events raise important issues about power, privacy and responsibility in relation to such partnerships (Powles and Hodson, 2017), while also articulating a clear link between technology and market-making in public organisations (Bailey et al., 2019).

We are similarly concerned here with the relationship between responsibility and organisation in the context of technological innovation. The study from which the current article is drawn was a three-year ethnographic study of the implementation of the mandated AKI algorithm in two hospitals. Our research focused on how the two hospitals made the algorithm work in practice. Our broad focus is therefore upon organising the digital and, within this, upon what we have termed algorithmic work: the work that people do to support the functioning of the algorithm. As sociologists of organisation, we are interested in the convergence point between algorithmic work and other kinds of work, because different kinds of work require different coordination; yet coordination has almost exclusively been premised on the assumption that technology is an extension of human capacities, not that technology can have an independent reality of its own that might even be inaccessible to humans (Lenglet, 2019). There is a growing recognition among social scientists of the challenges to governance and regulation posed by algorithms, manifested in their capacity for secrecy, opacity and inscrutability (Pasquale, 2015). Empirical studies of financial algorithms have noted the capacity of algorithms to generate ‘cognition beyond conscious thought’ (Beverungen and Lange, 2018: 10), but no studies to date have examined the possible implications of this for healthcare organisations. To be clear, our starting concern here is neither the replacement of human healthcare workers by technology nor whether humans have agency over technology as some universal ontological principle. Rather, we want to examine the ‘material embeddedness’ (Pinch, 2009) of algorithms in formal healthcare organisations, to understand how algorithmic work is being coordinated and what this coordination tells us about organisation.

In medical sociology, materialist approaches to understanding technology often begin with the work of Marc Berg (1997) and conceptualise algorithms as ‘computer assisted decision support’ technologies (e.g. Peiris et al., 2011). The emphasis on textual practices and bodies stemming from this line of research has more recently been developed to explore the meaning-making practices of algorithmic data users, analysing how these users make sense of algorithmic process and output (e.g. Maiers, 2017). Both kinds of analyses involve a coupling of the algorithmic world with the social world of medical practices, and proceed according to the negotiations and compromises involved in making this coupling ‘work’, for example, through users managing the risks associated with automating the complex and contingent task of emergency call handling (Turnbull et al., 2017). This approach to the study of algorithms provides a measured counterbalance to unrestrained, technologically determinist and sensationalist claims about the total erasure of human employment (e.g. Frey and Osborne, 2015). However, a growing body of research in healthcare has fixed, at a theoretical level, the ontological priority of the humanist domain of interpretation and action over the technological domain of algorithms, thus losing touch with one of Berg’s (1997) most important early concerns: the co-constitution of practices and tools.

We thus return to the work of Berg (1997) and others, who have called attention to the specificities of technologies-in-practice, and the mutually constitutive role of technologies and users in the transformation of work practices (e.g. Pope and Turnbull, 2017; Ruppert et al., 2013; Swinglehurst, 2014). This helps us understand technological artefacts ethnographically and elucidate differences in the approach of the two hospitals we study, alongside the consequences that ensue. In line with science and technology studies in healthcare, we view algorithms as performative, and follow the algorithm through the interactions of people and machines within each hospital. However, unlike these studies, our attention is not fixed on opening the algorithmic ‘black box’ (e.g. Johnson, 2007; Rystedt et al., 2011). Rather, we attend to the manner in which the algorithm and its output become embedded in, and are ultimately disruptive for, field-level dynamics (cf. Neyland, 2014). These dynamics are illuminated by the different approach that each hospital took to implementing the algorithm. Different ways of coupling algorithmic work with other kinds of work have different consequences for how healthcare systems will develop once the use of algorithms becomes more prevalent.

Through our data we show how algorithms introduce temporal disruptions into care work. This problematises the coordination of algorithmic work, and by extension, the authority and responsibilities of healthcare professionals. We draw upon work on algorithms in social studies of finance to explain how the reconstruction of time produced by algorithms can end up severing tasks from human experience, constituting what Lenglet (2013: 313) describes as a ‘dismembered’ organisational state that is ‘maximally cleared of human components’. Our analysis therefore poses problems for the understanding of formal organisation as an ethical domain in which the situated judgement of self-responsible humans is the basis of authority and legal personhood (du Gay and Vikkelsø, 2016). We conclude by examining the implications of these problems for the regulation of algorithms and draw lessons for sociologists of organisation in this and other contexts.

Algorithms, medicine and work

The humanist preoccupations that we find in medical sociological accounts of technology are perhaps indicative of the qualitative complexity of healthcare vis-à-vis knowledge and uncertainty (Moreira, 2007). Illness states are complex, variable, unpredictable and highly subjective. Medical knowledge is therefore not only highly specialised, but also necessarily uncertain and adaptive. It should come as little surprise, then, that two medical practitioners presented with the same description of symptoms might reach divergent decisions. However, such variation, in what might be literally ‘life and death’ circumstances, presents problems at the individual, organisational and political levels. It is this variation that has given rise to the perceived need for standardisation in medical practices, and yet the complexity underlying the variation has made standardisation extremely difficult to achieve in practice (Berg, 1997). In spite of this history, a so-called ‘techno-optimism’ dominates accounts of technology in healthcare, often uniting otherwise divergent ontological positions (Greenhalgh et al., 2009). Materialist approaches have gone some way towards providing a range of alternative perspectives, from which two guiding principles for the examination of algorithms in healthcare can be derived: firstly, algorithms are inscribed with standards which shape the possible practices into which they can be enrolled; secondly, the mutually adaptive process of translation in which algorithms and humans are enrolled gives rise to unintended consequences (Berg, 1997; Berg and Timmermans, 2000).

The approach to working through the introduction of a technology then proceeds according to a set of questions concerning the practices and processes which develop from this situated and adaptive human/non-human work, and the consequences that ensue:

The relevant questions to be asked of IT [information technology] in work practices are

This guides a micro-sociological approach to understanding the kinds of work required in order to situate technology in practice; two forms of this work that we draw upon are ‘articulation’ (Star, 1991; Star and Strauss, 1999; Strauss et al., 1985) and ‘contextualisation’, which we derive from the translation required to situate a ‘boundary object’ in a new site (Star and Griesemer, 1989). Both these kinds of work proceed from the assumption that the organisation of any kind of work requires ‘infrastructure’, or ‘something that other things “run on”’ (Lampland and Star, 2009: 17). Infrastructure in this sense is to be understood as a relational concept, an embedded feature of human organisation ‘made real’ through organising practices (Star and Ruhleder, 1996). To make real means to situate and make practical within a particular context. Particularity implies difference, thus ‘one person’s infrastructure is another’s brick wall’ (Lampland and Star, 2009: 17). Both articulation and contextualisation describe forms of work made necessary in making infrastructure work, in order for the situation at hand to ‘run’.

Articulation ‘consists of all the tasks needed to coordinate a particular task’ (Gerson and Star, 1986: 258). Within this, we focus in particular on the ‘work that gets things back “on track” in the face of the unexpected, and modifies action to accommodate unanticipated contingencies’ (Star, 1991: 275). Articulation is essential to the collective organisation of technology in practice as it ‘manages the consequences of the distributed nature of the work’ (Star and Strauss, 1999: 10), yet, in contrast to ‘production work’ (Strauss et al., 1985), it is invisible to rationalised models of work and organisation (Berg, 1997; Star and Strauss, 1999). In contemporary studies of technology in healthcare, articulation is described as situated actions which can ‘bridge the gap between the formal and informal, the social and the technical’ (Greenhalgh et al., 2009: 756).

Contextualisation can be seen as a form of articulation work, but where we use articulation to describe the situated interaction of user and technology, we use contextualisation to draw attention to the collective, multi-site coordination of the algorithm. Within our empirical case, this distinction helps us articulate the different kinds of work required to coordinate the different ‘phases’ of the algorithm-in-use (detection, communication and response). There is a common assumption in healthcare research and policy that technology will produce economies of scale by facilitating the instantaneous distribution of information across times and spaces (Greenhalgh et al., 2009, 2017). Critics of this assumption have shown that as technological systems grow they become more complex and more resource intensive, as the information must be re-situated and made practical, or contextualised, in each new site (Ellingsen and Monteiro, 2003b; Hanseth et al., 2006). This connects back to the above distinction of Strauss et al. (1985) between production work and articulation work; economies of scale imply that production can increase with no marginal increase in costs; however, this ignores the ‘invisible’ costs of articulation and contextualisation.

We use these two forms to draw attention to the work of people first interpreting and working upon the output of algorithms (articulation) and then collectively acting upon it (contextualisation). This helps us illuminate an important distinction between the two hospitals we study and how they organise the algorithm. If articulation is conceived as individually mediated activities in which the technical ‘rubber’ hits the human ‘road’, then we conceive contextualisation as being concerned with the collective attempt to coordinate these acts. Both articulation and contextualisation demonstrate the contingency of time and space in any matter of human organisation; that is, things cannot be transported pre-fabricated into an organisational context and expected to just ‘work’; rather they must be made to work, and this work must be coordinated within and across heterogeneous sites, such that organisations can be stabilised, and their activities made visible and accountable.

Crucially, these conceptions of work are located within an order characterised by interpretation, in which technology might be said to present objects to humans, who interpret them in order to reach an appropriate course of action; hence the aforementioned notion of healthcare technology as ‘decision support’ (e.g. Peiris et al., 2011). Foundational concepts in organisational theory such as the self-responsible subject and legal personhood are likewise situated in this same normative order. From this is derived the surveillance, regulation, stability and predictability associated with formal organisation (du Gay and Vikkelsø, 2016; Weber, 1978). It might be argued that algorithms institute a recursive and mechanical principle of organisation, which favours a bureaucratic ideal of predictability (Totaro and Ninno, 2014). However, the analytical leap from a recursive algorithm to a recursive logic of organisation appears to entangle organisational politics and procedures in questionable ways (Neyland, 2014). As has been shown in other domains, algorithmic technology is disrupting times and spaces in a manner which makes problematic the interpretive work of bureaucratic stabilising and making visible (Lenglet, 2019). This puts into question the status of, and relationship between, the different kinds of formal and informal work instituted by algorithms, their coordination, and the different images of organisation with which we are left. It is around these concerns that the present analysis is oriented.

Research context

Our article draws on an ethnographic study of two NHS hospitals in England as they introduced the algorithm to support the identification and management of AKI. AKI is a relatively new classification, and awareness of it among non-specialists is low. Attempts to improve the identification and management of AKI followed a highly critical report (NCEPOD, 2009), which led to the production of guidelines and an international classification (KDIGO, 2012; NICE, 2013).

The algorithm was mandated in 2014, with laboratory systems instructed to implement it from March 2015. The algorithm resulted from a consensus conference in 2012 at which it was agreed that a detection algorithm would be developed based upon a scale of increasing severity from Stage One to Three. The development of the algorithm was undertaken by an interdisciplinary project group organised by the Association for Clinical Biochemistry, which included clinical biochemists, nephrologists and laboratory software systems providers. According to guidance (Think Kidneys, 2014), the algorithm should be implemented as part of a three-phase process:

A detection phase, in which an acute change in serum creatinine produces an AKI warning stage test result via the algorithm;

An alert phase, in which the AKI warning stage test results are communicated to clinicians;

A response phase, in which an appropriate care process is put into action.

AKI is indeterminate, in the sense that it is a process, in dynamic interaction with other processes, and not always clearly identifiable as a discrete ‘episode’. It is also distributed, in the sense that it can present in patients across a very wide range of specialisms and clinical and surgical areas. These two factors create substantial challenges to standardisation (Berg, 1997; Goodwin, 2014).

Methods

The principal study objective was to use ethnographic methods to explore the implementation and spread of quality and safety programmes related to AKI. The research aimed to develop a nuanced understanding of the material accomplishment of ‘quality’ and ‘safety’ across two sites (‘Hospital X’ and ‘Hospital Y’) and attend to differences in the modes by which these programmes operated. The introduction of the algorithm and design of an alert and response system were the principal objectives of each hospital’s improvement programme.

The two hospitals under investigation were both part of the same regional network, and between them provided the majority of specialist renal services across that region. The AKI programmes in each trust began at around the same time and in response to the same perceived problems. In each trust the approach taken to quality improvement (QI) was quite different; however, they both adopted the national algorithm at the same time and had very similar outcome targets.

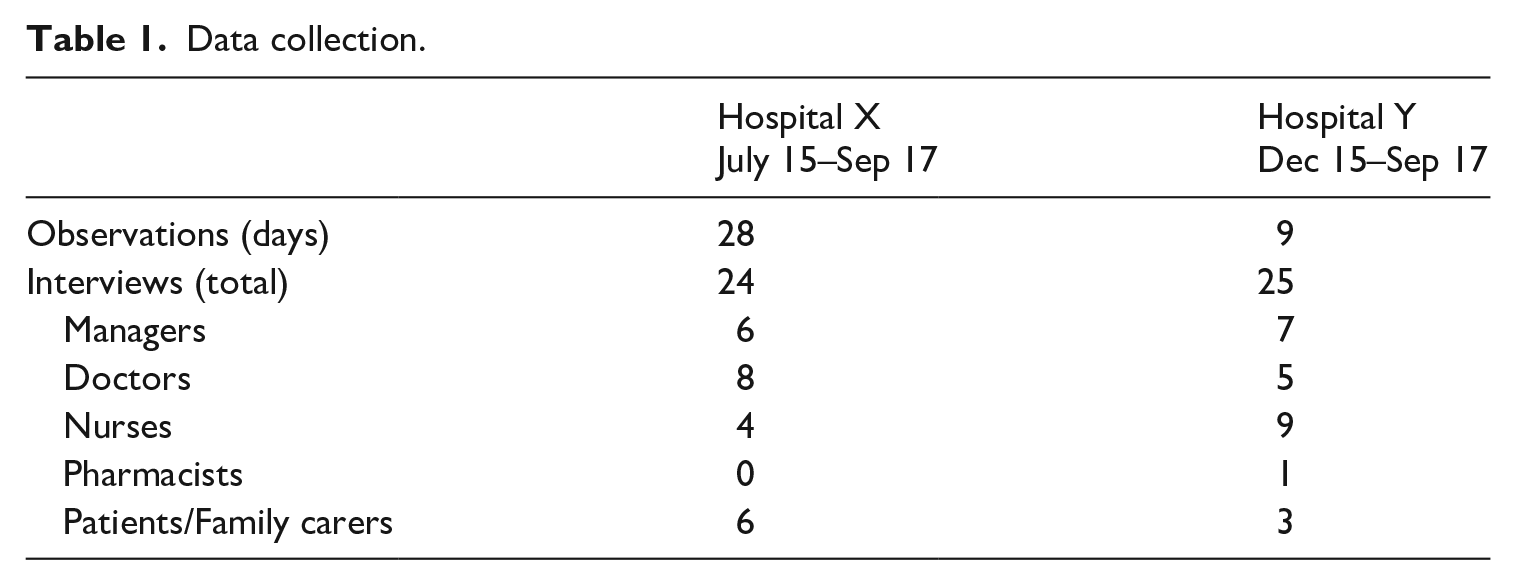

In this article our focus is not upon these outcomes, but upon the process according to which the algorithmic system was introduced and the different work that both the algorithm and its users undertook in each setting (Kitchin, 2016). The focus upon process was not simply a focus upon ‘doings’, but was a method for reorienting our ethnographic focus towards the use of the algorithm and associated practices (Christin, 2017), and the manner in which the actions of both technologies and users were coordinated within these settings. The study was funded as part of the National Institute for Health Research (NIHR) Collaboration for Leadership in Applied Health Research and Care (CLAHRC) programme. CLAHRCs are research networks involving close partnerships between research and practice in the NHS. The hospitals involved in this research were existing partners of the network, and the study scope developed through a series of conversations between the researchers and the quality improvement teams in each trust, initiated in March 2015. The protocol for the Hospital X case was agreed, and internal ethical review was completed in July 2015, at which point the research team started observing programme meetings in this site. A full ethics application, which was required in order to interview patients, was also initiated at this time and received a favourable opinion from the Wales Research Ethics Committee 7 (15/WA/0400, 16 November 2015). Data collection in Hospital Y commenced in December 2015. A summary of observations and interviews in both sites is provided in Table 1.

Data collection.

At the start of data collection Hospital X had just entered the first, ‘initiation’ phase of their improvement programme, and Hospital Y were moving into the second, ‘spread’ phase of theirs. Consequently, there was more emphasis in Hospital X on observations of contemporary events.

Observations in Hospital X comprised attending and participating in events related to the quality improvement programme, including formal group learning and discussion sessions, and more informal ward-based meetings and activities. The programme proceeded according to ‘Plan Do Study Act’ cycles, through which the care processes that are described in more detail in our findings were iteratively and painstakingly developed over a sustained period of time. Although our findings might make these appear to be abstract schemas, they represented little more than temporary ‘settlements’ made between the requirements of national policy and the situated organisational capacity and capability to implement them.

By contrast, in Hospital Y, a pilot improvement programme had already taken place in four wards, and at the time of data collection, two specialist nurses were involved in trying to spread the learning from the programme to the rest of the hospital. Therefore, interviews and conversations with the two nurses and with others who had been involved in the work were the principal sources from which the care process described below was reconstructed. Observations consisted of shadowing the two nurses through their ward rounds and observing their interactions with non-specialist staff.

The two hospitals differed in their approach to introducing the algorithm, which related partly to their different approaches to QI more generally and their differing technological capacity and capability. This is discussed at greater length in the first part of our findings. In brief, Hospital X's technological capacities were described, both locally and nationally, as sophisticated owing to its electronic patient record and, more generally, it was known as an organisation with a positive ‘culture’ of improvement. The approach adopted to improving AKI was collaborative and drew on existing workforce capacity. It mobilised established improvement methodologies and the dedicated resource of an experienced in-house improvement directorate (a rarity in NHS organisations). In contrast, Hospital Y, lacking both the technological infrastructure and the knowledge and experience of improvement, designed their approach around additional workforce through the employment of two specialist AKI nurses, and employed external consultants to help them design a programme of improvement.

Findings

We begin by describing the different processes of care that developed in each site around the introduction of the algorithm. We examine what we refer to as the ‘interruptions’ within each process, which were produced by the inaccuracies of the algorithm. These interruptions necessitated various kinds of work, which had consequences for the formal organisation of care in each setting.

Following the algorithm: Care process mapping

The algorithm was situated within a process of care which began with blood samples being taken by a member of clinical staff then processed in a laboratory. The algorithm detected a change in levels of serum creatinine from baseline data and identified the appropriate stage of AKI according to pre-defined thresholds. The organisational task was then to establish a reliable process for verifying this alert, subsequently communicating it to the relevant parties and establishing a protocol for the appropriate response. Three issues emerged from the detection phase, which we describe as ‘interruptions’ that complicated this task:

The potential for over-diagnosis (the algorithm detects a possible case of AKI where there is none).

The potential for under-diagnosis (the algorithm misses a possible case of AKI).

The reliance of the algorithm upon baseline data.

The first two problems are methodological and technical. They are situated within the prescribed method for identifying cases of AKI according to changes in levels of serum creatinine, a method that becomes embedded in the technical apparatus for automating it: the algorithm itself. The third problem is an organisational and inter-organisational issue; for if the hospital does not have a baseline on record for the patient in question then it must attempt to identify one from the records of other health providers. This might be a lengthy undertaking, unsupported by shared electronic records between providers. We now describe the different processes adopted in each organisation to manage the algorithm and its associated issues.
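To make the detection step and its dependence on baseline data concrete, the following is a minimal sketch, not the national specification. It assumes KDIGO-style ratio bands (current serum creatinine at least 1.5, 2 and 3 times the baseline for Stages One, Two and Three); the function name `aki_stage` and the band values are illustrative assumptions, and any further rules the national algorithm may contain are not modelled.

```python
from typing import Optional

# Assumed KDIGO-style ratio bands (current / baseline creatinine), highest first.
# Illustrative only; the national algorithm's full rules are not reproduced here.
STAGE_BANDS = [(3.0, 3), (2.0, 2), (1.5, 1)]


def aki_stage(current_umol_l: float, baseline_umol_l: Optional[float]) -> Optional[int]:
    """Return a hypothetical AKI warning stage.

    1-3 for an alert, 0 for no alert, or None when no baseline is on record.
    """
    if baseline_umol_l is None:
        # Third interruption: with no baseline the ratio cannot be computed,
        # so the case falls back to human-initiated work (e.g. searching
        # other providers' records).
        return None
    if baseline_umol_l <= 0:
        raise ValueError("baseline creatinine must be positive")
    ratio = current_umol_l / baseline_umol_l
    for threshold, stage in STAGE_BANDS:
        if ratio >= threshold:
            return stage
    return 0


if __name__ == "__main__":
    print(aki_stage(180.0, 80.0))  # ratio 2.25 -> Stage 2
    print(aki_stage(90.0, 80.0))   # ratio 1.125 -> no alert (0)
    print(aki_stage(120.0, None))  # no baseline -> None
```

The fixed bands decide in advance what counts as an alert, so any patient whose clinical state does not match them becomes either a false positive or a missed case, while the `None` branch marks the point at which the algorithm cannot proceed without human work.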

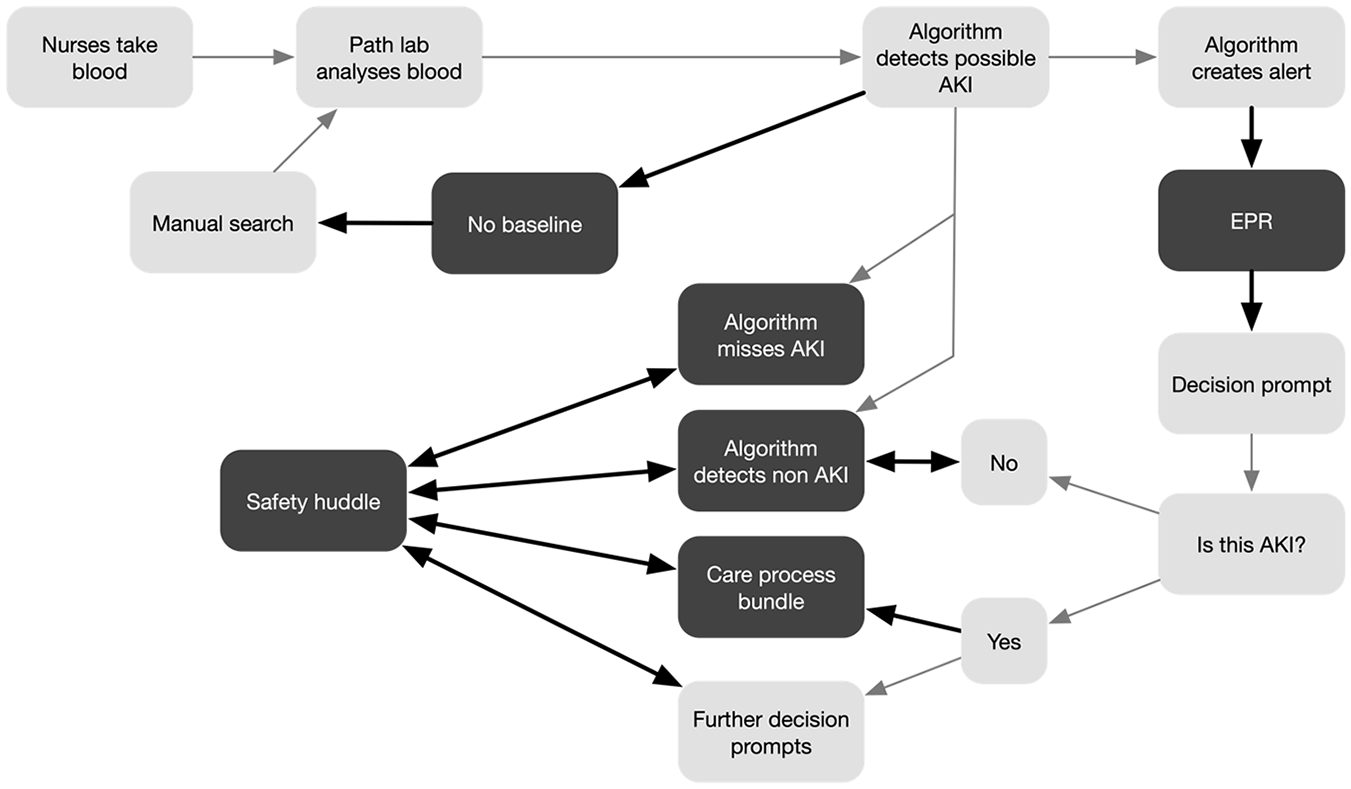

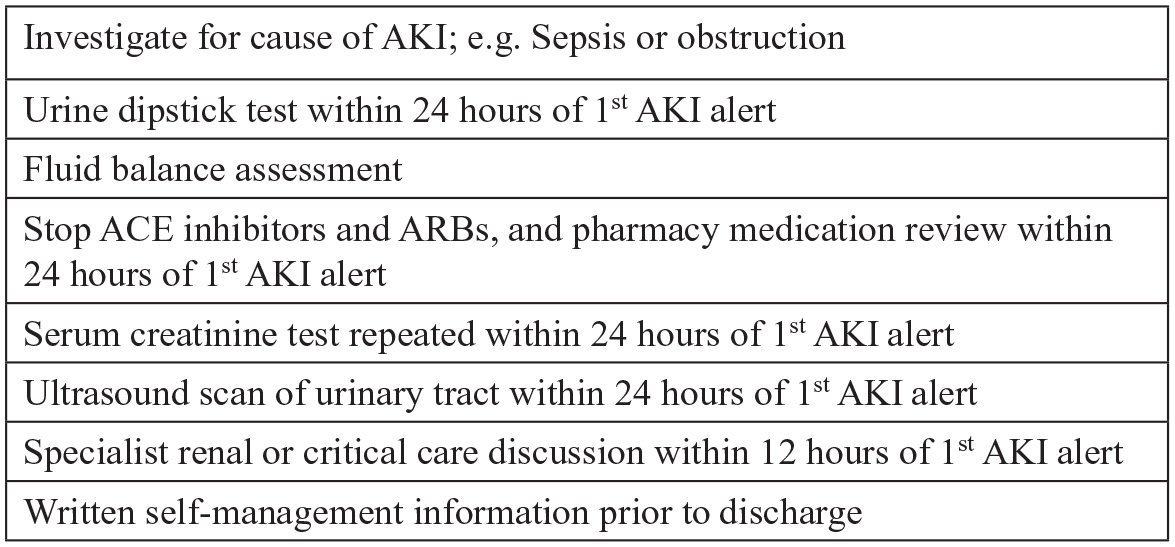

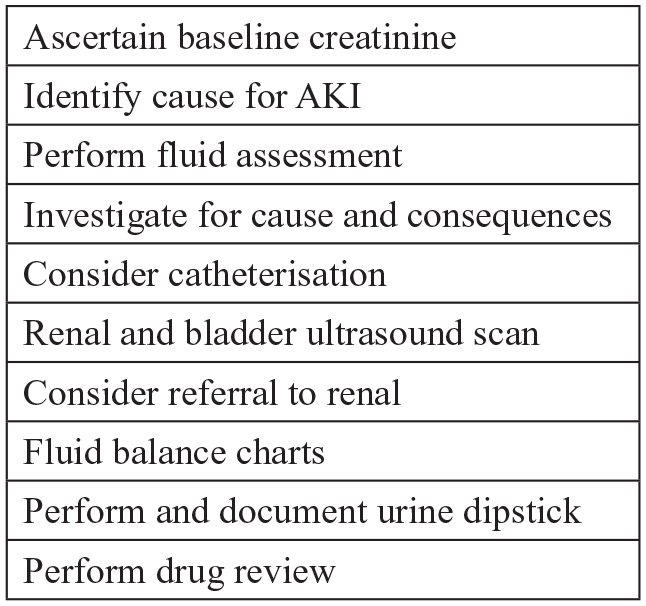

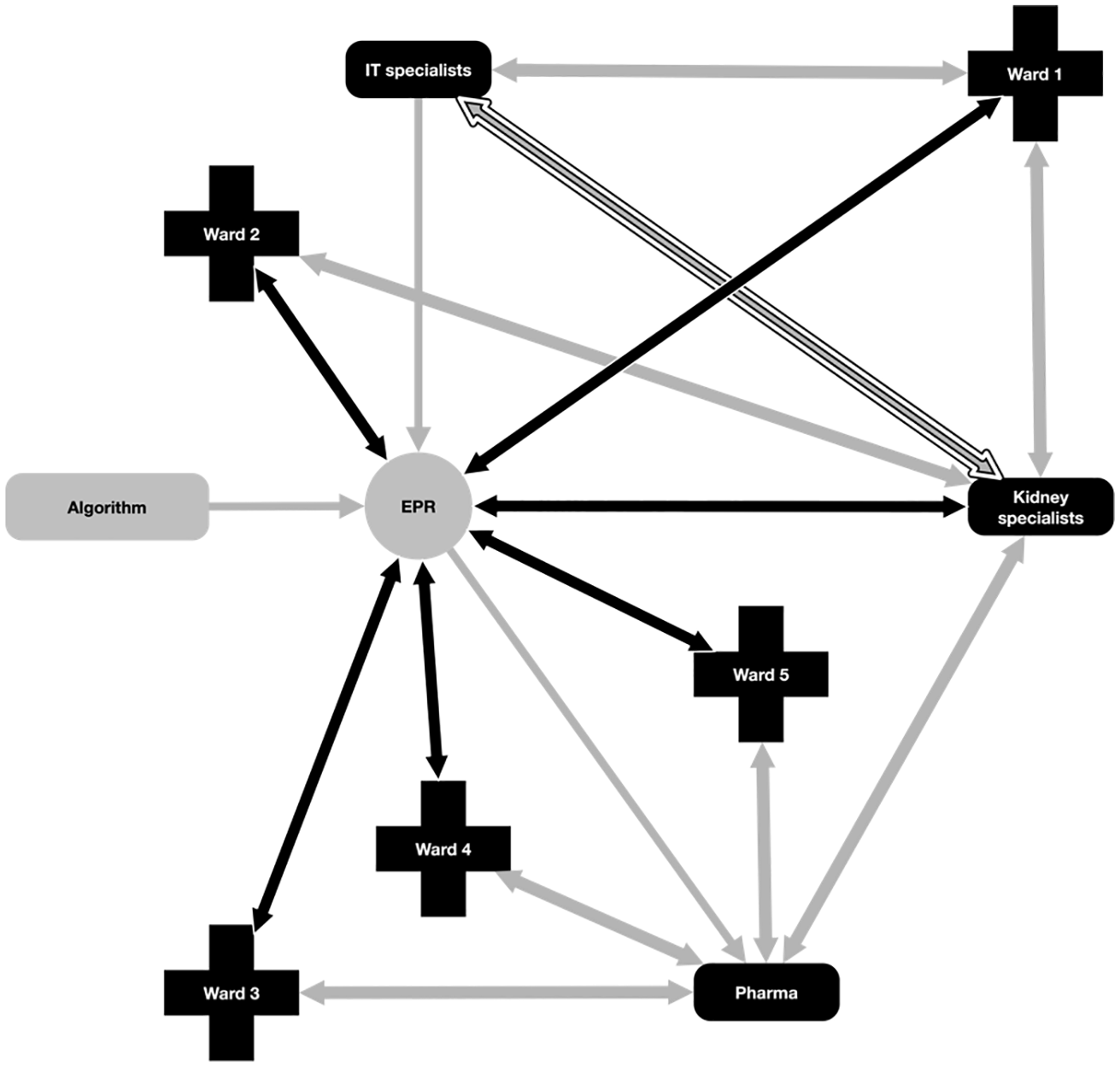

Hospital X adopted the national algorithm for detecting possible cases of AKI. In the alert phase they sought to capitalise upon their relatively sophisticated IT systems in order to integrate the alert into the electronic patient record (EPR) and produce a set of automated decision prompts for staff; that is, they linked the AKI algorithm to the algorithms that ran the EPR. The first of these decision prompts asked staff to verify the diagnosis; from there, further prompts were enabled and the protocol for the appropriate clinical team response (the ‘care process bundle’) was triggered. The care process bundle was specific to AKI and was made up of a number of actions and investigations that should be instigated upon identification of AKI (see Figure 2).

The problem of over-identification of AKI (a false positive) was managed through the automated verification prompt. However, under-identification, by definition, was not detected by the algorithm and so never entered the automated alert and prompt system; instead, it relied upon human-initiated work. Similarly, in cases where there was no baseline measure, human intervention was required. A visual representation of the Hospital X process and the care process bundle are provided in Figures 1 and 2, respectively.
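The structural point here, that verification can only act on cases the algorithm has already flagged, can be illustrated with a minimal sketch. The `Result` record, its `has_aki` field (standing in for a clinical judgement that may only be established retrospectively) and both functions are hypothetical simplifications of the process in Figure 1, not the hospital's EPR logic.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Result:
    patient_id: str
    alert_stage: Optional[int]  # None or 0: the algorithm raised no alert
    has_aki: bool               # hypothetical clinical truth, possibly known only later


def automated_pathway(results: List[Result]) -> Tuple[List[str], List[str]]:
    """Route alerted results through a binary verification prompt.

    Returns (bundle_triggered, dismissed_as_false_positive).
    """
    bundle_triggered: List[str] = []
    dismissed: List[str] = []
    for r in results:
        if not r.alert_stage:
            continue  # no alert means no prompt: the case never enters the pathway
        # Verification prompt: staff confirm or reject the algorithm's output.
        if r.has_aki:
            bundle_triggered.append(r.patient_id)
        else:
            dismissed.append(r.patient_id)
    return bundle_triggered, dismissed


def missed_cases(results: List[Result]) -> List[str]:
    """Cases the pathway never sees; visible only through human-initiated work."""
    return [r.patient_id for r in results if not r.alert_stage and r.has_aki]
```

In this sketch a false positive costs one binary decision inside the pathway, whereas a missed case can only be found by a separate, retrospective search that the automated pathway itself never performs.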

Hospital X care process map.

Hospital X care process bundle.

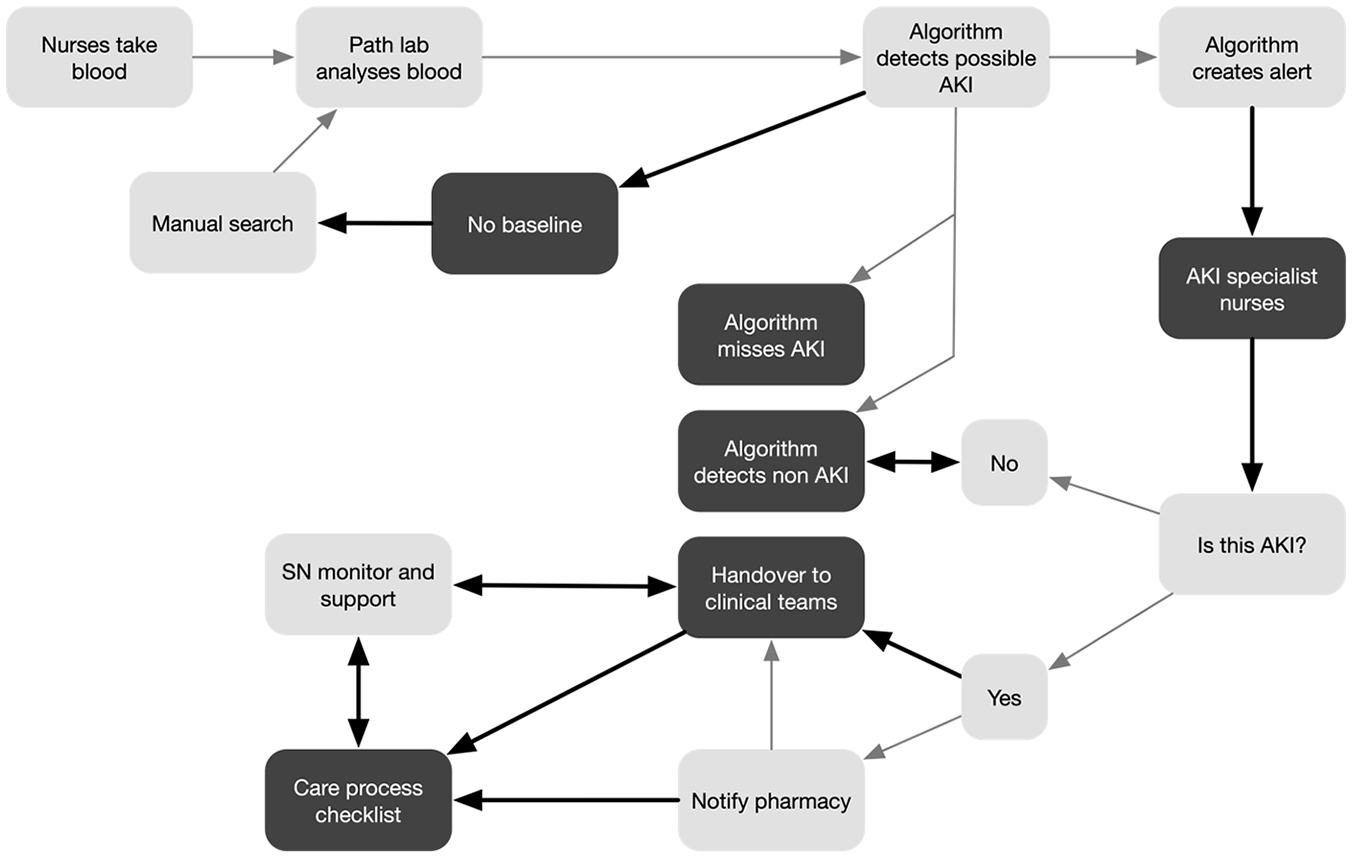

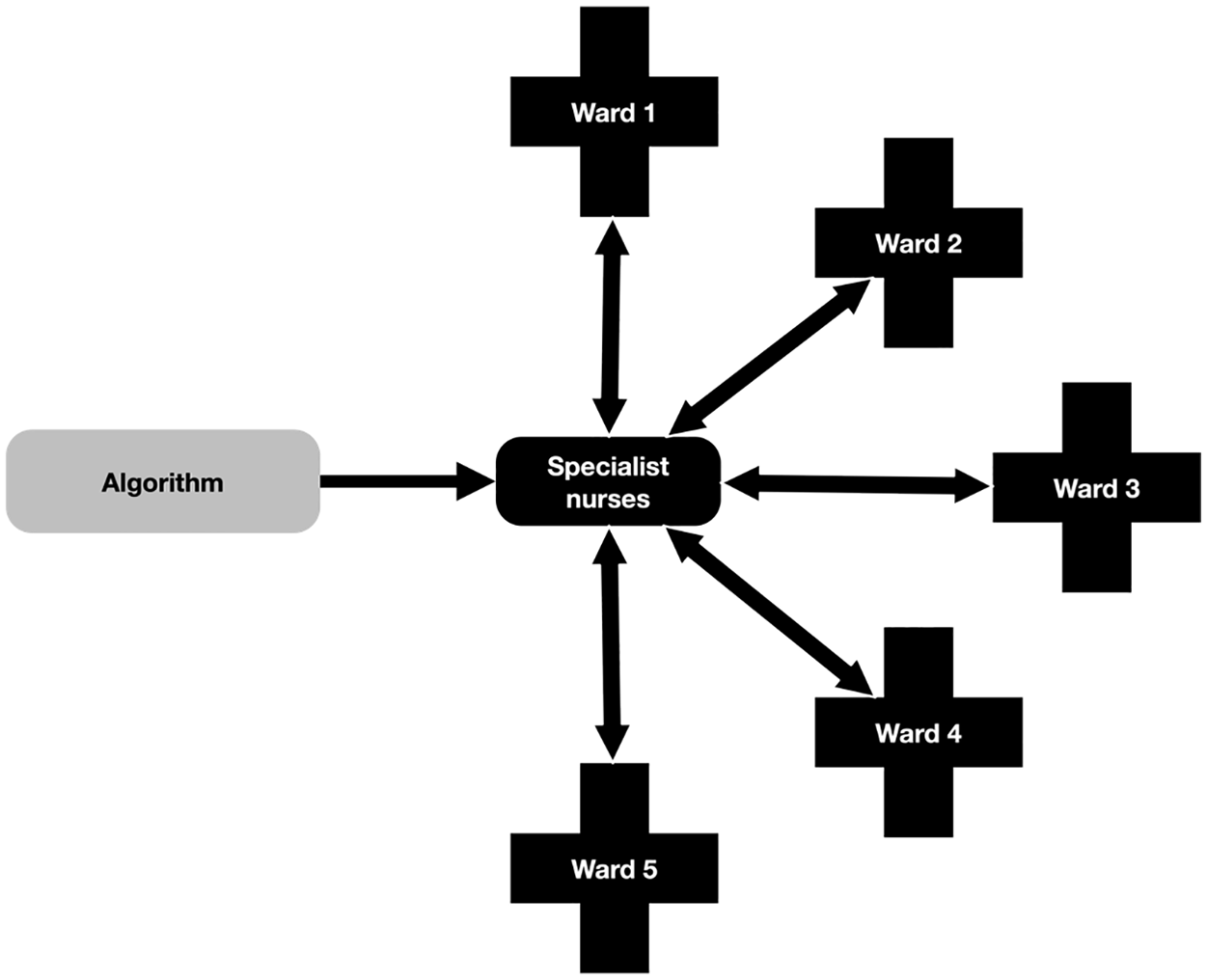

Rather than adopt the national algorithm, Hospital Y developed their own algorithm, which aimed to eradicate all under-identification (missed cases), at the cost of an increase in over-identification. Lacking the same EPR systems as Hospital X but with the additional workforce capacity of two specialist nurses, Hospital Y based its alert phase upon the specialist nurses manually checking all of the algorithmic output, verifying the existence of AKI, and communicating this to clinical teams. In the response phase, the nurses initiated the protocol for appropriate response (the ‘care priority checklist’; see Figure 4) and monitored and supported the ongoing process of ward-based care. A visual representation of the Hospital Y process and the care priority checklist are provided in Figures 3 and 4, respectively.
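The trade-off Hospital Y accepted can be illustrated with a hypothetical sketch. The source does not describe Hospital Y's rule, so the code simply assumes a single creatinine-ratio alert threshold and compares two values of it on invented, purely illustrative cases. It shows only the direction of the effect: lowering the threshold removes the missed case while adding false positives that someone, here the specialist nurses, must verify.

```python
from typing import List, Tuple

# Hypothetical cases: (creatinine ratio to baseline, whether AKI is actually present).
CASES: List[Tuple[float, bool]] = [
    (1.2, False), (1.3, True), (1.4, False),
    (1.6, True), (1.7, False), (2.5, True),
]


def alert_counts(cases: List[Tuple[float, bool]], threshold: float) -> Tuple[int, int, int]:
    """Return (alerts raised, false positives, missed cases) for one threshold."""
    alerts = [has_aki for ratio, has_aki in cases if ratio >= threshold]
    false_positives = sum(1 for has_aki in alerts if not has_aki)
    missed = sum(1 for ratio, has_aki in cases if has_aki and ratio < threshold)
    return len(alerts), false_positives, missed


if __name__ == "__main__":
    print(alert_counts(CASES, 1.5))   # (3, 1, 1): fewer alerts, one missed case
    print(alert_counts(CASES, 1.25))  # (5, 2, 0): no missed cases, more to verify
```

The design choice is a redistribution of work rather than its elimination: every extra alert becomes a verification task for the nurses, which Hospital Y could absorb because it had dedicated staff for the purpose.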

Hospital Y care process map.

Hospital Y care priority checklist.

We now consider the different kinds of work produced by the three interruptions.

Interruption one: Over-identification

The national algorithm that was deployed in Hospital X in the detection phase resulted in both over- and under-identification of AKI, which created the need for articulation. In the alert and response phases, the electronic patient record was used to communicate the alert to staff and elicit appropriate responses, which created the need for contextualisation. However, the direct machine–machine communication made possible by the Hospital X system (in which the AKI algorithm communicated directly with the algorithms of the EPR) made it harder to make visible and manage the necessary work, as we now illustrate.

In the detection phase, in the case of the algorithm identifying a false positive, the automated alert and prompt was set up to manage this; staff were prompted by the EPR to verify the diagnosis, and upon doing so, they were fed the next appropriate action. This instance of articulation reduced the human input to a binary choice and provided the appropriate data to make that choice. Assuming that any member of staff able to interpret the data might be expected to come to the same conclusion regarding this choice, the false positive might produce little additional work.

In Hospital Y, the decision was taken to accept a greater degree of over-identification in the interests of eradicating all under-identification. In place of the EPR, the specialist nurses were tasked with taking the data produced by the algorithm, verifying the accuracy of the diagnosis (articulation), and communicating verified cases to ward staff and initiating and monitoring the appropriate procedure (contextualisation). In the case of over-identification, the extra cases to which the Hospital Y system was subject were managed by the dedicated human resource available. In both cases, over-identification required articulation in the form of verification, and contextualisation in the form of communication. The Hospital X process relied more on the EPR, while the Hospital Y process relied on human- and paper-based systems. In neither case did the interruption appear problematic in terms of coordination; the necessary work was minimal and could be rendered formally visible.

Interruption two: Under-identification

We next consider the case of under-identification, or a ‘missed case’ of AKI. This interruption only occurred in the Hospital X system, but it also illuminates some of the differences in the work required by the two systems.

Understanding a missed case requires an appreciation of the care process in which it was situated. AKI was identified through the analysis of what were termed ‘routine bloods’; that is, the majority of patients entering the acute assessment unit of Hospital X were likely to have their blood taken, and this blood would be analysed for AKI. Many bloods would be taken in a day, and around one-fifth of these might be possible cases of AKI. In Hospital X this was expected to be approximately 40 possible cases of AKI out of approximately 200 bloods assessed per day. The care of each patient would likely be the responsibility of several different members of staff. The results of blood tests would routinely be seen and acted upon by different people than those who took the blood, who might be different again from those who ordered the blood taken. AKI competed for attention among many other concerns. This meant that a missed case – something the algorithm did not detect, and for which no alert was communicated to staff – had to be brought into being by a suitably qualified and informed individual who combined knowledge of a particular patient’s condition with knowledge that AKI had not been identified. Therefore, a ‘missed case’ was not a ready-formed object, but rather one that had to be constructed through further investigations. Articulation in this case was therefore complex and heterogeneous – that is, it would be a different character of work for different patients in different locations – and subject to influence by many factors external to the immediate point of care. The most immediate problem this interruption presented was how a missed case might even be identified. This offers a glimpse of the temporal disjuncture created by the algorithm: a missed case is something for which retrospective work must be undertaken in order for it to be made visible. At the same time, the algorithm carries on, inexorably, producing more cases (and, by extension, missing more).

To manage the potential for a missed case, the human-oriented component of the Hospital X system (see Figure 1) was built upon a regular handover between staff referred to as the ‘safety huddle’. The safety huddle was conducted twice daily on each ward and was organised around a checklist of priority points for discussion when staff handed over from one shift to the next. Part of the improvement work in Hospital X was to incorporate AKI into this checklist. During the improvement work, members of the improvement team would sometimes join huddles and give a brief presentation about AKI to raise awareness. At the group learning sessions that punctuated the improvement programme, wards provided updates about how the huddle was working. These updates suggested that the huddle worked differently, and responded to AKI differently, in different sites across the hospital. Where the huddles were not working well, it appeared that although AKI might be discussed, this did not always translate into timely and effective action by staff following the huddle.

The huddle was combined with the introduction of a protocol for appropriate responses to possible AKI, the ‘care process bundle’ (see Figure 2). The bundle, which was integrated into the EPR prompts for staff, was made up of seven steps that should be taken in every possible case of AKI – of which taking blood was just one. The temporal standards embedded in AKI policy, with particular actions to be completed within particular timeframes, were re-inscribed through the care process bundle. In cases where a ‘true case’ had been detected, performance against these standards could be closely monitored using the algorithmic time stamp on each detection and alert. The missed case, in contrast, could not be made formally visible and amenable to management; rather, informal collective work was required. The huddle and the bundle shared a common characteristic: both were protocol-driven approaches. That is, they broke the appropriate response to AKI down into a number of verifiable procedures. Yet, although these procedures were interventions of a sort, they were not in themselves ‘care provision’; rather, they concerned ordering appropriate tests or delegating future actions to other parties. They were also resource-bound. A recurrent problem with the safety huddle was finding an appropriate person to ‘lead’ it: that is, someone with the time and expertise to administer a collective conversation driven by a number of different protocols (e.g. one for AKI, one for sepsis, one for falls prevention).

Together, the safety huddle and care bundle were the major components of the contextualisation undertaken in Hospital X to manage the interruptions caused by under-identification. As described above, these measures were driven by a need to establish a reliable process for identifying and responding to individuals with AKI. However, given their protocol-driven approach, it was unclear how effective they were as a resource for bringing the ‘missed case’ into existence. Below we expand on how contextualisation via the safety huddle in Hospital X was necessary for making visible what the machine–machine interaction of its system had rendered obscure: that is, the possible need for articulation to correct a missed case.

Interruption three: Missing data

Interruption three concerned the scenario in which there were no baseline data with which the algorithm could identify a possible case of AKI. In this scenario, the process in each organisation was for a manual search to be undertaken to locate baseline data; however, the different orientations of the two systems illuminate different forms of articulation and contextualisation. In Hospital Y, part of the specialist nurse role was to provide ongoing monitoring and support to non-specialist, ward-based teams. Where baseline data were not present, this gave ward teams a dedicated resource to support the work of manually retrieving the data. This was not simply a practical task requiring time and effort to contact other services and locate data; it also required clinical judgement to evaluate whether it was necessary to find the data that would enable the algorithm to identify the possible case of AKI.

Three hypothetical scenarios then presented themselves: first, the specialist nurse might judge there to be no likely AKI, and the manual search for baseline data would be deemed unnecessary; second, the specialist nurse might think there was a possible case of AKI, and the search for data would be deemed necessary; third, the specialist nurse might believe there to be an urgent case of AKI, in which case they could support the appropriate escalation process in the absence of baseline data. As with the interruption caused by over-identification, the specialist nurses here embody both the articulation and the contextualisation necessary to manage missing data. At the same time, because they embody both types of work, the analytical distinction we are drawing between these forms of work is easier to demarcate: the nurses mobilised their expertise in ‘speaking for’ the algorithm when it lacked the inputs required to compute a decision, and then mobilised this knowledge in interaction with colleagues to reach a collective course of action. Just as this process renders our distinction visible, so too could the process itself be rendered formally visible.

In Hospital X there was no dedicated human resource to support the identification and management of AKI. Instead, the alert was communicated by the EPR, which distributed the work of AKI through prompts to staff across the hospital. The ideal scenario envisaged when developing a response protocol for AKI was that staff on each ward would be made collectively aware of AKI through the care process bundle and safety huddles – that is, that these processes would successfully contextualise AKI. As with under-identification, the identification of cases of missing data relied on these processes to make visible the need for further work. Yet, as described above, these processes, though populated by people, were still protocol-driven and resource-bound. Where the possible need for further work was identified, Hospital X lacked a dedicated specialist-nurse resource with which to support decision-making. Decisions about which missing baseline data should be followed up were therefore not linked to particular individuals and were likely to be made with greater uncertainty, resulting in a process less amenable to formalisation.

In the case of missing data, the problem of time and the differences between the two systems are made plain. The Hospital Y process introduced a human-oriented and formally visible ‘pause’ into the system, embodied by the specialist nurses (see Figure 5). These nurses interrogated each case of missing data as part of their verification (articulation; ‘speaking for’ the algorithmic interruption) in collaboration with ward staff, with whom they established a collective response (contextualisation; making the necessary work practical).

Figure 5. Hospital Y informational flow.

In contrast, in Hospital X, the AKI algorithm communicated directly with the EPR algorithm and instantaneously distributed possible AKI, along with each possible interruption, around the hospital (see Figure 6). This distribution created the need for articulation, a need that it simultaneously rendered obscure. The need for articulation then had to be identified collectively and retrospectively, and it remained invisible from the point of view of the formal organisation that Hospital X had established.

Figure 6. Hospital X informational flow.

In Hospital Y, therefore, the nurses acted as mediators between the human and non-human aspects of algorithmic work; they ‘humanised’ a technologically supported care process (cf. Pope and Turnbull, 2017; Turnbull et al., 2017). In contrast, Hospital X presents a ‘dismembered’ system, ‘maximally cleared of human components’ (Lenglet, 2013: 313). By this we do not mean that humans have been ‘erased’ from the care process, but that the algorithm constitutes a distinct normative and temporal order that is inaccessible to humans. In a dismembered system, human judgement and action come ‘after the fact’ of the algorithmic detection or missed case. Human judgement is in this case always reactive, and regulated by the technology and its limitations.

Discussion

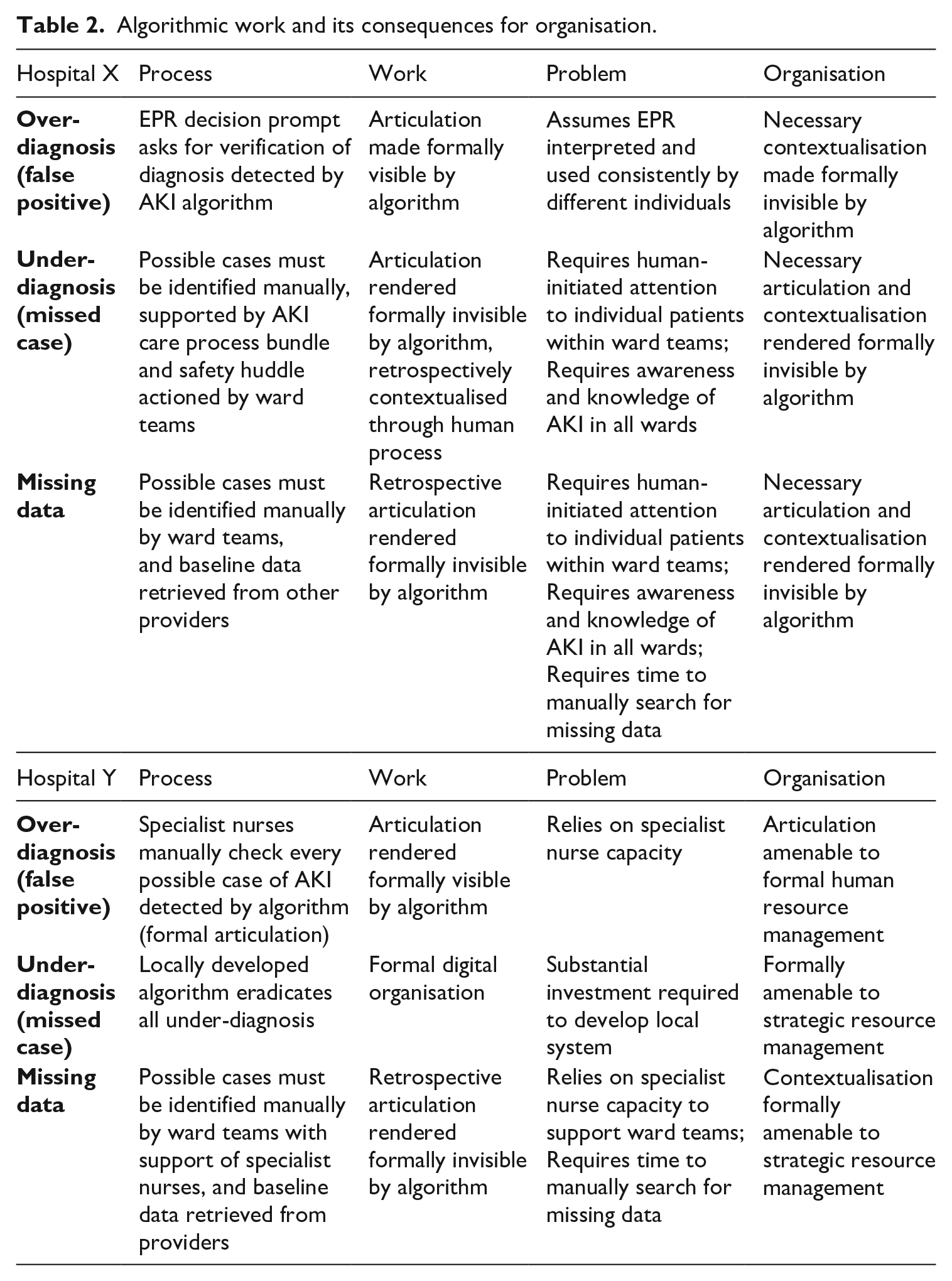

The findings we present in this article describe the organisation of an algorithm that was meant to identify possible cases of Acute Kidney Injury (AKI). The algorithm had three core limitations, which we describe as ‘interruptions’: over-identification, under-identification and missing baseline data. Each of the two hospitals we studied adopted a different approach to managing these interruptions, and each approach illuminated different challenges associated with the introduction of an algorithm, the work created by its inaccuracies, and the possible organisational responses to these (see Figures 1 and 3). A core feature of the observable differences between the two approaches was the distributive capacity of the system in which the algorithm was embedded and the extent to which individuals and organisations could contain the flow of information (see Figures 5 and 6). Table 2 summarises the approaches of the two hospitals to organising the algorithm and its attendant work.

Table 2. Algorithmic work and its consequences for organisation.

The interruption caused by over-identification appeared to create the least disruption to either system. Nevertheless, the assumption that the EPR will be used consistently by different individuals across different times, places and specialisms has been shown to be somewhat problematic (Greenhalgh et al., 2009). This is because of the contextualisation work required to make sense of, and act upon, information in practice (Ellingsen and Munkvold, 2007). The larger the system – i.e. the more different times and spaces being traversed instantaneously by the EPR – the more likely this contextualisation work is to cause interruptions to the smooth operation of the system (Ellingsen and Monteiro, 2003a). The value of the EPR, its capacity to distribute information and prompt actions across times and spaces, is therefore also the source of a key challenge in coordinating the algorithmic work it necessitates. In Hospital Y, by contrast, the problem of management was the much more familiar one of resource dependency.

The interruption caused by under-identification was specific to Hospital X. The problem was that a missed case was, by definition, something missed by the automated system. If the automated system missed a case, then it did not communicate ‘missed case’ to the EPR, and, in turn, the EPR did not prompt a member of staff to take a decision related to a missed case. Rather, a possible missed case had to be initiated and assembled manually. This meant that Hospital X was reliant on the human-oriented systems that had been created around the AKI alert – the care process bundle and the safety huddle – to initiate investigations related to possible missed cases.

One concern here is that this initiation relies on a set of care practices characterised by individualised knowledge of patients and adaptability to changing circumstances. These practices are somewhat at odds with the chronically under-resourced and fragmented character of contemporary NHS care (Bresnen et al., 2017; Nicolini et al., 2008). They are also at odds with nursing practices governed by electronic alerts and decision prompts; what we might term

A relevant concept to consider here is ‘alert fatigue’, which describes the possibility that clinical staff become so used to the presence of electronic alerts that they ignore or ‘override’ them (Baker, 2009; Cash, 2009). This concern has been raised in relation to the AKI alert under discussion here, with the argument that the alert can ‘irritate’ clinicians, who then find ways to ‘bypass’ the system (Kanagasundaram et al., 2016). This implies an active relationship between the human and non-human components of the care process, in which the human makes judgements about the credibility of the information received. Far from ‘fatigued’, this bypass suggests an agentic attempt to

In the case of the latter two interruptions in Hospital X, the instantaneous transfer of information between algorithms deferred the possibility of active human interrogation to a future time, after the possible cases had already been distributed around the hospital. The ideal ‘just-in-time’ principle of care imagines a set of procedures that can be clearly specified in advance and that depend on humans as little more than technicians, linking one part of the system to another. This is at odds with the active and situated sensibility made visible by the idea of alert fatigue. As algorithmically oriented care becomes more widespread, there is a danger that processes of care will leave less space for active and situated human judgement, and for the collective and relational resources upon which this depends (cf. Webster, 2002). This mismatch draws attention to the competing normative orders at stake in the use of algorithms in human-oriented work. The electronic prompts and alerts that characterise just-in-time care represent various attempts to improve efficiency, reduce variability and draw attention to poorly known but potentially fatal problems, such as AKI. This brings us back to the conception of technology as supporting and optimising human performance. The problem that our analysis identifies is that the technological means through which just-in-time care is embedded in practice – the algorithm itself – alters the assumed relationship between the technological and social domains of work. What Lenglet (2019) describes as ‘the closure of the algorithm’, its inherent ability to execute itself, leaves a place for human interpretation that is always after the fact. Algorithmic just-in-time care, therefore, is always already ‘out of time’.

The two systems under examination here illuminate two contrasting perspectives on this problem, at the centre of which is the different manner in which temporal disjuncture was managed in each setting. This difference derives from the observation that, in Hospital X, interruptions related to under-identification and missing baseline data could not be submitted to automated detection and alerting. Figures 5 and 6 present both systems as complex, heterogeneous networks made up of human and non-human agents. However, the two images differ substantially. Hospital Y presents us with a network made up of multiple dialogical relationships, with the specialist nurses participating as gatekeepers in all possible relationships. The network is therefore stabilised and contained by human capacities and their limits, such as being able to be in only one place at a time. Hospital X, in contrast, presents us with a network made up of multiple polyvocal relationships, with the EPR simultaneously distributing possible AKI among multiple networks. Because of this, in the case of interruptions due to under-identification or missing data, the Hospital X system is at risk of compounding the failures inherent to the algorithm itself. Put differently, there is an instantaneous distribution of possible AKI, which is at once also a possible false positive or missed case. This creates multiple uncertain future cases of AKI, for which retrospective human work will be required, assuming they can first be rendered visible.

Ultimately, the organisation of this algorithmic device presents the field of healthcare with a problem of responsibility. The interrupted scenarios we have described are examples of clinical work as conceived in Garfinkel’s (1967) account of social action, proceeding according to an emergent and reflexive engagement with the question of ‘what to do next’. This conception does not lend itself easily to the technological attempt to codify and standardise (Berg, 1997), nor is such work easily accounted for, being the product of overlapping, negotiated and collective orders (Star, 1991; Strauss et al., 1985). It is further complicated by the temporal disjuncture to which we have drawn attention, which allows us to extend these earlier conceptions of social action to account for the introduction of algorithms in clinical settings.

At the same time, the technology offers a means of surveilling, representing and quantifying particular aspects of the care process, as well as shaping the distribution and normalisation of nursing tasks and roles. In this scenario, the technology and its failings play a mediating role in the disjuncture between the informal work necessary to make things run and the formal account made of that work. Consequently, the surveillance of humans made possible by algorithms stands in sharp contrast to the algorithms’ own inscrutability. This makes it possible for a human-oriented care process, in all its layered complexity, to be reduced for the purposes of legal scrutiny to a set of time-stamped decision prompts, while the algorithm itself recedes into the background.

Our research highlights a need to think more carefully about the conditions under which formal organisation makes the acts of self-responsible humans visible or invisible. In their re-reading of classical organisation theory, du Gay and Vikkelsø (2016) develop an understanding of organisational decision-making which is premised upon the idea that the formal and informal are necessarily intertwined. Within this conception, the purpose of organisational structure is to provide a system of roles and relationships within which the formal and informal emerge from a problem and are decided upon in a casuistic manner. It is to the unfolding of such events that the ‘making visible’ of formal organisation pertains. Our analysis shows how algorithms can introduce a split between the formal and informal, which under particular organisations of the digital (such as that described in Hospital X) is enforced as though it were a universal metaphysical principle. The consequence is that it becomes structurally impossible to conceive of the relationship between formal and informal organisation, or to solve problems, casuistically. Algorithms compute decisions faster than humans can interpret situations, make judgements or take action, and often they do so invisibly. Yet ethical and legal accountability is attributed solely to the actions of humans, once rendered visible, and not to algorithms, whether visible or invisible.

Conclusion

Our study of the introduction of an algorithmic detection and alert system for Acute Kidney Injury (AKI) in two hospitals exposes a disjuncture between human and non-human work in algorithmic decision-making that results from the organisation of the different normative and temporal orders in which humans and algorithms operate. We have shown how this is a problem for the formal organisation of algorithmic work and argued that further research is needed to better understand how the introduction of algorithms to organisations is being managed. We conclude with two linked issues which emerge from this analysis.

Firstly, we have shown that algorithmic decision-making can be organised in such a way that it interrupts and directs human decision-making. Beyond the effect this can have on autonomy, it can also introduce a cascade of ethical problems when we consider the potentially inscrutable nature of algorithmic decision-making (Mittelstadt et al., 2016). Inscrutability creates a problem of governance: if one of the algorithm’s ‘missed cases’ resulted in a preventable mortality, then how might the distributed responsibility we find, particularly in the case of Hospital X, be made accountable? Martin (2019) argues that this is a problem of design rather than the value-laden-ness of algorithms; however, we have shown that regardless of how an algorithm is designed, there will be ethical problems related to how any algorithmic work is coordinated. All of this points to more general questions of why and how any algorithm is to be regulated, questions that are not simply a matter of technological design.

Secondly, we have shown that embedding algorithmic technologies in the two hospitals from our study necessitated human work and that algorithms do not on their own constitute organisation. Some of this human work is informal and ongoing. The informal, invisible nature of the work can create problems for authority. Du Gay and Vikkelsø (2016) draw on Wilfred Brown to raise a concern that considerations such as authorisation and authorising relationships have fallen out of fashion in contemporary studies of organisation. This can result in what Brown (1965: 144) understood to be ‘informal organisation’, in which there is an absence of prescribed boundaries to govern legitimate authority. We have shown how algorithmic work can impede care for people and their illnesses, which is a core task for a hospital. If nurses take time away from caring work in order to undertake algorithmic work, then according to what codes and principles are they governed in this informal work? Moreover, what effect does this have on their ability to discharge the caring duties to which they are formally held to account?

As algorithms ‘shape, transform and govern’ more and more aspects of contemporary living (Amoore and Piotukh, 2015: 4), so too will organisations increasingly settle on particular approaches to coordinating the work that algorithms require to operate. We have argued that some of these approaches decouple algorithmic work from the experiences of the humans toward whom the purpose of the organisation is directed. In healthcare, caring for the health and illness of humans can end up becoming secondary to delivering the data requirements of a non-human algorithm. Drawing on our ethnographic research, we have argued that this is a matter of how both algorithmic work and healthcare work are organised across formal and informal lines, yet scant attention seems to be given to organisation in either the scholarly literature or the policies encouraging algorithmic automation. We suspect that algorithmic work is being introduced and coordinated in ways similar to those we describe here, and at an astounding pace, throughout many societies and in sectors other than healthcare. We therefore stress the importance of attending to the organisation of this new kind of work. What practical, ethical and legal problems will follow if we do not urgently attend to the organisation of algorithms?

Footnotes

Funding

The project from which this article was drawn was funded by the National Institute for Health Research (NIHR). The views expressed in this article are those of the authors and not necessarily those of the NHS, NIHR or the Department of Health and Social Care.