Abstract

Introduction

Children aged 5 to 10 years use fine motor skills daily, not only at home but also at school, where 30% to 66% of their time is spent on tasks such as writing, eating, and crafting (Caramia et al., 2020; Marr et al., 2003). Fine motor skills can be defined as activities that involve the coordination of small movements made by the hands and fingers, often in coordination with eye movements (Cuffaro, 2011). Proficiency in these skills is crucial (Summers et al., 2008; Valentini, 2024; Zwicker et al., 2013), as struggles with fine motor tasks can lead to significant challenges in daily life and delays in academic performance (Caramia et al., 2020; Li et al., 2024), making it difficult for children to keep up with lessons or engage with their social environment (Ekornås et al., 2010; Magalhães et al., 2011; Valentini, 2024). Writing is a complex skill that requires the integration of fine motor control, visual-motor coordination, and cognitive processes (Berninger et al., 1992, 2002; Berninger & Rutberg, 1992; Chandler et al., 2021). Research has shown that fine motor proficiency is closely linked to handwriting quality and speed, which contribute to academic success (Chandler et al., 2021; Graham et al., 2012). Additionally, fine motor skills are important for other school-related tasks such as folding paper and cutting with scissors for crafts, which also require precise hand and finger movements (Anderson, 2002; Chandler et al., 2021; Daly et al., 2003; Edwards, 2018; Gabbard, 2021; Gerde et al., 2012; Henderson & Pehoski, 2006; Ratcliffe et al., 2011; Travers et al., 2018; Wu & Sun, 2020). Detecting and treating fine motor difficulties early on is therefore essential, as fine motor skills play a key role not only in childhood but also in everyday adult tasks, such as writing, wrapping a gift, or opening a package. 
Early identification and intervention for children with motor skill difficulties or children with or at risk of developmental coordination disorder (DCD) are especially important. Both early identification and intervention help to prevent secondary challenges such as anxiety or low self-esteem (Cairney et al., 2010; Cocks et al., 2009; Engel-Yeger & Hanna Kasis, 2010; Piek et al., 2008, 2010). Together they enable families and educators to provide targeted support, ultimately improving long-term outcomes (Lee & Zwicker, 2021; Zwicker & Lee, 2021).

The identification of fine motor difficulties is often carried out by physical and occupational therapists (OTs), who frequently rely on standardized, quantitative assessments to support diagnostic decisions and monitor outcomes. Commonly used instruments include the Movement Assessment Battery for Children-Third Edition (Henderson & Barnett, 2023), the Peabody Developmental Motor Scales-Third Edition (Folio & Fewell, 2023), and the Bruininks-Oseretsky Test of Motor Proficiency (Bruininks & Bruininks, 2005). In school-based settings, OTs often begin with informal observational assessments to evaluate a child's performance in the school context before turning to standardized tools. These observations, focusing on how a child approaches tasks such as handwriting, cutting, or using classroom tools, provide valuable insights into grip type, movement patterns, and task engagement, capturing authentic behaviour in natural environments (Al-Hendawi et al., 2025). While such qualitative observations are central to OT practice, they are typically unstandardized and can vary significantly across practitioners. As a result, it can be challenging to compare assessments or track progress reliably. Standardized quantitative instruments, therefore, play a critical role in supporting eligibility decisions, communicating with interdisciplinary teams, and justifying services (O’Brien & Kuhaneck, 2025). They provide the objective benchmarks required for diagnosis, particularly in systems that base such decisions on these benchmarks. These quantitative instruments typically focus on measurable outcomes, such as the speed at which a child completes a task, the number of correct or incorrect attempts, or the number of errors made. While these standardized quantitative instruments are effective in recognizing fine motor difficulties, they have limitations when it comes to evaluating the quality of task execution (Hadwin et al., 2023). 
Some of these instruments do include short checklists on qualitative performance, but these checklists focus mostly on identifying abnormal or atypical behaviour, overlooking the full scope of a child's performance (Hadwin et al., 2023; Missiuna & Pollock, 1995). A comprehensive standardized qualitative assessment captures not only a child's challenges but also their skills and progress, allowing tailored support for individual needs. Without a standardized approach for qualitative observations, two individuals describing the qualitative performance of the same child may do so inconsistently, which makes it difficult to compare notes reliably, invites subjective interpretations, and reduces the feasibility and reliability of assessments (Bowen et al., 2009; Klingberg et al., 2019). Thus, without a standardized approach or a comprehensive tool for assessing qualitative fine motor performance, accurate conclusions, progress tracking, and nuanced evaluations become challenging, which limits the accuracy of the overall evaluation.

Dynamical systems theory (Thelen & Smith, 2006) highlights that there are multiple ways in which a child's arms and hands can move to successfully complete a task (degrees of freedom), but these different ways vary widely in terms of efficiency and skill. For instance, a child may complete a task such as cutting or folding paper, but the specific movements and strategies used may not be optimal. Observing how a child performs a task is as important as evaluating whether they perform the task fast or without mistakes (Missiuna & Pollock, 1995). Qualitative observations, particularly for children whose outcomes appear adequate but whose performance is inefficient, can reveal hidden motor difficulties not apparent in outcome-based assessments (Hadwin et al., 2023; Missiuna & Pollock, 1995; Wilson, 2005). Current qualitative observation tools for fine motor tasks are scarce, especially for school-age children. Much of the available research on qualitative fine motor performance targets younger children or adults and focuses on grip type or manipulation (e.g., Edwards, 2018; Feix et al., 2016; Gabbard, 2021; Mitchell et al., 2012; O’Brien & Kuhaneck, 2025; Ratcliffe et al., 2011; Travers et al., 2018), but does not fully capture all motor components that influence how a child performs a task. This gap in the literature highlights the need for a deeper understanding of qualitative fine motor development and a comprehensive observation tool with adequate psychometric properties to assess fine motor skills.

The current study addresses the need for a tool that captures the quality of fine motor skill performance by developing the Hands-On! observation tool to assess the quality of fine motor performance of 5- to 10-year-old children. Three tasks of an existing instrument for the assessment of activities of daily life (ADL), the DCDDaily (Van der Linde, 2014), are used for this purpose, that is, paper folding, writing numbers, and paper cutting, as these are commonly performed by children at school. While Hands-On! and the DCDDaily (both test and questionnaire) share a common focus on assessing fine motor functioning in daily life activities, and Hands-On! uses tasks from the DCDDaily test, they differ in both purpose and approach. The DCDDaily questionnaire is designed to assess a child's ADL performance as reported by parents, specifically whether a child can perform a particular task in a proficient way. In contrast, Hands-On! is designed to provide a standardized and reliable observation of how children perform specific tasks. The Hands-On! tool divides each task into intratask components, such as grip type, arm movement, and posture, with specific actions that describe behaviours within each component, ranging from less to more advanced for a child's age (Faber, Schoemaker, et al., 2024; Gabbard, 2021). We have based Hands-On! on tasks from the DCDDaily, as these tasks could be assessed in a standardized way according to the instructions in the manual of the DCDDaily. This standardized assessment enabled us to make comparisons across children of different ages. Although Hands-On! can be used as an extension of the DCDDaily to enable qualitative observations of certain tasks of the DCDDaily, it can also be used as a stand-alone observation tool for comparable tasks, such as writing, including letter writing, writing of shapes, or even a tracing task, as well as for a variety of paper cutting tasks. 
Only the paper folding task is specific to folding a paper spring; however, the dimensions of the paper strips can vary and the observation can still be carried out. Therefore, the aims of the current study are to develop the Hands-On! qualitative observation tool and to assess its reliability (intra- and interobserver), concurrent validity (comparing qualitative scores with the DCDDaily metric scores), and construct validity (evaluating the influence of age and sex). For construct validity, we expect that older children and girls will outperform their younger peers and boys (Fairbairn et al., 2020; Junaid & Fellowes, 2006).

Method

This study is part of the “Uniek in je Motoriek” [Being unique with regards to your motor skills] research project, a collaborative project of the Department of Human Movement Sciences from the University Medical Center Groningen (UMCG) and University of Groningen, The Netherlands. The current study was approved by the Ethics Review Committee of the UMCG (Research Registration number: 202100788). After ethical approval was obtained, schools were approached for participation. Schools willing to participate sent invitations to parents, accompanied by an information letter and an information video about the study. Children could only participate after an informed consent form was signed by their parent(s), caregiver(s), or guardian(s). Inclusion criteria for all participants were (a) aged between 5 and 10 years and (b) attending regular Dutch primary schools. Parents filled in a short questionnaire so that children with (a) physical disabilities, (b) developmental disorders (e.g., intellectual disability, autism spectrum disorder, DCD), and (c) sensory impairments could be excluded. This initial phase of the project focused on typically developing children to establish age-based benchmarks for the tool. Including children with neurodevelopmental disorders such as DCD will be addressed in future validation studies.

Participants

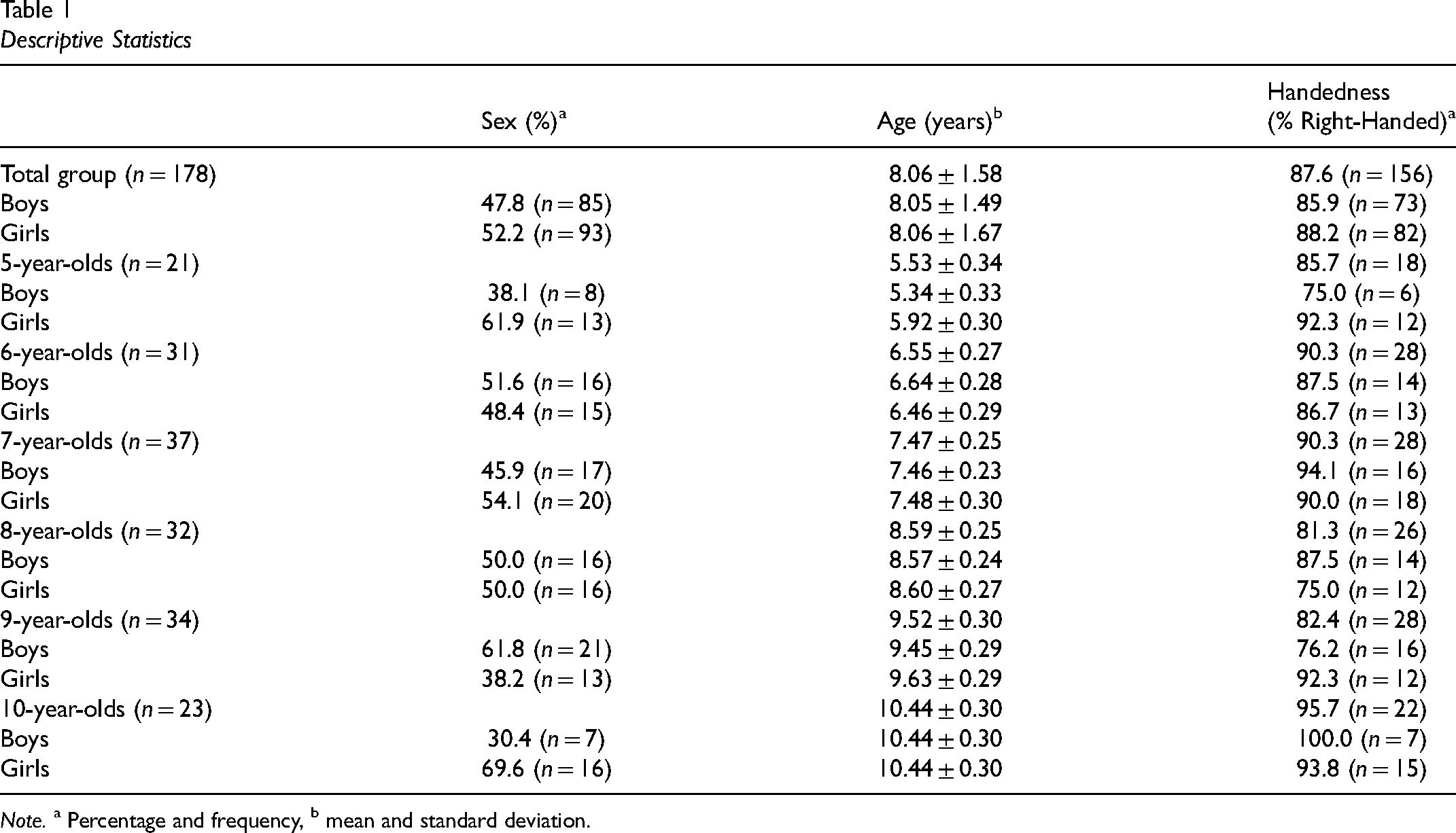

Participants were 178 Dutch children (85 boys; 47.8%) ranging from 5 to 10 years with a mean age of 8.06 (SD ± 1.58) years from three primary schools throughout the Netherlands. The descriptive statistics for age, sex, and handedness are shown in Table 1. The child's handedness was determined by asking the child to draw a line with a pen that was placed in the middle of a piece of paper and observing which hand they used to draw the line.

Descriptive Statistics

Note. a Percentage and frequency, b mean and standard deviation.

DCDDaily Tasks

The DCDDaily (Van der Linde, 2014) is a standardized test to assess the ability to perform activities of daily living in 5- to 8-year-old children with DCD. The DCDDaily includes 18 items grouped into the following three domains: Self-care and Self-maintenance (11 items), Productivity and Schoolwork (5 items), and Leisure and Play (2 items; Van der Linde, 2014). Although designed for children with DCD, the DCDDaily has been shown to be a reliable and valid tool for assessing activities of daily living in both children with DCD and typically developing children (Van der Linde, 2014; Van der Linde et al., 2013).

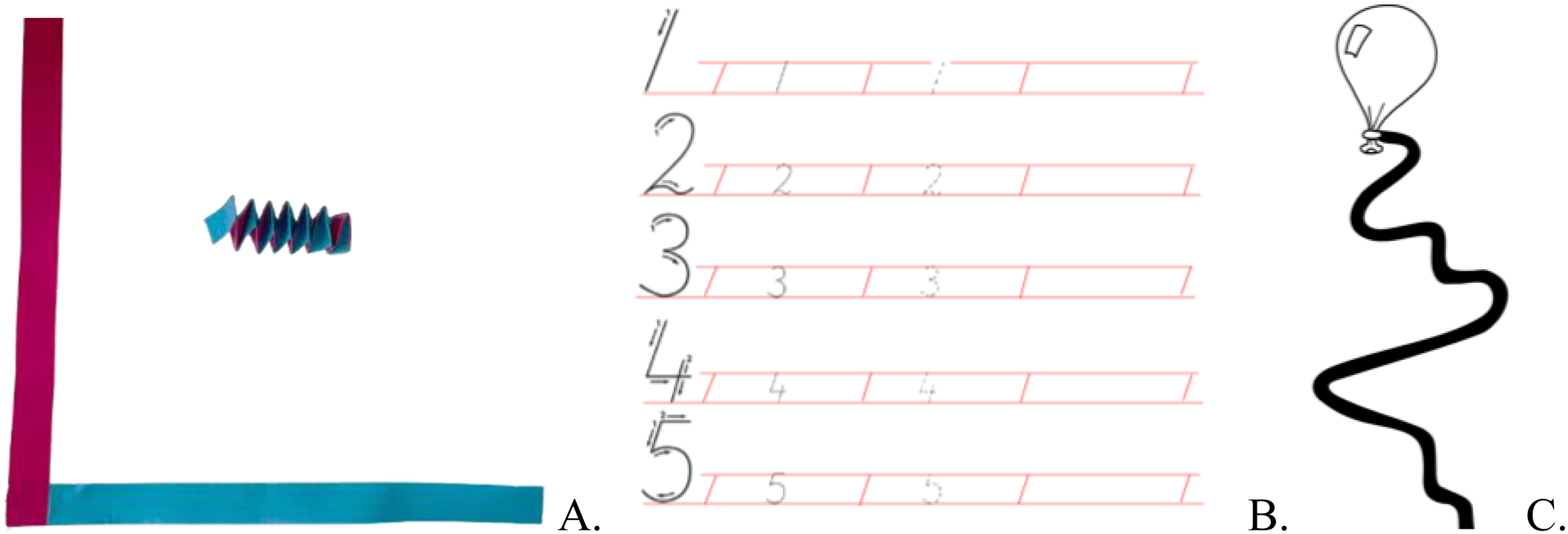

In this study, we assessed tasks from the Productivity and Schoolwork domain, which included paper folding, writing numbers, and paper cutting, following the instructions in the manual of the DCDDaily test available via http://www.dcddaily.com (Van der Linde, 2014). Both speed and accuracy were assessed. The paper folding task involved folding two paper strips, glued together at one corner, into a paper spring (see Figure 1(a)). The raw score is the number of completed, correct folds in the paper spring (i.e., the folded part covers the underlying part). The writing numbers task consisted of tracing, completing, and writing the numbers 1 to 5 (see Figure 1(b)). The writing score is determined by the number of errors made, including writing outside the lines, the size and shape of the numbers, and the shakiness of the written numbers. A maximum of 25 errors could be recorded in total. The paper cutting task involved cutting along a wavy line (see Figure 1(c)). The cutting score was based on the number of times the child cut outside the designated line. For a detailed description of the scoring procedures, see the DCDDaily manual (Van der Linde, 2014). In our study, we also included 9- and 10-year-old children, for whom no norm scores are available in the DCDDaily. Consequently, only raw scores were included in the analyses.

Folding (a), Writing (b), and Cutting (c) Tasks of the DCDDaily.

Development of the Qualitative Observation Tool Hands-On!

The final version of Hands-On! and the accompanying links to the video demonstrations are available on Zenodo (Faber, Hartman, et al., 2024). Zenodo is a general-purpose open-access repository developed under the European OpenAIRE programme, which enables researchers to share, preserve, and access a wide range of digital research outputs, including publications, datasets, and software. The Hands-On! qualitative observation tool was developed in three phases to measure the motor components of paper folding, writing numbers, and paper cutting. Each task was broken down into intratask components (e.g., manipulating paper, pen, or scissors) and specific actions that a child could perform within that component (for instance, in-hand manipulation, transferring hand-to-hand, or surface assist).

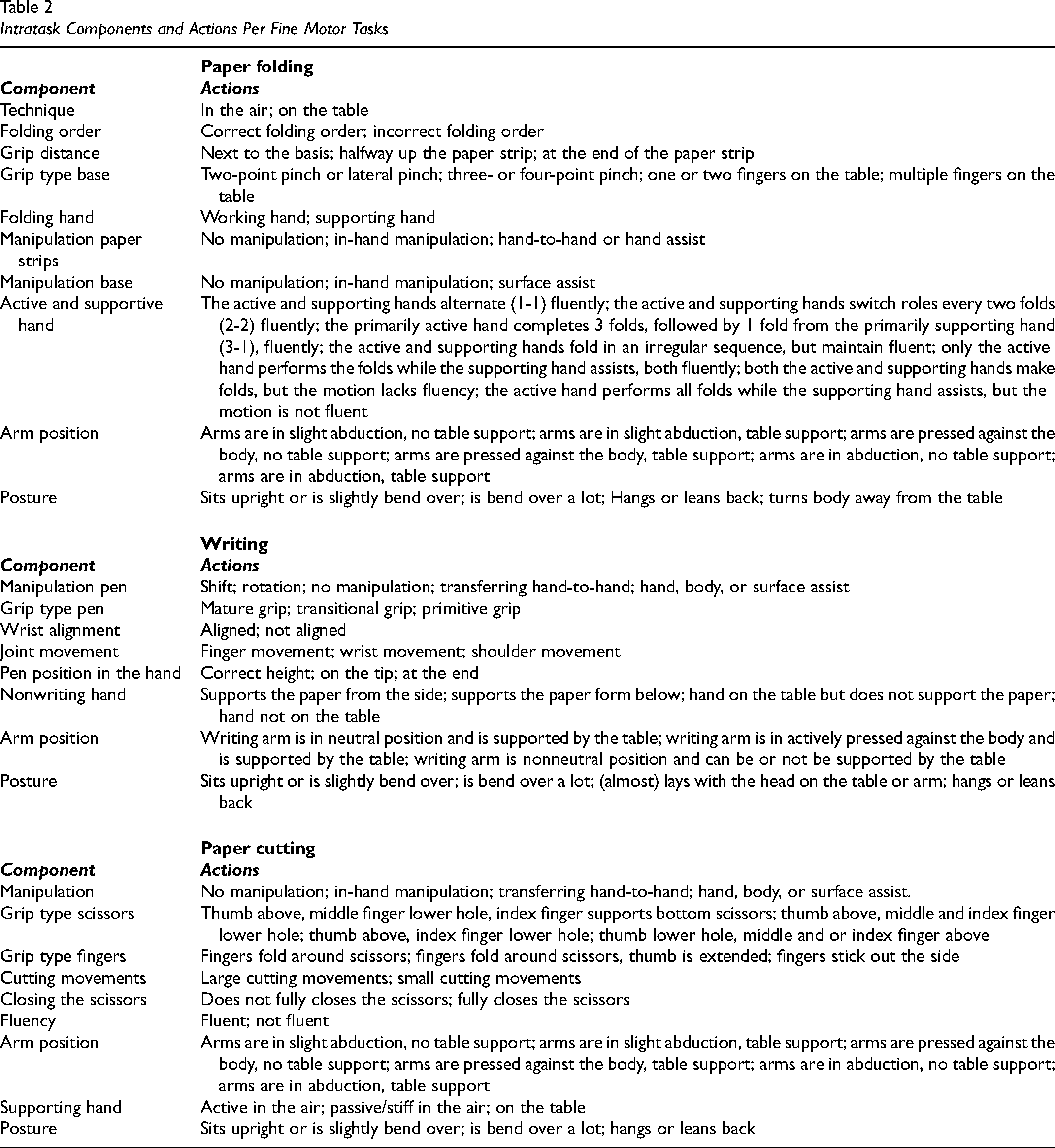

In the first phase, we examined the DCDDaily manual (Van der Linde, 2014) and related literature to identify relevant components (see the Hands-On! observation tool for the literature list). In the second phase, we observed video recordings of 14 children (at least one boy and one girl from each age group) performing the tasks to refine the list of intratask components and actions. These findings were discussed with four experts in human movement sciences, psychology, pedagogy, and physical therapy, resulting in an initial version of the Hands-On! tool and an instruction manual with descriptions of the components and actions, ranging from less to more proficient. Within each component, multiple actions were identified. To ensure clarity and consistency in the observation process, these actions were described to be mutually exclusive, meaning that only one action could be observed at a given time. For each intratask component, the most prevalent action—defined as the action performed for the majority of the task's duration—was scored. In the third phase, we conducted pilot observations with 12 children to assess the tool's feasibility (duration of observation and training time) and clarity, leading to final adjustments to the research version of the tool. The completed Hands-On! tool includes 10 components for folding, 8 for writing, and 10 for cutting, with a total of 36, 27, and 35 actions, respectively (see Table 2). Actions were scored on a scale (1 to 2 or 1 to 3), with lower scores indicating more proficient behaviour. What is considered less and more proficient behaviour was based on existing literature and the experience of the expert group. The full observation tool is available via https://zenodo.org/records/14185207.

Intratask Components and Actions Per Fine Motor Tasks

Procedure

Each child was assessed individually in a quiet room of the school by a trained assessor, either a master's student in human movement sciences or the main researcher (LF). As this study was part of the “Uniek in je Motoriek” project, other DCDDaily tasks were also assessed. These included buttering and cutting gingerbread, gluing paper strips, and colouring, and followed the instructions described in the DCDDaily manual (Van der Linde, 2014). During the assessment, a SONY Cybershot DSC-WX500 compact camera was used to record each session in 720p high definition. The camera was mounted on a tripod and positioned in front of the child and the table, providing a clear view of both the child's posture and hand movements during the tasks. Before starting, the purpose of the video recordings and the procedures for data storage were explained to the children in an age-appropriate way. The video recordings were used exclusively to observe performance in line with the Hands-On! tool. To assess errors on the DCDDaily tasks, the produced outcomes (folded paper spring, written product, cut-out figure) were afterwards scored based on the number of correct folds or the number of errors, following the guidelines in the manual of the DCDDaily. On average, administering the complete assessment took between 15 and 20 min.

The video recordings of the 178 children performing the paper folding, writing numbers and paper-cutting tasks were observed by the main researcher using the Hands-On! observation tool. The complete trial of each task was observed, including the folding of the paper strips in their entirety, the writing of all numbers, and the cutting of the complete line. Each observation, that is, one child performing one task, took approximately 2 min.

To enable the use of the qualitative observations in our validity analysis (both concurrent and construct), we assigned scores to the actions within each component of the Hands-On! tool, differentiating between less and more proficient performance. By scoring each qualitative behaviour, we translate these observations into a quantitative scale, generating total scores that reflect overall proficiency levels.
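The translation from component-level observations to a total score can be illustrated with a minimal sketch. It assumes that each intratask component contributes exactly one score (that of its most prevalent action) and that the total is the plain sum of these scores. The split between two-level and three-level components shown here is not taken from the tool itself; it is inferred from the number of components per task and the score ranges reported with Table 3 (10 to 27, 8 to 23, and 10 to 27), and the names `total_score`, `score_range`, and `MAX_LEVELS` are hypothetical.

```python
from typing import Sequence


def total_score(scores: Sequence[int], max_levels: Sequence[int]) -> int:
    """Sum one score per component after checking each lies in 1..max level."""
    if len(scores) != len(max_levels):
        raise ValueError("one score is needed per component")
    for score, top in zip(scores, max_levels):
        if not 1 <= score <= top:
            raise ValueError(f"score {score} is outside 1..{top}")
    return sum(scores)


# Assumed layouts (inferred, not taken from the tool): the only mix of
# 1-to-3 and 1-to-2 scales that reproduces the reported score ranges.
MAX_LEVELS = {
    "folding": [3] * 7 + [2] * 3,  # 10 components
    "writing": [3] * 7 + [2] * 1,  # 8 components
    "cutting": [3] * 7 + [2] * 3,  # 10 components
}


def score_range(task: str) -> tuple:
    """Return the lowest (most proficient) and highest possible total."""
    levels = MAX_LEVELS[task]
    return total_score([1] * len(levels), levels), total_score(levels, levels)


for task in MAX_LEVELS:
    print(task, score_range(task))
```

Under these assumptions, a child showing the most proficient action on every folding component would receive the minimum total of 10, and lower totals always indicate more proficient performance.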

To assess interobserver reliability, 24 randomly selected children—four children per age group (two of each sex)—were observed independently by pairs of researchers and (clinical) experts. First, two researchers, one with expertise in physical therapy and human movement science and a human movement scientist not experienced in observation of motor performance (LF and DD) conducted observations. Training the second observer took approximately 15 to 30 min. Second, interobserver reliability was also assessed between two clinical experts: a physical therapist (LF) and a paediatric OT (MS) to investigate the tool's reliability for use in occupational therapy practice. In this case, the OT received the tool along with a brief explanation about the tool of approximately 5 min. Consistent with the intended clinical use of the tool, the OT was asked to use her professional expertise to guide observations. Intraobserver reliability was evaluated by having a single researcher (LF) observe video recordings of the same 24 children on two separate occasions, with a 5-week interval between observations, based on practical considerations.
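The selection of the reliability subsample (two boys and two girls per age group, 24 children in total) corresponds to a stratified random draw. The sketch below is only an illustration of that idea, not the procedure actually used; the roster, the field names, and the function `stratified_sample` are hypothetical.

```python
import random
from collections import defaultdict


def stratified_sample(children, per_stratum=2, seed=None):
    """Randomly draw `per_stratum` children from every (age, sex) stratum."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for child in children:
        strata[(child["age"], child["sex"])].append(child)
    selected = []
    for key in sorted(strata):  # fixed order keeps the draw reproducible
        members = strata[key]
        if len(members) < per_stratum:
            raise ValueError(f"stratum {key} has too few children")
        selected.extend(rng.sample(members, per_stratum))
    return selected


# Hypothetical roster: five children per age (5 to 10 years) and sex.
roster = [
    {"id": f"{age}-{sex}-{i}", "age": age, "sex": sex}
    for age in range(5, 11)
    for sex in ("boy", "girl")
    for i in range(5)
]
subset = stratified_sample(roster, per_stratum=2, seed=1)
print(len(subset))  # 6 age groups x 2 sexes x 2 children
```

Fixing the seed makes the draw reproducible, which can be useful when the same subsample has to be observed again for intraobserver reliability.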

Data Analysis

The data were analysed using Statistical Package for the Social Sciences (SPSS, Version 27, IBM, USA). The level of significance was set at p < .05. Prior to analysis, normality tests were conducted, revealing that several variables were nonnormally distributed. As a result, nonparametric analyses were applied.

Reliability was determined by comparing the observations within each observer pair (interobserver reliability: LF and DD; LF and MS) and between the two separate occasions (intraobserver reliability: LF), with agreement noted when the same action within a component of the task was identified. Percentage agreement was chosen as the measure of reliability, with <60% indicating weak, 60%–79% moderate, 80%–90% strong, and >90% very strong reliability (McHugh, 2012).
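As an illustration of this measure, percentage agreement for one intratask component can be computed as the share of children for whom both observations identified the same action. The following is a minimal sketch with hypothetical data and hypothetical function names; how values falling exactly on a category boundary are classified is an assumption.

```python
from typing import Sequence


def percentage_agreement(obs_a: Sequence[int], obs_b: Sequence[int]) -> float:
    """Percentage of cases in which both observations scored the same action."""
    if len(obs_a) != len(obs_b) or len(obs_a) == 0:
        raise ValueError("both observations must cover the same, non-empty set")
    matches = sum(a == b for a, b in zip(obs_a, obs_b))
    return 100.0 * matches / len(obs_a)


def interpret_agreement(pct: float) -> str:
    """Label a percentage using the bands cited from McHugh (2012).

    Boundary handling (e.g., exactly 90% counted as strong, anything
    below 60% counted as weak) is an assumption of this sketch.
    """
    if pct > 90:
        return "very strong"
    if pct >= 80:
        return "strong"
    if pct >= 60:
        return "moderate"
    return "weak"


# Hypothetical component: two observers agree on 19 of 24 children.
observer_1 = [1] * 24
observer_2 = [1] * 19 + [2] * 5
pct = percentage_agreement(observer_1, observer_2)
print(round(pct, 1), interpret_agreement(pct))
```

With 24 children per comparison, each disagreement lowers the agreement for a component by roughly 4.2 percentage points, which is why component-level values move in fairly coarse steps.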

To assess concurrent validity, Spearman's rho was calculated between the total qualitative score from the Hands-On! observation tool and the DCDDaily task metrics. In the Hands-On! tool, a lower score indicates better performance. This was compared with the duration of the DCDDaily tasks, as well as specific task outcomes: the number of correct folds for the paper spring folding task (where a lower score reflects poorer performance) and the number of mistakes for the writing numbers and paper cutting tasks (where lower scores reflect better performance). The Spearman's rho correlation coefficients can be interpreted as follows: <.20 negligible, .21–.40 weak, .41–.60 moderate, .61–.80 strong, and .81–1.0 very strong correlations (Prion & Haerling, 2014). Construct validity was determined using multiple regression analyses (one for each task) with the enter method. These analyses aimed to predict the qualitative performance scores for paper folding, writing numbers, and paper cutting, using age and sex as predictors. The R2 represents the percentage of variance explained by the model. Additionally, we examined how much of this variance was specifically attributed to age and sex in explaining qualitative performance across tasks.
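For readers who want to see the calculation behind the concurrent validity analysis, Spearman's rho can be obtained as the Pearson correlation between the ranks of the two variables, with tied values receiving their average rank. The sketch below uses only the Python standard library rather than SPSS, the data are hypothetical, and the function names and the treatment of boundary values in the interpretation bands are assumptions.

```python
from statistics import mean
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1..n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho as the Pearson correlation of the rank vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y need the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when a variable is constant")
    return cov / (var_x * var_y) ** 0.5


def interpret_rho(rho: float) -> str:
    """Label |rho| with the bands cited from Prion and Haerling (2014)."""
    size = abs(rho)
    if size > 0.80:
        return "very strong"
    if size > 0.60:
        return "strong"
    if size > 0.40:
        return "moderate"
    if size > 0.20:
        return "weak"
    return "negligible"


# Hypothetical data: qualitative totals and task durations (s) of five children.
quality = [10, 12, 15, 18, 20]
duration = [30, 28, 45, 50, 60]
rho = spearman_rho(quality, duration)
print(round(rho, 3), interpret_rho(rho))
```

Because lower qualitative scores indicate better performance, a positive rho with duration or number of mistakes, and a negative rho with number of correct folds, both point in the expected direction.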

Results

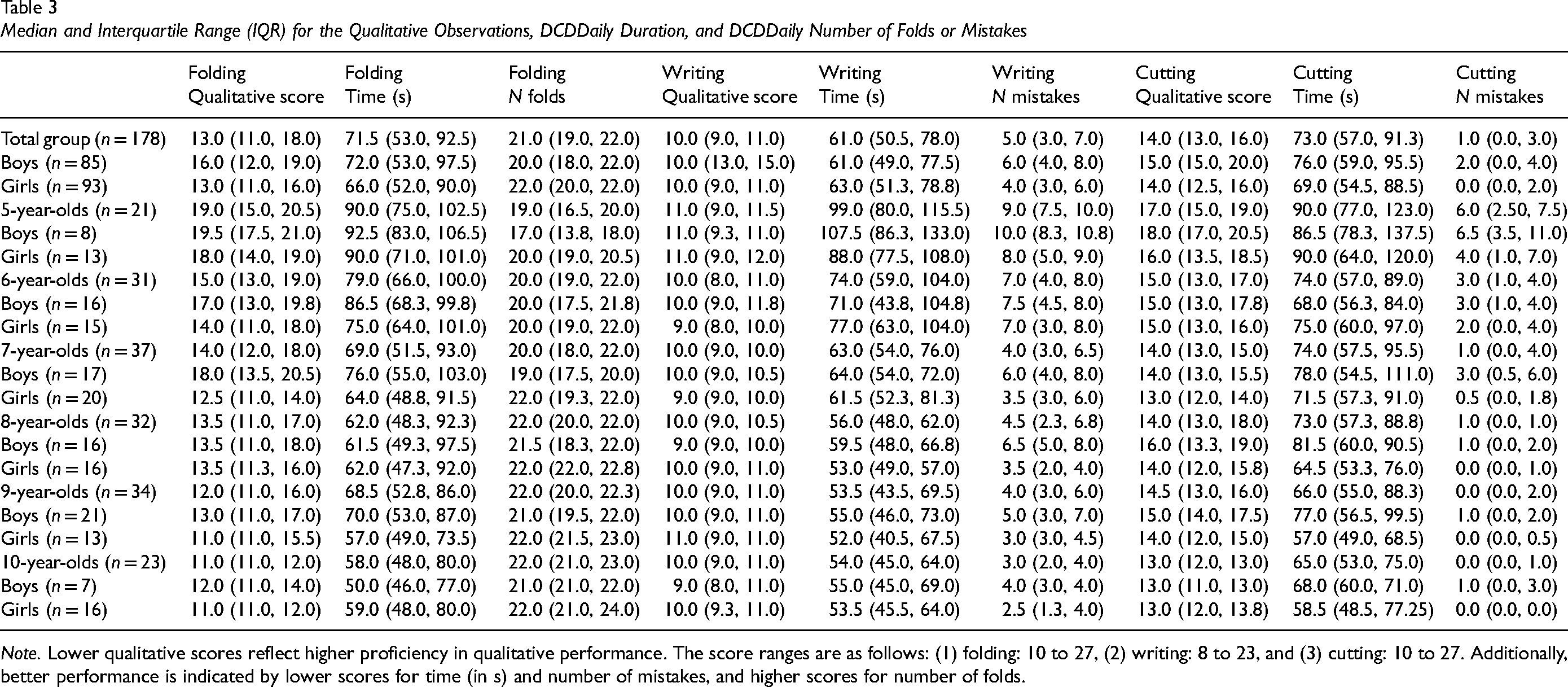

The median and interquartile range for the qualitative observations from the Hands-On! tool and the raw scores of the DCDDaily, by age group, sex, and the total sample, are presented in Table 3.

Median and Interquartile Range (IQR) for the Qualitative Observations, DCDDaily Duration, and DCDDaily Number of Folds or Mistakes

Note. Lower qualitative scores reflect higher proficiency in qualitative performance. The score ranges are as follows: (1) folding: 10 to 27, (2) writing: 8 to 23, and (3) cutting: 10 to 27. Additionally, better performance is indicated by lower scores for time (in s) and number of mistakes, and higher scores for number of folds.

Interobserver Reliability

The overall interobserver reliability between a clinical expert (physiotherapist) and a human movement scientist not experienced in observation of motor behaviour was moderate to very strong across all three tasks. The interobserver reliability for the paper folding task was 84.48% (range: 66.7%–100%), indicating strong overall agreement, with individual components ranging from moderate to very strong. Disagreements primarily occurred in the roles of the active and supporting hand (how the hands coordinate during the paper spring folding) and variations in hand movements. For the writing numbers task, the interobserver reliability was 81.75% (range: 62.4%–100%), indicating strong overall agreement, with individual components ranging from moderate to very strong. Differences were mostly observed in posture and the manipulation of the pen. Finally, the paper cutting task showed the lowest interobserver reliability at 75.40% (range: 50%–100%), reflecting moderate overall agreement, with weak to very strong agreement across components. Disagreements in the observations centred on arm positioning, specifically whether the arms were slightly or fully abducted.

The interobserver reliability between two clinical experts, a physiotherapist and a paediatric OT, also demonstrated moderate to very strong agreement across all three tasks. For the paper folding task, the interobserver reliability was 84.87% (range: 70.9%–95.8%) indicating strong overall agreement, with component-level agreement ranging from moderate to very strong. Discrepancies were similar to those noted in the researcher pairing and primarily involved subtle variations in the coordination between the active and supporting hands during folding. In the writing numbers task, agreement was 85.95% (range: 79.1%–91.7%), reflecting strong overall reliability. Similarly to the previous pair, differences were mainly observed in the interpretation of posture and manipulation of the pen. The paper cutting task showed an interobserver agreement of 83.76% (range: 70.9%–95.9%), also indicating strong reliability, with some variation in the assessment of arm positioning and cutting movement.

Intraobserver Reliability

The overall intraobserver reliability was strong to very strong across all three tasks. For the paper folding task, the intraobserver reliability was 93.74% (range: 79.2%–100%), indicating very strong overall agreement, with individual components ranging from moderate to very strong. Similarly, the writing numbers task showed an overall intraobserver reliability of 91.68% (range: 83.4%–100%), indicating very strong overall agreement and reflecting strong to very strong agreement across all components. The paper cutting task yielded an overall intraobserver reliability of 92.79% (range: 79.2%–100%), indicating very strong overall agreement, with component intraobserver reliability ranging from moderate to very strong.

Concurrent Validity

For concurrent validity of the paper folding task, a strong positive correlation was found between the qualitative folding score and the duration in seconds of the DCDDaily task (ρduration = .624, p < .001). This suggests that better qualitative folding scores (indicated by lower scores) are associated with faster task performance. Additionally, a moderate negative correlation was identified between the qualitative folding score and the number of correct folds in the DCDDaily task (ρfolds = −.441, p < .001). This indicates that as the quality of folding improves (indicated by lower scores), the number of correct folds increases.

For the writing numbers task, a significant but negligible correlation was found between the qualitative writing score and the duration in seconds of the DCDDaily task (ρduration = .157, p = .038). This suggests that better qualitative writing scores (indicated by lower scores) are related to faster writing task performance. No significant correlation was found between the qualitative writing score and the number of writing mistakes in the DCDDaily task (ρmistakes = −.032, p = .674), indicating that the quality of writing does not correlate with the number of mistakes made.

Finally, for the paper cutting task, a significant weak positive correlation was found between the qualitative cutting score and the duration in seconds of the DCDDaily task (ρduration = .335, p < .001), suggesting that children who showed better cutting quality (indicated by lower scores) tended to spend less time on the task. Additionally, a significant weak positive correlation was observed between the qualitative cutting score and the number of cutting mistakes in the DCDDaily task (ρmistakes = .377, p < .001). This indicates that children who perform better in terms of quality (marked by a lower score) also made fewer mistakes while cutting.

Construct Validity

For construct validity, multiple regression analyses were conducted to predict qualitative performance of paper folding, writing numbers, and paper cutting with age and sex as predictors. This produced a significant model for both the folding task (F(2) = 26.055, p < .001, adjusted R2 = .221) and the cutting task (F(2) = 15.520, p < .001, adjusted R2 = .141), but not for the writing numbers task (F(2) = 0.070, p = .932, adjusted R2 = −.011). For folding and cutting, both age (t = −5.999, p < .001 and t = −4.203, p < .001, respectively) and sex (t = −4.064, p < .001 and t = −3.692, p < .001, respectively) were significant predictors. Neither age (t = −0.310, p = .757) nor sex (t = −0.214, p = .831) significantly predicted performance in the writing numbers task. Age and sex explained 22.1% of the variance in the folding task's qualitative score and 14.1% in the cutting task.
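The relation between the reported F statistics and the explained variance can be checked with a short worked calculation. The sketch below assumes complete data for all 178 children, so that the residual degrees of freedom equal 178 − 2 − 1 = 175; the source does not state this, and the function names are hypothetical. Under that assumption, the reported values are reproduced by the adjusted R2, which, unlike the unadjusted R2, can become slightly negative when the predictors explain almost nothing.

```python
def r_squared_from_f(f_value: float, df_model: int, df_resid: int) -> float:
    """Recover R^2 from the overall F test of a regression model."""
    return (f_value * df_model) / (f_value * df_model + df_resid)


def adjusted_r_squared(r2: float, n: int, k: int) -> float:
    """Adjust R^2 for sample size n and number of predictors k."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


N = 178          # sample size reported in the Participants section
K = 2            # predictors: age and sex
DF_RESID = N - K - 1  # assumption: no missing data, so 175

reported_f = {"folding": 26.055, "cutting": 15.520, "writing": 0.070}

for task, f_value in reported_f.items():
    r2 = r_squared_from_f(f_value, K, DF_RESID)
    adj = adjusted_r_squared(r2, N, K)
    print(f"{task}: R2 = {r2:.4f}, adjusted R2 = {adj:.3f}")
```

For the writing numbers task, the unadjusted R2 implied by F = 0.070 is well below 1%, which is consistent with the conclusion that age and sex explain almost none of the variance in qualitative writing performance.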

Discussion

The aim of the current study was to develop the Hands-On! observation tool and assess its reliability (both intra- and interobserver) and validity (concurrent and construct) in typically developing children aged 5 to 10 years.

Reliability

The Hands-On! tool demonstrated moderate to very strong interobserver reliability across all tasks for both observer pairs. For the first pair (physical therapist and human movement scientist), reliability scores were: paper folding (84.5%), writing numbers (81.8%), and paper cutting (75.4%). For the second pair (physical therapist and paediatric OT), reliability was: paper folding (84.9%), writing numbers (86.0%), and paper cutting (83.8%). These consistent results across different professional backgrounds support the tool's reliability in diverse clinical and research settings.

Intraobserver reliability, assessed by LF, ranged from strong to very strong across all tasks: paper folding (93.7%), writing numbers (91.7%), and paper cutting (92.8%). These results indicate a consistently high level of agreement in observations, suggesting reliable assessments across all tasks. Discrepancies in interobserver reliability mainly involved hand coordination in folding, posture in writing, and arm positioning in cutting. To further enhance reliability, we improved the manual of the Hands-On! tool for future use by adding specific degree ranges for arm movements, and video demonstrations to complement written descriptions and pictures of actions, particularly for dynamic components where movement is harder to capture with text alone.

Importantly, these results suggest that the tool can be used by people with different levels of experience in observation of motor performance. While the least experienced observer required only 15–30 min of training, the OT was able to use the tool with minimal instruction, applying it in a way that reflects its intended clinical use, as a flexible tool to guide expert observation. This demonstrates the tool's potential for integration into current practice, supporting both inexperienced users through brief training and experienced clinicians through intuitive application.

Validity

For concurrent validity, the Hands-On! tool demonstrated significant correlations between the qualitative scores and the duration and number of correct folds or mistakes of the DCDDaily for the folding (ρduration = .624, ρfolds = −.441) and cutting tasks (ρduration = .335, ρmistakes = .377), indicating that better qualitative scores are linked to faster performance of both tasks, more correct folds, and fewer cutting mistakes. These correlations provide evidence that, for the folding and cutting tasks, the Hands-On! tool measures aspects of fine motor skill performance related to those assessed by the DCDDaily, supporting its concurrent validity. That the correlations are not higher aligns with expectations, as both assessments involve the same motor skills but focus on different aspects. The DCDDaily measures the speed and accuracy of daily tasks, using an error model (Gabbard, 2021). In contrast, Hands-On! assesses the quality of performance in each task component, rating actions from less to more proficient.

However, a significant but negligible correlation was found between the qualitative writing score and the duration in seconds of the DCDDaily task (ρduration = .157) and no significant correlation was found between the qualitative writing score and the number of writing mistakes in the DCDDaily task (ρmistakes = −.032). Interestingly, task duration and the number of errors in performing all three fine motor tasks tend to decrease with age, reflecting improved efficiency and proficiency. In contrast, the qualitative writing performance remained consistently proficient, with children demonstrating nearly optimal coordination patterns as early as age 5. This observation aligns with our previous research, which showed that 5- and 6-year-old children already displayed the best qualitative performance in the Movement Assessment Battery for Children-Second Edition (Henderson et al., 2007) drawing trail task (Faber, Schoemaker, et al., 2024). This could suggest that while motor coordination patterns are well-established by this age, children continue to adapt the speed and accuracy of their motor patterns to meet the specific demands of various tasks. This aligns with the stages of motor learning, where the initial focus is on acquiring a coordination pattern, followed by the adaptation of that pattern to fit environmental and task-specific requirements (Fitts & Posner, 1967; Taylor & Ivry, 2012). Thus, even though younger children already show proficient qualitative performance, they are still refining their ability to adjust and apply these skills in diverse contexts as they grow. Furthermore, it is important to note that the current writing task observed with the Hands-On! tool involves writing numbers, which may not fully capture the complexities of language-based writing tasks. 
Writing numbers is primarily a motor control task involving pen on paper and does not engage the same cognitive and linguistic processes required for writing letters and words (Berninger et al., 2002). While the writing numbers task in the Hands-On! tool provides valuable insights into fine motor control, which is foundational for many school-based activities, it may not fully address the complexities of handwriting, especially the cognitive and linguistic processes involved in written language production. Future research could therefore apply the Hands-On! observation tool to language-based writing tasks as well. Doing so could provide a more comprehensive assessment of handwriting difficulties, offering insights into both motor and cognitive-linguistic aspects of writing.

The results suggest that construct validity is supported for the folding and cutting tasks of the Hands-On! tool, as age and sex significantly predict performance quality in these tasks. Specifically, the regression models indicate that age and sex together explain 22.1% of the variance in folding performance and 14.1% in cutting performance, aligning with expectations that older children and girls would perform these fine motor tasks with higher proficiency (Fairbairn et al., 2020; Junaid & Fellowes, 2006). While the contributions of age and sex are meaningful, the relatively low percentages of explained variance in overall qualitative performance are to be expected. Age and sex alone cannot fully account for motor performance, which highlights the multifaceted nature of fine motor skills. Other factors, such as individual differences in motor experience, cognitive abilities, motivation, and environmental influences, also play significant roles in performance (Newell, 1986).
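To make "variance explained" concrete, the sketch below computes R² for a linear model with an intercept and two predictors. It assumes an ordinary least squares fit, which this section does not specify, and it is not the analysis code used in this study. The helper names, the 0/1 coding of sex, and the data are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def r_squared(predictors, outcome):
    """R^2 of a least squares fit with intercept; predictors is a list of rows."""
    X = [[1.0] + list(row) for row in predictors]
    k = len(X[0])
    # Normal equations: (X'X) beta = X'y
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(X, outcome)) for i in range(k)]
    beta = solve(xtx, xty)
    fitted = [sum(b * v for b, v in zip(beta, r)) for r in X]
    mean_y = sum(outcome) / len(outcome)
    ss_res = sum((y - f) ** 2 for y, f in zip(outcome, fitted))
    ss_tot = sum((y - mean_y) ** 2 for y in outcome)
    return 1 - ss_res / ss_tot


# Hypothetical rows of (age in years, sex coded 0/1) and invented quality scores.
age_sex = [(5, 0), (6, 1), (7, 0), (8, 1), (9, 0), (10, 1)]
quality = [1, 3, 2, 5, 4, 6]
share_explained = r_squared(age_sex, quality)
```

An R² of .221 in this sense would mean that 22.1% of the variation in scores around their mean is captured by the fitted values, with the remainder attributable to factors outside the model.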

In contrast, the lack of significant results for the writing numbers task (with only 0.7% of the variance explained by age and sex) suggests that the qualitative writing performance is not as strongly influenced by these factors. The absence of differences in writing performance between age groups and between boys and girls may be attributed to specific instruction provided in the classroom, such as guidance on supporting the paper and proper pen-holding techniques. Learning to write is a key educational objective in primary schools in the Netherlands (Inspection of Education, 2021). However, previous studies suggest that there is potential for further improvement in writing when focusing on aspects such as speed and neatness (Rietdijk et al., 2018). This aligns with the previous explanation for the concurrent validity of the writing task as it seems that children are at different stages of motor learning for writing compared to the folding and cutting tasks. We expect that more children are in the later stages of motor learning for writing, where they are adapting their skills to meet environmental and task-specific demands, adjusting the speed and accuracy of their movements accordingly (Fitts & Posner, 1967; Taylor & Ivry, 2012). As a result, age and sex have less influence on qualitative performance once the initial stages of learning the writing skill have been passed, with the focus shifting more towards refining and adjusting the acquired skills.

Applications of Hands-On!

The Hands-On! observation tool was developed to assess the qualitative components of fine motor skills in typically developing children aged 5 to 10 in paper folding, writing numbers, and paper cutting tasks. The tool aims to enhance the clinical assessment and intervention planning of occupational and physical therapists by offering a standardized qualitative observation tool that captures key details of performance, such as grip type and joint coordination, without increasing assessment time. Hands-On! not only supports tracking progress over time but also enables the identification of specific components of fine motor performance that may hinder occupational participation, guiding targeted and individualized interventions.

Fine motor skills are closely linked to academic achievement (Caramia et al., 2020; Li et al., 2024) and psychosocial outcomes such as self-efficacy (Lopes et al., 2022). Since children spend a significant portion of the school day engaged in fine motor activities (Caramia et al., 2020; Marr et al., 2003), identifying and supporting difficulties in fine motor activities is essential for meaningful participation in school life. The Hands-On! tool has the potential to contribute to school-based physical and occupational therapy within tiered models of service delivery. Although this study focused on one-on-one administration, experienced therapists could also use the tool more flexibly, for example to scan small groups of children for fine motor challenges or to focus on specific components of performance within tasks when a full administration is not feasible. In this way, Hands-On! could complement classroom-based observation approaches such as those described in Partnering for Change (Campbell et al., 2023; Meuser et al., 2024; Missiuna et al., 2012; VanderKaay et al., 2025). While not yet suited for whole-class screening, the tool could provide a structured follow-up when teachers or therapists note early concerns. Future feasibility studies are needed to determine how Hands-On! can best be embedded in inclusive school practices.

Hands-On! can be integrated smoothly into current clinical and educational routines, as observation of motor performance by occupational and physical therapists is already common practice, while offering a more structured and standardized format. Observations can be completed in real time or via video review, which only takes 2 to 5 min per task. Minimal training is required, about 15 to 30 min, with a manual that includes clear instructions, illustrations, and videos to support consistent and reliable use. With straightforward scoring, no need for specialized equipment, and efficient administration, the Hands-On! tool offers a practical and reliable way to assess, track progress and provide tailored support for each child across a range of occupational therapy settings.

However, it is important to note that observations such as grip type should always be interpreted in the context of functional performance (Latash & Anson, 1996). Deviant grip types, for example, should not automatically be targeted for change unless they interfere with task execution. In the case of writing, research presents mixed findings on the impact of grip type on handwriting performance (Donica et al., 2018; Rosenblum et al., 2006; Schneck & Henderson, 1990; Schneider et al., 2023). As such, observations should always be interpreted in the context of a child's overall performance quality to support appropriate, individualized intervention decisions. By integrating qualitative observations alongside quantitative outcomes, the tool provides a more comprehensive understanding of children's fine motor skill performance, better informing intervention strategies that may ultimately enhance children's participation in everyday school activities.

Strengths, Limitations, and Implications

The current study highlights the development, the reliability, and the validity of the Hands-On! observation tool, designed to evaluate qualitative performance of fine motor skills in 5- to 10-year-old children during paper folding, writing numbers, and paper cutting. This tool fills a gap in the literature on qualitative assessment tools of motor skills. The established reliability of Hands-On! ensures consistent and reproducible observations, facilitating meaningful comparisons across future studies and observers. Furthermore, the tool is ready for use by researchers and therapists, making it a valuable resource for assessing children's fine motor skill performance.

While this study has notable strengths, some limitations must be acknowledged. First, intra- and interobserver reliability among paediatric clinicians has not yet been evaluated. However, the strong reliability demonstrated suggests that clinicians experienced in observing motor skill performance would probably achieve similar or even higher reliability. Second, although this research used total scores to assess the tool's validity and facilitate comparisons with other tests, the primary purpose of the Hands-On! tool in clinical practice remains centred on observing qualitative performance and determining whether a child's behaviour is less or more proficient (Missiuna & Pollock, 1995). Third, the Hands-On! tool currently assesses only three tasks: paper folding, writing numbers, and paper cutting, which may not capture the full range of challenges in ADL fine motor tasks faced by children. We aim to expand the tool to include additional tasks, such as self-care activities.

Conclusion

Overall, the Hands-On! observation tool is a reliable and valid tool for assessing qualitative fine motor skill performance in children aged 5 to 10. Its standardized approach ensures reliable observations, facilitating accurate comparisons across studies and observers. By integrating qualitative observations alongside quantitative outcomes, the tool provides a more comprehensive understanding of fine motor skill performance. This approach has significant implications for school-based occupational therapy practice. The Hands-On! tool can enhance assessment practices by providing detailed insights into fine motor skills, which are crucial for children's participation in school activities. Occupational therapists can use these insights to develop targeted interventions that support children's academic performance and overall occupational participation. Investigating the typical developmental trajectory of qualitative performance is essential for establishing benchmarks, which can help to identify difficulties and inform targeted interventions for children with developmental disorders. By providing a comprehensive assessment framework, the Hands-On! tool supports the occupational therapy profession's goal of enhancing children's occupational participation and overall well-being in school settings.

Key Messages

The Hands-On! observation tool was developed to standardize the assessment of qualitative fine motor performance in children aged 5 to 10. It focuses on observation of detailed intratask components of paper folding, writing numbers, and cutting, providing a comprehensive tool for evaluating motor skills in clinical and educational settings. Hands-On! demonstrated strong concurrent and construct validity for folding and cutting tasks, linking more proficient qualitative performance to better performance outcomes and showing expected age and sex differences. Additionally, the tool exhibited high inter- and intraobserver reliability across the three tasks, ensuring consistent and accurate assessments. These findings confirm Hands-On! as a robust, evidence-based instrument for evaluating fine motor skills in research and practice, supporting its use in occupational therapy settings. While quantitative measures such as task duration and number of errors provide valuable data, examining qualitative performance offers deeper insights into fine motor skill development. By capturing details such as grip type, joint movement, and posture, the Hands-On! tool provides a more complete understanding of a child's motor skills. This approach enables therapists to identify specific target areas and tailor interventions more effectively, promoting better outcomes in clinical settings.

Supplemental Material

Supplemental material, sj-docx-1-cjo-10.1177_00084174251397726 for A Qualitative Observation Tool for Folding, Writing, and Cutting in School-Aged Children: Hands-On! by Leila Faber, Esther Hartman, Dagmar F. A. A. Derikx, Maaike G. A. Steggink, Suzanne Houwen, and Marina M. Schoemaker in Canadian Journal of Occupational Therapy

Footnotes

Acknowledgements

We acknowledge the contributions of our Master's student, Anouk Nijland, who conducted most of the assessments of the children using the DCDDaily. We also extend our gratitude to the schools and teachers for providing the necessary space and time for these assessments. Furthermore, we thank the children and their parents for their participation in this study. Lastly, we acknowledge the use of ChatGPT, a language model, to assist in rewriting text for clarity and correctness. ChatGPT was not involved in generating new ideas or structures.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.
