Abstract

This review argues that the role of technology in business and society debates has predominantly been examined from the limited, narrow perspective of technology as instrumental, and that two additional but relatively neglected perspectives are important: technology as value-laden and technology as relationally agentic. Technology has always been part of the relationship between business and society, for better and worse. However, as technological development is frequently advanced as a solution to many pressing societal problems and grand challenges, it is imperative that technology is understood and analyzed in a more nuanced, critical, and comprehensive way. The two additional perspectives invite a broader research agenda, one that includes questions, such as “Which values and whose interests has technology come to emulate?”; “How do these values and interests play out in stabilizing the status quo?”; and, importantly, “How can it be contested, disrupted, and changed?” Any research that endorses green, sustainable, environmental, or climate mitigating technologies potentially contributes to maintaining the very thing that it seeks to change if questions such as these are not being addressed.

Businesses have long developed, used, and commercialized technologies in ways that have influenced society. Starting from the idea that businesses’ competitive successes depend to a significant degree on their technological competence, many firms have explored novel technological opportunities. Many of these technologies have dramatically altered our lives, often by enriching and easing them, but not always; they have also been associated with significant instances of disturbing, exploiting, and polluting natural environments, and of posing serious threats to our health and safety, and, more recently, our privacy. The arrival of novel technologies has always been part of the relationship between business and society and it is most likely to remain so, for better and worse.

We live in a “risk society” (Beck, 1992) in which we seek technological solutions for technologically inflicted risks, solutions that, paradoxically, also create novel risks. This paradox has intensified debates about societal issues in relation to technology, for example, around genetic engineering, nanotechnology, energy production, and information and communication technologies. Being instrumental in advancing welfare and well-being while contributing to societies’ discomforts, technology has two faces, like the Roman god Janus: one beneficial, enabling, and full of promise; the other detrimental, exploitative, and risky. In light of such considerations, and given the rapid speed with which technologies are currently being developed, we believe that a reflection on how technology has been discussed in the study of business and society is highly relevant.

We pursue this reflection through a review of articles published in Business & Society (BAS). We first focus in a deductive manner on the relative prevalence of three perspectives on technology in these articles. Subsequently, we inductively analyze the various meanings of the word “technology.” Our choice to focus on the articles published in BAS is motivated by our belief that the journal is representative of the field.

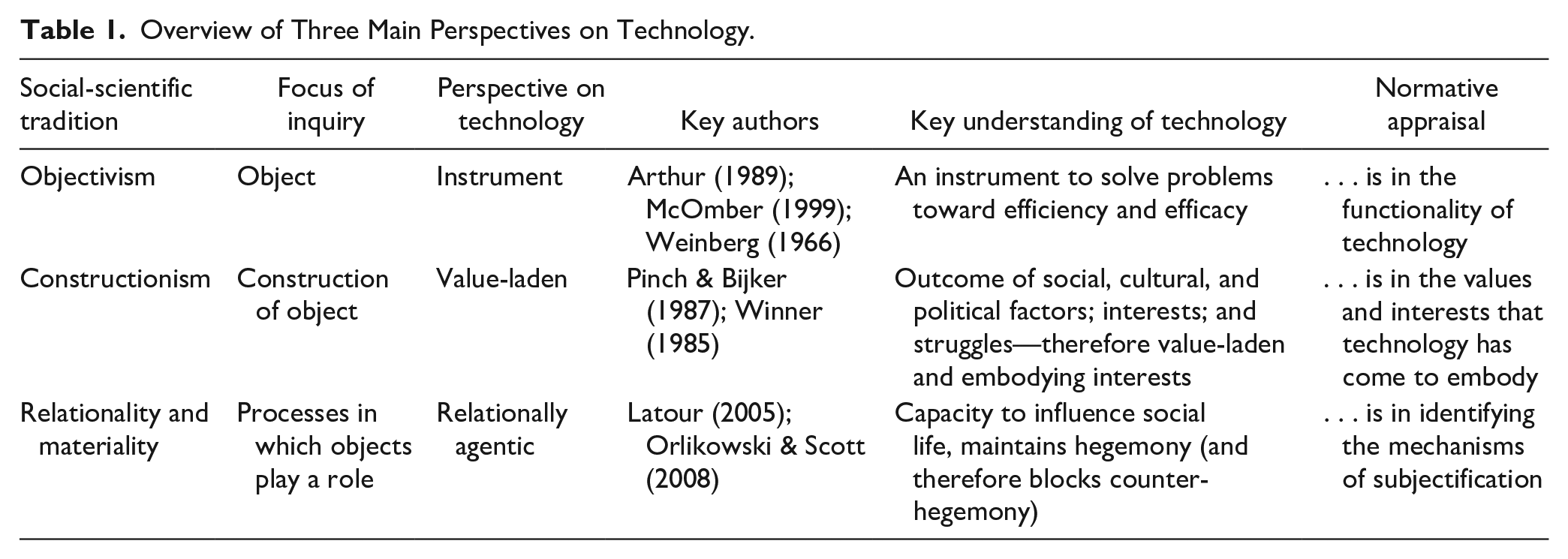

Whereas our inductive analysis relies on common procedures for qualitative content analysis, our deductive analysis starts from a framework that mirrors the main ontological assumptions in the social sciences; it distinguishes three perspectives from which technology has been approached and studied (cf. Coeckelbergh, 2017; Grint & Woolgar, 1997; Vurdubakis, 2012). Social inquiries are often focused on either objects or discrete entities and their instrumentality (in the tradition of objectivism; Bryman, 2012), on the social shaping or social construction of objects (in the tradition of constructionism; Bryman, 2012), or on relational processes in which “objects” no longer have an independent status (in the tradition of relationality, materiality, and process studies; cf. Hernes, 2007; Thompson, 2011). These three perspectives emphasize, respectively, how technology is an instrument that can be functional, effective, and efficient for a particular purpose (McOmber, 1999; Weinberg, 1966); how technology is not necessarily functionally effective but predominantly the embodiment of particular social, cultural, or political interests and in that sense value-laden (Bijker et al., 1987; MacKenzie & Wajcman, 1985); and how technology operates in relation to, and thereby influences, other entities—things, people, ideas—such that it is relationally agentic (Latour, 2005; Orlikowski & Scott, 2008).

We interpret these three perspectives as adding to each other, in the sense that shortcomings of the first perspective inspired and informed the two subsequent perspectives. We recognize there are important ontological distinctions between the three perspectives. However, by conceiving of them as the result of investigation (instead of as a priori strong claims about the supposed “real, true nature” of, in this case, technology) and thus as accomplishments (van Fraassen, 2002), we see these perspectives as in a conversation, complementing each other on their respective blind spots. As we will argue in more detail later, recognizing the different blind spots and leveraging the insights from the three perspectives allow us to develop a more nuanced and comprehensive research agenda for the field of business and society, which, as we believe, is crucial to inform its future research on, and engagement with, technology.

Based on our deductive and inductive analyses, we find that despite the ubiquity and importance of technology in society, the topic has attracted surprisingly little attention. Although attention to the topic has risen somewhat since the turn of the millennium, we also find that the role of technology in business and society research has been conceptualized and studied in limited ways. Just as in the broader field of management and organizational studies, it seems that technology has been “missing in action” (Orlikowski & Scott, 2008, p. 434).

Why would it matter for business and society scholars to take technology seriously? There are at least three reasons that emerge from our analysis and should inform future research. First, we make the case that the dominant instrumental technology perspective (“technology is an instrument to solve problems toward efficiency and efficacy”) is overly simplistic and therefore has limited potential for scientific inquiry. Building on this blind spot, and second, we argue that by acknowledging how technology is value-laden and the embodiment of interests, we can investigate which values and whose interests are embodied (or neglected or suppressed) in a particular technology, and how they have become embedded. Such investigations can help to extend often descriptive and normative stakeholder theories beyond their focus on the intentions and practices of corporations, in explaining why and how some stakeholder interests are included in a company’s management, while others remain marginalized. Examining the social construction of technology may therefore uncover how stakeholder relationships can be manipulated and exploited.

Third, and building on another blind spot of the first perspective, we believe that we should take seriously the relational agency of technology (i.e., its capacity to influence our social life, intended or unintended). Networks (or assemblages) of humans and technology mediate and dominate how we think and talk about issues relevant for business and society scholars, for example, sustainability or sustainability reporting. As a consequence, research endorsing technology potentially contributes to maintaining the hegemony, often serving corporate interests and profits. Understanding and exposing the agency of technology in stabilizing and maintaining hegemonic discourses is a prerequisite for any viable counter-hegemony.

Background: Three Perspectives on Technology

In this section, we discuss three major perspectives on technology (cf. Coeckelbergh, 2017; Grint & Woolgar, 1997; Vurdubakis, 2012). In our discussion, we deliberately gloss over differences in understandings of technology within each of these perspectives, because we deem their similarities more relevant in light of the purpose of our argument. We also elaborate on how in each of the three perspectives technology can be normatively appraised. Normative appraisal is needed, because—as we believe—it is central to the field of business and society. After all, research in this field does not only seek to understand and explain, but it also has the ambition to offer ideas and insights on developing the business–society relationship to mutual benefit in terms of well-being and ethics.

The first perspective treats technology in a functionalist manner. We label it technology as instrumental, referring to its tools and machines, as well as the knowledge to develop and utilize them. The second and third perspectives seek to overcome blind spots of the first. Questioning a central idea in the first perspective, that technology development is determined toward increased efficiency, the second perspective sees technology as value-laden. The third perspective emphasizes how technology is relationally agentic in being an inalienable part of the structuring of life, shaping the social as much as the social shapes technology. Table 1 offers a comparative overview.

Table 1. Overview of Three Main Perspectives on Technology.

Technology as Instrumental

In this first perspective, “technology” refers to both the artifacts and arrangements that are developed as solutions to problems and to make life easier, and to the knowledge and ingenuity to develop and use them, for better and worse. The focus in this perspective is thus on the instrumentality of technology (McOmber, 1999), on how it enables human beings “to control their lives and their environments by interfering with the world in an instrumental way, by using things in a purposeful and clever way” (Franssen et al., 2018). Technology is thus no more—nor any less—than an instrument to solve problems, a solution to society’s problems, even to the very problems that are associated with the use of technology.

This perspective is often encountered in research fields adjacent to business and society. In strategic management, technology and innovation management, and international business, for example, technology is typically seen as something that can be managed as a tool, resource, or capability associated with competitive advantage. According to contingency approaches, corporate strategies need to be tailored to prevailing political, economic, social, and, indeed, technological conditions and developments (the focus of the so-called political, economic, social, technological (PEST) analysis). In economic studies of technology, it is recognized not only that the adoption of new technologies is associated with increased productivity, greater efficiency, and novel opportunities but also that it is an extension or elaboration of earlier technology. For this reason, path dependencies and lock-in effects are relevant to understanding technology and its economic implications (Arthur, 1989; David, 1986; Dosi et al., 1988). In all these fields, competitive advantage and business success depend on having timely access to the latest technology, whereas lack of access is a sure recipe for failure.

Technological development is understood in two seemingly contradictory ways. One is technological determinism, the other “techno-optimism.” Although technology is seen as instrumental, and therefore as created by human beings, there appears to be something inevitable in technology development; it seems to follow an autonomous and necessary trajectory toward ever greater efficiency and efficacy, such that at any moment in time the latest is also the best technology. Technology is thus seen as separate and distinct from the social: an exogenous factor that advances society toward ever greater efficacy and efficiency, something that can be adopted or to which one has to defer. According to Grint and Woolgar (1997), technological determinism “portrays technology as an exogenous and autonomous development which coerces and determines social and economic organizations and relationships. Technological development appears to advance spontaneously and inevitably, in a manner resembling Darwinian evolution, in so far as only the most ‘appropriate’ innovations survive and only those who adapt to such innovations prosper” (p. 11).

“Most ‘appropriate’” are those technological developments or innovations that have superior performance in terms of functionality, productivity, efficacy, or efficiency; they survive because they crowd out lower-performing alternatives. It is through this process of variation and selective retention that technology development seems to proceed in an autonomous manner and to determine social change (Kline, 2001).

Although the idea of technological determinism has been seriously criticized as naïve and unfounded (Kline, 2001; Vurdubakis, 2012), it does have intuitive appeal. After all, technology is often perceived as something that intrudes into one’s life world, as something developed by others that imposes itself, for better or worse, onto the “social and economic organizations and relationships” (Grint & Woolgar, 1997, p. 11) that are important to individuals, groups, or organizations.

However, for “techno-optimists” and those who develop technology, technology is extremely versatile and full of promise. It entails the possibility to solve almost any problem encountered, if only one works hard enough and receives sufficient financial support. One emblematic example is the success of the 1960s Apollo project in the United States, President John F. Kennedy’s ambition to overtake the Soviet Union in the “space race” by safely sending an astronaut to the Moon before the end of the 1960s. Another emblematic example is the recent success, in terms of speed of development and the degree of protection obtained, of the massive investments in the development of vaccines against COVID-19. Both attest to humanity’s technological abilities. More generally, the belief in the abilities of technology to solve specific problems has extended to the belief that “many of our social problems do admit of technological solutions” (Weinberg, 1966, p. 8) and therefore can be technologically “fixed.”

In this first perspective, technologies are typically seen as morally neutral, as tools that can be developed, utilized, applied, discarded, and put aside at will. Normative appraisal therefore lies in the functionality of technology: It can be more or less effective or efficient in performing what it should do, and there is always the possibility of further improvement. Moreover, the moral significance of technology is assumed to lie with its users and uses, and not with the technology itself. This stance is epitomized by the “guns don’t kill” argument. And if it happens that there are unforeseen, unexpected, or undesired outcomes associated with the use of a particular technology, then it is asserted that the technology in question is insufficiently developed, followed by a plea for its further development or by the adoption of additional technological solutions (“fixes”) to address the problematic outcomes.

However, it has been argued that assertions such as these are shortsighted. Thomas Hughes, for example, argued that technological fixes are “partial, reductionist responses to complex problems. They are not solutions . . . While technological fixes ignore the systematic interactions required to solve a complex problem, they often initiate unintended consequences, as well” (in Rosner, 2004, pp. 208–209). And Morozov (2013) argued that the reliance on technological fixes in Western societies and the belief in the benefits of technological solutions has now entered a stage of exaggeration: “technological solutionism” as the belief in technological fixes in overdrive, the quest to improve everything, even to solve problems “that are not problems at all” (p. 6).

Technology as Value-Laden

This first perspective has been criticized and extended by two additional perspectives. By emphasizing how technology is socially constructed and thus the embodiment of particular values and interests, the first of these two—the second perspective—challenges two core ideas in the technology-as-instrumental perspective: its presumed inevitability (technological determinism) and its belief in functional efficiency. The particular shape that a specific technology obtains is the outcome of a social action—sometimes convoluted, sometimes contested—in which specific cultural preferences, political interests, and societal values have come to dominate other preferences, interests, and values (Bijker et al., 1987; MacKenzie & Wajcman, 1985). Therefore, the second perspective highlights how technology is not a neutral instrument; its presumed efficacy and efficiency is merely a cloak to hide the preferences, interests, and values it has come to embody.

The examples of the Apollo project and the COVID-19 vaccines serve as illustrations not only of the spectacular advances that can be accomplished through development of technology but also of the main claim of the second perspective that technologies are value-laden and that they embody political and other interests; they are significantly influenced by social, cultural, and political factors, interests, and struggles (Bijker et al., 1987; MacKenzie & Wajcman, 1985). This is in contrast to technologies “just” being the outcome of the quest for increased economic and/or functional superiority, as is presumed in the first perspective. Therefore, technologies should not be assumed to be stable entities with fixed and determinate “uses”; rather, all processes of technology design, development, manufacture, implementation, and consumption are socially constructed (Grint & Woolgar, 1997, p. 24).

The social construction of technology involves a number of stages. Bijker (1995) explains these through his famous analysis of the history of the design of bicycles from the penny-farthing to the rear-wheel propelled safety bicycle. Initially, there is flexibility in the interpretation of the technology, allowing multiple stakeholders in its development to advance their interests and interpretations; then, a search or struggle ensues in which multiple stakeholders seek to stabilize the design according to their preferences, values, or interests; finally, closure around a specific form and function of the technology is achieved as one set of preferences, values, or interests emerges as the “winner.” Thus, during these successive stages, technologies come to embody particular values, preferences, and interests. On that account, this second perspective emphasizes how technology development may be contested: It may be subject to social, economic, political, and/or cultural struggles between various stakeholders in its development. In other words, there is nothing inevitable in the creation of technology, and efficiency must be judged in light of particular criteria.

There may, of course, be asymmetries in the ability of various stakeholders to influence technology development, which reinforces the idea that technology has political properties. Winner (1985) argues that “there are two ways in which artifacts [and more broadly: technology] can contain political properties. First are instances in which the invention, design, or arrangement of a specific technical device or system becomes a way of settling an issue in a particular community . . . Second are cases of what can be called inherently political technologies, man-made [sic] systems that appear to require, or to be strongly compatible with, particular kinds of political relationships” (p. 27).

In Winner’s interpretation of the social-constructivist perspective to understanding technology, it is therefore possible to take an “evaluative stance or [adopt] any particular moral or political principles that might help people judge the possibilities that technologies present” (Winner, 1993, p. 371). What is important in this perspective is therefore the recognition—shared with critical management scholarship—that technologies are infused with some values (but not others), advance some interests (but not others), and can be used in the exercise of power (while leaving others powerless, disadvantaged, or disciplined).

In the social-constructivist perspective of understanding technology, it is problematic to normatively appraise technology as such, because it does not have inherent qualities. Instead, normative appraisal of technology is of the values and interests it has come to embody. For example, Winner (1985) argues, “machines, structures, and systems of modern material culture can be accurately judged not only for their contributions of efficiency and productivity, not merely for their positive and negative environmental side effects, but also for the ways in which they can embody specific forms of power and authority” (p. 26, emphasis added).

We agree with Winner’s (1993) argument that because technology embodies values and interests, one should be concerned with the questions of which values and whose interests technology has come to emulate, and how these values and interests play out.

Technology as Relationally Agentic

Whereas the first perspective has been challenged for adopting a technological determinist stance, the second perspective has been criticized for being close to adopting a social determinist position (Klein & Kleinman, 2002). Simultaneously with the development of the second perspective, a third perspective emerged that is less susceptible to the social-determinism critique. This third perspective differs from the second in its adoption of a relational—instead of social-constructivist—perspective and in ascribing agency to materiality and entities (“things”), thereby expanding the circle of entities that are attributed agency to well beyond human beings. The third perspective thus adds yet another dimension to the first and second: It sees technology as relational and agentic. The technological and the social are a duality, each constituting, enabling, and constraining the other. The third perspective is distinctive in insisting not only that technology is socially shaped but also, and importantly so, that the social is technologically shaped. That is, technology is understood to have agency.

In this context, agency refers to the capacity of an entity to be causally effective in the production of events and states of affairs, including how “we,” human beings, think and behave. Entities can be generative of change (“performative”), such as when they make humans do or think in particular ways (Leonardi, 2011; Pickering, 1993), sometimes even operating autonomously and independently from human beings (Johnson & Noorman, 2014). Although the idea of material agency (“that artifacts have, or embody, some level of agency; even if it is very limited or derived in some way”) may sound unusual, it has in fact become “generally accepted” (Introna, 2014, p. 31).

This third perspective includes Actor-Network Theory (ANT; Latour, 2005; Law & Hassard, 1999) and sociomateriality (Leonardi et al., 2012; Orlikowski & Scott, 2008). ANT does not make a fundamental difference between people (“human actants”) and things or objects (“non-human actants”); both are related in dynamic networks of dependence and interaction (“heterogeneous assemblages”) in which they continuously re-assemble the patterns and processes in our lives (Latour, 2005). ANT therefore denies the validity of drawing “hard and fast distinctions between what is social and what is technological . . . the social and the technological already presuppose and contain one another” (Vurdubakis, 2012, p. 475); it claims that “we are the beings that we are through our entanglements with things; we are thoroughly hybrid beings, cyborgs through and through” (Introna, 2013, p. 260).

This central idea of ANT—that not only people but also non-human entities have agency in relation to other entities—is also key in the sociomateriality literature. For example, it has been argued that human and material agencies are “imbricated” (Leonardi, 2011), that is, intertwined, interlocked, inseparable, entangled, and mutually constitutive, such that one facilitates and constrains the other. Therefore, human beings shape their technologies as much as these technologies shape human life (Orlikowski, 2007), including its practices, routines, convictions, values, norms, power relationships, and cultures.

Scholars in the third perspective have long argued that the qualities, properties, identities, and agencies of entities stem from their relationships to other entities. Their argument implies an understanding of agency as fundamentally relational: neither the entity (whether human or non-human) nor its agency can be understood in isolation—the meaning and agency of an entity only emerge in relation to other entities in the dual sense of “obtaining meaning/agency from” and “giving meaning/agency to.” For example, sociomateriality scholar Leonardi (2011) claims: “By themselves, neither human nor material agencies are empirically important. But when they become imbricated—interlocked in particular sequences—they together produce, sustain, or change either routines or technologies” (p. 149). Similarly, ANT scholars Akrich and Latour (1992) argue that relationality refers to the distribution of competences and performances over “assemblages” of human and non-human entities, such that the object of analysis must be the assemblage in which an entity takes part. In short, human and non-human entities “acquire form, attributes, and capabilities through their interpenetration” (Orlikowski & Scott, 2008, pp. 455–456). Pickering points out that assemblages develop in unpredictable ways but nevertheless can be tracked: “No one knows in advance the shape of future machines, but . . . we can track the process of establishing that shape without returning to the humanist position that only human agency is involved in it” (Pickering, 1993, p. 565).

A normative appraisal of technology is not straightforward in this third perspective, because its understanding of technology is ultimately rooted in Heidegger’s (1977) understanding of technology as humanity’s “way of being.” Homo sapiens is a technological being; a being, however, that is inevitably at risk of becoming dominated by its own technology in a process of subjectification (Agamben, 2009; cf. Heidegger, 1977). Heidegger’s “question of technology” is therefore a question of how we exist in the world: how we sociomaterially shape, control, govern, discipline, and exploit the world, which includes ourselves. However, although this process of subjectification is necessarily associated with humanity’s technological way of being, its specific form is not determined, and hence there is the possibility of an alternative (Agamben, 2009; Deleuze & Guattari, 1987). Normative appraisal therefore does not lie in the question of whether there is subjectification (because that is inevitable) but in what kind of subjectification we construct for ourselves. Normative appraisal is thus oriented to identifying the specific mechanisms through which subjectification takes place and to the particular political, economic, or cultural interests they serve, as well as to suggesting and working for alternatives. Koehn (1999) and Martin and Freeman (2004) have attempted to mobilize this kind of technology appraisal in the domain of business ethics. More recently, Moser et al. (2021) offered such an appraisal of artificial intelligence, starting from the vantage point that the “technical” and the “social” are mutually constitutive.

Of course, the third perspective has also been criticized, for example, for its decentering of human agency, for its often inaccessible jargon stemming from an inclination to continental philosophy, and for the impracticality of its proposal that everything is related to everything else. These points of critique, we believe, are less fundamental than those advanced in relation to the first and second perspectives. In a way, the third perspective not only pushes further the idea that technology “enables human beings to control their lives and their environments” (Franssen et al., 2018) but also turns it around to show how technology has come to control our lives.

Method

We undertook two complementary approaches to map how “technology” has been conceptualized and utilized in business and society research: a deductive analysis to identify the relative prevalence of the three perspectives on technology, and an inductive analysis of the meaning of the word “technology.” We restricted our analyses to articles published in BAS, considering the journal to be representative of the field. In August 2020 and in May 2021, we did full-text searches of BAS in the SAGE archive, including online first articles. We opted for a full-text search, because articles prior to 1986 did not contain abstracts and keywords have only been added since 2003. We noticed that some of the articles published in the journal in the 1990s were stored as image-based PDFs. We manually added the PDFs of those articles that we expected to be relevant and made them searchable by transforming them into text-based PDFs.

Deductive Analysis: Relative Prevalence of the Three Perspectives

Considering that the first, technology-as-instrumental perspective is an intuitive, common sense understanding of technology, we infer that there is no need to make it explicit if one adopts this perspective. By contrast, authors adopting either the second or the third perspective would feel obliged to acknowledge and be explicit about their choice. Therefore, in our deductive analysis, we identified the presence of the second and third perspectives. Any article on technology engaging with either the second or the third perspective would necessarily cite one or more of its key authors; the absence thereof would by default imply endorsement of the first perspective. In the background section, we referred to key authors in the second and third perspectives: Bijker, Pinch, Winner, and MacKenzie for the second perspective; Latour, Callon, Law, Orlikowski, and Leonardi for the third perspective. Through our full-text search of articles in BAS (executed in May 2021), using these authors’ names as search terms, we identified only 10 articles that cite one or more of these key authors (see “Findings: Technology in Business and Society Research” section).
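The citation-based screening described above can be illustrated with a minimal sketch. It assumes each article is available as a plain-text string; the function names and the output structure are hypothetical and are not taken from the search tooling actually used. Because surnames such as "Law" and "Winner" are also ordinary words, any hit would presumably still need to be read and confirmed by hand.

```python
import re

# Key authors named in the text for the second and third perspectives.
SECOND_PERSPECTIVE = ("Bijker", "Pinch", "Winner", "MacKenzie")
THIRD_PERSPECTIVE = ("Latour", "Callon", "Law", "Orlikowski", "Leonardi")


def cited_authors(full_text, authors):
    """Return the authors whose surname occurs as a whole, capitalized word."""
    return [a for a in authors if re.search(rf"\b{re.escape(a)}\b", full_text)]


def classify(full_text):
    """Hypothetical helper: flag which perspectives an article may engage with."""
    second = cited_authors(full_text, SECOND_PERSPECTIVE)
    third = cited_authors(full_text, THIRD_PERSPECTIVE)
    return {
        "second": second,
        "third": third,
        # Default rule from the text: citing no key author is read as
        # endorsing the first (instrumental) perspective.
        "default_first": not second and not third,
    }
```

The match is case-sensitive on purpose, so that the common noun "winner" is not counted as a citation of Winner; this is a design assumption of the sketch, not a documented feature of the original search.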

Inductive Analysis: Meaning of Technology

Through our full-text search, executed in August 2020, we identified 520 articles that match the search term “technolog*” (which captures articles mentioning “technology,” “technologies,” and “technological”), downloaded them, and compiled a library of references. Then, we coded what we inferred to be the meaning of the phrase “technolog*” for each appearance of the phrase in all the items in our set. Our coding of what we deemed meaningful appearances of the phrase “technolog*” followed the typical procedures of emergent, open coding in qualitative content analysis, followed by the consolidation thereof through clustering. The coding revealed the presence of “false positives,” for example, when the phrase “technolog*” only appeared in author affiliation, biographical note, or reference list. We removed such false positives, defined as any item in which all appearances of the phrase “technolog*” met one or more of the following conditions:

“technolog*” appears in authors’ affiliation;

“technolog*” appears as a word in the list of references;

“technolog*” appears as a label for an industry category in empirical research;

“technolog*” appears as a part of the name of an organizational entity (company, program, etc.);

“technolog*” appears as a part of the name of a managerial function or role description (such as in “chief technology officer”);

“technolog*” appears as an adjective (such as in “technological advance,” “technological competence,” “technological know-how,” “advanced technological innovations”);

“technolog*” appears in a quote extracted from an earlier publication or from empirical data and the article itself does not deal with, problematize, or use the term “technolog*.”

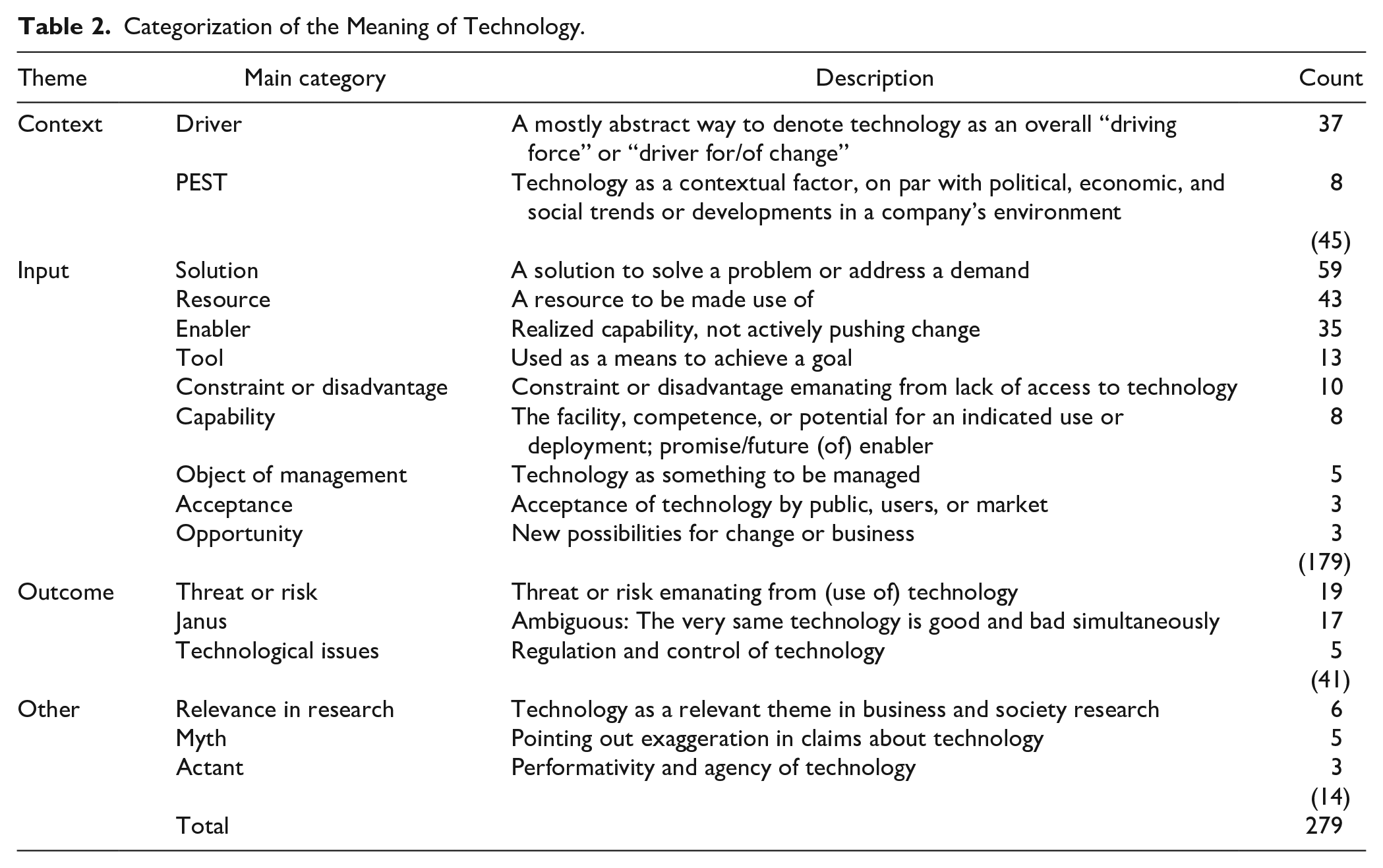

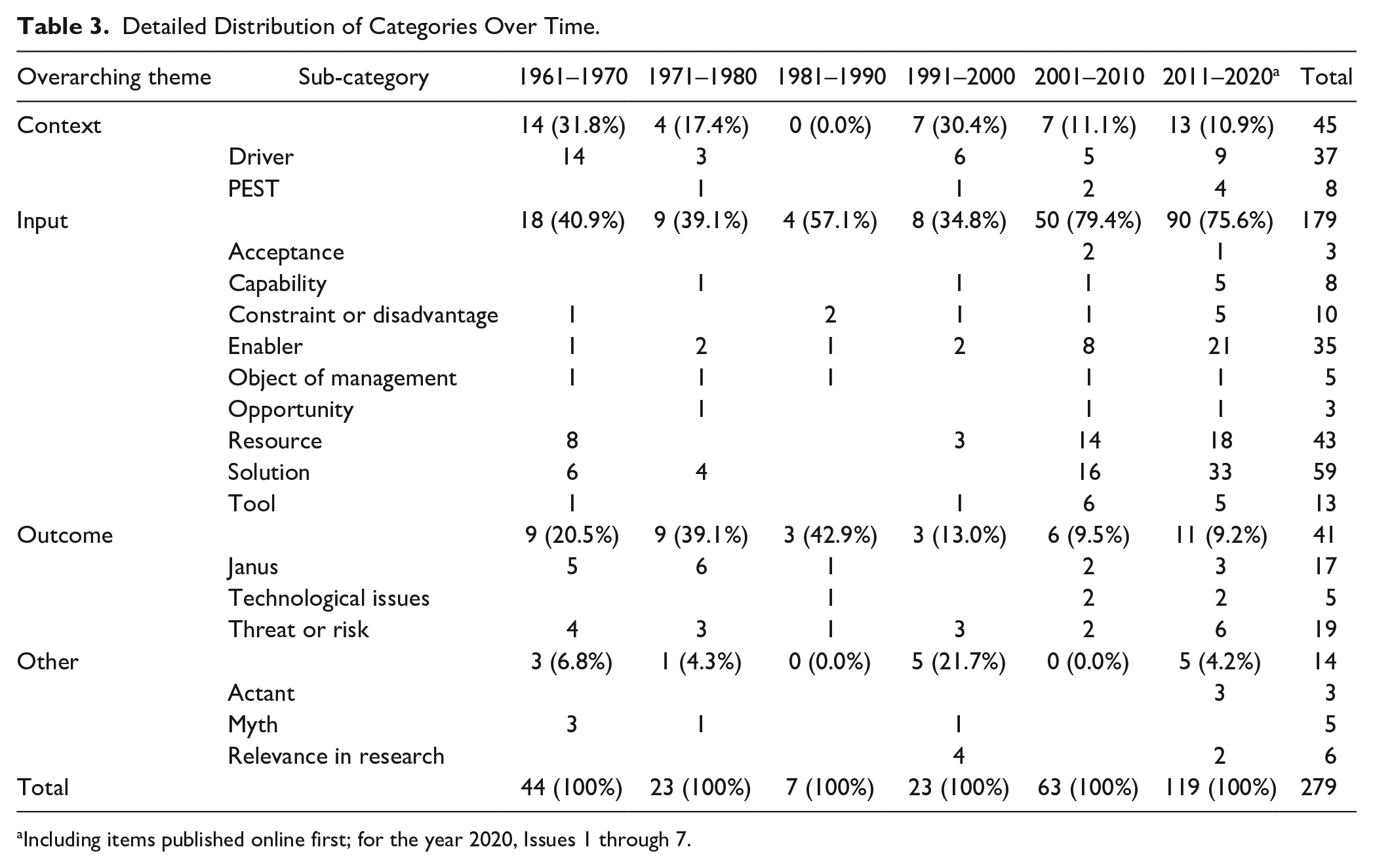

This procedure resulted in a final set of 237 items that form the basis for our exploration. We concluded our coding by identifying 17 main categories and four overarching themes (Table 2). Their distributions over time are included in Table 3.
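The false-positive rule stated above (an item is removed only if all of its appearances of “technolog*” meet at least one exclusion condition) can be sketched as follows. This is an illustrative reconstruction under stated assumptions, not the coding instrument itself: the context labels, the `Appearance` record, and the function names are hypothetical, and the assignment of a context to each appearance is assumed to have been done by hand.

```python
from dataclasses import dataclass

# Illustrative labels for the seven exclusion conditions listed above.
EXCLUDED_CONTEXTS = {
    "affiliation",        # authors' affiliation
    "reference_list",     # word in the list of references
    "industry_label",     # label for an industry category
    "entity_name",        # name of a company, program, etc.
    "role_name",          # e.g., "chief technology officer"
    "adjective",          # e.g., "technological advance"
    "uncommented_quote",  # quote that the article does not engage with
}


@dataclass
class Appearance:
    """One hand-coded occurrence of "technolog*" in an article."""
    snippet: str
    context: str  # one of EXCLUDED_CONTEXTS, or "meaningful"


def is_false_positive(appearances: list) -> bool:
    """An item is a false positive only if every appearance is excluded.

    Every item in the set matched the search term, so the list is
    assumed to contain at least one appearance.
    """
    return all(a.context in EXCLUDED_CONTEXTS for a in appearances)


def final_set(items: dict) -> list:
    """Keep the items that have at least one meaningful appearance."""
    return [item_id for item_id, apps in items.items()
            if not is_false_positive(apps)]
```

The use of `all` reflects the wording of the rule: a single meaningful appearance is enough to retain an item, even if its other appearances fall under the exclusion conditions. Applied to the 520 matched articles, the rule described in the text removed 283 items and retained 237.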

Table 2. Categorization of the Meaning of Technology.

Table 3. Detailed Distribution of Categories Over Time.

Note. Including items published online first; for the year 2020, Issues 1 through 7.

Findings: Technology in Business and Society Research

In this section, we characterize in two steps how technology has been discussed in the study of business and society, relying on the articles in BAS as a proxy for the field. We first examine the relative prevalence of the three perspectives on technology and then explore the meaning of the word “technology.”

Absence of Second and Third Perspectives

We found few articles in BAS that cite key authors in the second and third perspectives: 10 articles mobilize the work of these authors, but only seven of these engage in a meaningful way with technology, and most of them were published in 2019 or later. It seems that a more nuanced, and perhaps more sophisticated and critical, understanding of technology has recently been gaining traction, arguably in relation to the 2019 special issue on digital technology, big data, and the internet (Flyverbom et al., 2019).

While the number of articles mobilizing the second and third perspectives is limited, their content aligns with our description of them. For example, citing Pinch and Bijker (1987), Lin (2019, p. 546) acknowledges the social construction of technology, as “multiple actors are involved in technological paths emerging in real time.” Bled (2010) draws on Callon and Law to argue that “the objects that were mobilized to fill the networks were heterogeneous and could take the form of people, organizations, machines, or scientific findings” (p. 576). Invoking sociomateriality, Yang and Liu (2020) rely on Leonardi to claim that “in different issue areas, the structure and boundaries of hyperlink networks may help the public construct their understanding of social issues and make sense of corporations’ roles” (p. 1085).

Meanings of “Technology” in the First Perspective

Given the near absence of the second and third perspectives, the vast majority of articles that mention “technology” can be considered to subscribe to the first perspective. Table 2 presents our inductively developed codes for the meaning of technology. We differentiate between technology as a context, input, or outcome, and also found articles that fit none of these categories; we labeled the latter cases as other. Table 3 shows the distribution of these categories over time. In the following, we offer references and quotes to illustrate the appearance of the various meanings of technology in the first perspective.

To start with, technology is frequently seen as an important macro-level contextual factor in the business environment, on par with trends in politics, the economy, and society at large. More specifically, technology is seen as a driver of change, regarded in PEST (political, economic, social, and technological) studies as an autonomous force that propels the business environment and to which companies have to adapt. In his plea for a “naturological” perspective, Frederick (1998) discusses “technologizing” as one of five value clusters that are central in understanding corporate–community relations. Technologizing refers to the activity or outcome of efforts to make economizing more effective: “[Technology] is the corporation’s major change-making force. In the language of complexity theory, technology is the corporation’s principal autocatalytic change agent” (p. 372, emphasis in original).

Second, and in line with the view of technology as a driver of change, it is portrayed in several ways as an input, a productive factor that can be used in an instrumental manner, such as in enabling or facilitating something. For example, Etter and colleagues (2018) discuss how internet and social media technologies enable and constrain stakeholders’ ability to construct legitimacy judgments of organizational actions. More frequently, however, technology is seen as a solution to a problem or a response to a demand, as a resource that can be used at will, or as a tool or means to achieve some purpose. It is available to companies, if not readily so, then as a capability or opportunity that needs to be developed or mustered. For example, Malen and Marcus (2019) join a bandwagon when arguing that “business development of novel technologies that reduce environmental pollution is increasingly recognized as an essential step toward addressing contemporary environmental problems” (p. 1600). They continue by examining how the opportunity to capture value affects companies’ innovation efforts. When the cost or risk associated with developing socially desirable technologies is high, governments may step in to stimulate companies to develop technologies that are “sustainable,” “environmentally friendly,” “clean,” or “green.” In this context, Hoppmann (2015) examined “how policy makers can foster technological progress in clean energy technologies to alleviate adverse environmental impacts of the energy sector, reduce the dependence on fossil fuels, and create new jobs in dynamic high-tech industries” (p. 541).

The other side of the coin has been recognized as well: The lack of access to technology is seen as a constraint or disadvantage for further economic development and prosperity (e.g., in developing countries). In the context of investigating company–stakeholder relationships, Driscoll and Crombie (2001) describe how the lack of access to technological resources such as phone lines put a small monastery at a disadvantage and left it relatively powerless in its conflict with a large company. In examples such as these, technology is an input to (business) activity, something that can contribute, albeit under constraints, in a positive or beneficial way to businesses or society at large, to welfare, and/or well-being. As such, it is or must be an object of managerial attention, including in situations in which various relevant stakeholders—the general public, users, or the market—do not readily accept the technology (Heugens, 2002; Purtik & Arenas, 2019).

Third, the issue of the acceptance of technology points to discussions around outcomes of the adoption of technology. Here, typically there is the implicit assumption that outcomes of the adoption of technology will or must be beneficial. However, in a number of cases, technology is portrayed as a threat or a risk, for example, in being associated with the pollution of natural environments or with dependencies on large-scale techno-industrial complexes. Thus, a number of publications argue that technology needs to be regulated and controlled as technological issues emerge. For example, Samuels et al. (1989) argued that the increased use of computerized workstations requires new legislation and regulation to curtail newly emerged occupational health hazards. More recently, Bled (2010) discussed the struggles and negotiations to regulate genetic manipulation in the new biotechnology. The article by Ferns and Amaeshi (2019) can be read as a warning against the belief in technological fixes; it describes how in discussions during the UN Earth Summits, technology was seen as a means to achieve sustainable development, while it was also acknowledged that “anthropogenic attempts to control the environment through science and technology are [. . .] futile, or even dangerous” (p. 1536).

In dealing with outcomes, most publications one-sidedly highlight either the benefits of technology—implicitly so in the majority of cases—or its undesired consequences as threats and risks. Nevertheless, a number of publications depict specific technologies as having both beneficial and undesired consequences. This Janus view of technology was particularly prevalent in the 1960s and 1970s. For example, Peters (1967) asserted that “technology both follows and leads business; it is servant and master alike. It meets the demands of the individual enterprise for a new or improved product or process, and then deserts its employer to serve his competitor” (p. 34). McKenna and Oritt (1981) believe that “it is possible that our industrial system, and its associated technology, has worked so well that its success is simultaneously the cause of a good deal of worker dissatisfaction” (p. 37).

A handful of other studies do not fit the categories of context, input, or outcome. Some of these articles point toward the relevance of technology for business and society research, relative to other topics and themes; we referred to these in the introduction section. Others discuss the myth of technology, pointing out the embellishment or beatification of technology and critiquing the exaggerated and almost sacred belief in technology as the savior of humankind. Yet, these rare examples that relativize and adopt an ironic stance toward technology do not fully break with the first perspective.

Discussion: Toward a Broader Understanding of Technology

Overall, our analysis paints a clear picture. In the field of business and society, the first perspective is overwhelmingly dominant, whereas the second and third perspectives are almost absent. Technology is understood instrumentally, as tools, machines, and entities “that facilitate the adaptation of human collectivities to their environments” (Vurdubakis, 2012, p. 450), as well as the knowledge and skills that are necessary to create, understand, and operate them. Interestingly enough, since the 1990s, there seems to have been an increasing tendency to see technology as input, while interest in technology as context and its outcomes seems to have waned (Table 3). Some articles nevertheless problematize technology’s potential to bring harm and point to risks associated with technology. Yet, many of these more cautious articles endorse the view that such harms and risks can be mitigated or controlled through regulation or further technology development; this view reinforces the instrumentality of the perspective in which technology is seen as something to be developed, controlled, used, regulated, and managed.

There has been very little recognition that technology is socially constructed and/or that it can be relationally agentic. The ideas that technology embodies particular values and interests, and that it is the outcome of social, cultural, and political processes, have been largely neglected. Neither has there been much recognition that technology itself can exercise agency in its relation to the social. Given this state of affairs, we argue in this section for the relevance of a richer understanding of technology in the study of business and society. The time is now, in the age of big data, algorithms, and globally dominant tech firms, for business and society scholarship to develop a more nuanced and comprehensive research agenda that builds on, and takes seriously, the ideas that inform the second and third perspectives. In the following, we therefore project how the second and third perspectives can inform future business and society scholarship such that it can powerfully contribute to solutions for complex problems. We chose to include articles published in BAS in our examples, to show that the second and third perspectives are not entirely alien to the field.

Relevance of the Second Perspective

The main insight from the second perspective is that technology is socially constructed. That is, the form and shape of technologies are significantly influenced by social, cultural, and political processes. In an effort to unpack the processes and mechanisms that underlie the social construction of technology, theorists of institutional work, for example, discuss “technology work” as the “purposeful, reflexive efforts of individuals, collective actors, and networks of actors to shape a technology’s material form, as well as the understandings and practices associated with it” (Lawrence & Phillips, 2019, p. 192). In a similar vein, Jones (1996) offers a critical focus on the political mechanisms in the social construction of technology. He endorses a Marxist-institutionalist perspective in arguing that technology is a central element in capitalist production systems, by fueling productivity growth through labor intensification and enhancing managerial control over the labor process within firms. In Jones’s account, technology is created and used in a manner that serves the capitalists’ interests.

Extending from these arguments, and considering that institutions legitimize certain values and interests (but not others), we can argue that institutions such as logics (Friedland & Alford, 1991), orders of worth (Boltanski & Thévenot, 2006), and value sets (Frederick, 1995, 1998) inform the creation and use of specific technologies and also that in turn these technologies stabilize the institutions that legitimized their creation and use. In short, institutions have a material dimension (Friedland & Arjaliès, 2021). Thus, by conceiving of technology as value-laden and embodying interests, the second perspective allows us to investigate which values and whose interests are embodied in a particular technology, and how they have become embedded—and, of course, also whose values and interests are neglected, disregarded, or suppressed. In this way, a social-constructivist perspective on technology promises to extend descriptive and normative stakeholder theories beyond their focus on the intentions and practices of corporations, toward explaining why and how some stakeholder interests are included in a company’s management, while others remain or are kept marginalized. Examining the social construction of technology may therefore uncover how stakeholder relationships can be manipulated and exploited.

West (2019) offers a compelling example of the latter possibility. She uncovered how algorithms embody corporate interests by showing how technology makers such as Google have commodified user-created data and thus created data capitalism. West (2019) describes “both these material developments and the discursive foundations that underpin them—how new tracking technologies and corporate practices were both created and communicated to the public” (p. 21). Ultimately, socially constructed data capitalism causes information asymmetries, unpaid labor, and social control. Data capitalism is the continuation of a long history of feudal, religious, commercial, and state authorities seeking to categorize and control ordinary people (e.g., state censuses to facilitate recruitment for the military and collection of taxes, or customer segmentation to increase corporate revenues). The “repurposing of cookie technologies” (West, 2019, p. 27) is a case in point. West (2019) recounts how the originally intended functionality of cookies (to enhance user experience by enabling a site to remember a visitor, p. 27) quickly became compromised by commercial interests in spite of concerns about the consequences thereof. The logic of data acquisition by means of cookies and the subsequent data analytics are legitimized “ostensibly for the sake of making information more democratically accessible” (West, 2019, p. 33). However, these arguments hide that “forms of information asymmetry, uncompensated labor, and social control [are] at play when data emerges as a key resource for commercial actors” (Flyverbom et al., 2019, p. 15).

It is also conceivable that technologically mediated stakeholder relationships have implications for companies, in addition to those for stakeholders. To illustrate this possibility, we invite you to reflect on the example of Amazon’s hiring algorithm, developed in 2014 and abandoned a few years later (Dastin, 2018). Amazon, a company that relies heavily on computational technologies, had developed an algorithm that was supposed to take over the task of human resource management professionals in scanning resumes of job applicants. True to the first perspective on technology as neutral and providing solutions to problems, Amazon was apparently unaware of the technology’s bias toward male job candidates: “In effect, Amazon’s system taught itself that male candidates were preferable. It penalized resumes that included the word ‘women’s,’ as in ‘women’s chess club captain.’ And it downgraded graduates of two all-women’s colleges, according to people familiar with the matter” (Dastin, 2018).

If Amazon had recognized that technology is value-laden and embodies interests, its developers might have realized these potential pitfalls and prevented the building up of bias against women candidates in the algorithm. Not only was the system inexcusably discriminatory to women; it is also likely that Amazon suffered some reputational damage. As the above examples of Google and Amazon show, technology can be a mediator in stakeholder relationships with implications for both companies and stakeholders. More generally speaking, we believe that acknowledging the social, cultural, and political factors that imbue technology with values and interests is critical, both to understanding the pervasive power of technology and to finding ways of keeping in check the possibly disastrous consequences thereof.

Relevance of the Third Perspective

The recent material turn in organizational studies has drawn attention to the relationality and material agency of entities. Both ANT and sociomateriality scholars point out how technology is not only socially constructed but also agentic in having the capacity to influence social life. This capacity stems from its embeddedness in “assemblages” (dynamic networks of dependence and interaction between entities: people, things, etc.) and has far-reaching implications for how we (can) shape, control, govern, discipline, and exploit the world and ourselves. We provide here examples from research on sustainability and so-called green information systems.

One example of how technology can exert agency is in research critical of corporate sustainability. Hahn and colleagues (2017) argued that corporate sustainability research is often more in the service of corporate interests than it is advancing sustainability. Tregidga et al. (2018) extend this critique by exposing sustainability discourses as “hegemonic” (Laclau & Mouffe, 2001; that is, as structured and dominated by large corporations and their associations). In particular, they argue that when academic research does not examine how this hegemony has been created and maintained, it is complicit in maintaining it, and therefore call for academic research “to resist and challenge the hegemonic discourse of sustainable development within the corporate context” (p. 294). Hegemony emerges from “the structuring of meanings through discursive practices” (p. 298). It carries with it the possibilities of critique, subversion, and resistance, and thereby the possibility to articulate a counter-hegemony. Important for the discussion here is the notion that the structuring of meanings through discursive practices relies not only on linguistic but also on non-linguistic practices (Tregidga et al., 2018, p. 298), such as measurement technologies.

We argue that the hegemonic structuring of meaning, such as in the case of sustainable development, has a material component in the forms of corporate reporting and other management systems and the measurement technologies associated with them. Such material components are performative in guiding or perhaps even structuring our perception of what counts and what does not. This means that a hegemonic discourse is stabilized in a network (or assemblage) that also comprises corporate reporting, management systems, sensors, spreadsheets, and other technological tools. These, in turn, stimulate the selection of only those corporate measures that are compatible with these tools. Because such technological tools carry with them an air of objectivity and neutrality (boyd & Crawford, 2012), their important role in stabilizing these hegemonic discourses is easily overlooked.

Another example of how technology can exert agency can be found in Carberry et al.’s (2019) discussion of the adoption of green information systems, “which are information systems employed to transform organizations and society into more sustainable entities” and whose “historical emergence . . . [is] a corporate response to increasing demands for sustainability reporting” (p. 1083). The availability of “big data” and the possibility to analyze them through advanced artificially intelligent systems may elevate transparency projects—such as the green information systems that Carberry and colleagues (2019) herald—to a next level and embellish their output with “an aura of truth, objectivity and accuracy” (boyd & Crawford, 2012, p. 663, emphasis added). However, as Hansen and Flyverbom (2015) argue, “access to reality is mediated by algorithmically coded soft and hardware devices, which afford particular kinds of knowledge and insights, but never the full picture of anything” (p. 883). It is in this manner that the material agency of AI technology shapes transparency projects (Albu & Flyverbom, 2019; Hansen & Flyverbom, 2015). Hence, sustainability reporting is no longer “just” a linguistic practice but an algorithmically mediated managerial technology (Boiral & Henri, 2017, p. 308) that exerts agency in shaping how corporate sustainability practices are understood and appraised.

Yet another example of the relational agency of technology can be found in Whelan (2019). By analyzing the Google–copyright relationship, he takes a major step toward the analysis of relationally agentic technologies through a “dispositive analysis.” Combining three such theoretical concepts, Whelan shows how the interconnectedness of human and non-human entities in heterogeneous networks (or assemblages) can both enable and constrain (Foucault), be put to work for specific interests (Gramsci), and be reconstructed to allow for the creation of new possibilities (Deleuze and Guattari). In this way, by “constructively appropriating, rather than simply replicating, all that it takes from Foucault, Deleuze and Guattari, and neo-Gramscians” (Whelan, 2019, p. 64), Whelan “shows that organizations are political from Day 1” (Whelan, 2019, p. 65). In particular, by acting within and upon a heterogeneous network—changing the relationships among its various elements and directing their agencies—organizations such as Google mobilize the agentic capabilities of their technologies to profit from them. This example testifies to the agency of technologies, as well as new ways of exploitation that they facilitate.

Building on the above examples, we conclude that heterogeneous networks (or assemblages) of human and non-human entities dominate through hegemonic discourses how we think and talk about issues relevant for business and society scholars, for example, sustainability or sustainability reporting. In addition, technology shapes how we understand and appraise these issues, for example, where algorithms mediate (and often constrain or restrict) the kind and amount of information that we receive. As a consequence, any research that endorses green, sustainable, environmental, or climate mitigating technologies potentially contributes to maintaining the very thing that it seeks to change by submitting, without or with little reflection, to the hegemonic discourse. Too often, these heterogeneous networks serve the interests and profits of multinational corporations such as Google (Whelan, 2019).

Therefore, we believe that understanding and exposing the agency of technology in stabilizing or maintaining hegemonic discourses is a prerequisite for any viable counter-hegemony. For example, only if we understand how particular interests (profit, control, reputation, power, etc.) are embodied in technologies such as sustainability reporting, can we lay bare the ways in which these technologies contribute to keeping alive particular ways of talking about sustainability. We believe that this is a crucial step to providing answers and solutions to the complex problems that currently only seem to be addressed by green, sustainable, environmental, or climate mitigating technologies—without the technologies, however, bringing about the desired change. In other words, only by examining and exposing the assemblages that keep us from adequately addressing the grand challenges will we be able to work toward a sustainable future.

Conclusion

In spite of a number of articles that are critical of the use and consequences of technology (the outcome theme), we were somewhat surprised by the relative absence of articles reflecting the societal debate about major technologies, such as nuclear energy in the 1970s and 1980s, the new biotechnology (genetic modification) in the 1990s, and nanotechnology in the 2000s. The recent attention to computational technologies may signal a reversal of this trend, although it seems that the increased frequency with which “technology” appears is largely related to a growth in the input theme. “Technology” is relevant for core theories in the business and society field, such as stakeholder theory, social responsibility, and sustainability. We found some examples of articles in BAS pointing toward company–stakeholder relationships being mediated by access to communication technology, yet there is less recognition of how technology, by embodying values and interests and/or by exerting agency, structures company–stakeholder relationships. The same point can be made in relation to investigations of corporate initiatives and activities regarding social responsibility and sustainability. For example, there has been little recognition of, and attention to, questions of how measurement and reporting technologies are agentic parts of assemblages around corporate social responsibility and sustainability, and thus are structuring elements of efforts to stimulate corporate social responsibility and sustainability.

Therefore, the time is now for business and society scholarship to revisit and revamp its conceptualization of, and engagement with, technology. If we are to develop a sustainable and thoughtful engagement with technology in the future, we need to better understand its dimensions and properties: “the problems surrounding the misuse of technology lie in a lack of understanding of technology’s inherently social and moral dimensions” (Buchholz & Rosenthal, 2002, p. 47). Koehn (1999) argued that if we only thought about what technology is, our own thinking would help us to “grasp the forces shaping us” (p. 86). We want to conclude our essay with a plea for research that indeed endorses and mobilizes this kind of thinking and understanding. We claim that core ideas in the second and third perspectives—that technology is value-laden and embodies interests, and that technology is relational and agentic in its embeddedness in assemblages—have much to offer in advancing the kind of thinking and understanding that Buchholz and Rosenthal (2002), as well as Koehn, advocate.

Our plea, that the field of business and society takes a fresh and more sophisticated perspective on technology, builds on the assumption that it is an illusion to pretend that technology is merely an instrumentally functional object in the relationships between business and society (as in the first perspective). One implication of our advancing the second and third perspectives is that the point is not so much to focus on technology per se but instead to scrutinize the role it plays in assemblages. It is therefore imperative to look for where the technology is and to answer some salient questions: Which values and whose interests does a technology embody? How does it stabilize the status quo? How does its material agency operate? And, importantly, how can it be contested, disrupted, and changed? Answering questions such as these requires the adoption of more sophisticated understandings of technology, such as those developed in the second and third perspectives that we discussed in this article.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.