Abstract

Teachers’ assessment practices are invariably related to their knowledge, skills and beliefs, that is, their assessment literacy. While teachers’ assessment literacy continues to gain attention, there is limited empirical research on the relationship between assessment literacy and teachers’ practices and beliefs, particularly among junior secondary school teachers. Drawing from a larger project, this paper employs a synthesised conceptual framework on assessment literacy to interrogate the assessment practices of eight teachers. The findings reveal that teachers’ conceptual knowledge and their conceptions of assessment are influenced by government policies. Teachers acknowledged the importance of effectively interpreting and communicating assessment data in order to support student learning. Finally, the study found that the ways in which teachers meaningfully engaged students in the feedback process created opportunities for building assessment literacy in both teachers and students. This article highlights the gap in how teachers draw upon their conceptual knowledge and how that contextual knowledge allows them to enact assessment within their varied school contexts.

Introduction

Educational policies are increasingly defining what teachers should know, what they should do and how their performance should be evaluated (Acuña, 2022; Daliri-Ngametua & Hardy, 2022). Despite this, there is still limited understanding of how teachers’ assessment literacy, or their knowledge, skills, beliefs and dispositions about assessment, influences their assessment practices, particularly in light of policy initiatives. While there is evidence that federal, state and institutional assessment policies shape teachers’ assessment practices (e.g. Department of Education and Training [DET], 2022a; Murchan, 2018; Verger et al., 2019), there is limited exploration into how teachers’ assessment literacy mediates or is mediated by the influence of policy on practice. This article, therefore, explores the relationships between the socio-political influences of policies, teachers’ assessment literacy and their assessment practices.

Understandably, assessment processes differ across the years of schooling. In the junior secondary years, for example, where external assessments for tertiary education are not yet involved, broad expectations and requirements are seemingly entangled. According to Wyatt-Smith et al. (2010), teachers in junior secondary levels are not required to follow the assessment practices and principles reflected in the senior secondary ‘high-stakes’ environment. They suggest that an assessment barrier exists in which roles, responsibilities and tasks in junior secondary classes differ from those in senior secondary classes, especially when it comes to assessment practices. With significant uncertainty remaining in the research around assessment literacy and how teachers’ assessment practices are negotiated, this article begins by synthesising assessment literacy approaches in the literature, seeking commonality in the various elements that have been presented. Following this, the analysis seeks to contribute to the broader understanding of assessment policies and practices in the junior secondary years, that is, in ‘low-stakes’ secondary contexts. Specifically, it intends to identify how teachers come to understand assessment, through their understanding of the elements of

Over the past two decades, there has been an increased focus on assessment-related research within education and teacher education (e.g. Black & Wiliam, 1998; Looney et al., 2018; Mølstad & Pettersson, 2018; Popham, 2011; Tolgfors, 2018), with some scholars pointing to the importance of teacher assessment literacy in responding to and navigating the accountability demands of policy and in supporting student learning in the classroom (Hailaya et al., 2014; Popham, 2018). To this point, we acknowledge the work of Coombs and DeLuca (2022), who conducted a scoping review of the different assessment constructs in the educational literature and state that ‘assessment literacy has become the dominant construct to describe teachers’ assessment work’ (p. 288). Given that assessment is now discussed in light of its interconnected relationship with curriculum and pedagogy in contemporary schooling (Black & Wiliam, 2018; Penney et al., 2009), it is increasingly discussed by governments, schools and teachers, stressing the need to improve student outcomes (DET, 2022b).

The article provides a synthesis of assessment literacy approaches, highlighting the complex interplay of the various socio-political elements (e.g. personal, social, school, policy) that may affect teachers’ enactment of assessment in practice. Most empirical studies examining assessment literacy have tended to focus on contexts other than Australia (DeLuca et al., 2016; Mertler, 2004; Pastore & Andrade, 2019; Popham, 2011). Furthermore, the scholarly work that has been published within Australian schooling contexts has tended to focus on applying principles of assessment literacy to practice without due consideration of socio-political factors, such as the impact of curriculum and jurisdictional support (e.g. Dinan-Thompson & Penney, 2015; Willis et al., 2013). We acknowledge the contextual differences in curriculum policy and assessment practices that exist in different states and territories within Australia. As discussed later, our research is situated in the Victorian junior ‘low-stakes’ secondary school context, where we examine the assessment perspectives and practices of junior secondary teachers (i.e. teachers teaching Years 7–10). Next, we present a synthesis of the extant literature on assessment literacy that frames our analytical approach. We then describe the methods, data collection and analysis processes. Finally, we discuss the findings, illustrating the complex nature of how assessment is understood and enacted by teachers (their understanding and engagement with assessment literacy) in the complex world of schools. We conclude by examining how research on assessment literacy can continue to develop with support from developing assessment agendas.

Assessment Literacy

Within education policy landscapes, teacher professionalism and accountability are often discussed in light of how specific teacher practices and particular behaviours seek to improve student learning and increase a range of student outcomes (Burroughs et al., 2019; Reddy et al., 2021). Given that teachers have been asked to continually integrate assessment in their practices, it is understandable that researchers within this space have attempted to conceptualise and operationalise teachers’ practices. Coombs and DeLuca (2022) have highlighted multiple constructs for understanding teachers’ practices. These include assessment competency, assessment capability, assessment identity and assessment literacy. For the purposes of our article, and following Hay and Penney (2012) and Taylor (2009), we use the term

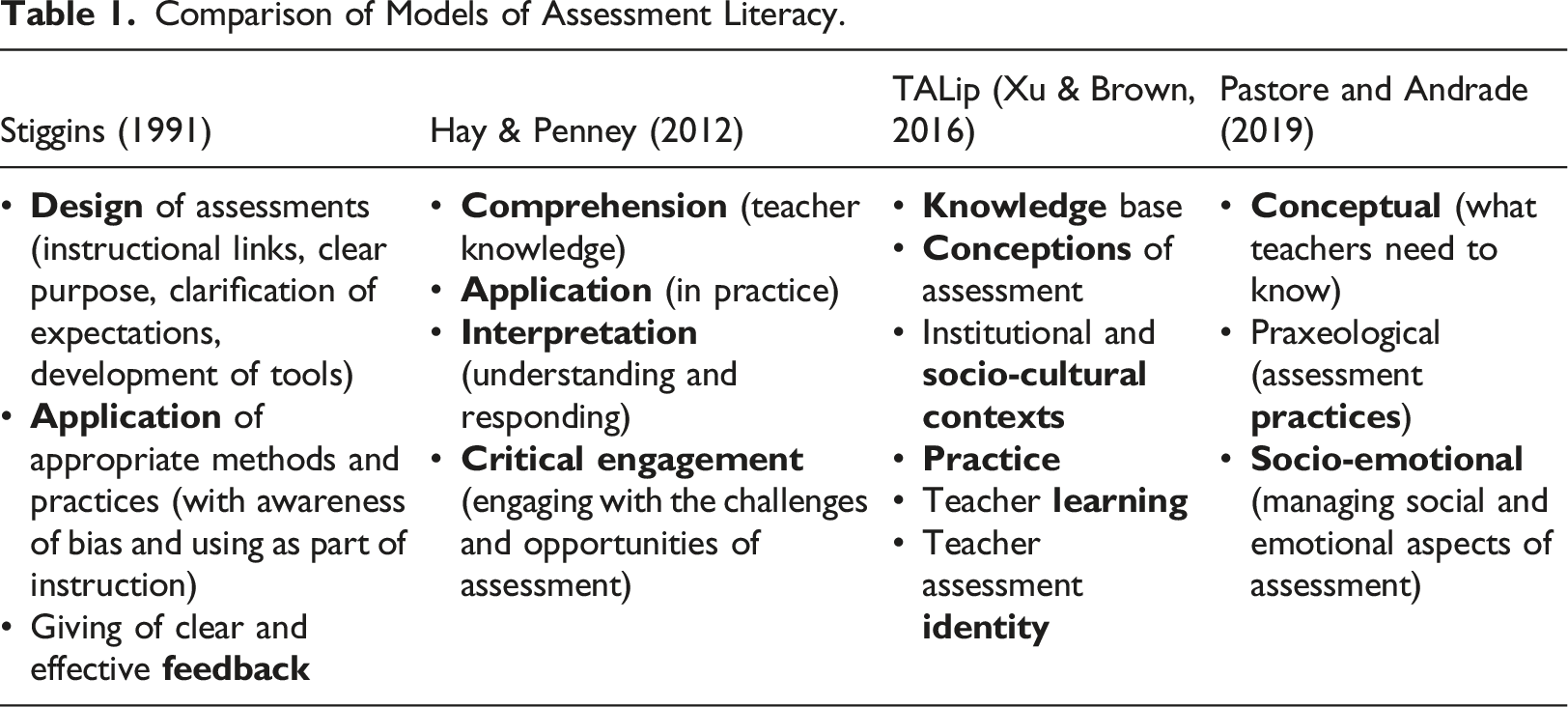

Table 1. Comparison of Models of Assessment Literacy.

Building upon Stiggins’ work, scholars sought to further define what assessment literacy is and what it is not (DeLuca & Klinger, 2010; Dinan-Thompson, 2013; Fullan & Watson, 2000; Hay & Penney, 2012; McMillan, 2001; Mertler, 2004; Shepard, 2000; Willis et al., 2013) with an increasing focus on how social, cultural and contextual factors shape assessment knowledge, behaviours and practices (Fullan & Watson, 2000; Hay & Penney, 2012; Redelius & Hay, 2009; Xu & Brown, 2016). Fullan and Watson (2000) argue that assessment literacy must factor in the personal and social factors which shape teachers’ capacity to ‘(a) to examine and accurately understand student work and performance data, and, correspondingly, (b) to develop classroom, and school plans to alter conditions necessary to achieve better results’ (p. 457).

Considering both the personal and the socio-cultural factors shaping assessment, Hay and Penney (2012) developed a model of assessment literacy consisting of four interrelated elements, which seek to explore how teachers and students critically engage with assessment: (1) (2) (3) (4)

In developing these elements, Hay and Penney (2012) conceptualise assessment literacy by attempting to move the concept from an overly instrumental approach of understanding and reporting to a broader concept whereby teachers are enabled to critically engage with assessment practices. More recently, Xu and Brown (2016) composed a Framework of Teacher Assessment Literacy in Practice (TALiP) as a result of their systematic review of assessment literacy scholarship. Their model, summarised in Table 1, comprises six components of assessment literacy with a focus on acknowledging personal, social and cultural understandings of teachers’ assessment work.

Most recently, Pastore and Andrade’s (2019) three-dimensional model of teacher assessment literacy draws on a socio-constructivist lens to explore the concept. The model nests the contexts of classrooms, schools, systems and nation alongside the inter-related assessment literacy dimensions of conceptual, praxeological and socio-emotional: (1) (2) (3)

In synthesising the salient concepts across the literature, a number of key concepts are worthy of our attention. The comparison of these four different models highlights that conceptual knowledge about designing and applying assessment practices and principles is an important part of assessment literacy. This conceptual knowledge requires a deep and critical understanding of assessment design and implementation, as well as an understanding of how to effectively interpret and provide feedback on assessment data to improve student learning. However, it is the interaction between the conceptual and application dimensions which allows conceptual understanding of assessment to be translated into practice. Our synthesis highlights three important considerations as it applies to our research. Firstly, any interpretation of teachers’ assessment work must embrace the

Instead, our synthesis attempts to scaffold the contributions of the various models presented in Table 1 to capture: (1) what teachers should know about assessment, (2) how they should apply it in their various socio-political contexts and (3) how to critically interpret data to engage students in learning. In alignment with this, the research presented in this article was designed to address the following questions: (1) How do teachers conceptualise and apply assessment practices and principles within junior secondary contexts? (2) How do teachers interpret and communicate assessment data to improve student learning and engagement in junior secondary contexts?

Methodology

Victorian Context

The Australian Curriculum was introduced as a national curriculum in 2014 (Australian Curriculum, Assessment and Reporting Authority [ACARA], 2022). The objective was to improve quality, equity and transparency in the education system. The implementation of the national curriculum was left to individual states and territories (e.g. Victoria, Australian Capital Territory) through their statutory curriculum and school authorities (e.g. Victorian Curriculum and Assessment Authority [VCAA]). Each state and territory made its own decisions pertaining to the content and achievement standards, thus affording schools flexibility in developing an appropriate curriculum to be delivered to students.

The Victorian Curriculum F-10 has two distinctive features: (i) the curriculum is represented as a continuum of learning; and (ii) its structural design. The continuum of learning highlights that levels of achievement relate to the individual student rather than to years of schooling. This enables a focussed approach to learning progression for all students. The structural design highlights the coherent and comprehensive set of content descriptions (what teachers are expected to teach and students are expected to learn) and associated achievement standards (what students are able to understand and do). Assessment practices are therefore understood through the content descriptions and achievement standards, which enable teachers to plan, monitor, assess and report on the learning achievement of every student. These details are important when considering assessment literacy (Brown & Whittle, 2021; VCAA, 2023).

Participants and Data Collection

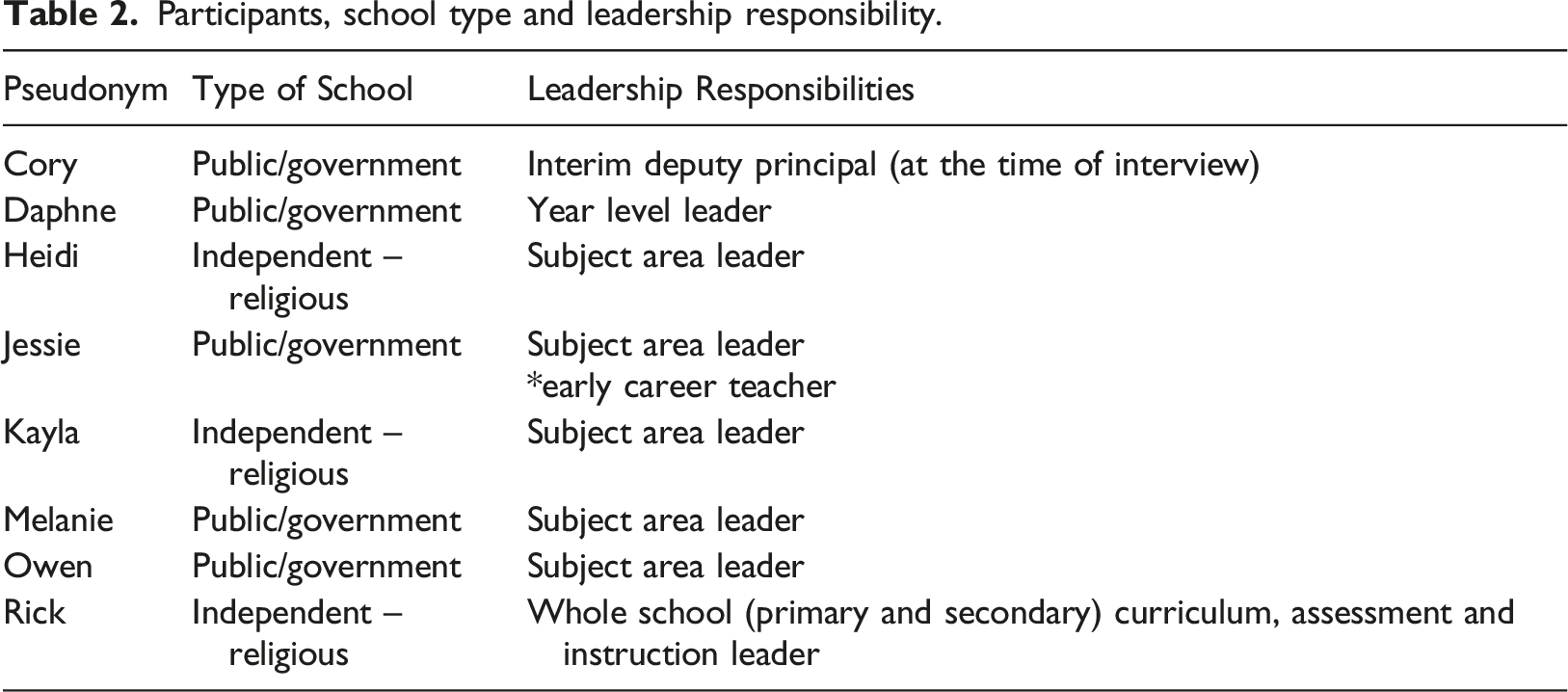

The data presented in this article are part of a larger mixed-method sequential research study that explored how assessment policy is enacted in Victorian junior secondary schools.

Once ethics approval was obtained from Monash University’s Human Research Ethics Committee (project number: 11576), a snowball sampling technique was used to recruit teachers through the social media platform Twitter, where an invitation to participate in an online survey and an interview on Victorian school assessment practices was shared. Snowball sampling is a sampling approach in which one interviewee gives the research team the name of at least one more potential interviewee. In an online space such as Twitter, this occurs when followers of the researchers tag or re-tweet the original post to their own followers. Twenty-two of the 109 survey respondents expressed interest in participating in an interview. Those teachers who agreed to participate in the interview received a US$15 gift certificate for their participation.

All interviews were conducted face-to-face in a private space chosen by the participant. Interviews were recorded and later transcribed. Survey questions covered demographic information (school type, experience, subjects taught), contextual information (school-based assessment policies and processes) and teachers’ personal approaches to assessment (e.g. feedback, grading, evaluative processes).

Interview Guide

The semi-structured interview drew upon an interview guide with 19 open-ended questions. These questions aimed to interrogate institutional and state policies on assessment and their influence on teachers’ individual practices, personal beliefs and conceptions of assessment, and the application of assessment practices and principles. Questions included, for example: How does the Victorian Curriculum Achievement Standards guide how you approach student assessment and how does it inform your reporting practices? Please explain. Based on your experience, what affects teachers’ assessment practices? What practices does your school or do you use to ensure aspects such as validity, consistency (reliability) of results? Explain. Would you say that overall, students receive the marks they deserve? Why? How would you explain that?

Participants, school type and leadership responsibility.

Data Analysis

All interviews with the 22 participants were transcribed verbatim and subjected to theoretical thematic analysis consistent with Braun and Clarke’s (2006) heuristic, utilising inductive-deductive thematic analysis framed by our synthesis of assessment literacy (conceptual, application, critical interpretation and engagement). After an initial reading of the interviews to gain a sense of the assessment dialogue between the researchers and the teachers, the analytical procedure involved several steps: (i) reading and rereading transcripts to code and separate information into themes informed by the elements of assessment literacy, (ii) interpreting and comparing the texts within each theme across the teachers to identify both common and contrasting descriptions between and within them and (iii) reflecting on our synthesis to see how this might best inform our understanding of assessment literacy. After codes were identified inductively (e.g. teacher judgement, parents, communication, feedback, purposes of assessment) and deductively (e.g. conceptual, application and critical interpretation and engagement), salient verbatim quotes were selected and cross-checked to ensure they were reflective of the responses of the 22 participants as a whole. Twenty-one separate codes were identified and subsequently categorised into the key themes of the paper (e.g. policy enactment, interpreting and communicating assessment data, providing feedback). The eight participants presented in this article were identified as being

Results

Overall, the participating teachers’ interview responses reveal that assessment literacy is socially and contextually constructed and that all teachers need to possess assessment literacy. In particular, the analysis of the collected interview data brings to light three key themes that provide insight into how teachers’ assessment literacy is shaped and realised within Victorian school contexts. First, teachers’ conceptual knowledge about assessment, their conceptions of assessment and how they then translate this knowledge into practice are shaped by state and institutional policies. Second, the participating teachers acknowledge the importance of effectively interpreting and communicating assessment data in order to support student learning. Finally, the ways in which teachers meaningfully engage students in the feedback process create opportunities for building assessment literacy in both teachers and students. Given that assessment practices are socio-politically and contextually constructed, the findings reveal how various factors, such as policies, students and parents, influence what teachers know about assessment and how they enact assessment practices. The following section of the article discusses the data collected in relation to these three key themes.

Teachers’ Assessment Literacy is Shaped by Governmental and Institutional Policies

The interview transcripts revealed that the participating teachers, regardless of the type of school they taught in, understood that there were governmental, institutional, and parental factors shaping assessment practices and/or conceptions in their school. The following excerpt from Daphne discusses their school’s policies in and around assessment, thus demonstrating the interplay between government policies and school-based policies concerning assessment:

We do have an assessment policy and we have a common assessment task requirement, and this is something that I introduced when I first came to the school [as a teacher with leadership responsibilities]. My understanding is that most schools do the same. So, we have three common assessment tasks from seven to 10 and we bounced back from what was happening at 11 and 12, but what we’re finding with the seven to 10 assessment is that a lot of it is test-based as opposed to being skill-based, and that’s actually been a real challenge to create something that’s different. So, I don’t have a test for the second half of the year, and I know that I’ve created waves, and we’ve done something different with seven and eight. So yes, the common assessment task has been a challenge to develop… We’ve had a group of interested teachers working together to organise a school wide rubric arrangement, and we’re about to start feeding that out to the faculties. But I think it’ll take a long time for that to actually work. So yes, it is very difficult, and I’ve just started doing an audit on our common assessment tasks but there’s not a lot of information there. (Daphne, government school)

It is clear from the excerpt that institutional and government policies that address the interpretation and enactment of assessment are prioritised as important drivers of assessment practices within the local context (Connelly & Connelly, 2013). Whilst our focus is on assessment literacy and assessment practices in junior secondary schools, it is clear that in Victorian schools, assessment procedures and practices for the senior years (i.e. Years 11 and 12), which are considered high-stakes and are tightly regulated by the Victorian Curriculum and Assessment Authority (2021), are seen by teachers as an ‘ideal’ or appropriate model to enact. The following quotes from Cory and Melanie allude to this.

… we have incredibly tight processes for everything in Year 11 and 12. And moderation is done up there impeccably. The teachers who work in that space work incredibly hard to make sure that they’re doing the right things by the kids. And they’ll moderate, they’ll give really high-quality feedback and they’ll do everything by the book and they’ll do it right. (Cory, government school)

So, yes eleven and twelve is incredibly structured. (Melanie, government school)

While Daphne comments that there is ‘bounce back’, that is, push back from the state-based governance and policy of senior secondary assessment (Years 11 and 12), she discloses a tension between the ‘test-based’ ‘bounce-back’ of senior secondary assessment design and practices and her belief that assessment should be more ‘skill-based’. Daphne’s assessment literacy is shaped by ensuring that her school has common assessment tasks, as ‘most schools do’, while also attempting to introduce assessment tasks that reflect her conceptions of assessment. She believes that assessment in lower secondary school should focus more on assessing skills and competencies than on discrete knowledge that is mainly assessed by tests, but this must be tempered by the ‘bounce-back’ of senior secondary assessment policies. Her ‘audit’ of common assessment tasks allows her to make sense of the design and procedures of these tasks in order to negotiate the relationship between what she knows about these institutional assessments and how assessment is enacted elsewhere (conceptual) and how to apply it in a way that resonates with her own conceptions and beliefs about assessment (application). While she explains that this is a difficult process, she acknowledges the support of other teachers in creating socially and contextually appropriate assessment instruments and procedures.

While Daphne reflects on what she feels assessment should entail, Heather suggests that schools and teachers tend to be ‘slave-ish’ to government assessment policies and, as a result, many teachers’ conceptions and beliefs about assessment are shaped by what the government policies suggest teachers should know and do. Both Daphne’s earlier excerpt about the difficulty of designing and applying assessment instruments and procedures and comments by other teachers suggest that changes in assessment can be difficult. For example, Melanie, who was teaching at a government school, suggested developing a ‘consistent approach’ to assessment across departments and encountered considerable push back. She explained that ‘the science department was mortified that we discussed getting rid of percentages’. A push towards consistency in assessment, which ultimately leads to a more consistent approach to accountability, was the main goal of institutional assessment policies in the schools of the participating teachers. However, the conflicts between teachers’ conceptions of assessment for their discipline and/or their teaching and schools’ desire to have a consistent approach created push back from a number of teachers. This suggests that while government and institutional assessment policies can shape assessment knowledge and conceptions, the push for a consistent, one-size-fits-all approach is difficult and time-consuming, as Daphne can relate, and not all teachers feel that it is achievable in their classrooms.

Assessment Literacy and Interpreting and Communicating Assessment Data

In addition to the tensions between one’s assessment conceptions and institutional or state-government-driven assessment practices, other tensions (Berry, 2007; Brandenburg, 2006; Pierce et al., 2013) exist as schools attempt to communicate about assessment (e.g. results, reports) to parents and teachers. Kayla, who has experience teaching in both independent and government schools in Australia, highlights the lived tension between being accountable to and engaging with governance structures that sit beyond government bureaucracies.

I think it’s a different kind of governance [when working in private schools], not a governmental bureaucratic governance. It might be more a parental and community governance in this situation which have different needs that need to be met. So, I’d say it’s more about ticking boxes in the government system [in government schools] because there’s an accountability there. I’m not saying we’re not accountable but it’s a different form of accountability. (Kayla, independent school)

Kayla suggests that in a private schooling context, the application of assessment practices is not shaped as much by government policies as by parents. This reveals how the socio-political context, for example parents’ positioning in the assessment process, can influence how assessment practices are applied. The exploration of how teachers enact assessment-related processes is a focus within the dimensions of assessment application and critical interpretation and engagement, as teachers’ ability to implement effective assessment practices for student learning is shaped by contextual factors, including parental conceptions of what assessment should look like. Kayla reveals that parents’ responses to feedback on assessment tasks shape how she interprets and communicates assessment data.

If they [my students] don’t understand why they received a mark they will want you to talk to them about it, which is fair enough. And if sometimes parents aren’t very happy with what we’ve done. So, you need to actually be really careful or be very clear what it is – (Kayla, independent school)

Therefore, it is not only about being accountable to government and institutional policies but being accountable to parents. Interestingly, Kayla highlights that teachers must be ‘careful’, especially when parents are not ‘happy with what we’ve done’. This suggests that parents want to know more than just the mark that their children receive but are interested in knowing the processes and procedures that surround assessment. Both Kayla and Melanie contend that teachers must be able to clearly communicate, to both students and parents, what is being assessed and how the assessment process leads to improved student learning.

You have to be really clear about what your expectations were, how they weren’t met and what the student can do to make sure they improve for next time… So, I think that if you’re willing to actually ensure that the kids understand what it is you’re trying to do then I think that’s fine, but that makes you a better teacher because you’re actually very clear about what it is. (Kayla, independent school)

We kept trying to kind of shift people into this concept of a growth model and what that looks like instead of agreeing on sort of a school-wide assessment scale, I suppose, that we can then communicate to parents and get that sort of shared understanding of how we work…. And then also I think, through that, ideally then be able to do so without all of that judgment and be able to speak to parents about growth in a way that we hadn’t previously. (Melanie, government school)

In this scenario, the teachers reported needing to communicate effectively to the student about what they ‘needed to do’ and how to ‘improve for next time’, and in the process this created opportunities to communicate with parents. Teachers are therefore accountable to both students and parents in being able to communicate why students are being assessed, how assessment data are interpreted and what students need to do to improve. The interview data from the participating teachers suggest that this is an area of concern for many teachers. Many teachers struggle to know how to communicate where students are at and how to improve:

I think it’s because we don’t understand the learning continuum…we really don’t have the skill to advise students how to get from one place to another…. (Daphne, government school)

This suggests that there is a disconnect between the first two dimensions of assessment literacy (conceptual and application) and the third dimension, critical interpretation and engagement. Whilst teachers can know about evidence-based practices in designing and implementing assessment tasks in a variety of socio-political contexts, they must also possess competencies that equip them to effectively communicate assessment, and subsequently teaching and learning strategies, to parents and students. They must be able to clearly

Engaging Students in Feedback: The Role of Assessment Literacy for Both Students and Teachers

Communicating assessment data, with a particular focus on providing feedback to students, was discussed frequently among the participating teachers. While many teachers were grappling with how to provide meaningful feedback, others’ comments suggested that in order to improve student outcomes through feedback, they need to better engage students in the process.

Across the eight schools, teachers’ understanding and practice of assessment centred on feedback as an important component of improving student learning. However, the amount, frequency and delivery of feedback are often questioned, suggesting that there is little commonality between teachers or across subject domains. Developing student assessment literacy must become a priority in schools, enacted both through the pedagogical approaches undertaken by teachers and via school-wide support (e.g. school assessment policies, school-wide professional learning) to enact assessment.

I think if I could change something about assessment reporting, I think it would actually be the students’ attitudes to why we do assessment. Because I did a test with my Year 8 class this morning. Now, I will go home, over the next few days I will mark that test. I will write them all a paragraph of feedback, I will give them that feedback, I’ll make it available online to them. I will print it and give it to them on a sheet of paper. But I can guarantee to you that half of those sheets of paper will get scrunched up and thrown in the bin. Now, that may be because we finished that topic and tomorrow we move on, we start something new tomorrow, and so what’s the point of feedback to what we’ve already done? (Cory, government school)

Being able to support students’ understanding of how to respond to feedback requires assessment literacy among students, which can only be developed if teachers possess assessment literacy, particularly in being able to explain what is being assessed (conceptual), use students’ needs as the basis for developing feedback strategies (application) and then provide clear feedback for students to engage with (critical interpretation and engagement). Cory explains, ‘…I’m very open to students supporting and helping each other and everything we do in there is collaborative. And so students will build each other up and they will give each other feedback’. However, this appeared to be the exception rather than the rule.

One of the issues that Cory reveals is that the feedback being given is not always transferable, so receiving specific feedback on one assignment does not necessarily improve performance on future tasks. Despite this, many teachers argued that students did not engage with feedback given because it was not specific and targeted:

We’re aware that for written feedback, the students themselves aren’t doing much with the written feedback and it has little effect on what they are able to do or to change. And also how specific the feedback is, so urging them to write better, or to be more accurate or something like that. Kids need an actual mechanism to be able to do that. So we’ve been grappling with this idea of what’s the most effective way, and efficient way really, of being able to give feedback to kids. We’ve experimented with things like examiner’s reports, but they seem to be more effective for the older students who are able to link the features of their work to examiners’ reports for particular assessment tasks. The younger kids really struggle to do that. They need particular aspects to be pointed out. But what we do is we limit the amount of feedback we give, and it’s linked to the instruction that we’ve just been working on. (Rick, independent school)

...if kids are producing a piece of writing, they’ll receive just one piece of written feedback that relates to the things that we’ve been working on prior to the assessment task. Whatever it is that we’re trying to demonstrate or include in our work, that’s the feedback that we’re trying to give to the kids. And the reason for that is, if we give feedback that the kids will never use, or don’t have the opportunity to use, or isn’t linked to anything that we’ve actually been teaching them to do, then it’s useless. It’s a waste of everybody’s time providing that written information. (Cory, government school)

But also, again, it just constantly felt like you were going in and just so much of your time was spent on giving feedback to students. And trying to get better at giving feedback to students and making sure that I was having conversations with students. (Melanie, government school)

The three teachers acknowledged that teachers need to provide feedback that is systematic yet purposeful. Rick and Cory both argue that teachers need to focus on key aspects that are relevant to what is being taught and to the specific context of learning. In other words, there is no need to provide feedback on everything; rather, feedback should be targeted and specific. This, however, complicates school-wide decisions about feedback practices, as targeted and specific feedback does not fit well with one-size-fits-all approaches that ensure teachers are held accountable. The participating teachers, more generally, argue that teachers must possess assessment literacy to know what needs to be assessed and why, while responding to the specific needs of the curriculum and the students. Rick and Melanie also point out that feedback must be effective and efficient, which is a difficult balancing act in schools. With growing discussion of increasing teacher workloads, efficiency may take precedence over effectiveness.

Discussion

To understand and interrogate a sample of Victorian secondary school teachers’ assessment literacy practices within ‘low-stakes’ secondary contexts (Years 7–10), this study synthesised the literature on assessment literacy, highlighting three important dimensions: conceptual, application, and critical interpretation and engagement (Hay & Penney, 2012; Pastore & Andrade, 2019; Stiggins, 1991; Xu & Brown, 2016). This allowed for a critical examination of what these teacher participants know (conceptual), how they apply their knowledge (application) and how they critically engage with assessment as a process and with the issues that arise from that engagement (critical interpretation and engagement). The findings revealed that teachers’ assessment beliefs (e.g. conceptions about assessment) and practices are shaped by both state and institutional policies. In addition, this study revealed that the participating teachers recognised the importance of how assessment data are interpreted and communicated, both in how they were communicated to parents and students in different school settings and in how teachers engaged students in the feedback process. Despite arguments that feedback needs to be specific and targeted, teachers continue to have limited conceptual knowledge about how to make feedback meaningful so that students actively engage with it and it contributes to improved performance. Feedback is highlighted both in the literature (Assessment Reform Group, 2002; Wiliam, 2018) and in government policies (DET, 2020) as paramount to the success of teaching and learning and, ultimately, student outcomes. We argue that this is the role of assessment literacy, both for students and teachers, as they acknowledge what they know about assessment, how it is applied and ways in which to effectively engage. This study highlights the complex interactions between government policy, school contexts, students and parents in how teachers develop their knowledge about assessment and how they enact their assessment practices in the classroom.

The findings provide several key insights into teachers’ assessment literacy and assessment practices in junior secondary schools. Firstly, despite the teachers in this study demonstrating conceptual understanding of assessment policies and practices, the data analysis revealed that their conceptual knowledge was heavily influenced by federal, state and institutional policy documents and initiatives (see also Murchan, 2018; Verger et al., 2019).

Similar to findings by Connelly and Connelly (2013), the language of assessment found in key policy documents (e.g. formative tasks, diagnostic assessment, validity, reliability, provision of feedback) was evident in the participants’ explanations of their understanding of assessment and of how this knowledge was then applied in practice. This is unsurprising given the investment by various government bodies in developing teacher standards and/or formal policies within state (e.g. DET, 2020; 2022a; 2022b) and national jurisdictions (e.g. ACARA, 2022; Australian Institute for Teaching and School Leadership, 2022). Such policy documents exemplify the importance of assessment and its role in the development of student learning and broader student outcomes, defining what teachers should know and what they should do (Acuña, 2022; Daliri-Ngametua & Hardy, 2022). This is notable given that Wyatt-Smith et al. (2010) argued that junior secondary levels are traditionally not required to follow the same high-stakes assessment practices and principles, which are shaped by a range of accountability measures, as senior secondary levels. The findings in this article suggest that policy still has a strong impact on junior secondary teachers, even though junior secondary teaching is lower stakes and allows more flexibility in teaching approaches than senior secondary teaching.

Another key finding exposes the complexity and diversity in how assessment is planned, practised and engaged with for a range of purposes and in varied contexts. The participating teachers’ application of their assessment knowledge was influenced by their negotiation of the social, cultural and contextual factors shaping their behaviours and practices (Fullan & Watson, 2000; Hay & Penney, 2012; Redelius & Hay, 2009; Xu & Brown, 2016). The schooling contexts represented in this article reflect different types of schooling (e.g. government vs. independent) and teacher participants who were positioned in various leadership roles. The participating teachers had to negotiate the social, cultural and contextual factors that shaped their school, while also navigating their positionality as both teacher and school leader. Ball (2012) acknowledges that teachers’ work is influenced by situational, professional, material and external dimensions, thus leading to different readings, interpretations and enactments of their assessment practices. This study reveals that teachers’ interpretations and enactments of assessment policy are influenced by a range of factors. These may include external factors such as national/state curriculum and/or professional standards. Alternatively, at a local level, school/department/learning area policies and/or social practices related to assessment may impact this interpretation and enactment of assessment. Furthermore, while teachers’ practices may be shaped by contextual factors, this study exposes the need to explore how teachers’

Limitations

One limitation of this study is that it did not attempt to untether the assessment conceptions and practices expected of teachers institutionally from their own personal beliefs and conceptions. Looney et al. (2018) suggest that teacher beliefs and assessment identities are also key components of assessment literacy, and these were not adequately explored in this article. Looney and colleagues argue that teachers’ assessment identities, grounded in their personal conceptions of assessment, work alongside their understanding and use of assessment as defined and enacted by their local school context.

Another limitation is that data used in this study came from only one state of Australia. Future research should consider the effects of education policy and its implications in other contexts around the country. Another key area for future research is exploring how other social actors, such as teacher colleagues, mediate assessment knowledge, practice and engagement. This might provide insight into how teachers’ assessment literacy can be developed and improved within schooling contexts.

Conclusion

The findings from this study suggest that the junior secondary school teachers interviewed possessed superficial attitudes and understandings towards being critical in their interpretation of assessment data and in how they engaged students in the assessment process. For example, they could articulate the importance of policies impacting governance across classes, subjects and schools, but also accepted that their procedures and practices were focussed on their local contexts. Like Dinan-Thompson and Penney (2015), our findings also demonstrated that while teachers possessed some sophisticated understandings about the roles, purposes and enactments of assessment, they reported being unable to act in critical discussions surrounding assessment. We argue that the opportunity for teachers to be critical is clearly related to their values, beliefs and expectations (Hay & Penney, 2012; Looney et al., 2018). There is some evidence within the teachers’ quotes of a degree of reflexivity about assessment policies and assessment processes both within and outside the local contexts. However, within their responses, teachers were less prepared to share or highlight their ‘…awareness of the unavoidable and disproportionate distribution of power through the process of assessment’ (Hay & Penney, 2012, p. 76). We acknowledge that critical interpretation and engagement as an inherent component of assessment literacy is situated within and sometimes beyond local contexts. While the teachers expressed the importance of engaging both students and parents in the assessment process and providing opportunities to critically reflect on and engage with assessment data with students and parents, the data suggest that there is still considerable uncertainty about how to go about this systematically and efficiently. The ongoing development of critical interpretation and engagement with assessment must be enabled by teacher educators, researchers and school leaders. In doing so, critical engagement in assessment can be advanced not only through appropriate policy agendas, but also by ‘policy actors’ (Barnes, 2021; Hickey et al., 2022) who acknowledge the opportunities and challenges for a deeper critical engagement in assessment and how this can subsequently lead to improved student learning.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.