Abstract

It is well known that learners using intelligent learning environments make different use of the feedback provided by the intelligent learning environment and exhibit different patterns of behaviour. Traditional approaches to measuring such behaviour have focused on observational methods, think-aloud protocols, ratings and log data. More recently, the field of educational neuroscience has placed greater emphasis on real-time measures using eye tracking, electroencephalogram and functional magnetic resonance imaging. Our work fits into the latter vein. Drawing on a literature review from cognitive psychology, neuroscience and education, we describe our Learner Processing of Feedback in Intelligent Learning Environments model of how learners process feedback. We also present findings from a pilot study as a preliminary test of the model. Seventeen learners participated in an experiment using the intelligent learning environment known as Crystal Island. A range of data was collected, including a pre-test measuring prior knowledge, think-alouds, log data, video recordings, biometrics and post-task questionnaires. We discuss these findings and steps forward to further validate the model using physiological measures.

Keywords

Introduction

The Science of Learning Research Centre (SLRC) is a special research initiative of the Australian Research Council that supports the cross-disciplinary study of learning, bringing together researchers from education, neuroscience and cognitive psychology. There are three learning strands, with the current work falling under the umbrella of ‘measuring learning’. Experimental psychologists working in the area of learning traditionally use behavioural measures such as response times or response accuracy. In recent years, however, such measures have been complemented by measures of brain function, including electroencephalography (EEG) and event-related potentials (ERPs), as well as functional magnetic resonance imaging (fMRI). Cognitive neuroscience also aims to explore the neural bases of cognitive and behavioural phenomena using brain measurement. However, linking psychological theories of learning with neuroscientific data is quite different to the action research that characterises educational pedagogy and classroom practice (Petitto & Dunbar, 2004). As such, the need for inter-disciplinary collaboration, for a new science of learning, is more important than ever.

The current paper focuses on how learners process feedback in intelligent learning environments (ILEs). An ILE is a computer-based learning system that uses principles of artificial intelligence in education to track a learner’s progress and to intervene with help or hints of different levels when the student needs assistance. A typical ILE can flag an error in red, provide written hints on the screen and, as a final recourse, deliver a ‘bottom-out hint’ that provides the right answer. To date there has been no investigation into how learners use different types of feedback to complete such tasks.

Twenty-first century advances in computer-based learning have run concurrently with extensive research addressing different feedback types within diverse classroom and laboratory settings. Examples include the timing of feedback (Metcalfe, Kornell, & Finn, 2009), use of help functions (Aleven & Koedinger, 2000), use of formative feedback (Shute, 2008), scaffolded hint levels (Goldin, Koedinger, & Aleven, 2012) and corrective feedback in second language learning (Goo, 2012). While much research has focused on when an ILE should intervene as a learner works through a task (and what level of assistance to provide), much less attention has been paid to how learners actually process the feedback delivered by the system, and how differences among learners may influence that processing. This paper addresses that gap. We also discuss the challenges encountered in conducting research that brings together methods from education, cognitive psychology and neuroscience to investigate questions about learning that can inform classroom practice.

Learner processing of feedback in intelligent learning environment (LP-FILE) model

ILEs monitor a student’s progress through learning tasks and also determine when they have made an error or appear to be floundering. At that point the system may provide feedback or the offer of help, which is available at the student’s request. The help typically flags the error, states what the student should do next and provides a rationale in terms of underlying problem-solving principles. It is assumed that feedback will help students to gain an understanding of key concepts and principles (Aleven, 2013; Anderson, 1993).

A substantial body of research over the last 60 years addresses the relationship between feedback and learning (see Mory (2004) for a historical review of the literature). The quantity of research addressing different aspects of feedback (e.g. timing, help support and ability level) within diverse classroom and laboratory settings has also increased. For example, the emergence of computer-based learning (and theoretical discussions of feedback) placed an emphasis on computational models of self-regulated (Butler & Winne, 1995; Fischer & Mandl, 1988) and reflective (Collins & Brown, 1988) learning. Self-regulated learning (SRL) describes a cognitive operation that involves an internal monitoring process. Learners are aware of their own knowledge, motivational aspirations and ways of thinking. Recognising the need for help is a metacognitive skill that requires students to monitor their own progress and understanding (Aleven & Koedinger, 2000, p. 293).

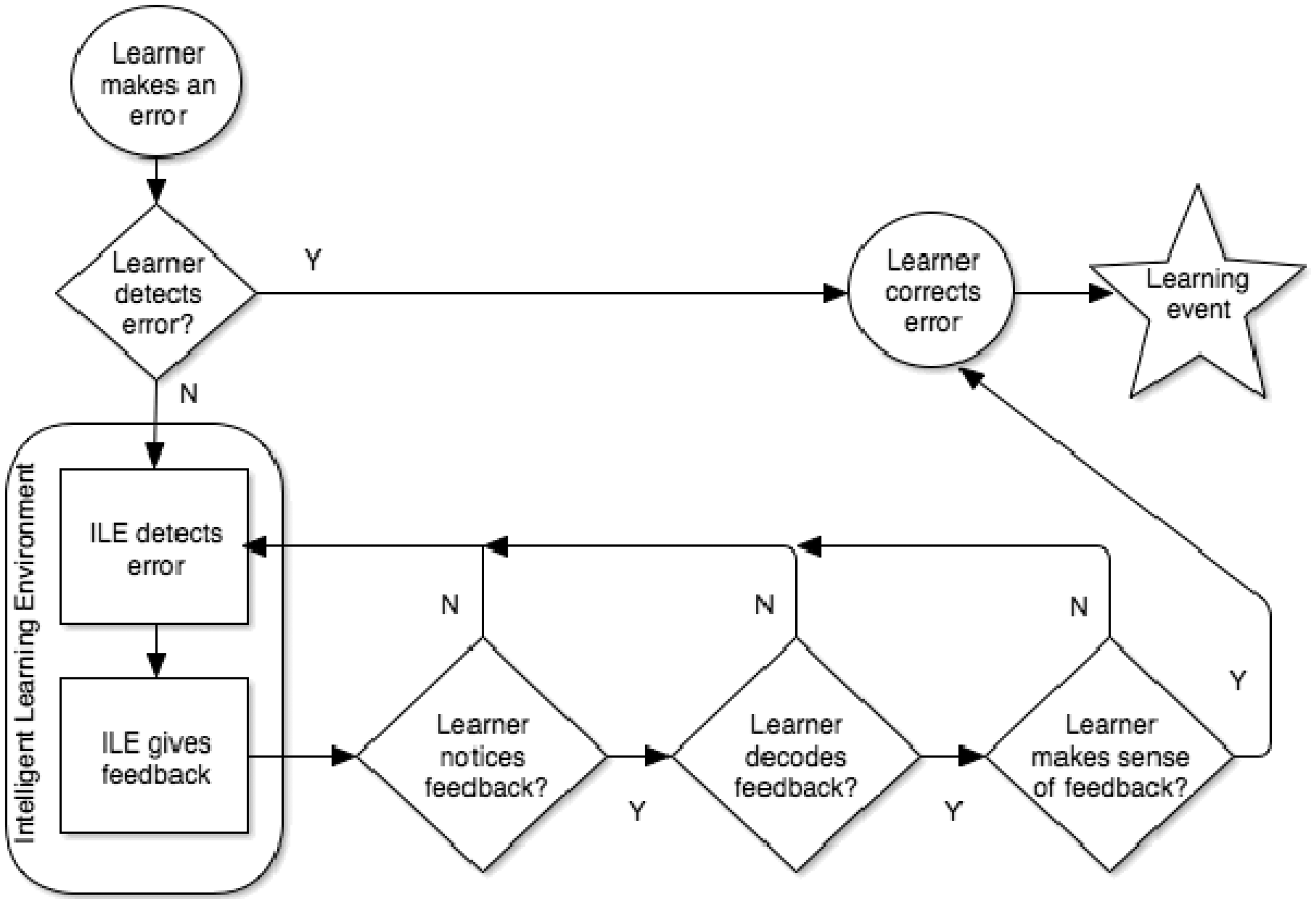

We present the LP-FILE model, as previously proposed in Timms, DeVelle and Schwanter (2015), and provide a brief description of how it works. The LP-FILE model, shown in Figure 1, is designed with the key intention of capturing how learners notice, decode and make sense of feedback delivered within a typical ILE.

The LP-FILE model.

The process represented in Figure 1 begins at the top left with the circle labelled Learner makes an error. In this step, an error is made following the actions of the learner. An ‘error’ can be a mistake (such as entering the wrong value in a math problem or choosing an incorrect option) or a deviation from what the ILE judges as an optimal path for learning. After making an error, a decision point in the model is reached, namely Learner detects error? If the learner detects his/her own error they can then correct it, unaided by the ILE’s assistance system (Learner corrects error), and still learn from their internal monitoring system. This process can also represent what we call a learning event. The model hypothesises that such an event does not guarantee permanent learning; however, it may contribute towards long-term learning. The accumulation of such learning events reinforces neural pathways during the encoding of long-term memory that allows the brain to later retrieve the knowledge or skills gained.

The ILE is, of course, tracking the learner’s progress, and if the learner does not detect that he/she has made an error (Learner detects an error? = N) we can trace a path down the left side of the model, where the ILE’s help system comes into play. When the learner makes an error without realising, the ILE will detect the error (ILE detects ‘error’) and provide feedback to the learner (ILE gives feedback). Typical feedback upon initial error detection is ‘flagging’, usually by highlighting the written error in red. At this point, for the feedback to have any effect, the learner must notice it (Learner attends to feedback?). Assuming the learner has noticed the feedback, he/she must then decode the message (Student decodes feedback?). By decoding we mean extracting the message from the medium. For example, beyond flagging of errors, typical ILEs use written text in pop-up boxes to deliver hints. Decoding a written hint would be the act of reading it, and decoding an aural hint the act of listening to the message. After the learner has decoded the feedback message, they must then make sense of it within the context of the learning task (Student makes sense of feedback?). In other words, the student must work out what the message means and apply it to the problem at hand. Assuming the learner can do this, he/she will correct the error (Learner corrects error) and a learning event takes place (Learning event).

The LP-FILE model also takes account of individual differences (ID) among learners. For example, there are three decision points where feedback may fail to achieve the intended effect. At the first point (Learner notices feedback), the learner may carry on, regardless of the feedback. The usual result here is that the learner remains in the feedback loop and does not reach a learning event end point. To work around this the ILE may increase the level of help, such as providing a next level hint. The second point at which the learner may remain within the feedback loop is the decoding stage (Learner decodes feedback). Some learners may struggle with decoding the message from the medium (namely reading) due to poor reading skills. Third, the learner may notice and decode the feedback but be unable to make sense of it within the context of the task. One factor that can influence this is the learner’s prior knowledge in the domain. Existing research suggests that higher ability learners do better than lower ability learners within computer-mediated environments that allow for more learner control (Recker & Pirolli, 1992). Also, students with higher ability have been shown to be better at using help after errors, compared to their lower ability peers (Wood & Wood, 1999). Another factor might be low working memory (WM) capacity, namely the inability to hold several pieces of information simultaneously before moving to the next step (Learner makes sense of feedback) in the task (Carpenter & Just, 1989). A learner who fails to make sense of the feedback in the context of the task would remain in the feedback loop.

The model also posits that two learners can make the same kind of error (and receive the same initial feedback), but due to ability, ID and/or WM capacity they may process the feedback in different ways.
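The path through the model described above can be sketched in code. The following is only an illustrative sketch, not an implementation used in the study: the `Learner` flags, the `trace_lp_file` function and the default of three hint levels are hypothetical names and assumptions introduced here, and the sketch further assumes that a learner's ability to notice, decode and make sense of feedback stays the same across hint levels.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Learner:
    """Hypothetical per-error flags. In a real study these states are not
    observed directly and would have to be inferred from data."""
    detects_own_error: bool
    notices_feedback: bool
    decodes_feedback: bool
    makes_sense_of_feedback: bool


def trace_lp_file(learner: Learner, max_hint_levels: int = 3) -> list[str]:
    """Return the sequence of LP-FILE steps visited after a single error."""
    path = ["Learner makes an error"]

    # Self-detected errors are corrected without help from the ILE.
    if learner.detects_own_error:
        return path + ["Learner corrects error", "Learning event"]

    path.append("ILE detects error")
    for level in range(1, max_hint_levels + 1):
        path.append(f"ILE gives feedback (level {level})")
        # Each failed decision point sends the learner back round the loop,
        # where the ILE may escalate to the next level of help.
        if not learner.notices_feedback:
            continue
        path.append("Learner notices feedback")
        if not learner.decodes_feedback:
            continue
        path.append("Learner decodes feedback")
        if not learner.makes_sense_of_feedback:
            continue
        path.append("Learner makes sense of feedback")
        return path + ["Learner corrects error", "Learning event"]

    # Help exhausted without the learner reaching a learning event.
    path.append("Learner remains in feedback loop")
    return path
```

Two learners who make the same error can then be compared by their traces: for instance, `trace_lp_file(Learner(False, True, False, True))` never gets past the decoding step, whereas `trace_lp_file(Learner(False, True, True, True))` ends in a learning event after the first level of feedback.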

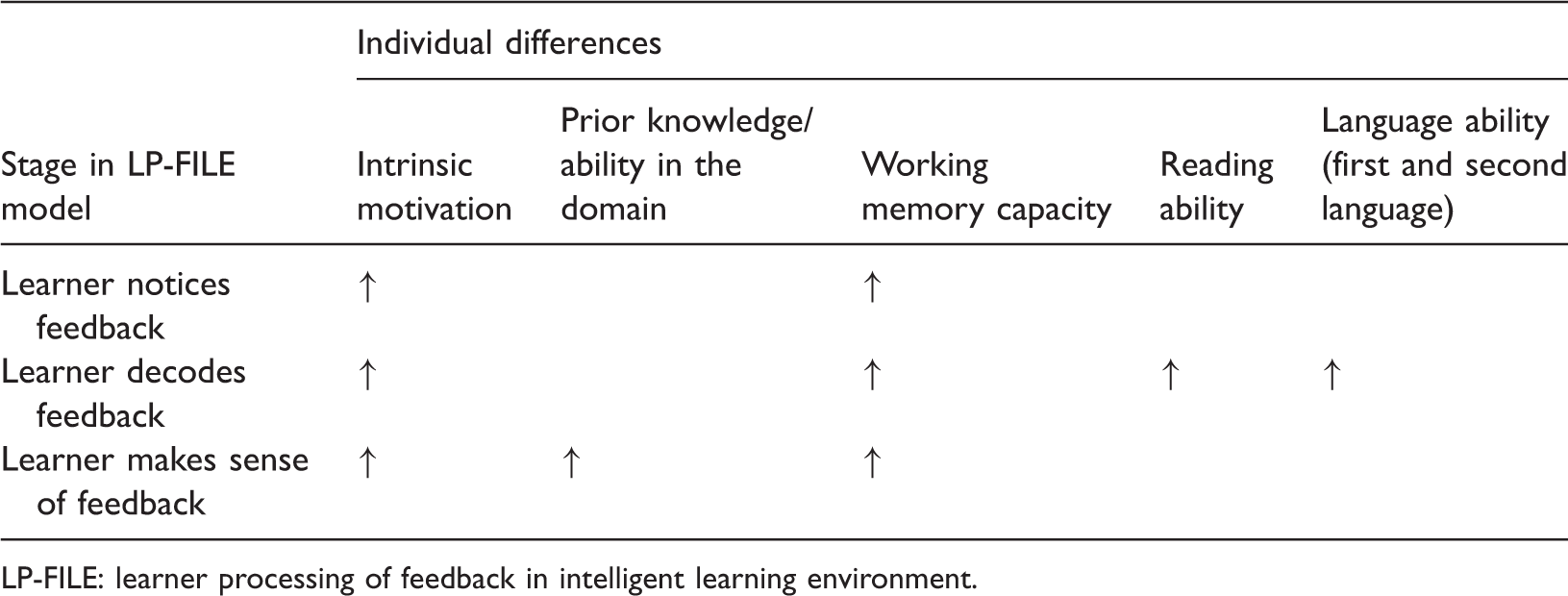

Factors that influence a learner’s processing of feedback in the LP-FILE model

Shute’s (2008) recent review of formative feedback identifies key features that most effectively promote learning. Formative feedback is defined as the communication of information to a learner, with the intention of modifying their behaviour to improve the learning process (Shute, 2008, p. 176). Some of the influencing factors relate to the feedback itself (e.g. feedback type and timing) while others relate to the learner (e.g. ability level and goal orientation). The outcome of her review, however, shows an inconsistency in findings across studies. Shute (2008, p. 176) suggests that these inconsistencies are due to IDs among learners and reflect motivational expectations such as intrinsic motivation, metacognitive skills and academic achievement. Similarly, Goldin et al.’s (2012, p. 6) work on the use of different hint levels found that students are equipped with disparate learning skills, and therefore approach information within a hint in contrasting ways. For example, some may be proficient at applying unknown principles, but poor at identifying obvious problem features. It is these IDs among learners that we posit will influence how different learners process feedback as represented in the stages of the LP-FILE model. Differences in intrinsic motivation may influence the whole learning process, including the learner’s processing of feedback. Evidence from other studies shows that learners might differ on this aspect. A recent review by Aleven et al. (2016) on the effects of hints in ILEs concludes that while providing principle-based hints may help learning of concepts and procedural knowledge, for students to benefit they must ‘self-explain’ the feedback offered and be able to make sense of it. This represents a shift in their thinking about hints (Aleven, McLaren, & Koedinger, 2006) which did not focus on the sense making that needs to happen in the learner’s processing of the feedback. 
The LP-FILE model makes this explicit in the Learner makes sense of the feedback step.

Other researchers have identified IDs that would influence the Learner decodes feedback step of the LP-FILE model. These include differences in reading skill (Carpenter & Just, 1989) and language skills (first or second language). As much of the feedback in current ILEs is provided in the form of text, some of which can be complex, a learner’s reading and language skills can strongly influence his/her ability to decode the feedback. If reading and language skills are insufficient to decode and extract the meaning from the feedback message, the learner may fail to understand the feedback and the attempt to decode it may add cognitive load to the processing.

Another factor that research has shown to affect a learner’s processing of feedback is WM capacity, which refers to the ability to store and process information simultaneously in real time (Andrews, Birney, & Halford, 2006; Harrington & Sawyer, 1992). In the case of an ILE, the learner can be simultaneously exposed to sounds, images, feedback and comprehension tasks while navigating around a game-like environment. They must actively process information in real time and draw from prior knowledge in long-term memory (Mayer, 2014, p. 134). However, a learner has limited WM capacity: how limited will depend on a number of factors related to programme design and learner ID just described. If a learner has a low WM capacity, it could influence the Learner decodes the feedback step of the LP-FILE model. The learner’s ability to decode the feedback message would require allocation of WM to that task when other information about the task is also being held in WM. Therefore, limits on a learner’s WM would also limit his/her ability to decode the feedback.

During the Learner makes sense of the feedback step, the learner has already noticed and decoded the feedback message and now tries to make sense of that information. This process appears to be influenced by the learner’s prior ability in the domain of instruction. Several studies have investigated this. Mason and Bruning (2001) reviewed the literature on feedback and computer-based instruction to further investigate the role of student ability. The authors then developed a theoretical framework that included student achievement levels, task level, timing of feedback, type of feedback and prior knowledge. The authors showed that students with low achievement levels performed better on both simple and complex tasks when feedback was immediate. However, students with high achievement levels performed better with delayed feedback, particularly on complex tasks. Also, a data mining study of log data from the Geometry Cognitive Tutor by Roll, Baker, Aleven, and Koedinger (2014) showed that students with a medium level of domain skills benefitted from hints. This suggests that successful use of feedback is, in some way, mediated by student ability.

How individual differences might influence three stages of the LP-FILE model.

LP-FILE: learner processing of feedback in intelligent learning environment.

In the next section, we discuss how the LP-FILE model might be validated.

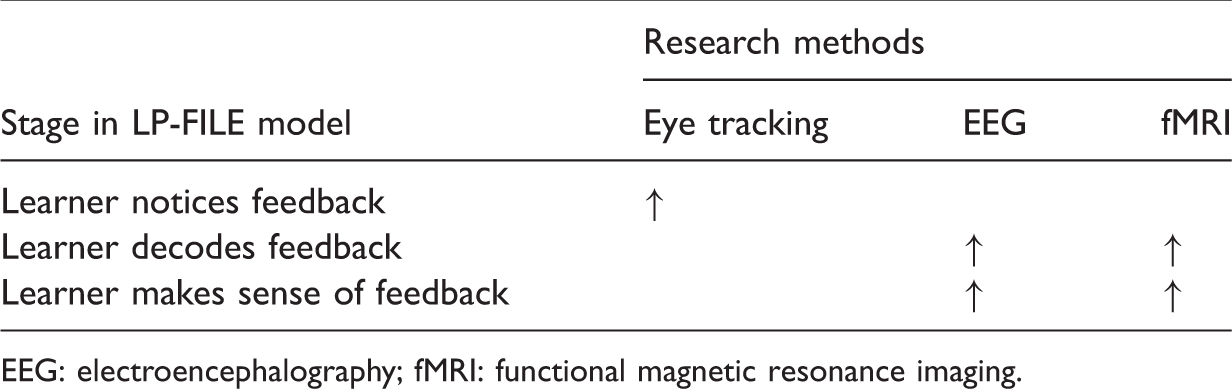

Validation of the LP-FILE model

We are in the process of designing and implementing a study to validate the LP-FILE model and have reviewed the literature to determine what methodologies might be suitable. In recent years, there has been a shift in how feedback and learning are measured in general and with ILEs in particular. Experimental procedures such as eye tracking, EEG and fMRI are cases in point. We now discuss those studies that we believe have most relevance for validation of the LP-FILE model.

Investigating the Learner notices feedback step of the model requires a methodology that can determine whether, when feedback is presented, the learner visually attends to that part of the screen. Eye-tracking measures are thought to provide a moment-to-moment representation of the participant’s visual attention as they carry out a task. Very early work in this area was driven by theories of language processing and had a strong reading focus (see Rayner (1998) for a comprehensive review). Temporal measures of gaze fixations (time on task), saccades (movement of the eyes from one point to another) and regressions (revisiting previous information) are collected in specified regions of interest. To date, learning and eye-tracking studies have focused primarily on information processing and the effect of instructional strategies (Lai et al., 2013). Eye-tracking studies of the effects of instructional strategies have focused predominantly on student learning in multimedia environments. These have included gaze-based tutoring systems (D’Mello, Olney, Williams, & Hays, 2012), the use of concept maps (Amadieu, Van Gog, Paas, Tricot, & Mariné, 2009) and animation manipulation (Boucheix & Lowe, 2010). Of particular relevance is the work by D’Mello et al. (2012) that investigated whether a feedback intervention strategy during a biology lecture improved students’ motivation to learn. Students watched four lectures delivered via an animated agent on screen. The ILE measured student engagement using an eye tracker that followed eye gaze patterns. Students who looked away from the screen for more than 5 s triggered one of four randomly selected feedback responses (i.e. please pay attention, I’m over here you know, snap out of it, let’s keep going, you might want to focus on me for a change). The results showed that gaze-reactive dialogues refocused most students back to the computer screen when they looked away, although with much variation in how this was carried out (i.e. immediately, with more than one cue and with no adaptation of behaviour). The gaze-reactive dialogues also positively influenced learning outcomes. However, this finding was more marked for students with higher pre-test aptitude scores (D’Mello et al., 2012, p. 391). The study also raises the question of what students were doing when they looked away from the screen. It is plausible that processing of the lecture content was being carried out regardless of whether they focused on the screen or not. It should also be noted that 16 of the 48 students (33.3%) did not avert their gaze during the experiment.
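The gaze-reactive rule summarised above (a prompt once the learner has looked away for more than 5 s) can be illustrated with a short sketch. This is not the implementation used by D’Mello et al. (2012): the function name `gaze_prompts`, the sample format and the choice to restart the timer after each prompt are assumptions made here for illustration. The 5 s threshold and the prompt wording are taken from the description above.

```python
import random

AWAY_THRESHOLD_S = 5.0  # threshold reported in the study description above

# Prompt wording as listed in the text above.
PROMPTS = [
    "Please pay attention.",
    "I'm over here, you know.",
    "Snap out of it.",
    "Let's keep going.",
    "You might want to focus on me for a change.",
]


def gaze_prompts(samples, threshold_s=AWAY_THRESHOLD_S, rng=None):
    """Return (timestamp, prompt) pairs fired for a stream of gaze samples.

    samples: iterable of (timestamp_s, on_screen) tuples in time order.
    A prompt fires once the gaze has been off screen for more than
    threshold_s; the timer then restarts (an assumption of this sketch).
    """
    rng = rng or random.Random()
    fired = []
    away_since = None
    for timestamp, on_screen in samples:
        if on_screen:
            away_since = None            # gaze returned: reset the timer
        elif away_since is None:
            away_since = timestamp       # gaze has just left the screen
        elif timestamp - away_since > threshold_s:
            fired.append((timestamp, rng.choice(PROMPTS)))
            away_since = timestamp       # restart timing after a prompt
    return fired
```

Note that a rule of this kind only registers where the eyes are pointing; as discussed above, it cannot tell whether a learner who looks away is still processing the content.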

A more recent study by Conati, Jaques and Muir (2013) used an eye tracker to measure students’ attention towards adaptive hints using a number factorisation computer game. The programme was based on a probabilistic student model that drives hint activation and included three hint types (definition, tool and bottom out). The area of interest (AOI) was the text from the hint message. The findings showed that when hints were read carefully, the next move was generally more likely to be correct. Also, attention to definition hints decreased during the second half of the game. The authors put this latter finding down to a repetition effect: students grew bored of the same definition hint being repeated, which resulted in decreased attentional processing. Of particular interest was the finding that attitude towards receiving help (collected during the post-questionnaire) mediated both gaze duration and attention to hint messages (Conati et al., 2013, p. 158). More specifically, those students with a positive attitude towards help spent more time reading help messages.

The Conati et al. (2013) and D’Mello et al. (2012) studies show that eye-tracking technology does offer a method to investigate whether the learner looks at the feedback offered by the ILE, and how long their gaze rests on the feedback message. However, feedback on screen can occupy quite a small AOI, such as altering text to red or flagging an incorrect response with a symbol. Also, eye-tracking technology needs multiple trials of the same type for signal averaging. This requirement can place a constraint on the feedback task and design, and needs to be carefully considered when using experimental approaches such as eye tracking.
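To make the two measures mentioned above concrete (whether the learner looks at the feedback, and for how long), the following minimal sketch computes a fixation count and total dwell time for a rectangular AOI. The `Fixation` and `AOI` types, the pixel coordinates and the function `aoi_summary` are hypothetical and do not correspond to any particular eye-tracker's export format.

```python
from typing import Iterable, NamedTuple


class Fixation(NamedTuple):
    x: float            # horizontal gaze position, screen pixels
    y: float            # vertical gaze position, screen pixels
    duration_ms: float  # how long the gaze rested at this point


class AOI(NamedTuple):
    """Rectangular area of interest, e.g. the bounding box of a hint message."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, fix: Fixation) -> bool:
        return (self.left <= fix.x <= self.right
                and self.top <= fix.y <= self.bottom)


def aoi_summary(fixations: Iterable[Fixation], aoi: AOI) -> dict:
    """Count fixations inside the AOI and sum their durations (dwell time)."""
    inside = [f for f in fixations if aoi.contains(f)]
    return {
        "fixation_count": len(inside),
        "dwell_time_ms": sum(f.duration_ms for f in inside),
    }
```

A very small AOI, such as a single word turned red, leaves little margin for tracker error in a containment test like this one, which is one reason the size of on-screen feedback matters for study design.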

The challenge for investigating the Learner decodes feedback and Learner makes sense of feedback steps of the model is that these are internal mental processes that are hard to detect with behavioural measures. Traditional measurement of learning uses assessment of achievement after the learning has occurred to infer processing during the act of learning. However, on its own, this kind of after-the-fact assessment of learning is not helpful in validating the LP-FILE model because it does not directly provide information on the steps involved, only indicators of the outcome.

A method from neuroscience, from which inferences can be drawn about the processing occurring during learning, is EEG. EEG records signals of brain activity via electrodes attached to the scalp and has been used successfully to study ERPs during feedback in laboratory experiments. Over 200 studies have revealed a frontocentral negativity called the feedback-related negativity (FRN) that appears after negative feedback (for a summary see Walsh and Anderson (2012)). However, there is currently scant EEG-based research on student learning and feedback in ILEs. One exception is the work of Chaouachi and Frasson (2010). They examined the relationship between task, a learner’s emotional state and feedback using an intelligent tutoring system that incorporated two emotional dimensions: arousal (calm or aroused) and valence (pleasant or unpleasant). Both dimensions were designed to guide the system into choosing the most appropriate feedback intervention strategy. The EEG engagement index was a modified version of an earlier NASA system (designed to measure how pilots switched between manual and automatic piloting) and modulated task allocation. Their findings showed that a series of both correct and incorrect answers lowered engagement. There are some aspects of this research, however, that lack a pedagogical perspective. For example, participants carried out a problem-solving task that involved general knowledge questions, spell checking and responding to a series of logical statements. This in itself is a knowledge test rather than a learning task. Because every response received feedback, the overall experiment became very task driven. Also, the computation of the engagement index, and related EEG signal, has little (if any) neuroscientific, psychological or pedagogical theoretical support, and as such the method and design lack validity and reliability. This is important given that the original index was designed to determine switches between automatic and manual task controls. Criticisms aside, the authors address a gap in current educational research: a pedagogical intervention strategy that optimises ILEs via the monitoring of affective states. EEG warrants consideration as a method that can investigate the level of brain activity when a student is presented with feedback in an ILE and can be used to determine which areas of the brain are most associated with that activity. This experimental approach could be used to test the Learner decodes feedback and the Learner makes sense of feedback steps of the LP-FILE model.

Another method from neuroscience research that offers insight into brain activity during mental processes is fMRI, which reflects changes in relative blood oxygenation level that occur in response to neural activity. When an area of the brain is activated, it uses more oxygen. To meet this demand, blood oxygenation increases in the active area. By using fMRI to measure relative changes in blood oxygenation levels (active state versus a comparison baseline), activation maps can be created that show which parts of the brain are associated with a particular mental process.
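The idea of a relative change against a baseline can be written out as a percent signal change. The notation below is a commonly used convention rather than anything specified in the studies discussed here; the symbols $S_{\text{active}}$ and $S_{\text{baseline}}$ (mean signal in a region during the active and baseline conditions) are assumptions introduced for illustration.

```latex
\[
  \Delta S\,(\%) \;=\; 100 \times
  \frac{S_{\text{active}} - S_{\text{baseline}}}{S_{\text{baseline}}}
\]
```

As a purely hypothetical example, a region with a baseline signal of 1000 (arbitrary units) and an active-state signal of 1020 would show a change of $100 \times (1020 - 1000)/1000 = 2\%$.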

There is evidence from combined behavioural and fMRI data that suggests adults adjust more successfully to negative feedback compared to young children (Crone, Zanolie, Van Leijenhorst, Westenberg, & Rombouts, 2008; Van Duijvenvoorde, Zanolie, Rombouts, Raijmakers, & Crone, 2008). Initial processing of negative feedback appears to emerge between 8–9 and 11–13 years old, and age groups adopt different strategies. Eight- to nine-year-olds place greater value on positive feedback, compared to 11–13-year-olds who learn from conflict signals. In comparison, young adults (18–25 years old) simultaneously incorporate positive and negative feedback, and also evaluate conflict signals (Van Duijvenvoorde et al., 2008, p. 9501).

How different methodologies might be used to validate the three stages of the LP-FILE model.

EEG: electroencephalography; fMRI: functional magnetic resonance imaging.

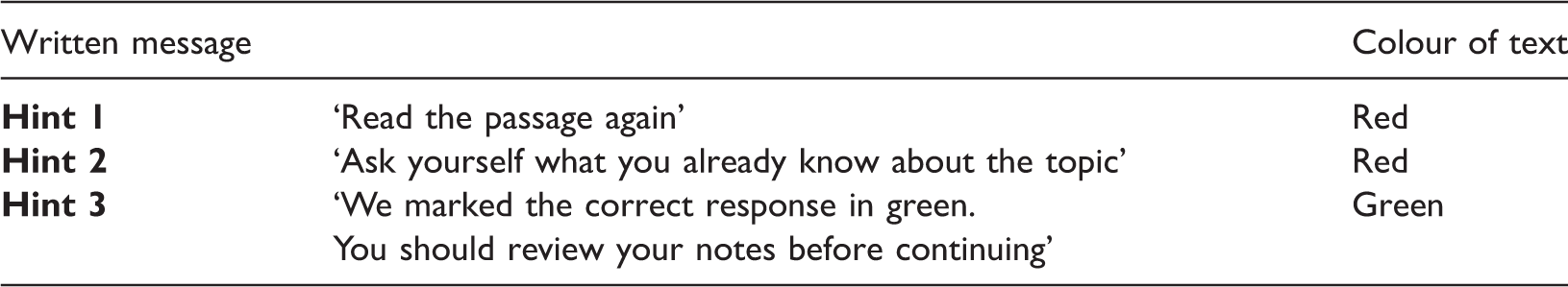

Written message across three hints and related text colour.

In the next section, we describe a pilot behavioural study that investigates how learners process feedback within an ILE in order to test key aspects of the LP-FILE model. This is intended as a precursor to determining how a more fine-grained study could be designed that uses eye tracking and/or EEG.

Crystal Island pilot study

One of the challenges for educational neuroscience studies is to ensure that there are clear links from classroom practice to the research laboratory studies and vice versa. To begin our investigation of the LP-FILE model, we chose to start at the classroom practice end of this chain by studying how learners processed feedback in Crystal Island: The Lost Investigation (CI), an ILE developed at North Carolina State University. Crystal Island is a game-based learning environment for middle grade science and literacy and has been used by over 4000 students in North Carolina. Our research questions were as follows:

How do students notice, decode and make sense of the various types of feedback provided in CI?

What differences are there among learners, in how they use and react to the various types of feedback?

How do learners process feedback related to errors they make?

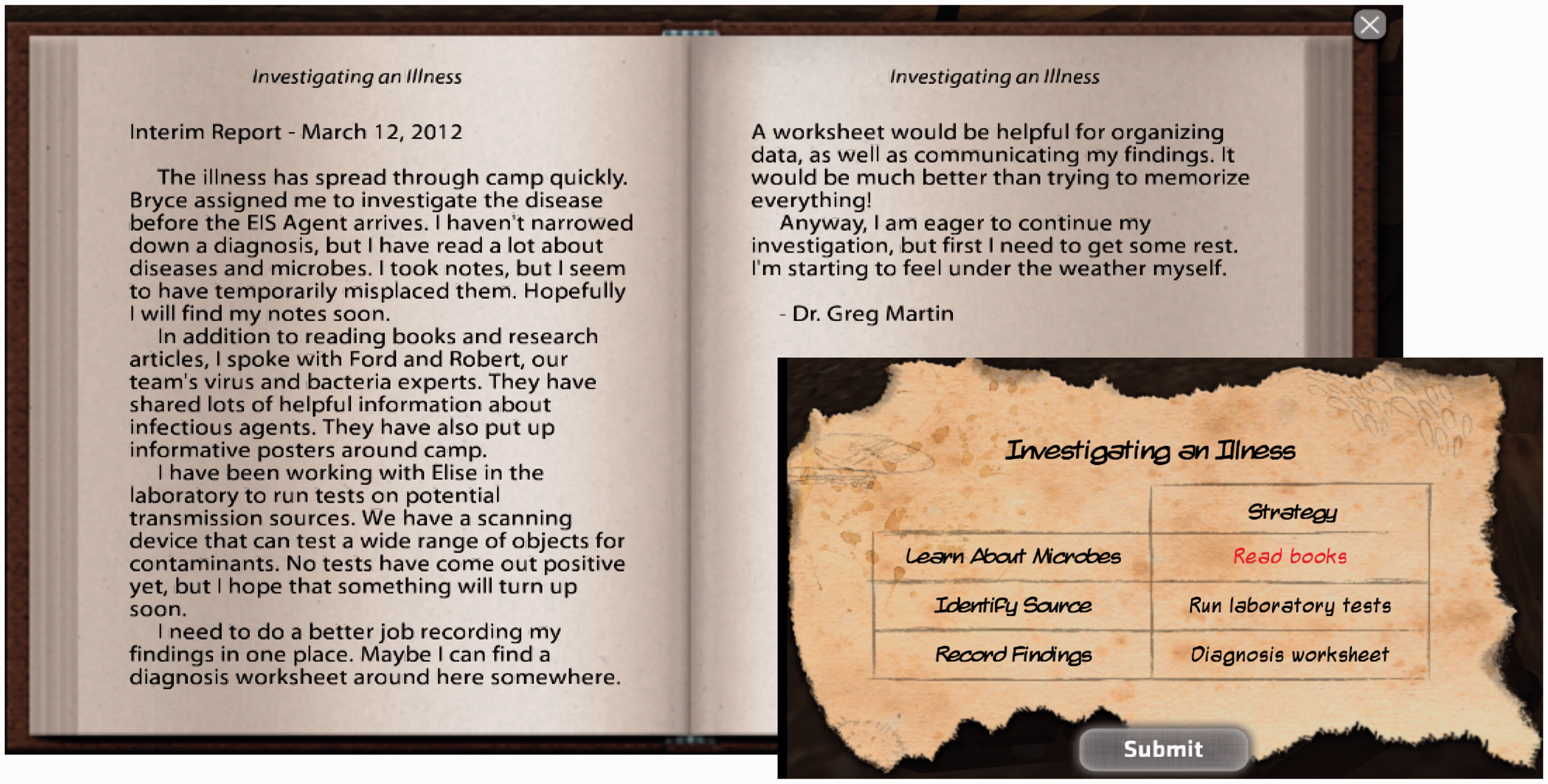

In Crystal Island students adopt the role of researcher to investigate the outbreak of disease on the island. They must navigate around the camp to find hidden clues about the disease. The learner must then read a set of comprehension texts and respond to tasks shown in the lower right of Figure 2.

Example of Investigating an Illness task and related comprehension text.

As shown in Figure 2, incorrect responses are flagged in red. If any of the three answers is submitted incorrectly, the learner will receive feedback via three hint levels. All hints and related message content are written in a text box that covers the whole screen. The box remains on screen until the learner clicks ‘okay’ to remove it. Table 3 shows the three hint levels and message content.

Participants are presented with three questions within the ‘investigating an illness’ task and can only ‘submit’ their responses once they have answered all three questions. We now describe the method, design, procedure and results from our pilot study that tested the feedback aspects of CI.
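The feedback behaviour described above (submission only once all three questions are answered, red flagging of incorrect responses, and three hint levels ending in a bottom-out hint) can be sketched as follows. This is not Crystal Island's code: the function `check_submission`, the hint wording (paraphrased from the descriptions in this paper) and the assumption that the hint level rises by one with each failed submission are all illustrative.

```python
# Hint wording paraphrased from this paper; the exact on-screen text differs.
HINTS = {
    1: "Read the passage again.",
    2: "Think about what you know about the topic.",
    3: "Bottom-out hint: the correct answers are shown.",
}


def check_submission(responses, answer_key, failed_attempts):
    """Evaluate one submission of the task.

    responses / answer_key: dicts mapping question id -> answer.
    failed_attempts: number of earlier incorrect submissions (assumed to
    drive hint escalation in this sketch).
    """
    # Learners can only submit once every question has been answered.
    if any(responses.get(q) is None for q in answer_key):
        raise ValueError("All questions must be answered before submitting.")

    # Incorrect responses are the ones that would be flagged in red.
    flagged = [q for q in answer_key if responses[q] != answer_key[q]]
    if not flagged:
        return {"correct": True, "flagged": [], "hint_level": None, "hint": None}

    level = min(failed_attempts + 1, 3)   # cap at the bottom-out hint
    result = {"correct": False, "flagged": flagged,
              "hint_level": level, "hint": HINTS[level]}
    if level == 3:
        result["answers"] = dict(answer_key)  # bottom-out hint gives the answer
    return result
```

For example, a first submission with one wrong answer would return that question as flagged together with the Level 1 hint, while a third failed submission would return the bottom-out hint and the answers.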

Method

Participants

Eighteen students were recruited for the study. One chose to withdraw midway through the experiment, leaving 17 students who participated. The mean age was 13.53 (SD = 0.72). There were 10 males and seven females. All spoke English as their first language.

Materials

Crystal Island: The Lost Investigation is a science-based enquiry programme developed at North Carolina State University. Students take on the role of researcher to investigate the outbreak of a disease in a virtual research camp. They must navigate around the island to find hidden clues about the disease and are presented with specific tasks to complete throughout that process. If a task is not completed correctly, feedback hints are provided automatically by the system. Table 3 shows the content of the three levels of hints provided. All participants first completed an eight-item pre-test on science content equivalent to the tasks presented in CI. To help reveal students’ mental processes as they worked with CI, we also designed think-aloud protocols that encouraged students to verbally express their thoughts. As this pilot study did not involve any neuroscience methods such as EEG, we wanted a measure that correlated with mental activity. We therefore used Empatica wristbands, which captured heart rate and galvanic skin response in real time, to monitor learners’ physiological responses to feedback. Upon finishing, learners completed a post-interview questionnaire that consisted of 10 questions asking about their overall impressions of CI.

Design and procedure

Testing was done individually and lasted approximately 55 min. All participants completed the pre-test first. After an initial briefing, each learner was fitted with an Empatica™ wristband that sent data via Bluetooth to an iPad; the data were then streamed to the control room of the SLRC’s Learning Interactions classroom at the University of Melbourne. Each learner participated in a short tutorial that lasted 10 min and practised talking aloud. Once the experiment started, learners worked through the learning tasks in CI for 20 min and log data were collected based on keystrokes and mouse clicks. Learners’ reactions to feedback and use of hints were observed and documented by the researcher using checklists designed for this purpose. General observations on usability, gaming and confidence in tasks were also recorded.

Results

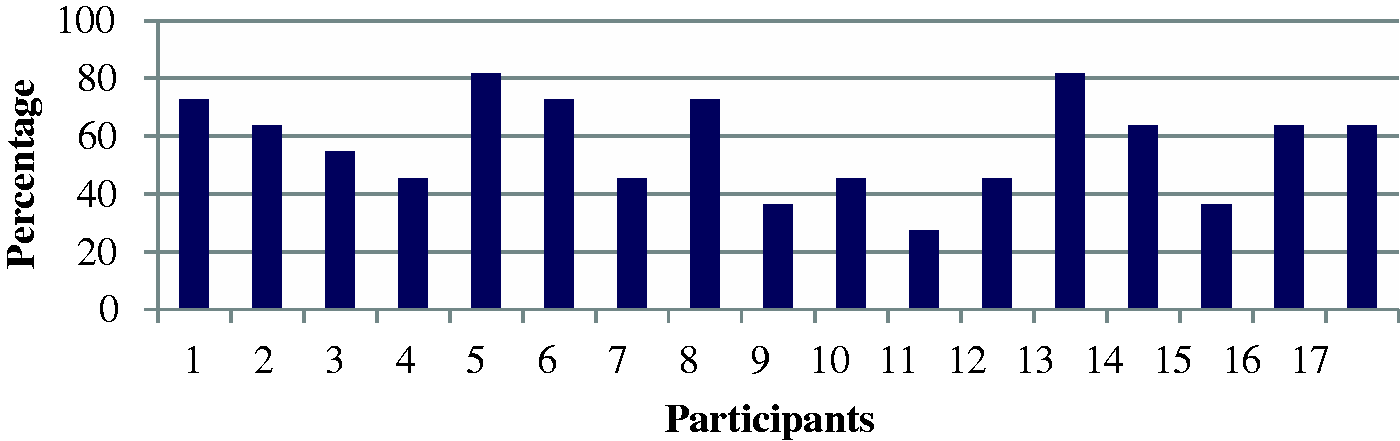

Students worked at different rates and we focus here specifically on the findings from the ‘investigating an illness’ task that all participants completed in the 20 min time frame. Figure 2 shows a screen shot from that task, which involved students reading a passage from The Lost Investigation and answering questions about it. Students’ science ability as measured by the pre-test ranged from 27 to 82% correct (M = 57.2%), as shown in Figure 3.

Percentage correct on Crystal Island pre-test.

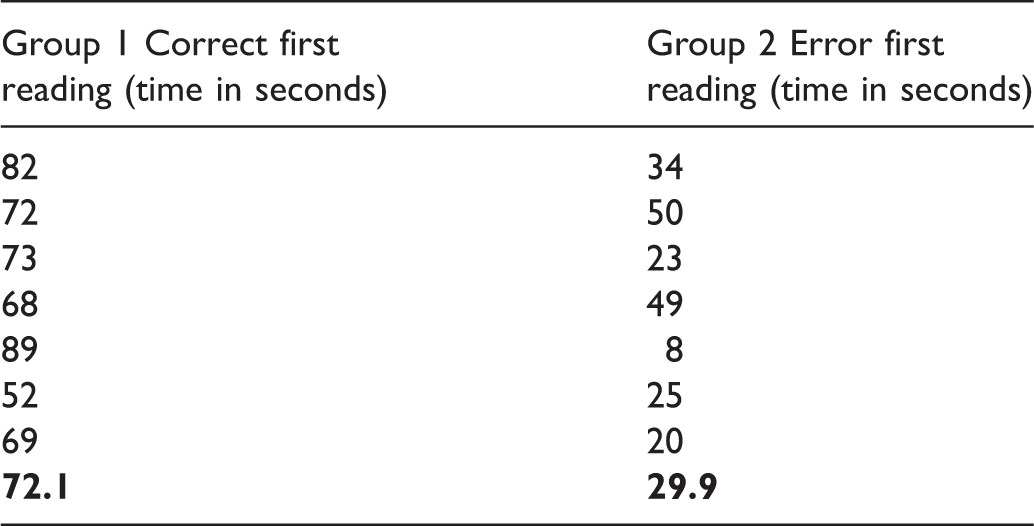

Mean comparisons of first reading time for investigating an illness.

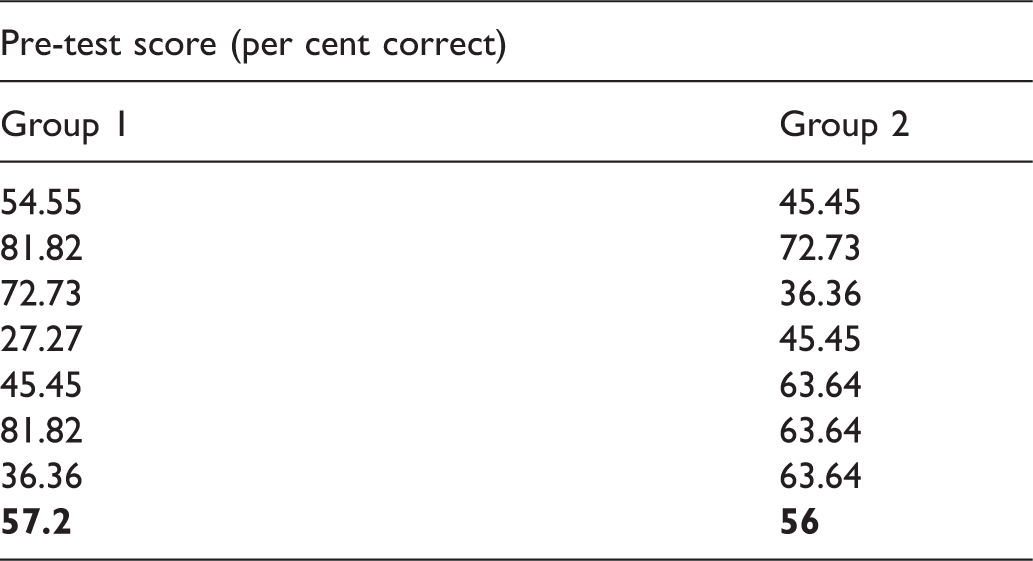

Pre-test score percentages for Groups 1 and 2.

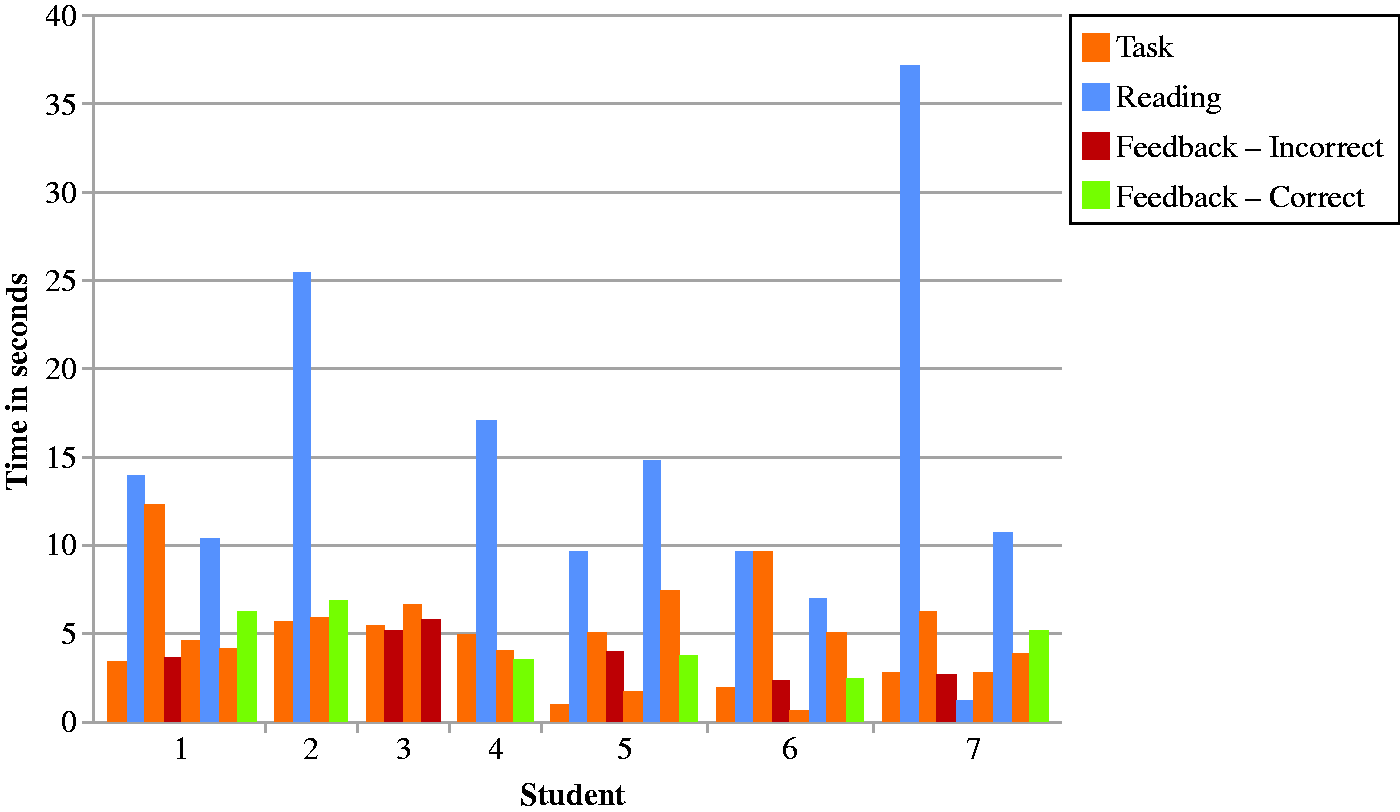

Only one student received the bottom-out (third level) hint that provides the answer (see Table 3). This finding suggests that students did not exploit the bottom-out hint to game the system. We then looked more closely at Group 2 to see how they processed feedback on tasks following their initial wrong response, as shown in Figure 4. The chart shows, for each of the seven students categorised into Group 2, the time spent (a) reading the task, (b) reading the text in the passage, (c) receiving feedback on an incorrect action and (d) receiving feedback on a correct action.

Group 2 action sequence following first wrong response.

Figure 4 shows that six of the seven students read the text again when prompted by Hint 1. However, the amount of time they spent reading the text again was still well below the average for Group 1 (see Table 5). Only one student (Student 3) did not follow the instructions from Hints 1 and 2 and went on to make two more errors.

The Empatica wristband used to gather physiological signals in real time, including heart rate and galvanic skin response, was not fit for purpose in this experiment. Even though students were instructed to keep their wrist still, there was a lot of movement during the experiment. This interfered with data capture and subsequent analysis, so we have not reported these results.

The post-interview questionnaire (and 10 questions asking about their overall impressions of CI) showed very uniform responses across participants. For example, the majority felt the hints allowed them to find the right answer, felt they learnt something new, enjoyed the programme and found the tasks easy to carry out.

Discussion

We now discuss our original research questions in light of the pilot study findings and the LP-FILE model:

How do students notice, decode and make sense of the various types of feedback provided in CI?

CI flags errors in red, and it should be noted that such flagging operates separately to the three hint levels. In LP-FILE terms, errors flagged in red are simply ‘noticed’. We define noticing as a surface-level operation that does not involve deeper processing effort. The Level 1 Hint (i.e. read the passage again) provides an instructional message that the learner must first notice and then decode. This is a simple message to decode, especially as all the students spoke English as a first language and were around 13 years old. The student must also understand the meaning of the message and apply it to the problem at hand, in this case by returning to the reading passage to read it again. We saw consistent evidence of this during our pilot study, although the time spent on task varied widely. The Level 2 Hint (think about what you know about the topic) also requires ‘decoding’ and ‘making sense’. However, because we cannot measure the student’s internal thinking process, we cannot test the model against this hint. The final ‘bottom-out’ hint does not apply to the model at any stage because the model relies on a response from the learner.
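The relationship between the error flag, the three hint levels and the LP-FILE stages can be sketched in code. The following is a minimal illustration only, not CI's implementation: the class `HintedQuestion`, the hint wording and the rule that each successive error escalates the hint by one level are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hint texts paraphrase the three levels described above; the exact wording
# used by Crystal Island is not given here, so these are placeholders.
HINTS = (
    "Hint 1: read the passage again.",
    "Hint 2: think about what you know about the topic.",
    "Hint 3 (bottom-out): the correct answer is {answer}.",
)

# LP-FILE stages that the discussion associates with each kind of feedback.
STAGES = {
    "flag": ("notice",),                    # red error flag: noticed only
    1: ("notice", "decode", "make sense"),  # observable: learner re-reads
    2: ("notice", "decode", "make sense"),  # introspective: not measurable
    3: (),                                  # bottom-out: model does not apply
}


@dataclass
class HintedQuestion:
    """Hypothetical multiple-choice item with three-tiered hints."""
    correct_answer: str
    errors: int = 0

    def submit(self, answer: str) -> dict:
        """Return the feedback for one response (assumed escalation rule)."""
        if answer == self.correct_answer:
            return {"correct": True, "flagged": False, "hint": None, "stages": ()}
        self.errors += 1
        # Assumption: one hint level per error, capped at the bottom-out hint.
        level = min(self.errors, len(HINTS))
        return {
            "correct": False,
            "flagged": True,  # the red flag operates separately to the hints
            "hint": HINTS[level - 1].format(answer=self.correct_answer),
            "stages": STAGES[level],
        }
```

Under these assumptions, a learner who makes three consecutive errors on the same item reaches the bottom-out hint, for which the sketch records no LP-FILE stages.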

What differences are there among learners, in how they use and react to the various types of feedback?

More than half of the participants did not make an error when carrying out the ‘investigating an illness’ task. More specifically, those students who spent significantly longer reading the comprehension text (Group 1) answered the multiple-choice tasks correctly at their first attempt. In contrast, those students who made an initial error (Group 2) spent little time reading the text. The pre-test results (see Table 4) suggest that prior ability was not a reason for group differences. Group 2 learners may not have been engaged in the task, may have had an ambivalent attitude towards receiving help (Conati et al., 2013) or may have been generally poorer readers.
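The grouping and comparison described above can be expressed as a short sketch. It assumes a hypothetical log format with one record per student (field names `first_reading_s` and `first_attempt_correct` are invented for the example), and the values shown are made up for illustration; they are not the study's data.

```python
from statistics import mean


def split_by_first_attempt(records):
    """Group 1 = correct at first attempt; Group 2 = initial error."""
    groups = {1: [], 2: []}
    for rec in records:
        groups[1 if rec["first_attempt_correct"] else 2].append(rec)
    return groups


def mean_first_reading_time(group):
    """Mean first reading time (seconds) for one group of records."""
    return mean(rec["first_reading_s"] for rec in group)


# Made-up records for illustration only; these are NOT the study's data.
records = [
    {"student": "A", "first_reading_s": 90.0, "first_attempt_correct": True},
    {"student": "B", "first_reading_s": 70.0, "first_attempt_correct": True},
    {"student": "C", "first_reading_s": 20.0, "first_attempt_correct": False},
    {"student": "D", "first_reading_s": 30.0, "first_attempt_correct": False},
]

groups = split_by_first_attempt(records)
summary = {g: mean_first_reading_time(members) for g, members in groups.items()}
```

The same split could be reused to compare pre-test scores between the two groups, which is the check that the prior-ability argument rests on.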

How do learners process feedback related to errors they make?

Six of the seven students who made an error following the first reading went back and read the text again, and this resulted in most submitting a correct response on the second or third attempt. However, the amount of time spent reading varied considerably across participants. It seems that many followed the instruction to read the passage again, but needed further corrective feedback to guide them on where to focus.

Overall, there were a number of lessons learnt from our pilot study and we discuss these next.

Student feedback on the post-task questionnaire was extremely uniform. For example, the majority felt the hints allowed them to find the right answer, felt they learnt something new, enjoyed the programme and found the tasks easy to carry out. However, our first participants struggled with the think-aloud task during the practice phase, which required them to navigate around the system, read the comprehension passages and respond to the MC tasks simultaneously. Think-alouds ask students to voice their thoughts continuously as they carry out a task and are widely used in educational research. In the current context, though, WM capacity appeared to be overwhelmed, and so this task was removed from the experimental phase.

We also underestimated how quickly participants would move through the programme. The experimental phase lasted 20 min to reduce fatigue and to limit the total time in the laboratory (including the pre-test, post-questionnaire and debriefing period) to approximately 60 min. For this reason, we captured complete data across all 17 participants for only one task (investigating an illness). Learners put under more pressure to perform, with tighter time constraints, may produce quite different results.

We also found a number of weaknesses in CI feedback design. Hint 1 encouraged students to read the text again upon a wrong response, and all but one participant did so during the ‘investigating an illness’ task. However, Hint 2 (asking a student to think about what they know) was introspective. The response to it cannot be measured, and so it had no real value to the current study. We recommend that feedback in general, and hints in particular, address this measurement issue. Only one participant continued to the third (and final) bottom-out hint that provided the answer. Research shows that gaming the system emerges as the student begins to figure out how the system works (Aleven & Koedinger, 2000, p. 299); however, our cohort did not take this route. Repetitive feedback can also negatively affect student responses (Conati et al., 2013). To what extent the current three-tiered hint system triggered a repetition effect is a valid question for future research.

There are also a number of psycholinguistic elements within the three-tiered hint provision that we feel undermine ILEs in general and CI in particular. First, CI provides written-only feedback, with tasks centring on comprehension texts that must be read before carrying out the task at hand. As discussed earlier, there is research to suggest that such settings benefit more from aural than from printed input (Ginns, 2005; Mayer, 2009). Second, there has been scant focus on measurement issues such as word frequency, sentence length, syntax, semantics, phonological properties, ambiguity and prosodic structure when designing the feedback message (DeVelle, 2015). We acknowledge there are valid reasons for this oversight: greater emphasis on the mechanics of the ILE and cross-disciplinary areas that lack experts in psycholinguistics and language processing.

We have referred to a number of weaknesses within the current CI system. However, these are not overt criticisms per se. CI was designed to address new ways of teaching a science enquiry programme via a computer-driven system that allows for the absence of a teacher. Our approach is to measure, from a neuroscientific perspective, how students learn from the feedback provided within such systems. Such an approach moves away from the practical implications of the system (i.e. teaching), to a focus on how the learner processes feedback within such environments.

Conclusions

This paper has introduced the LP-FILE model, examined how it relates to existing research and discussed it within the context of our Phase 1 pilot study findings, and the efficacy of hints built into the ILE Crystal Island. Our conclusion at this stage is that existing research on the use of feedback in ILEs has shown that processing of feedback is related to prior knowledge but not fully explained by it. Various researchers point to IDs as being a further possible explanation. The LP-FILE model is complementary to this view: the three steps of noticing, decoding and making sense of feedback are influenced by IDs. Our Phase 1 pilot study also found similar results to prior studies in that prior knowledge does not always predict how learners interact with feedback.

There were some limitations to the validation of the LP-FILE model. First, the biometric and think-aloud data revealed non-significant results during the feedback episodes. Second, the outcomes of this study highlighted the lack of feedback occurrences within CI, which has repercussions for the design of future experiments that focus on eye tracking or EEG-driven data collection.

Increasing the number of feedback instances within CI was a possible but costly option. We are now focusing on a creative insight problem-solving task (Sandkühler & Bhattacharya, 2008) that provides the number of trials necessary for neuroscientific experimentation. Our preliminary work using this task with adolescents (DeVelle, 2016) also incorporated a feedback component and has shown some interesting results that warrant further investigation. We therefore continue to focus on the role of errors, as this is the core of the model we are testing. However, we will refine the type and mode of feedback based on what we have learnt and place greater emphasis on the psycholinguistic properties of the message, a focus that has been virtually overlooked to date. The primary goal is to take our experimental findings and apply them to broader educational practice. This is also the principal aim of the SLRC: to create a translational science of learning.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.