Abstract

Background:

We evaluated the reliability, validity and acceptability of the Pediatric Symptom Checklist-17, a free, brief measure of child mental health, in a sample of parents of preschool-age (3–5 years) and school-age children (6–17 years). This is the first study to examine parent-reported Pediatric Symptom Checklist-17 for children aged 3 years.

Method:

A national community sample of Australian parents (N = 2097) completed a demographic questionnaire and the Pediatric Symptom Checklist-17. We used a cross-sectional, test–retest design to assess the structural validity, internal consistency, test–retest reliability, concurrent and predictive validity of the Pediatric Symptom Checklist-17 total and subscale scores. Predictive validity was evaluated using a sub-sample of parents (n = 122) who completed the Pediatric Symptom Checklist-17 and Child Behaviour Checklist at a second timepoint. Normative data were also produced.

Results:

Factor analysis supported the three-factor model for the Pediatric Symptom Checklist-17. Total (r = 0.82–0.93) and subscale scores (r = 0.75–0.89) strongly correlated with the Child Behaviour Checklist and demonstrated strong internal consistency (total scores α = 0.87–0.88). Test–retest reliability was acceptable for school-age children. The Pediatric Symptom Checklist-17 demonstrated excellent classification accuracy for preschool children; however, it did not perform as strongly in older age ranges. Normative data for age and gender were produced for the measure. Results indicated high levels of parent acceptability for the measure.

Conclusion:

Findings contribute new validation evidence for the use of the Pediatric Symptom Checklist-17 as a screening and assessment measure in research and clinical settings, for parents of children from 3 years and above. This study is the first to ascertain Australian normative data for the Pediatric Symptom Checklist-17.

While it has been established that many mental health (MH) problems begin before 14 years of age (Kessler et al., 2007), a recent review of prospective longitudinal studies found that MH difficulties experienced by children as young as 5 years are associated with adult MH disorders (Mulraney et al., 2021). This highlights the need to focus on preschool children as a cohort, given parents’ lack of confidence in recognising MH difficulties, the high proportion of children with clinically significant MH problems and the high levels of unmet MH needs in this age range (Kataoka et al., 2002; Oh et al., 2015; Rhodes, 2017; Robinson et al., 2008).

A plethora of child MH measures currently exist, which may assist parents to identify child MH difficulties. However, there is a lack of available, brief, parent-report measures for screening and assessment that are appropriate for a range of ages including preschool children aged 3–5 years (McLean et al., 2024; Tully et al., 2024). Measures also need to produce valid and reliable scores (Humphrey and Wigelsworth, 2016). In addition, clinical cut-offs and normative data relevant to specific populations can facilitate the use of measures in research and clinical practice (Kendall and Sheldrick, 2000). The prevalence of child MH problems in Australia is high and stable (Sawyer et al., 2018), despite the increased availability of evidence-based intervention. There is an urgent need to identify measures that can be used to detect children with MH problems in the Australian context. Thus, the aim of this study was to validate and ascertain normative data for the Pediatric Symptom Checklist-17 (PSC-17) in a national Australian sample.

Overview of the PSC-17

Adapted from a 35-item version (Jellinek and Murphy, 1988), the PSC-17 is a 17-item, parent-report measure of child MH, validated and normed for use with children aged 4–17 years in the United States (Gardner et al., 1999). Total scores and three subscales based on internalising, externalising and attention problems are produced by summing responses to items. Items are based on a reflective model and response options include Never, Sometimes, Often. It has been one of the most frequently recommended screening measures by US MH authorities (Semansky et al., 2003). Within Australia, interest in the PSC-17 has been growing due to the increasing use of digitised measures in clinical and research settings and lack of freely available measures for assessing parent reports of child MH (Tully et al., 2024).
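As an illustration of the sum scoring described above, a minimal Python sketch follows. The numeric coding (Never = 0, Sometimes = 1, Often = 2) and the item-to-subscale layout are assumptions made for illustration only; the published scoring key should be consulted for the actual item assignments.

```python
# Assumed numeric coding of the three response options (not stated in the text).
RESPONSE_VALUES = {"Never": 0, "Sometimes": 1, "Often": 2}

# Hypothetical item layout: these index ranges only illustrate the idea of
# three subscales; they are NOT the published item-to-subscale assignment.
SUBSCALE_ITEMS = {
    "internalising": range(0, 5),
    "attention": range(5, 10),
    "externalising": range(10, 17),
}


def score_psc17(responses):
    """Sum item responses into subscale scores and a total score."""
    if len(responses) != 17:
        raise ValueError("expected 17 item responses")
    values = [RESPONSE_VALUES[r] for r in responses]
    scores = {
        name: sum(values[i] for i in items)
        for name, items in SUBSCALE_ITEMS.items()
    }
    scores["total"] = sum(values)
    return scores
```

Under this assumed coding, total scores can range from 0 to 34.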

Widely used, the PSC-17 is considered feasible to implement in primary care settings due to its advantages over other measures; it is brief, free to implement in digital and paper-based format, easy to comprehend, simple to score, covers a wide range of ages and is also suitable for use as a screening measure by caregivers who may have limited or no training in MH, such as early childhood educators and teachers (Sheldrick et al., 2012).

Psychometric properties

The technical accuracy of measures, as indicated by various psychometric or measurement properties, is critical when considering the use of measures in screening and assessment of child MH. The PSC-17 has established validity and reliability in children aged 4–17 years, and as part of a recent review of 95 child MH measures, was rated as having excellent psychometric properties in terms of reliability, validity, normative data and treatment sensitivity; one of 21 measures to be rated as such (Becker-Haimes et al., 2020; Spencer et al., 2020; Stoppelbein et al., 2012). Multiple studies have reported strong internal consistency for the PSC-17; Cronbach’s alpha 0.85–0.89 for total scores, 0.78–0.79 internalising, 0.80–0.83 externalising and 0.82–0.83 attention (Gardner et al., 1999; Murphy et al., 2016; Wagner et al., 2015).

There is limited research on the test–retest reliability of the PSC-17. One community study reported good test–retest reliability (intraclass correlation coefficient [ICC] = 0.85) after 8–14 days (Murphy et al., 2016). Another study reported an ICC of 0.55; however, the retest was conducted at 6 months, among children in foster care (Jacobson et al., 2019).

Previous research has reported the PSC-17 subscales had significant relationships with associated diagnosed disorders, e.g., internalising subscale scores with depression and anxiety, indicating construct and convergent validity (Jacobson et al., 2019). The original validation study for the PSC-17 found total scores correlated with the clinician-rated Children’s Global Assessment Scale (r = −0.64; 95% confidence interval [CI]: −0.71 to −0.55) and Child Behaviour Checklist (CBCL) (r = −0.60; 95% CI: −0.67 to −0.52) (Gardner et al., 2007).

The PSC-17 has strong predictive validity, which is important for screening measures. A systematic review of screening measures identified three studies reporting high sensitivity (SE) 0.82–0.95 and specificity (SP) 0.81–0.91 (Lavigne et al., 2016). Mean sensitivity was 0.90 and specificity 0.85, which surpassed benchmark standards for developmental screening measures (Aylward, 1997; Lavigne et al., 2016).

While the PSC-17 has been well-validated in older children, there remains a need to evaluate the PSC-17 as a measure of child MH in young children in terms of factor structure, internal consistency, test–retest reliability, concurrent and predictive validity.

Use of the PSC-17 with preschool-aged children

While much of the existing research on the PSC-17 has been on school-aged children, limited research has examined the predictive validity and reliability of the PSC-17 in preschools. Studies with teacher report found strong internal consistency across total scores and subscales (DiStefano et al., 2017; Liu et al., 2020; Moore et al., 2021). In a large paired-sample study, partial scalar invariance was established indicating parents and teachers of children aged 4–5 years interpreted items similarly (Gao et al., 2022). However, there remains a need to validate parent-reported ratings in children aged 3 years, since the measure is currently being used in clinical practice with this age (Wagner et al., 2015; Benheim T, Jellinek M and Murphy M, Personal communication, March 7, 2023).

Normative data

The PSC-17 has not been validated with parents in Australia, nor are there normative data from outside the United States. Such data are important for the systematic interpretation of results and efficient identification of children who may have emerging MH problems (Sheldrick et al., 2012). Moreover, the PSC-17 does not have normative data for the preschool age range in any country. Normative data developed for older children in clinical settings may not be appropriate for young children in community settings. US data also may not be appropriate in the Australian cultural context. Producing localised normative data ensures recommended clinical cut-offs are appropriate for screening and assessment purposes in non-clinical populations.

Acceptability

Some researchers and practitioners have considered the use of MH screening measures with young children as contentious (Frances, 2012; Jureidini and Raven, 2012). The (lack of) acceptability of a measure can affect uptake and implementation of child MH or screening measures across settings (Harrison et al., 2013). Acceptability may be defined by its perception as helpful or useful, whether it is easy to understand and whether users would recommend it to others (Brinley et al., 2024; Humphrey and Wigelsworth, 2016). Therefore, the perceived acceptability of a measure by users (in this case parents) is an important aspect to investigate if it is to be adopted as part of improving access to early intervention.

The current study

This study seeks to add to existing evidence by evaluating the parent-reported PSC-17 and ascertaining normative data across preschool and school-age populations. To our knowledge, this is the first study to validate the PSC-17 for children aged 3 years and the first to ascertain normative data, including age and gender versions, for an Australian population. The study had three aims: first, to evaluate the psychometric properties of the PSC-17 and validate parent ratings for preschool children (3–5 years) and school-age children (6–17 years), in terms of factor structure, internal consistency, test–retest reliability and concurrent and predictive validity; second, to ascertain age- and gender-specific normative data for the parent-report PSC-17 in Australia for children aged 3–17 years; and third, to examine the acceptability of the PSC-17 among parents of preschool children compared with parents of school-age children.

Method

Procedures

Study approval was obtained from the University of Sydney Human Research Ethics Committee. There are no set conventions for the sample sizes needed to establish normative data, although samples should ideally be large and representative of the defined population. Previous normative studies have recruited samples varying from 500 to 5400 participants (D’Souza et al., 2017; Mellor, 2005). Based on methods previously used by the investigators, this study recruited two samples of 1000 parents each: parents of preschool children (3–5 years) and parents of school-age children (6–17 years).

Participants were recruited through an online research panel and sampled to represent Australian families based on key sociodemographic characteristics from national census data (i.e. household income, marital status and residential location). Participants were eligible if they were adult parents or caregivers of a child aged 3–17 years, resided in Australia and had basic English literacy. Caregivers could include anyone in a caregiving role such as fathers, mothers, kinship carers or foster carers. Quotas were used for the recruitment of the two samples, including an even ratio of child gender and ages within each sample. See supplementary material for further details about recruitment procedures.

After providing informed consent, participants completed measures which were counterbalanced in order of completion. Baseline questionnaires took approximately 10–15 minutes to complete. After data quality checks, the final sample included parent reports of 1048 preschool and 1049 school-age children.

To evaluate retest reliability, concurrent and predictive validity, a sub-sample of participants completed a second set of questionnaires, which took approximately 15–20 minutes to complete. A subset of preschool parents (n = 55) completed the questionnaires 6–73 days after baseline (M = 35 days), and a subset of school-age parents (n = 67) completed them 11–69 days after baseline (M = 43 days). In the preschool sub-sample, 16.4% of parents completed the second assessment between 7 and 14 days after baseline, compared with 4.5% of parents in the school-age sub-sample.

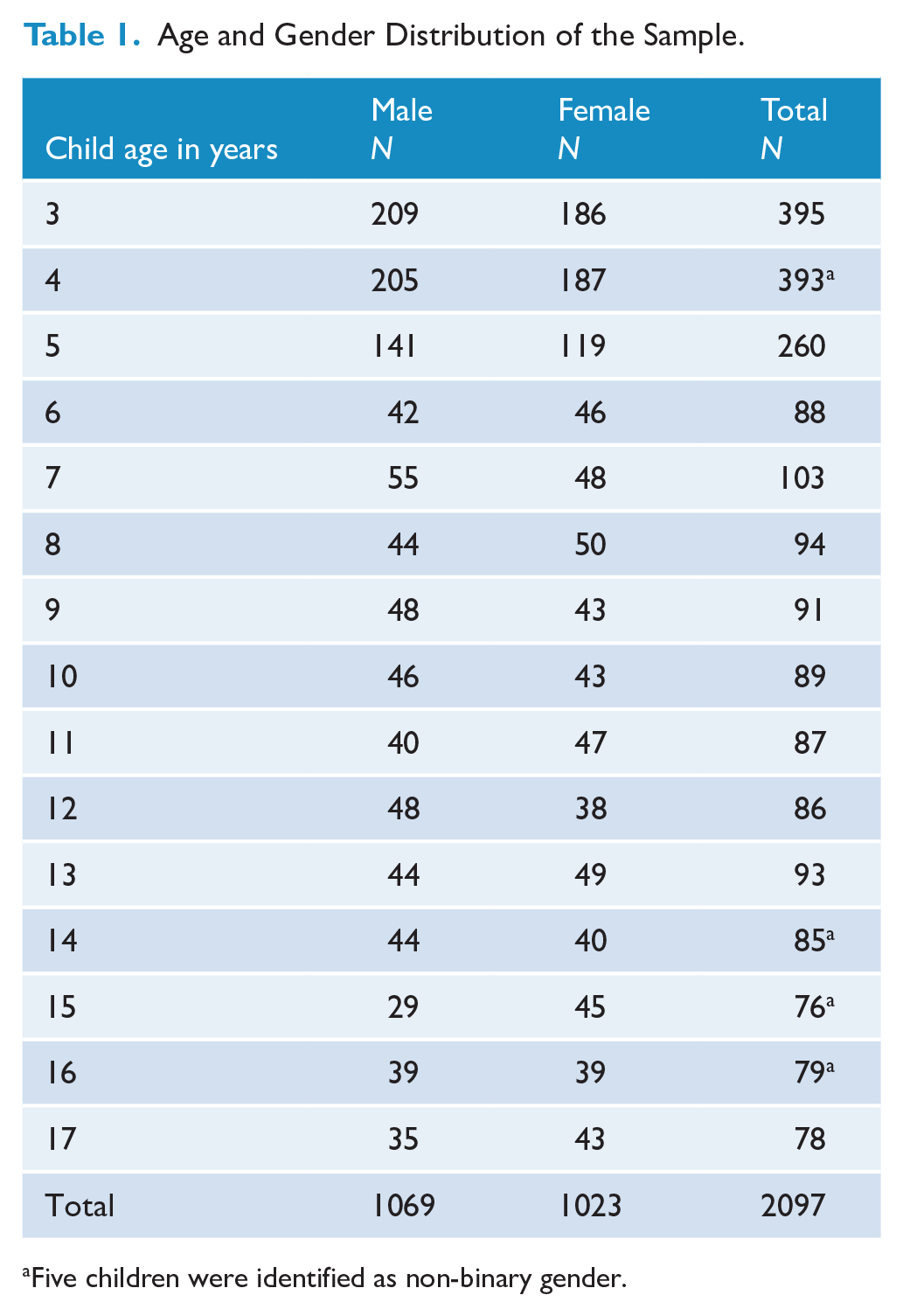

Participants

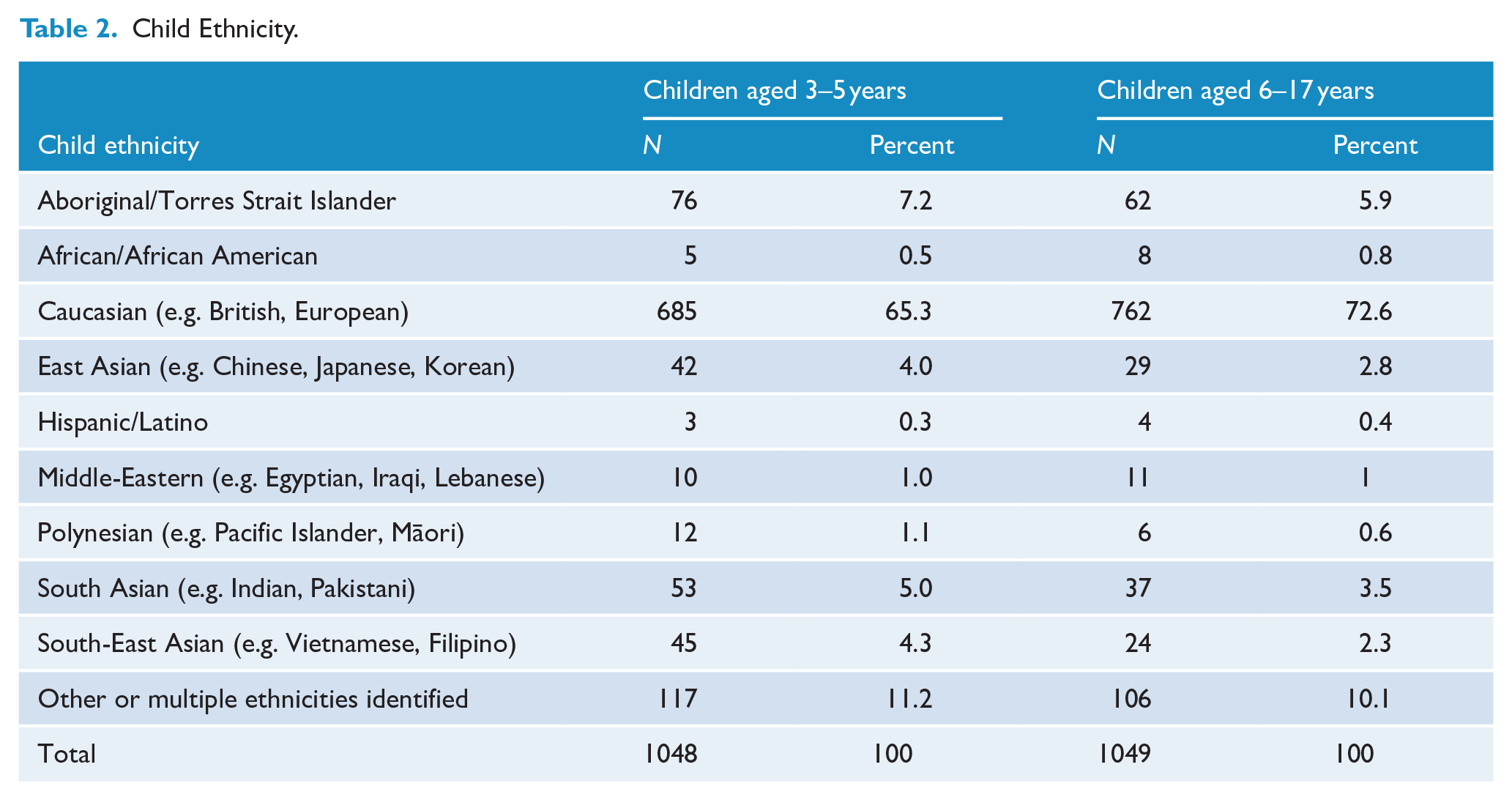

Preschool children were aged 3.87 years on average (SD = 0.78) and school children were aged 11.28 years on average (SD = 3.42). Preschool and school-age samples were almost evenly split between male and female (see Table 1). One preschool child and four school-aged children were identified as non-binary gender. Child ethnicity was predominantly Caucasian, followed by Aboriginal and Torres Strait Islander and multiple ethnicities (see Table 2). Parents from all states and territories were represented in both samples and were culturally diverse. See supplementary material for further details.

Table 1. Age and Gender Distribution of the Sample.

Note. Five children were identified as non-binary gender.

Table 2. Child Ethnicity.

Measures

In addition to the PSC-17, parents completed questions about child and family sociodemographic details and the following measures.

CBCL

The CBCL (Achenbach, 1999) is a comprehensive measure of child MH with strong psychometric properties across two age versions – 1.5–5 and 6–18 years. It is the most common criterion measure in classification accuracy studies (Lavigne et al., 2016). The CBCL consists of 99 items (1.5–5 years) and 113 items (6–18 years) and produces internalising, externalising and total problems scales. The CBCL was used in the current study as a criterion measure to establish concurrent and predictive validity.

Acceptability

Parents’ views on the acceptability of the PSC-17 were assessed using four items adapted from a parent-report measure developed by Hawes et al. (2021). Responses were collected on a 5-point Likert-type response scale from not at all/strongly disagree (1) to very/strongly agree (5). See supplementary material for further details about this measure.

Analytic plan

Factor structure, reliability and validity were evaluated separately for preschool and school-age children. Using the original three-factor model (Gardner et al., 1999), confirmatory factor analysis (CFA) was performed and fit statistics reported. CFA was conducted using SPSS Amos (IBM SPSS, 2022). The latent variables, internalising, externalising and attention, were allowed to be correlated, and all measurement error was presumed to be uncorrelated. Hu and Bentler’s (1999) standards of acceptable model fit were used to evaluate the model. Further details are outlined in the supplementary material.

To align with the age of school entry (preschool, primary and secondary school) and CBCL scoring, analyses for predictive validity were conducted on young children (3–5 years), older children (6–11 years) and adolescents (12–17 years). Receiver operating characteristic (ROC) analysis in each of these age groups examined the association between PSC-17 subscales and CBCL case classifications (clinical/non-clinical cases). Normative data are also presented for these age ranges. As the online questionnaire required input for all items, there were no missing data.

Since the test–retest interval was longer than intended, reliability estimates for participants retested within the intended interval (7–14 days) were compared with those for participants retested outside it (<7 or >14 days), and no significant difference was found. Thus, we report retest reliability for the full sub-sample.

Psychometric properties were evaluated against Youngstrom et al.’s (2017) criteria set out in a rubric by De Los Reyes and Langer (2018). Since the rubric does not include specific thresholds for predictive validity or classification accuracy, the predictive validity of the scale was evaluated against benchmark criteria for developmental screening measures of 70% and above for both sensitivity and specificity (Aylward, 1997). Area under the curve (AUC) values of 0.80 or higher were considered ‘very good’ and values of 0.90 or higher ‘excellent’ (Chaffin et al., 2017).
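The benchmark criteria stated above can be expressed as simple decision rules. The sketch below is illustrative only: the function names and the label used for AUC values below 0.80 are hypothetical, and the thresholds are those cited in the text (70% for sensitivity and specificity; 0.80 and 0.90 for AUC).

```python
def rate_auc(auc):
    """Label an AUC value using the 0.80 / 0.90 thresholds cited in the text."""
    if not 0.0 <= auc <= 1.0:
        raise ValueError("AUC must lie between 0 and 1")
    if auc >= 0.90:
        return "excellent"
    if auc >= 0.80:
        return "very good"
    # Hypothetical label: the text does not name a band below 0.80.
    return "below very good"


def meets_screening_benchmark(sensitivity, specificity, threshold=0.70):
    """Return True when both proportions reach the 70% benchmark."""
    return sensitivity >= threshold and specificity >= threshold
```

For example, a hypothetical measure with sensitivity 0.75 and specificity 0.92 would meet the benchmark, whereas one with sensitivity 0.60 would not, regardless of its specificity.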

Normative data were determined by reporting scoring cut-offs for the 90th (borderline) and 95th percentile (at-risk cases) for subscales and total scores, gender and age. At-risk scores indicate positive identification on the screening measure and further investigation of child MH may be warranted.
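A percentile-based cut-off of this kind can be sketched as follows. The nearest-rank percentile definition and the rule that a score at or above a cut-off falls into that band are assumptions made for illustration; the study's statistical software may have used a different percentile definition, and the label for scores below the borderline cut-off is hypothetical.

```python
import math


def percentile_cutoff(scores, pct):
    """Nearest-rank percentile: smallest score with at least pct% of the
    sample at or below it (one of several common definitions)."""
    if not scores:
        raise ValueError("scores must not be empty")
    ordered = sorted(scores)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def band(score, borderline_cutoff, at_risk_cutoff):
    """Assign a band; treating 'at or above the cut-off' as a positive
    result is an assumption of this sketch."""
    if score >= at_risk_cutoff:
        return "at-risk"
    if score >= borderline_cutoff:
        return "borderline"
    return "below borderline"  # hypothetical label
```

In use, the two cut-offs would be derived separately for each age and gender group and for each subscale and the total score, then applied to an individual child's scores.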

Results

Factor structure

For the preschool sample, the chi-square fit statistic was large and significant, χ²(116, N = 1048) = 661.73, p < 0.001, χ²/df = 5.70. Other fit statistics, however, provided some support for the hypothesised model: root mean square error of approximation (RMSEA) = 0.07 (90% CI: 0.06–0.07), standardised root mean square residual (SRMR) = 0.05, comparative fit index (CFI) = 0.91 and Tucker–Lewis index (TLI) = 0.90. Factor loading estimates were moderately to strongly correlated with the latent factors (R²: 0.49–0.82) in the preschool sample.

For the school sample, the chi-square fit statistic was also large and significant, χ²(116, N = 1049) = 474.84, p < 0.001, χ²/df = 4.09. Other fit indices supported the hypothesised model in the school-age sample: RMSEA = 0.05 (90% CI: 0.05–0.06), SRMR = 0.05, CFI = 0.94 and TLI = 0.93. Factor loading estimates were moderately to strongly correlated with the latent factors (R²: 0.57–0.84) in the school sample.

The modified preschool model and the school model met three out of the six criteria, with two criteria ‘close to’ cut-offs.
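The χ²/df ratios reported above follow directly from the chi-square values and degrees of freedom, and the reported RMSEA values are consistent with the conventional point-estimate formula. The short sketch below reproduces that arithmetic; the RMSEA formula shown, with N − 1 in the denominator, is an assumption about how the index was computed, since the text does not state it.

```python
import math


def chi2_df_ratio(chi2, df):
    """Relative chi-square: the chi-square statistic divided by its df."""
    return chi2 / df


def rmsea(chi2, df, n):
    """Conventional RMSEA point estimate (assumed formula, N - 1 version)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# Values taken from the fit statistics reported in the text.
preschool = (661.73, 116, 1048)
school = (474.84, 116, 1049)

for label, (chi2, df, n) in (("preschool", preschool), ("school", school)):
    print(label,
          round(chi2_df_ratio(chi2, df), 2),
          round(rmsea(chi2, df, n), 2))
```

Rounded to two decimal places, this gives ratios of 5.70 and 4.09 and RMSEA values of 0.07 and 0.05, matching the reported figures.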

Distribution of scores

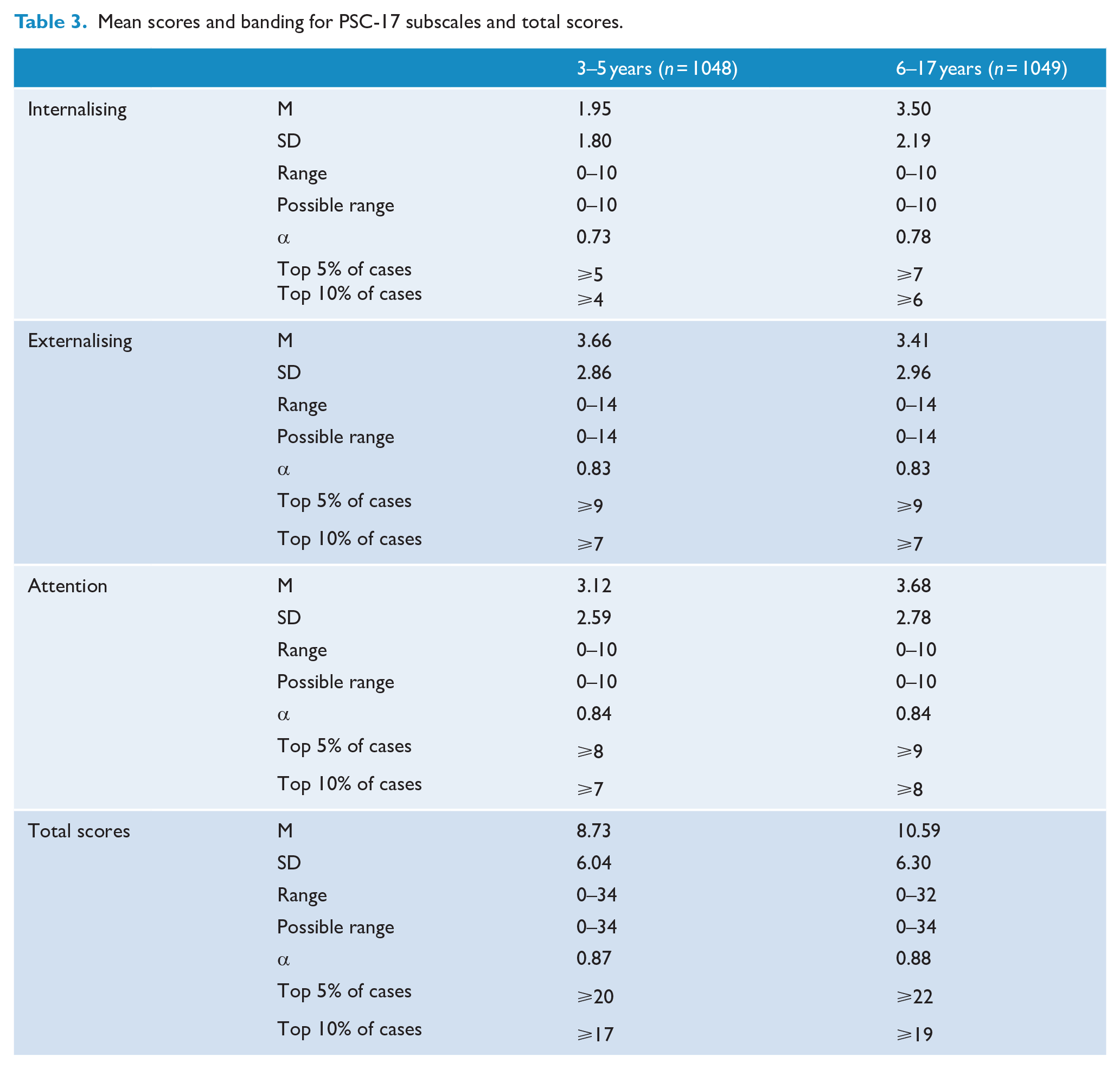

The mean, standard deviation and ranges for each of the samples are outlined in Table 3.

Table 3. Mean scores and banding for PSC-17 subscales and total scores.

Reliability

In the preschool sample, good internal consistency was found for the PSC-17 total scores (α = 0.87), externalising (α = 0.83) and attention subscales (α = 0.84). Internal consistency of the internalising subscale was adequate (α = 0.73). In the school sample, internal consistency for total scores (α = 0.88), externalising (α = 0.83) and attention subscales (α = 0.84) was also good. Internal consistency of the internalising subscale was adequate (α = 0.78).
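For readers less familiar with the statistic, Cronbach's alpha can be computed from item-level data as shown in the minimal sketch below. The function name and the toy data are hypothetical; the study's own estimates were produced with its statistical software, not with this code.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Toy data: four respondents, two items (illustrative values only).
toy = [[0, 0], [1, 2], [2, 1], [1, 1]]
print(round(cronbach_alpha(toy), 2))
```

Alpha rises as items covary more strongly relative to their individual variances, which is why it is read as an index of internal consistency.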

In the preschool sample, test–retest reliability estimates were as follows: total scores (ICC = 0.66), internalising (ICC = 0.62), externalising (ICC = 0.61) and attention subscales (ICC = 0.63). In the school sample, good test–retest reliability was found for total scores (ICC = 0.73) and the internalising subscale (ICC = 0.70). Test–retest reliability for the externalising (ICC = 0.67) and attention subscales (ICC = 0.68) did not meet the criteria for adequate reliability. ICC estimates and their 95% confidence intervals were calculated based on a single-rating, absolute-agreement, two-way mixed-effects model.
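The single-rating, absolute-agreement ICC named above can be computed from two-way ANOVA mean squares. The sketch below uses the standard formula for this ICC form; the function name and toy data are hypothetical, and confidence intervals are omitted.

```python
def icc_absolute_single(data):
    """Single-rating, absolute-agreement ICC from a two-way layout.

    data: one row per child, one column per occasion (e.g. test, retest).
    ICC = (MSR - MSE) / (MSR + (k - 1) * MSE + (k / n) * (MSC - MSE))
    """
    n, k = len(data), len(data[0])
    grand = sum(sum(row) for row in data) / (n * k)
    row_means = [sum(row) / k for row in data]
    col_means = [sum(row[j] for row in data) / n for j in range(k)]

    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    msr = ss_rows / (n - 1)                # between-children mean square
    msc = ss_cols / (k - 1)                # between-occasions mean square
    mse = ss_error / ((n - 1) * (k - 1))   # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + (k / n) * (msc - mse))


# Toy data: every retest score is exactly one point higher than baseline.
print(round(icc_absolute_single([[1, 2], [2, 3], [3, 4]]), 3))
```

Because this form penalises systematic shifts between occasions, the toy data yield an ICC below 1 even though the rank order of children is perfectly preserved.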

Concurrent validity

The PSC-17 strongly correlated with the CBCL across ages demonstrating concurrent validity. In the preschool sample, PSC-17 total scores strongly and significantly correlated with the CBCL total problems at the bivariate level, r = 0.93, p < 0.001, n = 55. PSC-17 subscales also strongly correlated with the CBCL subscales: internalising r = 0.86, p < 0.001; externalising r = 0.86, p < 0.001; and attention r = 0.89, p < 0.001. In the school sample, PSC-17 total scores were strongly associated with the CBCL total problems, r = 0.82, p < 0.001, n = 67; internalising r = 0.75, p < 0.001; externalising r = 0.80, p < 0.001; and attention r = 0.81, p < 0.001.

In the preschool sample, the AUC for PSC-17 total scores was excellent (AUC = 0.86, standard error [SE] = 0.12, p = 0.002; CI: 0.628–1.084). The internalising AUC was adequate (0.68, SE: 0.14, p = 0.184; CI: 0.414–0.947), the externalising AUC was excellent (0.83, SE: 0.14, p = 0.018; CI: 0.555–1.101) and the attention AUC was excellent (0.89, SE: 0.05, p < 0.001; CI: 0.792–0.996). In the school sample, the AUC for PSC-17 total scores was excellent (AUC = 0.83, SE = 0.07, p < 0.001; CI: 0.683–0.967). The internalising (AUC: 0.79, SE: 0.07, p < 0.001; CI: 0.649–0.926), externalising (AUC: 0.88, SE: 0.08, p < 0.001; CI: 0.713–1.038) and attention (AUC: 0.91, SE: 0.08, p < 0.001; CI: 0.758–1.065) subscales all met criteria for excellent validity.
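To make the AUC concrete: it equals the probability that a randomly chosen clinical case scores higher on the screening measure than a randomly chosen non-case, with ties counted as one half. The sketch below computes it by direct pairwise comparison; the function name and scores are hypothetical and unrelated to the study data.

```python
def auc_from_scores(case_scores, noncase_scores):
    """AUC as the proportion of case/non-case pairs ranked correctly,
    counting ties as half (equivalent to the Mann-Whitney formulation)."""
    if not case_scores or not noncase_scores:
        raise ValueError("both groups must contain at least one score")
    wins = 0.0
    for case in case_scores:
        for noncase in noncase_scores:
            if case > noncase:
                wins += 1.0
            elif case == noncase:
                wins += 0.5
    return wins / (len(case_scores) * len(noncase_scores))


# Hypothetical total scores for three cases and four non-cases.
print(auc_from_scores([16, 12, 9], [10, 7, 5, 3]))
```

With few clinical cases, each case contributes a large share of the pairs, so AUC estimates from small sub-samples carry wide confidence intervals.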

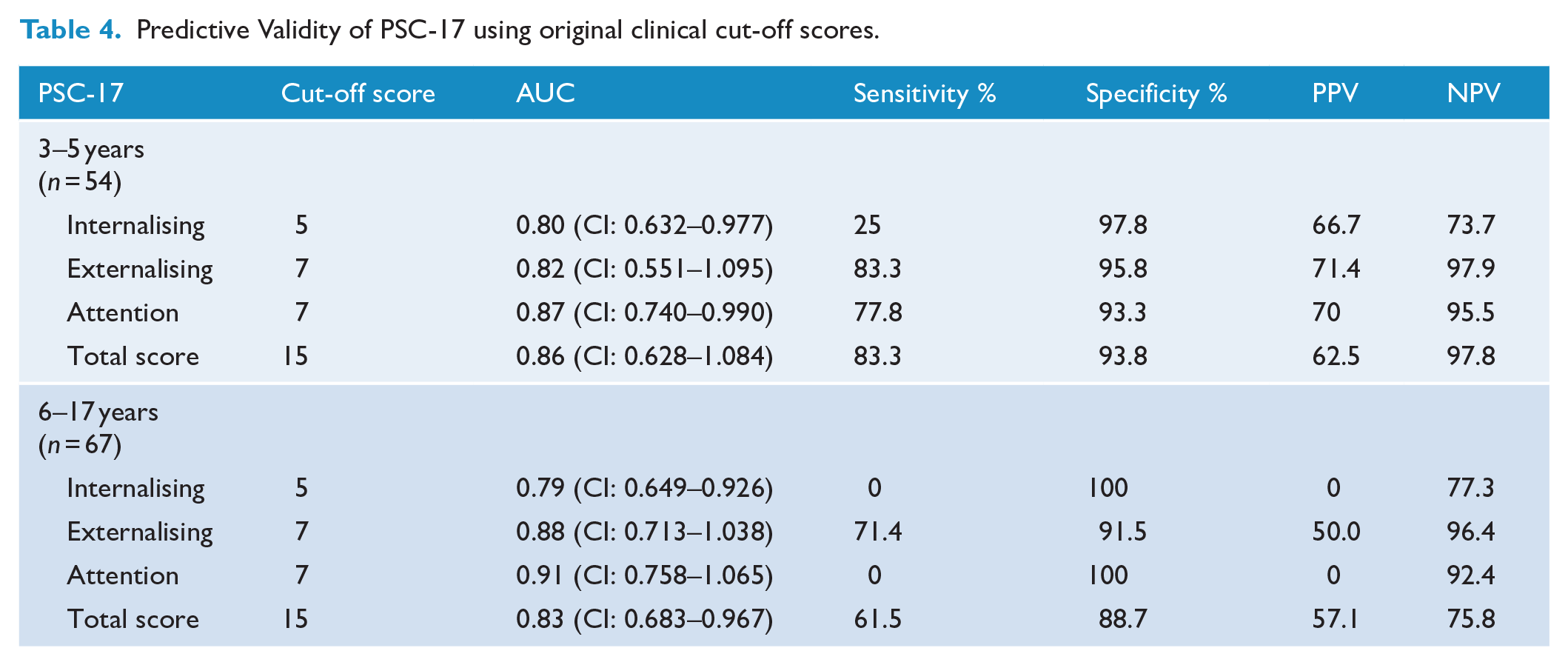

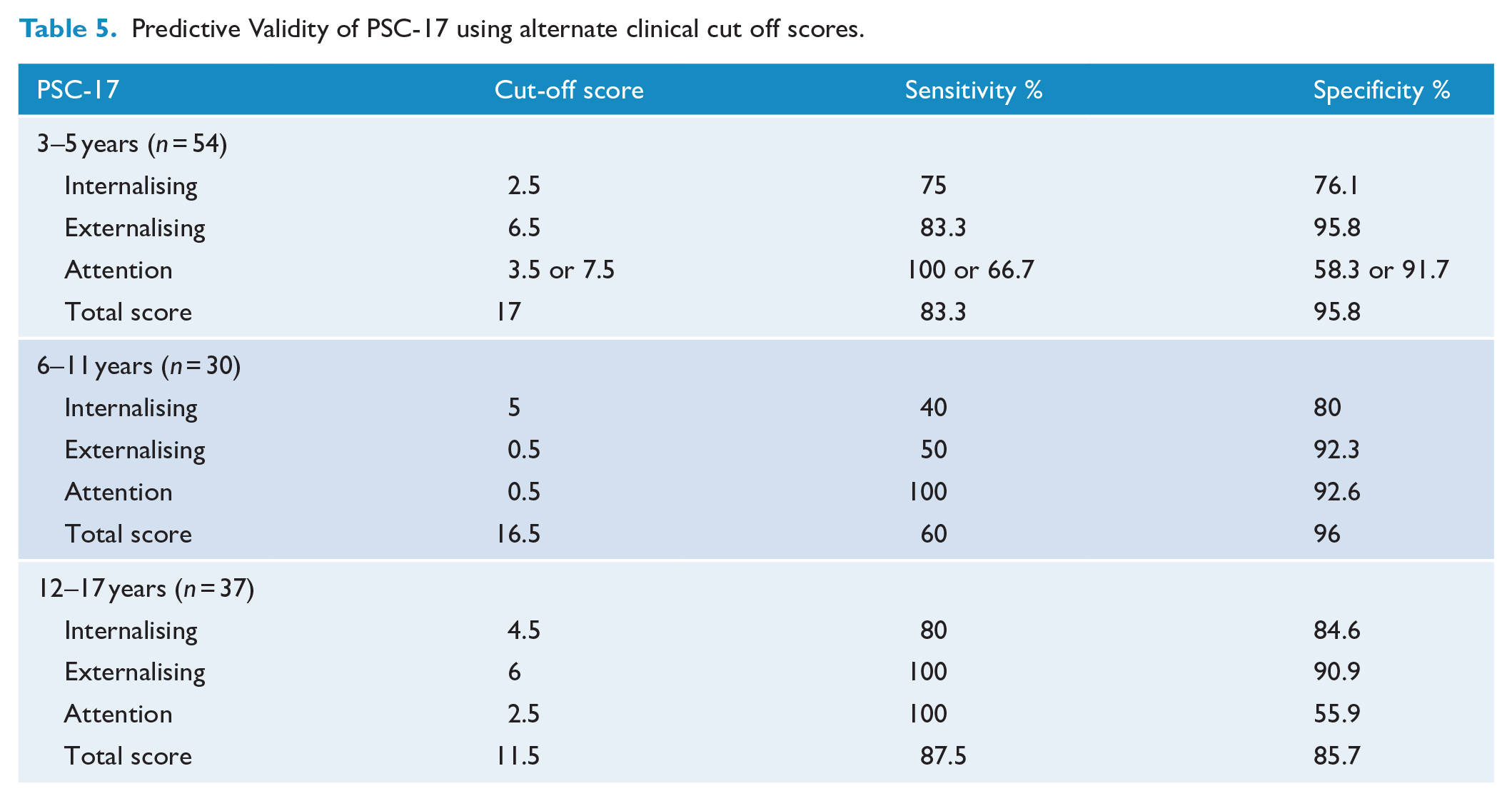

Predictive validity

Predictive validity was examined in young children (3–5 years, n = 55), older children (6–11 years, n = 30) and adolescents (12–17 years, n = 37). A low number of clinical cases across ages meant assumptions were violated for chi-square tests. The older children and adolescent categories were combined for analysis; however, case numbers for the attention subscale remained low. In the preschool sample, high sensitivity was demonstrated for total scores (83.3%), externalising (83.3%) and attention subscales (77.8%). Very high specificity was demonstrated for total scores and all subscales (93.3–97.8%). In the school sample, moderate sensitivity was demonstrated for the externalising subscale only (71.4%). High specificity was demonstrated for total scores and all subscales (88.7–100%). Predictive validity of the PSC-17 using the original clinical cut-off scores for each of the samples is presented in Table 4. ROC analysis identified alternate cut-off points which maximised sensitivity and specificity. Alternate clinical cut-off points and their respective sensitivity and specificity are presented in Table 5.

Table 4. Predictive Validity of PSC-17 using original clinical cut-off scores.

Table 5. Predictive Validity of PSC-17 using alternate clinical cut-off scores.
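Sensitivity, specificity and positive predictive value are derived from the cross-classification of screening results against criterion case status. A minimal sketch follows; the function name and example data are hypothetical and do not reproduce the study's counts.

```python
def screening_accuracy(screen_positive, criterion_positive):
    """Compute sensitivity, specificity and PPV from paired booleans:
    one screening result and one criterion classification per child."""
    pairs = list(zip(screen_positive, criterion_positive))
    tp = sum(1 for s, c in pairs if s and c)          # true positives
    fp = sum(1 for s, c in pairs if s and not c)      # false positives
    fn = sum(1 for s, c in pairs if not s and c)      # false negatives
    tn = sum(1 for s, c in pairs if not s and not c)  # true negatives

    def ratio(numerator, denominator):
        # Undefined when a group is empty (e.g. no clinical cases).
        return numerator / denominator if denominator else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),
        "specificity": ratio(tn, tn + fp),
        "ppv": ratio(tp, tp + fp),
    }


# Hypothetical example: five children, two of whom are criterion cases.
screen = [True, True, False, False, True]
criterion = [True, False, False, False, True]
print(screening_accuracy(screen, criterion))
```

Sensitivity depends only on the criterion cases, so when few cases are present a single misclassified child shifts the estimate substantially.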

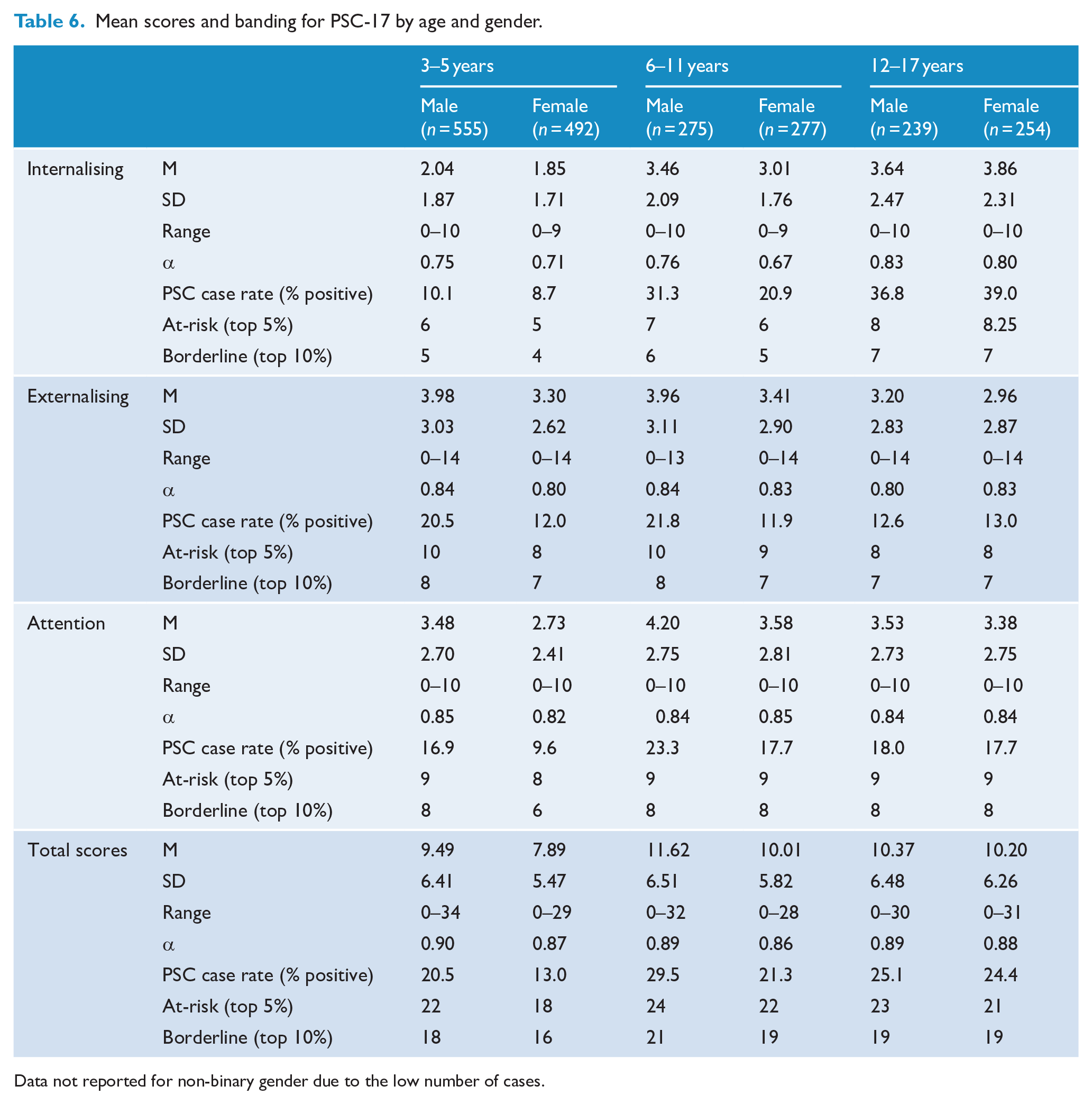

Normative data

In the preschool-age range, 17% of cases were positively identified (at-risk) on total scores, 9.4% internalising, 16.5% externalising and 13.5% attention. In the school-age range (6–17 years), 25.3% were positively identified on total scores, 31.8% internalising, 15.1% externalising and 19.2% attention. Fine-grained age analysis revealed 25.4% of older children were positively identified on total scores, 26.1% internalising, 16.8% externalising and 20.5% attention. Among adolescents, 25.2% were positively identified on total scores, 38.2% internalising, 13.1% externalising and 17.7% attention. For each age and gender group, Table 6 presents cut-off scores for the highest-ranking cases.

Table 6. Mean scores and banding for PSC-17 by age and gender.

Note. Data not reported for non-binary gender due to the low number of cases.

Males across ages scored higher than females on total scores; further analyses were therefore performed to test the significance of these gender differences. Preschool males (M = 9.49) scored significantly higher than females (M = 7.89) on total scores as indicated by an independent samples t-test (F = 7.79, p < 0.001). A two-way analysis of variance (ANOVA) indicated there was no significant interaction between the effects of preschool age and gender on PSC-17 total scores. While total scores were also higher for school males (M = 11.04) than for females (M = 10.10), this difference was not significant (F = 2.98, p = 0.085).

Acceptability

Parents’ ratings of dimensions related to acceptability indicated that most parents found the PSC-17 to be very or quite appropriate (preschool 87.3%, n = 55; school-age 82.9%, n = 1049) and very or quite clear (preschool 92.8%; school-age 93.3%). Most parents were very or quite confident in the ratings they provided (preschool 96.3%; school-age 95.3%) and agreed or strongly agreed that the PSC-17 asked important questions about their child’s MH (preschool 89.1%; school-age 92.5%). Independent t-tests showed no significant difference in acceptability ratings between preschool parents and school-age parents.

Discussion

The purpose of this study was to evaluate the reliability and validity of the parent-reported PSC-17 in young children, ascertain Australian normative data and examine acceptability among parents. To address the first aim, this study presents new evidence in support of the measure’s psychometric properties and validates parent ratings for children aged 3–17 years, in terms of factor structure, reliability and validity. Considered alongside the factor loadings, the three-factor model was supported across preschool and school-age ranges, which is consistent with previous studies which included 4- to 15-year-old children (DiStefano et al., 2017; Murphy et al., 2016).

Overall, using the specified evaluation criteria, the PSC-17 for preschool parents was found to have good internal consistency, excellent concurrent validity and good predictive validity. For school parents, the PSC-17 was found to have good internal consistency, good test–retest reliability and excellent concurrent validity. In terms of reliability, the PSC-17 demonstrated strong internal consistency across ages. Test–retest reliability fell just below acceptable levels for the preschool sample according to the specified evaluation criteria; however, this analysis was hampered by the large variation in, and the length of, the interval between testing. Given the rapid child development that occurs during the preschool years, it is not surprising that preschool ICCs were lower than school-age coefficients. The acceptable test–retest reliability for school-age children was higher than that reported in other studies which had longer, 6-month retest periods (ICC = 0.55) (Jacobson et al., 2019) and on par with that reported for a more ideal 8- to 14-day retest period (ICC = 0.85) (Murphy et al., 2016).

In terms of concurrent validity, the PSC-17 correlated strongly with the CBCL across subscales and total scores for both samples. Moreover, ROC analyses revealed the PSC-17 had excellent classification accuracy with very high AUC results across ages. The AUC for the internalising subscale was borderline acceptable for the preschool sample and acceptable for the school sample; both values were lower than the AUC of 82% found in the original derivation study (Gardner et al., 1999). That study included a large sample of clinical and primary care presentations, which may explain the difference.

In terms of predictive validity, the PSC-17 demonstrated excellent screening accuracy for total scores, externalising and attention subscales using the recommended cut-offs for preschool-age children. Surprisingly, the PSC-17 did not perform as strongly in the older age ranges. While excellent specificity was found for all subscales and total scores for school-age children and positive predictive values (PPV) were acceptable for externalising and total scores, only the externalising subscale passed benchmark standards for combined high sensitivity, specificity and PPV. Results may have been hampered by a low number of clinical cases, as is common when recruiting from community samples. Further work is required to investigate sensitivity for school-age children since numerous studies have attained high sensitivity and high specificity for the PSC-17 in this age range (Lavigne et al., 2016).

Normative data

The second aim of this study was to ascertain age and gender normative data for the parent-report PSC-17 in Australia for children aged 3–17 years. This study found positive screening rates of 17% for preschool children and 25.3% for school-age children. These rates are higher than the 13.9% of children aged 4–17 years identified as having a mental disorder in the most recent Australian child and adolescent survey of MH (Lawrence et al., 2015), and higher than the rates reported in the two previous normative studies from the United States: 15% for children aged 4–15 years (Gardner et al., 1999) and 11.6% (Murphy et al., 2016).

The higher number of cases may be due to differences in the local population and timing of data collection. It has been established over the past decade that rates of child MH problems in Australia are high and stable (Sawyer et al., 2018), and Australian-born children are more likely to be positively identified on screening and to have mental illness compared to children born elsewhere (Australian Institute of Health and Welfare, 2020). Cut-offs on similar measures are also higher in Australia compared to samples in the United Kingdom and the United States (Kremer et al., 2015).

Elevated child MH scores may also be due to the effects of the COVID-19 pandemic on Australian children, who experienced a national lockdown and restricted access to schooling and social supports (Sicouri et al., 2023). Research has shown Australian parents had poorer MH and functioning, indicating the pandemic had a significant, negative impact on Australian families (Westrupp et al., 2023). While robust systematic comparisons of current child MH symptoms with pre-pandemic data are still emerging, this study’s results suggest further investigation is required into the ostensibly high rates of children identified with emerging MH difficulties. These high rates are also a consideration for the generalisability of the results.

Acceptability

The final aim of this study was to examine acceptability. Results indicated that parents found the PSC-17 to be highly appropriate, easy to understand and important in terms of assessing their child’s MH, and there was no difference in levels of acceptability between preschool and school-age parents. The construct of acceptability is associated with the likelihood that screening measures are adopted in future, and thus, these results are a promising indication of parental support for the use of this measure (Glover and Albers, 2007; Kamphaus et al., 2007). High levels of acceptability may be associated with an increased parental awareness of child MH challenges in the aftermath of the pandemic.

Strengths and limitations

Strengths of this study included the large and diverse sample recruited from across Australia and the use of a comprehensive, comparative measure in the form of the CBCL. However, the representativeness of the sample cannot be determined beyond comparative analysis to census data. Moreover, the CBCL is neither a gold-standard criterion measure nor a diagnostic measure. Furthermore, recruiting from the community combined with a small sub-sample at the second time point meant that a limited number of clinical cases were identified. This affected the predictive validity analysis; however, low numbers of clinical cases are typical when recruiting from a non-clinical population. Common method variance may have also affected results since both assessment measures were questionnaires. Future studies should seek to replicate this study’s findings and employ alternate methods of assessment such as comprehensive clinical interviews to assess diagnostic and screening accuracy. Furthermore, future research may investigate the measure’s responsiveness and sensitivity to change, which are important measurement properties in clinical and research contexts.

Implications

The findings of this study support the PSC-17 as a valid, reliable and acceptable parent measure for preschool- and school-age children within this population. As a freely available, brief measure, it can be adopted widely in a range of contexts. By spanning the ages of 3–17 years, it enables measurement continuity, i.e., using the same measure at different ages, which is particularly important for longitudinal research, in which children often age out of measures and cross-measure comparisons are difficult. Continuity of measures may also benefit clinical work such as measurement-based care or universal screening conducted at discrete timepoints in a child’s life, e.g., at preschool, school entry or high school graduation. In fact, recent surveys have found practitioners perceive brief and free measures as important facilitators to increase the use of measures in practice (Tully et al., 2024). Thus, the PSC-17 may serve as an important instrument for tracking the progress of clinical interventions to improve child MH.

Conclusion

The current study contributes new evidence for the PSC-17 as an appropriate and acceptable measure of child MH, which can be utilised in clinical and community settings for a wide range of ages including preschool-aged children. This study was the first to ascertain normative data for the PSC-17 outside of the United States and for children aged 3 years. By ascertaining normative data for the Australian population and young children, children with emerging MH difficulties can be accurately identified and directed towards further assessment and early intervention.

Supplemental Material

Supplemental material, sj-docx-1-anp-10.1177_00048674251342952 for Reliability, predictive validity and normative data for the Pediatric Symptom Checklist-17 in a national Australian sample by Rebecca K McLean, Lucy A Tully and Mark R Dadds in Australian & New Zealand Journal of Psychiatry

Footnotes

Acknowledgements

Thanks to Domna Alloush for contributing to data preparation.

Author Contributions

RM designed the study, analysed and interpreted the data and drafted the manuscript.

LT was involved in the conception and the design of the study, reviewed the data and reviewed the manuscript.

MD was involved in the conception and the design of the study, reviewed the data and reviewed the manuscript.

All authors contributed to the development of the research questions; read, reviewed and approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship and/or publication of this article: This research was supported by Growing Minds Australia: A National Trials Strategy to Transform Child and Youth Mental Health Services, and the Growing Minds Australia Clinical Trials Network (Medical Research Future Fund: MRF2006438).

Ethical Approval and Informed Consent statements

Study approval was obtained from the University of Sydney Human Research Ethics Committee (2023/704) on 24 October 2023. All participants provided written informed consent prior to participating.

Data Availability Statement

The datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request and subject to ethical considerations.

Supplemental material

Supplemental material for this article is available online.
