Abstract

Objective:

The aim of this systematic review was to critically appraise the psychometric properties of antipsychotic medication side effect assessment tools.

Methods:

Systematic searches were undertaken in PubMed, CINAHL and CENTRAL from inception to October 2014. Studies were included if they detailed the evaluation of the psychometric properties of antipsychotic medication side effect assessment tools in mental health populations. Studies were excluded if they examined the use of these tools in non-mental health populations, including people with dementia, Parkinsonism or Alzheimer’s disease. Narrative reviews and studies published in languages other than English were also excluded.

Results:

Content validity was appropriately established for only one of the tools, reliability was inappropriately evaluated for all but one tool, and the assessment of responsiveness was not acceptable for any tool.

Conclusion:

Further psychometric studies are warranted to consolidate the psychometric properties of the included antipsychotic medication side effect assessment tools before any of these tools can be confidently recommended for either research or clinical purposes.

Background

Antipsychotic medication remains one of the principal approaches to managing psychotic disorders. These medications reduce psychotic symptoms but at the same time produce side effects that are often unbearable (Morrison et al., 2015a, 2015b, 2015c). Such side effects include severe sedation, substantial weight gain, sleep disorders, sexual dysfunction and difficulties in social activities (Leucht et al., 2009; Lieberman et al., 2005). The intolerability of these side effects has been identified as a key determinant of non-adherence to antipsychotic medication (Haddad et al., 2014a; Lieberman et al., 2005).

Discontinuing antipsychotic medication often leads to relapse in psychotic disorders, which in turn may result in loss of employment, loss of housing, relationship and social problems and an increased risk of suicide (Ascher-Svanum et al., 2008; Chapman and Horne, 2013; Yen et al., 2009). Consequently, mental health consumers may find themselves caught in a recurring cycle of illness onset, prescription of medication that produces intolerable side effects, discontinuation of that medication and eventual relapse (Llorca, 2008; Naber and Karow, 2001; Salomon and Hamilton, 2013).

To intervene in this cycle, clinicians need to discuss the side effects of antipsychotic medication openly with mental health consumers, while also considering the potential benefits of taking medication (Gerlach and Larsen, 1999; Happell et al., 2004; Naber and Karow, 2001; Salomon and Hamilton, 2013). However, mental health consumers often find it difficult to communicate openly with clinicians, possibly because they are reluctant to fully disclose their personal circumstances, or because the medication or the illness has impaired their ability to communicate (Naber, 2008; Roe and Goldblatt, 2009; Seale et al., 2007). This communication breakdown may be exacerbated when clinicians have poor communication or diagnostic skills, leaving them with an incomplete understanding of the consumer’s situation (Hungerford and Fox, 2014; Morrison et al., 2000; Roe and Goldblatt, 2009). Studies have found that clinicians consistently underestimate the rate of side effects resulting from antipsychotic medication, which suggests that under-recognition of side effects is a common clinical problem (Dassori et al., 2003; Hellewell, 1999).

These difficulties in identifying antipsychotic medication side effects, and in deciding how they may be managed constructively, could be addressed through methods that facilitate effective communication between mental health consumers and clinicians. This underlines the need for assessment tools that either enable clinicians to readily ascertain the nature, frequency and impact of side effects or allow mental health consumers to detail the side effects they experience (Cabeza et al., 2000; Dott et al., 2001; Goff et al., 2010; Hellewell, 1999). Information from such tools can give clinicians a better understanding of the actual and potential issues mental health consumers encounter when taking antipsychotic medication, and thereby facilitate discussions with consumers about strategies to ameliorate side effects and improve medication adherence. Managing side effects and adherence in this way is central to the quality of life and recovery journey of many mental health consumers (Dassori et al., 2003; De Leeuw et al., 2012; Dott et al., 2001).

The objectives of this systematic review were twofold: (1) to identify all available antipsychotic medication side effect assessment tools and (2) to critically appraise the psychometric properties of these assessment tools.

Methods

Search strategy

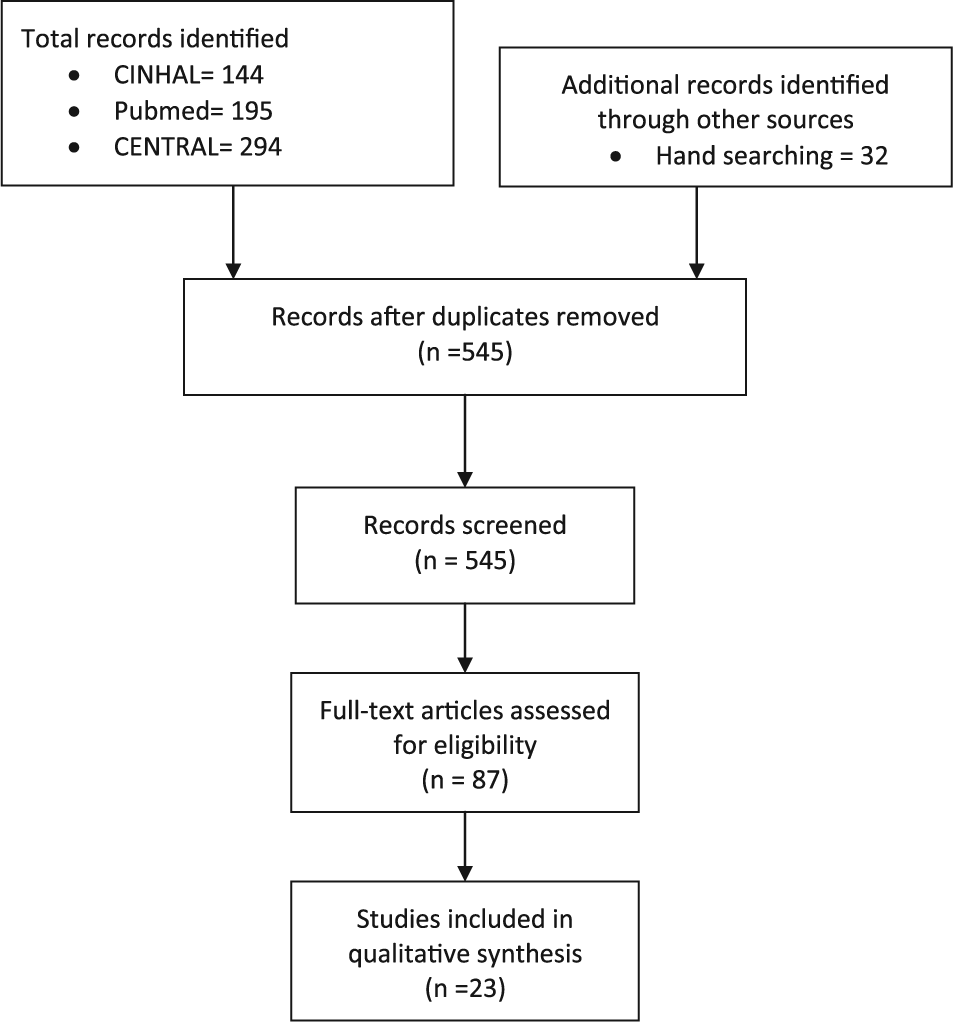

Figure 1 displays the implementation of the search strategies and subsequent selection of studies. We developed electronic search strategies to identify English language studies of tools that assessed antipsychotic medication side effects. The PubMed, CINAHL and CENTRAL databases were searched from inception to October 2014. Supplementary Appendix 1 contains the specific search strategies used in PubMed, CINAHL and CENTRAL. The titles and abstracts for all studies retrieved by the initial searches were reviewed to identify potentially relevant studies detailing antipsychotic medication side effect assessment tools.

Figure 1. Implementation of search strategies and selection of studies.

For each potentially relevant antipsychotic medication side effect assessment tool identified, individual PubMed and CINAHL searches were undertaken using the tool’s full title and acronym. Additional studies were identified by manually searching the citation lists of the identified studies detailing potentially relevant antipsychotic medication side effect assessment tools. Finally, full-text copies of studies that described either the validation or use of any of the potentially relevant measures were retrieved and considered for inclusion in this review.

Selection criteria

We included studies published between 1950 and October 2014 that detailed the evaluation of the psychometric properties of antipsychotic medication side effect assessment tools in mental health populations. Studies were excluded if they examined the use of these tools in non-mental health populations, including people with dementia, Parkinsonism or Alzheimer’s disease. We also excluded narrative reviews and studies published in languages other than English.

Explicit review criteria

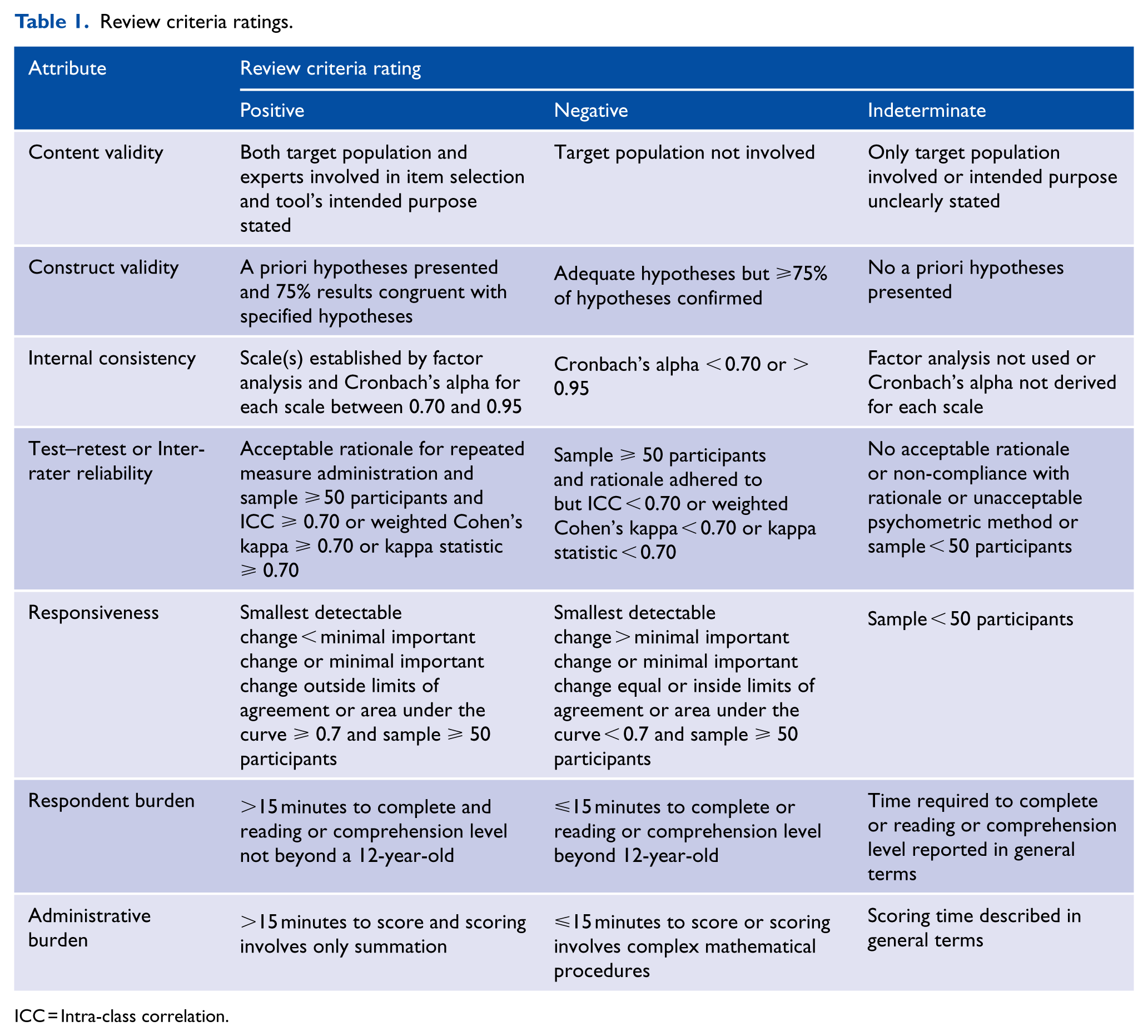

To evaluate the quality of the studies included in the review, we used a previously developed critical appraisal tool (Stomski et al., 2010) that was based primarily on the recommendations of the Medical Outcomes Trust (Aaronson et al., 2002) and Terwee et al. (2007) for evaluating outcome measures. This tool contains criteria for appraising the following psychometric properties: content validity, construct validity, internal consistency, test–retest/inter-rater reliability, responsiveness and respondent/administrative burden. Table 1 displays these criteria, and the following sections explain them in more detail.

Table 1. Review criteria ratings.

ICC: intra-class correlation.

Content validity

Content validity involves the extent to which the measure’s items encompass all relevant concepts (Aaronson et al., 2002). The assessment of content validity was considered to be adequately undertaken if the measure’s intended purpose was clearly stated, and both the target population (mental health consumers in this case) and relevant experts were involved in developing the measure’s items.

Construct validity

Construct validity reflects the degree to which a measure’s scores are consistent with predefined hypotheses such as relationships to scores of other instruments, or differences between pertinent groups (Aaronson et al., 2002). The assessment of construct validity was considered to be adequately undertaken when specific predefined hypotheses regarding anticipated correlations or differences were reported, and these hypotheses were then confirmed.

Internal consistency

Internal consistency establishes the degree to which the items in a scale are interrelated and measure the same concept (Aaronson et al., 2002). The assessment of internal consistency was considered to be adequately undertaken if the dimensions of the measure’s scales were established using factor analysis, principal components analysis or Rasch analysis, and Cronbach’s alpha, calculated for each identified scale, was between 0.70 and 0.95 (Terwee et al., 2007).
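The alpha part of this criterion can be sketched as follows. The example uses the standard formula alpha = k/(k-1) × (1 − Σ item variances / variance of the total score), applied to a single scale whose dimensionality is assumed to have already been established. The function names and the three-item example are hypothetical.

```python
from statistics import variance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha for one scale; items[j][i] is respondent i's score on item j."""
    k = len(items)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    n = len(items[0])
    if any(len(item) != n for item in items):
        raise ValueError("every item must have a score for every respondent")
    totals = [sum(item[i] for item in items) for i in range(n)]
    sum_item_var = sum(variance(item) for item in items)
    # alpha = k/(k-1) * (1 - sum of item variances / variance of total scores)
    return k / (k - 1) * (1 - sum_item_var / variance(totals))


def internal_consistency_adequate(alpha: float, dimensionality_established: bool) -> bool:
    """Review criterion: scale dimensions established first, then 0.70 <= alpha <= 0.95."""
    return dimensionality_established and 0.70 <= alpha <= 0.95


# Hypothetical three-item scale answered by three respondents.
scale = [[1, 2, 3], [2, 3, 4], [1, 3, 2]]
a = cronbach_alpha(scale)
print(round(a, 3), internal_consistency_adequate(a, dimensionality_established=True))
```

The upper bound of 0.95 matters because a very high alpha usually signals redundant items rather than a better scale.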

Test–retest/inter-rater reliability

Test–retest reliability establishes the extent to which a measure’s results remain consistent across repeated administrations over time. Inter-rater reliability establishes the extent to which they remain consistent across different raters (Aaronson et al., 2002). The assessment of test–retest or inter-rater reliability was considered to be adequately undertaken if it was evaluated in a sample of at least 50 participants, and the intra-class correlation coefficient (ICC), weighted Cohen’s kappa or kappa coefficient was at least 0.70 (Terwee et al., 2007).
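For ordinal ratings made by two raters, one of the accepted statistics, weighted Cohen's kappa, can be sketched as follows. The sketch assumes disagreement weights of |i − j|/(k − 1), either linear or squared (quadratic). The function names, category coding and example ratings are hypothetical. The second function encodes the review's sample-size and threshold rule.

```python
def weighted_kappa(rater_a, rater_b, categories, weighting="quadratic"):
    """Weighted Cohen's kappa for two raters using ordered categories."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty rating lists")
    k = len(categories)
    if k < 2:
        raise ValueError("need at least two categories")
    index = {c: i for i, c in enumerate(categories)}
    n = len(rater_a)

    # Observed joint proportions of (rater A category, rater B category).
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(rater_a, rater_b):
        observed[index[a]][index[b]] += 1 / n
    row = [sum(observed[i]) for i in range(k)]                      # rater A marginals
    col = [sum(observed[i][j] for i in range(k)) for j in range(k)]  # rater B marginals

    def weight(i, j):
        # Disagreement weight: 0 on the diagonal, 1 for the most distant categories.
        d = abs(i - j) / (k - 1)
        return d if weighting == "linear" else d * d

    obs_dis = sum(weight(i, j) * observed[i][j] for i in range(k) for j in range(k))
    exp_dis = sum(weight(i, j) * row[i] * col[j] for i in range(k) for j in range(k))
    return 1 - obs_dis / exp_dis


def reliability_adequate(sample_size: int, coefficient: float) -> bool:
    """Review criterion: n >= 50 and ICC or (weighted) kappa >= 0.70."""
    return sample_size >= 50 and coefficient >= 0.70


# Hypothetical severity ratings (0-4) of six patients by two raters.
a = [0, 1, 2, 3, 4, 2]
b = [0, 1, 2, 4, 4, 1]
kw = weighted_kappa(a, b, categories=[0, 1, 2, 3, 4])
print(round(kw, 2), reliability_adequate(len(a), kw))  # n = 6 fails the n >= 50 rule
```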

Responsiveness

Responsiveness involves the ability of a measure to identify change over time (Aaronson et al., 2002). The assessment of responsiveness was considered to be adequately undertaken if it was evaluated in a sample of at least 50 participants and one of the following held: the smallest detectable change was less than the minimal important change; the minimal important change lay outside the limits of agreement; or the area under the receiver operating characteristic curve was at least 0.70 (Terwee et al., 2007).
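The three alternative responsiveness conditions can be sketched as follows. The sketch rests on several assumptions:

- The smallest detectable change (SDC) is estimated at the individual level as 1.96 × the standard deviation of test–retest differences in stable patients.
- The limits of agreement follow Bland and Altman (mean difference ± 1.96 SD).
- The area under the curve is the Mann–Whitney estimate, with larger change scores indicating more change.
- The minimal important change (MIC) is supplied from an external anchor.

All function names and data are hypothetical.

```python
from statistics import mean, stdev


def _differences(test, retest):
    if len(test) != len(retest) or len(test) < 2:
        raise ValueError("need paired scores for at least two stable patients")
    return [b - a for a, b in zip(test, retest)]


def smallest_detectable_change(test, retest):
    """Individual-level SDC: 1.96 x SD of test-retest differences (stable patients)."""
    return 1.96 * stdev(_differences(test, retest))


def limits_of_agreement(test, retest):
    """Bland-Altman limits: mean difference +/- 1.96 x SD of differences."""
    d = _differences(test, retest)
    m, s = mean(d), stdev(d)
    return m - 1.96 * s, m + 1.96 * s


def auc(changed, unchanged):
    """Mann-Whitney estimate of the ROC area: probability that a changed patient's
    change score exceeds an unchanged patient's, counting ties as one half."""
    wins = sum(
        1.0 if c > u else 0.5 if c == u else 0.0 for c in changed for u in unchanged
    )
    return wins / (len(changed) * len(unchanged))


def responsiveness_adequate(n, *, mic=None, sdc=None, loa=None, auc_value=None):
    """Review criterion: n >= 50 and at least one of SDC < MIC,
    MIC outside the limits of agreement, or AUC >= 0.70."""
    if n < 50:
        return False
    if mic is not None and sdc is not None and sdc < mic:
        return True
    if mic is not None and loa is not None and not (loa[0] <= mic <= loa[1]):
        return True
    return auc_value is not None and auc_value >= 0.70


# Hypothetical data: four stable patients retested, and change scores by group.
test, retest = [10, 12, 14, 16], [11, 12, 15, 16]
sdc = smallest_detectable_change(test, retest)
print(round(sdc, 2), limits_of_agreement(test, retest))
print(auc([3, 4, 5], [1, 2, 3]))
print(responsiveness_adequate(60, mic=2.0, sdc=sdc))  # hypothetical MIC of 2 points
```

Each condition asks, in a different way, whether a clinically important change is distinguishable from measurement error. This is why reliability data from stable patients feed into the SDC and the limits of agreement.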

Administrative/respondent burden

Respondent burden involves the time and comprehension skills required to complete the measure (Aaronson et al., 2002). Respondent burden was considered acceptable if the mean time required to complete the measure was less than 15 minutes, and the required comprehension level did not exceed that of a 12-year-old child. Administrative burden refers to the demands placed on those administering the measure (Aaronson et al., 2002). Administrative burden was considered acceptable if the mean time required to administer the measure was less than 15 minutes, and only straightforward arithmetic was required to calculate the measure’s scores.

Results

Identified studies

The search strategy yielded 545 potentially relevant studies. After screening of titles and abstracts, 87 full-text studies were retrieved and considered for inclusion in this review. We included 23 studies, which detailed 16 antipsychotic medication side effect assessment tools. The included tools were the Abnormal Involuntary Movement Scale (AIMS) (Guy, 1976), Akathisia Rating Scale (ARS) (Barnes, 1989), Antipsychotic Non-Neurological Side-Effects Rating Scale (ANNSERS) (Ohlsen et al., 2008), Approaches to Schizophrenia Communication–Self-Report (ASC-SR) (Dott et al., 2001), Approaches to Schizophrenia Communication–Clinic (ASC-C) (Dott et al., 2001), Arizona Sexual Experience Scale (ASES) (Byerly et al., 2006), Extrapyramidal Symptom Rating Scale (ESRS) (Chouinard et al., 1980), Extrapyramidal Side Effects Scale (ESES) (Simpson and Angus, 1970), Glasgow Antipsychotic Side-effect Scale (GASS) (Waddell and Taylor, 2008), Hillside Akathisia Scale (HAS) (Fleischhacker et al., 1989), Liverpool University Neuroleptic Side Effect Rating Scale (LUNSERS) (Day et al., 1995), Maryland Psychiatric Research Center Scale (MPRC) (Cassady et al., 1997), Nursing Extra Pyramidal Symptoms Assessment Scale (NEPSAS) (Fagan-Pryor and May, 2000), Prince Henry Hospital Akathisia Rating Scale (PHHARS) (Sachdev, 1994), Systematic Monitoring of Adverse events Related to TreatmentS (SMARTS) (Haddad et al., 2014b), and Yale Extrapyramidal Symptom Scale (YESS) (Mazure et al., 1995).

We identified a further 17 antipsychotic medication side effect assessment tools that were excluded for one of three reasons. Some were validated in a language other than English (De Haan et al., 2002; Gerlach et al., 1993; Kaneda, 2009; Kikuchi et al., 2011; Kim et al., 2002; Lako et al., 2013; Lindstrom et al., 2009; Lingjaerde et al., 1987; Loonen et al., 2000; Naber, 1995; Prieto et al., 2004; Wolters et al., 2006). Others were validated in a population of people with intellectual disabilities (Bodfish et al., 1997; Kalachnik and Sprague, 1993; Matson et al., 1998). The remainder were not specifically developed to assess antipsychotic medication side effects (Gaebel et al., 2010; Hogan et al., 1983; Mojtabai et al., 2012; Nielsen et al., 2012).

Types of antipsychotic medication side effect tools

Nine of the 16 antipsychotic medication side effect assessment tools included in this review assessed drug-induced movement disorders. Of these tools, one (AIMS) assessed only dyskinesia, one (ESES) assessed only Parkinsonism and three (ARS, HAS, PHHARS) assessed only akathisia. One tool (MPRC) assessed dyskinesia and Parkinsonism, one (YESS) assessed dyskinesia, Parkinsonism and dystonia, and one (NEPSAS) assessed akathisia, Parkinsonism and dystonia. Only one tool (ESRS) assessed the entire range of drug-induced movement disorders. All of the drug-induced movement disorder assessment tools were observer-rated.

Six of the remaining seven tools (ANNSERS, ASC-SR, ASC-C, LUNSERS, GASS, SMARTS) assessed both neurological (movement disorders) and non-neurological side effects. The latter commonly include sedation, weight gain, prolactinaemic problems, gastrointestinal problems and genitourinary problems. All of these six tools except the ASC-C and ANNSERS were self-rated. The final tool (ASES) specifically assessed sexual dysfunction and was also self-rated.

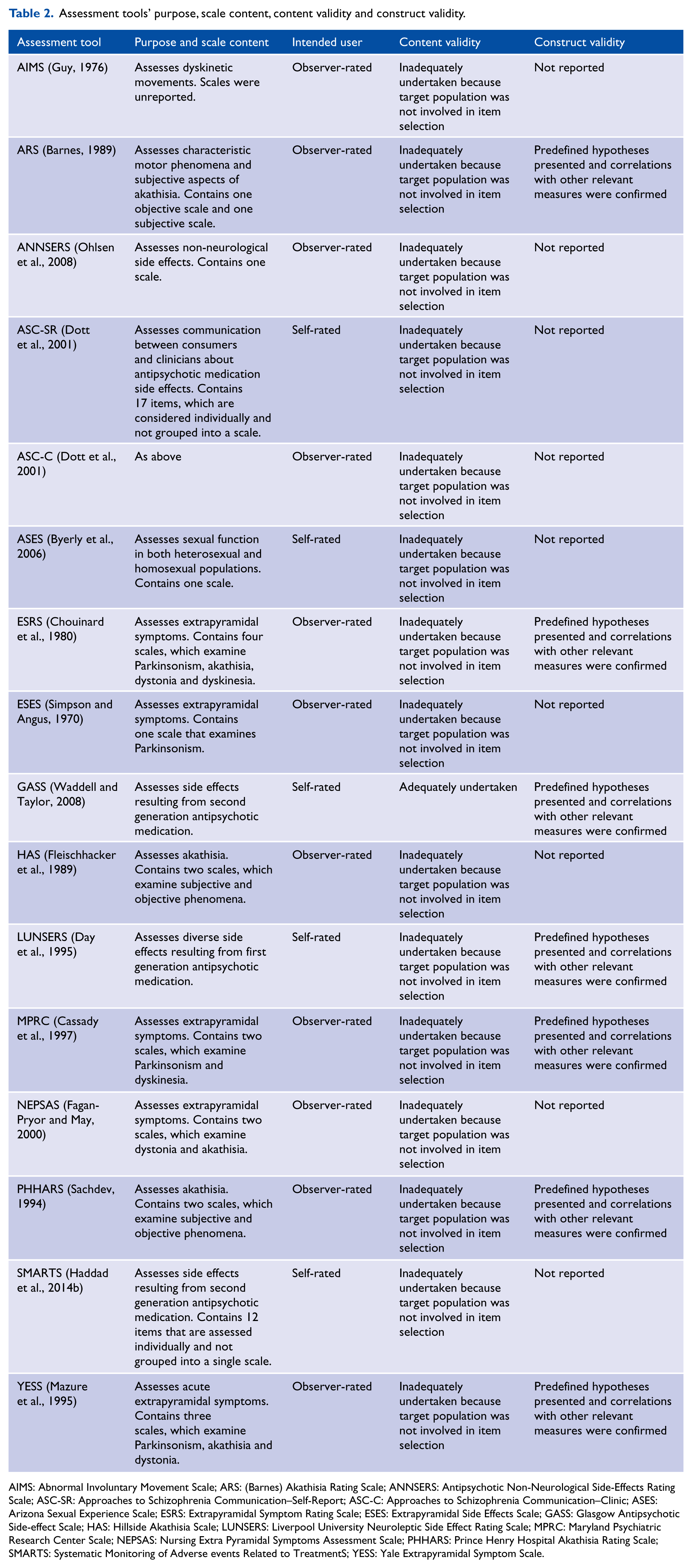

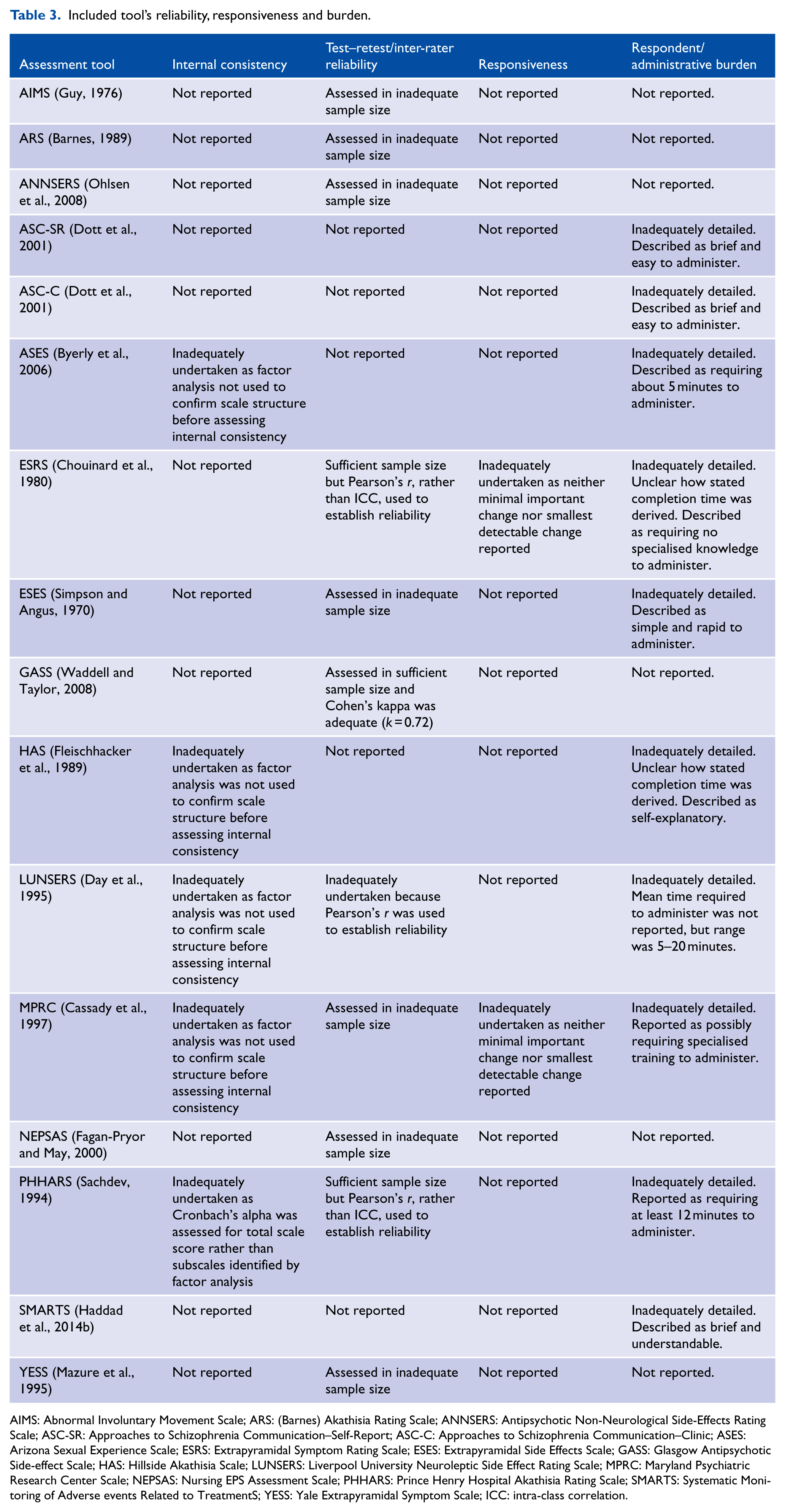

Evaluation of the psychometric properties of antipsychotic medication side effect assessment tools

Tables 2 and 3 display summarised details of the included tools’ purpose, scale content, intended user, content validity, construct validity, internal consistency, test–retest reliability, inter-rater reliability, responsiveness, respondent burden and administrative burden. Supplementary Tables S1–S4 display the full details that were extracted to rate each of these psychometric attributes, and Supplementary Table S5 displays the summarised ratings for the included tools. Supplementary figures and tables can be found online with this article at http://anp.sagepub.com/.

Table 2. Assessment tools’ purpose, scale content, content validity and construct validity.

AIMS: Abnormal Involuntary Movement Scale; ARS: (Barnes) Akathisia Rating Scale; ANNSERS: Antipsychotic Non-Neurological Side-Effects Rating Scale; ASC-SR: Approaches to Schizophrenia Communication–Self-Report; ASC-C: Approaches to Schizophrenia Communication–Clinic; ASES: Arizona Sexual Experience Scale; ESRS: Extrapyramidal Symptom Rating Scale; ESES: Extrapyramidal Side Effects Scale; GASS: Glasgow Antipsychotic Side-effect Scale; HAS: Hillside Akathisia Scale; LUNSERS: Liverpool University Neuroleptic Side Effect Rating Scale; MPRC: Maryland Psychiatric Research Center Scale; NEPSAS: Nursing Extra Pyramidal Symptoms Assessment Scale; PHHARS: Prince Henry Hospital Akathisia Rating Scale; SMARTS: Systematic Monitoring of Adverse events Related to TreatmentS; YESS: Yale Extrapyramidal Symptom Scale.

Table 3. Included tools’ reliability, responsiveness and burden.

AIMS: Abnormal Involuntary Movement Scale; ARS: (Barnes) Akathisia Rating Scale; ANNSERS: Antipsychotic Non-Neurological Side-Effects Rating Scale; ASC-SR: Approaches to Schizophrenia Communication–Self-Report; ASC-C: Approaches to Schizophrenia Communication–Clinic; ASES: Arizona Sexual Experience Scale; ESRS: Extrapyramidal Symptom Rating Scale; ESES: Extrapyramidal Side Effects Scale; GASS: Glasgow Antipsychotic Side-effect Scale; HAS: Hillside Akathisia Scale; LUNSERS: Liverpool University Neuroleptic Side Effect Rating Scale; MPRC: Maryland Psychiatric Research Center Scale; NEPSAS: Nursing Extra Pyramidal Symptoms Assessment Scale; PHHARS: Prince Henry Hospital Akathisia Rating Scale; SMARTS: Systematic Monitoring of Adverse events Related to TreatmentS; YESS: Yale Extrapyramidal Symptom Scale; ICC: intra-class correlation.

Content validity

Mental health consumers and experts were involved in item generation for only one (GASS) of the tools, and hence only this tool received a positive rating for content validity.

Construct validity

Construct validity was evaluated for eight tools (ANNSERS, ARS, ESRS, GASS, LUNSERS, MPRC, PHHARS, YESS), and in all cases the correlation coefficients were adequate and confirmed the predefined hypotheses. Hence, these tools received a positive rating for construct validity.

Internal consistency

Of the 12 tools that contained scales, internal consistency was assessed for only five (ASES, HAS, LUNSERS, MPRC, PHHARS). For four of these (ASES, HAS, LUNSERS, MPRC), factor analysis was not used to establish the scales’ dimensions. For the one tool (PHHARS) whose scale dimensions were established by factor analysis, Cronbach’s alpha was not calculated for the identified subscales. Consequently, no tool received a positive rating for internal consistency.

Test–retest/inter-rater reliability

Among the studies that assessed test–retest or inter-rater reliability, an adequate sample size was used for only three tools (ESRS, GASS, PHHARS). Of these, an appropriate statistical approach to establishing reliability was used for only one tool (GASS), which was therefore the only tool to receive a positive reliability rating.

Responsiveness

The assessment of responsiveness was not adequately undertaken for any tool included in this review.

Respondent/administrative burden

The reporting of administrative or respondent burden was not adequately detailed for any tool included in this review.

Discussion

The overall quality of the psychometric properties of the antipsychotic medication side effect assessment tools included in this systematic review was very modest. Foremost among the issues that need to be addressed in subsequent validation studies is a re-evaluation of the tools’ content validity. Only one of the tools (GASS) reviewed here incorporated the views of mental health consumers in generating the tool’s items. However, both the target population and clinicians’ views need to be elicited in deriving the items to ensure that the content reflects all pertinent aspects of the constructs captured by the tool (Reeve et al., 2013; Terwee et al., 2007). The reassessment of the tools’ content validity should be prioritised because evaluations of construct validity, reliability and responsiveness are immaterial until content validity has been acceptably established (Evans et al., 2004; Terwee et al., 2007).

Once content validity has been clearly established, a statistical approach should be used to determine whether the items tap a single scale or form more than one scale, and whether some items are redundant (Aaronson et al., 2002). Acceptable statistical approaches to examine dimensionality include factor analysis, principal components analysis and Rasch analysis (Terwee et al., 2007). After such approaches have confirmed the tool’s dimensions, Cronbach’s alpha can be derived to establish whether the correlation between items is high enough to justify summing the item scores (Terwee et al., 2007). For the tools included in this review, a statistical approach examining dimensionality was used in only five cases (ASES, ESRS, ESES, MPRC, PHHARS). Even then, the items were appropriately grouped into the identified scales for only one tool (MPRC). This finding indicates that additional studies are required to establish the structure of most of the tools included in this review.

Reliability was assessed for almost all tools included in this review, but only in one case (GASS) was the evaluation of reliability consistent with recommended guidelines (Barten et al., 2012; Reeve et al., 2013; Terwee et al., 2007). The most common issue, found in 11 studies examining the tools’ reliability, was an inadequately sized sample. Reliability studies with too few participants yield unacceptably imprecise reliability estimates (Terwee et al., 2007). Another common issue was the use of Pearson correlation coefficients to establish reliability. These coefficients do not account for systematic differences between raters or between administrations, and can therefore overstate reliability (Mokkink et al., 2010; Reeve et al., 2013). Finally, in several studies, reliability coefficients were calculated for individual items (in some cases for only a subset of items) rather than for overall scale scores. In summary, our findings indicate that reliability should be reassessed for all tools included in this review apart from the GASS.

Responsiveness was assessed for only one of the tools included in this review, despite the developers of many of the tools claiming that the tools would be useful for monitoring changes in antipsychotic medication side effects. Reliability was commonly evaluated for the tools included in this review, and the tools’ developers may have assumed that establishing reliability also supports a tool’s ability to track change over time. However, reliability relates to the extent to which a tool’s scores remain consistent when it is administered over a period in which the construct under evaluation (e.g. akathisia or dyskinesia) has not changed (Barten et al., 2012; Terwee et al., 2007). By contrast, responsiveness establishes a tool’s ability to detect change in the construct under evaluation over time (Barten et al., 2012; Terwee et al., 2007). Reliability and responsiveness are therefore distinct psychometric properties that require different statistical approaches (Barten et al., 2012).

Limitations

Our search strategy identified a substantial number of antipsychotic medication side effect assessment tools. However, the sensitivity and specificity of search strategies for identifying side effect assessment tools have not been formally evaluated, so it is likely that not all relevant tools were located. Also, titles and abstracts were screened by only one author, which increases the likelihood that relevant studies were overlooked. Finally, the critical appraisal criteria used in this study were based on the recommendations of the Medical Outcomes Trust (Aaronson et al., 2002) and Terwee et al. (2007). As these authors note, however, the criteria are generally derived from ‘useful rules of thumb’, and no empirical evidence exists to support their use.

Conclusion

In general, the psychometric properties of the antipsychotic medication side effect tools included in this review were deficient in almost every regard. None of the tools included in this review received a positive rating for all of the appraised psychometric properties. Indeed, only one of the tools received more than one positive rating, which indicates that further studies are required to consolidate the psychometric quality of the tools included in this study. Given this context, and the need to develop evidence-based health care for mental health consumers, the findings of this systematic review may be of interest to policy makers and practitioners and to the consumers they serve.

Footnotes

Declaration of interest

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.