Abstract

Artificial intelligence (AI) has rapidly emerged as a transformative force in surgical research, driving innovation in preoperative planning, intraoperative guidance, workflow optimization, and academic productivity. Advances in computational power and multimodal data have expanded applications beyond retrospective analytics and predictive modeling to real-time clinical decision support. Current use cases span AI-assisted three-dimensional planning, automated scheduling, and ambient scribe technology. Simultaneously, large language models are increasingly applied to literature synthesis, data analysis, and manuscript development. Yet, this rapid integration raises critical ethical concerns, including algorithmic bias, data privacy, model opacity, and issues of accountability and generalizability. This review synthesizes recent technological advancements and examines these ethical challenges, proposing structured frameworks for responsible implementation. By embedding principles of transparency, equity, and patient safety, the surgical research community can ensure AI innovation translates into meaningful and trustworthy improvements in care.

Introduction

Artificial intelligence is reshaping surgical research and practice, moving from theoretical promise to demonstrable clinical and academic utility. Intraoperatively, AI-assisted planning is reducing operative times and improving early functional outcomes.1,2 Outside the operating room, AI tools such as ambient scribe systems and machine learning (ML)-driven scheduling algorithms streamline workflows, reduce documentation burden, and optimize resource allocation. In the academic domain, large language models (LLMs) accelerate literature reviews, hypothesis generation, and manuscript drafting, lowering barriers to scientific contribution.

Widespread and rapid adoption of AI has simultaneously presented new ethical challenges. Algorithms may perpetuate biases embedded in their training data, and their decision-making processes often lack transparency. Because these tools frequently draw on sensitive patient data, their use raises significant concerns regarding privacy and consent. Generative AI in scientific writing also disrupts traditional concepts of authorship, intellectual integrity, and ownership of ideas. Addressing these issues requires proactive governance that balances innovation with the core ethical principles of beneficence, justice, autonomy, and nonmaleficence. 3

This review highlights the dual narrative of AI in surgical research, namely its rapid technological progress and the accompanying ethical implications, and proposes a framework for responsible integration into surgical logistics, research, and practice.

Recent AI Advancements in Surgical Logistics

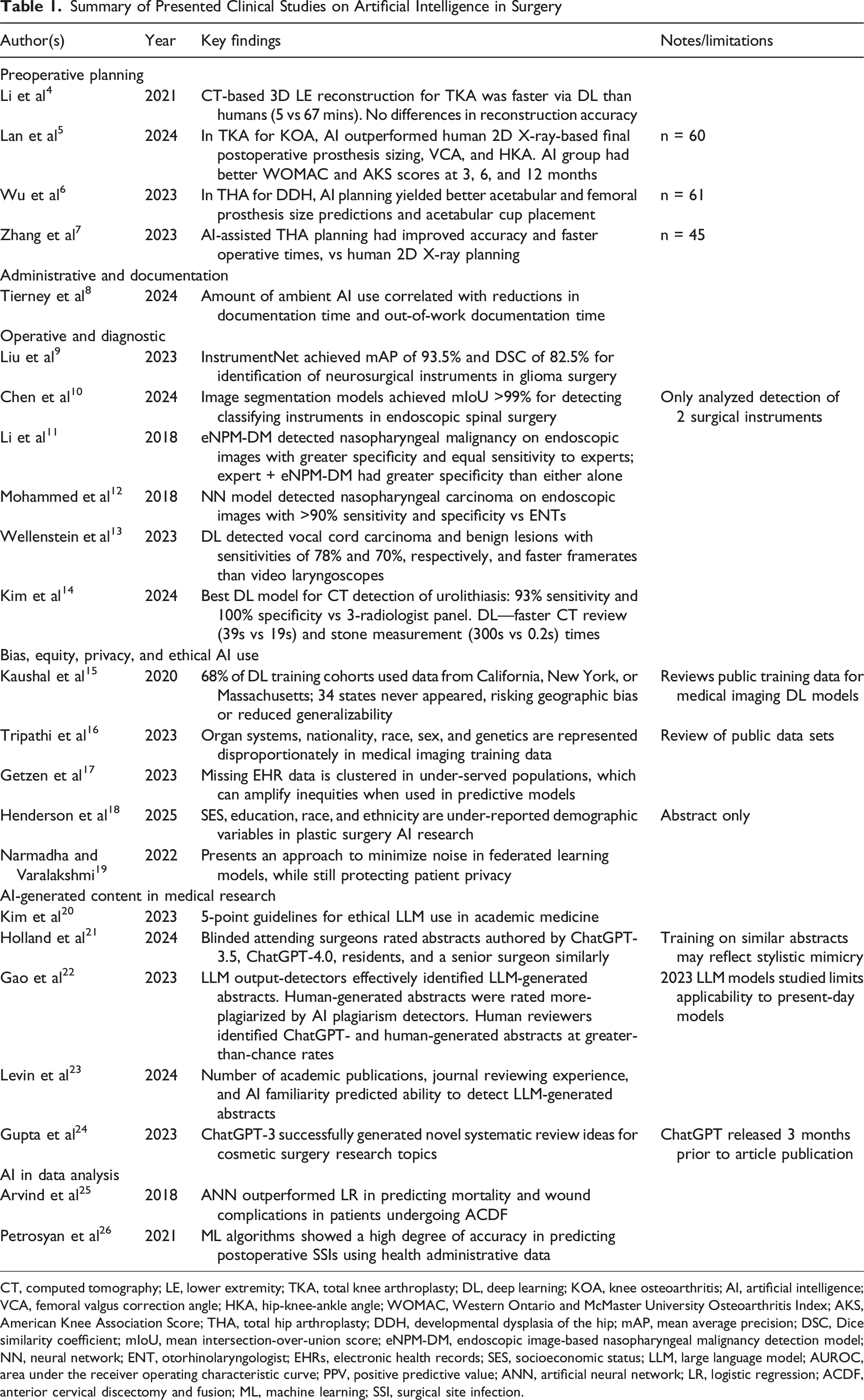

Summary of Presented Clinical Studies on Artificial Intelligence in Surgery

CT, computed tomography; LE, lower extremity; TKA, total knee arthroplasty; DL, deep learning; KOA, knee osteoarthritis; AI, artificial intelligence; VCA, femoral valgus correction angle; HKA, hip-knee-ankle angle; WOMAC, Western Ontario and McMaster Universities Osteoarthritis Index; AKS, American Knee Society Score; THA, total hip arthroplasty; DDH, developmental dysplasia of the hip; mAP, mean average precision; DSC, Dice similarity coefficient; mIoU, mean intersection-over-union score; eNPM-DM, endoscopic image-based nasopharyngeal malignancy detection model; NN, neural network; ENT, otorhinolaryngologist; EHRs, electronic health records; SES, socioeconomic status; LLM, large language model; AUROC, area under the receiver operating characteristic curve; PPV, positive predictive value; ANN, artificial neural network; LR, logistic regression; ACDF, anterior cervical discectomy and fusion; ML, machine learning; SSI, surgical site infection.

Preoperative Planning

Orthopedics offers a clear example of efficient AI-driven preoperative planning. Traditional two-dimensional imaging limits accuracy in estimating implant size and alignment. Deep learning now enables 3D reconstructions that improve precision, reduce planning and operative time, and enhance outcomes. For example, AI-guided CT reconstructions for robot-assisted total knee arthroplasty significantly shortened planning time while maintaining accuracy comparable to expert operators. Artificial intelligence-based planning also outperforms conventional methods in predicting prosthesis size, alignment, and correction angles, leading to superior functional outcomes.4,5 Similarly, Wu et al used AI-assisted 3D planning software for patients with developmental dysplasia of the hip undergoing total hip arthroplasty. 6 Artificial intelligence-assisted 3D planning improved the accuracy of acetabular and femoral prosthesis placement, reduced operative time, and yielded higher postoperative Harris scores, reflecting improved functional recovery. 7 Collectively, these findings underscore AI’s capacity to transform complex imaging data into individualized, efficient, and highly accurate surgical plans.

Administrative Efficiency

Artificial intelligence has also been adopted to reduce administrative burden, a major driver of physician burnout. 27 Ambient AI scribe technology has emerged as an innovative solution to this problem. In 2023, The Permanente Medical Group enabled the use of ambient AI scribes for more than 10 000 physicians and staff. 8 Within 10 weeks, the technology was used in over 300 000 patient encounters, with physicians reporting significant reductions in after-hours administrative work, enhanced quality of patient interactions, and improved overall satisfaction. Patient feedback was similarly favorable, with many patients perceiving improvements in the depth and personalized nature of their clinical encounters. Quantitative evaluation showed that the ambient AI scribe produced high-quality clinical documentation and was associated with reduced time spent in the electronic health record (EHR).

Artificial intelligence is also optimizing surgical scheduling, historically plagued by inaccurate time estimates. Machine learning-based scheduling algorithms that account for case duration patterns, surgeon-specific factors, and procedural complexity have been shown to improve the accuracy of operative time predictions. At one institution, the application of such a system decreased overtime rates by 21% while maintaining overall surgical volume, yielding an estimated $469,000 in cost savings over three years. 28 In the summer of 2024, Tampa General Hospital launched a new surgical operations system that uses cameras and AI to precisely determine schedule-pertinent data such as case durations and turnover times, saving the hospital more than 3000 minutes per week and allowing it to schedule hundreds of additional procedures that otherwise could not have been offered. 29 These advances highlight AI’s potential to reduce inefficiencies, lower costs, and allow surgeons to focus more on patient care.

Preoperative Diagnosis, Intraoperative Guidance, and Surgeon Feedback

Artificial intelligence is increasingly applied intraoperatively through real-time video segmentation, which improves visualization of instruments in complex operative fields and may thereby improve surgical accuracy. Image segmentation models can localize surgical instruments precisely and in real time. Liu et al proposed a deep learning surgical instrument segmentation model, InstrumentNet, that demonstrated high accuracy on benchmark data sets in the context of craniotomy for glioma surgery. 9 Similarly, Chen et al reported the potential of another highly accurate deep learning instrument segmentation model to be used in spinal endoscopic surgeries. 10 Such models can augment surgical environmental awareness and guide intraoperative navigation.

Artificial intelligence has also shown strong performance in surgical diagnostics. Algorithms for nasopharyngeal carcinoma and laryngeal lesions using transnasal endoscopic images have achieved diagnostic accuracies comparable to or exceeding expert clinicians, often at real-time processing speeds.11,12 One specific algorithm developed at Sun Yat-sen University Cancer Center in China was trained on a data set of 19 000 biopsy-proven images from 5557 patients and outperformed fully trained physicians in the diagnostic classification of nasopharyngeal malignant masses. 11 In addition to single-frame image analysis, AI algorithms have been created to localize and identify benign and malignant laryngeal lesions on video endoscopy in real time. One such algorithm developed by a group of researchers from the Netherlands processed video at a frame rate of 63 frames per second, made decisions in 16 milliseconds per frame, and had malignancy detection sensitivities ranging from 71% to 78%. 13 In urology, deep learning models have achieved 95% accuracy in detecting urolithiasis on CT scans, outperforming specialists in speed. 14 These applications support earlier detection, reduced diagnostic error, and timely intervention through AI. Beyond patient outcomes, AI-driven virtual reality simulators provide individualized feedback, while visual question-answering systems offer real-time intraoperative guidance for trainees.30,31 Such innovations suggest AI may not only enhance surgical precision and efficiency but also safeguard surgeon performance and accelerate training.

AI in Surgical Research and Academic Publishing

Artificial intelligence is being rapidly adopted in surgical research, with LLMs and ML tools increasingly integrated into literature review, study design, data extraction, and manuscript preparation. Surveys across medical specialties demonstrate widespread adoption, with nearly half of urologists and one-third of postdoctoral researchers reporting use of LLMs for academic tasks.32,33 For surgeon-investigators, the meaningful impact of AI lies not in abstract technological potential but in its practical applications to common study formats, such as retrospective cohort analyses, National Surgical Quality Improvement Program (NSQIP) reviews, institutional electronic medical record (EMR) studies, and quality-improvement (QI) projects.

AI in Study Design

Artificial intelligence can support study conception by assisting with identifying literature gaps, refining hypotheses, and protocol planning. Topic-modeling algorithms such as latent Dirichlet allocation can rapidly analyze thousands of publications, highlighting patterns, emerging themes, and understudied “cold spots” in the literature.34,35 These tools help surgeon-scientists avoid duplicative work and design studies that contribute meaningful novelty, particularly in fields where terminology is inconsistent across subspecialties.
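To make the topic-modeling step concrete, the following is a minimal from-scratch sketch of latent Dirichlet allocation fitted by collapsed Gibbs sampling, written in plain Python. It is illustrative only: the function name `lda_gibbs`, the hyperparameters `alpha` and `beta`, and the four toy "abstracts" are assumptions made for this example and are not drawn from the cited studies. A real literature analysis would typically run a maintained library implementation (for example, scikit-learn's `LatentDirichletAllocation`) over thousands of documents.

```python
import random
from collections import Counter


def lda_gibbs(docs, n_topics, n_iter=200, alpha=0.1, beta=0.01, seed=0):
    """Toy latent Dirichlet allocation via collapsed Gibbs sampling.

    docs is a list of tokenized documents (lists of words).
    Returns per-document topic counts and per-topic word counts.
    """
    rng = random.Random(seed)
    n_vocab = len({w for doc in docs for w in doc})
    doc_topic = [[0] * n_topics for _ in docs]          # tokens per (document, topic)
    topic_word = [Counter() for _ in range(n_topics)]   # tokens per (topic, word)
    topic_total = [0] * n_topics                        # tokens per topic
    assign = []                                         # current topic of every token

    # Start from a random topic assignment for every token.
    for d, doc in enumerate(docs):
        row = []
        for w in doc:
            k = rng.randrange(n_topics)
            row.append(k)
            doc_topic[d][k] += 1
            topic_word[k][w] += 1
            topic_total[k] += 1
        assign.append(row)

    for _ in range(n_iter):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Remove the token from the counts ...
                k = assign[d][i]
                doc_topic[d][k] -= 1
                topic_word[k][w] -= 1
                topic_total[k] -= 1
                # ... then resample its topic conditional on all other tokens.
                weights = [
                    (doc_topic[d][t] + alpha)
                    * (topic_word[t][w] + beta)
                    / (topic_total[t] + beta * n_vocab)
                    for t in range(n_topics)
                ]
                k = rng.choices(range(n_topics), weights=weights)[0]
                assign[d][i] = k
                doc_topic[d][k] += 1
                topic_word[k][w] += 1
                topic_total[k] += 1
    return doc_topic, topic_word


# Hypothetical, hand-written token lists standing in for article abstracts.
abstracts = [
    "knee arthroplasty implant alignment planning".split(),
    "hip arthroplasty implant planning accuracy".split(),
    "scribe documentation burnout workflow".split(),
    "scheduling workflow documentation efficiency".split(),
]
doc_topic, topic_word = lda_gibbs(abstracts, n_topics=2)
for k, counts in enumerate(topic_word):
    print("topic", k, [w for w, _ in counts.most_common(3)])
```

Topics that attract few documents in `doc_topic` are one rough signal of the understudied areas described above, although on a corpus this small the output mainly demonstrates the mechanics.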

Bibliometric and literature-mapping platforms (eg, ResearchRabbit) further assist by visualizing citation networks, clustering conceptually related articles, and identifying influential works from a single seed paper. 36 Such tools significantly reduce the time required to assemble a comprehensive background and can help refine clinical questions using registry-level or outcomes-based data. Large language models also support protocol drafting by suggesting inclusion criteria, variable definitions, and conceptual statistical approaches, as long as their outputs are independently validated.

AI in Data Analysis

Artificial intelligence has become particularly impactful in data-rich research environments, including NSQIP analyses, EMR-derived retrospective cohorts, and institutional QI studies. Predictive analytics using artificial neural networks or other ML algorithms frequently outperform traditional logistic regression in predicting outcomes such as postoperative complications or morbidity.25,26 Natural language processing (NLP) tools can extract comorbidities, complications, or time-stamped events from free-text notes, transforming workflows previously limited by manual chart review. This capability is especially valuable in trauma, oncology, and acute care surgery, where clinically meaningful variables (eg, operative findings, complications, and symptom descriptions) often appear primarily in narrative documentation. 37
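As a rough illustration of extracting structured variables from narrative notes, the sketch below uses keyword patterns with a simple negation check. This is a deliberately simplistic rule-based stand-in, not one of the NLP systems in the cited studies: the term lists, the negation cues, the function name `extract_complications`, and the example note are all hypothetical, and a real project would rely on a validated clinical vocabulary and a properly evaluated model.

```python
import re

# Hypothetical term lists, for illustration only.
COMPLICATION_PATTERNS = {
    "surgical_site_infection": r"surgical site infection|wound infection|\bSSI\b",
    "anastomotic_leak": r"anastomotic leak",
    "pneumonia": r"pneumonia",
}

# A negation cue earlier in the same sentence suppresses the match.
NEGATION = re.compile(r"\b(no|denies|without|negative for)\b[^.;]*$", re.IGNORECASE)


def extract_complications(note):
    """Return the set of complication labels asserted (not negated) in a note."""
    found = set()
    # Split into sentences after a full stop or semicolon.
    for sentence in re.split(r"(?<=[.;])\s+", note):
        for label, pattern in COMPLICATION_PATTERNS.items():
            for match in re.finditer(pattern, sentence, re.IGNORECASE):
                preceding_text = sentence[: match.start()]
                if not NEGATION.search(preceding_text):
                    found.add(label)
    return found


note = "POD 3: wound infection at incision. No evidence of pneumonia. Patient denies fever."
print(extract_complications(note))
```

Even in this toy form, the negation check shows why manual spot-checking of extracted variables remains necessary: a mention of a complication is not the same as its occurrence.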

Large language models equipped with code-execution environments can assist with exploratory analysis, data cleaning, and generation of preliminary statistical output. These tools reduce technical barriers for investigators without formal programming training, though rigorous oversight remains essential.15,38 Surgeons must verify model performance, assess bias, and ensure appropriate handling of missingness, class imbalance, and covariate structure. As these tools accelerate data-driven discovery, they may also shift research priorities toward more computationally tractable questions, underscoring the need for methodological literacy among surgeon-scientists.
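Two of the checks named above, missingness and class imbalance, can be run before any model is fitted. The following is a minimal sketch under stated assumptions: the helper `audit_dataset`, the variable names, and the four example records are hypothetical and exist only to show the idea.

```python
def audit_dataset(rows, outcome):
    """Return per-variable missingness and the prevalence of a binary outcome.

    rows is a list of dicts; a value of None (or an absent key) counts as missing.
    """
    n = len(rows)
    variables = sorted({key for row in rows for key in row})
    missing = {
        var: sum(row.get(var) is None for row in rows) / n for var in variables
    }
    observed = [row[outcome] for row in rows if row.get(outcome) is not None]
    prevalence = sum(observed) / len(observed)
    return missing, prevalence


# Hypothetical records; "ssi" marks surgical site infection (1 = yes, 0 = no).
records = [
    {"age": 64, "albumin": 3.9, "ssi": 0},
    {"age": 71, "albumin": None, "ssi": 0},
    {"age": 58, "albumin": 4.1, "ssi": 1},
    {"age": 49, "albumin": None, "ssi": 0},
]
missing, prevalence = audit_dataset(records, outcome="ssi")
print(missing)
print(prevalence)
```

A variable that is missing in half of the records, or an outcome present in only a small fraction of cases, should prompt an explicit decision about imputation, resampling, or the choice of performance metric rather than silent acceptance of whatever an automated tool produces.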

Ethical Implications of AI-Driven Surgical Research

Bias, Equity, and Data Set Limitations

Artificial intelligence models inevitably reflect the data sets on which they are trained. Within surgical research, underrepresentation of racial minorities, low-income populations, and patients with complex social determinants of health leads to biased predictions and limited generalizability.16-18 Because many surgical AI studies do not report socioeconomic variables, the risk of propagating inequity is substantial.39,40 Investigators should disclose data set composition, identify potential sources of bias, and interpret findings with transparency regarding limitations. Institutional review boards (IRBs) and journals should prioritize clear reporting standards and encourage the use of diverse or federated data sets when feasible.

“Black Box” Problem and Interpretability

Opaque models challenge informed consent, clinical trust, and scientific transparency, 41 and this “black box” nature also complicates accountability. 42 Explainable AI (XAI) techniques offer partial solutions, improving trust and collaboration. 43 The term encompasses any technique in AI/ML research that increases the interpretability of results, such as saliency maps, similarity classification, internal representation modeling, and, more recently with the surge of LLMs, written model explanations. 44 These methods provide deeper insight into why AI/ML models reach particular decisions and which parts of the input they rely on to generate an output. Explainable AI also helps ameliorate concerns about model accuracy by showing users how a model maps inputs to outputs.44,45 Surgeons using AI models in research must understand model limitations and be prepared to justify outputs using clinically meaningful reasoning. 46

Data Privacy and Governance

Artificial intelligence-driven research requires sensitive patient data, raising concerns about privacy, ownership, and security. Health Insurance Portability and Accountability Act (HIPAA) de-identification guidelines are insufficient in the AI era, as cross-linking multiple data sets can re-identify patients.19,42,47,48 Federated learning provides one feasible alternative by allowing models to train locally on protected institutional data, with only model updates rather than patient records shared between sites, but broader governance updates are needed.49,50 Open-source tools for running LLMs locally, such as Ollama (ollama.org LLC, Fort Lauderdale, FL), can be easily downloaded and hosted on institutional computers and servers, allowing LLMs to be used on protected data that would otherwise raise privacy concerns if sent to external services. Surgeons conducting EMR-based research should work with institutional privacy officers to ensure secure storage, transparent patient consent practices, and data-use agreements that reflect modern risks.
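The federated learning idea can be sketched in a few lines. The example below assumes federated averaging of a one-variable linear model across two hypothetical sites: each site runs gradient descent on its own records, and only the fitted weights and the sample count leave the site. The function names, learning rate, and synthetic data (generated from y = 1 + 2x) are assumptions for illustration; production systems add secure aggregation, authentication, and far richer models.

```python
def local_update(weights, data, lr=0.1, epochs=20):
    """Refine the shared model y = w0 + w1 * x on one site's private data."""
    w0, w1 = weights
    n = len(data)
    for _ in range(epochs):
        # Gradient of the mean squared error (halved) with respect to w0 and w1.
        g0 = sum((w0 + w1 * x - y) for x, y in data) / n
        g1 = sum((w0 + w1 * x - y) * x for x, y in data) / n
        w0 -= lr * g0
        w1 -= lr * g1
    # Only the weights and the sample count are returned, never the records.
    return (w0, w1), n


def federated_average(updates):
    """Combine site models, weighting each by its number of samples."""
    total = sum(n for _, n in updates)
    return tuple(sum(w[i] * n for w, n in updates) / total for i in range(2))


def train(sites, rounds=50):
    weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(weights, data) for data in sites]
        weights = federated_average(updates)
    return weights


# Synthetic data for two hypothetical institutions, both following y = 1 + 2x.
site_a = [(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]
site_b = [(1.5, 4.0), (2.0, 5.0)]
w0, w1 = train([site_a, site_b])
print(round(w0, 3), round(w1, 3))
```

The coordinating server sees model parameters only, which is the privacy property described above. Model updates can still leak information in some settings, which is one reason broader governance remains necessary.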

Authorship, Accountability, and Academic Integrity

Scientific Writing

Large language models are increasingly used for text generation, figure preparation, coding, and summarization. While these tools can improve clarity and accessibility, they introduce risks of hallucinated content, fabricated citations, plagiarism, and unintentional appropriation of others’ intellectual work, raising questions regarding liability.42,51,52 Although present frameworks remain underdeveloped, authors ultimately remain fully responsible for verifying all AI-assisted output.20,53 The United States Food and Drug Administration (FDA) guidance on Software as a Medical Device offers vague recommendations regarding AI accountability, while the European Union’s 2024 AI Act mandates human oversight. 21 Regulatory clarity will be necessary to ensure fair and safe allocation of responsibility.

ChatGPT and similar LLMs are powerful tools for scientific writing. One study investigated the ability of ChatGPT versions 3.5 and 4.0 to both write and grade surgical research abstracts and found no statistically significant difference in the grades that senior surgeons assigned to LLM- and trainee-written abstracts. 22 The ability to distinguish AI-produced work from human writing improved only slightly with reviewer experience and with assistance from simple AI-detection tools; in each case, the discrimination rate remained no better than a coin flip.23,54 While proponents argue that LLMs democratize access to scientific writing and may streamline peer review, unchecked use risks undermining authorship, peer review, and intellectual property norms.24,55

However, the flaws associated with LLM use, including hallucinations, biased training data, and plagiarism of existing work, are well documented, and disclaimers about their use are readily available.56-58 Until further guidelines are developed concerning the responsible use of AI/ML tools in research and scientific writing, best practices for surgeon-investigators include the following: logging all prompts and AI-assisted steps; independently validating facts, references, and data outputs; using LLMs only to augment (not replace) analytic reasoning; and explicitly disclosing all AI contributions in accordance with ICMJE, WAME, CONSORT-AI, and SPIRIT-AI recommendations.
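The first of these practices, logging prompts and AI-assisted steps, needs very little tooling. The sketch below is one possible structure, not a format prescribed by ICMJE, WAME, or any journal: the field names, the helper functions, and the tool name "example-llm" are assumptions made for illustration.

```python
import hashlib
import json
from datetime import datetime, timezone


def log_ai_step(log, tool, version, purpose, prompt, output, verified_by=None):
    """Append one AI-assisted step to an in-memory audit log and return the entry."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "version": version,
        "purpose": purpose,
        "prompt": prompt,
        # A hash ties the entry to the exact output without storing long text here.
        "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
        "verified_by": verified_by,
    }
    log.append(entry)
    return entry


def unverified_steps(log):
    """Entries that no human has yet checked against primary sources."""
    return [entry for entry in log if entry["verified_by"] is None]


def to_jsonl(log):
    """Serialize the log as JSON Lines for archiving alongside the manuscript."""
    return "\n".join(json.dumps(entry, sort_keys=True) for entry in log)


audit_log = []
log_ai_step(
    audit_log,
    tool="example-llm",            # hypothetical tool name
    version="2025-01",             # hypothetical version label
    purpose="summarize background literature",
    prompt="Summarize recent evidence on AI-assisted arthroplasty planning.",
    output="(model output text would go here)",
)
print(len(unverified_steps(audit_log)))
print(to_jsonl(audit_log))
```

A log of this kind supports disclosure directly: the tool, version, and prompts are already recorded, and any entry without a named verifier flags content that still needs independent validation.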

Peer Review

Large language models can also benefit peer review: ChatGPT’s ability to grade surgical abstracts could be harnessed to speed up the review process for publication. 22 Both authors and reviewers, however, have an ethical obligation to supervise diligently how such tools are used. The scenario to avoid is one in which journals use AI review tools to evaluate and provide feedback on work that is itself primarily AI-generated, which would ultimately render authentic scientific writing and peer review obsolete.

Taken together, these limitations can make the use of LLMs and AI tools in writing seem daunting, but the considerations above are imperative to guide ethical use. Independent verification of all claims and supporting references generated by LLMs is crucial for safeguarding academic integrity and preventing accidental plagiarism, and full disclosure of AI use should become the norm for academic journals.

Generalizability and Model Robustness

Many AI tools fail during external validation because of limited data set diversity, inconsistent labeling, or institution-specific practice patterns.59,60 Surgeon-scientists must assess overfitting, calibrate models across relevant subgroups, and apply cross-validation or regularization where appropriate. 61 Poorly generalizable models risk patient harm; ethical development therefore requires thoughtful design, transparent reporting, and independent external validation before research is translated into clinical environments.
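Checking calibration across subgroups can start with a simple comparison of mean predicted risk against the observed event rate within each group (often called calibration-in-the-large). The sketch below assumes a hypothetical list of (subgroup, predicted probability, observed outcome) records; the function name and the example values are illustrative and do not come from the cited studies.

```python
from collections import defaultdict


def calibration_by_subgroup(records):
    """Compare mean predicted risk with the observed event rate per subgroup.

    records is an iterable of (subgroup, predicted_probability, outcome),
    where outcome is 1 if the event occurred and 0 otherwise.
    """
    totals = defaultdict(lambda: {"n": 0, "predicted": 0.0, "observed": 0})
    for subgroup, probability, outcome in records:
        group = totals[subgroup]
        group["n"] += 1
        group["predicted"] += probability
        group["observed"] += outcome

    report = {}
    for subgroup, group in totals.items():
        mean_predicted = group["predicted"] / group["n"]
        observed_rate = group["observed"] / group["n"]
        report[subgroup] = {
            "n": group["n"],
            "mean_predicted": mean_predicted,
            "observed_rate": observed_rate,
            # Positive gap: risk is overestimated; negative gap: underestimated.
            "gap": mean_predicted - observed_rate,
        }
    return report


# Hypothetical predictions for two patient subgroups.
predictions = [
    ("site_A", 0.2, 0), ("site_A", 0.4, 1),
    ("site_B", 0.5, 1), ("site_B", 0.5, 0),
]
for subgroup, row in calibration_by_subgroup(predictions).items():
    print(subgroup, row)
```

A model whose gap is near zero overall but large in one subgroup is miscalibrated for exactly the patients an aggregate metric would hide, which is the failure mode external validation is meant to expose.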

Frameworks for Ethical AI in Surgical Research

The ethical considerations outlined above underscore the need for structured frameworks to guide the responsible use of AI/ML in surgical research. As AI technology evolves rapidly, developing such frameworks is challenging yet paramount. While this review does not propose prescriptive guidelines, we highlight key avenues grounded in transparency, accountability, collaboration, and justice.

Current Regulatory Frameworks

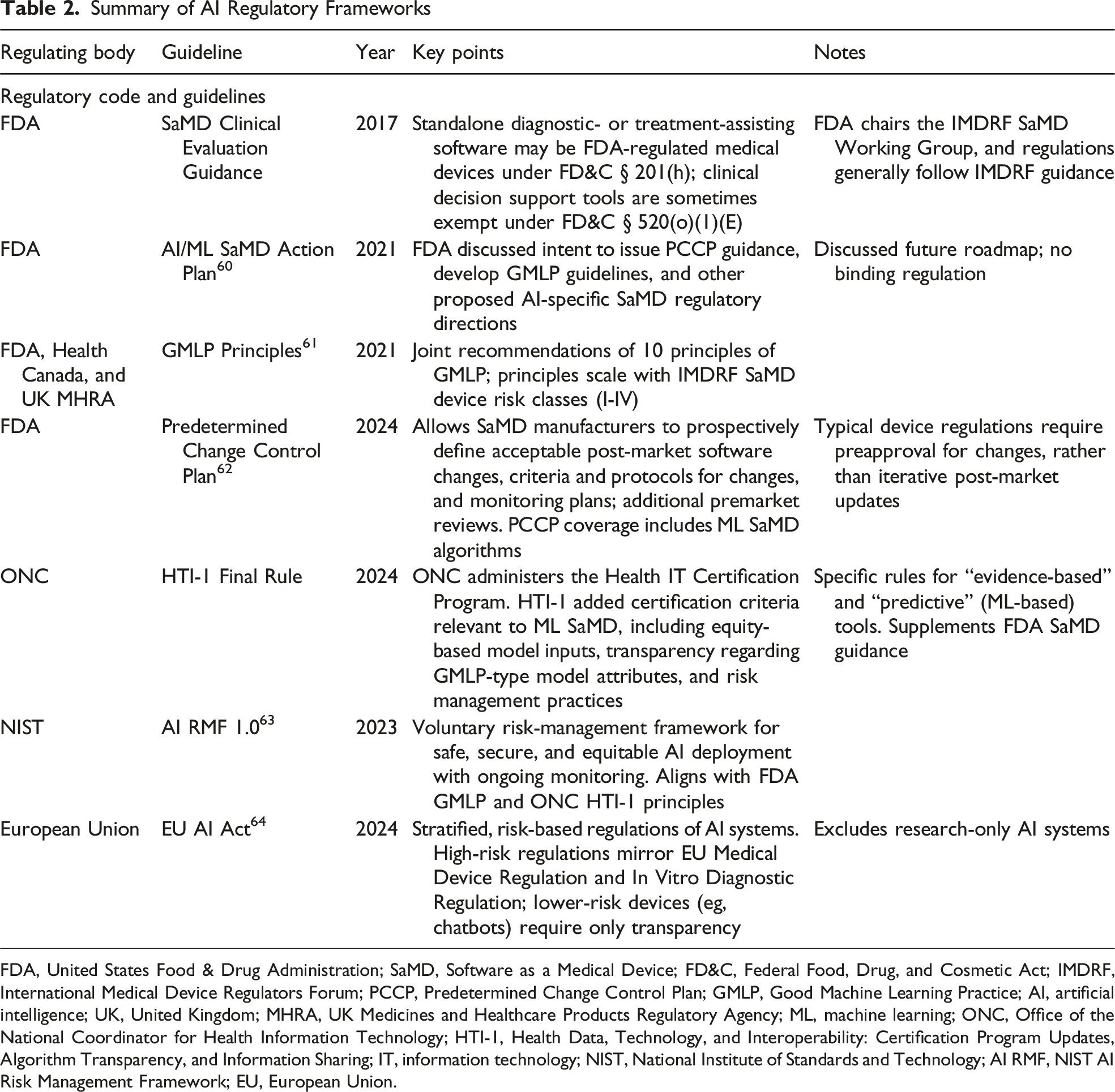

Summary of AI Regulatory Frameworks

FDA, United States Food & Drug Administration; SaMD, Software as a Medical Device; FD&C, Federal Food, Drug, and Cosmetic Act; IMDRF, International Medical Device Regulators Forum; PCCP, Predetermined Change Control Plan; GMLP, Good Machine Learning Practice; AI, artificial intelligence; UK, United Kingdom; MHRA, UK Medicines and Healthcare Products Regulatory Agency; ML, machine learning; ONC, Office of the National Coordinator for Health Information Technology; HTI-1, Health Data, Technology, and Interoperability: Certification Program Updates, Algorithm Transparency, and Information Sharing; IT, information technology; NIST, National Institute of Standards and Technology; AI RMF, NIST AI Risk Management Framework; EU, European Union.

In the United States, the FDA regulates AI-enabled clinical tools under its Software as a Medical Device (SaMD) framework, which sets standards for safety and effectiveness in software performing medical functions. Its 2021 AI/ML SaMD Action Plan emphasized the need for transparency, real-world monitoring, and regulatory clarity. 62 In the same year, joint guidance from the FDA, Health Canada, and UK MHRA introduced Good Machine Learning Practice principles to further outline standards for data integrity, algorithm training, and performance evaluation. 63 The introduction of the Predetermined Change Control Plan (FDA 2024) marked a shift in the industry, enabling manufacturers to predefine acceptable algorithm updates in initial submissions. 64 This allows for safer and more efficient deployment of evolving AI tools, which is critical for surgical research applications amid evolving technologies and practices.

Risk management guidance is also being standardized through the National Institute of Standards and Technology (NIST) AI Risk Management Framework (RMF 1.0), 65 released in January 2023. Although voluntary, the NIST RMF provides practical tools for identifying and mitigating risks related to bias, robustness, interpretability, and privacy, and it has seen broad uptake among U.S. health institutions to structure AI governance throughout the system lifecycle.

Globally, the European Union (EU) AI Act (2024) 66 designates AI systems used for medical purposes as “high-risk,” requiring representative training data, technical documentation, human oversight, and post-market monitoring. These provisions apply alongside the EU Medical Device Regulation (MDR) and In Vitro Diagnostic Regulation (IVDR), with phased implementation starting in 2026-2027.67,68 Similarly, the UK MHRA has advanced its Software and AI as a Medical Device Change Programme, updated through 2025, aligning with international principles while retaining UK-specific requirements. 69 Additional global touchstones include IMDRF SaMD guidance and WHO frameworks on ethics and governance, both emphasizing traceability, equity, and continuous oversight.70-72

Intellectual Property, Transparency, and Reporting Standards

The rise of AI-assisted writing intensifies the need for clear authorship and reporting rules. AI tools cannot assume authorship, and most editorial bodies—including the Committee on Publication Ethics (COPE), the World Association of Medical Editors (WAME), and the Journal of the American Medical Association (JAMA)—require human accountability with disclosure of AI use. 73 However, journal policies remain inconsistent. 74 For example, while the International Committee of Medical Journal Editors recommends detailing the use of AI for writing assistance in the acknowledgments section, 75 some publishers (eg, Springer) note that the use of AI for improvements to human-generated texts for readability and style does not need to be disclosed. 76 Other organizations like WAME encourage authors to report the exact prompts that were used and to disclose the version and type of AI tools employed. 77 These inconsistencies not only increase the risk that researchers unintentionally violate editorial policies but could also incentivize authors to submit to certain journals over others simply due to more lenient reporting policies.

To address this patchwork, AI-specific reporting standards are emerging. Traditional guidelines (CONSORT for randomized controlled trials, PRISMA for systematic reviews and meta-analyses, SPIRIT for clinical trial protocols, STARD for diagnostic accuracy studies) predate AI, but extensions such as SPIRIT-AI and CONSORT-AI are already published, with PRISMA-AI and STARD-AI forthcoming.78-80 Broad adoption of such extensions could harmonize transparency, reduce policy contradictions, and safeguard integrity, provided they are developed through rigorous, consensus-based processes. 77 The current extension guidelines promote the inclusion of all pertinent methodological information required to report an AI model used in a research study, and the adoption of similar reporting standards across medical journals would level the playing field for AI tool development. For example, these guidelines call for reporting the algorithm version used, the hardware used to train the model, the environment in which the model was developed, the procedure for gathering and processing input data, and even the instructions and skills needed to reproduce the training. These guidelines are designed to maximize the transparency and reproducibility of AI-based work and can be revised as the field changes. Medical journals should strongly consider requiring adherence to the appropriate AI extension reporting guidelines for all submissions, and peer reviewers should confirm that the submissions they review adhere to these guidelines before recommending publication.

Algorithm Auditing and Validation

Most surgical AI models are trained on limited data sets, necessitating external validation to ensure generalizability and patient safety. Despite the development of AI-specific extensions of research and publication guidelines, validation methods are inconsistent, complicating interpretation. 81 To address this concern, a recent study by Kenig et al described a novel classification system named SURVAS (Surgical Validation Score) that stratifies the different validation tests based on levels of reported scientific evidence. 81 A Level 1 (High Evidence) classification, for example, was assigned to validation methods of high statistical robustness and those that had commonly and reliably been used for testing generalizable models (ie, AUROC, repeated cross-validation, and Cox regression). A Level 3 (Low Evidence) classification, on the other hand, was given to those that offered limited value and had mainly been used in exploratory or smaller studies (ie, hold-out validation and simple split methods). The creation and standardization of a classification system like SURVAS that delineates AI validation methods by quality and reliability is necessary as more AI models are considered for integration into surgical workflow.
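Two of the methods named above, AUROC and repeated cross-validation, are straightforward to express in code. The sketch below is a plain-Python illustration rather than the SURVAS procedure itself: AUROC is computed as the fraction of positive-negative pairs that the model ranks correctly (ties count as half), and `repeated_kfold` yields index splits for several reshuffled k-fold rounds. The function names and the four example scores are assumptions for this example.

```python
import random


def auroc(scores, labels):
    """Area under the ROC curve as the probability that a positive outranks a negative."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))


def repeated_kfold(n_samples, k=5, repeats=3, seed=0):
    """Yield (train_indices, test_indices) for k-fold splits repeated with reshuffling."""
    rng = random.Random(seed)
    for _ in range(repeats):
        indices = list(range(n_samples))
        rng.shuffle(indices)
        folds = [indices[i::k] for i in range(k)]
        for i, test in enumerate(folds):
            train = [j for f, fold in enumerate(folds) if f != i for j in fold]
            yield train, test


# Hypothetical risk scores and outcomes (1 = complication occurred).
scores = [0.9, 0.8, 0.3, 0.1]
labels = [1, 0, 1, 0]
print(auroc(scores, labels))  # 3 of 4 positive-negative pairs ranked correctly: 0.75

splits = list(repeated_kfold(n_samples=10, k=5, repeats=2))
print(len(splits))  # 5 folds x 2 repeats = 10 train/test splits
```

A single hold-out split corresponds to using just one of these train/test pairs, so its estimate depends heavily on which cases happen to land in the test set. Repeating the procedure over reshuffled folds averages out that dependence.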

Beyond validation, continuous auditing is essential once AI tools enter clinical practice. Models are vulnerable to “data drift,” where changing populations degrade performance over time and could ultimately affect patient safety. 82 Systematic auditing of these algorithms can be modeled on the regulatory framework for post-market surveillance of medical devices, in which manufacturers continuously track performance, update safety profiles, and report adverse events as evidence emerges, thereby providing a necessary safeguard.
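One common way to monitor for drift in an input variable is the population stability index (PSI), which compares the distribution seen at deployment with the distribution seen during development. PSI is not named in the source and is used here only as an example metric; the function name, bin count, and patient ages below are hypothetical, and any alert threshold would be a local policy decision.

```python
import math


def population_stability_index(reference, current, n_bins=5):
    """PSI between a reference sample and a current sample of one numeric variable."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / n_bins or 1.0  # fall back to 1.0 if all reference values are equal

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            b = int((v - lo) / width)
            counts[min(max(b, 0), n_bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref = proportions(reference)
    cur = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical patient ages at model development and one year after deployment.
development_ages = [50, 55, 60, 65, 70, 75, 80]
deployment_ages = [age + 15 for age in development_ages]

print(population_stability_index(development_ages, development_ages))
print(population_stability_index(development_ages, deployment_ages))
```

Identical samples give a PSI of zero, and the value grows as the deployment population moves away from the development population. Recomputing such a statistic on a schedule, together with tracking outcome-based performance, is one practical form the continuous auditing described above can take.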

Ethical Oversight and Regulation

Generative AI makes it easier than ever to produce scientific output, raising the stakes for robust ethical oversight. Frameworks must reinforce the principles of beneficence, nonmaleficence, justice, and autonomy. The EU AI Act provides a model, emphasizing human oversight throughout AI development and application. This framework advocates for human-centric AI tools throughout the entire course of development, beginning with thorough input from human experts on the back end and respecting human dignity and autonomy in application on the front end. Such an approach aims to foster trust in the systems being developed, uphold guiding ethical principles, and maintain accountability among the individuals involved in the training of AI tools.

In surgical research, a human-centric approach would encompass the following three principles:

1. Using AI to augment, not replace, writing and analysis, with full disclosure of its role.
2. Mandating surgeon input when AI assists surgical decision making.
3. Ensuring post-publication monitoring to safeguard autonomy and patient trust.

Promoting Equity and Access

Without intentional design, AI risks exacerbating global disparities. High-resource institutions are most likely to adopt cutting-edge tools, while underrepresented populations remain excluded. Frameworks should therefore mandate inclusive data sets and encourage collaboration with low-resource centers, supported by investment in infrastructure and digital literacy.

Frameworks that promote collaboration and open access are another step towards ensuring equitable AI tools are developed. Open-access data sets and models can democratize participation and allow local refinement for diverse populations. While such initiatives raise challenges around security and consent, they counter the risk of proprietary and high-cost AI tools serving only privileged markets. Ensuring equitable access is not peripheral to AI governance; rather, it is central to realizing its promise in surgical care.

Future Directions

Future efforts should prioritize standardized frameworks for validation and reporting to ensure AI models are rigorously tested across diverse data sets before clinical use. Establishing institutional oversight for ethics, bias, and data protection, alongside stronger collaboration among surgeons, data scientists, and ethicists, will be key to developing clinically meaningful and transparent tools. Expanding federated learning can enable large, representative data sets while preserving privacy, and advances in explainable AI will enhance clinician trust and patient consent. Clear authorship and disclosure policies in academic publishing will further promote accountability. As AI continues to evolve, its growth will likely be characterized not by a single dominant paradigm but by diverse, context-driven innovations that redefine surgical research and practice. Editors and peer reviewers have a critical role in upholding research integrity by verifying that data sets are sufficiently representative to ensure generalizability and by holding authors accountable for addressing the potential biases and limitations of their work. Reviewers should also assess whether authors maintained full accountability for intellectual content and took steps to ensure the accuracy of AI-generated text, citations, or analyses. Finally, adherence to emerging reporting frameworks, such as CONSORT-AI and SPIRIT-AI, should be encouraged, or even required, as part of the editorial checklist to help safeguard transparency and reproducibility.

Conclusion

Artificial intelligence is rapidly becoming integral to surgical research, with demonstrated gains in accuracy, efficiency, and knowledge dissemination. These advances come with significant ethical responsibilities; neglecting them risks eroding trust, widening disparities, and undermining scientific integrity. The future of AI in surgery will be defined by how well the field balances innovation with responsibility—through representative data sets, interpretable algorithms, robust data protections, clear accountability, and transparent authorship standards. By embedding these principles into every stage of development and deployment, the surgical community can harness the immense potential AI offers while upholding the foundational ethics of medicine.

Footnotes

Author Contributions

MXM, FR, and BC—conceptualization, draft of preliminary manuscript, revision, and approval of final version.

DL, NR, and LG—conceptualization, critical review of manuscript with revision for incorporating intellectual content, and approval of final version.

AR—senior author, conceptualization, critical review of manuscript with revision for incorporating intellectual content, and approval of final version.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.