Abstract

To understand how beliefs about mis- and disinformation affect citizens’ (correct) classification of pseudo-information, this paper relies on an experimental survey study in the United States and the Netherlands in which we (a) measured mis- and disinformation attitudes, (b) exposed participants to a real versus fake article on immigration and criminality, and (c) compared classifications of mis- and disinformation in response to the real and fake news article. The main findings indicate that the veracity of information did not play a clear role in the attribution of mis- and disinformation. People with stronger mis- and disinformation beliefs, and people with incongruent prior attitudes, were most likely to classify information as false irrespective of the level of untruthfulness. These findings imply that beliefs about misinformation play a key role in the classification of information as false, whereas these beliefs do not contribute to the accuracy of veracity judgments.

In recent years, concerns about the (un)truthfulness of journalism and political communication have become more widespread. Some even argue that today’s digital information ecology, fueled by the technological affordances of social media, can be referred to as “post-truth” or “post-factual” (Lewandowsky et al., 2012; Van Aelst et al., 2017). This means that consensus on the factual status of verified empirical evidence is declining, and that reality is subject to (partisan) framing and interpretation. This has crucial ramifications for the foundations of deliberative democracy: When citizens differ in the acceptance of basic facts on which (political) opinions and preferences are based, disagreement may no longer be founded on a deliberation and interpretation of the same factual reality (Arendt, 2005). As pseudo-information, as well as concerns about and weaponizations of Fake News, become more pronounced in digital media ecologies, we have to pay attention to their impact on society (Weeks & Gil de Zúñiga, 2019). Building further on research that has looked at citizens’ beliefs related to Fake News as a weaponized term (e.g., Tong et al., 2020) and individual-level differences in susceptibility to false information (e.g., Pennycook & Rand, 2018; Schaewitz et al., 2020), this paper investigates how prior beliefs about the veracity and honesty of information relate to the (in)correct classification of pseudo-information.

To navigate the increasingly fragmented digital information society, citizens need to be able to correctly separate true from false information and distinguish lies from honest mistakes (e.g., Jones-Jang et al., 2021). Yet, such decisions may not always be motivated by media literacy: Increasing levels of media critique and “Fake News” beliefs among citizens may lead to systematic errors in the recognition of false information. True information may be labeled as false, whereas false information that resonates with prior beliefs is labeled as true. In this complex media environment where truthfulness and alternative realities compete for legitimacy, this paper aims to answer the question of whether mis- and disinformation beliefs help or harm the correct classification of pseudo-information.

To do so, we rely on an experimental survey study in the United States and the Netherlands—most different cases when it comes to partisan media and political systems—to map (a) perceptions regarding the accuracy and honesty of the mainstream press and (b) the extent to which these perceptions play a role in the (correct) identification of pseudo-information. With this study, we thus aim to understand whether news consumers with skeptical views on the news media (misinformation beliefs) versus those with more extreme cynical views closer to a populist worldview (disinformation beliefs) perform better or worse in accurately classifying pseudo-information.

Pseudo-Information: Distinguishing Between Mis- and Disinformation

Mis- and disinformation can be regarded as supply-side phenomena that pertain to the spread of untrue or false information (e.g., Tandoc et al., 2018; Wardle, 2017; Weeks & Gil de Zúñiga, 2019). Misinformation refers to any type of information that is simply incorrect—without being intentionally deceptive (Vraga & Bode, 2020; Wardle, 2017). Disinformation, however, can be defined as the intentional or goal-directed manipulation of information to achieve political goals (e.g., Bennett & Livingston, 2018; Tandoc et al., 2018). Both concepts are captured by the umbrella term pseudo-information, which refers to false information with problematic or harmful consequences irrespective of its intentions (Kim & Gil de Zúñiga, 2019).

To arrive at a more comprehensive understanding of the societal and democratic consequences of mis- and disinformation, we need to take the user-side into account. We specifically argue that beliefs about false information and the approach and persuasiveness of false content can reinforce each other: The more people believe that their information environment contains false and deceptive information, the more they may approach alternative media that are more likely to actually contain untruthfulness. This is backed up by literature on the link between alternative media use and populist attitudes (Müller & Schulz, 2019) and research indicating that citizens with populist attitudes trust the news media less (Fawzi, 2018). In addition, Zimmermann and Kohring (2020) show that lower levels of media trust correspond with susceptibility to online disinformation. Together, this could imply that people with mis- and disinformation beliefs may be more likely to be drawn to disinformation.

Media Credibility in the Context of Pseudo-Information

As “Fake News” has become a weaponized term, both in the communication tactics of politicians who use it as a label (Egelhofer & Lecheler, 2019) and in the perceptions of news consumers (Tong et al., 2020), this paper aims to measure citizens’ evaluations of media credibility in the context of increasing concerns about mis- and disinformation. Media credibility literature distinguishes between beliefs related to the competence and trustworthiness of information (Hovland et al., 1953; McCroskey & Young, 1981). Competence implies that people trust the level of knowledge a source has on a given subject. Trustworthiness refers to the level of trust people have in the motives of the communicator, for example, that they are fair, rational, and honest (McCroskey & Young, 1981). Another relevant distinction in trust and credibility literature concerns the differentiation between skepticism and cynicism (Jackob, 2010). Skepticism refers to the awareness that information can contain errors (although not necessarily deceptive in intention). Cynicism, however, refers to a more profound rejection of media information based on the belief that the media are lying to the public.

These differential aspects of media credibility, skepticism, and cynicism are incorporated in the measurement of perceived mis- and disinformation used in the paper: We first of all measure news consumers’ more general beliefs regarding the accuracy, expertise, and factual basis of information (corresponding to the definition of misinformation, see Vraga & Bode, 2020). Second, we measure more “extreme” credibility beliefs that relate to the evaluation of the news media as deliberately misleading or deceptive, a lying outsider, or an enemy of the people. These perceptions relate to recent conceptualizations of populist attitudes that reach beyond the perceived divide between the people and political elites (e.g., Mede & Schäfer, 2020). Science-related populist attitudes, for example, cultivate the people’s opposition to scientific elites and express distrust in the honesty and veracity of elitist truth claims. A similar rationale follows the perceived disinformation measurement proposed here: They can be regarded as populist anti-media beliefs that regard the news media as a lying, dishonest enemy of the ordinary people.

Together, in conceptualizing perceptions of pseudo-information, we distinguish between more general accuracy (misinformation) beliefs and a sub-set of more extreme and populist-oriented (disinformation) beliefs. We look at these beliefs in response to exposure to false information (post-treatment credibility ratings) and as pre-treatment beliefs that make the (correct) classification of false information more or less likely. We aim to contribute to a better understanding of the consequences of pseudo-information for society, specifically related to the factors that contribute to the acceptance of such harmful content (Weeks & Gil de Zúñiga, 2019).

The (In)correct Classification of Pseudo-Information

Research by Allcott and Gentzkow (2017) revealed that people have a hard time recognizing false information. In this paper, we further argue that perceptions of mis- and disinformation may influence people’s (in)correct classification of content as pseudo-information. This correspondence between mis- and disinformation attitudes and attributions of untruthfulness can be understood as a confirmation bias (e.g., Knobloch-Westerwick et al., 2017) rooted in psychological processes of motivated reasoning (Taber & Lodge, 2006).

Specifically, people are argued to be intrinsically motivated to avoid the discomfort experienced by cognitive dissonance (Festinger, 1957). This means that (political) judgments and news selection may be guided by the need to maintain consonance between prior attitudes and convictions, behaviors, and evaluations. Hence, people who perceive that the news media do not accurately report on facts and perceive the news media to be distant from reality should rate political news in line with these perceptions. News consumers who perceive that news media are subject to misinformation should consequently rate information in line with their perceptions of the media’s performance. Likewise, when people believe that disinformation (deliberate deception and harmful content) is more salient, they should use these perceptions when judging the truthfulness and honesty of (political) information.

As a first exploratory step, this study assesses whether people with mis- and disinformation beliefs are more likely to classify information as “Fake News,” irrespective of its actual veracity. Whereas misinformation classifications simply refer to the lack of facticity of information, disinformation ratings are a more severe accusation that corresponds to the (right-wing) populist belief that the news media deliberately distort reality (e.g., Fawzi, 2018; Schulz et al., 2018). Based on the mechanisms of motivated reasoning, we believe that these divergent classifications are driven by prior credibility beliefs: More extreme disinformation ratings should be more accessible among news users who perceive the media as a dishonest enemy of the people, whereas the less severe misinformation ratings may result from a more critical (skeptical) perception of the news media’s general accuracy.

We introduce the following hypotheses: Participants with more pronounced misinformation attitudes are more likely to classify information as incorrect or inaccurate than participants with less pronounced misinformation attitudes (H1).

Participants with more pronounced disinformation attitudes are more likely to classify information as Fake News than participants with less pronounced disinformation attitudes (H2).

The Role of Veracity in Mis- and Disinformation Attributions

Oh and Park (2021) postulate that news users are generally not very well equipped to detect deception—which makes it relevant to explore how existing beliefs about mis- and disinformation help or harm the accurate detection of pseudo-information: Are people able to correctly distinguish fake arguments and evidence from claims that are substantiated by empirical evidence and expert knowledge?

To answer this question, we first have to understand how we can distinguish authentic content from pseudo-information based on content features. As shown by Molina et al. (2021), it is difficult to automatically distinguish pseudo-information from accurate content. However, it can be argued that fact-checked information, hard evidence, scientific reporting, and statistical data separate authentic information from mis- and disinformation (Molina et al., 2021; these features are also central in information literacy, see Jones-Jang et al., 2021). Building further on this classification, we exposed participants to real versus pseudo-information by varying the epistemic value of messages: Mis- and disinformation was created by removing statistical evidence, base-rate facts, and expert analyses and replacing these informational factors with an emphasis on the people’s experiences, exemplars, and non-expert sources.

When it comes to the classification of the accuracy and honesty of information, it may be argued that base-rate information—relying on the indicators of truthfulness outlined above—trumps episodic frames and exemplars. Hard evidence should be scrutinized less than false statements that are not substantiated by expert knowledge and/or empirical evidence. In support of this, extant literature shows that citizens may rely on heuristics, such as the source or coherence of arguments, to assess the credibility of information (Lewandowsky et al., 2012), although individual differences may have a stronger relationship to misinformation’s credibility (Schaewitz et al., 2020). In addition, misinformation may be most credible when it uses aspects of reality to frame untruthfulness (Rogers et al., 2017). We thus assume that, across the board, misinformation framed without expert sources and empirical evidence is less credible than fabricated storylines that circumvent evidence. The following hypotheses are introduced: Exposure to false statements yields higher misinformation (H3a) and disinformation (H3b) attributions compared to exposure to real statements.

It could be argued that news credibility beliefs correspond to the media literacy citizens need in order to distinguish false from correct information—which would suggest that people with stronger misinformation beliefs are better able to judge the veracity of information. If we regard anti-media (populist) disinformation beliefs as an expression of severe system-level distrust in the news media, and distrust as a positive predictor of disinformation susceptibility (Zimmermann & Kohring, 2020), it could be argued that people with stronger disinformation beliefs are less likely to arrive at accurate judgments on disinformation’s lack of truthfulness. Hence, whereas misinformation beliefs, just like moderate skepticism, may correspond to more critical media literacy skills, disinformation beliefs may correspond to a system-level rejection that can lead to the incorrect classification of truthful information as fake. In other words, we expect that information media literacy corresponds to misinformation, but not disinformation beliefs.

We therefore hypothesize: Participants with more pronounced misinformation attitudes are more likely to classify incorrect information as false than participants with less pronounced misinformation attitudes (H3c) and participants with more pronounced disinformation attitudes are more likely to classify correct information as false than participants with less pronounced disinformation attitudes (H3d).

Attitudinal Congruence and Attributions of Pseudo-Information

Citizens show a tendency to uncritically accept information that aligns with their partisan or ideological lenses, whereas they are more likely to scrutinize information they disagree with. This is supported by fact-checking research. Although backfire effects are not as persistent as assumed in early research (see Nyhan et al., 2019), the alignment between false information and existing attitudes makes disinformation more and corrections less effective (Hameleers & Van der Meer, 2019). In addition, Schaewitz et al. (2020) show that the congruence of people’s prior beliefs with misinformation plays a central role in the credibility of false information. We hypothesize:

H4a: The higher the level of attitudinal congruence, the less likely people are to perceive political information as inaccurate or dishonest.

H4b: Participants with more pronounced misinformation attitudes are more likely to classify correct information as incorrect than participants with less pronounced misinformation attitudes, but this is less pronounced for participants that agree with the article’s statements.

H4c: Participants with more pronounced disinformation attitudes are more likely to classify correct information as incorrect than participants with less pronounced disinformation attitudes, but this is less pronounced for participants that agree with the article’s statements.

Mis- and Disinformation Across Different Settings: The United States and the Netherlands Compared

To better understand to what extent mis- and disinformation attitudes and classifications are perceived similarly in different contexts, we compare the bipartisan setting of the United States to a country with a multiparty government and opposition and a less partisan media system: the Netherlands. The rationale for the inclusion of these different cases is a “most different system design” (Przeworski & Teune, 1970): The United States and the Netherlands are expected to vary on the extent to which mis- and disinformation are experienced. According to Statista (2020), trust in the news media is substantially higher in the Netherlands (52%) than in the United States (29%), which could also mean that citizens in the United States are more likely to classify information as false. Moreover, extant literature suggests that increasing levels of distrust in the (established) media are an important contextual opportunity structure for disinformation’s pervasiveness (e.g., Waisbord, 2018). Across these different settings, the aim is to assess the robustness of mis- and disinformation beliefs and classifications of untruthfulness.

Method

An online survey with an experimental module was conducted in the United States and the Netherlands. Specifically, a pre-treatment survey included measures for both dimensions of perceived communicative untruthfulness (mis- and disinformation attitudes). The experimental module randomly exposed participants to anti-immigration news: either a neutral, thematic version that was based on a real news article (but without source cues) or an adjusted, manipulated version in which falsehoods were deliberately added to make the article reflect a clearer right-wing political agenda (pseudo-information). We thus contrasted actually existing information with a manipulated article in which numbers and developments were placed out of context and extended with false interpretations. In terms of the conceptualization of mis- and disinformation introduced in the theoretical framework, this false article mainly reflects deliberate deception in which existing information is manipulated to mobilize support for right-wing political agendas.

Design and Stimuli

Participants were randomly exposed to either a neutrally framed online news article that used real statements, or a made-up news story that described the same developments, but manipulated to reflect a (radical) right-wing agenda on immigration (see Figures A1 and A2 in Appendix A for the two news articles). Both articles described a situation of declining crime rates (a verified fact according to statistical bureaus and empirical research in both the United States and the Netherlands). However, in the fake news article, this development was reversed and connected to fabricated causes and opinions. Specifically, the fake article connected the development to failing elites in government. In addition, the article stressed that ordinary citizens were deprived by this development—which was again not verified by empirical evidence. In the real article, existing sources of evidence were used (research reports by Dutch and U.S. universities, as well as national statistical bureaus). In the fake article, evidence was framed as common sense and the opinion of ordinary citizens (a false opinion platform was invented). The lay-out was identical in both conditions: an online news platform without a clear source or author. The length was similar as well: 235 words in the realistic article versus 228 words in the fake article.

We did not use existing articles as stimuli for several reasons: (a) to maintain equivalence and credibility across the two different countries; (b) to avoid priming effects of news articles participants already encountered in their media environments; (c) to reach equivalence between the two treatments; and (d) to avoid the priming effect of attitudes toward existing news sources. In a pilot test among a convenience sample (N = 43), the credibility and perceived reality of the news articles were assessed. The “real” article was seen as more closely reflecting everyday news coverage (M = 5.85, SD = 1.43) than the fake article (M = 4.05, SD = 1.56, seven-point disagree-agree scales, p < .001). Both articles received similar credibility scores (real: M = 4.27, SD = 1.33, fake: M = 4.10, SD = 1.28, p = .265).

Sample

In both countries, soft quotas were used to ensure a varied sample that reflected national distributions on age, gender, and education as closely as possible. An international research agency (Dynata) recruited participants from blended panel sources, and distributed the survey link via email and on their online platform. A total of 542 participants were retained in the analyses reported in this paper (83.0% cooperation rate and 87.0% completion rate). A post hoc power analysis (α = .05) revealed that the power was 0.839.

The research company aimed to reach the general audience and did not set limitations on the recruitment procedure (everyone had an equal chance to be invited). Looking at the final composition of the panel, 50.1% was male, 21.7% was lower educated (49.6% had a moderate level of education, 28.7% was higher educated). The sample sizes were identical in the two countries. Regarding ideological priors in the Netherlands, 22.7% classified as Liberal, and 17.6% as Conservative. 23.1% self-identified as Progressive, and 36.6% did not self-identify with any of these pre-defined categories. This was different in the United States: 33.2% was Liberal, 35.6% was Conservative, and 31.0% was Independent/different. Only 0.2% did not self-identify with any of these ideological leanings. Although these distributions are not nationally representative, they do reflect the relative salience of different ideologies in the respective countries closely. Based on recent census data, the deviations between the sample and population data fall within a 5% difference in the Netherlands and the United States.

Dependent Variables: Classification and Attributions of Mis- and Disinformation

Misinformation ratings were assessed with two items: “the message reflects reality” (reverse-coded) and “the message is not accurate.” The two items were averaged into a single misinformation perception scale (Cronbach’s alpha = .62, M = 4.24, SD = 1.30). To measure perceptions of disinformation, three items on seven-point disagree-agree scales were used: “The message can be regarded as Fake News,” “The message is completely made-up,” and “The message deliberately tried to mislead me” (Cronbach’s alpha = .84, M = 4.16, SD = 1.45). Country differences were marginal for the misinformation scale (United States: M = 4.29, SD = 1.34, NL: M = 4.22, SD = 1.26), and slightly larger but non-significant for the disinformation scale (United States: M = 4.31, SD = 1.51, NL: M = 4.01, SD = 1.37). The bivariate correlation between both scales is strong and significant (r = .693, p < .001), but it still suggests that mis- and disinformation ratings cannot be merged into a single scale of post-treatment credibility beliefs. This is confirmed by a confirmatory factor analysis: A one-dimensional credibility scale fits the data substantially and significantly worse (χ²(5) = 20.29, χ²/df = 4.06, p < .001; RMSEA = .05, 90% CI [0.03, 0.08], CFI = .99) than the proposed two-dimensional model (χ²(4) = 9.93, χ²/df = 2.48, p = .042; RMSEA = .03, 90% CI [0.01, 0.07], CFI = .99; ∆χ²(1) = 10.36, p < .001).
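The fit comparison above can be re-derived from the reported statistics. The following is a minimal Python sketch that recomputes the normed chi-square (χ²/df) of each model and the chi-square difference between the two nested models. The function names are illustrative assumptions; the text does not state which software was used for the original confirmatory factor analysis.

```python
def normed_chi_square(chi2: float, df: int) -> float:
    """Return the chi-square statistic divided by its degrees of freedom."""
    return chi2 / df


def chi_square_difference(chi2_restricted: float, df_restricted: int,
                          chi2_full: float, df_full: int) -> tuple[float, int]:
    """Return (delta chi-square, delta df) for two nested models.

    The restricted model is the one with fewer free parameters
    (here: the one-dimensional credibility scale).
    """
    return chi2_restricted - chi2_full, df_restricted - df_full


# Fit statistics as reported in the text (chi-square, degrees of freedom).
one_dimensional = (20.29, 5)
two_dimensional = (9.93, 4)

delta_chi2, delta_df = chi_square_difference(*one_dimensional, *two_dimensional)

print("one-dimensional chi2/df:", round(normed_chi_square(*one_dimensional), 2))
print("two-dimensional chi2/df:", round(normed_chi_square(*two_dimensional), 2))
print("delta chi2:", round(delta_chi2, 2), "on", delta_df, "df")
```

The difference statistic is then evaluated against a chi-square distribution with the difference in degrees of freedom, which is the step that yields the reported significance level.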

Moderators: Credibility Beliefs Related to Mis- and Disinformation

In the pre-treatment survey, participants were asked to indicate their general perceptions toward the news media’s honesty and accuracy. Misinformation attitudes were measured using six statements measured on a seven-point disagree-agree scale: “the news media are far-removed from the facts,” “the news media are an inaccurate source of factual information,” “you cannot rely on the news media to be informed about the world around us,” “the news media cannot tell us what is going on in society,” “the news media do not capture the life-worlds of the people,” and “the news media give an unprecise depiction of reality” (Cronbach’s alpha = .94, M = 4.20, SD = 1.51). Six other items measured perceived disinformation: “The news media try to mislead citizens,” “the news media are an enemy of the ordinary people,” “the news media are responsible for spreading Fake News,” “the news media try to force their own political agenda on us,” “the news media are deliberately lying to the people,” and “the news media are dominated by their own biased ideological views” (Cronbach’s alpha = .93, M = 3.91, SD = 1.40).
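For readers less familiar with the reliability coefficient reported for these scales, the sketch below shows how Cronbach's alpha and a mean scale score can be computed from item responses. It is a minimal illustration only: the response data are hypothetical and were invented for this example, the function names are assumptions, and the code is not the procedure used for the analyses reported here.

```python
from statistics import mean, variance


def cronbach_alpha(items: list[list[float]]) -> float:
    """Compute Cronbach's alpha.

    `items` holds one inner list per item; position i in every inner
    list belongs to the same respondent.
    """
    k = len(items)
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Sum score per respondent across all items.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


def scale_scores(items: list[list[float]]) -> list[float]:
    """Average the items into one scale score per respondent."""
    return [mean(scores) for scores in zip(*items)]


# Hypothetical seven-point responses of six respondents to three items.
item_a = [2, 4, 5, 6, 3, 7]
item_b = [3, 4, 6, 6, 2, 7]
item_c = [2, 5, 5, 7, 3, 6]

alpha = cronbach_alpha([item_a, item_b, item_c])
scores = scale_scores([item_a, item_b, item_c])

print("alpha:", round(alpha, 2))
print("scale scores:", [round(s, 2) for s in scores])
```

A reverse-coded item on a seven-point scale would first be recoded as 8 minus the raw response before entering either function.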

The correlation between both dimensions of perceived untruthfulness is high (r = .88, p < .001). Yet, confirmatory factor analyses demonstrate that distinguishing between mis- and disinformation attitudes yields a significantly and substantially better model fit (χ²(64) = 223.80, χ²/df = 3.49, p < .001; RMSEA = 0.05, 90% CI [0.04, 0.05], CFI = 0.81) than a one-dimensional model that regards mis- and disinformation attitudes as a single construct (χ²(64) = 328.84, χ²/df = 5.14, p < .001; RMSEA = 0.06, 90% CI [0.06, 0.07], CFI = 0.61; ∆χ²(1) = 121.21, p < .001). The model fit and model structure are similar in the United States and the Netherlands. However, we see some small differences in the scores across countries in misinformation (United States: M = 4.35, SD = 1.60, NL: M = 4.03, SD = 1.40) and disinformation attitudes (United States: M = 4.36, SD = 1.64, NL: M = 3.91, SD = 1.40). The mean disinformation attitude score is significantly lower in the Netherlands than in the United States.

We further assessed the resonance of the news article with prior attitudes toward immigration and safety (pre-treatment survey). The statements used to tap issue agreement were connected to the central topic of the news article (immigration and criminality): “Our country has become less safe over the last years,” “I do not feel safe in my own country,” “migrants pose a threat on our security,” “migrants have a stronger tendency to engage in violent crimes than ordinary native people” (Cronbach’s alpha = .79, M = 4.23, SD = 1.45).

Manipulation Checks

First of all, we assessed whether the main storyline, topic, and interpretations were perceived as identical between the realistic and fake news article. These manipulation checks succeeded: participants in both conditions were equally likely to classify the article as a crime-rate news story (ΔM = −0.14, ΔSE = 0.13, t = −1.05, p = .295), in which the elites (ΔM = −0.20, ΔSE = 0.13, t = −1.55, p = .121) and migrants (ΔM = −0.15, ΔSE = 0.12, t = −1.28, p = .381) are connected to negative developments of crime rate increases among segments of the population. Two factors were perceived as significantly different across the two versions of the news articles: people-centric coverage was more likely to be attributed to the fake news article (M = 5.15, SD = 1.46) than the realistic article (M = 3.30, SD = 1.71, p < .001), and empirical evidence and expert knowledge were more likely to be attributed to the realistic article (M = 5.10, SD = 1.46) than the fake article (M = 3.38, SD = 1.72, p < .001). This was also intended by the manipulations of real versus fake news. Controlling for these factors as covariates did not change the results.

Results

Classifying Information as Inaccurate and Dishonest

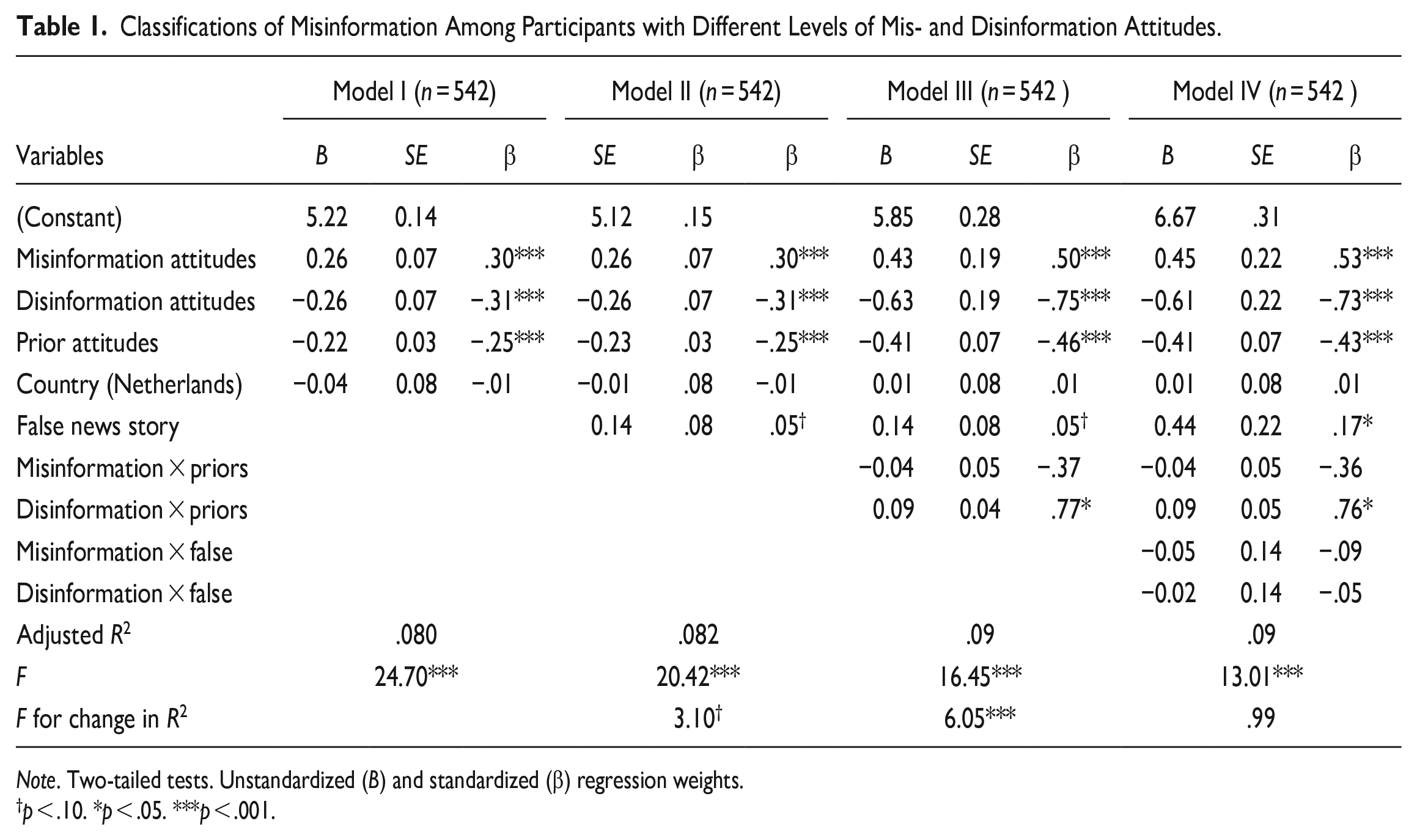

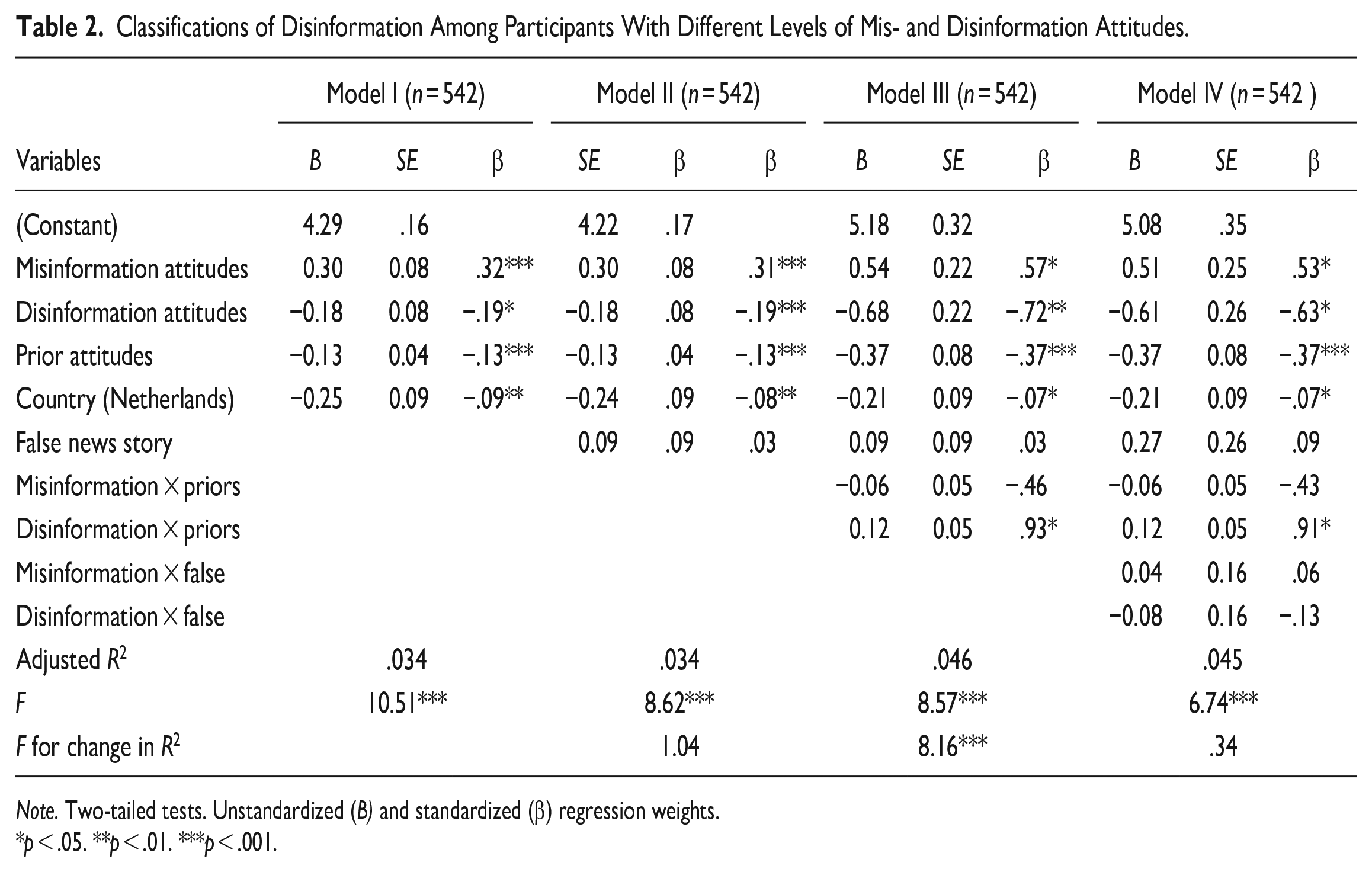

First of all, we assessed if participants with more pronounced misinformation (H1) and disinformation (H2) attitudes attributed more untruthfulness to political news than participants with a stronger tendency to trust the veracity and honesty of the press. The findings depicted in Tables 1 and 2 demonstrate that misinformation attitudes positively correspond to the attribution of mis- and disinformation, whereas higher levels of perceived disinformation negatively relate to the classification of information as mis- and disinformation. As a robustness check, we reran the models with (a) mis- and disinformation attitudes entered in separate models and (b) a single falsehood attitudes scale that does not distinguish between these separate dimensions. When we run the models for mis- and disinformation attitudes separately, we confirm our main conclusions: More pronounced misinformation attitudes correspond to a higher likelihood of classifying information as false, whereas disinformation attitudes correspond to a lower likelihood of mis- and disinformation classifications. When merging both scales, we find that general misinformation attitudes positively relate to the classification of disinformation (B = 0.13, SE = 0.03, p ≤ .001), but not misinformation (B = 0.01, SE = 0.03, p = .76).

Table 1. Classifications of Misinformation Among Participants With Different Levels of Mis- and Disinformation Attitudes.

Note. Two-tailed tests. Unstandardized (B) and standardized (β) regression weights.

†p < .10. *p < .05. ***p < .001.

Table 2. Classifications of Disinformation Among Participants With Different Levels of Mis- and Disinformation Attitudes.

Note. Two-tailed tests. Unstandardized (B) and standardized (β) regression weights.

*p < .05. **p < .01. ***p < .001.

We thus find partial support for H1: Participants with more pronounced misinformation attitudes are more likely to classify information as incorrect than participants with less pronounced misinformation attitudes. Yet, when merging both scales, general misinformation attitudes only correspond to disinformation classifications. However, H2 is not supported by our data, as disinformation attitudes correspond to higher levels of perceived accuracy and honesty of false information. But what if we take the veracity of the news article into account: Are people with mis- and disinformation beliefs actually better equipped to correctly classify pseudo-information?

In Models II through IV of the regression analyses depicted in Tables 1 and 2, we assessed whether perceptions of mis- and disinformation made the correct classification of pseudo-information more likely. First of all, as can be seen in Table 1, exposure to a news article based on false and incorrect arguments results in higher attributions of misinformation compared to exposure to an article based on real arguments and evidence. This effect is, however, only marginally significant, which offers no support for H3a. The veracity of the message did not affect disinformation attributions: Articles based on real or false evidence are equally likely to be labeled as disinformation (Table 2, Model II). Robustness checks in which mis- and disinformation attitudes are entered separately or as a single variable give the same results. These findings do not offer support for H3b.

As the main finding, the veracity of the news article did not affect the correspondence between perceptions of communicative untruthfulness and attributions of mis- and disinformation (Tables 1 and 2, Model IV). Irrespective of whether we enter mis- and disinformation attitudes separately into the regression models or as a single untruthfulness perception, pre-exposure beliefs of communicative untruthfulness do not impact the correct classification of false information shown to participants. We thus fail to support H3c and H3d: The actual veracity of the message did not play a role in the classification of information as mis- or disinformation, and these (in)correct classifications did not differ among people with more or less pronounced credibility perceptions related to mis- and disinformation.
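To illustrate what an interaction test of this kind involves, the following minimal sketch fits a moderated regression in which the rating of an article is regressed on a pre-exposure attitude, a dummy for the fake article, and their product. It is not the authors' analysis script: the variable names, the scale values, the sample size, and the simulated data are all assumptions made for illustration. In the simulated data, ratings depend on attitudes but not on article veracity, so the veracity and interaction coefficients should be close to zero, which mirrors the pattern reported for Model IV.

```python
import numpy as np

def fit_ols(X, y):
    """Return OLS coefficients for y = X @ b (X must already contain an intercept column)."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef

def moderated_design(attitude, fake):
    """Design matrix: intercept, mean-centered attitude, fake-article dummy, and their product."""
    centered = attitude - attitude.mean()
    return np.column_stack([np.ones_like(centered), centered, fake, centered * fake])

# Synthetic data only (NOT the study's data). Hypothetical setup: ratings depend on
# attitudes, but neither on article veracity nor on the attitude x veracity product.
rng = np.random.default_rng(0)
n = 2000
attitude = rng.normal(4.0, 1.0, n)          # hypothetical attitude score
fake = rng.integers(0, 2, n).astype(float)  # 0 = real article, 1 = fake article
rating = 1.0 + 0.5 * attitude + rng.normal(0.0, 1.0, n)

b0, b_attitude, b_fake, b_interaction = fit_ols(moderated_design(attitude, fake), rating)
print(f"attitude: {b_attitude:.2f}, veracity: {b_fake:.2f}, interaction: {b_interaction:.2f}")
```

Mean-centering the attitude score before forming the product term keeps the veracity coefficient interpretable as the difference between the fake and real article at an average attitude level. A full analysis would also report standard errors and standardized weights, which this sketch omits.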

Classifications of Pseudo-Information and Attitudinal Congruence

Finally, we assessed the extent to which attitudinal congruence with the statements made in the (false) article affected the relationship between perceptions and attributions of mis- and disinformation (H4). First of all, the findings demonstrate that the stronger the congruence between participants’ prior attitudes and the arguments of the article, the less likely they are to regard the article as mis- or disinformation (Tables 1 and 2, Model I). These findings support H4a: Mis- and disinformation attributions are contingent upon confirmation biases.

The findings in Table 1 (Model III) further show that there is no significant interaction between misinformation attitudes and attitudinal congruence on attributions of misinformation. The same was found for attributions of disinformation (Table 2, Model III). Again, the findings are robust when we enter mis- and disinformation attitudes separately or merge these pre-exposure beliefs into a general untruthfulness perception. These findings thus do not offer support for H4b: Irrespective of attitudinal congruence, higher levels of misinformation attitudes correspond to stronger attributions of mis- and disinformation.

The interaction effect between prior attitudes and disinformation attitudes on the appraisal of mis- and disinformation was significant and positive (Tables 1 and 2, Model III). This means that the higher the level of attitudinal congruence, the stronger the correspondence between disinformation attitudes and misinformation attributions. Yet, our findings do not offer support for an interaction effect between attitudinal congruence and exposure to false information: Participants with issue-congruent prior attitudes are not more or less likely to correctly distinguish authentic from false information. Correct classifications of mis- and disinformation are thus not contingent upon the resonance of falsehoods with prior beliefs related to false statements. Our findings thus do not offer support for H4c.

Discussion

This paper explored whether mis- and disinformation attitudes, as individual-level beliefs, can predict the susceptibility to false information in people’s media environments. The results demonstrate that misinformation attitudes correspond more strongly to classifications of false information than disinformation attitudes. This correlational evidence can arguably operate in two different causal pathways, which we may regard as a spiral of distrust: The more people (correctly or incorrectly) identify false claims in the media, the more cynical they become toward their information environment. These perceptions, in turn, may result in a stronger confirmation bias when people rate subsequent information. Hence, the more people perceive that the information surrounding them is false or misleading, the more they confirm this pessimistic outlook when rating the veracity of the news.

Our findings point to an affinity between (right-wing) populist attitudes and negative perceptions of the established press (e.g., Fawzi, 2018; Schulz et al., 2018). Hence, people with stronger populist attitudes are most likely to distrust the established media and regard them as the people’s enemy. In line with this, the “fake” news article that aligned with a populist worldview used in this experimental study may have appealed most to people who severely distrust the intentions by which established media report on politics and society, whereas it was flagged as incorrect or deliberately misleading among people with more critical attitudes toward the truthfulness and accuracy of the established press. These results align with the confirmation bias we found on an attitudinal level as well: The more people’s prior attitudes align with the content of disinformation, the more likely it is to be perceived as authentic and truthful news coverage.

Another important finding is that false news is as likely to be flagged as disinformation as news with authentic statements and verified empirical evidence. Although the false news story was slightly more likely to be flagged as misinformation than the real news story, both stories were regarded as disinformation to similar extents. As an important implication for democracy, it thus seems that actors of disinformation can get away with distributing (deliberately) false or manipulated content on issues such as immigration. In other words, the extent to which citizens classify information as true or false seems to be contingent upon perceptions of untruthfulness and resonance with prior attitudes rather than the veracity of the content of the message. This is in line with the findings of Schaewitz et al. (2020): Individual-level differences, such as prior beliefs and defensive motivations, can play a stronger role in the credibility of misinformation than actual message characteristics of (fake) narratives.

In line with the fact that pseudo-information represents only a very small part (about 1%) of people’s overall news diets (Watts et al., 2021), our findings illustrate that people are generally not capable of detecting such unfamiliar content. Perceptions of mis- and disinformation do not improve the correct classification of pseudo-information, which may imply that more direct proxies of information media literacy are more important to consider (also see Jones-Jang et al., 2021). The finding that perceived mis- and disinformation may play a strong role in people’s evaluations of pseudo-journalism irrespective of its actual veracity suggests that interventions may additionally be targeted at restoring trust in the honesty and accuracy of truthful information: Interventions need to prevent citizens from systematically rejecting authentic content out of the (mis)perception that Fake News is omnipresent.

These findings need to be weighed against a number of limitations that should be addressed in future research. First and foremost, the manipulation of political disinformation was based on a “most likely” case of anti-immigration news (Bennett & Livingston, 2018). In addition, to circumvent the confounding role of source cues, both the true and false messages were presented in the same format: a news article. Although the presentation of disinformation in the format of a real article may correspond to a disinformation strategy that aims to make use of a truth bias, disinformation encountered online typically also takes on the shape of blogs, comments, or social media posts. In light of these shortcomings, we recommend that future research rely on different stimuli presented in different formats (e.g., social media posts vs. fake news articles), vary the type of manipulations (e.g., presenting just fake numbers versus misleading interpretations), and use different issues (e.g., varying left- and right-wing issue positions). To also explore whether the null findings are robust across experimental designs, we recommend that future research employ a more extensive operationalization of pseudo-information as an independent variable. This experiment mainly conceptualized false information as lacking empirical evidence and base-rate information, but more diverse (and perhaps more distinguishable) elements should also be considered, such as the extremity of issue positions and (hyper)partisan content, specific language use, and reliance on emotions and gut feelings.

Second, we investigated the resonance between perceptions of mis- and disinformation and congruent attributions in only two strongly differing national settings. Future research should assess the robustness and generalizability of these conclusions in other regions as well. Third, the manipulation of “real” versus “fake” news was based on actual examples of real and fake news, but we did not directly assess the effects of actual instances of disinformation and truthful news that have been published. This was done to enhance internal validity while making the stimuli as credible and realistic as possible. However, although this carries the risk of priming participants with familiar news, future research should more comprehensively assess the influence of different message and source cues in disinformation: What is the influence of real versus fictional source cues that aim to reflect legitimate news sources? Does the platform play a role in the persuasiveness of disinformation?

It should also be noted that a single-message design was used, which increases the likelihood that findings are based on idiosyncrasies of the chosen message and manipulations (also see Slater et al., 2015 for a discussion). Especially given the fact that we did not find effects of the treatments, future research should experiment with different messages manipulating pseudo-information, which can be presented as either within- or between-subjects factors. For example, such messages can vary the extremity of the deviation from facticity, the topic, or the frames used in misleading content. This will advance our understanding of the boundary conditions of the (null) effects.

Despite these limitations, this study has offered an important next step in understanding people’s susceptibility to mis- and disinformation. On a positive note, inducing more critical media literacy skills (misinformation attitudes) may instill a healthy sense of skepticism among news consumers that can help them to distinguish fake news from truthful reporting. On a more pessimistic note, disinformation attitudes can result in a stronger acceptance of fake news claims that align with people’s prior attitudes. The appeal of the radical right to distrusting media consumers who oppose immigration may thus have real-life consequences in fostering support for fake statements that support pre-existing views.

Appendix A: Stimulus Materials

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.