Abstract

Adolescents’ heavy engagement with digital news and social media brings them considerable exposure to race-related content, especially during election cycles. We assess how well young people navigate that kind of digital content, using a nationally representative longitudinal study in which baseline data was collected during and after the 2020 election. We categorize young people’s responses to two real-life examples of digital media related to participation in the election as beginner, emerging, and mastery level in terms of their ability to critique racism. We also find responses that we categorize as race evasive, anticritical, and white supremacist. Most of these young people performed at the beginner level, and a minority achieved mastery. We argue that there is a clear need for young people to be better prepared to assess race-related online information and that educators need to support them in developing those skills.

During the 2016 and 2020 elections, race-related disinformation and propaganda proliferated online (e.g., DiResta et al. 2018; Reddi, Kuo, and Kreiss 2023). This content furthered racist agendas, undermined informed electoral engagement, and contributed to broad race-related political polarization. If adolescents are to engage meaningfully in politics in this historical moment, they need to develop the skills to critically assess and analyze race-related digital media. Teaching those skills, then, should be integral to civics learning in the post-2020 sociopolitical world.

Educators and scholars have increasingly highlighted the importance of digital and media literacy (e.g., Garcia et al. 2021; Kahne, Hodgin, and Eidman-Aadahl 2016; Kellner and Share 2007; Tynes et al. 2021). Young people tend to access news online: 53 percent of young people ages 18 to 29 found online sources such as social media and news websites/apps more helpful than other sources for learning about the 2016 U.S. election (Gottfried et al. 2016). A 2018 report found that three-quarters of teens say they get news from social media “often” or “sometimes” (Dautrich 2018).

Entangled as they are in the digital world, teens must develop critical digital literacy skills to help them navigate life online. With these skills, teens will be better equipped to build healthy communities (Mihailidis 2018) by understanding how the internet shapes information (Lynch 2016). Adolescents need to know how to seek and find high-quality information (Breakstone et al. 2021; Metzger, Flanagin, and Medders 2010), how to challenge oppressive media narratives (Mills and Unsworth 2018; Tynes et al. 2021), how to navigate an oppressive digital infrastructure with biased algorithms (Noble 2018; Tynes et al. 2021), and how to critique corporate digital platforms (Benjamin 2019; Garcia and de Roock 2021). These skills will both enable youth to engage with media reports about electoral politics in ways consistent with democratic ideals such as informed and agentic decision-making and help them navigate polarized, and possibly abusive, media content. Few studies have assessed young people’s critical digital literacy needs and skills with respect to political information; this article contributes to that growing literature.

Critical Race Digital Literacy: The Concept

The concept of critical race digital literacy (CRDL) comes from a pilot study by Tynes et al. (2021) and a National Academy of Education report (Garcia et al. 2021). CRDL goes beyond existing digital media literacy efforts that focus on building individual skills and protecting young people from threats online. It aims to prepare students to “understand, recognize, and respond to structural factors, particularly racism, as they relate to discourse and reasoning in the digital age” (Garcia et al. 2021, 320). Specifically, we define CRDL as

the knowledge, skill, and awareness required to access, identify, organize, integrate, evaluate, synthesize, critique, create, counter, and cope with race-related media and technologies. It includes the ability to critically and laterally read race and intersecting oppressions in digital contexts, as well as the ability to recognize and subvert the ways that technologies (algorithms, artificial intelligence, bots, etc.) oppress certain groups while maintaining the status quo for others. CRDL also includes recognizing the ways that technology can be used and designed to foment racial division to suit political and economic ends. Additionally, CRDL refers to one’s capacity to develop historical knowledge and a lens to situate racist content, anti-Blackness, and whiteness. (Tynes et al. 2021, 112)

CRDL is informed by critical race theory (CRT), which originated in legal studies and has been used widely across disciplines to analyze race as a social construct with social consequences (Crenshaw et al. 1995). Building on foundational applications of CRT in education research (Ladson-Billings and Tate 1995), media literacy educators and researchers have applied CRT to study how people recognize, read, and challenge social power relationships related to race and racism in various media (e.g., Hawkman and Shear 2020; Mills and Unsworth 2018; Watts, Abdul-Adil, and Pratt 2002; Yosso 2002). Some politicians are currently misusing the label “CRT” to cover their party’s fierce opposition to teaching an accurate depiction of America, particularly with respect to systemic racism and the heinous treatment of Indigenous, Black, and other people of color. Their claims that CRT provokes psychological distress in students have been challenged by research showing the developmental benefits of critical consciousness (Jemal 2017) and of learning about race and racism (Jayakumar 2022). Studies further show that these benefits can be nurtured through developmentally appropriate learning activities, whereas race-evasive approaches have been shown to harm students by increasing self-segregation among white students and decreasing academic participation among students of color, especially in higher education STEM (science, technology, engineering, and mathematics) classes (Jayakumar 2022).

Following CRT, CRDL views racism as systemic and pervasive in online discourses, infrastructures, and platforms (Benjamin 2019; Daniels 2018). For example, CRDL highlights how race-related disinformation and propaganda campaigns exacerbate long-standing racial oppression and divisions in U.S. society (Tynes et al. 2021). It also challenges racism in all forms, from structural to interpersonal and from subtle to explicit, and supports students in learning to critique race-evasive ideologies, such as color-blind racial ideology (Abaied and Perry 2021; Neville et al. 2013), which holds that race and racism are not relevant, do not or should not matter, and can be ignored (e.g., Jupp et al. 2019; Wetzel 2020).

CRDL skills also include recognizing and countering more explicit white supremacist ideas. We define white supremacy as the ideological claim that white people are racially, culturally, and/or biologically superior to non-white people; it is used to promote legal, economic, and political structures and practices that have historically excluded communities defined as non-white from voting rights, land ownership, labor protections, full participation in public institutions and services, political representation, and the protection of the courts (Nakagawa 2021). White supremacist ideology can be shaped by technically sophisticated digital activities that use emerging technologies and digital discourses (Daniels 2018). Explicit white supremacist digital propaganda simultaneously draws from and disrupts race-evasive practices (Daniels 2018; Nakagawa 2021). This propaganda at times takes the form of anticritical stances that either co-opt critical-sounding, race-related language to argue against challenging racism or reframe racist ideas and practices by claiming them as grassroots, populist challenges to elites or oppressive media institutions (Ganesh 2018). Young people need the skills to identify these messages, whether they are spewed out by politicians (e.g., Hawkman and Diem 2022) or appear in textbooks, as they have for centuries (Yacovone 2022). If given CRDL assessments, a student may endorse white supremacist ideas on one task yet not notice race on another but, over time, can achieve mastery-level critiques of racism. The CRDL framework suggests students can and do make progress from demonstrating explicit forms of racism and white supremacy toward the ability to critique racism at beginner, emerging, and mastery levels.

Digital Literacy: The Literature

CRDL research builds on broader education research that applies CRT to the teaching and learning of media literacy skills. Foundational work supporting Black (Watts, Abdul-Adil, and Pratt 2002) and Latinx (Yosso 2002) young people in critiquing media stereotypes has been conducted in more than a dozen studies at the K–12 and higher education levels; these are included in a review article (Mills and Unsworth 2018) and a special issue of the International Journal of Multicultural Education (Hawkman and Shear 2020). To our knowledge, however, none of the earlier studies focus specifically on young people critiquing racism in online contexts (Mills and Unsworth 2018). Two of the more recent studies did find that digital media can be used to challenge racism among college students (Chang 2020; Degand 2020), but more research is needed on the ability of adolescents to critique race-related digital media and the adult support they need to do so effectively. Given the increasingly hostile and polarized terrain of race-related politics online, the need for such research becomes all the more apparent; a national survey found that social media users were more likely in 2020 than in 2016 to negatively describe political discourse on online platforms, with 55 percent saying they are worn out by political posts and discussions (Anderson and Auxier 2020).

Existing studies show that many young people struggle with evaluating sources of information online (e.g., Flanagin and Metzger 2007; Hargittai et al. 2010; Kahne and Bowyer 2017; Metzger, Flanagin, and Medders 2010). Initiated by research from the Stanford History Education Group (SHEG), studies of adolescents’ civic online reasoning needs and skills asked participants to engage with real-life digital media content and then evaluated their responses on a rubric with beginner, emerging, and mastery levels (Breakstone et al. 2021; McGrew et al. 2018; Wineburg and McGrew 2017; Wineburg et al. 2016). The evaluations assess participants’ ability to look beyond surface-level features, such as site design and domain name, by using such technical literacy skills as lateral reading practices. (Employed by professional fact checkers, lateral reading involves checking what other online sources say about the initial information and its source.) These studies generally report low scores, which indicate a need for adolescents in the U.S. to develop a greater ability to distinguish online fact from fiction and to analyze bias in the digital realm.

That said, the media analysis tasks studied in this strand of research have not focused on race and racism, so little is known about how adolescents assess race-related online information in particular. Tynes et al. (2021) began to fill this gap; in four CRDL assessments, adolescents evaluated race-related materials taken from platforms like Google, Twitter, and Facebook, and researchers assessed their responses. Findings show that the majority of adolescents were indeed able to evaluate race-related search results but that, to recognize disinformation and racist propaganda, they need to improve their technical skills, specifically race-related lateral reading; they also need to deepen their understanding of the social, historical, and political contexts of race-related digital media artifacts.

In a study of youth online civic participation, participatory politics, and exposure to racially oriented conflict, Black youth were found most likely to participate in acts of online participatory politics, such as circulating and commenting on political content (Cohen et al. 2012). But more research is needed to determine how young Black people analyze race-related online information about political events, such as elections. One recent study reported that Black students scored lower than did other racial-ethnic groups on the rubrics assessing analyses of real-life digital media (Breakstone et al. 2021). However, this pattern does not hold when young people are asked to evaluate a wider range of information, especially that of particular relevance to Black communities (e.g., Cohen and Luttig 2020).

The Current Study

To extend the research on civic reasoning in the online world, this study assesses adolescents’ skills and needs by analyzing their performance on real-life digital media literacy tasks. Moreover, it fills critical gaps in the research by focusing on tasks that involve issues of race and racism. Building on our pilot study (Tynes et al. 2021), we have developed our CRDL rubric iteratively and included updated, developmentally appropriate tasks and more refined measures that address the context of the 2020 elections and beyond. Given the highly racialized dynamics of online information in recent election cycles, we need a clear evidence base for understanding young people’s critical engagement with politicized, race-related digital media. Equipped with that understanding, we can then inform educational practice and policymaking around race and digital literacies.

To accomplish this, we pose the following questions:

1) How do adolescents perform on critical race digital literacy tasks?

2) Are there racial-ethnic differences in performance on these tasks?

3) What critical race digital literacy needs do adolescents have to ensure that they can successfully navigate a post-2020 digital landscape?

Methods

Sample

This study is part of the larger National Survey of Critical Digital Literacy (NSCDL), which was approved by the University of Southern California’s Institutional Review Board and administered in the fall of 2020 (during and after the election) by Ipsos through its KnowledgePanel, the largest online panel in the U.S. that relies on probability-based sampling methods for recruitment (Ipsos, n.d.). Online panels are groups of pre-screened participants ready to take part in research. Ipsos provided a sample of 1,138 participants. The nationally representative sampling frame was based on the U.S. Postal Service’s Delivery Sequence File, which contains all delivery addresses the Postal Service serves. The study’s target population was white, Black, Latinx, and bi-multiracial 11- to 19-year-olds, including a representative oversample of Black participants. Ipsos invited one parent from each sampled household with one or more age-eligible teens to enroll their children in the survey, and one child/teen per household was randomly selected for participation.
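The within-household selection step can be illustrated with a minimal Python sketch. The article states only that one teen per household was randomly selected; this sketch assumes a uniform random draw, and the function name `select_one_per_household`, the household IDs, and the data layout are hypothetical. It is not a description of Ipsos’s actual procedure.

```python
import random

def select_one_per_household(households, seed=None):
    """Pick one age-eligible teen per household, uniformly at random.

    `households` maps a (hypothetical) household ID to the list of its
    age-eligible teens. Households with no eligible teen are skipped,
    since only households with one or more eligible teens were invited.
    """
    rng = random.Random(seed)  # seeded generator for a reproducible draw
    selected = {}
    for household_id, teens in households.items():
        if teens:
            selected[household_id] = rng.choice(teens)
    return selected

# Example usage with made-up household IDs.
households = {
    "hh-001": ["teen A"],
    "hh-002": ["teen B", "teen C"],
    "hh-003": [],
}
print(select_one_per_household(households, seed=2020))
```

Seeding the generator makes a given draw reproducible, which is useful when documenting how a sample was constructed.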

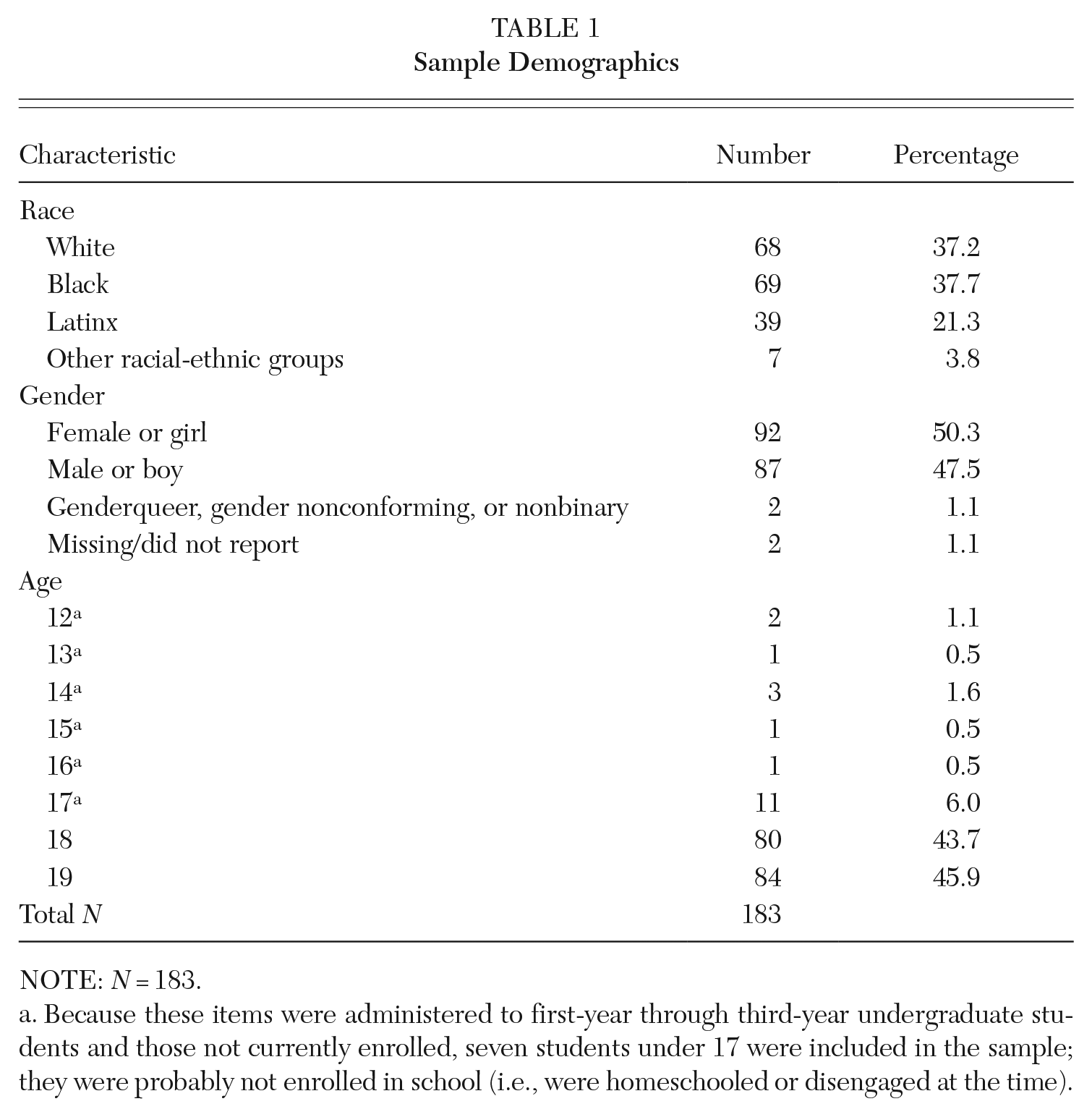

The NSCDL contained eight CRDL assessment items, each designed to be developmentally appropriate for a specific age group. This article reports responses to two of them from a subsample (n = 183) of mostly 18- to 19-year-olds. The subsample’s full age range is 12 to 19: because the two items were administered to first- through third-year undergraduate students and to those not currently enrolled, seven respondents under 17 (who were likely homeschooled or disengaged) were included. Table 1 presents the subsample’s demographics, including participants’ self-reported age, race, and gender.

Table 1. Sample Demographics

NOTE: N = 183.

Because these items were administered to first-year through third-year undergraduate students and those not currently enrolled, seven students under 17 were included in the sample; they were probably not enrolled in school (i.e., were homeschooled or disengaged at the time).

Measures: CRDL assessment items

NSCDL participants were given real-life examples of digital media and were prompted to respond to them. These measures were iteratively developed based on previous CRDL measures and results from a pilot study (Tynes et al. 2021), and we conducted interviews with people from the same age group as the sample to ensure developmentally appropriate language for the measures. The two CRDL assessment items analyzed in this article both relate to issues of race in the 2020 election.

Voter suppression task

For this task, we assessed participants’ ability to identify, situate in historical or social context, analyze, and critique racism and white supremacy in digital information and elected officials’ practices as represented in the digital media samples. We presented participants with the beginning of an article with the following instructions: “According to a Daily Beast article, ‘Over three million Black voters in key states were identified by President Donald Trump’s 2016 campaign as people they had to persuade to stay at home on Election Day to help him reach the White House.’ Why would elected officials and their employees suppress the votes of certain racial groups rather than others? Are/were voter suppression tactics being used in the 2020 election? Please provide examples.”

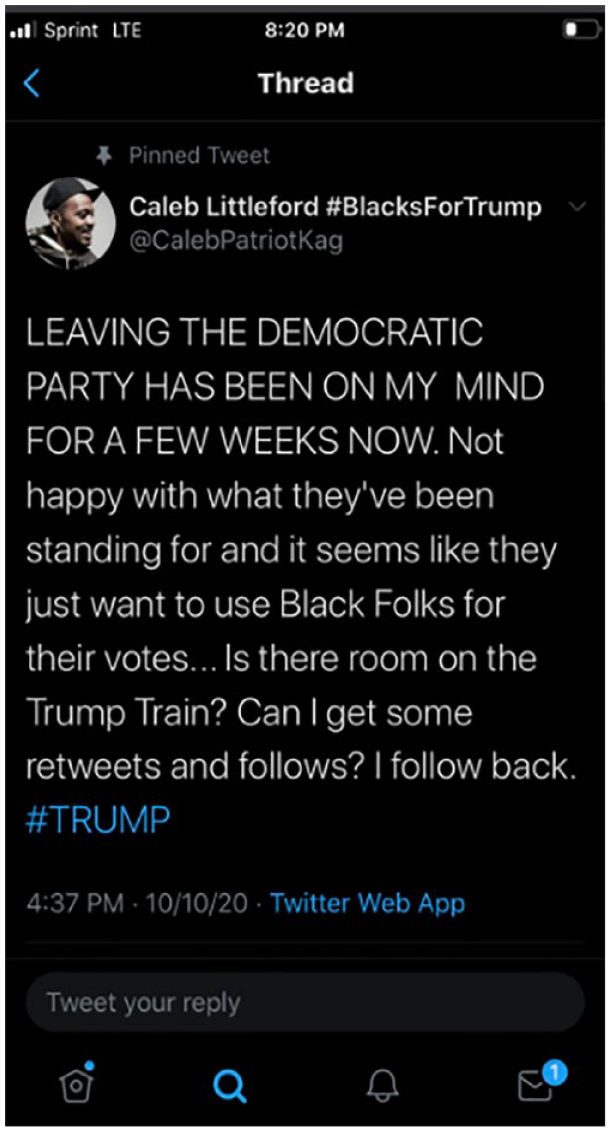

Election propaganda task

This task assessed skills in evaluating social media profiles/posts to determine whether they are reliable, engaging in lateral reading, recognizing automated or fake accounts, and critiquing race-related propaganda about the election. We presented participants with a screenshot from a Twitter account (see Figure 1) with the following instructions: “The post below is from a Twitter account that received 10,000 retweets in three hours. How would you respond if one of your followers retweeted this post?” The tweet’s rapid spread, its handle, and some of its language suggest it comes from a political bot targeting Black people to persuade them to support the Trump campaign. Political bots are “automated social media accounts, often built to look and act like real people, in order to manipulate public opinion” (Woolley 2020, 93). Participants may also view the post as the work of a troll; trolls differ from bots in that they are real users who post intentionally inflammatory remarks (Barojan 2018). Distinguishing between the two may be difficult even for experienced adult social media users.

Figure 1. Screenshot Used in the Election Propaganda Analysis Task

Data analysis procedures

Thematic analysis of overall CRDL task performance using a rubric

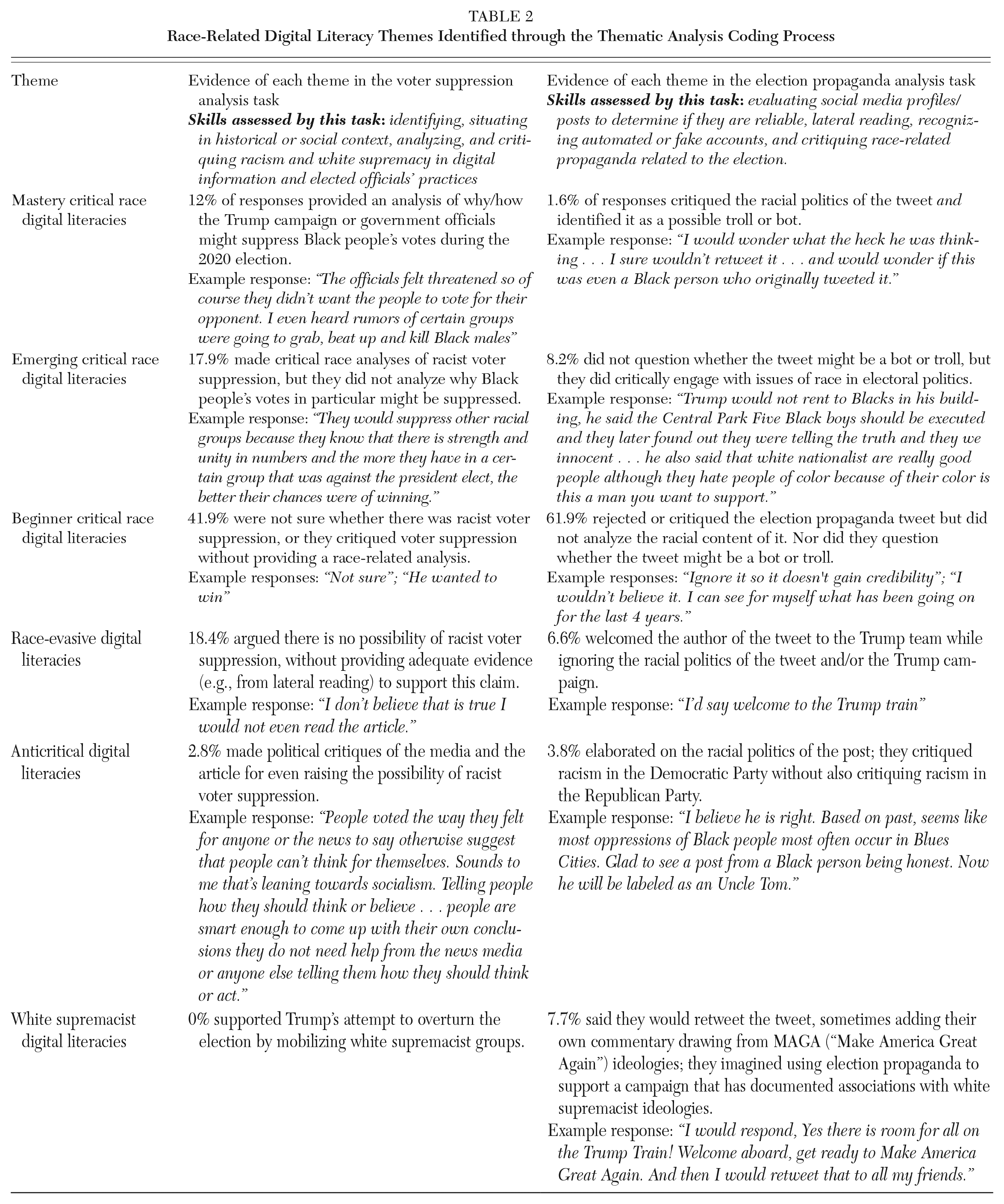

We conducted an inductive and deductive thematic analysis of the full NSCDL baseline data set and coded all responses, including those reported here; this allowed us to identify themes based on a priori theory from previous research as well as patterns emerging from the data set itself (Boyatzis 1998). The deductive themes were new iterations of those used in our pilot study (Tynes et al. 2021), in which we revised the Stanford History Education Group (SHEG) three-point rubric to assess beginner-, emerging-, and mastery-level CRDL skills; we refined these themes inductively through close readings of the new NSCDL data set as a whole. We also identified new inductive themes. Some responses showed digital literacy skills in assessing race-related digital media, but participants applied these skills uncritically, so the responses could not be coded with the original CRDL codes. Some responses explicitly argued against talking about race online; we coded these as race-evasive digital literacies. Other responses used race-related language to argue against critiquing racism; we coded these as anticritical digital literacies. Finally, some responses explicitly defended or advocated for white supremacist ideologies or social structures, and these were coded as white supremacist digital literacies. See Table 2 for descriptions of each theme along with data excerpts that exemplify each theme.

Table 2. Race-Related Digital Literacy Themes Identified through the Thematic Analysis Coding Process

Although we discovered the new themes through inductive analysis, we named and conceptualized them by operationalizing our CRDL theoretical framework. CRDL education requires helping people develop the skills to recognize and critique white supremacist, anticritical, and race-evasive approaches to digital media. Consistent with broader research informed by CRT, we see these three phenomena as racist to greater or lesser degrees; CRT holds that racism is endemic in the status quo of U.S. society, so race-evasive and anticritical attempts to avoid critiques of this status quo still function as defenses of racism, even if they are conceptually distinct from overt white supremacy (Abaied and Perry 2021; Neville et al. 2013). We conceptualized these themes in order to assess and analyze social phenomena observed in the data. Our analytic process assessed participants’ responses at a snapshot in time; for example, people who responded in anticritical ways to the prompts might learn to respond in more critical ways in the future.

After multiple rounds of discussion about the coding scheme among the whole research team, the first and third authors each used it to code the same 10 percent of the data and reached interrater reliability with a weighted Cohen’s kappa of .865 for the voter suppression item and .890 for the election propaganda item. They then each coded half of the remaining data. We interpreted the resulting distribution of themes as a continuous variable indicating adolescents’ ability and willingness to analyze race-related digital information with a greater or lesser degree of critical thought. This approach builds on previous literature that treats adolescent performance in analyzing online information as a continuous variable in analyses of group variations (Breakstone et al. 2021; Tynes et al. 2021). The beginner, emerging, and mastery CRDL themes indicate critique of racism to greater or lesser degrees. The other codes indicate some level of active refusal to critique racism: responses coded race-evasive refused dismissively, those coded anticritical refused more directly (often by co-opting critical language to make an explicit case against critical/anti-racist perspectives), and those coded white supremacist explicitly expressed racist politics.
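The article does not say which weighting scheme was used for the weighted kappa. As a minimal sketch, assuming the six themes are ordered from white supremacist up to mastery and using linear disagreement weights (quadratic weights are the other common choice), weighted Cohen’s kappa can be computed as below. scikit-learn’s `cohen_kappa_score` with `weights="linear"` or `weights="quadratic"` computes the same statistic; this pure-Python version only makes the arithmetic visible. The theme order, function name, and example ratings are illustrative assumptions, not the authors’ code or data.

```python
# Ordinal order assumed for illustration: lowest to highest.
THEMES = [
    "white supremacist",
    "anticritical",
    "race-evasive",
    "beginner",
    "emerging",
    "mastery",
]

def weighted_kappa(rater_a, rater_b, categories=THEMES, weighting="linear"):
    """Weighted Cohen's kappa for two raters coding the same responses."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    if weighting not in ("linear", "quadratic"):
        raise ValueError("weighting must be 'linear' or 'quadratic'")
    k = len(categories)
    index = {label: i for i, label in enumerate(categories)}
    n = len(rater_a)

    # observed[i][j] = share of responses rater A coded i and rater B coded j.
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(rater_a, rater_b):
        observed[index[a]][index[b]] += 1 / n

    # Marginal proportions for each rater.
    row = [sum(observed[i]) for i in range(k)]
    col = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    def disagreement(i, j):
        # 0 on the diagonal, growing with the distance between categories.
        d = abs(i - j) / (k - 1)
        return d if weighting == "linear" else d ** 2

    observed_dis = sum(disagreement(i, j) * observed[i][j]
                       for i in range(k) for j in range(k))
    expected_dis = sum(disagreement(i, j) * row[i] * col[j]
                       for i in range(k) for j in range(k))
    if expected_dis == 0:
        raise ValueError("kappa is undefined when all ratings share one category")
    return 1 - observed_dis / expected_dis

# Hypothetical double-coded responses (not the study's data).
a = ["beginner", "beginner", "emerging", "mastery", "race-evasive", "beginner"]
b = ["beginner", "emerging", "emerging", "mastery", "race-evasive", "beginner"]
print(round(weighted_kappa(a, b), 3))
```

Weighting matters here because a disagreement between adjacent themes (beginner vs. emerging) is penalized less than one between distant themes (race-evasive vs. mastery), which fits an ordered rubric.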

We understand that some may question whether this construct is best understood as a continuous variable rather than, perhaps, as categorical variables or two separate constructs. For purposes of analysis, we treated it as continuous for three reasons: (1) Our CRDL theoretical framework suggests that some responses are better than others; for example, it is better to admit one does not know how to critique racism (coded as beginner) than to argue that nobody should critique it (coded as race-evasive). (2) The framework also holds that skills are developmental, that people can learn and improve over time. (3) The distribution of themes in the data followed a roughly normal distribution (a hallmark of continuous variables), with most responses clustered around beginner CRDL. Because the resulting 6-point rubric comes from qualitative analyses of themes that have been understudied in the literature thus far, readers should recognize there are qualitative differences between the themes that are not precisely represented at scale (e.g., the difference in performance between mastery-level CRDL and beginner-level CRDL is not exactly the same as the difference in performance between beginner-level CRDL and anticritical digital literacies).
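A minimal sketch of turning coded responses into scores on the 6-point scale and summarizing one task follows. The numeric values 1 through 6 are an assumption for illustration (the order follows the reasoning above, from white supremacist up to mastery), and their equal spacing is exactly the simplification the preceding caveat describes. Nonresponses, marked here as `None`, are dropped before summarizing; the function name and example codes are hypothetical.

```python
from collections import Counter
from statistics import mean, stdev

# Assumed scoring: equally spaced integers in the order implied by the
# CRDL framework (lowest = white supremacist, highest = mastery).
RUBRIC = {
    "white supremacist": 1,
    "anticritical": 2,
    "race-evasive": 3,
    "beginner": 4,
    "emerging": 5,
    "mastery": 6,
}

def summarize(codes):
    """Return theme shares (percent), mean score, and SD for one task.

    `codes` holds one theme label per response; None marks a nonresponse,
    which is excluded before any summary is computed.
    """
    answered = [c for c in codes if c is not None]
    counts = Counter(answered)
    shares = {theme: 100 * counts[theme] / len(answered) for theme in RUBRIC}
    scores = [RUBRIC[c] for c in answered]
    return shares, mean(scores), stdev(scores)

# Hypothetical codes for eight responses (not the study's data).
codes = ["beginner", "beginner", "emerging", None,
         "race-evasive", "mastery", "beginner", "anticritical"]
shares, m, sd = summarize(codes)
print(round(shares["beginner"], 1), round(m, 2), round(sd, 2))
```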

Analyzing associations between task performance and racial-ethnic group membership

To examine possible associations between participants’ performance on the election propaganda and voter suppression tasks and their self-reported racial-ethnic group membership, we conducted a series of analyses of variance (ANOVAs). This allowed us to determine whether the mean performance of the racial-ethnic groups on each task differed significantly, that is, whether different racial-ethnic groups show different average levels of performance on the skills assessed by this study (listed in Table 2). For the ANOVAs, the assumption of normality was tested and met through an examination of the residuals. All tests used an alpha level of .05. Where the omnibus ANOVA F-test was statistically significant, post hoc comparisons were conducted on all possible pairwise contrasts using a Bonferroni correction.
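The omnibus test and the Bonferroni adjustment can be sketched in plain Python. This is an illustration under stated assumptions, not the authors’ analysis code: it computes the one-way ANOVA F statistic and its degrees of freedom from per-group scores, and divides alpha by the number of pairwise contrasts. With four racial-ethnic groups there are 4 × 3 / 2 = 6 contrasts, so each is tested at .05 / 6 ≈ .0083. Converting F to a p-value requires the F distribution (for example, `scipy.stats.f.sf(F, df_between, df_within)`), which is omitted to keep the sketch dependency-free. The function names and group scores are hypothetical.

```python
from itertools import combinations

def one_way_anova(groups):
    """Return (F, df_between, df_within) for a dict of group -> scores."""
    all_scores = [x for scores in groups.values() for x in scores]
    grand_mean = sum(all_scores) / len(all_scores)
    k, n = len(groups), len(all_scores)

    # Between-group sum of squares: spread of group means around the grand mean.
    ss_between = sum(len(s) * (sum(s) / len(s) - grand_mean) ** 2
                     for s in groups.values())
    # Within-group sum of squares: spread of scores around their own group mean.
    ss_within = sum((x - sum(s) / len(s)) ** 2
                    for s in groups.values() for x in s)

    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within

def bonferroni_pairs(group_names, alpha=0.05):
    """List all pairwise contrasts and the Bonferroni per-contrast alpha."""
    pairs = list(combinations(group_names, 2))
    return pairs, alpha / len(pairs)

# Hypothetical rubric scores (1-6) for four groups; not the study's data.
scores = {
    "Black": [5, 4, 4, 6, 4],
    "white": [4, 3, 4, 4, 3],
    "Latinx": [4, 4, 5, 3, 4],
    "other": [4, 5, 3, 4, 4],
}
f_stat, df_b, df_w = one_way_anova(scores)
pairs, per_contrast_alpha = bonferroni_pairs(list(scores))
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
print(len(pairs), round(per_contrast_alpha, 4))
```

The degrees of freedom follow the same pattern as the reported results: k − 1 between groups and N − k within groups, so four groups yield a numerator df of 3.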

Results

How do adolescents perform on CRDL tasks?

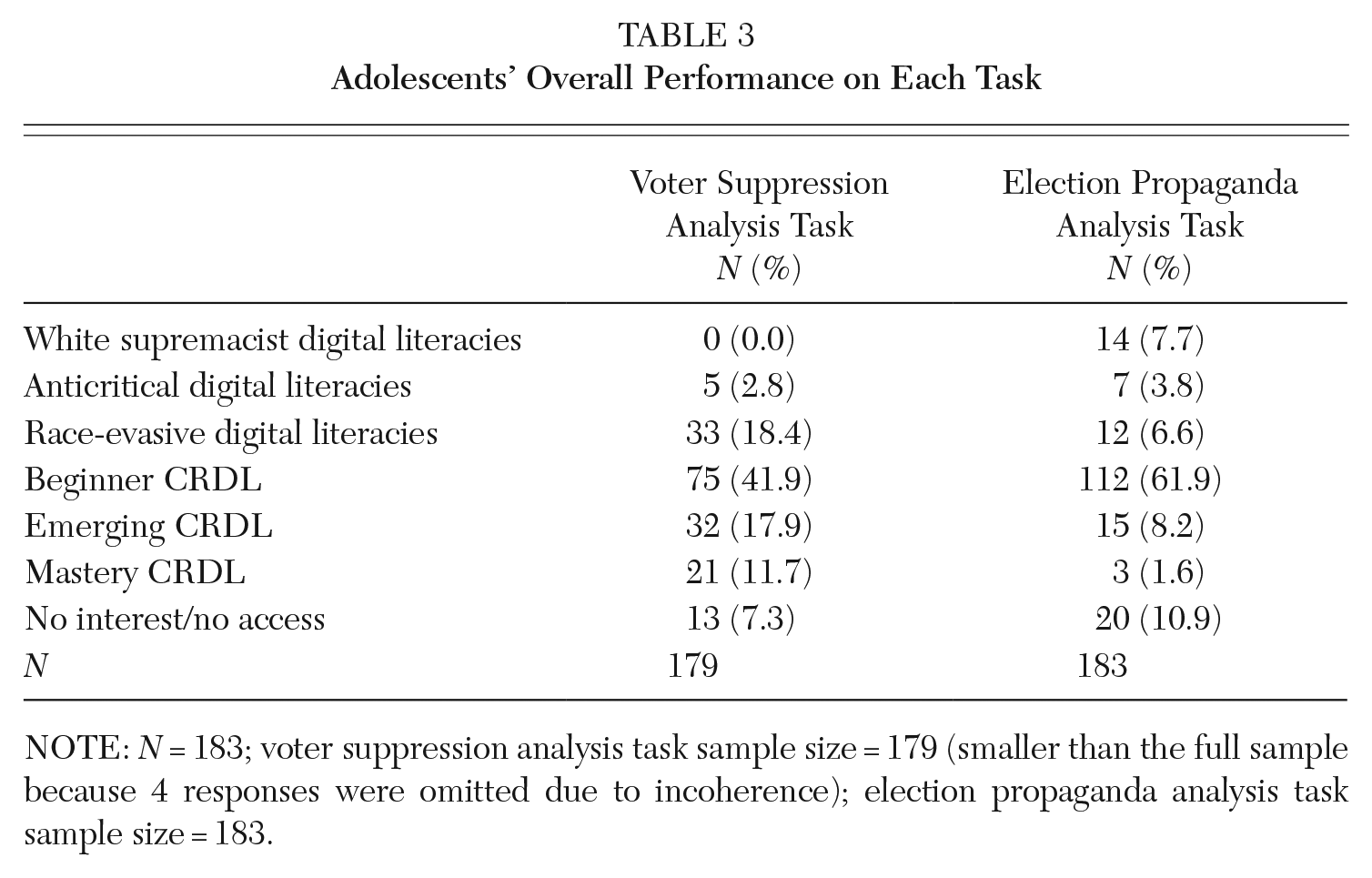

Here, we turn to the question of adolescents’ overall performance. Tables 2 and 3 show how they performed on both tasks measuring their CRDL skills. Participant responses that were most representative of performance at each level are presented and analyzed as examples in Table 2 and in the text below. Some participants chose not to respond to the assessment task, in some cases because they were not familiar with the social media platform mentioned (7 percent for the voter suppression analysis task and 11 percent for the election propaganda analysis task). These responses were not included in the analyses presented here.

Table 3. Adolescents’ Overall Performance on Each Task

NOTE: N = 183; voter suppression analysis task sample size = 179 (smaller than the full sample because 4 responses were omitted due to incoherence); election propaganda analysis task sample size = 183.

Voter suppression analysis task

As Table 2 shows, the 18 percent of responses coded as emerging and the 12 percent coded as mastery on this task analyzed the Daily Beast article on voter suppression in relation to a broader social and historical context shaped by racism. The mastery-level responses explained why Black people’s votes in particular might be suppressed in the 2020 election: most Black voters were likely to vote against Trump. Some analyzed the current situation in relation to a broader social context and the history of Black voter suppression, with references to misinformation campaigns and inequitable access to voting in majority-Black areas. These responses sometimes gave specific examples of voter suppression they had noticed in 2020; for example, this response was coded as mastery: “Trump is supported by white supremacists who walk around with loaded weapons, unchecked and sometimes supported by law enforcement. Some of these people are near polls, making it appear to be dangerous for people who don’t vote for Trump.”

The 18 percent of responses coded as emerging made broader critical race analyses of voter suppression but did not analyze why Black people in particular might be targeted. The 41 percent coded as beginner either were unsure whether or not there had been voter suppression or argued that elected officials tend to suppress votes to maintain power but provided no race-related analysis of how this might happen. For example, this response was coded as beginner: “Politicians do what they can to suppress votes so they can win. Is it happening in 2020? Probably.”

The 18 percent coded as race-evasive doubted the possibility of voter suppression without providing any evidence (e.g., from lateral reading) to support their doubt. For example: “Don’t believe any of this information. This is just stirring the pot more.” Given the well-documented history of Black people’s votes being suppressed in the U.S., arguing that one should dismiss evidence that it may be happening without further investigation is a form of race-evasive thinking.

The 3 percent who argued against attempts to criticize white supremacy/institutional racism were coded as anticritical. These participants made political critiques of the media and the article for even raising the possibility of voter suppression. Some suggested that doing so is evidence of left-wing bias: “This is more fake news. This never happened. Voters are not suppressed. Daily Beast is another ultra left propaganda machine.” There were no explicitly white supremacist responses to this item; while that theme was present in other items in the larger data set, we did not detect it for this one.

Election propaganda analysis task

As Table 2 shows, most responses (61 percent) to this task were coded as beginner, indicating that most adolescents in the sample rejected or critiqued the election propaganda tweet in some way but did not explicitly and critically analyze its racial content. Nor did they question whether the tweet might come from a bot or troll. We detected several subthemes across these beginner responses. Some participants said they would ignore the tweet, either because they think every voter is entitled to their own opinion or because they disagree with it and do not want to bring more attention to it. One said they would retweet the post with a critique attached: “Your choice for a candidate is yours! But please understand the platforms by which you determine what party you would affiliate.” Others said they would block or unfollow anyone who posted the tweet.

Nor did the 8 percent of responses coded as emerging explicitly question whether the original tweet might come from a bot or troll. But they did critically engage with issues of race in electoral politics. Some critiqued racist practices evident in both the Democratic and the Republican parties; for example, “Because the Democratic party isn’t the party of the working class, minorities, or underserved anymore, that doesn’t mean we enable the Republican party, either.” These responses drew on race-related political knowledge; participants said they would use this knowledge on their own social media to counter the election propaganda presented in the prompt.

It is important to note that some of these responses might be effective in future situations involving race-related election propaganda delivered by bots or trolls; for example, blocking or unfollowing a fake account might limit the spread of its propaganda and would limit its author’s ability to manipulate one’s attention and emotional energy. (This tactic should be distinguished from indiscriminately blocking all actual Trump supporters, a move that might risk reinforcing filter bubbles.) Similarly, in a response scored beginner, a participant described how they might intervene if a friend retweeted the propaganda: “I wouldn’t respond but i would text my friend privately and explain why that is a bad decision to do.” This avoids feeding the public controversy that political bots and trolls often aim to create, while still sharing digital literacy skills with a peer. Likewise, a response coded emerging stated,

Dependibg on how close I am to that follpwer and if I knew them well I may ask them to explain and elaborate why they would want to vote for Trump instead and how it would be beneficial to them. I would most likely begin with a neutral statement to allow the space for clarification. Then I may go into furthr detail avout how this may be more dangerous than being ‘used’. While that isnt desirable either, it is better than the alternative of voting for Trump who is actuvely harming minority groups

Leaving room for clarification might help a person assess whether their follower is expressing a personally meaningful and nuanced stance or is simply regurgitating stilted ideological language, a potential sign of a bot. However, we saw no evidence that these respondents were consciously choosing these strategies to outmaneuver potential trolls and bots.

In contrast, the 2 percent of responses coded as mastery both critiqued the racial politics of the tweet and identified it as a possible troll or bot. For example, one participant said, “Unfollow/block . . . The patriot tag in the twitter handle indicates a strong nationalistic bent, hence likely a republican, troll or bot.” This response demonstrates sophisticated knowledge of the political context combined with technical digital literacy skills (i.e., knowledge of Twitter’s design features); the participant used both to assess the source’s reliability.

The 6.6 percent of responses coded as race evasive and the 3.8 percent coded as anticritical made comments agreeing with the post without saying they would repost it. Race evasive comments welcomed the author of the tweet to the Trump team while ignoring the racial context of the tweet and the Trump campaign; for example, one response said, “Trump’s team will let anyone in.” Anticritical responses went further and elaborated on the racialized politics of the post, for example, “This tweet is actually true. The Dems have used ‘Black Folks’ for years. Everyone knows it.” These responses articulated a necessary critique of racism in electoral politics but did not apply it equally to the Republican Party and the Trump campaign; by aiming it only at the Democrats, they amplified the original tweet’s polarization along party lines. The 7.7 percent of responses coded as white supremacist digital literacies said they would retweet the post (sometimes adding their own articulations of Trump campaign propaganda); doing so would use election propaganda to support a political campaign with a documented history of association with white supremacist ideologies (Hawkman and Diem 2022).

Are there racial-ethnic differences in the performance of critical race digital literacy tasks?

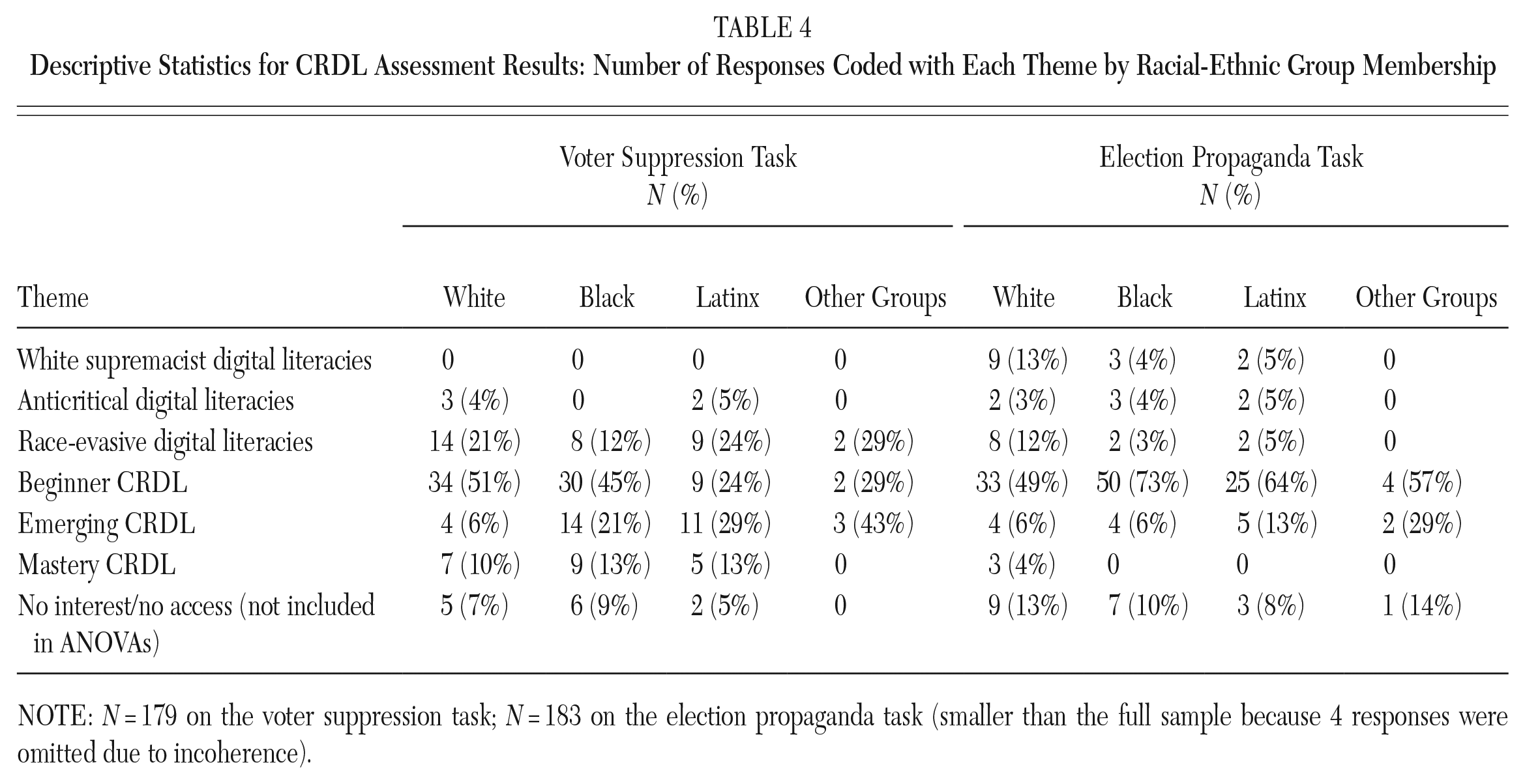

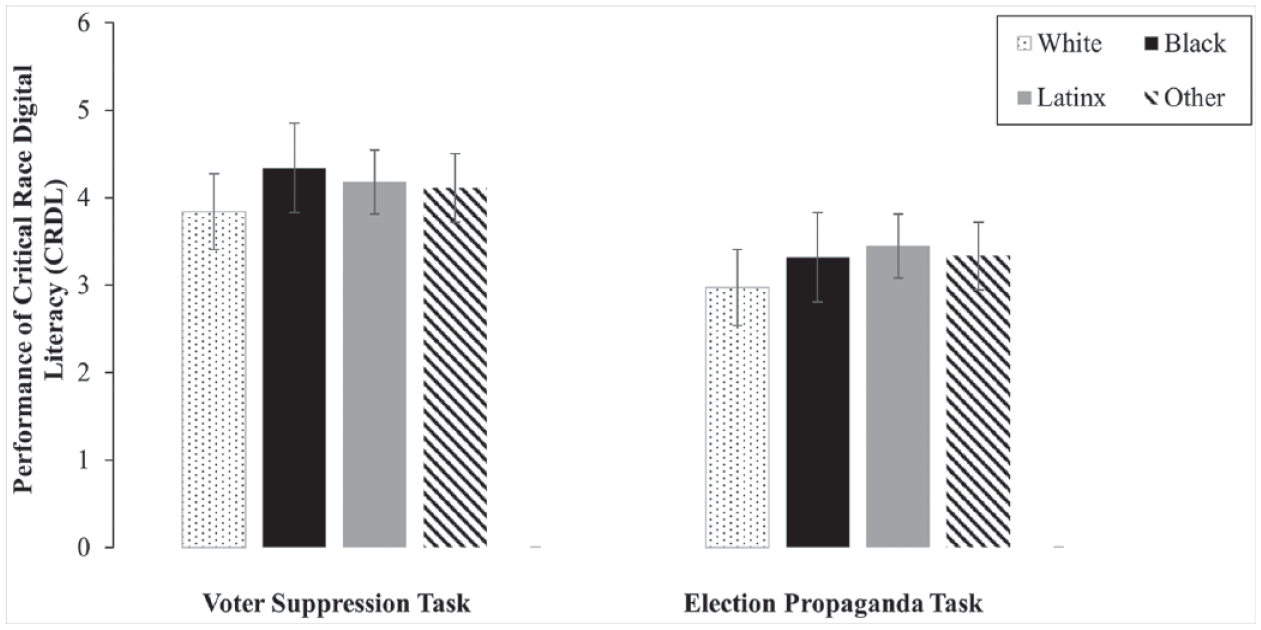

Table 4 shows descriptive statistics for the number of responses coded with each theme, aggregated by racial-ethnic group membership. Figure 2 compares the mean performance scores on the CRDL assessment tasks for each racial-ethnic group. To test whether the differences between groups are statistically significant, we conducted a separate ANOVA for each task, comparing the mean scores of the racial-ethnic groups. For the voter suppression analysis task, the overall ANOVA model was significant, F(3, 161) = 2.78, p = .043. Bonferroni post hoc comparisons revealed that Black participants (M = 4.34, SD = 0.94) scored significantly higher than white participants (M = 3.84, SD = 0.96); however, Black participants’ scores were not significantly higher than those of the other racial-ethnic groups (Latinx and other). For the election propaganda analysis task, the overall ANOVA model was nonsignificant, F(3, 167) = 1.02, p = .386; that is, performance on this task did not differ significantly by participants’ racial-ethnic group membership.

Table 4. Descriptive Statistics for CRDL Assessment Results: Number of Responses Coded with Each Theme by Racial-Ethnic Group Membership

NOTE: N = 179 on the voter suppression task (4 responses were omitted as incoherent) and N = 183 on the election propaganda task.

Figure 2. Comparing Mean Scores on the CRDL Assessment Items by Racial-Ethnic Group Membership
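For readers less familiar with the test reported above, a one-way ANOVA compares the spread of group means around the grand mean with the spread of scores within each group; the degrees of freedom reported as F(3, 161) correspond to k - 1 (with k = 4 racial-ethnic groups) and N - k. The following is a minimal, dependency-free Python sketch of that computation plus a Bonferroni adjustment. It is illustrative only: the function names and the four groups of toy scores are hypothetical and are not the NSCDL data, and it does not reproduce the software or exact procedure used in this study.

```python
from itertools import combinations
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of per-group score lists."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean(x for g in groups for x in g)
    # Between-group sum of squares: spread of group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-group sum of squares: spread of scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


def bonferroni(p_values):
    """Multiply each raw p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


# Hypothetical toy scores for four groups (NOT the study's data).
toy_groups = {
    "A": [4, 5, 4, 5],
    "B": [3, 4, 4, 3],
    "C": [4, 4, 5, 3],
    "D": [3, 4, 3, 4],
}
f_stat, df1, df2 = one_way_anova(list(toy_groups.values()))
print(f"F({df1}, {df2}) = {f_stat:.2f}")  # prints F(3, 12) = 2.20

# Four groups yield six pairwise comparisons for the post hoc step.
pairs = list(combinations(toy_groups, 2))
print(len(pairs))  # prints 6
```

The Bonferroni step here simply multiplies each pairwise p-value by the number of comparisons (six for four groups). Obtaining the p-values themselves from the F or t distribution would normally rely on a statistics library, which this sketch deliberately omits.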

What CRDL needs do adolescents have to ensure that they can successfully navigate a post-2020 digital landscape?

In this section, we extend the thematic analysis to our third research question, on the CRDL needs of adolescents, and note ways that their CRDL skills might be developed further so that they can successfully navigate the increasingly complex post-2020 landscape of race-related digital media. Across both tasks, adolescent skepticism about digital sources appears overdeveloped in some ways and underdeveloped in others, and skepticism alone is not enough to help adolescents interpret digital information. They need more opportunities to learn how to ground their skepticism in evidence drawn from critical analyses of the history and ongoing social realities of race in the U.S. At the same time, such critical race analyses need to be combined with technical aspects of digital literacy, such as identifying bots and trolls.

In responses to the voter suppression task coded race evasive, anticritical, and white supremacist, participants expressed skepticism about the digital source but did not ground their skepticism in evidence (e.g., assessments of the source provided by other sites found through lateral reading); as a result, they dismissed important information about race-related voter suppression. These adolescents (21 percent), as well as those coded beginner (41 percent), need opportunities to learn both race-related lateral reading and the racialized politics of the U.S. (e.g., the history of voter suppression); such learning might help them develop the contextual lens necessary for assessing and interpreting evidence.

By contrast, responses to the election propaganda task coded with these themes, as well as those coded beginner and emerging CRDL, did not express enough skepticism about the original tweet or question whether it might come from a bot or troll. Only the 2 percent of responses coded as mastery raised this question. Most participants, then, need a better understanding of political bots, computational propaganda, and disinformation campaigns. Among the majority of participants who scored beginner and emerging on the propaganda analysis task, those who chose strategies that might effectively resist a bot or troll intervention, without metacognitively naming what they were doing, often did so out of skepticism rooted in knowledge of the political context (e.g., skepticism of Trump supporters due to perceptions of the Trump campaign’s past racism). That virtually none of the participants questioned the bona fides of the tweet itself (i.e., is this a bot?) is cause for concern. This habit of skepticism certainly needs to be cultivated among those who scored race evasive, anticritical, or white supremacist. But even in anti-Trump responses, the ability to contextualize the tweet’s politics did not extend to skepticism of the tweet itself. Given this across-the-board pattern, adolescents need to supplement critical racial consciousness and political knowledge with more refined technical knowledge about how information actually spreads on social media platforms.

Discussion

Building on a CRDL framework, this study set out to assess adolescents’ ability to critique and evaluate race-related material online, detect differences in these abilities among racial-ethnic groups, and identify adolescents’ needs as they navigate a post-2020 election digital landscape. The study’s nationally representative sampling methods support generalizable findings about CRDL skills among Black, Latinx, and white 18- to 19-year-olds in the U.S. Findings show that the majority of participants demonstrated beginner-level CRDL skills for both tasks, and that few reached mastery; at the same time, a minority of participants used their digital literacy skills to express race-evasive, anticritical, or white supremacist politics.

Relation to previous research

Our findings diverge from a previous study by Breakstone et al. (2021), in which Black students scored significantly lower on online civic reasoning tasks. We found no statistically significant association between race and CRDL task performance on the election propaganda analysis task. For the voter suppression analysis task, there was a significant association overall, but one that ran counter to Breakstone et al. (2021): Black participants scored significantly higher than white participants. This divergence suggests that any attempt to build a standardized test of digital literacy skills will likely be problematic. As the field of digital literacies grows, we must ensure that research measures are designed to recognize diversity in behaviors rather than being normed on a presumed dominant group. Critically assessing race-related online information involves distinct skills, and measures of digital literacies and online civic reasoning need to account for them. In particular, CRDL skills are distinct from those measured in online civic reasoning studies, which have not included assessment tasks explicitly involving race-related digital media. Our findings amplify the question raised by Cohen and Luttig (2020): what constitutes political knowledge today, and for which communities? Just as they found that Black young people scored higher on measures of political knowledge most directly related to the ways the state interacts with their communities, we found that Black adolescents in our sample outperformed white adolescents on tasks involving race-related online information about voter suppression.

Our findings are consistent with research suggesting that African Americans are particularly adept at recognizing and critically reflecting on racial-ethnic inequality. Among marginalized groups, they are the least likely to be uncritical, to hold meritocratic beliefs, or to endorse the idea that the U.S. has equal opportunity structures (Godfrey et al. 2019). Moreover, studies have shown that as the Black Lives Matter movement took shape from 2014 to 2016, it increased the sophistication of young adolescents’ thinking about race and structural racism (Rogers et al. 2021). This effect was especially pronounced for Black and multiracial participants and may explain why the late adolescents in our study showed similar sophistication in 2020, when our data were collected.

Limitations of the study

Because this article focuses on adolescents of or near voting age, it does not capture differences across the adolescent years. Future studies will include the full sample of NSCDL participants (11- to 19-year-olds) and thus allow us to determine CRDL needs and skills by age group. We also recognize that our broad analyses of performance differences among racial-ethnic groups risk homogenizing these groups. For example, there may be vast differences among Afrolatinx, Indigenous, and other Latinx participants, and differences by age and gender remain aggregated in our analysis here. Further analysis is needed to unpack the complexity of representing the unique experiences of each individual and group.

Implications for future research and practice

This study provides heuristics for systematically analyzing digital media. The fact that technical literacy skills and critical race analysis are so intertwined in the results suggests that they should be treated as closely related in future research and pedagogical practice around CRDL. Future measures could assess each of these dimensions more distinctly, as well as the ways they are intertwined.

Studies are also needed of adolescent CRDL needs and skills in relation to events since 2020; we will contribute to that effort with an analysis of the second wave of the NSCDL, fielded in fall 2021. The field also needs studies that examine possible correlations between CRDL learning opportunities and performance on CRDL tasks like those in this study. Because the NSCDL measured such learning opportunities, we will eventually be able to convert the qualitative scores on CRDL tasks into quantitative outcome variables and examine their statistical relationship with those learning opportunities.

Perhaps most important, we need to develop interventions, such as curriculum designs, that support CRDL learning in both school and informal educational settings. Such efforts would build on the CRDL skills adolescents already possess and address the deeper needs identified in our study. In our sample, a concerning 18 percent endorsed race-related election propaganda, and an alarming number of participants were unable to challenge it. In light of those figures, it is not too fanciful to imagine a time in the U.S. when a sophisticated minority of digitally literate adolescents creates and spreads white supremacist digital propaganda that influences a substantial portion of their peers. To counter this possibility, teachers and other educational professionals should support young people in learning CRDL skills, and educational policies that prioritize CRDL should support them in doing so. In the current political context, some states and school districts have enacted laws or policies that dramatically limit education related to race (Pollock et al. 2022). In such places, teaching CRDL would be hard and, in some cases, even illegal. Parents, teachers, and students can organize to change this situation and transform their schools and districts through media campaigns, interventions at school board meetings, policy work, philanthropic and foundation work, and similar efforts. In the meantime, CRDL should be taught wherever possible, including in community programs, informal learning spaces, museums, after-school programs, freedom schools, and online affinity spaces.

Conclusion

The barrage of race-related provocations we have witnessed in recent years highlights the need to teach young people CRDL skills. Given the role of race in digital media around the 2020 election, this study makes that need clear: adolescents must be able to interpret the role of digital media in framing our understanding of race and racism in the U.S. As they develop this ability, they will be better prepared to challenge structural racism and white supremacy online and offline, pushing back against oppressive policies and platforms that would otherwise limit their adult lives. Adolescents and young adults have been at the forefront of freedom movements in the U.S. and other countries, and CRDL skills can help ensure that their civic engagement continues, undeterred by race-related digital media campaigns designed to suppress, fragment, and co-opt their power. Moreover, we must not allow race-evasive approaches to digital literacies and campaigns against “critical race theory” to suppress important learning. Rather, we must provide opportunities, wherever possible, to teach young people this essential component of their education.

Footnotes

NOTE: This study was funded with a Lyle Spencer Award to Transform Education from the Spencer Foundation. This article and findings do not necessarily represent the views of the foundation.

Notes

Matthew Coopilton (formerly Hamilton) recently completed their PhD in urban education policy/education psychology at the University of Southern California and is now a President’s Sustainability Postdoctoral Fellow at the USC School of Cinematic Arts’ Interactive Media and Games Division. Their research focuses on how people learn and develop critical digital literacies, especially through playing and designing games.

Brendesha M. Tynes is Dean’s Professor of Educational Equity and professor of education and psychology at the University of Southern California. Her research focuses on the racial landscape adolescents navigate in online settings, online racial discrimination, critical race digital literacy, and the design of digital tools that empower youth of color.

Stephen M. Gibson is currently a fourth-year doctoral candidate in the Developmental Psychology program at Virginia Commonwealth University. His research focuses on contextual factors that protect or mitigate the effects of racism on mental health symptomatology among Black youth.

Joseph Kahne is Ted and Jo Dutton Presidential Professor for Education Policy and Politics at the University of California, Riverside. Professor Kahne’s research, writing, and school reform work focus on ways that educational practices, policies, and contexts impact equitable outcomes and support youth civic and political development.

Devin English is an assistant professor in the Department of Urban-Global Public Health at Rutgers University. His current research aims to promote the health and wellbeing of Black LGBTQ youth communities through understanding and confronting the intersection of racism and heterosexism.

Karinna Nazario is a developmental psychology PhD student at the University of California, Santa Cruz. Her research examines adolescents’ and emerging adults’ civic identity development on social media. She is particularly interested in how aspects of young people’s cultural identities, such as political ideologies and worldviews, shape, and are shaped by, how they navigate information online and develop digital literacy skills.