Abstract

Prior research on data-driven innovation, which assumes quantitative analysis as the default, suggests a tradeoff: Organizations that rely heavily on data-driven analysis tend to produce familiar, incremental innovations with moderate commercial potential, at the expense of risky, novel breakthroughs or hit products. We argue that this tradeoff does not hold when quantitative and qualitative analysis are used together. Organizations that substantially rely on both types of analysis in the new-product innovation process will benefit by triangulating quantifiably verifiable demand (which prompts more moderate successes but fewer hits) with qualitatively discernible potential (which prompts more novelty but more flops). Although relying primarily on either type of analysis has little impact on overall new-product sales due to the countervailing strengths and weaknesses inherent in each, together they have a complementary positive effect on new-product sales as each compensates for the weaknesses of the other. Drawing on a unique dataset of 3,768 new-product innovations from NielsenIQ linked to employee résumé job descriptions from 55 consumer-product firms, we find support for our hypothesis. The highest sales and number of hits were observed in organizations that demonstrated methodological pluralism: substantial reliance on both types of analyses. Further mixed-method research examining related outcomes—hits, flops, and novelty—corroborates our theory and confirms its underlying mechanisms.

In 2011, R&D managers at Procter & Gamble (P&G) faced a critical juncture regarding the future of their Tide Pods prototype. An organization with strong data-driven norms and processes for innovation, P&G considered abandoning the laundry product due to lackluster demand projections based on early consumer tests and analysis of historical sales data from related products. But discussions arose among R&D managers about how much to rely on these quantitative market analyses for assessing the new product’s commercial potential.

Prior research informs P&G’s specific innovation challenge and forms the basis of a more general theoretical perspective on innovation in data-driven organizations. 1 On one hand, the strategy-and-IT literature supports a broadly positive view: Data-driven innovation processes enhance an organization’s ability to generate and select products that meet observable customer demand, thereby increasing firm-level sales (Thomke, 2003, 2020; McAfee and Brynjolfsson, 2012; Wu, Lou, and Hitt, 2019; Kesavan and Kushwaha, 2020; Koning, Hasan, and Chatterji, 2022; Conti, de Matos, and Valentini, 2023). On the other hand, the organizational-innovation literature takes a more skeptical stance, suggesting that data-driven processes lead organizations to allocate too many resources to easily measurable yet merely incremental improvements in products and technologies (Christensen and Bower, 1996; Benner and Tushman, 2002; Deniz, 2020; Felin et al., 2020; Ghosh, 2021). Synthesizing and extrapolating these perspectives to product-level commercial success, we observe that a tradeoff emerges: More data-driven innovation processes may lead organizations to prioritize familiar, incremental product innovations with moderate commercial potential over risky, novel breakthroughs or hit products (Wu, Hitt, and Lou, 2020; Felin et al., 2020).

At first, this tradeoff seemed to play out at P&G as R&D and product managers debated whether the quantitative market analyses could accurately gauge the commercial potential of Tide Pods, which marked a significant departure from traditional liquid laundry detergents. But they were able to move forward when several senior managers emphasized qualitative insights that contradicted the quantitative analyses, like the observation that “[consumers] would hold the pod in their hands like it was a jewel.” 2 These observations sparked deeper examination of how the quantitative evaluation process had anchored their projections on comparisons to past products’ value dimensions (like cleaning power and scent) but had overlooked the value of new unique features (like aesthetic appeal and portability). Guided by this combination of quantitative and qualitative insights, managers conducted additional analyses and ultimately undertook a large-scale launch that generated billions in annual sales and revitalized the sleepy laundry category.

This anecdote highlights that by equating data-driven processes solely with quantitative analysis, prior research on innovation may not fully capture their impact on the commercial outcomes of new products. Despite the rapid rise of quantitative analytics in recent years (Brynjolfsson and McElheran, 2016; Wu, Hitt, and Lou, 2020; Lenox, 2023), new-product development processes typically incorporate both quantitative and qualitative analyses to generate and assess new-product ideas (Hargadon and Douglas, 2001; Seemann, 2012; Martin and Golsby-Smith, 2017). And the literature on academic research methods underscores that qualitative analysis has distinct strengths (and weaknesses) compared to quantitative analysis in generating and evaluating ambiguous or novel research ideas (Edmondson and McManus, 2007; Siggelkow, 2007; Kaplan, 2016; Akerlof, 2020; Tidhar and Eisenhardt, 2020). These observations suggest that methodological pluralism—substantial reliance on both quantitative and qualitative analysis—may be a crucial factor in explaining innovation success in organizations. Seeking to generalize these anecdotal observations and insights from prior research, we ask, how does reliance on quantitative and qualitative analysis in an organization’s innovation process influence the commercial success of its new-product innovations?

We argue that quantitative and qualitative analyses have a complementary effect on an organization’s ability to develop commercially successful products. Drawing from the research-methods and innovation literatures, we describe this complementarity as rooted in the unique benefits and limitations inherent in each type of analysis. Used alone, quantitative analyses like market tests and historical sales panels offer a statistically consistent view of demand for existing products, leading to the development of more familiar products that yield moderate successes but fewer big hits (see Katila and Ahuja, 2002). Conversely, qualitative analyses such as customer interviews and focus groups lack statistical consistency but can identify potential in unquantified value propositions, leading to more novel products but also more risk and thus more flops (failed products). But innovation processes that substantially integrate both methods will result in products that have quantifiably verifiable demand and qualitatively discernible potential—both of which are vital for successful innovation (Burgelman, 1983; Ahuja and Lampert, 2001). Therefore, organizations exhibiting methodological pluralism are more likely to succeed in new-product innovation than are those that heavily rely on one method or minimally use both.

Empirically examining the impact of methodological pluralism presents a dual challenge: measuring the data-analysis methodologies used in organizations’ innovation processes and linking them to product-level commercial outcomes. Drawing on a unique data source of excerpts from innovation-relevant employees’ résumés, we use mentions of qualitative or quantitative analysis in their work descriptions as a proxy for an organization’s relative reliance on the methods embedded in its norms and processes over time. By mapping these mentions to a unique point-of-sale dataset from NielsenIQ that tracked the commercial performance of 3,768 new products launched by 55 large consumer-packaged-goods (CPG) firms between 2010 and 2016, we empirically link the product sales data to the employee résumé data.

We find evidence consistent with our theory of quantitative and qualitative complementarity in organizations’ innovation processes. Specifically, neither quantitative nor qualitative analysis has a significant individual impact on new-product sales, but jointly the two methods have a robust positive interaction effect. The highest new-product sales resulted when organizations exhibited methodological pluralism: relatively high levels of both qualitative and quantitative analysis.
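To make the idea of a positive interaction concrete, the following minimal sketch computes the interaction contrast in a two-by-two layout of low versus high reliance on each method. The cell values are hypothetical and chosen only to mirror the pattern described above; they are not estimates from the study, and the function name is an assumption introduced for this illustration.

```python
from typing import Dict, Tuple

# A cell is (quantitative reliance, qualitative reliance), each "low" or "high".
Cell = Tuple[str, str]


def interaction_contrast(mean_sales: Dict[Cell, float]) -> float:
    """Difference-in-differences across the four cells.

    A positive value means the gain from high qualitative reliance is larger
    when quantitative reliance is also high, i.e., the methods are complements.
    """
    gain_when_quant_high = mean_sales[("high", "high")] - mean_sales[("high", "low")]
    gain_when_quant_low = mean_sales[("low", "high")] - mean_sales[("low", "low")]
    return gain_when_quant_high - gain_when_quant_low


# Hypothetical cell means in arbitrary units (illustration only): no gain from
# either method alone, and the highest value when both are high.
example = {
    ("low", "low"): 10.0,
    ("high", "low"): 10.0,
    ("low", "high"): 10.0,
    ("high", "high"): 14.0,
}

print(interaction_contrast(example))
```

In this stylized layout, neither method changes the outcome on its own, yet the contrast is positive because the high-high cell exceeds what the two separate effects would jointly predict.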

We further investigate the theoretical underpinnings of our findings via additional mixed-methods analyses, leveraging both archival analysis and interviews with CPG industry professionals. With the archival data, we demonstrate that qualitative analysis leads to more flops and fewer moderate successes, whereas quantitative analysis results in fewer hits but more moderate successes; substantial use of both together yields more hits and fewer flops. Additionally, qualitative analysis increases product novelty, which partially explains the success of methodological pluralism in innovation. Insights from executive interviews and other additional analyses provide more-granular explanations for how organizations use quantitative and qualitative analysis in product development.

This article contributes to the innovation literature by clarifying that quantitatively driven innovation processes lead to fewer high-impact hits only when used without substantial qualitative analysis. The study contributes to the strategy-and-IT literature by demonstrating that the effectiveness of data analytics adoption varies not only with the type of innovation but also with the mix of analyses used, and it contributes to the literature on culture and strategy by illustrating how leader-driven norms shape perceptions of innovation opportunities.

Theoretical Background

Prior empirical research has not specifically investigated how organizations’ use of multiple types of analyses or solely qualitative analysis affects the commercial success of new products. In the case of quantitative analysis, however, several strands of research in organizational theory and strategy inform our theorizing and provide a foundation for hypothesis development.

The Strategy-and-IT Literature: Quantitative Analysis and Firm-Level Sales

The first pertinent research stream, situated at the intersection of IT and strategy research, supports a generally positive view of quantitative analysis in innovation by establishing a link between data analytics adoption and firm-level sales. This research stream advances the idea that quantitatively data-driven organizations are apt to be better equipped to test their assumptions about the market (Thomke, 2003, 2020; Camuffo et al., 2020; Koning, Hasan, and Chatterji, 2022) and to process large volumes of external information to align their products to customer preferences (Tambe, 2014; Hitt, Jin, and Wu, 2015; Müller, Fay, and vom Brocke, 2018; Wu, Hitt, and Lou, 2020; Brynjolfsson, Jin, and McElheran, 2021). Such organizations may also be less prone both to undisciplined organizational politics and to confirmation bias that supports management’s pet projects (Brynjolfsson, Hitt, and Kim, 2011; Brynjolfsson and McElheran, 2019; Thomke, 2020; Kim et al., 2024). All these advantages would, theoretically, augment organizations’ ability to produce the most-promising innovations.

This viewpoint is consistent with empirical observations that on average, firm-level adoption of data analytics is associated with higher firm-level sales, productivity, and market value (McAfee and Brynjolfsson, 2012; Tambe, 2014; Brynjolfsson and McElheran, 2016, 2019; Müller, Fay, and vom Brocke, 2018; Koning, Hasan, and Chatterji, 2022; Conti, de Matos, and Valentini, 2023). These benefits are especially pronounced in firms that have various organizational complements and IT capabilities (Tambe, 2014; Müller, Fay, and vom Brocke, 2018; Wu, Lou, and Hitt, 2019; Brynjolfsson, Jin, and McElheran, 2021; Koning, Hasan, and Chatterji, 2022).

The benefits of data analytics also appear to depend on the nature of innovation the firm pursues. Examining links among data analytics adoption, patents, and sales, a recent study shows how the adoption of quantitative analytics impacts sales at firms that specialize in various types of innovation. The results suggest that firm-level adoption of quantitative data analytics contributes significantly to sales only in firms that produce patents that are amenable to quantitative analysis, such as process-oriented or incremental innovation (Wu, Hitt, and Lou, 2020). By contrast, firms that innovate with novel technologies benefit less from quantitative analytics, the authors argue, because existing quantitative data is most useful for improving existing products and processes.

The Organizational-Innovation Literature: Quantitative Analysis and Incremental Innovations

The second research stream, rooted in organizational theories of innovation, supports a less sanguine view, namely, that increasing reliance on quantitative analytics and related practices may suppress innovation by over-allocating resources to minor changes and incremental innovations. This perspective harkens back to classic arguments that allocating innovation resources on the basis of short-term measurable outcomes could unbalance such investments to favor incremental rather than more-radical or long-term innovation (March, 1991; Leonard-Barton, 1992; Christensen and Bower, 1996; Ahuja and Lampert, 2001; Benner and Tushman, 2003; O’Reilly and Tushman, 2008). For example, Baldwin and Clark (1994: 79) argued that after U.S. firms widely adopted quantitative capital-budgeting systems, “managers at all levels lacked objective data and analytic tools” with which to invest in less-measurable investments; certain organizational capabilities were thus ignored, to the long-term detriment of these firms’ new-product innovation and growth. Similar mechanisms were observed at organizations that adopted ISO 9000 process management. Benner and Tushman (2002: 682, 2003) observed that “exploratory activities [were] increasingly unattractive compared with the short-term measurable improvements” and that exploratory patents were thus “crowded out” by exploitative patents. Jointly, this stream of research established that focusing on quantitatively measurable outcomes unintentionally inhibits organizations’ investment in less-measurable exploratory innovations.

Continuing the theme, recent studies have theorized that innovating based on quantitative evidence of consumer preferences may similarly promote incremental improvements rather than radical or more-impactful innovation (McDonald and Gao, 2019; Felin et al., 2020). These studies have focused on organizations’ use of A/B testing, arguably the gold standard among quantitative tests of consumer preferences. For instance, Ghosh (2021) found that inexpensive access to A/B testing tools had the unintended consequence of shifting engineering and cognitive resources away from intentional planning, resulting in less-impactful product-development experimentation. Another study found that U.S. newspapers that adopted A/B testing tools made less-impactful feature changes and adaptations to their websites (Deniz, 2020).

Thus, despite shifts over time in the specific tools in question, in sources of uncertainty (from technical uncertainty to market uncertainty), and in the types of innovation (from process to product) being studied, this second research stream has maintained the view that increased reliance on quantitative analysis has the potential to discourage novel, radical, or breakthrough innovations. Though the relationship between quantitative analysis and product-level commercial success is not established, some research has also implied a connection (Christensen, 1997; Ahuja and Lampert, 2001). The underlying argument has also remained constant: that in the intra-organizational competition for scarce resources (Bower, 1972; Burgelman, 1991; Christensen and Bower, 1996; Klingebiel and Rammer, 2014; Keum and Eggers, 2019), highly quantitative organizations tend to develop more easily measurable—and thus relatively incremental—innovations.

Synthesis of Literature

It is possible to reconcile these views by recognizing that they focus on different dependent variables: the positive view on firm-level productivity and sales and the critical view on the magnitude of changes to products and technologies. These views may, indeed, coexist if the impact of data-driven processes on innovation outcomes simply varies depending on the nature of the innovation. Synthesizing all prior perspectives and extrapolating them to product-level commercial success, we observe an underappreciated tradeoff: Organizations’ increased reliance on quantitative analysis in innovation processes can promote familiar incremental innovations with at least moderate commercial potential but perhaps at the expense of risky, novel products with outlier commercial potential (Felin et al., 2020; Wu, Hitt, and Lou, 2020).

Though this observation is insightful, we argue that prior research ultimately provides an incomplete picture of how data-driven analysis impacts innovation. First, prior research implicitly uses the terms data-driven or data analysis to mean quantitative analysis, which is at odds with the observation that qualitative analysis is also common in innovation (Hargadon and Douglas, 2001; Martin and Golsby-Smith, 2017; Gao and McDonald, 2022). The research-methods literature demonstrates that the value of quantitative analysis in research can depend on its interplay with qualitative analysis (Jick, 1979; Edmondson and McManus, 2007; Siggelkow, 2007; Kaplan, 2016), which suggests that research on data-driven innovation could benefit from a more expansive perspective that incorporates multiple methodologies. Second, on an empirical level, prior research has not directly linked data-driven innovation processes to product-level commercial success. Even for quantitative analysis, which has been studied far more than qualitative, prior studies link firm-level data analytics adoption to outcomes like patents or productivity, or they link quantitative practices to the extent of product changes. But these outcomes are not necessarily directly related to the actual commercial performance of new-product innovations (Lee, 2022). Therefore, in contexts in which producing hits is important, the overall impact of data-driven innovation processes—whether through quantitative analysis, qualitative analysis, or their interaction—remains an open question.

Hypothesis

Quantitative and Qualitative Analysis in the Innovation Process

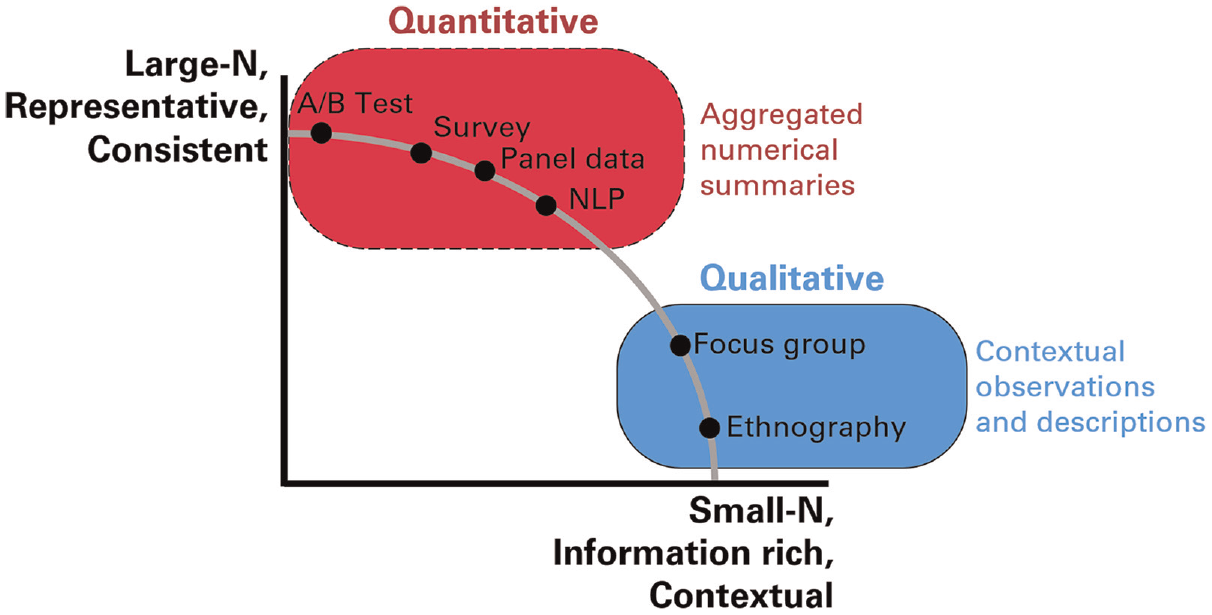

Quantitative and qualitative analysis can be viewed as two ends of a methodological spectrum (see Figure 1). Though different methodologies occupy different points on the spectrum, we define as quantitative those analyses that produce large-N numerical information intended to be representative and statistically consistent (e.g., data analytics, fixed-response surveys, or A/B testing). On the other end, we define as qualitative those analyses that produce small-N non-numerical information that is richly descriptive and contextual (e.g., focus groups or interviews) (Edmondson and McManus, 2007; Lamont, 2009; Kaplan, 2016; Tidhar and Eisenhardt, 2020; Hong, Lamberson, and Page, 2021). 3

Figure 1. Methodological Spectrum: Quantitative and Qualitative

Our theory of quantitative and qualitative complementarity focuses on the variation and selection stages of the process, during which product concepts are generated, evaluated, prioritized, and developed (Levinthal, 2007; Criscuolo et al., 2017; Keum and See, 2017). Note that according to our theory, the respective strengths and weaknesses of quantitative and qualitative analysis operate similarly regardless of whether these types of analysis are used at the variation or selection stage. In other words, these analyses will be amenable to generating the same types of products that they will be amenable to selecting; we do not theorize or test the sequencing of methods over time between stages. 4 Furthermore, how an organization executes the final stage of innovation, implementation (e.g., via marketing or operations), falls outside the theoretical and empirical scope of this article. As detailed in the Methods and Results sections, we took several steps to ensure that the quantitative and qualitative analyses used in the implementation stage do not influence our results.

Quantitative Analysis and New-Product Success

As established in the Theoretical Background section, high reliance on quantitative analysis in new-product development involves tradeoffs. Though prior research has not directly linked quantitative analysis to product-level sales, we expect that tradeoffs similar to those we have described will apply. Quantitative analysis anchors the generation or assessment of new products in broadly observable market demand. This information can serve as a base rate for estimating the potential of future products, which is generally viewed as effective in contexts that resemble past circumstances (i.e., for products that will match the data-generating process of existing products) (Mellers et al., 2015; Tetlock and Gardner, 2016; Tetlock, Mellers, and Scoblic, 2017; Kapoor and Wilde, 2023). As a result, quantitative analysis should be helpful for the development of incremental products (Wu, Hitt, and Lou, 2020).

But the inclination to anchor generation and assessment of new products in observable demand can also hamper innovation. Products with high commercial potential are not apt to be readily derived from aggregate trends (Allen, 2024) because the opportunities with the greatest commercial potential are often unfamiliar and less likely to be perceived by competitors or recognized by customers (Levinthal and March, 1993; Levinthal, 1997; Gavetti and Levinthal, 2000; Denrell, Fang, and Winter, 2003; Gavetti, 2012; Felin and Zenger, 2017; McDonald and Allen, 2022). Thus, a product-development process that tilts heavily toward quantitative analysis may result in failure to pursue more-impactful opportunities (Christensen and Bower, 1996; Benner and Tushman, 2002; Deniz, 2020; Felin et al., 2020; McDonald and Eisenhardt, 2020; Ghosh, 2021; Allen, 2024).

In short, though increased quantitative analysis may increase the likelihood of launching moderately successful products, it may also stifle the development of high-potential outsize hits. The overall impact on the commercial success of new products hinges on whether the increase in moderate successes outweighs the decrease in big hits.

Qualitative Analysis and New-Product Success

As noted, previous research has not explicitly explored the impact of qualitative analysis on innovation outcomes. But extrapolating from the research-methods literature, we argue that reliance on such analysis could either enhance or diminish the commercial success of an organization’s new products. Qualitative analysis lacks statistical consistency (Edmondson and McManus, 2007; Siggelkow, 2007; Kaplan, 2016; Tidhar and Eisenhardt, 2020) and may not yield a representative estimate of market demand. Therefore, such analysis may not provide as reliable a market signal as quantitative analysis does.

But qualitative analysis, which is context-rich and focuses on in-depth understanding of a few cases, is adept at identifying potential in unquantified possibilities (Edmondson and McManus, 2007; Siggelkow, 2007; Kaplan, 2016; Tidhar and Eisenhardt, 2020). This ability is crucial for recognizing new value dimensions that differ from those of past successful products (Gavetti, Helfat, and Marengo, 2017; Rindova and Courtney, 2020; Morris et al., 2023). These unique value propositions are less likely to face competition, potentially creating substantial commercial value for the innovator (Denrell, Fang, and Winter, 2003; Felin and Zenger, 2017; Allen, 2024). Thus, while qualitative analysis may lead to more product failures due to unreliable estimates of broad market demand, it can also foster the development of novel products with unique value propositions. The overall impact on the commercial success of new products hinges on whether the rise in failures outweighs the increase in novelty.

Methodological Pluralism: Complementarity of Quantitative and Qualitative Analysis

We conceptualize methodological pluralism in organizations as comparatively high reliance on both quantitative and qualitative analysis, relative either to low reliance on both or to high reliance on only one method. Although the respective contributions of quantitative and qualitative analysis to new products’ commercial success are ambiguous (i.e., each could be either positive or negative), we theorize that the two types of analysis will jointly have a positive, complementary effect. The strengths of each type counteract the weaknesses of the other (Jick, 1979), so organizations characterized by high methodological pluralism can benefit from a process of validation that compensates for the blind spots of any single method (Eisenhardt, 1989; Kaplan, 2016; Page, 2018; Ott and Eisenhardt, 2020). Such organizations are more likely to pursue new products that exhibit (at least to some extent) both quantifiably verifiable demand and qualitatively discernible novel value. This complementarity can be explained through mechanisms at both the individual and organizational levels.

At the individual level, innovation processes that rely on both methods will allow more-accurate perceptions of new products’ potential. Because innovations’ commercial success depends on both estimating existing demand and recognizing novel potential (Burgelman, 1983; Ahuja and Lampert, 2001; Allen, 2024), using both methods will enhance the accuracy of organizational members’ assessment of a set of high-potential new products. If both types of analysis align to produce consistently strong or weak signals, the decision about the product is clear. But if the methods do not align, with one giving positive and the other negative signals, then using both will result in better information than will using one in isolation (Jick, 1979). For instance, if quantitative analysis suggests low demand but qualitative insights are favorable, organizations might adjust the quantitative evaluation criteria (Vinokurova and Kapoor, 2020) while still considering quantitative predictions. Alternatively, if quantitative assessments are positive but qualitative excitement is lacking, innovators may reassess the assumptions that led to potentially inflated quantitative assessments.

At the organizational level, innovation processes that employ both types of analysis are likely to allocate resources to more successful products. Organizational processes and norms significantly influence the methods that individuals choose to use, as well as the perceived value and legitimacy of these methods (Lamont, 2009; Anthony, 2018). In addition to shaping individuals’ perceptions of a product’s market potential, emphasizing a particular method may influence collective expectations about which products will win in the internal competition for scarce resources (Bower, 1972; Burgelman, 1991; Gilbert and Bower, 2005). For instance, in an organizational resource-allocation process that heavily relies on quantitative analysis for validation of new-product ideas, organization members will be unlikely to risk their careers or marshal the political will to champion products that are not amenable to that preferred method of analysis (Christensen and Bower, 1996; Benner and Tushman, 2002; Deniz, 2020; Vinokurova and Kapoor, 2020). Thus, an organization with high methodological pluralism would be more likely to allocate resources to products that are (at least moderately) amenable to both quantitative and qualitative methods rather than to products that strongly appeal to just one.

We thus expect that by anchoring on a quantitative baseline estimate of demand while also qualitatively discerning novel potential, methodologically pluralistic organizations will develop new products that are more commercially successful. High reliance on both quantitative and qualitative analysis not only leads to more accurate individual valuations of products’ commercial potential but also promotes organizational norms and processes that allocate resources to products that are at least moderately supported by both methods. We therefore hypothesize as follows:

Methods

Research Context

We test our hypothesis in the context of new-product innovation in the U.S. CPG sector. This sector consists of firms that manufacture products sold to consumers via retail channels, a market with estimated U.S. sales of $815 billion in 2019. It spans a wide range of product categories, including food and beverages, household cleaning, beauty, over-the-counter drugs, and electronics, and includes companies such as Procter & Gamble, Unilever, Mattel, Coty, Church & Dwight, and Newell-Rubbermaid.

Two characteristics of the CPG sector make it a fertile setting in which to empirically examine our theory of methodological pluralism. First, the CPG sector is well represented in the NielsenIQ Retail Scanner dataset (introduced in the next section), which is one of the richest datasets available for capturing product-level innovation and commercial outcomes on a broad scale (Argente, Lee, and Moreira, 2018; Granja and Moreira, 2023). Second, due to the CPG sector’s need for innovation and the difficulty of anticipating successful products, both quantitative and qualitative analyses are routinely used to generate and assess new products. As in many industries, the cost of developing and launching products is sufficiently high that products must be validated and vetted before being fully developed. To control costs, most CPG organizations require product concepts to pass through a formal funnel process with evaluation checkpoints; at these junctures, empirical evidence of consumer demand is important for determining which products will receive further investment. Products and features are generated and assessed throughout this process through an array of qualitative and quantitative analyses: forecasts based on past purchasing patterns of similar products in consumer-panel data, surveys, ethnography, consumer interviews, focus groups, and concept tests.

To augment our archival analysis with in-depth understanding of how quantitative and qualitative methods are used in the CPG innovation setting, we conducted semi-structured interviews with 36 CPG industry informants. Consistent with similar multi-method investigations (Pahnke et al., 2015; Bermiss and McDonald, 2018), we used a snowball technique to identify suitable interviewees. We interviewed executives, R&D personnel, data scientists, and product managers at eight leading companies in a broad range of product categories. Our goal was to understand how managers at each organization use data to assess the commercial potential of new-product innovations. We refer to these interviews in the following sections to elaborate on our main results.

Sample and Data

To formally test our hypothesis, we undertook an extensive data-collection effort, gathering résumé entries for innovation-related employees at large CPG companies and carefully merging the entries with a granular dataset of product-level sales at retail stores. This rich, multi-source dataset allowed us to track a nearly comprehensive sample of 3,768 new-product launches at 55 large CPG organizations in the period 2010–2016. 5 It allowed for in-depth, precise measurement of the commercial success of new-product innovations, linked to the methodologies used in market analysis at those organizations each year.

We constructed measures of product-level features and commercial performance by using the NielsenIQ Retail Measurement Services scanner dataset, which is among the most comprehensive and representative point-of-sale retail datasets available.6,7 To avoid treating minor changes to existing products as new products, we aggregated UPC barcode-level data up to the brand/product-module level. 8 We matched products to firms by using the GS1 UPC-firm-matching database.
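The aggregation step can be illustrated with a minimal sketch that rolls barcode-level sales up to the brand/product-module level. The field names (`upc`, `brand`, `product_module`, `sales`) and the sample rows are hypothetical and do not reflect the actual NielsenIQ schema.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def aggregate_to_brand_module(rows: Iterable[dict]) -> Dict[Tuple[str, str], float]:
    """Sum UPC-level sales within each (brand, product module) pair."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for row in rows:
        key = (row["brand"], row["product_module"])
        totals[key] += row["sales"]
    return dict(totals)


# Hypothetical barcode-level rows: two UPCs that differ only in minor ways
# (e.g., package size) collapse into a single brand/module observation.
upc_rows = [
    {"upc": "0001", "brand": "BrandA", "product_module": "laundry detergent", "sales": 120.0},
    {"upc": "0002", "brand": "BrandA", "product_module": "laundry detergent", "sales": 80.0},
    {"upc": "0003", "brand": "BrandA", "product_module": "fabric softener", "sales": 50.0},
]

brand_module_sales = aggregate_to_brand_module(upc_rows)
print(brand_module_sales)
```

Grouping on the brand/module pair means that a new package size of an existing product adds to an existing observation instead of being counted as a new product.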

To construct measures of each organization’s use of quantitative and qualitative analyses, we used job-description text from résumés posted on a popular online career networking website (Wu, Hitt, and Lou, 2020; DeSantola, Gulati, and Zhelyazkov, 2023). 9 (For a detailed explanation of why it was necessary to use résumé job descriptions rather than titles, skills, or alternative data sources, see Online Appendix A.) Because our aim was to examine the use of quantitative and qualitative analysis in the innovation-development process, we collected résumés only for employees whose reported job functions included “product management” or “research,” using the networking website’s internal job-function classification system (for additional details, see Online Appendix A). Because the NielsenIQ data are most representative for CPG firms, we also filtered for firms with the two-digit NAICS codes 31, 32, and 33. 10

Our data collection resulted in 101,919 unique employee résumés describing 182,403 positions at CPG firms between 2010 and 2016. We then applied several filters that reduced this sample. First, to ensure that we had relevant text for our measures, we filtered for profiles with valid English text in the job description, and we kept only the innovation-related sentences (as described in the Measures section below). Second, to ensure a sufficiently large sample every year for meaningful text analysis, we kept only firm–years with at least 100 descriptions that contained such innovation-related text. 11 Ultimately, our analysis used a final sample of 49,052 job descriptions of innovation-related work, from 55 CPG firms. For summary statistics displaying the number of observations dropped at each step of filtering the data, see Online Appendix D.

For additional controls in robustness checks, we also obtained supplementary firm-level information by collecting Glassdoor reviews for the firms in our sample and Compustat data for the public companies among them.

To construct our primary dataset, we aggregated all our data sources at the product level. (See Online Appendix A for additional details on sample construction and data merging.) For additional analyses, we also constructed datasets at the firm–year panel and individual levels. The product- and firm-level datasets include comprehensive information on all 3,768 new products launched by 55 consumer-product firms in the NielsenIQ data for 2010–2016. Due to the necessary filtering steps described above, the final sample of firms does not contain small CPG firms; it is, however, a nearly comprehensive sample of large CPG firms with operations in the United States. Approximately 80 percent of the firms in the sample belonged to the Fortune 1000 or Global 500 in 2016.

Measures

Dependent variable: New-product sales

The primary dependent variable is new-product sales, operationalized as the sum of dollar sales of product i in the two quarters that conclude the two-year post-launch period (New-product sales_i). The time of product launch was determined as the first quarter in which the product had observable sales in the NielsenIQ data, which is consistent with the approach laid out by Argente, Lee, and Moreira (2018). We used sales in the final two quarters of the two-year post-launch period to mitigate confounding signals from the initial size of the product launch, which is more likely than later sales to be endogenously determined by the firm (Bass, 1969). The firm–year panel data aggregate this variable by summing the new-product sales of all products launched each year.
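The windowing rule above can be sketched in a few lines. This is an illustrative sketch, not the authors' code: the function name and the quarter-indexed input are hypothetical, and it assumes that the launch quarter counts as the first of the eight post-launch quarters, so the "final two quarters" are the seventh and eighth.

```python
def new_product_sales(quarterly_sales):
    """Sum of dollar sales in the last two quarters of the two-year post-launch window.

    quarterly_sales: dict mapping a consecutive integer quarter index to dollar sales
    (hypothetical input format). Returns None if the product never records sales.
    """
    selling_quarters = [q for q, s in quarterly_sales.items() if s > 0]
    if not selling_quarters:
        return None
    launch = min(selling_quarters)  # first quarter with observable sales
    # Assumption: launch quarter is quarter 1 of 8, so quarters 7 and 8
    # sit at offsets 6 and 7 from the launch quarter.
    return sum(quarterly_sales.get(launch + offset, 0.0) for offset in (6, 7))
```

Sales in the launch quarter itself never enter the measure, which reflects the stated aim of setting aside the firm-determined size of the initial launch.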

Dependent variable(s): Hits and flops

To examine the mechanisms underlying our proposed theory, we discretized the new-product sales measure to identify which products were hits and which were flops. A product that reached the top 5 percent of new-product sales in its product group was classified as a Hit_i (hits accounted for about 50 percent of total new-product sales in our sample), while a product in the bottom 50 percent was classified as a Flop_i (flops accounted for about 5 percent of total new-product sales in our sample). We also conducted analyses with alternative thresholds, discussed below, showing the effects of quantitative and qualitative analysis on each decile of new-product sales. In the firm–year panel dataset, we tallied the classifications Hits and Flops by summing them at the firm level for all products launched each year. We also assessed outcomes by calculating Hit rate and Flop rate, or the proportion of products launched that were hits and failures, respectively.
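A minimal sketch of this within-group classification follows. It is illustrative only: the function name, the tuple layout, and the rank-based handling of ties are assumptions rather than details given in the text.

```python
from collections import defaultdict

def classify_hits_flops(products, hit_top_pct=5, flop_bottom_pct=50):
    """Label each product 'hit', 'flop', or 'middle' within its product group.

    products: list of (product_id, product_group, sales) tuples (hypothetical layout).
    """
    by_group = defaultdict(list)
    for product_id, group, sales in products:
        by_group[group].append((sales, product_id))

    labels = {}
    for members in by_group.values():
        members.sort()  # ascending by sales; ties broken by product id (assumption)
        n = len(members)
        for rank, (_, product_id) in enumerate(members, start=1):
            # Integer comparisons avoid floating-point edge cases at the cutoffs.
            if rank * 100 > (100 - hit_top_pct) * n:
                labels[product_id] = "hit"
            elif rank * 100 <= flop_bottom_pct * n:
                labels[product_id] = "flop"
            else:
                labels[product_id] = "middle"
    return labels
```

Firm-level hit and flop rates then follow by dividing a firm's yearly count of each label by the number of products it launched that year.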

Independent variables: Quantitative and qualitative analysis

Our independent variables were designed to capture each firm’s norms and processes for reliance on quantitative and qualitative analysis in new-product development. To do so, we calculated the mean number of innovation-related sentences at the firm that referred to “qualitative” or “quantitative” analysis in employee résumés.

To construct these measures, we filtered the résumé text of innovation-focused employees to identify sentences containing at least one word related to the product-innovation process (see Online Appendix B for the list of words). We applied this filter because even innovation-related employees like R&D managers and product managers engage in (and mention in their résumés) many tasks that do not directly involve generating and assessing product concepts (e.g., general operations, hiring, etc.). Furthermore, the same job titles have different functions in different organizations (Baron and Bielby, 1986), so restricting the text to innovation-related work aligned the measure more closely with our theory’s focus on the generation and assessment of new products rather than on unrelated tasks listed under the same job title. Overall, 133,193 of 354,532 sentences (about 38 percent) in our résumé sample included a product-innovation-related word. (For additional details on sample construction, see Online Appendix A; for detailed summaries of the numbers of descriptions and sentences in our sample, see Online Appendix D.)

We obtained the measures Quantitative analysis_ft and Qualitative analysis_ft for each firm f in year t by calculating the mean number of innovation-related sentences in the résumés of its employees that contained at least one quantitative or qualitative word, respectively. 12 We confirmed that alternative measure specifications using word-level and description-level mentions of quantitative and qualitative words produced highly similar results (see Online Appendix E). To identify relevant quantitative and qualitative words, we used a word-embedding model (Kozlowski, Taddy, and Evans, 2019; Li et al., 2021). Online Appendix B provides additional details on the methodologies used to construct these measures.
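The sentence-level logic of the measure can be illustrated as follows. This is a simplified sketch under stated assumptions: the three quantitative and three qualitative terms are the examples named in the text, the innovation words are hypothetical stand-ins for the Online Appendix B list, whole-phrase matching replaces the embedding-derived dictionary, and the measure is computed as a share of innovation-related sentences.

```python
import re

# Hypothetical stand-ins; the actual dictionaries are described in Online Appendix B.
INNOVATION_WORDS = {"product", "concept", "prototype"}
QUANT_TERMS = {"analytics", "statistics", "data science"}
QUAL_TERMS = {"focus groups", "customer interviews", "user stories"}

def contains_any(sentence, terms):
    """True if the sentence contains at least one term as a whole word or phrase."""
    text = sentence.lower()
    return any(re.search(r"\b" + re.escape(term) + r"\b", text) for term in terms)

def firm_year_measures(sentences):
    """Return (quantitative share, qualitative share) over innovation-related sentences.

    sentences: all résumé sentences pooled for one firm in one year.
    Returns None when no sentence is innovation-related.
    """
    innovation = [s for s in sentences if contains_any(s, INNOVATION_WORDS)]
    if not innovation:
        return None
    n = len(innovation)
    quant_share = sum(contains_any(s, QUANT_TERMS) for s in innovation) / n
    qual_share = sum(contains_any(s, QUAL_TERMS) for s in innovation) / n
    return quant_share, qual_share
```

A sentence that mentions analytics but no innovation word is excluded before either share is computed, so the measures describe innovation work specifically rather than analysis anywhere in the firm.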

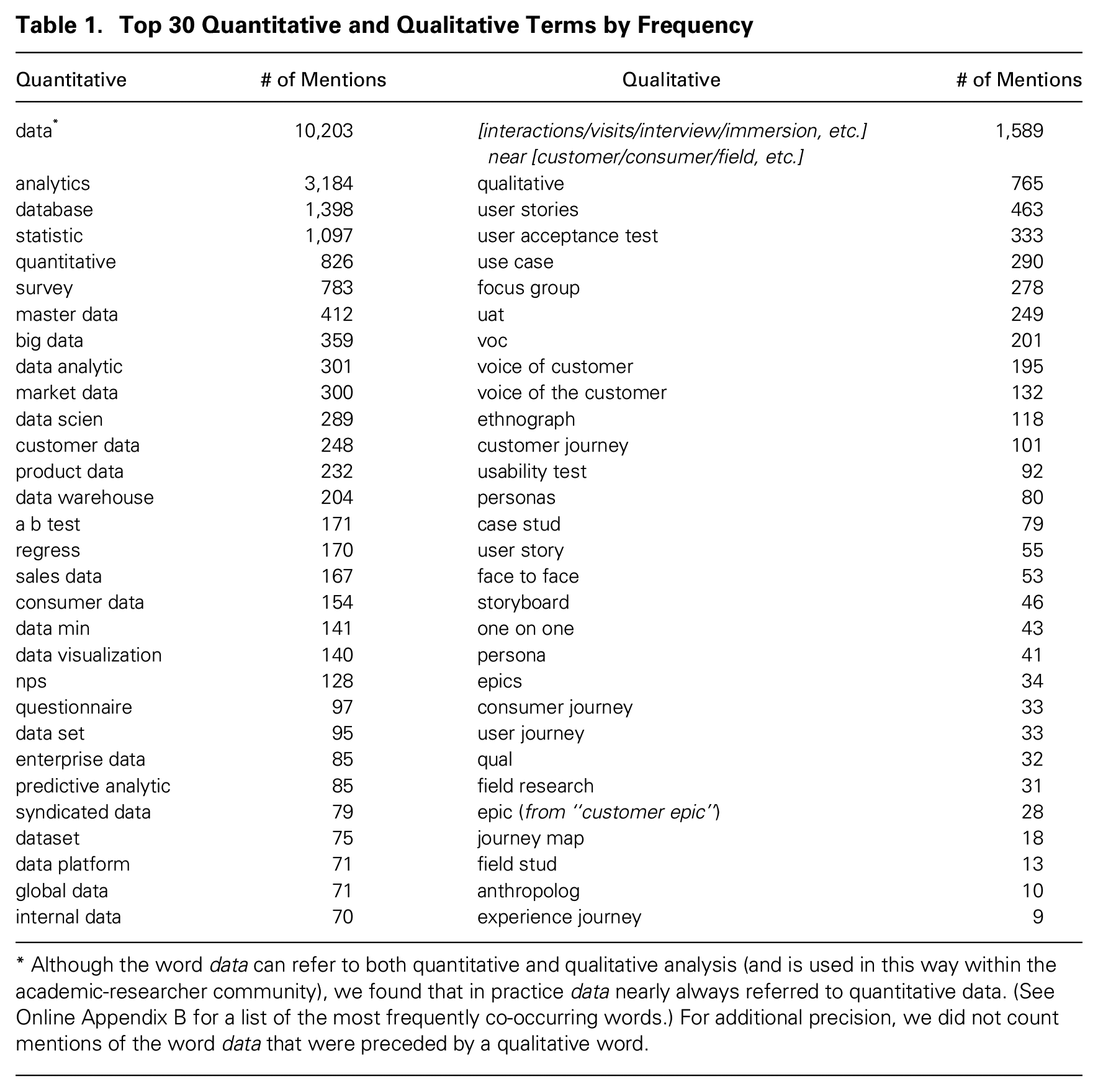

Table 1 lists the quantitative and qualitative terms that appeared most frequently in the dataset. (For a more comprehensive list, see Online Appendix B.) Quantitative terms included words such as analytics, statistics, and data science; qualitative terms included words such as focus groups, customer interviews, and user stories. To ensure that our measure was not highly sensitive to the removal or inclusion of any particular words, in robustness checks we created a bootstrapped variable consisting of the mean of 1,000 draws of each measure, with each draw randomly excluding 25 percent of the quantitative or qualitative terms in our dictionary. The bootstrapped measures yielded highly similar results (see Online Appendix E).
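The dictionary-sensitivity check can be sketched as follows. The code is illustrative rather than the authors' implementation: the function names are hypothetical, matching is by simple substring, and the sketch assumes that each draw drops a rounded 25 percent of terms uniformly at random without replacement.

```python
import random

def share_with_terms(sentences, terms):
    """Share of sentences containing at least one term (simple substring match)."""
    lowered = [s.lower() for s in sentences]
    return sum(any(term in s for term in terms) for s in lowered) / len(lowered)

def bootstrapped_measure(sentences, terms, draws=1000, drop_frac=0.25, seed=0):
    """Mean of the measure over repeated draws that each exclude a random subset of terms."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    term_list = sorted(terms)
    keep = len(term_list) - round(len(term_list) * drop_frac)
    values = [
        share_with_terms(sentences, rng.sample(term_list, keep))
        for _ in range(draws)
    ]
    return sum(values) / draws
```

If the bootstrapped value tracks the full-dictionary measure closely across firm–years, no small set of terms is driving the variation.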

Table 1. Top 30 Quantitative and Qualitative Terms by Frequency

Although the word data can refer to both quantitative and qualitative analysis (and is used in this way within the academic-researcher community), we found that in practice data nearly always referred to quantitative data. (See Online Appendix B for a list of the most frequently co-occurring words.) For additional precision, we did not count mentions of the word data that were preceded by a qualitative word.
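The exclusion rule for the word data can be sketched as follows. It is a sketch under assumptions: "preceded by" is read as "immediately preceded by," and the qualitative word list is a hypothetical stand-in for the dictionary in Online Appendix B.

```python
import re

# Hypothetical qualitative words; the actual dictionary is in Online Appendix B.
QUAL_WORDS = {"qualitative", "interview", "ethnographic"}

def count_quant_data_mentions(sentence, qual_words=QUAL_WORDS):
    """Count mentions of 'data' that are not immediately preceded by a qualitative word."""
    tokens = re.findall(r"[a-z]+", sentence.lower())
    count = 0
    for i, token in enumerate(tokens):
        if token != "data":
            continue
        if i > 0 and tokens[i - 1] in qual_words:
            continue  # e.g., "qualitative data" is not counted as quantitative
        count += 1
    return count
```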

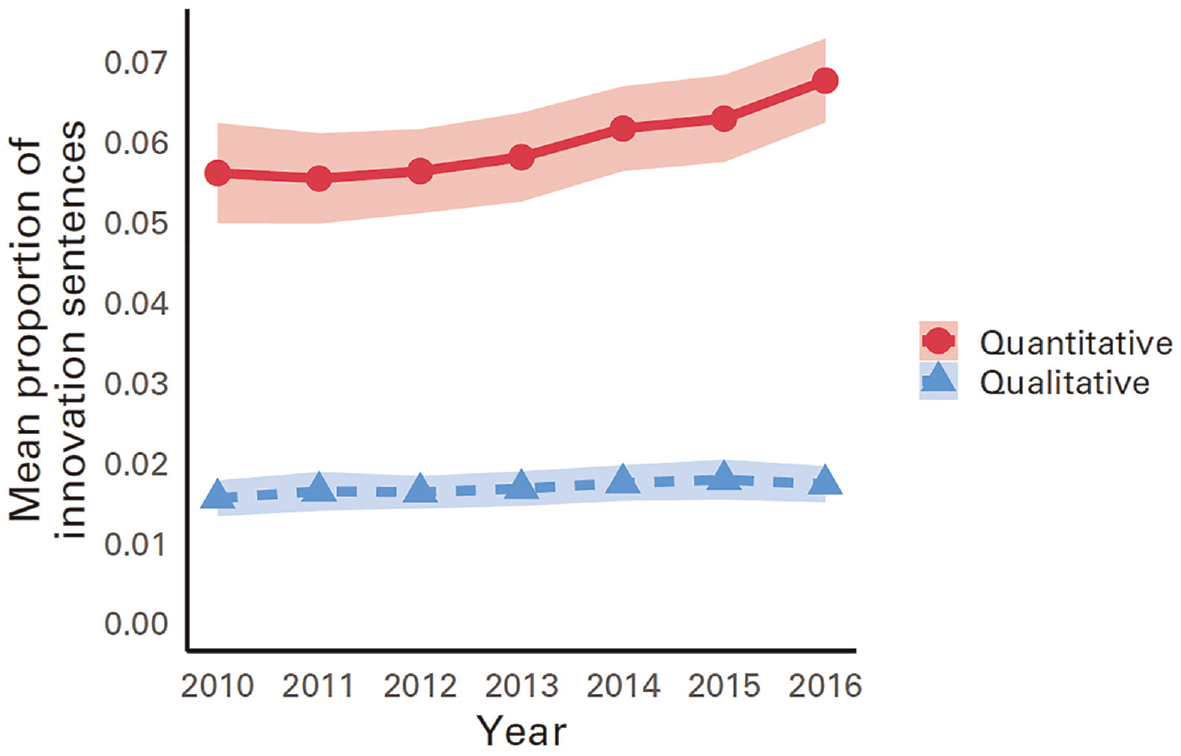

We used an array of analyses to extensively validate these independent-variable measures. First, we confirmed that our quantitative-analysis measure captured variation consistent with the previous literature’s observation of an increase in firms’ adoption of quantitative tools and methods during this time period (Wu, Hitt, and Lou, 2020). As expected, Figure 2 displays a steady increase in the mean use of quantitative analysis in innovation-focused work between 2010 and 2016, with a slight increase for qualitative analysis as well. Note that the use of quantitative analysis was roughly 3.5 times more prevalent than that of qualitative analysis; this pattern provides important context when interpreting the coefficients in the regressions reported in the Results section. Note, too, that Figure 2 displays only an aggregated mean, which could potentially give the impression that at all firms, reliance on quantitative and qualitative analysis increased. But this was not the case: Online Appendix C confirms that there was significant within-firm variation over time, including many instances of firms that significantly decreased or increased the magnitude of their quantitative and qualitative analysis in the time period. The existence of such within-firm variation validates our approach of using firm–year fixed-effect models to test our hypothesis, as described below.

Figure 2. Mean Quantitative and Qualitative Analysis, 2010–2016*

Second, we validated our independent-variable measures by using two external data sources: job-posting descriptions from the data vendor LinkUp and mentions of quantitative terms in earnings calls from Capital IQ. Prior research has shown that job-posting descriptions capture demand for particular tasks and skills in the labor market, such as quantitative or qualitative analysis (Hershbein and Kahn, 2016; Goldfarb, Taska, and Teodoridis, 2020; Lee and Kim, 2024). Although this does not replicate the same construct as our measure based on résumé descriptions, we expect the two data sources to be correlated: Both relate to the underlying use of quantitative and qualitative data in decision making and thus serve as an external validation (see Wu, Hitt, and Lou, 2020). Similarly, we do not expect data on earnings calls to serve as an ideal measure of how much organizational members use, value, and rely on quantitative analysis. But because organizational leaders can have a profound effect on norms and processes, we expect mentions of quantitative analysis in earnings calls to correlate with our résumé measures of the use of quantitative analysis throughout the organization. Both datasets underwent the same process that we used for our résumé data to identify relevant terms and to construct measures of quantitative and qualitative analysis. The measures exhibit strong convergent and discriminant validity and high reliability, with adjusted Cronbach’s alphas of 0.73 for quantitative and 0.78 for qualitative measures. Further details and analysis appear in Online Appendix C.

Third, using résumé text as a data source relies on a key assumption: that the language organizational members use to describe their innovation work appropriately conveys some degree of reliance on quantitative and qualitative analysis in the organization. A possible concern when researchers use résumé data is that employees might selectively compose job descriptions that are aspirational rather than strictly factual. This scenario could be particularly problematic if quantitative or qualitative analysis were mentioned as a ploy to appear attractive to the external labor market. Because this hypothetical could be of concern if mentioning quantitative or qualitative analysis in a specific role predicted turnover, we conducted an individual-level analysis to mitigate this concern. Using a Cox proportional-hazard model on individual-level data, we verified that mentions of quantitative or qualitative analysis from an employee who held a particular position did not predict turnover in that position when we controlled for prior quantitative and qualitative experience (see Online Appendix C). This analysis helps to allay concern about our empirical estimation strategy because the results were robust when we controlled for quantitative and qualitative experience (which we show in robustness checks in Online Appendix E). Given that aspirational résumé text does not appear to bias our results, we assert that the two most plausible reasons that an employee would mention, say, data analytics in a résumé are (1) that the individual actually devoted considerable time and attention to data analytics in the position in question; or (2) that the individual perceived data analytics to be valued within the organization and thus emphasized it. Both reasons, aggregated over hundreds or thousands of employees, likely indicate an organization that relies on quantitative analysis (and similarly for qualitative analysis).

Finally, we conducted analyses to validate our claim that the measure captures an organization-level construct (reliance on analyses embedded in the norms and processes of innovation) that is distinct from human-capital acquisition. If we are genuinely capturing an organization-level construct, we would expect that individuals’ likelihood of mentioning quantitative or qualitative data would reflect reliance on quantitative and qualitative analyses in the organizations they join; this is what we observed (see Online Appendix C for analysis). We further corroborated this idea by showing in our robustness checks (see Online Appendix E) that additional controls for skills and past analysis-specific experience did not alter the results, as expected from measures that primarily capture organizational-level norms and processes.

Control variables

We controlled for several firm–year-level factors that may correlate with the level of innovation activity and commercial success. These controls include the number of new products introduced by a firm in a given year (an indication of innovative activity), total annual sales (an indication of size and resources), the total stock of products (an indication of size and scope), and the number of employee descriptions from the career networking website (an indication of size and representation on the platform that was our data source). We lagged the sales, products, and employee profile measures by one year and took the natural log of each control variable to normalize model residuals due to highly skewed distributions. We discuss a large set of additional controls in the Robustness Checks section below; variable descriptions and results appear in Online Appendix E.

Statistical Estimation

A standard test for complementarity between two of an organization’s activities, represented by two continuous variables, is to include the variables in a regression equation along with an interaction term that multiplies them to predict a performance outcome (Brynjolfsson, Hitt, and Yang, 2002; Brynjolfsson and Milgrom, 2013). When both variables are normalized and mean-centered at 0, a statistically significant positive value on the coefficient of the interaction term is evidence of complementarity, when we assume that the two activities are independent and exogenous (Brynjolfsson and Milgrom, 2013). The interaction term intuitively represents complementarity by indicating that the marginal performance effect of one variable is stronger in the presence of the other variable.
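To make the test concrete, the specification can be written out as below. This is an illustrative sketch rather than the authors' exact equation: the symbols are assumptions introduced here, with product i, firm f, product module m, and year t; X_ft the vector of controls; and firm, product-module, and year fixed effects as described for the main models.

```latex
\ln(\text{New-product sales}_{i}) =
    \beta_1\,\text{Quant}_{ft}
  + \beta_2\,\text{Qual}_{ft}
  + \beta_3\,(\text{Quant}_{ft} \times \text{Qual}_{ft})
  + \gamma^{\top} X_{ft}
  + \alpha_f + \delta_m + \tau_t + \varepsilon_{i}

\frac{\partial \ln(\text{New-product sales}_{i})}{\partial\,\text{Quant}_{ft}}
  = \beta_1 + \beta_3\,\text{Qual}_{ft}
```

Because both analysis variables are mean-centered, β1 is the marginal effect of quantitative analysis at the mean level of qualitative analysis. A positive β3 means that this marginal effect rises with qualitative analysis (and symmetrically for qualitative analysis), which is the sense in which the interaction coefficient tests complementarity.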

That the choice of the level of each type of analysis is independent and exogenous is a strong assumption. We can, however, take steps to bolster our confidence in this assumption. In addition to the raw correlations presented below, we tested for independence by performing supplemental tests of the relationship between the quantitative and qualitative analysis measures (see Online Appendix D, Table D2). The tests confirm that when we controlled for firm and year fixed effects, there is no statistically significant relationship between the two variables, indicating an acceptable level of independence for the purposes of our models.

Regarding exogeneity, the ideal experimental design would be to randomly assign varying degrees of quantitative and qualitative analysis to organizations. Such a design is not feasible; nor was a valid shock or instrumental variable available in our data. Thus, although we cannot claim strict exogeneity or causal identification, we take several additional steps to establish our theory and its boundary conditions empirically. First, our models include an array of fixed effects, including firm fixed effects. Therefore, time-invariant interfirm heterogeneity (such as one firm exhibiting a higher level of risk aversion than others do) will be less likely to bias the results via the intensity of quantitative and qualitative analysis. 13 Second, we conducted an array of robustness checks with additional controls and specifications, such as controls for R&D spending and inverse propensity-score weighting to mitigate potential selection effects (described in the Results section and Online Appendix E). Finally, we validated the theoretical underpinnings of our findings by conducting additional mechanism analyses (such as examining hits, failures, and product novelty) and by supplementing our quantitative results with corroborating insights from informant interviews.

Throughout the study, we used fixed-effects OLS models for continuous outcome variables (e.g., New-product sales), conditional logistic models for binary outcomes (e.g., Hit_i), and conditional fixed-effect Poisson models for count outcomes (e.g., counts of Hits for firm-level analyses) (Wooldridge, 2010). Following prior work, we used nonparametric models to visualize the complementarity of the performance effects (Brynjolfsson, Hitt, and Yang, 2002; Brynjolfsson and Milgrom, 2013).

Results

Summary Statistics

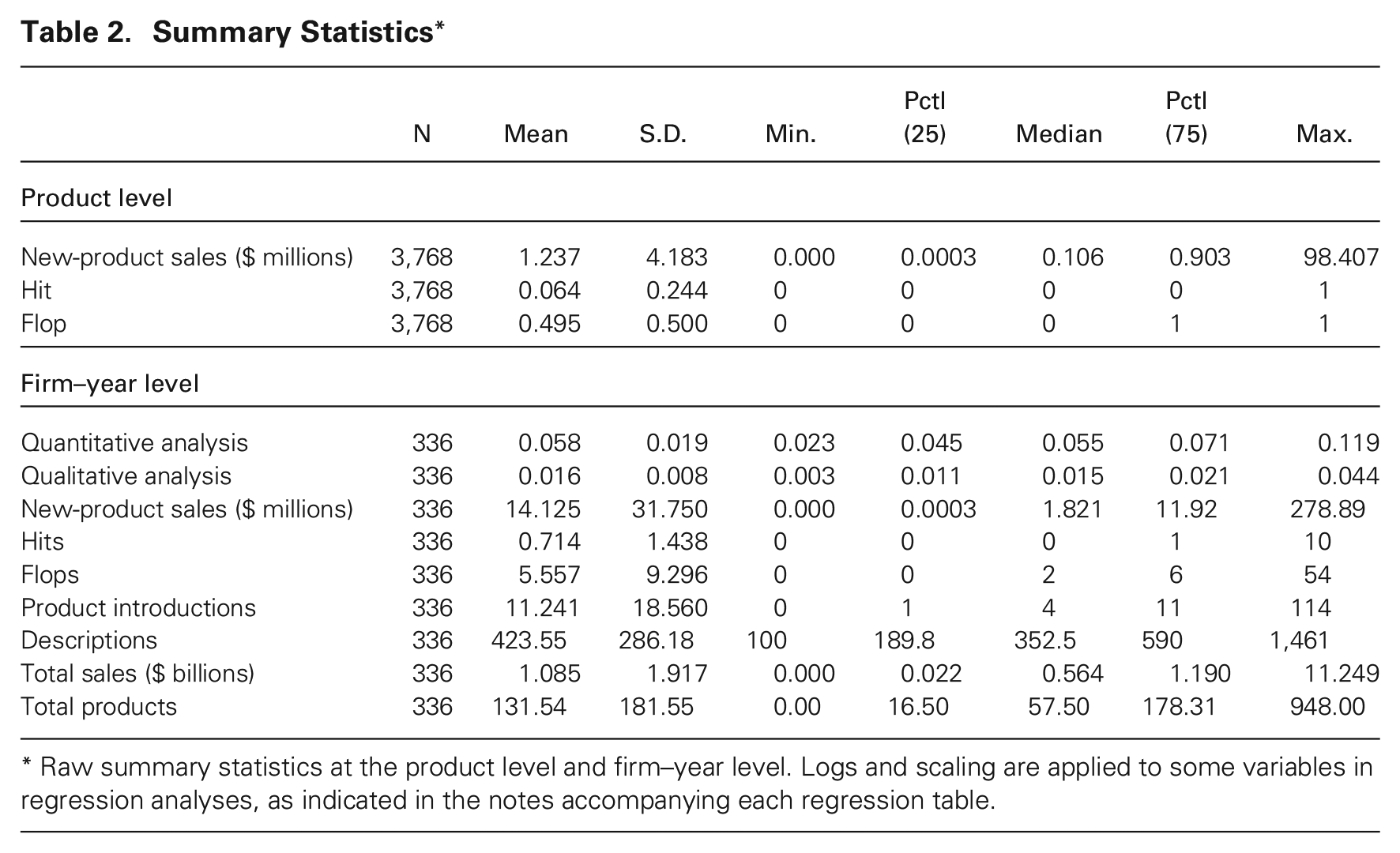

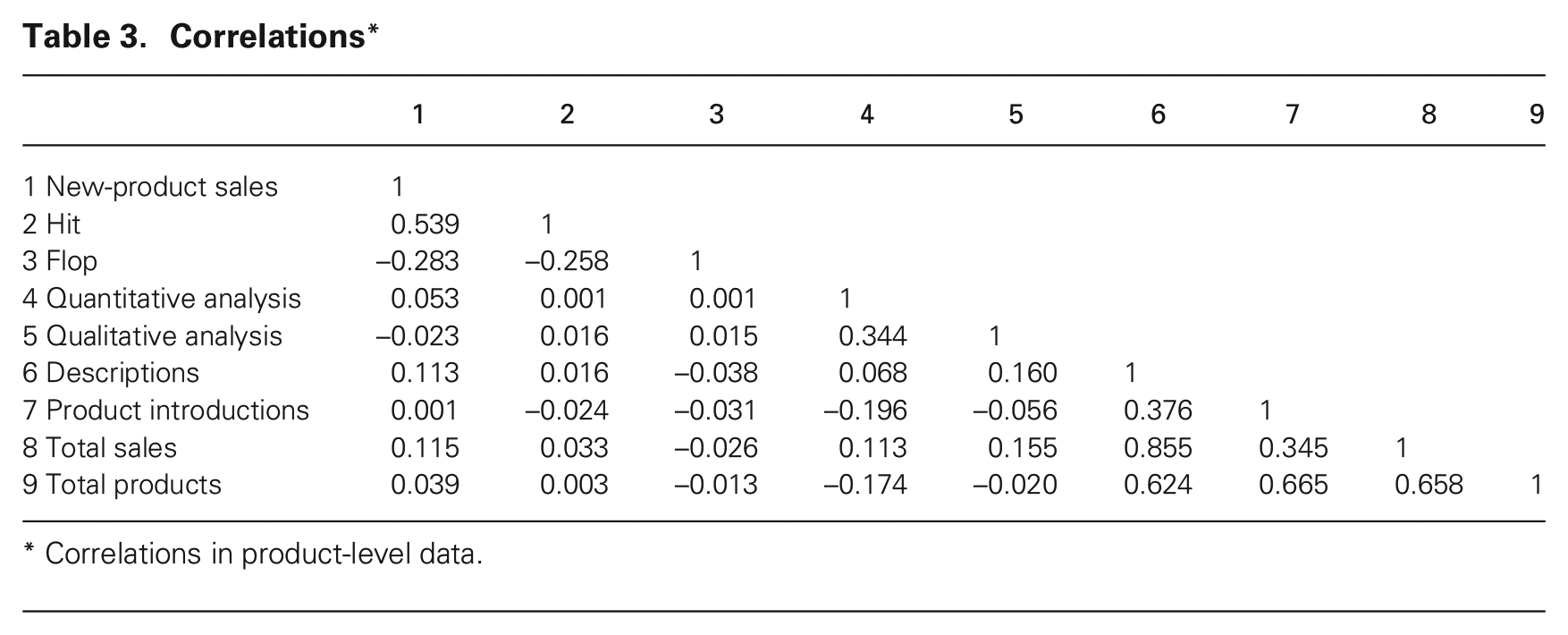

Table 2 presents both product-level and firm–year-level variable summary statistics. The New-product sales variable confirms the skewed nature of commercial performance in the CPG industry: Its mean was about $1.2 million, with a median of only $0.1 million and a maximum of $98 million. By definition, about 5 percent of products in our final sample were hits, and 50 percent were failures. 14 Across firms, the mean percentages of innovation-related sentences containing a quantitative or a qualitative word were 5.8 percent and 1.6 percent, respectively. In a given year, a firm could expect about $14 million in new-product sales as recorded in the NielsenIQ data, 0.7 hits, and 5.6 flops. Table 3 presents a correlation matrix.

Table 2. Summary Statistics*

Raw summary statistics at the product level and firm–year level. Logs and scaling are applied to some variables in regression analyses, as indicated in the notes accompanying each regression table.

Table 3. Correlations*

Correlations in product-level data.

For additional summary statistics, including individual-level statistics, within- vs. between-individual variation in quantitative and qualitative methods, and raw performance summary statistics comparing high vs. low quantitative and qualitative analysis, see Online Appendix D. These statistics are consistent with our theory of complementarity.

Main Results

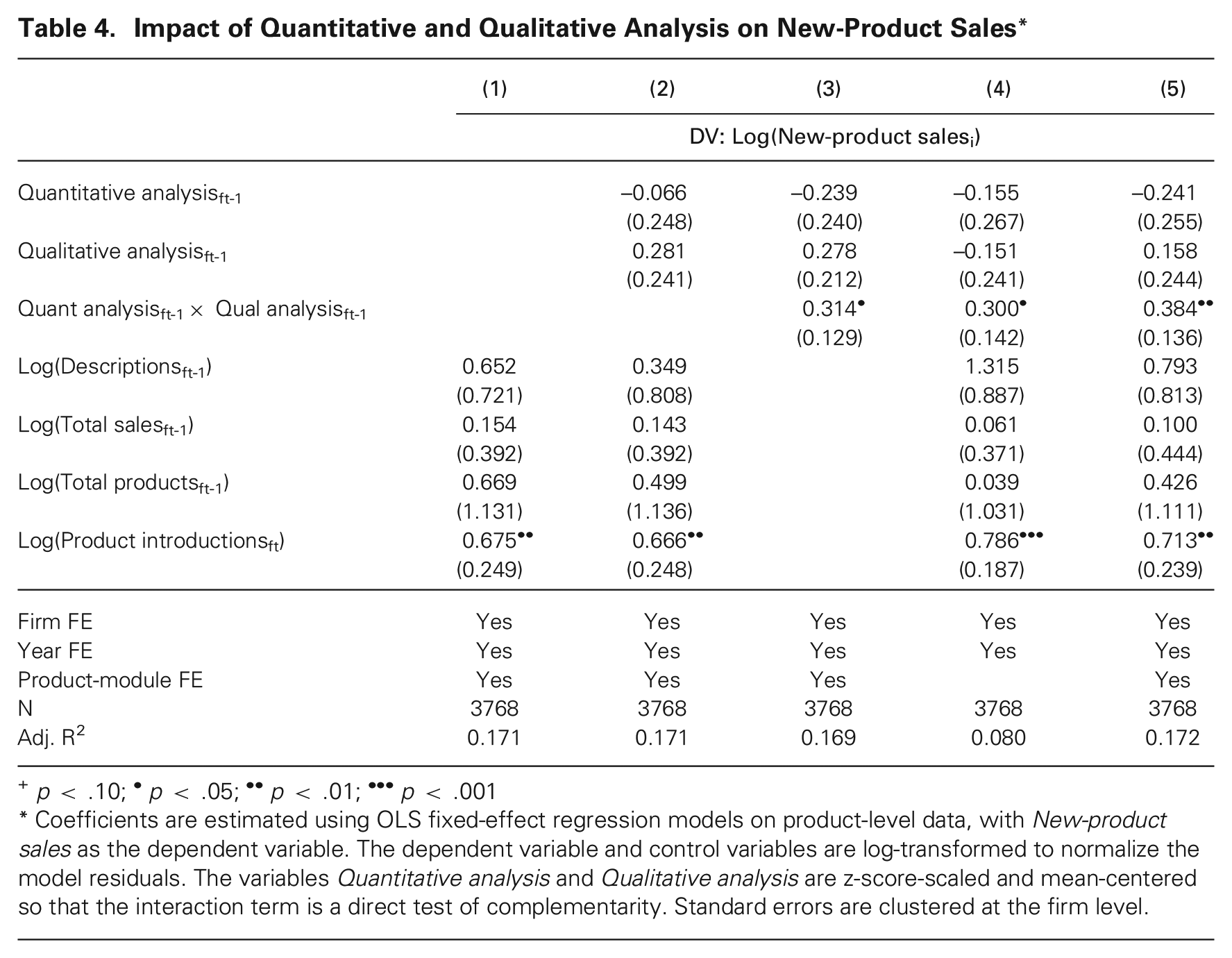

Table 4 presents coefficient estimates from regressions testing our main hypothesis, using product-level data. This analysis includes firm, product-module, and year fixed effects, with New-product sales as the dependent variable. Column 1 includes only control variables. Column 2 adds the variables Quantitative analysis and Qualitative analysis, indicating no statistically significant relationship with New-product sales for either variable individually. This is unsurprising, as our theory suggests that using either type of analysis alone invokes countervailing forces, leading to an ambiguous average effect on commercial success.

Table 4. Impact of Quantitative and Qualitative Analysis on New-Product Sales*

p < .10; •p < .05; ••p < .01; •••p < .001

Coefficients are estimated using OLS fixed-effect regression models on product-level data, with New-product sales as the dependent variable. The dependent variable and control variables are log-transformed to normalize the model residuals. The variables Quantitative analysis and Qualitative analysis are z-score-scaled and mean-centered so that the interaction term is a direct test of complementarity. Standard errors are clustered at the firm level.

Columns 3–5 test the hypothesis by adding the interaction term Quantitative analysis×Qualitative analysis. Column 3 reports the test without any controls, Column 4 reports it with no product-module fixed effects, and Column 5 reports the full model with all controls and fixed effects. As predicted, all three models support our hypothesis of a positive complementary effect for quantitative and qualitative analysis on new-product innovation success. On average, a new product launched by a firm with a one-standard-deviation increase in Quantitative analysis is associated with a 47 percent (

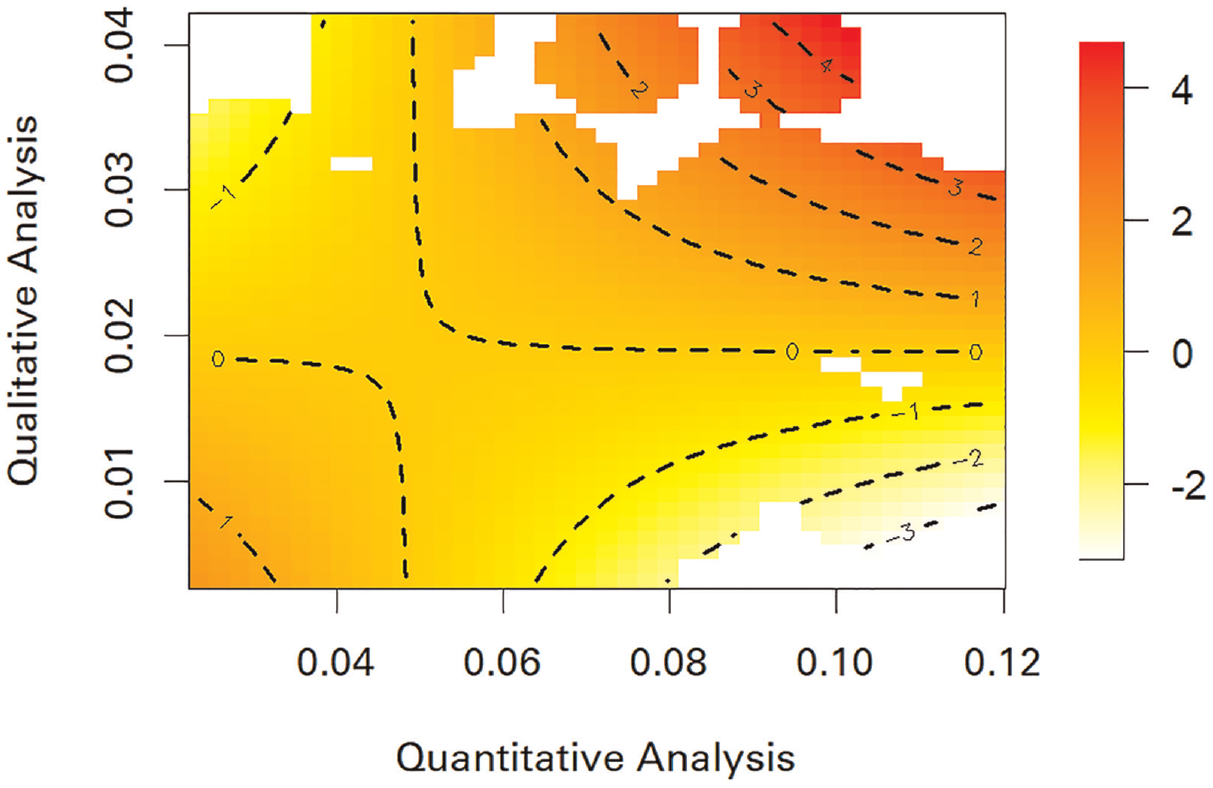

To visualize this complementary relationship between quantitative and qualitative analysis with respect to new-product sales, we used nonparametric estimation (see Brynjolfsson, Hitt, and Yang, 2002; Brynjolfsson and Milgrom, 2013). 15

Figure 3 displays the resulting visualization. The color gradient and contour lines on the figure represent the smoothed predicted values of the interaction term’s effect on the logarithm of New-product sales. Darker orange heatmap colors and higher numbers on the contour lines indicate higher predicted sales, while lighter yellow heatmap colors and lower numbers on the contour lines indicate lower predicted sales. Regions in which the model extrapolates beyond the reliable data range are marked in white. Positive and negative numbers on the contour lines reflect values above and below the mean, respectively (specifically,

Figure 3. Visualization of the Quantitative × Qualitative Complementary Relationship on New-Product Sales*

Visually, the figure confirms the hypothesized complementary relationship: The predicted marginal effect of quantitative analysis on new-product sales is increasingly positive as the level of qualitative analysis increases and vice versa. High levels of both types of analysis outperform high levels of either analysis alone and low levels of both. Here, it bears reiterating that the baseline levels of quantitative and qualitative analysis are quite different (see Table 2, Figure 2, and Figure 3). Therefore, our results do not imply that equally high levels of quantitative and qualitative analysis increase commercial success but, rather, that relatively high levels of each do.

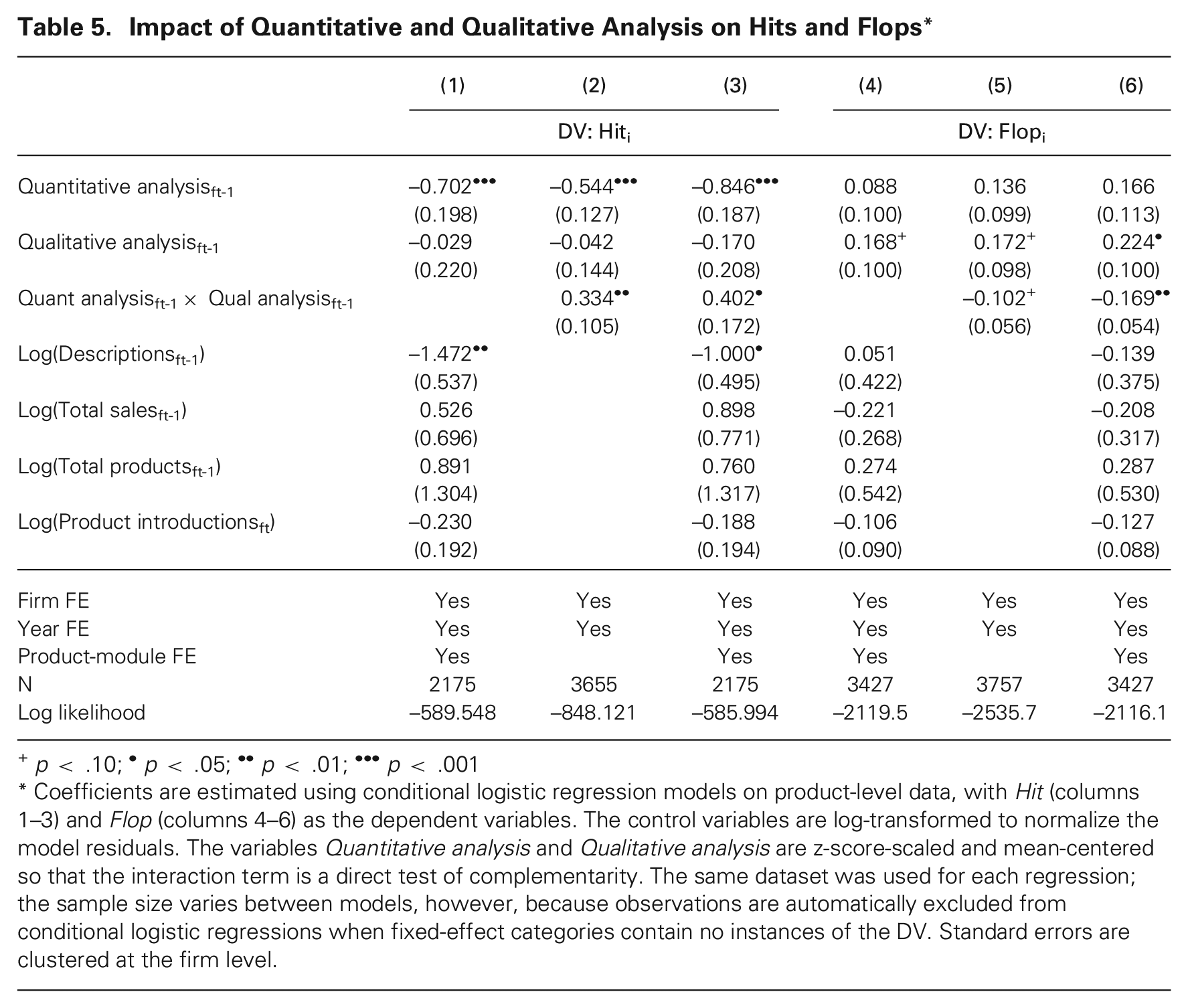

To further validate the theoretical underpinnings of our findings, Table 5 reports two additional outcomes: the probability of a launched product being a hit or a flop. Columns 1–3 use Hit as the dependent variable. In accordance with our theory, the models indicate that increases in Quantitative analysis are significantly related to a decreased probability of hits. Although the Quantitative analysis term is strongly negative, the full model in Column 3 still shows complementarity in the methods for predicting hits: The interaction Quantitative analysis×Qualitative analysis is associated with a 49 percent (

Table 5. Impact of Quantitative and Qualitative Analysis on Hits and Flops*

p < .10; •p < .05; ••p < .01; •••p < .001

Coefficients are estimated using conditional logistic regression models on product-level data, with Hit (columns 1–3) and Flop (columns 4–6) as the dependent variables. The control variables are log-transformed to normalize the model residuals. The variables Quantitative analysis and Qualitative analysis are z-score-scaled and mean-centered so that the interaction term is a direct test of complementarity. The same dataset was used for each regression; the sample size varies between models, however, because observations are automatically excluded from conditional logistic regressions when fixed-effect categories contain no instances of the DV. Standard errors are clustered at the firm level.

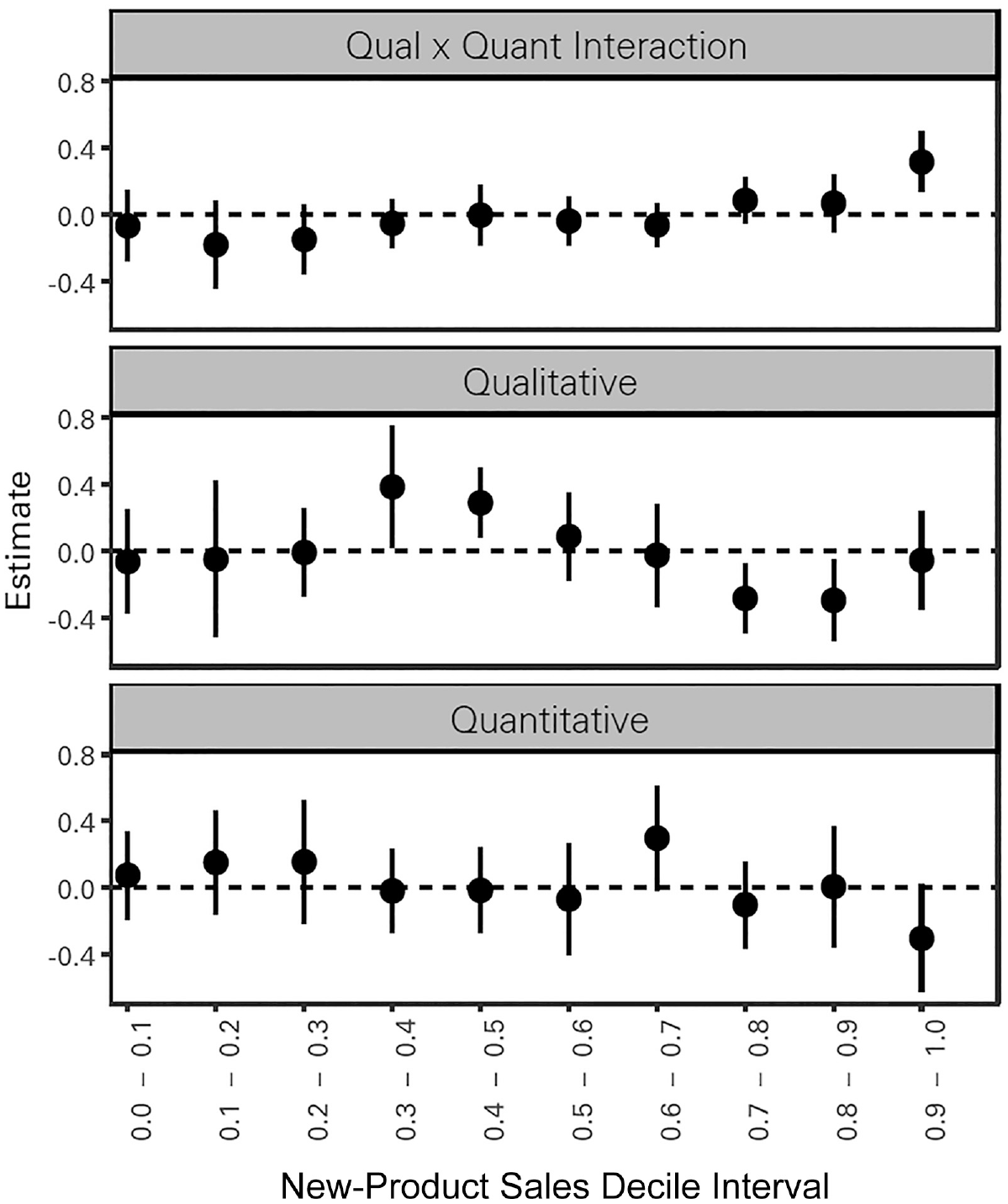

To visualize these effects on a wider range of outcomes, Figure 4 plots the coefficient estimates and 95 percent confidence intervals for quantitative analysis, qualitative analysis, and their interaction term for each decile of New-product sales. To create this plot, we re-estimated the regression model from Table 5, column 3, using a separate outcome variable for each decile of new-product sales. The plot displays the resulting coefficient estimates for each decile-outcome regression. The figure corroborates our theory and the evidence presented in Table 5: The interaction term Quantitative analysis×Qualitative analysis is significantly associated with the highest decile of new-product sales and shows a generally increasing trend up the decile range. Qualitative analysis is associated with significantly more flops (0.3–0.5 decile range of new-product sales) and fewer high-to-moderately successful products (0.7–0.9) but is no less likely to produce hits. Quantitative analysis is associated with significantly more moderately successful products (0.6–0.7) but fewer hit products (0.9–1.0). These patterns are consistent with our theory.

Figure 4. Coefficient Estimates and Confidence Intervals for New-Product Sales Deciles*

Robustness Checks

We conducted several analyses to further examine the robustness of the main results. Online Appendix E reports these results and provides more details on how additional variables were constructed. We include some highlights in this section.

First, we confirmed that in accordance with our theory, the results were driven by the use of quantitative and qualitative analysis during the variation and selection stages of innovation rather than during the implementation stage. We constructed alternative variants of our measure that counted only sentences containing at least one word each from a variation dictionary (e.g., generate, ideate), a selection dictionary (e.g., develop, test, evaluate), and an implementation dictionary (e.g., advertise, pricing). We found the complementarity result to be driven mainly by the interaction between quantitative and qualitative selection.

Second, to allay concerns about potential omitted variables, we added a broad array of additional firm-level control variables derived from Compustat, Glassdoor, and our résumé data: (1) General firm performance, based on market value and return on assets; (2) Innovation focus, based on R&D expenditures and capital expenditures; (3) Skills and human capital, based on employees’ quantitative and qualitative skills, quantitative and qualitative experience, and formal education; (4) Composition of occupations, based on job titles; and (5) Organizational positivity and diversity, based on Glassdoor ratings and firm-level compositions of gender, seniority, and race.

Third, we demonstrate that the results are robust across several alternative measures of quantitative and qualitative analysis. We also ensured these measures were not overly sensitive to the inclusion or exclusion of specific words, and we Winsorized all variables to mitigate the impact of outliers.

Finally, we attempted to further mitigate potential selection effects by using Inverse Probability Weighting with Covariate Balancing Propensity Scores (Imai and Ratkovic, 2014). For further details on all these analyses and the results, see Online Appendix E.

Firm-Level Analysis

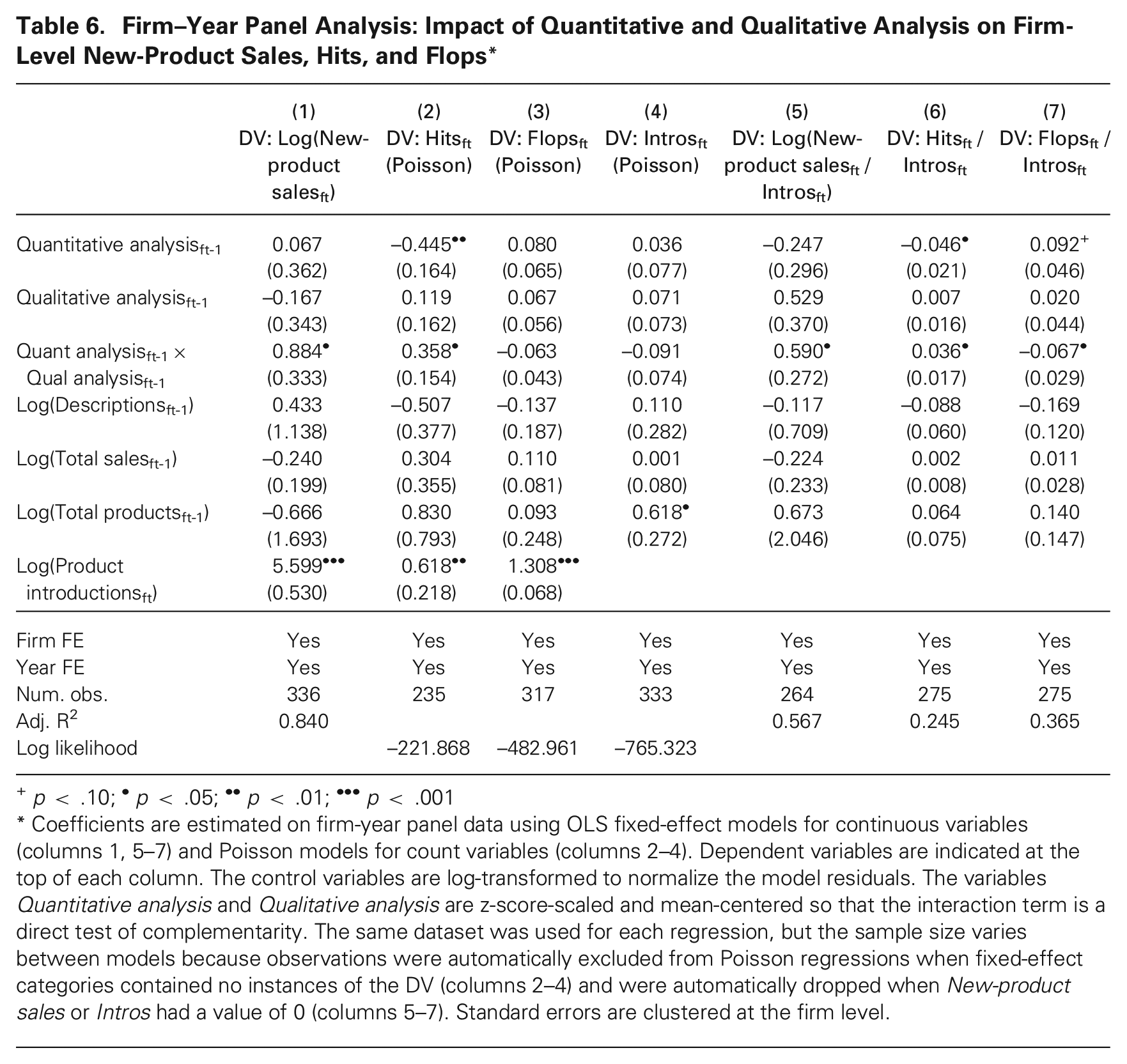

In addition to the product-level analysis described above, we present results from analyses of a firm–year panel dataset. The product-level analysis estimated the commercial success of individual products, conditional on the product being launched; the firm-level analysis captures a more expansive view—that of firms’ overall innovation performance over time. Table 6 displays these firm-level results, which largely confirm the results presented in prior tables and figures.

Table 6. Firm–Year Panel Analysis: Impact of Quantitative and Qualitative Analysis on Firm-Level New-Product Sales, Hits, and Flops*

p < .10; •p < .05; ••p < .01; •••p < .001

Coefficients are estimated on firm-year panel data using OLS fixed-effect models for continuous variables (columns 1, 5–7) and Poisson models for count variables (columns 2–4). Dependent variables are indicated at the top of each column. The control variables are log-transformed to normalize the model residuals. The variables Quantitative analysis and Qualitative analysis are z-score-scaled and mean-centered so that the interaction term is a direct test of complementarity. The same dataset was used for each regression, but the sample size varies between models because observations were automatically excluded from Poisson regressions when fixed-effect categories contained no instances of the DV (columns 2–4) and were automatically dropped when New-product sales or Intros had a value of 0 (columns 5–7). Standard errors are clustered at the firm level.

Table 6, column 1, confirms a positive coefficient on Quant analysis × Qual analysis (p < 0.05); the individual effects of quantitative and qualitative analysis are smaller in magnitude and not significant. Table 6, columns 2 and 3 report results from Poisson models of the impact of quantitative and qualitative analysis (and their interaction) on the number of hits and failures at each firm each year. In column 2, Quant analysis × Qual analysis is associated with more hits (p < 0.05); Quantitative analysis individually is associated with fewer hits. Column 3 shows no significant relationship with the overall number of flops, though the coefficients are directionally consistent with the product-level analysis previously presented in Table 5.

Table 6, column 4, with Product introductions as the dependent variable, confirms that the results are not driven merely by the fact that firms that use more quantitative and qualitative analysis launch more products. Columns 5–7 also support this interpretation, showing that the rate of new-product sales, hits, and flops is consistent with prior results: The interaction term was significantly positive for average new-product sales per number of products introduced and for the rate of hit products, and it was significantly negative for the rate of flops.

Product Novelty

We further validate the theoretical foundations of our findings by examining the relationship between methodological pluralism and product novelty. We defined the novelty of each product as the proportion of its NielsenIQ product attributes that were never before seen within its product module (Argente, Lee, and Moreira, 2018; Granja and Moreira, 2023). To increase the measure’s comparability across products in different NielsenIQ product modules (because only products within a module share the same attribute categories and values), the variable was calculated as the percentile of novelty within each product category (Allen, 2024). Because product-attribute data were not available for all products, we were able to calculate this measure only for a subset of products (for details on creating the novelty measure, see Online Appendix A).
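The two steps of the novelty measure (the share of never-before-seen attributes, then a within-group percentile) can be sketched as follows. This is an illustrative sketch, not the authors' procedure: the field names are hypothetical, products are processed in launch order with no special handling of same-period launches, and the first product observed in a module is scored as fully novel because the sketch has no pre-sample history.

```python
def novelty_scores(products):
    """Share of each product's attributes never before seen in its product module.

    products: list of dicts with hypothetical keys 'id', 'module', 'launch'
    (sortable) and 'attributes' (iterable of (category, value) pairs).
    Products without attribute data are skipped.
    """
    seen_by_module = {}
    scores = {}
    for product in sorted(products, key=lambda p: p["launch"]):
        seen = seen_by_module.setdefault(product["module"], set())
        attributes = set(product["attributes"])
        if attributes:
            scores[product["id"]] = len(attributes - seen) / len(attributes)
        seen |= attributes  # later products are compared against these
    return scores

def percentile_within(scores, group_of):
    """Percentile rank of each score among products in the same group."""
    percentiles = {}
    for product_id, score in scores.items():
        peers = [v for q, v in scores.items() if group_of[q] == group_of[product_id]]
        percentiles[product_id] = sum(v <= score for v in peers) / len(peers)
    return percentiles
```

Converting raw shares to percentiles puts products on a common scale even when their modules carry different numbers and kinds of attributes.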

In an analysis included in Online Appendix F, we confirm that as expected in our theory, qualitative analysis is, indeed, associated with an increase in the mean novelty of products launched. We also find that as expected, the novelty of products launched serves as a partial explanation for the relationship observed between methodological pluralism and the commercial success of new-product innovations.

Inter- vs. Intra-Personal Methodological Pluralism

Given the existence of individual-level measures of the use of quantitative and qualitative methodologies in organizations, it is possible to examine how much the results are driven by inter- vs. intra-personal methodological pluralism. That is, are organizations that have more purely quantitative and purely qualitative employees more commercially successful than those that contain more mixed-method employees? We explore this question as an extension of our main results in Online Appendix F.

As outlined in Online Appendix F, we find that the main Quant × Qual result is not affected by controlling for the degree of intra-personal pluralism. We also find evidence of an inverted U-shaped relationship in which some degree of intra-personal methodological pluralism (i.e., multi-method individuals) may enhance organizations’ innovation performance but too much may be detrimental. In other words, neither a workforce of purely specialized individuals nor one composed entirely of mixed-method individuals is optimal. Therefore, both inter- and intra-personal mechanisms appear to be at play. These findings are consistent with our qualitative observations, described in the next section, which indicate that the organization-level result is shaped by individual evaluation as well as organizational resource-allocation processes.
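
An inverted U-shaped relationship of this kind is commonly represented with a linear and a squared term, where a negative coefficient on the squared term implies an interior peak. The sketch below is a generic illustration under that assumption, not the specification in Online Appendix F; the coefficient values and function names are hypothetical.

```python
def turning_point(b_linear: float, b_squared: float) -> float:
    """Level of x at which b_linear * x + b_squared * x**2 peaks."""
    if b_squared >= 0:
        raise ValueError("an inverted U requires a negative squared-term coefficient")
    return -b_linear / (2.0 * b_squared)


def predicted(x: float, b_linear: float, b_squared: float, intercept: float = 0.0) -> float:
    """Predicted outcome from the quadratic specification."""
    return intercept + b_linear * x + b_squared * x * x


# Hypothetical coefficients; x is the share of mixed-method employees (0 to 1).
B_LINEAR, B_SQUARED = 0.8, -1.0
peak = turning_point(B_LINEAR, B_SQUARED)

for share in (0.0, peak, 1.0):
    print(f"mixed-method share={share:.2f}  predicted={predicted(share, B_LINEAR, B_SQUARED):.3f}")
```

With these assumed values the predicted outcome is highest at an intermediate share of mixed-method employees and lower at both extremes, which is the shape the inverted U describes.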

Supplementary Insights from Interview Data with Product and R&D Managers

To supplement our archival analysis, we also draw on our 36 interviews with industry informants to illustrate the organizational processes underlying our theory. In interviews, we sought to understand the mechanisms by which the use of quantitative and qualitative analyses in organizations impacts their innovation outcomes. Several insights emerged from these interviews.

One insight was that organizational members readily identified the presence of data-driven norms and processes in their organizations. One manager (#2) said, “We consider ourselves to be a very data-driven company. I know that moniker has been taken up by many, many companies of late, but we feel like we have always been a data-driven company—and that is evident in all of our systems and processes.”

Organizations’ top leaders seemed to play an important role in shaping these processes and norms regarding reliance on quantitative and qualitative analysis (see Schein, 2010). Many leaders in our sample tended to favor quantitative over qualitative. One marketing manager (#20) described how difficult it was to present qualitative analysis to some leaders: “It’s really hard to stand up in front of the senior management and they’re like, ‘Why do you think X?—And you don’t have [quantitative] data, right?’ . . . A lot of leaders call bs [on qualitative evidence], like, ‘You got one guy telling you he doesn’t like the color orange, and then you changed your mind?’” The marketing manager added, “I know all the leaders in the building who don’t really believe, who don’t like focus groups or whatever.”

Leaders also appeared to take cues from broader external trends, like the data analytics fad—an observation from informants that was consistent with our archival analysis (the aggregate trend in Figure 2). For instance, one innovation executive (#21) described how senior leaders mediated the influence of external fads: I think that there is this faith in big data right now, that it’s hip and cool and fun to talk about at the country club, and CEOs say to themselves, “Well, what are you doing?” “Oh, I just started an analytics team.” . . . So I think some of it may also just be a trend. . . . It’s just—it’s cool.

Despite the prevailing quantitative focus, some organizations endorsed a more pluralistic approach. A senior R&D executive (#1) described an intentional transition led by the leaders at her organization from a strictly quantitative focus to a more holistic, multi-method approach: So, before, the company was very unidirectional: did [the quantitative tests] give you good volume? Yes? Let’s go—without even questioning whether the model is right, anything. . . . But we started to look into how we can use the body of evidence.

Another theme was that organizational norms and processes regarding quantitative and qualitative analysis appeared to meaningfully influence the direction of innovation. At the organizational level, expectations for analyses influenced which products were generated and selected. One product manager (#11) recalled, “The ubiquity of [quantitative] data genuinely changed the way in which decisions were made—if there was [quantitative] data to back a decision, it was often approved. . . . Otherwise, it was often dismissed.” Less-measurable projects were described by one product manager (#10) as an “uphill climb”; product managers would not champion them without being thoroughly convinced and having the “political capital” to push forward (see Dyer, Furr, and Hendron, 2020). One senior manager (#4) recounted that a former colleague had had difficulty rallying support for his projects when he moved to an organization that heavily favored quantitative results: “[He] had a really hard time selling the organization on supporting him because he’s not coming back with that rigor. . . . He came from . . . a place that didn’t operate the same way.” One data scientist (#34) said he would “actively discourage a junior PM doing a [less-measurable project]” due to career risk. This phenomenon may have been particularly acute for high-potential innovations, which tended to be less amenable to analysis using broad quantitative trends. In one R&D manager’s words (#3), That was the insanity of [quantitatively] data-driven decision making at [our company]: because decision makers need [quantitative] data, data that have been validated, because we have done the same test for years . . . [but] if I do the things that are already in the data, someone else will be able to catch up. That’s my dilemma.

Informants reported that in such settings, organizational members deferred to the quantitative tests rather than attempting to dig deeper to figure out which products truly had the highest potential. Referencing a missed opportunity in a new-product space, one product manager lamented (#6), “The worst kind of decision making . . . is when you say that we’re going to stick to our core revenue metrics for this evaluation; if it doesn’t move those [metrics], then we don’t care.” The senior executive (#1) reported that her organization had placed “excessive” value on the output of a handful of quantitative consumer tests: “It was kind of a ‘check the box.’ . . . People stopped digging deep into the richness of all the data we collected and just sort of checked the box and said, ‘We got a win on this one.’”

In organizations that relied on different types of analyses, informants also reported that combining methods improved their individual-level evaluations of high-potential products. Qualitative information helped some managers recognize value in opportunities that might otherwise have been overlooked (#17): Sometimes you get these little nuggets that you could never get in [quantitative] data, and you can use the qualitative to “see” your quantitative . . . just these little things that you would never really get, or you might ignore, because they’d be “standardized out” [in quantitative data]. Like when you’re looking at the [quantitative] data, they don’t pop. And then you can take those nuggets into either more quantitative or qualitative testing.

One R&D manager observed (#9), “The [quantitative] data can tell us what is happening, but the moment you start getting into the why it is happening, that’s where the qualitative insights play a big role. . . . For us, it’s the combination of the two which really gives us the breakthrough.”

Triangulation of methods was characterized as valuable for appropriately assessing innovations with high commercial potential. According to the R&D manager (#9), when “inventing something totally new,” the product might require “dealing with a consumer habit that we had to create and help [the consumer] understand.” Because most quantitative market tests do not capture this “habit formation” (#9), they may produce misleading findings about high-potential products that require it. But rather than ignoring the potentially problematic quantitative tests, a senior R&D manager (#1) spoke of using the quantitative information while still being “grounded in the qualitative”—interpreting all the information available rather than merely deferring to the quantitative test.

Taken together, these observations were generally consistent with our theory and archival results. Methodological pluralism shapes innovation by helping organizational members identify products that may not be amenable to purely quantitative analysis (the default in many organizations), and it motivates them to champion high-potential products that are better suited to a multi-method approach.

Discussion

Examining how methodological pluralism impacts an organization’s likelihood of developing commercially successful new products, we conducted tests on a unique archival dataset of new-product innovations launched by large CPG firms. The tests confirmed our hypothesis that while heavy reliance on either quantitative or qualitative analysis individually would have ambiguous impacts on new-product sales due to the inherent strengths and weaknesses of each method, together they would have a complementary positive effect. We also confirmed the theoretical underpinnings of our results, finding quantitative analysis to be associated with more numerous moderate successes but with fewer hits and qualitative analysis to be associated with more flops and more novelty. Finally, we found that novelty had a positive impact on commercial success and partially explained the relationship between methodological pluralism and commercial success. Collectively, these findings suggest that methodological pluralism helps organizations produce more hits and more novel products than if they heavily used quantitative analysis without substantial qualitative analysis, and it leads to fewer flops than if they heavily used qualitative analysis without substantial quantitative analysis. Overall, using more of both quantitative and qualitative analysis together leads to more new-product sales (both overall and per product).

Our theory and findings contribute new theoretical insight to three streams of research: organizational theories of innovation, theories of data-driven decision making in strategy and IT, and research on the link between elements of organizational culture and strategic performance.

Implications for Organizational Theories of Innovation

Prior research on organizational theories of innovation has warned against being too data-driven when organizations pursue novel, radical, or breakthrough innovations (Christensen and Bower, 1996; Benner and Tushman, 2002; Deniz, 2020; Felin et al., 2020). The argument is that organizations that use more quantitative analysis tend to allocate resources to innovation initiatives that are easier to measure and thus relatively incremental or less impactful.

We extend this work by clarifying that the proposed negative impact of data-driven decisions may occur only when quantitative analysis is used without substantial levels of qualitative analysis. With low qualitative analysis, quantitative analysis aligns with prior research expectations, leading to more moderate successes but fewer significant hits. However, when quantitative analysis is paired with high levels of qualitative analysis, organizations produce not only more successful products on average but also a high number and high rate of impactful hits. By distinguishing between the types of data analysis that organizations use, we show that the magnitude of reliance on quantitative analysis may be less relevant to innovation success than is the pluralism of the methods employed. Thus, our results suggest that organizations seeking commercial hits may benefit more from increasing the variety of analyses they use than from diminishing their use of quantitative analysis.

More broadly, these results may be used to extend established research on the productivity dilemma and the exploration vs. exploitation tradeoff, wherein increased reliance on short-term productivity decreases longer-term innovation (Abernathy, 1978; Baldwin and Clark, 1994; Christensen and Bower, 1996; Benner and Tushman, 2003, 2015). Whereas several studies have suggested how organizational structure (Gibson and Birkinshaw, 2004; O’Reilly and Tushman, 2008; Markides, 2013) or other methods like innovation portfolio management (Klingebiel and Rammer, 2014; Brasil et al., 2021) might help to transcend this tradeoff, our results suggest that creating pluralistic methodological environments could also offer a solution, particularly in the context of new-product innovation.

Implications for Theories of Strategy and IT

Our theory and findings also have potential to inform a broader set of theories about data-driven decision making in organizations. Research at the intersection of strategy and IT, for example, offers evidence of a broad-based empirical pattern: that organization-level adoption of quantitative analysis increases overall sales and productivity (Brynjolfsson and McElheran, 2016, 2019; Wu, Lou, and Hitt, 2019; Koning, Hasan, and Chatterji, 2022). We add to this literature by identifying the types of data analysis used as an underappreciated factor contributing to variation in firm performance.

Prior research in this vein has further posited that adoption of data-driven decision making contributes most beneficially to certain types of innovations that are amenable to quantitative analysis (e.g., process-oriented or incremental innovation) (Wu, Hitt, and Lou, 2020) and less helpfully to novel innovations. Our study is broadly consistent with this thesis but offers an important modification: The impact of data analytics depends not just on the nature of the innovation (e.g., novel or incremental) but also on the types of data analysis (quantitative and qualitative). In fact, organizations are most successful and produce the most hits (even when producing novel products) when the two types of analysis are heavily used together.