Abstract

Research on group creativity has concentrated on explaining how the group context influences idea generation and has conceptualized the evaluation of creative ideas as a process of convergent decision making that takes place after ideas are generated to improve the quality of the group’s creative output. We challenge this view by exploring the situated nature of evaluations that occur throughout the creative process. We present an inductive qualitative process analysis of four U.S. healthcare policy groups tasked with producing creative output in the form of policy recommendations to a federal agency. Results show four modes of group interaction, each with a distinct form of evaluation: brainstorming without evaluation, sequential interactions in which one idea was generated and evaluated, parallel interactions in which several ideas were generated and evaluated, and iterative interactions in which the group evaluated several ideas in reference to the group’s goals. Two of the groups in our study followed an evaluation-centered sequence that began with evaluating a small set of ideas. Surprisingly, doing so did not impede the groups’ creativity. To explain this, we develop an alternative conceptualization of evaluation as a generative process that shapes and guides collective creativity.


Collectively developing the creative products that are at the heart of organizational innovation requires that small, diverse groups are able to both draw on members’ perspectives and expertise to generate novel and potentially useful (i.e., creative) ideas and evaluate their most creative ideas as worthy of pursuit (Amabile, 1988; Woodman, Sawyer, and Griffin, 1993; Nemeth, 1997), yet groups struggle with both of these tasks. In general, groups generate fewer and less creative ideas than do individuals working alone (McGrath, 1984; Diehl and Stroebe, 1987, 1991; Paulus and Nijstad, 2003) and judge relatively average ideas to be the most creative (Rietzschel, Nijstad, and Stroebe, 2006). How can groups collectively engage in the creative process to overcome these challenges?

Until now, research has concentrated on ways to improve idea generation in groups to answer this question. This approach assumes that the collective creative process mirrors that of individual creativity: recursive stages of idea generation followed by evaluation (Drazin, Glynn, and Kazanjian, 1999; Jackson and Poole, 2003; cf. Osborn, 1953; Amabile, 1988). Collective creativity occurs when group members stimulate one another’s divergent thinking and their individual ideas are aggregated into the group’s creative output (Nemeth, 1986; Paulus and Yang, 2000; George, 2007; Sacramento, Dawson, and West, 2008). Idea evaluation is a later-stage convergent decision-making process that filters out poor ideas (Paletz and Schunn, 2010; Singh and Fleming, 2010). This yields a useful dichotomy between idea generation and evaluation that has allowed researchers to isolate and examine each stage individually, holding the process constant. Explanations for collective creativity are then based on how the group’s cognitions (e.g., Paulus and Yang, 2000; Miura and Hida, 2004; Nijstad and Stroebe, 2006), dynamics (e.g., Watson, Kumar, and Michaelsen, 1993; Gilson and Shalley, 2004; Hirst, van Knippenberg, and Zhou, 2009), and environments (e.g., Taggar, 2002) affect the creative process.

The problem is that this approach neglects the evaluative processes that are situated in the ongoing interactions of creative groups. Studies of collectives engaged in creative tasks are replete with examples of such evaluations. Members of creative collectives consciously or subconsciously choose to ignore ideas, advocate for their own ideas, show enthusiasm for others’ ideas, and provide interpersonal rewards for good ideas (Murnighan and Conlon, 1991; Sutton and Hargadon, 1996; Elsbach and Kramer, 2003; Jackson and Poole, 2003; Hargadon and Bechky, 2006; Long-Lingo and O’Mahony, 2010). Those processes reflect the iterative and integrated nature of idea evaluation that has been recognized at the individual (e.g., Lubart, 2001; Runco, 2003; Cropley, 2006) and organizational (e.g., Drazin, Glynn, and Kazanjian, 1999; Hage, 1999; Hargadon, 2002) levels. Those processes are not well integrated into group creativity research, however, because that research focuses on the set of ideas a group selects during the final stage of convergent decision making rather than on the process through which those decisions evolve. Given the importance of flexibility and adaptability for creativity (Amabile, 1996; Pentland, 2003), the conclusion that creativity is facilitated by a single, structured process of generation followed by evaluation is counterintuitive. The idea-generation perspective may therefore be an idealization that overlooks the variety of situated evaluations that are integral to how collective creativity occurs.

Examining situated evaluations of creative ideas can provide a deeper theoretical understanding of how creativity functions at the group level because the process of evaluating ideas interacts with cognitions about ideas and the broader context to produce judgments (Collins, 2005; Elsbach, Barr, and Hargadon, 2005). A group’s ability to select a final set of creative ideas therefore cannot be isolated from the process of forming its evaluations. We suggest that examining evaluations situated within the creative process can provide three insights into group creativity. First, situated evaluations are likely to influence a group’s problem framework because the process of evaluating ideas shapes the evaluation criteria that people attend to (Hsee, 1996). At the individual level, the process of forming criteria into a problem framework tends to improve creativity (Getzels and Csikszentmihalyi, 1976); however, part of the value of group work is the diversity of perspectives members bring to the problem (Kurtzberg and Amabile, 2000; Paulus and Yang, 2000). Examining the situated evaluations through which a group’s problem framework develops is important for understanding how groups navigate this tension.

Second, articulating the differences between forms of situated evaluations can help to explain why a group’s decision-making skills do not appear to extend to creative tasks. Recent research suggests that although groups can be effective decision makers (Laughlin, 1988), they tend not to recognize their most creative ideas (Rietzschel, Nijstad, and Stroebe, 2006, 2010; Putman and Paulus, 2009). These are different kinds of decisions, but by equating creative idea evaluation with other kinds of group decision making, previous research has focused on how groups identify high-quality ideas at the expense of other outcomes, such as how groups identify their most novel ideas. Group members are likely to respond negatively to novel ideas at first (Mueller, Melwani, and Goncalo, 2012), so creative ideas may initially be ignored but move back into the consideration set over time. Examining situated evaluations throughout the process is necessary to explain how novel ideas are evaluated and retained by the group in its final creative output.

Third, in order for groups to build on and integrate ideas, members must converge around some ideas as worthy of further pursuit during idea generation (Cropley, 2006; Kohn, Paulus, and Choi, 2011; Harvey, 2013). Less is known about these forms of idea generation (Kurtzberg and Amabile, 2000), however, because the processes recommended for improving divergent generation minimize group interaction and thereby limit members’ opportunities to decide which ideas to build on and integrate. For example, mediating group discussions with technology or interspersing them with independent work reduces the cognitive and social challenges of generating ideas in a group setting and so improves divergent idea generation (Osborn, 1953; Gallupe, Bastianutti, and Cooper, 1991; Paulus and Yang, 2000). But these interventions are less likely to promote the kinds of unexpected connections we hope groups will make, because without interaction, how can members decide which ideas to build on or integrate? Examining evaluations that occur within the creative process can provide insight into those processes.

To address these issues, we explored the role of evaluations situated throughout the collective creative process by conducting an inductive process analysis of four public healthcare policy groups over a five-month period. Our study builds on individual and organizational creativity research to offer an alternative conceptualization of evaluation as a process that enriches idea generation by guiding and shaping collective creativity.

Evaluating Creative Ideas in Groups

Creative idea evaluation in groups has been defined as a convergent decision-making process through which groups select ideas. In contrast, idea generation is a divergent process in which a variety of ideas are generated and then explored (Guilford, 1950; Collins and Loftus, 1975; Finke, Ward, and Smith, 1992) as unexpected ideas or perspectives stimulate new associations in group members (Nemeth, 1986; Paulus and Yang, 2000). Idea generation is expected to produce novel ideas, whereas idea evaluation is expected to improve the quality of ideas (Paletz and Schunn, 2010).

Studies of individuals and larger creative collectives suggest that evaluation may fulfill a more varied role in the creative process than this characterization portrays. Precisely how different roles of evaluation unfold to influence group creativity is unclear, however, because different literatures predict different effects. We consider the evidence on how evaluation functions as a group decision-making activity, a source of feedback, and a problem framework to lay the foundation for our exploration of evaluations situated in the creative process of the group.

Evaluation as Convergent Decision Making

For groups to select ideas, their generated ideas must be winnowed down until a smaller set of the best ideas remain (Larey and Paulus, 1999; Rietzschel, Nijstad, and Stroebe, 2006; Staw, 2009; Putman and Paulus, 2009; Paletz and Schunn, 2010). This process entails validating ideas against task criteria (Amabile, 1996) to choose options that may be implemented. Convergent thinking underlies this process because it involves narrowing alternatives toward a correct or best answer (Guilford, 1950; Cropley, 2006). Evaluation therefore improves the usefulness, appropriateness, or quality of a group’s creative ideas (Paletz and Schunn, 2010; Singh and Fleming, 2010).

Because evaluation involves winnowing down the idea set, it may entail negative feedback about some ideas that can create anxiety (Mullen, Johnson, and Salas, 1991) and limit cognitive flexibility (Isen, 1999) and willingness to share ideas (Amabile, Goldfarb, and Brackfield, 1990; Camacho and Paulus, 1995). Groups are therefore advised not to evaluate ideas during idea generation (Osborn, 1953; Litchfield, 2008). Interventions such as sequentially writing ideas down or inputting them into an electronic system facilitate this separation (Van de Ven and Delbecq, 1971; Gallupe, Bastianutti, and Cooper, 1991; Cooper et al., 1998). Evaluation therefore occurs at the end of the creative process, once a large set of ideas is available to choose from (Staw, 2009), and proceeds by comparing a set of generated ideas with one another.

Groups are expected to have an advantage in convergent decision making because they have a large quantity of information and diverse resources for identifying mistakes (Shaw, 1932; Hastie, 1986; Laughlin, 1988). Groups should therefore be effective at evaluating creative ideas (Singh and Fleming, 2010). Decision-making research further suggests that comparing ideas with one another can improve the quality of decisions (Hsee et al., 1999) by making it easier to judge attributes that are otherwise difficult to assess (Hsee, 1996).

The limited research that directly examines the evaluation of creative ideas in groups, however, suggests that despite following this process, interacting groups do not outperform nominal groups at selecting ideas (Faure, 2004), and they generally fail to identify the most creative of the ideas that they generate (Rietzschel, Nijstad, and Stroebe, 2006, 2010; Putman and Paulus, 2009). Instead, some evidence indicates that evaluating creative ideas improves when groups move away from this decision-making process. For example, when a group is asked to select a set of ideas, so that each member’s preferred idea can be included in the set, the selected ideas are more original (Putman and Paulus, 2009; Rietzschel, Nijstad, and Stroebe, 2010). Similarly, priming group members to act individualistically, so that they advocate strongly for their own ideas, improves the selection of creative ideas (Goncalo and Staw, 2006). Alternatively, novel ideas may be more positively valued when groups evaluate them throughout the creative process. Doing so provides opportunities to draw on others’ expertise to develop ideas and is more likely to generate commitment to one another’s ideas (Obstfeld, 2005; Fleming, Mingo, and Chen, 2007; Singh and Fleming, 2010). The best process for evaluating creative ideas therefore remains an open question.

Evaluation as Feedback

Though apprehension over how others will evaluate one’s ideas may impair idea generation, evaluation is also a source of disagreement and debate that can stimulate divergent thinking (Nemeth, 1986; Nemeth et al., 2004). Consistent with this, research has shown that developmental feedback can improve creativity (Shalley, 1995; Shalley, Zhou, and Oldham, 2004; De Stobbeleir, Ashford, and Buyens, 2011). Evaluation provides the information necessary to build on, elaborate, or refine ideas (Runco, 1994).

Indirectly, receiving feedback can also facilitate attention to and engagement with one’s own ideas (Kanfer and Heggestad, 1997; Quinn, 2005), while providing feedback can help evaluators engage with others’ ideas (Langer, 1989). For example, the process of evaluating writers’ pitches has been shown to increase Hollywood producers’ interest in ideas (Elsbach and Kramer, 2003). Similarly, Uzzi and Spiro (2005) emphasized that difficult editing choices were central to picking the right material for the development of Broadway musicals. These examples highlight that evaluation also entails positively valuing ideas (Runco, 1994). Feedback therefore need not create a negative interpersonal environment. For example, Long-Lingo and O’Mahony (2010) found that feedback helped country music writers and producers to maintain positive interpersonal relationships; what mattered was how that feedback was delivered. Research therefore needs to consider how groups draw on developmental feedback without interfering with idea generation.

Evaluation as Problem Framework

The problem framework contains the assumptions, values, and rules underlying group members’ understandings of a task (Gioia, 1986; Walsh, 1995). Inherent in the problem framework, therefore, are the criteria for evaluating ideas (Mumford, Whetzel, and Reiter-Palmon, 1997). Exposure to different problem frameworks stimulates new ways of thinking (Paulus and Yang, 2000; Milliken, Bartel, and Kurtzberg, 2003; Perry-Smith, 2006), prompts the search for novel alternatives (Wiersema and Bantel, 1992; Ford, 1996), and improves the evaluation of ideas (Paletz and Schunn, 2010; Singh and Fleming, 2010).

At the same time, however, a shared problem framework gives structure and meaning to a collective’s otherwise dispersed knowledge (Weick, Sutcliffe, and Obstfeld, 2005), directs communication (Cronin and Weingart, 2007), and enables members to use one another’s information and ideas for collective idea generation (Reiter-Palmon, Herman, and Yammarino, 2008). It therefore guides the search for solutions (Getzels and Csikszentmihalyi, 1976; Mumford, Baughman, and Sager, 2003; Cropley, 2006). In addition, when members share a novel and appropriate problem framework, it can improve the group’s creativity (cf. Coskun et al., 2000; Gilson and Shalley, 2004; Baruah and Paulus, 2011). For example, having similar evaluations of the art of the day led the Batignolles group of French impressionist artists in the mid-nineteenth century to make riskier, more creative artistic choices (Farrell, 1982). Shared knowledge of underlying content allows members of improvisation groups to recognize the value of their collaborators’ ideas (Weick, 1998; Vera and Crossan, 2005). Leavitt (1996) noted that common professional standards were the foundation for interaction in academic “hot groups.” How groups draw on their diverse perspectives while communicating effectively within the creative process is therefore a further unresolved issue.

The literature thus suggests that evaluations that occur throughout the creative process may affect both idea generation and the identification and retention of creative ideas, yet how these processes develop and interact within a group is unclear. The present paper aims to systematically explore idea evaluation in the creative processes of organizational groups to shed light on this issue. In particular, we ask: What is the role of evaluation in the group’s creative process, and how does it influence the nature of collective creativity?

Methods

We used an inductive, qualitative process analysis to develop an explanation of creative idea evaluation grounded in organizational groups (Glaser and Strauss, 1967; Van de Ven and Poole, 1995; Langley, 1999). We studied the creation of healthcare information technology policy in four cross-functional and cross-organizational groups from each group’s first meeting to its first deliverable. Throughout the process, we identified when ideas were generated, the point at which idea evaluation occurred, and the nature of decisions about ideas that resulted.

Research Setting

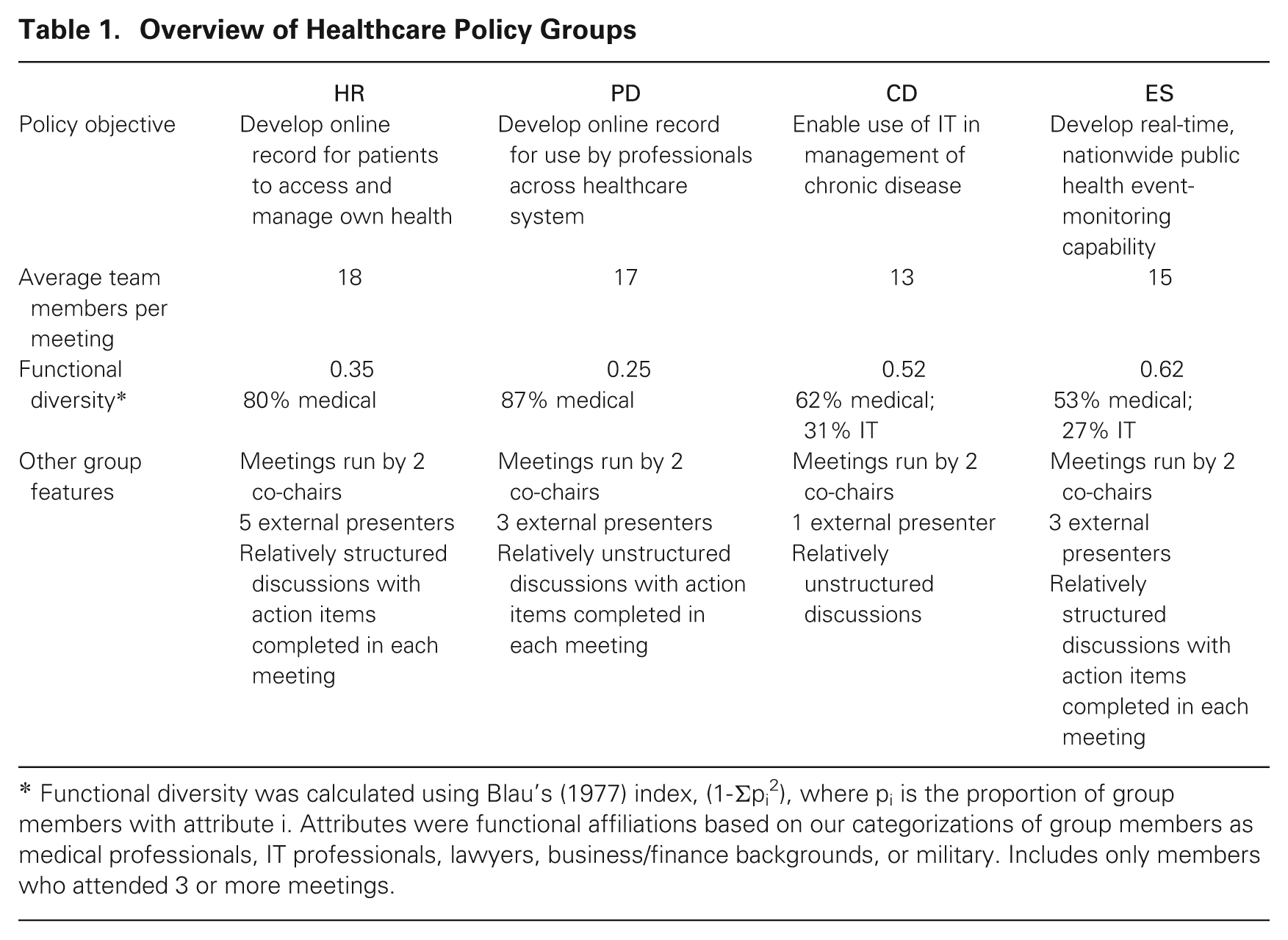

This study takes place in the context of the American Health Information Community (AHIC), a federal advisory committee that established four groups charged with developing policy on the use of electronic information technology (IT) in healthcare. These groups held monthly public meetings to develop recommendations for the U.S. Department of Health and Human Services on four interrelated issues: enabling the use of IT in the treatment of chronic diseases; facilitating the use of patient data in emergency situations; establishing a system for patients to access and manage their own personal health records; and consolidating patient data across points of healthcare delivery. We label the groups CD, ES, HR, and PD, respectively.

An overview of the groups is provided in table 1. Experts from public healthcare (e.g., doctors, nurses, academics), private healthcare (e.g., insurance companies, medical services start-ups), information technology (e.g., executives from IT companies), and government agencies (e.g., the Veteran’s Association, the Treasury) were appointed to the groups. For example, members included a recognized telemedicine expert who had worked as a doctor for over 25 years, a hospital president, and the chairman of the board of an international technology company. Some members had prior professional relationships. The groups had formal co-chairs who primarily acted as facilitators and liaisons with the secretary of the department, to whom the groups reported. The secretary occasionally joined meetings to thank the groups or discuss their goals. Otherwise, the groups were largely self-managing.

Overview of Healthcare Policy Groups

Functional diversity was calculated using Blau’s (1977) index, $1 - \sum_i p_i^2$, where $p_i$ is the proportion of group members with attribute $i$. Attributes were functional affiliations based on our categorizations of group members as medical professionals, IT professionals, lawyers, business/finance backgrounds, or military. Includes only members who attended 3 or more meetings.
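The diversity measure described in the table note can be computed in a few lines. This is a minimal sketch, assuming each member is recorded as a hypothetical (affiliation, meetings attended) pair; the function name `blau_index` and the roster are illustrative and are not data from the study. Members below the attendance threshold are dropped before the proportions $p_i$ are taken, mirroring the note.

```python
from collections import Counter


def blau_index(members, min_meetings=3):
    """Blau's (1977) index, 1 - sum_i p_i**2, over functional affiliations.

    `members` is a list of (affiliation, meetings_attended) pairs. Only members
    who attended at least `min_meetings` meetings are counted.
    """
    eligible = [aff for aff, attended in members if attended >= min_meetings]
    if not eligible:
        raise ValueError("no members meet the attendance threshold")
    n = len(eligible)
    counts = Counter(eligible)
    # p_i is the share of eligible members in affiliation category i.
    return 1.0 - sum((c / n) ** 2 for c in counts.values())


# Hypothetical roster (invented): the lawyer attended only two meetings and is
# excluded, leaving 4 medical, 3 IT, 2 business/finance, and 1 military member.
roster = [
    ("medical", 5), ("medical", 4), ("medical", 5), ("medical", 3),
    ("IT", 5), ("IT", 4), ("IT", 3),
    ("business/finance", 5), ("business/finance", 4),
    ("military", 5),
    ("lawyer", 2),
]
print(round(blau_index(roster), 2))
```

The index is 0 for a group drawn from a single function and approaches $1 - 1/k$ as members spread evenly over $k$ categories, so with the five categories used here the maximum possible value is 0.8.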

We examined the first five meetings of each group over a five-month period, after which the groups’ first deliverables were due. The secretary to whom the groups reported described the first deliverable as an “important transition” from “the thinking phase . . . to the very specific action phase.” Thus the need for creativity was concentrated in the period of our study. Meetings lasted from one hour to over four hours (two hours and 32 minutes, on average). As part of the AHIC, the groups were based in Washington, DC, but meetings typically involved some members who were co-located and interacting face to face and others who joined from different locations via teleconference and web conference. Like an increasing number of groups, those in the present study therefore often operated virtually (e.g., Gibson and Gibbs, 2006).

The tasks facing the groups required a significant amount of creativity because they involved developing policy in response to emerging technologies. Problems such as who should be able to access genomic testing information or how to generate data that are standardized and anonymous yet useful are novel, ambiguous, and open-ended, and therefore require creativity (Dillon, 1982). Group members had to engage in creative behaviors such as generating novel ideas (Amabile, 1988) about how technology could be used to deliver healthcare and how it would evolve over time, reframing the group task around key issues (Getzels and Csikszentmihalyi, 1976), and solving problems in response to unexpected or ambiguous regulatory constraints (Weisberg, 1988). Moreover, group members were likely to search for novel responses because they framed the task as one requiring creativity (Gilson and Shalley, 2004). For example, the facilitator at one meeting of CD emphasized the need for creativity:

One of the things that I’m taking away from this conversation is that we need to find ways to push the envelope a little bit . . . we need to really think through as creatively as possible, and I do mean creatively, any idea is a good idea on this one in terms of brainstorming, how we might be able to push that envelope.

The setting provides several other advantages that make it ideal for studying groups’ creative processes. First, the full transcripts of all group meetings were available, and group members interacted within the scope of the group’s task primarily in these meetings. This is because, as federal advisory committees, all groups’ meetings had to be public and transparent, making it difficult to coordinate additional meetings. Second, the issues were personally and professionally important to group members, who demonstrated strong motivation to achieve the group’s goals. Perhaps because of this high level of commitment, as well as the fact that members met only once per month, often virtually, we detected relatively little interpersonal conflict in the groups. The setting is therefore somewhat unusual, but it is this distinctiveness that enables us to trace how ideas evolved over time by bringing to the surface the group interactions through which creative output developed (Bamberger and Pratt, 2010).

Data Collection and Sources

The primary data for the study were collected from verbatim transcripts, supported by audio recordings, of 20 group meetings that were publicly available from the AHIC. These comprise over 50 hours of group interaction. Meeting data were supplemented by archival material such as agendas, presentation documents, and the formal recommendations that were submitted to the AHIC.

Analytic Strategy

To address our question about the role of evaluation in the groups’ creative process, we began by focusing on group interactions over a single idea, then placed these interactions in the context of meetings, and finally the group process over time. We tracked ideas and their evaluation within group discussions and groups’ immediate responses.

Stage I: Identifying group interactions during the creative process

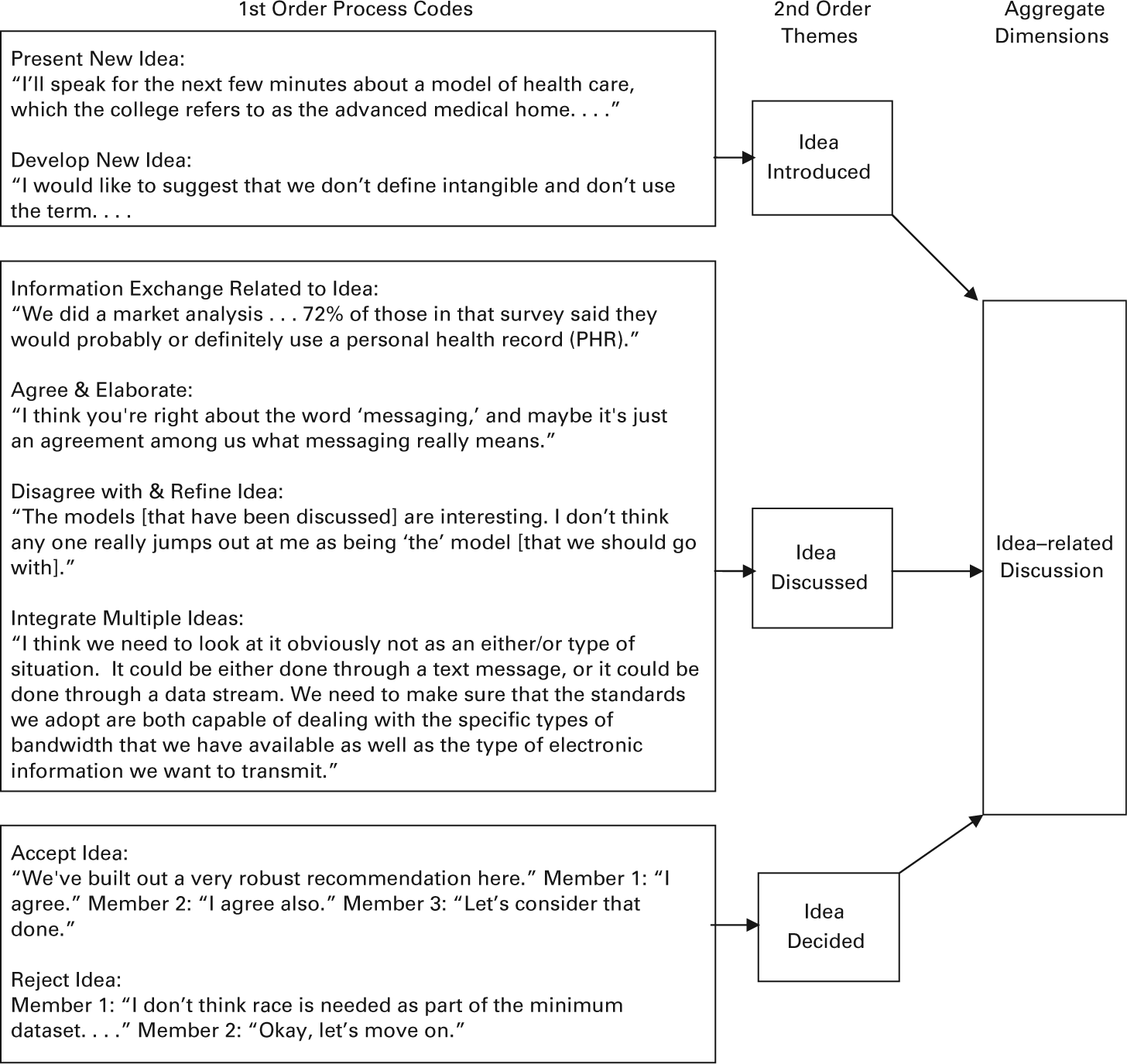

Our initial approach was to develop a coding scheme, grounded in the data, that described the activities making up group interactions over creative ideas. We used ideas as focal events (Abbott, 1990) and attempted to track the activities related to ideas in each meeting. Figure 1 illustrates one aggregate dimension that emerged from the data and its development.

Illustration of the data structure.

Both authors initially read through the entire set of transcripts for one group to become familiar with the content and flow of group discussion. The first author then open-coded statements with process codes (Strauss and Corbin, 1990) to describe activities occurring in the groups. For example, the statement “I just have to say that I disagree with this decision vehemently” (ES group member) was coded as disagree with and refine idea. Next, we shifted from comprehensively describing the data to formulating more meaningful interpretations from which to develop our theory (Glaser and Strauss, 1967; Van Maanen, 1979). To do this, we used constant comparison techniques (Miles and Huberman, 1984) to assemble the first-order codes into more abstract, second-order themes through axial coding (Van Maanen, 1979). For example, disagree with and refine idea was similar to integrate multiple ideas in that both related to the discussion of ideas. Finally, the themes were gathered into aggregate dimensions (see figure 1).
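The three-level structure produced by this coding (first-order codes nested under second-order themes, nested under aggregate dimensions) can be represented as a nested mapping and inverted so that any first-order code rolls up to its theme and dimension. This is a minimal sketch, assuming a hypothetical codebook fragment that contains only the two example codes named in the text; the dimension and theme labels are paraphrases, and the full scheme appears in figure 1.

```python
# Hypothetical codebook fragment: aggregate dimension -> second-order theme ->
# first-order codes. Labels paraphrase the text; this is not the full scheme.
CODEBOOK = {
    "progress of ideas": {
        "discussion of ideas": [
            "disagree with and refine idea",
            "integrate multiple ideas",
        ],
    },
}


def build_index(codebook):
    """Invert the nested codebook so each first-order code maps to its
    (second-order theme, aggregate dimension) pair."""
    index = {}
    for dimension, themes in codebook.items():
        for theme, codes in themes.items():
            for code in codes:
                if code in index:
                    raise ValueError(f"code {code!r} is assigned to two themes")
                index[code] = (theme, dimension)
    return index


INDEX = build_index(CODEBOOK)

# A statement open-coded as "disagree with and refine idea" rolls up as follows.
print(INDEX["disagree with and refine idea"])
```

Rejecting a code that appears under two themes keeps the hierarchy strict, which matches the idea that each first-order code is assembled into exactly one more abstract theme.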

Throughout the process, we iterated between the data and frameworks used in previous research (cf. Bales and Cohen, 1979; Gersick, 1988; Jackson and Poole, 2003). Because our focus was on the creative process, our final framework differs somewhat from previous research, but it is also consistent with that research. In particular, we identified one aggregate dimension related to the progress of ideas in the group (illustrated in figure 1) and a separate dimension related to interpersonal and process issues.

To ensure that the framework was trustworthy (Lincoln and Guba, 1985), the second author, who had not been involved in coding at that point, was trained in using the coding scheme and performed two reliability checks. First, a randomly selected set of 70 statements from the meeting transcripts was coded according to the two aggregate dimensions. Cohen’s kappa between the two sets of coding was 0.77, indicating a high level of reliability. Discrepancies were discussed and resolved by the authors to update the framework. Second, the authors compared their coding of two full meeting transcripts according to the complete data structure. This revealed that the categories captured the group interactions over time.
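Cohen’s kappa corrects raw agreement for the agreement expected by chance, $\kappa = (p_o - p_e)/(1 - p_e)$, where $p_o$ is the observed share of matching codes and $p_e$ is the sum over categories of the product of the two coders’ marginal proportions. The sketch below is illustrative only: the ten coded statements are invented, not the 70 statements from the study, and the category labels are paraphrases of the two aggregate dimensions.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders' nominal labels of the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must label the same, non-empty set of items")
    n = len(coder_a)
    # Observed agreement: share of items given the same label by both coders.
    p_observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of each coder's marginal label proportions.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Ten invented statements, each coded into one of the two aggregate dimensions.
IDEA, PROCESS = "progress of ideas", "interpersonal/process"
first_author = [IDEA] * 6 + [PROCESS] * 4
second_author = [IDEA] * 5 + [PROCESS] * 4 + [IDEA]
print(round(cohens_kappa(first_author, second_author), 2))
```

Here the coders agree on 8 of 10 statements ($p_o = 0.80$), but because both assign 60 percent of statements to the first category, chance agreement is $0.6^2 + 0.4^2 = 0.52$, so kappa is noticeably lower than raw agreement.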

Stage II: Developing meeting maps

Once we were satisfied with the reliability of our framework for describing the activities of the group, we used the coding from Stage I to develop a visual map (Langley, 1999) of group interactions over the course of a meeting. Our assumption at this stage was that examining the sequence of events would be insightful for understanding group creativity (Mohr, 1982; Rescher, 1996). Because our primary interest was in evaluative processes, we focused on identifying when and how ideas were introduced, discussed, and decided upon within a meeting. These were the second-order themes related to ideas described in figure 1.

Specifically, we defined introducing an idea as the first mention or presentation of a task-related idea during group discussion. Ideas could be solicited (e.g., when a group member asked for suggestions about an issue), formally presented (e.g., when a group member gave a presentation that outlined a particular idea or model for the group to consider), or spontaneously introduced to the group (e.g., when a group member offered a new task-relevant idea unprompted). Ideas were new if they were entirely novel, extended a previous idea with novel content, or provided an alternative to a suggested idea. Discussion of an idea occurred when group members’ comments explicitly addressed the idea. We defined decisions as explicit consensus of agreement or disagreement with an idea or an expressed decision by one or more group members that was not challenged. When no explicit expression of value was made or one or more group members challenged an idea without resolving the disagreement, it was deemed that no decision had been made. Appendix A provides a map illustrating how the themes were arranged over a meeting. As demonstrated in this map, more than one idea could be discussed at a time. We also included breaks in the maps for non-idea-related group activities identified in the other aggregate dimension in Stage I, such as the process discussions at the beginning of the map in Appendix A.
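The decision rule above can be stated compactly as code, which makes its precedence explicit: explicit consensus always counts, an individually expressed decision counts only if unchallenged, and everything else is coded as no decision. This is a minimal sketch; the record type `IdeaDiscussion` and its fields are hypothetical stand-ins for what a coder would note about each idea, not part of the authors’ materials.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stance(Enum):
    AGREE = "agree"
    DISAGREE = "disagree"


class Outcome(Enum):
    DECIDED_AGREE = "decision: agreement"
    DECIDED_DISAGREE = "decision: disagreement"
    NO_DECISION = "no decision"


@dataclass
class IdeaDiscussion:
    """Hypothetical record of how the group responded to one idea."""
    consensus: Optional[Stance] = None           # explicit group consensus, if any
    expressed_decision: Optional[Stance] = None  # decision voiced by one or more members
    challenged: bool = False                     # challenged and left unresolved


def _as_outcome(stance: Stance) -> Outcome:
    return Outcome.DECIDED_AGREE if stance is Stance.AGREE else Outcome.DECIDED_DISAGREE


def code_decision(d: IdeaDiscussion) -> Outcome:
    # Rule 1: explicit consensus of agreement or disagreement is a decision.
    if d.consensus is not None:
        return _as_outcome(d.consensus)
    # Rule 2: an expressed decision counts only if no one challenged it.
    if d.expressed_decision is not None and not d.challenged:
        return _as_outcome(d.expressed_decision)
    # Otherwise (no explicit valuation, or an unresolved challenge): no decision.
    return Outcome.NO_DECISION


print(code_decision(IdeaDiscussion(expressed_decision=Stance.AGREE, challenged=True)).value)
```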

At this point, we looked for commonalities across and differences between meeting maps. We closely examined each map to identify ways of interacting over ideas. For example, in the map in Appendix A, we observed that ideas 3 and 5 were introduced separately and each became the single focus of discussion, whereas ideas 16 and 17 were introduced and discussed together. We developed hypotheses about alternative modes of interaction based on this and compared the emerging modes with newly examined data as we went through subsequent maps (Miles and Huberman, 1984; Strauss and Corbin, 1990). This iterative process resulted in a stable set of four modes of group interaction, which we discuss more fully below: brainstorming mode, sequential mode, parallel mode, and iterative mode. The two authors developed maps separately for three meetings to compare the overall patterns for reliability and identified the same patterns in each of the three meetings.

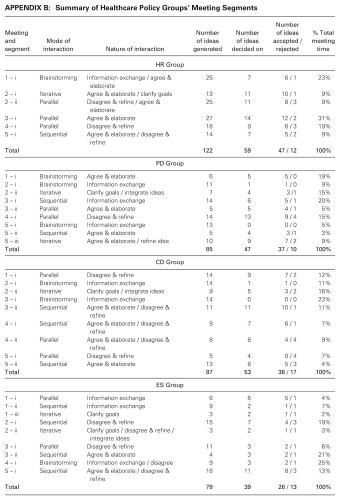

One observation that emerged during this stage of the analysis was that groups engaged in more than one mode of interaction in 11 out of 20 meetings. That is, the mode of interaction changed part of the way through the meeting, so that two or more modes each took up a substantial portion of the meeting. This is illustrated in Appendix A: up to idea 9, ideas were primarily discussed one after the other, while from idea 10 onward, two or more ideas were usually discussed together. Each group had at least one meeting with multiple modes of interaction: for HR, this occurred in only one meeting; for PD and ES, in three meetings each; and for CD, in four meetings. In addition, transitions out of each mode of interaction occurred across the groups; these switches were not limited to one type of interaction. We therefore segmented the meetings based on these differences. This resulted in 33 meeting segments from the 20 meetings; meeting segments became the primary unit of analysis at this point. We provide details of each segment of each group in Appendix B. This is the underlying data on which the comparisons of modes and sequences we present in the paper are based.
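Segmenting a meeting by mode amounts to grouping contiguous stretches of interaction that share a mode while ignoring brief departures. The sketch below is a hypothetical illustration, not the authors’ procedure: it assumes each idea in a meeting map has been tagged with the mode of the interaction in which it was discussed, and it uses an invented threshold, `min_ideas`, as a stand-in for “a substantial portion of the meeting.”

```python
from itertools import groupby


def segment_meeting(idea_modes, min_ideas=3):
    """Split a meeting's ordered per-idea mode tags into mode segments.

    Contiguous ideas with the same mode form a run. Runs shorter than
    `min_ideas` (a hypothetical threshold) are absorbed into the preceding
    segment, and a run that matches the preceding segment's mode is merged
    into it. A short run at the very start becomes its own segment.
    """
    segments = []  # list of [mode, number_of_ideas]
    for mode, run in groupby(idea_modes):
        length = len(list(run))
        if segments and (length < min_ideas or segments[-1][0] == mode):
            segments[-1][1] += length
        else:
            segments.append([mode, length])
    return [tuple(s) for s in segments]


# Invented tags loosely echoing Appendix A: early ideas discussed one at a
# time, later ideas mostly discussed together, with one brief sequential exchange.
tags = ["sequential"] * 9 + ["parallel"] * 4 + ["sequential"] + ["parallel"] * 3
print(segment_meeting(tags))
```

Under this sketch, a meeting counts as multi-mode when it yields more than one segment, and tallying such meetings per group (1 for HR, 3 each for PD and ES, 4 for CD) gives the 11 of 20 meetings reported above.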

Stage III: Examining the creative process over time

Finally, we created visual maps of the order in which the modes occurred across meetings for each group. Our unit of analysis at this point shifted from meeting segments to the entire group process across the five meetings. We ordered each of the meeting segments identified in stage II of the analysis (see Appendix B) across each group’s meetings, retaining information about the acceptance or rejection of ideas at each point. As in the previous stage of analysis, we searched for commonalities across and differences between the groups.
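Ordering segments into per-group sequences is a sort on (meeting, position within meeting) followed by grouping, with the acceptance and rejection counts carried along. This is a minimal sketch; the `Segment` record and the segments listed are invented for illustration and do not reproduce Appendix B.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple


class Segment(NamedTuple):
    group: str      # e.g., "CD", "ES", "HR", "PD"
    meeting: int    # 1 through 5
    position: int   # order of the segment within its meeting
    mode: str
    accepted: int   # ideas accepted in the segment
    rejected: int   # ideas rejected in the segment


def sequences_by_group(segments: List[Segment]) -> Dict[str, List[Segment]]:
    """Order each group's segments by meeting, then by position within meeting."""
    ordered: Dict[str, List[Segment]] = defaultdict(list)
    for seg in sorted(segments, key=lambda s: (s.meeting, s.position)):
        ordered[seg.group].append(seg)
    return dict(ordered)


# Invented segments, deliberately listed out of order.
segments = [
    Segment("CD", 2, 1, "parallel", 3, 1),
    Segment("CD", 1, 2, "sequential", 2, 0),
    Segment("CD", 1, 1, "brainstorming", 0, 0),
    Segment("HR", 1, 1, "sequential", 1, 1),
]

for group, segs in sequences_by_group(segments).items():
    print(group, " -> ".join(s.mode for s in segs))
```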

Findings

Four Modes of Creative Interactions in Groups

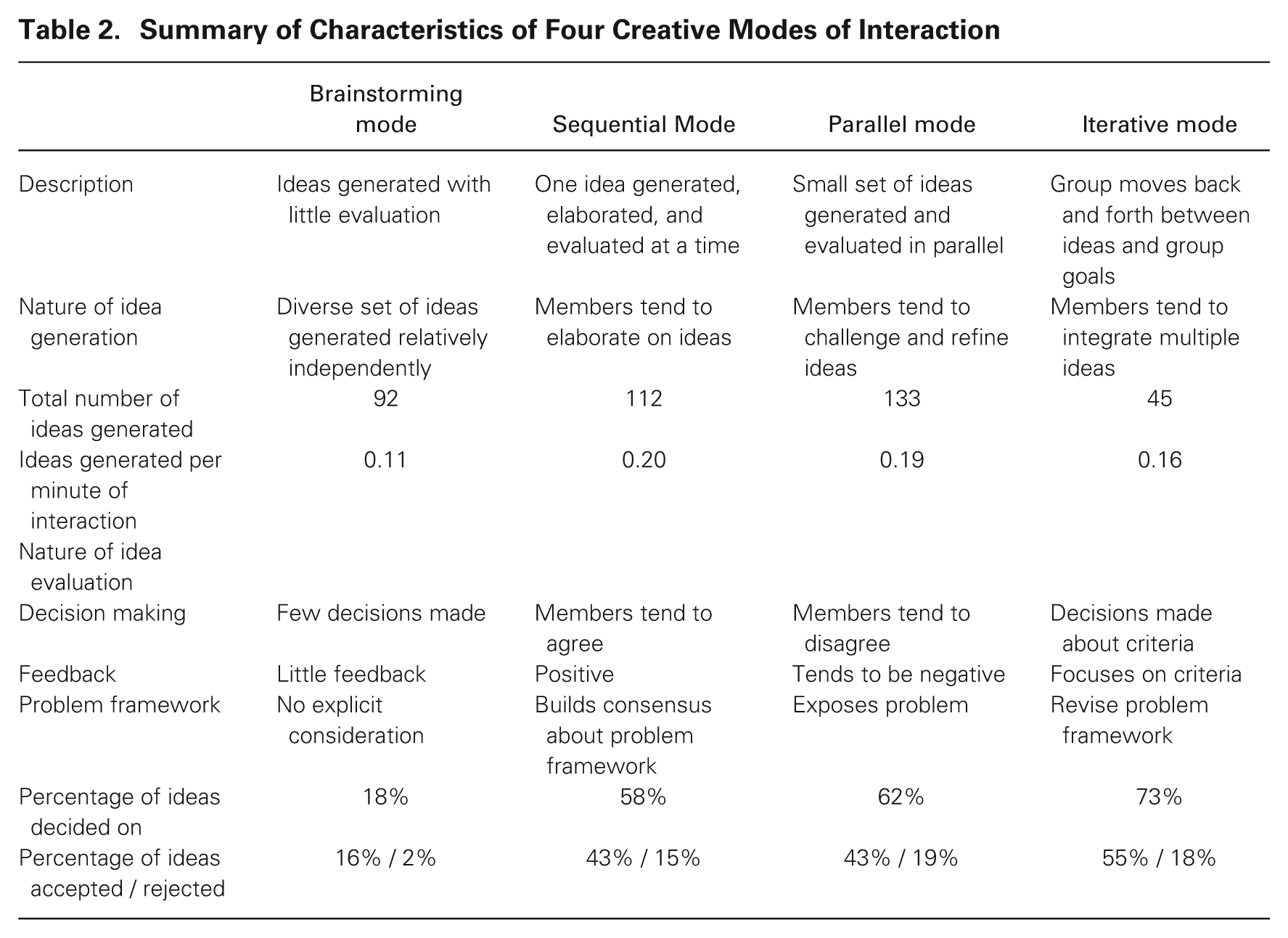

Examining the 33 meeting segments revealed four different modes of interaction over creative ideas. In brainstorming mode, ideas were generated without evaluation; in sequential interactions, one idea was generated, elaborated, and evaluated; in parallel interactions, several ideas were generated and then evaluated simultaneously; and in iterative interactions, the group evaluated multiple ideas with reference to group goals. We summarize the primary features of the modes in table 2. All of the groups engaged in each of the four modes we had identified. Each mode involved different ways of evaluating and generating ideas.

Summary of Characteristics of Four Creative Modes of Interaction

Brainstorming mode

In some cases, groups interacted in a way that closely resembled the traditional conception of idea generation. Brainstorming mode was characterized by group members generating ideas with little if any evaluation, relying on their own interpretation of the problem framework to do so. Decisions rarely occurred in this mode.

Groups exchanged a great deal of information either before or during brainstorming, but information was rarely used to elaborate or evaluate ideas. The following brief excerpt from group CD’s second meeting illustrates brainstorming interactions.¹ In this discussion, the group brainstormed barriers that could prevent medical professionals or consumers from using technology to help manage their healthcare.

“. . . the way you reimburse physicians drives, in a lot of ways, how physicians perform. I would also ask us to consider the whole idea of personal health records and who owns the data. We have constant conversations about physicians owning that information. . . .”

“I would echo the reimbursement issue. I also think one of the biggest barriers . . . is workflow in the physician or caregiver’s office. If you don’t get 20 to 30 percent use rate for secure messaging systems, it creates a new workflow that doesn’t ever take over the existing workflow. . . .”

[The co-chair then directs the group to discuss legal issues, followed by an information exchange about legal issues. Several other group members introduce barriers.]

“I know we’ve talked about barriers and have defined a number of them, but one of the questions you had asked early on was which of the definitions of secure messaging we feel as a group should be recommended. I would like to see if we can get the group to focus on which of these would make the most sense.”

In this interaction, group members identified several barriers: reimbursement, ownership of data, physician workflow, and legal restrictions. In some cases, members attended to others’ ideas; for example, John “echoed” his support for the reimbursement issue raised by Raj. But the issues were not discussed further. Although an idea may have been influenced by others’ comments, the connection was rarely obvious. For example, raising the issue of reimbursement may have stimulated John to think about what to reimburse, leading to his comments about workflow; however, no member of the group, including John, made this connection explicit. As a result, ideas in this mode tended to bear little relation to one another. Despite the focus on idea generation in this mode, groups generated only 92 ideas while brainstorming, fewer than in parallel mode (133 ideas) or sequential mode (112 ideas). When the amount of time spent brainstorming is accounted for, it was the least productive mode, with only 0.11 ideas generated per minute of interaction time, as shown in table 2.

Groups also very rarely made decisions about ideas in brainstorming mode (only 18 percent of ideas generated were decided on in this mode). The group either failed to recognize and attend to ideas or failed to obtain consensus on them. This can be observed in the above excerpt, in that members did not pick up on one another’s ideas, and following the discussion, it was not clear whether the group agreed that any of the ideas were real barriers to implementing technology. The quotation from Josh, above, also illustrates that members tended to rely on their own problem framework during brainstorming interactions. At the beginning of the interaction, group members raised the question of how to define “secure messaging,” a term that was presented to them in the group’s goals. Josh’s attempt to refocus the group on this term indicates that members did not resolve or even address its meaning as they generated ideas.

Sequential mode

A second pattern was the sequential generation, discussion, and evaluation of one idea at a time. In this mode, groups elaborated on ideas and built consensus about the problem framework by considering the advantages and disadvantages of each idea.

The following excerpt from a sequential discussion by the PD group during their third meeting illustrates this process. It follows a description by one member, Sam, of an electronic health record (EHR) system in one state, which he proposed as a model for wider rollout:

“I think, obviously, this is the model all others should probably follow. I think they had a lot of success. But something that is not mentioned here that I think drove adoption . . . was incentivizing laboratories and creating some type of an add-on payment so that they do transmit their results [to other providers].”

“I wonder if maybe [you are referring to] the DFD messaging system, which replaces their existing delivery processes. That has been a very powerful influence in engaging laboratories and radiology centers.”

“. . . I appreciate the discussion between you and Charlton that there is a value proposition for this system based on the ability to not have to ship out paper. You estimate the cost of doing that is about $0.81, correct?”

“Correct, although I will say the $0.81 is for hospital results. We find a lot of the commercial laboratories are more efficient. . . .”

“I’m curious; do you have any experience with the physician office lab?”

“We do, and there’s two flavors of those. One is the larger practice, the 10-physician internal medicine practice, and they work just like everybody else. The other one, which is trickier, is physician offices.”

“I wanted to complete the thought that beyond the value proposition, though, it is not absolutely necessary for it to be a centralized database. In fact other models could sustain this as well. The value proposition would remain intact.”

During this exchange, group members focused on one idea: adopting the federated model for capturing patient data that was in use in one state. They exchanged information about the model, asked questions, and proposed ways to change it.

Sequential mode was the most productive, with 0.20 ideas generated per minute of interaction, resulting in 112 ideas. Ideas generated in this mode tended to be elaborations of existing ideas, because members generally agreed with and built on a focal idea. This can be seen in the above discussion, when Brad noted that to achieve the value proposition of the model, the data did not need to be centralized. As another example, the same group later discussed how to build a system to provide information to first responders in emergency situations. One group member suggested using a web-based system. Simon, who had experience in these situations, responded by proposing that this was not a complete solution:

. . . [a] transmission path for the Internet is really a challenge in those situations. But I would echo the comments that it’s exceedingly important to deliver Internet to your hospitals. What becomes really difficult, particularly in a combat zone, is delivering Internet to the point of injury. So you rely heavily on your voice networks.

Sequential discussion of new ideas therefore appeared to be a mechanism through which groups attended to and built on a single idea, rather than diverging in different directions.

Sequential interactions also built consensus about the problem framework. During the first exchange quoted in this section, Daniel’s comment identified engagement with laboratories and radiology centers as one dimension on which to evaluate solutions. Sam then implied that the value proposition of the solution was an important criterion for judging solutions. Although the group did not explicitly choose between these criteria, their subsequent discussion built consensus about the importance of the value proposition. For example, when Brad built on the idea of using a database, he confirmed that “the value proposition would remain intact.” Thus evaluating an idea as it was discussed involved elaborating evaluation criteria, which directed subsequent discussions and built consensus about the problem framework.

Ultimately, groups decided on 58 percent of ideas discussed in sequential mode. Only 15 percent of ideas were rejected, even though group members were not always in full agreement. In the discussion about using the Internet for first responders, it was clear that Simon disagreed that an Internet solution was entirely correct, and in the discussion of the federated model, Brad did not think that a centralized model was necessary. But members expressed their views by agreeing with and then broadening the idea. For example, Simon agreed with the value of providing Internet access to hospitals and added a suggestion for dealing with situations in which that would not work. Similarly, Brad agreed with the model being discussed and noted that “other models” would allow the group to achieve the same benefits. Evaluation was not a negative experience in this context, nor did it interfere with subsequent idea-generating efforts. In fact, idea evaluation promoted idea generation and helped the group to build consensus about the problem framework in sequential mode.

Parallel mode

A third mode that emerged from the data was the parallel discussion of multiple ideas at the same time. In parallel mode, groups generated and then compared and contrasted a small number of ideas, clarifying the problem framework and making decisions.

Ideas generated in parallel mode tended to be alternatives to one another. For example, during their second meeting, the CD workgroup discussed the appropriate population for a pilot test. Two options were suggested: focusing on all of the patients with a particular disease, or focusing on a geographic region:

“I would recommend segmenting out a specific population. I believe that you have to isolate the customers you are serving. If you were to take it in a diffuse manner, you really haven’t segmented your market enough to win with some[thing] measurable.”

“. . . you could segment it within that group. Let’s just focus on diabetics. Let’s just focus on congestive heart failure . . . prove that one community shows savings, and then expand it. . . . Or would you say that the geographic provider-based approach is better?”

“That is a very, very challenging question for me. I believe that the disease set in chronic illnesses is really connected. It’s really hard to isolate one particular disease like that and say, ‘That’s the one we can work with.’ So I believe you have to take a geographically specific environment . . . and take a set of diseases that are correlated.”

“Could I just clarify that? As an example, one of the things we had talked about was [a model of engaging] primary care physicians and/or cardiologists and/or endocrinologists and/or nephrologists. It would encompass a number of . . . illnesses.”

“Yes, that would be an example.”

“If you don’t have a critical mass of physicians adopting this type of technology and actually using it . . . it doesn’t matter how you structure it. . . . So I would argue strongly that we define our charter around a geographic pilot first and then find the disease-specific opportunities within that.”

Because ideas were compared with one another, the nature of idea generation was often to disagree and therefore to refine rather than build on ideas. This is evident in the preceding exchange, when Raj argued that it was not possible to isolate specific diseases. These disagreements were task-based conflicts between group members. Their effect was to narrow the scope of ideas. For example, during one conversation about the minimum data needed by emergency first responders in the ES group, members proposed a list of data elements. One member argued that many of the elements were not needed and proposed an edited list:

A broader . . . system really keeps it much simpler than this proposal. . . . When I’ve heard [our manager] talk about what he sees as the need, you really want to keep it extremely simple . . . how many people are in your ICUs, how many people are in your hospitals, and what’s your excess capacity? So I have a lot of concerns about this minimum dataset.

This conflict did not prevent idea generation, however. As shown in table 2, the most ideas (133) were generated in parallel mode.

Evaluation during parallel discussions provided direct feedback about ideas. For example, during their second meeting, the HR group discussed four ways to implement personal health records (PHRs), including leveraging regional systems (option 1) and expanding an existing emergency information system (option 2). One group member, Kyle, commented:

In looking at the options, my concern [with option 1] is: are we engaged with every one of these providers and exchanging information with them. . . . I think this would be a huge distraction for them. Option two, being involved with this system, I think whatever we do should be scalable. And whatever we demonstrate to do should be scalable. As much as we enjoy doing this and helping out, this is not a scalable solution.

Kyle directly criticized option 1 as a “huge distraction.” This illustrates that parallel discussions focused on eliminating ideas from consideration. In this mode, decisions were made about 62 percent of ideas, and rejection was more likely than in any other mode, with 19 percent of ideas rejected, as shown in table 2.

Directly comparing ideas also made the problem framework explicit. For example, Kyle, above, was adamant that “. . . whatever we . . . do should be scalable.” Whereas identifying assumptions led the group to build consensus about the problem framework in sequential mode, making the problem framework explicit by comparing ideas allowed the group to clarify and choose which criteria to base decisions on. For example, in the exchange at the beginning of this section, Raj described his understanding that chronic diseases were connected to one another and should be treated as a whole. Nina clarified her understanding of his point, and John directly suggested that another criterion, physician participation, should take priority. Therefore, in parallel mode, evaluation stimulated refinements of ideas and helped the group to develop the problem framework.

Iterative mode

The final mode through which ideas developed was an iterative interaction in which groups introduced and discussed one idea, then introduced a new idea without directly comparing it with the previous idea, then returned to the original idea. Ideas from earlier in the group discussion may have been re-introduced in this mode. This mode involved integrating ideas and shaping the problem framework in the process of making decisions, as summarized in table 2.

In iterative mode, group interactions also built on and elaborated ideas, similar to sequential interactions, but by moving back and forth between ideas, groups also identified ways to integrate multiple ideas. This seemed to occur naturally, in response to additional information or others’ ideas, rather than because the group was focused on a particular idea. For example, during one of the CD workgroup meetings, members attempted to identify ways to measure the success of a secure messaging system:

“The bottom line is: how many hospital stays or visits do you avoid?”

“Exactly, exactly.”

“How much cost do you take out of the system while providing better care?”

“Exactly. So the assumption is that it will reduce patient-physician office visits while increasing the care outcomes. That’s what I think we are trying to go for, right?”

“I agree with you completely, and exactly that was the point I was making in my initial remarks, that it cannot just be based on technology enabling, but also needs to be based on some specific outcomes.”

“In our experience in a prospective trial . . . we actually did see a reduction in per-member-per-month costs in the treatment group versus control. So this is a case for cost reduction or cost avoidance. I think another way to think about it, too, is compliance.”

“I want to go back to the question relating to the specific charge of the workgroup and the issue of secure messaging versus secure e-mail. I would hope that we would keep the broader definition so that we could access all of these outcomes.”

In this excerpt, Raj, Tim, and John built on Tim’s initial suggestions about how to measure success. Then Martin referred back to the group’s previous ideas about how to define secure messaging, connecting them to outcomes and stimulating a discussion that iterated between these topics and the connections between them. The group concluded by framing the problem in a way that allowed them to integrate ideas so that they could achieve “all of these outcomes.”

Disagreements during this type of interaction tended to focus on a single idea, rather than the trade-offs between ideas. For example, one member of the ES group suggested including data from animal communities in the minimum dataset for emergency situations. Another member disagreed without comparing the idea with others:

There are . . . when you start to delve into this, an increasingly broad realm of data. . . . One is the animal realm, one could go to the environmental realm, etc. While lots of that may be important, it is critically important that we consider the very specific charge that the group has been given in terms of a deliverable inside of a year. And that, I think, may be something that has to scope us in terms of some of the activities we pursue.

This contrasts with parallel discussions, in which group members argued that others’ ideas, such as focusing on a specific disease category, were not possible.

As in parallel and sequential interactions, ideas were likely to be decided on in iterative mode. Decisions were made on 73 percent of ideas in this mode, in contrast to 62 percent in parallel mode and 58 percent in sequential mode, as shown in table 2. In this mode, members frequently referred back to the group’s goals and often refined both the problem framework and ideas in light of the framework. Evaluation therefore tended to occur in response to the problem framework. For example, in the quotation above about the value of animal data, the group member disagreed by referring to the scope of the task and what was achievable within the timeframe. The group was not bound by the problem framework in iterative discussions, however; members often challenged the framework when they were concerned that it would lead the group to support a poor idea. For example, after trying to gain consensus on privacy issues related to their group task, members of the HR group fundamentally challenged whether they could provide good recommendations given the constraints of time and information:

“I would hope that our workgroup would advocate to the community as a whole that there be a more rigorous public process. . . . I haven’t seen a time or process set aside yet where the issues will be fully discussed, and my concern honestly is, [we are] not the right set of players to discuss these basic values and privacy issues.”

“. . . I’ve been a little concerned about the time—this is all being done under a number of constraints that are challenging at best and daunting. We’re trying to do many, many things at once. And I am concerned that we have to slide those down to the ones that are essential for our narrow charge.”

The group went on to reach a consensus about how to refine the framework based on their ideas, identifying where the task goals were too broad. In this way, evaluation in iterative mode included judgments about the problem framework used to assess ideas.

Sequences of Creative Interactions over Time

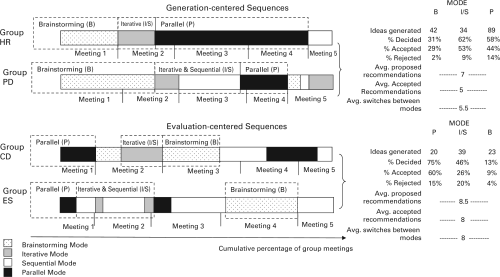

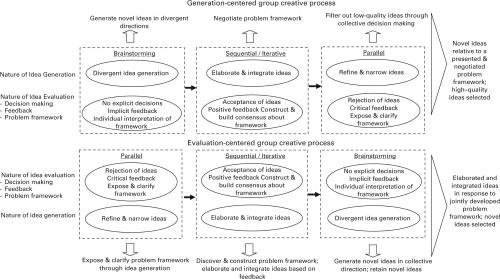

Figure 2 displays the results of our analysis of group interactions over time. It illustrates that groups did not engage in the four modes of interaction in the same sequence over time. Instead, we observed two broad ways in which the modes of interaction were ordered. We describe the process followed by groups HR and PD as a generation-centered sequence. In the generation-centered sequence, groups engaged in divergent idea generation through brainstorming and then narrowed down the set of ideas selected. Given the resemblance of the generation-centered sequence to the creative process described in research to date, it was somewhat surprising to discover that groups CD and ES followed a sequence that was essentially the mirror image of this pattern. We describe this process as an evaluation-centered sequence. In the evaluation-centered sequence, groups evaluated a small number of ideas early on, in parallel mode, and then developed a shared problem framework and elaborated on and integrated their ideas.

Sequences of creative modes of interaction over time.*

The differences between modes in the nature of idea generation and evaluation that we described above corresponded to some differences in outcomes. For example, ideas were more likely to be decided on in sequential, parallel, and iterative modes than in brainstorming, as table 2 showed. Comparing the two sequences revealed that the order in which a mode occurred also influenced the periods during which groups generated versus decided on ideas, as shown in figure 2. We explore these differences in more detail below. Describing the sequences this way inevitably obscures some of their complexity; our goal was to explore key commonalities and differences rather than to comprehensively account for the sequences in each of the four groups.

Generation-centered sequences

We pool the data from groups HR and PD in figure 2 to describe generation-centered sequences. Brainstorming dominated the first meeting of the generation-centered sequence. Facilitators of the two groups in this category encouraged members to generate ideas individually early on, either implicitly or explicitly. For example, the facilitator of PD solicited comments from group members who hadn’t spoken, shifting the conversation to a new topic, whereas the facilitator of HR encouraged members “. . . to be developing [a] list of issues individually. . . .” Figure 2 shows that in the generation-centered sequence, 42 ideas were generated in early brainstorming interactions, more than were generated in the evaluation-centered sequence either early on or during brainstorming. This supports the view that evaluation stunts idea generation. When following this sequence, groups also spent more time brainstorming than in the evaluation-centered sequence. Yet evaluation was not entirely absent from this mode: the groups made decisions on over 30 percent of ideas while brainstorming in the generation-centered sequence.

Next, groups built on and integrated ideas through sequential and iterative interactions. Idea generation dropped to 34 ideas at this point, while the groups made decisions on over 60 percent of ideas. The generation-centered sequence concluded with parallel interactions. Despite continued evaluation, this was the most productive part of the sequence, generating 89 ideas, as shown in figure 2. These groups also spent longer in parallel mode than evaluation-centered groups did. In addition, once these groups entered parallel mode, they exited it only at the end of a meeting. For HR, there was relatively little movement out of any mode within a meeting; a switch occurred only in their second meeting. The movement from brainstorming to iterative/sequential to parallel interactions occurred over the first four meetings.

Overall, group members diverged early in the generation-centered sequence and then refined ideas through parallel interactions late in the sequence. Mid-stage sequential and iterative interactions focused on evaluating ideas, rather than elaborating and integrating ideas.

Evaluation-centered sequences

Pooling data from groups CD and ES reveals that evaluation through parallel mode occurred early in this sequence. Figure 2 illustrates that early interactions produced only 20 ideas, fewer than the 42 generated early in the generation-centered sequence, but groups decided on 75 percent of those ideas and spent relatively little time in this mode.

Like the generation-centered sequence, the evaluation-centered sequence next transitioned into iterative and sequential interactions. In contrast to the generation-centered sequence, however, these interactions were the most productive, with 39 ideas generated in these modes, while the proportion of ideas decided on decreased to 46 percent, as shown in figure 2. The sequence concluded with brainstorming, during which both idea generation and decision making decreased. Decisions continued to be made about ideas after these three modes, primarily through sequential interactions late in the group process. Those later-stage interactions were more important in the evaluation-centered sequence, which was also more varied than the generation-centered sequence: groups transitioned between modes eight times in this sequence, whereas the generation-centered sequence involved fewer than six transitions. As a result, groups following this sequence engaged in parallel mode for short periods late in the process. In addition, both CD and ES had multiple modes of interaction in at least three meetings. Unlike generation-centered groups, they transitioned back and forth between parallel and other modes within a meeting.

Overall, the evaluation-centered sequence began with a short period in which a small number of ideas were evaluated in parallel mode. Most ideas were decided on during that time. Idea generation followed and involved elaborating on and integrating ideas in sequential and iterative modes. This sequence also involved more frequent transitions between modes within and across meetings than the generation-centered sequence.

Group Creative Sequences and Performance

It is apparent that some groups engaged in the creative task by essentially reversing the traditional creative process. Even more intriguing is that these groups did not appear to suffer as a result of ordering the process this way. As table 3 shows, all four groups generated many ideas, and each group put forward several formal recommendations in their first deliverable, most of which were accepted. Recommendations were collated by a group’s facilitator based on group discussions and may have included several elaborated or integrated ideas that emerged during group discussion. We cannot be definitive about the way that evaluations throughout the process resulted in these recommendations, nor do we know the ultimate novelty or quality of the recommendations. It is not our intention to make strong claims about performance differences between the groups. There is ample evidence to conclude that both sequences enabled groups to develop some creative solutions.

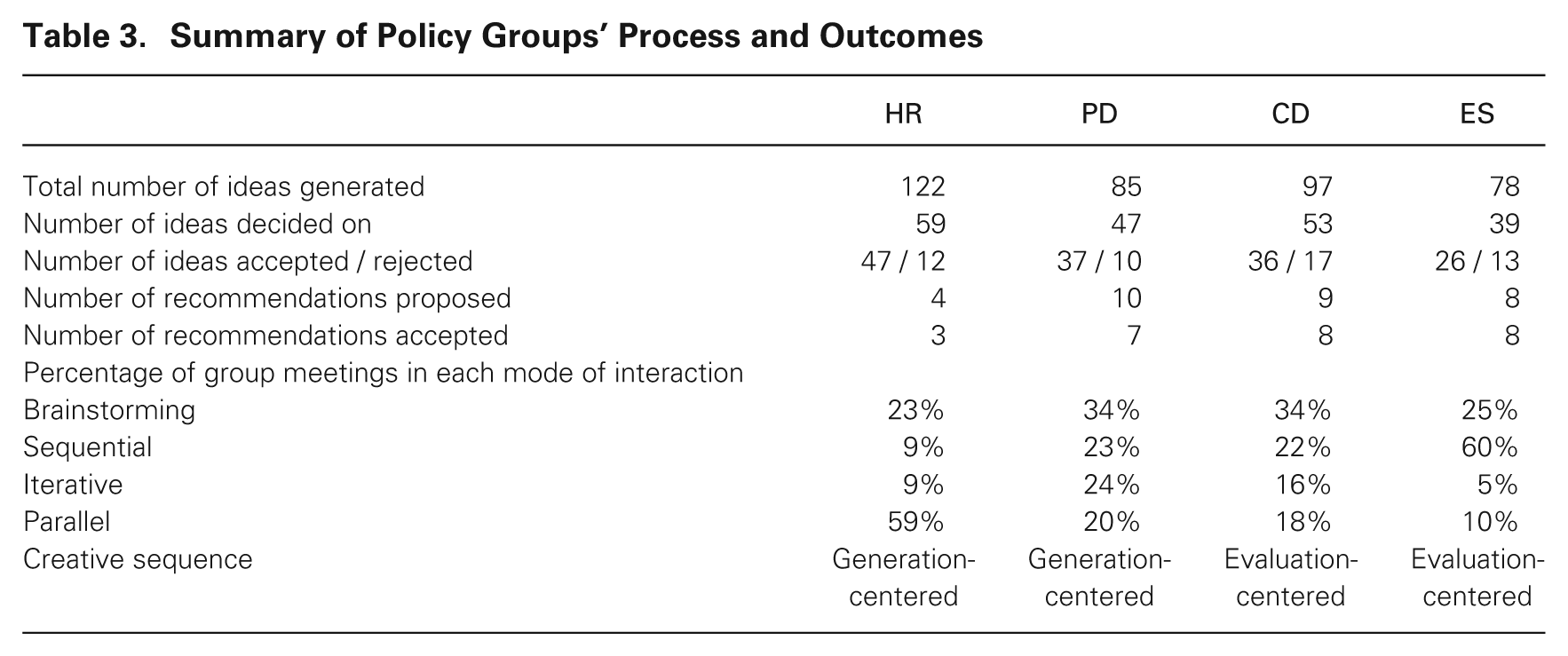

Summary of Policy Groups’ Process and Outcomes

Table 3 does offer some support, however, for the two sequences of creativity we observed across the groups. Generation-centered groups generated more ideas (207) than evaluation-centered groups (175), supporting the focus we observed on divergent generation in this sequence. The HR group may be described as sticking most closely to the brainstorming process, because it transitioned between modes infrequently and usually only between meetings; it also generated the most ideas but decided on less than half and rejected few (less than 10 percent). Interestingly, however, members spent almost 60 percent of their time in parallel mode; this emphasizes the importance of the sequence in which the modes occurred. Evaluation-centered groups agreed on more recommendations (17, versus generation-centered groups’ 14) and had more recommendations accepted (16 versus 10). This is consistent with mid-stage iterative and sequential interactions enabling the group to establish a problem framework through which to evaluate ideas. These differences cannot be explained by the degree of structure present in the group meetings, as both the generation-centered and evaluation-centered sequences occurred in relatively more and relatively less structured environments. We take these outcome data as support for our contention that the generation-centered process stimulates divergent idea generation, while the evaluation-centered process enhances creativity by establishing a problem framework that enables group members to elaborate and integrate ideas.

An Evaluation-Centered Model of Collective Engagement with Creative Tasks

Groups are often responsible for creative output in organizations because members can stimulate one another’s divergent thinking (Nemeth, 1986; Amabile, 1988; Staw, 2009), bring different perspectives to the group task (Wiersema and Bantel, 1992; Milliken, Bartel, and Kurtzberg, 2003), and filter out poor ideas (Laughlin, 1988; Paletz and Schunn, 2010). This is consistent with an idea-generation-centered creative process. By focusing on idea generation as the core creative activity of the group process, however, existing theoretical conceptions provide less insight into how groups develop a problem framework, retain novel ideas, and build on and elaborate ideas.

Figure 3 contrasts this model with an alternative in which idea evaluation is central to the group creative process. In the evaluation-centered model, evaluation directs collective attention to ideas and therefore shapes idea generation. An evaluation-centered process that begins with comparing a small number of ideas and moves toward divergent idea generation later in the process provides an alternative way for groups to engage with creative tasks. The core creative activities of the evaluation-centered process are the construction of a problem framework, the retention of novel ideas, and the elaboration and integration of those ideas.

Alternative models of the group creative processes.

Creative idea evaluation

In our model, evaluation is not a stage of the creative process; it is embedded within a mode of interaction. Consistent with a long-standing body of research that demonstrates that the process of decision making affects the evaluation criteria used and therefore the resulting judgments (Tversky and Kahneman, 1974; Hsee et al., 1999; Elsbach, Barr, and Hargadon, 2005), we suggest that the process of evaluating creative ideas in groups influences the problem framework, the type of feedback provided, and therefore decisions.

Specifically, comparing ideas exposes and clarifies the categories for comparison or evaluation criteria (Hsee, 1996; Langer and Moldoveanu, 2000). Parallel and iterative modes rely more on direct comparisons than the other modes. The parallel mode helps to illuminate the problem framework, while the iterative mode helps to refine that problem framework. Although this makes ideas easier to critically evaluate (Hsee et al., 1999), it also calls attention to the lack of information about ambiguous, novel alternatives (Knight, 1921; Heath and Tversky, 1991). When uncertainty is salient during evaluation, individual and group judgments tend to be more negative (Ellsberg, 1961; Fox and Tversky, 1995; Keller, Sarin, and Sounderpandian, 2007; Mueller, Melwani, and Goncalo, 2012). We propose that these negative evaluations are likely to result in refining and improving focal ideas (cf. De Stobbeleir, Ashford, and Buyens, 2011). In sequential mode, in contrast, group members do not focus on the ambiguity of novel ideas and are therefore more likely to positively value them, so that those ideas may become a starting point for elaboration (Runco, 1994; cf. Elsbach and Kramer, 2003). This also builds consensus about the implied problem framework. In iterative mode, the group is explicit in deciding which elements of the problem framework to focus on, providing indirect feedback about ideas in the process. Even in brainstorming, when there is no shared problem framework, members rely on their own view of the problem so that there is little explicit feedback or decision making. Implicitly, no ideas are selected into the group’s discussion. This may highlight the group’s need for better ideas, directing members to individually generate ideas that are worthy of the group’s attention.

This integrated view of creative idea evaluation provides three insights into its role in the creative process. First, rather than fulfilling a single role at one stage, evaluation fills three roles: providing feedback, constructing the problem framework, and decision making. These roles are linked through the mode of interaction in which they are enacted (Collins, 2005; Elsbach, Barr, and Hargadon, 2005). Second, we propose that what integrates these roles is that evaluation directs collective attention to ideas. Evaluative processes like distinguishing between ideas or concepts promote attention and understanding (Langer and Moldoveanu, 2000; Thompson, Gentner, and Loewenstein, 2000). Evaluation may therefore be necessary to stimulate other group members’ interest in an idea. In addition, the more group members who focus on an idea, the more psychologically meaningful it becomes to each member (Shteynberg, 2010), making the group more likely to invest time and effort to develop the idea. Evaluative processes therefore facilitate interaction over and engagement with ideas. Third, whether group members diverge, elaborate, integrate, or refine ideas depends on how ideas are evaluated. Idea evaluation therefore shapes the nature of idea generation in groups (Elsbach and Kramer, 2003; Runco, 2003).

These three insights into the role of idea evaluation within the creative process reveal that idea generation and evaluation are embedded within a mode of interaction. The activities of the group therefore cannot be understood without reference to the process that produces them (Poole, McPhee, and Seibold, 1982; Sawyer, 2003; Collins, 2005).

The evaluation-centered creative process

We further propose that the combination of idea generation and evaluation within a mode of interaction shapes subsequent engagement with the creative task and the nature of creativity. Just as individuals can engage in a search for tried and tested solutions or for novel ideas (Ford, 1996; Gilson and Shalley, 2004; Zhang and Bartol, 2010), groups can engage in the collective creative process in alternative ways. Figure 3 reveals that when idea generation is the focal activity of a group, the generation-centered sequence—individual divergence, building on and integrating ideas, refining ideas—makes intuitive sense. Consistent with existing literature, this sequence stimulates divergent thinking early in the process (Nemeth, 1986) and later involves collective decision making (Paletz and Schunn, 2010).

In our model, however, idea generation is not the only activity occurring during this sequence. Moving from divergent generation to idea elaboration and integration also means moving from individually interpreting the problem framework to decision making. This provides little opportunity for the group to collectively construct the problem framework. Members are likely to rely on their own preexisting frameworks to make sense of ideas (Gioia, 1986; Weick, 1993; Walsh, 1995), so that constructing a problem framework for the group can become a battle over whose perspective should dominate the group’s choices (Drazin, Glynn, and Kazanjian, 1999; Kaplan, 2008). This seems particularly likely when members have already committed to their own ideas in brainstorming mode. Although the conflict may improve the rigorous selection of high-quality ideas (Singh and Fleming, 2010), it also limits the group’s ability to construct a problem framework by integrating perspectives.

In contrast, in the evaluation-centered process, moving from parallel to iterative/sequential interactions means that members expose and clarify the problem framework early on, providing an opportunity for members to construct the problem framework together. Ideas generated during the early stages of this process can act as boundary objects (Carlile, 2002), like prototypes or experiments, helping to uncover otherwise hidden problems and making assumptions explicit (Schrage, 2000). They therefore provide a mechanism for exposing and manipulating the problem framework. Without such a mechanism, underlying assumptions would likely remain hidden (Cronin and Weingart, 2007). Identifying gaps between members’ problem frameworks may then enable the group to reframe the problem in creative ways (Langer, 1989; Carlile, 2002; Necka, 2003). The group can build common knowledge that can only be known by mentally experimenting with and exploring ideas (Bechky, 2003; Lee et al., 2004). Groups following this sequence are therefore better positioned to construct the problem framework. Research suggests that actively developing the problem framework can result in novel ways of viewing the problem (Getzels and Csikszentmihalyi, 1976; Gersick, 1988) and that having a clear and shared problem framework facilitates deeper engagement in the creative task (Gilson and Shalley, 2004; Quinn, 2005; Reiter-Palmon and Robinson, 2009).

A second advantage of the evaluation-centered process is revealed by considering the placement of parallel interactions. Although relatively more novel ideas may be rejected during early parallel interactions in the evaluation-centered sequence due to members' aversion to their ambiguity (Fox and Tversky, 1995), such ideas are unlikely to be the group's most creative. The most easily accessible and therefore common solutions to a problem tend to be identified first; novel ideas emerge with time and effort (Basadur and Thompson, 1986). Eliminating relatively more novel early ideas is therefore likely to pose minimal risk to group creativity. In contrast, prolonged parallel interactions later in the process are likely to result in rejecting the most novel, well-developed ideas. Again, this may reduce the risk of selecting a poor idea, but it may also eliminate the group's most novel ideas, even when they are high in quality.

Finally, moving from mid- to late-stage interactions reveals a third advantage of the evaluation-centered sequence: the opportunity to use developmental feedback for idea generation. In the generation-centered sequence, evaluation becomes increasingly salient as it shifts from the problem framework to ideas, and increasingly negative as it shifts from accepting to rejecting ideas. Negotiation over the problem framework reinforces this trend, creating an environment that is unlikely to support further elaboration or integration of others' ideas (Amabile, Goldenfarb, and Brackfield, 1990; Edmondson, 1999). Paradoxically, discussing a small number of ideas in parallel early in the sequence may lead to a more positive evaluation environment. By exposing group members to direct evaluation early in the process, parallel interactions may set a group norm in which members are comfortable providing and receiving feedback (Edmondson, 1999). Group members may also be less committed to ideas and therefore more open to evaluation early on, particularly because feedback at that stage provides the opportunity to develop ideas (Shalley, 1995; Shalley, Zhou, and Oldham, 2004). The evaluation-centered sequence thus provides an opportunity for groups to use feedback to elaborate and integrate ideas. Although decisions were not explicitly made at the end of the sequence, we suggest that ideas are more likely to be noticed and remembered because they are better developed and understood within a problem framework (Walsh, 1995).

An evaluation-centered sequence offers an alternative path to creativity. We do not suggest that it will necessarily be more effective than the generation-centered creative process. Instead, our model proposes that the core creative activities of the sequence are collectively constructing the problem framework, retaining novel ideas, and elaborating and integrating ideas based on feedback. We propose that this can result in more novel final output. In contrast, the core creative activity of the generation-centered creative process is the generation of novel alternatives stimulated by others’ ideas. This should lead to more ideas and a more diverse set of ideas. But it may also cause groups to undervalue novel ideas, because members do not share a problem framework, may negatively evaluate novel ideas in comparison to less ambiguous, high-quality ideas, and have less opportunity to elaborate and integrate ideas. We describe these as alternative ways of engaging with creative tasks. In the early stages of the generation-centered sequence, individuals generate ideas in a group context, followed by collective decision making; in the evaluation-centered sequence, groups collectively generate ideas.

Boundary Conditions

The context that allowed us to uncover the nature of creative idea evaluation also has unique features. It therefore provided an extreme case that is ideal for theory building (Bamberger and Pratt, 2010), but that may limit the generalizability of our results. The groups we studied were cross-functional and cross-organizational, so they brought diverse perspectives to the task. In addition, the groups' tasks were highly ambiguous and complex, providing the opportunity for interpretation. In a context with less underlying variability, or in which opportunities for interpretation do not exist, an evaluation-centered process may inhibit creativity.

We also found few interpersonal problems in the groups, and those that did occur were quickly defused with minimal impact. It is unusual for long discussions with a high degree of task conflict to remain so interpersonally neutral. This may have been aided by members' deep personal commitment to the group's goals. In addition, groups were not ultimately responsible for implementing their ideas, so members may have been less politically motivated to propose particular solutions than in other contexts. In groups with negative interpersonal environments or in which power and status are predominant, early-stage evaluation of ideas could have become more contentious, and the process may have been driven by dialectic conflicts between partisan actors (Drazin, Glynn, and Kazanjian, 1999; Kaplan, 2008). Thus we speculate that the evaluation-centered process may be bounded by members' commitment to common goals.

Finally, the groups in our study interacted in a relatively minimal way, with much of their discussion occurring virtually and little contact between monthly meetings. This is similar to a growing number of organizational groups in which members divide their time and affiliation between many groups with whom they interact virtually (e.g., Gibson and Gibbs, 2006; Wageman, Gardner, and Mortensen, 2012). But this context does not lend itself to examining the role of informal dyadic and subgroup interactions that often occur outside of group meetings. These informal interactions are also likely to be characterized by a host of power and status dynamics that we did not uncover. In addition, the context provided less opportunity than more traditional groups for members to influence one another through nonverbal cues, which may have helped to shift the group between modes of interaction. We expect that informal interactions would reproduce, outside of formal meetings, the same modes of group interaction we observed. For example, when presented with an idea in an informal interaction, a group member may discuss that idea in detail (sequential mode) or generate an alternative (parallel mode). At the same time, informal interactions may alter the likelihood of different modes occurring and may produce additional patterns that we did not observe.

Discussion

Collectives evaluate ideas throughout the creative process as they ignore or build on one another’s ideas, provide interpersonal rewards and punishments for ideas, or follow particular idea paths (Sutton and Hargadon, 1996; Elsbach and Kramer, 2003; Jackson and Poole, 2003; Long-Lingo and O’Mahony, 2010). We built on research into these situated evaluations to reconceptualize evaluation as a process that guides how groups combine members’ inputs into creative collective products. Rather than viewing idea evaluation in groups as a stage of convergent decision making (Paletz and Schunn, 2010), we view it as a different aspect of the same mode of interaction through which ideas are generated. When one group member shifts a discussion toward an idea suggested by another (e.g., Elsbach and Kramer, 2003; Sawyer, 2003), that is a moment of idea generation for the originator and evaluation for the other. For the collective, it is both. The actions and reactions of the group reveal and determine generation and evaluation (Sawyer, 2003; Collins, 2005; Weick, Sutcliffe, and Obstfeld, 2005).

Overcoming the challenges of collective creativity

A central contribution of our research is to provide new insights into how groups can use evaluation to engage in the creative process in a way that overcomes the challenges of collective creativity identified in previous research. One way is to prompt the process of problem construction. Previous research emphasizes that diversity enhances a group's divergent cognitive and dynamic processes (e.g., Watson, Kumar, and Michaelsen, 1993; Miura and Hida, 2004), but it can also make it more difficult for groups to identify and select creative ideas (Milliken, Bartel, and Kurtzberg, 2003; Harvey, 2013). Our research resolves this tension by suggesting that evaluation enables groups to synthesize members' diverse perspectives into a shared problem framework. This casts a new light on diversity research, which has emphasized the value of diversity to divergent thinking. In contrast, our study suggests that diverse perspectives are also valuable when they converge, to the extent that they provide a novel problem framework for the group. Our study further implies that problem construction requires engaging with the content of ideas, and that, as others have also observed, it does not occur only at the beginning of the creative process (e.g., Gersick, 1988; Jehn and Mannix, 2001). Our study identifies problem construction as a key element of the collective creative process that unites idea generation and evaluation, and it calls for further research on the facilitators of this process and the conditions under which it is more or less valuable to collective creativity.