Abstract

As higher education attainment has become increasingly essential for both individual socioeconomic outcomes and the economic competitiveness of nation-states, and as the cost of financing the higher education enterprise continues to rise, university quality has become an urgent concern for students, families, and policy makers around the globe. The widespread interest in assessing university quality manifests itself in the rise of global rankings (Hazelkorn, 2015) and the increasing use of so-called performance indicators by government agencies. This paper focuses on the latter phenomenon. The first part of the paper examines the benefits and limitations of higher education performance indicators as conventionally implemented, and the second part advances a set of suggestions to address these shortcomings by adapting performance systems to represent and incentivize evidence-informed improvement efforts.

As higher education attainment has become increasingly essential for both individual socioeconomic outcomes and the economic competitiveness of nation-states, and as the cost of financing the higher education enterprise continues to rise, university quality has become an urgent concern for students, families, and policy makers around the globe.

The widespread interest in university quality manifests itself in the rise of global rankings (Hazelkorn, 2015) and the increasing use of so-called performance indicators by government agencies. This paper responds to contemporary imperatives related to higher education quality assessment and improvement, with a particular focus on metrics-linked accountability efforts in the form of performance indicators (PIs) deployed to assess quality and promote its improvement. The first part of the paper examines the benefits and limitations of higher education PIs as conventionally implemented, and the second part advances a set of suggestions to address these shortcomings by adapting performance systems to represent and incentivize evidence-informed improvement efforts.

Quality of higher education is frequently invoked by political actors and the media as if a consensual and unproblematic understanding of the term exists. But in an enterprise such as higher education that lacks a unitary bottom line, quality is multifaceted. Indeed, Harvey and Green (1993) articulate five distinct conceptualizations of quality: exception (exceeding standards), perfection (an absence of defects or an organization-wide “quality culture”), fitness for purpose (meeting the needs and expectations of clients or conforming to mission), value for money (efficient provision), and transformation (eliciting transformative qualitative change). In Harvey and Green’s view, PIs align with the value for money perspective, although they acknowledge that they can also inform effectiveness.

Performance Indicators and Accountability

According to the (U.S.) National Forum on Education Statistics (2005), a good indicator has five characteristics: it is useful (i.e., fit for purpose), valid, reliable, timely, and cost-effective (the information value justifies the cost of collection). PIs with these characteristics make the invisible visible, rendering diffuse or murky concepts concrete. As a result, they are subject to verification through audit procedures and they are comparable across units and over time. These standards inform my treatment of performance indicators in higher education accountability systems.

To illustrate the complexity of assessing quality in higher education, consider the tripartite mission of the university comprising teaching, research, and service. Teaching spans a range of disciplines at both undergraduate and graduate levels to produce a variety of credentials, with another dimension of variation involving the students to be served. Research involves the production of new knowledge in a similarly broad range of disciplines that vary with regard to the inputs, procedures, and outputs required to produce new knowledge, as well as the associated costs. Service, also referred to as “third mission,” encompasses a still broader range of activities related to how higher education institutions (

Population served (e.g., representation of historically underrepresented groups)

Retention rates (aggregate or by degree program)

Degree production by level

Degree production in specific fields (e.g.

Quality of instruction

Qualifications of instructional staff

Graduation rates (aggregate or by degree program)

Graduate employment and earnings (aggregate or by degree program)

Research funding

Research-related publications

Research impact

Licenses and patents

Engagement with community by students and academic programs

International engagement by academic staff

To be sure, this is not a comprehensive list, and there are challenges in reducing each of these to concrete measures that meet the criteria for a good indicator set forth above. But for the sake of argument let us stipulate first, that the list above suitably captures the facets of quality for a given set of policy actors; second, that consensus exists on how each can be measured; and third, that policy makers wish to use the resulting measures as PIs.

Equipped with this set of PIs, policy actors can then implement them in an accountability framework. A “soft” accountability framework would emphasize transparency for the purpose of public reporting and consumer information, relying on market logics to reward strong performers and incentivize improvement on the part of poor performers. PIs can also be used in “hard” accountability systems that link rewards and sanctions to performance measures—strong performers may enjoy increased funding, while laggards may suffer reductions, or they may receive an infusion of special funds to remedy shortcomings. Both types of systems are in use in the United States, with hard accountability in the ascendance in the form of so-called performance-based funding systems.

The logic of such an accountability system is straightforward: greater accountability will yield better performance. As appealing and intuitive as this proposition may be, the underlying mechanisms linking accountability to performance have not been clearly articulated (Dubnick, 2005). Furthermore, in the case of higher education, attempts to link hard accountability to improved performance have yielded at best mixed results and unintended consequences. In a recent example, Bell, Fryar and Hillman (2018) carried out a sophisticated meta-analysis of 12 empirical studies of the impact of performance-based funding on access to higher education and degree completion rates. In estimating the impact of performance funding on access to higher education by traditionally underserved students, the researchers found that “the average effect is not significantly different from zero” (p. 121). With regard to degree completion rates, they similarly concluded that “the average effect of performance funding on completion rates is not significantly different from zero” (p. 120). Discussing these results, they observe:

Literature in public management has already investigated the effects of performance funding in sectors such as health care, social services and K-12 education, which largely concludes that complex indicators are hard to improve through financial rewards. In the context of higher education, obtaining a bachelor’s degree involves a complex process shaped by student preparedness, sense of belonging on campus and financial constraints, which not all public university administrators can affect. (p. 121)

Why Is Indicator-Based Accountability Problematic in Higher Education?

There are at least four reasons why indicator-based accountability systems may fall short in driving stronger performance by higher education institutions: organization and field complexity; externalities related to how students are allocated to institutions; limited understanding of and capacity to influence performance; and goal displacement resulting from the use of proxy measures. I elaborate on each of these reasons below.

Organization and Field Complexity

PIs are, by design, simplified representations of a complex underlying reality. As a result they have limited sensitivity to heterogeneity within and between

Heterogeneity between institutions also come into play, in the form of variability in the student populations served as well as the mix of disciplines and degree programs offered. In some cases variation in indicator scores may reveal more about these differences than about objective performance differences.

Externalities Related to the Matching of Students and Staff to Institutions

Another complication of traditional performance indicators involves the process by which students and staff are allocated to institutions. When high-ability students are channeled into a select group of institutions—a near-universal structural feature of higher education systems—those institutions will show stronger performance on indicators that are affected by student ability—such as retention and graduation rates, learning and achievement, postgraduate employment and earnings, etc. These institutions also tend to enjoy strong reputations, excellent facilities, strong administrative infrastructure, and generous government funding that in turn help them attract talented faculty who can contribute to their performance in research and knowledge production. In many ways this is the institutional parallel of what sociologist Robert K. Merton (1968a) termed the “Matthew effect” in science, whereby benefits accrue to those who already enjoy advantageous positions.

Limited Understanding of and Capacity to Influence Performance

Indicator-driven accountability presupposes first that institutions can recognize and diagnose problems revealed by performance indicators, and second that leaders can design and implement interventions that will remedy the identified problems. These assumptions may be unrealistic. Although more than 40 years have passed since Cohen, March and Olsen (1972) described colleges and universities as “organized anarchies” characterized by problematic preferences, unclear technologies, and fluid participation by key actors, the fundamental insight remains apt in contemporary higher education. Whether

Goal Displacement Resulting from the Use of Proxy Measures

As noted above, performance indicators are proxy measures that reduce complex phenomena to one or more concrete measures through a process of selection and simplification. As I have written elsewhere (McCormick, 2017), proxy measures vary in the degree to which they are subject to measurement error (see Figure 1). A common problem when representing social phenomena such as those listed in the previous section is that fidelity to the underlying quality domain (e.g., teaching quality) is often sacrificed in the quest to reduce measurement error: the more error-free a measurement is, the wider the gap between the unobservable phenomenon of interest and the concrete measure selected to represent it. Yet in the context of a high-stakes performance indicator system—especially in the case of hard accountability—there is understandable interest on both sides of the accountability relationship to minimize measurement error, thereby ensuring that the consequences are fair, justifiable, and defensible.

The use of proxy measures to represent an unobservable quality domain

Source: McCormick, 2017; adapted from Bevan & Hood, 2006

The use of proxy measures to represent an unobservable quality domain

In light of the foregoing discussion, it is important to consider the impact on institutional behavior, or more specifically, the behavior of institutional leadership. In theory, a performance-based accountability system (whether soft or hard accountability) incentivizes leaders to improve performance in the quality domain. Yet due to the reliance on proxy measures, leaders’ attention will inevitably be drawn to the proxy measures rather than the quality domain represented by those measures (McCormick, 2017). After all, it is the proxy measures that determine the consequences for the institution. The goal to improve quality is thus displaced by the proximal goal to improve the measures selected to represent quality, a phenomenon that Merton (1968b) termed “goal displacement.” When proxy measures are designed to minimize measurement error, the likelihood that interventions to improve them will have much impact on the broader quality domain is bound to be quite low. As March (1984: 28) concisely observes, “A system of performance rewards linked to precise measures is not an incentive to perform well; it is an incentive to obtain a good score”.

Consistent with the limitations discussed above, accountability scholars Melvin Dubnick and George Frederickson (2011: 32) offer the following cautions about the use of performance measures in accountability systems:

Performance measures are best understood as information that may help sharpen questions rather than the answers to questions. Such measures, particularly in fields with elusive bottom lines, are best thought of as clues, interpretations, impressions, and useful input rather than facts. And of course all such interpretations carry with them certain biases, assumptions, and values.

With its multifaceted goals and well known loose coupling between inputs, processes, and outcomes, higher education presents a clear case of a social institution “with elusive bottom lines.” In the remainder of the paper, I advance a different perspective on how policy might motivate improved performance in higher education. The argument rests on two assumptions:

1. The ultimate goal of performance assessment is to facilitate performance improvement.

2. Improvement efforts should be guided by evidence.

Proceeding from these assumptions, how might we design a performance system to explicitly promote evidence-informed improvement? My thinking here is informed by two sources: a developmental model of evidence-informed improvement activity developed with my colleague, Jillian Kinzie (McCormick & Kinzie, 2018), and the element of the (U.S.) National Institute for Learning Outcomes Assessment’s (

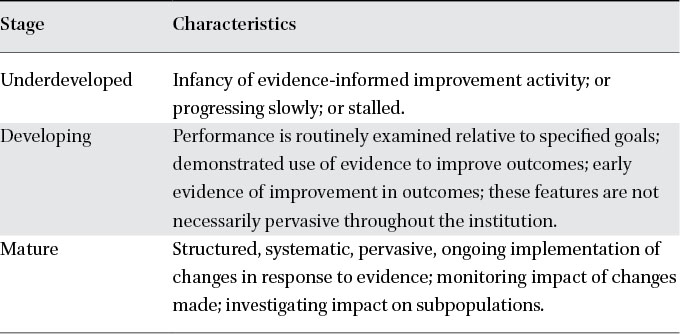

Building on Cecilia Lopez’s (2002) framework for classifying an institution’s stage of development relative to the assessment of student learning, McCormick and Kinzie (2018) proposed a simple developmental model of the stages of evidence-informed improvement (Table 1). The 3-stage model recognizes that an institution’s orientation toward evidence-informed improvement is not a binary state, but rather it progresses over time as comfort and facility with the use of evidence develop at both micro and macro levels—individual actors and the broader organizational culture. While the developmental model shown in Table 1 is oriented toward the use of evidence to improve student learning, the stages can be generalized to other aspects of performance. Although not depicted in the table, it must be acknowledged that different units within a university may be at different stages of development. Indeed, such variability is to be expected. It is also important to note that there is no assumption of uniform linear progress from stage to stage. Retrogression may well occur in response to external conditions, changes in leadership, institutional politics, and so on.

A developmental model of evidence-informed improvement activity

Source: Adapted from McCormick & Kinzie, 2018 and Lopez, 2002

A developmental model of evidence-informed improvement activity

The

At the risk of stating the obvious, evidence-informed improvement requires evidence. Asserting this fact calls attention to the bedrock principle that a viable improvement process is not guided by idiosyncratic whims and desires or by externally imposed metrics, but by the systematic collection of data that bear on specific and established institutional priorities, such as those articulated in a long-term strategic plan or stipulated by a governing body. The gathering of evidence presupposes careful planning and wide consultation to ensure that the results will be credible and taken seriously. Referring back to the first element of the

Communicating Evidence

Evidence does not promote improvement if locked away or confined to an organizational silo. Positive or negative, the results must be shared with relevant constituencies to facilitate the interpretation process: What do the results mean, and what do they suggest about needed improvements? Are there contradictions across the multiple sources? How can these contradictions be resolved? There must be formal processes for communicating results to leadership, faculty, staff, and external constituents in the form of meetings, reports, websites, or other formal mechanisms to facilitate the sharing and discussion of evidence within programs and units. These mechanisms must afford opportunities to collectively discuss and interpret the results—a prerequisite for the next category of activity.

Evidence-Informed Action Plans

Having arrived at a general consensus about what the evidence means, what is arguably the most challenging step follows: deciding on interventions and change processes required to address identified needs and deficiencies. A common trap here is to cycle back to data collection to resolve inconsistencies, reduce uncertainty, answer new questions that may emerge from the communication phase, and otherwise strengthen the evidence base. Higher education is especially vulnerable to these tendencies because the trained researchers who constitute the faculty and senior leadership understand that every answer leads to more questions. Some activities to resolve questions and puzzles may be warranted, but they cannot be allowed to impede progress indefinitely or serve as a delay tactic for those who are unwilling to implement change. The external assessment of evidence-informed improvement processes proposed here helps to dampen this tendency.

Traces of this stage include the formation of committees and other bodies charged to develop action plans in response to identified needs; the identification of explicit goals, including specification of how and when success shall be recognized; the allocation of resources to implement the plans; the dissemination of plans and goal statements to affected units across campus; and the explicit designation of organizational units responsible for implementation. Implementation timelines, milestones, and reporting schedules are established and disseminated to affected units. This stage also involves the planning of evaluation activities to determine the impact of improvement efforts. Evaluation planning may include the development and use of rubrics specifying elements and levels of goal achievement.

Implementation

The implementation phase may include prototyping or pilot testing with feedback mechanisms that inform the refinement of action plans. Implementation may also include necessary adjustments and course corrections to ensure success. New programs and activities will be disseminated to internal and external stakeholders. Just as evaluation processes are part of the planning phase, the evaluation team is named and charged as part of the implementation process.

Units responsible for implementation issue progress reports at previously determined milestones, with dissemination to relevant stakeholders. Academic and administrative changes made are documented during implementation. Although the point is not to bureaucratize the implementation process, it is important to leave a detailed and descriptive permanent record of the improvement process.

Loop-Closing

Once action plans are fully implemented and in place long enough to realize anticipated impacts, the ongoing evaluation plan concludes. This includes feedback processes involving those responsible for implementation as well as those most affected (e.g., students). Evaluation reports document results with recommendations for adjustments or new plans and are shared with relevant stakeholders.

The Developmental Perspective

We return momentarily to the developmental model set forth in Table 1. The efficacy and pervasive embrace of evidence-informed improvement as outlined above can be interrogated through the lens of the developmental framework, opening the possibility for a more nuanced and contextually situated assessment of evidence-informed improvement.

Limitations and Caveats

The argument above is predicated on two assumptions, both of which are rooted in a rational view of accountability processes: That the ultimate goal of performance assessment is to facilitate performance improvement, and that improvement efforts should be guided by evidence. There may be scenarios in which either or both assumptions fail. For example, accountability regimes that assess performance may be little more than political theater organized to demonstrate the commitment and seriousness of policy actors or to achieve preordained conclusions that favor certain institutions over others. Similarly, improvement efforts may be occasions to put in place those interventions favored by key actors, entirely apart from what the evidence may say. Under such circumstances, rationality in the design of accountability systems is irrelevant.

The preceding discussion identifies a set of traces—signals and artifacts of evidence-informed change—that can be presented to constituents, governing agencies, and others to demonstrate a commitment to evidence-informed improvement. The emphasis is unapologetically on process—there is no requirement or expectation that interventions are successful, though a series of unsuccessful interventions may be suggestive of unrealistic ambitions, inadequate planning, or insufficient commitment and engagement on the part of key actors—all of which present opportunities for growth and organizational learning. The traces approach is intended to authentically represent serious and sustained improvement efforts guided by evidence, while itself producing an evidentiary base of its own.

Certain of the limitations of the performance indicator approach described earlier—the matching of students and staff to institutions and the reliance on problematic proxy measures—are far less worrisome in the vision set forth here. However, limitations and vulnerabilities remain. The various traces described in the foregoing discussion are themselves proxy measures. It remains possible that an institution will “teach to the test” by engaging in the various steps without genuine commitment, but the risks of goal displacement and other pernicious consequences are much reduced by the intentional design to serve institutional needs and priorities. Nevertheless, limitations that are inherent to higher education as a social institution—organizational and field complexity, and limits to the understanding of performance and capacity to improve it—remain. We may hope that calling attention to the features of evidence-informed improvement processes may mitigate (or even accommodate) these challenges, but this remains an empirical question.

Conclusion

The use of performance indicators in accountability regimes is subject to a set of severe limitations and often involves what should be unacceptable compromises that prioritize measurement precision over fidelity to underlying conceptions of quality. Despite the intuitive appeal of indicator-based accountability systems, there is insufficient evidence that they produce the desired effect of improved performance. These failures may be attributed to four characteristics of higher education as a social institutions: organization and field complexity; externalities related to how students are allocated to institutions; limited understanding of and capacity to influence performance; and goal displacement resulting from the use of proxy measures.

Proceeding from the twin assumptions that the goal of accountability policy is improved performance and that improvement should be informed by evidence, I offer an alternative vision that focuses explicitly on evidence-informed improvement activities and how they might be documented, recorded, and incentivized. Whereas the operative metaphor of performance indicators is accounting, a more fitting metaphor for the signals-and-artifacts approach to improvement processes may be archaeology. In this view, specific measures of performance matter less than the verifiable traces of an organizational culture oriented toward the use of evidence to guide improvement efforts, and the reliance on the professional responsibility of leaders and educators to take assessment and improvement seriously.