Abstract

The current research explores links between university productivity and student success in Australia. Interviews were conducted with 15 stakeholders and experts on the topic of higher education productivity. The research uses qualitative methods to identify instances when participants discussed institutional productivity in conjunction with student success factors. Four common themes emerged that linked institutional productivity to student success: “student experience and engagement,” “attrition, retention and progression,” “cross-subsidies,” and “teaching-research effort.” Findings reveal two feasible options for improving productivity estimation for the education function of universities. Findings also reveal leverage points for intervention to improve student success and productivity. The research highlights where mutual interests lie for managing resources and facilitating better student outcomes.

Introduction

The paper draws insight about student success from the interview phase of a larger project investigating performance and productivity in Australian higher education. The project involves designing and validating measures for indicating multiple dimensions of university productivity. The validation process includes interviews with key stakeholders and experts to evaluate the prototype measurement model. The interview form for the evaluations includes technical, conceptual, and practical questions to consider validity across a range of contemporary contexts. Findings from the interview phase of the project represent the contribution to knowledge discussed in this paper. The combination of technical and non-technical questions provided insights about productivity issues that require improved measurement techniques. Patterns of student-centric stances on productivity emerged across participants. The paper explores the relationships between productivity and student success and how the study of each can inform the other.

Institutional productivity analyses are often quantitative and economic in nature. Productivity measures are precise, and they resemble the scientific results of research in more positivist disciplines. 1 However, productivity analysis in higher education is not value-free, which is why quantitative productivity research in the field can be met with scepticism and sometimes criticism. 2 The findings of this research are intended to illustrate how productivity metrics can be improved and where leverage points may exist to address both institutional productivity and student success.

The paper focuses on interests concerning student success and exhibits how those interests are portrayed through a productivity lens. The research function of universities is covered, but only in relation to the teaching-research nexus and the costs and benefits of institutions pursuing a balance of both academic functions. The purpose of the study is twofold: (1) to gain insight on how to improve productivity metrics using student success data and interests and (2) to identify what institutional productivity improvement initiatives are tied to improving student success.

Context

A prime function of universities is to deliver education and to enable student success. While the next section details institutional functioning in terms of productivity and student success, this section explores how broader higher education dynamics set the stage for examining the two topics. Three contemporary issues that pose challenges to universities in Australia and around the world are (1) higher education expansion and student growth, (2) pressures on funding and budgets, and (3) the decoupling of teaching and research. The three topics are not exhaustive, but they help frame the analysis that follows.

A rich literature details the implications of the global higher education expansion. 3 The expansion has been rapid, and decision-makers now face mounting tensions. High growth rates strain structures of governance and administration, as well as physical infrastructure. 4 New issues of increased student diversity and inequality must also be addressed for higher education systems to serve societies effectively and equitably. 5 In Australia, growth of domestic students has been accompanied by growth of international students, as the country has also absorbed international demand. University functions and facilities, and how they are resourced, are impacted by the magnitude of this scaling up of higher education provision.

The demands of growth have been met with increased pressure on public funding. Public funding has tended to cover smaller proportions of total higher education expenditure across the globe. 6 There are some countries, notably in Europe, that remain exceptions to this rule, but global strain on system inputs has elevated the urgency to improve higher education efficiency and productivity. 7 Australia is no exception: recent drivers for productivity improvement have centred on the limits of taxpayer dollars, as well as perceptions that substantial benefits of higher education are private, rather than public. 8 To address these concerns, increasing the proportion of student financial contribution has become a priority issue for policy development. 9 Increasing international student enrolment is a factor in this trend as well. Overseas student enrolment is not only a consequence of worldwide demand for higher education; it has also been actively sought in Australia as a means of closing funding gaps. Australia’s volume of higher education exports makes the country unique. International student fees have become a means of financing normal university operations. 10

Models and paradigms for higher education delivery have also been evolving. Modern universities were built on the principle of research and teaching being inseparable. 11 However, the notion is not as strong as it once was. The nexus has been described as a relic from the past in a new era of the “multiversity.” 12 A dichotomy is growing between teaching and research. Segmentation of teaching institutions and research institutions, as well as of teaching staff and research staff, has become more common. 13 A common worry is that the two functions have become imbalanced, and incentive structures favour performance in research over teaching. 14 A 2011 survey found that 80 per cent of Australian academics wanted to raise their research profile. 15 It is argued that these incentive structures have led to rational decisions by academics to prioritise research, and concerns have been raised about the ability of undergraduate teaching programs to maintain quality in this environment. 16

Conceptual Framework

Productivity

Productivity studies derive from engineering and economics. The lens of the current study focuses on the productivity of institutions, not individuals, and is defined as the ratio of institutional outputs to inputs. Universities use multiple inputs to produce multiple outputs. The ability to define clear inputs and outputs for higher education remains a limitation of productivity study for universities. However, key performance indicators already in use—such as staff and student data, as well as graduate completions, publications, and citations—allow for output-input ratios to be calculated and can serve as proxies for performance. 17
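The output-to-input ratio described above can be illustrated with a minimal sketch. Because universities have multiple inputs and outputs, some weighting scheme is needed to aggregate them; the weights, indicator names, and figures below are hypothetical assumptions chosen purely for illustration and are not drawn from the study or from any cited measurement model.

```python
def productivity_ratio(outputs, inputs, output_weights, input_weights):
    """Return weighted aggregate output divided by weighted aggregate input.

    All arguments are dicts keyed by indicator name. The weighting scheme
    is an illustrative assumption, not the measurement model of the study.
    """
    total_output = sum(output_weights[name] * value for name, value in outputs.items())
    total_input = sum(input_weights[name] * value for name, value in inputs.items())
    if total_input <= 0:
        raise ValueError("aggregate input must be positive")
    return total_output / total_input


# Hypothetical figures for a single institution in a single year.
outputs = {"completions": 2000, "publications": 500}
inputs = {"academic_staff_fte": 400, "non_academic_staff_fte": 600}

# Hypothetical weights: one publication counted as two completions,
# and all staff categories weighted equally.
output_weights = {"completions": 1.0, "publications": 2.0}
input_weights = {"academic_staff_fte": 1.0, "non_academic_staff_fte": 1.0}

ratio = productivity_ratio(outputs, inputs, output_weights, input_weights)
print(ratio)
```

The choice of weights is itself a value judgement, which is one reason the resulting figure should be read as a proxy rather than as a definitive measure of performance.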

The productivity lens draws boundaries around the activities and functions performed by and within institutions. It has garnered increased attention as institutions face mounting financial constraints. Given scarce resources, academic institutions face difficult decisions about how to structure and finance operations. Productivity indicators provide a baseline for judging the effectiveness of resource management, and they inform broader dimensions of performance and quality. 18 Using empirical findings to make judgements about performance and quality, however, requires overcoming challenges with available data. Current data and current metrics cannot capture all aspects of university performance, but if limitations of metrics are acknowledged and disclosed, then productivity assessment can provide insight on specific institutional issues for both education and research. 19

The productivity lens can be helpful for analysis in the absence of indicators. The productivity lens centres on solutions for maximising the achievement of mission objectives under budgeting and resource constraints. 20 Student success is a key mission objective for most institutions, yet it has no definitive universal indicator. Examining student success factors in tandem with productivity issues can shed light on where institutional productivity and student success converge. If pursued in silos, market objectives and resource management objectives may compete with educational objectives. 21 Whether it is possible for universities to pursue financial and resource management objectives, excel in research, and simultaneously maintain the quality of undergraduate education remains an open question. 22 The current study seeks to find where synergies lie.

Student Success

Student success is the hallmark of a quality undergraduate education, but defining student success is not straightforward. As with productivity, input-output framing can help for understanding student success. George Kuh and colleagues review the literature on student success and synthesise a framework to encapsulate student success factors. 23 The framework is an input-output heuristic. Inputs are defined as “pre-university experiences” and outputs as “post-university outcomes.” The student’s time within the university constitutes the main body of the framework and is shown to be influenced by (A) “student behaviours” and (B) “institutional conditions.” Student behaviours and institutional conditions drive “student engagement,” and student engagement is framed as the principal student success factor. 24 In terms of facilitating success, institutional conditions are more under the control of the university than is the time and effort students put into their studies. Universities can, however, incentivise positive student behaviours and optimise formal instruction time through more effectual educational practices and quality instruction. 25

Student success remains an elusive notion to define independently. Post-university outcomes may dominate the thinking of some stakeholders in terms of how student success should be determined. However, post-university outcomes are also influenced by factors and contexts outside the control or scope of higher education. Studies that link success factors to success results often use one (or a combination) of three common proxies for student success: (1) student marks or grades, (2) retention and progression, and (3) completion or qualification. 26 These proxies limit the understanding of student success to phenomena within the institution. Multiple perspectives on student success have been entertained and studied, including sociological, organisational, psychological, cultural, and economic. 27 The organisational perspective highlights the institutional structures and processes that affect student performance. 28 It emphasises the institution as the unit of analysis and highlights certain characteristics that influence success, such as institutional mission, standards, assessment practices, and learning and teaching practices. The perspective provides a natural link between student success and productivity.

Some aspects of student success remain intangible, but the idea represents much of what universities are built to achieve. Measured indicators of productivity and student success should not always be taken at face value. Sullivan and colleagues warn that relying too heavily on metrics can increase risks of a ‘race to the bottom’ in terms of quality. 29 Certain qualitative aspects of student success may never be informed by productivity analyses, but there are inextricable links between the two concepts. Further, the discussion below illustrates how interest in one necessitates interest in the other, and all else held equal, improving one means improving the other.

Research Design

Participants

For interviews, a purposive sample of 15 participants was selected to provide technical and institutional perspectives on the topic of university productivity. They were selected to inform the development of a measurement model. Participants were not selected randomly or as representative of a population. They were selected as either (A) key stakeholders or (B) technical experts who could provide specialised information. Key stakeholders were chosen based on their current or former roles as institutional leaders who use performance data to make decisions. They include current and former university executives and deans, as well as advisors to executives. Technical experts were chosen based on their role and experience in analysing university productivity and efficiency data and in composing reports to be used for decision-making. They include consultants, researchers, and officials of public agencies. Participants were selected from eight different Australian universities, four different consulting firms, and one public sector agency. Table 1 shows participant codes and monikers for different universities.

Participant codes

One data collection tool was developed to collect qualitative data. The tool served as a form for semi-structured interviews. The form consisted of five questions. The questions prompted participants to (1) define productivity for higher education, (2) critique a current measurement technique, (3) suggest improvements for university productivity measurement, (4) identify improvement areas for higher education productivity, and (5) identify constraints to productivity improvement. The tool was developed in consultation with experts and was piloted with a former university executive. The final interview form remained unchanged across all 15 interviews.

Participants’ consent was obtained before commencing and recording each interview. The discussions lasted 45 to 75 minutes. Interviews were transcribed verbatim in Microsoft Word. Thematic analysis and category coding were used to detect emergent patterns in the data. 30 The process involved identifying segments of the data where participants mentioned student success factors in relation to productivity topics being discussed. Open coding was then performed at two levels to classify main themes and sub-categories. Main themes represent different linkages between student success and productivity. Coding of sub-categories linked main themes to alternative participant stances and views on the themes. Coding results illustrate commonly referenced productivity issues that relate to student success and to alternative perspectives on interpreting the issues. All transcripts were explored simultaneously to categorise main themes across the dataset.

Findings

Findings Summary

Results exhibit the top four most frequently mentioned Australian university productivity issues that participants associated with student success. Each issue arose as a direct response to questions about university productivity, but student success factors arose during discussion. Student success factors identified in the transcripts that participants linked to productivity include student progression, retention, experience, engagement and satisfaction, as well as learning and teaching processes, teaching responsibilities, teaching preparation, learning outcomes, student financial aid, student support activities, and institutional accountability to students.

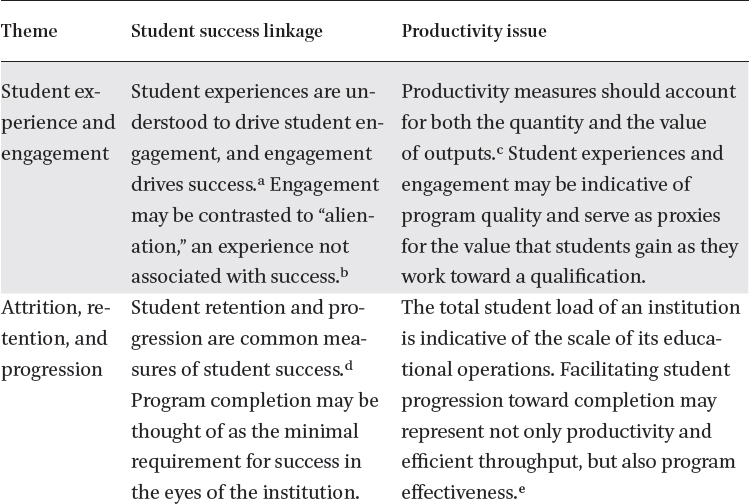

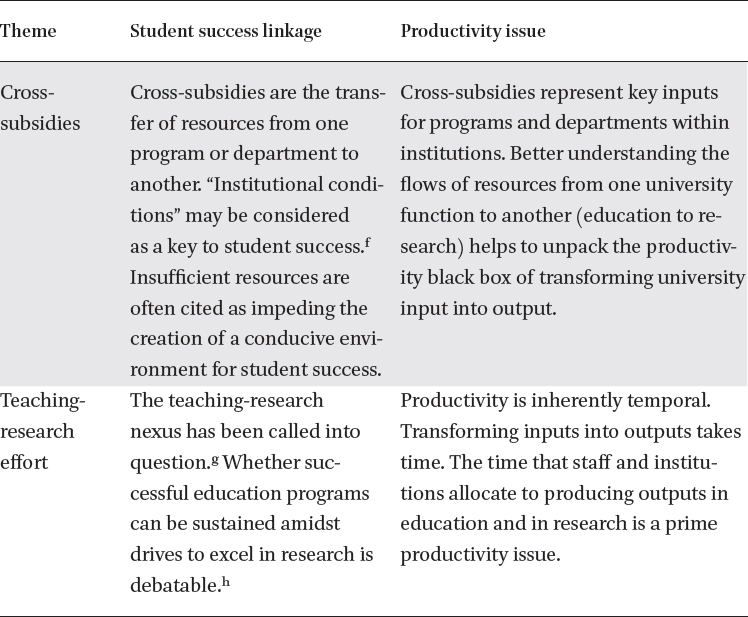

The thematic analysis reveals four common productivity themes participants raised in relation to student success. They include (1) student experience and engagement, (2) attrition, retention, and progression, (3) cross-subsidies, and (4) teaching-research effort. The topics were raised by eight, seven, five, and five participants, respectively, and each participant mentioned at least one connection. Table 2 explicates the linkages participants drew between student success and productivity. The linkages are supported by the literature. Table 3 shows second-level coding results of different stances participants took on the themes and the frequencies with which the stances were raised. Each stance is clarified below with respect to the associated productivity theme.

Linkages between productivity and student success

a Robert M. Carini, George D. Kuh, and Stephen P. Klein, “Student Engagement and Student Learning: Testing the Linkages*,” Research in Higher Education 47, no. 1 (February 2006): 1-32.

b Mann, “Alternative Perspectives on the Student Experience: Alienation and Engagement,” 2001.

c Erkin Bairam, Homogeneous and Nonhomogeneous Production Functions: Theory and Applications (Avebury, 1994); Massy, Reengineering the University: How to Be Mission Centered, Market Smart, and Margin Conscious. 2016.

d Kahu and Nelson, “Student Engagement in the Educational Interface: Understanding the Mechanisms of Student Success”; Collier, “Why Peer Mentoring Is an Effective Approach for Promoting College Student Success.”

e Sullivan et al., “Improving Measurement of Productivity in Higher Education,” 2012.

f Carini, Kuh, and Klein, “Student Engagement and Student Learning: Testing the Linkages*,” 2006; Mann, “Alternative Perspectives on the Student Experience: Alienation and Engagement,” 2001.

g Neumann, “Researching the Teaching-Research Nexus: A Critical Review,” 1996.

h Harland and Wald, “Vanilla Teaching as a Rational Choice: The Impact of Research and Compliance on Teacher Development,” 2017.

Frequencies by theme and by stance

Student Experience and Engagement

Quality Indication and Measurement Validity

Four of the eight participants who discussed this theme raised the topic with respect to the validity of university productivity measurements. They took the stance that incorporating indicators for the quality of student learning experiences and engagement into productivity measurement is crucial for accurate productivity assessment. Participants compared student engagement and experience indicators to citation information for research publications. Just as with research publications, not all student experiences are alike, and counting student numbers paints only half the picture for productivity.

Prioritising Better Educational Practices

Three of the eight participants raised the issue from the perspective that, all else held equal, productivity increases as educational practices become more effective. The concern of the three participants was that academics are often incentivised to prioritise research performance over teaching performance, and they suspected that educational productivity suffers because of current incentive structures. Tracking and reporting student experiences for individual subjects was viewed as a mechanism to increase educational productivity by incentivising more effective instruction. An emphasis was placed on developing better assessment tools for students to use at the end of every subject delivery, and on using student assessments more consistently across fields of study for institutional decision-making. KS2 elaborated.

Every student now carries out a unit evaluation form at the end of each semester … The aspiration [for lecturers] is to receive at least a ranking of four out of five. Two or below, we would put them on performance improvement plans … They’ve got to have consistent fours to get promoted. So, the unit evaluation is absolutely driving teaching quality.

Experience Not Equal to Success

One participant warned against incorporating student experience indicators for productivity assessment. The concern was that student experience indicators are not necessarily valid proxies for learning outcomes, and learning outcomes are better indicators of productive educational delivery. Perceptions of experience may not be correlated with actual success. Such caveats are also raised and supported in the literature on student experience. 31

Attrition, Retention and Progression

Quality Indication and Measurement Validity

As with student experience and engagement, two participants emphasised that attrition, retention, and progression measures are crucial to incorporate for the validity of a productivity assessment. Student load has commonly been used as the primary output factor for learning and teaching processes in Australian studies because it represents the scale of educational service delivery. 32 Participants argued, however, that progression measures represent the dynamics and the outcomes of the learning and teaching process. Participants explained that attrition and retention measures can also indicate impact on students in the way that citation metrics do for research publications.

Value-Add

Rather than emphasising how student progression should be tracked to paint an accurate picture of institutional productivity, five other participants explained that course completions represent a value-add to the time spent undertaking the degree. The value-add of a qualification in addition to having participated in university education is often referred to as the “sheepskin effect.” 33 Participants emphasised that increasing productivity depends upon improving student progression. TE7 explained.

… one of the big areas [to improve] is attrition. It’s not valuable to anyone involved. It doesn’t help the student or the tax payer … and [it’s] not a help to the university either.

TE7 elaborated on interventions related to government policy, stating that better formulas for performance-based funding could incentivise institutions to prioritise getting better results with instructional activities. Other participants took more student-oriented approaches, emphasising that accessibility and clarity of student support services should be improved. The comments of each of the five participants centred around the idea that the visible signal of a university’s value-add for students is the degree. Students who attend university without earning a qualification do not reap the same benefits as the students who do earn a qualification.

Cross-Subsidies

Fundamental

Cross-subsidies relate to student success because, from one perspective, they allow for the continuation or development of programs that may not command a large market value in terms of year-end revenue. On the other hand, they may represent the depletion of resources from key programs. One stance is that students benefit from cross-subsidisation between university departments. TE5 characterised cross-subsidisation as an essential internal funding model and a fundamental good for any university. It exists because it is needed for institutions to pursue non-financial, long-term objectives. Cross-subsidies allow a university to address a more diverse set of student needs, for example, by allowing a lower-attendance program to continue if that program is essential to those students who attend. TE5 elaborated.

Just because something may be high cost and losing money, if it’s actually core to the mission, then [the institution] can’t get rid of it, and you have to figure out how to subsidise it in some way.

Necessary

Other participants framed cross-subsidies as neither a fundamental good nor a fundamental bad. Rather, cross-subsidisation is a necessary mechanism in the current environment for Australian universities to compete on a global scale. Universities are judged on the merit of their research accomplishments, but since research does not usually produce annual profit margins, it must be supported by other activities. There are pros and cons to such an internal funding model.

Bad Practice

Two participants framed cross-subsidisation in the Australian system as evidence that focus has shifted toward research performance and away from educational excellence and student outcomes. KS4 elaborated.

The greatest casualty in the equation is the student … the students are casualties in the sense of a system that has robbed resources from education to pay for research.

TE2 added.

We know that universities cross-subsidise their research with teaching and … it comes at the expense of good quality teaching.

Teaching-Research Effort

Workload and Division of Responsibility

Four of the five participants who spoke to this theme raised concerns about how inflexibility of negotiating enterprise bargaining agreements (

Two other participants reported that obstacles to productivity presented by

You have to go through a lot of elaborate processes to get the agreement. We’ve always ended up winning the cases, but it takes time … the enterprise agreement ties you up, and we need more flexibility to do this stuff more quickly to be as agile as we have to be.

Institutional Differentiation

One participant explained that the core of the problem rests in institutional missions, current regulations, and the opportunity costs for students and education. KS2 explained that universities in Australia should differentiate themselves. A more productive system would have certain institutions focusing on innovation in research and others focusing on innovation in learning and teaching. This might be achieved if Australia’s Tertiary Education Quality and Standards Agency (

… if we look at where the waste is, we have not segmented our system. Governments have not segmented our system or the funding requirements. They haven’t given appropriate parameters … [University X], for example, drives its people to put in research grants, spend all that time, and then they have a one per cent hit rate on applications … but

Discussion

The discussion outlines how findings inform (A) how productivity metrics should be improved to reflect student success and (B) leverage points for positive intervention. Productivity topics and indicators straddle interests in mission objectives, resource management, and finances. The trouble with developing a measurement model and selecting data elements for the model is establishing clear boundaries around what the model should inform, and what it should not. For the education function of universities, the findings above illustrate that student success should be incorporated into productivity metrics, not just for the sake of appeasing diverse interests, but for base model validity. Productivity increases as the value of outputs increases. In the private sector, the proxy for value-add is financial. In higher education, however, where the prime currency is knowledge, not dollars, 34 outputs need value-add adjustments based upon other principles.

To capture elements of value and quality, productivity analysis for universities should begin with outputs for the education function that are scaled using student success indicators. Just as numbers of “widgets” sold in private sector industries are scaled by selling price, the volume of instructional delivery in universities should be scaled by student success. Findings presented two options for accomplishing this: student retention measures and student experience measures. The former has been proposed previously by using a productivity output metric of “adjusted credit hours” 35 or “adjusted student load.” 36 These metrics scale instructional delivery data with completion data. Sullivan and colleagues explain. “An effect will be captured to the extent that higher quality inputs lead to higher graduation rates … For example, if smaller classes or better teaching lead to higher graduate rates, this will figure in as a greater sheepskin effect—that is, an added value assigned for degree completion.” 37 Results using adjusted load on Australian data have recently been published. 38
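The idea of scaling instructional volume with completion data can be sketched as follows. This is a minimal illustration only: the additive form, the parameter name `completion_bonus`, and all figures are assumptions made here for clarity, and they should not be read as the exact formula or weights used in the cited studies.

```python
def adjusted_load(student_load, completions, completion_bonus):
    """Return instructional volume adjusted upward for completed degrees.

    adjusted load = student load + completion_bonus * completions

    `completion_bonus` is a hypothetical weight representing the added
    value assigned to each completion (the "sheepskin effect").
    """
    if student_load < 0 or completions < 0 or completion_bonus < 0:
        raise ValueError("all arguments must be non-negative")
    return student_load + completion_bonus * completions


# Two hypothetical institutions with identical student load
# but different numbers of completions.
uni_a = adjusted_load(student_load=10000, completions=2500, completion_bonus=0.5)
uni_b = adjusted_load(student_load=10000, completions=1500, completion_bonus=0.5)

print(uni_a, uni_b)
```

Under a raw student load measure the two institutions would appear equally productive on the output side; with the adjustment, the institution with more completions registers a larger output.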

Alternatively, student experience measures might serve to capture the value-add of university education. Output metrics using student experience data, however, come with more caveats because they are less robust. Different universities use different measures to portray student experience, so benchmarking between institutions would be difficult. Further, summative student experience data gathered from graduation surveys may not be suitable for productivity metrics. Empirical relationships between student satisfaction, student learning outcomes, and perceived learning outcomes, for example, are tenuous and complex. 39 Rather, internal course unit evaluations—designed to provide targeted feedback to academic staff for improving effectual educational practice and incentivising quality teaching—could be more promising for capturing value-add to students. Again, however, data would need to be collected and reported consistently within and between institutions.

Participants also identified high-priority leverage points for intervention. They include regulatory and quality frameworks, workload arrangements, incentive structures, and internal funding models. None was portrayed as a magic bullet; rather, each was portrayed as urgent and high-impact. The participants raised each as a mechanism for driving better results in education and learning and teaching. These leverage points add depth to current policy debate in Australia, where key discussion about efficiency and productivity currently centres on portions and levels of student funding contribution. 40 More research in Australia needs to be conducted to isolate the effects of alternative staffing compositions and workload requirements on performance and results. Research should also explore conditions under which cross-subsidisation from education to research might bolster or hinder long-term objectives. For this to occur, however, there is a need for better reporting of resource allocation and financing of research and education. 41 Current data make it difficult to determine how much teaching surplus is used for research funding at different institutions. 42 Knowing these figures would help stakeholders better understand the principles under which institutions operate and would allow for more accurate input-output analysis of education and research productivity. In 2017 the Australian government announced intentions to establish a more transparent framework for reporting research and education finances. Findings of this research re-establish the urgency of such an initiative.

Findings illustrate the interdependencies between productivity and student success. Yet the two are often treated separately. Institutional productivity and efficiency concerns are often relegated to the administrative and financial domains, where non-academic staff are often disparagingly referred to as “bean counters.” 43 Likewise, issues of student success and engagement fall to the academic domain, often disparagingly referred to by outsiders as “the secret garden” or “the ivory tower.” 44 The current research shows that shared interests exist in abundance, and that exploiting those shared interests has the potential to generate performance gains. In an era when performance-based funding is a growing reality for Australian higher education, 45 key stakeholders must work together to determine appropriate performance metrics and to explicate the limitations of the metrics to funding bodies. No single metric will encompass all dimensions of higher education performance, nor should measured performance determine all funding, but current data can be used to better effect for portraying the accomplishments and the aspirations of universities.

Footnotes

1

Marvin A. Titus and Kevin Eagan, “Examining Production Efficiency in Higher Education: The Utility of Stochastic Frontier Analysis.” In Higher Education: Handbook of Theory and Research, ed. M. Paulsen, vol. 31 (Dordrecht: Springer International Publishing, 2016); Amir Moradi-Motlagh, Christine Jubb, and Keith Houghton, “Productivity Analysis of Australian Universities,” Pacific Accounting Review 28, no. 4 (November 7, 2016): 386-400.

2

Nicholas Drengenberg and Alan Bain, “If All You Have Is a Hammer, Everything Begins to Look like a Nail: How Wicked Is the Problem of Measuring Productivity in Higher Education?,” Higher Education Research & Development 36, no. 4 (June 7, 2017): 660-73; Lynn P. Nygaard, “Publishing and Perishing: An Academic Literacies Framework for Investigating Research Productivity,” Studies in Higher Education 42, no. 3 (2017): 519-32.

3

Evan Schofer and John W. Meyer, “Education in the Twentieth Century,” American Sociological Review 70 (December 2005): 898-920; Martin Trow, “Reflections on the Transition from Elite to Mass to Universal Access: Forms and Phases of Higher Education in Modern Societies since WWII,” in International Handbook of Higher Education (Dordrecht: Springer Netherlands, 2007), 243-80.

4

Martin Trow, “Problems in the Transition from Elite to Mass Higher Education,” 1973.

5

Ye Liu, Andy Green, and Nicola Pensiero, “Expansion of Higher Education and Inequality of Opportunities: A Cross-National Analysis,” Journal of Higher Education Policy and Management 38, no. 3 (2016): 242-63.

6

Jane Knight, “Concepts, Complexities and Challenges,” in International Handbook of Higher Education, ed. James J. F. Forest and Philip G. Altbach (Springer, 2007), 207-27.

7

Debi McDonald, “Doing More with Less: Five Trends in Higher Education Design,” Planning for Higher Education Journal 42, no. 1 (2013): 1-5; Ricky Tompkins and Laura Cates, “Non-Academic Assessment in an Accountability World: Achieving More with Less,” Community College Journal of Research and Practice 33, no. 11 (2009): 953-55.

8

Australia Productivity Commission, “University Education, Shifting the Dial,” 2017.

9

Department of Education and Training, “The Higher Education Reform Package,” 2017.

10

Brendan Cantwell, “Are International Students Cash Cows? Examining the Relationship Between New International Undergraduate Enrollments and Institutional Revenue at Public Colleges and Universities in the US,” Journal of International Students 5, no. 4 (2015): 512-25.

11

Daniel Fallon, The German University: A Heroic Ideal in Conflict with the Modern World (Colorado Associated University Press, 1980).

12

Ruth Neumann, “Researching the Teaching-Research Nexus: A Critical Review,” Australian Journal of Education 40, no. 1 (1996): 5-18.

13

Gill Nicholls, Professional Development in Higher Education: New Dimensions & Directions (Kogan Page, 2001); Philip G. Altbach, Liz Reisberg, and Laura E. Rumbley, “Trends in Global Higher Education: Tracking an Academic Revolution,” July 2009.

14

Hamish Coates, The Market for Learning (Singapore: Springer Singapore, 2017); Ellen Hazelkorn, Rankings and the Reshaping of Higher Education: The Battle for World-Class Excellence (Basingstoke: Palgrave Macmillan, 2015).

15

E. Bexley, R. James, and S. Arkoudis, “The Australian Academic Profession in Transition,” 2011.

16

Tony Harland and Navé Wald, “Vanilla Teaching as a Rational Choice: The Impact of Research and Compliance on Teacher Development,” Teaching in Higher Education, October 27, 2017, 1-16; James S. Fairweather, “Academic Research and Instruction: The Industrial Connection,” The Journal of Higher Education 60, no. 4 (1989): 388-407.

17

Barbara A. Miller, Assessing Organizational Performance in Higher Education (Jossey-Bass, 2007); D. Besanko, R. R. Braeutigam, and K. Rockett, “Inputs and Production Functions 6.1,” 5th ed. (Hoboken, NJ: Wiley, 2015), 183-220; Teresa A. Sullivan et al., “Improving Measurement of Productivity in Higher Education” (Washington, DC: National Academies Press, 2012).

18

Miller, Assessing Organizational Performance in Higher Education, 2007.

19

Sullivan et al., “Improving Measurement of Productivity in Higher Education,” 2012.

20

William F. Massy, Reengineering the University: How to Be Mission Centered, Market Smart, and Margin Conscious (Baltimore: The Johns Hopkins University Press, 2016).

21

Ibid.

22

Fairweather, “Academic Research and Instruction: The Industrial Connection,” 1989.

23

George D. Kuh et al., “What Matters to Student Success: A Review of the Literature,” 2006.

24

Ibid.

25

George D. Kuh, Student Success in College: Creating Conditions That Matter, 1st ed. (San Francisco: Jossey-Bass, 2010).

26

Ella R. Kahu and Karen Nelson, “Student Engagement in the Educational Interface: Understanding the Mechanisms of Student Success,” Higher Education Research and Development 37, no. 1 (2018): 58-71; Peter Collier, “Why Peer Mentoring Is an Effective Approach for Promoting College Student Success,” Metropolitan Universities 28, no. 3 (2017): 9.

27

Sarah J. Mann, “Alternative Perspectives on the Student Experience: Alienation and Engagement,” Studies in Higher Education 26, no. 1 (2001): 7-19; Shaun R. Harper and Stephen John Quaye, Student Engagement in Higher Education: Theoretical Perspectives and Practical Approaches for Diverse Populations (Routledge, 2009).

28

George D. Kuh et al., Piecing Together the Student Success Puzzle: Research, Propositions, and Recommendations.

29

Sullivan et al., “Improving Measurement of Productivity in Higher Education,” 2012.

30

Kirsty Williamson and Graeme Johanson, eds., Research Methods: Information, Systems and Context, 1st ed. (Prahran, Australia: Tilde Publishing and Distribution, 2013); Amanda Coffey and Paul Atkinson, Making Sense of Qualitative Data: Complementary Research Strategies (Sage Publications, 1996).

31

Nicholas A. Bowman, “Validity of College Self-Reported Gains at Diverse Institutions,” Educational Researcher 40, no. 1 (January 1, 2011): 22-24; Carini, Kuh, and Klein, “Student Engagement and Student Learning: Testing the Linkages,” 2001.

32

Andrew C. Worthington and Boon L. Lee, “Efficiency, Technology and Productivity Change in Australian Universities, 1998-2003,” Economics of Education Review 27, no. 3 (2008): 285-98; Moradi-Motlagh, Jubb, and Houghton, “Productivity Analysis of Australian Universities,” 2016.

33

Jin Heum Park, “Estimation of Sheepskin Effects Using the Old and the New Measures of Educational Attainment in the Current Population Survey,” Economics Letters 62, no. 2 (February 1, 1999): 237-40; David A. Jaeger and Marianne E. Page, “Degrees Matter: New Evidence on Sheepskin Effects in the Returns to Education,” The Review of Economics and Statistics 78, no. 4 (November 1996): 733.

34

Coates, The Market for Learning, 2017; Massy, Reengineering the University: How to Be Mission Centered, Market Smart, and Margin Conscious, 2016.

35

Sullivan et al., “Improving Measurement of Productivity in Higher Education,” 2012.

36

Kenneth Moore, Hamish Coates, and Gwilym Croucher, “Understanding and Improving Higher Education Productivity,” in Research Handbook on Quality, Performance and Accountability in Higher Education, ed. Ellen Hazelkorn, Hamish Coates, and A. C. McCormick (In Press: Edward Elgar, 2018).

37

Sullivan et al., “Improving Measurement of Productivity in Higher Education,” 2012: 5.

38

Moore, Coates, and Croucher, “Investigating Applications of University Measurement Models Using Australian Data,” 2018.

39

Bowman, “Validity of College Self-Reported Gains at Diverse Institutions,” 2011; Jacqueline K. Eastman, Maria Aviles, and Mark D. Hanna, “Determinants of Perceived Learning and Satisfaction in Online Business Courses: An Extension to Evaluate Differences Between Qualitative and Quantitative Courses,” Marketing Education Review 27, no. 1 (2017): 51-62.

40

Department of Education and Training, “The Higher Education Reform Package,” 2017; Australia Productivity Commission, “University Education, Shifting the Dial,” 2017.

41

Coates, The Market for Learning, 2017.

42

Australia Productivity Commission, “University Education, Shifting the Dial,” 2017.

43

Massy, Reengineering the University: How to Be Mission Centered, Market Smart, and Margin Conscious, 2016.

44

Ronald Barnett, Improving Higher Education: Total Quality Care (Society for Research into Higher Education, 1992).

45

Australia Productivity Commission, “University Education, Shifting the Dial,” 2017.