Abstract

Minimizers and convolutional neural networks (CNNs) are two quite distinct popular techniques that have both been employed to analyze categorical biological sequences. At face value, the methods seem entirely dissimilar. Minimizers use min-wise hashing on a rolling window to extract a single important k-mer feature per window. CNNs start with a wide array of randomly initialized convolutional filters, paired with a pooling operation, and then multiple additional neural layers to learn both the filters themselves and how they can be used to classify the sequence. In this study, our main result is a careful mathematical analysis of hash function properties showing that for sequences over a categorical alphabet, random Gaussian initialization of convolutional filters with max-pooling is equivalent to choosing a minimizer ordering such that selected k-mers are (in Hamming distance) far from the k-mers within the sequence but close to other minimizers. In empirical experiments, we find that this property manifests as decreased density in repetitive regions, both in simulation and on real human telomeres. We additionally train from scratch a CNN embedding of synthetic short-reads from the SARS-CoV-2 genome into 3D Euclidean space that locally recapitulates the linear sequence distance of the read origins, a modest step toward building a deep learning assembler, although it is at present too slow to be practical. In total, this article provides a partial explanation for the effectiveness of CNNs in categorical sequence analysis. *

INTRODUCTION

It is a pithy statement now that the near exponential explosion of biological sequence data we confront requires the construction of more efficient tailored algorithms capable of handling the data deluge (Kahn, 2011; Berger et al., 2016). For the past couple decades, local k-mer subsampling schemes such as minimizers (Schleimer et al., 2003; Roberts et al., 2004), syncmers (Edgar, 2021), and minimally overlapping words (Frith et al., 2020) have been applied to reduce the redundancy found in the overlapping neighboring k-mers of a sequence, and thus allow speeding up tasks such as taxonomic classification (Wood et al., 2019), Average Nucleotide Identity estimation (Shaw and Yu, 2023), read mapping (Li, 2016), or assembly (Ekim et al., 2021).

These methods have become necessary because although Moore's law on transistor density has continued unabated, the same cannot be said about single-threaded processing times or fast memory access.

However, it bears remarking that even as traditional genomics analysis has turned to increasingly efficient algorithmics, the burgeoning subarea of deep learning in biology has exploded (Angermueller et al., 2016; Fiannaca et al., 2018), and the neural networks being used for the same tasks (such as metagenomic classification) are by comparison much less computationally efficient. Instead, deep learning is able to continue to tap into Moore's law because its computational primitive of numerical linear algebra is easily vectorized onto hardware accelerators such as graphics processing units (GPUs) and tensor processing units (TPUs) (Lu, 2010; Jouppi et al., 2017).

On bioinformatics tasks that map directly onto traditional ML (Machine Learning) tasks—for example, classification and prediction of images—those models often generally outperform traditional methods on accuracy (Deepak and Ameer, 2019; Liu et al., 2018). Furthermore, deep learning methods are often able to achieve comparable accuracy even on core computational biology tasks such as variant calling (Poplin et al., 2018) and metagenomic binning (Yoshimura et al., 2021), but at heavy computational cost. Thus, choosing the right analysis methodology requires carefully navigating the relevant trade-offs (Berger and Yu, 2023).

However, although in theory with unbounded compute, neural networks can learn any function (Hornik et al., 1989), a lot of work has been done to create more efficient architectures, including convolutional neural networks (CNNs) (Fukushima and Miyake, 1982), recurrent neural networks (RNNs) (Rumelhart et al., 1986), long-short-term memory structures (Hochreiter and Schmidhuber, 1997), Transformer networks (Vaswani et al., 2017), and more. However, although some ML models have an intuitive justification, often, they are simply found to perform well empirically, and it is difficult to interpret why they work, or what the learned weights might mean biologically (Montavon et al., 2018).

In this article, we focus on understanding the efficacy of CNNs on categorical sequence data. Although interpretations of a CNNs weights and neurons are often made by way of comparison with the receptive fields of visual neurons, that interpretation falls apart when applied to biological sequence data. Unlike the brightness of a pixel in an image, biological sequences are not ordinal, but rather instead categorical vectors—a nucleotide is either present or not in a particular position, without any sense of relative magnitude.

In images, for example, a filter may detect a horizontal edge, which is characterized by bright pixels on one side and dark on the other; if you slightly brighten the pixels on one side, or add some noise to the image, the edge is still characterized by that pattern, which filters are trained to pick up. However, although you could imagine patterns of nucleotides that correspond to an edge, for example, AAAATTTT, there are no intermediate “levels” for a CNN filter to pick up.

Still, CNNs have proved effective on genomic data, such as in the classification of metagenomic sequences of bacteria by taxonomy (Fiannaca et al., 2018). It thus seems that CNNs are not intuitively suited for categorical sequence classification, but empirically, they irrespectively have excellent performance. Why?

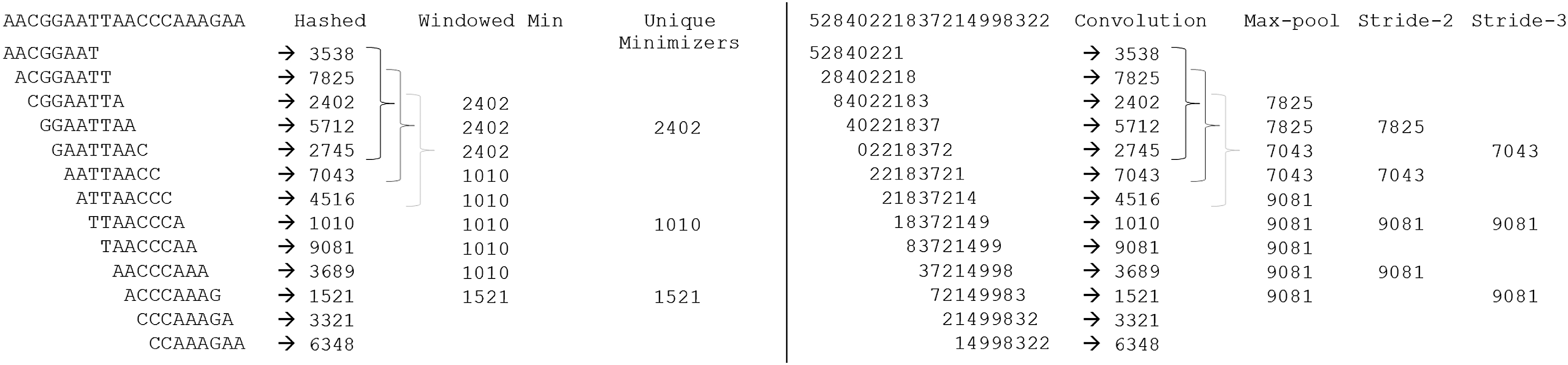

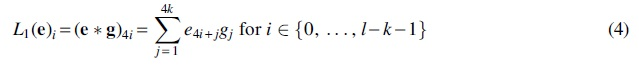

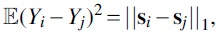

In this study, we prove two technical Theorems 1 and 2 showing that the hash family of dot products with random spherical Gaussian multivariates preferentially selects more extremal elements as maximums, but uniformly randomly selects among equally distinct elements, and that maximums are more likely to be similar to each other. An immediate corollary is then our Main Theorem 3, showing that on categorical sequences, minimizers and untrained randomly initialized convolutional filters with max-pooling (Fig. 1) are nearly equivalent.

(Left) Construction of minimizers in bioinformatics algorithms. A string of nucleotides is separated into overlapping k-mers (

We then show in empirical simulations that these properties manifest as decreased density in highly repetitive sequences as compared with random minimizer schemes, both in simulation and on real human telomeres. Although at least one minimizer is guaranteed to be selected from each window, the number of minimizers that are selected overall determines the density; lowering the density can improve the efficiency of minimizer-based algorithms, as has been a design goal in the minimizer literature (Marçais et al., 2017; Zheng et al., 2020). Our results show that Gaussian convolutions are better for density than random minimizers, although special-purpose methods such as Miniception (Zheng et al., 2020) still do better than Gaussian convolutions.

Lastly, we demonstrate application of these ideas to genome assembly. To our knowledge, there exist no deep learning assemblers—a few studies apply deep learning to consensus-sequence determination (Vrček et al., 2022; Padovani de Souza et al., 2019), but nothing for generation of the assembly graph itself—which would seem to suggest that these problems are not amenable to ML. We take inspiration from the convolutional filter/minimizer equivalence to train a neural embedding of synthetic short-reads from the SARS-CoV-2 genome into 3D Euclidean space that respects linear distance along the genome of the read origins.

Although this is not a full assembler, it does show that at least in principle, the main task of assembly is amenable to deep learning, even in a fully de novo manner, although as might be expected, the computational cost of doing so is likely currently prohibitive. Together, our theory and experiments resolve connect together two seemingly unrelated methods that have both achieved powerful results in computational biology, explaining some of the advantages of using random Gaussian convolutions for feature selection, and hinting at novel ways of combining deep learning with more traditional computational biology algorithms.

Hashing and permutations

The key to our analysis will be in understanding the role of hashing (Carter and Wegman, 1979) and permutations. We recall some basic concepts here. For reference, see any standard text or review (Thorup, 2015).

To illustrate, let us start with a classical example. Let

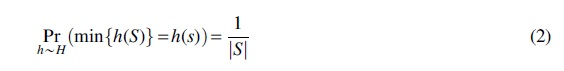

Another more sophisticated modern example is the vector multiply-shift family of hash functions, introduced by Dietzfelbinger (2018), designed to hash vectors to scalars without making use of finite field arithmetic. We introduce it here because the construction is mathematically equivalent to a dot product with uniform random integers in the integer ring, followed by an integer division/bit-shift operation. Let  and

and  as well as a uniform random element

as well as a uniform random element

We define a hash function

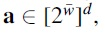

Depending on the application at hand, different properties of a hash function may be desirable, such as universality (Carter and Wegman, 1979), strong universality, k-independence (Wegman and Carter, 1979), or min-wise independence (Broder et al., 2000). Both of the examples given earlier exhibit strong universality (also known as 2-independence).

Roughly speaking, any two hash values can be construed as independent uniform random variables on the range.

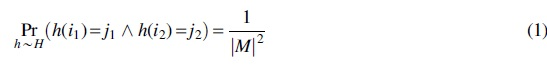

However, in this study, we are primarily interested in permutations. Nontrivial permutations are expensive to encode, but they can be approximated through random hashing. This is the basis of the celebrated MinHash algorithm for computing set similarity (Broder, 1997). Note that for any set

Min-wise independence unfortunately does not follow as a consequence of strong universality, although you can approximate it with sufficiently high degrees of k-independence (Indyk, 2001) or by using a twisted variant of tabulation hashing (Dahlgaard and Thorup, 2014).

Alternately, many real-world implementations eschew the formal guarantees of random hashing, and instead just use a deterministic hash function—such as Murmurhash3 (Appleby, 2008), the SHA (Secure Hash Algorithm) family of cryptographic hash functions (Eastlake and Jones, 2001), or efficient canonical choices of group generators in prime fields. This is very common in bioinformatics software making use of minimizers. However, such constructions obscure the connections we will be drawing in this article.

Minimizers

Minimizers (Schleimer et al., 2003; Roberts et al., 2004) are a local k-mer selection scheme, in some ways, the classic k-mer selection scheme. The most important feature of a local k-mer selection scheme is translation invariance; we want to subsample the set of k-mers in a sequence in such a way that even if we insert or delete a letter at the beginning of the sequence, the set of minimizers does not change very much. There are a number of more modern k-mer selection schemes (Edgar, 2021; Frith et al., 2020; Shaw and Yu, 2021), with slightly different properties, but minimizers were among the first used in computational biology.

Minimizers are related to min-wise hashing (Broder, 1997), but instead of getting features for an entire sequence at once, they break up a sequence into smaller windows and get the minimum hash within each window. That minimum k-mer, a minimizer, is used to match the sequence to some reference, for example, for sequence assembly (Ekim et al., 2021), comparison (Shaw and Yu, 2023), or mapping (Li, 2016). Often, for longer sequences, we match the sequence to a reference only if multiple minimizers from different windows match.

The compressive nature of minimizers appears because most of the time, the minimizer remains constant as the window rolls, so the total number of minimizers for a sequence is much smaller than the total number of windows. Indeed, one of the key metrics considered for minimizer schemes is the density, defined as the fraction of all k-mers in a sequence that are selected.

Let us more precisely state a few standard results. Consider an alphabet

Proof. There are

Thus, the deduplicated set of minimizers of a sequence is much fewer than

Minimizers only require translation invariance, which is satisfied by any arbitrary permutation, rather than needing a random permutation. Indeed, this fact has been exploited to construct nonuniformly random minimizers that are more evenly distributed, or that are likely to be rarer in a genome (Zheng et al., 2020; Jain et al., 2020). These works can reduce the density from the

Of course, some amount of randomness is important; in the worst case, an adversarially chosen permutation that orders k-mers in the same order as appears in the sequence gives no amount of subsampling. Or, more realistically, simply taking a lexicographic ordering on the space of k-mers works quite badly, for example, on poly-A strings where the repetitiveness of the poly-A prefix causes many distinct k-mers to be minimizers of adjacent windows, increasing density, which is bad. One of the main empirical results of this article is that for minimizer schemes based on random Gaussian convolutional filters, repetitiveness actually helps decrease density, improving rather than hurting performance.

Convolutional neural networks

CNNs are inspired by the visual cortex of mammals, and were notably demonstrated to be effective for image processing (Hubel and Wiesel, 1959; Fukushima and Miyake, 1982). CNNs are characterized by applying a set of filters in a translation-invariant noise-robust way to different parts of an image to generate a set of features, adding a pooling layer to reduce the information passed downstream, and then following up with a feed-forward neural network for analyzing the features and connecting them to a classification or prediction (Lawrence et al., 1997). Intuitively, each filter looks for a particular type of feature (e.g., an edge or corner) at every position of the image. Of course, this is a gross oversimplification, and modern architectures are much more complex, but this captures the basic idea.

For our analysis, we consider a simple 1D-CNN on a 4-letter alphabet

More formally, let

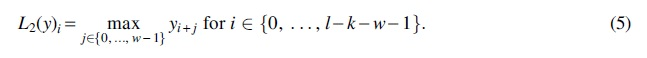

The second layer of the CNN is the max-pooling function, given by

After the max-pooling layer, the remainder of the neural network is trained to use the outputted features for the designated classification or prediction task.

It should be apparent that the setup for convolutional filters closely resembles that of the setup for minimizers, where the “hash function” is a dot product with the weights vector. In both cases, some function is applied to k-mers in the string on a rolling basis. Then, we keep the extreme value of the output of that function, whether it is a minimum or maximum, and pass that onto further analytical pipelines. We address hereunder why convolutional filters work on categorical sequences, when the usual story told is about robustness to noncategorical (ordinal/interval/ratio) noise in feature selection.

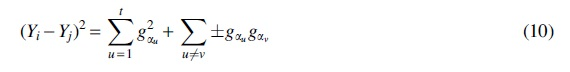

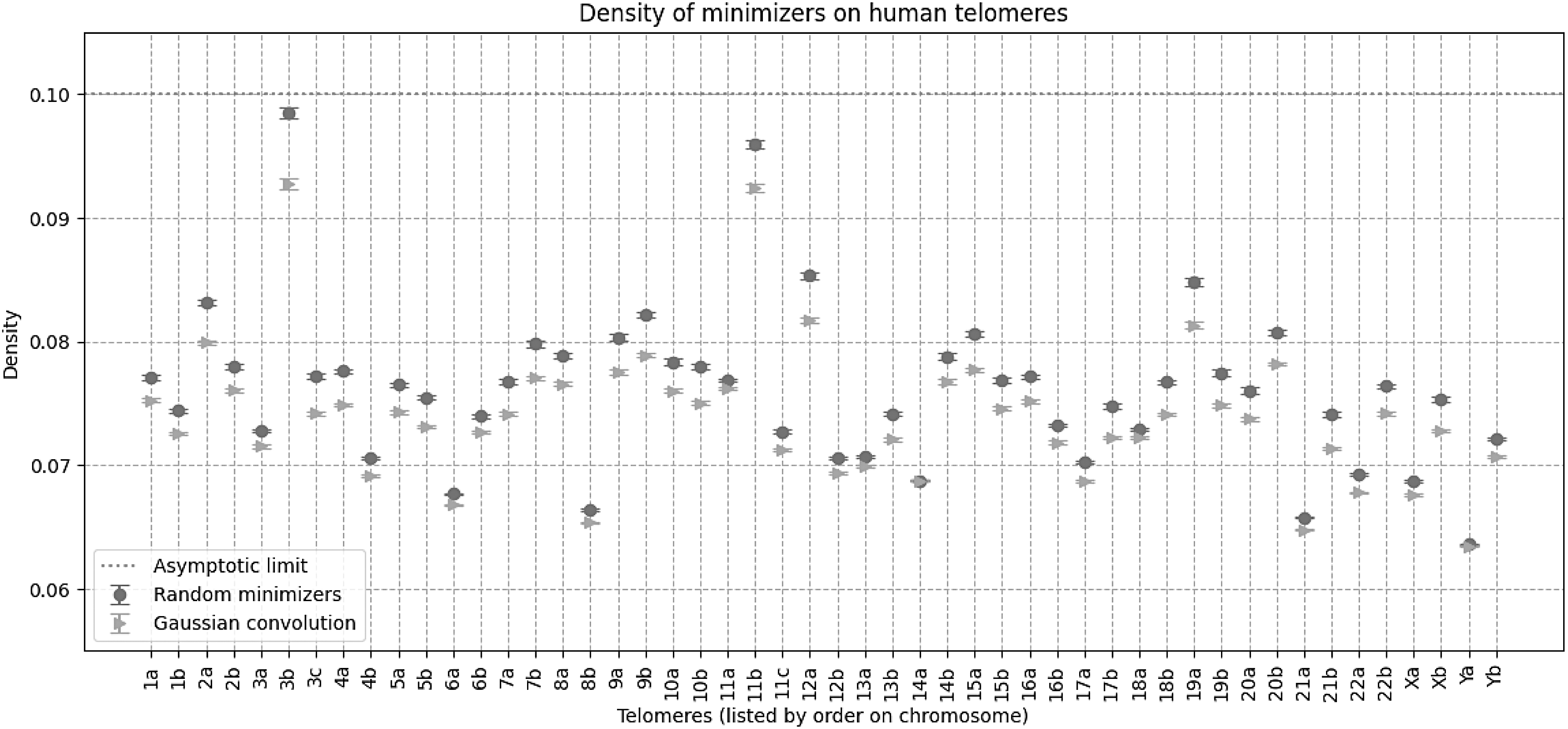

PROOFS

Recall that the vector-multiply-shift family of hash functions defined by dot product with a uniform random ring element, followed by division without remainder, is two-independent. Roughly speaking, our main claim is that the family of hash functions defined by dot product with a multivariate spherical Gaussian has the property that equally distinct k-mers are equally likely to be selected as minimizers/maximizers, and more distinct k-mers are more likely to be selected as minimizers/maximizers, but the set of minimizers/maximizers selected tends to be similar to each other.

To make this rigorous, we first need to know what we mean by the “distinctness” of a k-mer. We define that by the total (summed) Hamming distance from all the other unique k-mers in a set; this is basically the absolute deviation of the k-mer from the set. We will call this the “degree” of the k-mer in the set—consider a graph with k-mers as vertices and edge weights corresponding to the Hamming distance. Our first goal is to show that the degree of a k-mer determines its likelihood of being either the minimum or maximum under the Gaussian convolution hash family. Later we will also show that extrema also correlated in composition, so the overall set of selected k-mers is similar to each other.

Because we are considering convolution with a multivariate spherical Gaussian, we can take advantage of a large body of literature on manipulating i.i.d. Gaussians. However, we must first rewrite a k-mer in a one-hot encoding so that convolution with a multivariate Gaussian is well-defined (otherwise, what does it mean to multiply a nucleotide “C(ytosine)” with a real number). Given these preliminaries, we are now able to state our theorem.

Let

Then for all

Proof. The naive approach would be to try to directly prove that

Let us order all the elements of S and place

First, we are going to relate the degree of an element to the square of its hash's deviation from all the other hashed values.

Proof. Each Yi is a sum of 1D Gaussians from

Since we canceled out any shared terms, all the remaining Gaussians are i.i.d., and due to the symmetry of a Gaussian under reflection, that means that we have that many independent Gaussians. The sum of t independent  and the lemma follows by linearity of expectation.

and the lemma follows by linearity of expectation.

Interpreting Lemma 2, the degree

We now define a new random variable by the difference of the squared deviations

When

As in the proof of Lemma 2, we use the fact that the Yi's arise as sums of selected Gaussians from

Then  is the sum of t squared Gaussians and all of the cross terms, which are either positive or negative copies of products of two independent Gaussians (for notational simplicity, we did not bother writing out which copies are positive or negative):

is the sum of t squared Gaussians and all of the cross terms, which are either positive or negative copies of products of two independent Gaussians (for notational simplicity, we did not bother writing out which copies are positive or negative):

Summing everything together, we can rewrite

where the ci's and

The signs of the

which is just the difference of two independent parameter-1

In addition, using

The only asymmetric part of Z is, therefore, the leftover

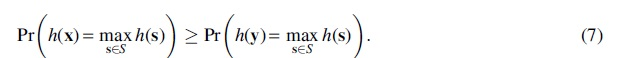

Proof. The proof is elementary; intuition is being correlated with a maximizer makes you larger on average.

First note that the event that there are two distinct maximizers has measure zero, so in this proof we assume

Then we can explicitly compute

Then subtracting and substituting in the distance assumption, we get

which completes the proof.

We can now directly apply Theorems 1 and 2 to get Theorem 3, which we state in full generality.

It is at least as likely to choose the most distinct categorical feature in a window as any other k-mer, where distinctness is measured in absolute Hamming deviation from the other features;

And the set of minimizers it chooses overall are likely to be closer in Hamming distance to each other than a random set of k-mers.

Proof. A one-hot encoding of a categorical vector precisely gives binary vectors with the same number of set bits. The setup follows directly from the definitions set up in Sections 2.2 and 2.3 and illustrated in Figure 1: convolution with a randomly initialized spherical Gaussian is clearly some kind of hash function, vector-mapped across positions in the sequence (although perhaps not one with the standard properties), and a max-pooling operation is equivalent to taking a minimum across windows. However, we proved in Theorem 1 that a dot-product hash function with spherical Gaussian parameters preferentially selects more distinct k-mers, but is equally likely to select k-mers that are equally distinct. The hash function so defined striates k-mer features by degree (Hamming distance from all other k-mers), and all k-mers of a given degree have the same probability of being chosen as a minimum or maximum, but at least as high a probability as any k-mer of lower degree, proving the first part of Theorem 3. The second part of Theorem 3 is a consequence of the Theorem 2, as we showed that the closer a k-mer is to the universal minimizer, the more likely it is to be selected as a local minimizer.

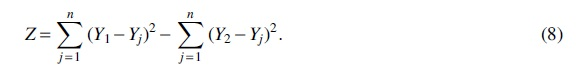

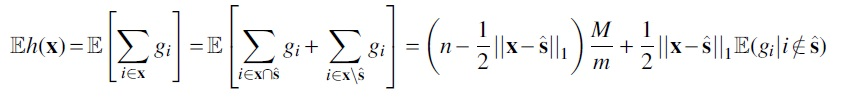

Although we have proven general properties of using Gaussian convolution as a minimizer selection scheme, our theorems do not say anything about in what situations these behaviors manifest. To address that, we turn to numerical simulation (Fig. 2). The correct interpretation of our theory is that k-mers that are further away (in Hamming distance) from other k-mers in a window, but similar to other minimizers, are more likely to be selected.

We generated repetitive sequences of length 1007, with repeat length on the x-axis and a 10% repeat mutation substitution rate. We used a k-mer size of 8 and a window size of 19, for an expected random minimizer density of 0.1 over the 1000 k-mers of the sequence. All data points are an average of 400 random runs, with standard error bars. (Left) The average Hamming distance of the minimizers from all k-mers in the sequence is basically the same for random minimizers and Gaussian convolutions; this is consistent with Theorem 1. (Center) However, the average distance from the other minimizers is significantly lower for Gaussian convolutions, as predicted by Theorem 2. (Right) On repetitive windows of a sequence, Gaussian convolutions tend to have lower density than random minimizers.

This phenomenon thus primarily occurs in repetitive sequences, where k-mers within a window are similar to each other. We thus run our experiments on repetitive sequence with mutations, both by simulating tandem repeats with mutations, and measuring on real human telomeres. ‡ Finally, we finish by designing a CNN architecture to learn a neural embedding of simulated short-reads that respects linear distance along a genome, the first step in building a genome assembly graph. §

We chose a k-mer size of 8 for our experiments, and a total sequence length of 1007 (so that we have 1000 k-mers). We chose a window size of

We measured three different quantities: (1) average distance from all k-mers, (2) average distance from other minimizers, and (3) the density of the minimizers. The first two quantities correspond to empirical validation of our two technical theorems. Random minimizers and Gaussian convolutions are on average the same distance from all k-mers, asymptotically approaching 6 because 8-mers with a 4-letter alphabet on average share 2 characters; importantly, Theorem 1 says that Gaussian convolutions should be on average far from other k-mers, and this turns out to match random minimizers. However, Gaussian convolutions minimizers are on average much closer to each other than random minimizers are; this is due to Theorem 2.

One seeming consequence is that Gaussian convolution minimizers have lower density in repetitive windows than random minimizers do. Once the repeat length grows to the window size, the density effects disappear entirely, but we see a minimum density as low as

As a caveat, the decreased density is not alone a reason to choose Gaussian convolution minimizers though, as Gaussian convolution minimizer density only decreases for repetitive regions, whereas existing work such as Miniception allows a consistent lowered density of around

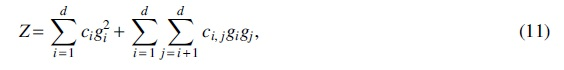

Real human telomeres

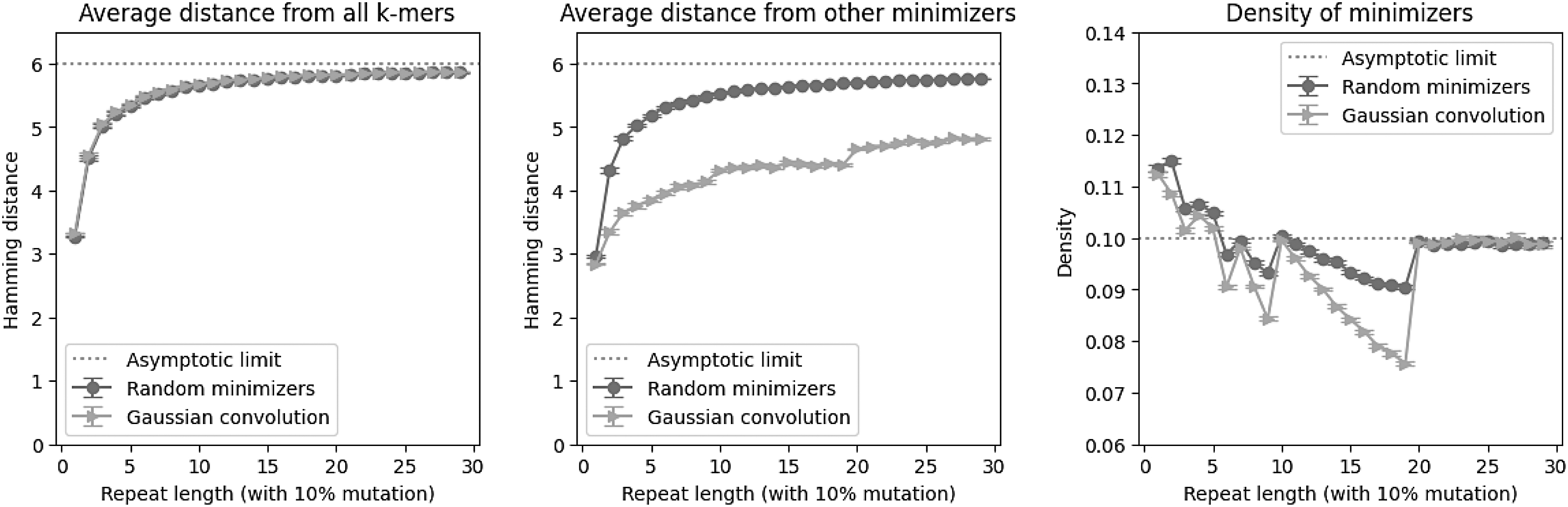

We extracted the annotated telomeric regions from version 2.0 of the Telomere to Telomere consortion CHM13 project (Rhie et al., 2022). Each chromosome has at least two annotated telomeric regions at the ends, and some chromosomes have an additional telomeric region; we labeled all telomeric regions in order of position on the genome (the first telomere on chromosome 3 is telomere 3a, the second is 3b, etc.). Then, using the same parameters (k-mer size of 8 and window size of 19, for an expected random minimizer density of 0.1 over the telomere), we compared the density of using random minimizers versus Gaussian convolutions. These are highly repetitive regions of the genome.

We see in Figure 3 that the performance on real repetitive data generally matches the simulated data: the Gaussian convolutions in expectation have lower density than random minimizers—occasionally the trend is the opposite direction, but we averaged over 400 random runs. This behavior is very different from that of other k-mer selection schemes, like open syncmers (Edgar, 2021), which can sometimes exhibit much higher density in repetitive regions.

Density on human telomeres, using k-mer size of 8 and window size of 19, for an expected random minimizer density of 0.1. All data points are an average of 400 random runs, with standard error bars. The general trend on real data matches the simulated data: Gaussian convolutions have lower density than random minimizers. Note that the x-axis is annotated with the chromosome and telomere number.

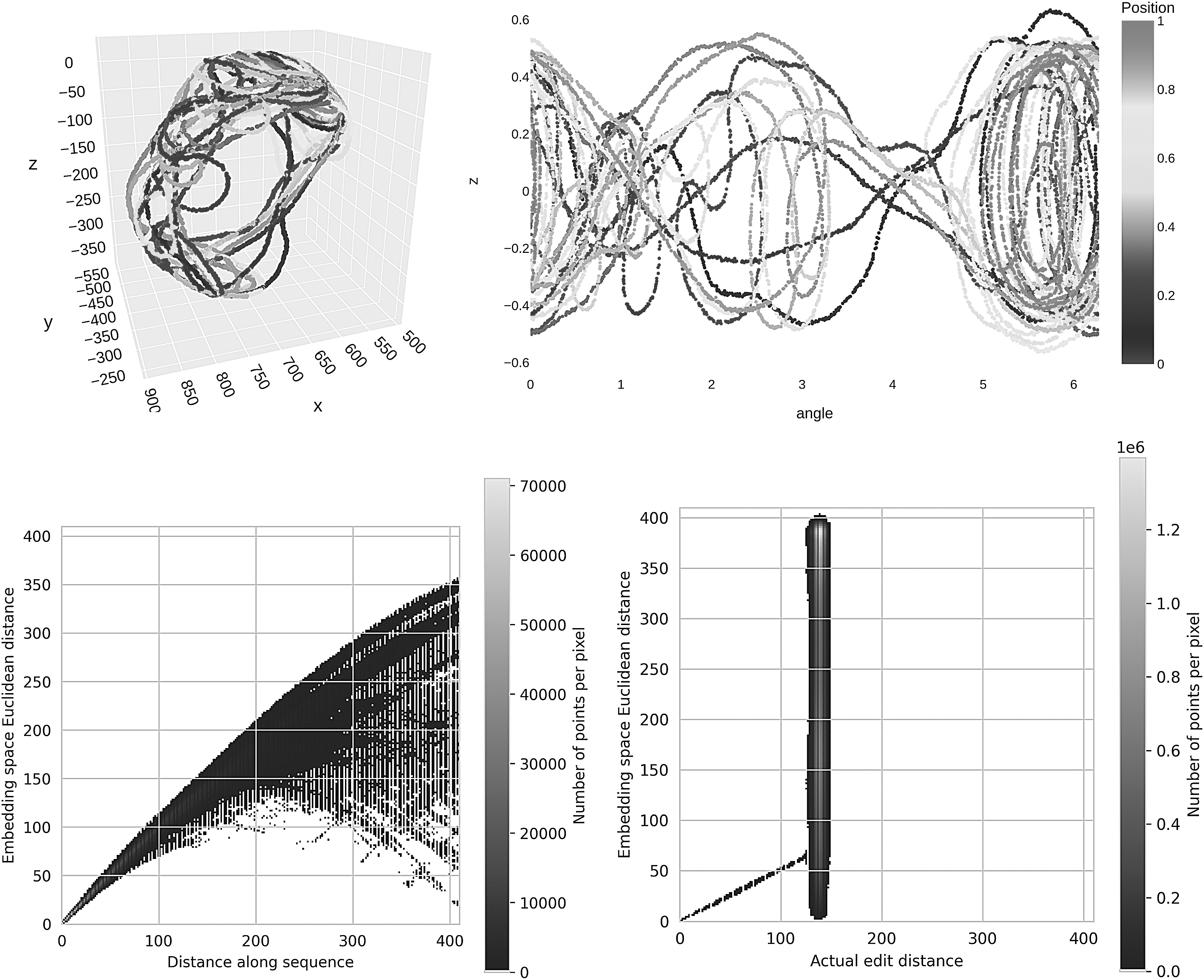

We started with the SARS-CoV-2 genome NC_045512.2, which is just under 30 kilobases long. A corpus of synthetic short-reads of length 256 bp were generated to a depth of coverage of 200. The synthetic reads had an error rate of 1%, evenly divided three-ways among insertions, deletions, and substitutions. Reads with deletions were padded randomly to ensure constant length; insertions were uniformly chosen among the four nucleotides, and substitutions were uniformly chosen among the other three nucleotides.

Our architecture was a 1D-CNN designed to pick out k-mers for

Then, for training, in each epoch, we randomly generate one “adjacent” read (a rotation followed by random substitutions) for each read in our corpus, and train the network to produce an embedding that recapitulates the rotation length, which we use as a proxy for training the network on edit distance, which takes quadratic time to compute. Thus, the network learns embeddings for pairs of reads similar to our reads, without the danger of overfitting on any particular pairs. For this experiment, we trained for 16,000 epochs, although the training and validation loss both started plateauing at around 3000 epochs.

Figure 4 shows that the neural network did indeed learn an embedding that respected the linear distance along the genome—that is, reads that are neighbors in the original genome are still neighbors in the embedding. We should add though that this training took 27 hours on Google Colab with an A100 GPU to just get an embedding, without even a full assembly, as compared against minutes to do the same with a modern assembler, so this is highly impractical.

Euclidean embedding of synthetic short-reads (length

However, it does show that neural networks are able in principle to de novo generate an almost assembly graph, by simply connecting each read with its nearby neighbors in the embedding. In addition, note that for visualization purposes, we embedded into 2D/3D, which imposes a heavier information bottleneck, and that a practical deep learning assembler would likely use larger embedding dimension.

Minimizers and CNN features do not depend on more classical notions of uniform hashing. Indeed, this idea has been independently explored for both minimizers and CNNs. On the minimizer front, there are modifications to the hash function for better density (Zheng et al., 2020) or postprocessing the permutation using inverse document/genome frequency (Jain et al., 2020). For CNNs, feature construction is largely trained, instead of designed, but there is also work specifically on architectures for promoting better features (Caron et al., 2018).

Here we show that just initializing with Gaussian weights causes some correlation to distinctiveness, a proxy for inverse frequency related to density in repetitive regions. Notably, clusters of similar data are mapped close together with a Gaussian convolution, which does not happen for random minimizers. This is a known property of CNN filters when it comes to ordinal data, and we show it remains true for categorical data. In biological terms, all k-mers matched by a spaced seed get mapped close to each other by a Gaussian convolution.

There is recent work in the computational biology literature that has demonstrated it is possible to use deep neural networks to train better minimizer schemes (Hoang et al., 2022), but that work treats minimizers as just another function to be learned. This does highlight the primary limitation of our theory, which is that our analyses only hold at initialization time, and more sophisticated mathematical machinery is necessary to understand behavior after training. We did follow up with an empirical exploration of applying and training CNNs for neural embeddings of reads for assembly graphs, but that was in many ways beyond the scope of what we actually proved.

Some of the other seeming limitations are related to generalizing our results beyond a single layer with a single convolutional filter and stride lengths of one, but these limitations are actually easily overcome. Our theorems generalize to 2D (or 3D) filters, at only the cost of notational complexity. Longer stride lengths simply amount to a sparsification of the redundancy found in neighboring minimizers; minimizer schemes sparsify through deduplication of the minimums, but that operation is not easily vectorized, so CNNs instead sparsify by using longer stride lengths.

Stride-length sparsification is less efficient space-wise than a full deduplication, but with high probability conveys the same information, provided that the strides are not too long (Fig. 1). Also, multiple filters within a layer correspond to just taking multiple minimizers within each region. Another reason why this may be helpful for CNNs is due to the fact that memorizing all the output values of a single neuron is possible for CNNs, but rather hard at training time; thus, breaking up the minimizer information across multiple filters likely makes it easier to train.

Furthermore, having multiple layers of convolutional filters effectively creates minimizer schemes that are able to learn more complicated hash functions than just Gaussian dot products. These theoretical connections deserve further study.

In this article, through a careful probability computation, we proved that the family of hash functions defined by Gaussian dot products is useful for minimizer style analysis. By recasting CNN convolutional filters in a hash-function-based framework, we were able to demonstrate their equivalence to minimizers, one of the workhorses of computational biology. Furthermore, we validated our theory empirically, showing a lowered density in repetitive regions for Gaussian convolution minimizers, both in simulation, and on real human telomeres.

Lastly, we demonstrated that CNNs can learn neural embeddings for the construction of assembly graphs, although at present, it is still so slow as to be impractical. We hope that the connection proves fruitful for both methods, enabling the design of better minimizers, as well as providing some mathematically rigorous justification for why CNNs work in categorical data space, and hinting at future hybrid algorithms taking advantage of both classical algorithms and deep learning.

Footnotes

ACKNOWLEDGMENTS

I thank Daphne Ippolito, Jim Shaw, and David Rolnick for fruitful discussions.

AUTHOR CONTRIBUTIONS

Conceptualization, methodology, software, validation, formal analysis, investigation, resources, writing—original draft, writing—review and editing, visualization, and funding acquisition by Y.W.Y.

AUTHOR DISCLOSURE STATEMENT

The author declares there are no conflicting financial interests.

FUNDING INFORMATION

We acknowledge the support of the Natural Sciences and Engineering Research Council of Canada (Grant No. RGPIN-2022-03074).