Abstract

In the past 10 years, debate has arisen over the need for outcome assessment within psychiatric services [1,2]. With increasing competition for health service funding, outcome measurement has been championed as a mechanism by which psychiatric services can demonstrate their effectiveness [3]. Writers in the area have proposed instruments that can be used across service settings and that capture the main outcome domains in psychiatric populations [4]. These instruments have the unenviable task of being all things to all people, while at the same time being sensitive to change and possessing psychometric properties that are both reliable and valid.

In the early 1990s, the ambitiously titled Health of the Nation Outcome Scale (HoNOS) was developed to be used by clinicians in their routine work to measure consumer outcome in psychiatric services [5]. After extensive field trials within the UK involving over 2700 administrations of the instrument, 12 scales were identified as important domains in measuring psychiatric outcome [6]. The authors claimed that ‘the instrument was generally acceptable to clinicians, sensitive to change or the lack of it, showed good reliability … and compared reasonably well with equivalent items in the Brief Psychiatric Rating Scales and Role Functioning Scale’ [6]. The appeal of an instrument often lies in its comprehensive coverage of clinical domains, its ease of use by all mental health practitioners and its brevity [7]. Indeed for these attributes (among others), the HoNOS was chosen by the Commonwealth Government of Australia in 1996 to perform as one of its primary outcome measures in a national study involving 22 psychiatric service sites, 4500 clinicians and 60 000 clinical ratings. Although these are important qualities for the use of an instrument, these qualities say little about the instrument's ability to measure what it claims to measure (i.e. its construct validity) and its generalisability across service sites.

Results of published studies using the HoNOS have been mixed [3]. A number of papers have raised concerns about the instrument's discriminatory power [8], sensitivity to change in long-stay or chronic patients [9,10], and predictive power [11]. Concerns about the use of the HoNOS in assessing outcome have also been raised [12], as have concerns about its utility in care planning [13]. Other papers have been more favourable, citing its usefulness in outcome monitoring and research [14,15]. Papers have also used the HoNOS as a criterion in establishing criterion-related validity for other instruments [15]. The concern raised by the present paper centres on whether the HoNOS has established solid evidence of construct validity, and whether the proposed factor structure of the instrument is invariant across similar, or indeed disparate, psychiatric populations. For the instrument to claim to measure outcomes across similar service sites and psychiatric populations, the underlying factorial structure of the instrument must remain equivalent. Passing reference to confirmatory factor analysis has been made within the field trials; however, no fit indices have been provided to indicate the model's ability to explain the data [6]. It appears that the underlying factor structures of the instrument have largely been guided by principal components analysis and other exploratory factor analytic techniques.

The seminal work by Jöreskog [16] has allowed researchers over time to statistically and empirically test a priori particular linkages between observed variables and their underlying factors. While exploratory factor analysis is designed to investigate linkages between the observed and latent variables when they are unknown [17], confirmatory factor analytic techniques allow these linkages to be tested a priori. How well the model fits the data tells the researcher whether the prescribed latent structures are observable and validated. Simultaneous confirmatory factor analysis can allow for different or similar populations to be measured simultaneously on the same measurement and structural model. This technique provides insight into whether outcome instruments have the same internal structure across populations, and whether comparisons of populations can proceed based upon the model proposed by the developers of the instrument. Since the HoNOS has been argued to be useful in comparative analysis between health services and, indeed, has been used to perform such a task [18], it is important to investigate whether these internal structures hold equivalent between populations. In the instance of this paper the interest is in factorial invariance across similar regional mental health services.

The purpose of the study is two-fold: first, to test the factorial validity of the HoNOS separately in each health region using confirmatory factor analytic techniques; and second, to test for equivalence of factorial measurement and structure across the two health regions. Doing so allows us to determine whether the underlying structure of the HoNOS holds equivalent between similar health regions or whether the fundamental structure of the instrument differs.

Method

Data collection

From September to November 1996 both the south-west metropolitan (Fremantle) and south-east metropolitan (Bentley) regions of Perth, Western Australia were involved in a national study called the Mental Health Classification and Service Costs Project (MH-CASC). This 22-site study aimed to derive casemix funding based upon surveying sites around Australia. The Bentley and Fremantle regions serve populations of 120 000 and 170 000 residents respectively. Each region is served by an approved psychiatric hospital with 50 beds. Both provide an integrated, community-focused, multidisciplinary, team-based approach to service delivery. This includes mobile community teams, a day hospital program, early psychosis intervention, psychogeriatric services and acute and secure ward facilities. Each service has evolved from the devolution of one central mental health institution in the southern metropolitan region of Perth in 1994 into two comparable services. Mental health workers within each of the regions were trained as part of the study on how to administer the HoNOS. Standardised case scenarios were provided prior to the study period to test for administration convergence and inter-rater agreement on each of the 12 items within the HoNOS. These items included: item 1, problems resulting from over-active, aggressive, disruptive or agitated behaviour; item 2, suicidal thoughts or behaviour, or non-accidental self-injury; item 3, problem drinking or drug taking; item 4, cognitive problems involving memory, orientation or understanding; item 5, problems associated with physical illness; item 6, problems associated with hallucinations and delusions; item 7, depressed mood; item 8, other behavioural problems; item 9, problems making supportive social relationships; item 10, problems associated with daily living; item 11, opportunities for using and improving abilities; and item 12, opportunities for creating and improving abilities (occupational and recreational). 
Each of the 12 items was rated on a five-point Likert scale, with raters asked to rate the most severe problem over the past 2 weeks on each item as one of (0) no problem, (1) minor problem, (2) mild problem, (3) moderately severe problem or (4) severe to very severe problem. As part of the data collection regime, the HoNOS was used to record the opening and closure of all outpatient episodes that occurred within the study period. The present study utilised the opening assessment of all initial community outpatient episodes within both health services. Subsequent outpatient episodes within the study period were excluded from the data sets so that each line of data identified a unique client. Data were also recorded for each outpatient client on ICD-9 diagnosis, age, gender and length of outpatient episode in days within the study period. Tests for equivalence of outpatient characteristics between the two health regions, including Chi-squared tests and analysis of variance, were performed.

Development of the HoNOS baseline model

The baseline model was derived from the original scoring structure outlined in the HoNOS scoring manual [5]. Four factors were identified using principal components analysis: (i) behaviour, denoted by items 1, 2 and 3; (ii) impairment, denoted by items 4 and 5; (iii) symptoms, denoted by items 6, 7 and 8; and (iv) social problems, denoted by items 9, 10, 11 and 12. The confirmatory factor analytic model in the present study hypothesised a priori that: (i) responses to the HoNOS could be explained by the four-factor model; (ii) each item would have a non-zero loading on the HoNOS factor it was designed to measure and zero loadings on all other factors; (iii) the four factors would be correlated; and (iv) the error/uniqueness terms for the items would be uncorrelated [17]. EQS for Windows Version 5.7b was used for the structural equation modelling [19]. The baseline model was first tested on the Fremantle health region. The Comparative Fit Index (CFI) was used in the present study as the standard fit index for model specification [17]. Goodness of fit indices indicate the relative amount of variances and covariances jointly explained by the model; they range from 0 to 1.0, with values close to 1.0 indicating an acceptable fit [20]. Models with fit indices higher than 0.9 were considered to have adequate fit to the data [21,22]. Although the Normed Fit Index (NFI) has often been the practical criterion of choice, the NFI can have a tendency to underestimate fit in small samples [23]. Bentler revised the NFI to take sample size into account and proposed the CFI [24]. Model specification was derived using the Maximum Likelihood method, with the Lagrange Multiplier Test informing the respecification of the baseline model where required. 
However, as recommended by a number of researchers [16,25–27], model fit was based upon multiple criteria that considered statistical, theoretical and practical considerations, namely the interpretability of the instrument.
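
The relationship between the NFI and the CFI can be made concrete with a minimal sketch. It assumes the commonly stated definitions of the two indices in terms of the Chi-squared values of the target model and of a null (independence) model; the function names and all numeric inputs below are hypothetical illustrations, not values from the study.

```python
def nfi(chi2_model: float, chi2_null: float) -> float:
    """Normed Fit Index: proportional reduction in chi-squared relative to the null model."""
    return (chi2_null - chi2_model) / chi2_null


def cfi(chi2_model: float, df_model: int, chi2_null: float, df_null: int) -> float:
    """Comparative Fit Index: based on chi-squared minus df (noncentrality) for both models."""
    d_model = max(chi2_model - df_model, 0.0)
    d_null = max(chi2_null - df_null, d_model, 0.0)
    if d_null == 0.0:
        # Neither model shows any misfit beyond its degrees of freedom.
        return 1.0
    return 1.0 - d_model / d_null


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    # For 12 items the independence model has 12 * 11 / 2 = 66 degrees of freedom.
    chi2_model, df_model = 850.0, 48
    chi2_null, df_null = 10000.0, 12 * 11 // 2
    print(f"NFI = {nfi(chi2_model, chi2_null):.3f}")
    print(f"CFI = {cfi(chi2_model, df_model, chi2_null, df_null):.3f}")
```

Because the CFI subtracts the degrees of freedom from each Chi-squared value before forming the ratio, it does not penalise a model merely for sampling fluctuation of the size expected under a correct model, which is the motivation given above for preferring it to the NFI.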

Tests for factorial invariance

The proposed baseline model was entered with the Fremantle region providing the first data set and the Bentley data set stacked afterwards. Models with increasingly restrictive hypotheses were then tested to identify the source of any non-equivalence. The first hypothesis tested the equivalence of the factor-pattern loadings. The error/uniqueness terms and, finally, the factor variances and covariances were then held equal between the two health regions to test for structural invariance at these levels. Failure to reach invariance was determined by a significant change in Chi-squared values as well as significant univariate Chi-squared results on the constrained parameters for each successive model specification. Further restricted hypotheses were abandoned when the previous restrictions proved non-invariant [20].

The procedures for testing invariance between two populations were identical to those used in model fitting. In both instances, certain parameters were constrained to be equal across groups and the result was compared with a less restrictive model in which the same parameters were free to take on any value. If the difference in Chi-squared values (Δχ2) was not significant, the hypothesis that the two populations were equivalent was accepted [20].
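
The Δχ2 comparison described above can be sketched in a few lines. This is an illustrative implementation, not the EQS procedure used in the study: the function names are hypothetical, the upper-tail probability uses the standard closed-form series for integer degrees of freedom, and the Chi-squared values in the usage example are invented (only the count of 15 constraints follows the text).

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability P(X > x) for a chi-squared variable with integer df."""
    if df < 1:
        raise ValueError("df must be a positive integer")
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) * sum_{k=0}^{df/2 - 1} (x/2)^k / k!
        term, total = 1.0, 1.0
        for k in range(1, df // 2):
            term *= half / k
            total += term
        return math.exp(-half) * total
    # Odd df: erfc(sqrt(x/2)) + sqrt(2/pi) * exp(-x/2) * sum_r x^(r - 1/2) / (1*3*...*(2r-1))
    total = 0.0
    term = math.sqrt(x)  # r = 1 term
    for r in range(1, (df - 1) // 2 + 1):
        if r > 1:
            term *= x / (2 * r - 1)
        total += term
    return math.erfc(math.sqrt(half)) + math.sqrt(2.0 / math.pi) * math.exp(-half) * total


def chi2_difference_test(chi2_constrained, df_constrained, chi2_free, df_free):
    """Compare a constrained (nested) model with a less restrictive one."""
    delta_chi2 = chi2_constrained - chi2_free
    delta_df = df_constrained - df_free
    if delta_df < 1 or delta_chi2 < 0:
        raise ValueError("the constrained model must be nested in the free model")
    return delta_chi2, delta_df, chi2_sf(delta_chi2, delta_df)


if __name__ == "__main__":
    # Hypothetical multigroup fit values; 15 equality constraints as in the text.
    delta, ddf, p = chi2_difference_test(520.0, 105, 480.0, 90)
    verdict = "non-invariant" if p < 0.05 else "invariant"
    print(f"delta chi2 = {delta:.2f}, delta df = {ddf}, p = {p:.5f} -> {verdict}")
```

A non-significant p-value would support holding the constrained parameters equal across groups; a significant one indicates that at least one constraint does not hold, at which point the univariate tests on individual constraints identify the source.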

Results

Patient and health service characteristics

Table 1 shows the tests for equivalence across health services on age, gender and length of episode. Diagnosis using ICD-9 was also tested for equivalence; diagnostic categories with fewer than five cases in each cell were dropped from the analysis. The differences in both gender (χ2 = 1.06, df = 1, p = 0.302) and diagnosis (χ2 = 7.52, df = 6, p = 0.275) were statistically non-significant. Age (F = 4.94, df = 1, 1955, p = 0.026) and episode length in days (F = 4.554, df = 1, 1955, p = 0.033) were significantly different, showing that clients in the Bentley region were on average 2 years older and spent 2 days longer within an outpatient setting. These differences can be explained by the younger transitory population attracted to the urban environment of Fremantle city, while the Bentley region is more characteristic of a suburban catchment area.
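
As a check on the reported statistics, the p-value for the diagnosis comparison can be reproduced by hand, because the upper tail of a Chi-squared distribution with an even number of degrees of freedom has a closed form. The worked sketch below uses only the reported χ2 = 7.52 and df = 6:

```latex
\begin{align*}
P(\chi^2_{6} > x) &= e^{-x/2}\left(1 + \frac{x}{2} + \frac{(x/2)^2}{2!}\right) \\
P(\chi^2_{6} > 7.52) &= e^{-3.76}\,(1 + 3.76 + 7.0688) \approx 0.02328 \times 11.8288 \approx 0.275
\end{align*}
```

The result agrees with the reported p = 0.275.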

Table 1. Results of equivalence across health services

Confirmatory factor analyses

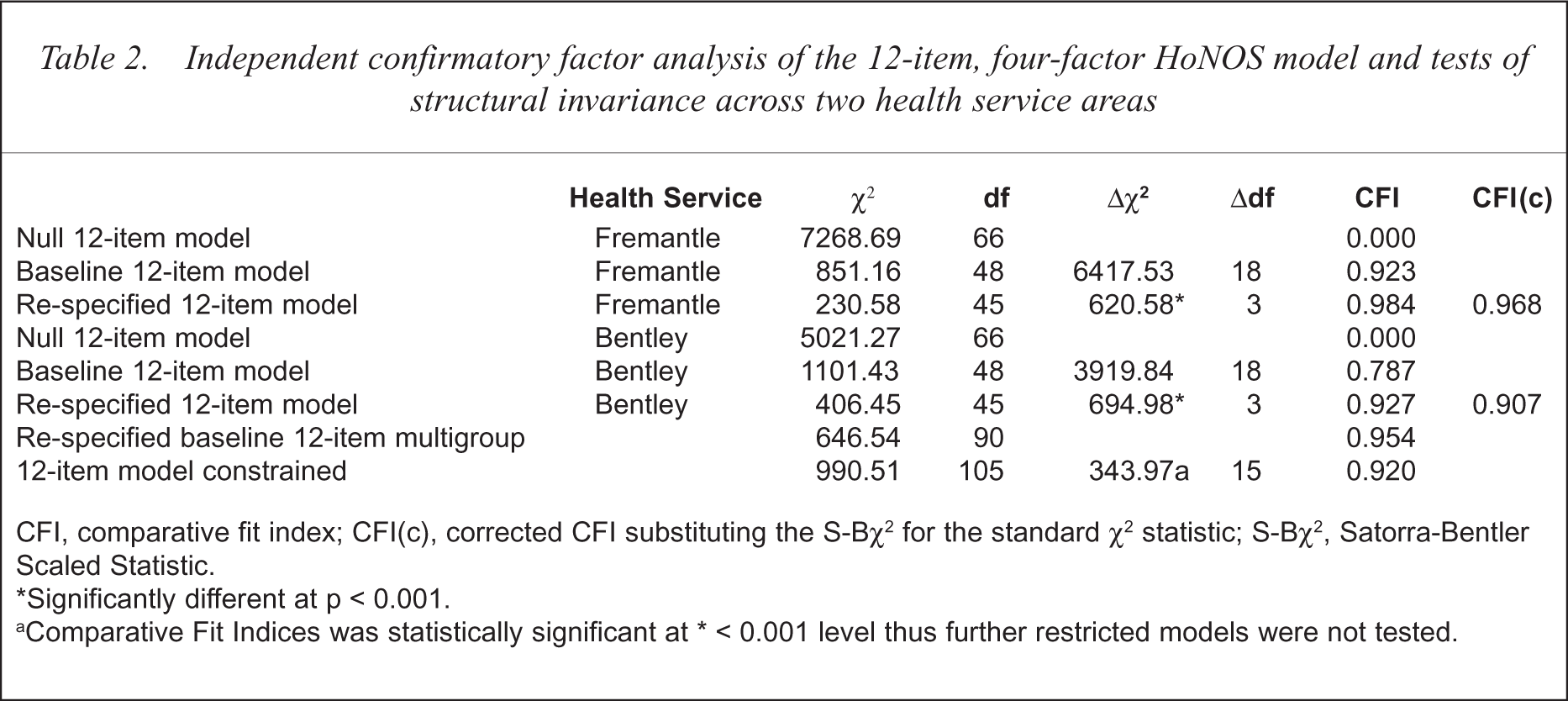

Table 2 presents the confirmatory factor analysis of the 12-item, four-factor HoNOS model for both health regions. The CFI indicated that the four-factor model demonstrated acceptable fit to the data for the Fremantle region (CFI = 0.92; χ2 = 851.16, df = 48), with the model converging within 11 iterations. The model showed inadequate fit for the Bentley data (CFI = 0.78; χ2 = 1101.43, df = 48). Inspection of the Lagrange Multiplier Test for each region independently indicated that correlated errors between items 7 and 8, 11 and 12, and 2 and 7 accounted for a substantial proportion of the mis-specification of the Bentley data. These parameters were then freely estimated for both data sets and the respecified baseline model was computed. This led to an improvement in fit for the Fremantle data (CFI = 0.98) and a substantial improvement for the Bentley data (CFI = 0.93), with the Chi-squared value decreasing by 694.98. With the respecified four-factor model showing good fit in both health regions, it was appropriate to proceed with testing for structural invariance.
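
The 48 degrees of freedom reported for the baseline model can be recovered by counting parameters. The sketch below is an illustration rather than the EQS specification used in the study: it takes the item-to-factor assignment from the Method section and assumes one identification constraint per factor (for example, a fixed marker loading or a fixed factor variance). The function name is hypothetical.

```python
# Item-to-factor assignment of the baseline four-factor model (from the Method section).
FACTORS = {
    "behaviour": [1, 2, 3],
    "impairment": [4, 5],
    "symptoms": [6, 7, 8],
    "social problems": [9, 10, 11, 12],
}


def cfa_degrees_of_freedom(factors: dict, freed_error_covariances: int = 0) -> int:
    """Degrees of freedom of a simple-structure CFA with correlated factors."""
    n_items = sum(len(items) for items in factors.values())
    n_factors = len(factors)
    # Unique variances and covariances among the observed items.
    data_points = n_items * (n_items + 1) // 2
    free_parameters = (
        n_items                              # one loading per item
        + n_items                            # one error variance per item
        + n_factors                          # factor variances
        + n_factors * (n_factors - 1) // 2   # factor covariances
        - n_factors                          # assumed: one identification constraint per factor
        + freed_error_covariances            # error covariances released after respecification
    )
    return data_points - free_parameters


if __name__ == "__main__":
    print(cfa_degrees_of_freedom(FACTORS))     # baseline model
    print(cfa_degrees_of_freedom(FACTORS, 3))  # with three error covariances freed
```

Under these assumptions the baseline model has 78 data points and 30 free parameters, giving the 48 degrees of freedom reported above; freeing the three error covariances would reduce this to 45, a figure the text does not report directly.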

Table 2. Independent confirmatory factor analysis of the 12-item, four-factor HoNOS model and tests of structural invariance across two health service areas

CFI, comparative fit index; CFI(c), corrected CFI substituting the S-Bχ2 for the standard χ2 statistic; S-Bχ2, Satorra-Bentler Scaled Statistic.

Significantly different at p < 0.001.

Comparative fit indices were statistically significant at the ∗p < 0.001 level; thus, further restricted models were not tested.

Tests for multivariate normality for both health services proved problematic (normalised estimates Fremantle = 90.74, Bentley = 52.85). Despite eliminating outlying cases from each data set, the multivariate kurtosis remained high, suggesting highly non-normal distributions. Given these results, the Satorra-Bentler Scaled Statistic (S-Bχ2) was calculated for each service area (see Table 2) to account for sample kurtosis values. This statistic has proved to be more robust under various distributions and sample sizes [17].

Tests for factorial invariance

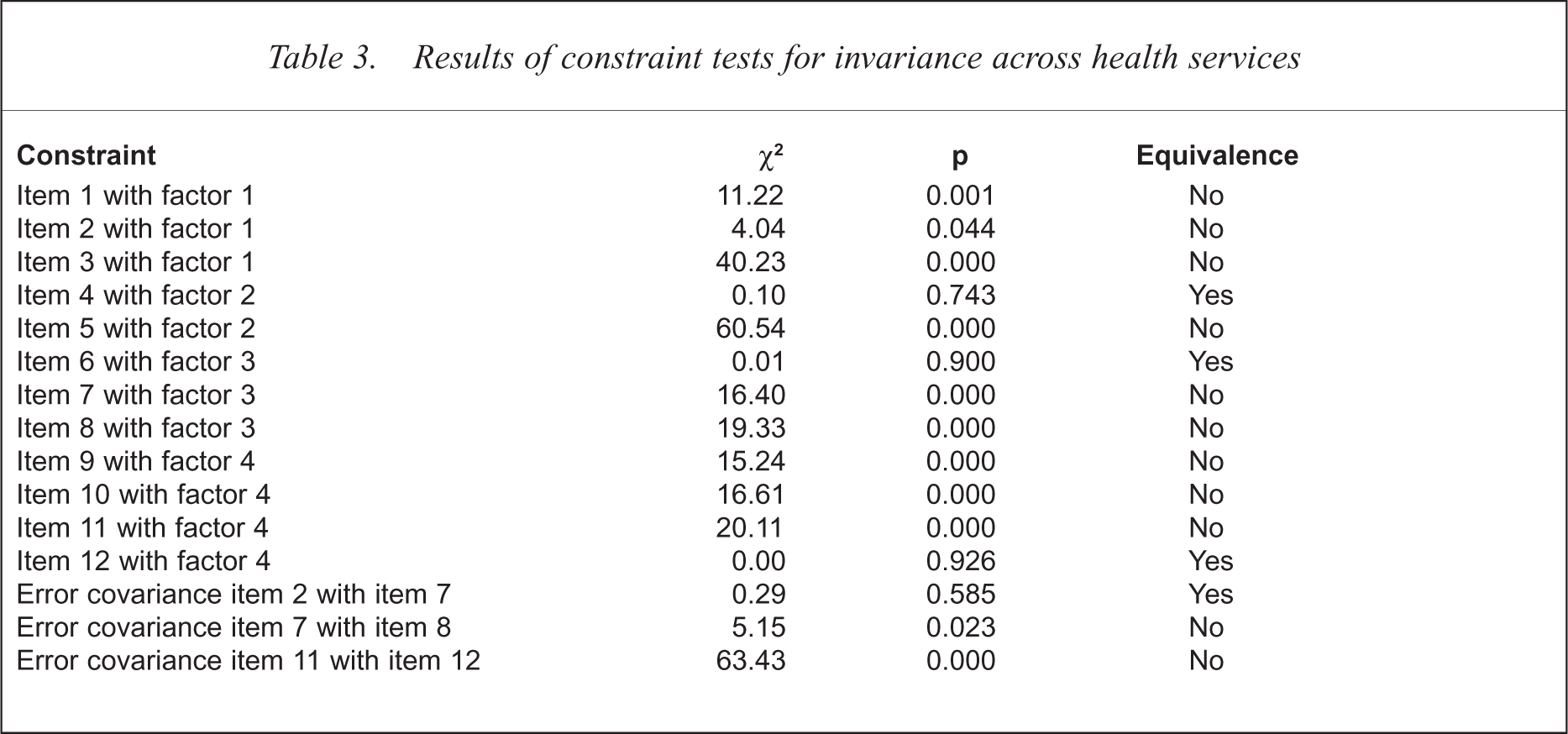

The first test centred on the investigation of the scaling units for each factor. Non-invariance of the observed variables on their respective factors suggests that the items are differentially valid across groups [28]. Testing for invariance involved constraining the loadings of the observed items on their respective factors, and the respecified error covariances mentioned previously, to be equal across the two health regions. In all, 15 constraints were specified: the 12 items on their respective factors and the three respecified error covariances. The change in Chi-squared values reported in Table 2 reached significance, indicating non-invariance between the two health regions. Of the 15 constraints, only four were invariant, leading to the conclusion that there was substantial differentiation in the interpretation of the items between the two health services. Such results suggest that comparisons of health services on the HoNOS may be problematic because HoNOS scores are likely to be differentially valid between health services. Of the 12 items, item 4 (cognitive problems involving memory and orientation), item 6 (problems associated with hallucinations and delusions) and item 12 (occupational and recreational activities) remained invariant between the two health services. The other items all showed significant non-invariance in interpretation (see Table 3).

Table 3. Results of constraint tests for invariance across health services

Conclusions

Factorial validity of the HoNOS was first tested using the original four-factor model prescribed by the originators of the instrument [5]. Using confirmatory factor analytic techniques for each health service independently, the model demonstrated substantial fit once the error covariances between items 7 and 8, 11 and 12, and 2 and 7 were freely estimated. The original model, along with these respecifications, was then constrained to test for factorial invariance between the two health services. Invariance of the item measurements on their prescribed factors would lend support to the generalisability of the HoNOS across similar health services. The model proved non-invariant, indicating that there was substantial differentiation between the two health services in the interpretation of the HoNOS. This non-invariance may stem from the attempt by the originators of the HoNOS to construct items that are too inclusive and over-comprehensive. In attempting to create items that are broad and comprehensive, they missed the specificity required to produce stable and invariant item interpretation. Item descriptions that allow clinical ratings to be based upon a multiplicity of observed behaviours, as evidenced in item 1, may have contributed to structural non-invariance between two similar health services. In the instance of item 1, it was difficult to determine whether the rating of the item was based on disruptive, overactive or aggressive behaviour, and clinicians making rating judgements may have interpreted the item differently. In other words, in attempting to be comprehensive, the items lent themselves to possible ambiguity in rating.

The original model mis-specification was largely due to correlated error between items 7 and 8, 11 and 12, and 2 and 7. This suggests that systematic error may have operated in both health service populations, highlighting the need to reinvestigate the relationship between the items and, in particular, the order in which the items are assessed. Because the items belonging to each factor are presented consecutively, the question arises as to whether the confirmed factor structures are due to response-set bias rather than to true observed factor loadings. By reordering the items in a randomised manner, the HoNOS could be re-examined to observe whether the four-factor model is reconfirmed. Debate continues as to whether models should be respecified with correlated error as demonstrated in this paper. Byrne and others argued that such model specifications are justified, because they ‘represent nonrandom measurement error due to method effects such as item format associated with subscales of the same measuring instrument’ [20]. Equally, freely estimating correlated errors in an attempt to make a model fit the data increases the likelihood that the model will be over-specified. What was favourable for the HoNOS is that only three error covariances were respecified, with significant gains in model fit. What was also encouraging was that none of the observed items cross-loaded, which would have required a re-conceptualisation of the factors themselves.

One shortcoming of the present study, which can also be levelled at other confirmatory factor analytic studies of instruments used in psychiatry, concerns the independence of observations [29–32]. Although independence has been ensured by including only discrete observations of a single patient, there is uncertainty as to whether the unit of observation is the patient or the observer. Some studies have tried to overcome this by including cases where ratings on the instrument were made by different raters of the same patient [30], while most studies have not provided any detail on whether each observation was rated by a unique and independent rater [32]. In one study, only five clinicians rated the observations used in the validation study [29]. The problem lies particularly in the type of instrumentation used, as all these instruments are interview-based or require expert evaluation rather than self-report. With these types of instruments, the observations can be one-to-many, where one rater observes a number of patients. This poses a serious problem for factor-analysing observational instruments: it is unclear whether the covarying structures of the instrument arise from truly independent observations of the items within the instrument, or from the predetermined constructs held by the raters. The problem with some instruments within psychiatry is that factor structures are prescribed as part of the training before rating begins [33]. It is little wonder that these structures are then confirmed in factor analysis. It is possible that the models of how clinicians observe psychopathological phenomena or, in the case of the HoNOS, the problems caused by these psychopathologies may be nested within the model of how these phenomena naturally arise. It is difficult in this instance to separate the bias of the rater from the observed phenomenon. 
When one is measuring structural invariance between health services on observational instruments, one may in fact be measuring differentially prescribed notions of psychopathology, or of the problems caused by that psychopathology, rather than psychopathology itself. One limitation of the present study is that it was not possible to test for sources of variance between the raters and the rated. Further research using a multilevel approach would enable researchers to determine whether structural invariance is a property of the instrument itself or of the way the model of the instrument is predetermined to be used.

One of the main purposes of outcome assessment is to draw conclusions from instruments as to the current status of different health services. This allows researchers to make meaningful and informed comparisons of health services based upon the domains of interest. It is important that these domains (whether they be measures of psychiatric functioning or social functioning or any other outcome) be interpreted in an equivalent manner. Confirming factorial invariance for a well-recognised instrument allows the instrument to act not only as a valid and stable measure of outcome, but also as a criterion against which other instruments can be validated. The present study casts some doubt on the ability of the HoNOS to hold equivalent interpretability across similar health services. While the measurement and structural model were confirmed, the way the model was interpreted may have varied between health services. This may be due to the way the items were constructed, the order in which they were presented and the lack of specificity in item description when ratings are made. Although it is important to develop items that are inclusive and broad in their domain coverage, this may lead to ambiguity in exactly what is being measured. Items 1, 2 and 6 within the HoNOS could be expanded to investigate separately each of the symptoms described in each item. For example, in item 1, problems with over-active behaviour may be measuring manic behaviour more than aggression, and disruptive behaviour may be a consequence of overactive behaviour rather than a symptom in itself. Including a number of causal pathways by which a problem can be assessed may lead to difficulty in item interpretation. With respect to item 6, for example, are clinicians asked to rate hallucinations or delusions or both in the same instance, and can these symptoms be successfully included together without compromising item interpretability? 
The other concern is that both health services recorded systematic correlated error between similar items. It is quite likely that responses on suicidality in item 2 will have affected ratings of depression on item 7, which in turn affect scores on other behavioural problems rated in item 8. It is the purpose of each item to measure a given phenomenon independently of other observed phenomena, despite requiring the covariation of prescribed items to measure a given latent construct. Good psychometric test construction allows for each item to be independent of the effects of other items. The problems faced with the HoNOS may lie in the merging of symptoms with behaviours, where the effects of one symptom may affect the rating of another when independence of rating is desired. This is further compounded with observational instruments, as the instrument needs to overcome the constructs of psychopathology already predetermined by the observer.

The challenge for instrument designers in psychiatry may not be to develop more instruments where many already exist, but to concentrate on the well-established instruments and thoroughly investigate their psychometric properties using such techniques as confirmatory factor analysis and structural equation modelling. A discussion needs to occur about whether observer-based instruments such as the HoNOS are as ‘expert rated’ as some may claim them to be, or are elaborate tests of predetermined social constructs of psychopathology. Identifying well-defined items and robust latent constructs will allow outcome assessment to meet the challenges and claims to its importance in psychiatry.

Acknowledgements

The author wishes to thank both the Fremantle and Bentley Mental Health Services for providing the data for the research, and Peter Sevastos, Psychology Department, Curtin University, for his technical advice on the statistical analyses.