Abstract

This article proposes a flexible and distributed stochastic automaton-based network partitioning algorithm that is capable of finding the optimal k-way partition with respect to a broad range of cost functions, and given various constraints, in directed and weighted graphs. Specifically, we motivate the distributed partitioning (self-partitioning) problem, introduce the stochastic automaton-based partitioning algorithm, and show that the algorithm finds the optimal partition with probability 1 for a large class of partitioning tasks. Also, a discussion of why the algorithm can be expected to find good partitions quickly is included, and its performance is further illustrated through examples. Finally, applications to mobile/sensor classification in ad hoc networks, fault-isolation in electric power systems, and control of autonomous vehicle teams are pursued in detail.

Introduction

Networks of communicating agents, including sensor networks and autonomous-vehicle teams, require distributed algorithms for a variety of tasks, including data communication/routing, estimation/agreement, and pattern-formation control, among others (see [1] and [2] for interesting overviews). In this article, we put forth the perspective that algorithms for network

Distributed algorithms for self-partitioning may be valuable for various sensor networking and autonomous vehicle control applications. Consider the following:

A group of autonomous vehicles in the field may need to self-assemble into multiple teams, in order to simultaneously complete multiple control tasks, e.g. search-and-destroy tasks (see e.g. [4,5] for formation-control algorithms for autonomous vehicles). The vehicles should be grouped (partitioned) in such a manner that the self-assembly takes little time, and the robots in each group can easily communicate with each other. Sensors in an ad hoc network must choose one of several base stations for communication, so as to minimize the power required for multicasting as well as the latency of transmission from the sensors back to the base (see [10] for an overview of multicasting in ad hoc networks). Further, the sensors may need to classify themselves in such a manner that all the sensors associated with a particular base station can communicate among themselves, and further the network can tolerate any single failure in a communication link. Weakly-connected subnetworks within a computer network may need to be identified, so as to isolate a spreading computer virus.

In each of these tasks, the nodes in a network must be partitioned so as to minimize the cost. Further, for a variety of reasons (including security concerns, need for low-power and hence localized communication, and possibly for topological changes that are not known by a central authority), we may require a distributed algorithm for these partitioning tasks.

While there is a wide literature on graph partitioning (which derives primarily from parallel-processing applications, see [6] for an overview), the partitioning tasks for the communicating-agent networks described above are novel in several respects:

As motivated above, the algorithms used often must be distributed, e.g. because of the high power cost of communicating with a central agent or the need for security. For the same reasons, sparsity of communication in use of the algorithm is also often a must. Further, algorithms that are scalable, i.e. ones in which the computational cost for each agent grows in a reasonable manner with the network size, are needed; distributed algorithms can permit scalability. The cost to be minimized is often a complex or multivariate one (e.g., for sensor network applications, delay, power dissipation, and reliability may each play a role in the cost), and varies from one application to another. Thus, we require algorithms that are flexible with respect to the cost minimized. This contrasts with the bulk of the literature on partitioning [7, 8, 9], in which algorithms are designed for a particular cost, typically a min-cut cost or a min-cut cost with partition-size constraints

1

. Communicating-agent networks are commonly subject to topological changes, for instance due to the addition of an agent or the failure of a particular communication link. Thus, partitions of the network may need to be adapted rapidly and frequently, ideally with minimal communication.

These novel features have motivated us to develop a distributed and flexible algorithm for network partitioning/classification.

Specifically, we introduce an algorithm for network self-partitioning (i.e., distributed partitioning) that is based on a stochastic automaton known as the influence model. The influence model can be viewed as a network of discrete-time, finite-state Markov chains, which interact in the sense that the current status (state) of each site (chain) probabilistically influences the future statuses of its neighboring sites [3]. The basic premise for using the influence model (specifically the copying influence model for partitioning graphs is that groups of sites in the model that are separated by weak influences tend to have a different statuses, while sites interconnected by strong influences tend to form a cluster with a common status. Therefore, by associating influences with edge weights in a graph, allowing the influence model to run for some time, and then examining the statuses, we can identify a good partition quickly with respect to many typical cost functions. At the same time, the algorithm randomly searches through many potential partitions, and hence holds promise for minimizing multi-objective and complex cost functions. The technique is distributed in that each site only needs to communicate with graphical neighbors to determine its own partition.

This algorithm for network partitioning builds on our earlier work on a control-theoretic approach to distributed decision-making or agreement [11] (see also [12, 13] for other control-theoretic approaches to agreement and [14] for a study of sensor fusion that addresses/motivates distributed detection/decision-making). In the context of decision-making, we used the influence model to reach consensus among nodes in a manner that reflected their initial divergent opinions about a topic of interest; here, the influence model does not generate one opinion, but instead finds low cost cuts as boundaries between multiple opinions or statuses. Also of interest to us, stochastic automata have been used as tools for routing in sensor networks (e.g. [15]), and have been used as

While our primary motivation is self-partitioning, our studies suggest that the influence model-based algorithm is also valuable for centralized partitioning problems in which multiple complicated costs must be minimized, or in which costs are implicitly found through a simulation. For instance, motivated by fault-tolerance and fault-isolation applications (e.g. [19]), we have applied the algorithm to partition an electric power system so as to minimize both a line-weight and a power-imbalance cost in isolating two generators from each other. We shall briefly explore this centralized application in the article.

The remainder of this article is organized as follows. Section 2 poses the graph partitioning problem in quite a general way, in the process overviewing commonly-studied partitioning problems and standard algorithms for solving them. Section 3 briefly reviews the influence model, on which our partitioning algorithm is based. Section 4 describes the influence model-based distributed partitioning algorithm, in particular describing the mapping from the graph to the influence model, the distributed recursion used for partitioning, and centralized/distributed means for stopping the algorithm. In Section 5, we prove that the algorithm finds the optimal partition with certainty given certain weak graph-structural assumptions, and also discuss the performance of the algorithm. In Section 6, we pursue applications and give several illustrative examples, to better motivate our approach to partitioning and to further evaluate its performance.

Since one of the features of our influence model-based partitioning algorithm is its flexibility, we begin by describing the partitioning (classification) problem in quite a general manner, but taking care to highlight sub-problems of particular interest. In the process, we give a brief review of the research on partitioning that is relevant to our development. We refer the reader to [6, 23] for thorough reviews of the partitioning literature.

Broadly, a

The partitioning problem that we consider is to classify the

Formally, we define a

where

We use the notation

A variety of partitioning problems considered in the literature are examples of the problem described above. It is worth our while to briefly discuss these problems and associated literature, focusing in particular on The The geometric partitioning algorithms based on coordinate information [29, 30] greedy search algorithms like the Kernighan-Lin algorithm [8] spectral methods (methods based on eigenvalue/eigenvector structure of matrices associated with the network graph) [7, 31], and stochastic algorithms including genetic algorithms [32, 33] and simulated annealing [9, 34]. In some applications, the exact mass constraint of bisection is not needed, yet it is useful to have subsets of roughtly equal or mass size. For such applications, a Sometimes, an application dictates that one of the above problems (or another problems in several distributed applications—for instance, the problem of grouping sensor nodes with base stations for multicasting—have this form these problems are known to be NP-hard for our algorithm is naturally designed to address this problem and hence gives fast solutions to the problem.

There is a wide literature on algorithms for solving these partitioning problems (see, e.g., the review articles [6, 23, 29]). A thorough review of this literature is far beyond the scope of this article, but lets us attempt to briefly summarize this work with the aim of delineating our approach from that in the literature. Most of the current partitioning algorithms are aimed at solving a particular problem (perhaps most commonly the bisection problem) in a centralized manner. For example, spectral methods have been used for min-cut and bisection problems, while SA, GA, and K-L are designed specifically for bisection [32, 9, 8]. In constrast to these methods, our applications motivate us to seek an algorithm that can find the optimal partition for a range of cost functions, even if perhaps at slightly higher computational complexity.

The algorithms in the literature that are stochastic (e.g., GA and SA) are of interest to us [6], since our algorithm is also stochastic. Very broadly, our algorithm is similar to these in that it searches randomly through plausible partitions, using the uncertain generation to seek more optimal partitions. However, our algorithm is significantly different from those in the literature, in that it does not react to the cost of the current partition: instead, its update is based solely on the graph topology. This topological approach has the advantage of permitting flexibility in the optimized cost, and (as we shall show) of allowing identification of the minimum-cost solution with probability 1.

Perhaps most significantly, we contribute to this broad literature by developing an algorithm for distributed or self-partitioning, i.e. an algorithm in which agents associated with graph vertices can decide their optimal partitions based solely on communication with neighboring agents. To the best of our knowledge, there have been no other algorithms developed that achieve partitioning without any global perspective at all in the graph.

Our algorithm for partitioning is based on evolving a stochastic automaton model. Specifically, we map a graph to a dynamic

An influence model is a network of Each site Site

We shall only be concerned with a special case of the influence model called the copying

The influence model and copying influence model are compelling as modeling and algorithmic tools because they have a special quasi-linear structure. In general, for stochastic network models such as the influence model, we note that the statuses of all sites together are updated in a Markovian fashion, and hence the joint status of all sites are governed by a very large “master” Markov chain with

Furthermore, the special structure of the influence model permits us to identify qualitative features of the master Markov chain based on the low-order recursions. These special tractabilities of the influence model make it possible to characterize the performance of algorithms built using the model, such as the algorithm developed here.

We can use the copying influence model as a tool for solving the partitioning problem described in Section 2 under rather broad conditions. Furthermore, since the influence model update only requires interaction among graphical neighbors (in a sense that will be made precise shortly), the algorithm is essentially decentralized (though a bit further effort is needed to

We map the graph to a copying influence model with

where Δ is chosen such that

In addition to the above direct interpretation, we can also give a linear systems-based interpretation for the mapping. In particular, we can show that the status-probability recursion (Equation 1) of the developed influence model is a discretized version of a certain linear differential equation defined on the graph. This linear system viewpoint is valuable because it indicates the close connection of our algorithm with some typical network dynamics, and because it can potentially permit an analytical connection of our algorithm with spectral partitioning algorithms. From the linear system viewpoint, the parameter Δ can be interpreted as the discretization step. More generally, Δ should be chosen large enough to achieve a fast convergence rate. We have specified the upper bound to guarantee that all the influence model parameters are valid.

In many decentralized and centralized applications, we envision this mapping stage as being done

Let us first develop an algorithm for

(In a distributed context, notice that we only require that the reference nodes know their own identities to implement this initialization).

To generate a good partition, we then update the copying influence model. The state at each time-step of the recursion identifies a partition of the graph: that is, we classify the nodes whose associated sites are in status

In practice, we must develop a methodology for stopping the algorithm. Below, we discuss distributed and centralized approaches for stopping. The stopping methodologies seek to select low-cost partitions, while checking possible algebraic constraints. We shall show that appropriate stopping criteria permit identification of the optimal solution under broad assumptions, with probability 1.

Conceptually, one might expect this partitioning algorithm to rapidly identify low-cost partitions, because strongly-connected sites in the influence model (sites that strongly influence each other) tend to adopt the same status through the influence model recursion 4 , while weakly-connected sites do not influence each other and hence maintain different statuses. Recalling that the influence strengths reflect edge weights and node masses, we thus see that the partitions identified by the model typically have strongly-connected subsets with weak cuts between them. For many typical cost functions, the optimal partition comprises strongly-connected subsets with weak links, and hence we might expect the algorithm to find good cuts quickly.

For

A few further notes about the recursion are worthwhile:

For simplicity of presentation, we have considered a discrete-time update, and hence a distributed implementation of the recursion in a network nominally requires a common clock for the agents in the network. However, we can equivalently use an update in which each site updates its status at random times (specifically, according to a Poisson arrival process); the recursion in this case is amenable to the same analyses as the recursion described here, and hence can be shown to achieve optimal partitioning. Regarding scalability in a distributed setting, we note that each agent in a network only needs to randomly select a neighbor and poll that neighbor at each time-step to implement the recursion, so the processing/communication per time step does not increase with the size of the network. The total processing/communication cost thus scales with the duration of the recursion. In the next section, we give an argument that the scaling of the algorithm's duration with the size of the network is good compared to other partitioning algorithms in many cases. In some applications, we may already have one partition of a graph, and may wish to improve on this partition (with respect to a cost of interest) or to adapt the partition to changes in the graph. In such cases, we can speed up the recursion by initializing the influence model according to the original partition.

Again, consider the

For distributed problems, it is unrealistic that a single agency can evaluate the global cost of a partition as in the centralized case, since each node only has available local information. A simple strategy in the distributed case is the blind one: the algorithm can be stopped after a finite number of time-steps, where this number is based on the convergence properties of influence models. A more complex strategy is to distributedly compute the cost at each stage using an agreement protocol (see, e.g. [12]).

Another clever strategy for distributed stopping is to use an influence model with state-dependent parameters. In particular, we progressively isolate (reduce the influence) between sites with different statuses after each update (and increase the self-influence correspondingly), until the influence model is disconnected (partitioned). More specifically, for each update, the (time-varying) influence If If If

When this time-varying algorithm is used, we note that the statuses of sites converge asymptotically (see Fig. 1). This is because the influence model becomes disconnected, so that each partitioned component has only one injecting site and is guaranteed to reach consensus. Thus, a partition is found asymptotically. Furthermore, it is reasonable that this algorithm finds a good partition, since weak edges in the original graph tend to have different statuses at their ends in the influence model, and hence these edges are removed by the algorithm. We refer to this strategy as partitioning with adaptive stopping.

This diagram illustrates how a network partitions itself (based on the update of the time-varying copying influence model) in a totally distributed manner.

In this section, we prove that the influence model-based partitioning algorithm finds the optimal solution when either centralized or decentralized stopping is used. Specifically, we show that the influence model algorithm identifies the optimal solution with probability 1, given that the optimal solution satisfies certain broad connectivity conditions (which, as we show, is automatic for several common partitioning problems). The (quite-weak) connectivity conditions required of the optimal solution are based on the requirement that the influence model must be able to distribute a single status to all sites corresponding to a particular subset, from a particular

Before presenting results on the algorithm's ability to find optimal partitions, let us begin by formally defining source vertices, so that we can formalize the connectivity conditions required of the optimal:

We are now ready to present the main result on the algorithm's ability to obtain the optimal solution. We assume throughout this development that the partitioning problem of interest to us has at least one feasible solution. We first give conditions under which the algorithm can reach the optimal solution for a partitioning problem with reference nodes.

In showing that the optimal state can be reached, let us limit ourselves to updates in which sites determine their statuses from other sites in the same partition in the optimal solution—only such updates are needed. Now consider a single subset in the optimal solution. Let us call the reference vertex in the subset

We note that this proof is closely related with the proof characterizing the asymptotics of a

We have thus shown that the algorithm can solve the partitioning problem for a wide variety of cost functions, specifically ones in which the optimal solution has the described connectivity condition. We stress that the connectivity condition—namely, the existence of paths from each reference vertex to the other vertices in its subset—is quite weak: connectedness of the subsets in the (directed) graph is sufficient but not necessary for the connectivity condition to hold. In fact, for a range of distributed applications (for instance, for multicasting in mobile networks or tracking using autonomous-vehicle teams), such connectivity may automatically be required or desired since we need agents/nodes in each identified subset to subsequently communicate among themselves.

Let us next formalize that the algorithm can be used to find optimal solutions for the

We have noted that Theorems 5.1 and 5.2 require the optimal solution to have a particular weakly-connected structure to guarantee its identification. Of course, the optimal partition is not known

Theorems 5.1, 5.2, and Corollary 5.3 show that an optimal solution is identified with probability 1 (i.e. the influence model passes through an optimal solution), given that this solutions satisfies the appropriate connectivity conditions. However, we have not yet shown that the algorithm will stop at the optimal solution with certainty. The following two theorems show that our partitioning scheme is successful when the centralized and distributed stopping criteria are used, respectively.

Partitioning with distributed stopping (in particular, partitioning with adaptive stopping) is quite a bit more complicated to analyze than the centralized algorithms, because the parameters of the influence model are changing in reaction to the site statuses. Here, we formalize that the partitioning-with-adaptive-stopping algorithm is able to solve the min-cut

Here is the formal result, with proof:

To do so, we construct a new influence network

Considering

Now that we know for

We have thus shown that our algorithm can find the optimal partition in both a centralized and a distributed manner, under broad conditions. Next, it is natural to characterize or test the performance of the algorithm: of course, any algorithm that searches through all possible partitions can find the optimal one, so an algorithm such as ours is useful only if it can find the optimal solution quickly compared to a combinatorial search. Although we leave a full analytical treatment of the algorithm's performance for future work, we give here a conceptual discussion of why the algorithm is fast, and also evaluate the performance of the algorithm in examples in the next section.

The

We have recently obtained an analytical justification for the performance of the algorithm. Specifically, we can show that, on average, the algorithm solves the

Applications and Examples

In this section, we briefly introduce several potential applications of our algorithm, and also present canonical examples that illustrate or enrich aspects of our analytical development. The applications and examples together are meant to further motivate the described algorithm.

Application 1: Classification for Multicasting in Ad Hoc Networks

Distributed partitioning holds promise as a tool for classification in distributed sensor networks and mobile ad hoc networks, e.g. for the purpose of multicasting or of transmitting information from the sensors/mobiles back to “leader nodes” or base stations or central authorities.

There is a wide literature on

Several recent articles have addressed multi-hop multicasting in ad hoc networks (see e.g. [10]). In multicasting applications as well as other settings where data may be transmitted to/from several sources or base stations, classification of mobiles/sensors with the base stations is important. We contend that the influence model-based partitioning tool can advance the state-of-the-art on classification in ad hoc networks, for several reasons:

As made clear by the comparison of location-known and location-unknown algorithms for routing, decentralized algorithms for classification may be needed in cases where there is no central authority with full knowledge of the network. Even if the classification is done in a centralized manner, only partial information may be known about the network. For instance, the distances between sensors/mobiles or at least the connection topology may be known, but the exact locations of each sensor/mobile may not. Conversely, the exact locations may be known, but the connection topology may be unknown. The mapping from the graph to the influence model, and the influence model update itself, are based on local information and hence our partitioning method is suited for this setting. We may need to optimize the classification with respect to several (possibly complex) cost criteria (including for example minimum (or average) hops to each base station, average delay cost, and various reliability criteria). In fact, the costs may depend on the specifics of the decentralized algorithm used for routing/multicasting. The influence model-based algorithm permits us to consider multiple and complex cost criteria. Often, the topologies of sensor networks and mobile ad hoc networks change with time, and hence it is beneficial to use an algorithm that can update the optimum with little effort (in either a distributed or centralized case). The influence model-based algorithm has this advantage.

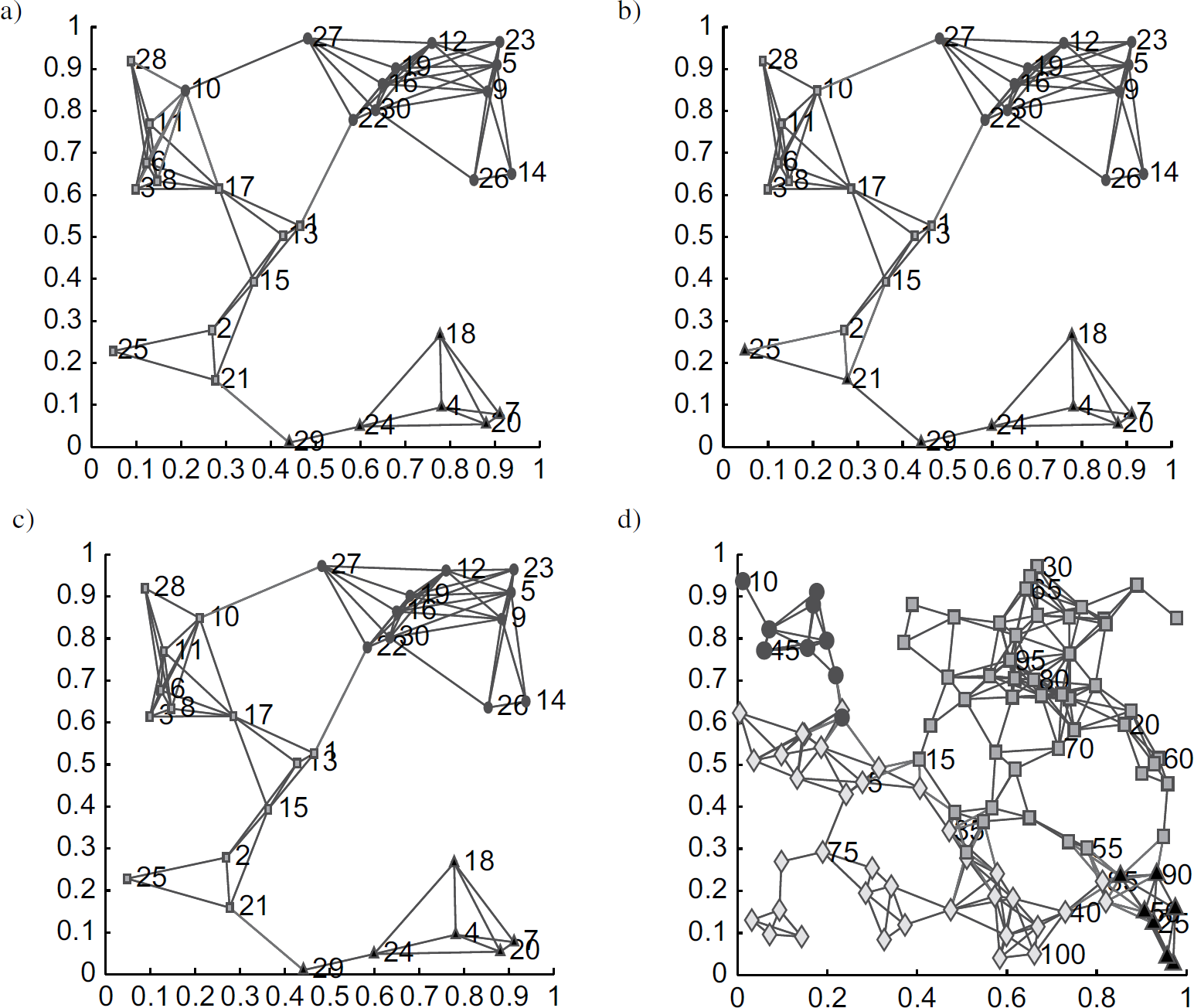

For illustration of this application, we have used the influence model-based algorithm for sensor classification in a small example (one with 3 base stations and 27 sensor nodes). The example was generated by placing the 30 sensors in a uniform i.i.d. manner within the unit square, allowing communication between sensors within 0.3 units of each other, and choosing three sensors (Sensors 3, 14, and 20) to also serve as base stations. We associate a weighted undirected graph with the sensor network in which the 30 vertices correspond to the 30 sensors, and the branches indicate communication between sensors. Each branch weight is chosen to be inversely proportional to the distance between the pair of sensors, with the motivation that longer communication links are more apt to failure and delay and hence are more weakly connected. We consider 3-way partitioning of this graph with reference vertices 3, 14, and 20 using the influence model algorithm.

We consider partitioning with centralized stopping, with respect to two cost functions:

First, we partition the graph so as to Our second cost measure is motivated by consideration of low-cost and low-overhead distributed routing for ad hoc and sensor networks. A simple greedy algorithm for routing when location information is available is to send the message to the node (sensor) closest to the destination during each transmission (see e.g. [40]). Assuming such a greedy routing algorithm is used, our aim is to classify the sensors with base stations so that the maximum number of hops to a sensor from its base station is minimized. (The average number of hops could be used instead.) Thus, we partition the graph using this maximum number of hops when greedy routing is used. The optimal partition when this

Partitioning a 30-sensor network with reference nodes a) based on a minimum-subgraph-eigenvalue cost, b) based on a greedy-routing cost, and c) with distributed stopping. We also partition a 100-sensor network based on a minimum-subgraph-eigenvalue cost (d).

We have also considered min-cut partitioning with distributed (adaptive) stopping for this example. The result is shown in Fig. 2. We note that such a distributed algorithm could be implemented in the sensor network itself, and would only require individual sensors to have local parameters (in particular, distances to neighbors). Such a distributed algorithm might be especially useful in cases where the topology is subject to change, so that the sensors must re-classify themselves periodically.

As further illustration, we also show a 4-way partition with reference nodes of a 100-sensor network in Fig. 2.

We believe that our algorithm potentially has significant application in power system analysis, because of its flexibility. One potential application is for

We consider partitioning the Standard 14-Bus IEEE Test System, for the purpose of isolating a disturbance at Bus 6 from the slack bus 1. The upper figure shows the cut susceptance and generator-load imbalance for a sequence of 250 partitions generated by our influence model algorithm. The lower figure highlights that the minimum-cost partitions according to these two criteria are different. In the lower figure, the solid line indicates the minimum susceptance cut while the the dashed line indicates minimum generator-load imbalance.

Because our algorithm can track multiple costs, we believe that it can potentially enhance the recent approach to islanding suggested in [19], which is slow-coherency (equivalently, spectrally) based. More generally, we believe that the flexibility offered by our partitioning algorithm may be valuable for various model-reduction tasks in power system analysis, as well as other network-centric tasks such as the identification of line trips of minimal cardinality that make infeasible the power flow solution.

Our distributed partitioning algorithm provides a means for networked autonomous vehicles to classify themselves. For instance, say that a network of communicating autonomous vehicles seeks to form two teams, each of which must congregate at a convenient location in space. Our self-partitioning algorithm can be used to identify groups of autonomous vehicles that are close to each other, and so to form the teams, in a completely distributed manner. We note that using partitioning in this context permits task dynamics in autonomous-vehicle applications, for which the role played by each agent actually depends on its initial position (state). In the interest of space, we do not pursue this application in detail here.

Canonical Example 1: Performance Simulations

We explore the performance of our algorithm by pursuing min-cut partitioning with reference nodes on a seven-node circuit. Figure 4 illustrates the mapping between the circuit's conductance graph and an influence model. It is worth noting that the mapping has a meaningful dynamic interpretation in this case: the expected dynamics of the influence model is a discretization of the dynamics of a circuit with the specified conductances and unit capacitors from each node to electrical ground.

Seven-node circuit and its influence model: Conductance values of

Our algorithm is guaranteed to find the optimal cut. Table 1 shows the performance of our algorithm, in terms of the average number of time-steps needed to reach this cut, for several values of the cut strength and discretization time-step. We note that the expected number of time-steps needed to reach the optimal cut is dependent on the strength of the optimal cut: the weaker the cut, the faster the algorithm.

The Average Steps to First Reach the Partition State with Respect to Δ and ɛ Based on 1000 Sample Runs

We have compared our algorithm with spectral methods for this circuit example. When the weak cut is between nodes 2 and 3 and nodes 4 and 5, both the spectral method and our algorithm find the min-cut partition for all 0 ε <1. We notice that our algorithm requires 5 random number generations and 5 copying actions (communications in a decentralized setting) per time-step, and requires between 5 and 10 time-steps for a good choice of Δ and 0 ∊ 1. In comparison, a simple implementation of the spectral method requires on the order of 73 additions and multiplications. We notice that the expected computational cost of our algorithm depends on the strength of the cut, in contrast to the spectral algorithm. Interestingly, if the size-∊ cuts are placed between node 1 and nodes 2 and 3 instead, the spectral method only finds the weak cut for ∊ 0.26, while our algorithm obtains the optimal solution for all ∊ (albeit at higher computational cost).

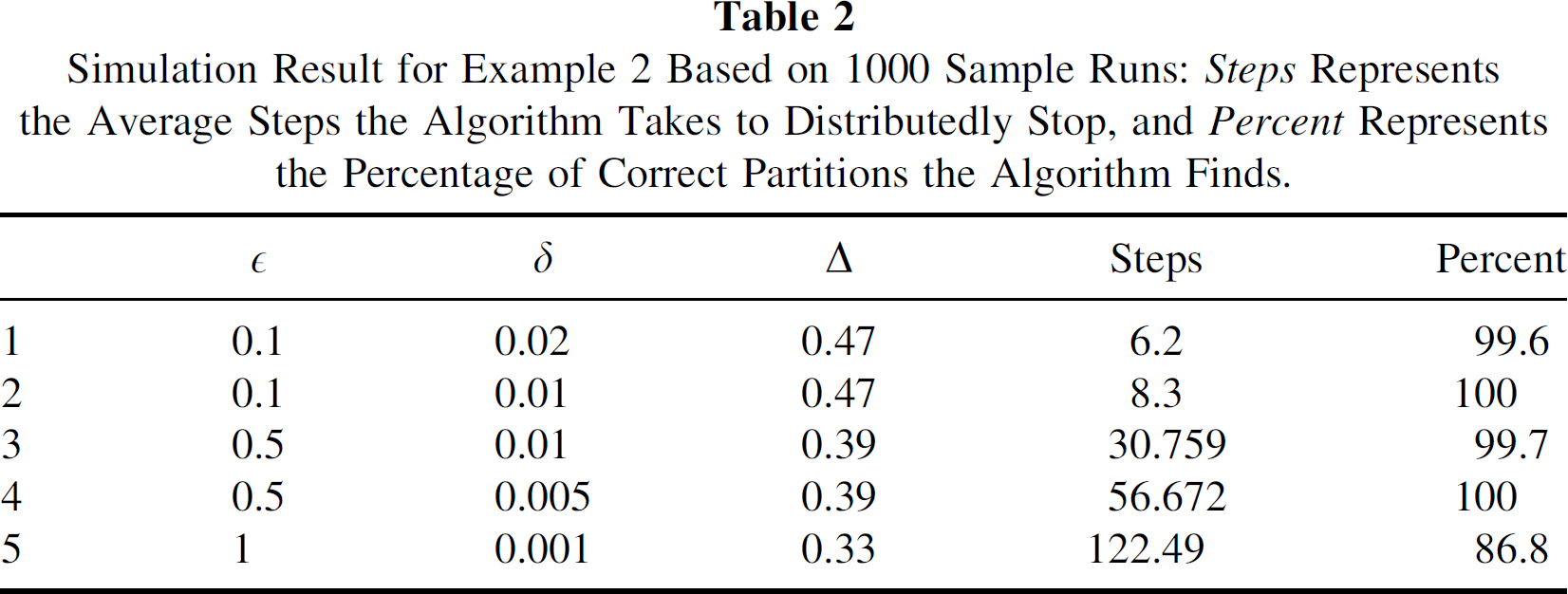

Recall that distributed stopping of our algorithm is achieved through reduction of influences. In this case, the algorithm's ability to obtain the minimum cut is predicated on choosing a sufficiently small influence reduction size. In this example (see Fig. 5), we have characterized the percent of time that the correct partition is found, for several cut strengths and influence reduction sizes. In each case, we have also determined the expected number of iterations until the algorithm stops (see Table 2 for the results). The simulation results indeed show that the optimal cut is found with certainty, when the influence reduction size is chosen sufficiently small. We note that the time required to find the optimal partition increases as the influence reduction size is decreased.

Example for distributed partitioning.

Simulation Result for Example 2 Based on 1000 Sample Runs:

Several directions of future work are worth discussing:

Footnotes

Acknowledgements

The second author thanks Professor George C. Verghese of the Massachusetts Institute of Technology for several illuminating conversations on influence model-based partitioning. The second and third authors were partially supported by the National Science Foundation under Grant ECS-0528882 (Sensors), and the third author was also partially supported by the Office of Naval Research under Grant N000140310848.

1

2

We can in fact allow a far more general cost function, e.g. one that depends on dynamics defined on the graph. We adopt this form here for clarity in our explanation of why our algorithm is expected to work well.

3

For notational convenience, we focus on the case where there is one reference node per component, but our development can straightforwardly be generalized to cases where the number of partitions is different from the number of references.

4

5

We notice a maximization problem can routinely be converted to a minimization by choosing a cost that is the negative of the original cost.