Abstract

The recent success of deep neural network techniques in natural language processing relies heavily on the so-called distributional hypothesis. We suggest that the latter can be understood as a simplified version of the classic structuralist hypothesis, at the core of a programme aiming at reconstructing grammatical structures from first principles and corpus analysis. Then, we propose to reinterpret the structuralist programme with insights from proof theory, especially associating paradigmatic relations and units with formal types defined through an appropriate notion of interaction. In this way, we intend to build original conceptual bridges between computational logic and classic structuralism, which can contribute to understanding the recent advances in NLP.

Keywords

The triumph of distributionalism

The past decade has witnessed the success of deep neural networks (

The debate in this regard has been mostly governed by the revived alternative between connectionist and symbolic approaches. Significantly, both perspectives covet the same battlefield of the ‘human mind’ as the object and the source of epistemological enquiry, thus centring the discussion around the validity of

Interestingly, performance improvements in the treatment of given tasks have been commonly brought about by the substitution of one network architecture by another. Yet, those models differ by significant features – customarily named, still owing to a metaphorical perspective, after cognitive faculties such as ‘perception’ (eg. MLP), ‘memory’ (eg. LSTM) or ‘attention’ (eg. Transformer). This variety of architectures prevents us from attributing to any one of them a decisive epistemic capacity with respect to general linguistic phenomena. Accordingly, devoid of the specifics by which each algorithm organizes the internal representation of the input data,

Now, if we take our eyes off their strictly technical aspects and the metaphors that usually surround their epistemic claims, it is possible to see that all those models, insofar as they take natural language as their object, share a unique theoretical perspective, known as the distributional hypothesis. Simply put, this principle maintains that the meaning of a word is determined by, or at least strongly correlated with, the multiple (linguistic) contexts in which that word occurs (its ‘distribution’) 1 .

As such, a distributional approach is at odds with the generative perspective that dominated linguistic research during the second half of the 20th century. Indeed, the latter intends to account for linguistic phenomena by modelling linguistic competence of cognitive agents, the source of which is thought to reside in an innate grammatical structure. In such a framework, the analysis of distributional properties in linguistic corpora can only play a marginal role, if any, for the study of language.

2

By referring the properties of linguistic units to intralinguistic relations, as manifested by the record of collective linguistic performance in a corpus, the distributional hypothesis imparts a radically different direction to linguistic research, where the knowledge produced is not so much about cognitive agents as about the organization of language. It follows that, understood as a hypothesis, distributionalism constitutes a statement about the nature of language itself, rather than about the capacities of linguistic agents. Hence, if the success of

Linguistic distributionalism is far from new. As often recalled in the recent NLP literature, the distributional hypothesis finds its roots in the decades preceding the emergence of generative grammar, in the works of authors such as J. R. Firth (1957) or, more significantly before him, Z. Harris (1960, 1970a). It can be argued that this classical work in linguistics was chiefly theoretical for, although classical distributional methods provided some formal models (by the standards of that time) and even some computational tests on specific aspects of linguistic structure (cf. Harris 1970b, 1970c), they were not generally applied to real-life corpora at a significant scale.

And yet,

Although produced very differently from the dense vector representations of previous matrix models, neural word embeddings rely on the same distributional phenomenon. Indeed, it has been shown that such word embeddings encode a great amount of information about word co-occurrence (Schnabel et al. 2015). More significantly, in a series of papers following the introduction of neural word embedding models, Levy and Goldberg (2014b) showed that one of the most successful of them, the Skip-gram model (Mikolov et al. 2013), was performing an implicit factorization of a (shifted) pointwise mutual information word-context matrix. What is more, the authors were able to achieve performance comparable to that of neural models by transferring some of the latter's design choices and hyperparameter optimizations to traditional matrix distributional models (Levy and Goldberg 2014a; Levy, Goldberg, and Dagan 2015).
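To make the notion of a shifted pointwise mutual information word-context matrix more tangible, the following minimal Python sketch builds such a matrix from a toy corpus, with entries max(PMI(w, c) - log k, 0), and factorizes it with a truncated SVD. The corpus, the window size, the shift k, the dimension d and the function names `sppmi_matrix` and `svd_embeddings` are illustrative assumptions. The sketch shows an explicit matrix counterpart of the kind discussed above, not the Skip-gram training procedure itself.

```python
import numpy as np

def sppmi_matrix(sentences, window=2, k=1):
    """Return (vocab, M) with M[i, j] = max(PMI(w_i, c_j) - log k, 0)."""
    vocab = sorted({w for s in sentences for w in s})
    index = {w: i for i, w in enumerate(vocab)}
    counts = np.zeros((len(vocab), len(vocab)))
    # Count word-context pairs inside a symmetric window.
    for s in sentences:
        for i, w in enumerate(s):
            lo, hi = max(0, i - window), min(len(s), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[index[w], index[s[j]]] += 1
    total = counts.sum()
    p_w = counts.sum(axis=1, keepdims=True) / total  # word marginals
    p_c = counts.sum(axis=0, keepdims=True) / total  # context marginals
    with np.errstate(divide="ignore", invalid="ignore"):
        pmi = np.log((counts / total) / (p_w * p_c))
    shifted = pmi - np.log(k)
    shifted[~np.isfinite(shifted)] = 0.0  # unseen pairs are set to 0 (assumption)
    return vocab, np.maximum(shifted, 0.0)

def svd_embeddings(M, d=2):
    """Rank-d word vectors obtained from a truncated SVD of M."""
    U, S, _ = np.linalg.svd(M)
    return U[:, :d] * np.sqrt(S[:d])

# Hypothetical toy corpus, for illustration only.
toy_corpus = [
    ["the", "cat", "sleeps"],
    ["the", "dog", "sleeps"],
    ["a", "cat", "runs"],
    ["a", "dog", "runs"],
]
vocab, M = sppmi_matrix(toy_corpus, window=2, k=2)
E = svd_embeddings(M, d=2)
```

Each row of `E` is a dense vector for the corresponding word in `vocab`. On a corpus this small the vectors carry little information, but the pipeline has the same shape as the traditional matrix models mentioned above.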

The pioneering neural embedding models succeeded in establishing distributed vector representations as the fundamental basis for the vast majority of

Under distributions, the structure!

If we look back to its origins, it is possible to see that the distributional hypothesis constitutes, in fact, a corollary, or rather a simplified and usually semantically oriented version, of a classic and more comprehensive approach to linguistic phenomena, known as structuralism. Structuralist linguistics precedes, and at least in part includes, Harris's work, finding its most prominent American exponent in Harris's mentor, L. Bloomfield (cf. Bloomfield 1935), while its European roots go back to the seminal work of F. de Saussure, at the beginning of the 20th century, further developed by authors such as R. Jakobson and L. Hjelmslev (cf. Hjelmslev 1953; Saussure 1959; Hjelmslev 1975; Jakobson 2001).

Like distributionalism, structuralism is above all a theory about the nature of language rather than linguistic agents, based on a series of interconnected conceptual and methodological principles aiming at (and to a great extent required by) the complete description of linguistic phenomena of any sort. All those principles are organized around the central idea that linguistic units are not immediately given in experience, but are, instead, the formal result of a system of oppositional or differential relations that can be established, through linguistic analysis, at the level of the multiple supports in which language is manifested. A thorough assessment of the whole set of structuralist principles falls outside the scope of the present paper. 8 However, it is worth focusing on one of those principles which represents a key component of what structuralism takes to be the basic mechanism of language, namely the idea that those oppositional relations constituting linguistic units are of two irreducible yet interrelated kinds: syntagmatic and paradigmatic.

Syntagmas and paradigms

In their most elementary form, syntagmatic relations are those constituting linguistic units (eg. words) as part of an observable sequence of terms (eg. phrases or sentences). For instance, the units

What is most striking in the organization of language are syntagmatic solidarities; almost all units of language depend on what surrounds them in the spoken chain or on their successive parts. Saussure (1959, p. 127) 10

Yet, structuralism considers another kind of relation that a linguistic unit can contract, namely associative or paradigmatic relations with all the other units which could be substituted for it at that particular position. Such units are not – and could not be as such – present in the explicit linguistic contexts of the term being considered. In the context given by our example,

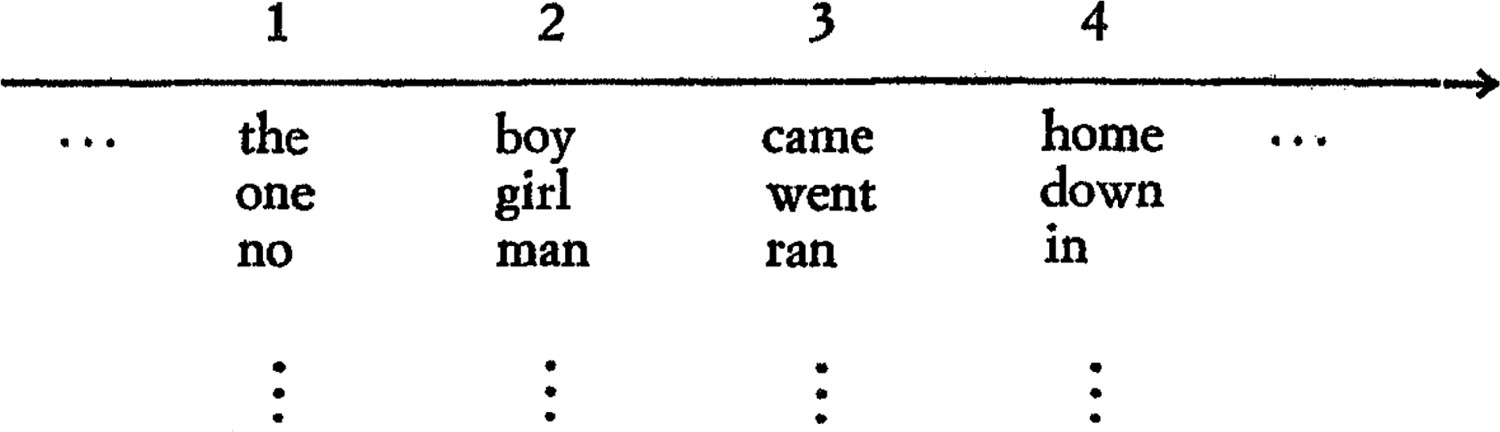

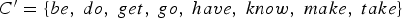

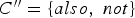

Figure 1 shows an illustration of syntagmatic and paradigmatic relations between lexical units (i.e. words) in the context of a linguistic expression, with the horizontal axis representing possible syntagmatic relations and the vertical paradigmatic ones. But such relations are not restricted to lexical units, and can be shown to hold between linguistic units at different levels, both supra and sub-lexical. For instance, following another example from Hjelmslev (1953, p. 36), from the combinations allowed by the successive paradigms {

Hjelmslev's illustration of syntagmatic and paradigmatic relations respectively represented by the horizontal and the vertical axes (Hjelmslev 1971, p. 210).

It follows that, from a structuralist point of view, the properties of linguistic units are determined at the crossroads of syntagmatic and paradigmatic relations. Now, even without entering into all the subtleties associated with this dual determination of units, 11 considering paradigmatic relations in addition to syntagmatic ones broadens the perspectives and goals of the linguistic analysis stemming from a popularized version of distributional semantics. For the paradigmatic organization revealed by the old structuralist lens can provide a much more precise account of the mechanisms involved in the relation among terms and contexts and of their effect on linguistic meaning. Indeed, if at any point of a linguistic sequence we can establish the multiple paradigmatic relations at play by providing the specific list and characterization of possible units from which the corresponding unit is chosen, a manifold of both syntactic and semantic structural features can be represented. If we agree to speak about ‘content’ instead of ‘meaning’ to circumvent the exclusive semantic import usually attributed to the latter, then we can say that paradigms help us to identify at least three different dimensions of the content of linguistic units.

Syntactic content

As for the first of those dimensions, returning to the example in Figure 1, we can see that a word like

Characteristic content

The second dimension of a unit's content revealed by paradigms is what we can call characteristic content. By being included in a class of substitutable terms, a term receives from the latter a positive characterization, given by the properties shared by all the terms in the class. In our example, all the words susceptible of occupying the place of

Informational content

If the characteristic content were the only semantic principle provided by paradigmatic relations, then the content of a linguistic unit would be indistinguishable from that of any of the members of its paradigm. At best, its meaning could only be singularized at the intersection of all the paradigms to which it belongs. Yet, the mere existence of more than one member in a paradigm is an indication of the fact that the content of those members is not identical, as subtle as the difference may be. From this perspective, the choice of a particular term within the syntagmatic chain is made at the expense of all the others in the corresponding paradigm. Not only is such a choice related to the content of the term, but it can also be understood as constitutive of it. Indeed, following the classic views of Shannon (1948), in line with those of structuralism on this point, 12 the information conveyed by a term is completely determined by its choice among a class of other possible terms. We can thus call informational content this third dimension of content which singularizes each term by contrast with all the others belonging to the same paradigm.
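As a rough illustration of informational content in Shannon's sense, the sketch below computes the surprisal of a term, -log2 of its relative frequency, first with respect to a whole vocabulary and then with respect to the restricted class of terms among which it is chosen. The words, counts and the function name `surprisal` are hypothetical and serve only to make the arithmetic visible.

```python
import math
from collections import Counter

def surprisal(term, counts, domain=None):
    """Return -log2 P(term | domain); domain defaults to the whole vocabulary."""
    domain = set(counts) if domain is None else set(domain)
    if term not in domain:
        raise ValueError(f"{term!r} is not a member of the domain")
    total = sum(counts[t] for t in domain)
    return -math.log2(counts[term] / total)

# Hypothetical frequency counts, for illustration only.
counts = Counter({"boy": 4, "girl": 4, "the": 16, "runs": 8})
paradigm = {"boy", "girl"}  # assumed class of mutually substitutable terms

bits_vocabulary = surprisal("boy", counts)           # 4/32 -> 3.0 bits
bits_paradigm = surprisal("boy", counts, paradigm)   # 4/8  -> 1.0 bit
```

The same term carries a different amount of information depending on the class against which its choice is measured, which is the point of singling out a term by contrast with the other members of its paradigm.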

Here again, we can see how the explicit derivation of paradigms can provide new perspectives compared to usual probabilistic models. For instead of computing the information conveyed by one term with respect to the entire vocabulary, paradigms restrict at each point of the syntagmatic chain the domain of terms whose distribution is relevant for such computation. In this way, the probability of a term acquires a semantic value. Take, for instance, the probability of  .

13

If this probability is compared to, say, that of

It appears that, by focusing on paradigmatic relations and units, the vague notion of meaning referred to in the distributional hypothesis can be specified into syntactic, characteristic and informational content, each of which manifests distinctive effects of contexts on words. This is not to say that meaning is entirely reducible to these three kinds of contents. Indeed, one can easily imagine others, such as referential, pragmatic or psychological contents. Yet, the three varieties revealed by the action of paradigms can very well be considered as the main dimensions of the formal content of linguistic units, that is, the content resulting from formal relations, if we understand by ‘formal’, following the young Chomsky, ‘nothing more than that it holds between linguistic expressions’ (Chomsky 1955, p. 39). This notion of formal also recalls Saussure's famous tenet that language is a form, not a substance (Saussure 1959, p. 113). And indeed, in the three varieties presented here, the content of terms is the result of oppositional relations determining differential entities, as in Saussure's account of linguistic mechanisms. Yet, in each case those relations are of a specific kind: in the case of syntactic content, the relations between classes of terms constitute paradigmatic units of different types, while the characteristic content results from differentiating paradigms irrespective of their type and the informational content emerges from differentiating singular terms within a paradigm.

It is worth insisting on the fact that, through the derivation of paradigmatic relations, the structuralist approach can capture both syntactic and semantic properties of language as the result of one and the same procedure. In this way, it recovers one of the most remarkable aspects of current distributional models, and of word embeddings in particular, which also exhibit this joint treatment of syntax and semantics (Mikolov et al. 2013; Avraham and Goldberg 2017; Gastaldi 2020). But unlike the latter, the structuralist representation of those properties is not limited to elementary probability distributions, similarity and relatedness measures or even clustering methods in the global embedding space. Relying on the derivation of paradigms, the structuralist approach promises to provide a representation of language as a complex system of classes and dependencies at different levels.

The structuralist hypothesis

The strengthening of the distributional hypothesis through structuralist methods, and especially through the derivation of explicit paradigms, entails some important conceptual consequences. To begin with, owing to the specification of the mechanisms by which linguistic context conditions the content of terms, a structuralist approach can dispense with the rather elusive notion of use supposed to be somehow reflected in the organization of language. Significantly, while resorting to such a notion of use would imply opening the linguistic model to the study of extralinguistic pragmatic or psychological aspects, the remarkable results of current distributional models do not benefit from any substantial contribution from them, other than those recorded in the corpus under analysis. Certainly, corpora are not disembodied devices unrelated to extralinguistic dimensions. Despite a general tendency to treat corpora as neutral and unbiased datasets, it is in the nature of a corpus to be an expression of concrete practices, as well as of a partial way of recording, selecting, normalizing and organizing them. However, within the limits of a corpus, those practices can only take a linguistic form. Corpus analysis is therefore entirely formal, in the double sense advanced above, that is, relying on relations between expressions only, without considering any kind of substance. 14 This is not to say that psychological or pragmatic studies are not interesting per se, or that the results of current models should not be complemented with such studies, but only that, as a matter of fact, those results do not depend on such investigations. The resort to a notion of use in most of the literature around current distributional models thus remains mostly speculative and ineffective.

In line with this situation, a structuralist viewpoint suggests that the source of linguistic content (both syntactic and semantic) is to be sought neither in pragmatic or psychological dimensions beyond language nor in any substantial organization of the world, but primarily in the fairly strict (although not closed) system of interdependent paradigms derivable, in principle, from the explicit utterances that system is implicitly governing. As Harris puts it:

The perennial man in the street believes that when he speaks he freely puts together whatever elements have the meanings he intends; but he does so only by choosing members of those classes that regularly occur together, and in the order in which these classes occur. […] the restricted distribution of classes persists for all their occurrences; the restrictions are not disregarded arbitrarily, e.g. for semantic needs. Harris (1970a, pp. 775–776)

It follows that the analysis of a linguistic corpus, inasmuch as it succeeds in deriving the system of classes and dependencies that can formally account for the regularities in that corpus, is a sufficient explanation of everything there is to be linguistically explained. This idea constitutes a key component of what can henceforth be called the structuralist hypothesis, namely that linguistic content is the effect of a virtual structure of classes and dependencies at multiple levels underlying (and derivable from) the mass of things said or written in a given language. Accordingly, the task of linguistic analysis is not just that of identifying loose similarities between words out of distributional properties of a corpus, but rather this other one – before which the latter appears as a rough approximation – of explicitly drawing from that corpus the system of fairly strict dependencies between implicit linguistic categories. If we agree to adopt Hjelmslev's terminology and call process a complex of syntagmatic dependencies and system a complex of paradigmatic ones (Hjelmslev 1975, p. 5), then the following passage from Hjelmslev's Prolegomena can be reasonably taken to express the essence of the structuralist hypothesis:

A priori it would seem to be a generally valid thesis that for every process there is a corresponding system, by which the process can be analyzed and described by means of a limited number of premisses. It must be assumed that any process can be analyzed into a limited number of elements recurring in various combinations. Then, on the basis of this analysis, it should be possible to order these elements into classes according to their possibilities of combination. And it should be further possible to set up a general and exhaustive calculus of the possible combinations. Hjelmslev (1953, p. 9)

The challenges of an emergent calculus

Notice that, in Hjelmslev's view, the ultimate goal of linguistic analysis goes beyond the pure description of the data, and pursues the derivation of an exhaustive calculus. This goal is at least partially fulfilled by current distributional models, which are intended to be applied to data outside the training corpus or to be used as generative models. But if a calculus is necessarily at work in those models once they are trained, its principles remain entirely implicit. Here too, we can see how the structuralist derivation of a (paradigmatic) system out of (syntagmatic) processes can contribute to providing an explicit representation of such a calculus, based on the particular way in which generalization is achieved through paradigms. The example in Figure 1 can offer an elementary intuition of this mechanism. If, from a hypothetical corpus made of the three expressions corresponding to the three horizontal lines of the table, we are able to derive the four paradigms A, B, C, D, corresponding to the latter's columns, and then establish some of their combinatorial properties, for instance, the capacity of composing them in the order

,

15

then the explicit calculus that starts to be drawn in this way appears as the correlate of the generalization achieved by considering all possible combinations of the members of the paradigms at their corresponding positions (such as
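The generalization mechanism just described can be sketched in a few lines of Python, under strong simplifying assumptions: the three expressions are position-aligned and of equal length, each column is taken directly as a paradigm, and the only combinatorial property considered is composition in the fixed order A, B, C, D. The words are hypothetical placeholders, not the content of Figure 1, and the function names are illustrative.

```python
from itertools import product

# Hypothetical three-expression corpus (one expression per row).
corpus = [
    ("the", "small", "dog", "sleeps"),
    ("a", "big", "cat", "runs"),
    ("this", "old", "bird", "sings"),
]

def column_paradigms(expressions):
    """One paradigm per position: the set of terms observed in that column."""
    return [set(column) for column in zip(*expressions)]

def generate(paradigms):
    """All expressions obtained by picking one member of each paradigm, in order."""
    # Members are sorted only to make the enumeration order deterministic.
    return [tuple(choice) for choice in product(*(sorted(p) for p in paradigms))]

A, B, C, D = column_paradigms(corpus)
generated = generate([A, B, C, D])
seen = set(corpus)
unseen = [expression for expression in generated if expression not in seen]
```

With three members in each of the four paradigms, the calculus yields 81 expressions, of which only 3 were observed. The remaining 78, such as `("the", "big", "bird", "runs")`, are the generalization contributed by the paradigms.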

Incidentally, under this interpretation, the structuralist programme challenges the classic distinction between connectionist and symbolic methods and its philosophical consequences (cf., for instance, Minsky (1991)). While beginning with combinatorial properties of linguistic units as raw data whose structure is only presupposed, the structuralist hypothesis aims at reconstructing an explicit and interpretable representation of the structure underlying such data, taking the form of a symbolic system at different levels (from the phonological all the way up to the grammatical or even stylistic level). From this perspective, symbolic systems implementing different aspects of algebraic structures are the direct result of the interaction of terms (including sub- or pre-symbolic ones) reflected in the statistics of given corpora. Conversely, when those symbolic systems are put into practice – in the performance of linguistic agents, for instance – the corresponding symbolic processes cannot but reproduce to a significant extent the statistical properties of the terms upon which that system was derived. Hence, from a structuralist perspective, connectionist and symbolic properties appear as two sides of the same phenomenon.

With the rather frail means of the epoch, the classic structuralist approach was able to prove its fecundity in the description of mainly phonological and morphological structures of multiple languages. However, empirical studies of more complex levels of language, and of grammar in particular, received mostly circumscribed and limited treatment. More generally, despite some valuable early efforts (Harris 1960; Hjelmslev 1975), structuralist linguistics encountered difficulties in providing effective formalized methods to describe syntactic structures in their full generality. The rise of Chomsky's generativist programme in the late 1950s pushed the structuralist approach into obsolescence, until some of the latter's intuitions were recovered in the form of distributional methods by the resurgence of empiricist approaches in the wake of the emergence of new computational techniques in the 1980s (cf. MacWhinney 1999; McEnery and Wilson 2001; Chater et al. 2015). As it turns out, the resurgence of distributional methods was mostly driven by semantic concerns, to the extent that, in the current state of the art, distributionalism has become indistinguishable from distributional semantics. Therefore, the question of a distributional calculus of a broader scope, including structural aspects of syntax no less than semantics, remains largely open.

Obstacles to paradigm derivation

As we have seen, structuralism's main strategy to tackle the problem of an explicit calculus focuses on the derivation of paradigmatic units. However, establishing paradigms is a highly challenging task outside strongly controlled and circumscribed conditions. For if, at first sight, paradigms appear as simple classes of terms, such classes have the particularity of being at the same time of an extreme precision – since the inclusion of one incorrect term would be enough to jeopardize the successful interaction of linguistic terms – and perfectly general – since paradigms may contain an indefinite number of terms, either unseen in the data upon which they were derived or even not yet existent in the language under analysis, thus virtually allowing for an indefinite number of syntagmas or linguistic processes.

Those two conditions are somewhat in tension: generality excludes any purely extensional definition of paradigms, while precision makes intensional or logical definitions particularly complex, especially considering that they are to be drawn exclusively from distributional properties. Indeed, such precision is the result of the simultaneous action of multiple restricting principles, which are realized by terms interacting within a definite context.

Take, for instance, the expression

Circularity

The first and most important of those obstacles concerns the nature of the dependencies upon which a paradigm is supposed to be established. As appears clearly in the example, the restricting principles establishing a paradigm correspond to several dependencies within the syntagmatic chain. But we also saw that such dependencies do not hold directly between terms but between classes of terms. From the point of view of paradigmatic derivation, this means that, while we can only rely on terms within the syntagmatic chain – the only ones accessible to experience – paradigms do not contract dependencies with terms but with their respective characteristic contents (for instance, with the noun and singular characters of the term

The circularity of the task is manifest: paradigms are needed to establish paradigms. Yet, this circularity is not to be attributed to the method itself, but to the very nature of its object. Indeed, from a purely internal or empirical viewpoint, what are, for instance, adjectives, other than a particular class of terms associated with nouns? And what are nouns if not something that can be modified by adjectives? These mutual dependencies are by no means restricted to syntactic classes, but pervade all levels of language: phonological (eg. vowels and consonants), morphological (eg. verb stems and inflections), semantic (eg. agents and actions), or even stylistic (eg. formal and familiar).

Hierarchical compositionality

A second difficulty defying paradigmatic derivation concerns the composite organization of the restrictions delineating a paradigm. In the example above, for instance, the context of the term

To deal with this difficulty, some sort of compositionality principle should be found. But the mere composition of paradigms is not enough either. A more subtle mechanism is needed to assess the multiple ways in which different compositional principles are capable of interacting to derive a hierarchical structure.

Internal paradigmatic structure

Finally, when establishing a paradigm, it can happen that what constitutes its unity might not be immediately evident from the list of terms it contains. In our example, the words

While the previous difficulty can be understood as concerning syntagmatic relations between paradigmatic units defining the structure of linguistic contexts, in this case we are confronted with the problem of the paradigmatic relations between (sub-)paradigms defining the structure of a paradigm containing them. The difficulty of this task resides in that, in principle, the context upon which the paradigm was derived in the first place has no explicit means to perform further discriminations within that paradigm, and it is not obvious what could be the source of those discriminations.

These difficulties have not remained unnoticed, even in the old days of structuralist research (see, for instance, Chomsky 1953). Nor are they the only ones that the derivation of paradigms can encounter, 16 most of all considering we have only presented them through extremely simple illustrations. Real-life analysis can only make this situation worse. In particular, trying to derive paradigms exclusively through corpus analysis can raise new difficulties unforeseen from a pre-computational structuralist perspective, for which the automatic processing of corpora of significant size remained after all a promising but peripheral possibility. Indeed, most of structuralism's original theoretical and methodological constructions are consciously or unconsciously conceived on the basis of linguistic data that can be produced by elicitation from an informant, if not through simple introspection. Problems like the adequacy of probability measures, scarcity of data or impossibility statements (establishing, for instance, that two terms cannot stand in a given relation) barely appear among its original theoretical concerns.

And yet, in view of the resurgence of distributional methods and the growing necessity of making explicit the mechanisms directly or indirectly responsible for that success, it seems worth readdressing those main difficulties concerning paradigmatic inference, in the perspective of the renewal of those methods in this new setting. For the structural features current models have been shown to grasp, if only implicitly, are an indication that such difficulties can be overcome.

Towards a type-theoretical emergent calculus of language

Despite their mainly semantic orientation,

Paradigms as types

If we accept that paradigm derivation is a promising strategy to provide a representation of implicit structural features, then we should adopt an analytic framework where we can tackle the obstacles presented in the previous section. Now, all of those obstacles revolve around the idea that paradigmatic units do not pre-exist the dependency relations they contract with other units of the same kind. Therefore, the intended framework should address the dynamic establishment of dependencies as constitutive of its elementary classificatory objects. For this reason, we propose to represent paradigms as computational types.

The idea of representing linguistic phenomena through types is not new (Lambek 1958; McGee Wood 1993; Moot and Retoré 2012; Fouqueré et al. 2018). Moreover, the recent success of

Within the type-theoretical tradition stemming from the study of the correspondence between logical proofs and computational processes (i.e. the Curry-Howard correspondence; cf. de Groote 1995), a singular research programme originating in French proof-theory brings to the fore a notion of interaction upon which the types of a system are built from an intricate web of dependencies (Girard 1989, 2001; Krivine 2010; Miquel 2020). This original perspective offers a powerful framework which could be mobilized to address the difficulties associated with the derivation of paradigms as more than simple classes, since not only derived types, but also atomic ones can be conceived as resulting from one and the same procedure, and are endowed with an internal structure that contains traces of the principles of their mutual relationships 17 .

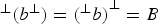

In this approach, types are conceived as sets that are closed under operations resulting from a notion of successful interaction defined at the level of their elements. More precisely, given a set A of elements of some ambient set, we can consider its orthogonal, the set $A^{\perp}$ containing all the elements of the ambient set that successfully interact with the elements of A, for a given notion of successful interaction. Types are then defined as exactly the sets that are orthogonal to a set, and for any given set A, it is possible to construct a type that includes it by considering its bi-orthogonal (i.e. $A^{\perp\perp}$), which is fully defined by A. As a consequence, all the elements of a type constructed in this way are characterized by a common interactive behaviour with respect to all the other elements in the ambient set, whose behaviour is, in turn, represented by their respective types. 18
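The construction just described can be made concrete on a finite set. The following is only a minimal sketch under stated assumptions: the function names (`orthogonal`, `biorthogonal`, `is_type`) are hypothetical, the interaction is assumed to be symmetric, and the toy interaction (two integers interact when they are coprime) is chosen purely for illustration and has no linguistic content.

```python
from math import gcd
from typing import Callable, FrozenSet, Hashable, Iterable

Element = Hashable
Interaction = Callable[[Element, Element], bool]


def orthogonal(subset: Iterable[Element],
               universe: Iterable[Element],
               interacts: Interaction) -> FrozenSet[Element]:
    """Elements of `universe` that interact successfully with every element of `subset`."""
    members = tuple(subset)
    return frozenset(y for y in universe
                     if all(interacts(x, y) for x in members))


def biorthogonal(subset: Iterable[Element],
                 universe: Iterable[Element],
                 interacts: Interaction) -> FrozenSet[Element]:
    """Orthogonal of the orthogonal: the type generated by `subset`."""
    universe = tuple(universe)  # materialize so it can be traversed twice
    return orthogonal(orthogonal(subset, universe, interacts), universe, interacts)


def is_type(subset: Iterable[Element],
            universe: Iterable[Element],
            interacts: Interaction) -> bool:
    """With a symmetric interaction, a set is a type exactly when it equals its bi-orthogonal."""
    members = frozenset(subset)
    return members == biorthogonal(members, universe, interacts)


# Assumed toy interaction (symmetric): two integers interact when they are coprime.
def coprime(x: int, y: int) -> bool:
    return gcd(x, y) == 1


UNIVERSE = range(1, 11)
A = {2}
A_orth = orthogonal(A, UNIVERSE, coprime)      # the odd numbers between 1 and 10
A_biorth = biorthogonal(A, UNIVERSE, coprime)  # 1 and the powers of two up to 8
```

In this toy setting the set {2} is not itself a type, but its bi-orthogonal is one: it gathers every element that behaves like 2 with respect to all the elements with which 2 interacts.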

To the best of our knowledge, this approach to types through interaction has not yet been applied to the treatment of natural language in a way that can contribute to the intelligibility and development of current NLP methods. However, the capabilities exhibited by this framework suggest that a proper interpretation of interaction within natural language can help develop current distributional methods in the direction established by the structuralist hypothesis, by addressing the challenges that the latter faces. In the rest of this article, we indicate how the obstacles presented in the previous section could find a suitable treatment from this perspective.

Circularity as (Bi-)Orthogonality

By understanding types as sets which are the orthogonal of other sets, types are conceived as the sets that are stable under the operations resulting from correct interaction. Hence, the circularity intrinsically involved in the extraction of paradigms is here embedded in the formal definition of types through orthogonality. Types are exactly the fixed points of this circularity, whose construction is inseparable from their dependencies on other types. That A is a type means nothing more (and nothing less) than that there is a certain dependency (captured by a notion of interaction) between the terms in A and other classes of terms, which can, in turn, be constructed as other types, thanks, among others, to the action of A.
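The sense in which types are fixed points can be spelled out as follows. This is a sketch under assumptions that are not in the text: the ambient set is given the hypothetical name $E$, and successful interaction is taken to be a symmetric relation, written $x \perp y$.

```latex
\begin{align*}
  A^{\perp} &= \{\, y \in E \mid \forall x \in A,\ x \perp y \,\}
      && \text{(orthogonal of } A \subseteq E\text{)} \\
  A &\subseteq A^{\perp\perp}
      && \text{(every set is included in its bi-orthogonal)} \\
  A \subseteq B &\implies B^{\perp} \subseteq A^{\perp}
      && \text{(orthogonality reverses inclusion)} \\
  A^{\perp\perp\perp} &= A^{\perp}
      && \text{(every orthogonal is its own bi-orthogonal)}
\end{align*}
```

Under these assumptions, the sets of the form $A^{\perp}$ are exactly the sets $T$ such that $T = T^{\perp\perp}$: applying the bi-orthogonal to a type returns the same type, which is the fixed-point property mentioned above.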

All that is needed to put this framework into practice is an adequate notion of successful interaction defined over terms and classes of terms. In a purely computational setting, where terms are computational processes (i.e. programmes), termination of interacting programmes is often used (Riba 2007). Although there is no unique natural way of defining such interaction in the case of natural language, we can intuitively associate it with distributional properties, i.e. two linguistic terms successfully interact if they co-occur with statistical significance within relevant contexts across a given corpus. 19
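One possible way of turning this intuition into a predicate is sketched below. It rests on assumptions that the text does not make: adjacency is taken as the only relevant context, and pointwise mutual information (PMI) with a minimum count stands in for statistical significance. The function name `make_interaction` and its parameters are hypothetical.

```python
import math
from collections import Counter
from typing import Callable, Sequence


def make_interaction(tokens: Sequence[str],
                     min_pmi: float = 0.0,
                     min_count: int = 2) -> Callable[[str, str], bool]:
    """Build an `interacts(left, right)` predicate from a tokenized corpus.

    Assumption: two terms interact when the bigram (left, right) is attested
    at least `min_count` times and its PMI exceeds `min_pmi`.
    """
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    total_unigrams = sum(unigrams.values())
    total_bigrams = sum(bigrams.values())

    def interacts(left: str, right: str) -> bool:
        joint = bigrams[(left, right)]
        # Unattested or too rare bigrams never count as successful interaction.
        if joint < max(min_count, 1):
            return False
        p_joint = joint / total_bigrams
        p_left = unigrams[left] / total_unigrams
        p_right = unigrams[right] / total_unigrams
        return math.log2(p_joint / (p_left * p_right)) > min_pmi

    return interacts


# Toy corpus, invented for illustration only.
corpus = "she would be he would be she could go he could go".split()
interacts = make_interaction(corpus)
```

Note that the predicate is order-sensitive: `interacts("would", "be")` and `interacts("be", "would")` need not agree, which is the non-commutativity exploited later in the concatenation example.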

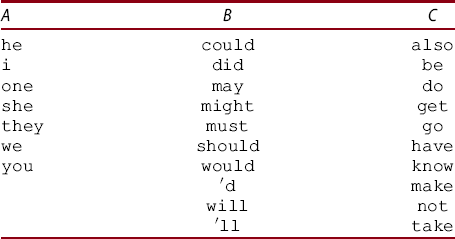

If we call A, B, C those three classes, we can now consider the set containing all the possible combinations of the terms of those classes, in that order (eg. […]). Some of these combinations (and in fact most, in the general case) will not exist in the corpus, although they constitute correct expressions of the language under study. As a result, the analysis can be carried beyond the original available data. 21
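The generalization beyond the corpus can be illustrated with a short sketch. The three classes and the attested expressions below are invented stand-ins, not the classes obtained in the article, and the function name `unattested_combinations` is hypothetical.

```python
from itertools import product
from typing import Iterable, Sequence, Set, Tuple


def unattested_combinations(classes: Sequence[Iterable[str]],
                            attested: Set[Tuple[str, ...]]) -> Set[Tuple[str, ...]]:
    """Ordered combinations of the classes that do not occur in the corpus."""
    return set(product(*classes)) - attested


# Hypothetical classes and corpus, for illustration only.
A = ("he", "she")
B = ("could", "would")
C = ("be", "go")
attested = {("he", "would", "be"), ("she", "could", "go")}

all_combinations = set(product(A, B, C))
novel = unattested_combinations((A, B, C), attested)
```

Here two attested expressions license eight combinations, six of which are absent from the toy corpus.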

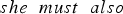

We can then say that a set A is orthogonal to a set B if all the terms of A can co-occur with the terms of B. In our simple example, we can restrict the notion of successful interaction to simple concatenation of terms. Since the interaction between terms is not commutative in this case, a given set A will have two orthogonals: its left orthogonal ${}^{\perp}A$, which contains all the left terms with which A interacts correctly, and its right orthogonal $A^{\perp}$, which is the same on the right. For example, if we take any subclass b of the class B defined before, say b = {[…]}, its left orthogonal coincides with the class A (i.e. ${}^{\perp}b = A$) and its right orthogonal is equal to C ($b^{\perp} = C$), both of which become types following our definitions. Moreover, we can consider the (right or left) bi-orthogonal of b, which is equal to the entire class B, and thus also a type, i.e. $({}^{\perp}b)^{\perp} = {}^{\perp}(b^{\perp}) = B$.
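The left and right orthogonals under concatenation can be sketched as follows. The classes and the set of attested bigrams are invented for illustration and are not the classes computed in the article. The function names are hypothetical, and "interacts correctly" is reduced here to "the concatenation is attested".

```python
from itertools import product
from typing import FrozenSet, Iterable, Set, Tuple

Bigram = Tuple[str, str]


def left_orthogonal(terms: Iterable[str],
                    vocabulary: Iterable[str],
                    attested: Set[Bigram]) -> FrozenSet[str]:
    """Words x such that the concatenation (x, t) is attested for every t in `terms`."""
    members = tuple(terms)
    return frozenset(x for x in vocabulary
                     if all((x, t) in attested for t in members))


def right_orthogonal(terms: Iterable[str],
                     vocabulary: Iterable[str],
                     attested: Set[Bigram]) -> FrozenSet[str]:
    """Words y such that the concatenation (t, y) is attested for every t in `terms`."""
    members = tuple(terms)
    return frozenset(y for y in vocabulary
                     if all((t, y) in attested for t in members))


# Hypothetical classes: every A-B and every B-C concatenation is assumed attested.
A = frozenset({"he", "she"})
B = frozenset({"could", "should", "would"})
C = frozenset({"be", "have"})
vocabulary = A | B | C
attested = set(product(A, B)) | set(product(B, C))

b = {"would"}  # an arbitrary subclass of B
left_b = left_orthogonal(b, vocabulary, attested)
right_b = right_orthogonal(b, vocabulary, attested)
bi_via_left = right_orthogonal(left_b, vocabulary, attested)
bi_via_right = left_orthogonal(right_b, vocabulary, attested)
```

In this idealized setting a single member of B is enough to recover A, C and, through either bi-orthogonal, the whole of B. With real corpus data the attested set is sparse, so some thresholded or statistical notion of interaction would presumably be needed instead of strict attestation.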

In this way, we have constructed three types which are nothing more than the expression of the mutual dependencies that hold between them. Such types can behave like idealized paradigms which could be further refined based on the statistical properties of the initial corpus. More significantly, their formal construction makes it possible to mobilize the entire type-theoretical apparatus in such a way that the remaining obstacles concerning paradigm derivation can be addressed from a new perspective.

Compositionality through connectives

As we said, types constructed in this way are endowed with an internal structure: not every set is a type, but only those that behave uniformly with respect to the selected notion of interaction. As it turns out, this internal structure can be used to govern the hierarchical compositionality of types. For, from a logical viewpoint, only those compositions of types that yield another type (in the defined sense) are legitimate. Since there are, in principle, multiple ways in which we can compose types to build other types, owing to different properties of the interaction between those types' terms, and between those terms and their (respective or shared) contexts, different modes of compositionality can be expressed, which take the form of emergent logical connectives (Girard 2001).

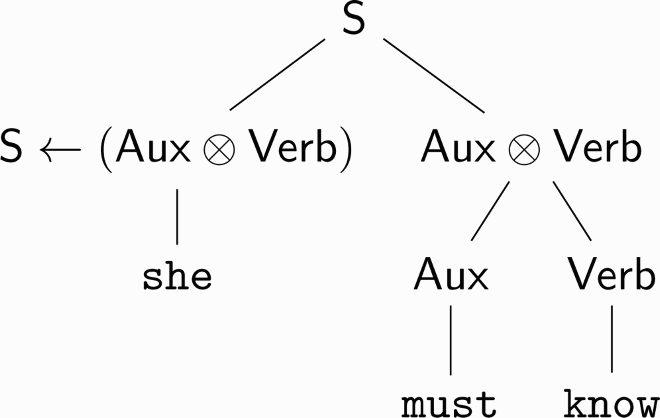

Turning back to our example, we can see that the type B contains principally modal verbs. It is not unreasonable to think that the consideration of other expressions in the corpus would reveal the significant presence of similar types in such a way that a type

Suppose now that […] is in ⊥([…]) (the type of sentences, for instance), then, opening the door to an iterative definition, we can establish that […]

As composition can engender different kinds of relations between the component types and the type resulting from their composition, different connectives are necessary. Here

Analyzing paradigms with subtyping

Finally, if we take a closer look at our example, we can see that it relies not only on the orthogonals of the three classes we presented, but also on our ability, among the third type […] representing verbs, from […] containing adverbs. This problem corresponds precisely to the third of the obstacles presented in the previous section.

The difficulty resides in the fact that, within the context considered, there is no means to operate the necessary distinction. Of course, if the type […] mentioned before was already constructed, then the difficulty would be easily circumvented (since […]). But constructing the type […] might, of course, require that we are able to distinguish […] from […] in the first place. However, […]

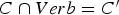

can result from more direct distributional properties if other interactions are taken into account. For instance, if we consider, in the way described, the most frequent words coming after […] and […], we get the following classes, respectively: […] and […] is conveniently approached.

Conclusion

In this paper we have attempted to extend the scope of the distributional hypothesis underlying current

The ideas presented in these pages should be seen as an exercise in conceptual bridging between disciplines which are not usually considered together (NLP with AI, philosophy of language, structuralist linguistics, computational logic). As such, they have a primarily speculative value. Their validity can only be established through empirical results, which are the object of our current work.

Footnotes

1

2

Chomsky's rejection of probabilistic methods is well-known, as is his frequently quoted statement that ‘the notion “probability of a sentence” is an entirely useless one, under any known interpretation of this term’ (Chomsky 1969). For an early exposition of this viewpoint, see Chomsky ([…], Section 2.4).

4

5

7

8

One may consult Ducrot 1973 for a synthetic yet precise and faithful presentation of linguistic structuralism, as well as Maniglier 2006 for an in-depth analysis of its conceptual and philosophical stakes. We have addressed the connection between the structuralist approach and current trends in NLP in Gastaldi […].

9

All our subsequent examples will be taken from the English language. We introduce the convention of writing linguistic expressions under analysis in a font.

10

Notice, however, that Saussure's notion of syntagmatic relations takes into account not only the relations between units but also those between sub-units within a unit.

11

Actually, for structuralism, linguistic or semiological units are determined at the intersection of not one but two sets of such series of syntagmatic and paradigmatic relations: the signifier and the signified, or the expression and the content planes. The need for a second set of syntagmatic-paradigmatic relations can, in principle, be explained by the insufficiency of just one series to determine all the relevant properties of units (the two sets borrowing determinations from one another). For the sake of simplicity, in this paper we restrict ourselves to syntagmatic and paradigmatic relations of expressions only, which is also closer to the way in which this problem is treated in current NLP models.

12

For a historical connection between the structuralist and the information-theoretical approaches to language, see Apostel, Mandelbrot, and Morf 1957; Jakobson […].

13

According to Google Books Ngram Viewer (Michel et al. 2010, […]), en_2019 corpus.

14

Notice that, under such a definition, formal analysis and properties are not supposed to be neutral or unbiased.

15

16

In particular, we have disregarded here a fundamental problem which is nevertheless central from a structuralist standpoint, namely that the syntagmatic relations between terms, upon which our construction of paradigms relies as a given, do in fact need to be established in a way that also depends on the paradigmatic relations they are supposed to help construct. Hence, in order to be entirely faithful to the structuralist perspective, a segmentation procedure should be part of the derivation of a linguistic system, not just as a preliminary step (such as ‘tokenization’) but on a par with paradigmatic derivation. We leave the treatment of segmentation within this framework for an upcoming work.

17

19

20

For this toy example, we compute the paradigmatic classes rather naively, using Google Books Ngram Viewer (Michel et al. 2010, […]), with the following parameters: en_2019 corpus, from 1900 to 2019, with a smoothing of 3. The use of wildcards makes it possible to recover the most frequent (up to 10) words at a given position. Since this toy example has only an illustrative character, we disregard the difference in frequency of words within each class, and we order them alphabetically.

21

Disclosure statement

No potential conflict of interest was reported by the authors.