Abstract

Mental health service delivery is complex. In Australia, assessment, treatment and rehabilitation are provided by State-funded mental health services, private psychiatrists, GPs, community health centres, and consumer/carer and community support services. (The term ‘consumer’ is used in preference to ‘patient’ throughout this paper, consistent with the terminology of the National Mental Health Strategy and projects funded by it, and out of respect for the wishes of the Partnership Project and its subcommittees, all of which have consumer representation.)

There is growing recognition that liaison between these groups is suboptimal. There is also increasing concern in the theoretical literature that poor linkages can be detrimental to care, although the effect of improving collaboration on consumer outcomes has not been well studied in the empirical literature [1], [2].

There have been a number of initiatives aimed at improving collaboration between mental health services and GPs, usually involving some sort of shared care arrangements [3], [4]. More recently, the focus has shifted to improving partnerships between public mental health services and private psychiatrists. Under the Second National Mental Health Plan, the Commonwealth Department of Health and Aged Care has funded several National Demonstration Projects in Integrated Mental Health Services. These projects aim to create a more flexible service delivery framework that can produce improved outcomes, and to demonstrate more efficient ways to deliver care by removing current organizational and financial barriers [5–8]. In Victoria, St Vincent's Mental Health Service (SVMHS) and The Melbourne Clinic (TMC) are collaborating on one of these projects, the Public and Private Partnerships in Mental Health Project (Partnership Project) [9]. St Vincent's Mental Health Service is a State-funded Area Mental Health Service that provides multidisciplinary care to Melbourne's inner urban east via an acute inpatient unit, a residential community care unit, and community mental health services. The Melbourne Clinic is a private psychiatric hospital, with approximately 200 accredited psychiatrists, which provides a broad range of general and specialist psychiatric services in inpatient and outpatient settings.

The Australian mental health sector is not alone in its concern that poor linkages equate to poor outcomes or in putting in place joint programmes to address the problem [1], [2]. For example, in the USA, collaborative arrangements have been put in place to link mental health services with substance-abuse services [10] and public health agencies [11]. Because others have grappled with the issue, a conceptual and practical literature has amassed.

This paper uses a specific mental health case study, the Partnership Project, to illustrate a conceptual framework for developing, implementing and evaluating programmes concerned with linkages.

Conceptualizing integrated programmes

Konrad [12] discusses systematic integration initiatives in the human service delivery field. She identifies different levels of integration, viewing them as a continuum that moves from informal to formal arrangements:

1. Information-sharing and communication: at this level, collaborative partners share general information about programmes, services and consumers (e.g. a working party involving public mental health service staff and community health centre staff to develop and implement projects relating to mental health issues).

2. Cooperation and coordination: here, partners work collaboratively to improve relevant services (e.g. private psychiatrists and GPs having reciprocal referral arrangements; public psychiatric inpatient facilities discharging consumers to GPs for follow up).

3. Collaboration: at this level, partners share activities in an effort to achieve a common goal (e.g. written agreements between public mental health services and community health centres; staff rotations and educational exchanges at public and private mental health facilities).

4. Consolidation: a consolidated system involves a single umbrella organization, under which separate entities provide care (e.g. provision of public mental health sector services and community health centre services under the auspices of an Area Health Service).

5. Integration: at this level, there is a single authority that comprehensively addresses consumer needs, coordinates activities and has uniform eligibility criteria (e.g. pooling of public-sector funding with Medical Benefits Schedule (MBS) and Pharmaceutical Benefits Schedule (PBS) expenditure associated with private practice as a means of coordinating public and private mental health services).

An initiative may exhibit integration at several levels and have several dimensions on which the level of integration may vary [12]. This paper primarily concerns itself with integration up to the level of collaboration, since this is the model under which the Partnership Project is operating. The Partnership Project also has elements of cooperation and coordination, and of information-sharing and communication.

Evaluation issues and ways of addressing them

The evaluation of programmes to address poor linkages is essential to guarantee that individual programmes are effective, and to ensure that lessons from these individual programmes can contribute to the body of knowledge regarding how best to improve linkages. There is a dearth of evaluative research examining the impact of collaborative programmes on consumer outcomes [1], [2].

Knapp [13] summarizes three key issues associated with evaluating collaborative initiatives: (i) ensuring that the perspectives of all players in the collaborative programme are represented in the evaluation; (ii) specifying what is measured in the evaluation: examining processes may be just as important as measuring impacts and outcomes; and (iii) attributing effects to causes, which is often made difficult by the fact that collaborative initiatives are multifaceted and operate within already-complex systems.

Knapp [13] considers how best to address these issues, suggesting that to be most helpful, evaluations should be:

1. Strongly conceptualized: the complexity of collaborative programmes and the resultant difficulties in attributing cause and effect mean that it is crucial that the evaluation explicates the causal linkages between elements of the programme at the outset. In the evaluation literature, doing this is known as clarifying the programme's logic or its theory of action [14–16].

2. Descriptive: because different players interpret the nature of collaboration differently, it is important that the evaluation provides detailed descriptions of the elements of the collaborative programme (e.g. structural arrangements, informal and formal collaborative mechanisms, pathways to care for consumers and the experience of collaboration for consumers, carers and providers).

3. Comparative: the evaluation should offer insights into the extent to which collaborative arrangements improve the experiences of consumers, carers and providers. It should also provide information about the range of factors that augur well for or militate against collaboration working effectively.

4. Constructively sceptical: the evaluation should be wary of the ‘hype’ associated with collaboration, and acknowledge that there may be negative impacts or outcomes as well as positive ones (e.g. improvement in the joint operation of the two sectors may occur at the expense of the individual operation of each sector).

5. Positioned from the bottom up: the evaluation should be anchored at the level of consumers and carers (i.e. the ultimate recipients of the collaborative programme). This is not to say that the evaluation should be solely concerned with consumers' and carers' perceptions (although these are clearly important). Rather, it is about ensuring that there is a balanced approach that considers service and system benefits at the ground level.

6. Collaborative: where possible, the evaluation should be collaborative, engaging input from all players to incorporate a range of perspectives. It is acknowledged, however, that good collaborative evaluation is time-consuming and requires a sharing of control.

The Partnership Project

As noted above, the Partnership Project is a joint initiative of SVMHS and TMC. It is overseen by a Steering Committee that includes representation from each service, as well as from consumers, carers, funders and other relevant parties. The project has three key goals: (i) to improve community access to specialized mental health services; (ii) to improve the coordination between public and private mental health services; and (iii) to support the role of GPs when treating people with a mental illness. In order to achieve these goals, the Partnership Project has two major strategies: (i) the development of a Linkage Unit to develop and support shared care arrangements between the public and private sectors; and (ii) the development of an expanded role for private psychiatrists to include non-direct patient activities (e.g. case conferences, conferences, supervision/training sessions, secondary consultations). After a planning period, the Partnership Project moved into its 2-year implementation phase in September 2000. The Partnership Project has been described in detail elsewhere [9].

Evaluation of the Partnership Project

The Centre for Health Program Evaluation (CHPE) has been commissioned to conduct an independent local evaluation of the Partnership Project. Approval to conduct the evaluation has been received from the University of Melbourne's Human Research Ethics Committee and the Victorian Department of Human Services' Ethics Committee.

In evaluating a programme as complex as the Partnership Project it was considered important to use triangulation, or ‘the combination of a number of methodologies in the study of the same phenomenon’ [15], [16]. Refining the evaluation design in terms of its components has been an iterative process. The final components are described in Table 1.

Table 1. Components of the Partnership Project evaluation

The remainder of this section describes the way in which the evaluation of the Partnership Project is satisfying Knapp's criteria of being strongly conceptualized, descriptive, comparative, constructively sceptical, positioned from the bottom up and collaborative [13]. Reference is made to particular components of the evaluation as relevant.

Strongly conceptualized

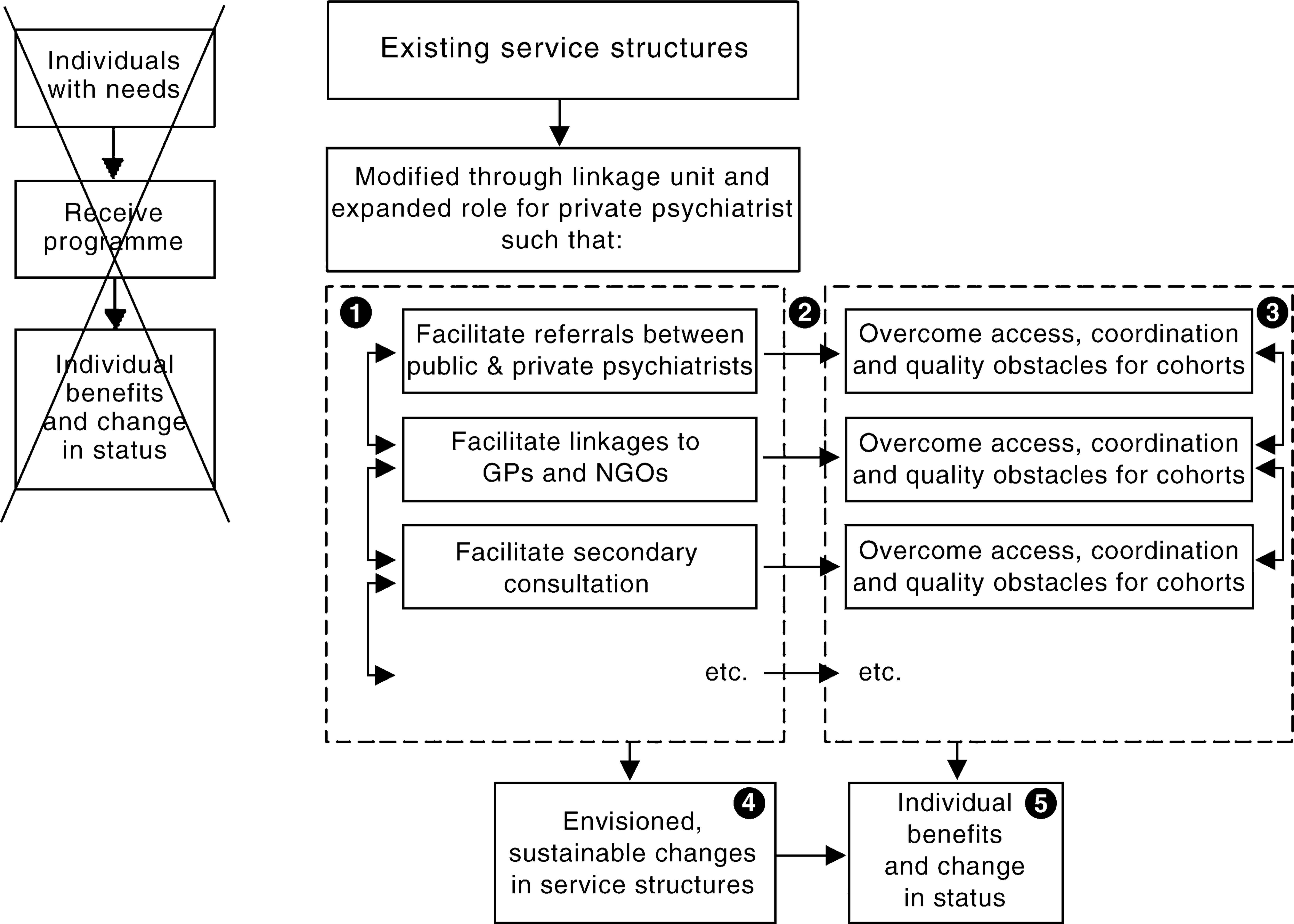

From the outset, a key consideration in conceptualizing the Partnership Project (and in designing its evaluation) has been the fact that the project is continuous with a process of evolutionary development that has been occurring for some time. It involves a sophisticated set of refinements to an already-complex service system. It would be undesirable therefore to conceptualize the programme (and the evaluation) in terms of an input, intervention, outcome framework. Rather, it should be understood as a process of structural reform where each step has the potential to activate processes that will impact upon providers and ultimately be of benefit to consumers and carers. This contrast is represented in Figure 1.

Figure 1. Conceptualization of the Partnership Project.

Such a framework suggests five levels of possible objectives (refer to labels on Fig. 1):

Level 1: objectives related to the structural changes that will occur.

Level 2: objectives related to the potential causal mechanisms that will be activated.

Level 3: objectives related to care coordination, consumer access, quality of care, etc.

Level 4: objectives related to the totality of systems reform.

Level 5: objectives related to consumer and carer satisfaction and health outcomes.

Early in the life of the Partnership Project, the evaluators interviewed key informants and held a Program Logic Workshop in which members of the Steering Committee participated. Workshop participants considered the relationship between the goals described above and these levels of objectives, and agreed that it made sense to view each goal as a broad area of impact that could subsume objectives at each of the five levels from structural through to consumer and carer benefits.

They then went on to consider each level in more detail, in terms of the specific objectives falling within them. Table 2 provides a summary.

Table 2. Objectives of the Partnership Project

At the workshop, each of the individual objectives was then examined in the light of intersectoral collaboration needs identified by workshop participants (and by key informants). Specifically, consideration was given to the activities and resources necessary to achieve these objectives, factors affecting the likelihood of their being achieved, and criteria against which success might be judged.

Descriptive

It is important to describe the Partnership Project in some detail because it is a pilot project and if it is to be replicated elsewhere it is crucial that the elements that do and do not work can be elucidated. It is for this reason that the evaluation is emphasizing processes as well as impacts and outcomes. As recommended by Knapp [13], the strong conceptual framework outlined above is guiding the description of the Partnership Project.

Table 1 shows that a number of the components of the evaluation were conducted during the baseline period (e.g. key informant interviews, the mapping exercise, and examination of complaints/incidents databases). Some of the data from these activities will be used for comparison purposes, in order to examine the impact of the Partnership Project (e.g. ongoing monitoring of complaints/incidents data will provide insight into whether there are fewer complaints/incidents related to suboptimal collaboration). Other data are descriptive in their own right, and serve to clarify the make-up of the Partnership Project (e.g. key informants provided their views on the activities and resources of the Partnership Project that would be likely to overcome problems of poor linkages between sectors). This descriptive information has been compiled in a baseline report [17].

Supplementary descriptive work is being undertaken for a progress report examining the first 6 months of operation of the Partnership Project. It is appropriate that this report focus on processes, since the achievement of impacts and outcomes at this early stage is unrealistic. Indeed, ascertaining that there is a rational fit between the programme's objectives and its activities and that the programme is being implemented as intended is part of evaluability assessment, or checking that a programme is ready to be evaluated in terms of its impacts and outcomes [13], [18]. Key processes that will be described will be the structure and organization of the Linkage Unit and the expanded roles for private psychiatrists. Evaluation components that will contribute to this picture include the review of programme documentation and supplementary key informant interviews with Partnership Project personnel.

Comparative

The evaluation is comparative in that it will provide insights into whether collaborative arrangements improve the experiences of consumers, carers and providers. For example, outcomes for consumers in shared care arrangements will be compared with outcomes for those who are not in such arrangements. It is acknowledged that by itself this information would be open to interpretation. Even if it can be demonstrated that clinical outcomes are better when people are involved in shared care arrangements, it would not be possible to determine from this data component in isolation that the shared care arrangements caused the improvements. It might be, for example, that those who were selected as appropriate for shared care were more likely to have a good prognosis.

Complementary evaluation components are important here. Causal connections can be attributed with more certainty if other sources of evidence also suggest that such a relationship exists. For example, if the consumers who participate in focus groups say their experiences are more positive than previously, and attribute this change to their receipt of shared care arrangements, then this would constitute further evidence for a causal relationship. Likewise, if, over time, there is a reduction in complaints/incidents related to poor collaboration, this might also be suggestive of shared care arrangements leading to positive outcomes.

The comparisons in the evaluation rely on sound conceptualization and accurate description (see above).

Constructively sceptical

By taking what is essentially a needs-based approach, the evaluation is constructively sceptical. From the outset, the evaluation has undertaken activities to describe the issues that indicated the need for specific reforms (e.g. the Program Logic Workshop). Having identified these needs, it will examine the impact of the Partnership Project on them. This approach provides scope for identifying positive and negative impacts.

Positioned from the bottom up

The evaluation gives priority to the experiences of consumers and carers (particularly the former), the ultimate recipients of the Partnership Project. A number of the evaluation components described in Table 1 elicit the perceptions of consumers and carers (e.g. examination of complaints/incidents databases, consumer/carer focus groups), and others examine their experiences by different means (e.g. the use of outcome data). By carefully conceptualizing the Partnership Project (see above) in a way that clarifies the causal linkages between its component parts, it will be possible to consider the impacts and outcomes for consumers and carers in the light of the collaborative model. In this way, it will be possible to clarify questions such as:

Were positive impacts/outcomes achieved for consumers and carers?

If so, what elements of the collaborative model contributed to their achievement?

If not, what elements of the collaborative model contributed to their lack of achievement?

Collaborative

The evaluation of the Partnership Project is being conducted under a collaborative model that is ensuring that the perspectives of all players are incorporated. As the local evaluator, the CHPE is represented on the project's Steering Committee. This means that evaluation results can be fed back to the project team on a regular basis, and that these findings can inform implementation decisions. In turn, the evaluation is overseen by a subcommittee of the project's Steering Committee. This means that the players in the Partnership Project have the opportunity to influence the evaluation, and to provide advice about the way in which it is conducted. For example, consumer and carer representatives have had the opportunity to voice their views on the appropriateness of different methodologies for examining consumer and carer satisfaction.

This evaluation model mirrors the collaboration and partnerships being promoted within the Partnership Project itself, and from the perspective of those involved is working well. It has the advantages of an external evaluation (e.g. independence of the evaluator), without these being at the expense of the benefits of an internal evaluation (e.g. ‘insider knowledge’ that allows accurate interpretation of findings, ready access to data).

Conclusions

Collaboration is hard to conceptualize. Collaborative programmes usually have many players and components, and tend to operate within already-complex systems. This creates difficulties for evaluation, in terms of what to measure, how to measure it, and how to interpret findings.

In spite of these difficulties, this paper has demonstrated a model for evaluating collaborative programmes that is currently working well. It balances the advantages of internal and external evaluations in a way that embodies the principles of good collaboration. This model, or aspects of it, could be extended to the evaluation of other mental health programmes and services that have collaborative elements, which are increasing in popularity.

Acknowledgements

The authors would like to thank Roy Batterham for his early work on the conceptualization of the evaluation, and Gillian Halliday, Beth Bailey, Natalie Appleby, Jenni Livingston and Rhonda Goodwin for their valuable comments on earlier drafts of this paper.