Abstract

Supervision is the cornerstone of psychiatric training. It represents the psychiatric equivalent of the apprentice–master relationship, being the vehicle for the learning of skills as well as knowledge and attitude. It is the place of integration of the practical, theoretical and personal elements of professional development [1].

Supervision is also a focus of great ambivalence. It can be at times nurturant and at other times hostile; a place of excitement and learning or of boredom and stagnation. It can engender esteem and at other times shame; give praise or humiliation. It has been called the ‘single most effective teaching implement which we have’ [2, p.500] and yet between 40% and 60% of trainees report educational or emotional neglect, severe criticism or humiliation from their supervisors [3]. If we believe that it is of such importance and yet so often goes wrong, how can this be addressed? How can light be shone into the very private activity of supervision? This report describes the development and trial use of a ‘quality improvement’ instrument for evaluating the supervision and training experience from the trainees' perspective.

Development of the questionnaire required consideration of what constitutes good supervision and of the problems commonly encountered in supervision. Although there is not always agreement on these matters, the literature gives some guidance. It is generally accepted that a good supervisory relationship offers warmth, respect, understanding and trust, and this is particularly important for beginner trainees [4]. A good supervisor allows the trainee to tell their story about their encounter with the patient and consistently tracks the trainee's concerns, helping the trainee to understand the patient [5]. In a survey of Australian trainees, although emotional support was seen as important (and emotional neglect a criticism), attention was also drawn to the importance of competency, availability, knowledge and willingness to take responsibility on the part of supervisors [6]. Trainees are requesting more clinical guidance and managerial responsibility from their supervisors, and these practical aspects of supervision are particularly important when the workplace is under stress. Beginning trainees need structure and specific instruction [7]. Observed interviews are important [8] and are prescribed in training guidelines. More experienced trainees desire assistance with developing conceptual skills, a coherent theoretical framework and personal development [9, 10]. All of these demands are embedded in a relationship in which the supervisor is modelling what it is to be a psychiatrist.

These different requirements highlight some of the difficulties inherent in supervision. Being both a manager and a teacher, or an employer and a mentor is not easy. Good supervision requires a balance between structure and freedom, listening and intervening, giving responsibility and taking responsibility, encouraging and confronting. It is this balance which we aim for in supervision, and which this evaluation attempted to measure.

Method

The study was conducted in a metropolitan hospital-based training program. There were 16 training posts at any one time in adult, child and adolescent, and consultation–liaison psychiatry, including both inpatient and outpatient services.

On the basis of the literature reviewed here and elsewhere [1], a draft set of questions was formulated. These included questions about: (i) organised educational activities such as clinical meetings and journal club; (ii) ‘structural’ aspects of supervision such as the availability and punctuality of the supervisor; and (iii) the ‘quality’ of supervision. Some questions were included that could be seen as ‘performance indicators’ or ‘critical incident’ items such as ‘received introduction and orientation to placement’, ‘given opportunity to develop and clarify learning goals’, and ‘supervisor observed diagnostic and/or management interview.’ Within the items describing the quality of supervision, the tensions between providing critical feedback and being encouraging, and between being educational and providing specific clinical guidance, were represented. A Likert scale from 1 to 5 was used with anchor points of 1 = unsatisfactory, 3 = satisfactory and 5 = outstanding. A draft questionnaire was circulated to trainees and supervisors with an explanation and a request for comments concerning the content of the questionnaire as well as the process. A few questions were modified in the light of responses received. The final questionnaire consisted of 20 scored items (obtainable from the author upon request). Trainees were asked to complete the evaluation at the end of each 6-month rotation. After 2 years, the data were compiled for presentation to the consultant group for discussion. These data are presented here. All trainees and consultants agreed to the conduct of the evaluation.

Over 2 years, ratings were collected at four time points and involved 25 trainees and 13 supervisors. All trainees in the program submitted evaluations, although occasional items were not completed. If a trainee had more than one supervisor, an evaluation was completed for each. The number of ratings per supervisor ranged from 2 to 6, with a mean of 3.6.

Results

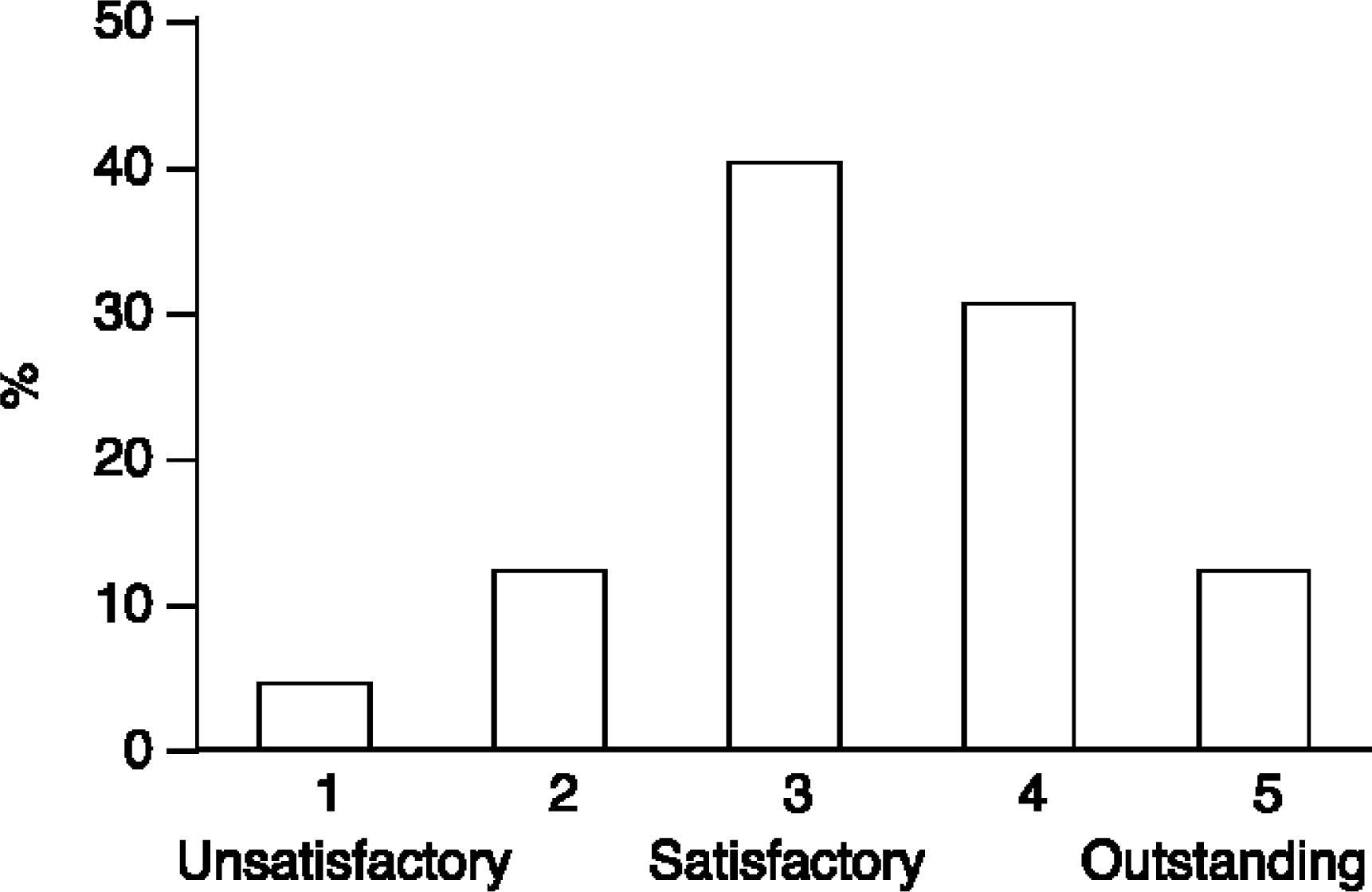

The distribution of item scores is illustrated in Fig. 1. Seventy-one percent of all ratings (n = 1259) were either 3 or 4. Seventeen percent were less than ‘satisfactory’ (i.e. given scores of 1 or 2). The mean of all ratings was 3.3 (SD = 0.81).

Fig. 1. Frequency distribution of scores (n = 1259)

There were between 39 and 44 completed ratings for the general training questions (orientation, range of clinical experience, educational activities; eight items). Two items had means of less than 3. Journal club received a mean score of 2.5, with 40% of trainees rating it less than satisfactory. Clinical meetings had a mean of 2.9, with 20% of ratings being less than satisfactory.
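The summary figures reported above (percentage of scores at 3 or 4, percentage below ‘satisfactory’, mean and SD) can be computed directly from raw item scores. The following is a minimal Python sketch, not the procedure used in the study: the function name `summarise`, the use of `None` for items a trainee left blank, and the choice of the sample standard deviation (the text does not say which SD was reported) are all assumptions.

```python
from statistics import mean, stdev
from typing import Dict, Optional, Sequence


def summarise(scores: Sequence[Optional[int]]) -> Dict[str, float]:
    """Summarise 1-5 Likert scores for one item, or pooled across items.

    Hypothetical helper: items a trainee left blank are passed as None
    and excluded from every statistic.
    """
    valid = [s for s in scores if s is not None]
    if any(s not in range(1, 6) for s in valid):
        raise ValueError("scores must be integers from 1 to 5")
    n = len(valid)
    if n < 2:
        # A sample standard deviation needs at least two scores.
        raise ValueError("need at least two completed ratings")
    return {
        "n": n,
        # Share of scores at 'satisfactory' (3) or one point above (4).
        "pct_3_or_4": 100 * sum(s in (3, 4) for s in valid) / n,
        # Scores of 1 or 2 count as less than 'satisfactory'.
        "pct_below_satisfactory": 100 * sum(s < 3 for s in valid) / n,
        "mean": mean(valid),
        # Assumption: sample SD (n - 1 denominator).
        "sd": stdev(valid),
    }
```

For example, `summarise([3, 4, None, 2, 5, 3])` ignores the blank item and reports n = 5, 60% of scores at 3 or 4, 20% below satisfactory and a mean of 3.4. Excluding blanks rather than imputing them is one reasonable choice here, since occasional items were left uncompleted.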

Mean scores for structural and ‘quality’ aspects of supervision

Psychotherapy supervision (two items) received the highest ratings, with a mean of 4.0 for ‘frequency, duration and availability’ (n = 33) and a mean of 4.3 for ‘quality and usefulness’ (n = 32). Supervision within the outpatient service tended to rate lower on all measures than supervision in consultation–liaison and inpatient settings, and the ‘amount’ of supervision in outpatients was considered overall less than satisfactory (mean = 2.6).

Discussion

This work is presented as a demonstration of how attributes of supervision and training may be measured, and of some of the difficulties involved in objectifying such an evaluation. Particularly in relation to the supervisory relationship, no objective measure is possible. Only two people share the experience, and each may judge it differently. Just as there are difficulties in an individual supervisor rating a trainee, there are difficulties in a single trainee rating a supervisor. Assessment of supervision is affected by processes of idealisation, denigration, envy or competitiveness, and other transferential reactions which are prone to occur in supervisory relationships [1]. For example, second-year trainees are known to be more critical of supervisors than first- or third-year residents [11]. This is considered part of the ‘second-year slump’ described by Sadock and Kaplan [12]. A single evaluation may be considered idiosyncratic. If a supervisor receives a poor rating, it may be explained by a ‘personality clash’, just as a poor evaluation of a trainee may be. Consequently, individual ratings cannot be considered ‘reliable’ from a statistical point of view. A further difficulty arises in maintaining anonymity when individual ratings are processed.

So how many ratings are required to attain some reliability and protect anonymity? In educational institutions, evaluation of teachers by students is now commonplace. In rating the performance of clinical tutors in undergraduate medical education, Dolmans et al. advise a minimum of six students rating the same tutor at the same time [13]. The evaluation reported here ran over a period of 2 years, giving most supervisors four ratings. This is probably not enough for test reliability. Conversely, waiting longer to gain more ratings defeats the purpose of quality improvement, which relies on prompt feedback of assessment, and also introduces the possibility of real change occurring over time. Reliability cannot, therefore, be claimed with respect to the precision of scores. However, data derived from trainee evaluations can alternatively be used more like ‘clinical indicators’, where the concern is the number of ‘unsatisfactory’ ratings [14]. For instance, a standard could be set whereby two or more unsatisfactory ratings on any item, out of four ratings, flag a ‘likely problem area’. Using this criterion, examination of the data identified a few supervisors who needed to pay attention to some ‘structural’ aspects of their supervision: punctuality, reliability and availability. In addition, one supervisor required attention in many of the ‘quality’ areas.
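The ‘clinical indicator’ criterion amounts to counting low scores per supervisor and item rather than averaging them. A minimal Python sketch follows, under stated assumptions: ‘unsatisfactory’ is taken to mean a score of 1 or 2 (the scores reported above as less than satisfactory), the count is applied to whatever ratings a supervisor has accumulated rather than to exactly four, and the record layout and the names `flag_problem_areas`, `max_unsatisfactory_score` and `min_count` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Set, Tuple

# Hypothetical record: (supervisor_id, item_name, score or None if blank).
Rating = Tuple[str, str, Optional[int]]


def flag_problem_areas(
    ratings: Iterable[Rating],
    max_unsatisfactory_score: int = 2,
    min_count: int = 2,
) -> Dict[str, Set[str]]:
    """Return, per supervisor, the items flagged as 'likely problem areas'.

    An item is flagged when at least `min_count` ratings of it are at or
    below `max_unsatisfactory_score` (assumed: 1 or 2 on the 1-5 scale).
    """
    counts: Dict[Tuple[str, str], int] = defaultdict(int)
    for supervisor, item, score in ratings:
        # Blank items carry no information and are skipped.
        if score is not None and score <= max_unsatisfactory_score:
            counts[(supervisor, item)] += 1

    flagged: Dict[str, Set[str]] = defaultdict(set)
    for (supervisor, item), count in counts.items():
        if count >= min_count:
            flagged[supervisor].add(item)
    return dict(flagged)


if __name__ == "__main__":
    example = [
        ("A", "punctuality", 2), ("A", "punctuality", 1),
        ("A", "punctuality", 4), ("A", "punctuality", 3),
        ("B", "punctuality", 2), ("B", "availability", None),
    ]
    print(flag_problem_areas(example))  # prints {'A': {'punctuality'}}
```

Counting rather than averaging means a single poor rating, which may reflect a ‘personality clash’, does not trigger a flag on its own, whereas a repeated pattern across trainees does.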

What does one do after collecting this information? Herrmann has described the use of a similar questionnaire in Toronto [15]. Despite concerns expressed about the reliability of the ratings, he used the evaluations, with aggregate scores and narrative comments, as part of the procedures for promotion and re-appointment of consultants. This administrative use might appeal to some, but seems problematic given the impossibility of establishing independent, objective or reliable measures. It was not the intention to use our evaluation in this managerial function but rather as part of a quality improvement process. The principles of quality improvement are that: (i) the ‘customer’ defines quality; (ii) ‘quality’ is measured; (iii) the results are presented and reflected upon; (iv) ways to improve performance are considered and implemented; and (v) the ‘quality’ measures are repeated. The focus of attention should be on the system or the process rather than the individual person [16]. This last requirement is difficult to meet when attention is naturally drawn to the dyadic relationship, but it is an important consideration. In this ‘experiment’, combined data were presented in graph form to the consultant group. This encouraged reflection on and discussion of supervision and other training matters. We did not proceed to routine individualised feedback, although such information was offered to supervisors upon request. Nor are there yet enough data to show that the quality of the supervision and training experience is improving, although this is an important element of quality improvement. If an ‘unsatisfactory’ supervisor fails to make significant changes after several cycles of the quality improvement process, there is, perhaps, an argument for more assertive action on the part of the training coordinator.

Conclusion

There are some major difficulties involved in measuring the quality of supervision. Nevertheless, if the aim is to encourage thinking about the process of supervision, reflection about one's personal supervision style, and ultimately to improve supervision through deliberate and thoughtful action, then this sort of evaluation seems a helpful tool. The process needs to be conducted in a non-condemnatory environment rather than as an administrative action with threatened punitive outcome. The process would be helped by creating an environment of learning and training in the art of supervision. Other methods could also be employed to create this, such as group supervision for supervisors, with didactic input as well as a subjective exploration of current supervisory relationships [17, 18]. One of the problems with evaluating supervision is gaining objectivity. As a further way to facilitate this, videotaped supervision sessions could be reviewed in the group or with another respected colleague.

If supervision really is seen to be the central and most important component in the training of a psychiatrist, then much more attention needs to be given to it, both to the relational qualities as well as the structural aspects. It has been said that not all psychiatrists have the skills to be supervisors and not all should be supervisors [19]. This is a premature judgement as very little consistent attempt has been made to train psychiatrists in the craft. To affirm such a position would be to move away from the apprentice model of training and toward an educational one, as if some psychiatrists are practitioners and some teachers. This is quite contrary to the traditions of medicine. Rather, more attention needs to be given to the training, education and supervision of psychiatrists in their supervisory role. A ‘quality improvement’ measure may be a useful tool in this process.

Acknowledgements

Thanks are due to the psychiatrists and trainees who were part of the training program, who created an environment for this activity to occur and who participated in it. Drs Denis Handrinos and Tom Trauer kindly read and commented on an earlier draft.