Abstract

This report describes an integrated, software-based quality control system designed to significantly improve the sample analysis throughput and quality of beryllium analysis laboratories throughout the U.S. Department of Energy complex. Originally, this system was developed to minimize the downtimes of expensive instrumentation (here: Perkin Elmer® 4300™ DV Inductively Coupled Plasma Optical Emission Spectrometers) and to automate the sample analysis quality control process. This automated software system was also expected to eliminate time-consuming and error-prone result data interpretation, which previously was done manually. To achieve these goals, Los Alamos National Laboratory recently implemented a rule-based decision support system in C#.NET™ that continuously extracts and analyzes data from the instrument's result database as the data is being generated. Using a customer-specific expert rule base, the system is capable of detecting abnormal operating situations fully autonomously in real time. This also enables the system to perform on-the-fly quality control and automatic, electronic event notification of lab personnel via e-mail and pager.

Introduction

This report provides an overview of a software-based Quality Control (QC) system that was recently developed at the Los Alamos National Laboratory (LANL) to automate the QC process of instrument-generated surface sample analysis data. The primary objectives of this automation project were to speed up and improve the QC on instrument-generated result data, as the outcome of the instrument-driven sample analysis would determine whether a worker or a facility has been contaminated with a hazardous material such as beryllium (Be). Due to the potential health and environmental implications of the analysis outcome, LANL's analytical laboratories must follow a very stringent set of QC protocols to minimize the potential for human and device error. Unfortunately, heavyweight, manual QC processes like the ones employed in this particular surface sample analysis application come at a price. This report provides an example of how software-augmented QC can be used to lower the QC cost per sample while, at the same time, increasing the result data quality and shortening the analysis result reporting cycle.

From a high-level perspective, LANL's surface sample collection and analysis process can be summarized by five major steps: (1) Whatman filter-based surface samples are collected at random at predefined sampling points in the field and sent to a LANL internal analytical laboratory, (2) filter-based surface samples are run through a digestion process and then distributed into test tubes, (3) digested, liquidized filter samples are analyzed by Perkin Elmer® (PE) Optima 4300™ DV Inductively Coupled Plasma Optical Emission Spectrometers (ICP-OES), (4) ICP-OES result data undergo elaborate QC checks (with a potential repeat of step 3 if sample results fail QC checks), and (5) sample analysis results are reported to the customer. An analysis of this workflow model revealed that the QC performed during step 4, which used to be done manually and is now done fully automatically by the QC software described herein, represents the major process bottleneck.

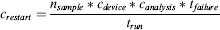

Figure 1 is a picture of a Perkin Elmer® (PE) Optima 4300™ DV ICP-OES, which is LANL's primary workhorse instrument for surface sample analysis.

Figure 1. Perkin Elmer® 4300™ DV ICP-OES.

Inductively coupled plasma optical emission spectroscopy is a widely used technique for elemental analysis. To ensure repeatable sample analysis performance, it is necessary to frequently calibrate the ICP-OES device with samples of predefined analyte concentrations. These types of samples, which are usually referred to as calibration blanks and standards, allow the laboratory technician to set the linearity and the sensitivity range of the instrument.

Based on LANL internal QC guidelines, the analytical results that are generated by the Optima 4300™ DV devices need to be reviewed in stages by three independent reviewers to minimize human interpretation errors. On average, it takes an analyst about 20 min to review the results of a full scan of 11 analytes per batch of 20 samples. Batch runs that result in invalid data (e.g., result values outside the sensitivity range of the optical spectrometer) usually require a rerun of the complete batch of samples. Based on its current autosampler configuration, the Optima 4300™ DV could run batch sizes of up to 180 samples, excluding calibration blanks and internal standards. However, since there is currently no easy and quick way to determine which samples within a batch may need to be reassayed, the complete sample batch is rerun in case of invalid data or device failures. This is the main reason why LANL currently limits the sample batch sizes on the Optima 4300™ DV instruments to about 20 samples. On average, the Optima 4300™ DV takes 100 min to process a batch of 20 samples. Given the relatively long run time per batch and the overall cost of analysis, it is highly desirable to detect abnormal situations that may result in invalid data as soon as they occur. Many scenarios could potentially lead to an off-normal situation. For example, there could be an instrument defect such as a clogged tube. The samples also could be too concentrated, and/or rinsing of the sample introduction system between samplings could be incomplete, causing carry-over contamination from the previous sample, thus yielding inaccurate data.

The Optima 4300™ DV instruments at LANL are set up such that they generate a run-time log on a printer, as well as continuous log entries in a Microsoft Access®-based result database. Periodically, the analyst checks the device's printout to ensure the samples are being analyzed within acceptable limits. The analyst also uses the run-time log to receive a status report of the instrument's “health” condition. These periodic checks are time consuming and interrupt the work of the analyst. In the worst case, the device may start to generate invalid data just a few minutes into the run, which might go undetected until the very end of the run (here: >100 minutes). As a result, more than 1.5 h of valuable device time plus consumables may be lost. To address these and other shortcomings of the traditional sample analysis QC process, Los Alamos National Laboratory developed and deployed an automated, software-based QC decision support system (QCDSS).

The remainder of this report is organized into three sections. The first section describes the software implementation of LANL's new QCDSS. The second section provides a detailed cost–benefit analysis of QCDSS. The third and last section then summarizes the major results of the QCDSS automation project.

Implementation

It is the limited capability of the Optima 4300™ DV's built-in reporting system (here: periodic printouts of intermediate result data on a printer and direct data export into a Microsoft Access® database) that requires analysts to constantly monitor the result data output of the instrument. Because the Optima simultaneously records sample analysis results in a Microsoft Access®-based result database, it was decided to automate the result data quality control by monitoring the database directly. The data access to the Optima's raw result database was implemented using Microsoft's new C#.NET programming language and its ADO.NET™ (ActiveX Data Objects) data management framework. The primary goal of this automated system is to emulate the manual, result data-driven quality control process previously performed by an analyst. As a result, LANL developed a software-based decision support system that completely automates this human-based sample analysis QC process.

Because the continuous monitoring of the Optima's periodic analysis result data output to its database is important, a C#.NET-based, timer-controlled database event handler was implemented first. This event handler was set up so that each time the timer function generates or “fires” a timer event (here: every few seconds), QCDSS establishes a temporary connection to the Optima's raw result database, copies the most recent result data entries of interest into memory, closes the database connection, and then resets the timer function for the next event/check. This mechanism allows QCDSS to start processing the copied result data noninvasively in the background, while the Optima device continues to run and continues to generate more result data.
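The polling cycle described above can be sketched as follows. This is only an illustrative Python stand-in, not the QCDSS code, which was written in C#.NET against a Microsoft Access® database. The `results` table, its columns, the `ResultPoller` class, and the 5 s default interval are all assumptions made for the example, and SQLite is used in place of Access so the sketch is self-contained.

```python
import sqlite3
import threading


def fetch_new_results(db_path, last_seen_id):
    """Open a short-lived connection, copy rows newer than last_seen_id, then close."""
    conn = sqlite3.connect(db_path)
    try:
        rows = conn.execute(
            "SELECT id, sample, analyte, value FROM results "
            "WHERE id > ? ORDER BY id",
            (last_seen_id,),
        ).fetchall()
    finally:
        conn.close()  # release the database before any QC processing starts
    return rows


class ResultPoller:
    """Hypothetical timer-driven poller that hands new rows to a callback."""

    def __init__(self, db_path, on_rows, interval_s=5.0):
        self.db_path = db_path
        self.on_rows = on_rows
        self.interval_s = interval_s
        self.last_seen_id = 0
        self._timer = None

    def poll_once(self):
        """Copy any new rows into memory and pass them on for QC checks."""
        rows = fetch_new_results(self.db_path, self.last_seen_id)
        if rows:
            self.last_seen_id = rows[-1][0]
            self.on_rows(rows)
        return rows

    def _tick(self):
        self.poll_once()
        self.start()  # re-arm the timer for the next check

    def start(self):
        self._timer = threading.Timer(self.interval_s, self._tick)
        self._timer.daemon = True
        self._timer.start()

    def stop(self):
        if self._timer is not None:
            self._timer.cancel()
```

Keeping the connection open only for the duration of the copy is what makes the check noninvasive: the instrument software can keep writing to its database while the copied rows are evaluated in memory.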

As mentioned previously, QCDSS had to be able to emulate the analyst's sample analysis QC process exactly. While there was some flexibility with respect to the order in which certain QC checks had to be performed, the overall QC performance had to be identical. Before QCDSS existed, the analyst would periodically look at the Optima's run-time log and result data printout and perform two types of quality checks. The first manual quality check was used to determine whether the data for the calibration blank and the internal standards were within acceptable error limits. The second quality check looked at whether the sample data for the analytes of interest were within their valid calibrated ranges. QCDSS completely automates both of these QC checks. The two conditions that cause failures are a device error or a concentration of analytes above the calibration range. Either condition requires the operator to stop the Optima's current analysis run, prepare an identical batch of samples, and rerun the new batch.

Based on an analysis-specific QC rule set (which was obtained through an elaborate interview process with an expert analyst), QCDSS is able to detect either of these two abnormalities automatically in real time. QCDSS's rule set is composed of a set of QC single- and multi-rules. A single-rule QC procedure, for example, uses a single criterion or a single set of control limits, such as a Levey-Jennings chart with control limits set as either the mean ± 2 standard deviations (2s) or the mean ± 3s.¹ In the case of QCDSS, QC checks of instrument result data are done against calibration blanks and standards of known concentration with predetermined acceptable error limits (i.e., control limits). For surface sample analysis, for example, LANL typically sets its control limits to ±10 percent of the expected value. In contrast to single-rule QC, multi-rule QC uses a combination of decision criteria, or control rules, to decide whether an analytical run is in-control or out-of-control. The well-known Westgard multi-rule QC procedure, for example, uses five different control rules to judge the acceptability of an analytical run.² “Westgard rules” are generally used with two or four control measurements per run, which means they are appropriate when two different control materials are measured one or two times per material. The most complex QCDSS multi-rule requires three control measurements.
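To make the distinction between single- and multi-rule checks concrete, the sketch below shows a ±10 percent control-limit check, Levey-Jennings style limits, and two of the commonly described Westgard control rules (1-3s and 2-2s). It is a minimal illustration only: the function names are hypothetical, and the actual QCDSS rules are not listed in this report.

```python
from statistics import mean, stdev


def within_percent_limit(measured, expected, tolerance=0.10):
    """Single rule: pass if measured lies within +/- tolerance of the expected value."""
    return abs(measured - expected) <= tolerance * abs(expected)


def levey_jennings_limits(history, k=2):
    """Return (lower, upper) control limits as mean +/- k sample standard deviations."""
    m = mean(history)
    s = stdev(history)
    return m - k * s, m + k * s


def z_scores(values, m, s):
    """Express control measurements as deviations from the mean in units of s."""
    return [(v - m) / s for v in values]


def violates_1_3s(z):
    """Westgard 1-3s: any single control measurement beyond +/- 3s."""
    return any(abs(x) > 3 for x in z)


def violates_2_2s(z):
    """Westgard 2-2s: two consecutive measurements beyond the same 2s limit."""
    return any(
        (a > 2 and b > 2) or (a < -2 and b < -2)
        for a, b in zip(z, z[1:])
    )
```

A standard with an expected value of 10.0 that reads 10.5 passes the ±10 percent check, while a reading of 11.5 fails it. The multi-rule functions only flag a run when the pattern across several control measurements matches, which is why they suit post-run QC.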

Currently, QCDSS's expert rule base is composed of 14 single- and 4 multi-rules. QCDSS primarily uses single-rules to validate individual result data elements as they are being generated by the Optima instrument. The multi-rules, on the other hand, are mainly used for performing automated post-run QC. The QCDSS rule base is stored in a Microsoft Access®-based database, which allows for easy rule base updates, replacement, and replication. The rules within this relational database are represented through sets of data identifiers, metadata descriptors, cross-referenced data identifiers, comparison operators (e.g., =, >, <, >=, <=, !), and value ranges.
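One way to picture such a data-driven rule representation is sketched below. The `Rule` fields, the example identifiers, and the reading of `!` as "not equal" are assumptions made for illustration; the report does not give the actual schema of the rule database.

```python
import operator
from dataclasses import dataclass

# Map the stored operator symbols to comparison functions.
# Treating "!" as "not equal" is an assumption for this sketch.
OPERATORS = {
    "=": operator.eq,
    "!": operator.ne,
    ">": operator.gt,
    "<": operator.lt,
    ">=": operator.ge,
    "<=": operator.le,
}


@dataclass(frozen=True)
class Rule:
    rule_id: str          # hypothetical rule identifier
    data_id: str          # which result field the rule applies to
    op: str               # one of the symbols in OPERATORS
    threshold: float      # value the field is compared against
    description: str = "" # free-text metadata


def failed_rules(rules, record):
    """Return the rules whose condition does not hold for the given record."""
    failures = []
    for rule in rules:
        if rule.data_id not in record:
            continue  # rule does not apply to this record
        if not OPERATORS[rule.op](record[rule.data_id], rule.threshold):
            failures.append(rule)
    return failures
```

Because the rules are plain rows of data rather than compiled logic, swapping in a different rule set amounts to replacing table contents, which matches the update and replication benefit described above.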

The ability to directly access the analysis result data from the Optima instruments and analyze the data with its expert rule base enables QCDSS to decide autonomously when to notify lab personnel (e.g., via e-mail and/or pager). As a result, QCDSS's electronic notification capability eliminates the need for the constant presence of an analyst and his, or her, periodic QC checks. It also allows operators to minimize running time on compromised sample sets.

While the Optima device is capable of screening for a wide variety of analytes concurrently, the list of analytes of interest and their respective data ranges highly depend on the type of samples and the customer. To keep QCDSS from performing unnecessary QC checks on irrelevant data and to prevent it from triggering false alarms, a list of analytes of interest along with their acceptable value ranges can be provided to QCDSS in the form of run-time parameters. By enabling the analyst to provide the QC parameters to QCDSS at run time, the system can be quickly reconfigured to run different QC protocols. It also enables the analyst to build up a QC protocol database that can be shared among and validated by other laboratories.
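The effect of such run-time parameters can be sketched as a simple filter: only analytes named in the supplied limits are checked, and everything else the instrument reports is ignored. The function name, the JSON layout, and the numeric ranges below are hypothetical and chosen only for illustration.

```python
import json


def load_limits(json_text):
    """Parse run-time QC parameters of the form {"analyte": [low, high], ...}."""
    return {name: (float(lo), float(hi)) for name, (lo, hi) in json.loads(json_text).items()}


def out_of_range(results, limits):
    """Return {analyte: value} for analytes of interest that fall outside their range.

    Analytes that are reported by the instrument but not listed in `limits`
    are skipped, so they can never raise an alarm.
    """
    flagged = {}
    for analyte, (low, high) in limits.items():
        if analyte in results and not (low <= results[analyte] <= high):
            flagged[analyte] = results[analyte]
    return flagged
```

For example, with limits `{"Be": [0.0, 2.0]}`, a result set containing an out-of-range value for an unlisted element would produce no flag, whereas a Be value of 3.1 would.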

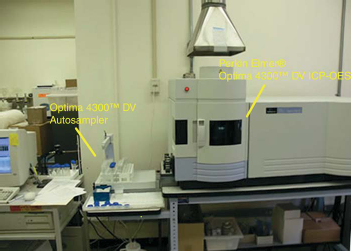

Figure 2 provides a simplified overview of the information flow in the target laboratory system. Before the introduction of QCDSS, analysts were responsible for periodically checking on the device status and result data quality. Today, QCDSS performs these tasks autonomously in the background, thus simplifying system operations and freeing up valuable analyst time.

Figure 2. System architecture and information flow.

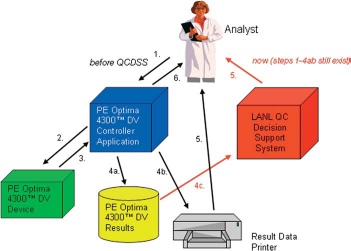

Figure 3 provides an overview of the software architecture of QCDSS. QCDSS is composed of four major system components: an expert rule database, an inference engine, a graphical user interface, and a user notification system. As described previously, the QCDSS expert rule base is a Microsoft Access®-based database and it stores the expert QC rules. The QCDSS inference engine is used to continuously extract and analyze the latest data from the Optima's raw result database using QCDSS's expert rule base. Access to the Optima's raw result database and the expert rule database is achieved with Microsoft's ADO.NET™ data management framework. Based on the outcome of the inference engine's analysis, the QCDSS's user notification system keeps the analyst informed about the QC status of ongoing Optima runs. This e-mail-based notification system uses Microsoft's SMTP (Simple Mail Transfer Protocol) implementation.

Figure 3. QCDSS software architecture.
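The notification step can be illustrated with a short sketch that turns a list of failed QC checks into an e-mail and hands it to an SMTP server. It uses Python's standard `smtplib` and `email` modules rather than the Microsoft SMTP implementation mentioned above, and the addresses, subject format, and server settings are placeholders, not details of the deployed system.

```python
import smtplib
from email.message import EmailMessage


def build_alert(sender, recipients, run_id, failures):
    """Compose a plain-text QC alert listing the failed checks for one run."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = ", ".join(recipients)
    msg["Subject"] = f"QC alert: run {run_id} has {len(failures)} failed check(s)"
    msg.set_content("\n".join(failures))
    return msg


def send_alert(msg, host="localhost", port=25):
    """Deliver the alert through an SMTP server (host and port are placeholders)."""
    with smtplib.SMTP(host, port) as smtp:
        smtp.send_message(msg)
```

Separating message construction from delivery keeps the alert content easy to test without a mail server; pager delivery could, for instance, go through an e-mail-to-pager address if the paging service offers one.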

To validate the proper operation of QCDSS, a validation test plan as well as an automated test routine for black-box and regression testing was developed. The test program was based on an elaborate set of systematic and randomly generated test cases. The systematic test cases, which were part of the test plan, were developed in close collaboration with the domain experts. The domain experts’ input provided assurance that the test plan included all commonly encountered real-world analysis scenarios. The final test plan was then independently validated by three domain experts.

All QCDSS software components are written in C#.NET. The total development time for QCDSS was about 2 person-months, which included the programmer's time to learn the C# programming language (prior knowledge of C and C++ existed) and the required .NET libraries.

Results

One of the design objectives of QCDSS was to minimize the time between the occurrence of analysis failures (here: either device- or sample-induced) and their detection by laboratory personnel.

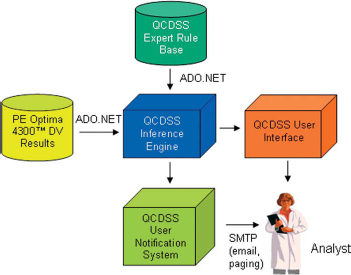

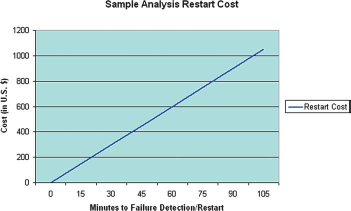

Figure 4, for example, shows that the cost associated with restarting a sample run after a failure is detected 30 min into the run is about $300.

Figure 4 and Eqn. 1 show a cost function that illustrates the costs associated with the restart/rerun of a sample batch due to an off-normal event. This figure and equation further stress the importance of early analysis failure detection and QC.

Figure 4. Batch restart cost function for a PE Optima 4300™ DV device.

Eqn. 1 describes the restart cost function of the PE Optima 4300™ DV in more detail. The underlying assumptions for this cost function are as follows. LANL processes about 14,000 samples a year on a single Optima 4300™ DV instrument. The procurement cost for this device is about $100,000, and the device has a life expectancy of about 5 years. LANL currently owns and operates five Optima instruments. The annual maintenance contract with PE costs more than $15,000 per device. The supplies (e.g., argon and dewars) to process 14,000 samples a year per device run about $7000. To run a single batch (here: 20 samples) through the system (i.e., logging in, digestion, instrument time, data acquisition, and QC) takes about 6 hours, which, at LANL, translates into about $300 of labor cost per batch run.

Eqn. 1. Sample Batch Restart Cost Function

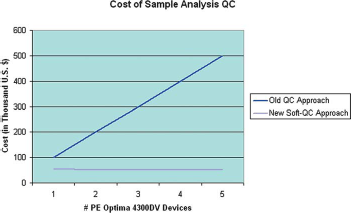

Another design objective of the automated QCDSS was to eliminate the time-consuming and potentially error-prone manual result data interpretation. Figure 5 contrasts the cost of the traditional, manual QC versus the new, automated QCDSS-based QC. The plots shown in Figure 5 are based on the following assumptions. The one-time development cost of QCDSS was about $50,000. The average cost of an analyst at LANL, including all labor-related overhead and training, is estimated to be about $100,000. As mentioned previously, the QC guidelines at LANL mandate that result data be reviewed by three independent analysts. Given a daily sampling rate of about 60 to 80 samples per Optima device in the QCDSS pilot laboratory, the QC usually requires one full-time analyst per device. This means that scaling up the number of devices in the laboratory would also require a linear scale-up of the QC personnel. As a result, the cost of sample analysis QC would also rise proportionally. This obviously does not apply to the scale-up cost associated with QCDSS.

Figure 5. Cost of old (manual) QC process vs. new (automated) QCDSS-based QC.

The QC for additional Optima devices could be easily handled by simply installing additional copies of QCDSS (i.e., one instance of QCDSS per device). QCDSS comes with a standard Windows application-style setup program, which is very easy to operate. Empirical data show that, on average, the installation of QCDSS by a laboratory technician with basic PC skills takes about 3 min per instrument. Assuming an hourly technician rate of $100, the software installation translates to about $5 per installation. To estimate the annual software maintenance cost of QCDSS, we assume the software industry standard rate of 10% of the product cost per annum. So given the $50,000 development cost of QCDSS, the total annual maintenance cost is estimated to be around $5000. Assuming one software release per year (which, of course, could also be done via automated network updates), the annual maintenance cost per instrument could be calculated as $5 per manual reinstall/update, plus $5000 divided by the number of installations. Due to QCDSS's streamlined and easy-to-use graphical user interface, user training requires an average of no more than 20 min. Assuming the previously mentioned hourly technician rate, the cost of user training thus translates to about $33 per user. A similar rate could be applied for the instructor if live instruction is preferred over the program's built-in online tutorial.
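The arithmetic in the preceding paragraph can be reproduced with a few lines of code. The function names are hypothetical; the default values are the figures quoted in the text ($100 per hour, 3 min per installation, 20 min of training, $50,000 development cost, and a 10% annual maintenance rate).

```python
def labor_cost(minutes, hourly_rate=100.0):
    """Cost of a task of the given duration at the quoted technician rate."""
    return hourly_rate * minutes / 60


def annual_maintenance_per_instrument(n_installations,
                                      development_cost=50_000.0,
                                      maintenance_rate=0.10,
                                      reinstall_minutes=3):
    """One manual reinstall per year plus an equal share of the annual maintenance."""
    if n_installations < 1:
        raise ValueError("n_installations must be at least 1")
    shared = development_cost * maintenance_rate / n_installations
    return labor_cost(reinstall_minutes) + shared


install = labor_cost(3)    # about $5 per installation
training = labor_cost(20)  # about $33 per user
single = annual_maintenance_per_instrument(1)  # about $5005 for one instrument
five = annual_maintenance_per_instrument(5)    # about $1005 each for five instruments
```

The per-instrument figure falls as the number of installations grows because the fixed annual maintenance amount is spread over more devices, while the reinstall cost stays constant.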

Before the deployment of QCDSS, it took three lab technicians (two for sample preparation and one for QC) to keep one Optima ICP machine continuously busy. With QCDSS, it now takes only two people to run the Optima 4300™ DV device in non-stop mode. For example, doing the QC for a batch of 20 samples with QCDSS on a PC with a 500-MHz Pentium 3 processor and 256 MB of RAM (Random Access Memory) takes less than 1 s total.

The data shown in Figure 5 indicate that QCDSS will pay for itself within just 6 months of operation (assuming it is run on only one Optima device). The slight decrease in the QC cost function of the new approach (as shown in Fig. 5) can be explained by the way the development cost for the annual release is split over the number of installations (see previous paragraph for details). Running the software on multiple Optima instruments would obviously multiply the return on investment and shorten QCDSS's amortization phase proportionally. Furthermore, QCDSS's quasi real-time failure detection and electronic operator notification capability enables system operators to minimize the QC-related “downtimes” of their Optima instruments. More specifically, QCDSS has been able to reduce QC-related Optima downtimes at LANL by more than 98%. Due to QCDSS's ability to speed up the QC process and shorten the analysis result reporting cycle, customers now receive their results in about two-thirds of the time.
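A rough check of the 6-month amortization claim is sketched below. It is one simplified reading of the quoted figures, not the calculation behind Figure 5: it assumes the $100,000 analyst cost is an annual figure, that QCDSS replaces one full-time QC analyst per device, and that savings accrue evenly over the year. The function name is hypothetical.

```python
def payback_months(development_cost=50_000.0,
                   analyst_annual_cost=100_000.0,
                   qcdss_annual_cost=0.0,
                   n_devices=1):
    """Months until the cumulative QC savings equal the one-time development cost."""
    monthly_saving = (n_devices * analyst_annual_cost - qcdss_annual_cost) / 12
    if monthly_saving <= 0:
        raise ValueError("no net saving, so the cost is never recovered")
    return development_cost / monthly_saving


base = payback_months()                                   # about 6 months
with_upkeep = payback_months(qcdss_annual_cost=5_005.0)   # slightly over 6 months
two_devices = payback_months(n_devices=2)                 # about 3 months
```

Under these assumptions the single-device payback comes out at roughly 6 months, and doubling the number of devices halves it, in line with the proportional shortening described above.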

Statistically, the manual QC approach used to yield on average one result data interpretation error per 100 samples. Based on performance data obtained through extensive automated testing and simulations, the new QC approach is expected to improve the error rate by 2 orders of magnitude, i.e., 1 error per 10,000 samples.

For many of the sample analysis laboratory's customers, a reduction in sample turnaround by 4 h or more is one of the most important side benefits QCDSS had to offer. For example, in the case of accident assessment involving beryllium or other hazardous materials, a small time delay or error in the sample analysis process could have a critical impact on the health and well being of many people.

Conclusions

In an effort to replace a labor-intensive and costly beryllium sample analysis QC process, LANL developed a software-based QC decision support system. Besides speeding up the sample analysis QC process, the system also eliminates the potential for human error during manual result data interpretation, which helps to reduce the sample analysis error rate by 2 orders of magnitude. QCDSS also shortens the result data reporting cycle by about 33%. This new software-based QC system also helps to reduce the analysis cost in the laboratory by detecting potential system malfunctions and electronically notifying lab personnel in real time, thus optimizing valuable device time and saving costly reagents. An initial cost-benefit analysis reveals that QCDSS, running on just a single PE Optima 4300™ DV device, will amortize its development cost within about 6 months of operation. The system also saves 8 h of analyst time per day.

Being able to implement QCDSS in about 2 person-months indicates that using C#.NET for implementation was a good choice. QCDSS could have been implemented in other programming languages and development environments. In this case, however, the choice to use C# and .NET as the software development environment was primarily driven by the programmer's background (C and C++), C#'s powerful language constructs, and .NET's feature-rich class libraries. The successful completion of the project in such a short period of time further indicates that C#.NET provides a very good alternative to other, more established, rapid prototyping languages such as Visual Basic.

Due to QCDSS's modular software architecture and use of standard data access and communication interfaces and protocols, LANL expects to be able to apply the same framework to other types of laboratory devices. As a future extension, LANL is considering a direct link between the device-level QCDSS(s), a LIMS (laboratory information management system), and a Web-based reporting system. This would enable the analytical laboratories at LANL and at other sites to move their QC processes completely from a paper-based, check list-style format, to an electronic format. It would also further speed up the reporting cycle, thus reducing the sample turnaround times for customers.

Disclaimer

The identification of certain commercial equipment or materials in this paper does not imply recommendation or endorsement by the Los Alamos National Laboratory, nor does it imply that the materials or equipment are necessarily the best available for the purpose.

Footnotes

Acknowledgment

This project has been funded in part by the U.S. Department of Energy's ADAPT program and LANL's C-ACS group. The authors are particularly thankful for the support and contributions from Dr. Cynthia Mahan, Dr. Rebecca Chamberlin, Dan Knobeloch, Dr. Pete Pittman, and Maura Wilhelm. Furthermore, the authors would also like to thank the JALA editor and the referees for their comments, which helped to improve this paper.