Abstract

To what extent are intergroup attitudes associated with regional differences in online aggression and hostility? We test whether regional attitude biases towards minorities and their local variability (i.e. intraregional polarization) independently predict verbal hostility on social media. We measure online hostility using large US American samples from Twitter and measure regional attitudes using nationwide survey data from Project Implicit. Average regional biases against Black people, White people, and gay people are associated with regional differences in social media hostility, and this effect is confounded with regional racial and ideological opposition. In addition, intraregional variability in interracial attitudes is also positively associated with online hostility. In other words, there is greater online hostility in regions where residents disagree in their interracial attitudes. This effect is present both for the full resident sample and when restricting the sample to White attitude holders. We find that this relationship is also, in part, confounded with regional proportions of ideological and racial groups (attitudes are more heterogeneous in regions with greater ideological and racial diversity). We discuss potential mechanisms underlying these relationships, as well as the dangers of escalating conflict and hostility when individuals with diverging intergroup attitudes interact. © 2020 The Authors. European Journal of Personality published by John Wiley & Sons Ltd on behalf of European Association of Personality Psychology

Introduction

Hostile intergroup behaviour is frequently expressed through hateful speech on social media (Chau & Xu, 2007; Gerstenfeld, Grant, & Chiang, 2003). People use social media to express their outrage towards opposing groups (Crockett, 2017) and even endorse or threaten others with physical violence. Such instances of verbal hostility are facilitated through the anonymous nature of online environments, where aggression is less risky than it would be offline (see online disinhibition: Suler, 2004). Online aggression can occasionally spark offline violence, making online hostility a risk factor for both psychological and physical well–being (Hinduja & Patchin, 2007, 2012). This spill–over effect from online to offline aggression was documented in a series of studies by Müller and Schwarz (2019a, 2019b), who respectively used temporary social media outages and an instrumental variable strategy to ascertain the causal order of aggressive acts. Notice that the reverse effect, offline behaviour affecting online behaviour, has also been observed across a range of studies and that the order and interaction of both spheres are ongoing issues of debate (e.g. Greijdanus et al., 2020).

Importantly, online hostility often consists of intergroup aggression, with minority members suffering from victimization more frequently than majority members (Awan & Zempi, 2016; Müller & Schwarz, 2019a). Online hate often does ‘not attack individuals in isolation’ but rather targets a collective of people (Hawdon, Oksanen, & Räsänen, 2017, p. 254). Psychological researchers therefore frequently measure vicarious experiences of online hate in which the reader is not personally attacked, but belongs to the derogated minority group (Tynes, Rose, & Williams, 2010). Given that online hostility towards minorities affects large amounts of people (Abbott, 2011; Costello, Hawdon, Ratliff, & Grantham, 2016), varies across geographic areas (Hawdon et al., 2017), and might even turn into physical violence (Awan & Zempi, 2016), it is important to identify the environments in which it is most likely to occur.

We analyse US American samples from Twitter and nationwide surveys from Project Implicit to examine geographical differences in online hostility. More precisely, we test whether regional averages of attitudinal biases towards minorities and their local variability (i.e. intraregional polarization) independently predict verbal hostility on social media. In the following section, we review prior research pointing to the idea that average regional attitudes towards minorities are related to regional differences in hostility. Subsequently, we argue that average regional attitudes do not tell the whole story and introduce the idea that it is also important to consider how attitudinal biases are spread within local regions. More precisely, we propose that high variance in regional attitudes (which indicates the presence of conflicting ideological or demographic groups) is positively associated with online hostility.

Regional Attitudes Towards Minorities and Online Hostility

In recent years, online social media have become a major outlet for blatant intergroup discrimination (for reviews see Keum & Miller, 2018; Peterson & Densley, 2017). While offline discrimination often takes on subtle forms, online hostility is often blatant and explicit. Arguably, online aggression is bolstered by anonymity for perpetrators and decreased visibility of victims’ suffering compared with offline settings (Kahn, Spencer, & Glaser, 2013). Accordingly, online abuse is common for both racial minorities (Tynes, Giang, Williams, & Thompson, 2008; Tynes, Reynolds, & Greenfield, 2004) and sexual minorities (Cooper & Blumenfeld, 2012; Varjas, Meyers, Kiperman, & Howard, 2013). In most cases, such forms of online hostility are argued to originate from antiminority biases including racism and homophobia, which vary across geographical locations (e.g. Hehman, Flake, & Calanchini, 2018; Swank, Frost, & Fahs, 2012).

What factors lead to the local emergence of hostile online environments? Online hostility can emerge from current local events (Williams & Burnap, 2015) or local history (Payne, Vuletich, & Brown–Iannuzzi, 2019). For example, Kaakinen, Oksanen, and Räsänen (2018) observed that the Paris terror attack of November 2015 was associated with a rise in fear and intergroup hostility among Finnish internet users, who related to the pre–attack situation of their fellow Europeans (cf. Oksanen et al., 2018). According to the authors, this finding is in line with the general observation that threatening societal events serve as a trigger for outgroup blaming and intergroup hostilities. In their study, hostility was measured as the experienced frequency of verbal online hate. Groups that were targeted more often than before the event included religious, ethnic, and political groups, as these groups were labelled responsible for the attacks and the resulting societal uncertainty.

More generally, there is a long tradition of research rooted in the social identity approach on the connection between ingroup threat and outgroup hostility (Tajfel & Turner, 1979; Turner, 1985). Under threat, outgroup derogation appears to be a common strategy of regaining collective self–esteem (Branscombe & Wann, 1994). Specifically, regional experiences of ingroup threat seem to elicit heightened aggression and negative intergroup emotions (Fischer, Haslam, & Smith, 2010; Huddy & Feldman, 2011), which can be locally engrained and passed on over generations if the eliciting event was impactful enough (Obschonka et al., 2018; Payne et al., 2019). Note that regional construct aggregates (here, intergroup attitudes and hostility) are interpreted as a psychological facet of regional culture (Kitayama, Ishii, Imada, Takemura, & Ramaswamy, 2006) and that neither their interpretations nor their intercorrelations can be generalized to the individual level (Rentfrow, 2010; Rentfrow, Gosling, & Potter, 2008). Specifically, interracial biases aggregated on a regional level were defined as ‘average, or collective, psychological predisposition’ that local groups have towards each other (Hehman, Calanchini, Flake, & Leitner, 2019, p. 1025).

In the context of group relations, local animosity is associated with negative phenomena for all the involved groups. For instance, the more negatively White locals feel towards Black (compared with White) people, the more stress–related health problems Black locals experience (specifically circulatory diseases) and the higher the mortality for both Black and White locals rises (Leitner, Hehman, Ayduk, & Mendoza–Denton, 2016a, 2016b; Orchard & Price, 2017). Similarly, regional levels of anti–Black sentiment predict lethal police force against Black people (Hehman et al., 2018). Arguably, racially biased environments put a strain on both intergroup and interpersonal relationships, thereby enforcing the local propensity for violence against minority members (Hehman et al., 2018). Similarly, Johnson and Chopik (2019) found that in US counties with strong racial stereotypes, the targeted minority group itself also engages in more violence (measured as rates of murder, aggravated assault, and illegal weapon possession). Thus, there appears to be a relationship between average regional attitudes and local rates of violent behaviours. This relationship is in line with common correlations between aggregated psychological measurements and local indices of well–being (Plaut, Markus, & Lachman, 2002).

Importantly, the link between average antiminority attitudes and regional hostility holds true for other types of (e.g. nonracial) intergroup attitudes. There is substantial regional variation in attitudes towards other minority groups including gay men and lesbian women (gay people from here). While regional covariates of attitudes towards gay people have received less attention, prior research suggests that the well–being of sexual minorities is linked to their regional environments (Morandini, Blaszczynski, Dar–Nimrod, & Ross, 2015). For example, gay people living in southern states of the USA were subjected to increased levels of discrimination and stigma (e.g. thinly veiled hostility) compared with gay people living in non–southern states (Swank et al., 2012).

Building on previous research, we investigate if biases against minorities and prevalence of verbal online hostility are associated across geographical spaces. However, we go beyond prior work, which focused on comparing average regional attitudes, to investigate if hostility is related to the distribution of intergroup attitudes within regions. We test if high levels of hostility are more likely to be observed in regions where individuals with conflicting attitudes and ideologies are more likely to come into contact. In other words, hostility may also be observed in regions with high levels of intraregional variability in attitudes.

Intraregional Attitude Variability and Online Hostility

While average local attitudes (i.e. the extent to which citizens from a region are, on average, biased against certain groups) are an important indicator of regional culture, they paint a simplified picture and discard valuable environmental information. Hostility is usually the result of people having divergent, rather than convergent, attitudes (Harinck & Ellemers, 2014), and local averages do not capture the level of local divergence. Intergroup conflicts emerge when two groups disagree about adequate group hierarchy (often involving their own group; Bobo, 1999). That is to say, compared with the effects of average levels of social biases, intraregional variability of social biases may be more strongly associated with regional hostility. Variability in relative attitudes towards minorities implies ideological opposition or disagreement between the different attitude holders (e.g. some are pro–White, whereas others are pro–Black). Past work highlights that such ideological opposition can indeed lead to substantial aggression and segregation (Brandt, Crawford, & Van Tongeren, 2019; Brandt, Reyna, Chambers, Crawford, & Wetherell, 2014; Kouzakova, Ellemers, Harinck, & Scheepers, 2012).

Donald Trump's presidential candidacy in 2016 illustrates how diverging intergroup attitudes can create a hostile online environment. Arguably, dehumanizing and aggressive antiminority rhetoric led to hostile backlashes among targeted minority members (Kteily & Bruneau, 2017) and among majority members whose egalitarian attitudes were in dissonance with Trump's antiminority standpoint (Meyer & Tarrow, 2018). These recent examples suggest that ideological polarization, especially regarding minority treatment, often entails aggression (Iyengar & Westwood, 2015; Miller & Conover, 2015). This aggression is frequently expressed through partisan activity on social media (Crockett, 2017; Hasell & Weeks, 2016), which in turn often crosses the threshold of hate speech (e.g. Ben–David & Matamoros–Fernández, 2016). Theoretical concepts like perceived injustice (van Zomeren, Postmes, & Spears, 2008), status instability (Scheepers, 2009), and politicized identities (van Zomeren, Postmes, & Spears, 2012) all point towards conflict under diverging attitudes (rather than consensually positive or negative attitudes).

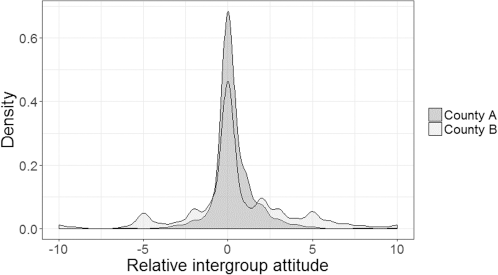

In sum, regions not only differ in the average attitudes towards minorities (see previous section), but also in how variable these attitudes are within each region (Evans & Need, 2002). In regions with high attitude variability, biased people clash with people who hold more egalitarian values (or with people who are biased in favour of minority groups). Given that polarized attitudes towards minorities spur conflict and hostility, divided regions should be characterized by frequent aggression, especially in anonymous online environments. Conversely, if locals are homogenous and similarly biased (i.e. there is little attitude variability), then there is less reason for local conflict (see Figure 1 for two example counties).

Figure 1. Counties A and B have somewhat similar mean levels of social bias (M_A = 0.33 and M_B = 0.51; percentiles 18 and 38), whereas they clearly differ in terms of intraregional variability. The distribution of bias in county A is less variable (SD = 1.26, percentile 1) than the distribution of bias in county B (SD = 3, percentile 99). In other words, members of county A are more homogenous in their intergroup attitudes than members of county B.

Our central hypothesis is that intraregional variability in minority attitudes is correlated with online hostility. Importantly, we expect this correlation to remain significant even after controlling for the effect of average regional attitudes. In other words, we expect that variance in social biases (i.e. regional heterogeneity) is positively associated with online hostility.

Importantly, intergroup attitudes are likely to covary according to regional group composition. Two opposing groups of people, each preferring their ingroup, imply relatively polarized intergroup attitudes and therefore reason for intergroup hostility (Tajfel & Turner, 1979). Given the importance of social identity for eliciting intergroup biases, we assume that the effect of attitude variability on local hostility will be subdued when controlling for regional differences in racial and political diversity. That is, opposition between opinion holders is likely confounded with opposition between racial or political groups. Social identity research suggests that local contact between groups with opposing group attitudes (e.g. racial or political groups) can spark anxiety and intergroup tension (Tausch, Hewstone, Kenworthy, Cairns, & Christ, 2007; Zeitzoff, 2017). Notice that this proposed connection between local opposition and hostility appears to contradict the very influential contact hypothesis, which states that intergroup contact should, under certain conditions, improve intergroup relations (Allport, 1954). This apparent theoretical contradiction has been treated by multiple scholars observing that regional diversity in the USA constitutes a special case (Rae, Newheiser, & Olson, 2015; van der Meer & Tolsma, 2014). Most importantly, local outgroup presence/diversity in this context often does not entail personal, beneficial intergroup interaction, which could suppress negative threat effects (for a detailed discussion see Laurence, 2014). Therefore, introducing local diversity into predictive models should subtract from the effect of attitude variability on hostility.

Method

We examine how social media hostility is associated with regional averages and regional variability in relative attitudes towards minorities. Specifically, we focus on US American counties and examine (i) attitudes towards Black people relative to attitudes towards White people (attitudinal bias) and (ii) attitudes towards gay people (men and women) relative to attitudes towards heterosexual people. We focus on these groups because attitudes towards minorities (including Black people and gay people) are a prevalent topic in US American politics and social media discussions. We use two operationalizations of attitude variability (standard deviation and kurtosis). We use large social media samples (from Twitter) to measure regional differences in hostility, as online language reflects regional variations in psychological phenomena (Eichstaedt et al., 2015). We include two measures of social media hostility (expressions of anger and swearing). All data and code for the current work can be found online (https://osf.io/r69xj/). We refer to the supporting information by pointing out the specific files in question.

Sample

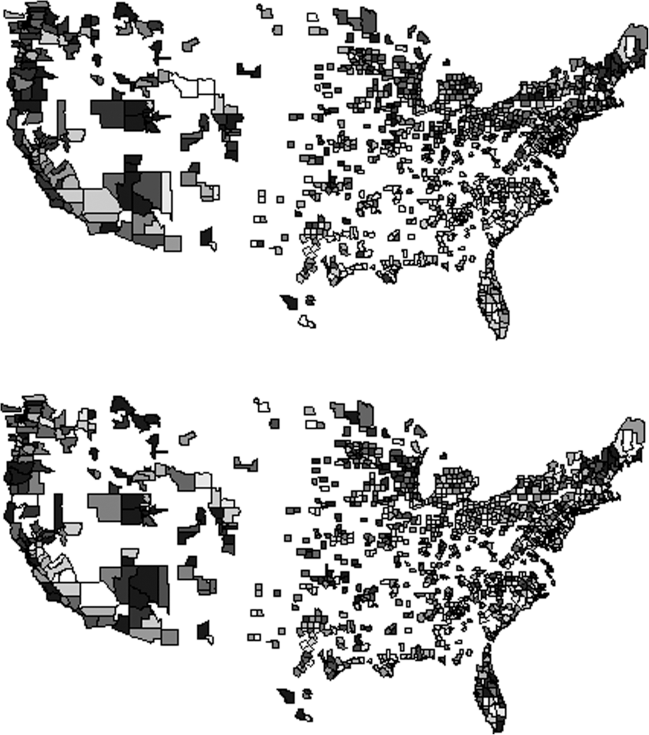

In many US counties, both the Project Implicit and Twitter datasets have zero or very few measurements, which does not allow for meaningful county–level scores. Thus, researchers have to decide how many measurements are sufficient to compute a county–level score and include the county in subsequent analyses. In the past, researchers have applied different cut–off scores. We selected US counties with at least 172 racial attitude scores per county, which leads to a sample of 1094 counties, as this constitutes the average of prior research using a range of different cut–offs (Leitner et al., 2016a, 2016b; Orchard & Price, 2017; Rae et al., 2015). We excluded one additional county from Alaska (FIPS 02110), because it complicated our corrections for spatial autocorrelation (it is ∼900 km away from the next county). The remaining 1093 counties were selected to have sufficient individual attitude scores to assure the reliability of our aggregated county–level attitude measures. About 2,000 counties or similar small regions are not included in the sample because of insufficient local measurements. Figure 2 depicts the geographical coverage of the utilized sample.
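As a rough illustration of this inclusion rule, the sketch below counts attitude scores per county and keeps the counties that reach the cut–off of 172. Only the threshold is taken from the text; the function name, the FIPS codes, and the counts are hypothetical.

```python
from collections import Counter

MIN_SCORES = 172  # cut-off reported in the text


def eligible_counties(county_ids, min_scores=MIN_SCORES):
    """Return the set of counties with at least `min_scores` attitude scores.

    `county_ids` holds one county identifier per individual attitude score.
    """
    counts = Counter(county_ids)
    return {county for county, n in counts.items() if n >= min_scores}


# Hypothetical respondent-level data: one FIPS code per attitude score.
responses = ["01001"] * 180 + ["01003"] * 171 + ["01005"] * 172
print(sorted(eligible_counties(responses)))  # ['01001', '01005']
```

The same function can be called with `min_scores=124` or `min_scores=301` to build the alternative samples used in the sensitivity analyses.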

Figure 2. Top: Attitude variability scores (in deciles) of US counties in the utilized sample. Lighter tones indicate a higher variability. Bottom: Relative frequency of anger expression (in deciles) of US counties in the utilized sample. Lighter tones indicate higher frequencies of anger expression on Twitter. For additional maps showing the distribution of average bias, bias towards gay people, and frequency of swearing, please see the folder ‘maps’ in the Open Science Framework (OSF) repository.

In order to examine the effect of our cut–off decision, we conducted sensitivity analyses with samples of 815 and 1280 counties (at least 301 and 124 scores per county, respectively); these alternative cut–offs corresponded to the minimum and maximum sample sizes used previously for the racial attitudes sample (Leitner et al., 2016a; Leitner et al., 2016b; Orchard & Price, 2017; Rae et al., 2015). In our sensitivity analyses, only 4% of all tests for the full and the White population failed to replicate [average absolute beta coefficient deviation of 0.029; see file ‘ethnicity bias (main analyses for all & white residents).R’ in the Supporting Information], indicating the robustness of our primary results. However, results for Black respondents were highly sensitive to sample restrictions, with 39% of tests varying in their conclusions (average beta coefficient deviation of 0.035; see file ‘ethnicity bias (all analyses for black residents).R’). This instability was likely the outcome of the limited availability of data for the county–level attitude measure (i.e. there were often few individual attitude scores from Black respondents). Thus, results for this group should be interpreted with caution. Unless specified otherwise, we always report the most conservative result in the main text.
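One way to summarize such a sensitivity analysis is sketched below. It compares the same set of tests across two samples and returns the share of tests whose conclusion changes together with the average absolute deviation of the beta coefficients. Treating a change in significance at α = .05 as a failed replication is an assumption made here for illustration, as are the function name and the example values; the procedure actually used is documented in the R files cited above.

```python
def compare_runs(main, alternative, alpha=0.05):
    """Compare paired test results from two county samples.

    Each argument is a list of (beta, p_value) tuples, one per test and in
    the same order. Returns (share of changed conclusions, mean |delta beta|).
    """
    pairs = list(zip(main, alternative))
    changed = sum((p_m < alpha) != (p_a < alpha) for (_, p_m), (_, p_a) in pairs)
    mean_abs_dev = sum(abs(b_m - b_a) for (b_m, _), (b_a, _) in pairs) / len(pairs)
    return changed / len(pairs), mean_abs_dev


# Hypothetical results for four tests under two cut-offs.
main_sample = [(0.20, 0.001), (0.05, 0.04), (-0.10, 0.20), (0.30, 0.001)]
alt_sample = [(0.22, 0.001), (0.03, 0.08), (-0.12, 0.15), (0.27, 0.002)]

share_changed, deviation = compare_runs(main_sample, alt_sample)
print(share_changed, round(deviation, 4))
```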

There is less prior research using data on regional attitudes towards gay people, so we did not use prior research cut–offs. Instead, we used counties that had at least as many attitude scores as the county with the smallest number of scores for the racial attitude data above (172 scores). Thus, for the analyses on relative attitudes towards sexual minorities, we restricted our analyses to 677 counties. In line with the analyses for racial attitudes, we again conducted sensitivity analyses with limits of at least 301 and 124 scores per county, respectively. The sensitivity of the results was again quite high for the relative attitudes towards gay people, as 25% of test results differed in the sensitivity analyses (average beta coefficient deviation of 0.034). We again advise interpreting the results for relative attitudes towards sexual minorities with caution. All conclusions drawn from the analyses were compatible with the results in the main text and the sensitivity analyses.

Measures

All measures were on the county level. We used explicit attitude measures, as the meaning of implicit test scores on individual and collective levels remains uncertain (Blanton & Jaccard, 2017). However, the utilized data source for explicit attitude scores also contains implicit measures (Project Implicit; Xu, Nosek, & Greenwald, 2014), and we include implicit measures in the supporting information (see folder ‘unprocessed data from past publications’). Selection biases are a potential limitation of relying on data from Project Implicit, meaning some resident groups (e.g. women, young people, Xu et al., 2014, and educated people, Morris & Ashburn–Nardo, 2009) are overrepresented while others are underrepresented. This problem is present in virtually all large datasets (e.g. Gosling Potter Internet Project, Gosling, Vazire, Srivastava, & John, 2004; BBC Lab dataset, Rentfrow et al., 2013). In order to obtain more representative county scores, we therefore employed raking by age, gender, and education. Raking (for an introduction, see Battaglia, Izrael, Hoaglin, & Frankel, 2009) is the process of comparing one's sample to a representative sample (often census data of the target population) on a range of, usually demographic, variables. If the demographic distribution in one's sample deviates from the target population, say there are more women in the sample, the scores of the underrepresented group, in this case men, receive larger weights when computing aggregate scores (say the sample mean or standard deviation of a variable). Thus, in the current project, we compared the demographics of each county's Project Implicit sample with the county's census data (US Census Bureau, 2017; US Department of Agriculture, 2017) and reweighted participant scores, so that county–level attitude scores (mean, standard deviation, and kurtosis) are more closely in line with the expected population scores. Hoover and Dehghani (2019) provide a discussion of sample representativeness in large subnational datasets.¹ All variables were standardized for better comparability of the results. Notice that attitude scores were collected between 2003 and 2017 while online hostility was measured between 2009 and 2010. This temporal overlap prevents claims of one–directional causality (as does the correlational nature of the data).

¹ The supporting information includes an earlier version of our analyses conducted without raking; note that this procedure does not substantively change our findings.
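To make the raking step more concrete, the following minimal sketch adjusts respondent weights by iterative proportional fitting until the weighted sample margins match given population shares, and then computes a weighted mean and standard deviation. It is an illustration under stated assumptions, not the authors' implementation: the function names, the two demographic variables, the target shares, and the bias values are hypothetical, the weighted standard deviation uses the population formula, and every target level is assumed to occur at least once in the sample.

```python
def rake(rows, targets, n_iter=50):
    """Return one weight per row so that weighted margins match `targets`.

    rows:    list of dicts holding categorical variables per respondent.
    targets: {variable: {level: population share}}, shares summing to 1.
    """
    weights = [1.0] * len(rows)
    for _ in range(n_iter):
        for var, shares in targets.items():
            total = sum(weights)
            # Current weighted share of every level of this variable.
            current = {level: 0.0 for level in shares}
            for row, w in zip(rows, weights):
                current[row[var]] += w / total
            # Up-weight underrepresented levels, down-weight the others.
            weights = [w * shares[row[var]] / current[row[var]]
                       for row, w in zip(rows, weights)]
    return weights


def weighted_mean_sd(values, weights):
    """Weighted mean and (population-style) weighted standard deviation."""
    total = sum(weights)
    mean = sum(w * v for v, w in zip(values, weights)) / total
    variance = sum(w * (v - mean) ** 2 for v, w in zip(values, weights)) / total
    return mean, variance ** 0.5


# Hypothetical county sample in which women and highly educated
# respondents are overrepresented relative to the census targets.
sample = [
    {"gender": "f", "edu": "high", "bias": 0.0},
    {"gender": "f", "edu": "high", "bias": 1.0},
    {"gender": "f", "edu": "high", "bias": 0.5},
    {"gender": "f", "edu": "low", "bias": 1.0},
    {"gender": "m", "edu": "high", "bias": 2.0},
    {"gender": "m", "edu": "low", "bias": 3.0},
]
census = {"gender": {"f": 0.5, "m": 0.5}, "edu": {"high": 0.4, "low": 0.6}}

w = rake(sample, census)
county_mean, county_sd = weighted_mean_sd([r["bias"] for r in sample], w)
print(round(county_mean, 3), round(county_sd, 3))
```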

Interracial attitudes

The data were obtained from OSF repositories (https://osf.io/52qxl/) and are described by Xu et al. (2014). We estimated the regional level (mean) and variability (standard deviation) of bias in interracial attitudes using geo–tagged scores of warmth felt towards Black people on an 11–point scale subtracted from warmth felt towards White people. Thus, positive scores indicate a relative pro–White bias, a score of 0 indicates a neutral attitude, and negative scores indicate a pro–Black bias. Given that the two individual warmth questions were presented back–to–back in the survey and that they were formatted in the same way (the only difference being the target group), we assume that participants were very conscious of the difference between their two answers and that this numerical difference can be interpreted as explicit bias. Previous research therefore generally utilized this operationalization of explicit bias (e.g. Connor, Sarafidis, Zyphur, Keltner, & Chen, 2019; Hehman et al., 2018; Leitner et al., 2016a; Leitner et al., 2016b; Payne et al., 2019). The difference scores computed for each participant were subsequently used to compute two scores per county: the average local difference score (interpreted as regional bias) and the standard deviation of the local difference scores (interpreted as local disagreements in bias). Validation studies of the utilized data and measurements are described by Hehman et al. (2019). The sample included attitude measurements from 2 048 781 participants (county–level minimum = 172, median = 624, maximum = 54 235). Separate analyses are added for the White and Black subsamples (respectively 1 634 117 and 283 239 participants).
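The computation of the two county–level scores can be sketched as follows. Each participant's warmth towards Black people is subtracted from their warmth towards White people, and the resulting difference scores are summarized per county by their mean (regional bias) and standard deviation (local disagreement in bias). The records and county labels are hypothetical, the raking weights are omitted for brevity, and the use of the population standard deviation is an assumption.

```python
from collections import defaultdict
from statistics import mean, pstdev


def county_bias(records):
    """Map each county to (mean, SD) of its pro-White difference scores.

    records: iterable of (county, warmth_white, warmth_black) tuples.
    """
    diffs = defaultdict(list)
    for county, warmth_white, warmth_black in records:
        diffs[county].append(warmth_white - warmth_black)
    return {county: (mean(d), pstdev(d)) for county, d in diffs.items()}


# Hypothetical warmth ratings for two counties.
records = [
    ("A", 6, 6), ("A", 7, 6), ("A", 6, 5),
    ("B", 10, 0), ("B", 0, 10), ("B", 5, 5), ("B", 8, 6),
]
scores = county_bias(records)
for county, (m, sd) in sorted(scores.items()):
    print(county, round(m, 2), round(sd, 2))
```

In this toy example, both counties show a small positive (pro–White) mean, but county B is far more variable because it contains respondents with strongly opposing difference scores.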

Attitudes towards gay and straight people

The Project Implicit data can be obtained from Project Implicit's OSF repositories (https://osf.io/ajdgr). We estimated relative attitudes towards gay people using geo–tagged scores of warmth felt towards gay men and lesbian women on an 11–point scale subtracted from warmth felt towards heterosexual men and women (Xu et al., 2014). The relative attitude scores for men and women were averaged into one score indicating attitudes towards gay people relative to attitudes towards heterosexual people. Thus, a higher score indicates a stronger bias against gay (or in favour of heterosexual) people. The sample included attitude measurements from 763 907 participants (county–level minimum = 172, median = 513, maximum = 22 412).

Verbal online hostility

Regional variation in online hostility was assessed through Twitter language extracted from US counties. The dataset (provided by Eichstaedt et al., 2015) included 148 000 000 tweets and was successfully used in the original publication to make psychological comparisons between US counties. We utilized the LIWC2015 software (Pennebaker, Boyd, Jordan, & Blackburn, 2015) to count swear words (e.g. ‘bullshit’) and amount of anger expressions (e.g. ‘annoyed’, ‘angry’, and ‘stupid’), with relative frequencies being interpreted as regional levels of hostility on social media. The LIWC measures of anger and swearing were used previously for assessing hostility (Hancock, Woodworth, & Boochever, 2018; Ksiazek, 2015; Matsumoto, Hwang, & Frank, 2016). While we believe that the validation procedures for the hostility measures should generally be conducted on the individual level (see citations), we ascertain their validity on the collective level in the Supporting Information (see file ‘validity of hostility measure.R’).
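The logic of a dictionary–based relative frequency can be illustrated with a small stand–in. The sketch below is not LIWC2015: the two word sets contain only the example words mentioned above, the tokenizer is a simple regular expression, and stem or wildcard matching is ignored. All names and example tweets are hypothetical.

```python
import re

# Tiny stand-in lexicons built from the example words in the text.
ANGER_WORDS = {"annoyed", "angry", "stupid"}
SWEAR_WORDS = {"bullshit"}


def relative_frequency(tweets, lexicon):
    """Percentage of all tokens in `tweets` that appear in `lexicon`."""
    tokens = [tok for tweet in tweets
              for tok in re.findall(r"[a-z']+", tweet.lower())]
    if not tokens:
        return 0.0
    hits = sum(tok in lexicon for tok in tokens)
    return 100.0 * hits / len(tokens)


# Hypothetical tweets from one county (12 tokens in total).
county_tweets = [
    "So annoyed right now",
    "What a nice day",
    "This is stupid bullshit",
]
anger_pct = relative_frequency(county_tweets, ANGER_WORDS)
swear_pct = relative_frequency(county_tweets, SWEAR_WORDS)
print(round(anger_pct, 2), round(swear_pct, 2))  # 16.67 8.33
```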

Racial and political proportions

We obtained the regional numbers of Black and White residents from the website of the US Census Bureau (2012–2016 data, 2017) and the number of votes for Donald Trump versus Hillary Clinton from McGovern (2017).

Analysis plan

In the results section, we analyse the association between racial attitudes and regional hostility. Our primary analyses consisted of linear regression analyses in which we computed the effects of regional attitudes and attitude variability on social media hostility. First, we probed average attitudes among all residents, then we conducted separate analyses using average attitudes among only White and only Black residents as predictors of hostility. Note, however, that we always focused on overall regional hostility as our dependent variable, as the county–level Twitter dataset did not allow us to differentiate between the hostility of White versus Black Twitter users.
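A minimal sketch of such a regression is given below, using only the Python standard library. Variables are standardized and the coefficients are obtained from the normal equations by Gauss–Jordan elimination. The county values are invented so that the raw coefficients are known in advance; the function names and data are hypothetical, and the sketch omits the covariates, the autocovariate, and all inference (confidence intervals, p values) reported in the paper.

```python
from statistics import mean, pstdev


def standardize(xs):
    """z-standardize a list of numbers."""
    m, s = mean(xs), pstdev(xs)
    return [(x - m) / s for x in xs]


def ols(y, predictors):
    """Least-squares coefficients (intercept first) via the normal equations."""
    rows = [[1.0] + [col[i] for col in predictors] for i in range(len(y))]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    a = [[sum(r[i] * r[j] for r in rows) for j in range(k)]
         + [sum(r[i] * yi for r, yi in zip(rows, y))]
         for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(a[r][c]))
        a[c], a[p] = a[p], a[c]
        pivot = a[c][c]
        a[c] = [v / pivot for v in a[c]]
        for r in range(k):
            if r != c:
                factor = a[r][c]
                a[r] = [vr - factor * vc for vr, vc in zip(a[r], a[c])]
    return [a[i][k] for i in range(k)]


# Hypothetical county-level data with known raw coefficients.
mean_bias = [0.1, 0.4, 0.3, 0.8, 0.5, 0.9]
sd_bias = [1.0, 2.5, 1.5, 2.0, 3.0, 1.2]
hostility = [2.0 - 0.2 * m + 0.5 * s for m, s in zip(mean_bias, sd_bias)]

raw = ols(hostility, [mean_bias, sd_bias])
betas = ols(standardize(hostility),
            [standardize(mean_bias), standardize(sd_bias)])
print([round(b, 3) for b in raw])    # intercept, mean-bias slope, SD slope
print([round(b, 3) for b in betas])  # standardized coefficients
```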

For each analysis, we first report the results for a simple regression of regional hostility on average attitude bias. Then, we introduce regional proportions of racial and ideological groups as covariates into the same model. After our analyses on average levels of regional bias, we introduce variability in attitudes as an additional predictor of regional hostility. Here, we again present results for all, White, and Black residents, and under inclusion of the additional covariates. We also dedicate one section to attitude kurtosis as an alternative measure to the dispersion of attitudes. Lastly, we replicate the analyses for relative attitudes towards gay people. In the supporting information, we include additional analyses with post–hoc county matching on further covariates (county–level income, employment, crime rates) to ascertain the observed effects described in the main text. All effects of attitude variability were robust in these analyses (see file ‘matched controls.R’).
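The intuition behind kurtosis as an alternative indicator can be shown with a short sketch. A county split into two opposing camps yields a flat or bimodal distribution with low (negative) excess kurtosis, whereas a county in which most residents share one position yields a peaked distribution with higher kurtosis. The moment–based estimator and the example values below are assumptions for illustration and need not match the estimator used in the analyses.

```python
def excess_kurtosis(xs):
    """Moment-based excess kurtosis: m4 / m2**2 - 3."""
    n = len(xs)
    m = sum(xs) / n
    m2 = sum((x - m) ** 2 for x in xs) / n
    m4 = sum((x - m) ** 4 for x in xs) / n
    return m4 / m2 ** 2 - 3.0


# Hypothetical difference scores: two opposing camps vs. broad consensus.
polarized = [-3, -3, 3, 3]
consensual = [0, 0, 0, 0, 0, 0, -3, 3]

print(excess_kurtosis(polarized))   # -2.0
print(excess_kurtosis(consensual))  # 1.0
```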

Assumption checks

The assumptions of homoscedastic and normally distributed errors were checked through residual plots. Residual maps and a significant Moran's I statistic indicated that the assumption of independent observations was violated through spatial autocorrelation (see file ‘test spatial autocorrelation.R’). That means counties in close vicinity of each other had similar model residuals. To account for this, we added an autocovariate to each regression model, which used the weighted average of hostile online language in neighbouring counties as a predictor. This correction substantially improved the residual maps. However, in some cases, the Moran's I was still statistically significant at α = .05. As it is always greatly reduced, as maps no longer show visible patterns, and as the inclusion of the autocovariate does not seem to shift our effects of interest, we assume that the remaining autocorrelation does not threaten the conclusions drawn from the data. For brevity, we do not describe results for the highly significant autocovariate term for each model, but they are included in the supplementary results (see all .R files in the folder ‘results presented in the main text’).²

² The supporting information also includes an earlier version of our analyses conducted without the corrective autocovariate term.
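Both quantities can be sketched in a few lines. Moran's I relates the cross–products of neighbouring values to the overall variance, and the autocovariate is the weighted average of a variable among each county's neighbours. The binary adjacency weights, the four counties arranged in a line, and the values below are hypothetical; the weighting scheme actually used is documented in the cited R files.

```python
def morans_i(values, weights):
    """Moran's I for `values` given an n x n spatial weight matrix."""
    n = len(values)
    m = sum(values) / n
    dev = [v - m for v in values]
    s0 = sum(sum(row) for row in weights)
    num = sum(weights[i][j] * dev[i] * dev[j]
              for i in range(n) for j in range(n))
    den = sum(d * d for d in dev)
    return (n / s0) * num / den


def autocovariate(values, weights):
    """Weighted average of the neighbours' values for every county."""
    result = []
    for row in weights:
        total = sum(row)
        result.append(sum(w * v for w, v in zip(row, values)) / total
                      if total else 0.0)
    return result


# Four hypothetical counties in a line: 0-1, 1-2, and 2-3 are neighbours.
adjacency = [
    [0, 1, 0, 0],
    [1, 0, 1, 0],
    [0, 1, 0, 1],
    [0, 0, 1, 0],
]
residuals = [1.0, 2.0, 3.0, 4.0]  # spatially smooth, so I is positive
hostility = [1.0, 2.0, 3.0, 4.0]

print(round(morans_i(residuals, adjacency), 3))  # 0.333
print(autocovariate(hostility, adjacency))       # [2.0, 2.0, 3.0, 3.0]
```

The autocovariate vector would then enter the regression as one additional predictor.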

Results

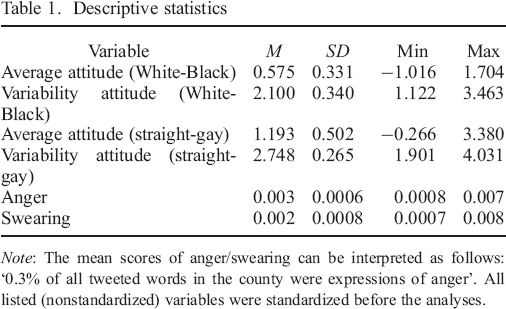

We report descriptive statistics for the primary variables in Table 1. Counties were, on average, biased against Black people, t(1092) = 57.322, p < .001, and gay people, t(676) = 61.867, p < .001.

Table 1. Descriptive statistics

Note: The mean scores of anger/swearing can be interpreted as follows: ‘0.3% of all tweeted words in the county were expressions of anger’. All listed (nonstandardized) variables were standardized before the analyses.
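The one–sample t tests reported above compare the mean county–level bias score against zero (no bias). A minimal sketch of the test statistic follows; the five county scores are hypothetical and far fewer than in the actual samples, and the p value is not computed.

```python
from math import sqrt
from statistics import mean, stdev


def one_sample_t(values, mu0=0.0):
    """Return (t statistic, degrees of freedom) for H0: mean == mu0."""
    n = len(values)
    standard_error = stdev(values) / sqrt(n)
    return (mean(values) - mu0) / standard_error, n - 1


# Hypothetical unstandardized county-level bias scores.
county_scores = [0.2, 0.4, 0.3, 0.5, 0.1]
t_value, df = one_sample_t(county_scores)
print(round(t_value, 3), df)  # 4.243 4
```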

Average racial attitudes, regional variance, and online hostility

In the following section, we test how the average interracial attitudes of local residents relate to local online hostility. In the second paragraph of the section, we change the focus from average attitudes to the variability in attitudes.

Analyses including attitudes of all residents

Average anti–Black (pro–White) attitudes (averaged across all local citizens) were associated with decreased swearing (β = −0.145, 95% confidence interval (CI) [−0.199, −0.091], p < .001). This effect was rendered nonsignificant when controlling for local proportions of Black and White people (β = −0.048, 95% CI [−0.113, 0.017], p = .149). For anger, the effect was also negative and nonsignificant (β = −0.027, 95% CI [−0.082, 0.029], p = .344). Thus, there is no strong evidence that counties with different levels of average anti–Black bias show different levels of online hostility.

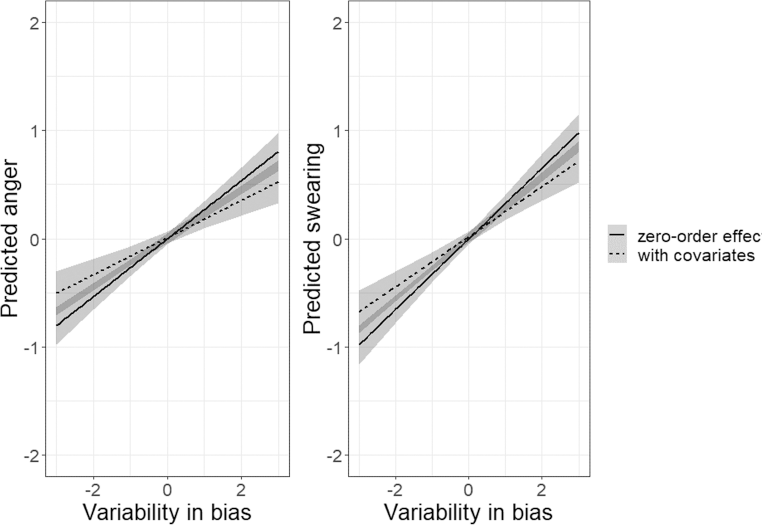

In contrast to county–level means, county–level variability in bias was positively associated with anger (β = 0.268, 95% CI [0.212, 0.323], p < .001) and swearing (β = 0.327, 95% CI [0.269, 0.384], p < .001), even after controlling for average regional attitudes, race proportions, and ideological proportions (the proportions of Trump and Clinton votes in the 2016 election, β anger = 0.171, 95% CI [0.106, 0.236], p < .001; β swearing = 0.231, 95% CI [0.168, 0.294], p < .001). Introducing racial and political control variables decreased the size of the bias variability slopes by an average of 32.8% (see Figure 3). In the full model, the relative number of Clinton voters (over Trump voters) was associated with less online hostility (β anger = −0.201, 95% CI [−0.271, −0.131], p < .001; β swearing = −0.171, 95% CI [−0.237, −0.105], p < .001) while racial diversity was associated with more online hostility (β anger = 0.710, 95% CI [0.460, 0.960], p < .001; β swearing = 0.854, 95% CI [0.616, 1.092], p < .001).

Figure 3. The association between bias variability and regional hostility. Local levels of hostility were positively associated with intraregional variability in bias. Covariates are average bias, and local racial and ideological proportions.
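The text does not spell out how the percentage decrease in slopes was computed. One reading that reproduces the reported 32.8% from the rounded coefficients is the mean of the relative reductions for anger and swearing, sketched below. The function names are hypothetical, and the calculation is an inference from the published numbers rather than a documented procedure.

```python
def relative_drop(before, after):
    """Share of the original coefficient that disappears after adjustment."""
    return (before - after) / before

def mean_percent_drop(pairs):
    """Average relative reduction across outcomes, in percent."""
    drops = [relative_drop(before, after) for before, after in pairs]
    return 100 * sum(drops) / len(drops)

# (unadjusted, adjusted) slopes of bias variability as reported in the text
variability_slopes = [
    (0.268, 0.171),  # anger
    (0.327, 0.231),  # swearing
]
drop = mean_percent_drop(variability_slopes)
```

The same function can be applied to the other coefficient pairs in this section. Results may differ from the reported percentages in the last digit because the published coefficients are rounded.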

Separate analyses for White and Black attitude holders

In this section, we repeat the first set of analyses, but instead of computing the mean and standard deviation of attitudes of all residents, we compute them separately for White and Black residents. This allows us to test whether average attitudes and variability in attitudes show the same relationship with hostility across racial groups. When restricting attitude measurements to White residents, average anti–Black bias positively predicted hostility (β anger = 0.228, 95% CI [0.174, 0.283], p < .001; β swearing = 0.201, 95% CI [0.146, 0.257], p < .001). Conversely, when restricting attitude measurements to Black residents, pro–Black (anti–White) bias marginally predicted hostility, with the absolute effect size being much smaller than for White residents and not significant for the anger measure (β anger = −0.055, 95% CI [−0.111, 0.001], p = .054; β swearing = −0.063, 95% CI [−0.117, −0.009], p = .023). In other words, hostility levels were high in counties where White residents had strong anti–Black biases and where Black residents had strong anti–White biases. Simultaneously introducing local proportions of racial and ideological groups into the models renders these effects nonsignificant for White residents (β anger = 0.060, 95% CI [−0.024, 0.143], p = .162; β swearing = 0.020, 95% CI [−0.061, 0.102], p = .620) and Black residents (β anger = −0.038, 95% CI [−0.097, 0.021], p = .209; β swearing = −0.045, 95% CI [−0.100, 0.010], p = .106).

County–level variability in attitudes among White residents emerged as a positive predictor of online hostility (β anger = 0.221, 95% CI [0.167, 0.275], p < .001; β swearing = 0.210, 95% CI [0.162, 0.271], p < .001). Thus, diversity in interracial attitudes among White residents is also associated with online hostility. Again, these effects are substantially decreased when introducing local proportions of racial and ideological groups into the model. For White residents, the decrease in effect size was 45.3% (β anger = 0.104, 95% CI [0.026, 0.183], p = .009; β swearing = 0.131, 95% CI [0.055, 0.206], p < .001). County–level variability in attitudes among Black residents was not significantly associated with online hostility (β anger = −0.008, 95% CI [−0.064, 0.047], p = .774; β swearing = −0.007, 95% CI [−0.061, 0.047], p = .805).

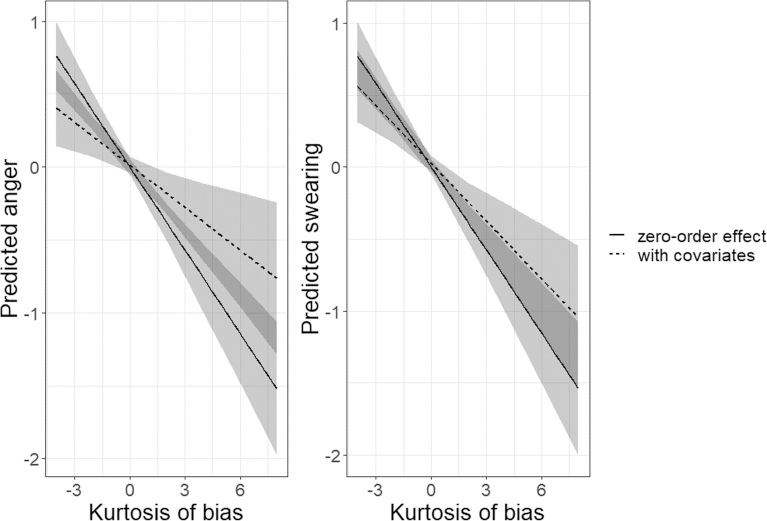

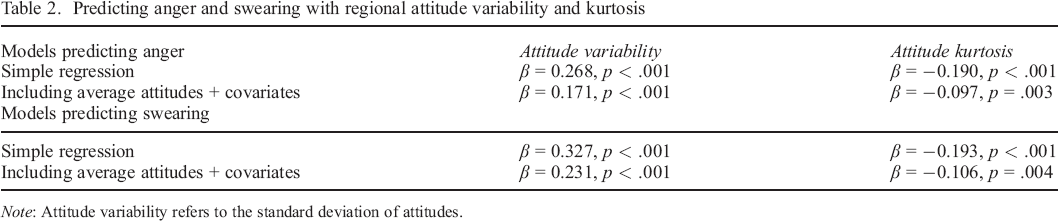

Analyses using kurtosis as a measure of regional polarization

In our previous analyses, we estimated attitudinal variability within each region using the standard deviation of regional intergroup attitudes. As a higher standard deviation implies a larger dispersion of attitudes, this operationalization is in line with our reasoning. However, the relationship between regional standard deviations and attitudinal polarization is merely indirect. High polarization means that many cases score at the extremes of the distribution. Clearly, this phenomenon can contribute to high standard deviations, but a more direct measure of polarization is a variable's kurtosis. The (Pearson) kurtosis does not, as often assumed, quantify the peakedness of a distribution, but tail extremity (i.e. the propensity of extreme values on either side of the distribution; Westfall, 2014). Smaller kurtosis values indicate saturated tails with relatively many values close to the poles, which makes kurtosis a good (negative) measure of polarization. Using a sample's kurtosis to assess polarization of intergroup attitudes is supported by the work of DiMaggio, Evans, and Bryson (1996), who argued that kurtosis is likely better suited than standard deviation to assess polarization. The following two subsections replicate all previous effects of attitude variability, but operationalize the construct as attitude kurtosis rather than standard deviation of attitudes.
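The distinction between standard deviation and kurtosis can be illustrated with two hypothetical attitude distributions that share the same standard deviation but differ sharply in kurtosis. In this sketch, a polarized sample is split evenly between two poles, whereas a consensus sample sits mostly at the midpoint with a few far outliers. All values are invented for illustration.

```python
import math

def population_sd(values):
    """Standard deviation using the population (divide-by-n) formula."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))

def pearson_kurtosis(values):
    """Fourth central moment divided by the squared variance (normal = 3)."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m4 / m2 ** 2

# Polarized region: half of the residents at each pole.
polarized = [-1.0] * 50 + [1.0] * 50

# Consensus region: most residents at the midpoint, a few far outliers.
outlier = math.sqrt(5)
consensus_with_outliers = [0.0] * 80 + [-outlier] * 10 + [outlier] * 10

sd_polarized = population_sd(polarized)
sd_consensus = population_sd(consensus_with_outliers)
kurt_polarized = pearson_kurtosis(polarized)
kurt_consensus = pearson_kurtosis(consensus_with_outliers)
```

Both samples have a standard deviation of 1, yet the polarized sample has the lower kurtosis. This is the sense in which kurtosis can serve as a negative indicator of polarization.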

Analyses including attitudes of all residents

When replacing attitude variability in the analyses above with bias kurtosis, all full–sample effects of kurtosis, regardless of hostility measure or covariates, are statistically significant (all βs ≤ −0.097, all ps ≤ .003; see Figure 4). 3

When loosening the sample restriction to 1280 counties and controlling for average bias, regional racial opposition, and regional ideological opposition, attitude kurtosis is not significantly associated with swearing (β = −0.057, p = .058).

Figure 4. The association between bias kurtosis and regional hostility. The kurtosis of attitudes, serving as a reversed measure of polarization, was negatively associated with hostility. Thus, polarization again predicted hostility. Covariates are average bias, and racial and ideological proportions.

Regions with higher levels of kurtosis (indicating fewer extreme scores) had less online hostility compared with regions with lower levels of kurtosis (indicating more extreme scores and greater regional polarization). The inclusion of local proportions of racial and political groups as covariates decreased the initial effect size by 46.9%. As attitudinal polarization can be expected to relate closely to both standard deviation and kurtosis of opinions (the measures are correlated r = −.535), it is not surprising that the results from earlier replicate to such a large degree (see Table 2 for a side–by–side view).

Table 2. Predicting anger and swearing with regional attitude variability and kurtosis

Note: Attitude variability refers to the standard deviation of attitudes.

Separate analyses for White and Black attitude holders

When restricting the sample of attitude holders to White locals, the attitude kurtosis again predicted hostility (β anger = −0.167, 95% CI [−0.222, −0.112], p < .001; β swearing = −0.155, 95% CI [−0.210, −0.099], p < .001). When simultaneously controlling for the above–mentioned covariates, the kurtosis of attitudes no longer predicted verbal hostility (β anger = −0.033, 95% CI [−0.105, 0.039], p = .368; β swearing = −0.050, 95% CI [−0.120, 0.019], p = .156; drop in effect size 74%). For Black residents, we also found significant effects of attitude kurtosis on online hostility (β anger = −0.082, 95% CI [−0.138, −0.027], p = .004; β swearing = −0.068, 95% CI [−0.122, −0.014], p = .014). 4 Again, when simultaneously controlling for the above–mentioned covariates, the kurtosis of attitudes no longer predicted verbal hostility (β anger = −0.050, 95% CI [−0.106, 0.006], p = .080; β swearing = −0.030, 95% CI [−0.082, 0.022], p = .255; drop in effect size 47.5%). This pattern of results for the full, White, and Black sample is consistent with the analyses above, with the exception that local proportions of racial and ideological groups account for all the variance explained by attitude kurtosis among White residents.

These effects are not significant when loosening sample restrictions to 1280 counties (β anger = 0.023, p = .375; β swearing = −0.032, p = .208).

Relative attitudes towards gay people and online hostility

In this last section, we used the above approach to examine the association between relative attitudes towards gay people and online hostility. This allowed us to investigate whether intergroup attitudes towards other minorities, and the local distribution of these attitudes, also predicted hostility on social media.

The average anti–gay attitude (i.e. averaged across all local citizens) positively predicted hostility (β anger = 0.320, 95% CI [0.252, 0.388], p < .001; β swearing = 0.321, 95% CI [0.251, 0.391], p < .001). When introducing attitude variability, ideological proportions, and racial proportions as covariates, the effect of average attitudes on anger was no longer significant (β anger = 0.100, 95% CI [−0.012, 0.205], p = .081; β swearing = 0.121, 95% CI [0.016, 0.227], p = .025). 5 Variability in relative attitudes towards gay people also positively predicted regional levels of hostility (β anger = 0.307, 95% CI [0.237, 0.376], p < .001; β swearing = 0.287, 95% CI [0.215, 0.358], p < .001). When entering the covariate set into the model, the effect of attitude variability on swearing became nonsignificant (β anger = 0.134, 95% CI [0.037, 0.231], p = .007; β swearing = 0.061, 95% CI [−0.033, 0.156], p = .204; drop in effect size 67.6%). When replacing the standard deviation of attitudes with its kurtosis, the regional attitude kurtosis negatively predicted online hostility (β anger = −0.237, 95% CI [−0.306, −0.167], p < .001; β swearing = −0.225, 95% CI [−0.297, −0.153], p < .001). When entering regional divides as covariates into the model, the effect of attitude kurtosis decreased by 60.1% (β anger = −0.098, 95% CI [−0.178, −0.019], p = .016; β swearing = −0.086, 95% CI [−0.164, −0.009], p = .029). 6 We refrained from estimating the effect of relative attitudes towards gay people held by people with specific sexual orientations, as county–level effects become increasingly unstable with lower numbers of attitude scores. However, the data in the Supporting Information allow for such analyses. For a full list of all stepwise inferential tests, please see code/results in the folder ‘results presented in the main text’.

The effect on swearing was also no longer significant when applying either looser (β = 0.088, p = .071) or tighter (β = 0.103, p = .104) sample restrictions.

In fact, the effects became nonsignificant when applying either looser (β anger = −0.071, p = .065; β swearing = −0.046, p = .209) or tighter (β anger = −0.075, p = .118; β swearing = −0.078, p = .095) sample restrictions.

Discussion

We set out to test the relationship between regional biased attitudes towards minority groups and regional levels of verbal hostility on Twitter. The present analysis shows multiple connections between both phenomena, supporting past research on the broad spectrum of negative correlates of regional attitudinal bias. First, we found that average levels of anti–Black bias were not reliably associated with online hostility (i.e. the significance and magnitude of the relationship depended on the operationalization of hostility and the covariate set). However, these results differed (and became clearer) when we examined the separate attitudes of White versus Black county residents. Average levels of anti–Black bias among White residents were positively associated with online hostility, whereas the opposite relationship was observed when viewing the attitudes of Black residents. In other words, we observed greater hostility in counties where White residents held stronger pro–White biases and in counties where Black residents held stronger pro–Black biases. Thus, anti–outgroup attitudes were positively associated with hostility, although not beyond the underlying effects of local racial and ideological group proportions.

Critically, attitudinal variability was more strongly associated with online hostility than regional attitude averages. Overall, regions with dispersed racial attitudes were more hostile compared with regions with less attitudinal variability. This effect was present in the total sample, as well as in the analyses restricted to White residents (whereas the effect was less stable among Black residents). Further, the introduction of regional divides between racial and voter groups subtracts from the effect of attitude variability. In other words, controlling for the presence of conflicting ideology or demographic groups reduces the relationship between attitudinal variability and hostility, sometimes to a point where attitude variability is no longer a significant predictor. This pattern of results suggests that the observed effects might be primarily because of tensions between racial and ideological groups, whose ingroup identities are closely attached to interracial relations (Leach & Allen, 2017; Perry & Whitehead, 2015).

When conducting similar analyses using relative attitudes towards gay people, the main effect of average anti–gay bias on regional hostility was significant, but did not remain significant when controlling for regional divides (as the relative presence of Trump supporters appears to be closely aligned with regional attitudes towards gay people). Results also differed regarding the effect of attitude variability. It was distinctly weaker than in the analyses of interracial attitudes, and could almost always be fully accounted for by controlling for ideological divides and average attitudes towards gay people. While it is true that attitude variability measures were less reliable and effects were less stable across sensitivity analyses compared with the analyses of interracial attitudes, we assume that findings might just not be generalizable across minority groups. While discrimination of either kind remains a divisive issue, racism might be a more frequent cause of hostility given the larger size and visibility of racial minority groups, and the USA's regionally specific history with slavery. Thus, racial attitudes might relate to regional online conflict relatively more often than homophobia or simply contribute more to regional stress and tacit intergroup tension.

Intergroup conflict and online hostility

Computational and social identity research has described relationships between ideological opposition, group conflict, and aggressive behaviour on social media (Bail et al., 2018; Cicchirillo, Hmielowski, & Hutchens, 2015; Kwon & Cho, 2017; Kwon & Gruzd, 2017; Ott, 2017; Postmes, Spears, Sakhel, & De Groot, 2001; Spears & Postmes, 2015). We find evidence extending this research line by showing that regions with relatively variable interracial biases, as largely captured in local proportions of racial and political groups, are characterized by more hostility on social media compared to other regions.

Scholars have argued that the USA is experiencing an increase in ideological polarization (Twenge, Honeycutt, Prislin, & Sherman, 2016) and that treatment of minorities poses one of the most divisive topics today (Schaffner, MacWilliams, & Nteta, 2018). This phenomenon occasionally makes for an explosive mix when combined with the often–discussed online disinhibition effect, which describes people expressing more anger and hatred online than they would in person (e.g. Suler, 2004). Crockett (2017, p. 771) stated that ‘Polarization in the US is accelerating at an alarming pace, with widespread and growing declines in trust and social capital. If digital media accelerates this process further still, we ignore it at our peril’. While the current research does not address whether this dynamic is in fact cascading over time, we revealed that ideological divisions and social media hostility are associated across geographical regions.

We want to highlight that while we find correlational evidence for a connection between attitude variability and online hostility, the nonexperimental data and the temporal overlap of variable measurements prevent identification of causal mechanisms. Multiple phenomena are likely responsible for the correlation between regional attitude variability and social media hostility. An obvious candidate explanation is that people disagree with other users holding different attitudes, for instance, by expressing outrage and insulting each other, which should be more likely to occur in areas where anti–Black sentiment clashes with egalitarian or anti–White sentiment. Another explanation is that the social uncertainty resulting from divided neighbourhoods is expressed through negative affect and venting online. Both directions from prejudice to online hostility (Bliuc, Faulkner, Jakubowicz, & McGarty, 2018) and from online hostility to prejudice (e.g. through desensitization; Soral, Bilewicz, & Winiewski, 2018) have been suggested in previous psychological research and both are in line with the observed effects as well as the decrease in effect sizes when introducing regional divides. Lastly, it is possible that the presence of certain extremist groups contributes to both the variability in bias and habitual anger/swearing online. Aggressive online activity of such extremist groups is, in turn, likely to spark similarly emotional backlash by opponents.

While the preceding mechanisms have a common root, opposition sparking hostility, there is another intriguing explanation for the statistical association. Perpetrators (and victims; Kaakinen, Keipi, Oksanen, & Räsänen, 2018) of online hate tend to have certain dispositions (Kurek, Jose, & Stuart, 2019; McCreery & Krach, 2018), which can be clustered across geographical areas (for psychological traits of US regions, see Rentfrow et al., 2013). It is reasonable to assume that a region's dispositional hostility can foster polarization and cross–group rejection. Imagine intergroup relations in an area where people are prone to swear and curse at each other. This is to say that the causal directionality of ideological variability and hostility might well be reversed. We estimate that a bidirectional relationship is most likely, with polarization and online hostility mutually reinforcing each other as an explosive mix. Crockett (2017, p. 771) might have forecasted this finding by asking ‘If moral outrage is a fire, is the internet like gasoline?’

Limitations

In the current work, we could only interpret the hostility measure as an all–inclusive county–level characteristic. While social biases were suggested to heighten hostility for both perpetrator and victim (Borders & Hennebry, 2015; Weber, Lavine, Huddy, & Federico, 2014), it would be interesting to examine who was hostile towards whom. While it is possible to employ predictive methods to estimate demographic information from individual Twitter profiles (Kteily, Rocklage, McClanahan, & Ho, 2019), the linguistic dataset we worked with only includes text aggregated over many anonymous users, thereby preventing such methods. Other shortcomings are related to the spatial nature of the utilized datasets. For instance, the well–known modifiable areal unit problem (Manley, 2014) applies to the current work. This problem suggests that aggregated measures can be biased by both the shape and the scale of the unit of analysis (counties). Our focus on county–level differences was based on convention, rather than theoretical justification. Future research should consider whether the present results would replicate on a city or even neighbourhood level. More fine–grained analyses would allow a more detailed examination of local intergroup relations and online hostility. Relatedly, despite the large amounts of data available through Project Implicit and the Twitter publication, the geographical coverage is far from complete, as indicated in Figure 2. This has been an issue throughout all past spatial analyses of the datasets, and future efforts to fill blind spots through targeted data collection or value imputation would be highly beneficial.
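The modifiable areal unit problem can be made tangible with a toy example. In the following sketch, eight hypothetical small-area units are aggregated into four zones in two different ways, and the correlation between two invented variables changes with the zoning. The data, zonings, and variable names are assumptions made purely for illustration and are unrelated to the datasets analysed here.

```python
def pearson_r(x, y):
    """Pearson correlation of two equally long lists."""
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5

def aggregate(values, zoning):
    """Average the values of the units that fall into each zone."""
    return [sum(values[i] for i in zone) / len(zone) for zone in zoning]

# Hypothetical unit-level scores (e.g. neighbourhoods within a state).
bias = [1, 2, 3, 4, 5, 6, 7, 8]
hostility = [2, 1, 4, 3, 6, 5, 8, 7]

# Two alternative ways of drawing four zones around the same eight units.
zoning_a = [(0, 1), (2, 3), (4, 5), (6, 7)]
zoning_b = [(0, 2), (1, 3), (4, 6), (5, 7)]

r_units = pearson_r(bias, hostility)
r_zoning_a = pearson_r(aggregate(bias, zoning_a), aggregate(hostility, zoning_a))
r_zoning_b = pearson_r(aggregate(bias, zoning_b), aggregate(hostility, zoning_b))
```

With these numbers, the first zoning yields a perfect correlation, while the second falls slightly below the unit-level correlation. The underlying units are identical in all three cases.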

Conclusion

We find that a wide intraregional variation in relative attitudes towards minorities is associated with hostility on social media. This pattern is seemingly stronger when examining attitudinal bias towards racial, rather than sexual, minorities. The effect of intraregional variability runs parallel to regional divides between racial groups and political groups, and controlling for the local proportions of these groups reduces the association between intraregional variability and online hostility. Together, the results suggest that ideological polarization is accompanied by local unrest and aggression on social media. Further research is needed to pinpoint the dynamic processes that give rise to this association.

Supporting Information

Supporting Information, per2301-sup-0001 - Interregional and intraregional variability of intergroup attitudes predict online hostility

Data S1. Supporting Information

Supporting Information, per2301-sup-0001 for Interregional and intraregional variability of intergroup attitudes predict online hostility by HANNES ROSENBUSCH, ANTHONY M. EVANS and MARCEL ZEELENBERG, in European Journal of Personality


Supporting Information, per2301-sup-0002 - Interregional and intraregional variability of intergroup attitudes predict online hostility

Supporting info item

Supporting Information, per2301-sup-0002 for Interregional and intraregional variability of intergroup attitudes predict online hostility by HANNES ROSENBUSCH, ANTHONY M. EVANS and MARCEL ZEELENBERG, in European Journal of Personality



Additional supporting information may be found online in the Supporting Information section at the end of the article.