Abstract

The purpose of this study is to prove the convergence of the simultaneous estimation of optical flow and object state (SEOS) method. SEOS uses dynamic object parameter information when calculating optical flow to track a moving object in a video stream. Optical flow estimation in SEOS requires the minimization of an error function containing the object's physical parameter data. When this function is discretized, the Euler-Lagrange equations form a system of linear equations. The system is arranged so that its property matrix is symmetric positive definite, which proves the convergence of the Gauss-Seidel iterative method. The system of linear equations produced by SEOS can alternatively be solved by Jacobi iterative schemes, but symmetric positive definiteness is not sufficient for Jacobi convergence. The convergence of a block diagonal Jacobi scheme for SEOS is therefore proved by analysing the Euclidean norm of the Jacobi matrix. We also investigate the use of SEOS for tracking individual objects within a video sequence. The illustrations provided show the effectiveness of SEOS for localizing objects within a video sequence and generating optical flow results.

Introduction

Computer vision-based motion analysis has been the subject of numerous research activities [1–15] for several decades. Optical flow-based and feature-based techniques are the two most popular approaches to estimating motion parameters in a video stream. Feature-based approaches rely on extracting features of the moving object and tracing correspondence points to capture the motion, whereas in the optical flow approach, object motion is represented as sampled velocity fields in the image plane. In this study, we consider an optical flow-based approach to determine the object's motion relative to the camera frame of reference.

Both optical flow- and feature-based techniques have been used with dynamic modelling of the objects involved for the purpose of parameter estimation [16]. Broida et al. [17] modelled the object's motion by retaining an arbitrary number of terms in the appropriate Taylor series, while the neglected terms of the series were modelled as process noise. Recursive estimation can then be performed with an iterated extended Kalman filter (IEKF). Roach and Aggarwal [18] introduced a system of nonlinear equations that relate the position of the object to the camera position, with solutions being generated by numerical techniques. That work also draws attention (as does [19]) to the fact that most existing techniques perform poorly when the images (coordinates of matched points) are noisy. More recently, Blostein et al. [20] demonstrated the feasibility and significance of an optical flow-based approach over a feature-based approach while applying an extended Kalman filter to an object motion model with a constant velocity.

Optical flow is generally determined by applying the brightness constancy constraint equation (BCCE), which makes use of spatio-temporal derivatives of the image intensity [1, 2]. Determining optical flow from the BCCE alone is an ill-posed problem: when a straight moving edge is viewed through a narrow aperture, the only motion component that can be determined is the one perpendicular to that edge [21]. Methods utilizing the BCCE are known as differential techniques, and they can be classified as either local or global. Several such methods overcome the ill-posedness, two of which are the Lucas-Kanade [1] and Horn-Schunck [2] techniques. Local techniques involve the optimization of a local energy functional, as in the Lucas-Kanade method [1] or the frequency-based minimization methods [22, 23]. Global techniques determine optical flow through the minimization of a global energy functional, as in the Horn-Schunck method [2] and numerous discontinuity-preserving approaches [24–30]. Differential techniques are widely used due to their high level of performance [31]. Local methods offer robustness to noise, but lack the ability to produce dense optical flow fields. Global techniques provide 100 percent dense flow fields, but exhibit much greater sensitivity to noise [31, 32].
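For reference, the constraint discussed above can be written out in its standard textbook form. The notation here is an assumption made for illustration and may differ from the symbols used later in this paper: I(x, y, t) is the image intensity, subscripts denote partial derivatives, (u, v) is the flow vector, and α is the Horn-Schunck smoothness weight.

```latex
% Brightness constancy: intensity I(x, y, t) is preserved along a motion trajectory
\frac{dI}{dt} = 0
\;\;\Longrightarrow\;\;
I_x u + I_y v + I_t = 0 ,
\qquad u = \frac{dx}{dt},\; v = \frac{dy}{dt}

% Aperture problem: one equation, two unknowns; only the flow component
% along the image gradient (perpendicular to the edge) is determined
v_{\perp} = -\frac{I_t}{\sqrt{I_x^{2} + I_y^{2}}}

% Horn-Schunck global energy: BCCE residual plus a smoothness term weighted by \alpha
E(u, v) = \iint \left[ (I_x u + I_y v + I_t)^2
        + \alpha^2 \left( \lVert \nabla u \rVert^2 + \lVert \nabla v \rVert^2 \right) \right] dx\, dy
```

The single scalar constraint on two unknowns is what makes the problem ill-posed. Local methods add equations from a neighbourhood, while global methods add a regularizing term such as the smoothness penalty.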

Motion parameter estimation and optical flow calculation are generally considered to be separate problems. In this paper, we focus on a unified approach that couples image-object localization with the 3D parameter estimation of a moving target. This unified approach is known as the simultaneous estimation of optical flow and object state (SEOS) [9, 16]. SEOS provides a more refined and robust approach by using the nonlinear relationship between the motion parameters of the object and its projected image in the video sequence to simultaneously estimate the optical flow and the motion parameters of the object in question. Uncertainty exists in vision-based systems with respect to the initial conditions and also in image position localization [19]. To deal with this issue, a robust extended Kalman filtering (REKF) technique is implemented [33, 34]. This robust version of the extended Kalman filter takes into account large uncertainties and errors in the measurements and the system model. The performance attainable with SEOS in target localization and tracking applications is investigated for real image sequences, in which SEOS is used to track specific objects in footage generated from a stationary mounted camera. An optical flow equation-based segmentation technique is used to locate the object of interest within the video stream. The resulting optical flow is compared and contrasted with that of the well-established Horn-Schunck method [2].

This paper is organized as follows. In Section 2, motion models and measurement equations for the simultaneous estimation problem are formulated. In Section 3, we outline a procedure for the set-valued state estimation of a nonlinear signal model. In Section 4, the SEOS cost function is stated and iterative solution schemes (the Gauss-Seidel and Jacobi methods) are analysed for convergence. Section 5 contains optical flow and state estimation results for both the SEOS and the ‘Horn and Schunck’ techniques, and shows the performance improvements of SEOS (over ‘Horn and Schunck’) on real-image data. Section 6 contains the conclusion.

Dynamic Model and Monocular Vision Measurement

Dynamic Model

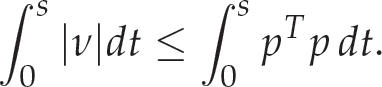

Consider a fixed camera and a mobile target (object) within the image plane of the camera, having unknown state dynamics (

where:

In (1),

Let

where

where

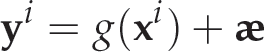

Object

Distances are measured in relation to the camera reference frame. The pixel coordinates of an image point are linked to the camera reference frame coordinates by the camera's intrinsic properties [39]. These properties represent the optical and geometric characteristics of the camera. We assume that the image coordinates have their origin at the point where the optical axis intersects the image plane. This intersection point is commonly referred to as the principal point.
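The link between camera-frame coordinates and image coordinates can be illustrated with an ideal pinhole model. The sketch below is only an assumption-laden illustration, not the paper's measurement equation: the function name `project_to_image` and the single intrinsic parameter `focal_length` are hypothetical, and lens distortion and pixel scaling are ignored. Because the image origin is taken at the principal point, no offset is added.

```python
import numpy as np


def project_to_image(point_cam, focal_length):
    """Perspective-project a 3D point (X, Y, Z), given in the camera frame,
    onto the image plane of an ideal pinhole camera.

    Assumption: image coordinates are measured from the principal point,
    so the projection carries no principal-point offset.
    """
    X, Y, Z = (float(c) for c in point_cam)
    if Z <= 0.0:
        raise ValueError("the point must lie in front of the camera (Z > 0)")
    # Similar triangles: x / f = X / Z and y / f = Y / Z
    return np.array([focal_length * X / Z, focal_length * Y / Z])


# A point on the optical axis maps to the principal point (the image origin).
on_axis = project_to_image((0.0, 0.0, 5.0), focal_length=2.0)

# Doubling the depth of an off-axis point halves its image coordinates.
near = project_to_image((1.0, 2.0, 4.0), focal_length=2.0)
far = project_to_image((1.0, 2.0, 8.0), focal_length=2.0)
```

The division by depth is the source of the nonlinearity between the object's 3D state and its image-plane measurement.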

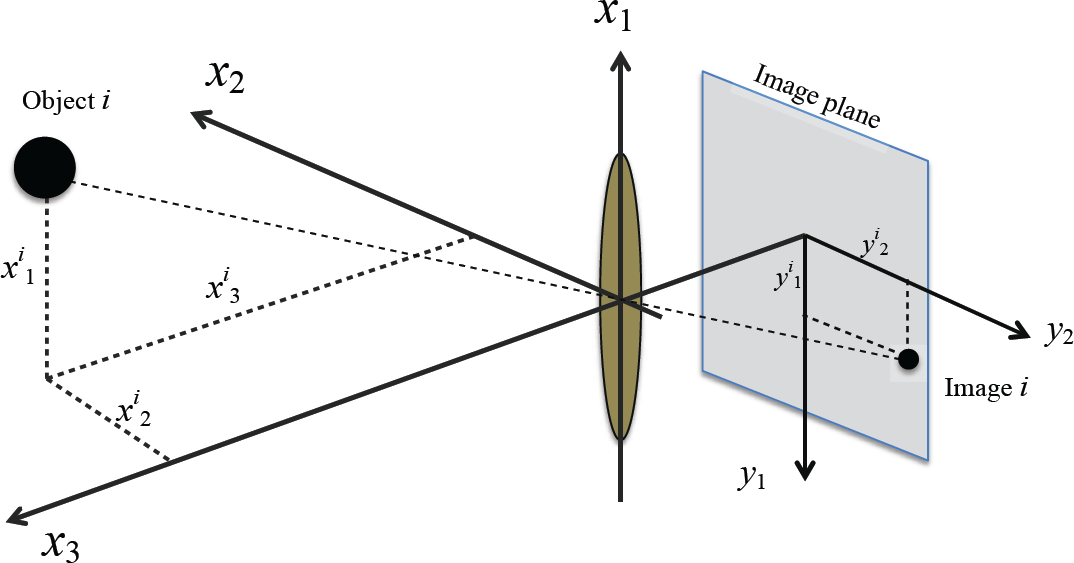

Consider a nonlinear uncertain system of the form:

defined on the finite time interval [0,

The uncertainty in the system is defined by the following

where

where || · || is the Euclidean norm with β > 0 and ϕ = Φ,

Petersen and Savkin in [34] presented a characterization of the set

where

where

An approximate formula for the set

where:

In the application of the robust extended Kalman filter (REKF) in video-based tracking, the object system is represented during the time interval by the nonlinear uncertain system in (1) and the IQC given in (7), where

where |δ| ≤ ξ and ξ is a constant indicating the upper bound of the norm-bounded portion of the noise. By choosing

Considering

it is clear that this uncertain system leads to the satisfaction of the inequality in (5) and, hence, the constraint in (7) is satisfied (see [34]). This more realistic approach removes any noise model assumptions in the algorithm development and guarantees the robustness of the solution.

The REKF seeks to increase the robustness of the state estimation process and to reduce the chance that a small deviation of the system noise from a Gaussian process has a significant negative impact on the solution. The price is a loss of optimality: the solution is only sub-optimal. To explain the connection between the REKF and the standard extended Kalman filter, consider the system (4) with:

where

see, e.g., [42]. The parameter

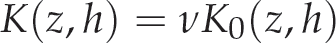

Optical flow is the apparent motion of the brightness/intensity patterns observed when there is relative motion between a camera (observer) and the objects being imaged [38, 43]. Let

where

where

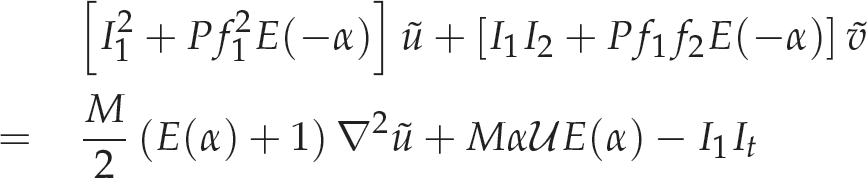

The following two conditions are obtained from the function (13) using the calculus of variations [2]:

where

for all grid point indices

and:

where

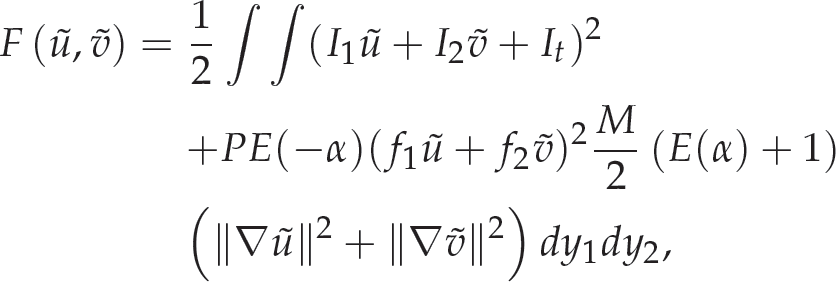

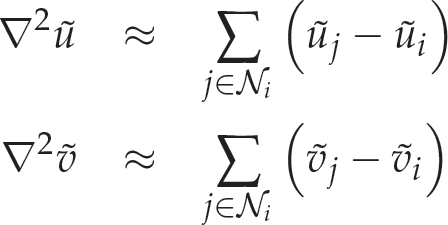

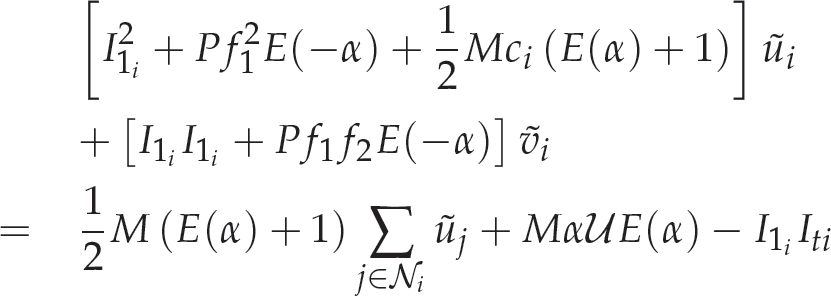

Various numerical methods have been suggested [44] to solve the large-scale system of equations represented by (16) and (17). One such iterative technique is the Gauss-Seidel method [45]. The Gauss-Seidel iterative scheme exhibits a fast rate of convergence due to its ability to use refined estimates within a particular iteration as they become available. A Gauss-Seidel iterative solution scheme for

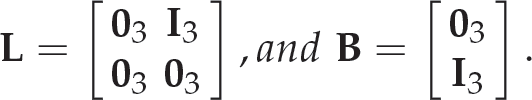

where:

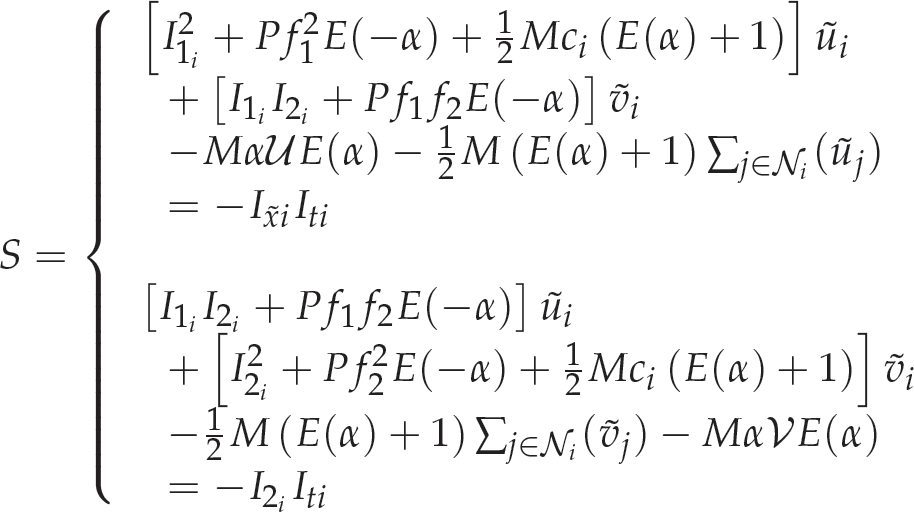
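The defining feature of the Gauss-Seidel scheme, reusing refined estimates within the same sweep, can be sketched on a generic linear system. This is a minimal pointwise illustration under stated assumptions and not the SEOS update itself: the function `gauss_seidel`, the small test system `A_demo`, `b_demo` and the stopping tolerance are all hypothetical choices made for the example.

```python
import numpy as np


def gauss_seidel(A, b, x0=None, tol=1e-10, max_iter=10_000):
    """Solve A x = b with pointwise Gauss-Seidel sweeps.

    Returns the estimate and the number of sweeps performed.
    Convergence is guaranteed when A is symmetric positive definite.
    """
    A = np.asarray(A, dtype=float)
    b = np.asarray(b, dtype=float)
    n = b.size
    x = np.zeros(n) if x0 is None else np.array(x0, dtype=float)
    for sweep in range(1, max_iter + 1):
        x_old = x.copy()
        for i in range(n):
            # x[:i] already holds values refined during this sweep;
            # x[i + 1:] still holds values from the previous sweep.
            s = A[i, :i] @ x[:i] + A[i, i + 1:] @ x[i + 1:]
            x[i] = (b[i] - s) / A[i, i]
        if np.linalg.norm(x - x_old, ord=np.inf) < tol:
            return x, sweep
    return x, max_iter


# Hypothetical symmetric positive definite test system with solution (1, 2, 3).
A_demo = np.array([[4.0, -1.0, 0.0],
                   [-1.0, 4.0, -1.0],
                   [0.0, -1.0, 4.0]])
b_demo = np.array([2.0, 4.0, 10.0])

x_zero_start, sweeps = gauss_seidel(A_demo, b_demo)
x_far_start, _ = gauss_seidel(A_demo, b_demo, x0=[100.0, -50.0, 7.0])
```

Both starting points reach the same solution, which is the behaviour the convergence analysis aims to guarantee for an arbitrary initial approximation.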

To examine the convergence of the SEOS method, the cost functions (14) and (15) are represented as a large-scale system (S) of linear equations by rearranging equations (16) and (17), and sufficient convergence criteria are then applied. We show a sufficient condition under which the Gauss-Seidel iterative scheme for SEOS converges for an arbitrary choice of initial approximation. The following system (S) of linear equations represents discrete approximations of the SEOS cost function for all grid point indices

Large scale systems are most effectively solved by iterative techniques [46]. We express the system (S) of linear equations in matrix form as

where

where

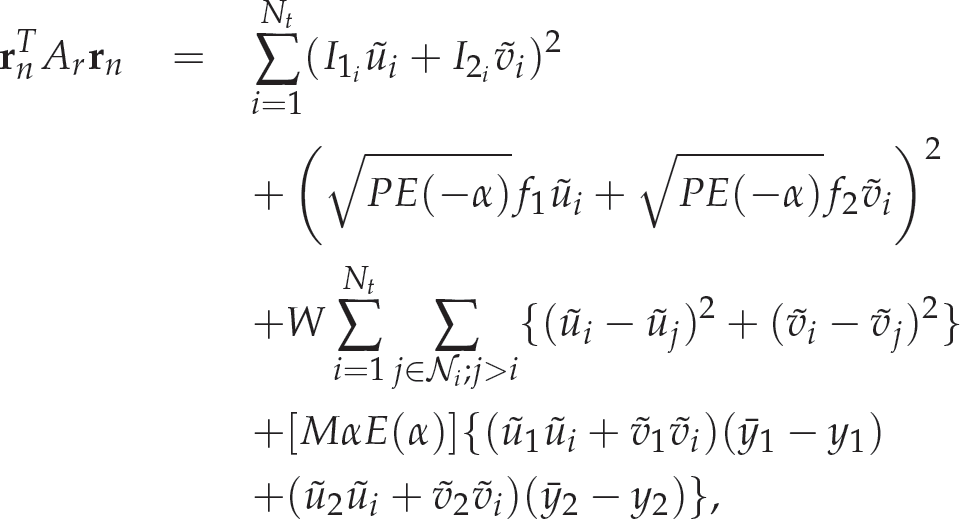

In order to verify that matrix

Provided the object is not moving in the form of

It was previously shown in section 4.1 that matrix

Equations (21) and (22) can be written in matrix form as:

where

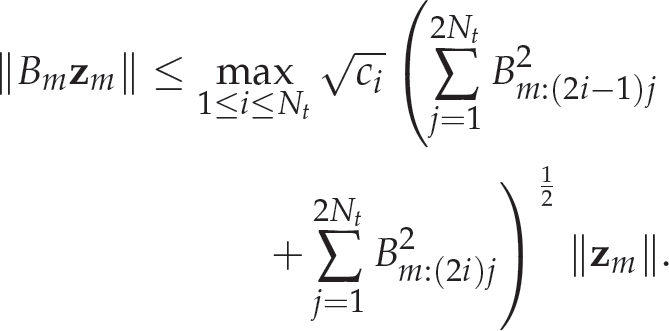
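The reason a separate argument is needed for Jacobi can be seen on a small example. The sketch below is an illustration under stated assumptions rather than the SEOS system: it uses pointwise (not block diagonal) Jacobi, and the matrices `A_spd` and `A_dd` and the helper names are hypothetical. It shows a symmetric positive definite matrix for which Jacobi diverges while Gauss-Seidel converges, and a second matrix whose Jacobi matrix has Euclidean norm below one, which is sufficient for convergence because the spectral radius never exceeds an induced norm.

```python
import numpy as np


def jacobi_iteration_matrix(A):
    """Pointwise Jacobi iteration matrix J = I - D^{-1} A, with D = diag(A)."""
    d = np.diag(A)
    return np.eye(A.shape[0]) - A / d[:, None]


def gauss_seidel_iteration_matrix(A):
    """Gauss-Seidel iteration matrix G = -(D + L)^{-1} U."""
    lower = np.tril(A)          # D + L
    upper = np.triu(A, k=1)     # strictly upper part U
    return -np.linalg.solve(lower, upper)


def spectral_radius(M):
    """Largest eigenvalue magnitude; the iteration converges iff this is < 1."""
    return float(np.max(np.abs(np.linalg.eigvals(M))))


# Hypothetical SPD matrix: unit diagonal, off-diagonal entries 0.8.
a = 0.8
A_spd = (1.0 - a) * np.eye(3) + a * np.ones((3, 3))

is_spd = bool(np.all(np.linalg.eigvalsh(A_spd) > 0.0))
rho_jacobi = spectral_radius(jacobi_iteration_matrix(A_spd))
rho_gauss_seidel = spectral_radius(gauss_seidel_iteration_matrix(A_spd))

# Hypothetical diagonally dominant matrix: the Euclidean norm of its Jacobi
# matrix is below one, which bounds the spectral radius and gives convergence.
A_dd = np.array([[4.0, -1.0, 0.0],
                 [-1.0, 4.0, -1.0],
                 [0.0, -1.0, 4.0]])
norm_jacobi_dd = float(np.linalg.norm(jacobi_iteration_matrix(A_dd), 2))

print(is_spd, rho_jacobi, rho_gauss_seidel, norm_jacobi_dd)
```

For `A_spd` the Jacobi spectral radius exceeds one even though the matrix is symmetric positive definite, so a norm bound of the kind computed for `A_dd` has to be established directly for the Jacobi matrix.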

Consider the following vector and matrix norms:

The following inequality holds for the Euclidean norm in ℝ²:

Hence:

Introducing the neighbourhood weighting factor

Therefore:

We have:

where

where

For sequences

Optical Flow Estimation

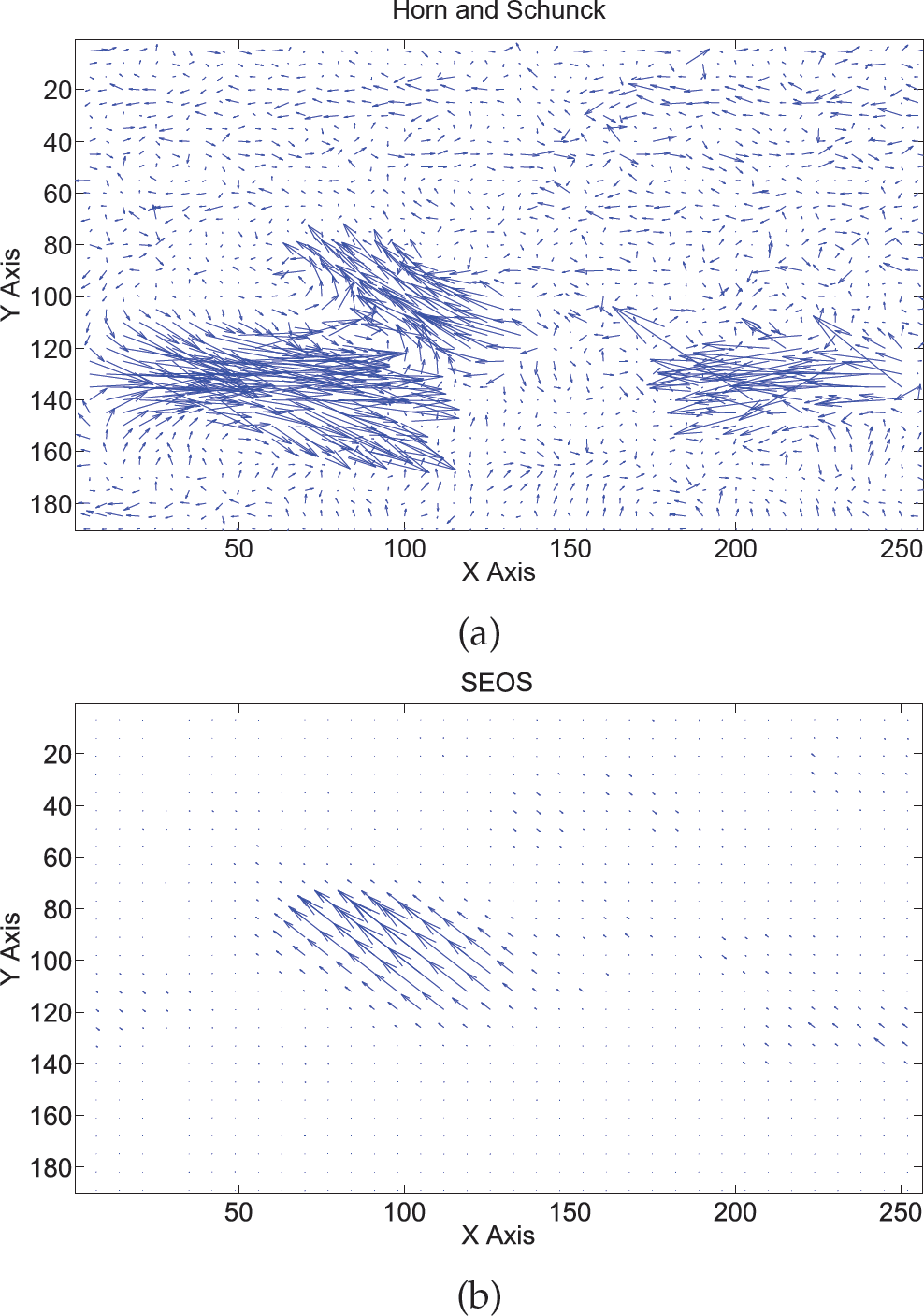

Tests were conducted on the well-known ‘Hamburg taxi sequence’; see Figure 2. The optical flow results generated by the ‘Horn and Schunck’ and SEOS algorithms can be seen in Figures 3(a) and 3(b), respectively. The main goal of the SEOS simulation was to focus on an object and track it in the image sequence while smoothing out all remaining objects. As seen in Figure 3(b), SEOS produced a dense and uniform flow for the central vehicle in the image sequence.

Figure 2. ‘Hamburg taxi sequence’ showing the central vehicle as the object to be tracked

An important feature of the SEOS method is the fact that any object within the image sequence can be individually tracked and its corresponding flow vectors plotted.

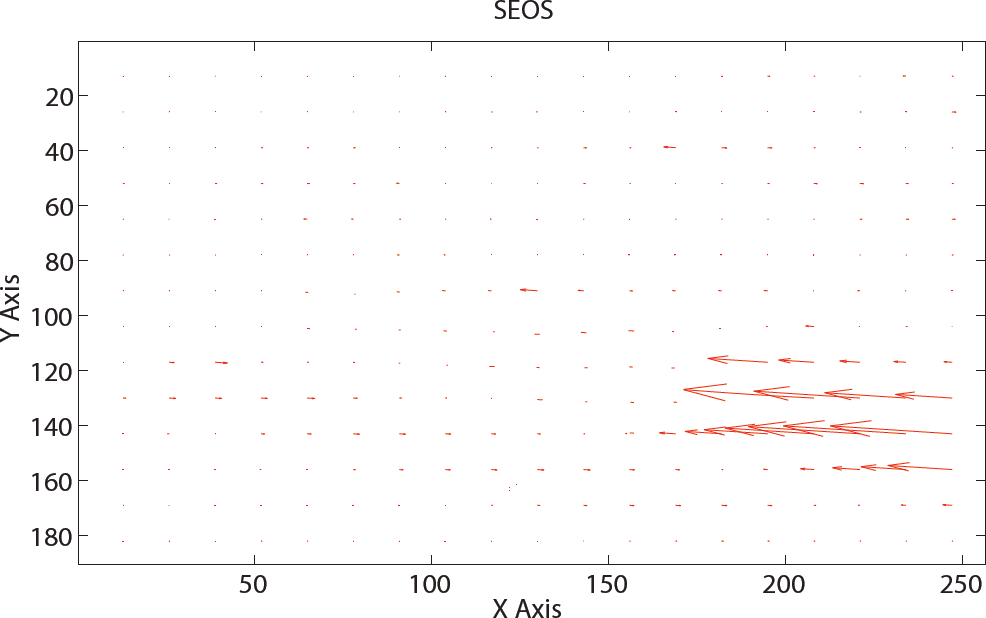

The object being tracked within the image is changed by adjusting the real-world coordinates of the object, and through projection, finding the corresponding image plane coordinates. Figures 4 and 5 illustrate that, with a change in image coordinates, the object being tracked is changed. The SEOS results (Figures 3(b) and 5) illustrate that smoothing is decreased within the object region, increasing the sharpness of the optical flow vectors. In areas outside the object, the smoothing is increased, resulting in minimal flow outside the object. With the ‘Horn and Schunck’ method (the data of Figure 3(a)), there is no uniformity in the vector directions associated with each vehicle, and there is randomness in the vector magnitudes.

SEOS produces an improvement in optical flow estimation over the ‘Horn and Schunck’ method by allowing the compensation of flow to the objects of interest, thereby aligning vector directionality. This is achieved by feeding the object state parameters into the SEOS function and assigning suitable weighting parameters. When the two methods are compared, the performance gain with SEOS is evident in the uniformity of the flow vectors over the tracked object.

Figure 3. (a) Optical flow field of the ‘Hamburg taxi sequence’ using the Horn and Schunck method. (b) ‘Hamburg taxi sequence’ using SEOS to focus in on the central vehicle.

Figure 4. ‘Hamburg taxi sequence’ showing the right moving vehicle as the object of interest

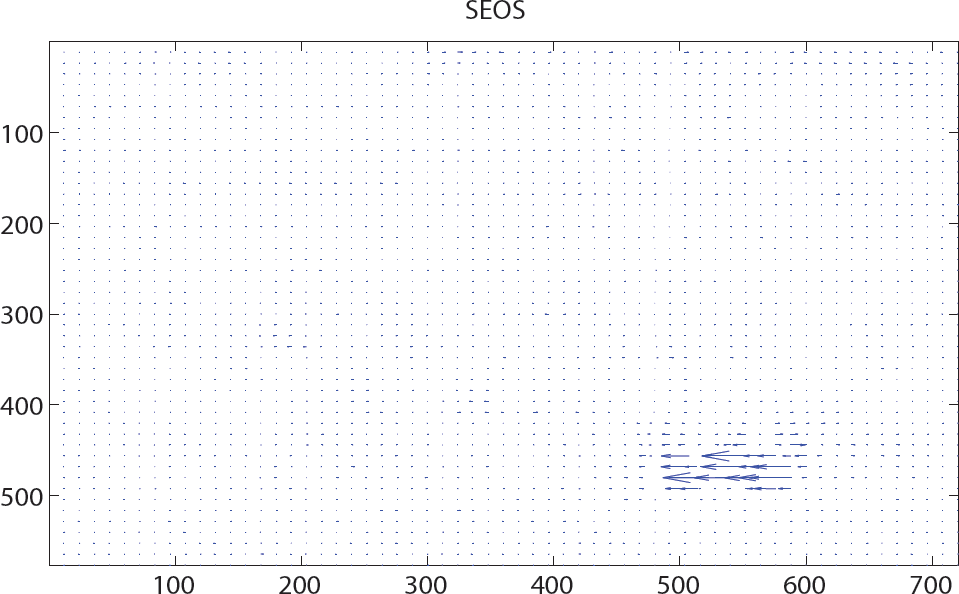

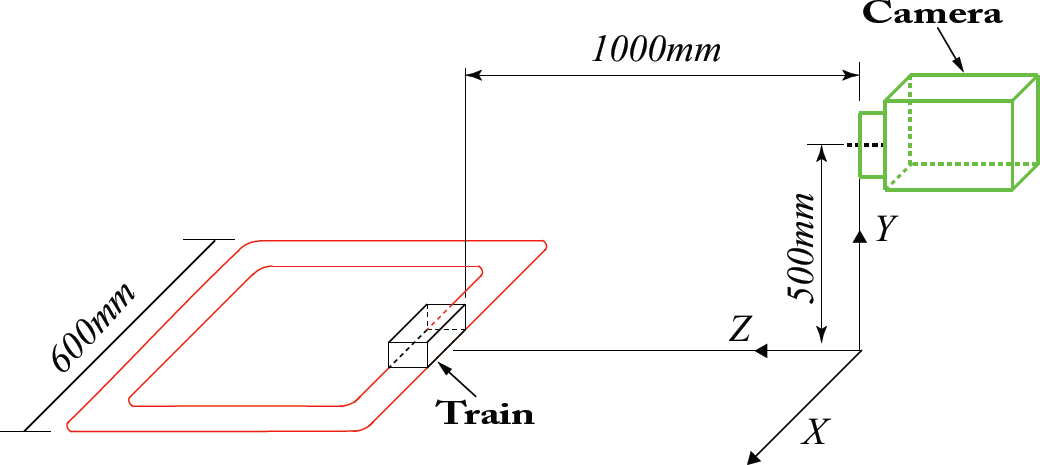

To further establish the benefits of employing SEOS for tracking, another test sequence was evaluated (see Figure 6). This video sequence consists of a toy train moving clockwise around a rectangular track. Figure 7 shows the optical flow for the train sequence estimated with the Horn and Schunck algorithm. The Horn and Schunck method has difficulty identifying the motion of the train, with optical flow vectors scattered in many different directions and with varying magnitudes. Figure 8 shows the SEOS method applied to the train sequence, where it is used to focus in on the moving train. The results show that SEOS generates a dense and uniform flow field that is a good approximation of the true 2D motion of the train. The scene around the train is not moving and, in an ideal scenario, should not exhibit optical flow. The Horn and Schunck method nevertheless generates erroneous flow in the area surrounding the train, whereas the SEOS method only calculates the optical flow in areas pertaining to the object of interest. This area of interest is a user-specified input in the form of dynamic state parameters.

Figure 5. ‘Hamburg taxi sequence’ using SEOS to focus in on the rightmost moving vehicle

Figure 6. Image of the toy train sequence

Figure 7. Optical flow of the toy train sequence estimated with the Horn and Schunck algorithm

State parameter estimation is achieved through the implementation of the REKF. Robustness is crucial in vision-based systems due to the inherent and relatively significant initial state error. The performance characteristics of the SEOS state estimation were evaluated for the toy train sequence (Figure 6). The noise in the images is assumed to be a bounded function of time and space, and hence the estimated target locations are subject to bounded functions in time. These assumptions are in line with the REKF assumptions presented in Section 3. As we are using monocular vision, we use only linear motion to demonstrate the underlying concept of simultaneous estimation. Nonlinear motion for trajectory tracking is considered (without simultaneous localization) in our stereo-vision papers [7, 8].

Figure 8. Optical flow of the toy train sequence estimated with the SEOS algorithm

Experimental schematic for state estimation of the toy train sequence

The simulation parameters used are given in Table 1. The train is assumed to have a constant velocity of 170

Table 1. Simulation parameters

Motion of the object/train in 3D

Error in magnitude estimation of [

Conclusion

In this paper, we examined the convergence of the SEOS optical flow method. The system of linear equations associated with the SEOS technique was ordered such that its property matrix was symmetric positive definite. This property is a sufficient condition for the convergence of the Gauss-Seidel pointwise and blockwise solutions. A block diagonal Jacobi iterative scheme was devised for SEOS and its convergence was proved. We also examined the effect of applying SEOS to a real-image sequence and tracking various objects within the ‘Hamburg taxi sequence’. Our results show a uniform directional flow field for the tracked vehicle, with all objects other than the vehicle of interest smoothed out. This object isolation feature of the SEOS technique could prove invaluable in numerous tracking applications. The effectiveness of using SEOS for 3D state parameter estimation was evaluated, with the state parameters estimated by means of an REKF. The performance benefits of SEOS over the Horn and Schunck method were exhibited for the toy train sequence in the form of reductions in state estimation error. The Horn and Schunck method is not capable of distinguishing one object from another, and as such lacks accuracy in the estimation of state parameters.

Acknowledgements

This article is a revised and expanded version of the paper entitled “Object focused simultaneous estimation of optical flow and state dynamics” by N.J. Bauer and P.N. Pathirana, presented at the International Conference of Intelligent Sensors, Sensor Networks and Information Processing, Melbourne, Australia in 2008.