Abstract

This paper presents our work of integration during the last years within the context of sensor-based robot navigation systems. In our motion system, as in many others, there are functionalities involved such as modeling, planning or motion control, which have to be integrated within an architecture. This paper addresses this problematic. Furthermore, we also discuss the lessons learned while: (i) designing, testing and validating techniques that implement the functionalities of the navigation system, (ii) building the architecture of integration, and (iii) using the system on several robots equipped with different sensors in different laboratories.

Introduction

Robots are being developed that operate under a wide variety of conditions including unknown, unstructured and dynamic scenarios. Mobility in such scenarios is a key issue to increase the degree of autonomy of a robotic system since it is the basis to incorporate more subsystems and functionalities. Thus, the performance of the motion system strongly affects the task carried out by the vehicle. The capabilities required for the navigation of an autonomous robot are tied up with the specific application and vehicle. For example, the a priori knowledge, the sensor information, the motion constraints of the vehicle or the computational power. This usually leads to the development of specific navigation systems to accommodate the requirements of each application.

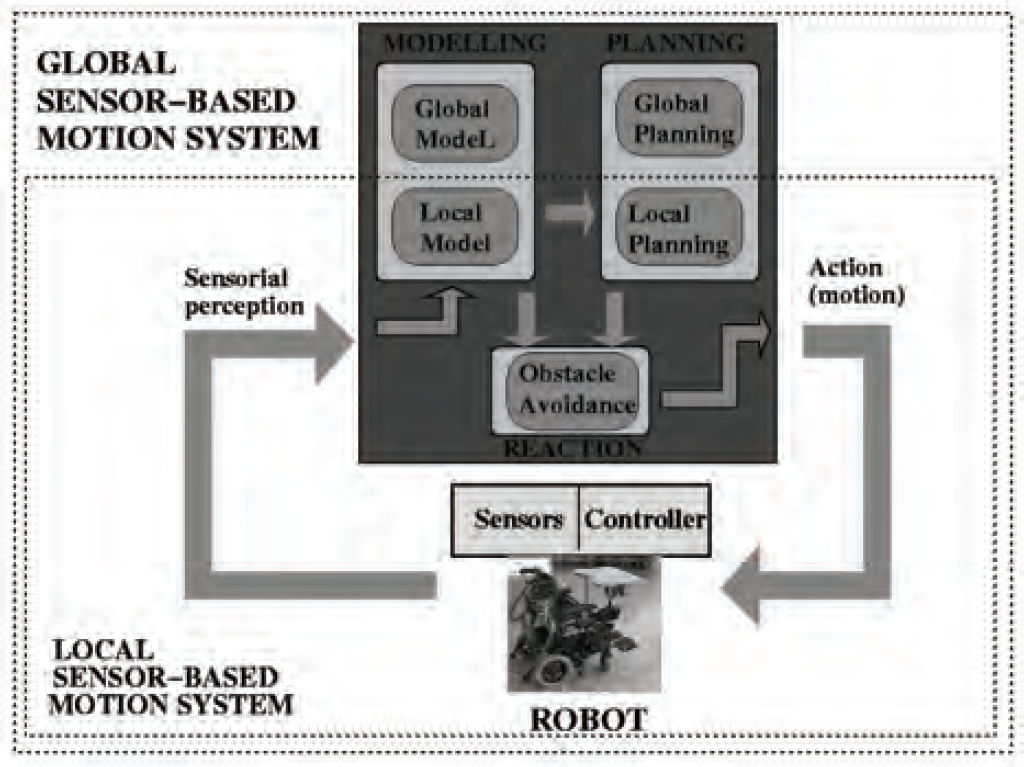

One important issue is to bound the scope of the mobility system, which is related to the differences between global and local navigation systems (Fig. 1). In fact, the concerns of these systems are different. For instance, for global systems, the construction of accurate models and the tracking of the position of the vehicle are important to create global plans and to guarantee motion convergence, while real-time execution is not. However, for local systems, simpler local models and rough planning are enough, while motion constraints related to real-time or to the vehicle such as shape, kinematics and dynamics are important to guarantee robust obstacle avoidance.

Global vs Local Sensor-Based Navigation

Nevertheless, the mobility aspect is inherently related with some functionalities necessary for a fully autonomous operation (modeling, planning and reaction). More precisely, the topic of motion in evolving environments includes issues such as knowledge representation (model construction), deliberation and reactivity. Hybrid systems (Arkin, R., 1989) attempt to combine these paradigms by including the best of the artificial intelligence to represent and use the knowledge, with the best reactivity, robustness, adaptation and flexibility. Basically these schemes combine a planner (deliberation) and a reactor (execution). This work focuses on local navigation, where hybrid approaches have been used in several systems (Ulrich, I. & Borenstein, J., 2000), (Brock, O. & Khatib, O., 1999), (Stachniss, C. & Burgard, W., 2002) or (Philipsen, R. & Siegwart, R., 2003). Our integration scheme follows this approach combining modeling, planning and reactivity:

In this context the

Existing works only address partially the integration issues of these navigation systems (Ulrich, I. & Borenstein, J., 2000), (Brock, O. & Khatib, O., 1999), (Stachniss, C. & Burgard, W., 2002) or (Philipsen, R. & Siegwart, R., 2003). This paper presents the evolution of our work during the last years within the context of local sensor-based navigation systems focusing on those aspects related to the integration architecture. Moreover, we show experimental results obtained with different real robots that illustrate the benefits of using an architecture of integration.

The work is organized as follows: first we present the evolution of our navigation system in Section 2. Section 3 describes the architecture. Section 4 presents the experimental results and Section 5 the conclusions.

The objective of a local motion system is to drive the vehicle among locations while avoiding collisions with obstacles. The operation is governed by a perception -action process repeated at a high frequency (Fig. 1). Sensors gather information of the environment (obstacles) and the robot. This information is then processed to compute the motion. The vehicle executes the motion and the process restarts. The result is an on-line sequence of motions that drives the vehicle to the destination without collisions. In this section we provide an historical perspective of the selection of the techniques, which are closely related with the problems that might be addressed to design a sensor-based navigation system (Table 1).

A summarized evolution of the sensor-based navigation system

A summarized evolution of the sensor-based navigation system

Some years ago we started to deal with the mobility problem of autonomous robots. For obstacle avoidance, we selected a potential field method (PFM in short) (Khatib, O., 1986). Our experience with this obstacle avoidance method (Montano, L. & Asensio, J.R., 1997) confirmed the problems that were described for this type of methods (Koren, Y. & Borenstein, J., 1991). In fact, at that time many methods exhibited problems to address the motion in troublesome scenarios. Thus, we understood that the first step was to design a method to close the research gap of reactive motion in dense, complex and cluttered scenarios.

Motion in Troublesome scenarios M2

To address this issue we developed the

With this new technique, we were able to address motion in places where it was difficult to maneuver vehicles. However, the problems of trap situations and cyclic behaviors were unavoidable due to the local nature of the obstacle avoidance methods.

Trap situations and cyclic behaviors: M3

With this problematic in mind, we realized that it was necessary to integrate local planning with obstacle avoidance. Besides, building a local model would also increase the spatial domain of the planner while acting as a memory for the obstacle avoidance method (sensor visibility constraints). The necessity of integrating these functionalities was the beginning of the work described in this paper. We proposed the

The GND implements a hybrid architecture with three layers (modeling, planning and reaction). The modeler constructs a representation of the environment integrating the sensory information, which is the base for the rest of modules. We used a robot-centred binary occupancy grid updated whenever a new sensory measurement is available. The planner computes tactical information to direct the vehicle. We implemented the

The key result was the integration of the modules in a unified system (Section 4 provides a detailed description of the architecture). This integration concentrates the best of the deliberative and reactive worlds, since the planning information helps to guide the motion toward zones without traps, and the reactive component quickly directs the execution according to the evolution of the environment. The advantage of this system was to perform robust and trustworthy navigation in difficult scenarios.

The vehicle constraints: M4

At this moment, we addressed the portability of the motion system to different platforms. In order to generate robust obstacle avoidance, the vehicle constraints (shape, kinematics and dynamics) could not be ignored. For this reason we included the vehicle constraints within the obstacle avoidance paradigm with the

The ND and many existing techniques assume that the robot is a point free of any constraint (omnidirectional motion). The idea behind the Abstraction Layers is to abstract these constraints the collision avoidance methods. The solution is to encapsulate the constraints within the spatial representation. By doing this, we transform the three-dimensional obstacle avoidance problem with shape, kinematics and dynamic constraints into the simpler problem of moving a point in a two dimensional space without constraints (usual approximation in obstacle avoidance). Thus, many existing methods that do not address these constraints can be applied in this representation. The consequence is that the methods take into account the vehicle constraints without being redesigned (the information is implicitly represented in the application space). Alternatively, the motion generator MG is based on a dynamic motion controller that converts the solution of the obstacle avoidance method into a command that complies with the vehicle kinematics and dynamics.

With these new techniques integrated in the previous system, we took into account the vehicle constraints in the obstacle avoidance module. In parallel, we ameliorated our previous ND version leading to the ND+ (Minguez, J.; Osuna, J. & Montano, L., 2004). The ND+ method improved the previous method with new navigational situations and a new design of the motion laws. Another advantage of the ND+ method is its efficiency that liberates computational resources for the other modules of the architecture.

Local correction of the vehicle localization and time requirements: M5

At this point in time, the precision of the localization of the vehicle became a serious limitation. In order to deal with vehicles with bad odometry information, it was necessary to correct the robot pose. Models built only with odometry accumulate errors. As the model is the base of the planning and obstacle avoidance methods, it strongly affects the performance of the system. At this point, time constraints were another important issue. The planning method was computationally very demanding and we investigated more efficient planners that do not penalize the reactivity and modeling performance of the system.

To improve the localization of the vehicle, we integrated a scan matching technique that improves the odometry readings using the information provided by the sensors. We used the

Furthermore, we developed a planner (Minguez, J.; Montesano, L. & Montano, L., 2004) independently but similar to the

Dynamic scenarios: M6

The previous systems do not differentiate between the static structure of the environment and the moving objects.

Reactivity against changes in the environment is achieved through a high sensing frequency. However, when dealing with dynamic scenarios, taking into account the nature of the obstacles might ameliorate the performance of the system. A reliable solution must address both: a module able to model the static and dynamic parts of the scenario, and a way to use this information within the system.

First, we designed a modeling module that carries out the detection and tracking of moving objects and the mapping of the static parts at the same time (Montesano, L.; Minguez, J. & Montano, L., 2005). We used a maximum likelihood approach that complies with the spatial and time constraints of the local navigation system. As a result we obtain a map of static obstacles and a separate map of dynamic objects and their velocities. Within this process, we integrated a new scan matching (Minguez, J.; Lamiraux, F. & Montesano, L. 2005), the

The other modules selectively use the dynamic/static information of the modeler. The role of the tactical planner is to determine at each cycle the main cruise to direct the vehicle. Therefore, the planner only uses the map of static features. The obstacle avoidance method generates the collision-free motion to align the vehicle toward the cruise (computed by the planner). Here we use the map of static obstacles, since all the obstacles included in the map must be avoided. Furthermore, we use information of the dynamic obstacles, but taking advantage of their velocity by projecting their position to the collision point with the vehicle.

At the same time we explored the use of the

Architecture design

This section describes the architecture of the navigation system focusing on those aspects related with the integration of the different functionalities and their interactions. The system has been designed to work on a single node. This is because many applications in which autonomous motion systems operate are

Overview of the architecture: interaction between modules and data flows

The exact data structures of depend on the technologies used (see Table 1). These structures define the interactions and dependencies among the modules. Replacing a module requires complying with the interface and usually does not require redefining it. For instance, sets of points are a common way to represent obstacles for several obstacle avoidance methods (modeler-reactor interface). The interface between the planner and reactor is a subgoal location (tactical information). With respect to the modeler-planner interface, we use a grid. Although there exist many other representations, grids are commonly used to compute navigation functions and are able to represent dense information. The bandwidth required by each flow varies depending on the modules but remains reasonable (under kilobytes per second).

The class diagram of Fig. 3 shows the static design of our motion system. The proposed design can be reused to implement a sensor-based motion system as the one described in this paper.

Class diagram of the architecture

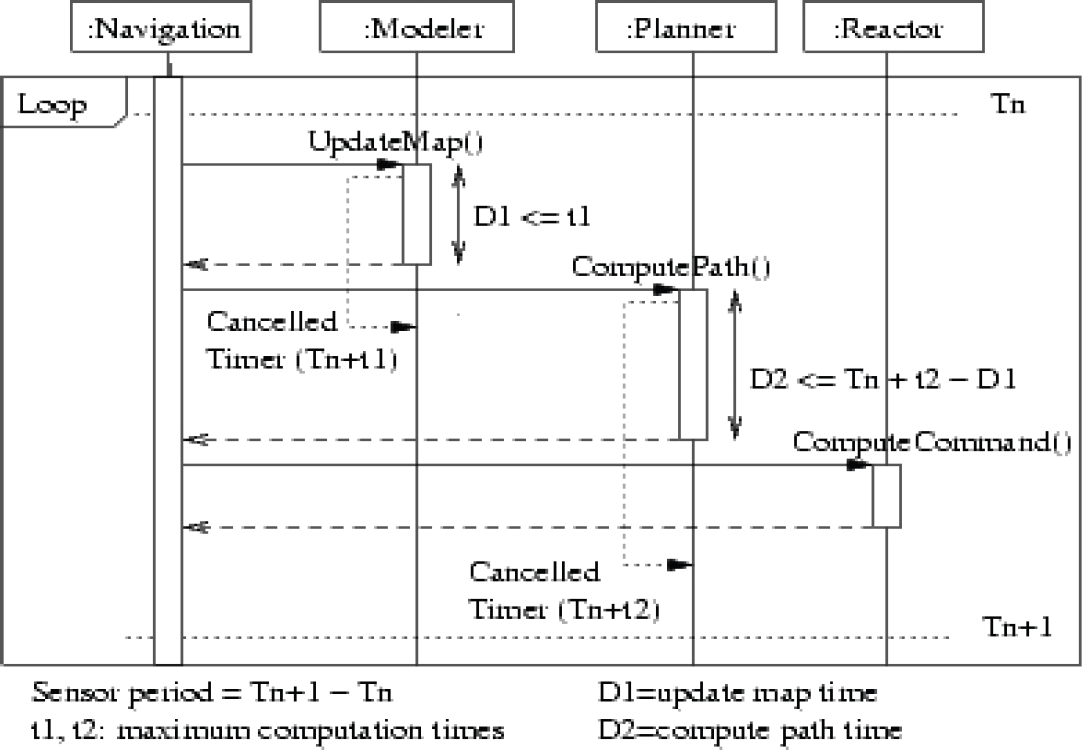

So as to comply with the time constraints and close the motion control loop at the desired sensor rate, we assigned timeouts to the

Scenario of an iteration and time constraint.

The system also has to manage failures (Fig. 2). Currently, our architecture includes the following ones:

Summarizing, the architecture decouples the functional modules necessary for the motion generation and assures their correct interaction and coordination. It also specifies the interfaces with the external modules and hardware devices. The benefits of the integration within the architecture are: (

The purpose of this section is to demonstrate that the navigation system successfully carries out the motion task and to show some of the benefits of the architecture: (

Some of the platforms where the motion system has been integrated

Firstly, we integrated and tested the system in seven robots at three different laboratories (Minguez, J. & Montano, L., 2002). Fig. 5 shows some of the vehicles where the motion system has been used. In all the cases, the results were very satisfactory from the motion execution point of view. The vehicles successfully achieved the motion task in unknown, unstructured and dynamic scenarios, where maneuvering was a determinant factor.

One of these implementations has been used daily in a museum for several months (Clodic, A.; Fleury, S.; Alami, R.; Herrb, M. & Chatila, R., 2005), and others are daily used for demonstrations (Alami, R.; Belousov, I.; Fleury, S.; Herb, M.; Ingrand, F.; Minguez, J. & Morisset, B., 2000) and (Montesano, L.; Minguez, J. & Montano, L., 2005).

Secondly, with this architecture we have been able to integrate our on going research. This is a key issue in developing time. Thanks to this architecture we have been able to design, integrate, test and validate more than 20 technologies in the last four years in our robots and in robots of other laboratories (Table 1 and Fig. 5).

Thirdly, another issue is the portability among different vehicles. This includes the following aspects:

In order to integrate the navigation system in the different vehicles, the first important issue was to take into account the vehicle shape, kinematics and dynamics for obstacle avoidance. This is important since the vehicles where the motion system was tested had different geometries like circular, square or rectangular. Furthermore the vehicles ranged from omnidirectional to differential-drive, and all of them had very different dynamics. These facts impose different constraints in the

In the vehicles used in the experiments the sensors ranged from ultrasounds and lasers to cameras. The usage of different sensors required using the appropriate sensor interfaces. Nevertheless, if the type of data needed a specific processing, the modeling module had to be replaced too. For instance, for the lasers we used the solution described in this paper and we adopted other solutions for the ultrasounds (Borenstein, J. & Koren, Y., 1991) and for the cameras (Haddad, H.; Khatib, M.; Lacroix, S. & Chatila, R., 1998). This is important since the information given by these three sensors is far different and any of them requires a processing step. The important point is that changes affected only to the model builder and the corresponding interfaces.

In this paper we have proposed an architecture to integrate the functionalities required to perform local sensor-based navigation. The architecture decouples the main functionalities of the system, defines their interfaces and assures their correct interactions. It provides a framework to continuously upgrade the system with new developments in the field, to ease the development process and to migrate it among different platforms. Currently we are transferring some of the functionalities described in the paper to the Stage/Player project 1 . We understand that this is a clear indicator of the maturity of the techniques and their successful integration in many different systems.

In addition, we have presented an historical perspective of the technologies and their main characteristics together with the lessons we learned. The requirements extracted from our experience during the development of the motion system (portability, real time constraints, adaptability, modularity) led to the design proposed in the paper.