Abstract

This paper focuses on determining the active personal space (APS) of a service robot based on emotional status, which is required for comfortable human-robot interaction. Here, APS means the active distance (the relative distance during interaction and action) between the robot and the human. APS is a function of the emotion of both the human and the robot. The other factors considered are age, height, familiarity with the robot, and the relative motion between robot and human. The changes in APS with respect to these factors are shown in graphs. Two robot emotions are considered in the experiments: normal and angry. The causes of the variation of APS between the two modes are also explained.

1. Introduction

With the advancement of modern artificial intelligence systems, human interaction with robots has become of paramount importance, especially where humans and robots work together. Observing this interaction from different viewpoints, researchers have developed the concept of active personal space (APS). APS means the space that should be maintained between human and robot. It plays a vital role in ensuring safety and comfort: there should be a safe distance between the robot and the human or other objects. The objectives are therefore to ascertain how this distance can be optimized, whether any advantages are gained by doing so, whether the optimization will help to improve the condition of robots, how humans will react, and what effect emotional states will have on APS.

Personal space is the region surrounding a person that he or she regards, psychologically, as his or her own. Most people value their personal space and feel discomfort, anger, or anxiety when it is encroached upon [1]. A number of relationships may allow personal space to be modified, for example with family members, partners, friends, and close acquaintances, where there is a greater degree of trust and knowledge of the person [2].

The scope of this research mainly includes the findings on APS during the interaction of a human with a service robot.

When a robot approaches or passes a human, there should of course be a safe distance between them for the comfort of the human. An avoidance algorithm is therefore necessary so that the robot will not cross into personal space. In addition, it is necessary for the robot itself to have a sense of APS when interacting with humans and to act according to the human mode, as APS varies drastically with respect to culture, functions, environment, etc.

In this study, a service robot (shown in Figure 1a) was first built for use during data collection. This robot is used in two modes of emotion: normal mode (Figure 1b) and angry mode (Figure 1c).

Figure 1. (a) Appearance of the service robot under consideration; (b) Robot face in normal state; (c) Robot face with red eye (glowing eye), showing anger.

Section 2 briefly describes related work on active personal space. Several experiments performed using the service robot are described in Section 3. The findings are discussed in Section 4. Section 5 presents a conclusion and suggestions for future development.

2. Related Works

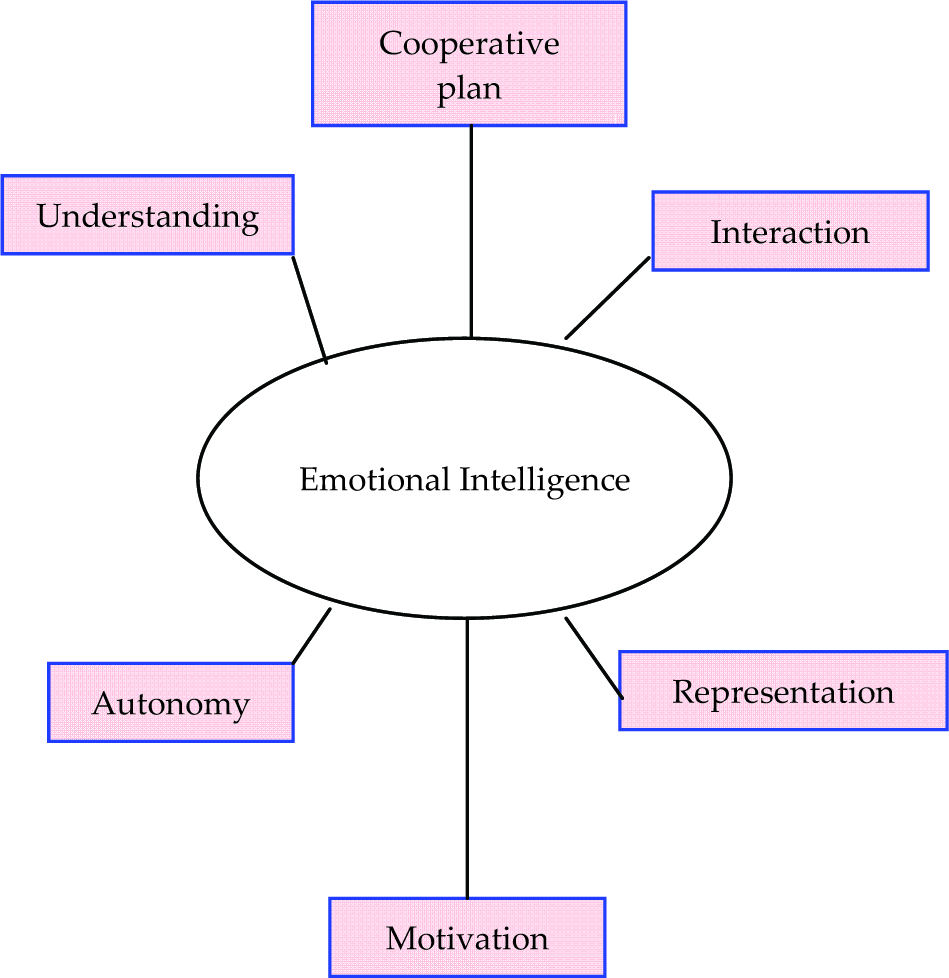

Emotions are crucial for social intelligence, as shown in Figure 2. This is particularly true for agents with limited resources [3] and for cooperative tasks [4–7]. It has been observed that introducing emotional intelligence may lead to more autonomous and efficient robots and robotic teams [5], and can improve group performance [6]. As emotion acts like compressed information [8], in which a lot of different information and internal states are incorporated, it can provide some motivation to activate actions/reactions to events [5, 9–11] or make communication between agents easier. In direct interaction among agents, it is necessary to find a comfortable APS, which is highly dependent on the emotional status of both agents. There are wide areas of research where emotion is used for human-robot interaction [12–14], in which the concept of APS and its dependence on emotions can be incorporated for further advancement.

Figure 2. Beneficial effects of emotional intelligence

The notion of personal space was introduced by E. T. Hall, who created the concept of

One influential study on active personal space for autonomous robots was that of Balasuriya [16]. Balasuriya dealt with different aspects of the active personal space of autonomous robots in ubiquitous environments and developed a mathematical model using fuzzy logic. Several experiments were performed in [17] and the results of two empirical studies of HRI were presented. The first study involved the subject approaching the static robot and the second considered the robot approaching the standing subject. In these trials a small majority of subjects preferred a distance corresponding to the ‘personal zone’ typically used by humans when talking to friends. However, a large minority of subjects were happy to be significantly closer, suggesting that they treated the robot differently to a person, and possibly did not view the robot as a social being.

According to Sack [18] and Malmberg [19], the actual size of the personal space at any given instant varies depending on cultural norms and on the task being performed. For a simplified scenario for experimental analysis, appearance (mainly of the robot), previous acquaintance or familiarity, gender, age, height of the bodies (especially for interaction in the standing position), emission of any sound, emotions on the face, carrying objects, etc., were considered to play important roles in governing the personal space. Hence, the research project presented in [16] attempts to construct an automated system that generates the most suitable personal space for any environment. First, three parameters, namely height (H), age (A), and familiarity (F), were considered to generate an active personal space (APS) determination system.

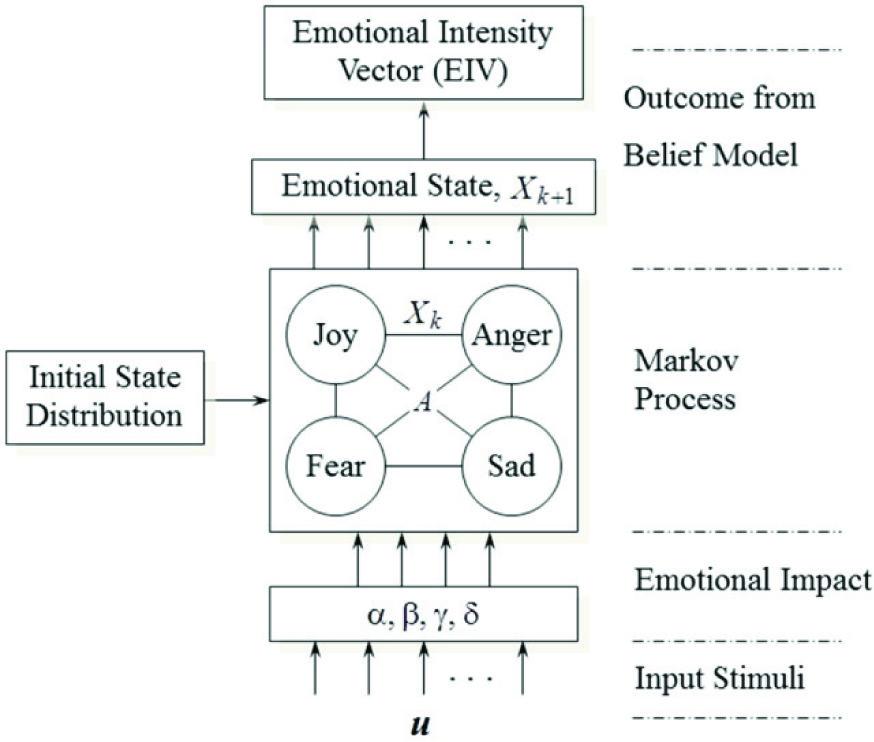

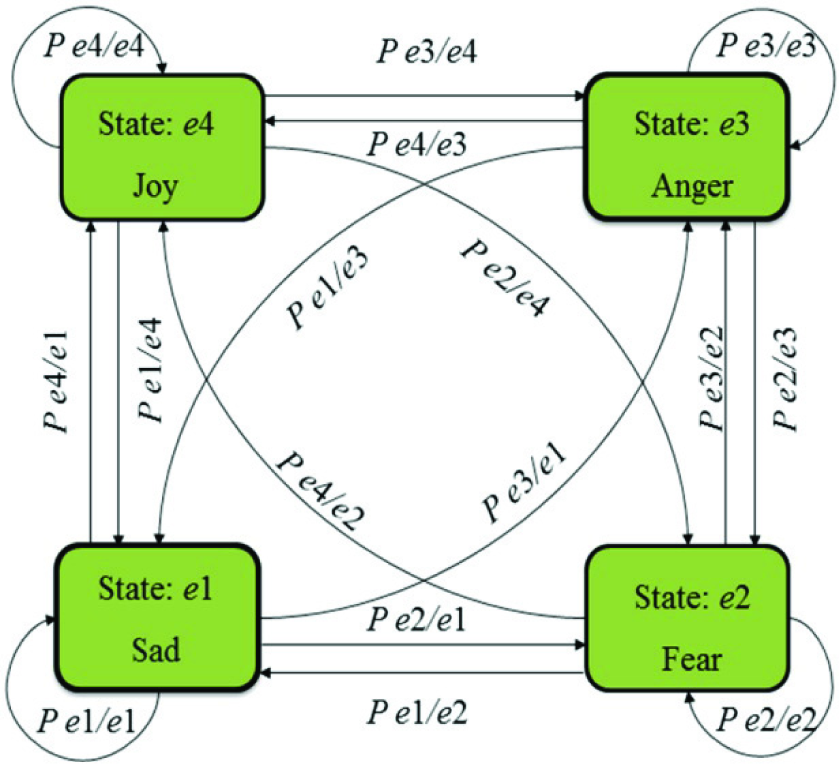

This paper finds that in addition to height (H), age (A) and familiarity (F), there is a further relationship of emotion with APS. Emotion is generated in the social robot, limited to two specific emotions based on the emotional model as proposed in [7, 20]. This model is a Markovian emotion model, where emotion is generated from some input stimuli and an initial state of emotion, as shown in Figure 3, where

Figure 3. Emotional state generator based on Markovian emotion model [20]

The Markovian emotion model with four emotional states can be expressed as follows:

with emotional state points

where

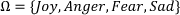

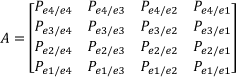

In the Markovian emotion model, the nodes represent the emotional states and the arcs/arrows indicate the probability of moving to the directed state as shown in Figure 4. The arc/arrow values are set to initial values (e.g.,

Figure 4. Markovian emotion model for emotional states [20]

These values can be modified later on with the influence of

The values of emotion-inducing factors (α, β, γ and δ) are updated through the input variables

Figure 5. Updating process of emotion-inducing factors with a fuzzy inference system (FIS) [7]
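The idea of a Markovian emotion model, in which nodes are emotional states and arcs carry the probability of moving to the next state, can be illustrated with a minimal sketch. This is not the model of [20]: the two placeholder state names, the arc values, and the function names `check_rows`, `step_distribution` and `sample_path` are all assumptions introduced here for illustration, and the update of the arc values through the emotion-inducing factors is not modelled.

```python
import random

# Hypothetical state names: the text names only "normal" and "angry";
# "state_3" and "state_4" are placeholders for the remaining two states.
STATES = ("normal", "angry", "state_3", "state_4")

# Illustrative arc values (assumptions, not taken from the paper).
# TRANSITIONS[i][j] is the probability of moving from STATES[i] to STATES[j].
TRANSITIONS = (
    (0.70, 0.10, 0.10, 0.10),
    (0.20, 0.60, 0.10, 0.10),
    (0.25, 0.25, 0.25, 0.25),
    (0.25, 0.25, 0.25, 0.25),
)


def check_rows(matrix, tol=1e-9):
    """Raise ValueError unless every row is a probability distribution."""
    for row in matrix:
        if any(p < 0 for p in row) or abs(sum(row) - 1.0) > tol:
            raise ValueError(f"row is not a probability distribution: {row}")


def step_distribution(dist, matrix=TRANSITIONS):
    """Propagate a probability vector over STATES by one time step."""
    n = len(matrix)
    return [sum(dist[i] * matrix[i][j] for i in range(n)) for j in range(n)]


def sample_path(start, steps, seed=0, matrix=TRANSITIONS):
    """Sample a sequence of emotional states starting from `start`."""
    rng = random.Random(seed)
    path = [start]
    for _ in range(steps):
        row = matrix[STATES.index(path[-1])]
        path.append(rng.choices(STATES, weights=row, k=1)[0])
    return path


check_rows(TRANSITIONS)
# Distribution over STATES one step after starting in "normal".
print(step_distribution([1.0, 0.0, 0.0, 0.0]))
# One sampled trajectory of six states (start plus five transitions).
print(sample_path("normal", steps=5))
```

Representing the arcs as a row-stochastic matrix keeps the sketch small; any mechanism that later modifies the arc values would need to keep each row summing to one.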

Though the model is structured for four emotional states, in the present study only two emotional states of the service robot are considered (as stated above) for simplicity. The project attempts to develop various facial expressions for different emotional states. Here, a light red (glowing) eye is used to symbolize the robot's angry mode, as shown in Figure 1(c), which can create fear in the people interacting with it.

3. Experimental Procedure

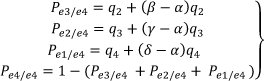

The experiment was performed in order to observe the reaction of the human and the interaction distance between human and robot. Human subjects of different ages and heights, with different educational backgrounds, were considered for data collection. Data were collected in several steps for each person. The two modes of emotion (normal and angry) were explained to each interacting person. The glowing eyes of the robot created fear in the people.

All the human subjects (sample size was 25) were confronted with the service robot one by one. In the experimental procedure, one human subject was asked to move towards the robot as if he or she needed to talk with it, as shown in Figure 6. The human was asked to stand along the scaled line, look at the robot face and move closer to it until the proximity was such that the subject felt uncomfortable or unsafe. In this case, the human was moving and the robot was standing. This step was carried out with the two emotional conditions of the robot: normal and angry, as shown in Table 1. The interaction distances between human and robot were measured on a scale in the case of ‘moving human and standing robot’.

Figure 6. Experimental set-up for data collection

Table 1. Six interaction statuses for a single person

The next experimental procedure was the same as the previous one, except that this time the robot was moving and the human was standing. This step was also carried out with the two emotional states of the robot. When the interacting person felt uncomfortable or unsafe, he or she said ‘Stop’, and the robot's motion was stopped at that moment by remote control. The last step of the experiment was ‘moving human and moving robot’ for both emotional states of the robot. Thus, six interaction distances (APSs measured from the front of both for the six cases) were collected for a single person. In this way, 150 interaction distances were collected for 25 persons.
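The six interaction cases and the total number of measurements can be enumerated with a short sketch. The numbering Int.1 to Int.6 follows the order used in Section 4, which differs from the order in which the steps are described above; the names `MOTION_CASES`, `ROBOT_EMOTIONS`, `interaction_labels` and `total_measurements` are hypothetical and introduced only for illustration.

```python
from itertools import product

# Relative-motion cases, in the order used for Int.1 to Int.6 in Section 4.
MOTION_CASES = (
    "standing human, moving robot",
    "moving human, standing robot",
    "moving human, moving robot",
)
# The two robot emotions considered in the experiments.
ROBOT_EMOTIONS = ("normal", "angry")


def interaction_labels():
    """Map Int.1..Int.6 to a (relative motion, robot emotion) pair."""
    cases = product(MOTION_CASES, ROBOT_EMOTIONS)
    return {f"Int.{i}": case for i, case in enumerate(cases, start=1)}


def total_measurements(num_subjects):
    """Number of interaction distances collected for `num_subjects` people."""
    return num_subjects * len(interaction_labels())


for label, (motion, emotion) in interaction_labels().items():
    print(label, "|", motion, "|", emotion)

# 25 subjects x 6 interactions = 150 interaction distances.
print(total_measurements(25))
```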

4. Results and Discussion

4.1. Standing Human and Moving Robot

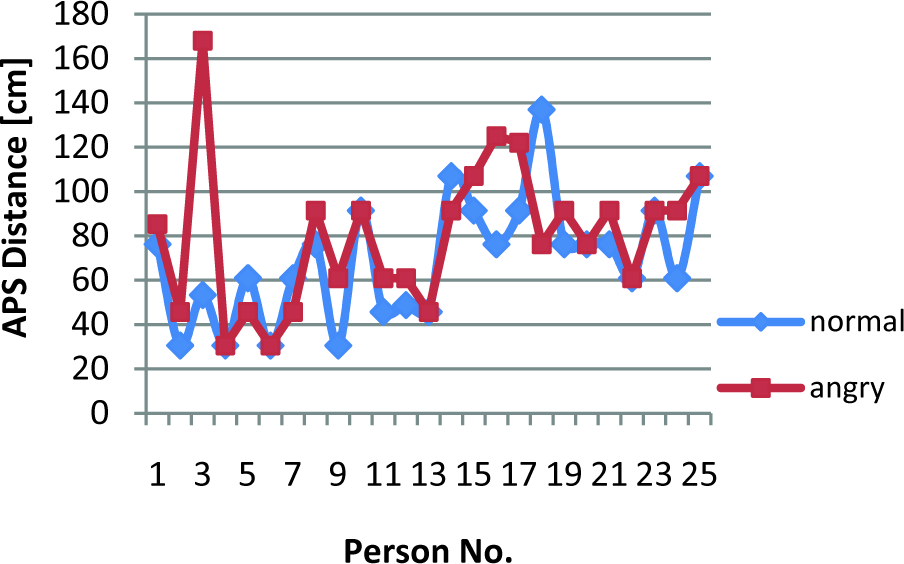

Figure 7 shows the graph of comparison between Int.1 and Int.2, where Int.1 is for the normal state and Int.2 is for the angry state. This graph shows that in most cases the interaction distance increases when the robot is in the angry state. However, in some cases the distance in the angry state is the same as for the normal state. This may occur for a person who is not aware of the robot's state.

Figure 7. APS when human is standing and robot is moving

4.2. Moving Human and Standing Robot

Figure 8 shows the graph of comparison between Int.3 and Int.4, where Int.3 is normal and Int.4 is angry, when the human is moving and the robot is standing. This graph gives the same information as Figure 7: in most cases, the interaction distance increases when the robot is in its angry state.

Figure 8. APS when human is moving and robot is standing

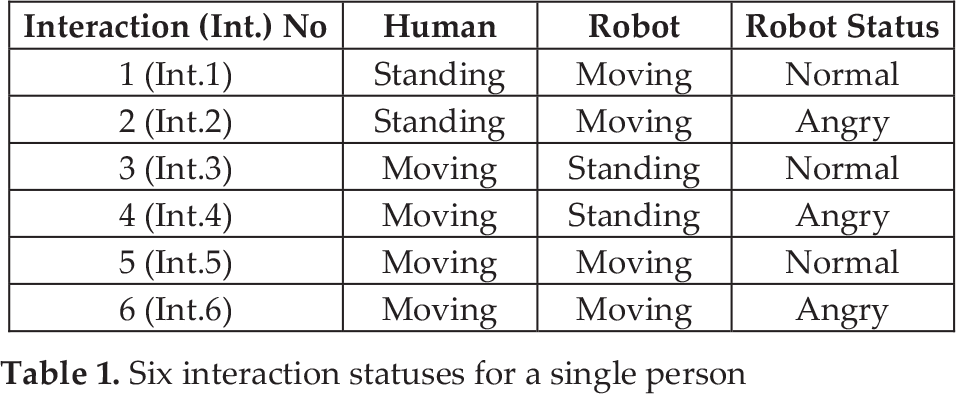

4.3. Moving Human and Moving Robot

Figure 9 shows the graph of comparison between Int.5 and Int.6, where Int.5 is normal and Int.6 is angry. The graph gives the same information about APS as Figures 7 and 8. However, in some cases the APS remains the same for both conditions. This may have happened because of differences in character among the subjects interacting with the robot.

Figure 9. APS when human and robot are moving towards each other

4.4. Comparisons between Int.1, Int.3 and Int.5

Figure 10 shows the graph of comparison between Int.1, Int.3 and Int.5, where all interactions are with the robot in its normal state. It can be seen that the highest value of APS is obtained when the human is standing and the robot is moving (Int.1), which has an average APS of 69.31 cm. The human concentrates closely on the robot when it is moving towards him or her, and wants to keep the APS larger for safety and comfort. This conclusion is based on the opinions collected from the subjects about keeping a distance between robot and human.

Figure 10. APS for Interactions 1, 3 and 5 with the robot in its normal state

4.5. Comparison between Int.2, Int.4 and Int.6

Figure 11 shows the graph of comparison between Int.2, Int.4 and Int.6, where all interactions are with the robot in its angry state. Here, it can be seen that the highest value of APS is obtained when the human is standing and the robot is moving. The average APS is 79.75 cm.

Figure 11. APS for Interactions 2, 4 and 6 with the robot in its angry state

Summarizing the results from Figures 7 to 11, it can be stated that the APS is larger when the robot is in angry mode (Int.2, Int.4 and Int.6) than in normal mode for any state of relative motion, as shown in Figure 12. It is largest when the robot is moving towards a standing person.

Figure 12. Average value of APS for six interactions
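The comparisons in this section rest on two simple computations: the average APS per interaction, and a per-subject comparison of the distance in angry mode against the distance in normal mode. A minimal sketch follows. The distances in `sample` are made-up placeholder values for three hypothetical subjects, not measurements from this study, and the function names `average_aps` and `compare_modes` are assumptions.

```python
from statistics import mean

# Placeholder distances in cm for three hypothetical subjects.
# These are NOT data from the experiments; they only exercise the functions.
sample = {
    "Int.1": [60.0, 70.0, 80.0],   # standing human, moving robot, normal
    "Int.2": [70.0, 70.0, 100.0],  # standing human, moving robot, angry
}


def average_aps(distances_by_interaction):
    """Return the mean interaction distance for each interaction label."""
    return {label: mean(values) for label, values in distances_by_interaction.items()}


def compare_modes(normal, angry):
    """Count subjects whose angry-mode distance is larger, equal or smaller."""
    if len(normal) != len(angry):
        raise ValueError("both lists must hold one distance per subject")
    counts = {"larger": 0, "equal": 0, "smaller": 0}
    for n, a in zip(normal, angry):
        if a > n:
            counts["larger"] += 1
        elif a == n:
            counts["equal"] += 1
        else:
            counts["smaller"] += 1
    return counts


print(average_aps(sample))
print(compare_modes(sample["Int.1"], sample["Int.2"]))
```

The per-subject count separates the cases where the distance stays the same in both modes, which the text attributes to subjects who were not aware of the robot's state.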

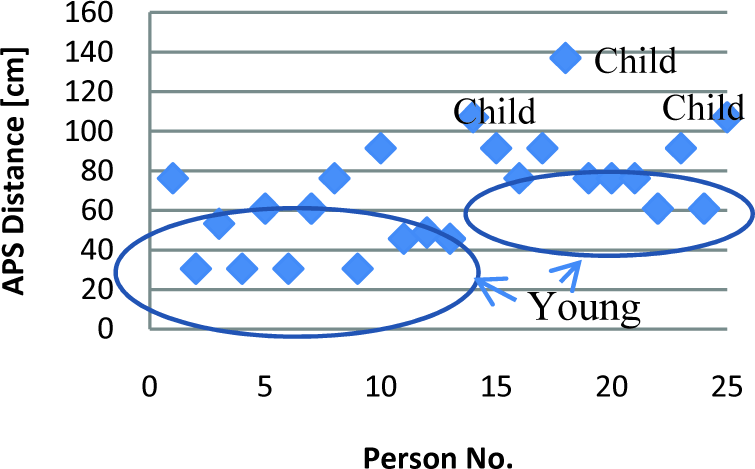

Ten males (40%) and 15 females (60%) participated in the robot interaction for APS determination. The ages of the participants ranged from 6 to 55 years. Children (6-18 years) made up 12% of the sample, young people (19-30 years) 64% and middle-aged people (31-55 years) 24%, as shown in Figure 13. Summarizing the data, children usually maintained a higher value of APS as they were not as familiar with robots, having only seen them in videos or on TV. Most of the young people were students with an engineering background. They maintained a moderate value of APS during the experiments, as they were familiar with robots and had previous knowledge of robotics. The middle-aged individuals maintained a higher value of APS than the young people. Their education level was below secondary school and they had very little familiarity with robots. This pattern of variation in APS can be seen in every type of interaction in Table 1. For example, Figure 14 shows this information for Int.1 (human standing, robot moving in normal mode).

Figure 13. Percentage of children, young people and middle-aged people in the sample

Figure 14. APS of children, young people and middle-aged people according to Int.1 (the unmarked APSs are for middle-aged people)
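The reported percentages can be checked against the sample size of 25. The sketch below converts each percentage to a head count; the counts per age group are derived here and are not stated in the text, and the function name `percent_to_count` is hypothetical.

```python
TOTAL_SUBJECTS = 25

# Percentages and gender counts as reported in the text.
AGE_GROUP_PERCENT = {
    "children (6-18 years)": 12,
    "young people (19-30 years)": 64,
    "middle-aged people (31-55 years)": 24,
}
GENDER_COUNT = {"male": 10, "female": 15}


def percent_to_count(percent, total):
    """Convert an integer percentage of `total` to a whole head count."""
    count, remainder = divmod(percent * total, 100)
    if remainder:
        raise ValueError(f"{percent}% of {total} is not a whole number")
    return count


age_counts = {
    group: percent_to_count(pct, TOTAL_SUBJECTS)
    for group, pct in AGE_GROUP_PERCENT.items()
}
print(age_counts)

# Both breakdowns should account for every subject.
print(sum(age_counts.values()) == TOTAL_SUBJECTS)
print(sum(GENDER_COUNT.values()) == TOTAL_SUBJECTS)
```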

Some general observations regarding the experiments [21] follow below.

Rather than a very specific area for the interactions, for example a laboratory, a more general, everyday place would be much better. It would then be possible to use several robots with several humans. As people do not interact only in a standing position, other postures such as sitting, walking, etc., either alone or in groups, should be considered.

In addition to the question of personal space, it is also necessary to formalize a model by which robots learn about an individual in order to personalize the relationship. The robots will then be able to build closer relationships with people, modifying the APS. Identifying and defining the mechanism for sustaining long-term relationships is therefore an important area of future research in human-robot interaction. Furthermore, there should be a way to formalize a model of the relationships between humans and robots over time, and to establish a method to promote lasting interactive relationships. It has been observed by some researchers that several pet robots, for example, have a special pseudo-learning mechanism with several functions, which shows only a few functions initially and gradually reveals more according to their interactions [16].

In order to generate various emotional states of the robot in finding an APS, it is necessary to develop an emotion generation engine as proposed in [22]. This will provide a convenient means for triggering multiple emotions of different intensity in different situations and interactions, focusing on achieving the desired behaviour through emotional states – the main concern in human-robot interaction.

5. Conclusion and Future Work

This paper has discussed APS in interaction between humans and robots where the robot is considered as a social being, using a different concept to [17]. This project has the goal of designing a system that can give information about APS based on some parameters. The relationship between the human and the robot is likely to change as time passes, much like inter-human relationships. Thus, it is important to observe the relationship between human and robot in an environment where long-term interaction is possible. The results of incorporating the robot into such a constantly participating human environment may be entirely different to interactions that occur in a short time period. With an increasing number of interactions of a person with a robot, the APS may be reduced as familiarity is increased with the interactive robot.

In future research, it will be very helpful to combine such information with qualitative observations of each person, recorded in detail. Although there are many scattered studies that address the same topic in different ways, there is not a single one that combines all these approaches. Attempts will therefore be made to combine all outcomes in the field of APS, which is one of the critical issues for HRI.

In this research, an attempt was made to make the robot look like a human. Only two emotional states have been incorporated into the robot. In future, more emotions with different appearances will be used to analyse APS more critically and fruitfully. The parameters considered were limited; once a very basic model is created, it would be possible to expand the system to any number of parameters. The number of human subjects was also limited. To make the research self-sufficient, the number of human subjects can be extended for stochastic analysis and the data can be applied using the fuzzy Markovian emotion model proposed in [20]. Face and appearance analysis is also an important issue that should be considered in future research for determining APS.