Abstract

This paper discusses the transference of emotional states driven by psychological energy in the active field state space and builds a robot expression model based on the Hidden Markov Model (HMM). Facial expressions and behaviours are two important channels for human-robot interaction. Robot performance based on a static emotional state cannot vividly display dynamic and complex emotional transference, so a real-time interactive emotional model is a critical part of robot expression. First, the attenuating emotional state space is defined and the state transference probability is obtained. Second, the HMM-based emotional expression model is proposed and the performance transference probability is calculated. Finally, the model is verified on a robot platform with 15 degrees of freedom, and the interaction effects are analysed statistically. The experimental results demonstrate that, compared with traditional algorithms, the emotional expression model achieves more expressive performance and overcomes the mechanical mode of interaction.

Keywords

1. Introduction

Human emotion is complex and multiply interrelated, and affective computing has developed gradually alongside psychological research. In the OCC cognitive-affective model, emotions are generated by affective situations (events, objects and agents) that not only induce cognitive processes but also trigger a subjective emotional experience. Emotion can therefore be regarded as a kind of psychological energy that drives human behaviour [1-3]. Psychological energy takes two main forms: free mental energy and constrained mental energy. Free mental energy guides spontaneous emotional state transference and depends on personal interest and character [4]. Constrained mental energy is an emotional state suppressed by an objective purpose or prerequisite [5, 6]. Psychological energy manifests internally as emotional state transference and externally as behaviour.

Facial expressions and behaviours are two important channels for human-robot interaction. Psychological research has shown that, in emotional expression, words account for only 7% and tone of voice for 38%, while the remaining 55% comes from facial and behavioural expression [7, 8]. Researchers have therefore paid increasing attention to facial expressions and robot behaviour in human-robot interaction.

This paper discusses the transference of emotional states driven by psychological energy in the active field state space and builds a robot expression model based on the HMM. The attenuating emotional state space is defined and the state transference probability is obtained. The model is verified experimentally on a robot platform with 15 degrees of freedom, and the interaction effects are analysed statistically.

2. Dynamic Emotional Regulation in the Active Field State Space

2.1. Description of the Emotional State Space and Regulation Process

The robot's own emotional state space is defined as S = {s1, s2, …, sn}, where n is the number of emotional states. The space S, within which the robot's emotion transfers freely, includes all available emotional states. The robot input is W = {w1, w2, …, wm}, where m is the number of emotional stimuli obtained from the facial expression input. To simplify the experiment, the facial expression of the interactive object is mapped into 7 basic emotional states. According to Ekman's theory, human emotions can be divided into 6 basic emotions: anger, disgust, fear, happiness, sadness and surprise. A calming state is added to the emotional state space to make the robot more human-like. The stimulus emotional state space is:

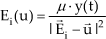
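To make the indexing concrete, the stimulus emotional state space can be written as a small enumeration. This is a minimal Python sketch, not the authors' code: the class `Emotion` and the helper `to_stimulus` are hypothetical names, and the index order is an assumption that simply follows the w1, …, w6, c7 ordering (disgust, surprise, happiness, anger, fear, sadness, calming) used for the attenuation factor C in Section 2.2.2.

```python
from enum import IntEnum


class Emotion(IntEnum):
    """Seven stimulus emotional states (Ekman's six basic emotions + calming).

    Index order is an illustrative assumption matching the ordering of the
    attenuation factor C reported in Section 2.2.2.
    """
    DISGUST = 0
    SURPRISE = 1
    HAPPINESS = 2
    ANGER = 3
    FEAR = 4
    SADNESS = 5
    CALMING = 6


def to_stimulus(label: str) -> Emotion:
    """Map an expression classifier's text label (e.g. 'Happiness') to a state."""
    try:
        return Emotion[label.strip().upper()]
    except KeyError:
        raise ValueError(f"unknown expression label: {label!r}") from None
```

Using an `IntEnum` lets the same state double as a row or column index into the probability matrices introduced later.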

Triggered by an external stimulus, the robot's own emotion is aroused and expressed through facial expressions and behaviour. Figure 1 shows the robot emotional expression model built from this description.

2.2. State Transference Probability in Emotion Space

2.2.1. Active Field State Space

Following Kismet's emotion space [9], each external stimulus from the expression input is treated as a field source in the active field state space shown in Figure 2. The intensity of the external stimulus determines how active the field is. According to the basic theory of the active field, when there is only one field source in the space, the field strength and direction vector at point u(x, y, z) are:

Here,

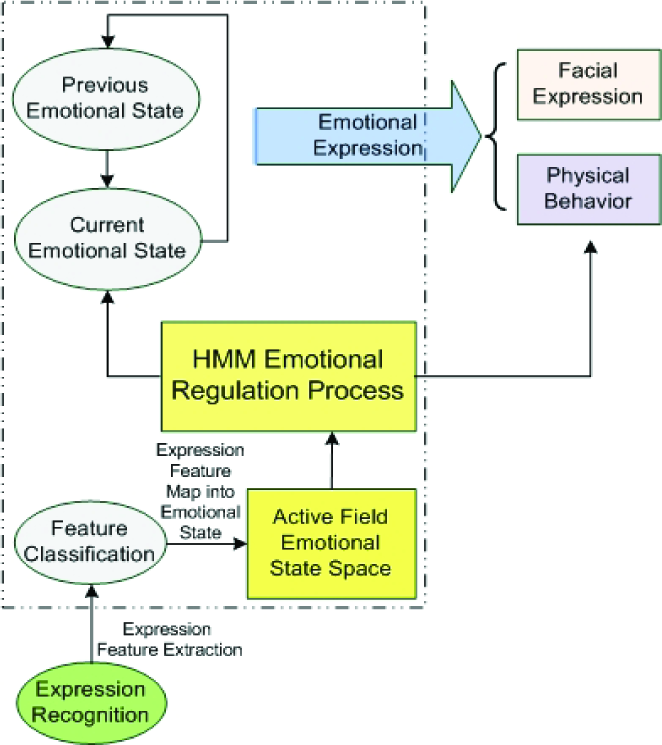
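The field expressions survive only as an image, so the sketch below assumes the electrostatic analogy that the paper itself draws (the emotional state as a point charge): a signed inverse-square field strength kQ/r² and a unit direction vector pointing from the source to the point u. The function name `field_at`, the constant `k` and any coordinates are illustrative assumptions, not a reproduction of the paper's formulas (1)-(3).

```python
import math
from typing import Sequence, Tuple

Vector = Tuple[float, ...]


def field_at(u: Sequence[float], source: Sequence[float], charge: float,
             k: float = 1.0) -> Tuple[float, Vector]:
    """Field strength and direction at point u from a single point source.

    Assumed electrostatic analogy: strength = k * Q / r**2 (signed, so a
    negative charge yields a negative strength); direction is the unit
    vector from the source toward u.
    """
    diff = [ui - si for ui, si in zip(u, source)]
    r = math.sqrt(sum(d * d for d in diff))
    if r == 0.0:
        raise ValueError("field is undefined at the source itself")
    strength = k * charge / r ** 2
    direction = tuple(d / r for d in diff)
    return strength, direction
```

With several stimuli present, each source would be evaluated separately, which is how the per-emotion intensities E1(u), …, E7(u) are treated in Section 2.2.3.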

2.2.2. Definition of Attenuation Function

The robot's current emotional state is regarded as a point charge in the active field. Without an external stimulus, the charge of each field source is Q0. Because emotion is a reactive and dynamic process, the emotional state changes with the surroundings, character and satisfaction [10-12]. Under an external stimulus, the robot's emotional state is transferred according to subjective psychological processing. Because emotional intensity weakens gradually over time, an attenuation function is defined. As shown in Figure 3, emotional intensity begins to decline at time t0, and the attenuation function is:

Figure 1. Robot emotional expression model.

Figure 2. Emotional state space in the active field.

Figure 3. The attenuation function curves of 7 basic emotions.

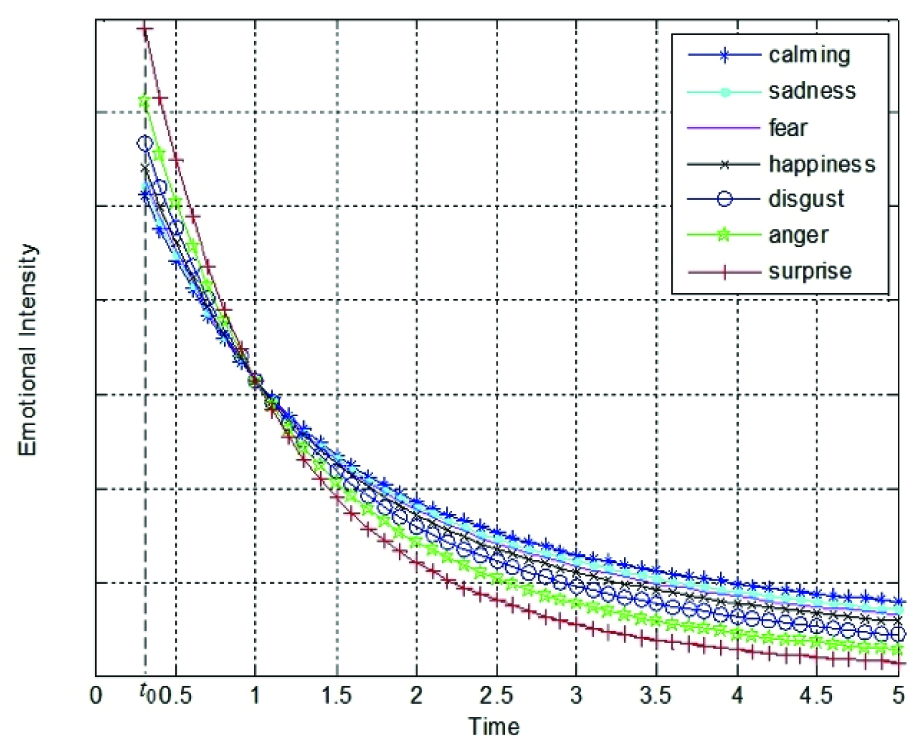

Here, λ ∈ {1, 2, 3, 4, 5} is the intensity factor of the external stimulus. Besides the type of cognition, the nature of each emotion also influences how strongly it attenuates, so an attenuation factor C is defined. Each emotion has its own attenuation speed; from slowest to fastest, the order is sadness, fear, happiness, disgust, anger and surprise. The external stimulus attenuation factor of calming, c7, is zero. The attenuation factors ci of the other 6 emotions are calculated with the Analytic Hierarchy Process (AHP). First, disgust, surprise, happiness, anger, fear and sadness are denoted w1, w2, …, w6 respectively. The comparative values between emotions i and j are shown in Table 1.
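The exact attenuation function is given only as an image, so the following is a hedged illustration of how λ and C interact. It assumes a simple exponential decay, intensity(t) = λ·exp(−c·(t − t0)) for t > t0, which is not necessarily the paper's formula; the factor values are the C vector reported at the end of this section, and the names `C` and `intensity` are hypothetical.

```python
import math

# Attenuation factors as reported in Section 2.2.2, in the order
# disgust, surprise, happiness, anger, fear, sadness, calming.
C = {"disgust": 0.15, "surprise": 0.43, "happiness": 0.08, "anger": 0.26,
     "fear": 0.05, "sadness": 0.03, "calming": 0.0}


def intensity(t: float, t0: float, lam: float, c: float) -> float:
    """Hypothetical exponential attenuation of emotional intensity.

    Intensity equals the stimulus intensity factor lam up to time t0 and
    then decays at rate c. This functional form is an assumption.
    """
    if lam not in (1, 2, 3, 4, 5):
        raise ValueError("lam must be one of 1, 2, 3, 4, 5")
    if t <= t0:
        return float(lam)
    return lam * math.exp(-c * (t - t0))
```

Under this assumption, ranking the six non-calming emotions by remaining intensity at any t > t0 reproduces the slow-to-fast order stated above (sadness, fear, happiness, disgust, anger, surprise), and calming, with c7 = 0, never decays.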

Table 1. Comparative values of the attenuation speed between emotions i and j.

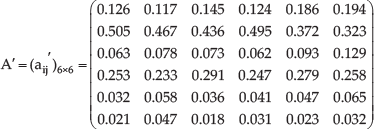

Second, aij is the comparison result between emotions i and j, with aij = 1/aji. The comparison matrix is:

A′ is the matrix A normalized by formula (4).

The external stimulus attenuation factor C = [0.15 0.43 0.08 0.26 0.05 0.03 0] is then calculated by formula (5).
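Formulas (4) and (5) are not reproduced in the text. The sketch below uses the common AHP approximation (normalize each column of the reciprocal comparison matrix to sum to 1, then average each row), which we assume is what the normalization and weighting steps refer to. Because Table 1's values are only available as an image, the check builds a perfectly consistent matrix aij = wi/wj from the reported C instead of recomputing C from the paper's data; `ahp_weights` is a hypothetical name.

```python
from typing import List, Sequence

Matrix = Sequence[Sequence[float]]


def ahp_weights(a: Matrix) -> List[float]:
    """Priority weights from a reciprocal pairwise-comparison matrix.

    Column-normalize the matrix so that each column sums to 1, then take
    the mean of each row of the normalized matrix.
    """
    n = len(a)
    for i in range(n):
        for j in range(n):
            # Reciprocity requirement of AHP: a_ij = 1 / a_ji.
            if abs(a[i][j] * a[j][i] - 1.0) > 1e-9:
                raise ValueError("matrix must satisfy a_ij = 1 / a_ji")
    col_sums = [sum(a[i][j] for i in range(n)) for j in range(n)]
    normalized = [[a[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return [sum(row) / n for row in normalized]
```

For a perfectly consistent matrix this approximation recovers the generating weights exactly; for a real, slightly inconsistent judgment matrix it approximates the principal-eigenvector weights.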

2.2.3. Emotional State Transference Probability

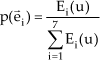

As mentioned in Section 2.2.1, the field strength influences the direction of emotional state transference in the active field space. The transference probability is proportional to the charge of the field source and inversely proportional to the distance from the stimulus emotion to the current emotional state. Based on formulas (1)-(3), the stimulus intensity matrix of the 7 basic emotions is E = [E1(u) E2(u) … E7(u)]. The transference probability from the current emotional state to the next along

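The proportionality stated above (probability proportional to the source charge and inversely proportional to distance) can be sketched by scoring each stimulus source as charge/distance and normalizing the scores to sum to one. This is a minimal sketch under that reading only, since the paper's exact expression is truncated; the positions and charges passed in are hypothetical coordinates in the state space, and `transfer_probabilities` is an illustrative name.

```python
import math
from typing import Dict, Sequence


def transfer_probabilities(current: Sequence[float],
                           sources: Dict[str, Sequence[float]],
                           charges: Dict[str, float]) -> Dict[str, float]:
    """Probability of transferring from the current state toward each source.

    Each score is charge / distance; scores are normalized to sum to 1.
    """
    scores = {}
    for name, pos in sources.items():
        if charges[name] <= 0:
            raise ValueError("charges must be positive")
        d = math.dist(current, pos)
        if d == 0.0:
            # The current state coincides with this source: stay there.
            return {n: float(n == name) for n in sources}
        scores[name] = charges[name] / d
    total = sum(scores.values())
    return {name: s / total for name, s in scores.items()}
```

A stronger stimulus (larger charge) and a nearer stimulus emotion both raise the corresponding probability, which is the qualitative behaviour the text describes.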

3. Emotional Expression Model based on HMM and Implementation

3.1. Emotional Expression Model based on HMM

Human emotion can be divided into two parts: primary emotional feeling and secondary emotional feeling. Primary emotional feeling is an unconditioned reflex to the external environment. Secondary emotional feeling arises when a relation is built between the primary emotion and the present perception [13, 14]. Therefore, the HMM stimulation-transference model shown in Figure 4 is proposed to realize emotional regulation and expression.

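The emotional state transference probability matrix is $A = [p(s_j \mid s_i)]$, where $p(s_j \mid s_i)$ is the probability that emotional state $s_i$ transfers to $s_j$.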

When the robot's emotional state is $s_k$, $b_{ij}$ is the transference probability from performance $v_i$ to $v_j$.

Performance transference is full state transference, so for each emotional state $s_k$ the transference probabilities from one performance state $v_i$ to all the states in $V$ sum to one: $\sum_{j} b_{ij} = 1$.
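The two layers described above (hidden emotional states moving according to A, observable performances moving according to a performance matrix chosen by the current emotional state s_k) can be simulated with a short sampler. This is a hedged sketch, not the authors' implementation: `simulate` is a hypothetical name, B is assumed to hold one performance matrix per emotional state, and the assumption that the emotion transfers first and the performance then transfers under the new emotion is ours.

```python
import random
from typing import List, Sequence, Tuple

Matrix = Sequence[Sequence[float]]


def _check_stochastic(m: Matrix, name: str) -> None:
    """Raise unless every row is non-negative and sums to 1."""
    for row in m:
        if any(p < 0 for p in row) or abs(sum(row) - 1.0) > 1e-9:
            raise ValueError(f"every row of {name} must be a probability vector")


def simulate(A: Matrix, B: Sequence[Matrix], s0: int, v0: int, steps: int,
             rng: random.Random) -> List[Tuple[int, int]]:
    """Sample a trajectory of (emotional state, performance) index pairs.

    A[i][j]:    p(next emotional state j | current emotional state i)
    B[k][i][j]: p(next performance j | current performance i, emotion k)
    """
    if len(B) != len(A):
        raise ValueError("need one performance matrix per emotional state")
    _check_stochastic(A, "A")
    for k, b_k in enumerate(B):
        _check_stochastic(b_k, f"B[{k}]")
    s, v = s0, v0
    path = [(s, v)]
    for _ in range(steps):
        # Hidden layer: the emotional state transfers first.
        s = rng.choices(range(len(A)), weights=A[s])[0]
        # Observable layer: the performance transfers under that emotion.
        v = rng.choices(range(len(B[s])), weights=B[s][v])[0]
        path.append((s, v))
    return path
```

With deterministic (0/1) matrices the trajectory is fully predictable, which makes the sampler easy to check; with genuine probabilities, the two nested random draws are what give the model its "double stochastic" character.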

According to Gross's response-focused emotion regulation, an expression suppression strategy is adopted in the emotional regulation process to control facial expression and behaviour. Let Ω be the theoretical degree of facial expression and behaviour and η (0 < η < 1) the expression suppression degree; the performed degree is then Ω' = (1 − η)Ω. The factor η thus adjusts how exaggerated the robot's performance is.
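The suppression rule Ω' = (1 − η)Ω is simple enough to state directly in code. The sketch below applies it to a single degree and, as an assumed use, to every joint amplitude of a pose; the joint names, and the assumption that amplitudes are measured from a neutral pose, are hypothetical and not taken from the robot described here.

```python
from typing import Dict


def suppress(omega: float, eta: float) -> float:
    """Performed degree Omega' = (1 - eta) * Omega, with 0 < eta < 1."""
    if not 0.0 < eta < 1.0:
        raise ValueError("eta must lie strictly between 0 and 1")
    return (1.0 - eta) * omega


def suppress_pose(pose: Dict[str, float], eta: float) -> Dict[str, float]:
    """Scale every joint amplitude (offset from a neutral pose) by 1 - eta."""
    return {joint: suppress(angle, eta) for joint, angle in pose.items()}
```

A larger η gives a more restrained performance; the open interval keeps the robot from either freezing completely (η = 1) or applying no regulation at all (η = 0).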

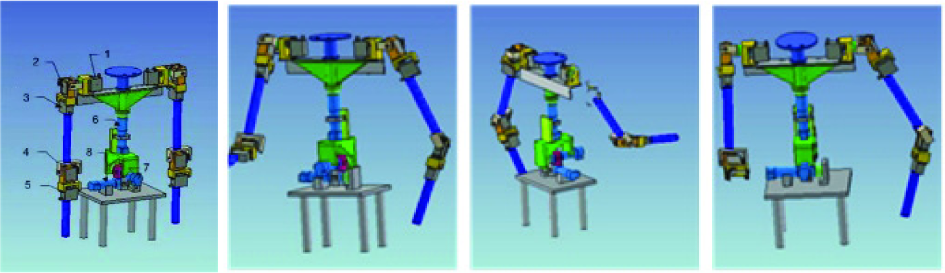

3.2. Implementation of Emotional Performance

The robot's mechanical structure is designed according to research on the proportions of the torso and limbs of Chinese adults [11]. As shown in Figure 5, the robot has 15 degrees of freedom and 100 kinds of facial expression, so it can control a rich set of behaviours and facial expressions in real time. The robot's upper-body joints are composed of 13 motors and many connecting pieces. This mechanical structure, shown in Figure 6, can fully simulate the basic movements of a human arm. The specific distribution of degrees of freedom is shown in Table 2.

Figure 4. HMM transference model.

Figure 5. Robot's structure and typical expressions.

Figure 6. Robot's upper-body mechanical structure.

Table 2. The specific distribution of degrees of freedom.

The current emotional state depends on external stimuli and previous emotions and contains a certain randomness. Once an emotion is produced, it triggers a series of behaviours. The robot determines its current behaviour via the following production rule:

Typical emotional performance in the experiment.

Figure 7. Real-time expression recognition results: fear, sadness, anger, disgust, surprise, calming, happiness.

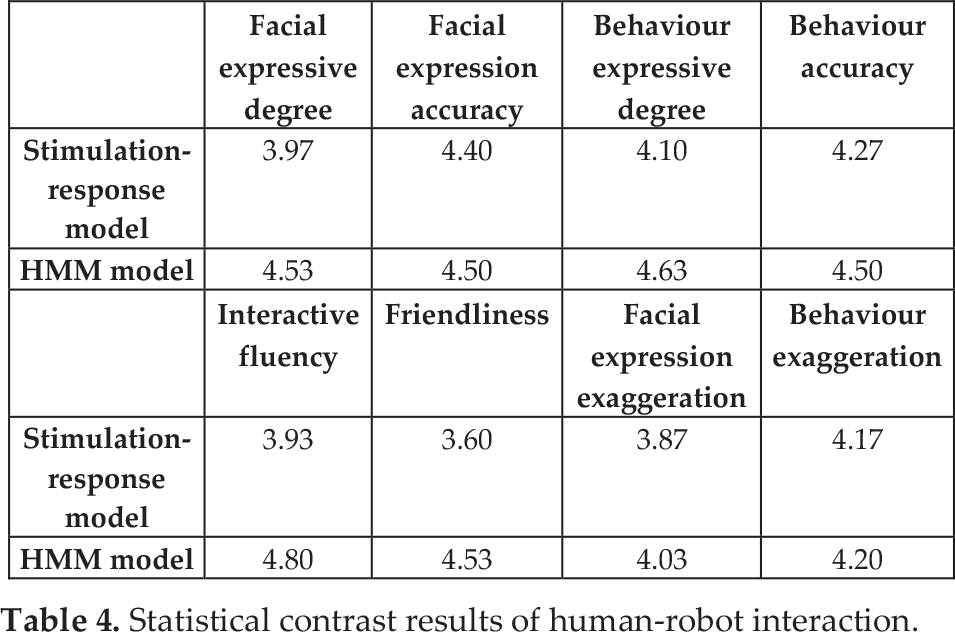

Table 4. Statistical contrast results of human-robot interaction.

4. Experiment

Emotion is a high-level intelligent behaviour that reflects human thinking and is represented as a hidden state in the HMM. Emotional performance is its external manifestation and is represented as an observable state in the model. The emotional performance model was applied to a real human-robot interaction process to evaluate its effect. First, facial expression features are extracted with Gabor wavelets, and a support vector machine (SVM) classifier is used to obtain the stimulus emotional state. The recognition results are shown in Figure 7. Thirty volunteers took part in this experiment, and the expression recognition accuracy is approximately 78.9%. Second, the emotional state model is built in the active field by calculating matrix A. Third, the performance transference matrix B and the expression suppression factor η are determined, and the HMM-based robot performance model is built. When the interactive object's facial expression is recognized and mapped into the emotion space, the robot generates a facial expression plan and a behaviour plan via the HMM. Finally, the robot executes the performance by controlling its hardware devices. A typical robot emotional expression in the human-robot interaction experiment is shown in Figure 8.
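The first step, Gabor wavelet feature extraction, can be sketched with NumPy alone. The paper does not give its filter-bank parameters, so the wavelengths, number of orientations, kernel size, the σ ≈ 0.56 × wavelength setting (roughly a one-octave bandwidth) and the pooled statistics (mean and standard deviation of each response magnitude) are all assumptions; face detection and the SVM stage are not shown, and the feature vectors could be passed to a library classifier such as scikit-learn's `SVC`.

```python
import numpy as np


def gabor_kernel(ksize: int, sigma: float, theta: float, wavelength: float,
                 gamma: float = 0.5) -> np.ndarray:
    """Complex Gabor kernel: rotated Gaussian envelope times complex sinusoid."""
    half = ksize // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    x_r = x * np.cos(theta) + y * np.sin(theta)
    y_r = -x * np.sin(theta) + y * np.cos(theta)
    envelope = np.exp(-(x_r ** 2 + (gamma * y_r) ** 2) / (2 * sigma ** 2))
    return envelope * np.exp(1j * 2 * np.pi * x_r / wavelength)


def convolve_fft(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """'Same'-size linear convolution using zero-padded 2-D FFTs."""
    h, w = image.shape
    kh, kw = kernel.shape
    shape = (h + kh - 1, w + kw - 1)
    full = np.fft.ifft2(np.fft.fft2(image, shape) * np.fft.fft2(kernel, shape))
    top, left = kh // 2, kw // 2
    return full[top:top + h, left:left + w]


def gabor_features(image, wavelengths=(4.0, 8.0), n_orientations=4, ksize=15):
    """Mean and std of Gabor magnitude responses per (scale, orientation)."""
    image = np.asarray(image, dtype=float)
    feats = []
    for lam in wavelengths:
        for k in range(n_orientations):
            theta = k * np.pi / n_orientations
            kern = gabor_kernel(ksize, sigma=0.56 * lam, theta=theta,
                                wavelength=lam)
            mag = np.abs(convolve_fft(image, kern))
            feats.extend([mag.mean(), mag.std()])
    return np.array(feats)
```

Each face image yields a fixed-length vector (here 2 scales × 4 orientations × 2 statistics = 16 values), which is the form an SVM classifier expects.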

The robot's facial expressions and behaviours are the observable states of the emotional regulation process described in Section 2. If the robot's expression matches the interactive context and is manifested flexibly, the emotional expression model is believable. Thirty volunteers evaluated the interaction under the stimulation-response model and under the HMM model using a Likert scale. The scale includes positive items, such as interactive fluency, facial expressiveness, facial expression accuracy, richness of behaviours/expressions, behaviour accuracy and friendliness. It also includes negative items, such as the degree of exaggeration of facial expressions and the degree of exaggeration of behaviours. Positive items are divided into five levels from very satisfied to dissatisfied, scored 5, 4, 3, 2 and 1 respectively. Negative items are divided into five levels from strongly agree to disagree, scored 1, 2, 3, 4 and 5 respectively. The statistical contrast results of the human-robot interaction are shown in Table 4.
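The scoring scheme above reverses the marks for negative items so that a higher score is always better. A minimal sketch of that scoring and of per-item averaging follows; levels are numbered 1 to 5 starting from the first anchor ("very satisfied" or "strongly agree"), the item names are hypothetical, and no data from Table 4 are reproduced.

```python
from statistics import mean
from typing import Dict, List, Set


def item_score(level: int, positive: bool) -> int:
    """Score one answer; level 1 is 'very satisfied' or 'strongly agree'.

    Positive items score 5..1 for levels 1..5; negative items score 1..5.
    """
    if level not in range(1, 6):
        raise ValueError("level must be between 1 and 5")
    return 6 - level if positive else level


def mean_scores(responses: List[Dict[str, int]],
                positive_items: Set[str]) -> Dict[str, float]:
    """Average score per item over all volunteers' responses."""
    items = responses[0].keys()
    return {item: mean(item_score(r[item], item in positive_items)
                       for r in responses)
            for item in items}
```

Running `mean_scores` separately on the responses for the stimulation-response model and for the HMM model gives the per-item means that a comparison table such as Table 4 contrasts.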

6 typical emotional performances.

The evaluation results show that, compared with the stimulation-response model, the robot's performance under the HMM model is more expressive and friendly, while accuracy and the degree of exaggeration do not increase noticeably. Introducing the emotion and performance probability spaces makes the robot's mode of expression more interesting. Under this model, the robot can regulate its own emotion and performance autonomously and dynamically in real time, the mechanical interaction mode is improved to a certain extent, and the interaction quality improves significantly.

5. Conclusion

Based on the OCC cognitive-affective model and psychological energy theory, the emotional state transference process is described in the active field state space. The HMM emotional expression model, which combines the interactive object's emotional influence on the robot, emotional attenuation and expression suppression, is applied to dynamic human-robot interaction. Because the model contains two stochastic processes, the robot's performance is expressive and the interaction is more natural. The model was implemented on a real robot platform, and psychological surveys of 30 volunteers were analysed. The survey results show that the robot communicates with the interactive object through facial expressions and behaviour, moving away from the mechanical interaction mode and improving the effect of human-robot interaction.

Emotional regulation is affected by numerous factors, including internal psychological elements and the external surroundings. The model and implementation in this paper consider only a few of the most obvious ones. Extending this research to an infinite-dimensional continuous space is an important direction for future work.


6. Acknowledgments

This work is supported by National Natural Science Foundation of China (No. 61170115; No. 61170117; No. 61105120), and the 2012 Ladder Plan Project of Beijing Key Laboratory of Knowledge Engineering for Materials Science (No. Z121101002812005).