Abstract

We developed a method for pattern recognition of babies' emotions (discomfortable, hungry, or sleepy) expressed in their cries. A 32-dimensional fast Fourier transform (FFT) is performed on sound form clips that are detected by our previously reported method and used as training data. The power of the sound form judged as a silent region is subtracted from the power of each frequency element, and the power of each frequency element after the subtraction is treated as one element of the feature vector. We perform principal component analysis (PCA) on the feature vectors of the training data. The feature vectors obtained from the sound form clips of the test data are projected onto the PCA space built from the training data, and the baby's emotion is recognized by the nearest neighbor criterion. The emotion that appears most frequently among the recognition results is then judged as the emotion expressed by the baby's cry. We successfully applied the proposed method to pattern recognition of babies' emotions. The present investigation concerns the first stage of the development of a robotic baby caregiver that can detect a baby's emotions; in this first stage, we have developed a method for detecting those emotions. We expect that the proposed method could be used in robots that help take care of babies.

1. Introduction

In Japan, the number of births has been declining, which is considered a serious problem. One reason for the decline may be the lack of childcare support as a social system. In many cases, the mother works outside the home and needs childcare for her baby.

The number of family members living together in a household has also been declining. As a result, a young mother often takes care of her baby alone, and she may not have had the opportunity to learn appropriate childcare practices.

As is well known, it can be extremely dangerous to leave a baby alone. Nevertheless, a mother typically has a lot of housework to do and may also want time for other activities. In these cases, raising a child safely becomes more difficult. Unfortunately, abuse and neglect of babies have been increasing because of the stress that mothers experience in caring for their babies. Therefore, we think that reducing mothers' stress may help slow the decline in the number of births.

Previous studies of babies' cries have primarily used frequency analysis [1]–[9]. However, in these studies the sound form of a baby's cry has been clipped from the wave data manually. Because manual clipping is time-consuming, automatic detection of a baby's cry and automatic recognition of a baby's emotion have remained unresolved.

We have been researching a system for improving baby care by recognizing a baby's emotion from the baby's cry. As stated above, the sound form of a baby's cry has typically been clipped from wave data manually [9]. We previously proposed a method for detecting a baby's cry by using a speech recognition system [10] and fundamental frequency analysis [11]. In this paper, we propose a method for pattern recognition of babies' emotions (“discomfortable”, “hungry”, or “sleepy”) using the sound form clips detected by our previously reported method [11].

To the best of our knowledge, there are no published papers on robotic baby caregivers. The present investigation concerns the first stage of the development of a robotic baby caregiver that can detect a baby's emotions. In this first stage, we have developed a method for detecting those emotions. We expect that the proposed method could be used in robots that help take care of babies.

2. Method for Detection of a Baby's Cry

Fig. 1 shows the flow chart of our previously reported method [11] for detecting a baby's cry. The procedure is explained below.

Fig. 1. Flow chart for detecting the sound form of a baby's cry [11].

2.1 Word Recognition

We use the speech recognition system Julius [10] for word recognition [11]. When Julius recognizes a sound form segment as a certain word, the segment is passed to the next processing step. When Julius recognizes a segment as silent, on the other hand, the segment is discarded [11].

2.2 Threshold Treatment of the Sound Form

A sound form segment that Julius recognizes as not silent may still consist mostly of noise. We therefore neglect any segment whose amplitude is below a threshold determined experimentally beforehand [11]. The threshold was set to m + 2σ, where m and σ are, respectively, the mean and standard deviation of the sound amplitude when neither voice nor unusual sound is present in the recorded sound form [11].
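The amplitude threshold can be sketched in Python as follows. This is an illustrative sketch, not the authors' implementation; it assumes that "amplitude" means the absolute sample value, that a background recording containing neither voices nor unusual sounds is available as an array, and that a segment is compared with the threshold by its peak absolute amplitude. The function names `noise_threshold` and `keep_segment` are hypothetical.

```python
import numpy as np

def noise_threshold(background: np.ndarray) -> float:
    """Return m + 2*sigma of the absolute amplitude of a background recording.

    `background` is assumed to contain neither voices nor unusual sounds.
    Treating |sample value| as the "amplitude" is an assumption.
    """
    amp = np.abs(np.asarray(background, dtype=float))
    return float(amp.mean() + 2.0 * amp.std())  # population std (ddof=0)

def keep_segment(segment: np.ndarray, threshold: float) -> bool:
    """Keep a non-silent segment only if its peak absolute amplitude reaches
    the threshold (using the peak is an assumption; the text does not say)."""
    return float(np.max(np.abs(np.asarray(segment, dtype=float)))) >= threshold
```

Using the peak rather than, say, the mean amplitude makes a short but loud cry pass the threshold; either choice is compatible with the description above.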

2.3 Detection of a Baby's Cry

We proposed the following two conditions for recognizing a sound form segment as coming from a baby's cry [11]:

Condition 1: The word reliability that Julius outputs for the sound form segment is below a specified threshold.

Condition 2: Within a certain time period, the fundamental frequency of the sound form segment changes by more than another specified threshold.

The two thresholds and the time period are determined experimentally. When at least one of the two conditions is met, the sound form segment is judged as coming from a baby's cry. No special template is needed to detect a baby's cry.
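The decision rule is a logical OR of the two conditions. A minimal sketch, assuming the word reliability and the largest fundamental frequency change within the checking period have already been computed for the segment; the reliability threshold is a hypothetical placeholder because its value is not reported, while the 150 Hz default is the value given in Section 4.1.2. The names `CryThresholds` and `is_baby_cry` are illustrative.

```python
from dataclasses import dataclass

@dataclass
class CryThresholds:
    reliability: float           # Condition 1 threshold (value not reported; hypothetical)
    f0_change_hz: float = 150.0  # Condition 2 threshold reported in Section 4.1.2

def is_baby_cry(word_reliability: float, max_f0_change_hz: float,
                th: CryThresholds) -> bool:
    """Judge a non-silent segment as a baby's cry if either condition holds."""
    cond1 = word_reliability < th.reliability        # Julius is unsure of the word
    cond2 = max_f0_change_hz > th.f0_change_hz       # sudden F0 change in the period
    return cond1 or cond2
```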

2.3.1 Judgment by Word Reliability

Julius outputs a word reliability between 0 and 1 for each recognized word. The word reliability is high when the likelihood of the recognized word greatly exceeds those of the other candidate words. Accordingly, a small word reliability indicates that the speech recognition result may be doubtful. For a baby's cry, which does not correspond to any word in the recognizer's vocabulary, the word reliability given by Julius is assumed to be small.

We determined a threshold for distinguishing the word reliability of a baby's cry from that of an adult's voice [11].

2.3.2 Fundamental Frequency Analysis for a Short Time Period

In this study, we found that the fundamental frequency of a baby's cry changes suddenly, for example, in the time ranges of 1.5 to 1.8 s and 6.6 to 6.8 s, as shown in Fig. 2 [11].

Fig. 2. Large change in the fundamental frequency of a baby's cry over a short period [11].

Therefore, we determined a time period and a threshold on the change in the fundamental frequency for distinguishing a baby's cry from other sounds [11].
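The short-period check can be sketched as below. We assume the fundamental frequency (F0) contour is already available as one value per analysis frame (NaN for unvoiced frames) at a fixed frame shift, and we take "change" to mean the difference between the largest and smallest F0 within any window of the checking period. Both are assumptions, since the text specifies neither the F0 estimator nor the exact measure; the 0.1 s default is the value reported in Section 4.1.2, and `max_f0_change` is a hypothetical name.

```python
import numpy as np

def max_f0_change(f0_hz, frame_shift_s, window_s=0.1):
    """Largest F0 change (max - min) within any window of `window_s` seconds.

    f0_hz: F0 contour, one value per frame, NaN for unvoiced frames (assumption).
    frame_shift_s: time between consecutive frames (assumption).
    """
    f0 = np.asarray(f0_hz, dtype=float)
    # Number of frames whose first and last time stamps are window_s apart.
    n = max(1, int(round(window_s / frame_shift_s)) + 1)
    best = 0.0
    for start in range(max(1, len(f0) - n + 1)):
        win = f0[start:start + n]
        win = win[~np.isnan(win)]          # ignore unvoiced frames
        if win.size >= 2:
            best = max(best, float(win.max() - win.min()))
    return best
```

A segment would then satisfy Condition 2 when this value exceeds the F0 threshold.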

3. Pattern Recognition of a Baby's Cry

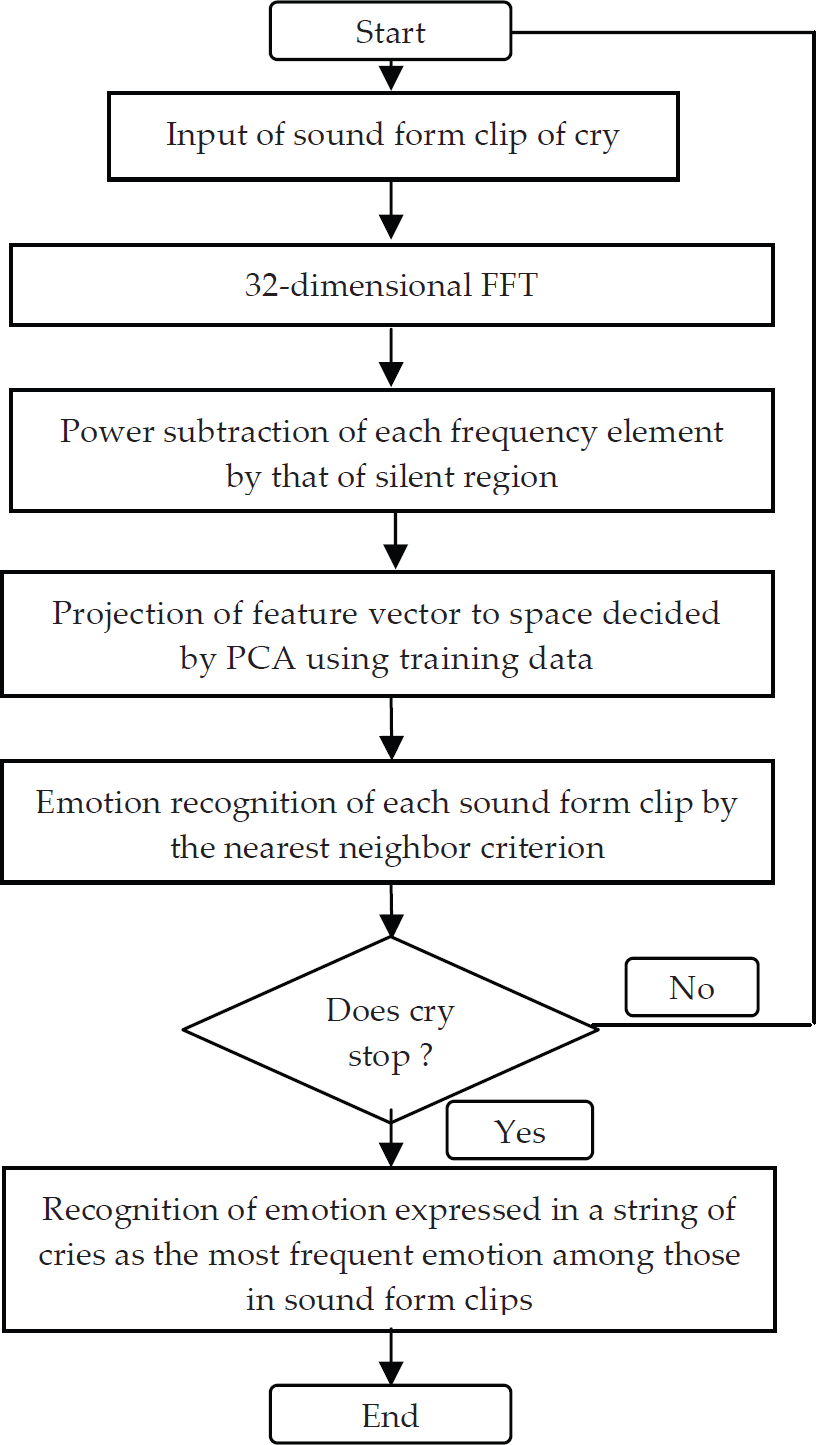

Fig. 3 shows a flow chart for recognizing a baby's emotion from the baby's cry. A 32-dimensional fast Fourier transform (FFT) is performed on the sound form clips used as training data. Then, the power of the sound form judged as a silent region is subtracted from the power of each frequency element, and the power of each frequency element after the subtraction is treated as one element of the feature vector. We perform principal component analysis (PCA) on the feature vectors of the training data, retaining the smallest number of principal components at which the cumulative contribution ratio exceeds 80%.

The feature vectors for the sound form clips of the test data are calculated in the same way as those for the training data and projected onto the PCA space built from the training data. The baby's emotion is then recognized by the nearest neighbor criterion, using the Euclidean distance between feature vectors.

Finally, the emotion that appears most frequently among the recognition results for the sound form clips is judged as the emotion expressed by the baby's cry. When two or more of the three emotions tie for the highest frequency, the second nearest neighbor feature vector of each sound form clip in the PCA space is counted in the same manner as the nearest one to judge the emotion expressed by the baby's cry.

Fig. 3. Flow chart for recognizing a baby's emotion from his or her cry.
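The feature extraction, PCA, and nearest neighbor steps can be sketched with NumPy as follows. This is a hypothetical sketch under stated assumptions, not the authors' code: the "32-dimensional FFT" is read as a 32-point FFT applied to each frame, giving 32 power values; the silent-region power is given as a length-32 array and subtracted element by element; and PCA is computed by singular value decomposition of the centered training features. All function names are illustrative.

```python
import numpy as np

N_FFT = 32  # "32-dimensional FFT": read here as a 32-point FFT per frame (assumption)

def feature_vector(frame, silent_power):
    """Power of each of the 32 frequency elements minus the silent-region power.

    `silent_power` is assumed to be a length-32 array with the power of each
    frequency element in a region judged as silent. Negative values are kept.
    """
    power = np.abs(np.fft.fft(np.asarray(frame, dtype=float), n=N_FFT)) ** 2
    return power - np.asarray(silent_power, dtype=float)

def fit_pca(X, min_ratio=0.8):
    """PCA on training features, keeping the fewest components whose
    cumulative contribution ratio exceeds `min_ratio`."""
    mean = X.mean(axis=0)
    _, s, vt = np.linalg.svd(X - mean, full_matrices=False)
    cum = np.cumsum(s ** 2) / np.sum(s ** 2)      # cumulative contribution ratio
    k = int(np.searchsorted(cum, min_ratio, side="right")) + 1
    return mean, vt[:k]

def project(X, mean, components):
    """Project feature vectors onto the PCA space built from the training data."""
    return (np.asarray(X, dtype=float) - mean) @ components.T

def nearest_labels(train_z, train_y, test_z, n=2):
    """Labels of the n nearest training vectors (Euclidean distance) for each
    test vector; column 0 is the nearest, column 1 the second nearest."""
    d = np.linalg.norm(test_z[:, None, :] - train_z[None, :, :], axis=2)
    order = np.argsort(d, axis=1, kind="stable")[:, :n]
    return np.asarray(train_y)[order]
```

`nearest_labels` returns the second nearest label as well, because the tie-breaking rule described above needs it.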

Table 1 shows an example of how the emotion expressed by a baby's cry is judged. In this case, “sleepy” is judged as the emotion expressed by the baby's cry because it is the most frequent of the three emotions.

Table 1. Example of emotion recognition using a continuous cry form.
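The voting step, including the tie-break by second nearest neighbors, can be sketched as follows. The inputs are the per-clip nearest neighbor labels and the per-clip second nearest neighbor labels. Two details are our assumptions because the text leaves them open: after the second nearest labels are added, all three emotions compete again (not only the tied ones), and a tie that persists is reported as `None`. The function name `judge_emotion` is hypothetical.

```python
from collections import Counter

EMOTIONS = ("discomfortable", "hungry", "sleepy")

def judge_emotion(nearest, second_nearest):
    """Majority vote over per-clip results; on a tie, the second nearest labels
    are counted in the same way as the nearest ones (assumed tie-break)."""
    votes = Counter(nearest)
    top = max(votes.values())
    leaders = [e for e in EMOTIONS if votes[e] == top]
    if len(leaders) > 1:
        votes.update(second_nearest)       # add second nearest neighbor votes
        top = max(votes.values())
        leaders = [e for e in EMOTIONS if votes[e] == top]
    # None means the tie persists; the text does not say how to resolve it.
    return leaders[0] if len(leaders) == 1 else None
```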

4. Performance Evaluation

4.1 Experimental Conditions

4.1.1 Voice Recording

We used a wireless or wired microphone and a notebook computer to record sound. The sound forms were saved as WAVE files with the following specifications: PCM format, 16 kHz, 16 bits, monaural.
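For readers reproducing the experiments, a WAVE file in this format can be loaded and checked with Python's standard `wave` module, as sketched below. This is only an illustration (the original system was written in Visual C++ 6.0); scaling the samples to [−1, 1) and the function name `read_cry_wav` are our choices.

```python
import wave
import numpy as np

def read_cry_wav(source):
    """Read a 16 kHz, 16-bit, monaural PCM WAVE file and return float samples
    in [-1, 1). `source` is a file name or a binary file-like object.
    Raises ValueError for any other sampling rate, sample width, or channel count."""
    with wave.open(source, "rb") as w:
        if (w.getframerate(), w.getsampwidth(), w.getnchannels()) != (16000, 2, 1):
            raise ValueError("expected 16 kHz, 16-bit, monaural PCM")
        raw = w.readframes(w.getnframes())
    # 16-bit little-endian signed integers, scaled by 2**15.
    return np.frombuffer(raw, dtype="<i2").astype(float) / 32768.0
```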

We used three kinds of sound forms for a total of four experiments:

(1) Cries of a male baby aged one and a half months, recorded in his home: 57 continuous sound forms, each containing 10 to 12 cries.

(2)-1 A mixture (hereinafter, mixture 1) of baby and adult voices and car and toy noises, recorded in a day nursery: three continuous sound forms, each containing 8 to 21 cry regions.

(2)-2 A mixture (hereinafter, mixture 2) of baby and adult voices, also recorded in the day nursery: five continuous sound forms, each containing 8 to 21 cry regions.

(3) Voices of five male and two female adults, recorded in our laboratory at Kyoto Prefectural University: seven continuous sound forms, each containing 14 to 22 pronunciations of “taro”.

The sound data described above as (3) were the same neutral parts used in our previously reported study [12]. The experiment was performed in the following computational environment: the personal computer was a DELL OPTIPLEX GX260 (CPU: Pentium IV 2.4 GHz; main memory: 512 MB); the OS was Microsoft Windows XP; the development language was Microsoft Visual C++ 6.0.

4.1.2 Method for Evaluation

First, we determined the threshold for the amplitude of the sound form and the threshold for the word reliability using the first half of the sound data of (1) the baby's cries and (3) the adult voices described in Section 4.1.1. Fig. 4 shows the distributions of the word reliability of a baby's cry and an adult's voice. Then, using the sound data of (1), we experimentally set the condition for a sudden change in the fundamental frequency: a checking period of 0.1 s and a threshold of 150 Hz.

Fig. 4. Word reliability distributions of a baby's cry and an adult's voice [11].

To judge whether each sound form segment actually came from the baby's cry, we checked all segments that Julius recognized as not silent in two ways: the waveforms were inspected by eye, and the actual sounds were checked by ear. We then compared the segments judged by these checks as coming from the baby's cry with the segments detected by our previously reported method [11]. For this evaluation, we used two criteria, the detection rate and the mis-detection rate, defined below.

The detection rate (%) is defined as (N(A∩B)/N(A)) × 100, where N(X) is the number of elements of set X, A is the set of sound form segments of the baby's cry, and B is the set of sound form segments detected by the proposed method. The mis-detection rate (%) is defined as {(N(B) − N(A∩B)) / (N(J) − N(A))} × 100, where J is the set of all sound form segments judged as not silent by Julius. Fig. 5 shows the relationship among the sets A, B, and J. In this study, the accuracy of distinguishing a baby's cry from other sounds is measured by the detection rate defined above.

Fig. 5. Schematic diagram of the sets used to define the detection and mis-detection rates [11].
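Both rates follow directly from set operations on segment identifiers. A minimal sketch, assuming each sound form segment is identified by a hashable ID and that A and B are subsets of J; NaN is returned when a denominator is zero (for example, the detection rate for the adult recordings, which contain no cries). The function name `detection_rates` is hypothetical.

```python
def detection_rates(A, B, J):
    """Detection and mis-detection rates (%) as defined in the text.

    A: IDs of segments that are truly baby cries (judged by eye and ear)
    B: IDs of segments detected as cries by the method
    J: IDs of all segments judged as not silent by Julius (A, B subsets of J)
    """
    A, B, J = set(A), set(B), set(J)
    hit = len(A & B)                      # N(A ∩ B)
    n_other = len(J) - len(A)             # N(J) - N(A): non-cry segments
    detection = 100.0 * hit / len(A) if A else float("nan")
    mis = 100.0 * (len(B) - hit) / n_other if n_other > 0 else float("nan")
    return detection, mis
```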

Next, we used the sound form clips of cries detected by our previously reported method [11] for emotion recognition on (1), the cries of the male baby aged one and a half months. The assumed emotion patterns were “discomfortable”, “hungry”, and “sleepy”. The emotion expressed by each cry was identified by the baby's caregiver during recording. The first half of the recorded sound forms was used as training data, and the second half was used as test data for evaluating the proposed method.

4.2 Experimental Results and Discussion

4.2.1 Baby's Cry Detection

Table 2 shows the results of baby's cry detection [11]. The detection rates fall in the relatively narrow range of 65.0% to 69.4%, whereas the mis-detection rates spread over the range of 2.0% to 34.1%. The mis-detection rate for mixture 1 is high because mixture 1 contains much noise, such as that of an automobile and of a toy used to calm the baby. Noise lowers the word reliability output by Julius, resulting in a high mis-detection rate. Moreover, in both mixture 1 and mixture 2, the adults' voices calming the baby also lower the word reliability, again increasing the mis-detection rate. As shown in Fig. 4, the word reliability for an adult's pronunciation of “taro” is sometimes low. Therefore, because word reliability is one of the criteria for judging a baby's cry, adult voices may lead to mis-detection.

Table 2. Results of baby's cry detection [11].

The main goal of this study is to apply the proposed method to improve baby care in the baby's home. Therefore, the most important results in Table 2 are those in the “Baby” column: a detection rate of 69.4% and a mis-detection rate of 2.0%. Accordingly, our previously reported method [11] can be useful as a preprocessing module for the proposed method.

Table 3 shows the accuracy (%) of recognizing a baby's cry and other sounds, obtained from the detection and mis-detection rates shown in Table 2. The average accuracies of recognizing a baby's cry and other sounds are 83.7%, 80.2%, 65.5%, and 81.8% for the baby, adult, mixture 1, and mixture 2 data, respectively. The adult data contain no baby's cries, so their average accuracy corresponds to the accuracy of recognizing other sounds alone. As noted above, the most important result in Table 3 is that for the baby data, 83.7%.

Table 3. Accuracy (%) of recognition of a baby's cry and other sounds.
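The values in Table 3 appear consistent with averaging the accuracy on cries (the detection rate) and the accuracy on other sounds (100% minus the mis-detection rate). For the baby data, (69.4 + (100 − 2.0)) / 2 = 83.7, which matches the reported value. This formula is our inference from the numbers, not a definition stated in the text; a sketch with the hypothetical name `average_accuracy`:

```python
def average_accuracy(detection_pct, misdetection_pct):
    """Mean of the accuracy on cries and the accuracy on other sounds (%).

    Inferred from the reported numbers (an assumption). When the data contain
    no cries (e.g. the adult recordings), pass None for detection_pct.
    """
    other = 100.0 - misdetection_pct      # accuracy on non-cry sounds
    if detection_pct is None:
        return other
    return (detection_pct + other) / 2.0
```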

4.2.2 Application to Emotion Recognition

Table 4 shows examples of how the emotion expressed by the male baby's cry was judged. “Hungry” was recognized more easily by the proposed method than “discomfortable” and “sleepy”.

Table 4. Example of emotion recognition using the continuous cry forms of a male baby.

D = Discomfortable, H = Hungry, S = Sleepy

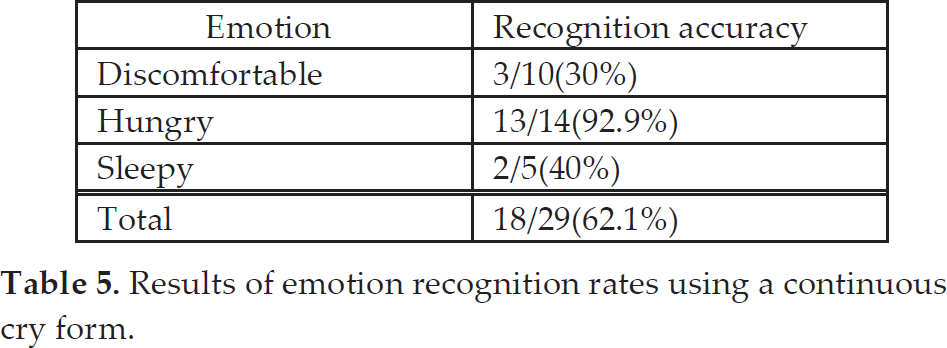

Table 5 shows the emotion recognition results. The average accuracy of emotion recognition is 62.1%. This result suggests that the proposed method is applicable to emotion recognition.

Table 5. Emotion recognition rates using a continuous cry form.
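How the per-emotion rates are combined into the 62.1% average is not stated. The sketch below assumes the average is the unweighted mean of the per-emotion recognition rates over the test cries; if the average were instead taken over all test cries, the result could differ when the emotions have different numbers of test samples. The name `recognition_rates` is hypothetical.

```python
from collections import defaultdict

def recognition_rates(true_labels, predicted):
    """Per-emotion recognition rate (%) and their unweighted mean (assumption)."""
    total, correct = defaultdict(int), defaultdict(int)
    for t, p in zip(true_labels, predicted):
        total[t] += 1
        correct[t] += (t == p)            # True counts as 1
    per_class = {e: 100.0 * correct[e] / total[e] for e in total}
    return per_class, sum(per_class.values()) / len(per_class)
```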

The accuracy for “discomfortable” is lower than those for the other emotions. This is because cries expressing “discomfortable” vary significantly, which makes pattern recognition with the nearest neighbor criterion in the PCA space difficult. The accuracy for “discomfortable” might be improved by also considering the second nearest neighbor in the PCA space.

One reason for the low recognition rate for “sleepy” is that sound form clips expressing “sleepy” may not be detected by our previously reported method [11], which uses an amplitude threshold to neglect noise. Because sound form clips expressing “sleepy” tend to have small amplitudes, they are sometimes neglected.

We think that a caregiver who does other activities away from the baby and waits for a notification of the baby's cry and of the emotion recognized by the proposed method would experience less stress than one who does activities while constantly paying attention to the sound sent from a wireless microphone beside the baby. The system could also send an image of the baby to the caregiver only when the baby expresses an emotion. Consequently, the system would allow the caregiver to stay away from the baby when the baby does not need immediate care. We expect that the system can be implemented in robots that help take care of babies.

Since, to the best of our knowledge, there are no published papers on robotic baby caregivers, developing one may be among the most challenging targets in robotics research. The present investigation concerns the first stage of the development of a robotic baby caregiver that can detect a baby's emotions; in this first stage, we have developed a method for detecting those emotions. The second stage is to develop an automatic, real-time system using the proposed method. The third stage is to develop a robotic baby caregiver that combines the functions developed in the first and second stages with functions for reacting appropriately to the detected emotions when taking care of the baby.

5. Conclusion

The present investigation concerns the first stage of the development of a robotic baby caregiver that can detect a baby's emotions. In this first stage, we developed a method for pattern recognition of babies' emotions expressed in their cries. The main goal of this study is to apply the proposed method to improve baby care in the baby's home. We successfully applied the proposed method to pattern recognition of babies' emotions: the average accuracy of emotion recognition was 62.1% when the emotion was assumed to be one of “discomfortable”, “hungry”, or “sleepy”. In future work, we will apply the proposed method to more babies, because the emotions expressed in babies' cries may vary from baby to baby. We will also investigate the influence of noise on the accuracy of the proposed method. We expect that the proposed method could be used in robots that help take care of babies.


6. Acknowledgments

We would like to thank all the participants who cooperated with us in the experiments.