Abstract

The adaptive extended set-membership filter (AESMF) for nonlinear ellipsoidal estimation suffers from a mismatch between the real process noise and its set boundaries, which may result in unstable estimation. In this paper, an MIT-rule-based adaptive set-membership filter for optimizing the set boundaries of the process noise is developed and applied to the nonlinear joint estimation of both time-varying states and parameters. With the proposed MIT-AESMF, the estimation effectiveness and boundary accuracy of the traditional AESMF are substantially improved. Simulation results show the efficiency and robustness of the proposed method.

1. Introduction

Recently, improvements in sequential estimation methods have made online real-time modelling, and control methods based on real-time models, feasible [1]. Among statistical system estimation methods, the Kalman filter is the best known [2, 3, 4]. This filter, also known as a state estimator, uses statistical knowledge of both the measurement noise and the process noise (for instance, white noise) and achieves optimal estimation by minimizing a cost function of the estimation residuals. Its algorithm is also expressed as a prediction step and an update step, which is convenient for online applications. Hence, the method is widely used, and it has been extended to nonlinear system estimation, broadening its field of application, for example as the extended Kalman filter (EKF) [5], the unscented Kalman filter (UKF) [6] and the derivative-free nonlinear Kalman filter [7].

In general, however, these Kalman filters require statistical knowledge of the measurement noise and process noise, or else assume that those noises follow known distributions, in order to solve the optimization problem. In reality, the noise characteristics of the system and its environment are typically complicated, time-varying, and difficult to measure and evaluate accurately. In robot systems in particular, mechanical manufacturing accuracy, sensor noise and air-flow disturbances result in noises with uncertain statistics. A mismatch in the noise's statistical characteristics causes filter bias, and because of the Kalman filter's sensitivity to noise, this bias is amplified and causes estimator instability. Therefore, many adaptive schemes, such as the adaptive unscented Kalman filter (AUKF) [8], have been introduced into Kalman estimators to estimate the distribution of the system's process noise online and to compensate for filtering faults. However, the Kalman estimator's dependence on statistics and its sensitivity to noise still limit its applications.

In most real systems, although the noise's statistical characteristics are unknown, the noise can be assumed to be unknown-but-bounded (UBB). Because of the above defects of Kalman filtering, researchers have proposed the set-membership filter (SMF). Based on the UBB assumption, this filter gives a feasible state boundary by calculating the available set members, so the estimation result is a feasible state set rather than a single value. This set describes the possible ranges of the estimated parameters and ensures that the real value falls within it. At the end of the 1960s, ellipsoidal set-membership filtering was first proposed by Schweppe [11] and Bertsekas [10], who suggested determining the real system state by applying an ellipsoidal geometric boundary. However, the optimization of the ellipsoid was not considered. Later, Fogel and Huang [13] developed a nonlinear system optimal bounded ellipsoid (OBE) algorithm and applied it to system identification. Maksarov [14], Kurzhanski [15] and Chernousko [16] improved and standardized the ellipsoidal state and parameter estimation algorithm. Polyak [17] derived an ellipsoid algorithm for linear systems with model uncertainty, extending it to the present field of application.

Scholte and Campbell [18] extended this ellipsoid algorithm to nonlinear systems and proposed the extended set-membership filter (ESMF). It follows the same mechanism as extended Kalman filtering, linearizing the nonlinear system. The difference is that ESMF does not simply discard the higher-order terms of the Taylor expansion: it bounds the linearization error and treats it as additional process noise in order to accomplish nonlinear system estimation. However, because of ESMF's numerical instability, poor real-time performance and difficult tuning of the filter parameters, Zhou proposed a UD factorization-based adaptive extended set-membership filter (AESMF) [19] to overcome these flaws. This method represents and updates the envelope matrices using UD factorization and adopts a measurement-updating strategy that improves the algorithm's stability and reduces its complexity. Furthermore, its adaptive filter-parameter tuning simplifies the search for the optimal parameters [20]. This method has been successfully applied to online modelling of robot systems.

However, the AESMF method still requires a priori boundary information about the system noise. Specifically, the algorithm needs to know the uncertainty boundaries of the process noise and the measurement noise. Measurement noise normally depends on sensor accuracy; if the sensors work under normal conditions, its uncertainty boundary does not drift, so it can be determined in advance by analyzing measurement data. Process noise, on the other hand, comes from the mismatch between the system's state-space model and its real dynamics, such as parameter mismatch. For most under-actuated and strongly coupled nonlinear systems, such as robotic systems [22, 23, 24], different operating modes result in different dynamic models and even parameter transitions, which means that the system cannot be modelled accurately. Since the uncertainty boundaries are not known a priori, the boundary parameters must be tuned manually. Because the prior knowledge is limited, a manually set process noise boundary may fail to contain, or may greatly exceed, the real process noise boundary. The uncertainty boundary estimated by AESMF then deviates too far from the true one, which can cause the filter to diverge during estimation.

In this paper, an MIT-based AESMF (MIT-AESMF) is proposed to address the process noise boundary issue. The method applies the MIT optimization rule online to obtain the minimal ellipsoidal boundary of the prediction error while ensuring that the filter's health index satisfies the working condition. Finally, an example of state and dynamic parameter estimation for a ground mobile robot demonstrates the efficiency of the proposed method.

2. Traditional Adaptive Extended Set-Membership Filter (AESMF)

The main concept of AESMF is to cast the nonlinear dynamics in a way that allows the algorithm to take advantage of the linear SMF framework. Specifically, the nonlinear dynamics are linearized about the current estimation; the remaining terms are then proven bounded using interval analysis and are considered together with the process or measurement noises. Then, a linear SMF algorithm is used and an ellipsoid estimation set is achieved. The true value is guaranteed to lie in the ellipsoid.

The nonlinear system considered here has the following form:

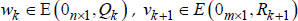

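where $x_k \in \mathbb{R}^n$ and $y_{k+1} \in \mathbb{R}^m$ are the state and measurement vectors, respectively, $f(\cdot)$ and $h(\cdot)$ are nonlinear $C^2$ functions, and $w_k \in \mathbb{R}^n$ and $v_k \in \mathbb{R}^m$ are the process and measurement noise vectors, respectively, which satisfy the conditions:

$$w_k \in E(0, Q_k), \qquad v_{k+1} \in E(0, R_{k+1}),$$

where $E(a, P)$ stands for the ellipsoid set:

$$E(a, P) = \{x : (x - a)^T P^{-1} (x - a) \le 1\},$$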

where a is the centre, x is any point within the ellipsoid, and P is a positive-definite matrix.
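A minimal membership test makes the ellipsoid definition concrete. It assumes the standard set-membership definition $E(a, P) = \{x : (x - a)^T P^{-1} (x - a) \le 1\}$; the function name `in_ellipsoid` is ours, not from the paper.

```python
import numpy as np


def in_ellipsoid(x, a, P):
    """True if x lies in E(a, P), i.e. (x - a)^T P^{-1} (x - a) <= 1."""
    d = np.asarray(x, dtype=float) - np.asarray(a, dtype=float)
    # Solving P z = d avoids forming P^{-1} explicitly.
    return float(d @ np.linalg.solve(P, d)) <= 1.0


# Usage: checking the noise assumption w_k in E(0, Q_k) for a diagonal Q_k.
Q = np.diag([4.0, 1.0])
print(in_ellipsoid([2.0, 0.0], np.zeros(2), Q))   # True (on the boundary)
print(in_ellipsoid([0.0, 1.5], np.zeros(2), Q))   # False
```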

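The initial estimation ellipsoid is defined as:

$$x_0 \in E(\hat{x}_0, P_0),$$

where $\hat{x}_0$ is the initial state estimate and $P_0$ is the initial shape matrix.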

Then, at time k+1, k=0, 1, 2,…, the AESMF algorithm [19] can be summarized as follows:

1. Calculate the state interval based on the ellipsoid extrema:

where the superscript i, j stands for the (i, j) element of a matrix.

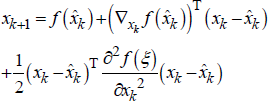

2. Find the maximum interval of the Lagrange remainder using interval analysis: expanding the process function in Equation (1) (taking one state component as an example) yields:

where $\nabla_x f$ is the gradient of the function, $\partial^2 f / \partial x_k^2$ is its second derivative, and $\xi$ can take any value in the interval $\bar{X}_k$ on which $(x_k - \hat{x}_k)$ is defined. Then, the interval of the Lagrange remainder is defined as:

Extending (9) to all states yields:

where $\mathrm{Hes}_i$, $i = 1, 2, \ldots, n$, is the Hessian matrix of the $i$-th component of the nonlinear function $f(\cdot)$, and the interval vector is now defined as $\bar{X}_k = (\bar{X}_k^1, \bar{X}_k^2, \ldots, \bar{X}_k^n)$.

3. Calculate the ellipsoid bounding the linearization error:

Then, the ellipsoid of the linearization error is defined as $E(0_{n \times 1}, \bar{Q}_k)$.

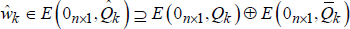

4. Calculate the final process and linearization error bound $\hat{w}_k \in E(0_{n \times 1}, \hat{Q}_k)$:

where $\hat{w}_k$ is the sum of the linearization error and $w_k$, and:

where $\beta_{Q_k}$ is a filter parameter chosen to minimize the ellipsoid $E(0, \hat{Q}_k)$. The observation model is treated in the same way as in computing $\hat{w}_k$ in Steps 2 to 4, which yields the incorporated measurement noise bound:

5. Calculate the state prediction ellipsoid $E(\hat{x}_{k+1,k}, P_{k+1,k})$ using the linear SMF; this ellipsoid bounds the direct (Minkowski) sum of the predicted ellipsoid of the linearized model, $E(f(\hat{x}_k), A_k P_k A_k^T)$, and the virtual noise ellipsoid $E(0_{n \times 1}, \hat{Q}_k)$:

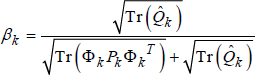

where $\beta_k$ is a filter parameter chosen to minimize the ellipsoid $E(\hat{x}_{k+1,k}, P_{k+1,k})$, and

6. Calculate the updated state ellipsoid $E_{k+1} = E(\hat{x}_{k+1}, P_{k+1})$ using the linear SMF; this ellipsoid bounds the intersection of the predicted ellipsoid $E(\hat{x}_{k+1,k}, P_{k+1,k})$ and the observation set:

where $\rho_k$ is a filter parameter chosen to minimize the ellipsoid $E(\hat{x}_{k+1}, P_{k+1})$, and $\delta_k$ is defined as:

where
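Steps 1 to 3 above can be sketched in Python with NumPy as follows. This is a minimal illustration under stated assumptions, not the implementation of [19]: the ellipsoid is taken as $E(a, P) = \{x : (x - a)^T P^{-1} (x - a) \le 1\}$; the elementwise interval enclosure of each Hessian (`H_lo`, `H_hi`) is assumed to come from a separate interval-analysis routine; the remainder is symmetrized about zero to match $E(0_{n \times 1}, \bar{Q}_k)$; and Step 3 uses the minimum-volume enclosing ellipsoid $n \cdot \mathrm{diag}(\bar{r}^2)$ of the remainder box, which may differ from the bounding rule actually used in [19]. All function names are hypothetical.

```python
import numpy as np


def ellipsoid_box(x_hat, P):
    """Step 1: interval hull of E(x_hat, P), one interval per state.

    Component i of any x in E(x_hat, P) lies in
    [x_hat[i] - sqrt(P[i, i]), x_hat[i] + sqrt(P[i, i])].
    """
    half = np.sqrt(np.diag(P))
    return x_hat - half, x_hat + half


def interval_mul(a, b):
    """Product of two scalar intervals given as (lo, hi) pairs."""
    p = (a[0] * b[0], a[0] * b[1], a[1] * b[0], a[1] * b[1])
    return min(p), max(p)


def remainder_bound(H_lo, H_hi, half):
    """Step 2 (one component of f): symmetric bound on 0.5 * d^T Hes(xi) d.

    H_lo, H_hi: elementwise enclosure of the Hessian over the state box.
    half: box half-widths, so each d_j = x_j - x_hat_j lies in [-half_j, half_j].
    """
    lo = hi = 0.0
    n = len(half)
    for j in range(n):
        for l in range(n):
            if j == l:
                dd = (0.0, half[j] ** 2)  # d_j * d_j is never negative
            else:
                dd = (-half[j] * half[l], half[j] * half[l])
            t_lo, t_hi = interval_mul((H_lo[j, l], H_hi[j, l]), dd)
            lo += t_lo
            hi += t_hi
    return 0.5 * max(abs(lo), abs(hi))


def box_to_ellipsoid(r_bar):
    """Step 3: E(0, Q_bar) enclosing the box [-r_bar, r_bar].

    Q_bar = n * diag(r_bar**2) is the minimum-volume enclosing ellipsoid;
    every corner of the box lies exactly on its boundary.
    """
    r_bar = np.asarray(r_bar, dtype=float)
    return len(r_bar) * np.diag(r_bar ** 2)


# Usage: f_1(x) = x1 * x2 has the constant Hessian [[0, 1], [1, 0]].
x_hat = np.array([0.0, 0.0])
P = np.diag([1.0, 4.0])
lo, hi = ellipsoid_box(x_hat, P)          # intervals [-1, 1] and [-2, 2]
H = np.array([[0.0, 1.0], [1.0, 0.0]])
r1 = remainder_bound(H, H, hi - x_hat)    # 2.0, i.e. |x1 * x2| <= 2 on the box
Q_bar = box_to_ellipsoid([r1, 0.5])       # 0.5: hypothetical bound for f_2
```

Because the Hessian of `x1 * x2` is constant, the interval bound here is exact; for Hessians that vary over the box, naive interval arithmetic can overestimate the remainder, which only makes $\bar{Q}_k$ more conservative.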

For the selection of the parameters βQk, βk and ρk, reference [20] proposes the following adaptive strategy:

where $p_m$ and $r_m$ are the maximum singular values of the matrices $H_{k+1} P_{k+1,k} H_{k+1}^T$ and $\hat{R}_{k+1}$, respectively.
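Steps 4 to 6 rest on two ellipsoid operations: an outer bound of a direct (Minkowski) sum, and an outer bound of the intersection of an ellipsoid with the observation set. The sketch below uses the standard linear set-membership forms as we understand them; the variable names `W`, `P_bar` and `delta` mirror $W_k$, $\bar{P}_{k+1}$ and $\delta_k$ in the text, but the exact parameterization of [19] may differ. It is an assumption that $\rho$ is passed in directly, and the default $\beta = \sqrt{\mathrm{tr}\,Q_1 / \mathrm{tr}\,Q_2}$ (the trace-minimizing choice) is a swapped-in alternative to the adaptive strategy of [20], whose formula is not reproduced here. The same `minkowski_outer` serves Step 4 (combining $\bar{Q}_k$ and $Q_k$) and Step 5. Function names are hypothetical.

```python
import numpy as np


def minkowski_outer(Q1, Q2, beta=None):
    """Outer ellipsoid of the direct sum E(0, Q1) (+) E(0, Q2).

    For any beta > 0 the sum lies in E(0, (1 + 1/beta) Q1 + (1 + beta) Q2).
    beta=None picks the trace-minimizing value sqrt(tr Q1 / tr Q2).
    """
    if beta is None:
        beta = np.sqrt(np.trace(Q1) / np.trace(Q2))
    return (1.0 + 1.0 / beta) * Q1 + (1.0 + beta) * Q2


def predict(f, A, x_hat, P, Q_hat, beta=None):
    """Step 5: centre f(x_hat); shape bounds E(0, A P A^T) (+) E(0, Q_hat)."""
    return f(x_hat), minkowski_outer(A @ P @ A.T, Q_hat, beta)


def update(x_pred, P_pred, e, H, R_hat, rho):
    """Step 6: ellipsoid bounding E(x_pred, P_pred) intersected with the
    observation set {x : (e - H (x - x_pred))^T R_hat^{-1} (...) <= 1},
    where e = y - h(x_pred) is the innovation and 0 < rho < 1.

    Returns (x_new, P_new, delta). delta <= 0 signals an empty intersection,
    in which case P_new is not a valid shape matrix.
    """
    W = H @ P_pred @ H.T / (1.0 - rho) + R_hat / rho
    W_inv = np.linalg.inv(W)
    K = P_pred @ H.T @ W_inv / (1.0 - rho)
    delta = 1.0 - e @ W_inv @ e
    P_bar = (P_pred - K @ H @ P_pred) / (1.0 - rho)
    return x_pred + K @ e, delta * P_bar, delta


# Usage: scalar example with P_pred = H = R_hat = 1 and rho = 0.5.
x_pred, P_pred = np.array([0.0]), np.eye(1)
H, R_hat = np.eye(1), np.eye(1)
x_new, P_new, delta = update(x_pred, P_pred, np.array([0.5]), H, R_hat, 0.5)
# x_new = [0.25], P_new = [[0.9375]], delta = 0.9375
_, _, delta_bad = update(x_pred, P_pred, np.array([3.0]), H, R_hat, 0.5)
# delta_bad = -1.25: the predicted interval [-1, 1] and observation set [2, 4] do not meet
```

The second call shows the failure mode discussed below: when the prediction ellipsoid is too small to reach the observation set, $\delta_k$ turns negative and the updated ellipsoid is undefined.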

The update condition is given by reference [19] as:

where $\eta \in \mathbb{R}$ is a parameter to be selected. When this condition is not satisfied, only (10) and (11) are used to compute $E(\hat{x}_{k+1,k}, P_{k+1,k})$, and the computation of (12)-(16) is skipped. The parameter $\eta$ must be selected carefully according to the initial assumptions. Normally, a larger $\eta$ increases the chance of skipping the update but leads to larger estimation bounds; that is, there is a trade-off between 'update saving' and conservative estimation.

Special attention should be given to the following two problems. First, when $\delta_k \le 0$ in Equation (17), the uncertainty ellipsoid of Eq. (16) is not defined [19]. This occurs when the bound assumption (8) is not satisfied, that is, when the actual state or noise lies outside the assumed bound; $\delta_k$ therefore provides an indication of the health of the algorithm. Second, the measurement noise bound $R_{k+1}$ can be determined through sensor calibration [25], but the process noise bound $Q_k$, which comes from the model errors of system (1), cannot be determined so simply. $Q_k$ varies online and is often selected by experience, with $w_k^T Q_k^{-1} w_k = 1$ often assumed as the optimal condition [20]. In real applications, however, model errors cannot be known accurately. If the selected $Q_k$ is much larger than the real bound, then $w_k^T Q_k^{-1} w_k \ll 1$ and, according to Eq. (11), the estimated bound $P$ becomes much larger, resulting in a conservative estimate of the state boundaries. If $Q_k$ is much smaller than the real bound, then $w_k^T Q_k^{-1} w_k \gg 1$, the estimated bound $P$ becomes much smaller according to Eq. (11), and the intersection of the predicted ellipsoid $E(\hat{x}_{k+1,k}, P_{k+1,k})$ and the observation set may contain no element. This results in $\delta_k \le 0$, that is, an unstable and undefined estimate.

In the following, a cost function is developed and an MIT adaptive strategy is introduced to solve the above two problems.

3. MIT-Based AESMF

As discussed above, the AESMF algorithm suffers from mismatched process noise boundaries in practical use. To solve this problem, an MIT adaptive strategy is introduced into the original AESMF algorithm to select $Q_k$ online so as to ensure $\delta_k > 0$.

Let $q_k^i$ be the $i$-th diagonal element of $Q_k$, so that:

Based on the health index $\delta_k$, the following cost function is selected:

where operator tr{*} is the trace of the matrix *.

Because $\mathrm{tr}\{P_{k+1}\} > 0$ and $\delta_k < 1$, the cost function (23) is positive definite. Hence, within the AESMF process (3)-(20), the $Q_k$ that minimizes $J_k(Q_k)$ makes both $1 - \delta_k$ and $\mathrm{tr}\{P_{k+1}\}$ minimal at the same time. This means that both the smallest boundary of the estimated state set $E(\hat{x}_{k+1}, P_{k+1})$ and $\delta_k \to 1$ are guaranteed by:

The optimal $Q_k$ must be an extreme point of $J_k(Q_k)$ because $J_k(Q_k)$ is positive definite, as discussed above. Thus, $Q_k$ can be obtained from the following equation:

However, in (25), $W_k^{-1}$ appears in both $\delta_k$ and $\bar{P}_{k+1}$, and $W_k$ depends on $Q_k$ through (11) and (12). As a result, (25) has no analytic solution for $Q_k$, and only a numerical solution can be obtained. We apply the MIT rule [12] to compute $Q_k$ as:

where $\hat{Q}_k^i$ is the estimate of $q_k^i$ at time $k$, $\Delta T$ is the sampling time, and $\eta_k$ is the convergence-rate parameter, which is selected by hand and must satisfy the following condition:

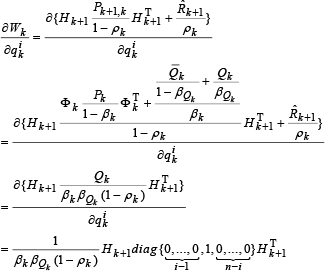

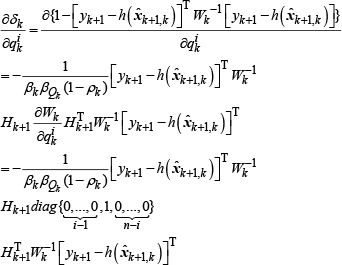

According to (9), (11) and (12):

Hence, the MIT rule for estimating $\hat{Q}_k^i$ is:

The MIT-based AESMF method for models (1) and (2) is therefore summarized as follows:

1. Initial conditions:

2. Construction of the noise boundaries at time k+1:

3. Time update at time k+1:

4. Measurement update at time k+1:

5. Process noise boundaries update at time k+1:

where $i = 1, 2, \ldots, n$ and $j = 1, 2, \ldots, n$.
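Step 5 (the process noise boundary update) applies the MIT rule, i.e. a gradient-descent step on the cost $J_k$ scaled by the sampling time $\Delta T$ and the convergence-rate parameter $\eta_k$. The sketch below is a hedged illustration only: because the paper's analytic gradient is not reproduced here, $\partial J / \partial q^i$ is approximated by central differences, and $J$ is a user-supplied callable. The quadratic `toy_cost` in the usage lines is a hypothetical stand-in, not the paper's cost (23), and the clamp `q_min`, which keeps $Q_k = \mathrm{diag}(q)$ positive definite, is our own assumption. The convergence condition on $\eta_k$ is not checked.

```python
import numpy as np


def mit_update(q, cost, dT, eta, rel_step=1e-6, q_min=1e-12):
    """One MIT-rule step on the diagonal of Q: q_i <- q_i - dT * eta * dJ/dq_i.

    cost: callable mapping the diagonal vector q to the scalar cost J(Q).
    The partial derivatives are approximated by central differences.
    The result is clamped at q_min so that Q = diag(q) stays positive definite.
    """
    q = np.asarray(q, dtype=float)
    grad = np.empty_like(q)
    for i in range(q.size):
        h = rel_step * max(abs(q[i]), 1e-8)  # small relative perturbation
        step = np.zeros_like(q)
        step[i] = h
        grad[i] = (cost(q + step) - cost(q - step)) / (2.0 * h)
    return np.maximum(q - dT * eta * grad, q_min)


# Usage with a hypothetical quadratic cost whose minimum is at q = 0.04.
toy_cost = lambda q: float(np.sum((q - 0.04) ** 2))
q = np.array([0.01, 0.1])
for _ in range(50):
    q = mit_update(q, toy_cost, dT=0.1, eta=2.0)
# q is now very close to [0.04, 0.04]
```

With `dT * eta = 0.2`, each step here contracts the error by a factor of 0.6; a larger `eta` speeds convergence but, as with the condition on $\eta_k$ above, too large a value makes the iteration overshoot.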

4. Simulation

The proposed MIT-AESMF is tested in simulation by estimating the motion states and slip parameters of a tracked mobile robot, as shown in Figure 1. A general kinematic model of the tracked vehicle that takes slip into account is developed in what follows. To compare the performance of MIT-AESMF with that of AESMF, this paper uses the same simulation model as reference [20], in which AESMF was first proposed and tested.

Platform of the Vehicle Undergoing General Planar Motion

To describe the motion of the tracked vehicle, a fixed reference frame $O_w X_w Y_w$ and a moving frame $O_m X_m Y_m$ attached to the vehicle body, with its origin at the geometric centre of the vehicle, are defined. The motion of the vehicle is composed of the translational velocity $V_c$ and the rotational velocity $\omega_c = d\psi / dt$, where $\psi$ is the yaw angle. Because of sideslip, the instantaneous centre of rotation (ICR) shifts ahead of the vehicle centroid by a distance $d$. The angle between the lines $O_m$-ICR and $O'$-ICR is defined as $\alpha$, where $O'$-ICR is perpendicular to the axis $X_m$. The discrete-time process model is then:

where X and Y are positions under the frame OwXwYw, b is the distance between the midpoints of the two tracks, r is the radius of the wheels which drive the tracks, ΔT is the sampling interval, ωL and ωR are the angular velocities of the left and right wheels, iL,k and iR,k are the slip ratios of the left and right tracks. See reference [20] for the details of the dynamics of the robot.

In the simulations, X, Y and ψ are assumed as measurements. Thus, the observation model is:

The goal of the simulation is to estimate the state $(X_k, Y_k, \psi_k)^T$ and the three slip parameters $(i_{L,k}, i_{R,k}, \sigma_k)^T$ simultaneously. To solve this joint nonlinear estimation problem, a new augmented state vector is defined as the combination of the state and parameter vectors:

The slip parameters are often unknown in practice; hence, they are modelled as follows:

where $p_k = (i_{L,k}, i_{R,k}, \sigma_k)^T$ and $w_{p,k} \in \mathbb{R}^3$ is the additive process noise that drives model (39). The joint-estimation equation is thus obtained [23] as:

The constants in the model of Equation (40) are chosen as $b = 0.65$ m, $r = 0.35$ m, $\Delta T = 100$ ms and $\omega_L = \omega_R = 1.5$ rad/s. The simulation lasts 200 sampling periods (20 s).

To demonstrate the tracking performance, a step change in the three slip parameters $(i_{L,k}, i_{R,k}, \sigma_k)$ is simulated at time $k = 100$, from $(0.00, 0.00, 0.00)$ to $(0.20, -0.10, 0.15)$.

The real process noise $w_k$, the parameter driving noise $w_{p,k}$ and the measurement noise $v_{k+1}$ are assumed to be 5% uniformly distributed bounded noises. The measurement noise bound is assumed to be known, i.e. the real bounding ellipsoid of $v_k$ is $R_0 = \mathrm{diag}\{0.0025, 0.0025, 0.0025\}$. At every iteration, the centre $\hat{x}_k$ of the estimated ellipsoid $E_k$ is used as the estimated value at time step $k$.

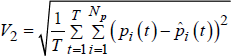

To compare the effectiveness of the filters quantitatively, the state and parameter estimation accuracies in the least-squares sense are defined as in [20]:

In these equations, $x_i(t)$ and $\hat{x}_i(t)$ are the real and estimated states, $N_x$ is the dimension of the state, $p_i(t)$ and $\hat{p}_i(t)$ are the real and estimated parameters, $N_p$ is the dimension of the parameter vector, and $\bar{p}_i$ and $\underline{p}_i$ are the upper and lower bounds. The complexity of the different algorithms is described in terms of CPU computing time. The recovery time of the health index is measured by accumulating its negative values.
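The evaluation quantities described above can be sketched as follows. The exact formulas of [20] are not reproduced in this text, so the forms below are assumptions: a root-mean-square error over all time steps and components, the mean width $\bar{p}_i - \underline{p}_i$ of the parameter bounds, and, as one reading of "accumulating negative values", the number of time steps at which $\delta_k < 0$. The function names are hypothetical, and the usage arrays are hand-made, not simulation data from the paper.

```python
import numpy as np


def rms_error(true, est):
    """RMS error over all time steps and components (arrays of shape (T, N))."""
    d = np.asarray(true, dtype=float) - np.asarray(est, dtype=float)
    return float(np.sqrt(np.mean(d ** 2)))


def mean_bound_width(upper, lower):
    """Average width between the upper and lower parameter bounds."""
    return float(np.mean(np.asarray(upper, dtype=float) - np.asarray(lower, dtype=float)))


def negative_health_steps(delta):
    """Number of time steps at which the health index delta_k is negative."""
    return int(np.sum(np.asarray(delta, dtype=float) < 0.0))


# Usage on small hand-made arrays.
print(rms_error([[0.0, 0.0], [0.0, 0.0]], [[3.0, 4.0], [0.0, 0.0]]))  # 2.5
print(mean_bound_width([[1.0, 2.0]], [[0.0, 0.0]]))                   # 1.5
print(negative_health_steps([0.5, -0.1, -0.2, 0.9]))                  # 2
```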

In the following simulations, the normal AESMF and the proposed MIT-AESMF are compared in terms of stability, performance and computational load under three settings of the initial process noise boundary $Q_0$.

Condition 1:

The initial process noise ellipsoidal boundary is equal to the real boundary as:

Figure 2 depicts the estimation and stability improvement of MIT-AESMF. Figure 2-a compares the estimates of the system state $Y_k$, Figure 2-b compares the parameter $i_{L,k}$, and Figure 2-c compares the filter health index $\delta_k$. Figure 2 shows that, during the simulation, the parameter and state estimates and the steady-state boundary accuracy of MIT-AESMF and AESMF are identical. However, when the sudden change occurs, the initial ellipsoidal boundary is far smaller than the process noise equivalent to the parameter variation. AESMF keeps using this small ellipsoid, so the real value falls outside the estimated state and parameter boundaries, the measurement-update requirement (21) fails, and measurement updating stops. As a result, the uncertain boundaries inflate slowly, for more than 10 sampling cycles, until they cover the range of the sudden change. Because MIT-AESMF adopts the MIT adaptive mechanism, it expands the process noise ellipsoidal boundary to cover the entire range of the sudden change within 3 calculation cycles. Figure 2-c also shows that, after the sudden change, the MIT-AESMF health index takes 7 fewer cycles than AESMF to return to 0. The performance of AESMF and MIT-AESMF is compared in Table 1 and Table 2.

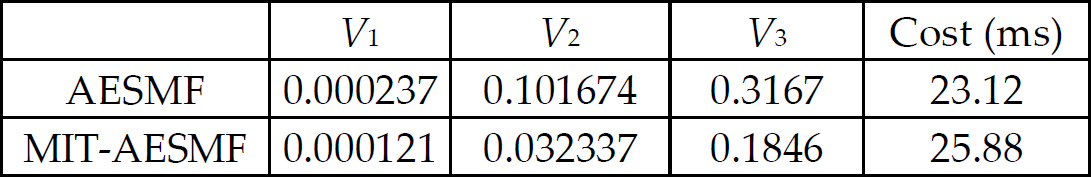

Table 1. Performance of AESMF and MIT-AESMF (process boundaries set properly, no parameter change)

Table 2. Performance of AESMF and MIT-AESMF following the step change of the parameters (process boundaries set properly)

Figure 2. Performance comparison between AESMF and MIT-AESMF when the process noise boundaries equal the true value

According to Figure 2, Table 1 and Table 2, when the initial process noise ellipsoidal boundary equals the real boundary, MIT-AESMF shows parameter and boundary accuracy similar to that of AESMF. However, when the sudden change occurs, MIT-AESMF maintains its estimation accuracy and recovers the health index in a much shorter time, about 5 times faster than AESMF, while its computing time is only 10% longer because of the online adaptive computation. These results illustrate the effectiveness of the adaptive ellipsoid MIT mechanism.

Condition 2:

The initial process noise ellipsoidal boundary is larger than the real boundary as:

Figure 3 depicts the estimation and stability improvement of MIT-AESMF. Figure 3-a is the estimation comparison of the system state Yk, Figure 3-b is the comparison of the parameter iL,k. Figure 3 illustrates that within the 200 points simulation, and besides 1 parameter's sudden change of moment,

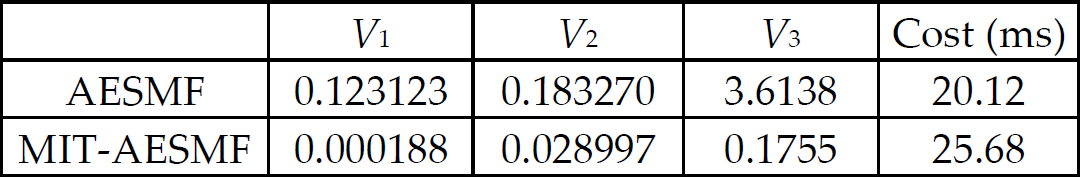

Performance comparison between AESMF and MIT-AESMF when the process noise boundaries are larger than the true value

MIT-AESMF and traditional AESMF have similar parameters and state estimation values. However, since the initial ellipsoidal boundary is set as too large, AESMF runs the calculation based on this big ellipsoid for the entire time, which leads to the states and parameter estimations' uncertain boundary being nearly twice as big as the MIT-AESMF method. This is mainly because the adaptive strategy of the MIT-AESMF method in the process noise ellipsoidal boundary estimation makes the real-time tuning of the ellipsoid boundary come close to the real process noise boundary. This result also implies that when the parameter's sudden change occurs, such as k=100, the MIT-AESMF method's noise adaptive mechanism makes Qk closely approach the real boundary. Figure 3 clearly demonstrates, again, that because of the selective mechanism (21) in AESMF, when the parameter's sudden change happens, the initial process noise ellipsoidal boundary cannot include the span of the parameter variation and thereby causes the measurement to fail. Thus, just calculating the time update leads to the uncertain boundary's inflation, which remains for more than 20 sampling cycles until it includes the sudden change range, and the state estimation of AESMF becomes biased since there is no measurement updating. On the other hand, because the MIT-AESMF method adapts the MIT adaptive mechanism, which makes the process noise ellipsoidal boundary enlarge to the parameter of a sudden change range, it only takes 3 computing cycles whereas AESMF consumes about 20 or more cycles. Table 3 shows the comparison of the performance of the two filtering algorithms when the process noise ellipsoidal boundary is two times larger than the real boundary.

Table 3. Performance of AESMF and MIT-AESMF (process boundaries set too large)

According to Figure 3 and Table 3, when the initial process noise ellipsoidal boundary is twice as large as the real boundary, MIT-AESMF achieves better accuracy than AESMF, and its parameter uncertainty boundary during estimation is about 1/2 that of AESMF, which improves on the effectiveness of the traditional AESMF. Meanwhile, MIT-AESMF is only 10% slower because of its online adaptive computation.

Condition 3:

The initial process noise ellipsoidal boundary is smaller than the real boundary as:

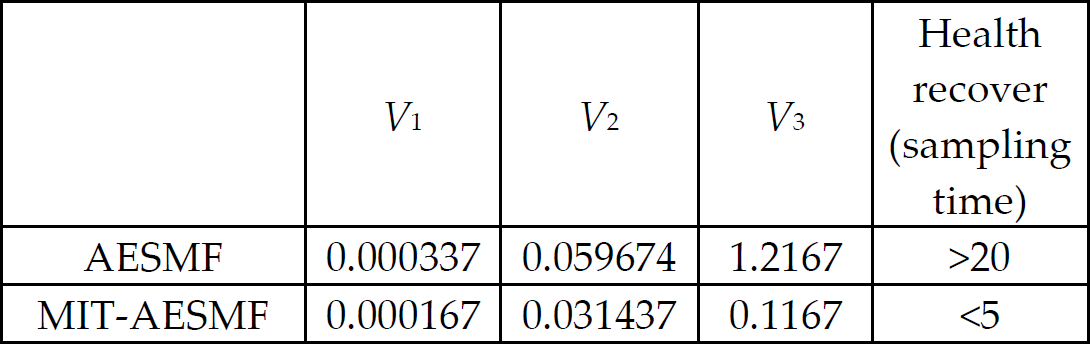

Figure 4 shows the improvements in the estimation and stability performance of MIT-AESMF when the initial process noise ellipsoid is set too small. Figures 4-a and 4-b show that, over the 200 simulation steps, because the initial ellipsoidal boundary is set too small, AESMF calculates with this small ellipsoid, and the prediction and measurement ellipsoids do not overlap. Therefore, AESMF's selective updating requirement (21) is not satisfied; moreover, measurement updating shrinks the uncertain boundary back to the small initial ellipsoid boundary, so the ellipsoids again fail to overlap at the next measurement update. This repeating process is clearly visible in Figure 4-c. Most of the time the health index is far below 0 and the AESMF estimate is invalid, so both the parameter and state estimates are biased from the real values. In contrast, MIT-AESMF applies the MIT adaptive mechanism to the process noise ellipsoidal boundary and adjusts it in real time toward the real process noise bound, so that the MIT-AESMF uncertain boundary contains the real value. Its estimated noise boundary is about twice as large as the valid AESMF estimated boundary (not exactly double, because the linearization error bound and the measurement uncertainty bound are mixed in). This is consistent with the initial boundary having been set to 1/2 of the real process noise ellipsoidal boundary, and implies that the process noise estimated by MIT-AESMF is close to the real value. The health index in Figure 4-c shows that, with MIT-AESMF, the health index is below 0 only for a very short time and quickly recovers to 1 when a sudden change happens. This implies that the MIT-AESMF estimate is guaranteed to be valid.
Table 4 shows the results of the two filtering methods when the initial process noise ellipsoidal boundary is 1/2 of the real value.

Table 4. Performance of AESMF and MIT-AESMF (process boundaries set too small)

Figure 4. Performance comparison between AESMF and MIT-AESMF when the process noise boundaries are smaller than the true value

According to Figure 4 and Table 4, when the initial process noise ellipsoidal boundary is 1/2 of the real boundary, the original AESMF fails in both state and parameter estimation because the prediction and measurement ellipsoids do not overlap. Because of the selective updating mechanism, the filter is invalid for most of the time. MIT-AESMF, however, remains effective for state and parameter estimation throughout the simulation and maintains the same accuracy as in Table 1. This shows that the MIT adaptive mechanism is necessary. Because AESMF skips the measurement update most of the time, its computing time is shorter than in Table 2, whereas MIT-AESMF keeps the same computing time as in Table 2.

To summarize the comparison of MIT-AESMF and AESMF in the three scenarios: although the proposed MIT-AESMF consumes 10% more computing time, when the parameters change suddenly or the initial process noise boundaries are set larger or smaller than the real noise boundaries, it keeps the estimates and boundaries at the same accuracy that the original AESMF achieves under ideal conditions. The traditional AESMF, by contrast, produces a significant bias in its uncertain boundary estimate, and in some of the simulated conditions its estimation even becomes invalid. Furthermore, when the health index drops below 0 because the system model is inaccurate, MIT-AESMF makes the health index return to its effective range faster, at a recovery rate close to 5 times that of the original AESMF with the selective method (21).

5. Conclusion

In this paper, an MIT-based adaptive set-membership filter is proposed for the joint state and parameter estimation of nonlinear systems. The normal AESMF is enhanced by the adaptive selection of the process noise boundaries based on the MIT adaptive strategy. This improves its effectiveness, realizes the optimal bound-guaranteed performance of the normal AESMF and does not increase the computational complexity. The proposed algorithms are presented and analysed in detail, and extensive simulations are carried out to perform the joint state and parameter estimation of a tracked mobile robot and to compare the normal AESMF with the proposed MIT-AESMF. It has been demonstrated that the proposed MIT-AESMF successfully solves the instability problem, realizes sub-optimal estimation and increases the estimation accuracy of the normal AESMF.


6. Acknowledgments

This work was supported by The National Natural Science Foundation of China under Grant: 61035005, 61273025 and 61203334; National Key Technology R&D Program under Grant: 2011BAD20B07; National High Technology Research and Development Program (863) under Grant: 2012AA041501