Abstract

To adaptively calibrate the work parameters of an infrared-TV based eye gaze tracking Human-Robot Interaction (HRI) system, a gaze direction sensing model is proposed for detecting the eye gaze identified parameters. Particular attention is paid to situations where the user's head is at different positions relative to the interaction interface. An algorithm for automatically correcting the work parameters of the system is then developed by defining a set of initial reference system states and analysing the historical information of the interaction between a user and the system. Moreover, taking several application cases and factors into account, and relying on minimum error rate Bayesian decision-making theory, a mechanism for identifying the system state and adaptively calibrating the parameters is proposed. Finally, experiments with the established system suggest that the proposed mechanism and algorithm can identify the system work state in multiple situations and can automatically correct the work parameters to meet the demands of a gaze tracking HRI system.

1. Introduction

Improvements in image processing and computer technology have made eye gaze tracking a strong candidate to support and enhance Human-Robot Interaction (HRI). Because it acquires information directly and supports natural interaction modes [1, 2], infrared-TV based eye gaze tracking is frequently studied as a way for people with speech and motor disabilities to interact with an HRI interface; it also offers high accuracy and a fast, easy calibration procedure [3]. Many rapid and highly accurate approaches to work parameter calibration have been verified. Morimoto et al. [4] applied a simple second-order polynomial transformation computed from a brief calibration procedure in which the user is asked to fixate on nine specific target points. Ohno and Mukawa [5] offered free-head, simple personal calibration, with an accuracy of about 1.0 degrees (view angle), using two cameras and asking the user to look at two marks on the screen. Kondou and Ebisawa [6] proposed a variant “two-point” calibration method executed by visually tracking a moving target on a PC screen and employing a narrow view camera and two stereo view cameras. Chi et al. [7] and Hennessey and Lawrence [8] applied dual cameras to obtain accurate calibration by synchronizing external signals to the camera data. Brolly and Mulligan [9] applied two fixed, wide-field “face” cameras equipped with active-illumination systems that enabled rapid localization of the subject's pupils, also simplifying calibration. Funk and Yang [10], Bonfort et al. [11] and Tarini et al. [12] each proposed calibration techniques using additional equipment and a specially prepared mirror as geometric constraints, which increased the complexity of the system.

However, the calibration methods described above cannot meet the requirements of adaptability and good accessibility for all kinds of users. In these systems, restrictions on head movement are not removed after calibration, which makes Human-Robot Interaction (HRI) uncomfortable. Head movement always changes the system work parameters; consequently, once the head moves, the system deviates from its normal working condition. Although free head movement can be allowed by using a multi-camera (two or three cameras) system, the complexity and calibration difficulty of the system increase, so such systems may be inaccessible to some users, e.g., the elderly and disabled. Therefore, considering these impacts on a gaze tracking system, we employ a single camera to perform image acquisition and calibration.

In short, traditional calibration methods are neither adaptive nor accessible, and can be tedious, because the calibration procedure needs to be repeated manually several times [13, 14]. To realize an adaptive HRI system, this paper provides a gaze direction sensing model and then an adaptive calibration mechanism for correcting the system working parameters, based on multiple initialized reference system work states and minimum error rate Bayesian decision-making theory.

2. System working principle

The HRI system developed in this research is based on an infrared-TV technique. This technique requires an infrared light source to generate a corneal reflection, called the Purkinje spot. Assuming a static head, the eye can only rotate in its socket and can be approximated as a sphere. Since the light source and the camera are identical, the Purkinje spot can be taken as a reference point, and the vector from the Purkinje spot to the pupil centre then describes the gaze direction.
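The reference-point idea above can be illustrated with a minimal sketch. It assumes that the pupil centre and the Purkinje spot have already been detected as 2D image coordinates in pixels; the function name and the sample coordinates are hypothetical and are not taken from the system described here.

```python
import math


def pupil_glint_vector(pupil_centre, purkinje_spot):
    """Return the vector from the Purkinje spot to the pupil centre.

    Both arguments are (x, y) image coordinates in pixels (an assumption
    made for this sketch). The result is ((dx, dy), magnitude, angle),
    where the angle is measured in degrees from the image x axis.
    """
    dx = pupil_centre[0] - purkinje_spot[0]
    dy = pupil_centre[1] - purkinje_spot[1]
    magnitude = math.hypot(dx, dy)
    angle_deg = math.degrees(math.atan2(dy, dx))
    return (dx, dy), magnitude, angle_deg


# Hypothetical detections, for illustration only.
vec, mag, ang = pupil_glint_vector((323.0, 244.0), (320.0, 240.0))
print(vec, mag, round(ang, 2))
```

With a static head, changes in this vector reflect eye rotation only, which is why it can serve as the input to a gaze mapping.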

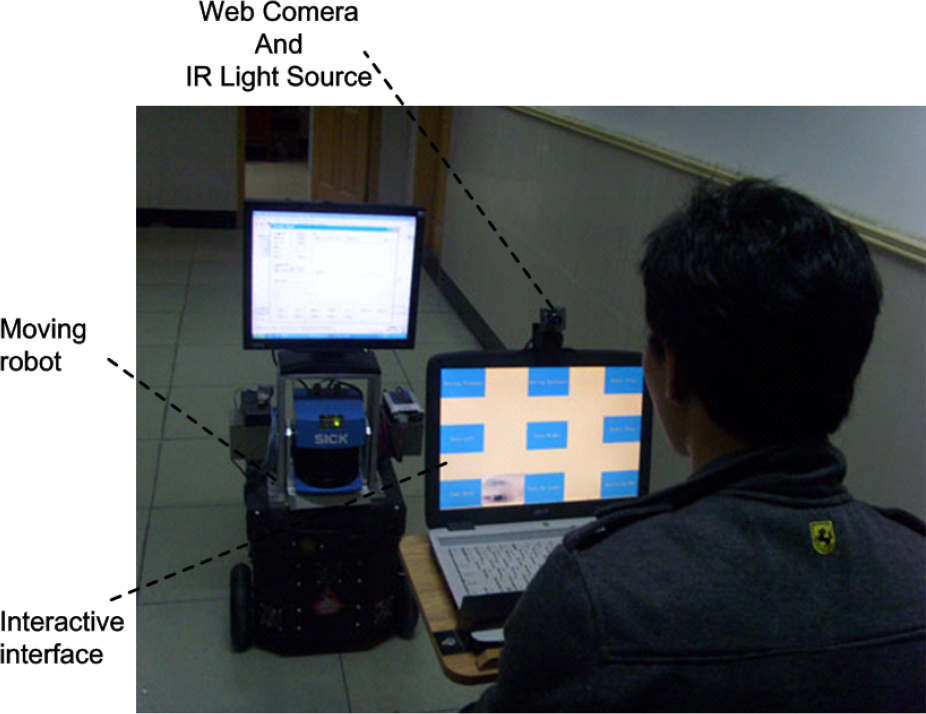

As shown in Figure 1, the developed HRI system includes three modules: Information Processing and Control Module, Visual Interaction Module and Communication Module. The Information Processing and Control Module is a service robot. The Visual Interaction Module consists of an interaction interface, a video camera and an infrared light source. The Communication Module is actually embedded in the previous two modules.

The image is captured by the video camera and delivered to the robot. Once the robot has processed the image information, the gaze direction of the user can be obtained. Thus, the robot can interpret where the user is looking and at what, and then perform the related tasks to meet the user's needs. For example, after interpreting the information delivered through eye gaze, the robot can turn on the TV by remote control (I), turn on the light (II), go to get a book (III) or make a cup of tea (IV), etc.

Figure 1. System Configuration and Working Principle

The precondition is that the system can perform the work parameter calibration procedure adaptively and accurately, so that the user can communicate with the HRI system correctly and smoothly. To detect the eye gaze identified parameters, a gaze direction sensing model is provided in this paper.

3. Gaze sensing model

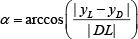

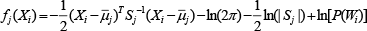

By analysing the working principle of the Visual Interaction Module, a gaze direction sensing model has been built for identifying eye gaze parameters, as shown in Figure 2. The angle γ, between the vector from pupil centre C to fixation point D and the line from Purkinje spot P to light source L, is defined as the eye gaze identified angle. The angle α, between the vector from light source L to fixation point D and the X axis, is defined as the eye gaze direction angle.

Figure 2. Eye gaze sensing model

When the user's head is stationary, the fixation point D can be uniquely determined from the position of pupil centre C, since the light source and the camera can be located beforehand. That is, the geometric relationship between the pupil-Purkinje vector and the eye gaze fixation point is determined by the gaze identified angle γ and the direction angle α in the eye gaze sensing model, defined as:

Where

Where |DE| is the distance from fixation point D to eyeball centre E, |EL| the distance from E to infrared light source position L, |DL| the distance from D to L,

4. Adaptive Calibration Mechanism

4.1. Work Parameter Correction

When calibrating the work parameters of the system, the user is asked to focus as long as possible on the centre of each region. Then an eye gaze mapping function can be established to estimate gaze direction and the calibration work is done. For a certain position,

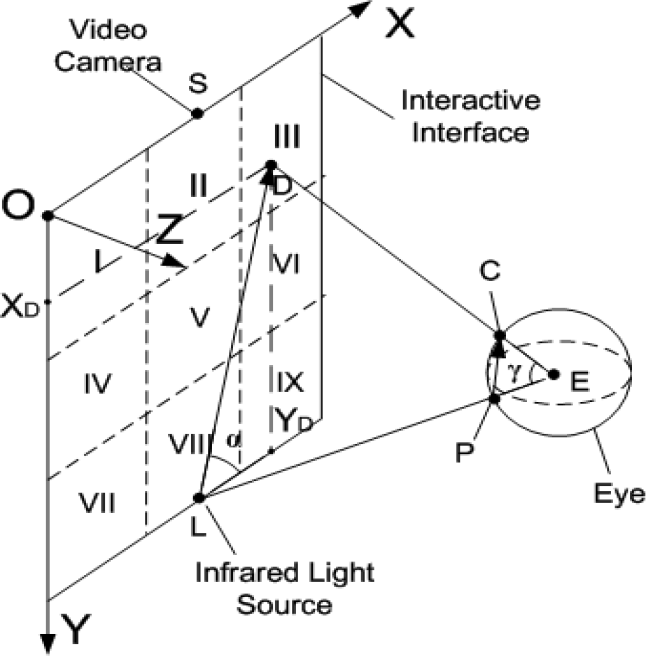

However, it is difficult for the user to keep his head still all the time, and after head movement the previously calibrated work parameters no longer suit the HRI system unless recalibration is performed. To lay the foundation for adaptive calibration, the work parameters described in Table 1 are defined as initial reference system work states, as shown in Figure 3. If the head position changes relative to the interface, then

Where

4.2. State Identification

The HRI system is expected to perform calibration autonomously and adaptively, since head movement influences its working condition. For the work parameter correction method to be applied, the direction in which the head has moved, such as forward or backward, must first be known.

When the influencing factors are not completely known, Bayesian decision theory can make optimal decisions with a minimum error rate by estimating the probability of unknown states and compensating for the deviation [16]. Head movements take place randomly and naturally in an eye gaze tracking HRI system, and they are usually accompanied by changes in the system work parameters as the head position varies. Bayesian decision-making methods can therefore help to correct the work parameters by predicting the tendency of head movements in an eye gaze tracking HRI system.

Table 1. Description of gaze identified parameters in different work states

Table 2. Description of Fixation Region and Head Movements

Figure 3. Multi-Initialized States for calibrating the work parameters

Many factors affect the system work states, such as the infrared light source, camera properties and the head position of the user in the interactive space. Since the infrared light source position, interface parameters and camera properties are chosen in advance and are required to remain almost unchanged, head movement is the factor to be predicted, using Bayesian decision theory. As shown in Figure 3, Xi (i = 1, 2, …, 9) refers to region i (I, II, …, IX) and Wj (j = 1, 2, …, 6) represents the tendency of head movement; Xi and Wj are described in Table 2.

According to the basic Bayesian formula, when gazing at the region i, the probability of head position Wj can be obtained as follows:

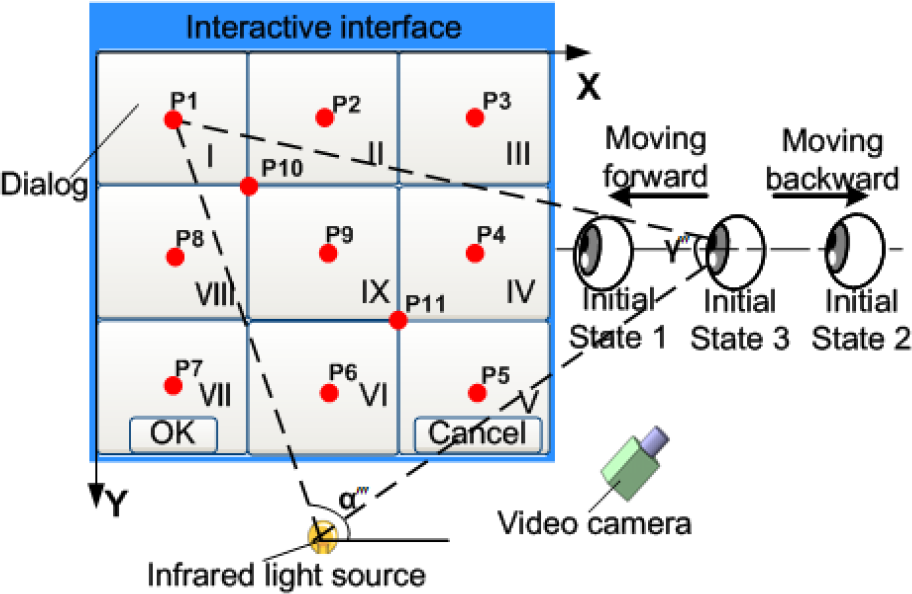
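As an illustration of the decision rule, the following minimal sketch applies the basic Bayesian formula to pick the head-movement tendency Wj with the largest posterior probability, which is the minimum error rate decision. The prior and likelihood values are hypothetical placeholders rather than data from the experiments, and the function names are chosen for this sketch only.

```python
def posterior(priors, likelihoods):
    """Compute P(W_j | X_i) for every tendency W_j.

    priors[j]      -- P(W_j), prior probability of head-movement tendency j
    likelihoods[j] -- P(X_i | W_j), probability of gazing at the observed
                      region X_i given tendency j
    """
    joint = [p * l for p, l in zip(priors, likelihoods)]
    evidence = sum(joint)  # P(X_i), the normalising constant
    if evidence == 0:
        raise ValueError("observation has zero probability under all states")
    return [value / evidence for value in joint]


def decide(priors, likelihoods):
    """Minimum error rate decision: choose the state with maximal posterior."""
    post = posterior(priors, likelihoods)
    best = max(range(len(post)), key=post.__getitem__)
    return best, post


# Hypothetical values for six tendencies W1..W6 (index 0 is W1).
priors = [1 / 6] * 6
likelihoods = [0.05, 0.40, 0.10, 0.20, 0.15, 0.10]
best, post = decide(priors, likelihoods)
print("most probable tendency: W%d" % (best + 1))
```

With equal priors the decision reduces to choosing the largest likelihood; unequal priors, for example estimated from the interaction history, would shift the decision accordingly.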

Combining the normal distribution with Eq. (3), the following is finally obtained:

Where

4.3. Adaptive Calibration Procedure

Unlike a traditional eye gaze tracking system, the proposed HRI system can independently identify its working state from the historical information of the interaction between users and the system, and can correct the work parameters automatically through adaptive calibration.

As illustrated in Figure 4, an adaptive calibration procedure is provided. There is no need to repeat the calibration procedure as long as the system work state meets the requirements of accurate and natural interaction between the user and the system. Thus, according to testing data and experience, the tolerated accuracy threshold for recalibration can be predefined as a constant T. In addition, the system assesses the outcome of each task through two task status flags on the interface, “OK” and “Cancel”. If “OK” is invoked, the task has been completed successfully; if “Cancel” is selected, the result does not meet the user's requirements. Based on this interaction history, the system calculates the success rate of interaction tasks, defined as P(F)=n/m, where F represents the event of successful execution, n is the number of occurrences of F and m is the total number of interaction tasks performed. Once P(F)<T, the system warns the user with a pop-up message box asking whether the calibration procedure should be performed immediately. If “Yes” is chosen, the system uses minimum error rate Bayesian decision-making theory to judge whether the user's head has moved forward or backward and to which side it has moved, and then corrects the system work parameters using the relevant Eq. (4) or Eq. (5). After each calibration procedure, a pop-up message box asks the user whether the calibration should end immediately.
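The recalibration trigger described above can be sketched as follows. This is a minimal illustration of the P(F)=n/m check only; the class name, the method names and the threshold value 0.8 are assumptions made for the example and are not specified in this paper.

```python
class InteractionHistory:
    """Track task outcomes and flag when recalibration should be offered."""

    def __init__(self, threshold):
        if not 0.0 <= threshold <= 1.0:
            raise ValueError("threshold T must lie in [0, 1]")
        self.threshold = threshold  # tolerated accuracy threshold T
        self.successes = 0          # n: number of tasks confirmed with "OK"
        self.total = 0              # m: total number of tasks performed

    def record(self, confirmed):
        """Record one task; confirmed is True for "OK", False for "Cancel"."""
        self.total += 1
        if confirmed:
            self.successes += 1

    def success_rate(self):
        """Return P(F) = n / m, or None before any task has been performed."""
        if self.total == 0:
            return None
        return self.successes / self.total

    def needs_recalibration(self):
        """True once P(F) < T, i.e. when the user should be prompted."""
        rate = self.success_rate()
        return rate is not None and rate < self.threshold


# Hypothetical session: three "OK" and two "Cancel" outcomes, T = 0.8.
history = InteractionHistory(threshold=0.8)
for outcome in [True, True, False, True, False]:
    history.record(outcome)
print(history.success_rate(), history.needs_recalibration())
```

In this sketch the counters cover the whole session; whether the history should be reset after each calibration is a design choice that the text leaves open.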

5. Experiment and Analysis

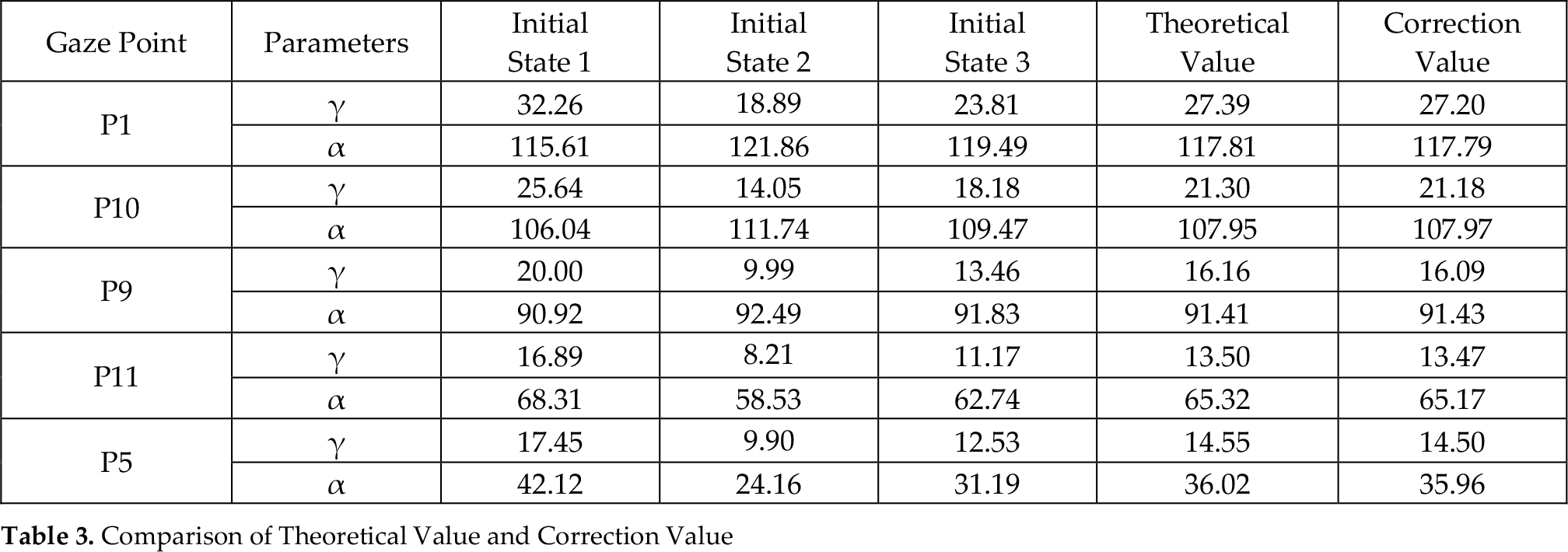

In order to verify the practicality of the proposed adaptive calibration mechanism, some experiments have been performed. The HRI system is set up as shown in Figure 5. The interactive interface, shown in Figure 6, is divided into a 3×3 grid of nine regions and displayed on a screen measuring 306mm × 192mm. The interaction semantics or commands corresponding to each region, such as robot start, robot stop, moving forward and moving backward, are managed hierarchically in an XML file; in this way, the system can offer multiple functions and meet the different interaction requirements of users. Following the sensing model shown in Figure 2 and taking the upper left corner of the interface as the origin of coordinates, the coordinates of the infrared light source are (165,155,190) and those of the camera are (170,155,170). The eye images are captured by the video camera and the eye features and parameters are detected in real time, as shown in Figure 7, so the vector from the Purkinje spot to the pupil centre can be easily calculated. The eye gaze identified angle and the eye gaze direction angle are then obtained with Eq. (3) and Eq. (4), respectively. The robot thereby learns what the user currently wants done and carries it out on the user's behalf.
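To make the interface geometry concrete, the sketch below maps a fixation point on the 306mm × 192mm screen to one of the nine regions. It assumes equal-sized cells numbered row by row from the upper left corner, which is taken as the origin; the actual region numbering in Figure 6 may differ, and the function name is hypothetical.

```python
SCREEN_W_MM = 306.0  # interface width given in the text
SCREEN_H_MM = 192.0  # interface height given in the text


def region_of(x_mm, y_mm, cols=3, rows=3):
    """Return the region number (1..9) containing a fixation point.

    Coordinates are in millimetres from the upper left corner of the
    interface. Regions are assumed to be equal cells numbered row by row
    (an assumption of this sketch). Points off the screen return None.
    """
    if not (0.0 <= x_mm <= SCREEN_W_MM and 0.0 <= y_mm <= SCREEN_H_MM):
        return None
    # min() keeps points on the right or bottom edge inside the last cell.
    col = min(int(x_mm / (SCREEN_W_MM / cols)), cols - 1)
    row = min(int(y_mm / (SCREEN_H_MM / rows)), rows - 1)
    return row * cols + col + 1


print(region_of(153.0, 96.0))  # centre of the screen
```

Each region number could then be looked up in the command table loaded from the XML file to obtain the corresponding robot command.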

Figure 4. Adaptive Calibration Procedure

The data for three initial reference states are obtained and recorded when calibrating the working parameters the first time a user interacts with the system. During the experiments, the three states correspond to the following initial calibration positions: in initial state 1 the user's head is 400mm from the interface, in initial state 2 it is 600mm and in initial state 3 it is 500mm. Five specified points (P1, P10, P9, P11, P5) were used for calibration, as shown in Figure 3.

Figure 5. The HRI System work environment

Figure 6. The interactive interface of the system

Figure 7. Eye features and parameters detected in the interaction process

Finally, the corrected values of gaze identified angle γ and gaze direction angle α were recorded for different work situations. For example, Table 3 shows the experimental data recorded when the user's head is 450mm from the interface.

Table 3. Comparison of Theoretical Value and Correction Value

Figure 8. Gaze identified angle γ correction

Figure 9. Gaze direction angle α correction

The experiment results in Table 3, Figure 8 and Figure 9 indicate that the correction values of both the gaze identified angle γ and the gaze direction angle α closely approximate the theoretical values obtained with the gaze sensing model, Eq. (2) and Eq. (3). Therefore, the proposed mechanism and algorithm can effectively correct the work parameters to meet the demands of the gaze tracking HRI system.

Parameters recorded for Bayesian Decision-Making

The system working parameters can be affected by many factors, such as light source changes, interface size and camera properties, and these factors almost always exist in the system. Additionally, the user's head movements have a significant influence on the accuracy of the system.

According to Eq. (2) and Eq. (3), we can accurately predict the theoretical values described in Table 3. Based on the interaction history, the system soon detects that P(F) has dropped when the user's head position drifts beyond the tolerated range, and a new calibration is then performed. In the calibration process, the tendency of head movements can be judged using Bayesian decision-making theory. For example, when the user's head position changes from 500mm to 450mm off the interface, the work data of

Where

6. Conclusion

Adaptive HRI systems have been researched intensively for a long time, especially since they began to be applied to helping the elderly and disabled. To realize an adaptive infrared-TV eye gaze tracking HRI system, an adaptive calibration mechanism has been provided for correcting the system working parameters, based on a gaze direction sensing model built in this paper. The sensing model was provided for detecting the eye gaze identified parameters, with particular attention paid to situations where the user's head is at different positions relative to the interaction interface. The algorithm included in the calibration mechanism for automatically correcting the work parameters of the system was developed by defining a set of initial reference system work states and analysing the historical information of the interaction between users and the system. Furthermore, taking several application cases and factors into account, the mechanism autonomously identifies the system state and adaptively calibrates the parameters based on minimum error rate Bayesian decision-making theory. The favourable properties of the proposed calibration mechanism and algorithm have been verified on the established gaze tracking HRI system: they can reliably identify the system work states in many situations and can automatically correct the work parameters.

7. Acknowledgments

This work was supported by the National Natural Science Foundation of China (Nos. 51075252 and 61101177).