Abstract

The present work proposes a method for human gait and kinematic analysis. Gait analysis consists of determining the hip, knee and ankle positions through video analysis. Gait kinematics for the thigh and knee are then generated from these data. Evaluations of the gait analysis method indicate an acceptable performance of 86.66% for hip and knee position estimation, and findings comparable with other reported works on gait kinematics. Coordinate systems are assigned according to the Denavit-Hartenberg (DH) algorithm and a direct kinematic model of the legs is obtained. The leg angles obtained from the video analysis are applied to the kinematic model in order to assess the application of this model to robotic legs in a power assisted system.

1. Introduction

Nowadays a large body of research exists on human-robot interaction and on hybridization between humans and robots. Results of this research have appeared in recent years in daily media reports. The application of robotics to human life can be considered a very challenging research area with massive benefits [1]. Exoskeletons and active orthoses [2] are of special interest because they are assistive technologies for physically challenged persons [1, 3]. In rescue operations, firefighters and paramedics can carry equipment, tools, food, medication and surgical instruments over long distances without extra effort or exhaustion [3]. In industrial applications, a worker can carry heavy loads without stress or injuries. The combination of intelligent control and great manoeuvrability by the pilot with the high power of the exoskeleton makes these systems an excellent tool to enhance human capabilities or to return a person with a physical disability to a productive life.

Incorporating robot systems that interact with humans requires systems able to perceive human stimuli so that the robot can perform the action associated with each stimulus [4]. A robot system intended to simulate human gait must receive gait information that allows it to mimic this action. Human gait refers to the style or characteristics of a person's walking [5]. Gait analysis has proved relevant to several fields, including biomechanics, robotics, sport analysis and rehabilitation engineering [6, 7, 8, 9].

The research reported in this paper is an effort to extract real, natural gait information that is subsequently used to generate robotic bipedal gait simulations and to implement these movements in a mechatronic structure. The gait information is extracted through a vision system that automatically analyses human gait. The system provides information on hip, knee and ankle trajectories, as well as gait cycle analysis, cycle time, step and stride length, speed and cadence. The system was developed using the CASIA Gait Database [13].

The paper is organized as follows. The proposed method for visual gait analysis is reported in Section 2. The evaluation of the method is analysed in Section 3. Simulations of gait in a robot system are provided in Section 4. Finally, Section 5 presents the results and conclusions of the work.

2. Algorithm to Obtain the Thigh and Knee Kinematic

2.1. Introduction

The developed algorithm automatically determines position marks of the hip, knee and ankle associated with the different gait phases. This information is then used to define the thigh and knee kinematic.

The gait analysis algorithm is based on the Approximated Median method [14]. This method is used to segment the human silhouette from videos, after which gait markers of the hip, knee and ankle are found. Finally, angles are extracted from the silhouette. In addition, the gait features cycle time, step and stride length, speed and cadence are extracted.

The segmentation algorithm takes the first video frame as the initial background, which is then continuously updated during the analysis to produce the background needed to extract the human silhouette.
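The initialisation and update just described can be illustrated with a minimal sketch of the general approximated-median idea: each background pixel moves one grey level toward the current frame, and pixels that differ strongly from the background are marked as foreground. The function names, the threshold value and the tiny test frames are assumptions made for illustration. The parameter used in this work to keep slow objects out of the background is not modelled here.

```python
def update_background(background, frame):
    """Approximated-median step: move each background pixel one grey
    level toward the corresponding pixel of the current frame."""
    return [
        [b + (f > b) - (f < b) for b, f in zip(bg_row, fr_row)]
        for bg_row, fr_row in zip(background, frame)
    ]


def foreground_mask(background, frame, threshold=30):
    """Mark pixels whose absolute difference from the background
    exceeds the (hypothetical) threshold."""
    return [
        [abs(f - b) > threshold for b, f in zip(bg_row, fr_row)]
        for bg_row, fr_row in zip(background, frame)
    ]


# Tiny 2x3 greyscale example; the first frame is the initial background.
frames = [
    [[10, 10, 10], [10, 10, 10]],
    [[10, 200, 10], [12, 10, 8]],
]
background = frames[0]
mask = foreground_mask(background, frames[1])
background = update_background(background, frames[1])
```

Because the background changes by at most one grey level per frame, a briefly passing object has little influence on the model, which is the property that makes the method attractive for silhouette extraction.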

2.2. Segmentation Stage

Considering a video

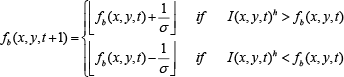

The background update is achieved by [14]

In order to avoid the incorporation of slow objects into the background, a parameter

Once the background is modelled, the dynamic objects can be obtained, Figure 1c, by [14]

where

a) Background model

2.3. Gait Mark Location in Non-occlusion Frames

Based on the image

The height is computed first because the row positions of the neck, shoulder, hip, knee and ankle depend on it. Based on anatomical studies [16, 17], these row positions are estimated as [15, 18]

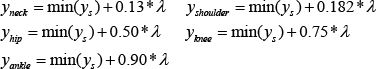

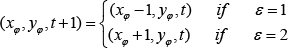

a) Silhouette regions, b) skeletonization, video 124-nm-04-090 CASIA gait database
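The dependence of the landmark rows on the silhouette height can be sketched as follows. The height fractions below are assumed values in the style of commonly cited anthropometric proportions (measured from the floor) and are not necessarily the coefficients used in this work. The function and variable names are likewise hypothetical.

```python
# Assumed fractions of body height measured from the floor; the
# coefficients used in the paper may differ.
ASSUMED_HEIGHT_FRACTIONS = {
    "neck": 0.870,
    "shoulder": 0.818,
    "hip": 0.530,
    "knee": 0.285,
    "ankle": 0.039,
}


def silhouette_extent(mask):
    """Return (top_row, bottom_row, height) of a binary silhouette mask."""
    rows = [r for r, row in enumerate(mask) if any(row)]
    if not rows:
        raise ValueError("mask contains no silhouette pixels")
    return rows[0], rows[-1], rows[-1] - rows[0] + 1


def landmark_rows(mask, fractions=ASSUMED_HEIGHT_FRACTIONS):
    """Estimate the image row of each landmark from the silhouette height."""
    _, bottom, height = silhouette_extent(mask)
    return {name: round(bottom - frac * height) for name, frac in fractions.items()}


# Synthetic mask: a 200-row silhouette, one pixel wide.
example_mask = [[True] for _ in range(200)]
example_rows = landmark_rows(example_mask)
```

Image rows grow downward, so a landmark that is higher on the body receives a smaller row index.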

Based on the previous estimations, the human silhouette is divided into several horizontal regions, Figure 2a. These regions are used to obtain a better linearization of the skeleton model of the silhouette. The regions are defined as follows: region 1 [

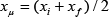

The hip coordinates correspond to the midpoint of the row

The segments of this skeleton are used to estimate the body marks relevant to the gait analysis. These segments are determined by computing the angles of the skeleton segments

For example

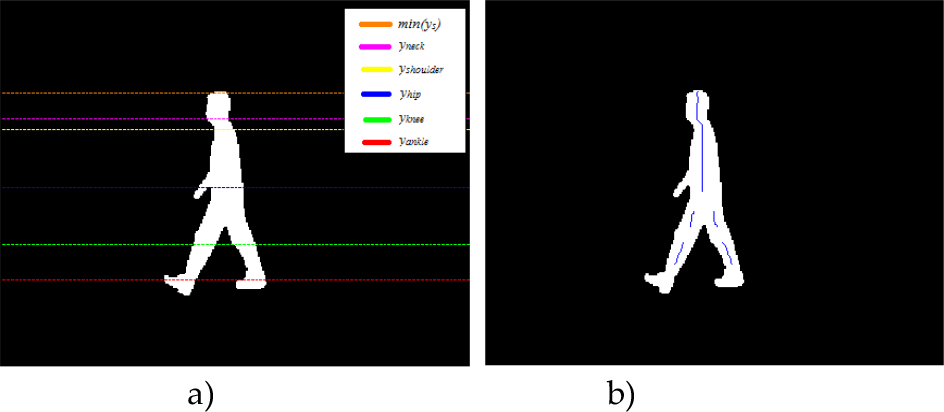

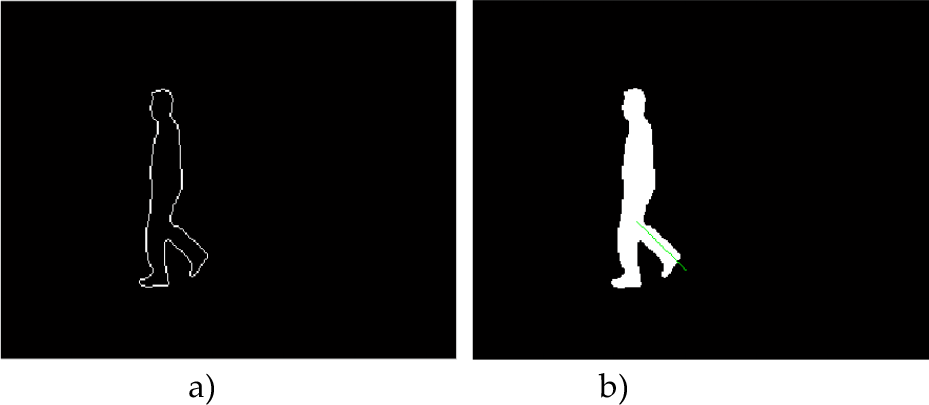

a) Silhouette segments, b) gait mark estimations, video 124-nm-04-090 CASIA gait database

where

2.4. Knee and Ankle Mark Location During Occlusion Frames of the Stance Limb, Initial and mid-Swing

One of the problems in gait body mark location is leg occlusion, where the previous method does not work. This situation occurs in the stages of mid-stance and mid-swing. Knee and ankle detection during occlusion frames is therefore performed as follows. The stance limb marks during occlusion are obtained from its marks prior to the occlusion, because the knee and ankle movements of the stance limb are minimal. The new knee position is calculated by

where (

2.5. Knee and Ankle Mark Location During Occlusion Frames of the Swinging Limb, Initial Swing

The next step is to calculate the swinging limb marks during occlusion. In the CASIA database, occlusion lasts 4 or 5 frames. The first two frames correspond to the initial swing and the remaining frames to the mid-swing.

a) Silhouette contour during occlusion, b) swinging limb slope,

Gait mark detection of the swinging limb during the initial swing is obtained by analysis of a contour silhouette, Figure 4, as follows. A line segment is obtained by finding the rightmost points of the calf of the leg. These points are determined by analysis of the rows

It is important to notice that segment linearization is only computed on the maximum points of the perimeter with a negative slope, because these points correspond to the calf of the leg. It can be observed in Figure 4a that the sole has a positive slope, so using the sole points for segment linearization would give an incorrect swinging limb angle. Considering the aforementioned issues, the swinging limb slope

and the knee coordinate, (

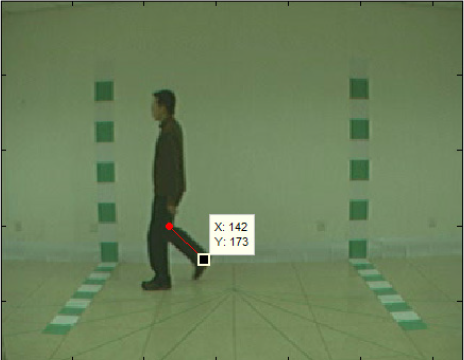

Figure 5 illustrates the marks of knee and ankle in the swinging limb during occlusion.
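The segment linearization of the calf described in this section can be illustrated with a least-squares line fit. The sketch assumes that the rightmost contour point of each row is already available as a (row, column) pair, starting at the calf, and that "negative slope" means the column decreases as the row increases. Both are assumptions, as are the function names and sample points.

```python
def calf_run(rightmost):
    """Keep the leading run of points whose column keeps decreasing
    (assumed meaning of 'negative slope'); stop where the contour
    turns outward, which is assumed to be the sole."""
    kept = [rightmost[0]]
    for point in rightmost[1:]:
        if point[1] >= kept[-1][1]:
            break
        kept.append(point)
    return kept


def fit_line(points):
    """Least-squares fit of column = slope * row + intercept."""
    n = len(points)
    mean_r = sum(r for r, _ in points) / n
    mean_c = sum(c for _, c in points) / n
    sxx = sum((r - mean_r) ** 2 for r, _ in points)
    sxy = sum((r - mean_r) * (c - mean_c) for r, c in points)
    slope = sxy / sxx
    return slope, mean_c - slope * mean_r


# Hypothetical rightmost contour points: calf first, then the sole.
rightmost_points = [(100, 60), (101, 58), (102, 56), (103, 54), (104, 57), (105, 61)]
calf = calf_run(rightmost_points)
slope, intercept = fit_line(calf)
```

Excluding the sole points before the fit is what keeps the estimated limb slope from being biased, in line with the observation made for Figure 4a.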

Knee and ankle mark location for initial swing during occlusion

2.6. Knee and Ankle Mark Location During Occlusion Frames of the Swinging Limb, Mid-swing

The knee and ankle location during mid-swing requires a new approach because there is a significant loss of the swinging limb information. First the ankle position is located. The ankle row

Ankle and knee positions during mid-swing

2.7. Cycle Time

Cycle time,

where

Position representation to obtain cycle time

2.8. Step and Stride Length

A step is the distance from the heel strike of the stance foot to the heel strike of the foot ending the swing. A stride is the distance from a heel strike of one foot to the next heel strike of the same foot. The step length is measured from the heel strike in single limb support in the first occlusion frame to the end of the step, Figures 7a–7b. The stride length is the distance covered from the heel strike in a single limb support to the third single limb support, Figures 7a–7c.
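The step and stride definitions can be expressed compactly once the heel-strike positions are known. The sketch below assumes a list of horizontal heel-strike positions in walking order with alternating feet. The function name and the pixel values are hypothetical.

```python
def step_and_stride_lengths(heel_strikes):
    """heel_strikes: horizontal heel-strike positions in walking order,
    with the feet alternating. Steps span consecutive heel strikes
    (opposite feet); strides span every second one (same foot)."""
    steps = [abs(b - a) for a, b in zip(heel_strikes, heel_strikes[1:])]
    strides = [abs(c - a) for a, c in zip(heel_strikes, heel_strikes[2:])]
    return steps, strides


heel_strike_columns = [40, 98, 157, 215]  # hypothetical pixel columns
steps, strides = step_and_stride_lengths(heel_strike_columns)
```

Each stride equals the sum of the two steps it contains, which gives a simple consistency check on the detected heel strikes.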

2.9. Speed and Cadence

Speed is computed by [18],

where

In this research the unit time used was 120 seconds [19].
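As an illustration, speed and cadence can be related to the cycle time through the standard gait relations: speed equals stride length divided by cycle time, and cadence in steps per minute equals 120 divided by the cycle time in seconds, since one cycle contains two steps. These relations are assumed here and may differ in form from the expressions of [18]. The frame rate and the numeric values are hypothetical.

```python
def cycle_time_seconds(n_frames, fps):
    """Duration of one gait cycle that spans n_frames at a given frame rate."""
    return n_frames / fps


def gait_speed(stride_length, cycle_time):
    """Speed in stride-length units per second."""
    return stride_length / cycle_time


def cadence_steps_per_minute(cycle_time):
    """Two steps per cycle and 60 seconds per minute give 120 / cycle time."""
    return 120.0 / cycle_time


cycle_time = cycle_time_seconds(n_frames=25, fps=25)  # hypothetical values
speed = gait_speed(stride_length=1.4, cycle_time=cycle_time)
cadence = cadence_steps_per_minute(cycle_time)
```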

2.10. Gait Marks Adjustment

The previous sections described the estimation of the main gait marks. In order to obtain a better model of the gait kinematic, the estimated marks of the hip, knee and ankle are adjusted. The adjustment is performed through a local search for a better location, considering the leg shape and the gait cycle phase. The basic idea is to adjust a location if the estimation is, for example, too close to an edge. Due to space restrictions only a brief description is provided in this paper; a complete description can be found in [20].

3. Evaluation of the Algorithm

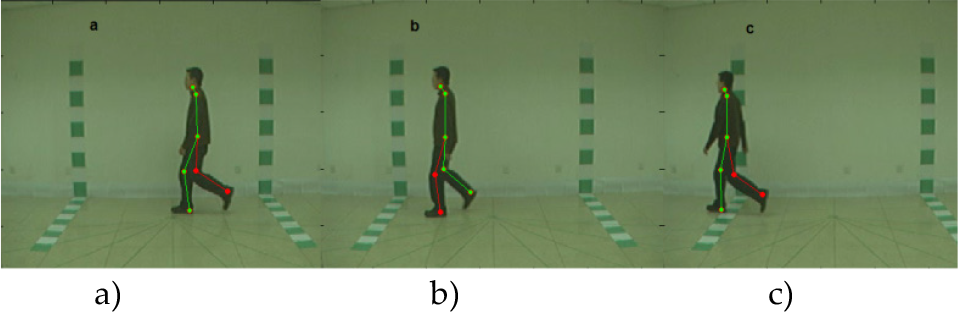

3.1. Ground Truth

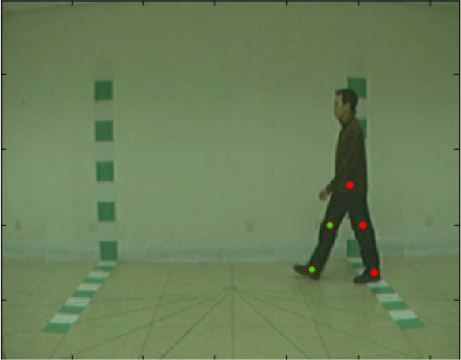

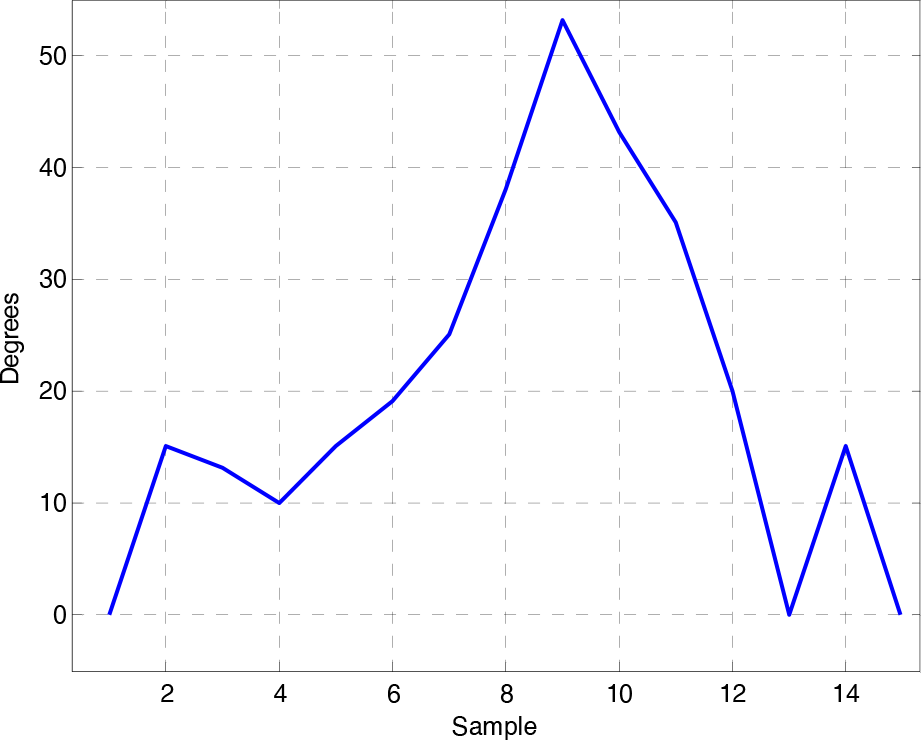

The gait mark determination algorithm was evaluated against a ground truth. The ground truth was generated by manually locating the gait marks of the hip, knee and ankle as shown in Figure 8. The ground truth was obtained from three randomly chosen videos: 124-nm-06-090 frames 50 to 67, 007-nm-04-090 frames 64 to 87 and 100-nm-06-090 frames 50 to 87. One video corresponds to a man and the other two to women. Comparisons of the kinematic results of the proposed algorithm versus the ground truth are illustrated in Figures 9–11. The upper plots show the angles of the knee and the lower plots the angles of the hip. The x-axis is the video frame number and the y-axis is the joint angle.

Ground truth for a frame of video 124-nm-06-090

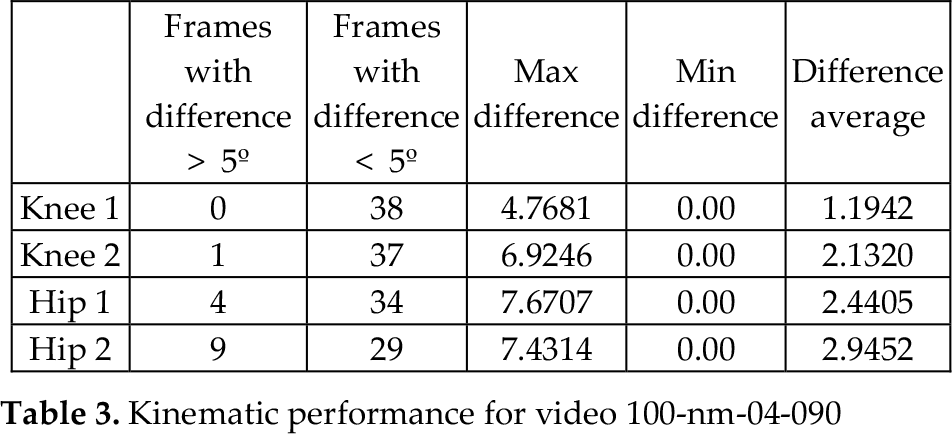

Quantitative results are provided in Tables 1–3. The green colour corresponds to the most forward leg.

On the one hand, the evaluation of video 124-nm-06-090, Figure 9 and Table 1, shows that the worst case occurs in hip 1, with errors greater than 5° in 5 frames. On the other hand, the positions of the knees are correctly located, as is hip 2 in several frames. The good performance of the algorithm can also be observed in that 97 of 108 frames, 89%, have differences of less than 5°.

Kinematic comparison of the proposed algorithm versus ground truth, video 124-nm-06-090

Kinematic performance for video 124-nm-06-090

The results of video 007-nm-04-090, Figure 10 and Table 2, are not as good as those of video 124-nm-06-090, but they are still acceptable. They indicate 80% correct detection, 76 frames out of 96 with an error of less than 5°.

Video 100-nm-04-090 presents the best results, Figure 11 and Table 3. It reaches a performance of 91%, 138 of 152 frames, for differences of less than 5°. The average of the maximum differences is 6.695°.
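The arithmetic behind the overall 86.66% figure can be checked with a short sketch. It recomputes the per-video ratios from the frame counts given above and averages the three reported percentages. The pooled ratio over all frames is shown only for comparison and is not a figure reported in this work. Variable names are illustrative.

```python
# (frames within 5 degrees, total frames) as stated in the text.
counts = {
    "124-nm-06-090": (97, 108),
    "007-nm-04-090": (76, 96),
    "100-nm-04-090": (138, 152),
}
# Percentages as reported in the text.
reported = {"124-nm-06-090": 89, "007-nm-04-090": 80, "100-nm-04-090": 91}

# Exact per-video ratios, for comparison with the reported values.
exact = {video: 100.0 * ok / total for video, (ok, total) in counts.items()}

# Mean of the three reported percentages (260 / 3).
mean_reported = sum(reported.values()) / len(reported)

# Pooled ratio over all frames; not a figure from the paper.
pooled = 100.0 * sum(ok for ok, _ in counts.values()) / sum(t for _, t in counts.values())

for video in counts:
    print(f"{video}: exact {exact[video]:.2f}%, reported {reported[video]}%")
print(f"mean of reported percentages: {mean_reported:.2f}%")
print(f"pooled over all frames: {pooled:.2f}%")
```

The mean of the reported percentages is 260/3, which agrees with the overall 86.66% quoted in the abstract and conclusions up to rounding.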

Kinematic comparison of the proposed algorithm versus ground truth, video 007-nm-04-090

Kinematic performance for video 007-nm-04-090

Kinematic comparison of the proposed algorithm versus ground truth, video 100-nm-04-090

Comparisons of the proposed method with the work of Yoo [18] and with the medical experiments reported by Murray [21] and Kadaba [22], with respect to cycle time, step and stride length and gait speed, were also performed in this research. These results also showed findings comparable with the medical results.

Kinematic performance for video 100-nm-04-090

In general, and according to the previous results, the proposed method provides human gait kinematic information that is acceptable for use in a powered assistance robotic mechanism. The use of this information in robotic simulation is described in the next section.

4. Kinematic analysis

Applying the DH algorithm to the legs and trunk, and considering only the sagittal plane, results in the coordinate system assignment shown in Figure 12. The body is upright with the left leg raised to avoid overlapping of coordinate systems in the image.
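As an illustration of a direct kinematic model built from DH parameters, the following minimal sketch composes standard DH homogeneous transforms for a planar chain from the stance ankle to the knee and hip. It assumes revolute joints with zero twist and zero offset in the sagittal plane. The link lengths, joint angles and function names are hypothetical and do not reproduce the coordinate assignment of Figure 12.

```python
import math


def dh_matrix(theta, d, a, alpha):
    """Standard Denavit-Hartenberg homogeneous transform."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [
        [ct, -st * ca, st * sa, a * ct],
        [st, ct * ca, -ct * sa, a * st],
        [0.0, sa, ca, d],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul(A, B):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def planar_chain_positions(joint_angles, link_lengths):
    """Return the (x, y) position of the end of each link, expressed
    in the base frame (here, the stance ankle)."""
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    positions = []
    for theta, a in zip(joint_angles, link_lengths):
        T = matmul(T, dh_matrix(theta, 0.0, a, 0.0))
        positions.append((T[0][3], T[1][3]))
    return positions


# Hypothetical shank and thigh lengths in metres; the shank is vertical
# (ankle angle of 90 degrees) and the knee is fully extended.
knee_xy, hip_xy = planar_chain_positions([math.pi / 2, 0.0], [0.43, 0.45])
```

With the leg fully extended and vertical, the hip lies directly above the ankle at a height equal to the sum of the two link lengths, which is a convenient sanity check for any DH assignment.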

The

Where

Coordinate systems' assignation

Here,

Here,

In order to simulate the motion of the body using the angles obtained from the video sequences, we need to map the swing leg with respect to the stance leg. Considering the right leg as the stance leg, we can map the left knee with respect to the right ankle using Equation (23).

The transformation matrices

The transformation matrix

Now, considering the left leg as the stance leg and the right leg as the swing leg, we need to map the right ankle with respect to the left ankle. Equation (25)

The transformation matrices

Equations (17)–(22) are consistent with Equation (9) for the determination of the knee, hip and shoulder positions for both legs, with respect to the previous body mark. Now we need to link or relate the definition of the hip, knee and ankle angles with the definition of the angles in Figure 12. The angle of the knee is defined with respect to the vertical and is measured with an absolute value as shown in Figure 13. The angle of the hip is measured with respect to vertical, also as shown in Figure 13. Considering the right leg as the stance leg, we can calculate the angle of the stance (right) knee using Equation (26).

The angle of the swinging (left) knee can be calculated using Equation (27).

Definition of the knee and hip angles

Considering the walking simulation of the system in Figure 12, shown in Figure 14, and using the values for

Comparing this result with the upper left plot in Figure 9, we can see a very good approximation of the knee angle pattern. The angle of the hip can be calculated in a similar way to that used for the knee.
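The angle convention of Figure 13, in which angles are measured with respect to the vertical, can be illustrated by computing the angle of a limb segment from two gait marks. The sketch assumes marks given as (row, column) image coordinates with rows growing downward, and a sign convention in which positive angles point toward increasing columns. The function name and the coordinates are hypothetical.

```python
import math


def angle_from_vertical(upper, lower):
    """Signed angle in degrees between the segment upper -> lower and
    the downward vertical; marks are (row, column) image coordinates."""
    d_row = lower[0] - upper[0]
    d_col = lower[1] - upper[1]
    return math.degrees(math.atan2(d_col, d_row))


hip, knee, ankle = (100, 50), (150, 100), (200, 100)  # hypothetical marks
hip_angle = angle_from_vertical(hip, knee)            # thigh versus vertical
knee_angle = abs(angle_from_vertical(knee, ankle))    # absolute value, as for the knee
```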

5. Results and conclusions

This paper presented an automatic visual gait analysis method able to detect hip, knee and ankle positions in the different gait phases, as well as gait cycle analysis, cycle time, step and stride length, speed and cadence. The proposed method was also able to deal with the occlusion situations that occur in the stages of mid-stance and mid-swing. Besides being automatic, the visual gait analysis method shows acceptable results compared with the manual ground truth, as demonstrated in Section 3. Quantitative results indicate 86.66% correct detection for the thigh and knee angles, with errors of less than 5°. A kinematic analysis was also completed, obtaining the equations to map the main body marks with respect to the stance ankle. Simulation results present a very good approximation of the knee angle pattern compared with the data obtained in the gait analysis part. In addition, some equations are given to relate the medical definition of the limb angles to the definition given by the coordinate system assignment using the DH algorithm.

A simulation of a walking pattern

Angle of the knee as defined in Figure 13

6. Acknowledgments

The authors thank Fondo Mixto de Fomento a la Investigación Científica y Tecnológica CONACYT-Gobierno del Estado de Chihuahua for supporting this research under grant CHIH-2009-C02-125358. Portions of the research in this paper use the CASIA Gait Database collected by the Institute of Automation, Chinese Academy of Sciences.