Abstract

For robots to be able to coexist with people in future everyday human environments, they must be able to act in a safe, natural and comfortable way. This work addresses the motion of a mobile robot in an environment where humans potentially want to interact with it. The designed system consists of three main components: a Kalman filter-based algorithm that derives a person's state information (position, velocity and orientation) relative to the robot; another algorithm that uses a Case-Based Reasoning approach to estimate if a person wants to interact with the robot; and, finally, a navigation system that uses a potential field to derive motion that respects the person's social zones and perceived interest in interaction.

The operation of the system is evaluated in a controlled scenario in an open hall environment. It is demonstrated that the robot is able to learn to estimate if a person wishes to interact, and that the system is capable of adapting to changing behaviours of the humans in the environment.

1. Introduction

Mobile robots are moving out into open-ended human environments such as private homes or even public spaces. The success of this shift relies on the robots' abilities to be responsive to, and interact with, people in a sociable, natural and intuitive manner. Research addressing these issues is labelled Human-Robot Interaction (HRI) (Dautenhahn K., 2007; Breazeal, 2002; Kanda, 2007). The focus is often on close interaction tasks like gestures, object recognition, manipulation, facial expressions or speech (Althaus, Ishiguro, Kanda, Miyashita & Christensen, 2004; Fong, Nourbakhsh & Dautenhahn, 2003; Stiefelhagen, et al., 2007; Gockley, Forlizzi & Simmons, 2006; Kahn, Ishiguro, Friedman & Kanda, 2006). In shared dynamic environments, there is also a need for motion planning and navigation, which is another widely studied research topic. However, rather than just being a question of getting from A to B while avoiding obstacles, navigation algorithms have to adapt to people in the environment. As in Althaus, et al. (2004), HRI and navigation must be addressed simultaneously to support the idea of shared environments.

An example from everyday human interaction could be a person working with customer service in a shopping centre. This person will detect other people in the environment, actively aiming to provide help. The service person will try to determine their interest or need for help and adjust actions accordingly. The service person will, over time, learn from experience and the degree of success, and thereby determine subsequent behaviour in similar situations. The service person may start approaching, while determining if the person being observed is interested in help. If the person is perceived as being interested in help, the service person will typically aim to reach a position in front of the person to initiate a closer interaction. If not, the service person will get out of the way and initiate a new interaction process by looking for other people.

This may be a simplified process, but enabling a robot to support such an interaction process is not a simple task. Detection requires that the robot is able to locate people in the environment and discriminate them from obstacles. Observation requires that people can be tracked and requires an interpretation of the interest in interaction. The robot must be able to learn from previous interaction to support this interpretation. Given an interpretation of the interest to interact, the robot must adapt its navigation strategy to either approach the person in a way perceived as comfortable or get out of the way. Finally, some type of closer interaction must be supported.

Detection and tracking of people, that is, estimation of the position and orientation (which combined are denoted as pose), has been discussed in Sisbot, et al. (2006) and Jenkins, Serrano & Loper (2007). Several sensors have been used, including 2D and 3D vision (Dornaika & Raducanu, 2008; Munoz-Salinas, Aguirre, Garcia-Silvente & Gonzalez, 2005), thermal tracking (Cielniak, Treptow & Duckett, 2005) or range scans (Rodgers, Anguelov, Pang & Koller, 2006; Fod, Howard & Mataric, 2002; Kirby, Forlizzi & Simmons, 2007). Laser scans are typically used for person detection, whereas the combination with cameras also produces pose estimates (Feil-Seifer & Mataric, 2005; Michalowski, Sabanovic & Simmons, 2006). Using face detection requires the person to always face the robot, and to be close enough for a sufficiently high resolution image of the face to be obtained (Kleinehagenbrock, Lang, Fritsch, Lomker, Fink & Sagerer, 2002), which limits its use in environments where people are moving and turning frequently. Using 2D laser range scanners provides a longer range and lower computational complexity. The extra range enables the robot to detect the motion of people further away and thus have enough time to react to people moving at a higher speed.

Interpreting another person's interest in engaging in interaction is an important component of the human cognitive system and social intelligence, but it is such a complex sensory task that even humans sometimes have difficulties with it. Research that addresses the ability to recognise human social signals and behaviour is called Social Signal Processing (SSP) (Vinciarelli, Pantic & Bourlard, 2009; Vinciarelli, Pantic, Bourlard & Pentland, 2008; Zeng, Pantic, Roisman & Huang, 2008). In the example scenario, with the person from the customer service, learning was used as a way to build on previous experiences to form an interpretation of interest for a given person. Researchers have investigated Case-Based Reasoning (CBR) (Kolodner, 1993; Ram, Arkin, Moorman & Clark, 1997). CBR allows recalling and interpreting past experiences, as well as generating new cases to represent knowledge from new experiences. To our knowledge, CBR has not yet been used elsewhere in an HRI context but has proven successful in solving spatial-temporal problems in robotics (Likhachev & Arkin, 2001; Ram, Arkin, Moorman & Clark, 1997; Jurisica & Glasgow, 1995). An advantage of using CBR is the simple implementation and the simple parameter tuning. Other methods, like Hidden Markov Models, have been used to learn to identify the behaviour of humans (Kelley, Tavakkoli, King, Nicolescu, Nicolescu & Bebis, 2008). Bayesian inference algorithms and Hidden Markov Models have also been successfully applied to modelling and predicting spatial user information (Govea, 2007).

Approaching a person in a way that is perceived as natural and comfortable requires human-aware navigation. Human-aware navigation, which respects the person's social spaces, is discussed in Walters M. L., et al. (2005), Dautenhahn, et al. (2006) and Takayama & Pantofaru (2009). Several authors have investigated the interest of people to interact with robots that exhibit different expressions or follow different spatial behaviour schemes (Bruce, Nourbakhsh & Simmons, 2001; Christensen & Pacchierotti, 2005; Dautenhahn, et al., 2006; Hanajima, Ohta, Hikita & Yamashita, 2005). Michalowski, Sabanovic & Simmons (2006) review models that describe social engagement based on the spatial relationships between a robot and a person, with emphasis on the movement of the person. Although the robot is not perceived as a human when encountering people, the hypothesis is that robot behavioural reactions with respect to motion should resemble human-human scenarios. This is supported by Dautenhahn, et al. (2006) and Walters M. L., et al. (2005). Hall has investigated the spatial relationship between humans (proxemics) as outlined in Hall (1963; 1966), which can be used for Human-Robot encounters. This was also studied by Walters M. L., et al. (2005), whose research supports the use of Hall's proxemics in relation to robotics.

In this paper the focus is on detection, observation, navigation, and learning to determine the person's interest in close interaction, which means that psychological studies of how natural and comfortable the motion is perceived to be are outside the scope of this work. A simplified close interaction has also been implemented to complete the interaction process. We present a novel method for inferring a human's motion state (position, orientation and velocity) from 2D laser range measurements. The method relies on a fast algorithm for detecting the legs of people (Feil-Seifer & Mataric, 2005; Xavier, Pacheco, Castro, Ruano & Nunes, 2005), and fuses this with odometry data in a Kalman filter to obtain the motion state. Observation and learning are based on a CBR algorithm. Based on observed SSP signals, the CBR algorithm estimates a person's interest in interaction. In the given implementation, only the observed motion patterns are used as SSP information. For navigation, an adaptive human-aware navigation algorithm using a potential field, based on results from Fajen & Warren (2003), Sisbot, et al. (2005) and Sisbot, Clodic, Urias, Fontmarty, Brèthes & Alami (2006), is presented. The shape of the potential field is derived from the human-human spatial relations described by Hall (1963).

An overview of how the different components are connected in the current system.

The algorithms have been implemented and demonstrated to work together in an open environment at the university.

2. Methodology

The robotic system is designed for a specific set-up, where a person encounters a robot in an open space and starts an interaction process as described above. We define the part of the interaction process starting from when the person is detected, until close interaction is initiated, as a session. In each session the person might, or might not, wish to engage in close interaction with the robot. The session is defined to last as long as the robot and person mutually adapt their motion according to each other, and is considered over when the person has either disappeared from the robot's field of view, or has initiated close interaction. Close interaction is, in this set-up, initiated by pressing a simple button on the robot. In this case the person is perceived by the robot to be interested in close interaction. Since SSP is difficult (Vinciarelli, Pantic, Bourlard & Pentland, 2008) and interest cannot be directly measured as an absolute value, we refer to interest only as it is perceived by the robot and not as the true internal state of the person. When the person has disappeared without pressing the button, they are considered to not be interested in further interaction. When a session is over, the system proceeds to detecting new persons, and no further close interaction is considered.

The structure of the proposed system set-up is outlined in Figure 1. First, the pose of the person is estimated using a Kalman filter and an autoregressive filter. This information is sent to a person evaluation algorithm, which provides information to the navigation subsystem. The loop is closed by the robot, which executes the path in the real world and thus has an effect on how humans move in the environment.

2.1 Person Pose Estimation

First, the pose and velocity of the people in the robot's vicinity are determined. The method is derived for only one person, but the same method can be utilised when several people are present.

We define the pose of a person as the position of the person given in the robot's coordinate system, and the angle towards the robot, as seen from the person (p_pers and θ).

The estimation of the person's pose can be broken down into three steps:

Measure the position of the person;

Use the continuous position measurements to find the state (position and velocity) of the person;

Use the velocity estimate to find the orientation (θ) of the person.

2.1.1 Position Measurements

The position measurements could, in principle, be done by any instrument, for example, external or on-board devices such as cameras, laser scanners or heat sensors. In our case we use an on-board laser range finder to find the position of the person, which has the advantage that it supports autonomy with no external sensors. Furthermore, processing a laser scan is computationally very efficient, compared to image processing from on-board cameras. The laser scans the area just below knee height, and a typical output from the laser range scanner is seen in the accompanying figure.

The state variables p_pers and v_pers hold the position and velocity of the person in the robot's coordinate frame. θ is the orientation of the person.

2.1.2 Person State Estimation

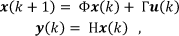

The next step is to estimate the velocity vector of the person in the robot's coordinate frame. The velocity of the person cannot be calculated directly from the person position measurements, since there will be an offset corresponding to the movement of the robot itself. If, for example, the person is standing still and the robot is moving, then different position measurements will be obtained, but the velocity estimate should still be zero. Therefore, the position measurements from the laser range finder algorithm and the robot odometry measurements are combined to obtain a state estimate. The fusion of these measurements is done in a Kalman filter, where a standard discrete state space model formulation for the system is used:

where

An image of the ranges from one laser rangefinder scan. The distance scale on the polar plot is in metres and the angle is in degrees.

The state is comprised of the person's position, person's velocity and the robot's velocity. The measurements are the estimate of the person's position and the odometry measurements.

The position of the person in the robot's coordinate frame (

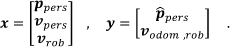

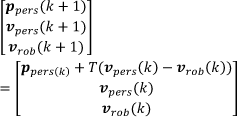

where T is the sampling time. The output matrix H from Eq. (1) follows directly from the measurement vector in Eq. (2), and thus Φ and H are given as:

where

The error in the linear process model, which is introduced by the rotational velocity, is compensated for by adding it to the input Γ

where

If the sample time is small compared to the rotational velocity, then a small angle approximation of the equations can be used. This means that cos(ψ̇T) ≈ 1 and sin(ψ̇T) ≈ ψ̇T, and Eq. (5) can be rewritten as:

where p_x and p_y are the x and y coordinates of the vector

where

If Φ(k) is not constant, the Kalman gain does not converge to a steady state value. Small deviations of Φ(k) will not be a practical problem, but some infrequent long sampling intervals have been observed. In the practical implementation, Φ(k) and the corresponding Kalman gain are therefore calculated online.
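The filter structure described in this section can be sketched in code. The following is a minimal single-axis sketch, not the implementation used in the experiments: the constant-velocity process model, the noise levels `q` and `r`, the initial covariance and all function names are assumptions made for illustration, and the correction for the rotational velocity is omitted. It recomputes Φ(k) and the Kalman gain at every step from the actual sampling interval, and reproduces the example of a person standing still while the robot moves.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(a):
    return [list(row) for row in zip(*a)]

def add(a, b, sign=1.0):
    """Element-wise a + sign * b."""
    return [[x + sign * y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]

def inv2(m):
    """Inverse of a 2x2 matrix."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]

def scaled_identity(n, s):
    return [[s if i == j else 0.0 for j in range(n)] for i in range(n)]

# Measurements: person position (laser) and robot velocity (odometry).
H = [[1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0]]

def kalman_step(x, P, z, T, q=0.01, r=0.05):
    """One predict/update cycle for the state [p_pers, v_pers, v_rob] on one axis.

    q and r are assumed process and measurement noise variances.
    """
    # Assumed process model: the relative position changes with the
    # difference between the person's and the robot's velocity.
    phi = [[1.0, T, -T],
           [0.0, 1.0, 0.0],
           [0.0, 0.0, 1.0]]
    # Prediction with the transition matrix for this sampling interval.
    x = matmul(phi, x)
    P = add(matmul(matmul(phi, P), transpose(phi)), scaled_identity(3, q))
    # Gain computed online, since phi changes with T.
    S = add(matmul(matmul(H, P), transpose(H)), scaled_identity(2, r))
    K = matmul(matmul(P, transpose(H)), inv2(S))
    x = add(x, matmul(K, add(z, matmul(H, x), sign=-1.0)))
    P = add(P, matmul(matmul(K, H), P), sign=-1.0)
    return x, P

# Person standing still 5 m away; robot approaching at 0.5 m/s.
x = [[5.0], [0.0], [0.0]]
P = scaled_identity(3, 1.0)
p_true = 5.0
for k in range(100):
    T = 0.3 if k % 10 == 9 else 0.1   # occasional long sampling interval
    p_true -= 0.5 * T                 # measured position shrinks as the robot moves
    x, P = kalman_step(x, P, [[p_true], [0.5]], T)
v_pers, v_rob = x[1][0], x[2][0]
```

In this scenario the measured position changes at every step, yet the estimated person velocity `v_pers` stays close to zero because the change is attributed to the robot's own motion.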

2.1.3 Orientation Estimation

The direction of the velocity vector found above is not necessarily equal to the orientation of the person.

The adaptive coefficient β in the first order autoregressive filter used in the orientation estimation. The faster the person moves relative to the robot, the lower β is and the more the filter relies on the measurements.

Consider, for example, a situation where the person is standing almost still in front of the robot, but is moving slightly backwards. This means that the velocity vector is pointing away from the robot, although the person is facing the robot. To obtain a correct orientation estimate the velocity estimate is filtered through a first order autoregressive filter, with adaptive coefficients relative to the velocity. When the person is moving quickly, the direction of the velocity vector has a large weight, but if the person is moving slowly, the old orientation estimate is given a larger weight. The autoregressive filter is implemented as:

where β has been chosen experimentally relative to the absolute velocity v as:

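Since the filter equation and the β schedule are not reproduced above, the following is only a minimal sketch of a first-order autoregressive orientation filter with a speed-dependent coefficient. The linear β schedule, its limits (`beta_min`, `beta_max`, `v_sat`) and the function names are hypothetical; only the qualitative behaviour (low speed gives a high β and therefore more weight on the old estimate) follows the text.

```python
import math

def wrap_angle(a):
    """Wrap an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))

def beta_for_speed(speed, beta_min=0.1, beta_max=0.9, v_sat=1.0):
    """Hypothetical schedule: beta falls linearly from beta_max to beta_min
    as the speed rises from 0 to v_sat (m/s)."""
    frac = min(speed / v_sat, 1.0)
    return beta_max - (beta_max - beta_min) * frac

def update_orientation(theta_old, vx, vy):
    """Blend the old orientation with the direction of the velocity vector."""
    beta = beta_for_speed(math.hypot(vx, vy))
    theta_meas = math.atan2(vy, vx)
    # Same as beta * theta_old + (1 - beta) * theta_meas, but taken along
    # the shortest arc so that angles near +/- pi are handled correctly.
    step = (1.0 - beta) * wrap_angle(theta_meas - theta_old)
    return wrap_angle(theta_old + step)

# A person drifting slowly keeps most of the old orientation estimate,
# while a fast-moving person is assigned the direction of travel.
slow = update_orientation(3.0, 0.02, 0.0)
fast = update_orientation(3.0, 1.0, 0.0)
```

With these assumed values, the slow case moves the estimate only a little from 3.0 rad, whereas the fast case ends up close to the velocity direction of 0 rad.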

2.2 Learning and Evaluation of Interest in Interaction

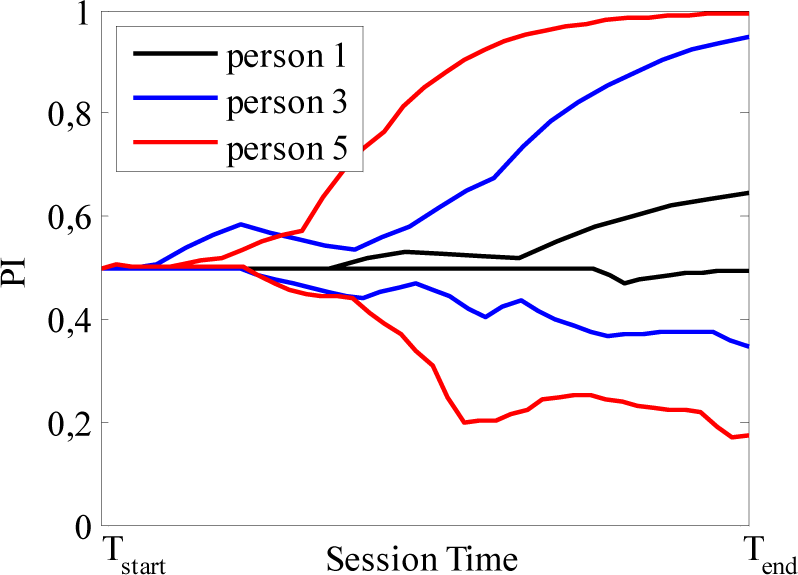

To represent the person's interest in interaction, a continuous fuzzy variable, Person Indication (PI), is introduced, which serves as an indication of whether or not the person is interested in interaction. PI belongs to the interval [0,1], where PI=1 represents the case where the robot believes that the person wishes to interact, and PI=0 the case where the person is not interested.

To determine the value of PI, an adaptive person evaluator, based on a CBR system, is designed. The method for estimating the PI of a person is to observe the behaviour of the person continuously, find out if something similar has happened in previous sessions, and set PI according to what has happened in previous sessions. The implementation of the CBR system is basically a database system which holds a number of cases describing each session. There are two distinct stages of the CBR system operation. The first is the evaluation of the PI, where the robot estimates the PI during a session using the experience stored in the database. The second stage is the learning stage, where the information from a new experience is used to update the database. The two stages can be seen in Figure 5, which shows a state diagram of the operation of the CBR system. Two different databases are used. The Case Library is the main database which represents all the knowledge the robot has learned so far, and is used to evaluate PI during a session. All information obtained during a session is saved in the Temporary Cases database. After a session is over, the information from the Temporary Cases is used to update the knowledge in the Case Library.

Operation of the CBR system. First the robot starts to look for people. When a person is found, it evaluates whether the person wants to interact. After a potential interaction, the robot updates the knowledge library with information about the interaction session, and starts to look for other people.

2.2.1 Database Format

Specifying a case is a question of determining a distinct and representative set of features connected to the event of a human-robot interaction session. The set of features could be anything identifying the specific situation, such as the person's velocity and direction, position, relative position and velocity to other people, gestures, time of day, day of the week, location, height of the person, colour of their clothes, their facial expression, their apparent age and gender, etc. The selected features depend on the available sensors. For the experimental system considered in this paper, we consider only the motion behaviour of the person, and thus the following fields corresponding to each case will be used in the CBR database:

CaseID, a reference number for each case

(p_x, p_y), the estimated position of the person

(v_x, v_y), the estimated velocity of the person

θ, the estimated orientation of the person

PI, the corresponding Person Indication output value

To be able to compare cases to see if any are identical, each value is rounded to an implementation-specific resolution. The database parameters are illustrated in the accompanying figure.

Input variables and the corresponding output PI stored in the CBR database.

2.2.2 Evaluation of Person Interest

Initially, when the robot locates a new person in the area, nothing is known about this person, so PI is assigned the default value PI=0.5. After this, the PI of a person is continuously evaluated using the Case Library. First a new case is generated by collecting the relevant set of features described in Section 2.2.1. The case is then compared to existing cases in the Case Library to find matching cases. When searching for matching cases in the Case Library, a similarity function must be used to define when two cases are similar enough to be interpreted as a match, since in a physical system, measurements will seldom be completely identical. This means that if, for example, the distance estimates are within 10 cm, the similarity function can define the cases as matching. In our system, two cases are defined as matching given that the position and orientation differences are both within a specified range limit. If a match is found in the Case Library, the PI is updated towards the value found in the library according to the formula

where PI_new is the new updated value of PI. This update is done to continuously adapt PI according to the observations, while still not trusting one single observation completely. If the robot continuously observes similar PI_library values, the belief in the value of PI will converge towards that value. For example, if the robot is experiencing a behaviour which earlier has resulted in interaction, lookups in the Case Library will result in PI_library values close to 1. This means that the current PI will quickly approach 1 as well. After this, the case is copied to Temporary Cases, which holds information about the current session. If no match is found in the Case Library, the PI value is updated with PI_library = 0.5, which indicates that the robot is unsure about the intentions of the person. The case is still inserted into the Temporary Cases.
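The evaluation step can be illustrated with a short sketch. It is not the database implementation used in the experiments: a plain dictionary stands in for the Case Library, matching is approximated by rounding position and orientation to a grid (using the 40 cm and 0.2 rad resolutions given in the experimental set-up), and the update rule, a blend halfway towards PI_library, is an assumption, as are the names `case_key`, `evaluate_pi` and `blend`.

```python
POS_RES = 0.4   # position grid resolution in metres
ANG_RES = 0.2   # orientation resolution in radians

def case_key(px, py, theta):
    """Round a case to the grid so that nearby observations share one key."""
    return (round(px / POS_RES), round(py / POS_RES), round(theta / ANG_RES))

def evaluate_pi(pi, case_library, px, py, theta, blend=0.5):
    """Move the current PI towards the library value of the matching case.

    If no case matches, PI_library = 0.5 is used, i.e. the robot is unsure.
    The blend factor of 0.5 is an assumed value.
    """
    pi_library = case_library.get(case_key(px, py, theta), 0.5)
    return pi + blend * (pi_library - pi)

# One stored case with a high PI, observed repeatedly by a new person.
case_library = {case_key(1.3, 0.1, 0.05): 0.9}
pi = 0.5                                   # default for a newly detected person
for _ in range(10):
    pi = evaluate_pi(pi, case_library, 1.25, 0.05, 0.0)

# An observation with no matching case pulls PI back towards 0.5.
pi_unknown = evaluate_pi(0.9, case_library, 3.0, 2.0, 1.0)
```

Repeated matches make PI converge towards the stored value, while a single observation only moves it part of the way, which mirrors the behaviour described above.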

2.2.3 Updating the Database

If the session resulted in close interaction, the robot assumes that the person was actually interested in the close interaction, and assumes the opposite if no close interaction occurred. This information, together with the Temporary Cases is used to revise the Case Library. The Case Library is updated according to Algorithm 1.

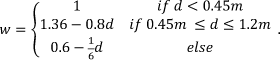

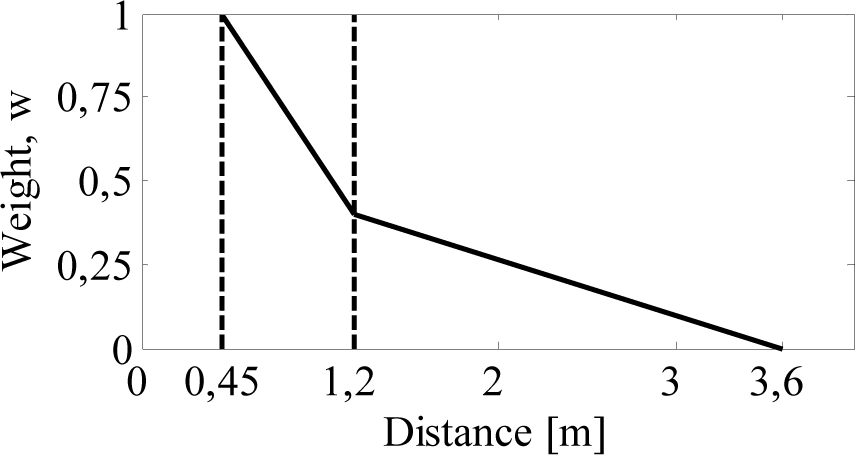

For each of the cases in the Temporary Cases, the matching case in the Case Library is found. If there is no matching case in the Case Library, a new one is created with the default value PI=0.5. Then the associated PI is updated by a value of wL, where w is a weight related to the distance to the person, and L is a learning rate. Events which happen at close distances will often have more relevance and be easier to interpret than those at longer distances. Therefore, an observed case should have a larger impact the closer the person is, which is taken care of by w. The weight is implemented utilising the behavioural zones as designated by Hall (1966), and the weight as a function of the distance is shown in Eq. (12) and illustrated in the accompanying figure.

The weight w as a function of the distance between the robot and the test person. w is a weight used for updating the level of interest PI.

The learning rate L controls the temporal update of PI. It is an adjustable parameter, which determines how fast the system is adapting to new environments. In a conservative system L is low, so new observations will only affect PI to a limited degree. In an aggressive set-up L is large and, consequently, PI will adapt faster. After the main Case Library has been updated, the robot is ready to start over and look for new people for potential interaction.
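The update step can be sketched as follows. This is a minimal illustration under stated assumptions rather than a transcription of Algorithm 1: the sign convention (PI is raised by wL after a session with close interaction and lowered otherwise), the clamping to [0,1] and the shape of `distance_weight` are assumptions, and the function names are hypothetical. Only the default PI=0.5 for new cases, the step size wL and the learning rate L = 0.3 come from the text.

```python
def distance_weight(d, near=1.2, far=3.6):
    """Hypothetical weight w(d): 1 up to the personal-zone limit, falling
    linearly to 0 at the public-zone limit. The actual Eq. (12) may differ."""
    if d <= near:
        return 1.0
    if d >= far:
        return 0.0
    return (far - d) / (far - near)

def update_case_library(case_library, temporary_cases, interested, learning_rate=0.3):
    """Revise the Case Library after a session.

    temporary_cases is a list of (case_key, distance) pairs collected during
    the session; interested is True if close interaction occurred.
    """
    sign = 1.0 if interested else -1.0
    for key, distance in temporary_cases:
        pi = case_library.get(key, 0.5)            # unseen cases start at PI=0.5
        pi += sign * distance_weight(distance) * learning_rate
        case_library[key] = min(1.0, max(0.0, pi)) # keep PI inside [0, 1]
    return case_library

library = {}
session = [("far_case", 3.0), ("near_case", 1.0)]
update_case_library(library, session, interested=True)
after_first = dict(library)
update_case_library(library, session, interested=False)
```

In this sketch a case observed at 1.0 m changes by the full learning rate, while the case observed at 3.0 m changes by only a quarter of it, so close-range observations dominate what is learned.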

2.3 Human-Aware Navigation

As written in the introduction, the human-aware navigation is inspired by the spatial relation between humans described by Hall (1963). Hall divides the area around a person into four zones according to the distance to the person:

the public zone, where d > 3.6 m

the social zone, where d is between 1.2 m and 3.6 m

the personal zone, where d is between 0.45 m and 1.2 m

the intimate zone, where d < 0.45 m
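The zone boundaries listed above translate directly into a small lookup. The sketch below is illustrative only; the function name is hypothetical, and the assignment of a distance that falls exactly on a boundary is an assumption, since the list does not specify it.

```python
def hall_zone(d):
    """Return the name of Hall's zone for a distance d in metres."""
    if d < 0.45:
        return "intimate"
    if d <= 1.2:
        return "personal"
    if d <= 3.6:
        return "social"
    return "public"

zones = [hall_zone(d) for d in (0.3, 1.0, 2.5, 5.0)]
```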

If it is most likely that the person does not wish to interact (i.e. PI ≈ 0), the robot should not violate the person's personal space, but move towards the social or public zone. On the other hand, if it is most likely that the person is willing to interact, or is interested in close interaction with the robot (i.e. PI ≈ 1), the robot should try to enter the personal zone in front of the person. Another navigation issue is that the robot should be visible to the person, since it is uncomfortable for a person if the robot moves around where it cannot be seen.

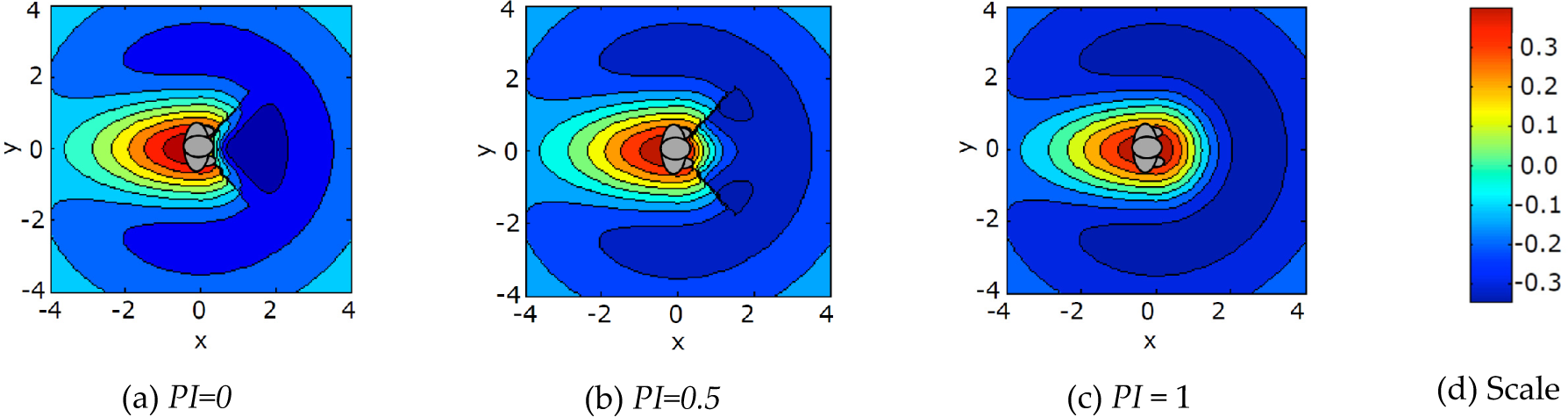

To allow human-aware navigation, a person-centred potential field is introduced. The potential field has high values where the robot is not allowed to go, and low values where the robot should be, or should try to move towards.

All navigation is done relative to the person(s), and hence no global positioning is needed in the proposed model. The method is inspired by Sisbot, Clodic, Urias, Fontmarty, Brèthes & Alami (2006) and further described in Andersen, Bak & Svenstrup (2008). The potential field landscape is derived as a sum of several different potential functions with different purposes. The different functions are:

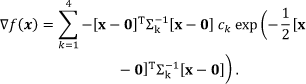

All four potential functions are implemented as normalised bi-variate Gaussian distributions. A Gaussian distribution is chosen for several reasons. It is smooth and easy to differentiate to find the gradient, which becomes smaller and smaller (i.e. has less effect) further away from the person. Furthermore, it does not grow towards ∞ around 0 as, for example, a hyperbola does.

The four Gaussian distributions used for the potential field around the person. The rear area is to the left of the y axis. The frontal area (to the right of y axis) is divided into two: one in the interval from [−45°; 45°], and the other in the area outside this interval. The parallel and perpendicular distributions are rotated by the angle α.

The shapes of the variances of the four Gaussian distributions are illustrated in the accompanying figure.

where k = 1 … 4 indexes the different potential functions, c_k is a normalising constant and

The attractor and rear distributions are both kept constant for all instances of PI. The parallel and perpendicular distributions are continuously adapted according to the PI value and Hall's proximity distances during an interaction session. Furthermore, the preferred robot-to-person encounter direction, reported in Dautenhahn, et al. (2006) and Woods, Walters, Koay & Dautenhahn (2006), is taken into account by changing the width and rotation of the distributions. The width of the parallel and perpendicular distributions is adjusted by the value of the variances σ²_x,std and σ²_y,std. The rotation α may be adapted by adjustment of the rotation matrix R. For the parallel distribution σ²_x,std = 1, and for the perpendicular distribution σ²_y,std = 1. The mapping from PI to the respective other value of σ²_x,std or σ²_y,std is illustrated in the accompanying figure.

The adaptation of the potential field distributions enables the robot to continuously adapt its behaviour to the current interest in interaction of the person in question.
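A person-centred field built as a sum of rotated bi-variate Gaussians can be sketched as below. This is a minimal illustration under stated assumptions: the amplitudes, variances and the negative amplitude used for the attractor are hypothetical values, the restriction of the rear and frontal distributions to their respective areas is left out, and the mapping from PI to the variances is not modelled. The function names are also hypothetical.

```python
import math

def gaussian(x, y, c, var_x, var_y, alpha=0.0):
    """Unnormalised bi-variate Gaussian centred on the person.

    (x, y) is a point in the person's frame, c the amplitude, var_x and var_y
    the variances along the principal axes, and alpha the rotation of those
    axes relative to the person's frame.
    """
    ca, sa = math.cos(alpha), math.sin(alpha)
    # Express the point in the rotated frame of the distribution (R^T p).
    u = ca * x + sa * y
    v = -sa * x + ca * y
    return c * math.exp(-0.5 * (u * u / var_x + v * v / var_y))

def potential(x, y, components):
    """Sum of all component distributions at the point (x, y)."""
    return sum(gaussian(x, y, *params) for params in components)

# Hypothetical parameter sets: (amplitude, var_x, var_y, alpha).
components = [
    (-0.5, 9.0, 9.0, 0.0),          # wide attractor, lowers the field
    (1.0, 1.0, 4.0, 0.0),           # parallel distribution
    (1.0, 4.0, 1.0, math.pi / 6),   # perpendicular distribution, rotated
]
at_person = potential(0.0, 0.0, components)

# Rotating an elongated Gaussian by 90 degrees swaps its axes.
rotated = gaussian(0.0, 2.0, 1.0, 4.0, 1.0, alpha=math.pi / 2)
unrotated = gaussian(2.0, 0.0, 1.0, 4.0, 1.0)
```

Adapting the field to PI then amounts to recomputing the variance and rotation entries of the parallel and perpendicular components before the field is evaluated.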

The resulting potential field contour can be seen in the accompanying figure.

Mapping of PI to the width (σ²_std) along the minor axis of the parallel and perpendicular distributions, together with the rotation (α) of the two distributions.

Instead of just moving towards the lowest point at a fixed speed, the gradient of the potential field ▽f(

The reference velocity is then calculated as

where
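Because the expression for the reference velocity is not reproduced above, the following is only a sketch of the general idea of deriving a velocity from the gradient of the potential field. The descent rule (velocity proportional to the negative gradient), the gain, the speed limit and the single-Gaussian stand-in field are all assumptions, and the function names are hypothetical.

```python
import math

def toy_potential(x, y):
    """Stand-in field: one repulsive Gaussian centred on the person."""
    return math.exp(-0.5 * (x * x + y * y))

def gradient(f, x, y, h=1e-5):
    """Central-difference approximation of the gradient of f at (x, y)."""
    dfdx = (f(x + h, y) - f(x - h, y)) / (2.0 * h)
    dfdy = (f(x, y + h) - f(x, y - h)) / (2.0 * h)
    return dfdx, dfdy

def reference_velocity(f, x, y, gain=1.0, v_max=0.5):
    """Velocity pointing down the gradient, limited to v_max (assumed values)."""
    gx, gy = gradient(f, x, y)
    vx, vy = -gain * gx, -gain * gy
    speed = math.hypot(vx, vy)
    if speed > v_max:
        vx, vy = vx * v_max / speed, vy * v_max / speed
    return vx, vy

# Close to the person the field is steep, so the speed limit applies;
# further away the gradient and hence the commanded speed are small.
vx_near, vy_near = reference_velocity(toy_potential, 1.0, 0.0)
vx_far, vy_far = reference_velocity(toy_potential, 3.0, 0.0)
```

A velocity that scales with the gradient makes the robot move faster where the field is steep and slow down as it approaches a minimum, in contrast to moving towards the lowest point at a fixed speed.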

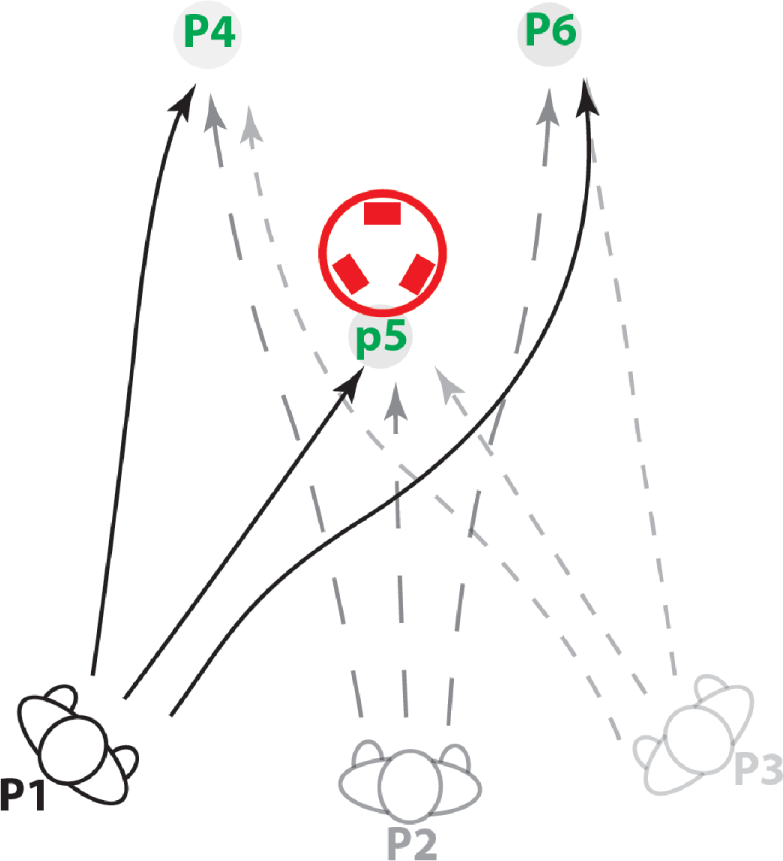

3. Experimental Set-up

In Svenstrup, Bak, Maler, Andersen & Jensen (2008) it is demonstrated that the person detection algorithm works in a real-world scenario in a shopping centre. The person state estimation and human-aware navigation methods are tested in a laboratory setting in Svenstrup, Hansen, Andersen & Bak (2009). Therefore, the experiments here focus on integrating all three of the methods described above into a combined system, with the main focus on the operation of learning and evaluation of interest. The experiments are designed to illustrate the operation of the combined methods and to serve as a proof of concept. They took place in an open hall, with only one person at a time in the shared environment. This allowed for easily repeated tests with no interference from objects other than the test persons. The test persons were selected randomly from the students on campus. None had prior knowledge about the implementation of the system.

The FESTO Robotino robot used for the experiments.

Shape of the potential field for (a) a person not interested in interaction, (b) a person considered for interaction, and (c) a person interested in interaction. The scale for the potential field is plotted to the right and the value of the Person Indication (PI) is noted under each plot.

3.1 Test Equipment and Implementation

The robot used during the experiments was a FESTO Robotino platform, which provides omnidirectional motion. A head, which is capable of showing simple facial expressions, is mounted on the robot (see the accompanying figure).

The figures show the values stored in the CBR system after completion of the 1st, 3rd and 5th test person. Note that the robot is located at the origin (0,0), since the measurements are in the robot's coordinate frame, which follows the robot's motion. Each dot represents a position of the test person in the robot's coordinate frame. The direction of the movement of the test person is represented by a vector, while the level (PI) is indicated by the colour range.

The CBR database has been implemented using MySQL. To make the case database tractable, the position (p_x, p_y) was sampled using a grid resolution of 40 cm, and the resolution of the orientation of a person has been set to 0.2 rad. A learning rate, L = 0.3, which is a fairly conservative learning strategy, has been used for the experiments.

3.2 Test Specification

For evaluation of the proposed methods, two experiments with the combined system were performed. During both experiments the full system, as seen in


Illustration of possible pathways around the robot during the experiment. A test person starts from points P1, P2 or P3 and passes through either P4, P5 or P6. If the trajectory goes through P5, a close interaction occurs by handing an object to the robot.

4. Results

4.1 Experiment 1

As interaction sessions are registered by the robot, the database is gradually filled with cases. All entries in the database after different stages of training are illustrated by four-dimensional plots in the accompanying figure.


PI as a function of the session time for three different test persons. For each test person, PI is plotted for a session where close interaction occurs and for a session where no close interaction occurs. The x-axis shows the session time. The axis is scaled such that the plots have equal length.

A snapshot of the database after the second experiment was done. It shows how the mean value for PI is calculated for three areas: 1) the frontal area; 2) the small area; and 3) for all cases. The development of the mean values over time for all three areas is illustrated in Figure 16.

4.2 Experiment 2

Experiment 2 tests the adaptiveness of the CBR system. In order to see the change in the system over time, the average PI value in the database after each session is calculated. The averages have been calculated as an average for three different areas (see the accompanying database snapshot).


5. Discussion


Graph of how the average of PI evolves for the three areas indicated in Figure 14. A total of 36 interaction sessions were performed with one test person. The red vertical line illustrates where the behaviour of the person changes from seeking interaction to not seeking interaction.

In short, the experiments show that:

Determination of PI improves as the number of CBR case entries increases, which means that the system is able to learn from experience.

The CBR system is independent of the specific person, such that experience based on motion patterns of some people, can be used to determine the PI of other people.

The algorithm adapts when the general behaviour of the people changes.

5.1 General Discussion on Reasoning System and Adaptive Behaviour

Generally, the conducted experiments show that CBR can be applied advantageously to a robot that needs to evaluate the behaviour of a person. The method for assessing the person's interest in interaction with the robot is based on very limited sensor input. This is encouraging, as the method may easily be extended with support from other sensors, such as computer vision or acoustics.

The results demonstrate how, by fairly simple training, a robot can learn to estimate the interaction interest of a person. Such training may be used to give the robot an initial database that may later be refined to the specific situation in which it operates.

The proposed method for human-aware navigation demonstrates how Hall's zones, together with more recent results about the preferred robot encounter, may be used for the design of an adaptive motion strategy. The three methods — person pose estimation, learning and evaluation of interest in interaction, and human-aware navigation — are decoupled in the sense that they only have a simple interface with each other. This opens a way of using the methods separately with other control, navigation or interaction strategies.

This work shows that by coupling the CBR system with human-aware navigation, the result is an adaptive robot behaviour respecting the personal zones depending on the person's interest to interact — a step forward from previous studies (Sisbot, et al., 2005; Sisbot, Clodic, Urias, Fontmarty, Brèthes & Alami, 2006).

6. Conclusions

In this work, we have described an adaptive system for natural motion interaction between mobile robots and humans. The system forms a basis for human-aware navigation respecting a person's social spaces. The system consists of three independent components:

A new method for pose estimation of a human by using laser rangefinder measurements.

Learning human behaviour using motion patterns and Case-Based Reasoning (CBR).

A human-aware navigation algorithm based on a potential field.

Pose estimates are used in a CBR system to estimate the person's interest in interaction, and the spatial behaviour strategies of the robot are adapted accordingly using adaptive potential functions.

The evaluation of the system has been conducted through two experiments in an open environment. The first of the two experiments of the combined system shows that the CBR system gradually learns from interaction experience. The experiment also shows how motion patterns from different people can be stored and generalised in order to predict the outcome of an interaction session with a new person.

The second experiment shows how the estimated interest in interaction adapts to changes in behaviour of a test person. It is illustrated how the same motion pattern can be interpreted differently after a period of training.

The presented system is a step forward in creating socially intelligent robots, capable of navigating in an everyday environment and interacting with human beings by understanding their interest and intention. In the long-term perspective, the results could be applied to service or assistive robots in, for example, health-care systems.

In this paper, the potential field is used to steer the robot around one person, who might or might not be interested in interaction. However, the potential field could also be used for navigation in populated areas with more people present. This could, for example, be in a pedestrianised street, where the robot has to move around.