Abstract

The Prediction of RNA secondary structures has drawn much attention from both biologists and computer scientists. Many useful tools have been developed for this purpose. These tools have their individual strengths and weaknesses. As a result, based on support vector machines (SVM), we propose a tool choice method which integrates three prediction tools: pknotsRG, RNAStructure, and NUPACK. Our method first extracts features from the target RNA sequence, and adopts two information-theoretic feature selection methods for feature ranking. We propose a method to combine feature selection and classifier fusion in an incremental manner. Our test data set contains 720 RNA sequences, where 225 pseudoknotted RNA sequences are obtained from PseudoBase, and 495 nested RNA sequences are obtained from RNA SSTRAND. The method serves as a preprocessing way in analyzing RNA sequences before the RNA secondary structure prediction tools are employed. In addition, the performance of various configurations is subject to statistical tests to examine their significance. The best base-pair accuracy achieved is 75.5%, which is obtained by the proposed incremental method, and is significantly higher than 68.8%, which is associated with the best predictor, pknotsRG.

Introduction

An RNA secondary structure is the fold of a nucleotide sequence. The sequence is folded due to bonds between non-adjacent nucleotides. These bonded nucleotide pairs are called base pairs. Three possible combinations of nucleotides may form a base pair: A-U, G-C, and G-U, where A-U and G-C are called Watson-Crick pairs and G-U is called the Wobble pair. Generally, an RNA secondary structure can be regarded as a sequence S with a set S' of base pairs (i, j), where 1 ≤ i < j ≤ |S| and ∀(i, j), (i', j') ∈ S', i = i' if and only if j = j'. By this definition, no base belongs to more than one base pair. The RNA secondary structure prediction problem is to identify the folding configuration of a given RNA sequence.

There are mainly two kinds of bonded structures, namely nested and pseudoknotted ones. Although prediction techniques on nested structures have been well developed, those on pseudoknotted ones are still limited in accuracy due to high computational requirements.1,2 A pseudoknotted structure is a configuration in which the bases outside a helix hold hydrogen bonds to form another helix. Given an RNA sequence S with a set S' of base pairs, the sequence is pseudoknotted if in S' there exist two pairs (i, j) and (i', j') such that i < i' < j < j'. These two types of bonded structures are illustrated in Figure 1. In the past decades, lots of tools have been developed to predict the RNA secondary structure. However, for computational reasons, most of them do not take the pseudoknotted structures into considerations as the required computational efforts for some prediction models have been proved to be NP-hard. 3

The nested (top) and pseudoknotted (bottom) bonded RNA structures.

The methods for predicting RNA secondary structures could be roughly categorized into two types, which are based on thermodynamics,2,4,5 and comparative approaches.6,7 The thermodynamic approaches manage to integrate some experimentally determined parameters with criteria of minimum free energy. Therefore, the obtained results conform with the true configurations. The comparative approach adopts the sequence comparison strategy. It requires users to input one sequence with unknown structure, and a set of aligned sequences whose secondary structures are known in advance.

For predicting RNA secondary structures, pknotsRG, 4 RNAStructure, 8 and NUPACK 9 are well-developed software tools. Since these tools resort to different criteria, each of them has its own metric and weakness. With their distinctness in prediction power, we propose a tool preference choice method that integrates these softwares in order to improve prediction capability.

Our method is based on the machine learning approach, which includes feature extraction, feature selection, and classifier combination methods. The features are first extracted from the given sequence and then these features are input into the classifier to determine the most suitable prediction software. In this paper, the feature selection methods, mRMR 10 and mRR, 11 are employed to identify the important features and SVM (support vector machine)12,13 is used as the base classifier.

To further improve prediction accuracies, we propose a multi-stage classifier combination method, namely incremental mRMR and mRR. Instead of selecting features independently, our classifier combination method takes the outputs of classifiers in the previous stages into consideration. Thus, the method guides the feature selector to choose the features that are most relevant to the target class label while least dependent on the previously predicted labels. The performance of various combination strategies is further subject to the statistical test in order to examine their significance. The best base-pair accuracy achieved is 75.5%, which is obtained by the proposed incremental mRMR and is significantly higher than 68.8%, the accuracy of the single best prediction software, pknotsRG. The experimental results show that our tool preference choice method can improve base-pair prediction accuracy.

The rest of this paper is organized as follows. In Preliminaries, we will first describe the SVM software and the RNA secondary prediction tools used in this paper. Then, we will present some classifier combination methods. The methods for bootstrap cross-validations are also presented. In addition, we describe features adopted in this paper. The detailed feature extraction methods are presented in Supplementary materials. Feature relevance and feature selection presents the feature relevance and selection. In Classifier combination, we focus on how to integrate multiple classifiers. The data sets used for experiments and the performance measurements are presented in Data sets and performance evaluation. Our experimental results and conclusions are given in Experimental results and conclusions, respectively.

Preliminaries

Support vector machines

Support vector machine (SVM)

14

is a well-established technique for data classification and regression. It maps the training vectors

pknotsRG

Some algorithms for predicting pseudoknotted structure are based on thermodynamics.1,5 Since predicting arbitrary pseudoknotted structures in athermodynamic way is NP-complete, 16 Rivas and Eddy 2 thus took an alternative approach, which is based on the dynamic programming algorithm. Their method mainly focuses on some classes of pseudoknots and the complexity is of O(n 6 ) in time and of O(n 4 ) in space for the worst case, where n denotes the sequence length. Based on Rivas's system (pknots), 2 another prediction software tool, pknotsRG, 4 was developed. The idea is motivated by the fact that H-type pseudoknots are commonly observed in RNA sequences. Hence, by setting some proper constraints, pknotsRG can reduce the required prediction time to O(n 4 ) and space to O(n 2 ) for predicting pseudoknotted structures. The program (including source codes and precompiled binary executable codes) is available on the internet for download. Unlike other web-accessible tools, it is free of web service restrictions and it is appropriate for a large amount of data analysis. It folds arbitrary long sequences and reports as many suboptimal solutions as the user requests.

RNAStructure

RNAStructure was developed by Mathews et al, 8 and it is also based on the dynamic programming algorithm. The software incorporates chemical modification constraints into the dynamic programming algorithm and makes the algorithm minimize free energy. Since both chemical modification constraints and free energy parameters are considered, the software works reasonably better than those that adopt only free energy minimization schemes. The program is also available for both source codes and web services. It can be used for secondary structure and base pair probability predictions. In addition, it also provides a graphical user interface for Microsoft Windows (Microsoft Corporation, Redmond, WA) users.

NUPACK

NUPACK is the abbreviation for Nucleic Acid Package and is developed by Dirks and Pierce. 9 It presents an alternative structure prediction algorithm which is based on the partition function. Because the partition function gives information about the melting behavior for the secondary structure under the given temperature, 17 the base-pairing probabilities thus can be derived accordingly. The software can be run on the NUPACK webserver. For users who want to conduct a large amount of data analysis, source codes can be downloaded and compiled locally. In most cases, pseudoknots are excluded from the structural prediction.

Majority vote

The majority vote (MAJ)18,19 assigns an unknown input

The disadvantage of the original majority vote cannot handle conflicts from classifiers or even numbers. Let us assume that there are four classifiers involved in solving 3-class classification problem. If the outputs of these four classifiers are (1, 0, 0), (0, 1, 0), (1, 0, 0), (0, 1, 0), there would be no way to resolve the conflict. In this paper, we take the idea of weighted majority vote (WMJ), that is, if classifiers' predictions are not equally accurate, then we assign the more competent classifiers more power in making the final decision. Let us take the same problem as an example. If the classification error rates of the four classifiers are 0.1, 0.3, 0.2, and 0.35, respectively, it would be reasonable to report c = 1. This is because the output obtains the most common agreement among all classifiers. Conventionally, the voting weights are expressed in terms of classification error rates. That is, in the weighted majority voting scheme, the voting weight is defined as log((1 - err)/err) x constant, 20 where err denotes the error rate and the constant is set to 0.5.

Behavior knowledge space

Behavior knowledge space (BKS)

21

is a table look-up approach for classifier combinations. Let us consider a classification task for m classes. Assume a classifier ensemble is composed of L classifiers which collaborate to perform the classification task. Given an input

In practice, the algorithm can be implemented by a look-up table, called a BKS table. Each entry in the table contains L cells where each cell accumulates the number of the true classes of training samples falling in. During the recognition stage, the ensemble first collects each classifier's output Di(x), 1 < i ≤ L. Then it locates which entry matches the outputs, and then picks up the class label corresponding to the plurality cell.

Table 1 illustrates an example of the BKS table with m = 3 and L = 2. D1 and D2 represent outputs from the two classifiers. Entries below D1 and D2 are all possible predictions. Cells below “true class,” which are P1,P2, and P3, are the numbers of class labels that the training data fall into. Thus, each entry in the table contains the most representative label that associates with the preference of the classifier ensemble.

An example of the BKS table

Adaboost

Adaboost, short for Adaptive Boosting, is a machine learning algorithm, 20 which may be composed of any types of classifiers in order to improve performance. The algorithm builds classifiers in an incremental manner. That is, in each stage of single classifier training, the weights of incorrectly classified observations are increased while those of correctly classified observations are decreased. Consequently, subsequent classifiers focus more attention on observations that are incorrectly classified by previous classifiers. Since there are several classifiers involved making decisions, the WMJ approach is adopted. Although the individual classifiers may not be good enough to perform good prediction alone, as long as their prediction power is not random, they would contribute improvements to the final ensemble. In this paper, Adaboost experiments are served as a performance benchmark for classifier combination.

Bootstrap cross-validation

In this paper, we adopt bootstrap cross-validation (BCV)

21

for performance comparison of classifiers with the k-fold cross-validation. Assume that a sample S = {(x1, y1), (x2, y2), …, (x

n

, y

n

)} is composed of n observations, where xi represents the ith feature vector, whose class label is yi. A bootstrap sample

Features

The features involved in this paper are summarized in Table 2, and the total number of the features is 742. The last column of the table shows the 50 top-ranking features selected by both mRMR and mRR, which will be discussed later. The hyphen symbol means that no feature of the factor falls into the 50 top-ranking features. All features are detailed in Supplementary materials.

The feature sets and the 50 top-ranking features by mRMR and mRR

Feature Relevance and Feature Selection

Feature relevance

In information theory, entropy is a measure of uncertainty of a random variable.

23

The entropy H of a discrete random variable X with possible values x1, x2, …, xh is formulated as:

Mutual information I(X, Y) quantifies the dependence between the joint distribution of X and Y, and it is defined as:

Conditional mutual information I(Xi, Y | Xj) stands for how much information variable Xi can explain variable Y, but variable Xj cannot. It is defined as:

Assume Y is a dependent variable, and Xi and Xj are independent variables. Conditional mutual information measures the discrepancy of prediction capability between variable Xi and Xj. It can also be considered as a dissimilarity of the two variables in terms of prediction power. In general, the conditional mutual information is not symmetric, that is I(Xi, Y | Xj) ≠ I(Xj, Y | Xi). To account for the distinction between these two variables, the average conditional mutual information DCMI(Xi, Xj) is usually adopted, that is (I(Xi, Y | Xj) + I(Xj, Y | Xi))/2.

Feature selection

The feature relevance constitutes the basic idea for feature ranking and feature selection. mRMR (minimal redundancy and maximal relevance) 10 and mRR (minimal relevant redundancy) 11 are two of well known information theoretic methods.

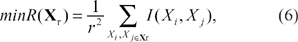

Most feature selection methods select top-ranking features based on F-score or mutual information without considering relationships among features. mRMR

10

manages to accommodate both feature relevance with respect to class label and dependency among features. The strategy combines both the maximal relevance and the minimal redundancy criteria. The maximal relevance criterion selects feature subset

where Xi is a feature within

In order to take the above two criteria into consideration and to avoid an exhaustive search, mRMR adopts an incremental search approach. That is, the rth selected feature should satisfy:

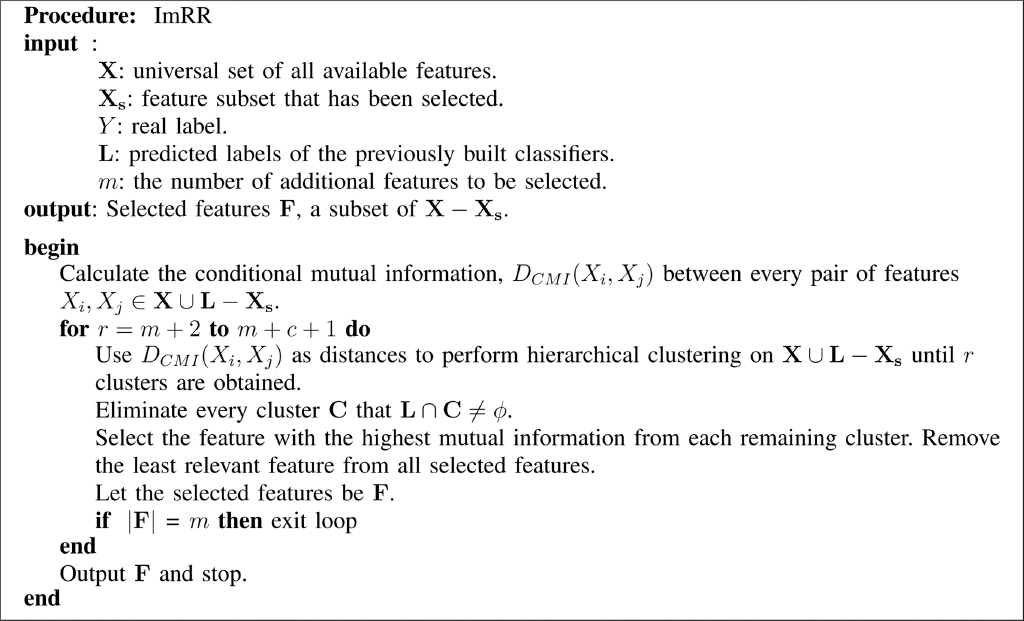

Instead of dealing with dependency between features, mRR 11 adopts the conditional mutual information to express distance between features. Let the target number of selected features be denoted by r. The algorithm starts with calculating average conditional mutual information DCMI(Xi, Xj) between any pair of distinct features. Next, the conditional mutual information is served as distances between features and hierarchical clustering processes are performed repeatedly until r + 1 groups remain. These clusters stands for the most distinct and representative feature groups. Then, the algorithm picks the feature with the highest mutual information from each cluster and sorts them into nonincreasing order. Since the last feature is assumed to come from the feature group of random noises, thus finally, only the first r features are reserved.

Classifier Combination

Incremental feature selection

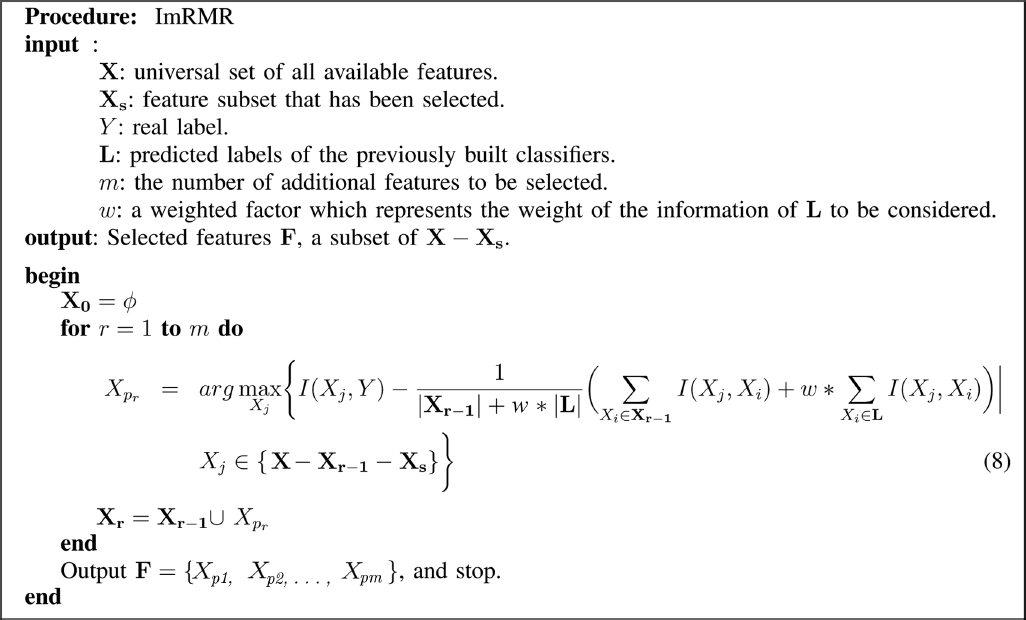

In this subsection, we propose an incremental feature selection method for improving classification accuracy. Our method is based on the mRMR 10 or mRR 11 feature selection method. Our method incrementally selects features in multiple stages. The feature selection in the latter stages involves the factor of the classifier preference of the former stages. Our method has the predicted labels of previous classifiers serve as preselected features, so that the subsequently selected features will be as relevant as possible to the real label, while being the least dependent on these previously predicted labels.

Suppose we take mRMR as our kernel selection function. At stage 1, α features are selected by mRMR and then they are used to train a classifier. In this paper, α is set to 50. Then, the training data elements are predicted by the classifier, and thus the predicted label of each element becomes the output. These predicted labels serve as artificial features for subsequent stages. Consequently, the subsequent feature selection procedure encourages the unselected features, which are most relevant to the real labels, but least dependent on the previously predicted labels. Note that the predicted labels are used only for evaluating the degree of mutual information (conditional mutual information in mRR), but they are not real candidate features to be selected. Since our method is designed to learn incrementally, it is called incremental mRMR (ImRMR). When we invoke mRR as the kernel selection function, our method is called incremental mRR (ImRR).

Our incremental mRMR feature selection method is formally described in Procedure 1. The incremental mRR method is formally given in Procedure 2.

Data Sets and Performance Evaluation

The experimental data sets are obtained from PseudoBase 24 and RNA SSTRAND 25 websites. We retrieve all PseudoBase and RNA SSTRAND tRNA sequences and their secondary structure information. The sequences are then fed into pknotsRG, RNAStructure, and NUPACK for secondary structure prediction. To determine which software is the most suitable one for a given sequence, we adopt the base-pair accuracy for evaluation.

Suppose we are given an RNA sequence S = a1a2 … aN. Let the real partner of a nucleic base ai be denoted by

For each sequence, we calculate its base-pair accuracies given by the three softwares and assign a class label, which corresponds to the preferred software to the sequence. The labels are pk, rn, and nu, which are associated with pknotsRG, RNAStructure and NUPACK, respectively. Since our goal is to apply the machine learning approach to identifying the most prominent software for prediction, we remove the sequence that any two softwares have identical highest accuracies in order to avoid ambiguity. Hence, the final numbers are 495 for RNA SSTRAND tRNA and 225 for PseudoBase database. The numbers of the tool preference classes are shown in the first row of Table 3. As we can see, each sequence has its software tool preference for prediction.

Number of sequences and predicted base-pair accuracies in each tool preference class

The second row of Table 3 shows the overall base-pair accuracy, which each software alone predicts all sequences; it also shows the extreme level of accuracy that arises when we select the correct software for predicting each sequence. In the table, BP means base-pair. The overall accuracy here is defined as the quotient of the total number of correctly predicted bases and total number of bases from all sequences. It implied that we can obtain a more powerful predictor if the preference classification task is done well.

Procedure 1.

Procedure 2.

We adopt two performance measures for comparison, the classification accuracy and base-pair accuracy. The classification accuracy here is defined as Q = Σpi/N, where pi denotes the number of testing targets; those belong to class i and are correctly classified, and N denotes the total number of testing targets.

Experimental Results

We first perform classification experiments and then compare their performance. In each experiment, the leave-one-out cross-validation (LOOCV) method is used in order to obtain the average performance measure. Since our main goal is to obtain a tool selector with a good base-pair accuracy. Hence, for the base-pair accuracy, the BCV is first carried out and significant tests are conducted. Finally, we perform feature analysis and identify important features.

Experiments for classification accuracy

By default, mRMR selects the 50 most prominent features, and we also adopt this setting for mRR. Hence, at each stage, we obtain 50 features to train classifiers. The original feature selection of each stage in mRMR or mRR does not consider the classifiers' predicted labels from the previous stages. Thus, in our ImRMR or ImRR, once features are selected, we just exclude them and perform the original mRMR or mRR procedure in the subsequent stages.

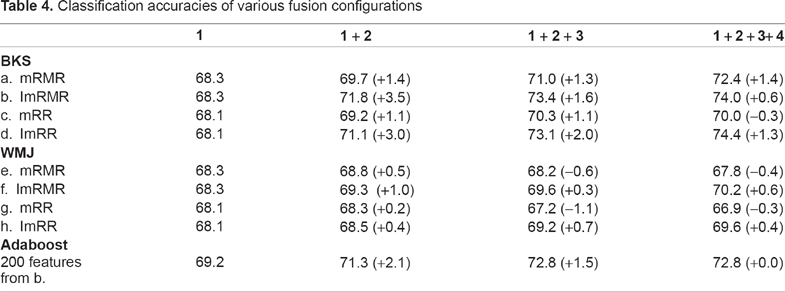

The methods for classifier fusion include weighted majority vote (WMJ) and behavior knowledge space (BKS). Table 4 shows the experimental result of various combinations. Since there are two kernel feature selection methods mRMR and mRR, and two classifier fusion methods BKS and WMJ, four totally different combinations are obtained. In each combination, two feature selection configurations, the original (or non-incremental) and our incremental, are compared. The weighted factor w for ImRMR is set to 1. In the table, 1 + … + C, where 1 ≤ C ≤ 4, means the classifiers built in stages from 1 to C are combined together. The last row of this table shows the performance of Adaboost, which will be discussed later.

Classification accuracies of various fusion configurations

It is observed that the classification accuracies of the incremental feature selection (ImRMR or ImRR) are higher than those of the original one. This may be due to the fact that the additional discriminant information is involved. Comparing results obtained from BKS and WMJ configurations, b, d, f, and h, we can see that BKS achieves higher accuracies. This is because WMJ can not reach correct answers when most classifiers give wrong predictions. In contrast, BKS can deal with the dilemma because it records data that are consistently misclassified (and classified) by classifiers and thus corrects the final answers. It is interesting to note that configuration g achieves the worst result. This may be due to the fact that the acquired feature subsets in each stage are extracted from the almost identically corresponding clusters as the previous stages; nearly no extra information is obtained.

During the fourth stage of BKS fusion, there should be 34 = 81 distinct patterns of classifier preferences. However, we find that less than 81 patterns are formed occasionally, and thus we have to trace back to the BKS table of the third stage. In addition, if we proceed the same procedure to the fifth stage, which will have at most 35 = 243 distinct patterns, we would get a BKS table that is quite sparse. Since we only have 719 samples for building BKS tables, it would imply that the fusion results may not be reliable enough. In this sense, the diversity or the sparseness of BKS tables would implicitly limit the times of fusion. As a result, only four stages were performed.

For the configurations e and g, after the third stage, the classification accuracies keep going down. This is because the most prominent features have been selected in the previous stages; the classifiers built in the subsequent stages would get less competent. Once the system starts to be dominated by these incompetent classifiers, the classification accuracies would go down. However, compared with configuration f and h, it again shows the merit of the incremental feature selection strategy.

To understand how the data fusion has effect on the classification accuracies, all 742 features and 200 features of configurations a, b, c and d (from Table 4) are used for LOOCV experiments, which are made without combining classifiers. The experiments about 200 features of configurations e, f, g, and h are omitted because of similar settings. The results are shown in Table 5. It reveals that training with all 742 features deteriorates the classification as too many incompetent features would ruin the system. Compared with configuration b and d in Table 4, it shows that the systems built with BKS or WMJ fusion achieve higher classification accuracies. This is because each group of 50 selected features is specialized for both the class label and the classifier preference of the previous stages. The obtained improvement exists only when the above condition is satisfied. Once these features are combined directly, conflicts may occur among these features. Consequently, the classification accuracies are not so good.

The classification accuracies of combined features

The last row in Table 4 shows the performance of Adaboost, whose base classifier is SVM and the number of stages is also set to four to comply with the previous experiments. To avoid randomness, we use the sample weights to derive exact sample numbers for training. That is, at each stage, the normalized sample weights are first multiplied by the number of total training samples and then rounded to integers. Samples are trained with their individual rounded numbers. Since the standard SVM does not perform feature selection, the best 200 features of configuration b is adopted throughout the experiments.

With the similar weighted majority vote for fusion, it shows that the Adaboost ensemble outperforms those of configurations/and h. This may be due to the fact that the Adaboost ensemble always uses good feature subset for classification while those of configuration f and h adopt less and less dominant features gradually. Hence, even being able to provide distinct information, the base classifiers of configuration f and h are not so competent. When comparing performances of the Adaboost ensemble and that of configuration a, the former one only yields a slightly better classification rate. Since the mRMR ranks features according to both maximal relevance and minimal redundancy, the base classifier in each stage is intrinsically distinct. In addition, the BKS mechanism also facilitates recording the preference of base classifiers. Even still, when compared with the Adaboost ensemble, the configuration a does not explicitly enhance the training of not-yet-learned samples. This may partly account for its lower accuracy. However, when we compare the configuration a and b, the latter one more explicitly focuses on information that has theoretically not been learned. Hence, the classification accuracies get elevated again. For the adaptation to what has not been learned yet, the Adaboost algorithm is more aggressive than information-theoretical methods because it directly aims at wrongly classified samples. Nevertheless, by choosing the classifier combination method to appropriately accommodate the classifier preference, as shown in configuration a, the comparative performance is still possible to be achieved.

Significance test for the base-pair accuracy and feature analysis

In this paper, we adopt bootstrap cross-validation 22 to verify which configuration is statistically significant in base-pair accuracy. The accuracies of each configuration are obtained by applying B = 50 bootstrap sampling procedures and then performing LOOCV (k = 720). The overall procedure is shown as follows. Procedure 3, the configuration Hj represents any one from Tables 4 and 5.

Before performing statistical tests, base-pair accuracies of each configuration are subject to the Kolmogorov-Smirno test 26 to ensure normality. Following Table 4, to examine whether incremental approaches are significantly better than non-incremental ones in base-pair accuracy, we extracted Abj from the above procedure, where j can be any configuration from Tables 4 and 5. Since the distribution of the bootstrap performance measures is approximately normal, we perform a paired t-test. 27 Table 6 shows the P-values for incremental against non-incremental ones for mRMR and mRR combined with BKS or WMJ fusion. The P-value is the probability of obtaining a test statistic to reject the null hypothesis, which means that there exists no systematic difference in base-pair accuracy between incremental against non-incremental fusions. The smaller the P-value, the stronger the test rejects the null hypothesis. It shows that there is merely 10% ((0.11 + 0.09 + 0.08 + 0.09)/4) that incremental approaches are not significantly better than non-incremental ones on average. In other words, there is a large probability that incremental approaches are significantly better than non-incremental ones. Consequently, we will not include non-incremental fusion approaches further in the subsequent comparison.

Paired t-test of base-pair accuracies for incremental versus non-incremental ones for BKS or WMJ fusion

Table 7 shows the classification, base-pair prediction accuracies, and numbers of selected features under various configurations. Each value behind the base-pair accuracy is the percentage exceeding the baseline accuracy, which is achieved by the most prominent software, pknotsRG. As the table shows, applying all features for prediction tool choice achieves 72.2% base-pair accuracy, which is higher than the baseline accuracy. If the feature selection methods, such as mRMR and mRR, are adopted, the accuracies can be improved. Furthermore, once the incremental feature selection methods are combined with their tailored fusion methods, the results are further improved. The best base-pair accuracy achieved is 75.5%.

procedure 3.

The classification and base-pair prediction accuracies of various configurations

To verify the significance in base-pair prediction capability in Table 7, we first apply both normality tests and analysis of variance (ANOVA) analysis to ensure the obtained performance measures are adequate for the subsequent statistical tests. 28 Since there indeed exists difference, we thus conduct the TukeyHSD test 27 for further examination. Table 8 illustrates the P-values obtained from the TukeyHSD test in a pairwise manner. Each P-value[i, j] represents the significance for the ith row item against the corresponding jth column. The symbols ‘(++)’ and ‘(+)’ indicate 95% and 90% significance levels, respectively. For example, P-value [i = 2,j = 1] indicates that the SVM tool selector, trained with the features selected by mRMR, significantly outperforms pknotsRG in base-pair accuracy. However, P-value [i = 2,j = 2] implies that the mRMR-SVM selector is almost of the same power as the SVM selector with all features. As the table shows, all selectors have significantly higher base-pair accuracy than pknotsRG. The first three selectors (all features, 50 mRMR features, and 50 mRR features) are equally well tool selectors. This further implies that both mRMR and mRR are powerful feature selection methods and the prediction capability with 50 features is good enough to compete that with all features. The composite selectors (from row four to row eight) are dominantly better than any single selector. Except for ImRMR + WMJ, it is difficult to distinguish which composite selector is significantly powerful. It may imply that the proposed incremental fusion approach is comparable to Adaboost, even with fewer features in each fusion stage. In addition, it seems that the BKS and WMJ fusions are not significantly different.

TukeyHSD test for base-pair accuracies

The last column in Table 2 shows the 50 top-ranking features selected by both mRMR and mRR, which may represent the most important features for the SVM-based tool choice. The 50 features are obtained as follows. We start from m = 50, which represents top-ranking features by both mRMR and mRR. Then we check whether the intersection of the two selected feature sets is of size 50. If the size is not equal to 50, we set m = m + 1 and repeat the above procedure until 50 intersected features are selected. Following each feature in the table, the estimated mutual information and standard error are calculated. 29 We find that these features indeed come from diverse sources. Among those, there are 19 protein features, and 13 features come from the bi-transitional, tri-transitional, and dinucleotide factors. Other feature factors, like the compositional, spaced bi-gram, nucleotide proportional, potential single-stranded, co-occurrence, and length ones, are not included. These factors can be regarded as less prominent feature sets for the purpose of tool choice, since mutual information is a relevance measure between feature and label. From the ratio of the mutual information to standard error, we can conjecture how accurate the relevance is. If we use 2.0 as a ratio threshold, there are only four features, MM-10, Seg5, RF3-C10, and the sequence specific score, whose ratios are below this threshold, and those associated with the rest of 46 features are above the threshold. Hence, the obtained relevance information can be considered reliable. It is worth mentioning that the length feature is categorized as less important. It thus implies that our SVM-based tool selector relies little on the sequence length information. Consequently, this method may be extended to other RNA sequences, like truncated ones, without sacrificing too much accuracy.

Conclusions

In this paper, we propose a tool preference choice method, which can be used for RNA secondary structure prediction. Our method is based on the machine learning approach. That is, the preferred tool can be determined by more than one classifier. The tool choice starts by extracting features from the RNA sequences. Then the features are inputted into the classifier or ensemble to find out the most suitable tool for prediction. We apply the feature selection methods to identify the most discriminant features. Although these methods are proven to be powerful, they still require users to specify the number of features to be picked up. Hence, we adopt the default settings and devise data fusion methods tailored to the feature selection. The classifiers are thus trained with selected features incrementally. The number of combinations (ensembles) is determined implicitly by the fusion methods, which could be the diversity of classifiers' predicted labels or cross-validation accuracies. The experiments reveal that our tool choice method for the RNA secondary structure prediction works reasonably well, especially combined with the feature selection method and some fusion strategies. The best achieved base-pair accuracy is 75.5%, which is significantly higher than those of any stand-alone prediction software. Note that up to now, pknots RG is the best predictor, which has an accuracy rate of 68.8%.

Although we use RNA sequences from two databases and adopt three prediction softwares in this paper, our method is flexible for adding new features, RNA sequences, or new prediction software tools in the future. To further improve the prediction accuracies, more features could be exploited in the future. For example, researchers proposed other 2D,30–34 3D,35–39 4D, 40 5D, 41 and 6D 42 graphical representations for discrimination of nucleotide sequences. These features are able to differentiate sequences of real species and may also be useful for the tool choice purpose.

Footnotes

Author Contributions

Conceived and designed the experiments: CBY, CYH. Analysed the data: CYH, CHC. Wrote the first draft of the manuscript: CYH, CHC, CTT. Contributed to the writing of the manuscript: CYH, CBY, HHC. Agree with manuscript results and conclusions: CYH, CBY. Jointly developed the structure and arguments for the paper: CYH, CBY, HHC. Made critical revisions and approved final version: CBY. All authors reviewed and approved the final manuscript.

Funding

This research work was partially supported by the National Science Council of Taiwan under contract NSC-98-2221-E-110-062.

Competing Interests

Author(s) disclose no potential conflicts of interest.

Disclosures and Ethics

As a requirement of publication author(s) have provided to the publisher signed confirmation of compliance with legal and ethical obligations including but not limited to the following: authorship and contributorship, conflicts of interest, privacy and confidentiality and (where applicable) protection of human and animal research subjects. The authors have read and confirmed their agreement with the ICMJE authorship and conflict of interest criteria. The authors have also confirmed that this article is unique and not under consideration or published in any other publication, and that they have permission from rights holders to reproduce any copyrighted material. Any disclosures are made in this section. The external blind peer reviewers report no conflicts of interest.

Supplementary Materials

We describe the feature extraction used in this paper.