Abstract

Microarray data repositories as well as large clinical applications of gene expression allow to analyse several hundreds of microarrays at one time. The preprocessing of large amounts of microarrays is still a challenge. The algorithms are limited by the available computer hardware. For example, building classification or prognostic rules from large microarray sets will be very time consuming. Here, preprocessing has to be a part of the cross-validation and resampling strategy which is necessary to estimate the rule's prediction quality honestly.

This paper proposes the new Bioconductor package

Introduction

Studies of gene expression using high-density oligonucleotide microarrays have become standard in a variety of biological and clinical fields. They enable scientists to investigate the functional relationship between the cellular and physiological processes of organisms by studying transcription at genome-wide system levels. Affymetrix GeneChip® arrays are a very common variant of high-density oligonucleotide expression microarrays. The data recorded by means of the Affymetrix GeneChip® microarray technique are characterized by the typical levels of noise induced by the preparation, hybridization and measurement processes as well as a specific structure. Removing the sources of bias needs a specific preprocessing of the raw data as far as the steps background correction, normalization, and summarization are concerned. For more details and a brief introduction see e.g. Gentleman et al. 1

The open source projects R

2

and Bioconductor

3

provide tools in computational biology and bioinformatics. R is a free software environment and provides a wide and extensible range of statistical and graphical techniques. Bioconductor is an open source software project and repository of instruments for the analysis and comprehension of genomic data. It is primarily based on the R programming language. Especially, for the preprocessing of microarrays the Bioconductor repository offers many tools which are implemented and stored in various packages

4

:

Problems and challenges

Processing a large number of microarrays is generally limited by the available computer hardware. The main memory limits the number of arrays analysed. Furthermore, most of the existing preprocessing methods are very time consuming (http://bmbolstad.com/misc/ComputeRMAFAQ/size.html). 8 Tasks which request a repeated preprocessing of large microarray sets may block computing devices for a long time. A specific class of such tasks is the building of classification or prognostic rules which uses resampling of the original data to estimate the classification or prognostic error. 9

A further challenge is the fact that microarray experiments are becoming increasingly large. Meanwhile, large multi-centre studies — e.g. the Microarray Innovations in LEukaemia (MILE) study

10

— prepare for a standardised introduction of gene expression profiling in diagnostic algorithms, aiming to translate this novel methodology into clinical routine for the benefit of patients with the complex disorders. In the MILE study, data of more than 2000 patients is used to derive diagnostic rules based on gene expression. To preprocess the data so called ‘Add-On normalization’ has to be used repeatedly.

11

Add-On normalization allows to normalize a new microarray with respect to a normalization performed for a set off arrays. The

Solutions

Most of these problems can be solved using faster computer processors and bigger main memories. Some preprocessing methods included in the

Description

Parallel computing divides large computation problems into smaller ones, which are then solved concurrently. An overview, review and benchmark of parallel computing techniques and tools for parallel computing with R is available in Schmidberger et al. 13

Parallel computing for microarray data distributes arrays to different processors, performs demanding computations on smaller sets of microarrays and communicates the results between the processors efficiently to achieve the needed overall result. Microarray data are stored in a matrix structure. Using the block cyclic distribution the arrays will be distributed equally to all nodes. Therefore, the amount of required main memory per processor gets smaller. Basic Bioconductor packages can be used on the single processors since their data structure is array oriented. The

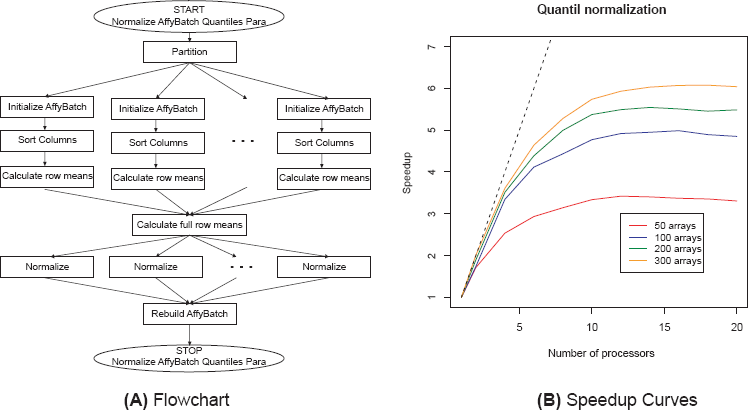

Existing statistical algorithms and data structures had to be adjusted and reformulated for parallel computing. Using the parallel infrastructure the methods could be enhanced and new methods will become available. For background correction the methods RMA and MAS 5.0 are implemented. These methods only depend on the actual array and can easily be parallelized. Normalization methods make measurements from different arrays comparable and multi-chip methods have proved to perform very well. 4 The normalization methods contrast, invariantset, quantile, and vsn (in development) are parallelized. The parallelization of the quantile normalization is visualized by the flowchart in Figure 1 (a). It was also possible to parallelize several summarization methods (avgdiff, liwong, mas, medianpolish) as well as complete preprocessing strategies (e.g. rma). Furthermore quality assessment tools optimized for huge numbers of microarrays are implemented.

Flowchart and relative speedup curves for parallelized quantile normalization calculated on the super-computer HLRBII at the LRZ in Munich, Germany.

The user-interface of the

> library (affy)

> AB < - ReadAffy()

> AB bgc < - bg.correct(AB, method = “rma”)

> library (affyPara)

> makeCluster (5, tpe = “MPI”)

> AB < - ReadAffy()

> AB bgc < - bgCorrectPara(AB, method = “rma”)

> stopCluster()

To use the power of parallel computing, the user needs only a working computer cluster and cluster start or administration programs (e.g. Sun Grid Engine). The R syntax is very similar and only two more lines for starting and stopping the cluster are required.

Results

In parallel computing, speedup (S) refers to how much a parallel algorithm is faster than a corresponding sequential algorithm: SN =T1/TN Where N is the number of processors, T1 the execution time of the sequential algorithm and TN the execution time of the parallel algorithm with N processors. 15 Limits for the speedup are described in ‘Amdahl's Law’. 16 For example in theory using N processors can not achieve a speedup of more than factor N.

Figure 1(b) visualizes the relative speedup for quantile normalization. The plot compares the parallelized and new implemented code in the

More details about the implementation, speedup of other methods and usage can be found in Schmidberger and Mansmann (2008) 8 or the vignette of the package.

Conclusion

The

Footnotes

Acknowledgement

The work of Markus Schmidberger and Ulrich Mansmann is supported by the LMUinnovative collaborative centre “Analysis and Modelling of Complex Systems in Biology and Medicine”.

The authors report no conflicts of interest.